Abstract

This paper describes how advanced computational technologies and strategies are changing the way architecture and interior design are conceived and realized by designers. The classical drawing-based approach, which relies on the connection between the human brain and the hand through analog devices such as the pencil, is called into question by new approaches based on algorithms and evolutive systems that shift the complexity of both processes and outcomes. These new methods also have an impact on architectural representation and image-making: design processes that are affected by physical variables such as time or gravity cannot be properly represented by standard techniques. The final consequence is the birth of a design and representation language based on a new alphabet that denies the use of the hand as the primary tool for shaping unique ideas.

1. Introduction

One of the crucial aspects of contemporary design and architecture is the strong connection between these two disciplines and fields that generally have no contact with the built environment or with the world of objects that surrounds us. Since computers appeared in architectural practices and in academia, two main trends can be recognized: the first simply translated the physical drawing board into a digital one; the second, more recent, tries to merge with and take its cue from different scientific areas such as biology, physics, materials science and many more. Blending competences and skills from contrasting disciplines in order to explore new ways of solving architectural problems automatically entails moving from well-known representation tools to technologies that allow the designer to explore a wider and more complex range of solutions.

This exploration has its roots in the early 1970s at the Artificial Intelligence Laboratory of the Massachusetts Institute of Technology, and it was later developed through the research conducted by the most influential architects of the Deconstructivist movement in the 1990s. Today, more advanced tools and more powerful computers allow us to push the boundaries of architectural design and representation.

This paper presents the starting point of a research-by-design project that focuses on the use of agent-based modelling processes at both the urban and the interior design scale, and the results obtained in terms of representation.

2. Background

Obtaining an image without the direct act of drawing is a very old technique, mostly associated with ancient and religious contexts. The Mask of Agamemnon and the Veil of Veronica are examples of acheiropoietic images obtained through contact between a sheet of material, either cloth or metal, and the human body. The result is an image that appears as if by magic and has a strict correlation with the divine [1].

Unexpectedly, current research in design and architecture seems to look back at the core idea of avoiding the interaction between the hand and a physical sketching tool. Indeed, since the first research on artificial intelligence was conducted, the output of a piece of code has always been interpreted as something produced by someone who is not a human being. In this context, the idea also grew of coding as a conversation between the machine and the user, based on a language made of numbers or of a specific alphabet. One of the first implementations of these principles led Papert and Solomon [2] to build a device, the Turtle, that could draw simply by following instructions written by the user in a dedicated language. The consequence is a number of non-man-made drawings that respect imposed rules.
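The principle of a drawing that results from written instructions rather than from the hand can be illustrated with a minimal sketch. The interpreter below is not the original Turtle language: the function `run_turtle` is hypothetical, and the command abbreviations `FD`, `RT` and `LT` (forward, right turn, left turn) are borrowed from common Logo dialects as an assumption. It only computes the points the pen would visit.

```python
import math


def run_turtle(program):
    """Interpret a tiny Logo-like program and return the points the pen visits."""
    x, y = 0.0, 0.0
    heading = 0.0  # degrees, measured counter-clockwise from the +x axis
    path = [(x, y)]
    tokens = program.split()
    if len(tokens) % 2:
        raise ValueError("every command needs a numeric argument")
    for name, arg in zip(tokens[0::2], tokens[1::2]):
        value = float(arg)
        command = name.upper()
        if command == "FD":      # move forward, drawing a segment
            x += value * math.cos(math.radians(heading))
            y += value * math.sin(math.radians(heading))
            path.append((x, y))
        elif command == "RT":    # turn right (clockwise)
            heading -= value
        elif command == "LT":    # turn left (counter-clockwise)
            heading += value
        else:
            raise ValueError(f"unknown command: {name}")
    return path


# Four equal sides and four right turns trace a closed square.
square = run_turtle("FD 10 RT 90 " * 4)
```

The user never specifies a coordinate: the square emerges from the repetition of one rule, which is the sense in which such drawings respect imposed rules without being hand-made.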

The introduction of computers into the architectural design workflow led to what Mario Carpo has defined as the digital turn in architecture [3]. This revolution apparently had the same impact as the invention of printing but, on closer inspection, some remarkable differences can be observed. One of them concerns the discrepancy between the immutability of a printed copy and the dynamic essence of the digital image. The former is defined within the boundaries of the paper and cannot be subjected to any change, while the latter is based on mathematical notation and can be informed with different data in order to produce disparate results.

Such a paradigmatic change also implied an innovation in how space and drawings were conceived. In both cases, the first victim was the Cartesian grid, while the second was perspective, the classical representation based on the simulation of human vision [4].

Within this frame, the problem connected with the concept of vision also emerged. According to Peter Eisenman, “Vision can be defined as essentially a way of organizing space and elements in space” [5], a statement that confirms the anthropocentric approach of classical architecture, in which every gesture is based on the relationship between the subject and the object. Eisenman then argued that the shift brought about by digital procedures should lead to a change in the association between drawings and real space, in order to design more complex shapes that are almost impossible to draw with analog tools. Hence the need for designers to rely on tools that allow them to translate, control and shape their own ideas in a manner different from earlier ones.

This shift thus appears to be related to the introduction of simulation as a new means of ideation. Simulating and predicting how a design choice will affect the environment, or how a building component will react to users’ presence and activities, are both instances of the same approach conducted within the technological domain. Responsiveness and adaptation to external agents are the key words for understanding the direction taken by contemporary architecture [6].

Lastly, even the role of the designer has been affected by this mutation, switching from simply being responsible for generating drawings to becoming a space shaper who actually leads the process of making cities, buildings and products. This new role is based on the use of rules governed by the logic of algorithms, through an associative process rather than an additive one. Although it may be regarded as a cutting-edge technique, it has its origins in pre-mechanical civilization [7], from Roman architecture to the Middle Ages. Both some of the most important treatises on architecture and most of the architectural production of that period were made without the use of drawings, relying instead on oral instructions or on a system of variables that made it possible to obtain an endless range of different but similar solutions for a given task, such as building a temple in a specific classical style. The end of this classical parametricism came with the invention of printed images, which favored the reproduction of exact copies of the same object.

Nowadays, however, it is not acceptable that the result of research in architectural practices and faculties is an image that then needs to be engineered and, at a later stage, evaluated in terms of feasibility and economic or environmental sustainability [8]. Answers must arrive in real time, while designing and exploring the digital model, in order to respond to the new requests raised by the whole cohort of experts that gravitate around the project. Most important is to realize that, thanks to the capacity to translate those answers into input for an algorithm, they actually inform and improve the project itself, even driving optimization processes. Data-informed design and architecture are advancing not only how projects are conceived and built but also how they are represented and communicated, through diagrams that highlight the relations among different parts of the project rather than the project itself.

3. Self-Organized Systems as Evolutive Dynamic Strategy for Urban Design

A self-organized system is based on the emergence of an overall form of arrangement and coordination among a group of small agents, starting from a chaotic state. This process of self-organization is not affected by external components; it is driven by parameters that control the distance between agents and the number of interactions among them.

The first principles of self-organization were formulated by the psychiatrist and cybernetician William Ross Ashby [9,10] in 1947. He stated that a dynamic system will naturally evolve towards a state of equilibrium among a number of different states, according to an attractor. Once the system reaches the first state of equilibrium, it will undergo further evolution without moving away from the basin of attraction. This implies that the whole system is based on the coordination among its sub-systems.
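The idea of a system evolving towards an attractor can be illustrated with a toy example. It is not Ashby's formal treatment: the function `relax`, the update rule (each state moves a fixed fraction of the way towards the attractor) and all numeric values are assumptions chosen only to make the convergence visible.

```python
def relax(states, attractor, rate=0.3, steps=20):
    """Move every state a fixed fraction of the way toward the attractor at each step."""
    history = [list(states)]
    for _ in range(steps):
        states = [s + rate * (attractor - s) for s in states]
        history.append(list(states))
    return history


# Three arbitrary starting states and one attractor at 2.0.
history = relax([-4.0, 1.5, 9.0], attractor=2.0)

# Largest distance from the attractor at each step of the evolution.
spread = [max(abs(s - 2.0) for s in frame) for frame in history]
```

In this sketch the largest distance from the attractor shrinks by the same factor at every step, so the states keep evolving but never leave the neighbourhood they have already reached.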

This strategy has been applied to investigate new possibilities for the urban design and regeneration of a specific zone of the waterfront of Barletta (Italy).

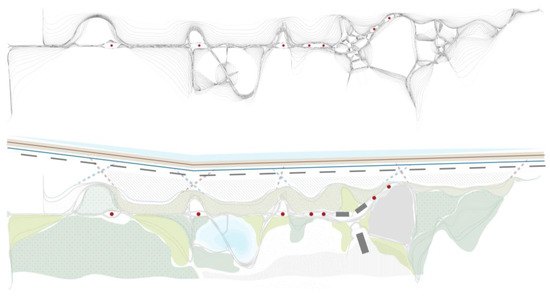

The research-by-design project took into account the need to create a new layout for this abandoned area, with the aim of establishing a connection between the city and the water. Further, one of the goals of the research was to go beyond the classical urban schemes based on the Cartesian grid and on linear alignments, fostering a more dynamic structure for the final layout of the area (Figure 1). The design programme was completed by the decision to preserve a few existing point-like and linear structures, which were incorporated into the algorithm as pivotal points to drive the first steps of the stabilization process.

Figure 1.

Final outcome of the stabilization process and final proposed layout for the project site.

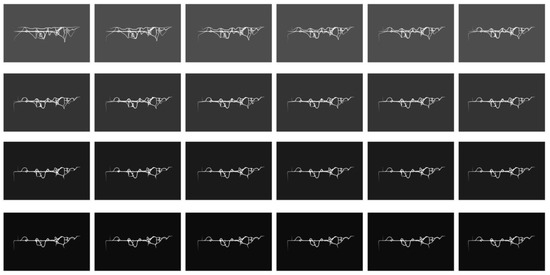

Implementing the self-organized system in order to design, and consequently draw, a new urban plan for this area required a draft that roughly defines the main leading agents. Two main parameters were then defined to control the process: the first is a threshold for the distance within which each vertex/agent of the starting curves can search for other agents; the second is a ratio that controls the maximum distance each vector can travel. Once these parameters are defined, a few more data are needed to obtain the final shape: the number of points used to rebuild the new curve and a threshold to control possible overlapping of points. Once the algorithm has been created, it requires a time span within which to perform the evolutive strategy. Because computing power has increased dramatically in recent decades, a few milliseconds are enough to generate one step of the evolution. The outcome is a series of steps that show the system searching for its stability (Figure 2).
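A minimal sketch of such a stabilization loop is given here under stated assumptions. The paper does not specify the update rule, so the attraction of each agent towards the centroid of its neighbours is an assumption, as are all names (`step`, `stabilize`, `merge_overlaps`, `search_radius`, `travel_ratio`, `overlap_threshold`) and numeric values. The rebuilding of the curve from a given number of points is omitted.

```python
import math
import random


def step(agents, fixed, search_radius, travel_ratio):
    """Move every free agent part of the way toward the centroid of its neighbours."""
    moved = []
    for i, (x, y) in enumerate(agents):
        near = [(ax, ay) for j, (ax, ay) in enumerate(agents)
                if j != i and math.hypot(ax - x, ay - y) <= search_radius]
        if i in fixed or not near:
            moved.append((x, y))  # anchors and isolated agents stay put
            continue
        cx = sum(p[0] for p in near) / len(near)
        cy = sum(p[1] for p in near) / len(near)
        moved.append((x + travel_ratio * (cx - x), y + travel_ratio * (cy - y)))
    return moved


def stabilize(agents, fixed=(), search_radius=2.0, travel_ratio=0.25,
              tolerance=1e-3, max_steps=500):
    """Iterate until no agent moves more than `tolerance`; return every frame."""
    fixed = set(fixed)
    frames = [list(agents)]
    for _ in range(max_steps):
        nxt = step(frames[-1], fixed, search_radius, travel_ratio)
        shift = max(math.hypot(a[0] - b[0], a[1] - b[1])
                    for a, b in zip(frames[-1], nxt))
        frames.append(nxt)
        if shift < tolerance:
            break
    return frames


def merge_overlaps(points, overlap_threshold):
    """Drop any point closer than `overlap_threshold` to one already kept."""
    kept = []
    for p in points:
        if all(math.hypot(p[0] - q[0], p[1] - q[1]) >= overlap_threshold for q in kept):
            kept.append(p)
    return kept


rng = random.Random(7)
start = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(40)]
frames = stabilize(start, fixed={0, 1})  # agents 0 and 1 act as preserved anchors
layout = merge_overlaps(frames[-1], overlap_threshold=0.05)
```

The list `frames` plays the role of the stabilization sequence: each entry is one step of the evolution, and the fixed indices stand in for the preserved structures that drive the first steps of the process.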

Figure 2.

Stabilization sequence of the self-organized system.

In terms of image, the outcome is represented by a number of frames that, combined together, provide a dynamic arrangement of the space according to the starting conditions, the defined parameters and the assigned time. Apart from the initial definition of the algorithm, the designer plays no role in the search for stability, which is conducted by the agents themselves; therefore, the designer does not act as the draftsman of the new plan. The classical urban arrangement, generally based on linear paths, is replaced by a more complex and varied system that optimizes distances and urban permeability as well as the usability of the spaces.

The complexity of the process and of the forces involved could not be controlled and represented without the essential help of computational tools and methods, which mark a clear difference between the canonical additive design of the hand-drawing approach and the associative method implemented in this research.

4. Flocking Behavior for Interior Design

Using digital rules to draw and design makes it possible to explore emergent fields and to collect information, and even inspiration, from them. Contemporary research in design and architecture is gleaning ever more data from scientific areas ranging from biology to the behavioral sciences. In the latter case, one of the most interesting fields is the study of flocking behavior, which describes and simulates the behavior of flocks and swarms by setting up a very limited number of simple rules. These rules are followed by a group of agents with a defined level and type of interaction among them, without the need for centralized coordination.

The three basic rules that describe this behavior act on the movement that each single agent can execute and on the agents’ interaction. The first rule concerns the degree of separation among the agents: a range of repulsion is set up in order to avoid collisions and the creation of crowded spots. The second rule, by contrast, acts on the level of cohesion among the individuals: it prevents the flock from scattering its agents across two-dimensional or three-dimensional space, which would destroy the very nature of the flock. Lastly, the alignment rule controls the direction followed by the agents: each agent defines its own alignment by calculating the average direction of its neighbors.
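The three rules can be written as three steering vectors computed for one agent from its neighbours. The sketch below is an illustration in two dimensions, not the implementation used in this research: the function `flocking_rules`, the parameters `search_radius` and `repulsion_range`, and the inverse-square weighting of the repulsion are assumptions.

```python
import math


def flocking_rules(i, positions, velocities, search_radius=5.0, repulsion_range=1.0):
    """Return the separation, cohesion and alignment vectors for agent `i`."""
    px, py = positions[i]
    vx, vy = velocities[i]
    sep_x = sep_y = sum_x = sum_y = dir_x = dir_y = 0.0
    count = 0
    for j, (qx, qy) in enumerate(positions):
        dist = math.hypot(qx - px, qy - py)
        if j == i or dist > search_radius:
            continue  # only neighbours inside the search radius interact
        count += 1
        sum_x += qx
        sum_y += qy
        dir_x += velocities[j][0]
        dir_y += velocities[j][1]
        if 0.0 < dist < repulsion_range:
            # Separation: push away from neighbours that are too close,
            # more strongly the closer they are.
            sep_x += (px - qx) / dist ** 2
            sep_y += (py - qy) / dist ** 2
    if count == 0:
        return (0.0, 0.0), (0.0, 0.0), (0.0, 0.0)
    separation = (sep_x, sep_y)
    # Cohesion: vector from the agent to the centre of its neighbours.
    cohesion = (sum_x / count - px, sum_y / count - py)
    # Alignment: difference between the neighbours' average direction and its own.
    alignment = (dir_x / count - vx, dir_y / count - vy)
    return separation, cohesion, alignment


positions = [(0.0, 0.0), (0.5, 0.0), (4.0, 0.0)]
velocities = [(1.0, 0.0), (0.0, 1.0), (0.0, 1.0)]
separation, cohesion, alignment = flocking_rules(0, positions, velocities)
```

For the first agent in this example, separation points away from the neighbour that sits inside the repulsion range, cohesion points towards the centre of both neighbours, and alignment turns the agent towards their shared direction.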

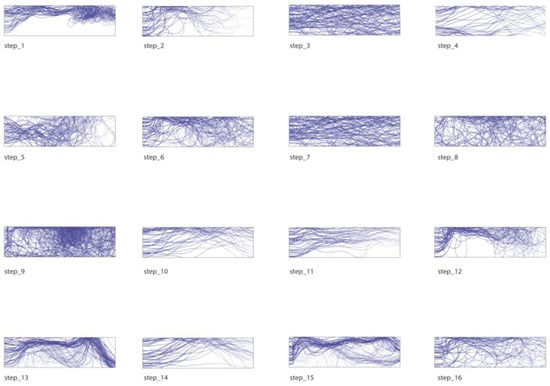

The algorithmic setup used to simulate a flock requires an environment in which the agents can move. This can be either a surface or a volume, crossed by a predefined number of agents that are distributed either randomly or methodically. The initial settings are stored and sent to an engine that represents the core of the algorithm. The engine runs the simulation, computing a number of steps in a defined time, usually a few seconds, and merging the initial settings with behavioral and visualization settings. Behavioral settings allow the designer to manage the three rules mentioned above by entering a numeric value, generally between zero and one, that defines the intensity of each behavior. In this way the designer can directly control and balance the magnitude of each action the agents carry out. Further parameters, such as the speed of the system and the search radius of the agents, can also be controlled by the designer. A key role is then played by the visualization settings, which return the final outcome in terms of trail curves. The trails are the paths traced by the agents in a given time and serve as the final design outcome: they are produced by a rebuilding process that transforms sharp polylines into smooth high-degree NURBS curves.
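The workflow described above (initial settings, an engine that computes a number of steps, behavioral weights between zero and one, and trails as output) can be sketched as follows. This is a simplified two-dimensional illustration with hypothetical names and values, not the engine used in this research. In particular, the NURBS rebuild is replaced here by Chaikin's corner-cutting algorithm, a simpler smoothing technique that stands in for it only for illustration.

```python
import math
import random


def simulate_flock(n_agents=20, steps=60, size=50.0, separation=0.6, cohesion=0.3,
                   alignment=0.5, speed=1.0, search_radius=8.0, seed=1):
    """Run a 2D flock and return one trail (a list of points) per agent."""
    rng = random.Random(seed)
    pos = [(rng.uniform(0, size), rng.uniform(0, size)) for _ in range(n_agents)]
    angles = [rng.uniform(0, 2 * math.pi) for _ in range(n_agents)]
    vel = [(speed * math.cos(a), speed * math.sin(a)) for a in angles]
    trails = [[p] for p in pos]
    for _ in range(steps):
        new_vel = []
        for i, (px, py) in enumerate(pos):
            vx, vy = vel[i]
            near = [j for j in range(n_agents) if j != i
                    and math.hypot(pos[j][0] - px, pos[j][1] - py) <= search_radius]
            ax = ay = 0.0  # steering accumulated from the three rules
            for j in near:
                dx, dy = pos[j][0] - px, pos[j][1] - py
                d2 = max(dx * dx + dy * dy, 1e-9)
                ax += (cohesion * dx / search_radius            # pull toward neighbours
                       - separation * dx / d2                  # push away when close
                       + alignment * (vel[j][0] - vel[i][0]))  # match their direction
                ay += (cohesion * dy / search_radius
                       - separation * dy / d2
                       + alignment * (vel[j][1] - vel[i][1]))
            if near:
                vx += ax / len(near)
                vy += ay / len(near)
            norm = math.hypot(vx, vy)
            if norm == 0.0:  # degenerate case: keep the previous heading
                vx, vy = vel[i]
                norm = math.hypot(vx, vy)
            new_vel.append((vx / norm * speed, vy / norm * speed))
        vel = new_vel
        pos = [(p[0] + v[0], p[1] + v[1]) for p, v in zip(pos, vel)]
        for trail, p in zip(trails, pos):
            trail.append(p)
    return trails


def chaikin(polyline, iterations=2):
    """Smooth an open polyline by corner cutting, keeping its two end points."""
    pts = list(polyline)
    for _ in range(iterations):
        if len(pts) < 3:
            break
        out = [pts[0]]
        for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
            out.append((0.75 * x0 + 0.25 * x1, 0.75 * y0 + 0.25 * y1))
            out.append((0.25 * x0 + 0.75 * x1, 0.25 * y0 + 0.75 * y1))
        out.append(pts[-1])
        pts = out
    return pts


trails = simulate_flock()
smooth_trails = [chaikin(trail) for trail in trails]
```

Changing the three weights, the speed or the search radius yields a different family of trails from the same definition, which is how the designer balances the behaviors without drawing any single line. The agents are not confined to the starting surface in this sketch.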

The described technique has been investigated to produce a wide range of different outcomes for a high-definition printing process used to print on ceramic slabs for interior design (Figure 3). According to different design purposes, it has been possible to produce several unique two-dimensional patterns informed by different parameters. The final goal was to convey a sense of velocity and movement within the space.

Figure 3.

Flocking sequence with different behavioral combinations.

Agents can avoid crossing an area where the designer has placed an obstacle, so that they do not occupy the space allocated for a door or other elements, or they can be guided so as to concentrate their presence on specific parts. The intrinsic dynamism of this system has been deployed across the whole wall, overcoming every limit in terms of shape and dimensions. This allows the designer to focus on the design intent and on the definition of the agents’ behaviors rather than on physically tracing each line of the pattern.
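Obstacle avoidance and guidance can be sketched for a single agent as follows. The circular obstacle, the point attractor, the function names `push_out` and `guided_trail`, and the strategy of projecting the agent back onto the obstacle boundary are all assumptions made for illustration; the source does not describe how the exclusion is computed.

```python
import math


def push_out(point, centre, radius):
    """Return `point`, moved radially onto the obstacle boundary if it lies inside."""
    dx, dy = point[0] - centre[0], point[1] - centre[1]
    dist = math.hypot(dx, dy)
    if dist >= radius:
        return point
    if dist == 0.0:
        dx, dy, dist = 1.0, 0.0, 1.0  # arbitrary escape direction from the exact centre
    return (centre[0] + dx / dist * radius, centre[1] + dy / dist * radius)


def guided_trail(start, attractor, obstacle, radius, steps=80, speed=0.5, pull=0.2):
    """Trace one agent that is pulled toward `attractor` and kept out of the obstacle."""
    x, y = start
    vx, vy = speed, 0.0
    trail = [start]
    for _ in range(steps):
        tx, ty = attractor[0] - x, attractor[1] - y
        dist = math.hypot(tx, ty) or 1.0
        vx += pull * tx / dist  # guidance: bend the heading toward the attractor
        vy += pull * ty / dist
        norm = math.hypot(vx, vy) or 1.0
        vx, vy = vx / norm * speed, vy / norm * speed
        x, y = push_out((x + vx, y + vy), obstacle, radius)  # avoidance
        trail.append((x, y))
    return trail


# A circular exclusion zone stands in for the space reserved for a door.
door_centre, door_radius = (10.0, 0.5), 3.0
trail = guided_trail(start=(0.0, 0.0), attractor=(20.0, 0.0),
                     obstacle=door_centre, radius=door_radius)
```

Because every new position is projected out of the exclusion zone, no point of the trail falls inside it, while the attractor keeps bending the path towards the part of the wall where the designer wants the pattern to concentrate.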

Each solution represents the outcome of the same process, which could not be handled without relying on computational design strategies and tools.

5. Conclusions

According to Tedeschi and Lombardi, “Roughly speaking, the algorithmic modeling allows designers to create complex shapes by the computer without clicking the mouse, without any manipulation of digital forms. The designer creates a diagram (by a specific software) which embeds a set of procedures and instructions that ‘generate’ the form: the shape is not actually ‘modeled’, but ‘emerges’ from a series of instructions.” [11]. Likewise, Bruce Mau states: “Process is more important than outcome. When the outcome drives the process, we will only ever go to where we’ve already been. If process drives outcome we may not know where we’re going, but we will know we want to be there.” [12]. Both statements make it evident that the new paradigm in architecture and design is process-driven rather than drawing-based.

The need to embed forces, elements and behaviours that aim to replicate real-world conditions pushes the discipline to rethink the role of representation as well as that of the designer. This change is further underlined by the new function that the building industry is taking on, leaving the usual position of “building makers” to embrace new ways of virtualizing and predicting how buildings and cities will behave and develop within a given lapse of time. Further, creating diagrams rather than objects helps designers combine the medieval idea of variations [7] with the potential of contemporary computers and digital fabrication machines.

The concept of simulation, which in recent years has been widely replacing the need to represent architecture and design, together with the possibility of digitally producing and customizing specific parts of a building, such as facades and walls, is leading this field into a new era of responsiveness and interaction between users and the built environment. With the goal of evoking emotions through technology, architecture is going to be transformed from a discipline that produces motionless large-scale objects into a field that dynamically responds to external stimuli and reacts by adapting itself.

Within this frame, designers’ ideas are no longer expressed by lines, surfaces and volumes but rather by groups of data and information that run across different platforms, gathering at the same time the artistic and technical aspects of the project. The dialogue between the designer and the machine, based today on scripting and visual scripting software, will probably develop further with the implementation of virtual reality and of instruments that translate brain waves into data, thanks to brain-computer interfaces derived from medical technology, further reducing the existing physical distance between the idea that arises in the human mind and its hand-made representation.

As a consequence, and foreseeing the development of an increasingly automated building process entrusted to robotic arms and devices, it is possible to predict that the importance of drawings as a medium for designing and controlling the production stage will decrease in the coming years. Even technical drawings such as plans and sections could probably be entirely replaced by videos, to be presented to customers, and by files that simulate either 3D printing steps or any other kind of futuristic building technique, to be controlled by designers and contractors.

This paper has presented a first study of how algorithmic strategies and agent-based techniques are affecting the design process and the creation of images. The first application was developed as part of the master’s degree thesis discussed at the “G. d’Annunzio” University (Italy) by Mario Luca Barracchia (supervisor, Prof. Livio Sacchi; co-supervisor, Dr. Davide Lombardi) and investigated the implications of applying agent-based modelling at the urban scale. The second application is part of ongoing speculative research conducted to explore the potential of algorithmic modelling and high-resolution printing techniques. Both applications show the potential of these tools when applied at a two-dimensional level. Further studies will be conducted to explore the feasibility of three-dimensional applications starting from the framework described above.

References

1. Garcia, M. Emerging technologies and drawings: The futures of images in architectural design. Archit. Design 2013, 83, 28–35.

2. Papert, S.; Solomon, C. Twenty Things to Do with a Computer; Artificial Intelligence Laboratory, Memo No. 248; MIT: Cambridge, MA, USA, 1971.

3. Carpo, M. The Digital Turn in Architecture 1992–2012; John Wiley & Sons: Hoboken, NJ, USA, 2013.

4. Eisenman, P. Architecture after the age of printing (1992). In The Digital Turn in Architecture 1992–2012; John Wiley & Sons: Hoboken, NJ, USA, 2013; pp. 15–27.

5. Eisenman, P. Architecture after the age of printing (1992). In The Digital Turn in Architecture 1992–2012; John Wiley & Sons: Hoboken, NJ, USA, 2013; pp. 15–27.

6. Scheer, D.R. The Death of Drawing: Architecture in the Age of Simulation; Routledge: Abingdon-on-Thames, UK, 2014.

7. Carpo, M. Parametric notations: The birth of the non-standard. Archit. Design 2016, 86, 24–29.

8. Wirz, F. Foreword. In AAD_Algorithms-Aided Design: Parametric Strategies Using Grasshopper; Le Penseur Publisher: Brienza, Italy, 2014; pp. 9–11.

9. Ashby, W.R. Principles of the self-organizing dynamic system. J. Gen. Psychol. 1947, 37, 125–128.

10. Ashby, W.R. Principles of the self-organizing system. In Principles of Self-Organization; von Foerster, H., Zopf, G.W., Jr., Eds.; U.S. Office of Naval Research: Arlington, VA, USA, 1962; pp. 255–278.

11. Tedeschi, A.; Lombardi, D. The algorithm-aided design (AAD). In Informed Architecture: Computational Strategies in Architectural Design; Springer: Berlin, Germany, 2017; pp. 33–38.

12. Mau, B. An Incomplete Manifesto for Growth. Available online: www.manifestoproject.it/bruce-mau/ (accessed on 10 September 2017).

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2017 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).