1. Introduction

While the majority of top-selling video games in the past featured violence as the main action, games built around physical activity, often called exergames, have recently become popular. Exergames encourage users to be physically active and to exercise while playing the video game. They train people in a fun and competitive manner, which also promotes a healthy lifestyle. Recently, exergames have attracted public attention, especially for children who exercise very little and for the elderly [1,2,3]. An early and widely known exergame was Konami’s DDR (Dance Dance Revolution), where people could learn popular dance moves by simply stepping on a sensor platform. In 2006, Nintendo released the Wii console with its Wii Remote controller, and titles such as Wii Sports and Wii Fit allowed for a full-body exergaming experience. Wii Fit translates traditional forms of exercise such as yoga into an interactive format. Wii Sports allows the average person to experience a variety of sports, such as tennis, bowling, and golf.

On the heels of the Wii’s immense popularity, Microsoft introduced the Kinect sensor for its Xbox console in 2010. The Kinect sensor tracks users’ movements without the need for additional gear, allowing users to freely enjoy the game. The success of these platforms has increased interest in motion-based full-body exergames. Xbox’s Nike Kinect training game, which includes lower body exercises, is a high-impact game requiring users to move and shift their weight to perform the exercises. Because Kinect sensors track users’ movements, users must, unlike in Wii Sports, assume the proper body poses in order to do the exercises. A study showed that community-dwelling senior citizens improved their cognitive performance after a 12-week intervention of dual-task full-body motion game training using Kinect [4]. Another recent study examined whether physical exercise training using Wii Fit Plus, combined with protein supplementation, can improve musculoskeletal function and reduce the risk of falls in pre-frail older women [5].

In the elderly population, a decrease in muscle endurance leads to an overall decrease in physical activity, which puts this age group at risk of various diseases and of injuries such as those caused by falls [6]. Rapid population aging in many countries will also affect many aspects of public life. For example, health care systems will need to treat more brain diseases, such as dementia. As such, studies are underway on how virtual reality games and automatic coaching systems may help the elderly [7,8]. Others have introduced virtual reality games that use a natural user interface (NUI) such as Kinect to let the user carry out physical and cognitive rehabilitation therapies [9,10]. A study demonstrated that Kinect-based exergaming was as effective as combined exercise (composed of resistance, aerobic, and balance exercises) in improving the frailty status and physical performance of the elderly [11]. More Kinect-based rehabilitation systems are being developed to support physiotherapy sessions and to help reduce health care costs while improving quality of life [12,13]. These studies are looking into how NUI-based rehabilitation exergames can be used to improve or replace existing physical therapy programs.

In previous studies, new digital technologies, such as virtual reality and video games, have been used to promote users’ engagement with rehabilitation [14]. These technologies are effective in that they allow patients and clinicians to carry out rehabilitation even when they are not in the same place. In this research, we present the Chongchong Step Master virtual reality exercise game, which is designed to improve gait and balance function and to help prevent dementia in the elderly. We aim to use this system to encourage elderly people to actively engage in exercise training, such as walking and moderate-intensity aerobics-type activities.

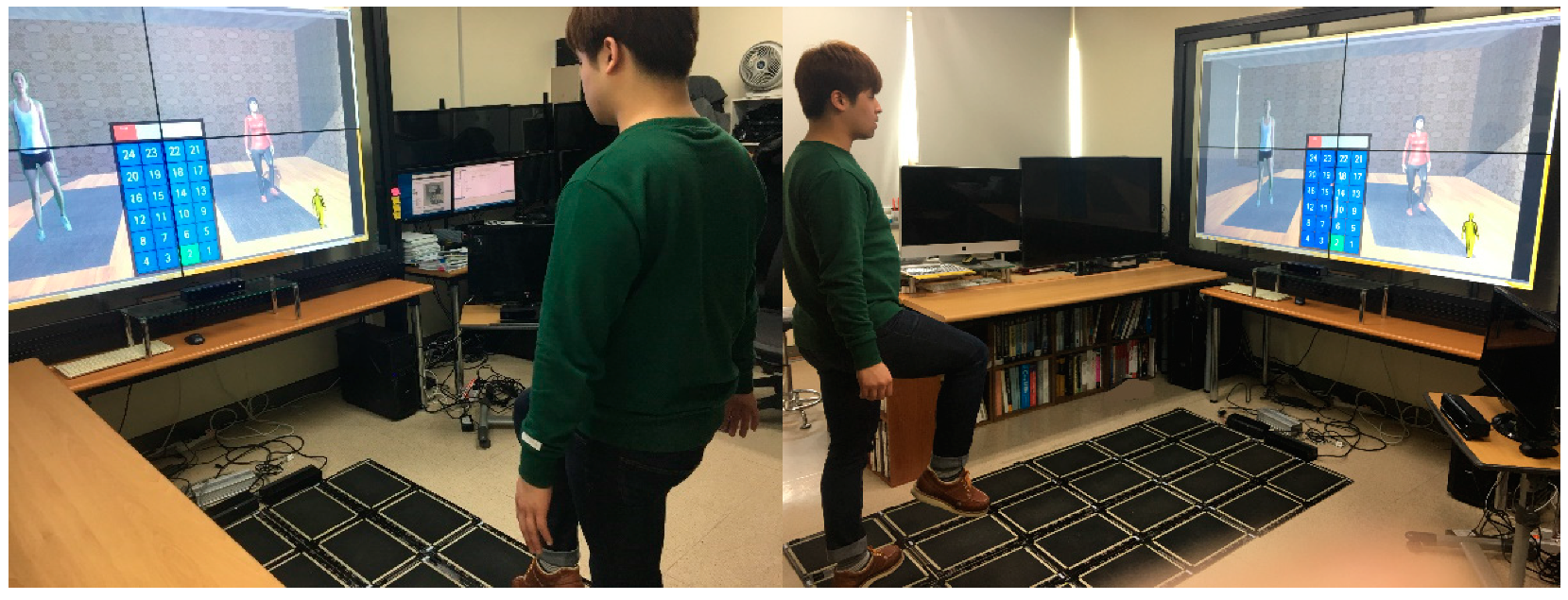

Figure 1 shows a user taking an exercise posture under the guidance of the game system. As shown in Figure 1, the user engages in exercise training under the guidance of the virtual trainer, who indicates where to step on the board and how to take each exercise posture. The system recognizes walking as well as upper and lower body exercise movements using multiple Kinects and the stepping board. The stepping board is used to accurately identify walking movements, and the Kinect-based exercise pose estimation system was developed to distinguish between arm and leg exercise postures. In this system, multiple Kinect sensors are positioned, one in front of the user and one on the right side, to better determine and track the user’s exercise motions. The Kinect-based exercise posture recognition model is specified in XML-based scripts so that the exercise programs can be compared with real-time user tracking data.

This paper is structured as follows. Section 2 reviews the related work on exergames and rehabilitation systems. Section 3 presents a brief overview of the Chongchong Step Master virtual reality physical exercise game and the design and implementation details of this gaming system. Section 4 presents preliminary evaluations of the exercise pose estimation system. Section 5 then provides conclusions and discusses directions for future research.

2. Related Work

Walking is a complex process involving brain control (motor), cognition, perception, and process integration. Walking ability is a useful diagnostic factor that can predict the decline of functional abilities in elderly people. Usually, walking exercises aim to improve and maintain basic body fitness. However, the elderly with cognitive impairment walk more slowly while performing dual tasks, and their decreased memory and attention contribute to this slower walking speed. Recently, various virtual reality technologies and serious games have been developed for rehabilitation. These systems aim to supplement or replace existing rehabilitation treatment performed by a physical therapist or exercise expert.

While walking is commonly performed at a basic level, various types of walking exercise programs are needed to improve balance, cognitive function, memory, and attention. Exercise program technology can also be used in programs for the prevention and improvement of dementia and for balance awareness in the elderly, although experimental evaluation and verification of such programs is required. A walking program can include game elements that keep it fun: dropout prevention, motivation, and step-by-step difficulty control. Such a system requires computer vision-based gesture recognition that can accurately recognize the user’s movement behavior (that is, posture and balance state).

While there are many available exergame products, they are mostly targeted at a younger population, and are not appropriate or engaging for an older population. An automated interactive exercise coaching system using Kinect was developed to provide a more engaging environment, which promotes physical activity for the elderly population [7]. The coaching system guided users through a series of video exercises, tracked and measured user movements, provided real-time feedback, and recorded their performance over time. This system provided exercises to improve balance, flexibility, strength, and endurance, with the aim of reducing fall risk and improving the performance of daily activities. However, this system mainly focused on the movement of the user getting up from a sitting position and then sitting back down on a chair. It did not support various exercise postures.

A Kinect-based real-time posture correction game was developed to help the elderly adopt proper posture while promoting the user’s physical activity [8]. This system detected changes in user posture in real time from the Kinect skeleton data. The DTW (Dynamic Time Warping) algorithm was used to detect differences between the original correct posture and the user’s current posture. The recognition module was used to acquire the user’s image before the game started, and to hold all postures from the initial correct posture (where the user stood correctly upright) to the incorrect posture (where the user’s back tilted more than 20 degrees). In the posture correction module, nine of the Kinect’s 25 skeleton body joints were used to construct the DTW feature vectors to determine a bad waist posture. However, the main aim of this system was to detect incorrect posture, rather than to promote physical exercise.

The ReaKinG virtual reality rehabilitation platform using the Kinect V2 sensor was developed for physical and cognitive rehabilitation therapies [9]. It was designed to support the prevention and rehabilitation of patients in areas such as strength and aerobic or cognitive capacity. This platform includes two different kinds of exergames. One game trained aerobic capabilities by making the patients walk in front of the Kinect through different landscapes and collect coins on the ground or in the air while walking. The other game was designed to improve strength by practicing exercises such as flexion of the shoulders and extension of the elbows. However, this platform provided only simple movements of particular body parts, such as the abduction/flexion/extension of shoulders, elbows, and knees, while playing the game in a virtual environment.

A game-based rehabilitation tool was developed using the Kinect sensor for the balance training of adults [12]. In the prototype of this game, the player could travel through a mine, collect gems, and place them in a cart. The gems were placed at a distance tailored to the player’s level of ability, ensuring that the player performed motions for rehabilitation. The game was developed specifically to train reaching and weight shift in order to improve balance in patients. However, this prototype supported only upper body motions. Similar to [12], a prototype of a virtual reality-based game consisting of an integrated hardware and software platform using the Kinect sensor was developed for upper body exercise and rehabilitation [13]. In this system, the exercise protocol was adopted from a shoulder exercise program for individuals with spinal cord injury.

As described above, commercial video exergames have been widely used for rehabilitation, and virtual reality-based games using Kinect have been developed for the same purpose. However, most of them provided simple movements, such as navigating the virtual environment, collecting gems/coins, or specific body part exercises. There was no series of exercise programs for physical and cognitive rehabilitation of the elderly. A key component of our approach is the use of both Kinect sensors and a stepping board mat system, which provides the controlled, focused exercise required for physical and cognitive therapy. Our system was specially designed to facilitate walking and aerobic exercises for the elderly and improve their health.

3. Design and Implementation

3.1. System Overview

The Chongchong Step Master Exergame system allows a user to work out at various levels (beginner, intermediate, and advanced) without the need for a physical trainer, and provides guidelines to assist the user with the successive exercise movements. A “Chongchong” step means a “short and quick” or “swift”, cheerful walking step.

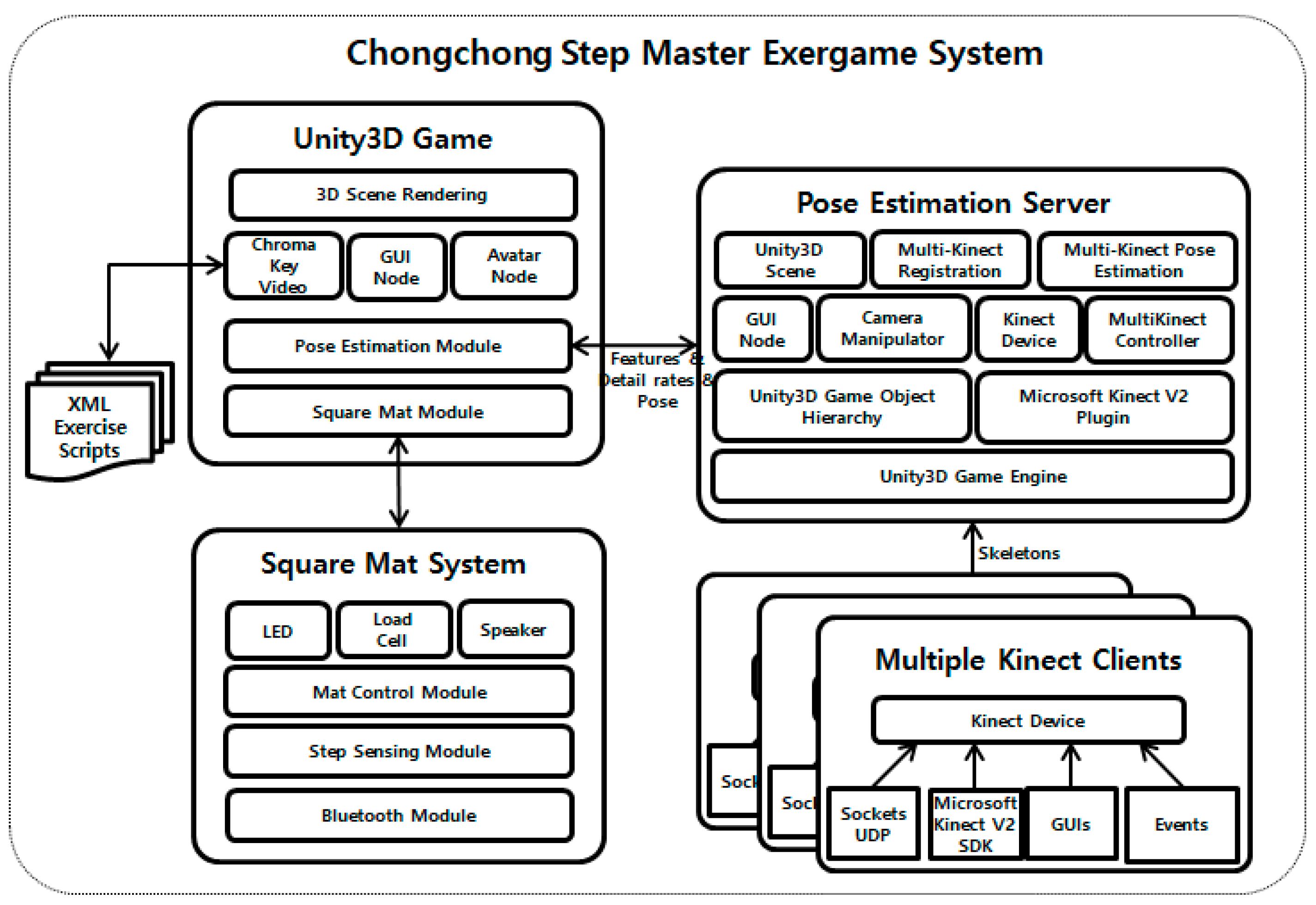

Figure 2 shows the overall system architecture of the Chongchong Step Master Exergame. This system consists of a square mat system, an exercise pose estimation system using multiple Kinect sensors, and an integrated Unity3D exercise game system. The square mat system (in the stepping board) recognizes the user’s step information in order to accurately read the user’s walking movements. The Kinect-based pose estimation system gauges the arm and leg exercise movements (left, right) by analyzing user movement data (i.e., body joints) in real time. The integrated Unity3D exercise game receives the step monitoring and exercise posture recognition results, presents the user’s virtual avatar alongside a professional guide who demonstrates the correct exercise posture, displays the user’s posture, and determines whether that posture is correct.

3.2. Exercise Program

The physical exercise programs were developed by clinical exercise physiologists, and each workout movement sequence was scripted in XML format to be used in the game. This script is used to analyze users’ exercise postures and compare them to actual workout postures (walking and arm/leg movements). There are a total of 60 walking exercise programs (4 types × 3 levels × 5 stages).
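The script format itself is not shown in this paper, so the following is only a minimal sketch of how such a script could be loaded. The XML layout, the element and attribute names (`program`, `movement`, `feature`, `ref`, `range`), and all numeric values are hypothetical placeholders chosen for illustration, not the authors' schema.

```python
import xml.etree.ElementTree as ET

# Hypothetical script layout: names and values are illustrative assumptions.
SCRIPT = """
<program type="dual_task" level="beginner" stage="1">
  <movement step="1" cell="9" posture="BSU">
    <feature name="SLA" ref="170" range="20"/>
    <feature name="SRA" ref="170" range="20"/>
  </movement>
  <movement step="2" cell="10" posture="BSO">
    <feature name="ELA" ref="175" range="15"/>
  </movement>
</program>
"""

def load_program(xml_text):
    """Parse a script into its header attributes and a list of movements."""
    root = ET.fromstring(xml_text)
    movements = []
    for mv in root.findall("movement"):
        # Each feature maps to a (reference value, allowed range) pair.
        features = {
            f.get("name"): (float(f.get("ref")), float(f.get("range")))
            for f in mv.findall("feature")
        }
        movements.append({
            "step": int(mv.get("step")),
            "cell": int(mv.get("cell")),
            "posture": mv.get("posture"),
            "features": features,
        })
    return dict(root.attrib), movements

meta, movements = load_program(SCRIPT)
```

Keeping the reference value and its range together per feature mirrors the way the similarity computation in Section 3.4.3 is described, where each feature has a reference value and a range.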

The exercise programs are vital walking, balance walking (for enhancing a sense of balance), dual task (for enhancing cognition), and visual-cognition walking. The vital walking program asks the user to perform a simple forward walking movement with a regular step rhythm. Brain activity is induced during vital walking by increasing the difficulty level. The balance walking program asks the user to perform knee ups or squats in place along with walking, in order to strengthen the muscles used to maintain a sense of balance. The dual task program asks the user to perform arm movements simultaneously with walking steps. This can trigger brain activity and have a positive effect on cognition. The visual-cognition program asks the user to walk after watching, on the screen and the square mat, the step path that he/she should follow.

Each exercise program has 3 different levels: beginner, intermediate, and advanced. In the beginner level, the user takes 10 steps while performing arm movements (both arms up, both arms spread out in the air, arms at the side, repeat). In the intermediate level, the exercise movements are a bit more complex (arms to the side, lift the knees, knee curls, kick leg out to the side, repeat) while taking a total of 10 steps. In the advanced level, the repetitive movements are a bit more difficult (arms to the side, arms spread out, and arms up in the air, lunge, and squat). Each difficulty level has 5 stages. The difficulty level and stage are gradually increased by changing the step sequence, location, and direction on the square mat.
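As a quick check of the program count, the 4 types, 3 levels, and 5 stages can be enumerated as a Cartesian product. This is a small illustrative sketch; the identifier strings are assumptions made for the example.

```python
from itertools import product

# Program types, levels, and stages as described in the text.
# The identifier spellings are assumptions for illustration.
TYPES = ["vital_walking", "balance_walking", "dual_task", "visual_cognition_walking"]
LEVELS = ["beginner", "intermediate", "advanced"]
STAGES = range(1, 6)

programs = [
    {"type": t, "level": lv, "stage": s}
    for t, lv, s in product(TYPES, LEVELS, STAGES)
]

# 4 types x 3 levels x 5 stages
print(len(programs))
```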

3.3. Square Mat System

Figure 3 shows the square mat system, a 4 × 6 stepping board consisting of control, sensing, and communication modules. The size of each compartment is 282.3 × 349 × 3 mm³. Each compartment has its own address value, set by a switch. The control module manages 3 zones of 8 compartments each, with every cell configured independently. It monitors the ADC (analog-to-digital converter) signal of the load cells in real time and transmits the data to the communication module. The sensing module is equipped with an FSR (force sensing resistor) sensor that can distinguish the pressure input in each cell, and measures the user’s weight when the user steps on the FSR sensor. The sensing module also has a built-in interface to control the LED (light emitting diode) that indicates the reaction status. The communication module connects the PC and the MCU (micro controller unit). It communicates using the Bluetooth serial port profile.
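The addressing and step detection described above might look roughly like the following sketch. The row-major address order, the assignment of eight consecutive addresses to a zone, and the ADC threshold are all assumptions made for illustration, since the paper does not specify them.

```python
ROWS, COLS = 4, 6        # 4 x 6 board from the text; the orientation is an assumption
CELLS_PER_ZONE = 8       # 3 zones of 8 compartments
PRESS_THRESHOLD = 300    # hypothetical ADC count treated as "stepped on"

def cell_info(address):
    """Map a cell address (0..23, assumed row-major) to its zone, row, and column."""
    if not 0 <= address < ROWS * COLS:
        raise ValueError("address out of range")
    return {
        "zone": address // CELLS_PER_ZONE,
        "row": address // COLS,
        "col": address % COLS,
    }

def pressed_cells(adc_readings, threshold=PRESS_THRESHOLD):
    """Return the addresses whose ADC reading exceeds the threshold."""
    return [addr for addr, value in enumerate(adc_readings) if value > threshold]

# Example: only the cell at address 13 is loaded.
readings = [0] * (ROWS * COLS)
readings[13] = 512
```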

3.4. Exercise Pose Estimation System

With an increasing number of tele-rehabilitation systems and virtual reality therapy exergames, human motion recognition and estimation is becoming more important. It is the core technology of these systems, which automatically evaluate the user’s exercises by recognizing the user’s movements [15,16].

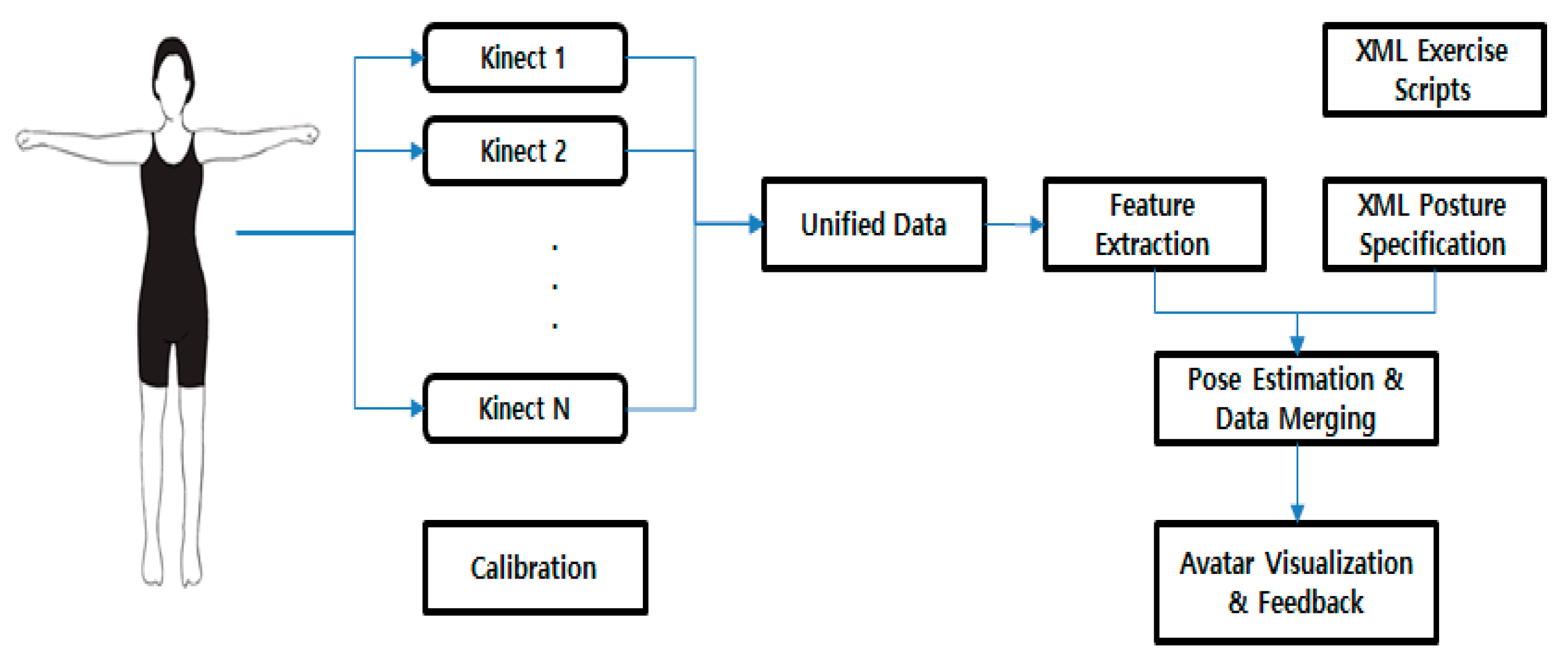

Figure 4 shows the overall process of the multiple Kinect-based physical exercise pose estimation system used in the Chongchong Step Master Exergame. The user’s motion is captured with multiple Kinects. First, calibration is applied to match the coordinates among the sensors placed in different locations. Then, feature extraction from the user motion is performed to define the Kinect-based posture recognition model. This model is used to compare postures between characters of different body sizes. Then, the exercise posture is estimated to determine whether the user is doing the workouts correctly, as defined in the exercise scripts. The captured user posture is compared in real time with the XML posture specification, and the comparison results for the important body parts are displayed to the user.

This pose estimation system is composed of the client program, to which the Kinect sensors are attached, and the server program, which is connected to the Unity3D game contents. The system is based on a client/server architecture to overcome the USB bandwidth issues of the Kinect V2 SDK, which made it difficult to connect more than two Kinect sensors to one computer. Hence, the user body frame skeleton information received from each Kinect device is sent to the server. A server module in the Unity3D game receives body skeleton data from multiple clients and then estimates the body exercise movements. In this research, we combined multiple Kinect sensors to determine more complex body postures that are not easily tracked by one Kinect sensor, such as those involving self-occlusion by other body parts.
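The wire format between the clients and the server is not described in the paper. As a rough sketch only, a client could serialize each skeleton frame as one line of JSON, as below; the field names (`sensor`, `t`, `joints`) and the tracking-state strings are assumptions for illustration.

```python
import json

def encode_frame(sensor_id, timestamp_ms, joints):
    """Serialize one skeleton frame.

    `joints` maps a joint name to (x, y, z, tracking_state).
    The message layout is a hypothetical example, not the authors' protocol.
    """
    payload = {
        "sensor": sensor_id,
        "t": timestamp_ms,
        "joints": {name: list(values) for name, values in joints.items()},
    }
    # One JSON document per line keeps frames easy to split on a byte stream.
    return (json.dumps(payload) + "\n").encode("utf-8")

def decode_frame(data):
    """Inverse of encode_frame, as the server side would apply it."""
    payload = json.loads(data.decode("utf-8"))
    joints = {name: tuple(values) for name, values in payload["joints"].items()}
    return payload["sensor"], payload["t"], joints
```

Tagging every frame with a sensor identifier lets the server keep the front and side skeletons apart until they are merged.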

3.4.1. Calibration

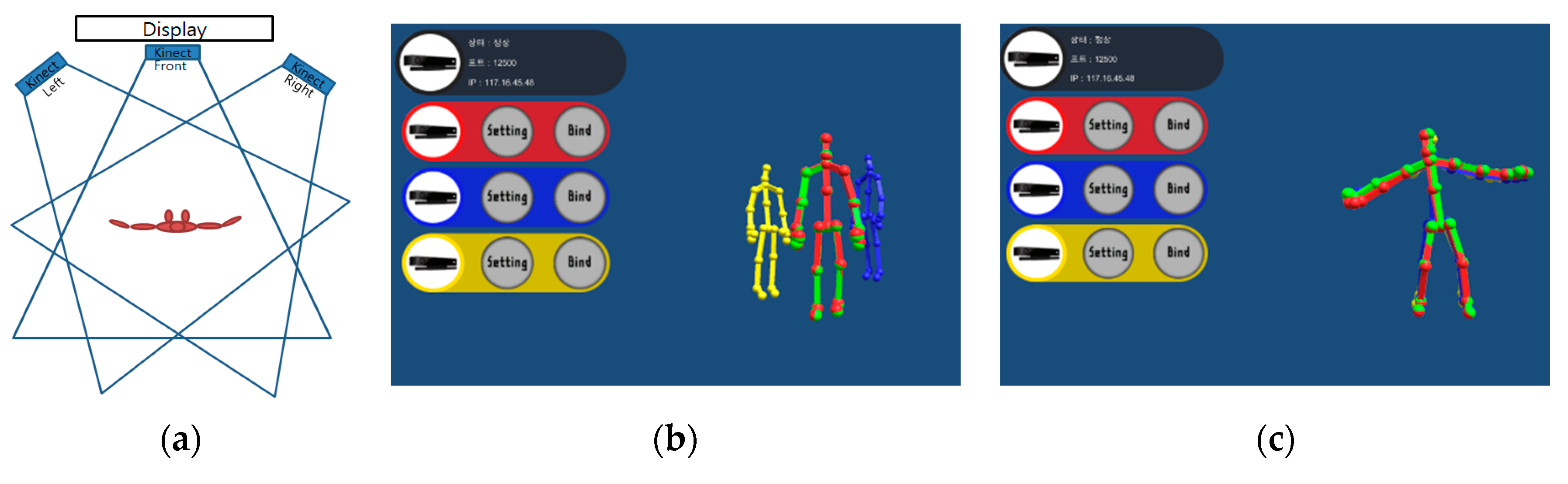

Figure 5 shows the placement layout of the multiple Kinects (a) and the state before (b) and after (c) the calibration of the multiple Kinects (at the front and on the left and right sides). When using multiple cameras, each camera (at the front or on the sides) has its own coordinate system, and hence the coordinate systems of the Kinects on the sides must be calibrated so that they can be converted into the reference coordinate system of the front Kinect. Typically, a large planar rectangle [17] or spherical ball [18] is used for the calibration. In this research, however, the Kinect’s skeletal data are used directly. First, the user stands facing a direction at an angle of about 45 degrees, so that all body joints (25 joints) can be captured by the multiple Kinects. Then, the user’s movements over the next 2–3 s are recorded while all body joints are well tracked. The user’s skeletal data are then saved for each Kinect.

Then, the ICP (iterative closest point) [19] algorithm is applied to the recorded skeletal data to calculate the extrinsic parameters between the reference camera at the front and the side camera. This information is used to correct the relative location and direction of the Kinects with respect to each other. The ICP algorithm is used in many applications to register sets of 2D or 3D points based on Euclidean distance. It repeatedly updates the correspondence between points and estimates the geometric transformation (i.e., rotation and translation) between the two point sets. Equations (1) and (2) are used to register two sets of 3D points.

In Equations (1) and (2), given the two point sets X = {xi}, i = 1, …, M and Y = {yj}, j = 1, …, N, the closest point in Y, with index j*, is the optimal correspondence for a point of set X. The calibration process yields the transformation (rotation matrix R and translation vector t). This process is repeated for each pair of front and side Kinects. That is, the coordinate systems of both the left and right Kinects need to be matched against the reference coordinate system, i.e., that of the front Kinect.
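The two ICP steps can be sketched as follows. This is a generic textbook formulation, not necessarily the exact form of Equations (1) and (2): the correspondence step picks, for each x_i, the index j* that minimizes ‖x_i − y_j‖, and the alignment step finds the R and t that minimize the sum of ‖R x_i + t − y_j*‖². The SVD-based (Kabsch) solution for the alignment step and the fixed iteration count are assumptions of this sketch.

```python
import numpy as np

def best_rigid_transform(X, Y):
    """Least-squares R, t such that R @ x_i + t approximates y_i (SVD/Kabsch method)."""
    cx, cy = X.mean(axis=0), Y.mean(axis=0)
    H = (X - cx).T @ (Y - cy)                  # 3 x 3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))     # guard against a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = cy - R @ cx
    return R, t

def icp(X, Y, iterations=20):
    """Align side-sensor points X (M x 3) to reference points Y (N x 3)."""
    R_total, t_total = np.eye(3), np.zeros(3)
    X_cur = np.asarray(X, dtype=float).copy()
    Y = np.asarray(Y, dtype=float)
    for _ in range(iterations):
        # Correspondence step: nearest y_j for every current x_i.
        dists = np.linalg.norm(X_cur[:, None, :] - Y[None, :, :], axis=2)
        j_star = dists.argmin(axis=1)
        # Alignment step: best rigid motion for the current pairs.
        R, t = best_rigid_transform(X_cur, Y[j_star])
        X_cur = X_cur @ R.T + t
        # Accumulate so that R_total @ x + t_total maps the original x.
        R_total, t_total = R @ R_total, R @ t_total + t
    return R_total, t_total
```

Because Kinect skeleton joints are labeled, the correspondences could also be taken directly from the joint names, in which case a single call to `best_rigid_transform` would suffice; the nearest-neighbor search is kept here to follow the ICP description in the text.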

3.4.2. Feature Extraction

Table 1 shows the 25 features constructed for the Kinect-based exercise posture recognition model. This generalized model is needed for comparing postures across different body sizes (such as tall or heavy builds) without altering the user motion data. Fourteen body joints (of the 25 joints offered by the Kinect 2.0 SDK) are used in the Kinect-based exercise posture recognition model: Hand Left (HL), Hand Right (HR), Elbow Left (EL), Elbow Right (ER), Shoulder Left (SL), Shoulder Right (SR), Ankle Left (AL), Ankle Right (AR), Knee Left (KL), Knee Right (KR), Hip Left (PL), Hip Right (PR), Spine Shoulder (SS), and Spine Base (SB) [20]. These body joints are used to generate the feature data that characterize each exercise posture and to judge the user’s body posture in real time.

Since the spatial information (X, Y, Z) for the body skeleton joints is sensitive to movement and rotation, feature vectors were created, and the angles between these vectors were calculated to determine the features representing each exercise body posture. In order to obtain angle information, the vectors were created at the left shoulder-right shoulder, right shoulder-right elbow, right elbow-right hand, right shoulder-left shoulder, left shoulder-left elbow, left elbow-left hand, right pelvis-right knee, right knee-right foot, left pelvis-left knee, and left knee-left foot, to calculate the angles of the shoulder, elbow, and knee. This allowed us to obtain the degree of bend in the arm and leg. In addition, the right shoulder-right hand, left shoulder-left hand, right pelvis-right foot, left pelvis-left foot, right knee-right foot, and left knee-left foot vector angles were calculated against the front, up, down, and 45 degree side vector, to determine the direction of the arms and legs.

The angles between vectors were calculated using Equation (3). In this equation, θ represents the angle between vectors u and v in 3D space. Here, u and v represent the vectors of paired joints (for example, between the shoulder and elbow or between the elbow and hand) or the front, up, and down vectors. In this way, this method uses the angular position of the torso, left/right arm, and left/right leg, and the distance between both hands, feet, and knees. The distance between two points x and y in 3D space was calculated using Equation (4).
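A minimal sketch of these two computations follows, assuming the standard definitions θ = arccos(u·v / (‖u‖‖v‖)) for the angle and the Euclidean norm ‖x − y‖ for the distance, which is presumably what Equations (3) and (4) express. The helper `joint_angle` and the sample coordinates are illustrative additions.

```python
import numpy as np

def angle_between(u, v):
    """Angle in degrees between 3D vectors u and v."""
    u, v = np.asarray(u, dtype=float), np.asarray(v, dtype=float)
    cos_theta = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
    # Clip to absorb rounding error just outside [-1, 1].
    return float(np.degrees(np.arccos(np.clip(cos_theta, -1.0, 1.0))))

def distance(x, y):
    """Euclidean distance between two 3D points."""
    return float(np.linalg.norm(np.asarray(x, dtype=float) - np.asarray(y, dtype=float)))

def joint_angle(a, b, c):
    """Angle at joint b between segments b->a and b->c (e.g., shoulder, elbow, hand)."""
    a, b, c = (np.asarray(p, dtype=float) for p in (a, b, c))
    return angle_between(a - b, c - b)

# Example with made-up coordinates: a fully extended arm along the x axis.
shoulder, elbow, hand = (0.0, 1.4, 2.0), (0.3, 1.4, 2.0), (0.6, 1.4, 2.0)
elbow_angle = joint_angle(shoulder, elbow, hand)
```

Angles between segment vectors do not change when the whole skeleton is translated or rotated and do not depend on limb length, which is why they suit comparison across users of different body sizes.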

3.4.3. Exercise Pose Estimation

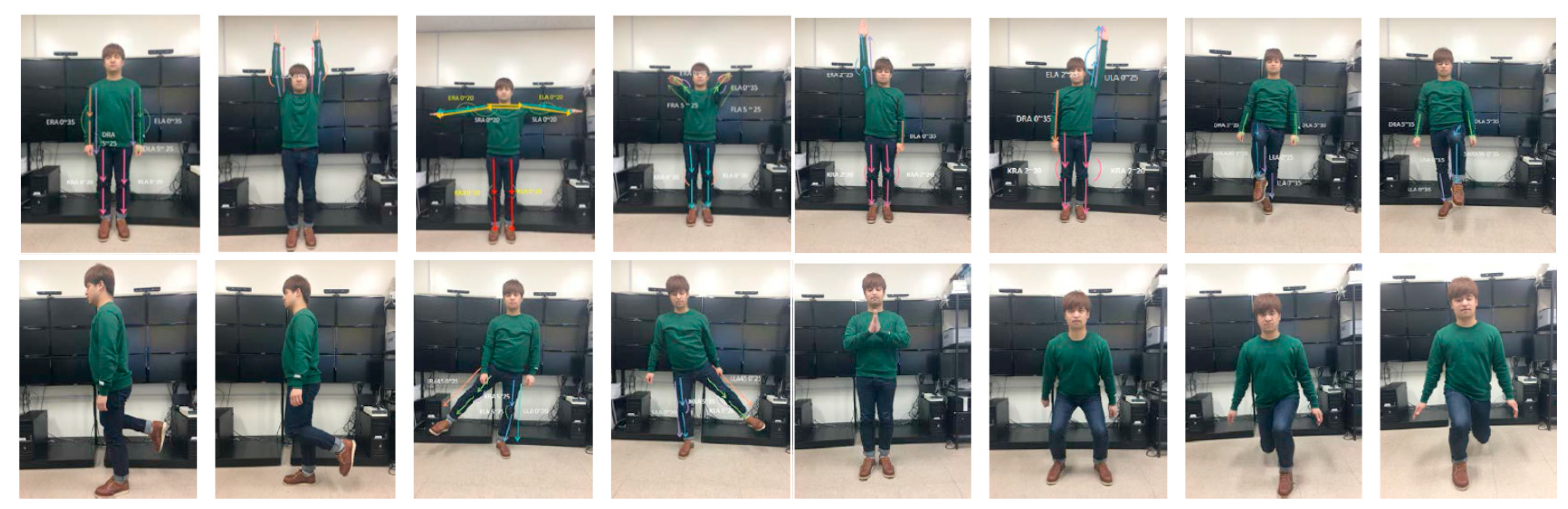

Figure 6 shows the sixteen postures used in the Chongchong Step Master Exergame: BSD (both hands down), BSU (both hands up in the air), BSO (both arms spread out), BSF (both arms stretched out in front), RHU (right hand up), LHU (left hand up), RKU (right knee up), LKU (left knee up), RKC (right leg curled backwards), LKC (left leg curled backwards), RLAB (right leg kicked out to the side), LLAB (left leg kicked out to the side), BHC (clap), SQUAT (squat), RLUNGE (right leg forward lunge), LLUNGE (left leg forward lunge).

The proper exercise form, Pi, is represented as Equation (5), using the angles representing the body, left/right arm, and left/right leg, and the distance between both hands and feet. A description of each feature is given in Table 1. These angle and length features are used to analyze and distinguish between the different exercise body postures. For example, SSA indicates how upright the spine is: it is the angle between the spine vector, defined by SS (Spine Shoulder) and SB (Spine Base), and the up vector. ELA (Elbow Left Angle) and ERA (Elbow Right Angle) show how far the left and right elbows have been extended.

Table 2 shows the specific features used for estimating each posture. In order to estimate the user’s exercise postures, n specific features are chosen from the total of 25 features for each workout form. For example, when both arms are spread out (BSO), we must make sure that the angles of both shoulders (SLA, SRA), both elbows (ELA, ERA), and both knees (KLA, KRA) are all close to parallel. In this research, the real-time exercise postures of the user read through the Kinect sensors are compared to the posture specifications described in the scripts of the exercise programs. Equation (6) is used to calculate the similarity score between the real-time postures and the scripts of the exercise program.

For each body posture, n features are used. Si refers to the reference feature value as defined by the exercise script, Pi is the corresponding feature value of the user’s real-time exercise posture, and Ri is the range of the reference feature. An exponentially interpolated value between 100 and 70 is derived from the actual value (i.e., angle or distance) to calculate the pose similarity rate for one exercise posture. Each exercise program has a different number of exercise movement scripts, so the average pose similarity rate over all movements is calculated and used as the overall score.
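One way such a score could behave is sketched below. The exact form of Equation (6) is not reproduced in this text, so the specific rule here is an assumption: a feature scores 100 when the measured value equals the reference, decays exponentially to 70 when the deviation reaches the range R, and scores 0 beyond it. The function names are hypothetical.

```python
def feature_score(p, s, r, top=100.0, bottom=70.0):
    """Score one feature: `top` at zero deviation, `bottom` at deviation r, 0 beyond r.

    The decay rule is an assumed reading of "exponentially interpolated
    value between 100 and 70"; r must be positive.
    """
    deviation = abs(p - s)
    if deviation > r:
        return 0.0
    return top * (bottom / top) ** (deviation / r)

def pose_similarity(measured, reference):
    """Average feature score; `reference` maps feature name -> (s, r)."""
    scores = [feature_score(measured[name], s, r) for name, (s, r) in reference.items()]
    return sum(scores) / len(scores)

def program_score(pose_scores):
    """Overall score: mean pose similarity over all movements in a program."""
    return sum(pose_scores) / len(pose_scores)
```

For example, with a reference elbow angle of 170 degrees and a range of 20 degrees, a measured 170 would score 100 and a measured 190 would score 70 under this assumed rule.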

3.4.4. Data Merging

The Kinect sensor tracks user movement and, when a movement is too fast or is hidden from view, infers the joint positions by referencing the previous frame. The Kinect SDK reports for each body joint whether it is tracked, inferred from neighboring parts, or not tracked because it is completely out of view. When we analyzed the Kinect tracking data, we saw that, in many instances, body joints were inferred rather than directly tracked. For this reason, if only one Kinect sensor is used, body movements that are too fast to be read or are hidden from view result in inaccurate detection of the body joints.

However, if multiple Kinect sensors are used, a more accurate reading of the body movement is possible. By placing Kinect sensors around the user, a movement that cannot be seen from the front can be picked up by the sensors at the left or right sides. By merging the data from multiple Kinects, the final score is calculated for each feature value specific to the particular body posture, with a different weight for each Kinect input device. In Equation (7), each of the m Kinects is given a weight, w, for each exercise posture feature to estimate the final score, where the weights across the Kinects sum to 1.
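The weighted merge can be sketched as a normalized weighted sum of the per-Kinect scores for one feature. How the weights are chosen is not stated in the text, so `weights_from_tracking` and its confidence values are hypothetical: they simply favor sensors that track a joint directly over sensors that only infer it.

```python
# Assumed confidence per tracking state; these values are illustrative only.
STATE_CONFIDENCE = {"tracked": 1.0, "inferred": 0.5, "not_tracked": 0.0}

def weights_from_tracking(states):
    """Turn per-Kinect tracking states into weights that sum to 1 (hypothetical rule)."""
    raw = [STATE_CONFIDENCE[s] for s in states]
    total = sum(raw)
    if total == 0:
        # No sensor sees the joint: fall back to equal weights.
        return [1.0 / len(raw)] * len(raw)
    return [r / total for r in raw]

def merge_scores(scores, weights):
    """Weighted sum of the per-Kinect scores for one feature."""
    if len(scores) != len(weights):
        raise ValueError("one weight per Kinect is required")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(w * s for w, s in zip(weights, scores))
```

With a front sensor that tracks a joint and a side sensor that only infers it, this rule would weight the front score twice as heavily as the side score.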

3.5. Unity3D Exercise Game

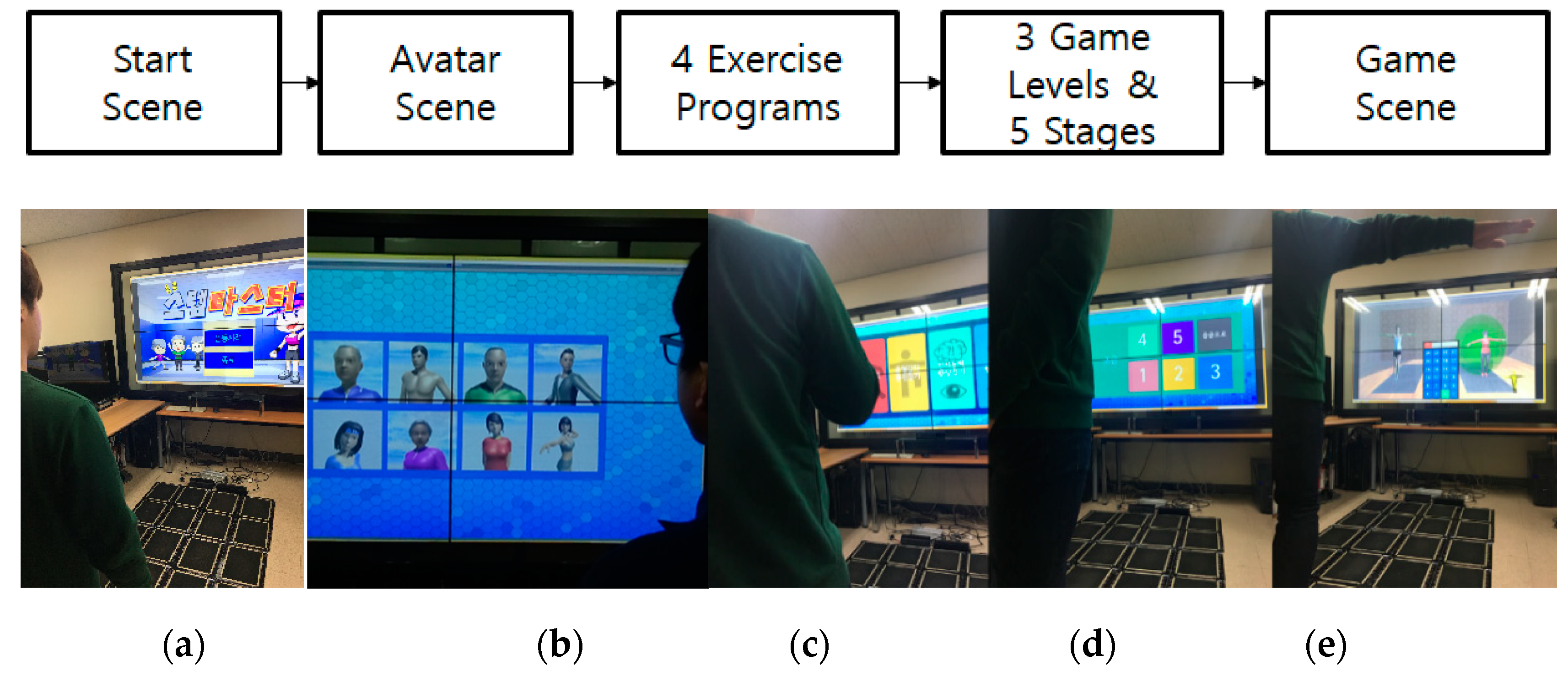

Figure 7 shows a sequence of screenshots taken from the Chongchong Step Master Exercise game. As shown in Figure 7, the user first meets the game start screen (a), and then selects his/her own avatar (b) and chooses the exercise program (c). The exercise program consists of power walking, balance walking, dual task with additional cognitive training, and visual perception exercise. Then, the user picks the appropriate level (beginner, intermediate, advanced) of the exercise program (d). Finally, the user plays the game (e). In the game scene, a guide avatar representing a trainer shows the correct exercise pose and stepping sequence, and a virtual avatar representing the user shows his/her exercise movements in the virtual environment interactively. This game system recognizes the user’s walking and whole-body movement at the same time, via the square mat system and the multiple Kinect-based pose estimation system. For each of the exercise movements, the game determines if the user is doing the exercises correctly. It then provides the user with feedback on their progress.

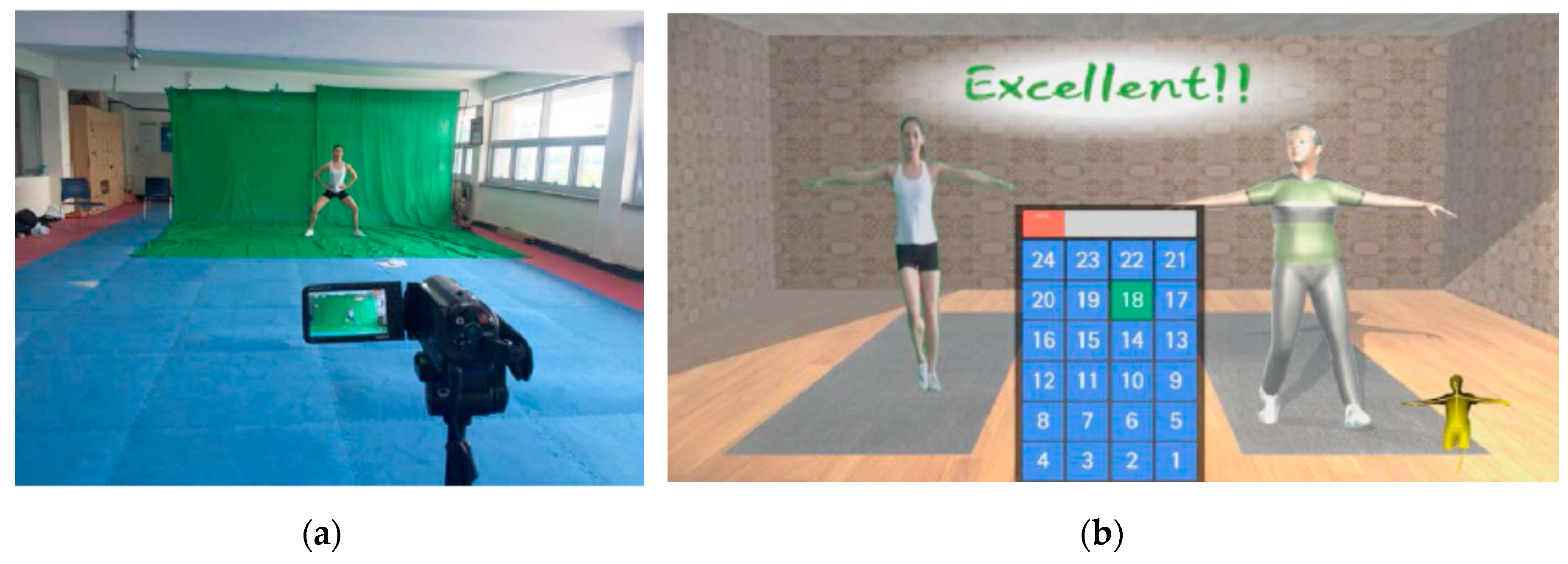

Figure 8 shows the chroma-keyed trainer video capture (on the left) and the game scene with the exercise step and posture information (on the right). As shown in Figure 8, the game system also gives the user feedback on success or failure for each sequence in real time. The user moves his/her avatar, driven by the Kinect, to follow the trainer’s exercise posture. By checking whether the movements of the avatar and the trainer match, the user can immediately tell whether he/she has followed the instructions well and reached the correct exercise posture. If the posture matches well, the avatar displays “Excellent” to acknowledge that the user did well. If it is wrong, “Not bad” is displayed, to encourage the user to adjust his/her movement in the next posture.

This exercise game was developed using the Unity3D game engine. Each compartment of the square mat system is mapped to a unique identification number, and the user can step on a compartment to select among the input choices in the game scenes. In the tutorial session, the user is guided through simple instructions to step on the LED-lit square mat compartment and follow the chroma-keyed guide posture. Because the user simply steps on the square mat, vigorous walking and modified walking are implemented in the game mode. To develop lower body movement with balanced walking, the user is guided to step on the square mat from left to right and to adopt various exercise postures during the game. The game scene shows the guide posture and the next steps to be taken, which keeps the user’s attention.

4. Evaluation

We performed a pilot study to evaluate the performance of pose estimation in the Chongchong Step Master Exercise game system. The server computer had an Intel Core i5-3550 3.7 GHz CPU, 16 GB RAM, a GeForce GTX 460 graphics card, and the Windows 10 operating system, with a Kinect 2.0 sensor installed at the front. The client computer had an Intel Core i5-750 2.67 GHz CPU, 16 GB RAM, a GeForce GTX 460 graphics card, and Windows 10, with a Kinect 2.0 sensor installed on the right side. Five subjects participated in this evaluation, and they received no training before the test. The subjects were asked to follow 16 exercise postures (as described in the exercise program scripts) at a distance of 2.65 m from the front Kinect sensor. They repeated the same 16 exercise postures four times, moving 0.3 m closer to the sensor each time.

Table 3 summarizes the average pose similarity rates for the 16 exercises performed four times, once at each specified distance. The overall average pose similarity rate was 86.37. For the upper body exercise postures (such as BSO, BSU, BSF, BSD, LHU, and RHU), the average pose similarity rate was 88.02, and these exercise postures were mostly recognized by the front Kinect camera rather than the side camera. The lower body exercise postures showed an average pose similarity rate of 86.60. The LKU, RKU, LLAB, and RLAB postures were recognized by both the front and side Kinect cameras, and some were measured with only the front or the side camera. Compared to the other exercises, squat and lunge showed a lower pose similarity rate.

The system accurately estimated the 16 exercise postures when the subjects were within the Kinect sensor range, regardless of the subject’s location or body type. On average, the pose estimation failure percentage was 4.16%. The pose estimation failure percentage indicates the robustness of the system process. We found that a single Kinect often failed to track joint positions when some body parts were blocked and, hence, could not represent those blocked parts. In contrast, multiple Kinects performed much better in capturing users’ exercise postures, particularly knee curls. However, the system sometimes failed to track users, due to noisy depth measurement caused by fast movement.

5. Conclusions

This research presents the Chongchong Step Master Exergame using the square mat and the multiple Kinect-based exercise pose estimation system. It is designed for improving the gait and balance function in older adults. This exergame provides physical exercise programs that involve walking and repetitive arm and leg movements, to stimulate brain activity and improve perception for the elderly. This game allows the user to monitor each state of gait motion and sequence through the square mat and the upper and lower body exercise postures through the pose estimation system.

In this exergame, the pose estimation system extracts feature points from the skeletons provided by the Kinect sensor, to create feature vectors at each point, and to construct an exercise posture recognition model. This model is constructed to recognize user posture information, regardless of user body type. It allows the exercise programs to read and correct user postures that are necessary to create appropriate exercise contents. Moreover, it combines feature data from multiple Kinects to provide more robust and accurately retrievable user posture information, such as lunge and knee curl.

The Chongchong Step Master exergame system can be used to improve elderly people’s participation in exercise and to provide materials for studying the effects of exercise and preventing dementia. Currently, the system requires a large space for installing the multiple Kinect sensors and the square mat. Additionally, it provides only four kinds of exercise programs utilizing the 16 exercise postures: vital walking, balance walking, dual task, and visual-cognition walking. In the future, we plan to improve the system so that it can be used at home and to include other exercise programs with more complicated postures. Furthermore, we will conduct a user study of this exergame to see how users perceive the system and to verify whether users experience improved cognition and balance.

Author Contributions

Conceptualization, K.S.P. and Y.C.; methodology, K.S.P. and Y.C.; software, K.S.P.; validation, K.S.P. and Y.C.; formal analysis, K.S.P. and Y.C.; investigation, K.S.P. and Y.C.; resources, K.S.P. and Y.C.; data curation, K.S.P. and Y.C.; writing—original draft preparation, K.S.P. and Y.C.; writing—review and editing, K.S.P. and Y.C.; visualization, K.S.P. and Y.C.; supervision, K.S.P. and Y.C.; project administration, K.S.P. and Y.C. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Vaghetti, C.A.O.; Monteiro-Junior, R.S.; Finco, M.D.; Reategui, E.; Botelho, S.S.C. Exergames Experience in Physical Education: A Review. Phys. Cult. Sport Stud. Res. 2018, 76, 23–32.

2. Benzing, V.; Schmidt, M. Exergaming for Children and Adolescents: Strengths, Weaknesses, Opportunities and Threats. J. Clin. Med. 2018, 7, 422.

3. Zheng, L.; Li, G.; Wang, X.; Yin, H.; Jia, Y.; Leng, M.; Li, H.; Chen, L. Effects of exergames on physical outcomes in frail elderly: A systematic review. Aging Clin. Exp. Res. 2019, 1–14.

4. Kayama, H.; Okamoto, K.; Nishiguchi, S.; Yamada, M.; Kuroda, T.; Aoyama, T. Effect of a Kinect-based exercise game on improving executive cognitive performance in community-dwelling elderly: Case control study. J. Med. Internet Res. 2014, 16, e61.

5. Vojciechowski, A.S.; Biesek, S.; Melo Filho, J.; Rabito, E.I.; do Amaral, M.P.; Gomes, A.R.S. Effects of physical training with the Nintendo Wii Fit Plus and protein supplementation on musculoskeletal function and the risk of falls in pre-frail older women: Protocol for a randomized controlled clinical trial (the WiiProten study). Maturitas 2018, 111, 53–60.

6. Van Diest, M.; Lamoth, C.J.; Stegenga, J.; Verkerke, G.J.; Postema, K. Exergaming for balance training of elderly: State of the art and future developments. J. Neuroeng. Rehabil. 2013, 10, 101.

7. Ofli, F.; Kurillo, G.; Obdržálek, Š.; Bajcsy, R.; Jimison, H.B.; Pavel, M. Design and Evaluation of an Interactive Exercise Coaching System for Older Adults: Lessons Learned. IEEE J. Biomed. Health Inform. 2016, 20, 201–212.

8. Saenz-de-Urturi, Z.; Garcia-Zapirain, S.B. Kinect-Based Virtual Game for the Elderly that Detects Incorrect Body Postures in Real Time. Sensors 2016, 16, 704.

9. Pedraza-Hueso, M.; Martin-Calzon, S.; Diaz-Pernas, F.J.; Martinez-Zarzuela, M. Rehabilitation Using Kinect-based Games and Virtual Reality. Procedia Comput. Sci. 2015, 75, 161–168.

10. Huang, K.-T. Exergaming Executive Functions: An Immersive Virtual Reality-Based Cognitive Training for Adults Aged 50 and Older. Cyberpsychol. Behav. Soc. Netw. 2020, 23, 143–149.

11. Liao, Y.-Y.; Chen, I.-H.; Wang, R.-Y. Effects of Kinect-based exergaming on frailty status and physical performance in prefrail and frail elderly: A randomized controlled trial. Sci. Rep. 2019, 9, 1–9.

12. Lange, B.; Chang, C.Y.; Suma, E.; Newman, B.; Rizzo, A.S.; Bolas, M. Development and evaluation of low cost game-based balance rehabilitation tool using the Microsoft Kinect sensor. In Proceedings of the Annual International Conference of the IEEE Engineering in Medicine and Biology Society, Boston, MA, USA, 30 August–3 September 2011; pp. 1831–1834.

13. Gotsis, M.; Lympouridis, V.; Turpin, D.; Tasse, A.; Poulos, I.C.; Tucker, D.; Swider, M.; Thin, A.G.; Jordan-Marsh, M. Mixed Reality Game Prototypes for Upper Body Exercise and Rehabilitation. In Proceedings of the IEEE Virtual Reality Workshops, Costa Mesa, CA, USA, 4–8 March 2012; pp. 181–182.

14. Matamala-Gomez, M.; Maisto, M.; Montana, J.I.; Mavrodiev, P.A.; Baglio, F.; Rossetto, F.; Mantovani, F.; Riva, G.; Realdon, O. The Role of Engagement in Teleneurorehabilitation: A Systematic Review. Front. Neurol. 2020, 11, 354.

15. Anton, D.; Goni, A.; Illarramendi, A. Exercise recognition for Kinect-based telerehabilitation. Methods Inf. Med. 2015, 54, 145–155.

16. Dao, N.-L.; Zhang, Y.; Zheng, J.; Cai, J. Kinect-based Non-intrusive Human Gait Analysis and Visualization. In Proceedings of the International Workshop on Multimedia Signal Processing (MMSP), Xiamen, China, 19–21 October 2015; pp. 1–6.

17. Auvinet, E.; Meunier, J.; Multon, F. Multiple depth cameras calibration and body volume reconstruction for gait analysis. In Proceedings of the International Conference on Information Science, Signal Processing and Their Applications (ISSPA), Montreal, QC, Canada, 2–5 July 2012; pp. 478–483.

18. Ruan, M.; Huber, D. Extrinsic Calibration of 3D Sensors Using a Spherical Target. In Proceedings of the 2nd International Conference on 3D Vision, Tokyo, Japan, 8–11 December 2014; pp. 187–193.

19. Yang, J.; Li, H.; Jia, Y. Go-ICP: Solving 3D Registration Efficiently and Globally Optimally. In Proceedings of the International Conference on Computer Vision (ICCV), Sydney, Australia, 1–8 December 2013; pp. 1457–1464.

20. Park, K.S. Development of Kinect-based Pose Recognition Model for Exercise Game. KIPS Trans. Comput. Commun. Syst. 2016, 5, 303–310.

Figure 1.

A user playing the Chongchong Step Master physical exercise game.

Figure 2.

The overall system architecture of the Chongchong Step Master exergame.

Figure 3.

The square mat system, consisting of control, sensing, and communication modules.

Figure 4.

The process of multi-Kinect pose estimation for the Chongchong Step Master exergame.

Figure 5.

(a) The placement layout of multiple Kinects, (b) before and (c) after calibration.

Figure 6.

The 16 exercise postures: BSD, BSU, BSO, BSF, RHU, LHU, RKU, LKU, RKC, LKC, RLAB, LLAB, BHC, SQUAT, RLUNGE, LLUNGE (from top left to bottom right).

Figure 7.

Screenshots of the Chongchong Step Master exergame (a) start, (b) avatar selection, (c) exercise program selection, (d) game level selection, and (e) game play scene.

Figure 8.

(a) Chroma-keyed trainer video capture and (b) exercise game scene with virtual trainer.

Table 1.

Twenty-five Features for Exercise Posture Recognition Model.

| Symbol | Description |
|---|---|
| ELA | Left elbow angle |
| ERA | Right elbow angle |
| SLA | Left shoulder angle |
| SRA | Right shoulder angle |
| ULA | Left arm up angle |
| URA | Right arm up angle |
| DLA | Left arm down angle |
| DRA | Right arm down angle |
| FLA | Left arm front angle |
| FRA | Right arm front angle |
| KLA | Left knee angle |
| KRA | Right knee angle |
| KLA90 | Left knee 90 angle |
| KRA90 | Right knee 90 angle |
| LLA | Left leg down angle |
| LRA | Right leg down angle |
| LLA45 | Left leg 45 angle |
| LRA45 | Right leg 45 angle |
| THLA90 | Left thigh 90 angle |
| THRA90 | Right thigh 90 angle |
| SHLA90 | Left shin 90 angle |
| SHRA90 | Right shin 90 angle |
| HD | Both hand distance |
| AD | Both ankle distance |
| SSA | Spine upright angle |
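Most of the features in Table 1 are joint angles. As a minimal sketch of how one such angle might be computed from three skeleton joints, consider the function below. The name `joint_angle`, the choice of joints, and the sample coordinates are illustrative assumptions, since the exact feature definitions are not reproduced in this table.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at joint b, between segments b->a and b->c.

    Each argument is an (x, y, z) position, for example shoulder,
    elbow, and wrist for an elbow angle.
    """
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(v1, v2))
    norm = math.sqrt(sum(x * x for x in v1)) * math.sqrt(sum(x * x for x in v2))
    if norm == 0.0:
        raise ValueError("degenerate joint: two of the points coincide")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


# Hypothetical joint positions in meters: upper arm vertical,
# forearm horizontal, so the elbow is bent at a right angle.
shoulder = (0.0, 1.0, 0.0)
elbow_joint = (0.0, 0.0, 0.0)
wrist = (1.0, 0.0, 0.0)
elbow_angle = joint_angle(shoulder, elbow_joint, wrist)
```

Because the angle depends only on the directions of the two segments and not on their lengths, a feature of this kind does not change with limb length, which is consistent with the goal of recognizing postures regardless of body type.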

Table 2.

Feature Specifications for 16 Exercise Postures.

| Exercise Posture | Specific Feature Points | Description |
|---|---|---|
| BSD (BOTH_SIDE_DOWN) | ELA, ERA, DLA, DRA, KLA, KRA, LLA, LRA | Both hands down at the side |
| BSU (BOTH_SIDE_UP) | ELA, ERA, ULA, URA, KLA, KRA | Both hands up in the air |
| BSO (BOTH_SIDE_OUT) | ELA, ERA, SLA, SRA, KLA, KRA | Both arms spread out |
| BSF (BOTH_SIDE_FRONT) | ELA, ERA, FLA, FRA, KLA, KRA | Both arms stretched out in front |
| RHU (RIGHT_HAND_UP) | ERA, URA, DLA, KLA, KRA | Right hand up |
| LHU (LEFT_HAND_UP) | ELA, ULA, DRA, KLA, KRA | Left hand up |
| RKU (RIGHT_KNEE_UP) | DLA, DRA, KLA, KRA90, LLA, THRA90 | Right knee up |
| LKU (LEFT_KNEE_UP) | DLA, DRA, KLA90, KRA, THLA90, LRA | Left knee up |
| RKC (RIGHT_KNEE_CURL) | DLA, DRA, KLA, KRA90, LLA, SHRA90 | Right knee curled backwards |
| LKC (LEFT_KNEE_CURL) | DLA, DRA, KLA90, KRA, SHLA90, LRA | Left knee curled backwards |
| RLAB (RIGHT_LEG_AB) | KLA, KRA, LLA, LRA45 | Right leg kicked out to the side |
| LLAB (LEFT_LEG_AB) | KLA, KRA, LLA45, LRA | Left leg kicked out to the side |
| BHC (BOTH_HAND_CLAP) | KLA, KRA, AD, HD | Both hands clapped |
| SQUAT (SQUAT) | SSA, KLA90, KRA90, THLA90, THRA90 | Squat |
| RLUNGE (RIGHT_LUNGE) | KLA90, THLA90, SHRA90 | Right leg forward lunge |
| LLUNGE (LEFT_LUNGE) | KRA90, THRA90, SHLA90 | Left leg forward lunge |
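Table 2 can be read as a lookup from each posture to the subset of features that matter for it. The sketch below shows that selection step only, under stated assumptions: the names `POSTURE_FEATURES` and `select_features` are hypothetical, only three of the 16 postures are transcribed, and the measured values are invented for illustration. How the selected values are then scored is not covered here.

```python
# Feature subsets for three postures, transcribed from Table 2.
POSTURE_FEATURES = {
    "SQUAT": ("SSA", "KLA90", "KRA90", "THLA90", "THRA90"),
    "BHC": ("KLA", "KRA", "AD", "HD"),
    "RLAB": ("KLA", "KRA", "LLA", "LRA45"),
}


def select_features(posture, measurements):
    """Return the measured values for the features a posture relies on.

    `measurements` maps feature symbols to values for the current frame.
    Features not listed for the posture are ignored.
    """
    names = POSTURE_FEATURES[posture]
    missing = [name for name in names if name not in measurements]
    if missing:
        raise KeyError(f"missing features for {posture}: {missing}")
    return [measurements[name] for name in names]


# Hypothetical measurements for one frame (values are illustrative only).
frame = {
    "SSA": 8.0, "KLA90": 92.0, "KRA90": 95.0,
    "THLA90": 88.0, "THRA90": 91.0, "HD": 0.45,
}
squat_vector = select_features("SQUAT", frame)
```

Restricting each posture to its own feature subset means that irrelevant body parts, such as the arms during a squat, do not influence the recognition result.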

Table 3.

Average Rate of Posture Similarity.

| Exercise Posture | 265 cm | 235 cm | 205 cm | 175 cm |
|---|---|---|---|---|
| BSD (BOTH_SIDE_DOWN) | 90.55 | 90.57 | 90.02 | 89.79 |
| BSU (BOTH_SIDE_UP) | 85.01 | 85.65 | 84.23 | 82.32 |
| BSO (BOTH_SIDE_OUT) | 84.79 | 90.17 | 89.94 | 90.56 |
| BSF (BOTH_SIDE_FRONT) | 87.49 | 89.47 | 89.30 | 89.51 |
| RHU (RIGHT_HAND_UP) | 89.21 | 89.73 | 85.88 | 86.95 |
| LHU (LEFT_HAND_UP) | 87.38 | 87.72 | 88.66 | 87.68 |
| RKU (RIGHT_KNEE_UP) | 87.55 | 89.19 | 91.38 | 88.40 |
| LKU (LEFT_KNEE_UP) | 87.57 | 88.50 | 87.91 | 84.99 |
| RKC (RIGHT_KNEE_CURL) | 88.05 | 86.82 | 87.55 | 82.85 |
| LKC (LEFT_KNEE_CURL) | 83.49 | 85.70 | 84.97 | 82.79 |
| RLAB (RIGHT_LEG_AB) | 88.94 | 86.43 | 89.15 | 83.20 |
| LLAB (LEFT_LEG_AB) | 85.73 | 85.79 | 86.53 | 85.09 |
| BHC (BOTH_HAND_CLAP) | 90.31 | 91.99 | 88.48 | 83.84 |
| SQUAT (SQUAT) | 81.08 | 79.28 | 81.10 | 81.08 |
| RLUNGE (RIGHT_LUNGE) | 78.77 | 82.96 | 84.14 | 80.99 |
| LLUNGE (LEFT_LUNGE) | 77.27 | 83.66 | 85.21 | 86.28 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).