Socrative in Higher Education: Game vs. Other Uses

Abstract

1. Introduction

2. Related Work

2.1. Clickers

2.2. Gamification

3. Socrative

4. Socrative for the University Classroom: Three Examples

4.1. Participants

4.2. Experiences

4.2.1. Activity 1: Collaborative Reading

4.2.2. Activity 2: Lecture

4.2.3. Activity 3: Cooperative Review Game

5. Methodology

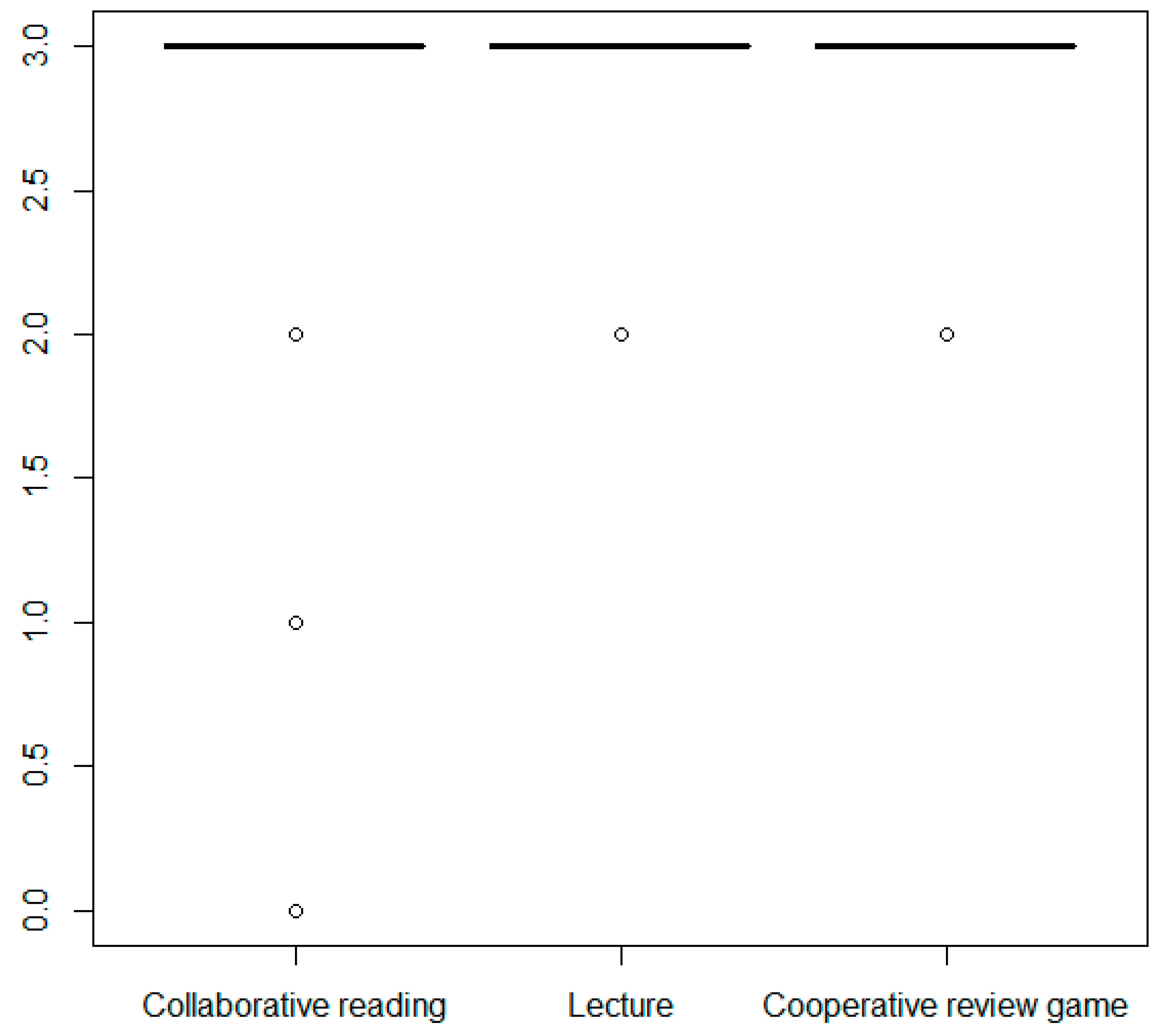

6. Results

6.1. Overall Results

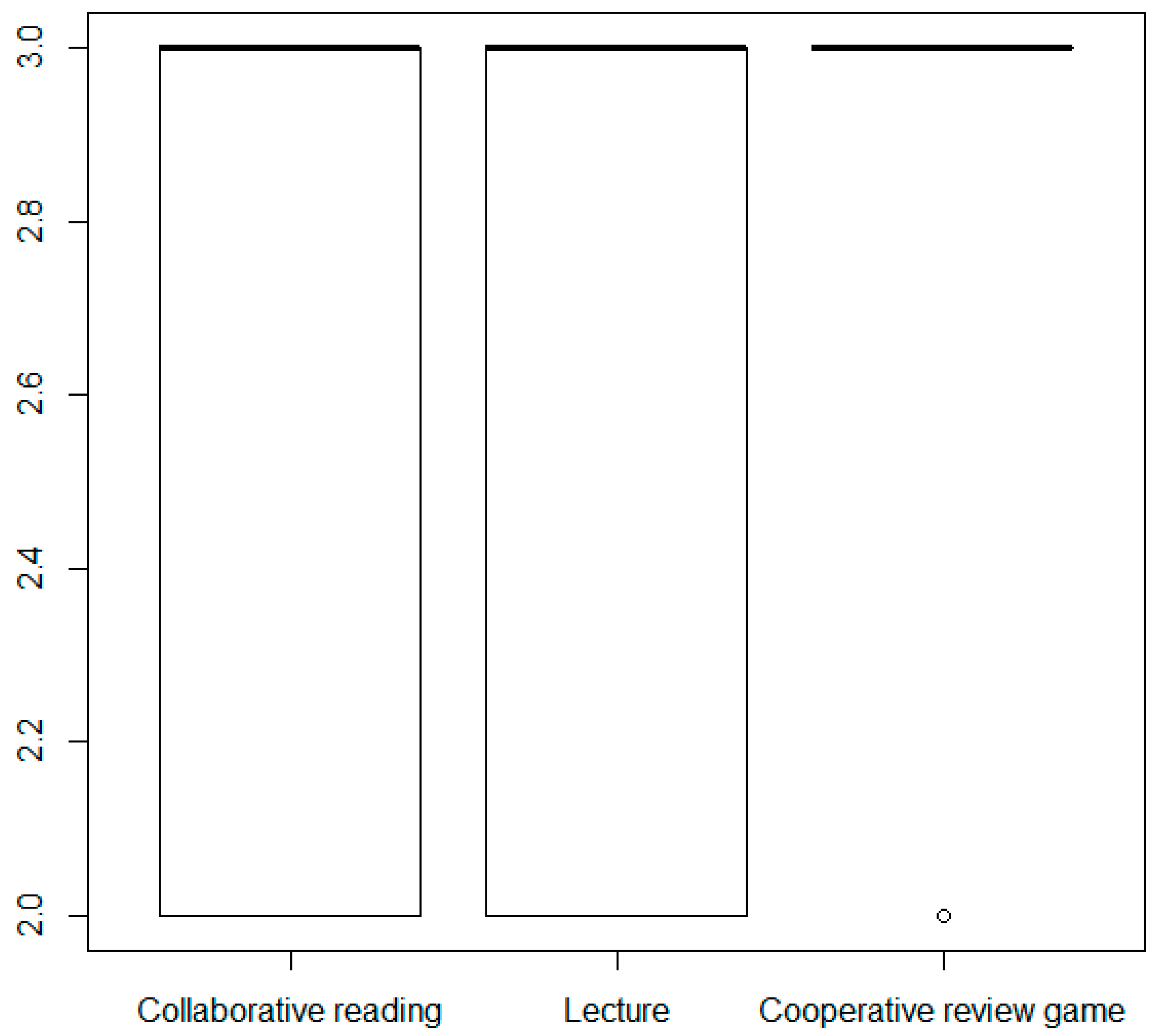

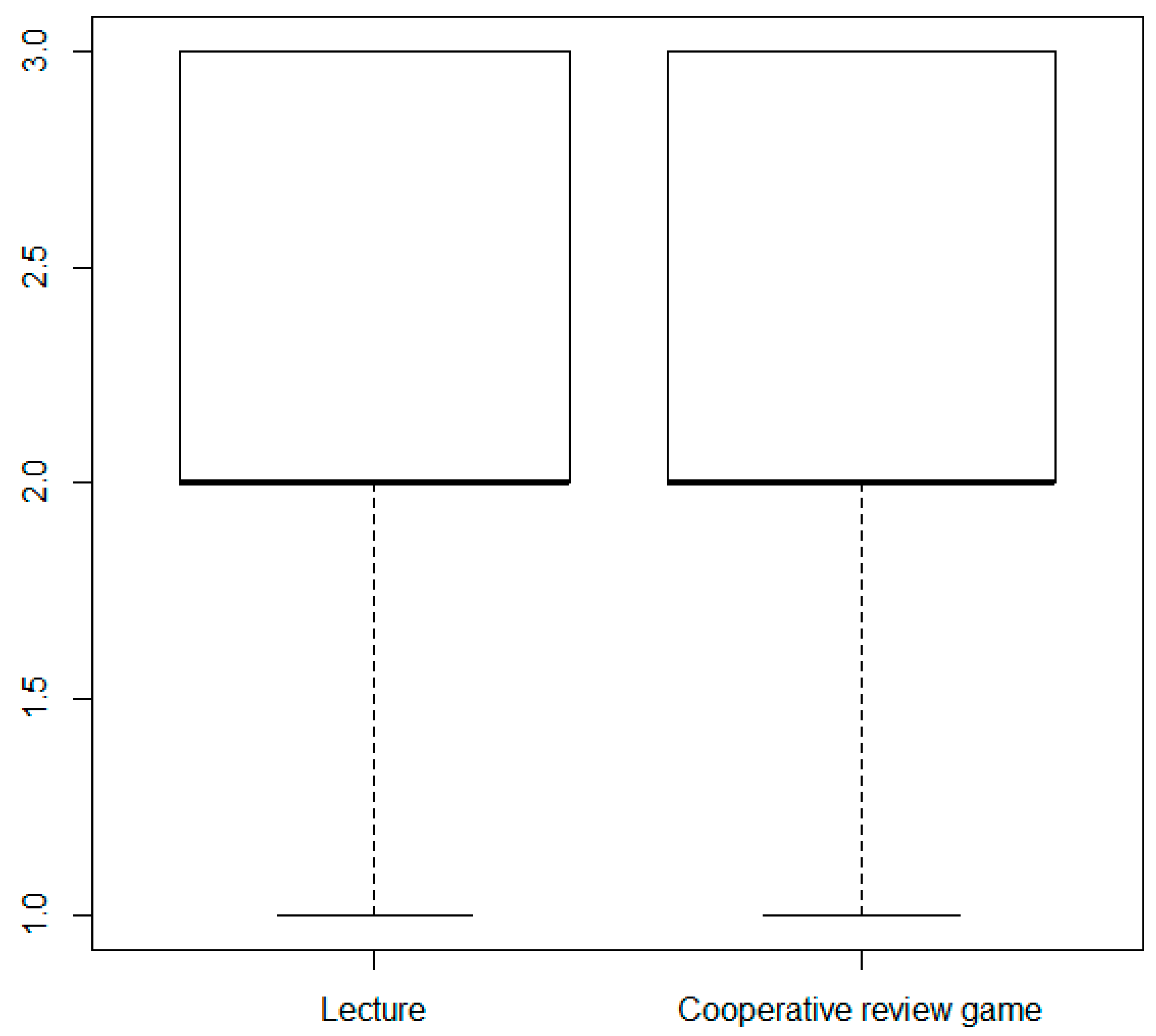

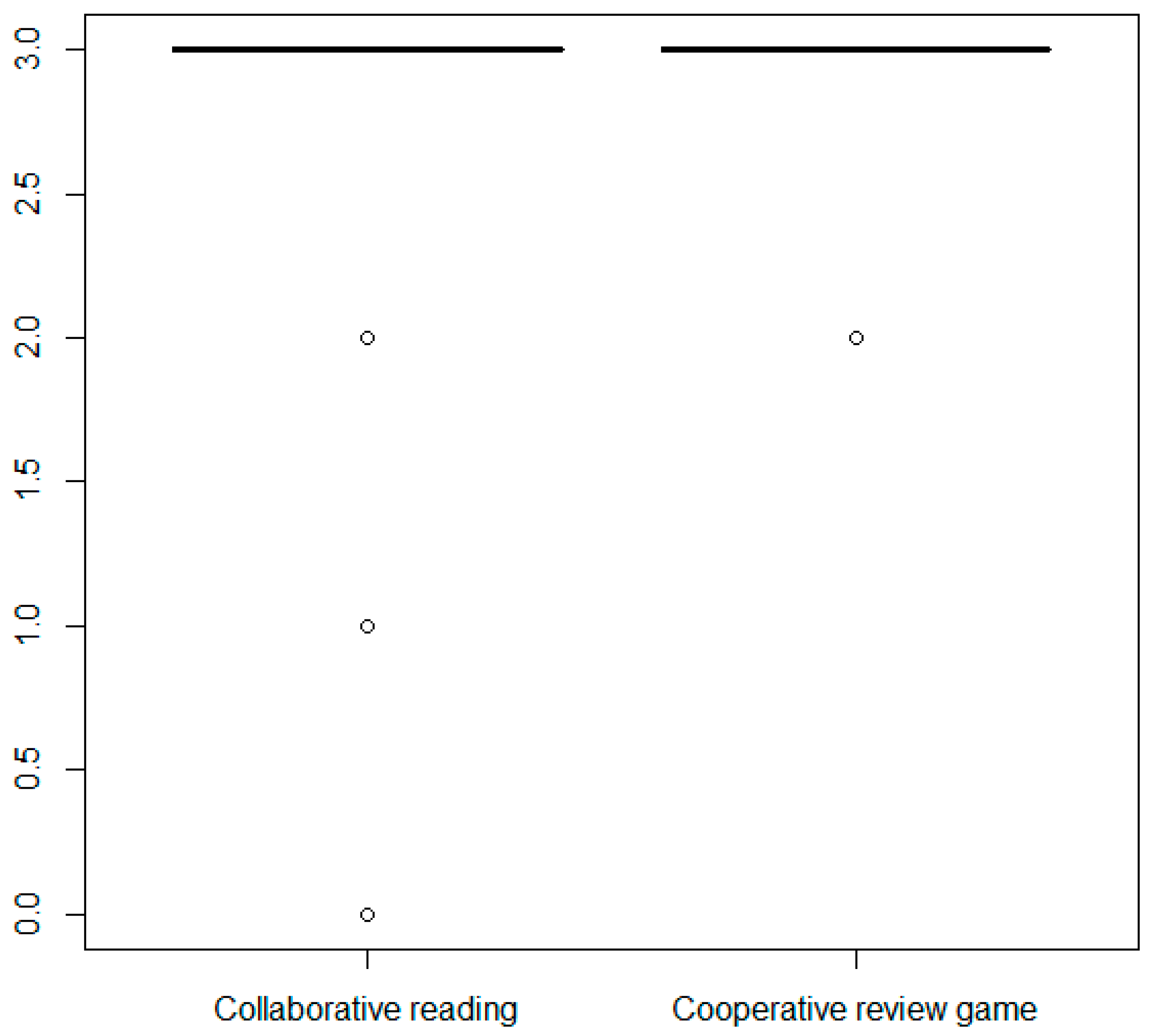

6.2. Comparisons between Socrative Uses

7. Discussion

8. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Session description | Collaborative Reading Task | Lecture | Cooperative Review Game |
|---|---|---|---|
| Gamification strategies | No | No | Yes |
| Type of session | Seminar | Plenary | Seminar |
| Respondents | 52 | 46 | 44 |
| Main methodology | Collaborative learning | Theoretical explanations | Cooperative learning |
| Group distribution | Small groups (3 members) | Pairs or small groups (3 members) | Small groups (4–5 members) |
| Teaching objectives | To promote reading skills through practice; to raise awareness of reading strategies | To check understanding after explanations | To review contents at the end of a unit/semester |
| Socrative option | Teacher-paced quiz | Quick Question | Teacher-paced quiz |

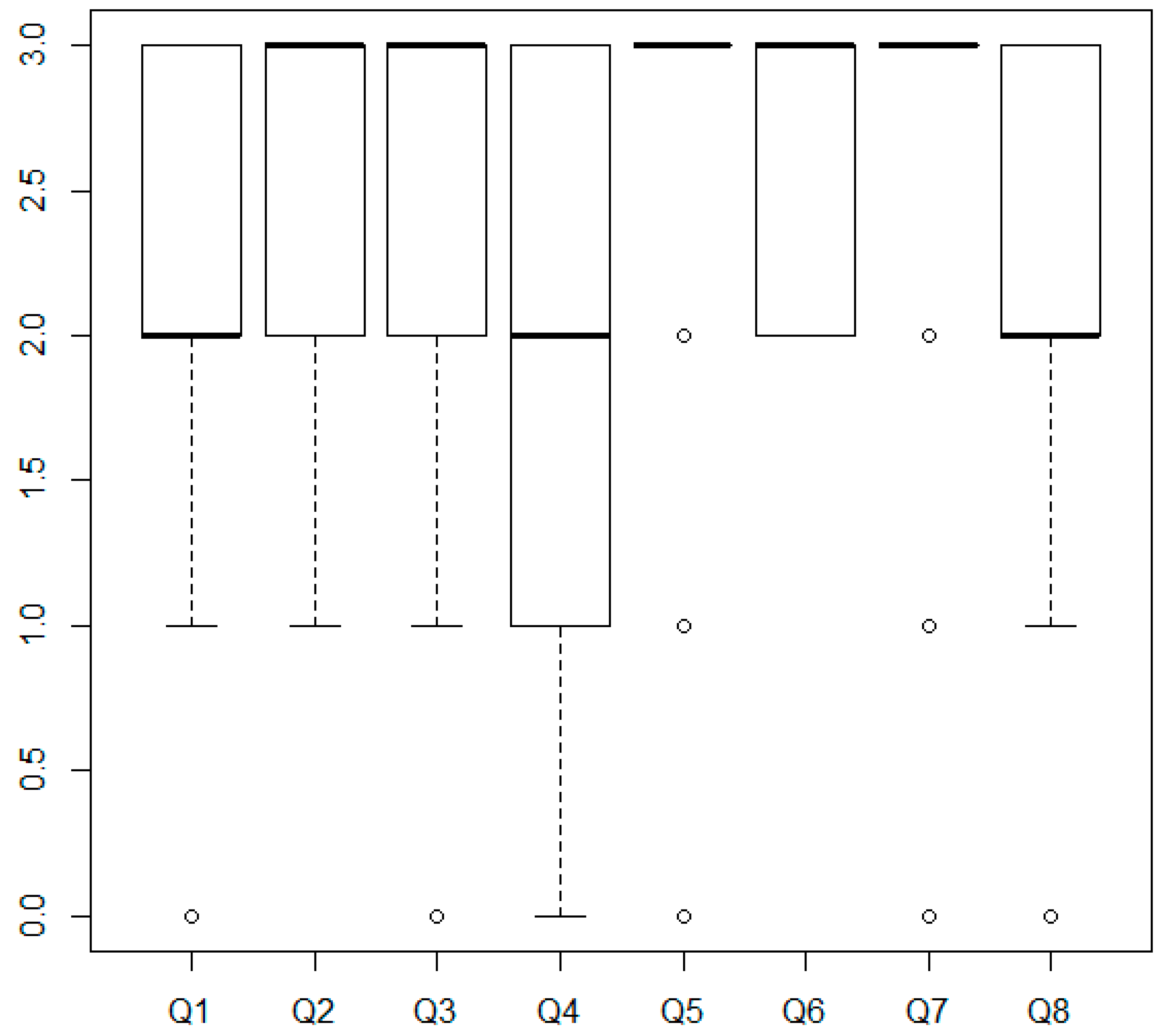

| Questions | Mean Score |
|---|---|
| 1. This task was useful to understand that each type of question implies a different type of reading. | 2.17 |
| 2. This task was a useful training to find answers in a text more quickly. | 2.63 |
| 3. This task helped me to realize that I can answer questions about a text without understanding everything it says. | 2.37 |
| 4. The time limit for each question helped me to find the information I was looking for more quickly. | 1.85 |
| 5. Socrative made the task more enjoyable than traditional reading tasks. | 2.69 |
| 6. The use of Socrative seemed appropriate for this type of task (brief readings, battery of short questions, multiple choice, etc.) | 2.71 |
| 7. The use of Socrative seemed appropriate to work in small groups. | 2.65 |
| 8. Working in a group during this reading task enabled me to notice the strategies used by my classmates. | 2.21 |

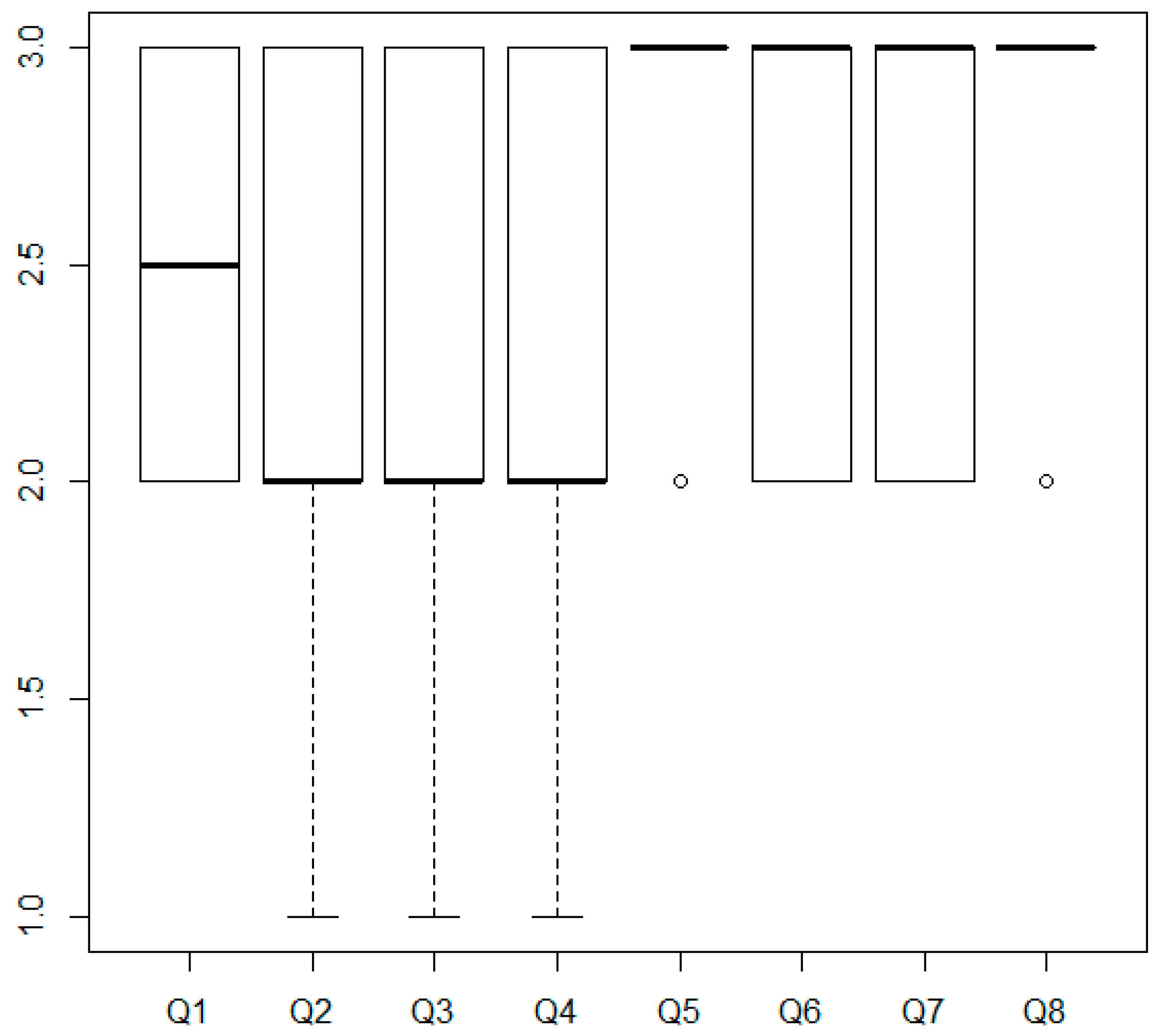

| Questions | Mean Score |
|---|---|
| 1. This task helped me to check what I had understood after each explanation. | 2.50 |
| 2. This task helped me to keep attention during the lesson. | 2.17 |
| 3. This task helped me to see which contents are the most relevant in the syllabus. | 2.33 |
| 4. This task helped me to better remember the aspects we have been asked about. | 2.43 |
| 5. Socrative made the task more enjoyable than a lesson consisting only of theoretical explanations. | 2.83 |
| 6. The use of Socrative seemed appropriate for this type of task (quick answer). | 2.62 |
| 7. The use of Socrative seemed appropriate to make all students’ participation more dynamic. | 2.70 |
| 8. I would like to use this type of tool more often in theoretical lessons. | 2.83 |

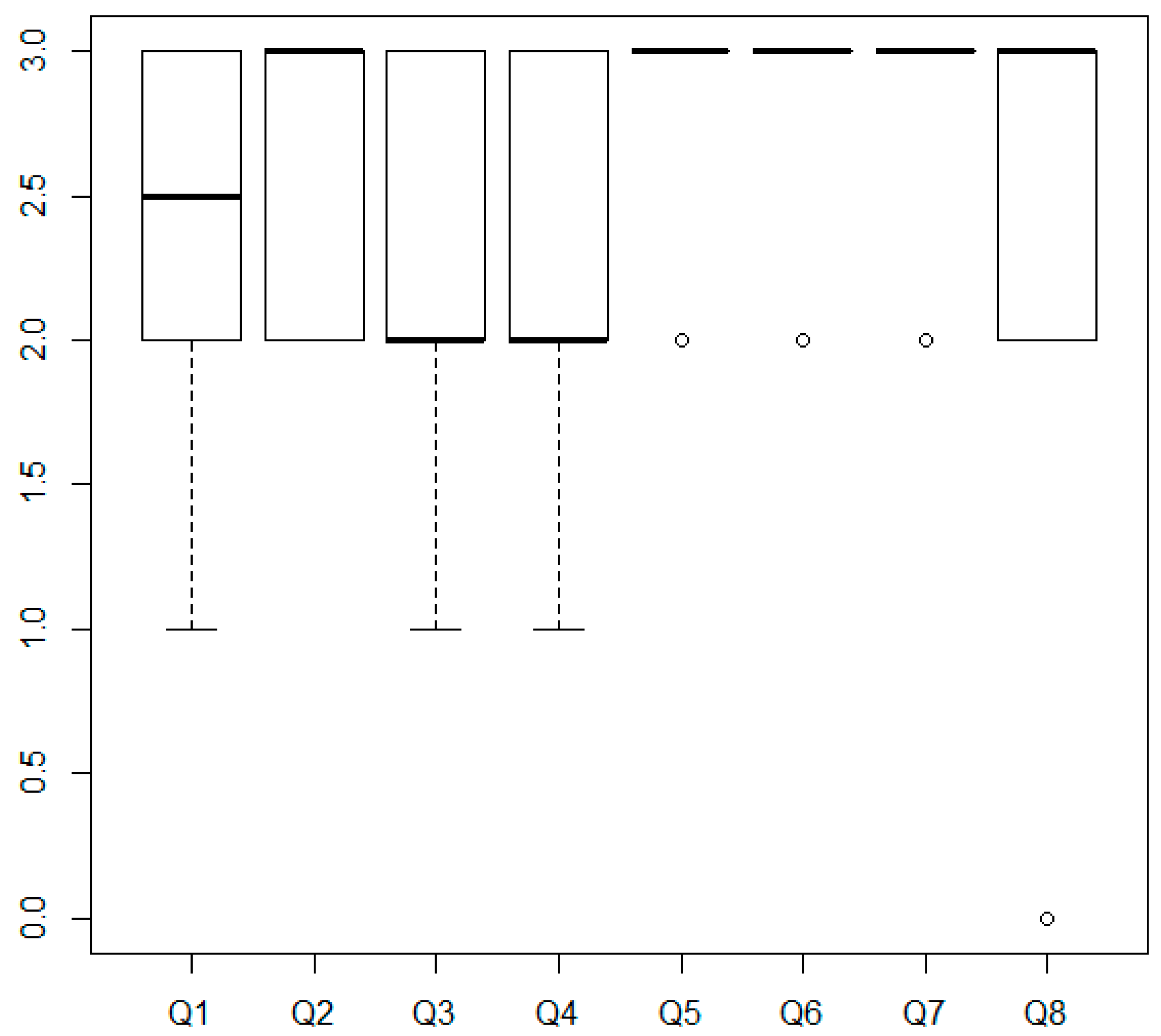

| Questions | Mean Score |
|---|---|
| 1. The task helped me to be aware of my own level. | 2.43 |
| 2. This task helped me to consolidate the contents (grammar, vocabulary, pronunciation) seen during the semester. | 2.61 |
| 3. The activity helped me to see which contents are the most relevant in the syllabus. | 2.34 |
| 4. The time limit for each question helped me to find the information I was looking for more quickly. | 2.25 |
| 5. Socrative made the task more enjoyable than traditional review tasks/lessons. | 2.84 |
| 6. The use of Socrative seemed appropriate for this type of task (battery of short questions, multiple choice, etc.). | 2.89 |
| 7. The use of Socrative seemed appropriate to work in small groups. | 2.86 |
| 8. When working in a group during this review activity, it was useful to agree the answers with my classmates. | 2.68 |
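
Several questionnaire items are worded almost identically across the three sessions, so their mean scores can be read side by side. The following is a minimal sketch of such a reading, not the authors' analysis: the values are copied from the three tables above, while the grouping of items as "parallel", the session labels, and the helper names `highest_rated` and `spread` are assumptions introduced here for illustration. Item 7 of the lecture questionnaire asks about participation rather than small-group work, so it is left out.

```python
# Mean scores copied from the three questionnaire tables above.
# Treating these items as parallel across sessions is an assumption
# made for illustration; it is not a method described in the article.
SHARED_ITEMS = {
    "item 5: more enjoyable than the traditional format": {
        "collaborative reading": 2.69,
        "lecture": 2.83,
        "review game": 2.84,
    },
    "item 6: appropriate for this type of task": {
        "collaborative reading": 2.71,
        "lecture": 2.62,
        "review game": 2.89,
    },
    "item 7: appropriate for small-group work": {
        "collaborative reading": 2.65,
        "review game": 2.86,
    },
    "item 4: time limit helped": {
        "collaborative reading": 1.85,
        "review game": 2.25,
    },
}


def highest_rated(scores):
    """Return the session label with the highest mean score."""
    return max(scores, key=scores.get)


def spread(scores):
    """Return the gap between the highest and lowest mean, to 2 decimals."""
    return round(max(scores.values()) - min(scores.values()), 2)


for item, scores in SHARED_ITEMS.items():
    print(f"{item}: highest = {highest_rated(scores)}, spread = {spread(scores)}")
```

A gap between two means says nothing on its own about statistical significance; the sketch only lines up the reported figures for the items that the sessions have in common.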

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Faya Cerqueiro, F.; Martín-Macho Harrison, A. Socrative in Higher Education: Game vs. Other Uses. Multimodal Technol. Interact. 2019, 3, 49. https://doi.org/10.3390/mti3030049