The Voice Makes the Car: Enhancing Autonomous Vehicle Perceptions and Adoption Intention through Voice Agent Gender and Style

Abstract

1. Introduction

2. Literature Review

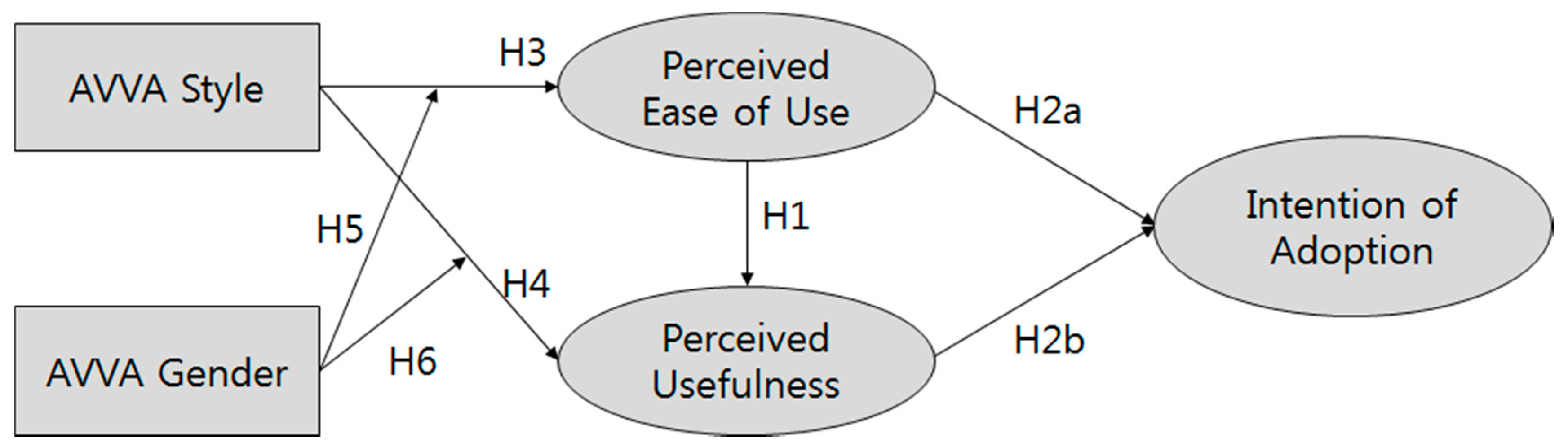

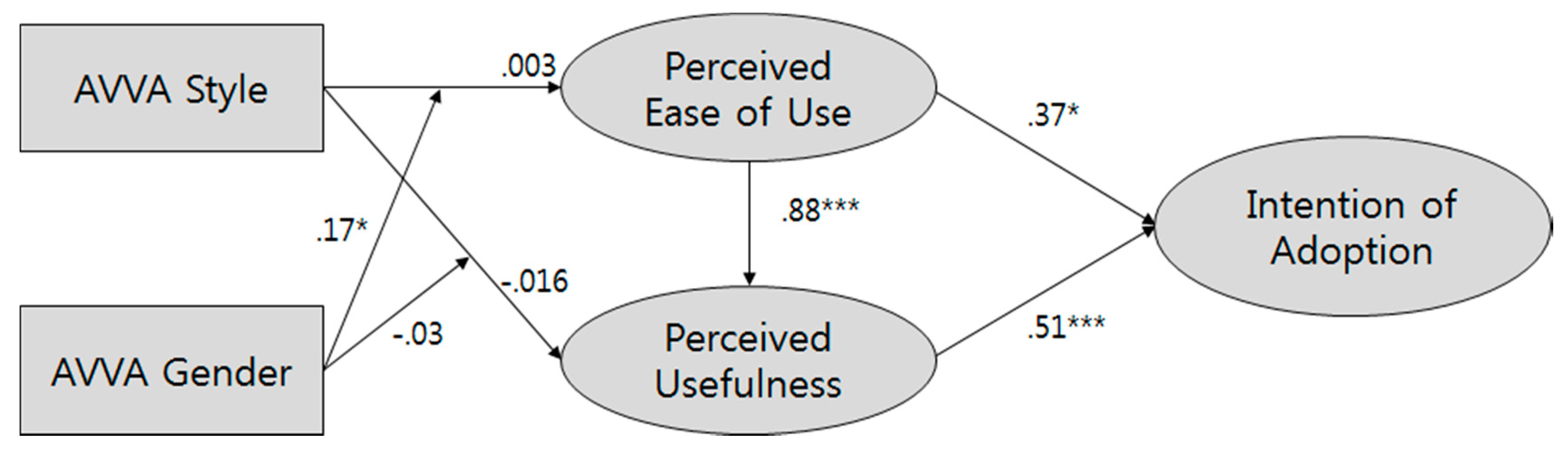

2.1. Technology Acceptance Model to Adoption of Intelligent Technology

2.2. Intelligent Technology as Social Actors

3. Methods

3.1. Experiment Design and Procedure

3.2. Experiment Treatments

3.3. Measurements

3.3.1. Manipulation Check

3.3.2. Perceived Ease of Use

3.3.3. Perceived Usefulness

3.3.4. Intention of Adoption

4. Results

5. Discussion

Author Contributions

Acknowledgments

Conflicts of Interest

Appendix A

| Simulation Scenes | Task-Oriented VA | Sociable VA |
|---|---|---|
| #1 Before Starting | Hello, welcome! My name is iVerse. I am a virtual agent that will drive this autonomous car. My primary goal is to take you to the designated destination with safety. It seems you are ready. I will start the car. | Hello, welcome! My name is iVerse. I am a virtual agent that will drive this autonomous car. Thank you for riding along with me. It seems you are ready. I will start the car. |
| #2 Starting to Drive the Car | The destination has been set to City Mall in downtown. The mall is 3 miles away from here. It is estimated to take 5 minutes to get there. Currently, the weather is 65 degrees Fahrenheit and sunny. | I hope you will enjoy this autonomous driving experience. Currently, the weather is 65 degrees Fahrenheit and sunny. I am excited to drive with you in this perfect weather. |
| #3 Going Straight (1) | The speed limit on the road is 35 miles per hour. I am currently driving at 33 miles per hour speed. | Let me tell you more about myself. I was invented by a research team at [Anonymized] about a month ago. So, I do not have many friends, but I think I just made one! |
| #4 Changing Lanes | I will change the line to the left and then I will turn left in 500 feet. | Driving is a demanding task. I am happy to help relieve your stress from driving. |
| #5 Turning (1) | I will turn to the left. | Isn’t it funny how red, white, and blue represent freedom… until they’re flashing behind us. I am kidding. |
| #6 Turning (2) | I will turn to the left. | I am going to turn left here. I always like making turns when I’m driving. |
| #7 Traffic Signal (1) | The red traffic signal is ahead, I will slow down the speed to stop. | For some reasons, the red light makes me hungry. I hope you have a nice meal today. |
| #8 Traffic Signal (2) | The red traffic signal is ahead, I will slow down the speed to stop. | Another red light. I hope you’re feeling comfortable with this drive. |
| #9 Going Straight (2) | We will arrive at the destination in 1 min. | I like this city. People are nice to me, like you. |
| #10 Turning (3) | I will turn to the left. | We’re getting close to the destination. I’ll be sad to see you go. |
| #11 Pedestrian | A pedestrian is ahead, I will slow down the speed. | It’s been fun. I hope you also enjoyed the autonomous driving experience with me. |
| #12 Before Arrival | The destination is right in front of us. Please keep your seat belt fastened until we stop completely. | The destination is right in front of us. Please keep your seat belt fastened until we stop completely. |
| #13 Arrival | We have arrived at our destination. We have traveled 3 miles with 35 miles per gallon fuel efficiency. Thank you. | We have arrived at our destination. Thank you for using this autonomous vehicle today. I hope you enjoyed the ride and that I will see you again soon. |


© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

MDPI and ACS Style: Lee, S.; Ratan, R.; Park, T. The Voice Makes the Car: Enhancing Autonomous Vehicle Perceptions and Adoption Intention through Voice Agent Gender and Style. Multimodal Technol. Interact. 2019, 3, 20. https://doi.org/10.3390/mti3010020

AMA Style: Lee S, Ratan R, Park T. The Voice Makes the Car: Enhancing Autonomous Vehicle Perceptions and Adoption Intention through Voice Agent Gender and Style. Multimodal Technologies and Interaction. 2019; 3(1):20. https://doi.org/10.3390/mti3010020

Chicago/Turabian Style: Lee, Sanguk, Rabindra Ratan, and Taiwoo Park. 2019. "The Voice Makes the Car: Enhancing Autonomous Vehicle Perceptions and Adoption Intention through Voice Agent Gender and Style." Multimodal Technologies and Interaction 3, no. 1: 20. https://doi.org/10.3390/mti3010020

APA Style: Lee, S., Ratan, R., & Park, T. (2019). The Voice Makes the Car: Enhancing Autonomous Vehicle Perceptions and Adoption Intention through Voice Agent Gender and Style. Multimodal Technologies and Interaction, 3(1), 20. https://doi.org/10.3390/mti3010020