1. Introduction

Lameness is a manifestation of painful disorders that result in impaired movement or a deviation from normal gait or posture [1]. In dairy cattle, the main causes of lameness are lesions in the claws, which cause bacterial infections and swelling in cows’ hooves and legs. Lameness causes severe pain and is associated with health issues such as the loss of fertility. Furthermore, lameness causes serious welfare and economic problems in the dairy industry. Some of the costs associated with lameness are the need for veterinary treatment and a reduction in milk production and in cows’ reproductive performance. At advanced stages of the disease, a lame cow might die or have to be sacrificed. Lameness is a common issue in dairy cows, with some stables having up to 72% lame cows [1].

The earlier a lame cow is identified, the earlier the causes of the disorder can be treated. Currently, lame cows are identified by visual inspection of their walking pattern, which is done by herdsmen. However, automation and the rapid growth in livestock production have led to more cattle and fewer employees per herd. As a consequence, herdsmen have less time to monitor the health condition of their cows. Automated systems for cow milking, feeding and cleaning are already being used in commercial herds. Neckbands with integrated motion sensors are used to predict whether a cow is undergoing estrus (i.e., the period of sexual fertility in a female mammal). Veterinarians monitor cow physical activity measured by these neckbands to determine the proper time to inseminate a cow. In contrast, systems to detect lameness are rarely used in commercial herds, despite the variety of solutions proposed by the scientific community.

Approaches for automated lameness detection have been studied using computer vision, external sensors such as pressure plates, and wearable motion sensors. Most of the previous work on lameness detection using wearable motion sensors studied how to keep track of a cow’s physical activity (lying down, standing and walking) [2,3]. However, changes in physical activity due to lameness occur at more advanced stages of the disorder. The first observable symptom of lameness is a change in a cow’s usual walking pattern (i.e., gait).

This paper is an extended version of our previous work [4]. In our previous publication, we presented a sensor device and a set of algorithmic steps to detect deviations in cows’ usual gait using a wearable motion sensor. The sensor device attached to a cow’s hind limb is shown in Figure 1a. Assuming a cow is able to walk normally at the time the sensor is attached to it, our approach creates a model of the usual walking pattern of the cow using a combination of signal processing and machine learning methods. A trained machine learning model is used to detect deviations from the usual gait pattern of a cow later on.

In this article, we provide a more detailed insight into the algorithmic steps we introduced in [4,5]. Furthermore, we make our data available together with the publication, to facilitate the development and validation of other methods that might lead to an improvement in the life quality of cows. In addition, we list the requirements we elicited for a wearable sensor device for cows and describe the design decisions we made during this project. These design decisions can be used as a basis for designing future multimodal wearable sensing technologies for animals, as other wearable devices for animals will share similar requirements (e.g., low energy consumption, device robustness and waterproofness).

The rest of the paper is structured as follows:

Related Work: Provides an overview of other automated lameness detection systems and highlights how our approach differs from them.

Study Design: Discusses how we designed a study to collect data from 10 cows in order to develop and test our approach.

Requirements: Lists requirements we elicited for a wearable cow gait tracking system.

System Design: Lists the design decisions we made for a system able to detect lameness in dairy cattle and explains the rationale behind them.

Approach: Describes our approach in detail including the hardware we designed and how we process the signals acquired by the sensor device in order to classify cow strides into normal or abnormal.

Evaluation: Presents the results of a controlled experiment we conducted in order to validate our approach.

Ethical Considerations: Discusses how we addressed the ethical guidelines in [6] during our study.

2. Related Work

Most modern stables collect data from cows’ daily activity such as the amount of milk cows yield and how much food they are fed. Different studies have suggested using this data to predict lameness [7,8]. However, changes in milk yield and feeding behavior due to lameness might manifest days after changes in gait. Detecting a lame cow based on its gait would make it possible to stop further development of the disorder. This would allow veterinarians to treat the cause of the disorder earlier, relieving the cow from pain and restoring its normal function.

Approaches for lameness detection based on gait analysis include those that use computer vision, weight/force sensing or motion sensing. Computer vision approaches extract lameness-related information from a video recording, such as the arching of a cow’s back [9], the amount of overlap between a cow’s consecutive strides [10] and the angle at which a cow’s fetlock joint makes contact with the ground during a stride [11]. Most of these studies have used normal cameras [9,10,11]. However, Van Hertem et al. [12] studied lameness detection using a 3D camera and Eddy et al. [13] used thermographic cameras.

Weight sensing approaches measure the weight a cow places on each limb while standing on force plates [14] or walking over a force-sensitive mattress [15,16]. Based on this data, information about cows’ walking and standing behavior is calculated, such as the length and duration of a stride [15,17], the number of kicks a cow performs while standing [14], the weight distribution under single hooves [16,17,18] and the frequency of steps [16].

Computer vision and weight sensing approaches are limited to measuring a few strides per cow and face additional challenges, such as the fact that cows near the measuring area might disrupt the measurements [1] and the need for additional technologies to identify the cow being measured.

Motion-based lameness detection approaches rely on motion sensors that are attached to cows’ legs and/or neck. Most motion-based lameness detection approaches measure parameters related to cows’ daily physical activity, such as the amount of time cows spend lying, standing and walking [19,20], the number of strides cows perform per day [3] and the times of day when cows start and stop walking [21]. These approaches do not analyze gait per se, but predict lameness based on cows’ daily activity.

A few studies, mostly coming from the veterinary medicine community, have investigated cow lameness detection based on motion data. Pastell et al. [22] let lame and non-lame cows walk with accelerometers attached to all four limbs and developed a method based on wavelet analysis to predict lameness. The study concluded that there is less symmetry in the acceleration of hind legs in lame cows than in healthy cows. Chapinal et al. [23] combined an accelerometer device with a weighing platform to measure the weight distribution, speed and number of steps performed by cows. The system also determines whether cows are lying or standing. In a second study, Chapinal et al. [24] found that the variance of acceleration of front and hind legs could be used to predict gait scores.

These studies compared lame cows with non-lame cows in order to discover differences in their gait and hence did not consider the differences in the physical behavior and tolerance to pain of each individual cow. Alsaaod et al. [20] found that the variation of physical activity among cows is significantly larger than the variation of physical activity caused by lameness. This suggests that lameness should be regarded on an individual basis rather than comparing a cow’s motion to a baseline established from other cows.

In contrast to previous approaches for lameness detection, our approach compares the gait of a cow to a baseline established by the cow itself during the first hours of use. Our approach is based on anomaly detection, a technique commonly used to detect bank fraud and intrusions in computer networks. The challenge in detecting such events is that anomalous data is usually not available at development time to train a system to detect the abnormal events. Instead, these methods learn what the “usual” events are and try to detect anything that is not similar to them. Our approach learns the gait pattern of a particular cow and is able to detect deviations from this gait pattern afterwards. A deviation from the normal gait is the first indicator of possible lameness. Our approach has two main advantages over previous work on motion-based lameness detection: (1) it takes into consideration the uniqueness of each cow’s gait [20] and (2) it requires a single motion sensor attached to a hind limb.

3. Study Design

As described in our previous publication [25], we collected data from 10 cows while they walked with our motion sensor attached to their left hind limb. Cows were chosen to maximize the diversity of age, weight and breed. Table 1 displays demographic information about each cow. We conducted five “runs” per cow. In each run, we let cows walk for approximately 7 min. In three of the runs, cows walked normally and in the other two runs, cows walked with a plastic block attached to the outer claw of either their left or right hind hoof. Runs were executed on different days. We performed only one normal run for cow 4 because it was moved to a different stable due to pregnancy during the study period. Statistical information about the data we collected is shown in Table 1. Figure 2a shows a plastic block attached to the outer claw of the left hind hoof of a cow. Among the approaches we considered to collect data to validate our algorithm, we found this approach to be the most appropriate for animal-centered research, because it does not involve pain (e.g., forcing a lame cow to walk) and requires a considerably shorter intervention in cows’ natural activity. The total intervention lasted approximately 40 min and was spread across different days.

We designed the experiment to resemble the conditions in which our approach would be used. We let cows walk in their usual environment rather than isolated walkways specially designed for the experiment. Furthermore, we included motion data of periods when cows stopped walking, turned and got bumped by other cows. Cows walked on two different types of ground: rubber and concrete.

4. Requirements

We elicited the requirements for a wearable sensor device in a series of interviews with a veterinary research team from the Ludwig Maximilian University (LMU) and pilot studies at an indoor stable in Munich, Germany. Paci et al. [26] suggested that a wearable device that is not directly relevant to the animal’s intentions should ideally not get in its way (i.e., affect its daily activities or experiences). To this end, our goal was to design a system that keeps track of the gait of cows with little influence on their daily life.

Low energy consumption. An intervention to a cow’s natural activities is required every time a battery has to be replaced. Furthermore, farmers might not have time to collect every sensor device in an entire herd to replace a battery. Therefore, wearable devices to be used by herds of animals should consume little power and remain functional without intervention from veterinarians for as long as possible, ideally during the lifetime of the animal.

Attachment at a hind limb. The wearable device should be attached at a hind limb for three main reasons. First, cows usually lie down with their front legs bent and spread out their hind legs outwards. Second, when cows undergo oestrus, they jump with their front legs on other cows. Third, lameness is usually associated with diseases (mostly infections) occurring on hind legs.

Water, dirt and weight resistant. Cows in indoor stables are in contact with excrement and urine. Furthermore, cows might lick the device. In addition, cows might weigh up to 1000 kg and might step on another cow’s device with a sharp hoof. Therefore, the device should be water and dirt resistant and be able to cope with large forces applied to its surface.

Deployment that maximizes use by cows. There might be little space in cow indoor stables for deploying large devices and providing the device with power might require additional infrastructure. In particular, power and cables are subject to the same robustness requirements mentioned in the previous point. As a consequence, the deployment of a receiver device should take into account the requirements for the device to function (e.g., access to power and connection to transmit data) as well as the practices of the animal species (e.g., placement in the stable where cows walk regularly).

5. System Design

In this section, we list the main design decisions we made for an automated lameness detection system. These decisions derive from the requirements elicited in the previous section.

Local computations. Streaming sensor data from a wearable device is (considerably) more energy costly than performing computations locally. In order to reduce the energy consumption of the device, we decided to perform computations and store computed results on the sensor device and transmit them once a day while the cow is milked.

Custom sensor device. We decided to design our own sensor device with a flat and lightweight form-factor in a robust plastic 3D printed material. The goal of our design was to minimize the risk that a cow injures itself, other cows in the stable (e.g., by bumping the device onto other cows) or the device itself.

Individualized tracking. Alsaaod et al. [20] showed that the variation of physical activity among cows is significantly larger than the variation of physical activity caused by lameness. This suggests that comparing a cow’s motion to a baseline established from other cows might not be informative for detecting lameness. The veterinarians we collaborated with stated that each cow has an individual walking pattern and reacts differently to pain. For this reason, we decided to create an individual gait profile for each cow and detect changes in its locomotion.

Receiver at milking robot. In order to minimize energy consumption, the data should be sent from the wearable device to a nearby receiver. The milking robot represents a good place to collect data recorded by a wearable device for two main reasons. First, cows usually visit the milking robot twice a day. Second, cows remain still in the same area for several minutes during milking, which represents an ideal opportunity for the data transmission.

6. Approach

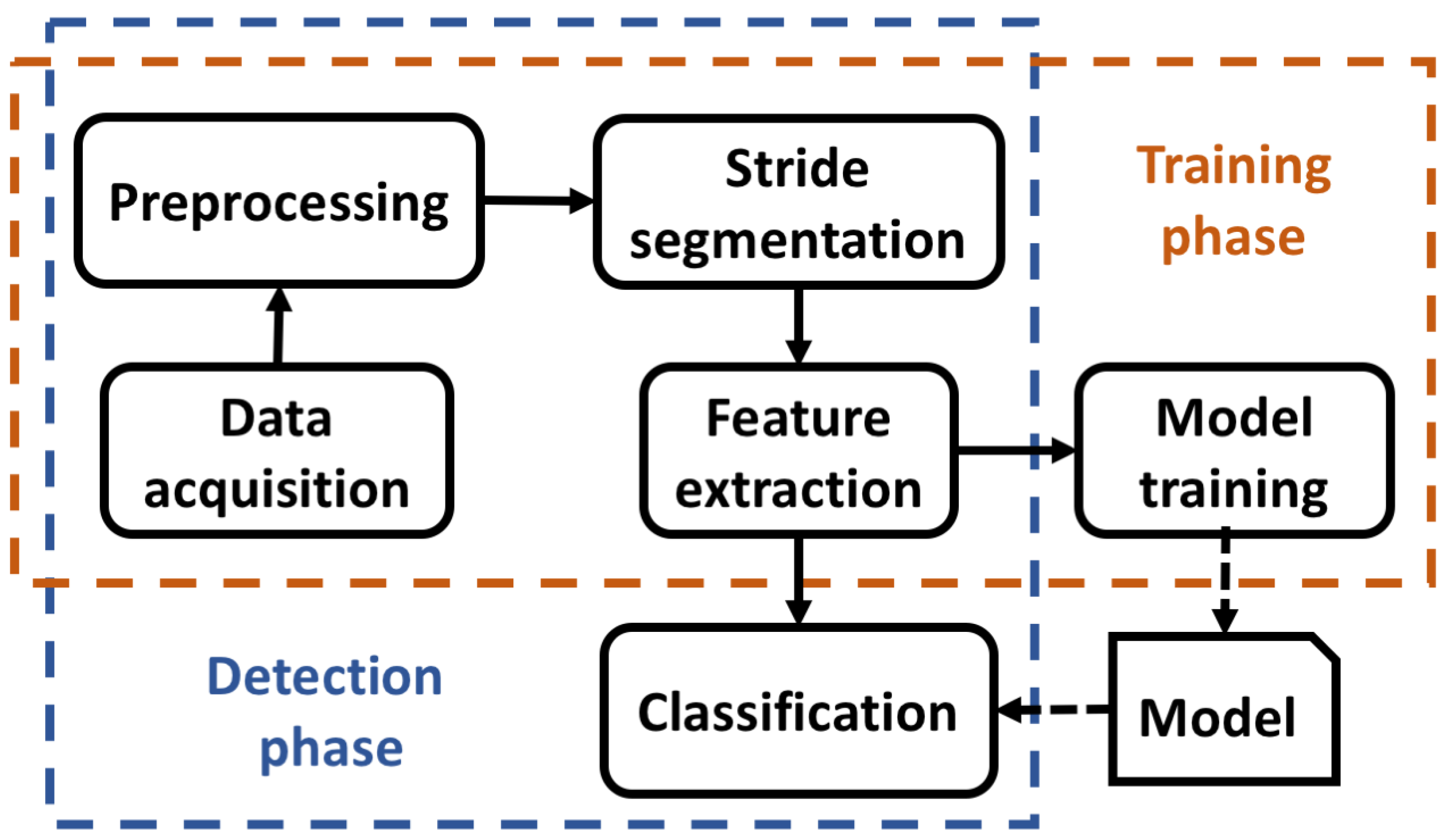

In this section, we describe our approach for anomaly detection of a cow’s gait, including the sensor device that senses motion data and the computations it performs. Our approach consists of two phases: the training phase and the detection phase. The training phase builds a model of the usual gait of a cow using a machine learning algorithm. The procedure requires a cow to be healthy during the first hours of use. The detection phase classifies the gait of a cow as normal or abnormal based on a comparison of its current gait with the model created during the training phase. Both the training phase and the detection phase require the following computations:

Data acquisition. The sensor signals are read from the sensor device and stored in memory.

Preprocessing. The data is organized in chunks and filtered to eliminate noise.

Stride segmentation. Cow strides are detected and their boundaries identified.

Feature extraction. Information describing a cow’s gait is extracted from each stride.

These computations produce a set of features (i.e., information describing the gait of a cow). This set of features is used during the training phase to train a machine learning model; we call this Model Training. During the detection phase, the already trained machine learning model assigns a label normal or abnormal to each set of features; we call this Classification. The following subsections describe each of these computations in more detail. Figure 3 shows an overview of the different computations performed by our approach.

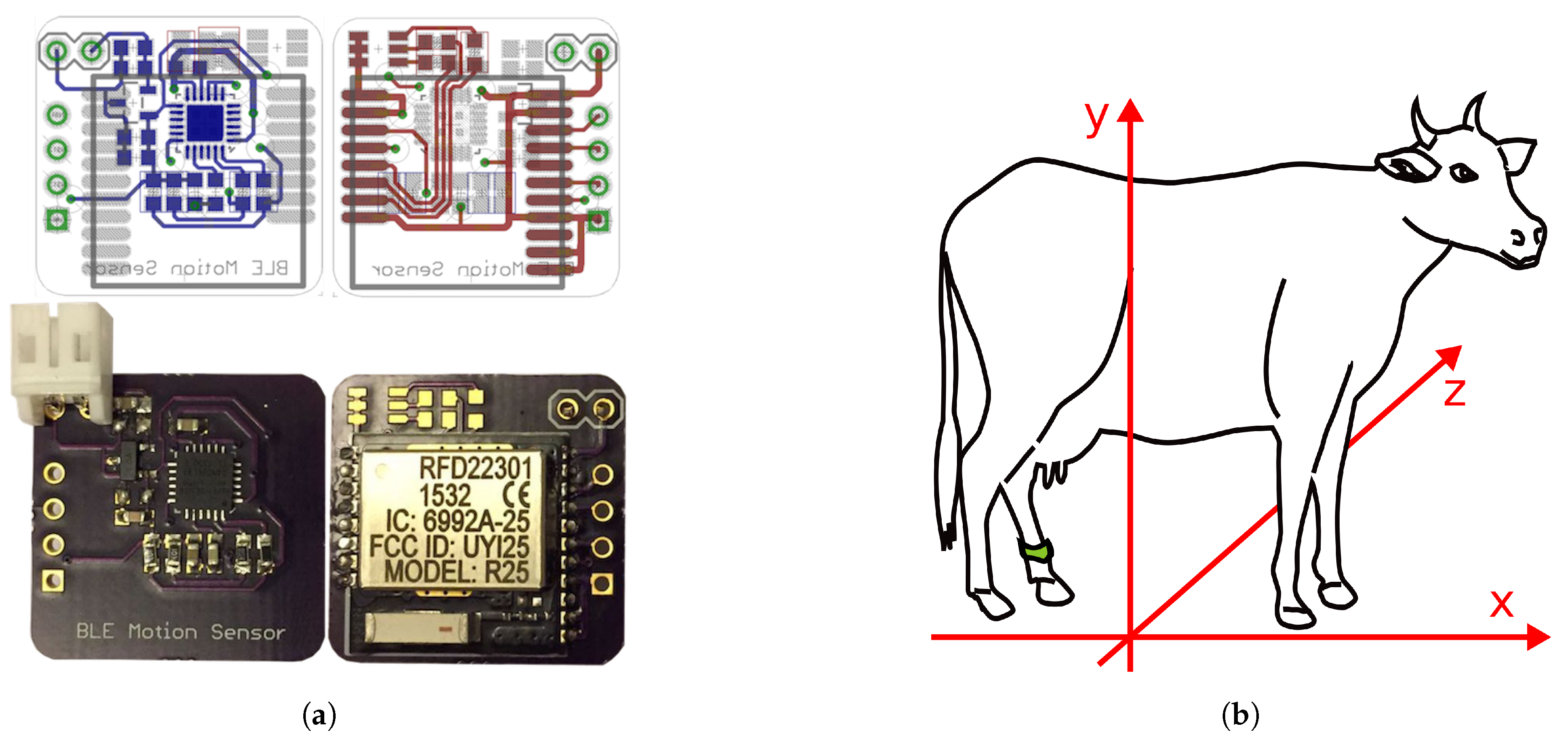

6.1. Sensor Device

The sensor device consists of an electronic device, a battery to power it and a 3D printed enclosure. The electronic device we designed is based on an ARM Cortex-M0 microcontroller, a 6-axis Inertial Measurement Unit (IMU) and a Bluetooth Low Energy (BLE) module. The ARM Cortex-M0 microcontroller operates at 16 MHz and is characterized by its low power consumption and small footprint. The device has 128 kB of flash memory and 8 kB of RAM. As a communication module, we decided to use BLE technology due to its low power consumption. We designed the electronic device as a two-layer printed circuit board (PCB), placing the motion sensor on the front side and the microcontroller and BLE module on the back side. The dimensions of the electronic device are 21 mm × 21 mm × 2.5 mm. Figure 4a shows the front and back sides of the device. The device functions at 3.3 V and is powered by a 2000 mAh battery.

The enclosure we designed is shown in Figure 1b. We designed the enclosure to have rounded corners and edges to avoid injuries to cows. The enclosure was printed with the Selective Laser Sintering (SLS) 3D printing technique. This printing technique produces robust and relatively lightweight objects with thin sides. We designed the enclosure in two parts such that a rubber seal can be fitted between them in order to make the enclosure waterproof and protect the electronic device and battery from urine and excrement. According to a veterinarian, the sensor enclosure is “robust, yet thin and lightweight for cows to wear” and “should be able to resist forces and strain caused by other cows stepping on it”.

6.2. Data Acquisition

The sensor device measures linear acceleration (without gravity) and orientation (yaw, pitch, roll) at 100 Hz along 3 axes (x, y, z). The accelerometer range is set to

g. Data is obtained with an analog-digital converter resolution of 16 bits. The orientation of the device is depicted in Figure 4b. Data is stored in the device’s flash memory and processed every 128 samples (1.28 s).

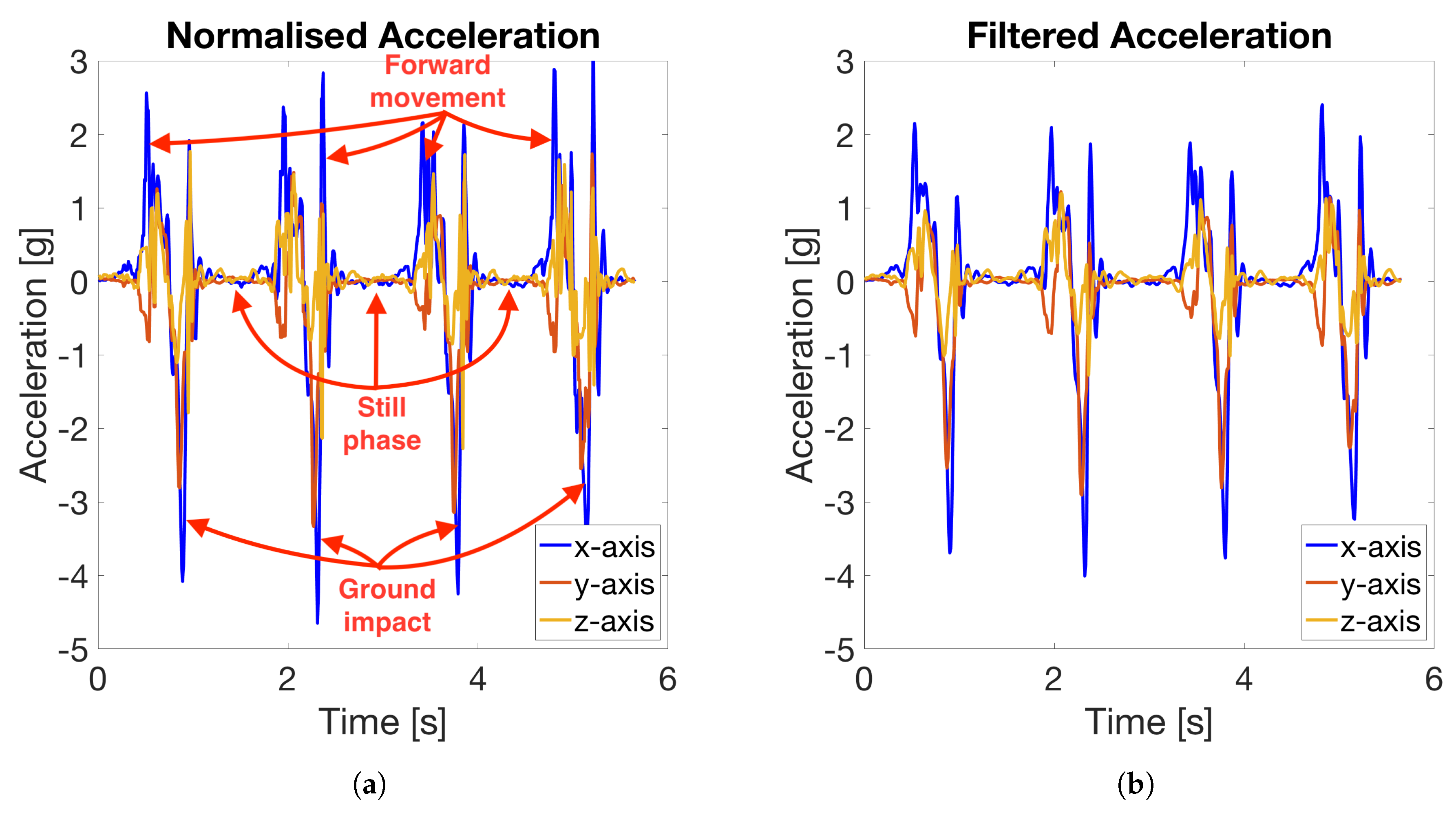

Figure 5a illustrates how the data acquired by the device correlate to different stride phases.

6.3. Preprocessing

In the preprocessing stage, we filter the signal acquired by the sensor in order to eliminate noise (i.e., information in the acquired signal that is not related to a cow’s gait). Noise might be introduced by the sensor device (e.g., shaking at high frequencies caused by the accelerometer) or by sudden movements such as when a cow is bumped by another cow in the stable. Therefore, we apply a first-order Butterworth low-pass IIR filter with a cutoff frequency of 20 Hz to the linear acceleration signal. This filter leaves frequencies in the range 0–20 Hz almost unmodified and attenuates frequencies higher than 20 Hz. A comparison of the signal before and after applying the filter is shown in Figure 5.
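As an illustration of the filtering step, the following is a minimal sketch of a first-order Butterworth low-pass filter derived with the bilinear transform. The 20 Hz cutoff and 100 Hz sampling rate come from the text; the function names and the sample-by-sample structure are assumptions made for this example and are not taken from the firmware of the sensor device.

```python
import math


def butter1_lowpass_coeffs(cutoff_hz, fs_hz):
    """Return (b0, b1, a1) of a first-order Butterworth low-pass filter.

    Obtained from H(s) = 1 / (1 + s / wc) via the bilinear transform with
    frequency pre-warping.
    """
    k = math.tan(math.pi * cutoff_hz / fs_hz)  # pre-warped cutoff
    b0 = k / (1.0 + k)
    a1 = (k - 1.0) / (k + 1.0)
    return b0, b0, a1


def lowpass(samples, cutoff_hz=20.0, fs_hz=100.0):
    """Filter a sequence with y[n] = b0*x[n] + b1*x[n-1] - a1*y[n-1]."""
    b0, b1, a1 = butter1_lowpass_coeffs(cutoff_hz, fs_hz)
    filtered = []
    prev_x = 0.0
    prev_y = 0.0
    for x in samples:
        y = b0 * x + b1 * prev_x - a1 * prev_y
        filtered.append(y)
        prev_x, prev_y = x, y
    return filtered
```

A recursive filter of this order needs only the previous input and output sample, which suits a microcontroller with a few kilobytes of RAM.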

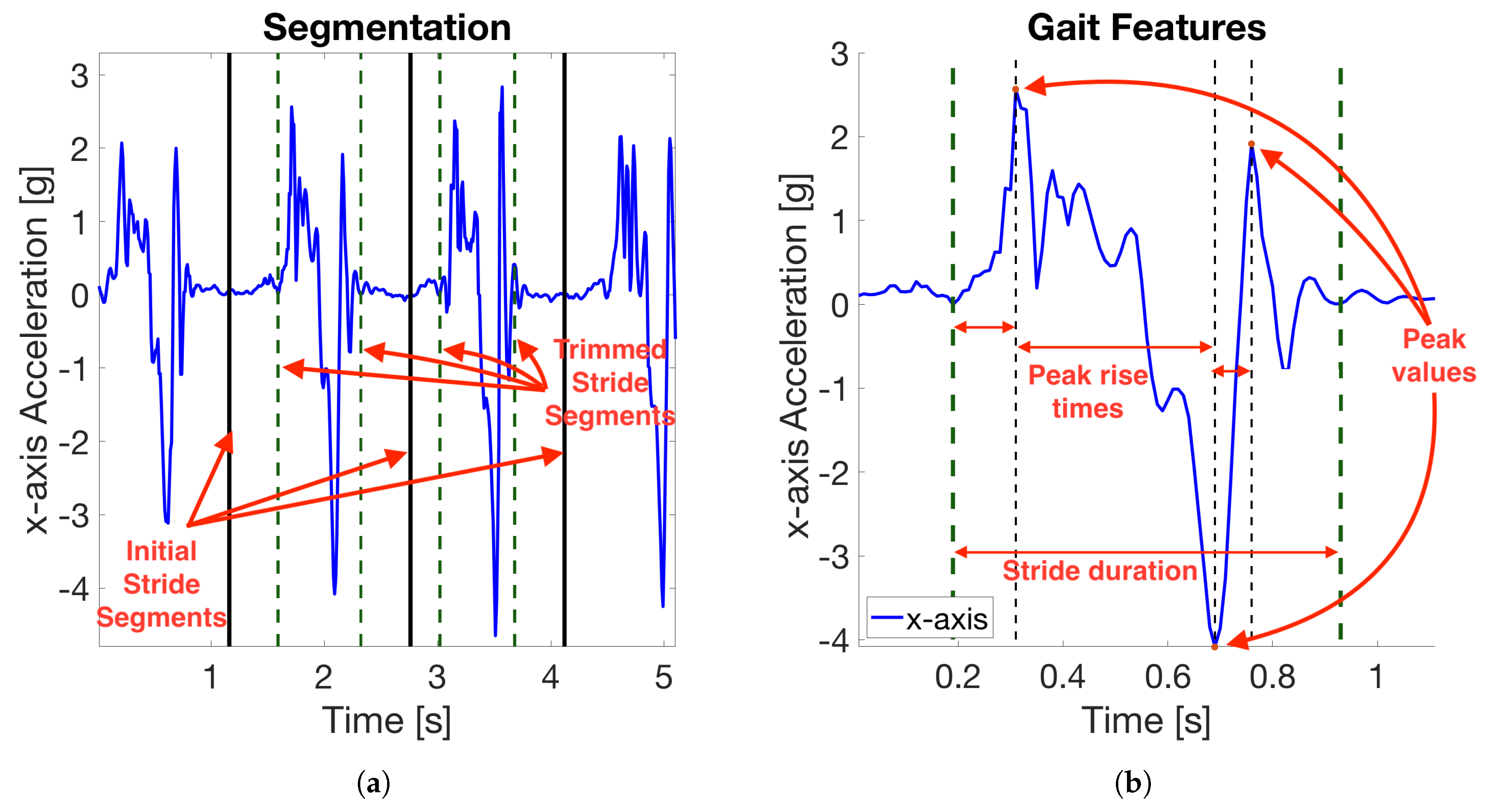

6.4. Stride Segmentation

The purpose of the Stride Segmentation is to detect the beginning and end of a stride. We segment the strides based on a peak detector. We use the acceleration along the x-axis based on our observation that this axis has the strongest acceleration range during strides (as can be seen in Figure 5). The Stride Segmentation is done in the following three steps:

1. Every stride has two upper peaks. We detect the highest peak with a peak detection algorithm. We ignore peaks that are less than 60 samples away from a previously detected peak. This also filters out periods when cows did not walk.

2. Every stride is preceded by periods of small variance in acceleration. We find these periods by searching for the 9-sample window with the smallest variance in acceleration among the 70 samples before and after the detected peak. We call the centers of these windows initial stride segments.

3. Between two initial stride segments, additional samples are included that might not belong to a stride. Therefore, we trim the stride by shifting the initial stride segments towards the peak detected in step 1. The initial stride segments are shifted until the standard deviation of a 6-sample window centered at the shifted stride segment is larger than a constant threshold. We determined this threshold empirically.

Figure 6a shows the linear acceleration along the x-axis of four consecutive strides with annotations pointing at initial and trimmed stride segments.
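The three segmentation steps can be sketched as follows. The window sizes (60, 70, 9 and 6 samples) are taken from the text. The peak detector itself is not specified there, so the local-maximum search, the `min_height` parameter and the `std_threshold` value are hypothetical choices made only for this sketch.

```python
from statistics import pstdev, pvariance


def find_stride_peaks(acc_x, min_height, min_distance=60):
    """Step 1: keep the highest local maxima that are >= min_distance apart."""
    candidates = [
        i for i in range(1, len(acc_x) - 1)
        if acc_x[i] >= min_height
        and acc_x[i] > acc_x[i - 1] and acc_x[i] >= acc_x[i + 1]
    ]
    kept = []
    for i in sorted(candidates, key=lambda idx: acc_x[idx], reverse=True):
        if all(abs(i - j) >= min_distance for j in kept):
            kept.append(i)
    return sorted(kept)


def quietest_center(acc_x, start, stop, width=9):
    """Step 2: center of the width-sample window with the smallest variance."""
    start, stop = max(start, 0), min(stop, len(acc_x))
    best_center, best_var = None, None
    for lo in range(start, stop - width + 1):
        var = pvariance(acc_x[lo:lo + width])
        if best_var is None or var < best_var:
            best_center, best_var = lo + width // 2, var
    return best_center


def trim_boundary(acc_x, boundary, peak, std_threshold, width=6):
    """Step 3: shift a boundary towards the peak until the signal gets 'busy'."""
    step = 1 if peak > boundary else -1
    half = width // 2
    i = boundary
    while i != peak:
        lo = max(i - half, 0)
        window = acc_x[lo:lo + width]
        if len(window) == width and pstdev(window) > std_threshold:
            break
        i += step
    return i


def segment_strides(acc_x, min_height, std_threshold, search=70):
    """Return (start, end) sample indices of each detected stride."""
    strides = []
    for peak in find_stride_peaks(acc_x, min_height):
        start = quietest_center(acc_x, peak - search, peak)
        end = quietest_center(acc_x, peak + 1, peak + 1 + search)
        if start is None or end is None:
            continue  # not enough samples around the peak
        strides.append((trim_boundary(acc_x, start, peak, std_threshold),
                        trim_boundary(acc_x, end, peak, std_threshold)))
    return strides
```

On a synthetic signal that is flat except for one triangular bump, the sketch returns a single stride whose boundaries sit just outside the bump.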

6.5. Feature Extraction

For each segmented stride, we compute a set of gait and statistical features. Gait features are measurements specific to a stride. Every stride is characterized by three peaks: two upper peaks and one lower peak. We first detect all three peaks. If any of the peaks cannot be found, we ignore the stride. This might happen if the cow briefly lifted a leg or got bumped by another cow. For each of the three peaks, we compute its peak value and rise time. The rise times are computed as the difference in samples to the previous peak. The rise time of the first peak is computed as the difference in samples to the first sample in the trimmed stride segment. In addition, the total duration of the stride is added to the feature set. Figure 6b illustrates how the gait features are computed based on the three peaks of a single stride.
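A minimal sketch of the gait feature computation for one axis of a trimmed stride is given below. It assumes that the three peak positions have already been located (the text does not specify how), that the peaks are processed in temporal order, and that duration is measured in samples; the feature names are hypothetical.

```python
def gait_features(stride, peak_indices):
    """Compute peak values, rise times and duration for one trimmed stride.

    stride: samples of one axis, starting at the trimmed stride boundary.
    peak_indices: positions of the three peaks within `stride`.
    Returns None when a peak is missing, so the stride can be ignored.
    """
    if len(peak_indices) != 3:
        return None
    features = {}
    previous = 0  # the first rise time is measured from the first sample
    for n, idx in enumerate(sorted(peak_indices), start=1):
        features[f"peak{n}_value"] = stride[idx]
        features[f"peak{n}_rise_time"] = idx - previous
        previous = idx
    features["duration"] = len(stride)
    return features
```

For example, a stride with peaks at samples 2, 5 and 8 yields rise times of 2, 3 and 3 samples.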

Statistical features are summary measures computed over the samples of a stride. We extract the following statistical features for every stride: mean, median, standard deviation, Zero Crossing Rate (ZCR), Peak-to-Peak amplitude (P2P), Root Mean Square (RMS) and Average Acceleration Variation (AAV). ZCR is a measure of the number of times a signal crosses the zero value. A high ZCR might indicate a highly intense or periodic activity. P2P is the difference between the maximum and minimum acceleration value in a stride and provides information about the intensity of a stride. RMS is the square root of the mean of the squared values in a stride. This measurement provides information about the amount of acceleration and variation in a stride. AAV is calculated as the sum of the absolute differences between consecutive samples in a stride, normalized by the number of samples. AAV provides an indication of how suddenly changes in acceleration happen within a stride. These measurements are commonly used for activity recognition applications and have recently been used for fall detection and gait analysis in humans [27,28].
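The statistical features described above can be sketched for a single signal as follows. Two details are assumptions of this sketch rather than statements from the text: the standard deviation is the population standard deviation, and ZCR is expressed as the fraction of consecutive sample pairs whose sign changes.

```python
import math
from statistics import mean, median, pstdev


def statistical_features(samples):
    """Return the seven statistical features of one stride signal."""
    n = len(samples)
    pairs = list(zip(samples, samples[1:]))
    crossings = sum(1 for a, b in pairs if (a < 0) != (b < 0))
    return {
        "mean": mean(samples),
        "median": median(samples),
        "std": pstdev(samples),
        "zcr": crossings / len(pairs),  # assumed normalization
        "p2p": max(samples) - min(samples),
        "rms": math.sqrt(sum(x * x for x in samples) / n),
        "aav": sum(abs(b - a) for a, b in pairs) / n,
    }
```

All seven values can be computed in a single pass over a stride apart from the median, which requires sorting.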

The gait and statistical features are listed in Table 2. Gait features are computed on linear acceleration, and statistical features are computed on linear acceleration, rotation and magnitude of acceleration. ZCR is only computed on the linear acceleration. This gives us a total of 21 gait features and 45 statistical features per stride. A window might contain several strides. We average the features extracted from the same window. A vector containing the 66 averaged features is called a stride instance.
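The stated totals can be checked by enumerating the features. The sketch below assumes that the seven gait features are computed on each of the three linear acceleration axes, and that the six statistical features other than ZCR are computed on three acceleration axes, three rotation axes and the acceleration magnitude. This reading reproduces the totals in the text, but the feature and signal names are hypothetical.

```python
from itertools import product

ACC_AXES = ["acc_x", "acc_y", "acc_z"]
ROT_AXES = ["yaw", "pitch", "roll"]
GAIT = ["peak1_value", "peak1_rise_time", "peak2_value", "peak2_rise_time",
        "peak3_value", "peak3_rise_time", "duration"]
STATS = ["mean", "median", "std", "p2p", "rms", "aav"]  # ZCR added separately

# Gait features: 7 per linear acceleration axis.
gait_names = [f"{axis}.{feat}" for axis, feat in product(ACC_AXES, GAIT)]

# Statistical features: 6 per signal on 7 signals, plus ZCR on 3 axes.
signals = ACC_AXES + ROT_AXES + ["acc_magnitude"]
stat_names = [f"{sig}.{feat}" for sig, feat in product(signals, STATS)]
stat_names += [f"{axis}.zcr" for axis in ACC_AXES]

feature_names = gait_names + stat_names
print(len(gait_names), len(stat_names), len(feature_names))
```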

6.5.1. Feature Normalization

After extracting these features, we normalize every feature in the stride instance to have zero mean and unit standard deviation. We do this by subtracting the mean and dividing by the standard deviation of that feature, both computed over the stride instances used during the training phase. This computation is required by the machine learning algorithm: without normalization, features with a large range of values could have a larger influence on the outcome of the classification.
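A minimal sketch of this normalization is shown below. The statistics are estimated once from the training instances and then reused for every later instance. The function names and the guard for constant features are assumptions of this example.

```python
from statistics import mean, pstdev


def fit_normalizer(training_instances):
    """Estimate per-feature mean and standard deviation from training data."""
    columns = list(zip(*training_instances))
    means = [mean(col) for col in columns]
    # A constant feature has zero deviation; use 1.0 to avoid dividing by zero.
    stds = [pstdev(col) or 1.0 for col in columns]
    return means, stds


def normalize(instance, means, stds):
    """Apply the training-phase statistics to one stride instance."""
    return [(x - m) / s for x, m, s in zip(instance, means, stds)]
```

Reusing the training statistics during the detection phase keeps new stride instances on the same scale as the model of normal gait.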

6.5.2. Feature Grouping

After extracting and normalizing the features, we group consecutive stride instances by computing the average of each feature over consecutive stride instances. Reducing the number of stride instances that have to be classified leads to fewer computations and a longer battery life. Furthermore, we observed that grouping stride instances increased the accuracy of the classification. We determined the number of stride instances per group empirically.
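The grouping step can be sketched as a feature-wise average over blocks of consecutive stride instances. The group size is a free parameter here, and the use of non-overlapping groups with incomplete trailing groups dropped is an assumption of this sketch.

```python
def group_instances(instances, group_size):
    """Average each feature over non-overlapping groups of stride instances."""
    groups = []
    for start in range(0, len(instances) - group_size + 1, group_size):
        chunk = instances[start:start + group_size]
        groups.append([sum(col) / group_size for col in zip(*chunk)])
    return groups
```

With a group size of 2, five stride instances are reduced to two grouped instances and the fifth is left out.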

6.6. Model Training and Classification

The Model Training step trains a machine learning algorithm to classify stride instances as normal or abnormal. It should be noted that Model Training and Classification are two different steps, as shown in our overview in Figure 3. We describe both steps in this section because they are closely related to each other.

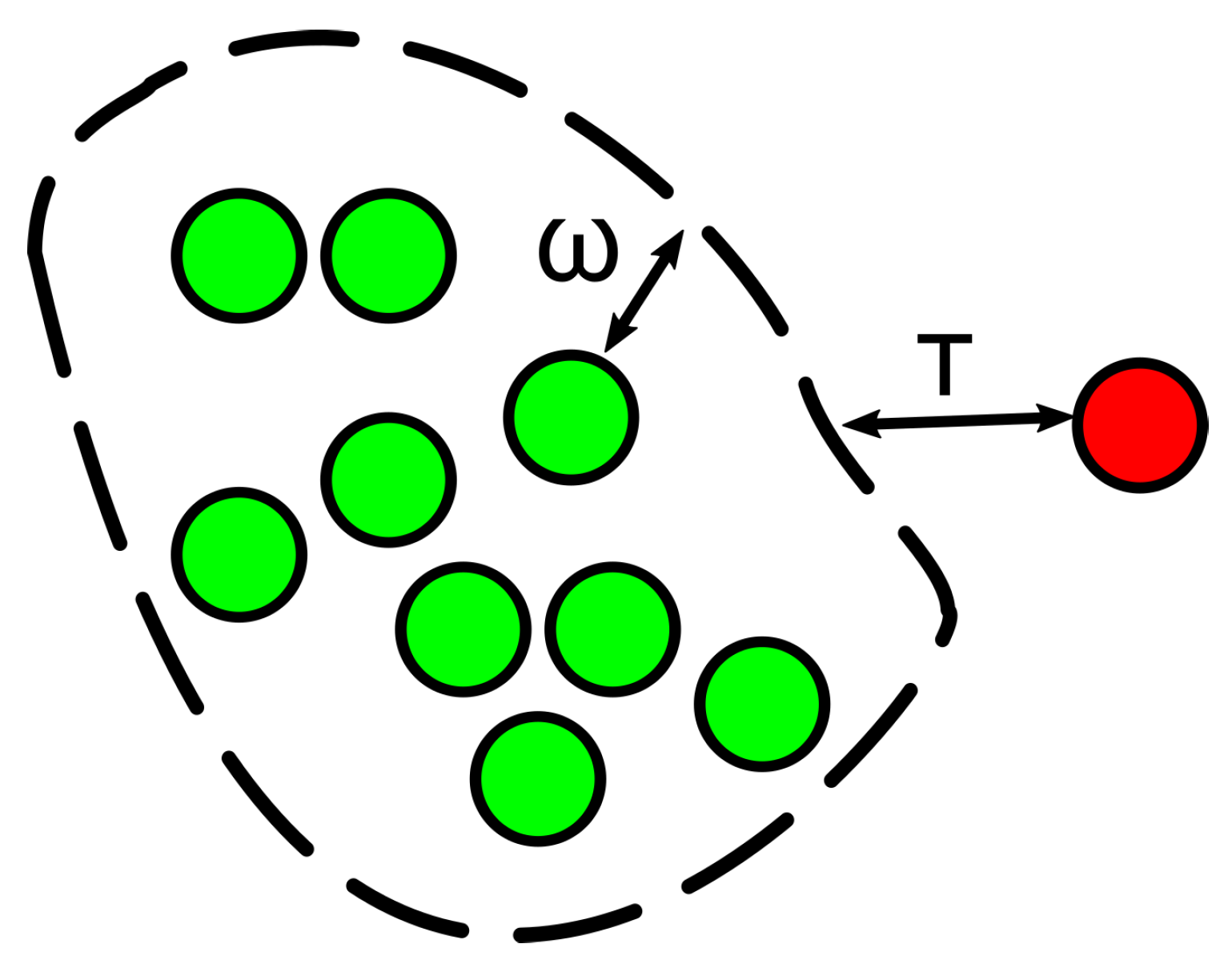

To classify stride instances into normal and abnormal, we use a Support Vector Machine (SVM) classifier. SVM is a classification algorithm that calculates a boundary that maximizes the distance between instances of two different classes in an N-dimensional space. A one-class SVM classifier finds a boundary around instances of one class and classifies new observations based on their distance to this boundary. We train a one-class SVM classifier using normal stride instances.

The boundary of the classifier is defined such that a fraction

of the instances is classified as

abnormal. The constant

is used to define how ‘compact’ the boundary around

normal stride instances is. A smaller

leads to more stride instances classified as

abnormal and a larger

leads to more instances classified as

normal. The one-class SVM computes a distance

for each new stride instance

. Our SVM classifier classifies stride instances as

normal if

or as

abnormal otherwise.

Figure 7 sketches the meaning of parameters

and

.

7. Evaluation

In this section, we present and discuss our results. Our approach intends to detect abnormal gait. Therefore, our positive class is the class of abnormal stride instances. We define the following variables:

- True Positive (TP): Number of abnormal stride instances classified as such.

- True Negative (TN): Number of normal stride instances classified as such.

- False Positive (FP): Number of normal stride instances classified as abnormal.

- False Negative (FN): Number of abnormal stride instances classified as normal.

We validated our results based on the metrics accuracy, specificity and sensitivity, defined as follows:

Accuracy: The ability of our approach to classify stride instances correctly. It answers the question: “what percent of the classified stride instances is correct?”. Accuracy is calculated as: $(TP + TN) / (TP + TN + FP + FN)$.

Specificity: The ability of our approach to identify normal stride instances. It answers the question: “when a cow walks normally, what percent of its stride instances does our approach classify as ‘normal’?”. This is also referred to as “true negative rate” and computed as: $TN / (TN + FP)$.

Sensitivity: The ability of our approach to identify abnormal stride instances. It answers the question: “when a cow walks abnormally, what percent of its stride instances does our approach classify as ‘abnormal’?”. This is also referred to as “true positive rate” and computed as: $TP / (TP + FN)$.

We computed the accuracy, specificity and sensitivity for a particular cow by means of the leave-one-out cross-validation technique as follows:

1. We trained the SVM algorithm with N-1 normal stride instances, where N is the total number of normal stride instances for a specific cow.

2. We used the model to classify the normal stride instance that was not used to train the algorithm and every abnormal stride instance.

3. We repeated steps 1 and 2 N times; each time we left out a different normal stride instance.

4. We averaged the accuracies, specificities and sensitivities computed in step 3.
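The cross-validation procedure above can be sketched independently of the classifier. In the sketch below, `fit` and `predict` are placeholders for any one-class model. The centroid-distance detector is a deliberately simple stand-in so that the example runs with the standard library only; it is not the one-class SVM used in our approach, and its `radius` parameter is hypothetical.

```python
from statistics import mean


def leave_one_out(normal, abnormal, fit, predict):
    """Average accuracy, specificity and sensitivity over N folds.

    fit(train) returns a model; predict(model, x) returns True if x is
    classified as abnormal (the positive class).
    """
    accuracies, specificities, sensitivities = [], [], []
    for i, held_out in enumerate(normal):
        model = fit(normal[:i] + normal[i + 1:])  # train on N-1 normal instances
        fp = 1 if predict(model, held_out) else 0
        tn = 1 - fp
        tp = sum(1 for x in abnormal if predict(model, x))
        fn = len(abnormal) - tp
        accuracies.append((tp + tn) / (tp + tn + fp + fn))
        specificities.append(tn / (tn + fp))
        sensitivities.append(tp / (tp + fn))
    return mean(accuracies), mean(specificities), mean(sensitivities)


def fit_centroid(train):
    """Stand-in model: the feature-wise mean of the training instances."""
    return [sum(col) / len(train) for col in zip(*train)]


def make_predict(radius):
    """Stand-in rule: abnormal if farther than `radius` from the centroid."""
    def predict(centroid, x):
        distance = sum((a - b) ** 2 for a, b in zip(x, centroid)) ** 0.5
        return distance > radius
    return predict
```

Each fold contains a single normal instance, so the per-fold specificity is either 0 or 1 and only its average over the N folds is informative.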

7.1. Results

Table 3 shows the accuracy, specificity and sensitivity of our approach for each cow. We used the parameters:

and

. We found these parameters empirically with the goal of maximizing the average accuracy of the classification for all cows. According to these results, our approach has an average accuracy of 91.1% (specificity: 91.6% and sensitivity: 74.2%). These results imply that our approach would classify 8.4% of the stride instances of cows walking normally as abnormal. In contrast, when cows do indeed walk abnormally, our approach would classify 74.2% of their stride instances as abnormal.

7.2. Discussion

The results we obtained suggest that our approach is able to detect a deviation from a cow’s usual walking pattern after this deviation occurs. These results meet the requirements of the system we propose, as stride classifications are meant to be tracked over time rather than used in isolation. In particular, missing some steps of a lame cow (i.e., a low sensitivity) is acceptable because a cow will perform several steps a day even if it is lame (e.g., to get access to food). The lowest sensitivity obtained by our approach was 62.5% (for cow #7). That means 62.5% of its steps would still be detected and could be used to determine whether the cow needs treatment. Furthermore, our approach would be able to emit alerts if a cow should reach an advanced stage of a lameness disease that prevents it from walking altogether. On the other hand, a low specificity could make veterinarians lose trust in our system. The lowest specificity we obtained was 81.7% (for cow #6). This indicates that 18.3% of its strides would be detected as abnormal when the cow is actually walking normally. However, an 18.3% rate of abnormal step detections would be usual for this cow, and would suddenly rise to 70.6% when it becomes lame.

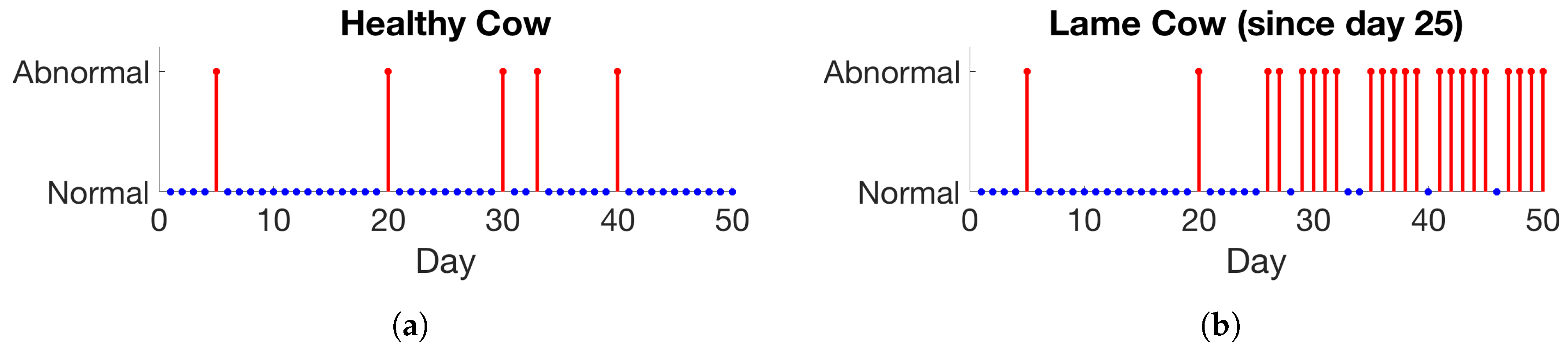

Figure 8 shows an example illustrating how the gait for a particular cow could be shown by a user interface.
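Tracking classifications over time, as discussed above, could be sketched as a comparison between the daily fraction of abnormal stride instances and the usual fraction for the same cow. The baseline of 18.3% and the lame-state value of roughly 70% are taken from the discussion of cow #6. The `margin` parameter and the alerting rule are hypothetical and would have to be chosen together with veterinarians.

```python
def abnormal_fraction(labels):
    """Fraction of stride instances labeled 'abnormal' in a list of labels."""
    return sum(1 for label in labels if label == "abnormal") / len(labels)


def needs_examination(daily_labels, baseline_fraction, margin=0.25):
    """Flag a cow whose daily abnormal fraction clearly exceeds its baseline."""
    return abnormal_fraction(daily_labels) > baseline_fraction + margin


usual_day = ["abnormal"] * 20 + ["normal"] * 80   # close to the 18.3% baseline
lame_day = ["abnormal"] * 70 + ["normal"] * 30    # close to the lame-state rate
print(needs_examination(usual_day, 0.183), needs_examination(lame_day, 0.183))
```

Comparing against a per-cow baseline means that a cow with a comparatively high rate of false positives does not trigger alerts as long as that rate stays stable.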

We argue that the deviation from normal gait caused by lameness is more pronounced than the change in gait caused by the plastic block which we used to obtain a ground truth set in our study. This is because cows suffering from lameness will try to avoid pain by bearing as little weight as possible on the affected hoof. As a consequence, lame cows perform considerably shorter strides or stop using one limb altogether. This causes an asymmetry in the gait, which is observable visually. In contrast, the change caused by the plastic block is more subtle. We were not able to assess visually whether a cow was walking with a plastic block or not by looking at its gait. As a consequence, we believe our approach might be more accurate at detecting deviations in gait caused by lameness than those caused by a plastic block.

8. Ethical Considerations

Our research required cows to take part in an experiment. In order to ensure an ethically appropriate treatment of the cows during our experiment, we designed it based on the ethical guidelines proposed by Mancini [6] as follows:

Respecting and caring for every participant without discrimination. The participants of this experiment were cows of different ages and breeds. We did not harm any of them or discriminate in the selection of the specific cow subjects or in the treatment they received during the experiment.

Garnering participants’ mediated and contingent consent. We conducted this experiment together with a professional veterinarian team who are the legal representatives of the cows that participated in the experiment. Both veterinarians know the needs and welfare requirements of these cows and gave us their consent to conduct the experiment. Furthermore, they accompanied and supported us throughout the entire experiment to ensure these requirements were met.

Doing research that is relevant to participants and consistent with their welfare. The results of our research suggest that it is possible to automatically detect a condition that is painful for cows and highly detrimental to their health (e.g., might lead to death if not treated early enough). Therefore, our research has the potential to benefit the individual cows that participated in the experiment, as well as other cows. This research was conducted in the natural environment of the participating cows, an indoor stable located in the outskirts of Munich, Germany.

Avoiding research procedures that may be harmful to participants. According to the veterinarians that supported us throughout this study, attaching a sensor device and plastic block to cows and encouraging them to walk for less than 10 min did not cause any lasting harm to these cows. Veterinarians trimmed the cows’ claws before attaching the plastic block to ensure the block was placed and fitted properly to the claw. Trimming cow claws is a procedure undertaken to maintain a healthy hoof condition and prevent injury and disease. In addition, we limited the walking sessions to a maximum of 10 min per day and continued the data recording on a different day in order to reduce the level of fatigue caused to the cows.

Assessing research proposals and obtaining expert support. The cow interventions performed in this study were done by professional veterinarians and were approved by the ethics committee of the Ludwig Maximilian University (LMU) in Munich, Germany to ensure no harm was done to the cows.

9. Conclusions

We presented and evaluated a system to detect changes in cows’ usual gait that might occur due to a lameness-related disease. Our approach considers the differences in gait between cows by comparing a cow’s walking pattern to a baseline established for that particular cow during the first hours of use. Our system could be used by veterinarians to keep track of the health condition of the cows in a herd. In particular, veterinarians might decide to examine a cow if the number of detected abnormal stride instances considerably exceeds the usual number for that particular cow.

In the future, our approach will have to be validated in a longer-term field study. In particular, it would have to be studied how veterinarians use our system in practice (e.g., how much they trust our system even in the presence of false positive detections). Furthermore, the usage of our system should be validated over a longer period of time to study possible long-term effects that we did not observe during our first study. Finally, the energy consumption and battery duration of the wearable device should be optimized in a way that does not affect the accuracy of the stride classification.