A State-of-the-Art Review of Augmented Reality in Engineering Analysis and Simulation

Abstract

1. Introduction

2. Overview of Computer-Aided Technologies in Engineering Analysis and Simulation

2.1. Traditional Computer-Aided Engineering Analysis and Simulation Technologies

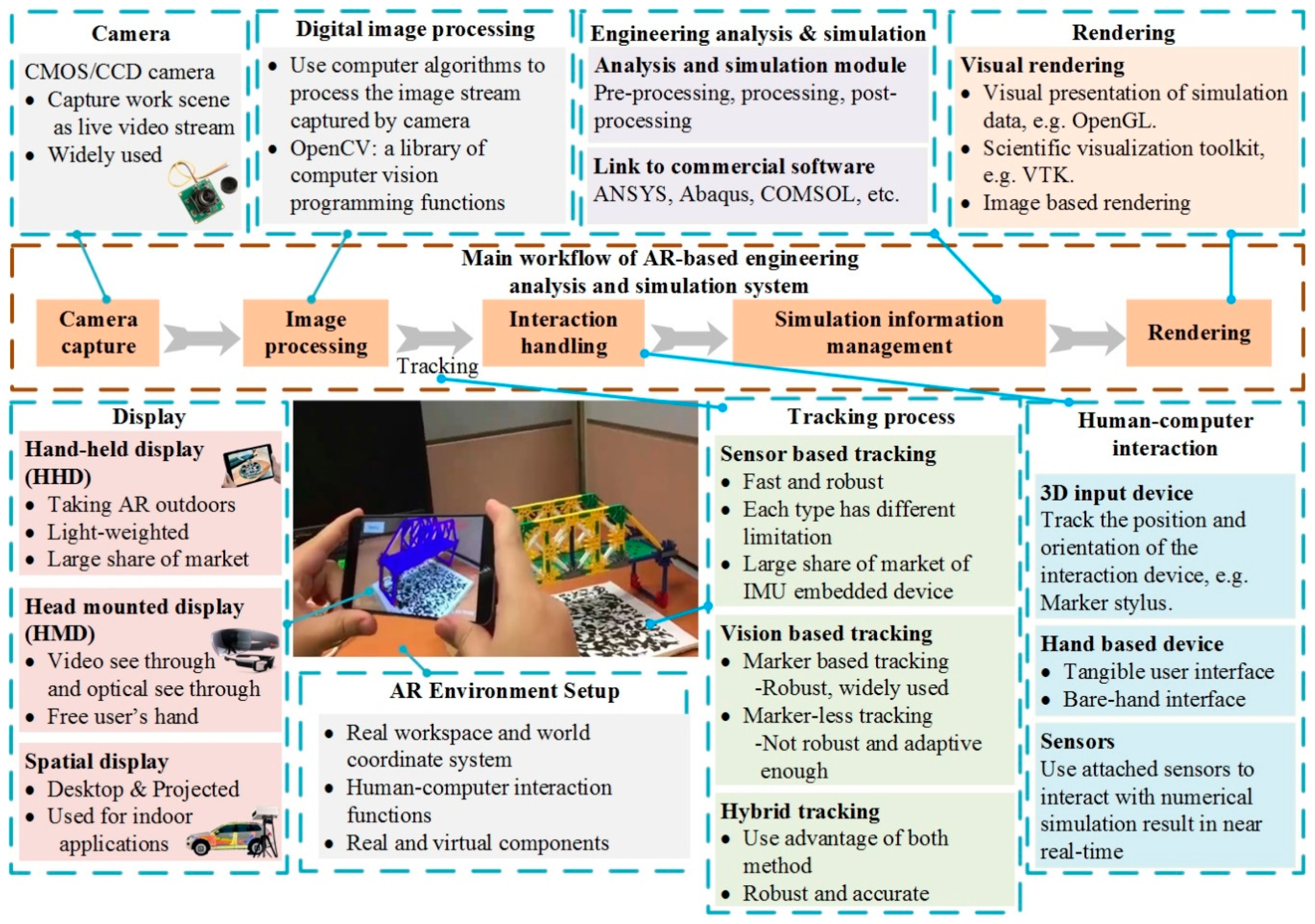

2.2. Basic Architecture of AR-Based Systems

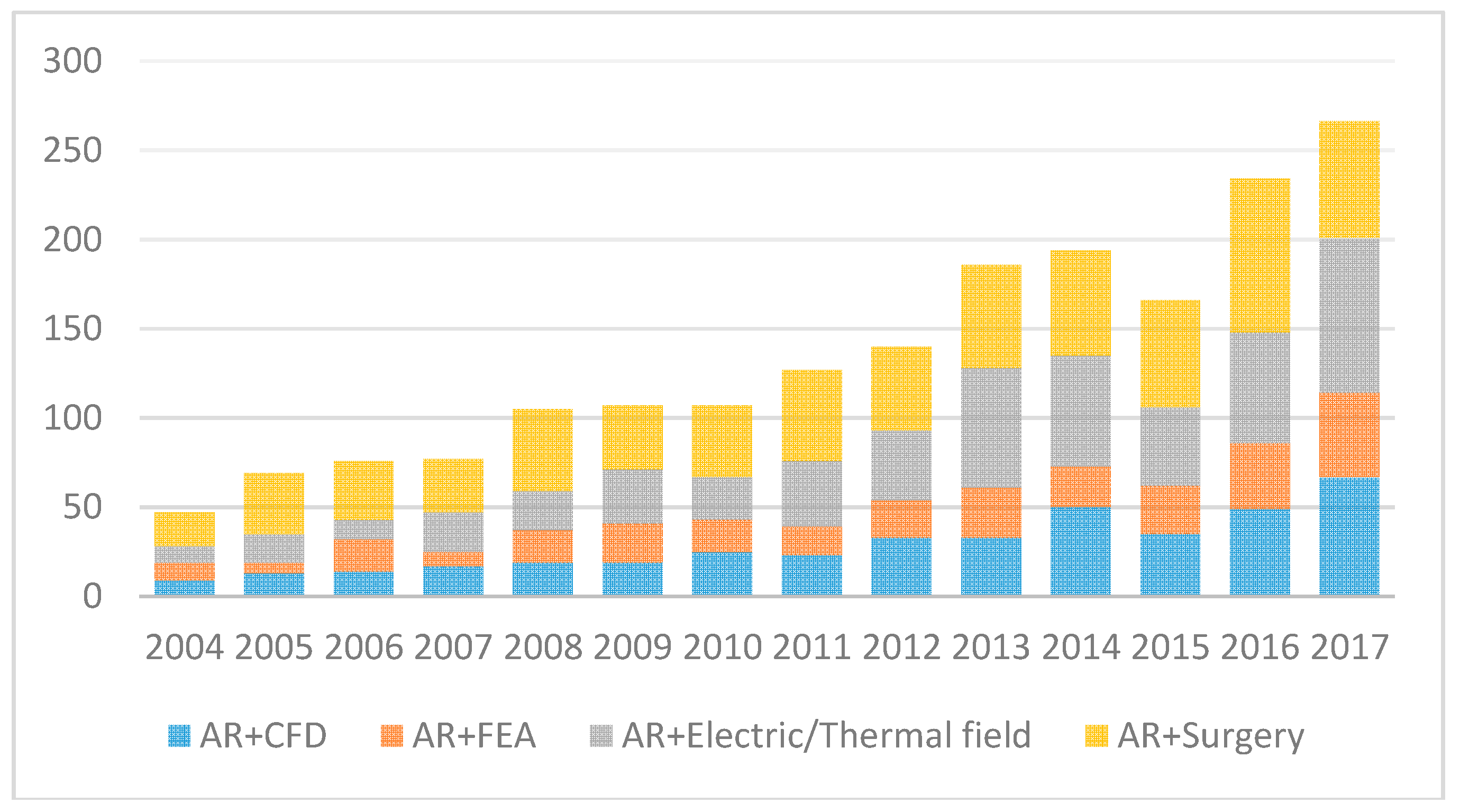

2.3. The Trend of Using AR in Engineering Analysis and Simulation

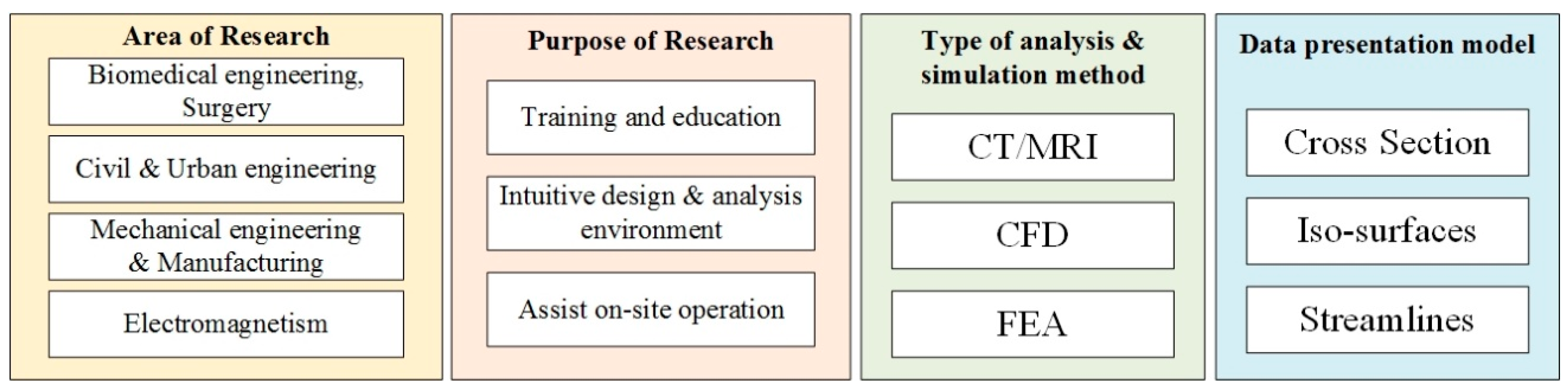

3. Current AR Applications in Engineering Analysis and Simulation

4. Techniques Used for AR Applications in Engineering Analysis and Simulation

4.1. Tracking

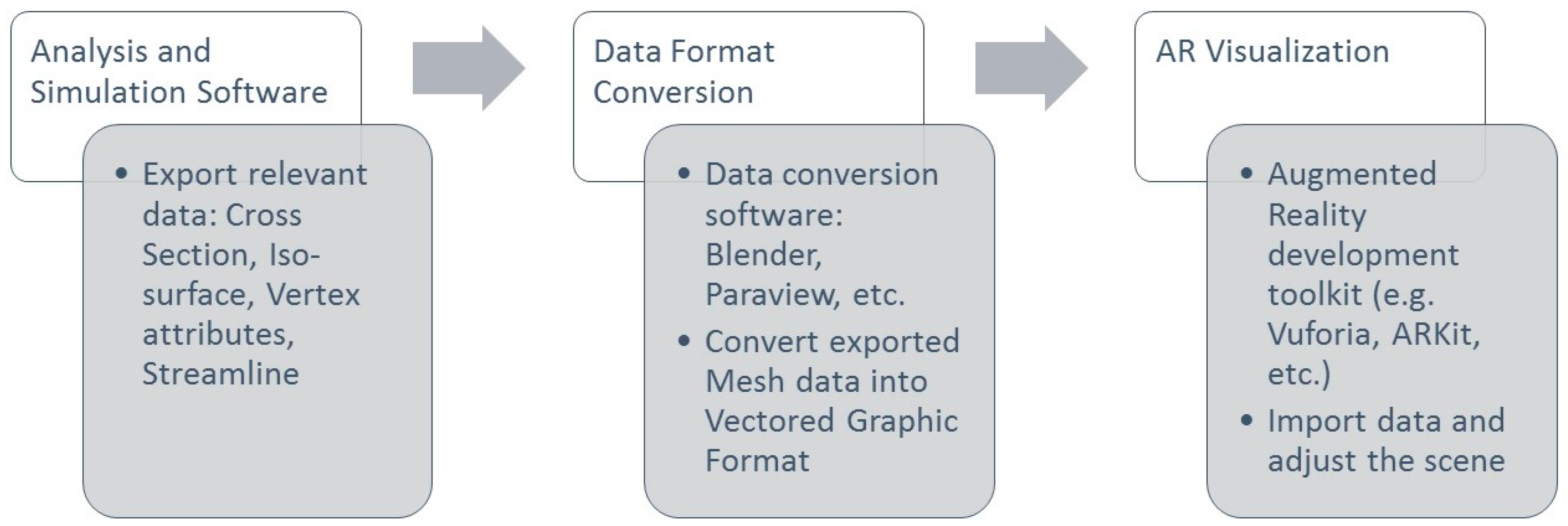

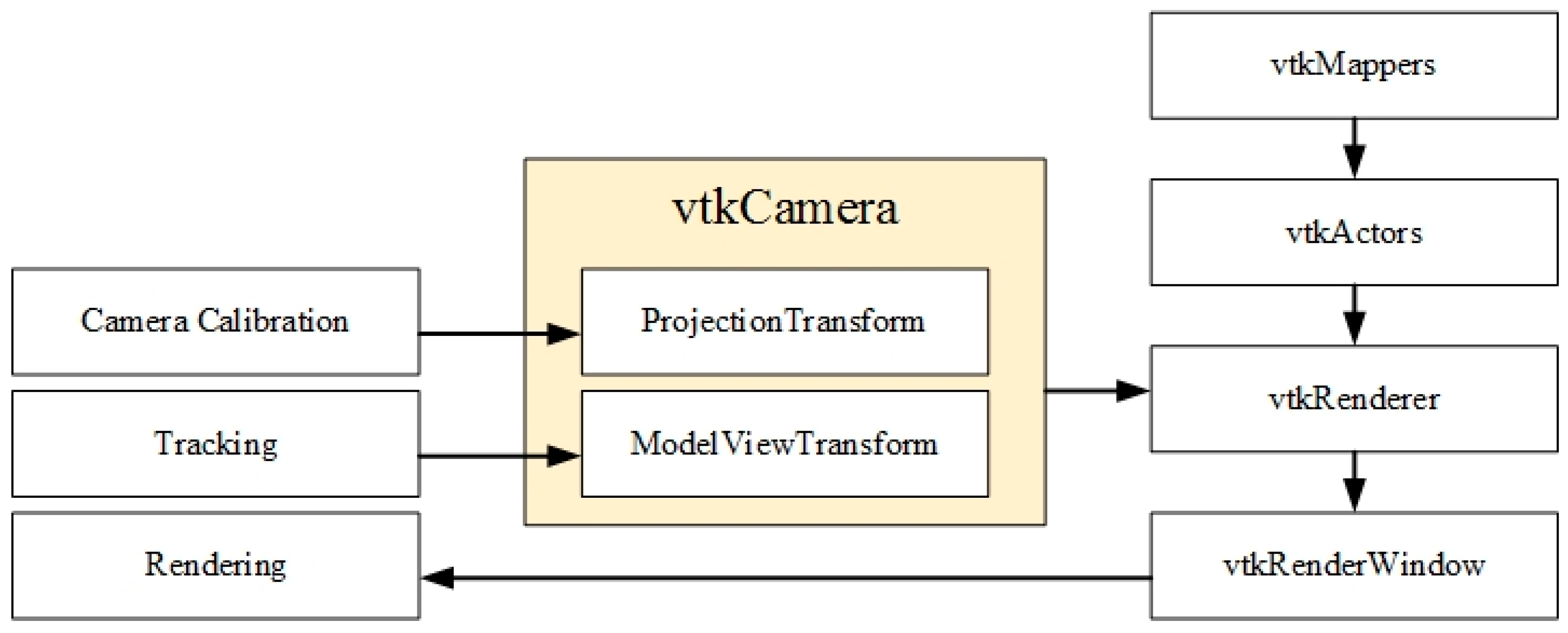

4.2. Result Visualization

4.3. Interaction and Collaboration

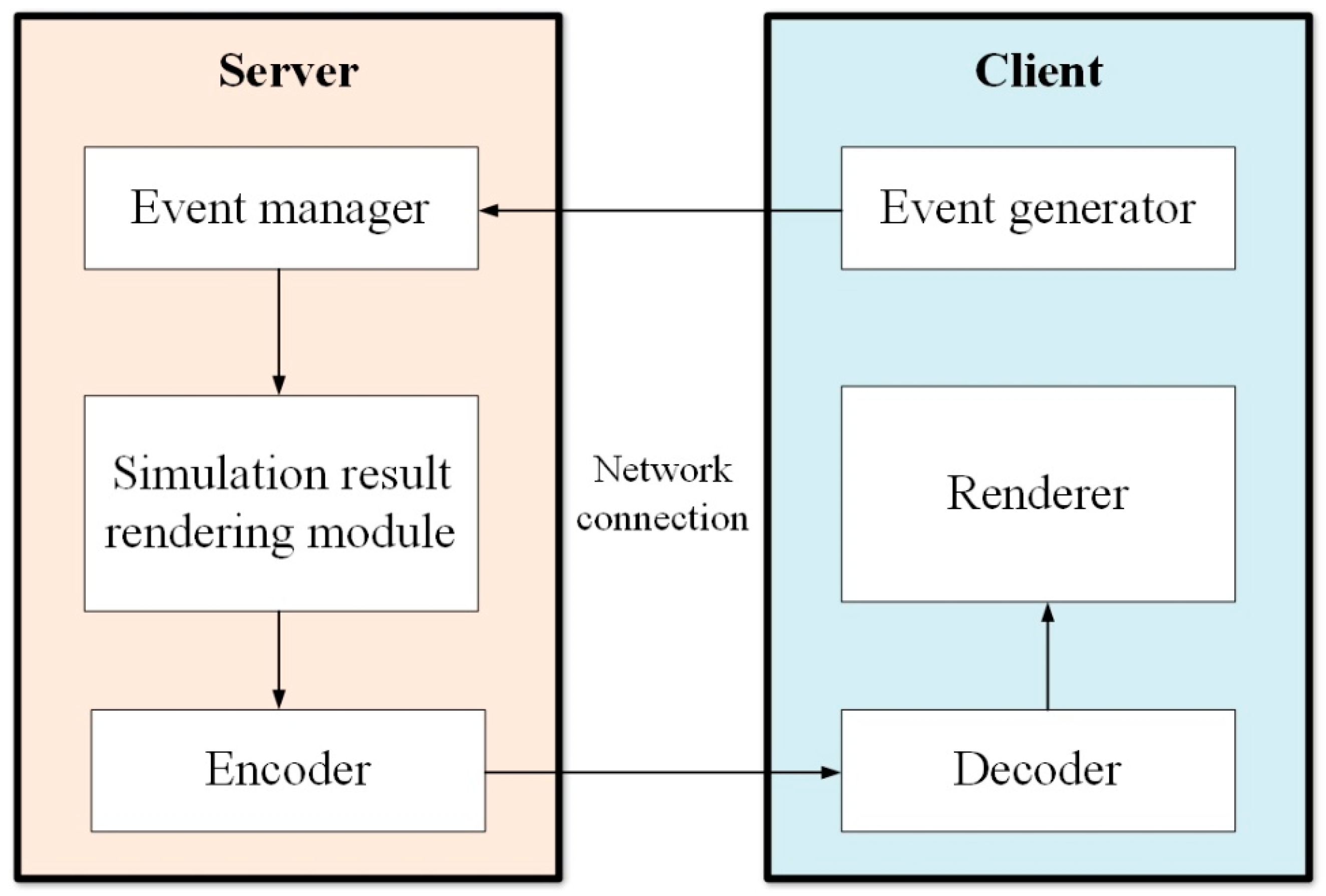

4.4. Client-Server Network Architecture

5. Conclusions and Potential Future Directions

- Robust tracking performance in the engineering scenario for enabling accurate registration of virtual contents;

- Accurate visualization techniques for numerical simulation results allowing engineers to evaluate the problems efficiently; and

- Intuitive interaction methods for volumetric data exploration.

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Moaveni, S. Finite Element Analysis Theory and Application with ANSYS. Available online: https://s3.amazonaws.com/academia.edu.documents/39672343/FINITE_ELEMENT_ANALYSIS.pdf?AWSAccessKeyId=AKIAIWOWYYGZ2Y53UL3A&Expires=1503551514&Signature=8llCti61A3gvv0%2BneizhZ%2Bo0egk%3D&response-content-disposition=inline%3B%20filename%3DFINITE_ELEMENT_ANALYSIS.pdf (accessed on 6 April 2007).

- Reddy, J.N. An Introduction to the Finite Element Method, 3rd ed.; McGraw-Hill: New York, NY, USA, 2006.

- Azuma, R.T. A survey of augmented reality. Presence: Teleoperators Virtual Environ. 1997, 6, 355–385.

- Behzadan, A.H.; Dong, S.; Kamat, V.R. Augmented reality visualization: A review of civil infrastructure system applications. Adv. Eng. Inform. 2015, 29, 252–267.

- Barsom, E.Z.; Graafland, M.; Schijven, M.P. Systematic review on the effectiveness of augmented reality applications in medical training. Surg. Endosc. 2016, 30, 4174.

- Nee, A.Y.C.; Ong, S.K. Virtual and Augmented Reality Applications in Manufacturing; Springer-Verlag: London, UK, 2004.

- Dong, F.H. Virtual reality research on vibration characteristics of long-span bridges with considering vehicle and wind loads based on neural networks and finite element method. Neural Comput. Appl. 2017.

- Lian, D.; Oraifige, I.A.; Hall, F.R. Real-time finite element analysis with virtual hands: An introduction. In Proceedings of the WSCG POSTER, International Conference in Central Europe on Computer Graphics, Visualization and Computer Vision, Plzen, Czech Republic, 2–6 February 2004.

- Quesada, C.; González, D.; Alfaro, I.; Cueto, E.; Huerta, A.; Chinesta, F. Real-time simulation techniques for augmented learning in science and engineering. Vis. Comput. Int. J. Comput. Graph. 2016, 32, 1465–1479.

- Ferrise, F.; Bordegoni, M.; Marseglia, L.; Fiorentino, M.; Uva, A.E. Can Interactive Finite Element Analysis Improve the Learning of Mechanical Behavior of Materials? A Case Study. Comput. Aided Des. Appl. 2015, 12, 45–51.

- Rose, D.; Bidmon, K.; Ertl, T. Intuitive and Interactive Modification of Large Finite Element Models. Available online: http://www.visus.uni-stuttgart.de/uploads/tx_vispublications/rbevis04.pdf (accessed on 18 July 2017).

- Yagawa, G.; Kawai, H.; Yoshimura, S.; Yoshioka, A. Mesh-invisible finite element analysis system in a virtual reality environment. Comput. Model. Simul. Eng. 1996, 3, 289–314.

- Yeh, T.P.; Vance, J.M. Combining MSC/NASTRAN, sensitivity methods, and virtual reality to facilitate interactive design. Finite Elem. Anal. Des. 1997, 26, 161–169.

- Scherer, S.; Wabner, M. Advanced visualization for finite elements analysis in virtual reality environments. Int. J. Interact. Des. Manuf. 2008, 2, 169–173.

- Neugebauer, R.; Weidlich, D.; Scherer, S.; Wabner, M. Glyph based representation of principal stress tensors in virtual reality environments. Prod. Eng. 2008, 2, 179–183.

- Buchau, A.; Rucker, W.M. Analysis of a Three-Phase Transformer using COMSOL Multiphysics and a Virtual Reality Environment. In Proceedings of the 2011 COMSOL Conference, Stuttgart, Germany, 26–28 October 2011.

- Avila, L.S.; Barre, S.; Blue, R.; Geveci, B.; Henderson, A.; Hoffman, W.A.; King, B.; Law, C.C.; Martin, K.M.; Schroeder, W.J. The VTK User’s Guide, 5th ed.; Kitware: New York, NY, USA, 2010.

- Hafner, M.; Schoning, M.; Antczak, M.; Demenko, A.; Hameyer, K. Interactive postprocessing in 3D electromagnetics. IEEE Trans. Magn. 2010, 46, 3437–3440.

- Schoning, M.; Hameyer, K. Applying virtual reality techniques to finite element solutions. IEEE Trans. Magn. 2008, 44, 1422–1425.

- Hambli, R.; Chamekh, A.; Salah, H.B.H. Real-time deformation of structure using finite element and neural networks in virtual reality applications. Finite Elem. Anal. Des. 2006, 42, 985–991.

- Santhanam, A.; Fidopiastis, C.; Hamza-Lup, F.; Rolland, J.P.; Imielinska, C. Physically-based deformation of high-resolution 3D lung models for augmented reality based medical visualization. Available online: http://www.felixlup.net/papers/2004_MICCAI_Hamza-Lup.pdf (accessed on 18 July 2017).

- Tzong-Ming, C.; Tu, T.H. A fast parametric deformation mechanism for virtual reality applications. Comput. Ind. Eng. 2009, 57, 520–538.

- Connell, M.; Tullberg, O. A framework for immersive FEM visualisation using transparent object communication in a distributed network environment. Adv. Eng. Softw. 2002, 33, 453–459.

- Liverani, A.; Kuester, F. Towards Interactive Finite Element Analysis of Shell Structures in Virtual Reality. In Proceedings of the 1999 International Conference on Information Visualisation, London, UK, 14–16 July 1999.

- Ingrassia, T.; Cappello, F. VirDe: A new virtual reality design approach. Int. J. Interact. Des. Manuf. 2009, 3, 1–11.

- Ong, S.K.; Yuan, M.L.; Nee, A.Y.C. Augmented reality applications in manufacturing: A survey. Int. J. Prod. Res. 2008, 46, 2707–2742.

- Ong, S.K.; Huang, J.M. Structure design and analysis with integrated AR-FEA. CIRP Ann. Manuf. Tech. 2017, 66, 149–152.

- Zhou, F.; Duh, H.B.L.; Billinghurst, M. Trends in augmented reality tracking, interaction and display: A review of ten years of ISMAR. In Proceedings of the 7th IEEE/ACM International Symposium on Mixed and Augmented Reality, Cambridge, UK, 15–18 September 2008.

- Daponte, P.; De Vito, L.; Picariello, F.; Riccio, M. State of the art and future developments of the Augmented Reality for measurement applications. Measurement 2014, 57, 53–70.

- Kato, H.; Billinghurst, M. Marker tracking and HMD calibration for a video-based augmented reality conferencing system. In Proceedings of the 2nd IEEE and ACM International Workshop on Augmented Reality, San Francisco, CA, USA, 20–21 October 1999.

- Salah, Z.; Preim, B.; Rose, G. An approach for enhanced slice visualization utilizing augmented reality: Algorithms and applications. In Proceedings of the 3rd Palestinian International Conference on Computer and Information Technology (PICCIT), Palestine Polytechnic University, 9–11 March 2010.

- Sutherland, C.; Hashtrudi-Zaad, K.; Sellens, R.; Abolmaesumi, P.; Mousavi, P. An augmented reality haptic training simulator for spinal needle procedures. IEEE Trans. Biomed. Eng. 2013, 60, 3009–3018.

- Carmo, M.B.; Cláudio, A.P.; Ferreira, A.; Afonso, A.P.; Redweik, P.; Catita, C.; Brito, M.C.; Pedrosa, J.N. Visualization of solar radiation data in augmented reality. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Munich, Germany, 10–12 September 2014.

- Carmo, M.B.; Cláudio, A.P.; Ferreira, A.; Afonso, A.P.; Redweik, P.; Catita, C.; Meireles, C. Augmented reality for support decision on solar radiation harnessing. In Proceedings of the Computação Gráfica e Interação (EPCGI), Covilhã, Portugal, 24–25 November 2016.

- Heuveline, V.; Ritterbusch, S.; Ronnas, S. Augmented reality for urban simulation visualization. In Proceedings of the First International Conference on Advanced Communications and Computation, Barcelona, Spain, 23–28 October 2011.

- Ritterbusch, S.; Ronnås, S.; Waltschläger, I.; Heuveline, V. Augmented reality visualization of numerical simulations in urban environments. Int. J. Adv. Syst. Meas. 2013, 6, 26–39.

- Broll, W.; Lindt, I.; Ohlenburg, J.; Wittkämper, M.; Yuan, C.; Novotny, T.; Strothman, A. Arthur: A collaborative augmented environment for architectural design and urban planning. J. Virtual Real. Broadcast. 2004, 1, 1–10.

- Fukuda, T.; Mori, K.; Imaizumi, J. Integration of CFD, VR, AR and BIM for design feedback in a design process: An experimental study. In Proceedings of the 33rd International Conference on Education and Research in Computer Aided Architectural Design Europe (eCAADe33), Oulu, Finland, 22–26 August 2015.

- Yabuki, N.; Furubayashi, S.; Hamada, Y.; Fukuda, T. Collaborative visualization of environmental simulation result and sensing data using augmented reality. In Proceedings of the International Conference on Cooperative Design, Visualization and Engineering, Osaka, Japan, 2–5 September 2012.

- Bernasconi, A.; Kharshiduzzaman, M.; Anodio, L.F.; Bordegoni, M.; Re, G.M.; Braghin, F.; Comolli, L. Development of a monitoring system for crack growth in bonded single-lap joints based on the strain field and visualization by augmented reality. J. Adhes. 2014, 90, 496–510.

- Huang, J.M.; Ong, S.K.; Nee, A.Y.C. Real-time finite element structural analysis in augmented reality. Adv. Eng. Softw. 2015, 87, 43–56.

- Huang, J.M.; Ong, S.K.; Nee, A.Y.C. Visualization and interaction of finite element analysis in augmented reality. Comput. Aided Des. 2017, 84, 1–14.

- Paulus, C.J.; Haouchine, N.; Cazier, D.; Cotin, S. Augmented reality during cutting and tearing of deformable objects. In Proceedings of the 2015 IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Fukuoka, Japan, 29 September–3 October 2015.

- Fiorentino, M.; Monno, G.; Uva, A. Interactive “touch and see” FEM Simulation using Augmented Reality. Int. J. Eng. Educ. 2009, 25, 1124–1128.

- Fiorentino, M.; Monno, G.; Uva, A. Tangible Interfaces for Augmented Engineering Data Management. Available online: https://www.intechopen.com/books/augmented-reality/tangible-interfaces-for-augmented-engineering-data-management/ (accessed on 1 January 2010).

- Niebling, F.; Griesser, R.; Woessner, U. Using Augmented Reality and Interactive Simulations to Realize Hybrid Prototypes. Available online: https://www.researchgate.net/profile/Uwe_Woessner/publication/220844660_Using_Augmented_Reality_and_Interactive_Simulations_to_Realize_Hybrid_Prototypes/links/0c96052a9c0905da4e000000.pdf (accessed on 18 July 2017).

- Uva, A.E.; Cristiano, S.; Fiorentino, M.; Monno, G. Distributed design review using tangible augmented technical drawings. Comput. Aided Des. 2010, 42, 364–372.

- Uva, A.E.; Fiorentino, M.; Monno, G. Augmented reality integration in product development. In Proceedings of the International Conference on Innovative Methods in Product Design (IMProVe 2011), Venice, Italy, 15–17 June 2011.

- Valentini, P.P.; Pezzuti, E. Design and interactive simulation of cross-axis compliant pivot using dynamic splines. Int. J. Interact. Des. Manuf. 2013, 7, 261–269.

- Valentini, P.P.; Pezzuti, E. Dynamic splines for interactive simulation of elastic beams in augmented reality. In Proceedings of the IMPROVE 2011 International Congress, Venice, Italy, 15–17 June 2011.

- Ibáñez, M.B.; Di Serio, Á.; Villarán, D.; Kloos, C.D. Experimenting with electromagnetism using augmented reality: Impact on flow student experience and educational effectiveness. Comput. Educ. 2014, 71, 1–13.

- Mannuß, F.; Rubel, J.; Wagner, C.; Bingel, F.; Hinkenjann, A. Augmenting magnetic field lines for school experiments. In Proceedings of the 2011 10th IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Basel, Switzerland, 26–29 October 2011.

- Matsutomo, S.; Mitsufuji, K.; Hiasa, Y.; Noguchi, S. Real time simulation method of magnetic field for visualization system with augmented reality technology. IEEE Trans. Magn. 2013, 49, 1665–1668.

- Matsutomo, S.; Miyauchi, T.; Noguchi, S.; Yamashita, H. Real-time visualization system of magnetic field utilizing augmented reality technology for education. IEEE Trans. Magn. 2012, 48, 531–534.

- Liao, H.; Inomata, T.; Sakuma, I.; Dohi, T. Three-dimensional augmented reality for MRI-guided surgery using integral videography autostereoscopic image overlay. IEEE Trans. Biomed. Eng. 2010, 57, 1476–1486.

- Haouchine, N.; Dequidt, J.; Berger, M.O.; Cotin, S. Single view augmentation of 3D elastic objects. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Munich, Germany, 10–12 September 2014.

- Haouchine, N.; Dequidt, J.; Kerrien, E.; Berger, M.O.; Cotin, S. Physics-based augmented reality for 3D deformable object. In Proceedings of the Eurographics Workshop on Virtual Reality Interaction and Physical Simulation, Darmstadt, Germany, 6–7 December 2012.

- Haouchine, N.; Dequidt, J.; Peterlik, I.; Kerrien, E.; Berger, M.O.; Cotin, S. Image-guided simulation of heterogeneous tissue deformation for augmented reality during hepatic surgery. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Adelaide, Australia, 1–4 October 2013.

- Kong, S.H.; Haouchine, N.; Soares, R.; Klymchenko, A.; Andreiuk, B.; Marques, B.; Marescaux, J. Robust augmented reality registration method for localization of solid organs' tumors using CT-derived virtual biomechanical model and fluorescent fiducials. Surg. Endosc. 2017, 31, 2863–2871.

- Tawara, T.; Ono, K. A framework for volume segmentation and visualization using Augmented Reality. In Proceedings of the 2010 IEEE Symposium on 3D User Interfaces (3DUI), Waltham, MA, USA, 20–21 March 2010.

- Kaladji, A.; Dumenil, A.; Castro, M.; Cardon, A.; Becquemin, J.P.; Bou-Saïd, B.; Haigron, P. Prediction of deformations during endovascular aortic aneurysm repair using finite element simulation. Comput. Med. Imaging Graph. 2013, 37, 142–149.

- Ha, H.G.; Hong, J. Augmented Reality in Medicine. Hanyang Med. Rev. 2016, 36, 242–247.

- Clothier, M.; Bailey, M. Augmented reality visualization tool for Kings Stormwater Bridge. In Proceedings of the IASTED International Conference on Visualization, Imaging and Image Processing, Marbella, Spain, 6–8 September 2004.

- Underkoffler, J.; Ullmer, B.; Ishii, H. Emancipated pixels: Real-world graphics in the luminous room. In Proceedings of the 26th Annual Conference on Computer Graphics and Interactive Techniques, Los Angeles, CA, USA, 8–13 August 1999.

- Lakaemper, R.; Malkawi, A.M. Integrating robot mapping and augmented building simulation. J. Comput. Civ. Eng. 2009, 23, 384–390.

- Malkawi, A.M.; Srinivasan, R.S. A new paradigm for Human-Building Interaction: The use of CFD and Augmented Reality. Autom. Constr. 2005, 14, 71–84.

- Golparvar-Fard, M.; Ham, Y. Automated diagnostics and visualization of potential energy performance problems in existing buildings using energy performance augmented reality models. J. Comput. Civ. Eng. 2013, 28, 17–29.

- Ham, Y.; Golparvar-Fard, M. EPAR: Energy Performance Augmented Reality models for identification of building energy performance deviations between actual measurements and simulation results. Energy Build. 2013, 63, 15–28.

- Graf, H.; Santos, P.; Stork, A. Augmented reality framework supporting conceptual urban planning and enhancing the awareness for environmental impact. In Proceedings of the 2010 Spring Simulation Multiconference, Orlando, FL, USA, 11–15 April 2010.

- Weidlich, D.; Scherer, S.; Wabner, M. Analyses using VR/AR visualization. IEEE Comput. Graph. Appl. 2008, 28, 84–86.

- Issartel, P.; Guéniat, F.; Ammi, M. Slicing techniques for handheld augmented reality. In Proceedings of the 2014 IEEE Symposium on 3D User Interfaces (3DUI), Minneapolis, MN, USA, 29–30 March 2014.

- Naets, F.; Cosco, F.; Desmet, W. Improved human-computer interaction for mechanical systems design through augmented strain/stress visualisation. Int. J. Intell. Eng. Inform. 2017, 5, 50–66.

- Moreland, J.; Wang, J.; Liu, Y.; Li, F.; Shen, L.; Wu, B.; Zhou, C. Integration of Augmented Reality with Computational Fluid Dynamics for Power Plant Training. In Proceedings of the International Conference on Modeling, Simulation and Visualization Methods, Las Vegas, NV, USA, 22–25 July 2013.

- Regenbrecht, H.; Baratoff, G.; Wilke, W. Augmented reality projects in the automotive and aerospace industries. IEEE Comput. Graph. Appl. 2005, 25, 48–56.

- Weidenhausen, J.; Knoepfle, C.; Stricker, D. Lessons learned on the way to industrial augmented reality applications, a retrospective on ARVIKA. Comput. Graph. 2003, 27, 887–891.

- Buchau, A.; Rucker, W.M.; Wössner, U.; Becker, M. Augmented reality in teaching of electrodynamics. Int. J. Comput. Math. Electr. Electron. Eng. 2009, 28, 948–963.

- Silva, R.L.; Rodrigues, P.S.; Oliveira, J.C.; Giraldi, G. Augmented Reality for Scientific Visualization: Bringing DataSets inside the RealWorld. In Proceedings of the Summer Computer Simulation Conference (SCSC 2004), Montreal, Québec, Canada, 20–24 July 2004.

- Engelke, T.; Keil, J.; Rojtberg, P.; Wientapper, F.; Schmitt, M.; Bockholt, U. Content first: A concept for industrial augmented reality maintenance applications using mobile devices. In Proceedings of the 6th ACM Multimedia Systems Conference, Portland, OR, USA, 18–20 March 2015.

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis. 2004, 60, 91–110.

- Shi, J.; Tomasi, C. Good features to track. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 1994 (CVPR’94), Seattle, WA, USA, 21–23 June 1994.

- Gauglitz, S.; Höllerer, T.; Turk, M. Evaluation of interest point detectors and feature descriptors for visual tracking. Int. J. Comput. Vis. 2011, 94, 335–360.

- Mikolajczyk, K.; Schmid, C. Scale and affine invariant interest point detectors. Int. J. Comput. Vis. 2004, 60, 63–86.

- Mikolajczyk, K.; Schmid, C. A performance evaluation of local descriptors. IEEE Trans. Pattern Anal. Mach. Intell. 2005, 27, 1615–1630.

- Moreels, P.; Perona, P. Evaluation of features detectors and descriptors based on 3D objects. Int. J. Comput. Vis. 2007, 73, 263–284.

- Koch, R.; Evers-Senne, J.F.; Schiller, I.; Wuest, H.; Stricker, D. Architecture and tracking algorithms for a distributed mobile industrial AR system. In Proceedings of the 5th International Conference on Computer Vision Systems (ICVS07), Bielefeld, Germany, 21–24 March 2007.

- Ufkes, A.; Fiala, M. A markerless augmented reality system for mobile devices. In Proceedings of the International Conference on Computer and Robot Vision (CRV2013), Regina, Saskatchewan, Canada, 17–19 May 2013.

- Yu, L.; Li, W.K.; Ong, S.K.; Nee, A.Y.C. Enhanced Planar Pattern Tracking for an Outdoor Augmented Reality System. Int. J. Comput. Electr. Autom. Control Inf. Eng. 2017, 11, 125–136.

- Yu, L.; Ong, S.K.; Nee, A.Y.C. A tracking solution for mobile augmented reality based on sensor-aided marker-less tracking and panoramic mapping. Multimed. Tools Appl. 2016, 75, 3199–3220.

- Gammeter, S.; Gassmann, A.; Bossard, L.; Quack, T.; Van Gool, L. Server-side object recognition and client-side object tracking for mobile augmented reality. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition Workshops (CVPRW2010), San Francisco, CA, USA, 13–18 June 2010.

- Ha, J.; Cho, K.; Rojas, F.A.; Yang, H.S. Real-time scalable recognition and tracking based on the server-client model for mobile augmented reality. In Proceedings of the IEEE International Symposium on VR Innovation (ISVRI2011), Singapore, 19–20 March 2011.

- Jung, J.; Ha, J.; Lee, S.W.; Rojas, F.A.; Yang, H.S. Efficient mobile AR technology using scalable recognition and tracking based on server-client model. Comput. Graph. 2012, 36, 131–139.

- Mulloni, A.; Grubert, J.; Seichter, H.; Langlotz, T.; Grasset, R.; Reitmayr, G.; Schmalstieg, D. Experiences with the impact of tracking technology in mobile augmented reality evaluations. In Proceedings of the MobileHCI 2012 Workshop MobiVis, San Francisco, CA, USA, 21–24 September 2012.

- Helfrich-Schkarbanenko, A.; Heuveline, V.; Reiner, R.; Ritterbusch, S. Bandwidth-efficient parallel visualization for mobile devices. In Proceedings of the 2nd International Conference on Advanced Communications and Computation, Venice, Italy, 21–26 October 2012.

- Moser, M.; Weiskopf, D. Interactive volume rendering on mobile devices. Vision Model. Vis. 2008, 8, 217–226.

- Anzt, H.; Augustin, W.; Baumann, M.; Bockelmann, H.; Gengenbach, T.; Hahn, T.; Ritterbusch, S. HiFlow3: A Flexible and Hardware-Aware Parallel Finite Element Package. Available online: https://journals.ub.uni-heidelberg.de/index.php/emcl-pp/article/view/11675 (accessed on 18 July 2017).

- Schroeder, W.J.; Lorensen, B.; Martin, K. The Visualization Toolkit: An Object-Oriented Approach to 3D Graphics, 4th ed.; Kitware: New York, NY, USA, 2006.

- Bruno, F.; Caruso, F.; De Napoli, L.; Muzzupappa, M. Visualization of industrial engineering data in augmented reality. J. Vis. 2006, 9, 319–329.

- De Pascalis, F. VTK Remote Rendering of 3D Laser Scanner Ply files for Android Mobile Devices. Available online: http://hdl.handle.net/10380/3458 (accessed on 5 May 2014).

- Augmented Reality Sandbox. Available online: idav.ucdavis.edu/~okreylos/ResDev/SARandbox (accessed on 14 July 2017).

- Lukosch, S.; Billinghurst, M.; Alem, L.; Kiyokawa, K. Collaboration in augmented reality. Comput. Support. Coop. Work 2015, 24, 515–525.

- Fuhrmann, A.; Loffelmann, H.; Schmalstieg, D.; Gervautz, M. Collaborative visualization in augmented reality. IEEE Comput. Graph. Appl. 1998, 18, 54–59.

- Rekimoto, J. Transvision: A hand-held augmented reality system for collaborative design. In Proceedings of the International Conference on Virtual Systems and Multimedia, Gifu, Japan, 18–20 September 1996.

- Dong, S.; Behzadan, A.H.; Chen, F.; Kamat, V.R. Collaborative visualization of engineering processes using tabletop augmented reality. Adv. Eng. Softw. 2013, 55, 45–55.

- Benko, H.; Wilson, A.D.; Zannier, F. Dyadic projected spatial augmented reality. In Proceedings of the 27th Annual ACM Symposium on User Interface Software and Technology, Honolulu, HI, USA, 5–8 October 2014.

- Boulanger, P. Application of augmented reality to industrial tele-training. In Proceedings of the First Canadian Conference on Computer and Robot Vision, London, ON, Canada, 17–19 May 2004.

- Shen, Y.; Ong, S.K.; Nee, A.Y.C. Product information visualization and augmentation in collaborative design. Comput. Aided Des. 2008, 40, 963–974.

- Ong, S.K.; Shen, Y. A mixed reality environment for collaborative product design and development. CIRP Ann. Manuf. Tech. 2009, 58, 139–142.

- Gauglitz, S.; Nuernberger, B.; Turk, M.; Höllerer, T. In touch with the remote world: Remote collaboration with augmented reality drawings and virtual navigation. In Proceedings of the 20th ACM Symposium on Virtual Reality Software and Technology, Edinburgh, UK, 11–13 November 2014.

- Tan, W.; Liu, H.; Dong, Z.; Zhang, G.; Bao, H. Robust monocular SLAM in dynamic environments. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR2013), Adelaide, Australia, 1–4 October 2013.

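| Area of Research | Research Group | Purpose of Research |
|---|---|---|
| Biomedical engineering & surgery | Liao et al. [55] | Assist on-site operation |
| Biomedical engineering & surgery | Haouchine et al. [56,57,58] | Assist on-site operation |
| Biomedical engineering & surgery | Kong et al. [59] | Assist on-site operation |
| Biomedical engineering & surgery | Salah et al. [31] | Intuitive analysis environment |
| Biomedical engineering & surgery | Tawara and Ono [60] | Intuitive analysis environment |
| Biomedical engineering & surgery | Kaladji et al. [61] | Intuitive analysis environment |
| Biomedical engineering & surgery | Sutherland et al. [32] | Training and education |
| Biomedical engineering & surgery | ARMed [62] | Training and education |
| Civil & urban engineering | Clothier et al. [63] | Assist on-site operation |
| Civil & urban engineering | Underkoffler et al. [64] | Assist on-site operation |
| Civil & urban engineering | Malkawi et al. [65,66] | Intuitive analysis environment |
| Civil & urban engineering | Carmo et al. [33,34] | Intuitive analysis environment |
| Civil & urban engineering | Heuveline et al. [35,36] | Intuitive analysis environment |
| Civil & urban engineering | Golparvar-Fard et al. [67,68] | Intuitive analysis environment |
| Civil & urban engineering | Graf et al. [69] | Intuitive design environment |
| Civil & urban engineering | Broll et al. [37] | Intuitive design environment |
| Civil & urban engineering | Fukuda et al. [38,39] | Intuitive design environment |
| Mechanical engineering & manufacturing | Weidlich et al. [70] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | NUS AR group [27,41,42] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Paulus et al. [43] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Uva et al. [44,45,47,48] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Issartel et al. [71] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Bernasconi et al. [40] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Valentini et al. [49,50] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Naets et al. [72] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Moreland et al. [73] | Intuitive analysis environment |
| Mechanical engineering & manufacturing | Regenbrecht et al. [74] | Intuitive design environment |
| Mechanical engineering & manufacturing | Niebling et al. [46] | Intuitive design environment |
| Mechanical engineering & manufacturing | Weidenhausen et al. [75] | Intuitive design environment |
| Electromagnetism | Buchau et al. [76] | Training and education |
| Electromagnetism | Ibáñez et al. [51] | Training and education |
| Electromagnetism | Silva et al. [77] | Intuitive analysis environment |
| Electromagnetism | Mannuß et al. [52] | Intuitive analysis environment |
| Electromagnetism | Matsutomo et al. [53,54] | Intuitive analysis environment |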

| Research Group | Visualization Method | Characteristics | Limitations |
|---|---|---|---|
| Liao et al. [55] | Stereoscopic image overlay | Increases accuracy and safety in surgery through image overlay navigation | The visualization equipment lacks contrast under surgical lighting conditions |
| Haouchine et al. [56,57,58] | Local image overlay | Real-time physics-based model for simulation; includes in vivo tests on human data during surgery | Scalability is restricted because only liver surgery is currently supported |
| Kong et al. [59] | Local image overlay | Accurate automatic registration of results on laparoscopic images; a biomechanical model is included and analyzed with FEM; uses fluorescent fiducials | The feasibility of widespread use of fluorescent fiducials in surgery is limited |
| Salah et al. [31] | OpenGL + Fast Light Toolkit (FLTK) | User interface for MRI data visualization and analysis; an optimized slicing algorithm is included | Lacks supporting data from real surgical scenarios |
| Tawara and Ono [60] | Stereoscopic image overlay | Direct manipulation of human brain CT/MRI volume data in AR; combines a Wiimote and a motion-tracking cube into a tracked manipulation device for volume data | Lacks support for system scalability |
| Kaladji et al. [61] | Local image overlay | Organ deformation can be simulated and visualized on CT images | Lacks interaction functions |
| Sutherland et al. [32] | Visualization Toolkit (VTK) | Provides a simulation environment for CT volume data visualization; results are updated from force feedback | The setup is pre-defined and does not adapt to other applications |
| ARMed [62] | Stereoscopic image overlay | Good educational system for diagnosis and surgery preparation | (1) Lacks real-scene testing; (2) provides only an educational environment |

| Research Group | Visualization Method | Characteristics | Limitations |
|---|---|---|---|
| Clothier et al. [63] | OpenGL | Sensor implementation for structure simulation | (1) Sensor data reading and visualization are not robust; (2) the desktop-based system is not suitable for outdoor use |
| Underkoffler et al. [64] | Local image overlay | A scalable design that integrates different digital graphics and simulation results | The simulation module is still in its infancy; only simple results are demonstrated |
| Malkawi et al. [65,66] | Java3D | Augments CFD datasets in real time based on speech and gesture recognition; interactive and immersive environment | (1) Supports only indoor, pre-defined environments; (2) the provided hand gestures cause ergonomic issues |
| Carmo et al. [33,34] | OpenGL | A mobile platform for visualizing and analyzing solar radiation in outdoor environments | (1) The solar energy input data must be pre-defined; (2) without proper sensing technology, the system can hardly tap the potential of outdoor AR |
| Heuveline et al. [35,36] | Remote image overlay | Image-based rendering to visualize numerical simulation data; client-server framework for simulation data visualization; uses VTK, ParaView, and HiVision for result visualization on the server | (1) The simulation results are pre-defined in the system; (2) difficult to integrate into other applications |
| Golparvar-Fard et al. [67,68] | VR modeling language | 3D thermal mesh modelling; automated visualization of deviations between actual and expected building energy performance | Requires a thermal camera; the HMD device may cause ergonomic problems |
| Graf et al. [69] | OpenGL | Volumetric data preparation and simulation | (1) Currently serves as a prototype system; (2) lacks real-scene testing |
| Broll et al. [37] | OpenGL | Co-located collaboration method; focused on design and planning | (1) The use of simulation data needs to be described; (2) the details of co-located collaboration could be further clarified |
| Fukuda et al. [38,39] | OpenGL, VR markup language | Uses CFD data to promote a lean design; visualization of CFD simulation results in AR | The desktop-based system restricts use as an outdoor AR system |

| Research Group | Visualization Method | Characteristics | Limitations |

|---|---|---|---|

| Weidlich et al. [70] | Remote image overlay |

| 1. FEA results are pre-defined

2. Lack of interaction functions |

| NUS AR group, [27,41,42] | VTK |

| The loading position is pre-defined and the sensor can only be attached at specific position |

| Paulus et al. [43] | OpenGL | | A pre-defined model is required |

| Uva et al. [44,45,47,48] | Local image overlay | | 1. The dataset visualization method is not described in detail; 2. The precision of video tracking should be considered |

| Issartel et al. [71] | VTK | | 1. The results are hardcoded in the application; 2. The use of a stylus and tablet itself causes ergonomic issues |

| Bernasconi et al. [40] | OpenGL | | The desktop-based setup restricts where the system can be used |

| Valentini et al. [49,50] | OpenGL | | 1. Has limitations for models with complex geometries; 2. The deformation of practical structures is usually small and cannot be measured with regular trackers; 3. The user interface could be redesigned to include more functions |

| Naets et al. [72] | Local image overlay | | Combining marker-based tracking with a separate optical tracking system may make the system difficult to integrate into other applications |

| Moreland et al. [73] | ParaView | | 1. The results are pre-defined in the system; 2. Difficult to integrate into other applications |

| Regenbrecht et al. [74] | Local image overlay | | Only pre-defined results are included, for design purposes |

| Niebling et al. [46] | OpenGL | | 1. The scalability of the system is limited; 2. The simulation result is pre-defined |

| Weidenhausen et al. [75] | OpenSG | | Simulated results need to be pre-defined |

| Research Group | Visualization Method | Characteristics | Limitations |

|---|---|---|---|

| Buchau et al. [76] | OpenGL | 3D electromagnetic field in AR; visualizes analysis results for a pre-defined model in education | The interference of other magnetic fields is not included |

| Ibáñez et al. [51] | OpenGL | A handheld-device-based electromagnetic simulation platform | 1. Lacks interaction functions; 2. The limited system functions may be suitable only for educational purposes |

| Silva et al. [77] | Local image overlay | Uses two-dimensional images to represent 3D scientific data | The image representation may not be adaptable to other applications |

| Mannuß et al. [52] | OpenGL | Interactive magnetic field simulation | The cumbersome setup requires both an HMD device and a desktop monitor |

| Matsutomo et al. [53,54] | OpenGL | Magnetic field visualization on a background monitor; generates flux lines for bar magnets; real-time magnetic field visualization | 1. Requires a monitor under the working area; 2. The magnetic model is restricted |

| Features | Limitations |

|---|---|

| Robust tracking performance is required for high-precision engineering operations | Designed for one specific scenario, with a pre-defined model hardcoded. |

| Efficient visualization tools are implemented for near real-time display | Mainly developed on a single platform. The lack of multi-platform support limits the usage of the system. |

| Accurate registration of computer-generated volumetric data and numerical simulation results on the real scene | Most of the systems lack effective and intuitive interaction methods and were used only for visualizing the results. |

| Marker-Based Tracking | Marker-Less Tracking | GPS & Sensor Fusion |

|---|---|---|

| [27,32,37,40,41,42,44,45,46,47,48,49,50,51,60,62,70,71,72,74,75,77] | [31,38,39,43,52,53,54,55,56,57,58,59,61,64,69,73] | [33,34,35,36,65,66,67,68] |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Li, W.; Nee, A.Y.C.; Ong, S.K. A State-of-the-Art Review of Augmented Reality in Engineering Analysis and Simulation. Multimodal Technol. Interact. 2017, 1, 17. https://doi.org/10.3390/mti1030017
