Vector Representations of Idioms in Conversational Systems

Abstract

1. Introduction

2. Related Work

3. Materials and Methods

3.1. Multi-Domain Wizard-of-Oz (MultiWOZ) Dataset

3.2. The Dataset Used

Short data statement for the PIE-English idiom corpus.

This is the Potential Idiomatic Expression (PIE)-English idiom corpus for training and evaluating models in idiom identification.

The dataset is licensed under CC-BY 4.0.

Total samples: 20,174

The corpus covers 1197 idioms in total, grouped into 10 classes.

Samples per class: euphemism (2384), literal (1140), metaphor (14,666), personification (448), simile (1232), parallelism (64), paradox (112), hyperbole (48), oxymoron (48), and irony (32).
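The per-class counts above can be tallied as a quick consistency check. The Python sketch below confirms that the ten classes sum to the stated 20,174 samples. It also quantifies how heavily the corpus is skewed toward metaphor, which matters when reading the macro- and weighted-averaged scores. The dictionary and helper names are illustrative and are not part of the corpus release.

```python
"""Sanity-check the PIE-English class counts quoted in the data statement."""

# Per-class sample counts as listed above (illustrative variable name).
PIE_CLASS_COUNTS = {
    "euphemism": 2384,
    "literal": 1140,
    "metaphor": 14666,
    "personification": 448,
    "simile": 1232,
    "parallelism": 64,
    "paradox": 112,
    "hyperbole": 48,
    "oxymoron": 48,
    "irony": 32,
}


def total_samples(counts: dict[str, int]) -> int:
    """Total number of samples across all classes."""
    return sum(counts.values())


def class_shares(counts: dict[str, int]) -> dict[str, float]:
    """Each class's share of the corpus as a fraction in [0, 1]."""
    total = total_samples(counts)
    return {label: n / total for label, n in counts.items()}


def imbalance_ratio(counts: dict[str, int]) -> float:
    """Size of the largest class divided by the size of the smallest class."""
    return max(counts.values()) / min(counts.values())


if __name__ == "__main__":
    print("total:", total_samples(PIE_CLASS_COUNTS))
    shares = class_shares(PIE_CLASS_COUNTS)
    for label, share in sorted(shares.items(), key=lambda kv: -kv[1]):
        print(f"{label:>15}: {share:6.2%}")
    print(f"largest/smallest: {imbalance_ratio(PIE_CLASS_COUNTS):.1f}x")
```

Running it reports a total of 20174. Metaphor alone accounts for about 72.7% of the samples, and the largest class is roughly 458 times the size of the smallest (irony).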

3.3. Classification Task

3.3.1. Bidirectional Encoder Representations from Transformers (BERT)

3.3.2. Text-to-Text Transfer Transformer (T5)

3.3.3. Fine-Tuning Process

3.4. Conversation Generation

3.4.1. Dialogue Generative Pre-Trained Transformer (DialoGPT)-Medium

3.4.2. Fine-Tuning Process

3.4.3. Evaluation

Instruction 1: Here are 94 different conversations by 2 speakers. Please, write Human-like (H) or Non-human-like (N) or Uncertain (U), based on your own understanding of what is human-like. Sometimes the speakers use idioms. If you wish, you may use a dictionary.

Instruction 2: Person 2 & Person 3 respond to Person 1. Please, write which (2 or 3) is the (a) more fitting response & (b) more diverse response (showing variety in language use).

3.4.4. Credibility Unanimous Score (CUS)

4. Results

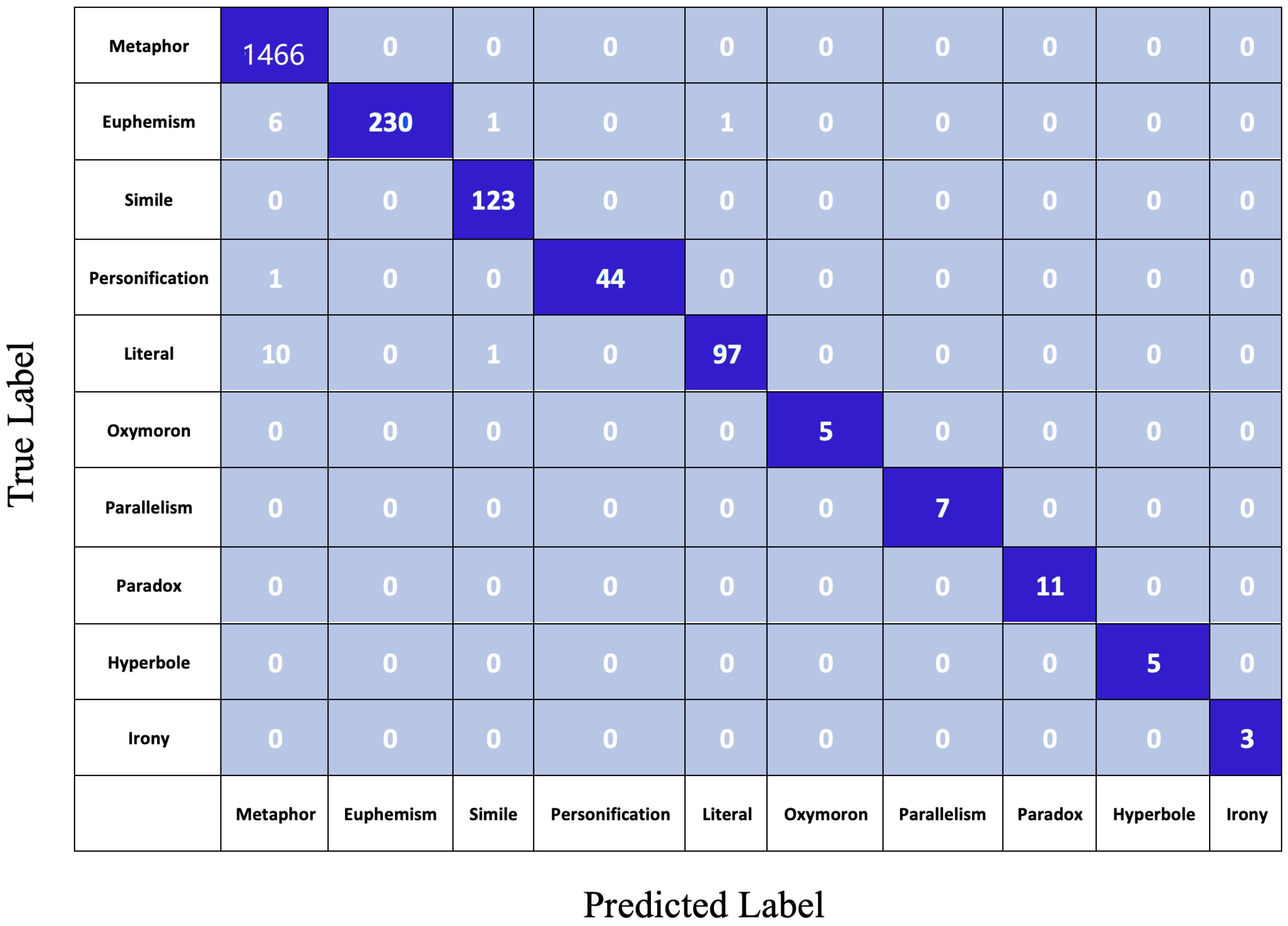

4.1. Classification

Error Analysis

4.2. Conversation Generation

5. Discussion and Evaluator Feedback

6. Limitations

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| NLP | Natural Language Processing |

| NER | Named Entity Recognition |

| SA | Sentiment Analysis |

| ML | Machine Learning |

| BoW | Bag-of-Words |

| CBoW | Continuous Bag-of-Words |

| SLTC | Swedish Language Technology Conference |

| ANN | Artificial Neural Network |

| NN | Neural Network |

| LSTM | Long Short-Term Memory Network |

| biLSTM | Bidirectional Long Short-Term Memory Network |

| SoTA | State-of-the-Art |

| NLG | Natural Language Generation |

| NLU | Natural Language Understanding |

| MWE | Multi-Word Expression |

| SW | Simple Wiki |

| MT | Machine Translation |

| BW | Billion Word |

| PIE | Potential Idiomatic Expression |

| IAA | Inter-Annotator Agreement |

| RTE | Recognizing Textual Entailment |

| IR | Information Retrieval |

| QA | Question Answering |

| BNC | British National Corpus |

| ukWaC | UK Web as Corpus |

| AI | Artificial Intelligence |

| GDC | Gothenburg Dialogue Corpus |

| DialoGPT | Dialogue Generative Pre-trained Transformer |

| GPT | Generative Pre-trained Transformer |

| MultiWOZ | Multi-Domain Wizard-of-Oz |

| T5 | Text-to-Text Transfer Transformer |

| BART | Bidirectional and Auto-Regressive Transformer |

| XLM-R | Cross-Lingual Language Model RoBERTa (XLM-RoBERTa) |

| M2M | Many-to-Many Multilingual Translation Model |

| BERT | Bidirectional Encoder Representations from Transformers |

| RoBERTa | Robustly Optimized BERT Pretraining Approach |

| ELMo | Embeddings from Language Models |

| PII | Personally Identifiable Information |

| QG | Question Generation |

| TC | Text Classification |

| PCL | Patronizing and Condescending Language |

| GUS | Genial Understander System |

| GMB | Groningen Meaning Bank |

| WSD | Word Sense Disambiguation |

| CC-BY 4.0 | Creative Commons Attribution 4.0 |

| CI | Confidence Interval |

| BLEU | Bilingual Evaluation Understudy |

| GDPR | General Data Protection Regulation |

| SVM | Support Vector Machine |

| VS | Vector Space |

| VSM | Vector Space Model |

| NLTK | Natural Language Toolkit |

| tf-idf | Term Frequency–Inverse Document Frequency |

| PCA | Principal Component Analysis |

| SVD | Singular Value Decomposition |

| LSI | Latent Semantic Indexing |

| PLSI | Probabilistic Latent Semantic Indexing |

| LDA | Latent Dirichlet Allocation |

| LM | Language Model |

| biLM | Bidirectional Language Model |

| PoS | Part of Speech |

| NNLM | Neural Network Language Model |

| BPE | Byte-Pair Encoding |

| OOV | Out-of-Vocabulary |

| IMDB | Internet Movie Database |

| LR | Learning Rate |

| CUS | Credibility Unanimous Score |

| IE | Information Extraction |

| RL | Reinforcement Learning |

| MDL | Minimal Dependency Length |

| MLM | Masked Language Model |

| RQ | Research Questions |

| VNC | Verb–Noun Constructions |

| XAI | Explainable Artificial Intelligence |

Appendix A

| Details | |

|---|---|

| Model rationale | This is DialoGPT (medium)-MultiWOZ v2.2, further fine-tuned on the Potential Idiomatic Expression (PIE)-English idiom corpus. It is intended to be used as an idiom-aware conversational system. |

| Limitation | The training dataset is limited in scope: it covers only 10 classes, namely metaphor, simile, euphemism, parallelism, personification, oxymoron, paradox, hyperbole, irony, and literal. |

| Result | The perplexities achieved on the test and validation sets are 160.9 and 160.46, respectively. |

| Others | |

| Model link | https://huggingface.co/tosin/dialogpt_mwoz_idioms (accessed on 1 September 2022) |

| More | Information about the original pretrained model can be found at https://huggingface.co/tosin/dialogpt_mwoz (accessed on 1 September 2022). |

| License | CC-BY 4.0 |

| Example of generation from an interactive environment: | |

| Role | Response |

| User | remind her to take it off the hook. |

| Bot | the bookie took it on the nod |

| User | A lengthy break is in order; so make sure you keep your nose clean |

| Bot | they are under a cloud because of fears about US President’s wife Hillary Clinton |

| User | Some families breed like rabbits and send us all their male heirs |

| Bot | he was out for the count |
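The model is published on the Hugging Face Hub, so it can presumably be queried with the usual DialoGPT recipe from the `transformers` library. In that recipe, every dialogue turn ends with the tokenizer's end-of-sequence token and the model continues the sequence. The sketch below assumes that `transformers` and PyTorch are installed and that the checkpoint loads with `AutoModelForCausalLM`. The helper names (`build_context`, `load`, `reply`) and the setting `max_new_tokens=40` are illustrative choices, not the configuration used to produce the example above.

```python
"""Chat sketch for the idiom-aware DialoGPT checkpoint (assumed setup)."""

from functools import lru_cache

MODEL_ID = "tosin/dialogpt_mwoz_idioms"  # model link given in Appendix A


def build_context(turns: list[str], eos_token: str) -> str:
    """Concatenate dialogue turns DialoGPT-style, ending every turn with EOS."""
    return "".join(turn + eos_token for turn in turns)


@lru_cache(maxsize=1)
def load(model_id: str = MODEL_ID):
    """Download (once) and cache the tokenizer and model; needs transformers + torch."""
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)
    return tokenizer, model


def reply(turns: list[str], max_new_tokens: int = 40) -> str:
    """Generate the next (bot) turn for the given dialogue history."""
    tokenizer, model = load()
    context = build_context(turns, tokenizer.eos_token)
    input_ids = tokenizer.encode(context, return_tensors="pt")
    output_ids = model.generate(
        input_ids,
        max_new_tokens=max_new_tokens,
        pad_token_id=tokenizer.eos_token_id,  # GPT-2 tokenizers define no pad token
    )
    # Drop the prompt tokens and decode only the newly generated response.
    new_tokens = output_ids[0, input_ids.shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

For instance, `reply(["remind her to take it off the hook."])` returns the model's next turn. The output need not match the interactive example above, because the decoding settings used there are not stated. The imports are deferred so that `build_context` can be used and tested without the model libraries installed.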

References

- Jhamtani, H.; Gangal, V.; Hovy, E.; Berg-Kirkpatrick, T. Investigating Robustness of Dialog Models to Popular Figurative Language Constructs. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, Online, 7–11 November 2021; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 7476–7485.

- Korkontzelos, I.; Zesch, T.; Zanzotto, F.M.; Biemann, C. SemEval-2013 Task 5: Evaluating Phrasal Semantics. In Second Joint Conference on Lexical and Computational Semantics (*SEM), Proceedings of the Seventh International Workshop on Semantic Evaluation (SemEval 2013), Atlanta, GA, USA, 14–15 June 2013; Association for Computational Linguistics: Stroudsburg, PA, USA, 2013; Volume 2, pp. 39–47.

- Adewumi, T.; Vadoodi, R.; Tripathy, A.; Nikolaidou, K.; Liwicki, F.; Liwicki, M. Potential Idiomatic Expression (PIE)-English: Corpus for Classes of Idioms. In Proceedings of the Thirteenth International Conference on Language Resources and Evaluation (LREC 2022), Marseille, France, 21–23 June 2022; European Language Resources Association (ELRA): Paris, France, 2022.

- Zhang, Y.; Sun, S.; Galley, M.; Chen, Y.C.; Brockett, C.; Gao, X.; Gao, J.; Liu, J.; Dolan, B. DialoGPT: Large-Scale Generative Pre-training for Conversational Response Generation. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics: System Demonstrations, Online, 5–10 July 2020; pp. 270–278.

- Peng, J.; Feldman, A.; Jazmati, H. Classifying idiomatic and literal expressions using vector space representations. In Proceedings of the International Conference Recent Advances in Natural Language Processing, Hissar, Bulgaria, 5–11 September 2015; pp. 507–511.

- Li, L.; Sporleder, C. Classifier combination for contextual idiom detection without labelled data. In Proceedings of the 2009 Conference on Empirical Methods in Natural Language Processing, Singapore, 6–7 August 2009; pp. 315–323.

- Sporleder, C.; Li, L.; Gorinski, P.; Koch, X. Idioms in Context: The IDIX Corpus. In Proceedings of the Seventh International Conference on Language Resources and Evaluation (LREC’10), Valletta, Malta, 17–23 May 2010.

- Li, Y.; Su, H.; Shen, X.; Li, W.; Cao, Z.; Niu, S. DailyDialog: A Manually Labelled Multi-turn Dialogue Dataset. In Proceedings of the Eighth International Joint Conference on Natural Language Processing (Volume 1: Long Papers), Taipei, Taiwan, 27 November–1 December 2017; Asian Federation of Natural Language Processing: Taipei, Taiwan, 2017; pp. 986–995.

- Zhang, S.; Dinan, E.; Urbanek, J.; Szlam, A.; Kiela, D.; Weston, J. Personalizing Dialogue Agents: I have a dog, do you have pets too? In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Melbourne, Australia, 15–20 July 2018; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; pp. 2204–2213.

- Cook, P.; Fazly, A.; Stevenson, S. Pulling their weight: Exploiting syntactic forms for the automatic identification of idiomatic expressions in context. In Proceedings of the Workshop on a Broader Perspective on Multiword Expressions, Prague, Czech Republic, 25–27 June 2007; pp. 41–48.

- Mao, R.; Lin, C.; Guerin, F. Word Embedding and WordNet Based Metaphor Identification and Interpretation. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Melbourne, Australia, 15–20 July 2018; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; pp. 1222–1231.

- Bizzoni, Y.; Chatzikyriakidis, S.; Ghanimifard, M. “Deep” Learning: Detecting Metaphoricity in Adjective-Noun Pairs. In Proceedings of the Workshop on Stylistic Variation, Copenhagen, Denmark, 8 September 2017; Association for Computational Linguistics: Stroudsburg, PA, USA, 2017; pp. 43–52.

- Diab, M.; Bhutada, P. Verb noun construction MWE token classification. In Proceedings of the Workshop on Multiword Expressions: Identification, Disambiguation and Applications (MWE 2009), Singapore, 6 August 2009; pp. 17–22.

- Tan, M.; Jiang, J. Does BERT Understand Idioms? A Probing-Based Empirical Study of BERT Encodings of Idioms. In Proceedings of the International Conference on Recent Advances in Natural Language Processing (RANLP 2021), Online, 9–10 September 2021; INCOMA Ltd.: Athens, Greece, 2021; pp. 1397–1407.

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach. arXiv 2019, arXiv:1907.11692.

- Yang, Z.; Dai, Z.; Yang, Y.; Carbonell, J.; Salakhutdinov, R.; Le, Q.V. XLNet: Generalized Autoregressive Pretraining for Language Understanding. In Proceedings of the 33rd International Conference on Neural Information Processing Systems, Vancouver, BC, Canada, 8–14 December 2019; Curran Associates Inc.: Red Hook, NY, USA, 2019.

- Lan, Z.; Chen, M.; Goodman, S.; Gimpel, K.; Sharma, P.; Soricut, R. ALBERT: A Lite BERT for Self-supervised Learning of Language Representations. In Proceedings of the 8th International Conference on Learning Representations, ICLR 2020, Addis Ababa, Ethiopia, 26–30 April 2020.

- Clark, K.; Luong, M.T.; Le, Q.V.; Manning, C.D. ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators. arXiv 2020, arXiv:2003.10555.

- Obaid, H.S.; Dheyab, S.A.; Sabry, S.S. The impact of data pre-processing techniques and dimensionality reduction on the accuracy of machine learning. In Proceedings of the 2019 9th Annual Information Technology, Electromechanical Engineering and Microelectronics Conference (IEMECON), Jaipur, India, 13–15 March 2019; pp. 279–283.

- Javed, S.; Adewumi, T.P.; Liwicki, F.S.; Liwicki, M. Understanding the Role of Objectivity in Machine Learning and Research Evaluation. Philosophies 2021, 6, 22.

- Bird, S.; Klein, E.; Loper, E. Natural Language Processing with Python: Analyzing Text with the Natural Language Toolkit; O’Reilly Media, Inc.: Sebastopol, CA, USA, 2009.

- Budzianowski, P.; Wen, T.H.; Tseng, B.H.; Casanueva, I.; Ultes, S.; Ramadan, O.; Gašić, M. MultiWOZ—A Large-Scale Multi-Domain Wizard-of-Oz Dataset for Task-Oriented Dialogue Modelling. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, Brussels, Belgium, 31 October–4 November 2018; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; pp. 5016–5026.

- Adewumi, T.; Brännvall, R.; Abid, N.; Pahlavan, M.; Sabry, S.S.; Liwicki, F.; Liwicki, M. Småprat: DialoGPT for Natural Language Generation of Swedish Dialogue by Transfer Learning. In Proceedings of the 5th Northern Lights Deep Learning Workshop, Tromsø, Norway, 10–12 January 2022; Septentrio Academic Publishing: Tromsø, Norway, 2022; Volume 3.

- Adewumi, T.; Adeyemi, M.; Anuoluwapo, A.; Peters, B.; Buzaaba, H.; Samuel, O.; Rufai, A.M.; Ajibade, B.; Gwadabe, T.; Traore, M.M.K.; et al. Ìtàkúròso: Exploiting Cross-Lingual Transferability for Natural Language Generation of Dialogues in Low-Resource, African Languages. arXiv 2022, arXiv:2204.08083.

- Eric, M.; Goel, R.; Paul, S.; Sethi, A.; Agarwal, S.; Gao, S.; Kumar, A.; Goyal, A.; Ku, P.; Hakkani-Tur, D. MultiWOZ 2.1: A Consolidated Multi-Domain Dialogue Dataset with State Corrections and State Tracking Baselines. In Proceedings of the 12th Language Resources and Evaluation Conference, Marseille, France, 11–16 May 2020; European Language Resources Association: Marseille, France, 2020; pp. 422–428.

- Ferraresi, A.; Zanchetta, E.; Baroni, M.; Bernardini, S. Introducing and evaluating ukWaC, a very large web-derived corpus of English. In Proceedings of the 4th Web as Corpus Workshop (WAC-4) Can We Beat Google, Marrakech, Morocco, 1 June 2008; pp. 47–54.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. arXiv 2018, arXiv:1810.04805.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is All you Need. In Proceedings of the Advances in Neural Information Processing Systems; Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30.

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer. J. Mach. Learn. Res. 2020, 21, 5485–5551.

- Wolf, T.; Debut, L.; Sanh, V.; Chaumond, J.; Delangue, C.; Moi, A.; Cistac, P.; Rault, T.; Louf, R.; Funtowicz, M.; et al. Transformers: State-of-the-Art Natural Language Processing. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, Online, 16–20 November 2020; Association for Computational Linguistics: Stroudsburg, PA, USA, 2020; pp. 38–45.

- Adewumi, T.; Liwicki, F.; Liwicki, M. Word2Vec: Optimal hyperparameters and their impact on natural language processing downstream tasks. Open Comput. Sci. 2022, 12, 134–141.

- Adewumi, T.P.; Liwicki, F.; Liwicki, M. Exploring Swedish & English fastText embeddings for NER with the Transformer. arXiv 2020, arXiv:2007.16007.

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I. Language models are unsupervised multitask learners. OpenAI Blog 2019, 1, 9.

- Zhang, Y.; Sun, S.; Gao, X.; Fang, Y.; Brockett, C.; Galley, M.; Gao, J.; Dolan, B. Joint Retrieval and Generation Training for Grounded Text Generation. arXiv 2021, arXiv:2105.06597.

- Lin, C.Y. ROUGE: A Package for Automatic Evaluation of Summaries. In Proceedings of the Text Summarization Branches Out, Barcelona, Spain, July 2004; Association for Computational Linguistics: Stroudsburg, PA, USA, 2004; pp. 74–81.

- Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. BLEU: A Method for Automatic Evaluation of Machine Translation. In Proceedings of the ACL’02, 40th Annual Meeting on Association for Computational Linguistics, Philadelphia, PA, USA, 7–12 July 2002; Association for Computational Linguistics: Stroudsburg, PA, USA, 2002; pp. 311–318.

- Gehrmann, S.; Adewumi, T.; Aggarwal, K.; Ammanamanchi, P.S.; Aremu, A.; Bosselut, A.; Chandu, K.R.; Clinciu, M.A.; Das, D.; Dhole, K.; et al. The GEM Benchmark: Natural Language Generation, its Evaluation and Metrics. In Proceedings of the 1st Workshop on Natural Language Generation, Evaluation, and Metrics (GEM 2021), Online, August 2021; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 96–120.

- Jurafsky, D.; Martin, J. Speech and Language Processing: An Introduction to Natural Language Processing, Computational Linguistics, and Speech Recognition; Dorling Kindersley Pvt, Limited: London, UK, 2020.

- Liu, C.W.; Lowe, R.; Serban, I.V.; Noseworthy, M.; Charlin, L.; Pineau, J. How not to evaluate your dialogue system: An empirical study of unsupervised evaluation metrics for dialogue response generation. arXiv 2016, arXiv:1603.08023.

- Reiter, E. Natural Language Generation (Chapter 20). In The Handbook of Computational Linguistics and Natural Language Processing; Wiley: Hoboken, NJ, USA, 2010; p. 574.

- Adiwardana, D.; Luong, M.T.; So, D.R.; Hall, J.; Fiedel, N.; Thoppilan, R.; Yang, Z.; Kulshreshtha, A.; Nemade, G.; Lu, Y.; et al. Towards a human-like open-domain chatbot. arXiv 2020, arXiv:2001.09977.

- Aggarwal, C.C.; Zhai, C. A survey of text classification algorithms. In Mining Text Data; Springer: Berlin/Heidelberg, Germany, 2012; pp. 163–222.

- Landis, J.R.; Koch, G.G. The measurement of observer agreement for categorical data. Biometrics 1977, 33, 159–174.

- Sim, J.; Wright, C.C. The kappa statistic in reliability studies: Use, interpretation, and sample size requirements. Phys. Ther. 2005, 85, 257–268.

- Luque, A.; Carrasco, A.; Martín, A.; de las Heras, A. The impact of class imbalance in classification performance metrics based on the binary confusion matrix. Pattern Recognit. 2019, 91, 216–231.

- Adewumi, T.; Alkhaled, L.; Mokayed, H.; Liwicki, F.; Liwicki, M. ML_LTU at SemEval-2022 Task 4: T5 Towards Identifying Patronizing and Condescending Language. In Proceedings of the 16th International Workshop on Semantic Evaluation (SemEval-2022), Online, 14–15 July 2022; Association for Computational Linguistics: Stroudsburg, PA, USA, 2022; pp. 473–478.

- Sabry, S.S.; Adewumi, T.; Abid, N.; Kovacs, G.; Liwicki, F.; Liwicki, M. HaT5: Hate Language Identification using Text-to-Text Transfer Transformer. arXiv 2022, arXiv:2202.05690.

- Roller, S.; Dinan, E.; Goyal, N.; Ju, D.; Williamson, M.; Liu, Y.; Xu, J.; Ott, M.; Smith, E.M.; Boureau, Y.L.; et al. Recipes for Building an Open-Domain Chatbot. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume, Online, 19–23 April 2021; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 300–325.

- Hashimoto, T.B.; Zhang, H.; Liang, P. Unifying Human and Statistical Evaluation for Natural Language Generation. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), Minneapolis, MN, USA, 2–7 June 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 1689–1701.

- Mohammad, S.; Shutova, E.; Turney, P. Metaphor as a Medium for Emotion: An Empirical Study. In Proceedings of the Fifth Joint Conference on Lexical and Computational Semantics, Berlin, Germany, 11–12 August 2016; Association for Computational Linguistics: Stroudsburg, PA, USA, 2016; pp. 23–33.

- Alm-Arvius, C. Figures of Speech; Studentlitteratur: Lund, Sweden, 2003.

| No | Samples | Class |

|---|---|---|

| 1 | Carry the day | Metaphor |

| 2 | Does the will of the Kuwaiti parliament transcend the will of the Emir and does parliament carry the day? | Metaphor |

| 3 | Time flies | Personification |

| 4 | ‘Eighty-four!’ she giggled. How time flies | Personification |

| 5 | As clear as a bell | Simile |

| 6 | It sounds as clear as a bell | Simile |

| 7 | Go belly up | Euphemism |

| 8 | If several clubs do go belly up, as Adam Pearson predicts. | Euphemism |

| 9 | The back of beyond | Hyperbole |

| 10 | There’d be no one about at all in the back of beyond. | Hyperbole |

| 11 | “Why couldn’t you just stay in the back of beyond?” she said. | Hyperbole |
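The sample table pairs each idiom, or a sentence containing it, with one class. The two classifiers consume such rows differently. A BERT-style encoder predicts an integer class id through a classification head, whereas T5 casts classification as text generation and emits the class name itself. The sketch below illustrates that difference. The class ordering, the `T5_PREFIX` string, and the helper names are assumptions for illustration only.

```python
"""Format labelled samples for a BERT-style head and for T5 (illustrative sketch)."""

# The ten PIE-English classes; this ordering (and hence the ids) is arbitrary.
CLASSES = [
    "metaphor", "simile", "euphemism", "parallelism", "personification",
    "oxymoron", "paradox", "hyperbole", "irony", "literal",
]
LABEL2ID = {label: i for i, label in enumerate(CLASSES)}

# Hypothetical task prefix; the prefix actually used for T5 is not stated here.
T5_PREFIX = "classify idiom: "


def to_bert_example(text: str, label: str) -> tuple[str, int]:
    """Encoder + classification head: the target is an integer class id."""
    return text, LABEL2ID[label.lower()]


def to_t5_example(text: str, label: str) -> tuple[str, str]:
    """Text-to-text: input and target are both strings; T5 generates the label word."""
    return T5_PREFIX + text, label.lower()


if __name__ == "__main__":
    rows = [("Time flies", "Personification"), ("It sounds as clear as a bell", "Simile")]
    for text, label in rows:
        print(to_bert_example(text, label), to_t5_example(text, label))
```

One consequence of the text-to-text framing is that the label set does not need to be baked into the model's output layer. T5 can, in principle, also generate a string that is not a valid class, so its outputs would need to be checked against `CLASSES`.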

| Model | Accuracy, Dev (sd) | Accuracy, Test (sd) | Weighted F1, Dev (sd) | Weighted F1, Test (sd) | Macro F1, Dev (sd) | Macro F1, Test (sd) |
|---|---|---|---|---|---|---|
| BERT | 0.96 (0) | 0.96 (0) | 0.96 (0) | 0.96 (0) | 0.75 (0.04) | 0.73 (0.01) |
| T5 | 0.99 (0) | 0.98 (0) | 0.98 (0) | 0.98 (0) | 0.97 (0) | 0.98 (0) |
| BERT * [3] | 0.93 | - | 0.95 | - | - | - |
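The gap between BERT's weighted F1 (0.96) and macro F1 (0.73) is consistent with weak performance on the rare classes. Weighted F1 averages per-class scores in proportion to class support, so the dominant metaphor class largely sets the result. Macro F1 gives each of the ten classes equal weight. The self-contained sketch below computes both averages from per-class true-positive, false-positive, and false-negative counts. The `ClassCounts` type and the toy numbers are hypothetical.

```python
"""Macro vs. weighted F1 from per-class counts (illustrative sketch)."""

from dataclasses import dataclass


@dataclass
class ClassCounts:
    tp: int  # true positives
    fp: int  # false positives
    fn: int  # false negatives

    @property
    def support(self) -> int:
        """Number of gold instances of this class."""
        return self.tp + self.fn

    @property
    def f1(self) -> float:
        """F1 = 2TP / (2TP + FP + FN); 0 when the class is neither seen nor predicted."""
        denom = 2 * self.tp + self.fp + self.fn
        return 2 * self.tp / denom if denom else 0.0


def macro_f1(counts: dict[str, ClassCounts]) -> float:
    """Unweighted mean of per-class F1: every class counts equally."""
    return sum(c.f1 for c in counts.values()) / len(counts)


def weighted_f1(counts: dict[str, ClassCounts]) -> float:
    """Support-weighted mean of per-class F1: frequent classes dominate."""
    total = sum(c.support for c in counts.values())
    return sum(c.f1 * c.support for c in counts.values()) / total


if __name__ == "__main__":
    # Toy two-class set: 1000 metaphors, 4 ironies; every sample predicted "metaphor".
    counts = {
        "metaphor": ClassCounts(tp=1000, fp=4, fn=0),
        "irony": ClassCounts(tp=0, fp=0, fn=4),
    }
    print(f"weighted F1 = {weighted_f1(counts):.3f}")  # 0.994
    print(f"macro F1    = {macro_f1(counts):.3f}")     # 0.499
```

In this toy setting, a classifier that never predicts the rare class still reaches a weighted F1 of about 0.99 but a macro F1 of only about 0.50.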

| Model | Perplexity, Dev (sd) | Perplexity, Test (sd) |
|---|---|---|
| IdiomWOZ | 201.10 (34.82) | 200.68 (34.83) |
| IdiomOnly | 189.92 (1.83) | 185.62 (2.05) |
| MultiWOZ [23] | 6.41 (-) | 6.21 (-) |
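Perplexity, reported in the table above, is the exponential of the mean per-token negative log-likelihood: PPL = exp(-(1/N) Σ log p(w_i | w_<i)). Lower is better. A minimal Python sketch follows, assuming natural-log probabilities. The function names are illustrative.

```python
"""Perplexity from per-token log-probabilities (natural log)."""

import math


def perplexity(token_log_probs: list[float]) -> float:
    """exp(-(1/N) * sum(log p(w_i | w_<i))): exponentiated mean negative log-likelihood."""
    if not token_log_probs:
        raise ValueError("perplexity needs at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


def perplexity_from_loss(mean_cross_entropy: float) -> float:
    """Same quantity when a training loop already reports the mean token cross-entropy."""
    return math.exp(mean_cross_entropy)


if __name__ == "__main__":
    # A model spreading probability uniformly over 200 tokens at every step
    # has perplexity 200.
    uniform = [math.log(1 / 200)] * 10
    print(round(perplexity(uniform), 6))  # 200.0
```

A perplexity near 200, as for IdiomWOZ and IdiomOnly, can therefore be read as the model being, on average, about as uncertain as a uniform choice among roughly 200 tokens. Many training loops report the mean token cross-entropy as the loss, in which case `perplexity_from_loss(loss)` yields the same quantity.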

| Model | H (%) | U (%) | N (%) | 3-Way (%) |
|---|---|---|---|---|
| Majority Votes | | | | |
| IdiomWOZ | 39.1 | 10.9 | 37.5 | 12.5 |
| IdiomOnly | 15.6 | 12.5 | 60.9 | 10.9 |
| MultiWOZ | 62.5 | 1.6 | 32.8 | 3.1 |
| Unanimous Votes (3/3) | | | | |
| IdiomWOZ | 20.3 | 0 | 12.5 | - |
| IdiomOnly | 6.3 | 0 | 31.3 | - |
| MultiWOZ | 45.3 | 0 | 23.4 | - |
| Idiom-Only Majority Votes (32 Samples) | | | | |
| IdiomWOZ | 30 | 23.3 | 33.3 | 13.3 |
| IdiomOnly | 26.7 | 20 | 36.7 | 16.7 |
| MultiWOZ | 26.7 | 3.3 | 66.7 | 3.3 |
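The rows above aggregate three annotators' H/U/N judgements per conversation, as the unanimous-vote (3/3) rows indicate. The sketch below shows one way to derive majority and unanimous percentages. An item receives the label chosen by at least two of the three annotators, or "3-way" when all three disagree. The judgements in the example are hypothetical, and this is plain vote counting, not the Credibility Unanimous Score.

```python
"""Aggregate three-annotator H/U/N judgements into majority and unanimous rates."""

from collections import Counter

LABELS = ("H", "U", "N")  # human-like, uncertain, non-human-like


def majority(votes: tuple[str, ...]) -> str:
    """Label chosen by more than half of the annotators, else '3-way' (no majority)."""
    label, count = Counter(votes).most_common(1)[0]
    return label if count > len(votes) / 2 else "3-way"


def majority_rates(all_votes: list[tuple[str, ...]]) -> dict[str, float]:
    """Percentage of items whose majority outcome is H, U, N, or a 3-way split."""
    outcomes = Counter(majority(v) for v in all_votes)
    return {k: 100 * outcomes[k] / len(all_votes) for k in (*LABELS, "3-way")}


def unanimous_rate(all_votes: list[tuple[str, ...]], label: str) -> float:
    """Percentage of items on which every annotator chose `label`."""
    hits = sum(1 for v in all_votes if set(v) == {label})
    return 100 * hits / len(all_votes)


if __name__ == "__main__":
    # Hypothetical judgements for four conversations, three annotators each.
    votes = [("H", "H", "N"), ("N", "N", "N"), ("H", "U", "N"), ("U", "U", "H")]
    print(majority_rates(votes))       # {'H': 25.0, 'U': 25.0, 'N': 25.0, '3-way': 25.0}
    print(unanimous_rate(votes, "N"))  # 25.0
```

With three annotators and three labels, a strict majority means at least two agreeing votes. A "3-way" outcome is therefore exactly the case where each label receives one vote.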

| Model | More Fitting (%) | More Diverse (%) |
|---|---|---|
| Majority Voting | | |
| IdiomWOZ | 71.9 | 28.1 |
| MultiWOZ | 28.1 | 71.9 |
| Unanimous Votes (3/3) | | |
| IdiomWOZ | 46.9 | 9.4 |
| MultiWOZ | 9.4 | 15.6 |

| Person 1 | Person 2 |

|---|---|

| He knew his father’s grievance; and put off the evil day as long as he possibly could | he’s dead meat |

| achieving results that fly in the face of realism | I’m not here I’m just a fly on the wall |

| does that mean Jane is off the hook? | She had the nerve to call time on her three-year spell in charge |

| he’s still sulking; sounding like a broken record | the whole caboodle |

| we need new ways to do this; to chew the fat; to check ourselves out | the Government’s approach to the environment is geared more towards addressing the environment rather than helping the environment |

| the 24-year-old striker is self-critical enough to insist he is not yet firing on all cylinders | You’re the one up to your neck in it |

| remind her to take it off the hook | the bookie took it on the nod |

| A lengthy break is in order; so make sure you keep your nose clean | they are under a cloud because of fears about US President’s wife Hillary Clinton |

| Utd have shown they are in a league of their own | It’s a long shot him signing for Leeds. They’re a hard nut to crack |

| from now until the year dot | he was the apple of her eye |

| it’s a good idea to go the distance | it’ll just collapse and give up the ghost somewhere in South Africa |

| Person 1 | Person 3 |

|---|---|

| He knew his father’s grievance; and put off the evil day as long as he possibly could | That’s great; thanks. |

| achieving results that fly in the face of realism | Yes; realism. I like it. |

| does that mean Jane is off the hook? | I’m looking for a place to go in the centre. |

| he’s still sulking; sounding like a broken record | Well then I suppose I need to find something else to do for you. |

| we need new ways to do this; to chew the fat; to check ourselves out | To each their own. Let me know if you need any more info. |

| the 24-year-old striker is self-critical enough to insist he is not yet firing on all cylinders | Well if you’ll excuse me... let me ask my wife. Does she think we should try for a little earlier; say 20:30? |

| remind her to take it off the hook | I’m sorry; I forgot to hang up. Good bye. |

| A lengthy break is in order; so make sure you keep your nose clean | I am looking for a restaurant in the centre of town that serves unusual food. |

| Utd have shown they are in a league of their own | I’m sorry; but I’m not sure what you’re looking for. Could you please restate your requirements? |

| from now until the year dot | I’m sorry; but I don’t understand your request. Would you like to try a different restaurant? |

| it’s a good idea to go the distance | I can help with that! What are your departure and destination sites? |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Adewumi, T.; Liwicki, F.; Liwicki, M. Vector Representations of Idioms in Conversational Systems. Sci 2022, 4, 37. https://doi.org/10.3390/sci4040037