Abstract

Airborne remote sensing, whether performed from conventional aerial survey platforms such as light aircraft or from the more recent Remotely Piloted Airborne Systems (RPAS), has the ability to complement mapping generated using earth-orbiting satellites, particularly for areas that may experience prolonged cloud cover. Traditional aerial platforms are costly but capture high spectral resolution imagery over large areas. RPAS are relatively low-cost and provide very-high resolution imagery, but this is limited to small areas. We believe that we are the first group to retrofit these new, low-cost, lightweight sensors in a traditional aircraft. Unlike RPAS surveys, which have a limited payload, this is the first time that a method has been designed to operate four distinct RPAS sensors simultaneously: hyperspectral, multispectral, thermal video and RGB. This means that imagery covering a broad range of the spectrum, captured during a single survey through different image capture techniques (frame, pushbroom, video), can be applied to investigate multiple aspects of the surrounding environment such as soil moisture, vegetation vitality, topography or drainage. In this paper, we present the initial results validating our innovative hybrid system, which adapts dedicated RPAS sensors for a light aircraft sensor pod, thereby providing the benefits of both methodologies. Simultaneous image capture with a Nikon D800E SLR and a series of dedicated RPAS sensors, including a FLIR thermal imager, a four-band multispectral camera and a 100-band hyperspectral imager, was enabled by integration in a single sensor pod operating from a Cessna C-172. However, to enable accurate sensor fusion for image analysis, each sensor must first be combined in a common vehicle coordinate system, and a method for triggering, time-stamping and calculating the position/pose of each sensor at the time of image capture must be devised.
Initial tests were carried out over agricultural regions, with geometric tests designed to assess the spatial accuracy of the fused imagery in terms of its absolute and relative accuracy. The results demonstrate that, by using our innovative system, images captured simultaneously by the four sensors could be geometrically corrected successfully and then co-registered and fused, exhibiting a root-mean-square error (RMSE) of approximately 10 m independent of inertial measurements and ground control.

Keywords:

hyperspectral; multispectral; thermal; RGB; orthophoto; RPAS; UAV; sensor pod; image fusion; remote sensing; light aircraft

1. Introduction

Remote sensing is a survey and mapping technique enabling non-contact measurements of many land cover and environment types across a range of spatial, spectral and temporal resolutions. Spectral measurements recorded by multispectral or hyperspectral sensors enable comparison and classification of objects outside the visible portion of the spectrum, and a variety of applications such as precision agriculture [1,2,3], land cover classification [4,5], change detection mapping [6,7], forest inventory assessment [8,9] and marine mapping [10,11] all benefit from remote sensing techniques. Additionally, remote sensing platforms such as earth-orbiting satellites facilitate synoptic, time-series analysis for multiple applications. Space-borne constellations of sensors are increasing in number and provide the capability to record optical and non-optical measurements of the planet. For example, the Landsat family of satellites (the current flagship satellite is Landsat 8 [12], with Landsat 9 under development), the recently launched Sentinel-2 satellites [13] and existing hyperspectral satellite sensors such as Hyperion [14] offer users regular mapping updates for the entire globe at high spatial, spectral and temporal resolutions.

1.1. Satellite vs Airborne Platforms

Multispectral or hyperspectral spaceborne surveys are performed using passive remote sensing techniques, and in such situations a convergence of cloud cover and the satellite overpass can result in occlusions at the test site. Coastal regions or areas at high elevations are particularly prone to cloud at altitude; Ireland, for example, on the coast of NW Europe and alongside the Gulf Stream, experiences approximately 100% cloud cover over 50% of the year [15]. When active survey techniques such as Synthetic Aperture Radar (SAR) are not suitable to record the feature (either due to spatial/temporal resolution or a requirement for spectral data), established methodologies such as aerial surveys are required to survey under cloud cover. A trade-off is therefore always present between earth-orbiting satellites, whose low-earth, polar orbit can provide users with cost-free image capture at a high temporal resolution (providing regular image updates but to the detriment of spatial resolution), and aerial platforms, which are relatively slow to mobilise and costly to operate for regular survey updates but can provide wide area coverage at very high spatial resolutions. The decommissioning of the EO-1 satellite housing the Hyperion sensor in January 2017 increases the urgency for new sources of hyperspectral data for researchers, whether through traditional aerial campaigns or low altitude Remotely Piloted Airborne Systems (RPAS) surveys. Operational aerial survey platforms deploying sensors such as the Airborne Prism Experiment (APEX) [16] or HYMAP [17] are available for high spectral resolution measurement campaigns, but these platforms are costly to construct and operate and are in great demand among researchers.

1.2. Airborne vs Remotely Piloted Airborne Systems

Hardware and fuel costs for high-specification aerial platforms can be prohibitive and require careful planning and resource management [18], as they require the concentration and deployment of multiple resources and staff, including the pilot, a survey planner/navigator, a sensor operator and the sensors. They require action on the part of the surveyors to take advantage of a weather window, unlike space-borne platforms, which record data constantly over the entire globe. To enable a more reactive aerial survey methodology and reduce the number of personnel required, small-scale survey platforms have evolved from hobbyist remote-control aircraft into the current generation of RPAS. These systems are relatively low-cost, quick to mobilise and can capture imagery at a very high spatial resolution and accuracy [19,20,21] for diverse applications in terrestrial remote sensing such as topographic mapping [22], precision agriculture [23,24] and livestock monitoring [25]. RPAS are also suitable for marine environments, providing a capability for mapping coastal bathymetry [26], river bathymetry [27], classifying submerged vegetation [28] and marine search and rescue [29]. However, RPAS have their own restrictions, as the platform payload influences flight time and the weight of the Lithium Polymer batteries alone can significantly reduce this. Heavy-lifting rotary platforms can remain airborne for approximately 20 min [30,31], whereas fixed-wing platforms can remain airborne for 60 min or more [32]. Work is ongoing to improve the endurance and extend the range of battery-operated platforms [33], and solar [34] or petrol [35] powered drones offer greatly increased operating times.
When the fixed-wing RPAS operated at the National Centre for Geocomputation (NCG), the Bramor from c-Astral, performs a topographic survey at a cruising speed of 16 m/s (maintaining a high overlap along-track and across-track), the maximum area that can be covered on a single battery is approximately 1 km². Additionally, restrictions imposed by national and international aviation authorities limit the distance at which these platforms can be operated from the operator, and line of sight must be maintained at all times between the ground operator and the RPAS. The maximum operating range in Ireland without special approval from the Irish Aviation Authority is 300 m [36].

1.3. Demonstrating the Potential of a Hybrid Approach

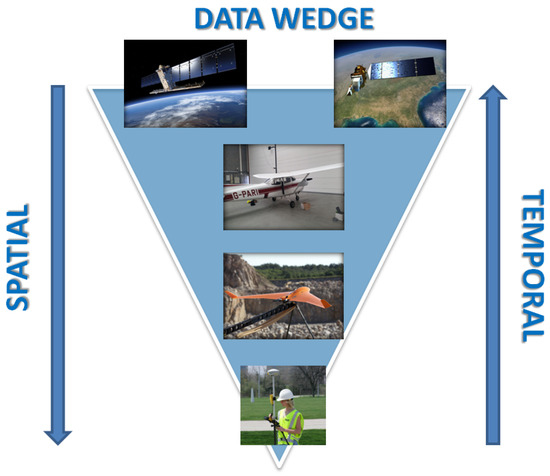

The “data wedge” approach in Figure 1 illustrates the trade-off between satellites, which offer a high temporal resolution but a relatively coarse spatial resolution, and airborne and RPAS platforms, which offer a lower temporal resolution but imagery of a higher spatial resolution. This study aims to demonstrate the feasibility of a hybrid approach: using light aircraft to bypass battery and RPAS regulatory limits, thereby extending the geographic extent of the survey platform, while developing a low-cost, easily transferable, lightweight sensor pod utilising the current generation of RPAS sensors to make the light aircraft option more responsive and less costly to deploy and operate. Although many RPAS on the market incorporate interchangeable sensors for single flights [37,38], we believe we are the first to retrofit these new, low-cost, lightweight sensors in a traditional aircraft. Unlike RPAS surveys, which are limited by payload, this is the first time that a method has been designed to operate four distinct RPAS sensors simultaneously: hyperspectral, multispectral, thermal video and RGB. This means that imagery captured during a single survey through different imaging methods (frame, pushbroom, video) is available for a wide area and can be applied to investigate diverse aspects of the surrounding environment, such as soil moisture, vegetation vitality, topography or drainage, with no temporal variation between surveys.

Figure 1.

Data Capture at multiple resolutions: Earth orbiting satellite, light aircraft, Remotely Piloted Airborne Systems (RPAS) and field surveyors.

The innovative system that we have developed to enable this versatile approach to airborne remote sensing and spectral measurement is the first to:

- Design an aerial sensor pod to re-purpose four RPAS-dedicated spectral sensors.

- Develop logging and navigation capability to synchronize and spatially locate four spectrally diverse streams of RPAS imagery.

- Fuse the resulting snapshot, video and pushbroom imagery for spectral and spatial analysis.

This paper will validate our system by assessing the performance of four RPAS-dedicated sensors in a light aircraft and quantify the spatial accuracy of the fused imagery for each datatype in terms of both absolute and relative spatial accuracy.

2. Platform Development

Researchers at the NCG have adapted hardware and principles acquired during previous survey platform design and development projects (development of the XP1, a terrestrial mobile mapping system incorporating an inertial measurement unit and thermal, low light and LiDAR sensors [39] and also work with the dedicated aerial RGB sensors in the Compact Airborne Imaging System [40]) to develop this multi-resolution aerial survey platform. The sensor pod presented in this paper incorporates four separate cameras and is also capable of recording high definition (HD) video. The three spectral sensors and their capture and logging software were provided by NCG, whereas the RGB camera, image capture and flight planning software was provided by an industry partner company, Air Survey.

2.1. RPAS Sensors

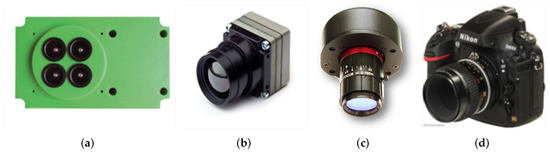

A comprehensive suite of sensors (Figure 2) was incorporated into the sensor pod on the light aircraft, with accompanying operating systems, control software, data logging capabilities and navigation sensors. The following hardware has been installed, calibrated and tested in the sensor pod. The hardware specifications are summarised in Table 1:

Figure 2.

Sensors included in the sensor pod installed on the Cessna C-172 (a) Airinov Agrosensor multispectral; (b) Tau 640 thermal; (c) OCI-UAV-1000 pushbroom hyperspectral; (d) Nikon D800E RGB with a Zeiss ZF2 lens.

Table 1.

Sensor and image parameters when operating at approximately 500 m Above Ground Level, including footprint and ground sampling distance (GSD).

Airinov AgroSensor: A multispectral sensor [41] primarily designed for vegetation surveys due to its ability to record multi-band imagery in the green, red, “red edge” and near-infrared (NIR) portions of the spectrum from approximately 0.53 to 0.83 µm. The AgroSensor can be used to create false colour orthomosaics, multispectral point clouds and digital surface models for land cover classification, vegetation analysis or change detection. This sensor was connected to a light meter positioned on the top of the airplane cockpit, which recorded changes in illumination during surveys; these measurements were applied during post-processing. The AgroSensor was operated in snapshot imaging mode using a time-delayed trigger but can also be used by pre-specifying image capture waypoints.

Tau 640 LWIR: This thermal imager [42] captured data in the long-wavelength infrared portion of the spectrum from 7.5 to 13.5 µm. Thermal imagery is utilised at the NCG for agricultural drainage studies and also for identifying submarine groundwater discharge [43], where the Tau 640 has demonstrated a capability for distinguishing a temperature differential and locating freshwater entering the ocean. The Tau 640 operates continuously in frame capture video mode following a ‘record’ command, and individual frames were extracted for georeferencing.

OCI-UAV-1000: This novel hyperspectral imager [44] for RPAS recorded 100 bands across the red to NIR portion of the spectrum from approximately 0.6 µm to 0.9 µm. The OCI-UAV-1000 recorded continuously in pushbroom imaging mode from the beginning of each flight line and therefore differs in its manner of image capture from the other sensors in the sensor pod and from existing RPAS-dedicated hyperspectral frame sensors [45]. Exposure, frames per second and shutter speed were calculated and specified for the flying height and speed to avoid blurring or other problems during the subsequent image matching stage.

Nikon D800E SLR: This high-specification SLR [46] was equipped with a ZEISS ZF2 MAKRO-PLANAR T* 50/2,0 lens [47] and captured very-high resolution RGB imagery, enabling the creation of orthomosaics, point clouds and 2.5D digital surface models as well as true-colour comparisons with other datasets. The Nikon operates in snapshot imaging mode at predefined waypoints but can also be configured to trigger at a fixed rate (e.g., 0.5 Hz or 1 Hz) for targets of opportunity where a flight line has not been pre-programmed.
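The relationship between flying height, lens and the footprint and GSD values summarised in Table 1 can be sketched with a simple pinhole camera model. This is an illustrative calculation only: the pixel pitch (4.88 µm) and image dimensions (7360 × 4912 pixels) used for the Nikon D800E are assumed nominal values rather than figures taken from Table 1, the terrain is assumed flat, the camera is assumed to point at nadir, and the long side of the frame is assumed to lie across-track.

```python
def ground_sampling_distance(height_m, focal_length_mm, pixel_pitch_um):
    """GSD in metres per pixel for a nadir-pointing frame camera (pinhole model)."""
    return height_m * (pixel_pitch_um * 1e-6) / (focal_length_mm * 1e-3)


def footprint(height_m, focal_length_mm, pixel_pitch_um, width_px, height_px):
    """Ground footprint (across-track, along-track) in metres over flat terrain."""
    gsd = ground_sampling_distance(height_m, focal_length_mm, pixel_pitch_um)
    return gsd * width_px, gsd * height_px


# Assumed values for illustration: 500 m above ground level, 50 mm lens,
# 4.88 um pixel pitch and a 7360 x 4912 pixel frame (not taken from Table 1).
gsd = ground_sampling_distance(500.0, 50.0, 4.88)
across, along = footprint(500.0, 50.0, 4.88, 7360, 4912)
print(f"GSD: {gsd * 100:.1f} cm; footprint: {across:.0f} m x {along:.0f} m")
```

The same two functions apply to the other frame sensors once their focal length, pixel pitch and array size are substituted; a pushbroom sensor has an across-track swath only.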

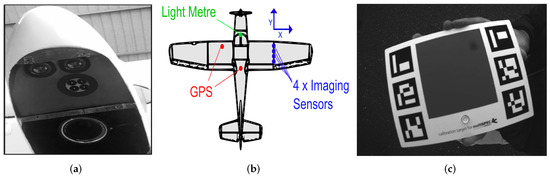

2.2. Sensor Pod

The sensor pod is a bespoke sensor housing mounted on the wing strut of Air Survey’s Cessna C-172. The sensor pod is a fibreglass compound moulded from a wheel spat of a Cessna 172 and its aerodynamic design ensures that it adds minimal drag during airborne surveys. The sensor pod is an advance on previous systems [40], introducing a greater payload capacity and a streamlined shape. It is easily accessible during flight setup and calibration (Figure 3a) and the cables enabling data upload/download are protected inside the wing strut during flight. Retractable wheels on the plane and an exposed position for the sensor pod on the wing ensure that each sensor in the sensor pod has an unobstructed field of view during the flight (Figure 3b).

Figure 3.

Sensor pod design (a) sensor pod is housed on the wing strut of a Cessna C-172 and all cables controlling upload/download are housed internally, protected from environmental effects; (b) exposed position on the wing strut results in an unobstructed view below the aircraft.

2.3. Sensor Fusion

A moulded housing was designed to contain the four imaging sensors for installation in the sensor pod (Figure 4a). The axes of measurement for each sensor were aligned with the vehicle coordinate system (i.e., the direction of travel) during installation. Integrating the sensors in this fixed housing ensured that all images were in the same coordinate system to aid in image registration. Two Global Navigation Satellite System (GNSS) receivers were installed (Figure 4b) and were capable of recording at 2 Hz. This provided the information required for calculating the x, y and z of each image capture location and also for calculating basic pose information such as heading, derived from the change between subsequent positional measurements. This initial version of the system was not equipped with an inertial measurement unit (IMU) to record information such as pitch, roll and yaw; however, a new version of the sensor pod is under development which will record six degrees of freedom throughout the flight. This will improve the accuracy of the results during the image matching and image registration process.
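As a minimal sketch of how a 2 Hz GNSS log can provide a position for each image timestamp and a basic heading, the example below linearly interpolates between consecutive fixes and derives heading from the change in position. It assumes the fixes have already been projected to eastings and northings in metres and share a time base with the image triggers; the function names, the linear interpolation and the sample fixes are illustrative assumptions, not a description of the logging software used in the pod.

```python
import math
from bisect import bisect_right


def interpolate_position(fixes, t):
    """Linearly interpolate (easting, northing, height) at time t.

    fixes: list of (time_s, easting_m, northing_m, height_m) tuples with
    strictly increasing times, e.g. logged at 2 Hz.
    """
    times = [f[0] for f in fixes]
    if len(fixes) < 2 or not times[0] <= t <= times[-1]:
        raise ValueError("timestamp is outside the GNSS log")
    i = min(bisect_right(times, t), len(fixes) - 1)
    (t0, *p0), (t1, *p1) = fixes[i - 1], fixes[i]
    w = (t - t0) / (t1 - t0)
    return tuple(a + w * (b - a) for a, b in zip(p0, p1))


def heading_deg(p_prev, p_next):
    """Heading in degrees clockwise from grid north between two positions."""
    d_east = p_next[0] - p_prev[0]
    d_north = p_next[1] - p_prev[1]
    return math.degrees(math.atan2(d_east, d_north)) % 360.0


# Hypothetical fixes at 2 Hz while flying due east at 60 m/s.
fixes = [
    (0.0, 1000.0, 2000.0, 500.0),
    (0.5, 1030.0, 2000.0, 500.0),
    (1.0, 1060.0, 2000.0, 502.0),
]
position = interpolate_position(fixes, 0.25)
heading = heading_deg(fixes[0][1:], fixes[1][1:])
print(position, heading)
```

A heading derived this way describes the track over the ground rather than the attitude of the aircraft, which is one reason an IMU is expected to improve the results.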

Figure 4.

Sensor position and calibration on the aircraft (a) internal view of four sensors installed in the sensor pod, with RGB at the rear and multispectral, thermal and hyperspectral mounted at the front of the pod; (b) all sensors are orientated in the same vehicle coordinate system and the light meter and GNSS receivers record changes in illumination conditions and position; (c) sensors are calibrated prior to take-off using targets of known reflectance.

2.4. Sensor Calibration

Sensor calibration (Figure 4c) was required for the spectral sensors both prior to launch and during the flight. A specifically designed reflective target for the multispectral AgroSensor was imaged prior to take-off. Changes in illumination during the aerial survey were recorded using a light meter mounted on the roof of the aircraft. A series of white target (calibration target measured at 95% reflectance) and dark target reference measurements were captured for the hyperspectral sensor prior to take-off. No ground targets of known reflectance were located in the survey area during these initial airborne tests and therefore all reflectance measurements are relative (i.e., atmospheric errors have not been removed) and not absolute at-surface reflectance values. Additionally, all thermal measurements recorded with the Tau 640 are relative values, and a default stretch was applied at the beginning of the series of flight lines at each site to ensure a good distribution of values in the default contrast stretch of the thermal imagery. This was done to ensure that no very warm or very cold surfaces visible to the sensor during the initial calibration and setup stages would affect later measurements in different regions of the flight.
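One common way to apply white and dark reference measurements of this kind is a per-band flat-field correction, sketched below. This is an assumption about how such references are typically used rather than a description of the vendor software: the function name, the array layout (bands on the last axis) and the sample numbers are hypothetical, while the 95% target reflectance is the value quoted above.

```python
import numpy as np


def relative_reflectance(raw, dark, white, white_reflectance=0.95):
    """Convert raw digital numbers to relative reflectance, band by band.

    raw:   array of shape (..., bands) of raw digital numbers
    dark:  per-band dark reference, shape (bands,)
    white: per-band white reference, shape (bands,)
    """
    raw, dark, white = (np.asarray(a, dtype=float) for a in (raw, dark, white))
    span = white - dark
    # Bands where the references give no usable signal are left as NaN.
    out = np.full(raw.shape, np.nan)
    np.divide(raw - dark, span, out=out, where=span > 0)
    return out * white_reflectance


# Hypothetical digital numbers for one pixel in two bands.
dark = np.array([100.0, 100.0])
white = np.array([1100.0, 1000.0])
raw = np.array([[600.0, 550.0]])
print(relative_reflectance(raw, dark, white))
```

Because no reflectance targets were present in the survey area, values produced this way remain relative and still include atmospheric effects.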

3. Methodology

The initial airborne sensor pod tests involved three distinct stages. The first stage was to establish a flight plan for each site within the platform’s operational range and ensure that the sites were suitable for the sensor parameters. The second stage was image processing, including geometric correction and image registration. The final stage involved fusing the outputs of each sensor into the same image coordinate system, enabling simultaneous analysis.

3.1. Flight Planning

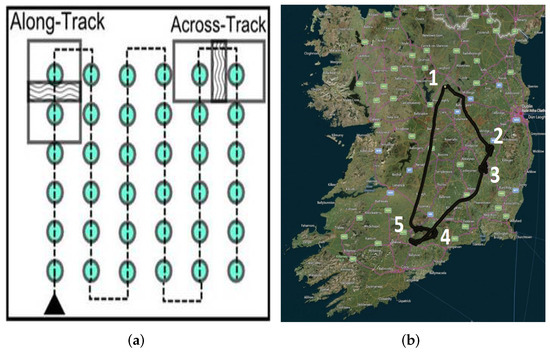

A sufficient image overlap must be pre-specified during the mission planning stage to help identify matching features and ensure accurate imagery, point clouds and surface models. This is because the location and orientation of the images are calculated using automatic aerial triangulation and a bundle-block adjustment. A low image overlap may not provide sufficient matching features to resolve this calculation. The distribution of images and both types of overlap are illustrated in Figure 5a. Choosing a higher overlap than is required will increase data storage requirements and processing time but can result in a more accurate final model. A number of proprietary photogrammetric software programmes are available for processing overlapping RPAS imagery, such as Imagine Photogrammetry, NGATE and Photosynth [20]. Processing can also be performed using structure-from-motion software such as Bundler, Photoscan, ARC3D and CMVS [22]; however, dedicated RPAS software such as Pix4D is common in both commercial and research applications [19,21].

Figure 5.

Flight planning for aerial surveys (a) blue circles and dashed line represent image capture locations and flight path. The optimal along- and across-track overlap varies depending on the flying height, terrain type and sensor field of view (FOV) and identifying a suitable overlap is essential for increasing the number of matching features in overlapping imagery; (b) the navigation track from the initial test flight overlaid on a map of Ireland—five geographically distributed test sites were surveyed in a single flight using dedicated RPAS sensors, with four different high resolution datasets captured at each site.

The tests in this paper were carried out using the dedicated RPAS software, Pix4D. Overlap is terrain dependent: for more challenging terrain such as forest, snow, sand or areas of vegetation, a high degree of overlap improves the number of image matches. This increases the quality of the accompanying orthomosaic and surface model, as demonstrated in [19]. Overlap is also dependent on the flying height and the sensor field of view (FOV), and each sensor in the sensor pod has a different FOV. Due to the different image capture methodologies (snapshot, pushbroom) and different FOVs, a single sensor, the Nikon, was selected as the default for the flight planning stage, with an along-track overlap of 80% and an across-track overlap of 60%. Flight planning was performed using a combination of the aeroscientific flight planning software [48] and the AVIATRIX flight mission software [49], enabling the selected flight path to be pre-programmed prior to a survey. The flight path for each aerial survey is displayed in Figure 5b.
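The effect of the chosen overlaps on exposure spacing, flight line spacing and trigger rate can be illustrated with a short calculation. The 80% and 60% overlaps are those selected above; the footprint (240 m along-track by 360 m across-track) and the ground speed (50 m/s) are assumed round numbers for illustration, and the function is a hypothetical helper rather than part of the flight planning software.

```python
def survey_spacing(footprint_along_m, footprint_across_m,
                   forward_overlap, side_overlap, ground_speed_ms):
    """Return (exposure spacing in m, line spacing in m, trigger interval in s)."""
    for name, value in (("forward_overlap", forward_overlap),
                        ("side_overlap", side_overlap)):
        if not 0.0 <= value < 1.0:
            raise ValueError(f"{name} must be in the range [0, 1)")
    if ground_speed_ms <= 0:
        raise ValueError("ground_speed_ms must be positive")
    # Each new image advances by the part of the footprint that is not shared.
    base_m = footprint_along_m * (1.0 - forward_overlap)
    line_m = footprint_across_m * (1.0 - side_overlap)
    return base_m, line_m, base_m / ground_speed_ms


# Assumed footprint and ground speed; overlaps as selected for the Nikon.
base_m, line_m, interval_s = survey_spacing(240.0, 360.0, 0.80, 0.60, 50.0)
print(f"exposure every {base_m:.0f} m ({interval_s:.2f} s), lines {line_m:.0f} m apart")
```

Because the flight lines are planned for one sensor, a sensor with a wider FOV achieves a higher effective overlap on the same lines, while a narrower FOV achieves less.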

3.2. Data Capture

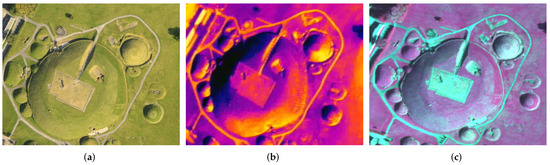

Five target sites were identified and surveyed in a single flight with all four sensors operating. RGB imagery was triggered at predefined waypoints during the aerial survey, whereas both the multispectral camera and the thermal video were constantly recording at a predefined frequency throughout the entire flight. The hyperspectral sensor records approximately 1 Tb per hour at maximum frames-per-second (FPS) and therefore, due to storage constraints, the imagery was only recorded during each series of flight lines and recording was deactivated during transit to subsequent test sites. The exposure and shutter speed of the hyperspectral camera were adapted mid-survey by the operator after aircraft ground speed, flying altitude and environmental conditions at the target area were considered. This ability to capture data from multiple RPAS sensors simultaneously is one of the key benefits of our sensor pod. Figure 6 displays an example of high resolution multispectral, RGB and thermal imagery captured simultaneously during an overflight of Knowth Passage Tomb, Co. Meath, which was constructed over 5000 years ago and is now a popular cultural and tourist site located in an agricultural region of Ireland. In the high resolution RGB imagery in Figure 6a it is possible to identify both tourists and livestock and to create high resolution elevation models and point clouds. In Figure 6b it is possible to distinguish the thermal signatures of different surfaces in the image; in this example the warmer side of each object is the south-east, suggesting these surfaces are facing the sun. In Figure 6c the spectral information helps to differentiate surfaces through the creation of a false colour composite (FCC). In this FCC, the colour of a target in the image does not have any resemblance to its true colour, but it allows vegetation to be detected easily. In this type of FCC, vegetation appears in different shades of red depending on the type and condition of the vegetation in that area.
Each of these datasets has value for multiple applications, however the simultaneous image capture enables further interrogation through comparison of four different datasets captured simultaneously over the same area.
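A false colour composite in which vegetation appears red is conventionally built by displaying the NIR band as red, the red band as green and the green band as blue. The sketch below assumes this standard colour-infrared band assignment and a simple 2 to 98 percentile contrast stretch; both are illustrative choices, as are the function names and the small sample arrays, and the composites in Figure 6 may have been produced differently.

```python
import numpy as np


def stretch(band, low=2.0, high=98.0):
    """Linearly stretch a band to the range 0..1 between two percentiles."""
    band = np.asarray(band, dtype=float)
    lo, hi = np.percentile(band, [low, high])
    if hi <= lo:
        return np.zeros_like(band)
    return np.clip((band - lo) / (hi - lo), 0.0, 1.0)


def false_colour_composite(nir, red, green):
    """Stack NIR, red and green bands as the display R, G and B channels."""
    return np.dstack([stretch(nir), stretch(red), stretch(green)])


# Hypothetical 2 x 2 band values; the top-left pixel mimics healthy vegetation
# (high NIR, low red), so it is displayed with a strong red component.
nir = np.array([[0.80, 0.10], [0.50, 0.30]])
red = np.array([[0.05, 0.30], [0.10, 0.25]])
green = np.array([[0.10, 0.25], [0.12, 0.20]])
fcc = false_colour_composite(nir, red, green)
print(fcc.shape)
```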

Figure 6.

Sensor pods enable in-depth assessment of objects in an area by comparing multiple datasets (a) high resolution RGB photography enables high resolution mapping; (b) thermal signatures identified in the SE of the objects; (c) a FCC enables differentiation of vegetation type, health and land cover.

3.3. Image Matching

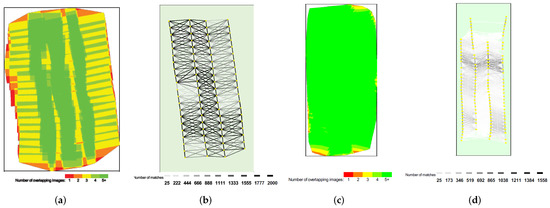

The FOV of each sensor cannot be changed mid-flight once installed in the sensor pod. As a result, the footprint of each sensor in the pod is different, and each footprint will vary with flying height. To illustrate this, a plot of the overlap for the RGB imagery following the pre-specified flight path is shown in Figure 7a. The overlap is highest (green represents areas of overlap of more than five images) directly underneath the flight lines. The overlap decreases towards the edge of the survey area for the final flight line, as there can only ever be one flight line adjacent to the final line. This can be mitigated to a certain extent, and Figure 7c demonstrates the versatility of the sensor pod in this respect. Sensors with a wider field of view, such as the AgroSensor, have resulted in high image overlap across the entire survey area, with more than five overlapping images achieved (consistent green). However, the trade-off with a wider FOV camera is resolution and level of detail. In this example, the higher resolution RGB camera has identified significantly more matching points in the terrain (the Nikon RGB identifies a mean of 68,555 2D keypoints per image versus a mean of 19,270 2D keypoints per image for the AgroSensor multispectral sensor). Figure 7b plots the number of matching features that have been identified in the overlapping RGB imagery, with the heavy black lines representing the automatic recognition of over 2000 matching features in overlapping imagery. The lower spatial resolution of the multispectral sensor has resulted in fewer tie points being identified (Figure 7d), hence fewer dark lines.

Figure 7.

Overlap and field of view (a) plot quantifying the overlap of the RGB data, image overlap is highest along the three flight lines; (b) trade-off between overlap and resolution; the narrower field of view of the Nikon enables it to record at a higher resolution, thereby identifying more features and more tie points being automatically detected; (c) overlap for the multispectral sensor; a wider field of view and flight lines designed for the Nikon have resulted in massive overlap across the survey area providing useful redundancy; (d) the wide field of view of the multispectral camera results in a lower spatial resolution and accordingly fewer tie points.

3.4. Co-Registration of Imagery

The latitude, longitude and altitude of the RGB and multispectral cameras during image capture are normally obtained from the EXIF data of each image. During these surveys, this data was recorded independently of the images and was then imported into the software in a comma-separated values (.CSV) file format. GNSS positions recorded with the on-board receivers enabled co-registration of the RGB and multispectral imagery using existing RPAS image-rectification workflows in the Pix4D software. This system was assessed using its on-board navigation sensors only and therefore no GNSS ground control points were used to increase the accuracy of the resulting outputs. Using the on-board navigation information and the camera model, the images were then calibrated and corrected in the software. This was an iterative process in which only two images were calibrated at first and subsequent images were then added and validated. Once calibrated, the images were used to triangulate the location of each of the key points using stereoscopic methods. The calibrated images were then used to produce a point cloud and 3D mesh, and these were used to generate a Digital Surface Model (DSM) and to produce an orthorectified image. Due to the image format and insufficient overlap between flight lines, neither the hyperspectral nor the thermal imagery could be processed successfully in Pix4D. The hyperspectral imagery was processed using proprietary software known as CubeCreator and CubeStitcher to create and stitch the individual bands into a hyperspectral cube. The cube was subdivided into manageable file sizes and was then transformed into the same mapping projection as the orthomosaics through application of a second-order transformation polynomial. The thermal video was processed using “Microsoft Image Composite Editor”, producing a single image of each flight line. This process isolates individual frames and stitches them together along common points. All images were output as GeoTIFFs.
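A second-order transformation polynomial of the kind used to bring the hyperspectral cube into the mapping projection can be estimated from matched points by least squares. The sketch below is a generic formulation, not the implementation in the software used here: each map coordinate is modelled as a quadratic function of the image coordinates, which requires at least six matched points, and all function names are hypothetical.

```python
import numpy as np


def design_matrix(xy):
    """Second-order terms [1, x, y, xy, x^2, y^2] for each (x, y) point."""
    xy = np.atleast_2d(np.asarray(xy, dtype=float))
    x, y = xy[:, 0], xy[:, 1]
    return np.column_stack([np.ones_like(x), x, y, x * y, x**2, y**2])


def fit_poly2(src_xy, dst_xy):
    """Least-squares fit of a second-order polynomial mapping src to dst.

    Returns a (6, 2) coefficient array: one column for each output coordinate.
    """
    src = np.atleast_2d(np.asarray(src_xy, dtype=float))
    dst = np.atleast_2d(np.asarray(dst_xy, dtype=float))
    if len(src) < 6 or len(src) != len(dst):
        raise ValueError("need at least six matched point pairs")
    coeffs, *_ = np.linalg.lstsq(design_matrix(src), dst, rcond=None)
    return coeffs


def apply_poly2(coeffs, xy):
    """Transform image coordinates to map coordinates with fitted coefficients."""
    return design_matrix(xy) @ coeffs
```

In practice the matched points would be features identified in both the hyperspectral cube and the reference orthomosaic. Shifting both coordinate sets towards the origin before fitting is advisable, since large projected coordinates make the squared terms poorly conditioned.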

4. Results and Discussion

This section will display the corrected outputs from the four sensors, present the results of the fusion of each image into the same coordinate system and also assess the spatial accuracy of the resulting imagery.

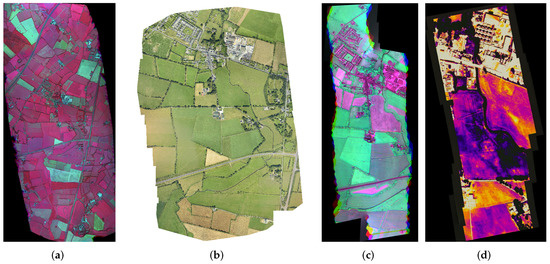

4.1. Sensor Outputs

The final outputs for each sensor following image correction and processing are displayed in Figure 8. Figure 8a displays the FCC orthomosaic created using the Agrosensor. The high resolution RGB orthomosaic is displayed in Figure 8b. All image distortions have been removed from these images using the DSM (2.5D model) that comes from the 3-dimensional densified point cloud created during image processing facilitating planar measurements. A false colour composite created using the hyperspectral cube (Figure 8c) and a stitched thermal image (Figure 8d) are also displayed, providing four complete datasets for this test area. The value of our hybrid approach using low-cost, RPAS dedicated sensors in a sensor pod is clearly demonstrated, with four spatially and spectrally rich datasets captured during a single flight over a large area. The FCCs highlight the agricultural area and condition of the crops, the bare earth, urban areas and infrastructure. The high density RGB orthomosaic can be used to derive a high resolution DSM and point cloud. The hyperspectral cube provides detailed information on the spectral signature of each land cover type across 100 bands, although in the current version of the sensor pod workflow all reflectance values are relative rather than absolute as no spectral ground control was acquired. The thermal imagery reveals thermal variations across agricultural fields, structures and land cover types.

Figure 8.

Final outputs from each sensor—image dimensions vary as different FOVs result in different image footprints (a) RGB Orthomosaic; (b) False Colour Composite from Agrosensor; (c) Hyperspectral cube from OCI UAV; (d) Thermal image stitched.

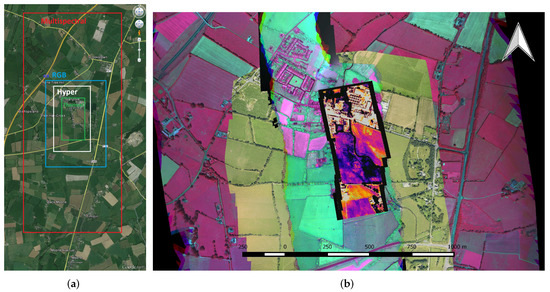

4.2. Sensor Fusion

The differing FOVs of each sensor will inevitably result in different extents of the imagery covered during each flight, and the overlap is further influenced by the flying height. In the example displayed in Figure 9, the low FOV of the hyperspectral and thermal sensors has resulted in a smaller footprint than that of the RGB and multispectral sensors. Figure 9a displays the area for each sensor that has been combined into a single image. The multispectral AgroSensor, with the widest FOV, covers the largest area. The RGB Nikon D800E covers a large area at the highest detail. The pushbroom hyperspectral OCI-UAV-1000 covers a smaller area than the RGB, and the thermal Tau 640, with the lowest FOV, has the smallest footprint per image. Figure 9b displays the imagery from each sensor fused into a single dataset.

Figure 9.

Sensor pod outputs. (a) Location map indicating extents of each corrected and stitched image; (b) image registration: thermal, hyperspectral cube and RGB orthomosaic overlaid on FCC created from multispectral sensors.

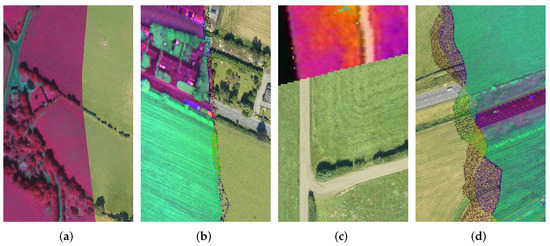

A visual inspection of the image fusion in Figure 10 demonstrates that it has been largely successful, with field boundaries joining seamlessly in the agricultural areas and roads representing an unbroken network in each image. However, the field boundaries to the south of the thermal image display registration errors, with an offset evident upon visual inspection. This is potentially due to the previously raised issue of reduced image overlap at the edges of the survey area, but may also be due to the more homogeneous terrain in the agricultural areas and the predominance of grass as a land cover type. The road in the south of the hyperspectral imagery is also misaligned; errors here are also potentially caused by a lack of imagery at the end of each strip.

Figure 10.

Image fusion accuracy assessment; (a) seamless joining of boundaries between RGB and multispectral imagery; (b) high accuracy in the northern element of the image when comparing hyperspectral with RGB; (c) large errors in the south of the thermal image; (d) errors visible for hyperspectral in the south also.

4.3. Spatial Assessment—Absolute Accuracy

To further validate the spatial accuracy of the image fusion process, a second series of tests was designed to assess the absolute accuracy of the outputs from each sensor by comparing their positions in the Irish Transverse Mercator (ITM) coordinate reference system with those in Ordnance Survey Ireland (OSi) orthophotography. The OSi imagery is used to create the 1:1000, 1:2500 and 1:5000 national vector mapping for Ireland, and accuracies for these datasets are quoted as a root-mean-square error (RMSE) of ±0.60, 0.69 and 1.22 m respectively [50]. Features that were present in both datasets were identified and a series of ground control points (GCPs) was created by recording the X and Y coordinates in ITM to two decimal places. Five GCPs were recorded for each image using easily identifiable features such as a road marking or field boundary. These coordinates were then compared and the RMSE, mean and standard deviation calculated. Table 2 displays the results of these tests.
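The comparison of GCP coordinates can be sketched as follows. This is a minimal illustration, assuming the error at each GCP is the 2D Euclidean distance between the sensor-derived and reference positions and that the standard deviation is the sample standard deviation; the text does not state either choice. The function name `accuracy_stats` and the coordinates are hypothetical.

```python
import math
import statistics


def accuracy_stats(measured, reference):
    """Return RMSE, mean and sample standard deviation of 2D point errors (m).

    `measured` and `reference` are equal-length lists of (X, Y) pairs in the
    same projected coordinate system, for example ITM.
    """
    if len(measured) != len(reference) or len(measured) < 2:
        raise ValueError("need at least two matched coordinate pairs")
    # Horizontal distance between each measured point and its reference point.
    errors = [
        math.hypot(mx - rx, my - ry)
        for (mx, my), (rx, ry) in zip(measured, reference)
    ]
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return {
        "rmse": rmse,
        "mean": statistics.mean(errors),
        "std": statistics.stdev(errors),  # sample standard deviation (assumed)
    }


# Five hypothetical GCPs (metres); these are not values from the survey.
reference = [(1000.00, 2000.00), (1500.00, 2100.00), (1200.00, 2600.00),
             (1800.00, 2400.00), (1650.00, 2850.00)]
measured = [(1008.10, 2006.30), (1509.40, 2093.20), (1193.50, 2609.80),
            (1811.20, 2404.10), (1641.70, 2857.60)]
print(accuracy_stats(measured, reference))
```

Because the RMSE squares each error before averaging, it is never smaller than the mean error, which is consistent with the RMSE and mean values being reported together.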

Table 2.

2D positional accuracy of the sensor pod, with OSi orthophotography used to provide GCPs for comparison with the national mapping coordinate system.

The highest accuracy was achieved using the RGB imagery, with an RMSE of 11.26 m. There are two potential explanations for this. The first is that the RGB imagery was captured at predefined locations to ensure the correct overlap. The second is that the RGB had the highest spatial resolution of all four sensors and is therefore capable of identifying more matching features per m² than the others. The multispectral performed similarly to the RGB, possibly because its imagery was also registered with the assistance of an on-board GPS logger. The largest errors were present in the systems that were registered using the RGB dataset to provide matching points and a transformation polynomial. The largest error of 15.70 m was apparent for the thermal imagery, with a mean error of 13.5 m. This error is potentially due to difficulties in identifying features in the image matching stage, as the thermal imagery has a lower spatial resolution and a lower contrast than the other image types, and feature boundaries are not always easily distinguished if they share a thermal signature with their surroundings. Additionally, the narrow FOV of the Tau 640 results in a low overlap, so that even fewer matching points are identified. The hyperspectral imagery was registered using the same process; however, its accuracy is more acceptable, with an error less than one metre higher than that of the multispectral.
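Registration from matching points and a transformation polynomial can be illustrated with a minimal sketch. It assumes a first-order (affine) polynomial fitted by least squares; the order of the polynomial used for the thermal and hyperspectral imagery is not stated here, and the function names and point values are hypothetical.

```python
def _det3(a):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (a[0][0] * (a[1][1] * a[2][2] - a[1][2] * a[2][1])
            - a[0][1] * (a[1][0] * a[2][2] - a[1][2] * a[2][0])
            + a[0][2] * (a[1][0] * a[2][1] - a[1][1] * a[2][0]))


def _solve3(m, v):
    """Solve the 3x3 system m * c = v with Cramer's rule."""
    d = _det3(m)
    if abs(d) < 1e-12:
        raise ValueError("matching points are collinear or too few")
    return [
        _det3([[v[i] if j == k else m[i][j] for j in range(3)]
               for i in range(3)]) / d
        for k in range(3)
    ]


def fit_first_order(src, dst):
    """Least-squares fit of X = a0 + a1*x + a2*y and Y = b0 + b1*x + b2*y.

    `src` holds (x, y) points in the image being registered and `dst` the
    matching (X, Y) points in the reference image. In practice coordinates
    should be centred first to keep the normal equations well conditioned.
    """
    rows = [(1.0, x, y) for x, y in src]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    atx = [sum(r[i] * d[0] for r, d in zip(rows, dst)) for i in range(3)]
    aty = [sum(r[i] * d[1] for r, d in zip(rows, dst)) for i in range(3)]
    return _solve3(ata, atx), _solve3(ata, aty)


def apply_first_order(coeffs, point):
    """Map one (x, y) point into the reference frame."""
    (a0, a1, a2), (b0, b1, b2) = coeffs
    x, y = point
    return a0 + a1 * x + a2 * y, b0 + b1 * x + b2 * y


# Hypothetical matching points; not taken from the survey data.
src = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
dst = [(100.0, 200.0), (120.0, 195.0), (105.0, 220.0), (125.0, 215.0)]
coeffs = fit_first_order(src, dst)
print(apply_first_order(coeffs, (5.0, 5.0)))
```

A fit of this kind is only as good as the number and spread of its matching points, which is consistent with the larger errors reported where few features could be matched.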

4.4. Spatial Assessment—Relative Accuracy

A third series of tests was designed to verify the relative accuracy of the imagery by assessing the accuracy of internal dimensions rather than absolute position in relation to the national coordinate system. Five separate sites that were present in all four images were identified and their areas were measured. Due to the restricted FOV of the thermal imagery, only certain features were present in all four images, and only one of the large fields was available in all four for inclusion in the measurement process. Of the five sites chosen, three were fields (one large and two medium), one was an industrial plant and the fifth was a farmyard. The sites were distributed throughout the imagery to ensure robust testing of the methodology and to check that there were no skewed features in a particular part of the image. The dimensions of each area were measured in the OSi orthophotography and then compared with the outputs from the sensor pod. Table 3 displays the results of these tests, which demonstrate that each sensor has a high relative accuracy, with average discrepancies of approximately 0.1 ha. Once again, the largest errors are visible in the thermal imagery, particularly for the farmyard in the south of the imagery. In this area, the thermal imagery had displayed stretching and skewing in the previous tests and this has resulted in an error of approximately 50% when measuring the dimensions of this site. This was a small site, and the difficulties in identifying boundaries in the thermal imagery in areas of similar temperature, as previously discussed, may have led to human error when measuring the boundary of the site.
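The area comparison can be sketched as below, assuming each site boundary is digitised as a simple polygon in a projected coordinate system with units of metres. The shoelace formula, the function names and the example boundaries are illustrative assumptions rather than the measurement tool used in this study.

```python
def polygon_area_ha(vertices):
    """Area in hectares of a simple polygon given as (X, Y) vertices in metres.

    Uses the shoelace formula; vertices may run clockwise or anticlockwise.
    """
    n = len(vertices)
    if n < 3:
        raise ValueError("a polygon needs at least three vertices")
    twice_area = sum(
        vertices[i][0] * vertices[(i + 1) % n][1]
        - vertices[(i + 1) % n][0] * vertices[i][1]
        for i in range(n)
    )
    return abs(twice_area) / 2.0 / 10_000.0  # 1 ha = 10,000 square metres


def percent_error(measured_ha, reference_ha):
    """Signed area discrepancy as a percentage of the reference area."""
    return 100.0 * (measured_ha - reference_ha) / reference_ha


# Hypothetical boundaries for one small site; not values from the survey.
reference_boundary = [(0.0, 0.0), (40.0, 0.0), (40.0, 50.0), (0.0, 50.0)]
stretched_boundary = [(0.0, 0.0), (40.0, 0.0), (40.0, 75.0), (0.0, 75.0)]
ref_ha = polygon_area_ha(reference_boundary)
meas_ha = polygon_area_ha(stretched_boundary)
print(f"reference {ref_ha:.2f} ha, measured {meas_ha:.2f} ha, "
      f"error {percent_error(meas_ha, ref_ha):.0f}%")
```

For a small site, an absolute discrepancy of around 0.1 ha is a large fraction of the total area, so stretching that is negligible for a large field can produce a large percentage error for a farmyard.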

Table 3.

Validation of area calculation from the sensor pod—OSi orthophotography used to calculate reference areas.

5. Conclusions

These tests demonstrated the potential of an innovative methodology for combining multiple, low-cost, dedicated RPAS sensors in a sensor pod and performing a high spatial and spectral resolution survey quickly and efficiently. Thermal, RGB, multispectral and hyperspectral sensors were used in a single flight for simultaneous data capture over five sites. The outputs from the sensor pod were rectified using existing workflows designed for RPAS imagery and their accuracy was assessed. The proposed sensor pod and image fusion methodology is capable of recording multi-sensor imagery at a high spatial, spectral and temporal resolution, with errors comparable to those of an aerial survey that relies on GNSS only for referencing imagery, with no IMU or ground control. Accuracy estimates were influenced by the manual demarcation of boundaries for the measurement of areas, and definite stretching was present in most image types at the edges of the survey area. Future versions of the system will incorporate an IMU, which will significantly improve spatial accuracy and will ensure that all images are registered independently of each other, relying on GNSS position and on the pitch, roll and yaw recorded by the IMU. The innovative sensor pod developed during this project will enable repeat surveys of large areas at regular intervals, providing a method for remotely mapping countries with extended cloud cover, such as Ireland, over both marine and terrestrial sites.

Acknowledgments

This publication has emanated in part from research conducted with the financial support of Science Foundation Ireland under Grant Numbers 13/RC/2092, 13/IF/I2778, 13/IF/I2784 and is co-funded under the European Regional Development Fund, by PIPCO RSG and its member companies and the Geological Survey of Ireland under the Infomar Short Call, 2015. RGB and multispectral imagery were processed with Pix4D Desktop under Pix4D Educational License.

Author Contributions

All authors contributed equally during hardware development and calibration. The aerial surveys were performed by Conor Cahalane, Daire Walsh, Aidan Magee and Sean Mannion. Data processing was performed by Conor Cahalane, Daire Walsh and Aidan Magee. The data validation tests were designed by Conor Cahalane, Tim McCarthy and Aidan Magee. The manuscript was prepared by Conor Cahalane, Tim McCarthy, Daire Walsh and Aidan Magee.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Noureldin, N.A.; Aboelghar, M.A.; Saudy, H.S.; Ali, A.M. Rice yield forecasting models using satellite imagery in Egypt. Egypt. J. Remote Sens. Space Sci. 2013, 16, 125–131.

- Huang, Y.; Lee, M.A.; Thomson, S.J.; Reddy, K.N. Ground-based hyperspectral remote sensing for weed management in crop production. Int. J. Agric. Biol. Eng. 2016, 9, 98–109.

- Atzberger, C. Advances in Remote Sensing of Agriculture: Context Description, Existing Operational Monitoring Systems and Major Information Needs. Remote Sens. 2013, 5, 949–981.

- Liu, Y.; Zhang, B.; Wang, L.-M.; Wang, N. A self-trained semisupervised SVM approach to the remote sensing land cover classification. Comput. Geosci. 2013, 59, 98–107.

- Ghamisi, P.; Dalla Mura, M.; Benediktsson, J.A. A survey on spectral–spatial classification techniques based on attribute profiles. IEEE Trans. Geosci. Remote Sens. 2015, 53, 2335–2353.

- Cai, S.; Liu, D. Detecting Change Dates from Dense Satellite Time Series Using a Sub-Annual Change Detection Algorithm. Remote Sens. 2015, 7, 8705–8727.

- Schulz, J.J.; Cayuela, L.; Echeverria, C.; Salas, J.; Rey Benayas, J.M. Monitoring land cover change of the dryland forest landscape of Central Chile (1975–2008). Appl. Geogr. 2010, 30, 436–447.

- Giri, C.; Pengra, B.; Long, J.; Loveland, T.R. Next generation of global land cover characterization, mapping, and monitoring. Int. J. Appl. Earth Obs. Geoinf. 2013, 25, 30–37.

- Hansen, M.C.; Potapov, P.V.; Moore, R.; Hancher, M.; Turubanova, S.A.; Tyukavina, A.; Thau, D.; Stehman, S.V.; Goetz, S.J.; Loveland, T.R.; et al. High-resolution global maps of 21st-century forest cover change. Science 2013, 342, 850–853.

- Niraula, R.R.; Gilani, H.; Pokharel, B.K.; Qamer, F.M. Measuring impacts of community forestry program through repeat photography and satellite remote sensing in the Dolakha district of Nepal. J. Environ. Manag. 2013, 126, 20–29.

- Monteys, X.; Harris, P.; Caloca, S.; Cahalane, C. Spatial Prediction of Coastal Bathymetry Based on Multispectral Satellite Imagery and Multibeam Data. Remote Sens. 2015, 7, 13782–13806.

- Landsat, Landsat 8 Characteristics. Available online: https://landsat.usgs.gov/landsat-8-history (accessed on 12 January 2017).

- Sentinel 2a, Sentinel 2a Handbook. Available online: https://sentinels.copernicus.eu/web/sentinel/user-guides/sentinel-2-msi (accessed on 12 January 2017).

- Hyperion, Hyperion Datasheet. Available online: https://eo1.usgs.gov/sensors/hyperion (accessed on 12 January 2017).

- Met Eireann, Ireland: Cloud Cover Report. Available online: http://www.met.ie/climate-ireland/sunshine.asp?prn=1 (accessed on 12 January 2017).

- Priem, F.; Canters, F. Synergistic Use of LiDAR and APEX Hyperspectral Data for High-Resolution Urban Land Cover Mapping. Remote Sens. 2016, 8, 787.

- Gholizadeh, A.; Mišurec, J.; Kopačková, V.; Mielke, C.; Rogass, C. Assessment of Red-Edge Position Extraction Techniques: A Case Study for Norway Spruce Forests Using HyMap and Simulated Sentinel-2 Data. Forests 2016, 7, 226.

- Hueni, A.; Damm, A.; Kneubuehler, M.; Schläpfer, D.; Schaepman, M.E. Field and Airborne Spectroscopy Cross Validation—Some Considerations. IEEE J. Sel. Top. Appl. Earth. Obs. Remote Sens. 2016, PP, 1–19.

- Cahalane, C.; McCarthy, T. UAS Flight planning—An Initial Investigation into the Influence of VTOL UAS Mission Parameters on Orthomosaic and DSM Accuracy. In Proceedings of the RSPSoc Annual Conference, Glasgow, Scotland, 4–6 September 2013.

- Rosnell, T.; Honkavaara, E.; Nurminen, K. Geometric Processing of Multi-Temporal Image Data Collected By Light Uav Systems. ISPRS—Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2011, XXXVIII-1/C22, 63–68.

- Vallet, J.; Panissod, F.; Strecha, C.; Tracol, M. Photogrammetric Performance of an Ultra Light Weight Swinglet “Uav”. ISPRS—Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2011, XXXVIII-1/C22, 253–258.

- Neitzel, F.; Klonowski, J. Mobile 3D mapping with a low-cost UAV system. ISPRS—Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2011, XXXVIII, 1–6.

- Primicerio, J.; Di Gennaro, S.F.; Fiorillo, E.; Genesio, L.; Lugato, E.; Matese, A.; Vaccari, F.P. A flexible unmanned aerial vehicle for precision agriculture. Precis. Agric. 2012, 13, 517–523.

- Gómez-Candón, D.; De Castro, A.I.; López-Granados, F. Assessing the accuracy of mosaics from unmanned aerial vehicle (UAV) imagery for precision agriculture purposes in wheat. Precis. Agric. 2014, 15, 44–56.

- Steen, K.A.; Villa-Henriksen, A.; Therkildsen, O.R.; Green, O. Automatic Detection of Animals in Mowing Operations Using Thermal Cameras. Sensors 2012, 12, 7587–7597.

- Riegl, Riegl Bathycopter. Available online: http://www.riegl.com/products/unmanned-scanning/newbathycopter/ (accessed on 12 January 2017).

- Flener, C.; Vaaja, M.; Jaakkola, A.; Krooks, A.; Kaartinen, H.; Kukko, A.; Kasvi, E.; Hyyppä, H.; Hyyppä, J.; Alho, P. Seamless Mapping of River Channels at High Resolution Using Mobile LiDAR and UAV-Photography. Remote Sens. 2013, 5, 6382–6407.

- Husson, E.; Hagner, O.; Ecke, F. Unmanned aircraft systems help to map aquatic vegetation. Appl. Veg. Sci. 2014, 17, 567–577.

- Tomic, T.; Schmid, K.; Lutz, P.; Domel, A.; Kassecker, M.; Mair, E.; Grixa, I.L.; Ruess, F.; Suppa, M.; Burschka, D. Toward a Fully Autonomous UAV: Research Platform for Indoor and Outdoor Urban Search and Rescue. IEEE Robot. Autom. Mag. 2012, 19, 46–56.

- DJI, Matrice 600 Datasheet. Available online: http://www.dji.com/matrice600 (accessed on 12 January 2017).

- DJI, S900 Datasheet. Available online: http://www.dji.com/spreading-wings-s900 (accessed on 12 January 2017).

- Bramor, Bramor c-Astral Safe Operating Parameters. Available online: http://www.c-astral.com/en/products/bramor-geospecs (accessed on 12 January 2017).

- Villa, T.F.; Gonzalez, F.; Miljievic, B.; Ristovski, Z.D.; Morawska, L. An Overview of Small Unmanned Aerial Vehicles for Air Quality Measurements: Present Applications and Future Prospectives. Sensors 2016, 16, 1072.

- Salamí, E.; Barrado, C.; Pastor, E. UAV Flight Experiments Applied to the Remote Sensing of Vegetated Areas. Remote Sens. 2014, 6, 11051–11081.

- UAV Factory, Penguin C UAS Datasheet. Available online: http://www.uavfactory.com/product/74 (accessed on 12 January 2017).

- Irish Aviation Authority, Statutory Instrument 563 of 2015. Available online: https://www.iaa.ie/docs/default-source/publications/legislation/statutory-instruments-(orders)/small-unmanned-aircraft-(drones)-and-rockets-order-s-i-563-of-2015.pdf?sfvrsn=6 (accessed on 12 January 2017).

- Precision Hawk, Precision Hawk DJI Farmer Datasheet. Available online: http://www.precisionhawk.com/DJIFarmer (accessed on 7 December 2016).

- Leica, Leica Aibotix X6 V2 Datasheet. Available online: http://uas.leica-geosystems.us/resources (accessed on 12 January 2017).

- Cahalane, C.; McElhinney, C.P.; Lewis, P.; McCarthy, T. Calculation of target-specific point distribution for 2D mobile laser scanners. Sensors 2014, 14, 9471–9488.

- McCarthy, T.; Fotheringham, A.S.; O’Rian, G. Compact Airborne Image Mapping System (CAIMS). ISPRS—Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2007, XXXVI, 198–202.

- Airinov, Agrosensor Multispectral Camera Specifications. Available online: http://www.airinov.fr/en/uav-sensor/agrosensor/ (accessed on 12 January 2017).

- FLIR, Tau 640 Specifications and Characteristics. Available online: http://www.unmannedsystemstechnology.com/wp-content/uploads/2012/04/FLIR-Tau2-Brochure.pdf (accessed on 12 January 2017).

- McCaul, M.; Barland, J.; Cleary, J.; Cahalane, C.; McCarthy, T.; Diamond, D. Combining Remote Temperature Sensing with in-Situ Sensing to Track Marine/Freshwater Mixing Dynamics. Sensors 2016, 16, 1402.

- Bayspec, OCIUAV 1000 Datasheet. Available online: http://www.bayspec.com/spectroscopy/oci-uav-hyperspectral-camera (accessed on 12 January 2017).

- Vakalopoulou, M.; Karantzalos, K. Automatic Descriptor-Based Co-Registration of Frame Hyperspectral Data. Remote Sens. 2014, 6, 3409–3426.

- Nikon, Nikon D800E Datasheet. Available online: http://cdn-10.nikon-cdn.com/pdf/manuals/dslr/D800_EN.pdf (accessed on 12 January 2017).

- Zeiss, Zeiss ZF2 Datasheet. Available online: https://www.zeiss.com/camera-lenses/en_de/website/photography/what_makes_the_difference/camera_mounts/zf2_lenses.html (accessed on 12 January 2017).

- Aeroscientific, Aeroscientific Flight Planning Software. Available online: http://www.aeroscientific.com.au/ (accessed on 12 January 2017).

- Aviatrix, Aviatrix Flight Mission Software. Available online: http://www.aeroscientific.com.au/Aviatrix-demo-system-overview-Nov14.pdf (accessed on 12 January 2017).

- Prendergast, W.P.; Corrigan, P.; Scully, P.; Shackleton, C.; Sweeny, B. Coordinate Reference Systems. In Best Practice Guidelines for Precise Surveying in Ireland, 1st ed.; Irish Institution of Surveyors: Dublin, Ireland, 2004; pp. 17–20.

© 2017 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).