Combining Markov and Semi-Markov Modelling for Assessing Availability and Cybersecurity of Cloud and IoT Systems

Abstract

1. Introduction

- Cloud and IoT systems are complex, multi-component, distributed systems. Analysing and assessing their reliability and cybersecurity therefore requires a certain level of generalization, which puts the accuracy of the calculated indicators at risk. Such systems consist of hundreds or thousands of software and hardware components that differ in their vulnerabilities, failure rates, and distribution laws (and parameter values) of time to failure and time to recovery. Cloud and IoT systems (CISs) have many common features and are often combined in integrated cyber-physical systems and IT infrastructure; it is therefore advisable to address the assessment and assurance of their reliability, availability, and cybersecurity from a single point of view.

- A peculiarity of CISs is that their structures and the values of the parameters affecting the evaluation of indicators are not fixed. Nevertheless, cloud providers delivering the relevant services use simplified methods based on the ratio of the time the system spends in an operational condition to the total time of its use, including downtime. This is a simplified definition of the stationary value of the availability function that accounts for downtime due to various causes (failures, cyber-attacks, lack of information sources).

- CISs operate in conditions of uncertainty and changes in the parameters of the physical and informational environment. This factor is particularly influential, as it causes uncertainty in the assessment of dependability and cybersecurity parameters of individual components and systems as a whole.

- firstly, the possibility of the reliable selection and construction of sufficiently fixed models, that is, models selected and intended for the evaluation of systems throughout the entire life cycle, including the operation stage;

- secondly, the possibility of building such sufficiently fixed models in general, considering various factors that lead to changes in the system and environmental parameters.

- What approach can be utilized to provide accurate CIS dependability and availability assessments considering cybersecurity issues?

- Which model attributes are important to classify Markov, semi-Markov, and other derived models that are applied to assess CISs and formulate the tasks to choose appropriate models?

- What consequences should be accepted and combined with the different types of MMs and SMMs for CISs considering the features and parameters of the physical and cyber environment?

- How can the methodology for choosing and combining MMs, SMMs, and their modifications be applied to assess the availability and cybersecurity of implemented CISs?
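The simplified provider-side estimate mentioned above (operational time divided by total time of use) can be illustrated with a minimal Python sketch. The function name, the observation window, and the downtime figures are hypothetical and chosen only for illustration; they are not taken from the paper.

```python
def availability_ratio(uptime_h: float, downtime_h: float) -> float:
    """Ratio of operational time to total time of use (uptime + downtime)."""
    total_h = uptime_h + downtime_h
    if total_h <= 0:
        raise ValueError("total observation time must be positive")
    return uptime_h / total_h


# Hypothetical one-year window (8760 h) with downtime split by cause.
WINDOW_H = 8760.0
downtime_by_cause_h = {
    "failures": 5.0,
    "cyber-attacks": 2.5,
    "lack of information sources": 1.26,
}
downtime_h = sum(downtime_by_cause_h.values())
availability = availability_ratio(WINDOW_H - downtime_h, downtime_h)
```

Such a ratio is a single stationary number; it does not show how availability evolves when failure or attack parameters change over time, which is the gap the model-based assessment addresses.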

2. State of the Art

- Cloud and IoT systems were considered in 25 and 8 papers, respectively;

- Only Markov and semi-Markov models were utilized in 23 and 9 papers, respectively;

- Both Markov and semi-Markov models were utilized in 1 paper;

- Approaches to availability and reliability assessment of IoT/Cloud systems were presented in 17 papers;

- Twelve papers demonstrated approaches to cybersecurity assessment of IoT/Cloud systems;

- Four papers considered the intrusion tolerance issues related to IoT/Cloud systems.

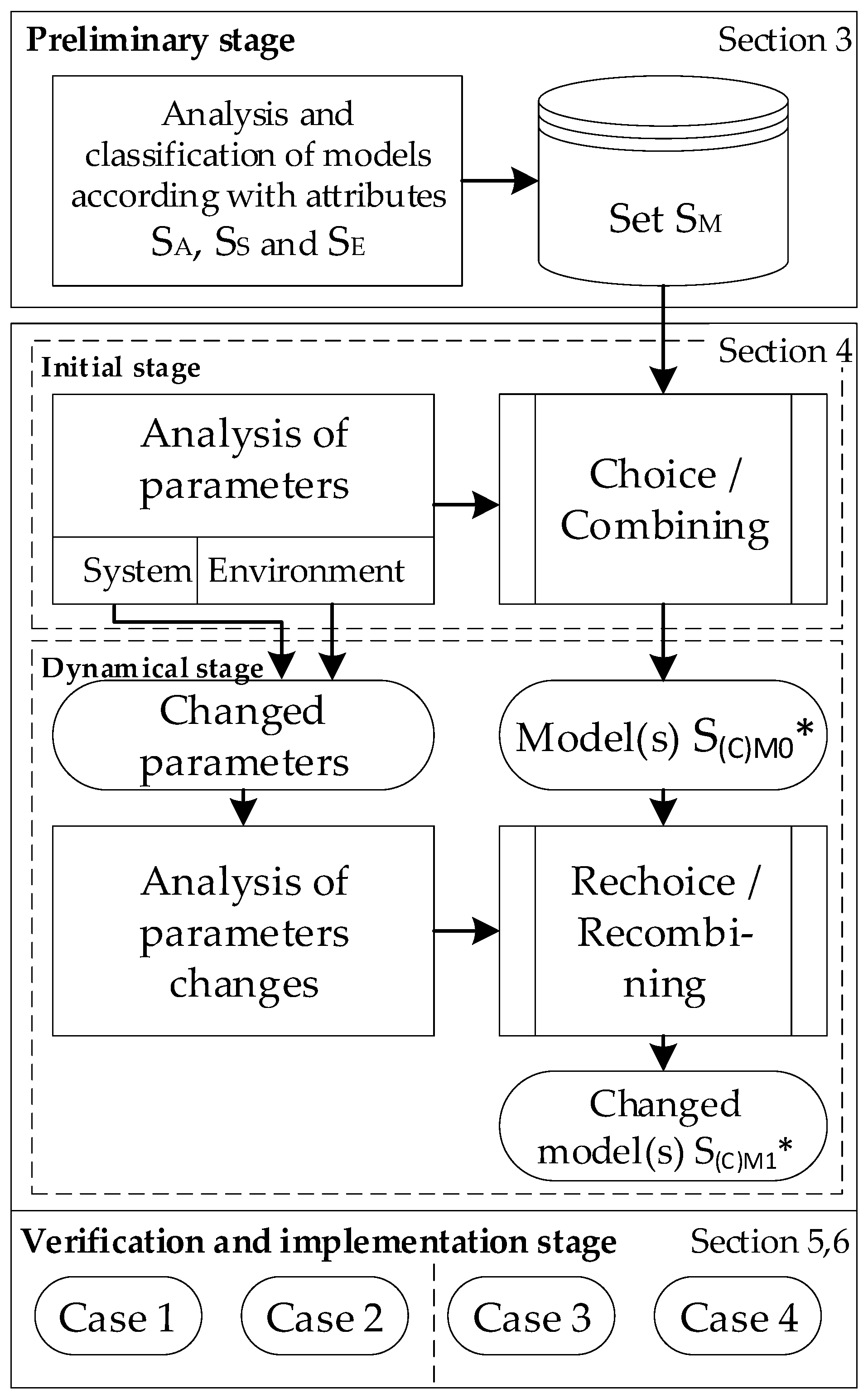

3. Approach and Stages

3.1. Selection of Models

- are (not only) selected in such a way that the peculiarities of systems, as well as physical and cyber environments during their development, are considered,

- (but also) vary (change) with the systems during their use, so that various events, changes in failure parameters, cyber-attacks, privacy violations, recovery, etc. are considered for improving the adequacy and accuracy of the assessment of availability and cybersecurity.

3.2. Combination of Models

3.3. Attributes and Indicators

- reliability as a continuity of correct functioning and delivery of service;

- availability as readiness for correct functioning and delivery of service at any time;

- cybersecurity as a composite of the sub-attributes, first of all, integrity (absence of improper system alterations) and accessibility to services.

3.4. Stages of Modelling and Assessment

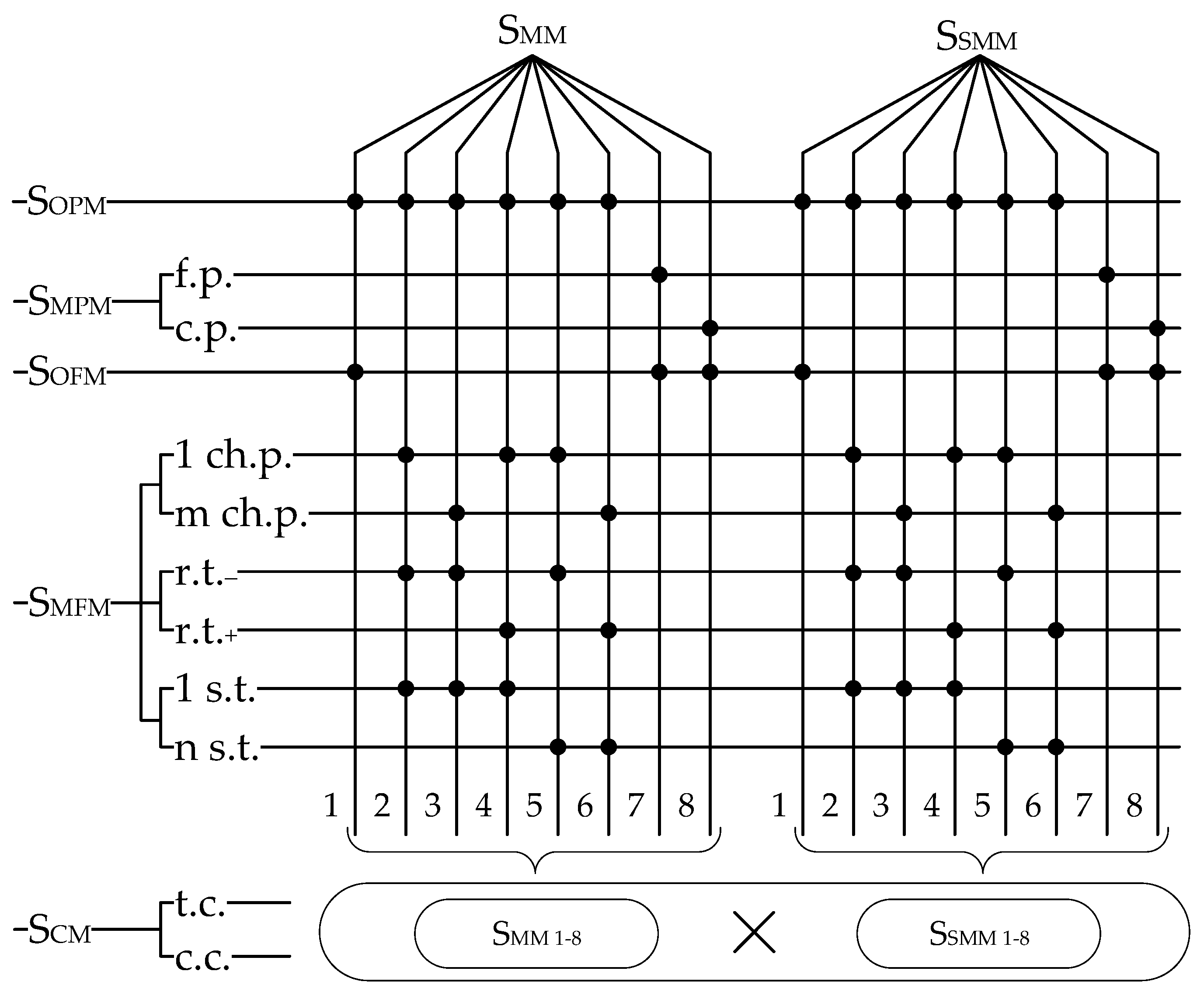

4. Classification of the Models for Choice and Combining

- the degree of Markovity;

- the number and types of phases;

- the number and types of fragments;

- the possibility of combining models.

4.1. The Degree of Markovity

4.2. Multiphase Models

4.3. Multi-Fragmental Models

- the number of changed parameters: MFMs with one (SMFM, 1 ch.p.) and more (SMFM, m ch.p.) changed parameters;

- the presence of reverse transitions between fragments: MFMs without reverse transitions (SMFM, r.t.-) and MFMs with reverse transitions (SMFM, r.t.+). Depending on the type of fault or vulnerability, for some of them it is advisable to restart without deleting, while for others it is necessary to delete;

- the number of steps of transitions between fragments: MFMs with one (SMFM, 1 s.t.) and more (SMFM, n s.t.) steps of transitions when several faults or vulnerabilities appear and/or are removed at once. In this case, additional distribution laws and their parameters should be identified (for example, for several simultaneously occurring/detected and eliminated design faults and vulnerabilities).

4.4. Combined Models

4.5. General Classification

- one-phase and one-fragmental models MM1 and MSM1;

- one-phase and multi-fragmental models MM2 and MSM2 with one changed parameter, one-step transition, and without reverse transitions;

- one-phase and multi-fragmental models MM3 and MSM3 with m changed parameters, one-step transition and without reverse transitions;

- one-phase and multi-fragmental models MM4 and MSM4 with one changed parameter, one-step transition, and reverse transitions;

- one-phase and multi-fragmental models MM5 and MSM5 with one changed parameter, n-step transitions and reverse transitions;

- one-phase and multi-fragmental models MM6 and MSM6 with m changed parameters, n-step transition and reverse transitions;

- one-fragmental and multi-phase models MM7 and MSM7 with a fixed value of the period of change;

- one-fragmental and multi-phase models MM8 and MSM8 with the changed value of the period.
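The eight classes listed above can be encoded as a small lookup table, which may help when the choice of a model class is automated. This is only a sketch: the attribute names (`multi_phase`, `many_changed_params`, and so on) and the function `model_label` are hypothetical; only the class numbers and the MM/MSM prefixes come from the list above.

```python
from typing import NamedTuple, Optional


class Attributes(NamedTuple):
    multi_phase: bool
    multi_fragment: bool
    many_changed_params: Optional[bool] = None  # multi-fragment models only
    multi_step: Optional[bool] = None           # n-step transitions between fragments
    reverse_transitions: Optional[bool] = None
    fixed_period: Optional[bool] = None         # multi-phase models only


# Class index for each attribute combination named in the classification.
CLASS_INDEX = {
    Attributes(False, False): 1,
    Attributes(False, True, False, False, False): 2,
    Attributes(False, True, True, False, False): 3,
    Attributes(False, True, False, False, True): 4,
    Attributes(False, True, False, True, True): 5,
    Attributes(False, True, True, True, True): 6,
    Attributes(True, False, fixed_period=True): 7,
    Attributes(True, False, fixed_period=False): 8,
}


def model_label(markov: bool, attrs: Attributes) -> Optional[str]:
    """Return e.g. 'MM4' or 'MSM8', or None if the combination is not listed."""
    index = CLASS_INDEX.get(attrs)
    if index is None:
        return None
    return ("MM" if markov else "MSM") + str(index)
```

For example, `model_label(True, Attributes(False, True, False, False, True))` returns `"MM4"`, while a combination that the list does not cover returns `None` rather than a guessed class.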

5. Method of Model Choice and Combining

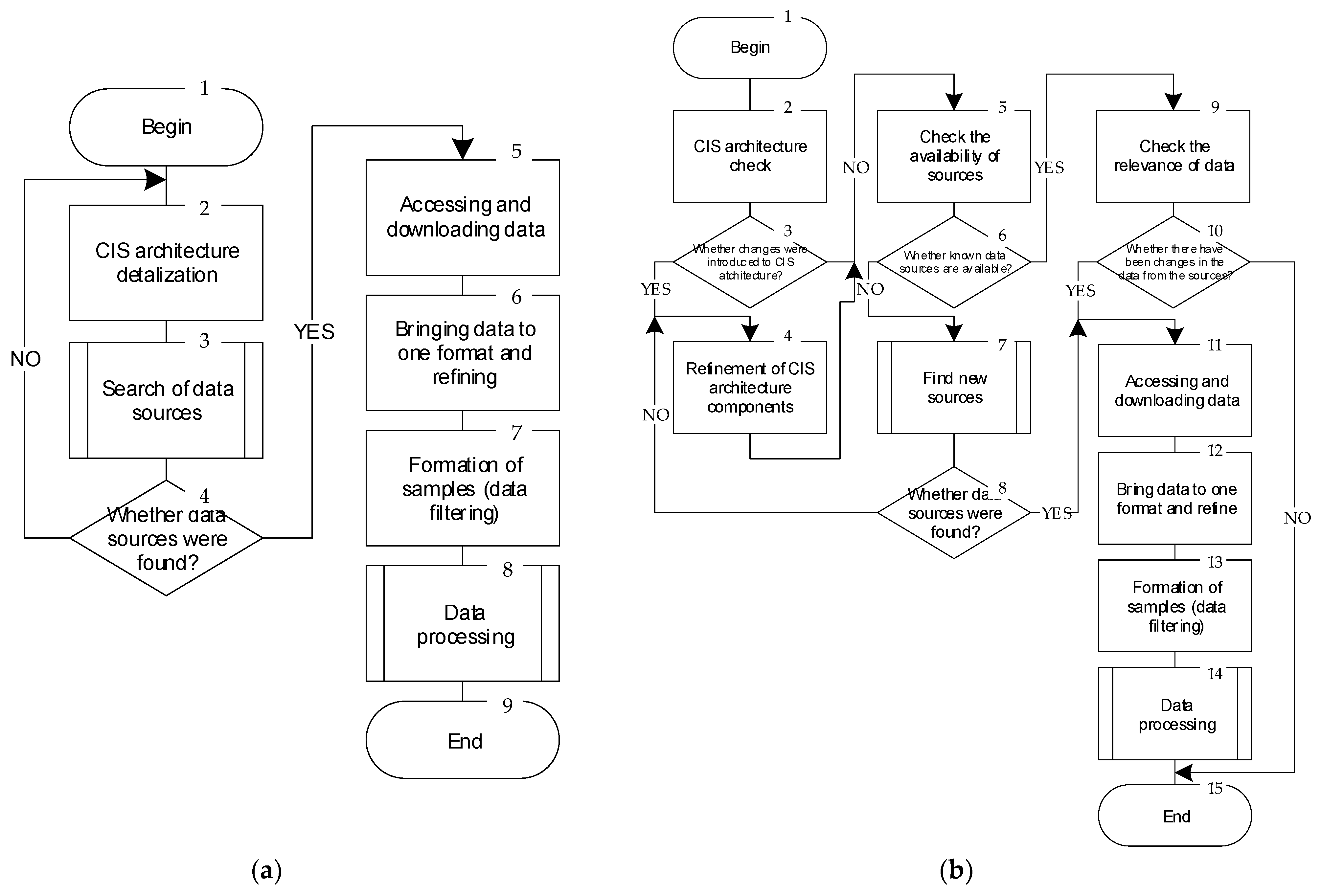

5.1. General Algorithm

5.2. Algorithm of Choice

5.3. Algorithms of Data Analysis for Combining Models

- statistical studies of input parameters of the system, results of checking statistical hypotheses about compliance with the exponential distribution law (or other corresponding distribution laws);

- requirements of control documents, standards, norms, rules, contracts, and customer agreements if they indicate the type or class of the model;

- time and computational resources for modelling (a model that is too detailed may be incalculable due to a lack of resources);

- analysis of the work of other researchers, ease of use of the resulting indicators, and other secondary factors.
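The first factor above, checking whether observed times comply with the exponential law, can be sketched with a Kolmogorov-Smirnov distance computed in plain Python. This is an illustrative assumption, not the procedure used in the paper: the function names are hypothetical, the exponential mean is estimated from the sample, and the default coefficient 1.06 is assumed to approximate the 5% critical value of the Lilliefors variant of the test for large samples, so it should be checked against published tables before any real use.

```python
import math
from typing import Sequence


def ks_distance_to_exponential(samples: Sequence[float]) -> float:
    """KS distance between the empirical CDF and an exponential CDF
    whose mean is estimated from the same sample."""
    xs = sorted(samples)
    n = len(xs)
    mean = sum(xs) / n
    distance = 0.0
    for i, x in enumerate(xs, start=1):
        cdf = 1.0 - math.exp(-x / mean)
        # Compare the fitted CDF with the empirical step just after and just before x.
        distance = max(distance, i / n - cdf, cdf - (i - 1) / n)
    return distance


def suggest_model(samples: Sequence[float], critical_coeff: float = 1.06) -> str:
    """Suggest 'Markov' if exponentiality is not rejected, else 'semi-Markov'."""
    threshold = critical_coeff / math.sqrt(len(samples))
    if ks_distance_to_exponential(samples) <= threshold:
        return "Markov"
    return "semi-Markov"


# Synthetic illustrations: exponential quantiles versus nearly constant durations.
exp_like = [-100.0 * math.log(1.0 - (i - 0.5) / 50) for i in range(1, 51)]
near_constant = [10.0 + 0.01 * i for i in range(50)]
```

With these synthetic samples, `suggest_model(exp_like)` gives `"Markov"` and `suggest_model(near_constant)` gives `"semi-Markov"`. The other factors in the list (standards, computational resources, prior work) remain outside such a purely statistical check.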

6. Implementation of Suggested Approach and Algorithms of Choice and Combining Models

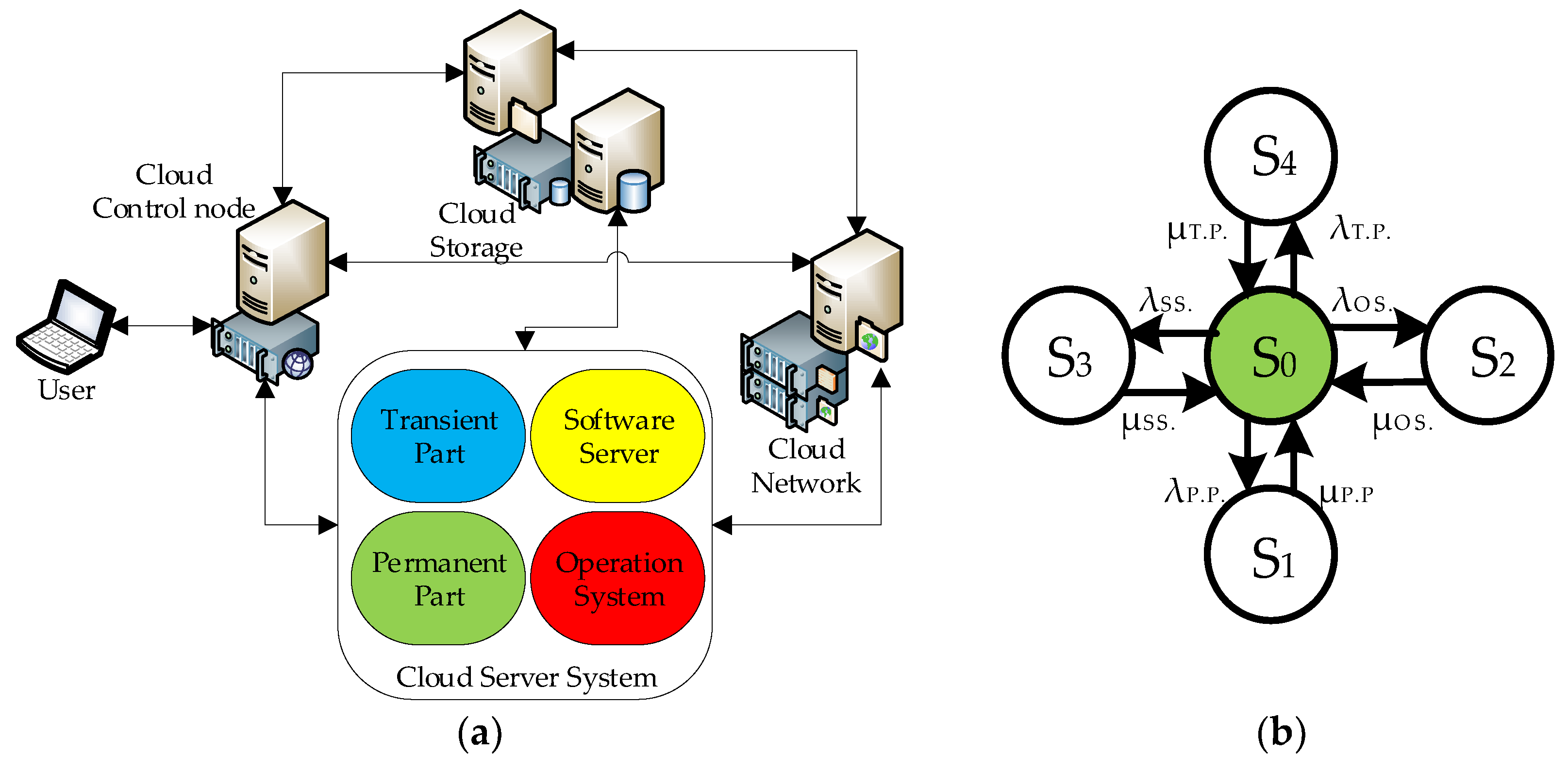

6.1. System 1: Cloud Server System

6.1.1. General Description of the CSS

- the first option, when block 6 is involved and a Markov model is built;

- the second option, when block 7 of the selection algorithms for building a semi-Markov model is involved.

6.1.2. Development of the CSS Model

Markov’s Availability Model

Semi-Markov Availability Model

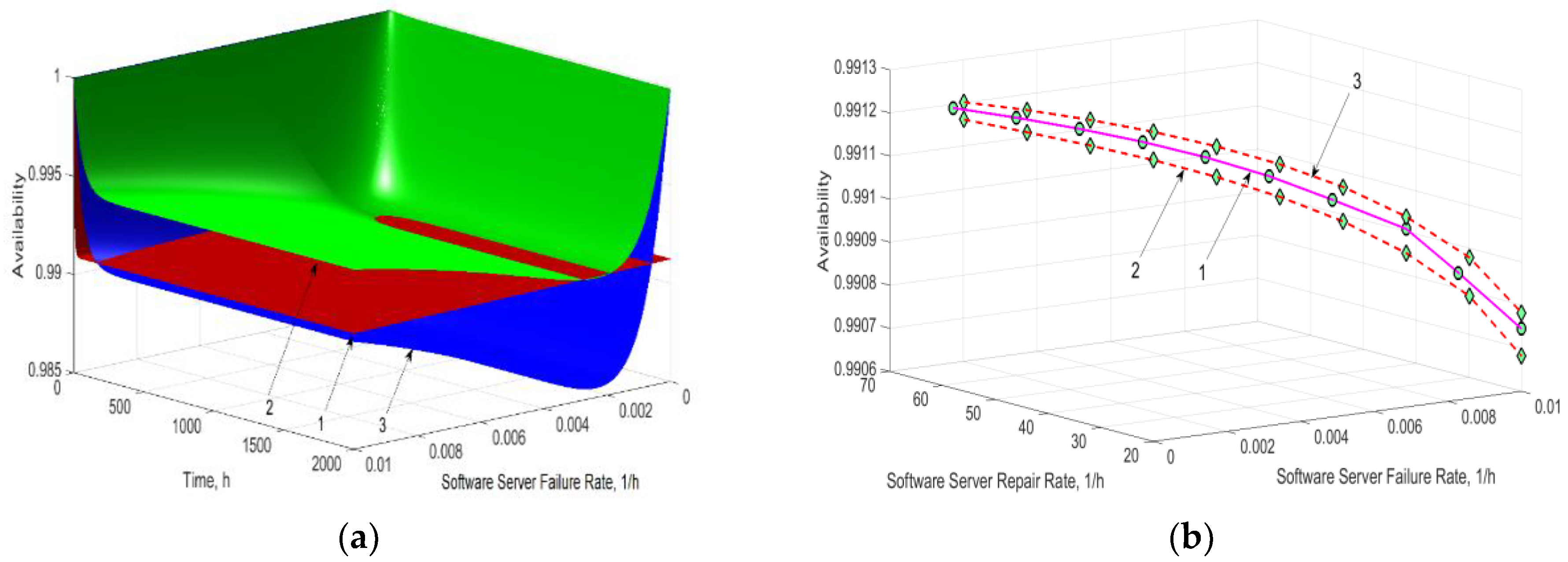

6.1.3. Research and Analysis of the Results

- the Markov model yields an availability estimate that is average relative to the estimates of the semi-Markov model under different distribution laws;

- if statistical hypothesis testing of the input parameters points to a Weibull distribution with the parameter α = 0.5…1.5, the MM can be used to simplify the modelling. However, such values of the Weibull parameter rarely apply when assessing cyber-attacks on the CIS, which leads to the construction of more complex models.
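To see why the Weibull parameter matters, the sketch below assumes that α is the Weibull shape parameter (for α = 1 the Weibull law coincides with the exponential one) and uses a simple two-state up/down model, whose steady-state availability depends only on the mean up and down times. The scale and repair values are hypothetical, and the sketch is not the CSS model of this section.

```python
import math


def weibull_mean(scale: float, shape: float) -> float:
    """Mean of a Weibull distribution: scale * Gamma(1 + 1/shape)."""
    return scale * math.gamma(1.0 + 1.0 / shape)


def alternating_availability(mean_up: float, mean_down: float) -> float:
    """Steady-state availability of a two-state alternating up/down process."""
    return mean_up / (mean_up + mean_down)


SCALE_UP_H = 500.0   # hypothetical Weibull scale of time to failure, hours
MEAN_DOWN_H = 2.0    # hypothetical mean recovery time, hours

# Availability for the boundary shapes 0.5 and 1.5 and for the exponential case 1.0.
results = {
    shape: alternating_availability(weibull_mean(SCALE_UP_H, shape), MEAN_DOWN_H)
    for shape in (0.5, 1.0, 1.5)
}
```

With the scale held fixed, the exponential case (shape 1.0) lies between the results for shapes 0.5 and 1.5 in this toy setting. In models with more states and competing transitions, the distribution law also changes the transition probabilities, so the difference between Markov and semi-Markov results can be larger.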

6.2. System 2: Cloud Video System

6.2.1. General Description of the CVS

6.2.2. Development of the CVS Model

RBD Availability Model

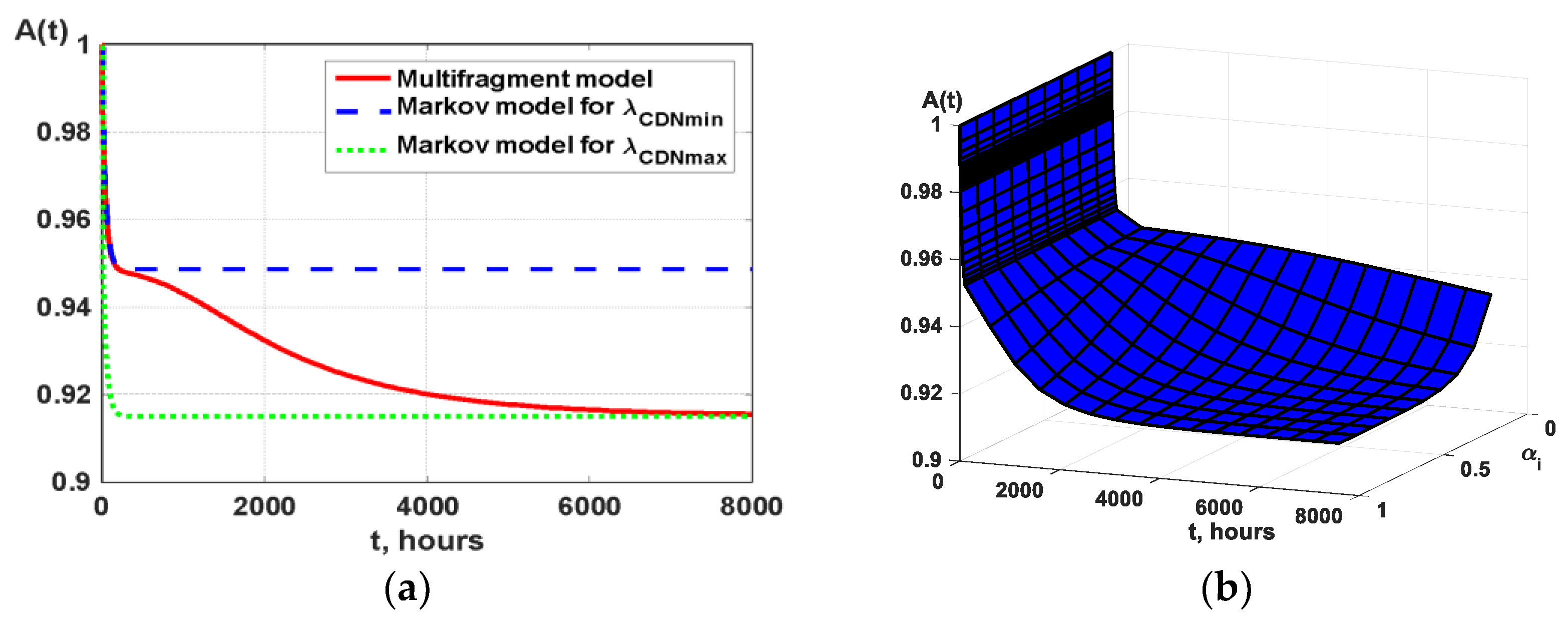

Multi-Fragment Availability Model Considering Attacks on CDN

6.2.3. Research and Analysis of the Results

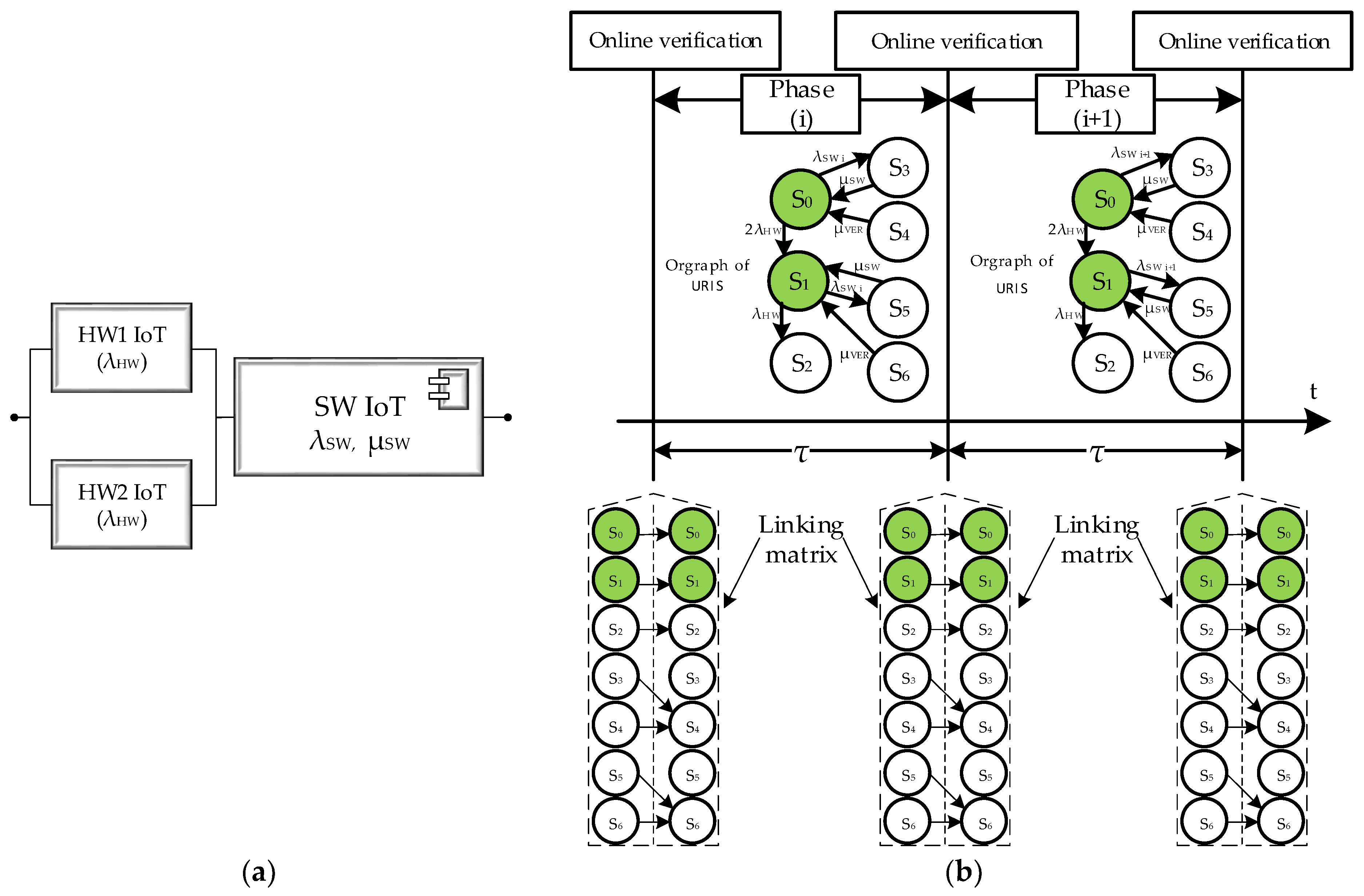

6.3. System 3: Unmanned Robotic IoT System (URIS)

6.3.1. General Description of URIS

6.3.2. Development of the URIS Model

- operational (S0 and S1—with a detected failure of one hardware channel),

- inoperable (S2—with two detected hardware errors, S3 and S5—with detected software errors; S4 and S6—states of software patching).

6.3.3. Research and Analysis of the Results

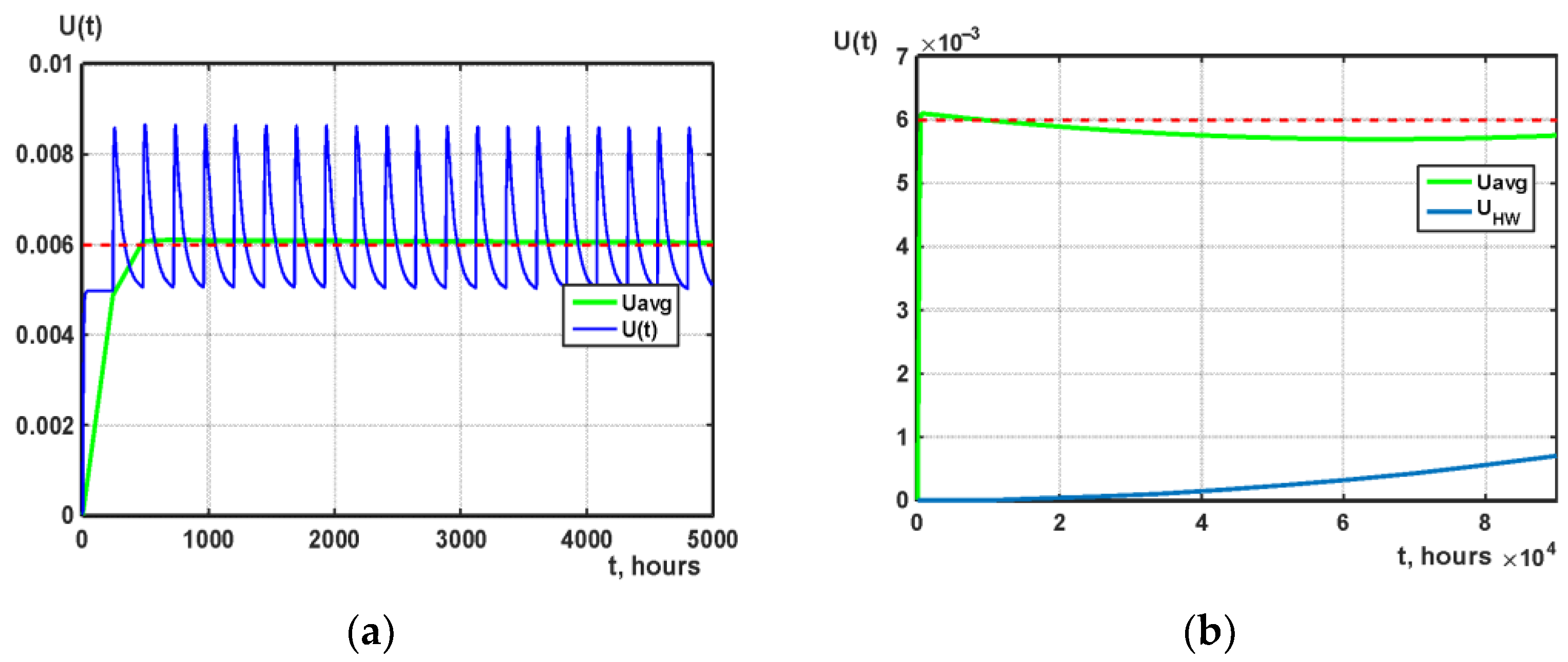

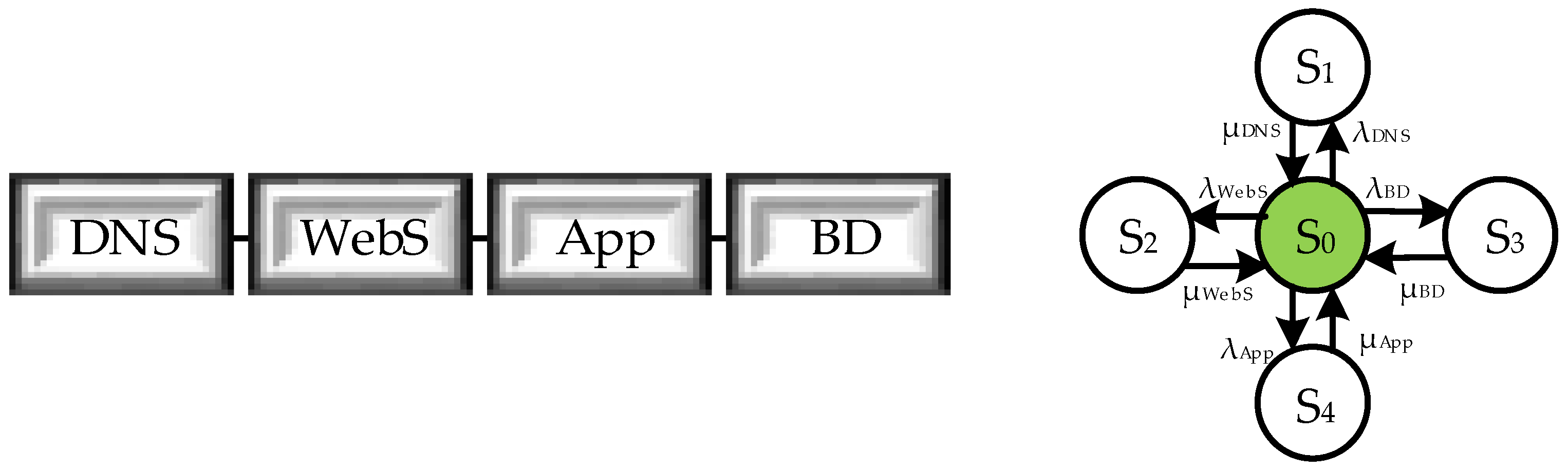

6.4. System 4: Web Service of Cloud System (WSC)

6.4.1. General Description of the WSC

6.4.2. Development of the WSC Model

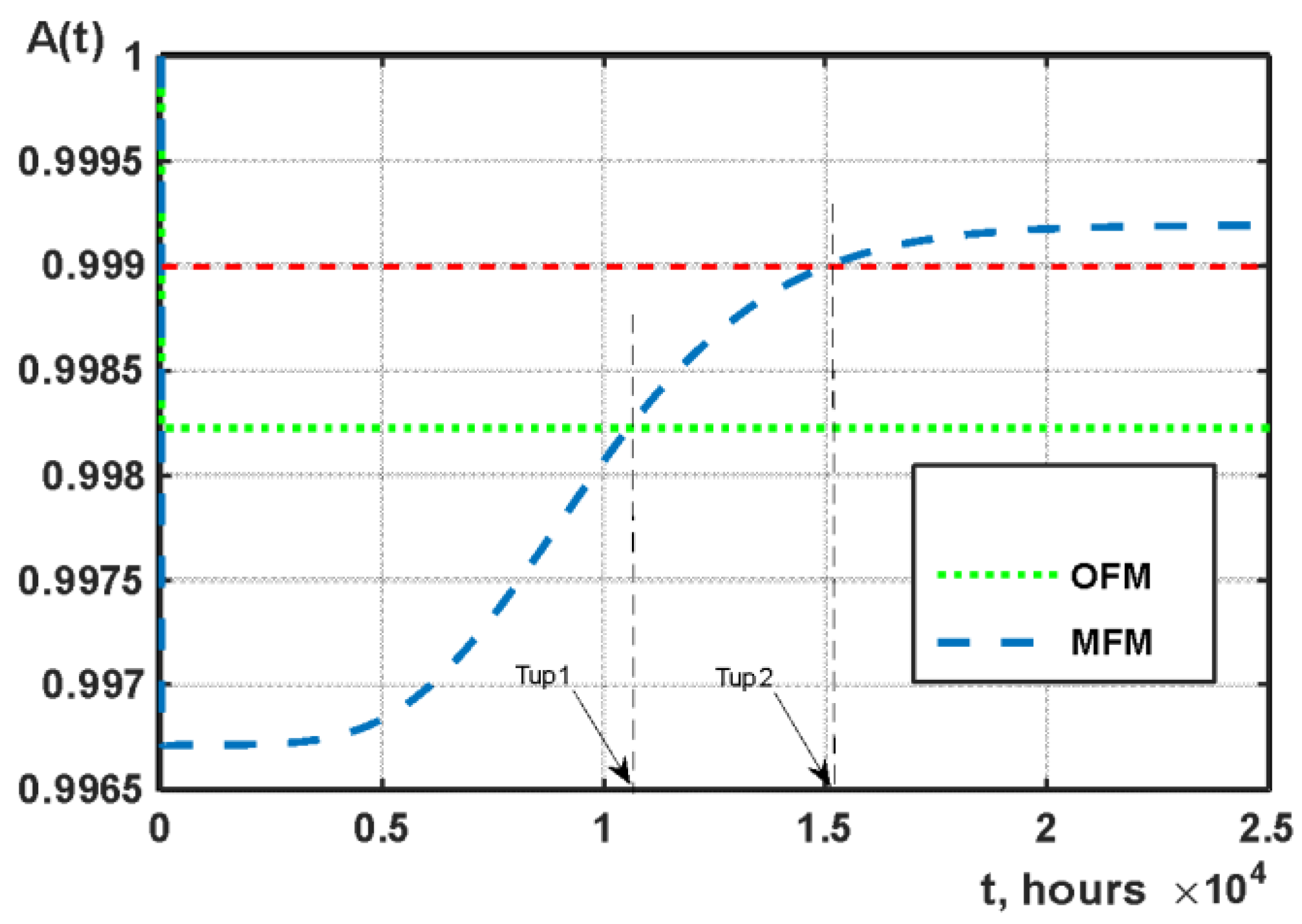

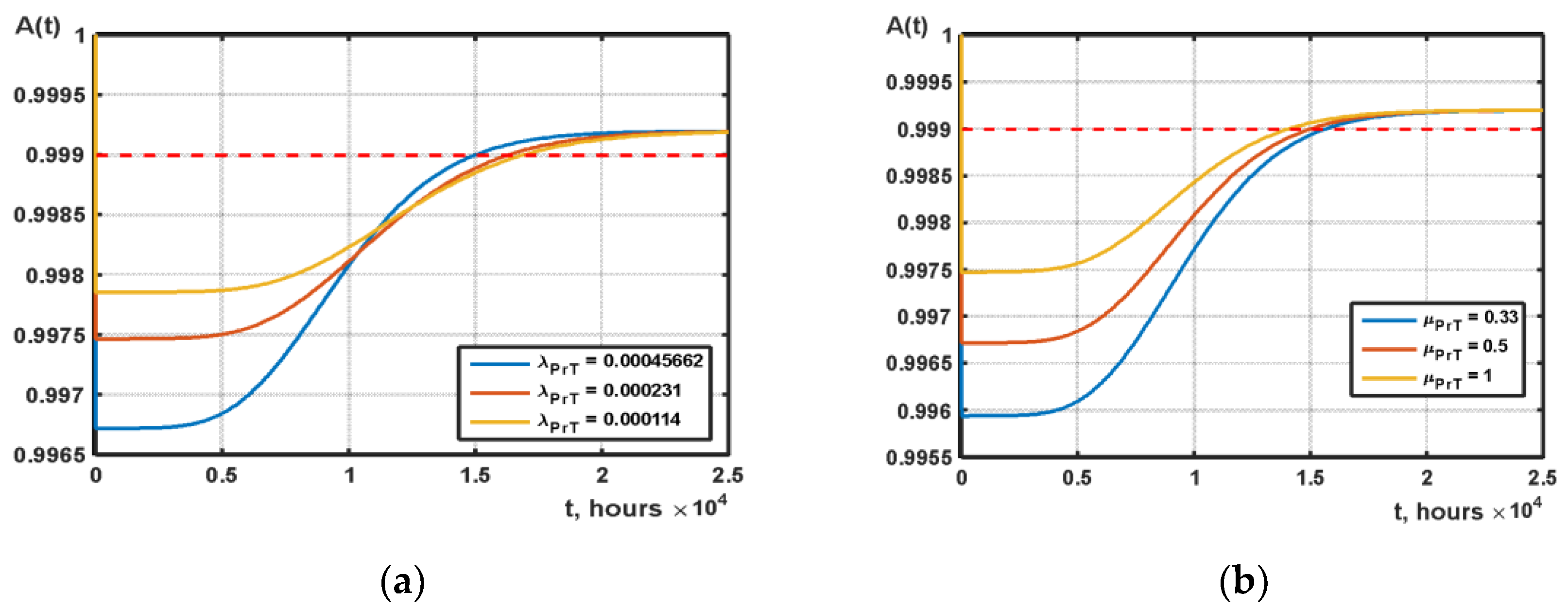

6.4.3. Research and Analysis of the Results

- the time at which the multi-fragment model starts to show a gain in availability relative to the Markov model that considers attacks: Tup1 = 10,450.7 h;

- the time at which the multi-fragment model starts to meet the availability requirement of 0.999: Tup2 = 14,949.4 h;

- the greatest decrease in the availability of the multi-fragment model relative to the single-fragment Markov model: ΔA = 0.0015.

7. Discussion

- the first one postulates continuous evolution of the model(s) together with the evolution of the system caused by (1) changing of its parameters and parameters of the physical and cyber environment, and (2) events that should be considered to assure more accurate calculation or prediction of CIS indicators;

- the second “C” describes the necessity of collecting data about faults, failures, vulnerabilities, cyber-attacks, violations of data privacy, applied patches, etc. to obtain as much actual data as possible for the assessment of CIS;

- the third one provides for comparing and reclassifying the model set based on the analysis of CIS operation (or reengineering) data. This keeps the model base up to date and assures completeness of the set of models to be applied for assessment;

- the fourth “C” makes it possible to choose and use “off-the-shelf” models with understandable techniques for their development and implementation;

- the last one provides a composition of different models during CIS operation through time-based, component-based, or mixed combining.

8. Conclusions and Future Directions

- development of the framework for C5 approach automation for different types of cloud and IoT systems. The results of the paper (schemes and algorithms) can be used for designing such a framework as a service or embedded technology for online support of operating systems;

- more detailed development of the techniques for combining models and an analytical description of the combined ones, considering different reasons for switching and composing the models. This requires specifying all important events and conditions that should be taken into account, as well as rigorous procedures for implementing the combining options;

- collection of the data for estimation and prediction of CIS models’ parameters. It concerns, first of all, information about vulnerabilities and cyber-attacks, and application of ML to calculate parameters;

- the C5 approach should be supplemented with proactive techniques for assessment and assurance of CIS dependability, cybersecurity, privacy, and resilience based on Big Data analytics [59,60] and machine learning [61] methods to analyze data and support decision-making on choosing, combining, and recombining models;

- application of this approach for combining hidden MMs and SMMs to assess privacy [62] extending the model base.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

[1] National Institute of Standards and Technology. NIST SP 500-291. Cloud Computing Standards Roadmap (August 2011). Available online: https://tsapps.nist.gov/publication/get_pdf.cfm?pub_id=909024 (accessed on 9 July 2022).

[2] European Union Agency for Cybersecurity (ENISA). EUCS—Cloud Services Scheme (December 2020). Available online: https://www.enisa.europa.eu/publications/eucs-cloud-service-scheme (accessed on 9 July 2022).

[3] IEEE Standard Association. Industry Connections Cybersecurity in Agile Cloud Computing. Cybersecurity Standards for Cloud Access (June 2020). Available online: https://standards.ieee.org/wp-content/uploads/2022/06/Cybersecurity_Standards_Cloud_Access.pdf (accessed on 9 July 2022).

[4] Xing, L. Reliability in Internet of Things: Current status and future perspectives. IEEE Internet Things J. 2020, 7, 6704–6721.

[5] Prokhorenko, V.; Babar, M.A. Architectural Resilience in Cloud, Fog and Edge Systems: A Survey. IEEE Access 2020, 8, 28078–28095.

[6] Kolisnyk, M. Vulnerability analysis and method of selection of communication protocols for information transfer in Internet of Things systems. Radioelectron. Comput. Syst. 2021, 1, 133–149.

[7] Matos, R.; Dantas, J.; Araujo, J.; Trivedi, K.S.; Maciel, P. Redundant Eucalyptus Private Clouds: Availability Modeling and Sensitivity Analysis. J. Grid Comput. 2017, 15, 1–22.

[8] Frolov, V.V. Analysis of Approaches Providing Security of Cloud Sevices. Radioelectron. Comput. Syst. 2020, 1, 70–82.

[9] Junejo, A.K.; Jokhio, I.A.; Jan, T. A Multi-Dimensional and Multi-Factor Trust Computation Framework for Cloud Services. Electronics 2022, 11, 1932.

[10] Ponochovnyi, Y.; Ivanchenko, O.; Kharchenko, V.; Udovyk, I.; Baiev, E. Models for Cloud System Availability Assessment Considering Attacks on CDN and ML Based Parametrization. CEUR Workshop Proc. 2022, 3171, 1149–1159.

[11] Mani, D.; Mahendran, A. Availability modelling of fault tolerant cloud computing system. Int. J. Intell. Eng. Syst. 2017, 10, 154–165.

[12] Ghosh, R.; Longo, F.; Frattini, F.; Russo, S.; Trivedi, K.S. Scalable Analytics for IaaS Cloud Availability. IEEE Trans. Cloud Comput. 2014, 2, 57–70.

[13] Torres, E.; Callou, G.; Andrade, E. A hierarchical approach for availability and performance analysis of private cloud storage services. Computing 2018, 100, 621–644.

[14] Mengistu, T.M.; Che, D.; Alahmadi, A.; Lu, S. Semi-Markov Process Based Reliability and Availability Prediction for Volunteer Cloud Systems. In Proceedings of the 2018 IEEE 11th International Conference on Cloud Computing (CLOUD), San Francisco, CA, USA, 2–7 July 2018; pp. 359–366.

[15] Chen, Z.; Chang, X.; Han, Z.; Li, L. Survivability modeling and analysis of cloud service in distributed data centers. Comput. J. 2018, 61, 1298–1305.

[16] Zhou, Z.; Wei, H. A novel VM migration algorithm based on Markov model under the cloud environment. U.P.B. Sci. Bull. Ser. C 2020, 82, 55–68.

[17] Manglik, M.; Rawat, N.; Ram, M. Reliability and Availability analysis of a cloud computing transition system under multiple failures. Int. J. Qual. Reliab. Manag. 2020, 37, 823–835.

[18] Gupta, S.; Gupta, V. Availability analysis of vehicular cloud computing. In De Gruyter Series on the Applications of Mathematics in Engineering and Information Sciences; Anand, A., Ram, M., Eds.; De Gruyter: Berlin, Germany, 2020; Volume 4, pp. 21–44.

[19] Tyagi, V.; Rawat, N.; Ram, M. Reliability modelling and sensitivity analysis of IoT based flood alerting system. J. Qual. Maint. Eng. 2020, 27, 292–307.

[20] Nguyen, T.A.; Min, D.; Choi, E. A hierarchical modeling and analysis framework for availability and security quantification of IoT infrastructures. Electronics 2020, 9, 155.

[21] Martyna, J. Semi-Markov Model of Processing Requests Reliability and Availability in Mobile Cloud Computing Systems. In Advances in Intelligent Systems and Computing; Zamojski, W., Mazurkiewicz, J., Sugier, J., Walkowiak, T., Kacprzyk, J., Eds.; Springer International Publishing: Cham, Switzerland, 2021; Volume 1389, pp. 264–272.

[22] Tang, S.; Xie, Y. Availability Modeling and Performance Improving of a Healthcare Internet of Things (IoT) System. IoT 2021, 2, 310–325.

[23] Ivanchenko, O.; Kharchenko, V.; Moroz, B.; Ponochovnyi, Y.; Degtyareva, L. Availability Assessment of a Cloud Server System: Comparing Markov and Semi-Markov Models. In Proceedings of the 2021 11th IEEE International Conference on Intelligent Data Acquisition and Advanced Computing Systems: Technology and Applications (IDAACS), Cracow, Poland, 22–25 September 2021; Volume 2, pp. 1157–1162.

[24] Altowaijri, S.M. Workflow Scheduling and Offloading for Service-based Applications in Hybrid Fog-Cloud Computing. Int. J. Adv. Comput. Sci. Appl. 2021, 12, 726–735.

[25] Adjepon-Yamoah, D.E. Cloud Accountability Method: Towards Accountable Cloud Service-Level Agreements. In Lecture Notes in Networks and Systems; Yang, X.-S., Sherratt, S., Dey, N., Joshi, A., Eds.; Springer International Publishing: Singapore, 2022; Volume 216, pp. 439–458.

[26] Zhou, Y.; Cheng, G.; Zhao, Y.; Chen, Z.; Jiang, S. Toward Proactive and Efficient DDoS Mitigation in IIoT Systems: A Moving Target Defense Approach. IEEE Trans. Ind. Inform. 2022, 18, 2734–2744.

[27] Lu, J.B.; Yu, Y.; Pan, M.L. Reinforcement Learning-Based Auto-scaling Algorithm for Elastic Cloud Workflow Service. In Parallel and Distributed Computing, Applications and Technologies; Hong Shen, H., Sang, Y., Zhang, Y., Xiao, N., Arabnia, H.R., Fox, G., Gupta, A., Malek, M., Eds.; Lecture Notes in Computer Science (Including Subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics); Springer International Publishing: Cham, Switzerland, 2022; Volume 13148, pp. 303–310.

[28] Han, B.; Wong, S.; Mannweiler, C.; Crippa, M.R.; Schotten, H.D. Context-Awareness Enhances 5G Multi-Access Edge Computing Reliability. IEEE Access 2019, 7, 21290–21299.

[29] Le, N.T.; Hoang, D.B. A Threat Computation Model using a Markov Chain and Common Vulnerability Scoring System and its Application to Cloud Security. J. Telecommun. Digit. Econ. 2019, 7, 37–56.

[30] Mesbahi, M.R.; Rahmani, A.M.; Hosseinzadeh, M. Dependability analysis for characterizing Google cluster reliability. Int. J. Commun. Syst. 2019, 32, e4127.

[31] Carvalho, G.H.S.; Woungang, I.; Anpalagan, A.; Traore, I. Security- and Location-Aware Optimal Virtual Machine Management for 5G-Driven MEC Systems. In Lecture Notes on Data Engineering and Communications Technologies; Woungang, I., Dhurandher, S., Eds.; Springer International Publishing: Cham, Switzerland, 2020; Volume 51, pp. 123–134.

[32] Almohri, H.M.J.; Watson, L.T.; Evans, D. Predictability of IP Address Allocations for Cloud Computing Platforms. IEEE Trans. Inf. Forens. Secur. 2020, 15, 500–511.

[33] Samir, A.; Pahl, C. Detecting and Localizing Anomalies in Container Clusters Using Markov Models. Electronics 2020, 9, 64.

[34] Carvalho, G.H.S.; Woungang, I.; Anpalagan, A.; Traore, I. Optimal Security-Aware Virtual Machine Management for Mobile Edge Computing Over 5G Networks. IEEE Syst. J. 2020, 15, 3403–3414.

[35] Zheng, K.; Yao, X.; Zhang, Z.; Fang, L.; Huang, X. Model checking the Efficiency of Blockchain-based Edge Computing Network. In Proceedings of the 2021 IEEE 20th International Symposium on Distributed Computing and Applications for Business Engineering and Science (DCABES), Nanning, China, 10–12 December 2021; pp. 168–171.

[36] Lalropuia, K.C.; Khaitan (nee Gupta), V. Availability and reliability analysis of cloud computing under economic denial of sustainability (EDoS) attack: A semi-Markov approach. Cluster Comput. 2021, 24, 2177–2191.

[37] Venkatachalam, K.; Prabu, P.; Balaji, B.S.; Kang, B.G.; Nam, Y.; Abouhawwash, M. Cross-layer hidden Markov analysis for intrusion detection. CMC 2022, 70, 3685–3700.

[38] Pereira, P.; Melo, C.; Araujo, J.; Dantas, J.; Santos, V.; Maciel, P. Availability model for edge-fog-cloud continuum: An evaluation of an end-to-end infrastructure of intelligent traffic management service. J. Supercomput. 2022, 78, 4421–4448.

[39] Al-Karaki, J.N.; Gawanmeh, A.; Almalkawi, I.T.; Alfandi, O. Probabilistic analysis of security attacks in cloud environment using hidden Markov models. Trans. Emerg. Telecommun. Technol. 2022, 33, e3915.

[40] El Mir, I.; Kim, D.S.; Haqiq, A. Cloud Computing Security Modeling and Analysis based on a Self-Cleansing Intrusion Tolerance Technique. J. Inf. Assur. Secur. 2016, 11, 273–282.

[41] Liu, Q.; Xing, L.; Wang, C. Framework of Probabilistic Risk Assessment for Security and Reliability. In Proceedings of the 2017 IEEE 2nd International Conference on Data Science in Cyberspace (DSC), Shenzhen, China, 26–29 June 2017; pp. 619–624.

[42] Salah, K.; Calyam, P.; Boutaba, R. Analytical Model for Elastic Scaling of Cloud-Based Firewalls. IEEE Trans. Netw. Serv. Manag. 2017, 14, 136–146.

[43] Liu, M.; Dou, W.; Yu, S. How to shutdown a cloud: A DDoS attack in a private infrastructure-as-a-service cloud. Int. J. Autonom. Adapt. Commun. Syst. 2017, 10, 1–14.

[44] Avižienis, A.; Laprie, J.C.; Randell, B.; Landwehr, C. Basic concepts and taxonomy of dependable and secure computing. IEEE Trans. Depend. Secur. Comput. 2004, 1, 11–33.

[45] Ponochovnyi, Y.; Kharchenko, V. Dependability Assurance Methodology of Information and Control Systems Using Multipurpose Service Strategies. Radioelectron. Comput. Syst. 2020, 3, 43–58.

[46] Butpheng, C.; Yeh, K.-H.; Xiong, H. Security and Privacy in IoT-Cloud-Based e-Health Systems—A Comprehensive Review. Symmetry 2020, 12, 1191.

[47] Hofman, D.; Duranti, L.; How, E. Trust in the Balance: Data Protection Laws as Tools for Privacy and Security in the Cloud. Algorithms 2017, 10, 47.

[48] Kharchenko, V.; Dotsenko, S.; Ponochovnyi, Y.; Illiashenko, O. Cybernetic Approach to Developing Resilient Systems: Concept, Models and Application. Inform. Secur. Int. J. 2020, 47, 77–90.

[49] Aslett, L.; Nagapetyan, T.; Vollmer, S. Multilevel Monte Carlo for Reliability Theory. Reliab. Eng. Syst. Saf. 2017, 165, 188–196.

[50] Lu, J. Hierarchical Performance and Availability Analysis Methodology for Multi-Tiered Web Applications. Dissertation (July 2008). Available online: https://www.researchgate.net/publication/27401653_Hierarchical_performance_and_availability_analysis_methodology_for_multi-tiered_Web_applications (accessed on 9 July 2022).

[51] Trivedi, K.S.; Bobbio, A. Reliability and Availability Engineering—Modeling, Analysis, and Applications; Cambridge University Press: Cambridge, UK, 2017; pp. 1–730.

[52] Pinheiro, T.; Oliveira, D.; Matos, R.; Silva, B.; Pereira, P.; Melo, C.; Oliveira, F.; Tavares, E.; Dantas, J.; Maciel, P. The Mercury Environment: A Modeling Tool for Performance and Dependability Evaluation. Ambient Intell. Smart Environ. 2021, 29, 16–25.

[53] National Vulnerability Database (NVD). Available online: https://nvd.nist.gov (accessed on 9 July 2022).

[54] Zhang, P.; Chen, Y.; Zhou, M.; Xu, G.; Huang, W.; Al-Turki, Y.; Abusorrah, A. A Fault-Tolerant Model for Performance Optimization of a Fog Computing System. IEEE Internet Things J. 2022, 9, 1725–1736.

[55] Solve Stiff Differential Equations and DAEs—Variable Order Method—MATLAB ode15s. 2022. Available online: https://www.mathworks.com/help/matlab/ref/ode15s.html (accessed on 9 July 2022).

[56] Dantas, J.; Matos, R.; Araujo, J.; Maciel, P. Models for dependability analysis of cloud computing architectures for eucalyptus platform. Int. Trans. Syst. Sci. Appl. 2012, 8, 13–25.

[57] Distefano, S.; Trivedi, K.S. Non-markovian state-space models in dependability evaluation. Qual. Rel. Eng. Int. 2013, 29, 225–239.

[58] Boulanger, J. Safety of Computer Architectures; Wiley-ISTE: London, UK, 2013; pp. 1–615.

[59] Razaque, A.; Shaldanbayeva, N.; Alotaibi, B.; Alotaibi, M.; Murat, A.; Alotaibi, A. Big Data Handling Approach for Unauthorized Cloud Computing Access. Electronics 2022, 11, 137.

[60] Koo, J.; Kang, G.; Kim, Y.-G. Security and Privacy in Big Data Life Cycle: A Survey and Open Challenges. Sustainability 2020, 12, 10571.

[61] Ahsan, M.; Nygard, K.E.; Gomes, R.; Chowdhury, M.M.; Rifat, N.; Connolly, J.F. Cybersecurity Threats and Their Mitigation Approaches Using Machine Learning—A Review. J. Cybersecur. Priv. 2022, 2, 527–555.

[62] Liu, D.; Wang, B.Y.; Zhang, L. Verifying Pufferfish Privacy in Hidden Markov Models. In Verification, Model Checking, and Abstract Interpretation; Finkbeiner, B., Wies, T., Eds.; Springer International Publishing: Cham, Switzerland, 2022; Volume 13182, pp. 174–196.

| Reference | System: Cloud | System: IoT | Model: Markov | Model: Semi-Markov | Assessed: Availability | Assessed: Reliability | Assessed: Cyber Security | Assessed: Dependability | Assessed: Intrusion Tolerance |

|---|---|---|---|---|---|---|---|---|---|

| [11] | + | − | + | − | + | + | − | − | − |

| [12] | + | − | + | − | + | + | − | − | − |

| [13] | + | − | + | − | + | + | − | − | − |

| [14] | + | − | − | + | + | + | − | − | − |

| [15] | + | − | − | + | + | + | − | − | − |

| [16] | + | − | + | − | + | + | − | − | − |

| [17] | + | − | + | − | + | + | − | − | − |

| [18] | + | − | − | + | + | + | − | − | − |

| [19] | − | + | + | − | + | + | − | − | − |

| [20] | − | + | + | − | + | + | − | − | − |

| [21] | + | − | − | + | + | + | − | − | − |

| [22] | − | + | + | − | + | + | − | − | − |

| [23] | + | − | + | + | + | + | − | − | − |

| [24] | + | − | + | − | + | + | − | − | − |

| [25] | + | − | + | − | + | + | − | − | − |

| [26] | − | + | + | − | + | + | − | − | − |

| [27] | + | − | − | + | + | + | − | − | − |

| [28] | − | + | + | − | − | − | + | − | − |

| [29] | + | − | + | − | − | − | + | − | − |

| [30] | + | − | + | − | − | − | + | − | − |

| [31] | + | − | − | + | − | − | + | − | − |

| [32] | + | − | + | − | − | − | + | − | − |

| [33] | + | − | + | − | − | − | + | − | − |

| [34] | − | + | − | + | − | − | + | − | − |

| [35] | − | + | + | − | − | − | + | − | − |

| [36] | + | − | − | + | − | − | + | − | − |

| [37] | + | − | + | − | − | − | + | − | − |

| [38] | + | − | + | − | − | − | + | − | − |

| [39] | + | − | + | − | − | − | + | − | − |

| [40] | − | + | − | + | − | − | − | + | + |

| [41] | + | − | + | − | − | − | − | + | + |

| [42] | + | − | + | − | − | − | − | + | + |

| [43] | + | − | + | − | − | − | − | + | + |

| Parameters | Value (1/Hour) | Parameters | Value (1/Hour) |

|---|---|---|---|

| | 0.0014 | | 0.1667 |

| | 0.0042 | | 12 |

| | 0…0.01 | | 20…70 |

| | 0.0028 | | 30 |

| No. | Name of Systems Component | Failure Rate | Value (1/h) | Repair Rate | Value (1/h) |

|---|---|---|---|---|---|

| 1 | Desktop and Mobile (DSM) | λdsm | 0.000925926 | µdsm | 0.02083 |

| 2 | Content Delivery Network Service (CDN) | λcdn | 0.001388889 | µcdn | 1 |

| 3 | Wi-Fi | λwifi | 0.001488095 | µwifi | 0.04167 |

| 4 | Mobile Network (MNT) | λmnt | 0.000462963 | µmnt | 0.5 |

| 5 | App Service (API) | λaps1 | 0.002083333 | µaps1 | 1.5 |

| 6 | Queue Service (QS) | λqs | 0.001302083 | µqs | 1 |

| 7 | Load Balancer (LB) | λlb | 0.001190476 | µlb | 1 |

| 8 | SignalR Socket Service (SGR) | λsgr | 0.001666667 | µsgr | 1 |

| 9 | VPN Gateway | λvpn | 0.001736111 | µvpn | 1 |

| 10 | App Service (Calls) | λaps2 | 0.00245098 | µaps2 | 0.66667 |

| 11 | Autoscaling Service | λaps3 | 0.002777778 | µaps3 | 1 |
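The failure and repair rates in the table above can be turned into component availabilities with the standard steady-state formula for a two-state (up/down) Markov model, A = µ / (λ + µ). The sketch below applies it to three of the listed components; the dictionary layout and function name are hypothetical.

```python
def steady_state_availability(failure_rate: float, repair_rate: float) -> float:
    """Steady-state availability of a two-state Markov model: mu / (lambda + mu)."""
    return repair_rate / (failure_rate + repair_rate)


# (failure rate, repair rate) in 1/h, copied from the table above.
RATES = {
    "dsm": (0.000925926, 0.02083),
    "cdn": (0.001388889, 1.0),
    "wifi": (0.001488095, 0.04167),
}

availability = {
    name: steady_state_availability(lam, mu) for name, (lam, mu) in RATES.items()
}
```

For these three components the results agree, up to the rounding of the tabulated repair rates, with the RBD values reported later in the comparison table (for example about 0.9986 for the CDN).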

| No. | Parameter | Variable | Value (1/h) |

|---|---|---|---|

| 1 | The minimum value of CDN failure rate due to hacker attack | λcdn_min | 0.001388889 |

| 2 | The maximum value of CDN failure rate due to hacker attack | λcdn_max | 0.041666667 |

| 3 | Delta of change in CDN failure rate | Δλcdn | 0.004027778 |

| 4 | Probability of successful attack | α | 0…1 |

| 5 | Number of fragments in multi-fragment model | nf | 10 |
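The parameters in the table above are mutually consistent: the tabulated Δλcdn equals (λcdn_max − λcdn_min) / nf. The sketch below checks this and lists the CDN failure rate after each step. That the rate grows by one Δλcdn per fragment transition, and the indexing from 0 to nf, are assumptions made for illustration rather than statements about the paper's model.

```python
LAMBDA_CDN_MIN = 0.001388889  # 1/h, from the table above
LAMBDA_CDN_MAX = 0.041666667  # 1/h, from the table above
N_FRAGMENTS = 10              # nf, from the table above

# Step of the CDN failure rate between neighbouring fragments.
delta_lambda_cdn = (LAMBDA_CDN_MAX - LAMBDA_CDN_MIN) / N_FRAGMENTS

# Assumed schedule: the rate after i steps, i = 0..nf.
cdn_failure_rates = [
    LAMBDA_CDN_MIN + i * delta_lambda_cdn for i in range(N_FRAGMENTS + 1)
]
```

The computed step matches the tabulated value 0.004027778 1/h, and the last element of the schedule reaches λcdn_max.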

| No. | Element of System | Markov Model Pi | RBD Model Ai | Δ = \|Pi − (1 − Ai)\| |

|---|---|---|---|---|

| 1 | - | 0.895362266 | - | - |

| 2 | dsm | 0.039793882 | 0.957446805 | 0.002759313 |

| 3 | cdn | 0.001243559 | 0.998613037 | 0.000143404 |

| 4 | wifi | 0.031977218 | 0.965517247 | 0.002505535 |

| 5 | wifi/mnt | 6.66 × 10⁻⁴ | 0.99929627 | 3.75376 × 10⁻⁵ |

| 6 | mnt | 0.018653381 | 0.979591837 | 0.001754783 |

| 7 | aps1 | 0.001243559 | 0.998613038 | 0.000143404 |

| 8 | qs | 0.001165836 | 0.99869961 | 0.000134554 |

| 9 | lb | 1.07 × 10⁻³ | 0.99881094 | 0.000123153 |

| 10 | sgr | 0.001492271 | 0.998336106 | 0.000171623 |

| 11 | sgr/vpn | 2.59 × 10⁻⁶ | 0.999997116 | 2.9295 × 10⁻⁷ |

| 12 | vpn | 0.001554448 | 0.998266898 | 0.000178654 |

| 13 | aps2 | 0.003291773 | 0.996336997 | 0.000371231 |

| 14 | aps3 | 0.002487118 | 0.997229917 | 0.000282966 |

| ACVS | CVS | 0.949039584 | 0.945631343 | 0.003408241 |
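The RBD column of the table above can be reproduced with the usual series and parallel composition rules. The block structure used here (wifi in parallel with mnt, sgr in parallel with vpn, everything else in series) is inferred from the rows "wifi/mnt" and "sgr/vpn" and should be read as an assumption; the helper names are hypothetical.

```python
from math import prod


def series(*availabilities: float) -> float:
    """All blocks must work: product of availabilities."""
    return prod(availabilities)


def parallel(*availabilities: float) -> float:
    """At least one block must work: 1 - product of unavailabilities."""
    return 1.0 - prod(1.0 - a for a in availabilities)


# Component availabilities Ai copied from the RBD column of the table above.
A = {
    "dsm": 0.957446805, "cdn": 0.998613037, "wifi": 0.965517247,
    "mnt": 0.979591837, "aps1": 0.998613038, "qs": 0.99869961,
    "lb": 0.99881094, "sgr": 0.998336106, "vpn": 0.998266898,
    "aps2": 0.996336997, "aps3": 0.997229917,
}

a_cvs = series(
    A["dsm"], A["cdn"], parallel(A["wifi"], A["mnt"]),
    A["aps1"], A["qs"], A["lb"], parallel(A["sgr"], A["vpn"]),
    A["aps2"], A["aps3"],
)

# Difference column for one element, e.g. cdn: |Pi - (1 - Ai)|.
delta_cdn = abs(0.001243559 - (1.0 - A["cdn"]))
```

Under this assumed structure the result agrees with the tabulated system value 0.945631343, and the two parallel blocks agree with the "wifi/mnt" and "sgr/vpn" rows.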

| Symbol | Illustration | Value | Unit |

|---|---|---|---|

| λHW | failure rate of a single hardware channel | 3 × 10⁻⁷ | 1/hour |

| λSW0 | initial software failure rate | 5 × 10⁻³ | 1/hour |

| μSW | system recovery rate after the occurrence of a software fault | 0.2 | 1/hour |

| λSWk | software failure rate after all faults have been fixed | 0 | 1/hour |

| μver | rate corresponding to the average duration of the verification procedure | 0.0667 | 1/hour |

| ΔλSW | decrement of the software failure rate per eliminated defect, assuming 10 undetected software defects and a uniform effort for localizing and eliminating each of them | λSW0/10 | 1/hour |

| T | The time interval for analysis of availability function behavior | 90,000 | hours |
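The software-related parameters in the table above imply a stepwise decrease of the software failure rate from λSW0 to zero after ten eliminated defects. The sketch below tabulates that schedule and the mean verification time implied by μver. The linear form of the schedule follows from ΔλSW = λSW0/10; the names used are hypothetical.

```python
LAMBDA_SW0 = 5e-3   # initial software failure rate, 1/hour (from the table above)
N_DEFECTS = 10      # undetected software defects assumed in the table above
MU_VER = 0.0667     # verification rate, 1/hour (from the table above)


def software_failure_rate(defects_fixed: int) -> float:
    """Software failure rate after a given number of defects has been eliminated."""
    if not 0 <= defects_fixed <= N_DEFECTS:
        raise ValueError("defects_fixed must be between 0 and N_DEFECTS")
    return LAMBDA_SW0 * (N_DEFECTS - defects_fixed) / N_DEFECTS


# One rate per fragment: before any fix, after 1 fix, ..., after all 10 fixes.
rates_per_fragment = [software_failure_rate(i) for i in range(N_DEFECTS + 1)]

# Mean duration of the verification procedure implied by the rate (about 15 hours).
mean_verification_time_h = 1.0 / MU_VER
```

Each element of `rates_per_fragment` would parameterize one fragment of a multi-fragment model; the last element is exactly zero, matching λSWk in the table.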

| No. | Parameter | Value | Unit |

|---|---|---|---|

| 1 | Failure rate caused by DNS software design faults | 3 × 10⁻⁵ | 1/h |

| 2 | Failure rate caused by Apache HTTP server software design faults | 1.5 × 10⁻⁵ | 1/h |

| 3 | Failure rate caused by Oracle WebLogic application server software design faults | 5 × 10⁻⁴ | 1/h |

| 4 | Failure rate caused by MySQL DBMS software design faults | 3 × 10⁻⁴ | 1/h |

| 5 | DNS service recovery rate | 1.49992 | 1/h |

| 6 | Apache HTTP Web Server recovery rate | 1.71420 | 1/h |

| 7 | Oracle WebLogic Application Server recovery rate | 0.99995 | 1/h |

| 8 | MySQL DBMS server recovery rate | 1.09085 | 1/h |

| 9 | Rate of attacks on the vulnerability of the DNS service | 6.3 × 10⁻³ | 1/h |

| 10 | Criticality of attacks on the vulnerability of the DNS service | 0.77 | 1/h |

| 11 | The frequency of DNS service restart after an attack | 5 | 1/h |

| 12 | The probability of eliminating a vulnerability after it appears during an attack | 0.15 | - |

| 13 | The preventive information security audit test rate | 4.63 × 10⁻⁴ … 1.14 × 10⁻⁴ | 1/h |

| 14 | The rate of recovery after an information security audit | 0.33…1 | 1/h |

| 15 | The number of undetected vulnerabilities in the initial stage | 10 | - |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kharchenko, V.; Ponochovnyi, Y.; Ivanchenko, O.; Fesenko, H.; Illiashenko, O. Combining Markov and Semi-Markov Modelling for Assessing Availability and Cybersecurity of Cloud and IoT Systems. Cryptography 2022, 6, 44. https://doi.org/10.3390/cryptography6030044
