How Can a Deep Learning Algorithm Improve Fracture Detection on X-rays in the Emergency Room?

Abstract

1. Introduction

2. Materials and Methods

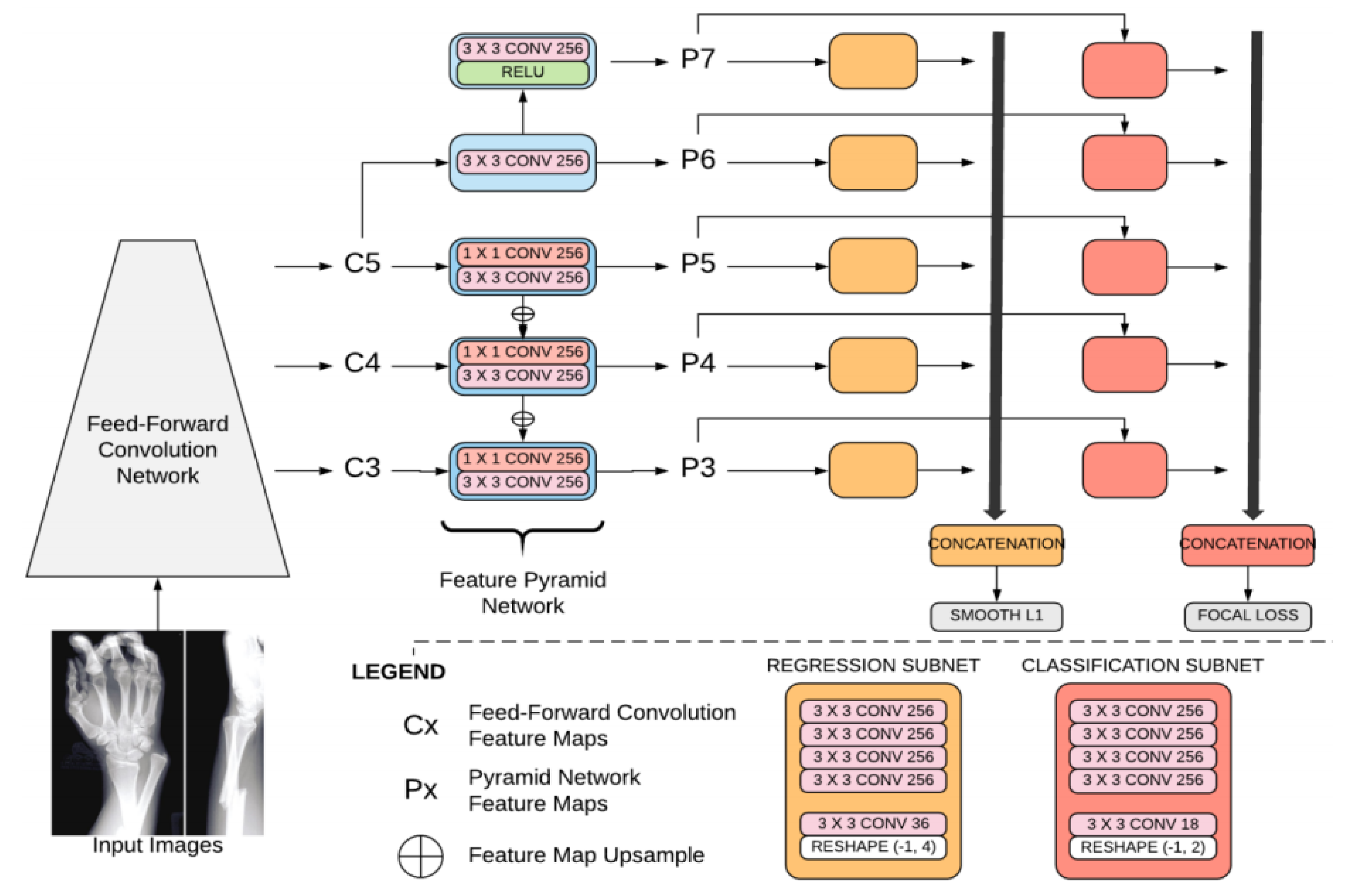

2.1. Algorithm

- Focal loss: Focal loss is a cross-entropy loss scaled by a modulating factor that is controlled by a gamma parameter. This parameter down-weights easy-to-classify samples in the classification loss.

- Smooth L1 loss: A smooth L1 loss is used as the regression loss for the bounding boxes. It is less sensitive to outliers than the L2 loss. As the outline of a fracture is subjective, this loss has been smoothed for the purposes of fracture detection. The batch size is 4. The network was regularized during training with weight decay (L2).
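The two losses described above can be sketched in a few lines of plain Python. This is only an illustration of the general technique, not the authors' implementation: the function names are hypothetical, and the values `gamma=2.0` and `beta=1.0` are assumptions, since the text does not state the settings that were used.

```python
import math


def focal_loss(p, y, gamma=2.0):
    """Binary focal loss for a single prediction.

    p     -- predicted probability of the positive class, 0 < p < 1
    y     -- ground-truth label (1 or 0)
    gamma -- focusing parameter (assumed value, not given in the text)
    """
    # Probability assigned to the true class.
    p_t = p if y == 1 else 1.0 - p
    # Cross-entropy term -log(p_t), scaled by the modulating factor (1 - p_t)^gamma.
    return -((1.0 - p_t) ** gamma) * math.log(p_t)


def cross_entropy(p, y):
    """Plain cross-entropy, i.e. focal loss with gamma = 0."""
    return focal_loss(p, y, gamma=0.0)


def smooth_l1(pred, target, beta=1.0):
    """Smooth L1 loss: quadratic near zero, linear for large errors.

    beta is the switch point between the two regimes (assumed value).
    """
    diff = abs(pred - target)
    if diff < beta:
        return 0.5 * diff ** 2 / beta
    return diff - 0.5 * beta


def l2(pred, target):
    """Squared-error loss, shown only for comparison with smooth L1."""
    return 0.5 * (pred - target) ** 2


# An easy sample (p_t = 0.9) is down-weighted far more than a hard one (p_t = 0.1).
easy_ratio = focal_loss(0.9, 1) / cross_entropy(0.9, 1)
hard_ratio = focal_loss(0.1, 1) / cross_entropy(0.1, 1)

# A large box-coordinate error is penalized linearly rather than quadratically.
outlier_smooth = smooth_l1(0.0, 10.0)
outlier_l2 = l2(0.0, 10.0)
```

With these assumed settings, the easy sample keeps about 1% of its cross-entropy loss while the hard sample keeps about 81%, and an error of 10 costs 9.5 under smooth L1 against 50 under L2.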

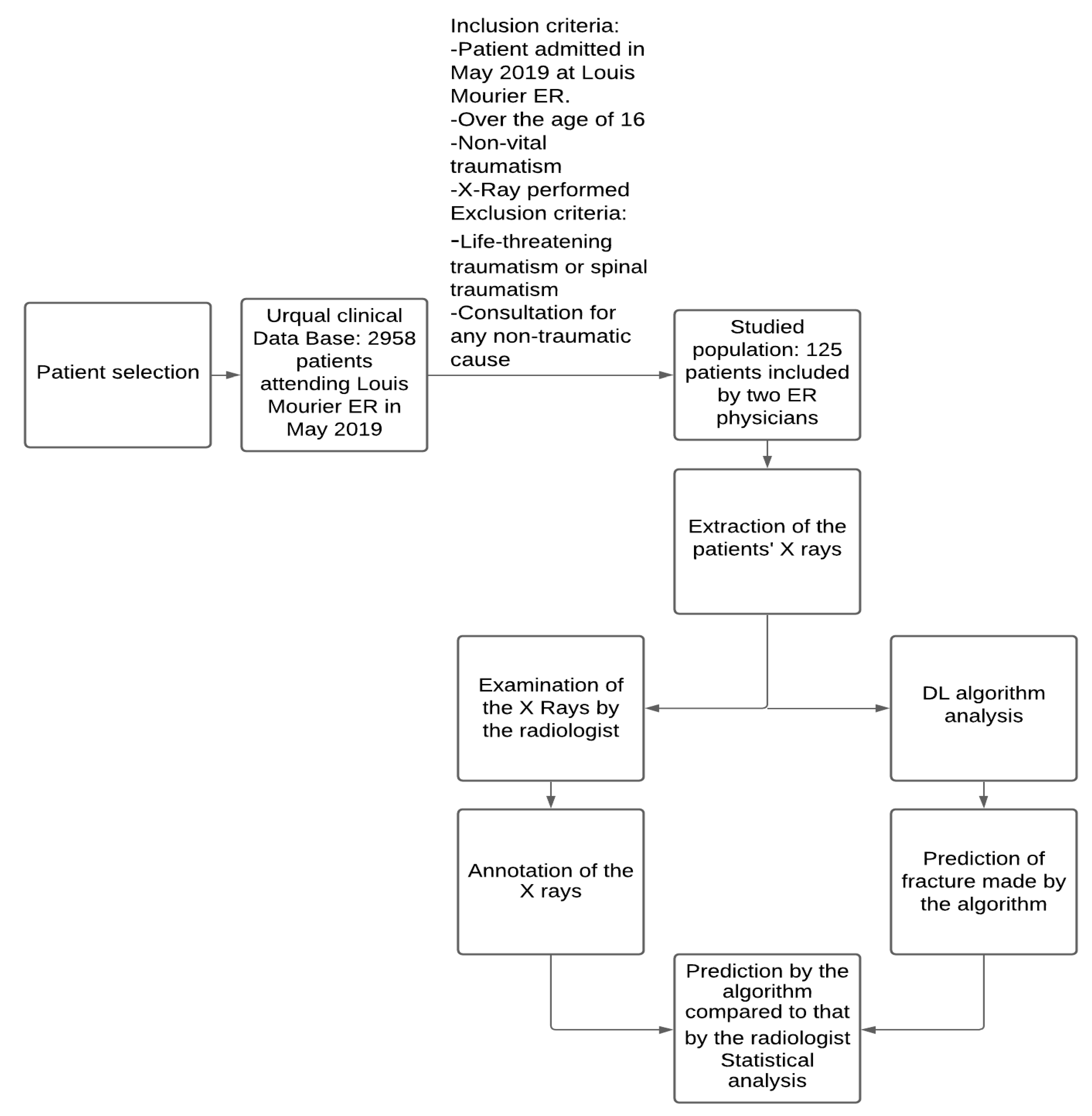

2.2. Dataset

2.3. Statistical Analysis

- Sensitivity measures the proportion of positives that are correctly identified. In the following formula, TP stands for true positive and FN stands for false negative. Patients with a fracture that is correctly identified are considered true positives, whereas patients with a fracture not identified by the algorithm are considered false negatives.

- Negative predictive value measures the proportion of individuals with negative test results who are correctly diagnosed. In the following formula, TN stands for true negative and FN stands for false negative. Patients without a fracture who are correctly classified are considered true negatives, whereas patients with a fracture who are identified by the algorithm as having no fracture are considered false negatives.

Sensitivity, specificity, negative predictive value (NPV), and area under the curve (AUC) were calculated with Python (Python Software Foundation) and scikit-learn (https://scikit-learn.org, June 2007, a free Python library).

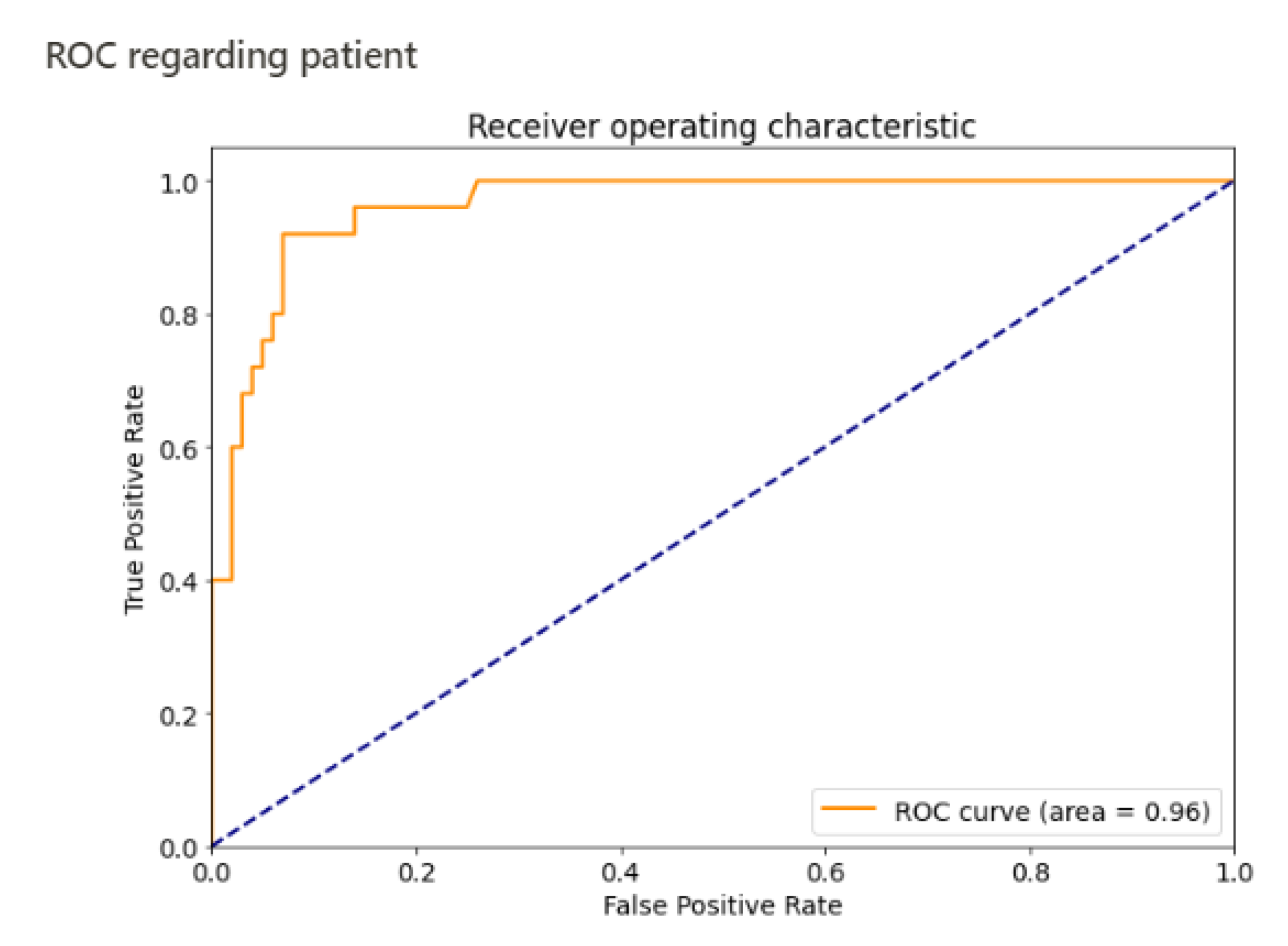

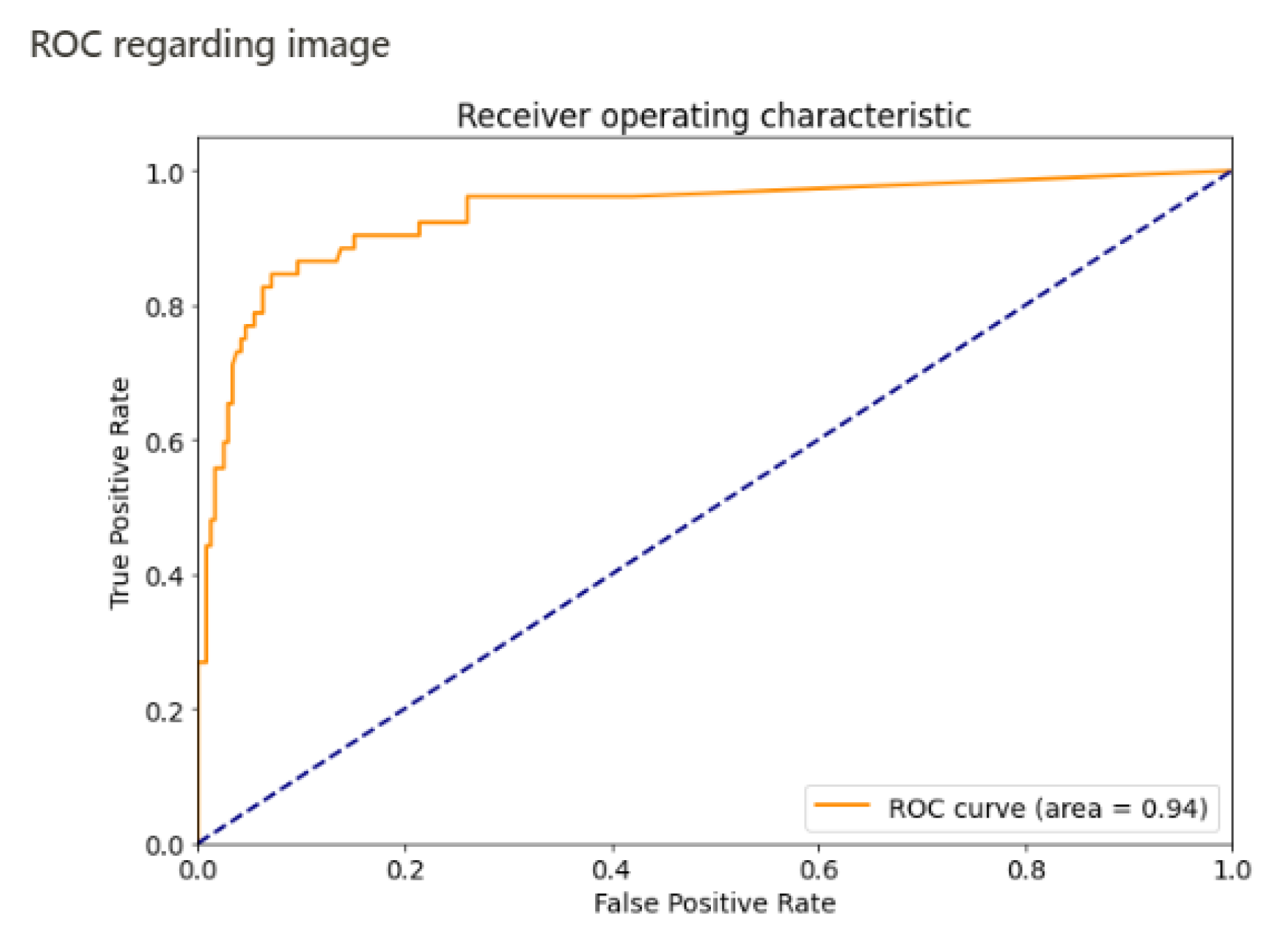
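As a minimal sketch of the definitions above, the metrics can be computed directly from the four confusion-matrix counts. The function names are hypothetical, and the specificity formula, TN / (TN + FP), is the standard definition rather than one spelled out in the text. The counts are those of the per-patient table reported in this article (24 detected fractures, 1 missed fracture, 14 false detections, 86 correctly cleared patients).

```python
def sensitivity(tp, fn):
    """Proportion of patients with a fracture who are correctly identified."""
    return tp / (tp + fn)


def specificity(tn, fp):
    """Proportion of patients without a fracture who are correctly cleared."""
    return tn / (tn + fp)


def negative_predictive_value(tn, fn):
    """Proportion of negative results that are truly fracture-free."""
    return tn / (tn + fn)


# Per-patient counts taken from the article's results table.
tp, fp, fn, tn = 24, 14, 1, 86

sens = sensitivity(tp, fn)                # 24 / 25
spec = specificity(tn, fp)                # 86 / 100
npv = negative_predictive_value(tn, fn)   # 86 / 87

print(f"Sensitivity: {sens:.2%}")
print(f"Specificity: {spec:.2%}")
print(f"NPV:         {npv:.2%}")
```

These counts reproduce the reported per-patient figures: 96% sensitivity, 86% specificity, and 98.85% negative predictive value.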

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Results/Fracture Status | Fracture | No Fracture |
|---|---|---|
| Detection | 24 | 14 |
| No detection | 1 | 86 |
| Total | 25 | 100 |

| Metric | Algorithm Performance for Detecting Patients with a Fracture | Algorithm Performance for Detecting Fractures Per Image |
|---|---|---|
| Sensitivity | 96% (95% CI 0.88–1) | 84% |
| Specificity | 86% (95% CI 0.79–0.93) | 92% |
| AUC | 0.96 | 0.94 |
| Negative predictive value | 98.85% (95% CI 0.97–1) | |
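The text does not say how the 95% confidence intervals were obtained. As a hedged illustration, the sketch below uses a normal-approximation (Wald) interval clipped to the range [0, 1]. This is an assumption, chosen because it happens to reproduce the reported per-patient bounds from the counts in the results table. The function name `wald_interval` is hypothetical.

```python
import math


def wald_interval(successes, total, z=1.96):
    """Normal-approximation 95% interval for a proportion, clipped to [0, 1].

    Assumed method: the article does not state which interval was used.
    """
    p = successes / total
    half_width = z * math.sqrt(p * (1.0 - p) / total)
    return max(0.0, p - half_width), min(1.0, p + half_width)


# Per-patient counts from the results table.
sens_ci = wald_interval(24, 25)    # sensitivity: 24 of 25 fractures detected
spec_ci = wald_interval(86, 100)   # specificity: 86 of 100 non-fractures cleared
npv_ci = wald_interval(86, 87)     # NPV: 86 of 87 negative results correct

for name, (low, high) in [("Sensitivity", sens_ci),
                          ("Specificity", spec_ci),
                          ("NPV", npv_ci)]:
    print(f"{name}: {low:.2f} to {high:.2f}")
```

With small samples or proportions close to 1, as here, the Wald interval can extend past 1 before clipping. A Wilson or exact (Clopper-Pearson) interval is often preferred in that situation and would give somewhat different bounds.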

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Reichert, G.; Bellamine, A.; Fontaine, M.; Naipeanu, B.; Altar, A.; Mejean, E.; Javaud, N.; Siauve, N. How Can a Deep Learning Algorithm Improve Fracture Detection on X-rays in the Emergency Room? J. Imaging 2021, 7, 105. https://doi.org/10.3390/jimaging7070105
