1. Introduction

Intracranial aneurysms (IAs) are a common cerebrovascular disorder that affects 2–5% of the general population, and many affected people exhibit no symptoms until the aneurysm ruptures [1]. Rupture of an unruptured intracranial aneurysm (UIA) causes subarachnoid hemorrhage (SAH), a catastrophic event with a mortality rate ranging from 25% to 50%. According to studies, almost half of the survivors experience severe disability after SAH [2]. Because SAH is prevalent in middle-aged people, it contributes significantly to the socioeconomic burden and frequently results in a loss of years of productive life [3]. Many studies have addressed the growth and rupture of UIAs. These studies aim to estimate rupture probability and guide decision-making, so that high-risk patients receive prompt intervention while low-risk patients avoid needless procedures [4]. Recent studies focus on inflammatory mediators and complement system components found in aneurysm walls and luminal blood, which suggest persistent vascular inflammation [5]. Inflammatory processes weaken the arterial wall of UIAs and increase their vulnerability to rupture by causing endothelial dysfunction, extracellular matrix degradation, and vascular remodeling [6]. These findings point to inflammation as a therapeutic target in the management of UIAs and motivate research into anti-inflammatory strategies to slow progression and reduce the risk of rupture [7].

In parallel, machine learning (ML) has become a potent tool for determining the rupture risk of intracranial aneurysms [8]. While traditional rupture risk assessment techniques depend on predetermined criteria like aneurysm size and location, ML can spot subtle patterns that traditional analysis might miss [9]. Recent research has shown that, by utilizing morphologic and hemodynamic characteristics like size ratio, aspect ratio, and wall shear stress, ML models such as Random Forest, support vector machines, and neural networks achieve high accuracy in distinguishing ruptured from unruptured aneurysms [8,10]. Despite this promise, a key challenge remains in ensuring the interpretability of these models for clinical application. Methods such as SHapley Additive exPlanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME) have been employed to provide insight into feature importance, fostering greater clinician trust in ML-based predictions [11].

Several studies [5,6,7,12,13] examined how inflammatory markers relate to the rupture of intracranial aneurysms. Using ML algorithms, we seek connections between inflammatory markers and the morphological characteristics of aneurysms. The goal is to bridge the gap between the central role of inflammatory mediators and complement system components found in the aneurysm wall and luminal blood, and the way these immune signals interact with aneurysm geometry to influence rupture in patient-level data. We also aim to provide interpretable insights into ML predictions in order to support clinical decision-making and enhance risk stratification for patients with UIAs.

This study aims to determine whether an explainable ML model that jointly leverages multi-site inflammatory biomarkers measured in the aneurysm sac, parent artery, and peripheral vein, and detailed aneurysm morphology, can accurately classify rupture status while providing case-level rationales suitable for clinical interpretation. A secondary aim is to explore how inflammatory activity interacts with structural features to illuminate mechanisms that may underlie aneurysm instability. Our contributions are threefold: (i) we demonstrate an ML model that achieves high performance on a small, clinically realistic cohort; (ii) we provide transparent, case-level explanations linking elevated CRP/complement activity with specific geometric patterns (e.g., narrow neck, irregular shape); and (iii) we position an interpretable ML model as a complementary tool to statistical analysis to support clinical decision-making and enhance risk stratification for patients with UIAs.

3. Results

This project was carried out in a Windows 11 environment on an 11th Gen Intel Core i5 CPU with 8 GB RAM. Data processing and machine learning were performed in Jupyter Notebook (version 7.2.2) from the Anaconda distribution, using the scikit-learn library in Python (version 3.11.9). All of these tools are open source, and together they provided the automated environment used for the analyses reported below.

3.1. Comparison of the Five Models

Due to the small number of cases, the stratified cross-validation (Skf-CV) technique was chosen to evaluate the performance of five algorithms on unseen data during testing. This technique is used in machine learning to make sure that the class distribution across the entire dataset is maintained across each fold of the cross-validation process. When working with small, unbalanced datasets where some classes might be underrepresented, this is especially crucial [

53]. In this study, performance evaluation on the training set was utilized primarily as a diagnostic framework during the model development phase. Monitoring training metrics allowed us to assess the models’ learning capacity and identify potential issues such as underfitting, data leakage, or misaligned labels [

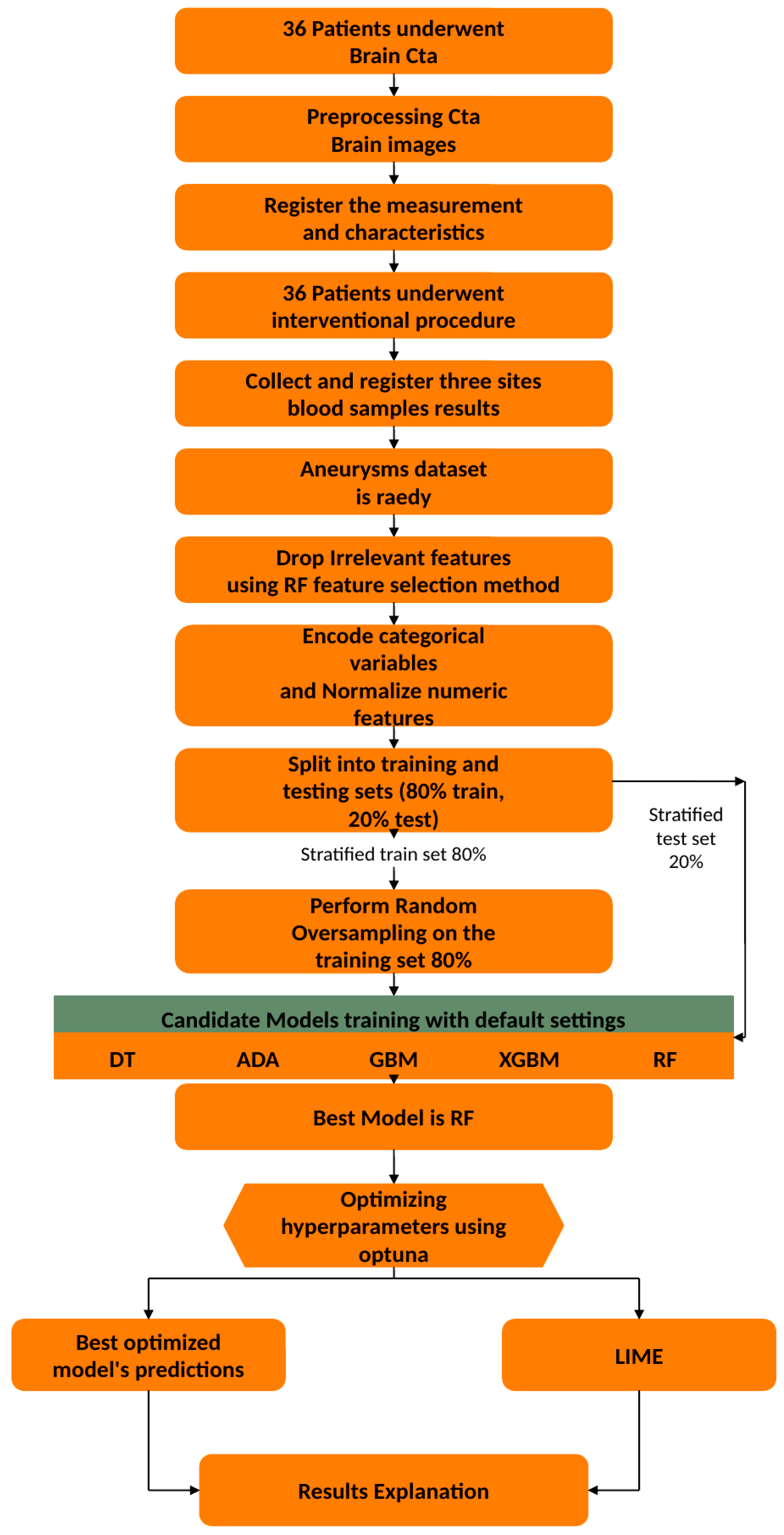

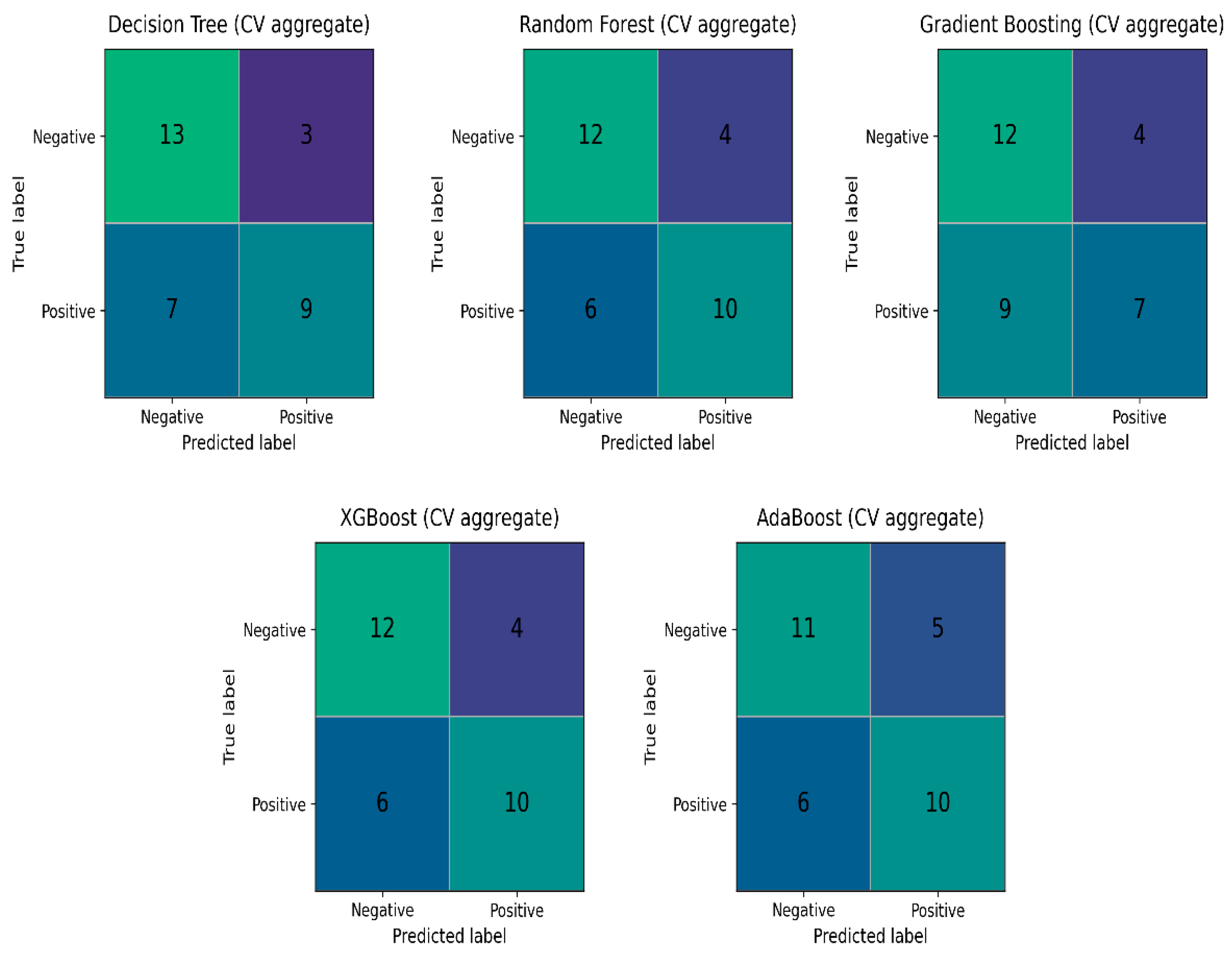

54]. While real-world performance is estimated through the held-out test set and cross-validation, these internal diagnostics were crucial for ensuring the stability of the algorithms, given the technical complexity of the multi-site intravascular features. The above-mentioned five algorithms were tested with their default settings, with random oversampling (ROS) on the training set and five-fold Skf-CV, with the results seen in

Table 3 and their aggregate confusion matrices in

Figure 7.
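As a rough illustration of the stratified splitting described above, the following standard-library sketch deals the cases of each class round-robin into five folds so that every fold keeps approximately the same class mix. It is not the study's code (the study used scikit-learn); the function name `stratified_folds` and the label list are hypothetical, and the 16/12 class counts are taken from the training split reported in this section.

```python
import random
from collections import Counter, defaultdict


def stratified_folds(labels, n_splits=5, seed=0):
    """Assign sample indices to folds so each fold keeps the overall class mix."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(n_splits)]
    offset = 0  # rotate the starting fold per class to keep fold sizes even
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        # Round-robin dealing: per-class counts differ by at most one across folds.
        for position, idx in enumerate(indices):
            folds[(position + offset) % n_splits].append(idx)
        offset += len(indices)
    return folds


# Hypothetical label vector with the training-split class counts (16 vs. 12).
labels = ["Broken"] * 16 + ["Not-Broken"] * 12
folds = stratified_folds(labels)
fold_counts = [Counter(labels[i] for i in fold) for fold in folds]
for k, counts in enumerate(fold_counts):
    print(f"fold {k}: {dict(counts)}")
```

Each fold then serves once as the validation set while the remaining four are used for fitting.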

The totals in the aggregate confusion matrices sum to 32 rather than 35 because these matrices were computed on the resampled training data used during cross-validation, not on the full cohort. After the train/test split, the training set contained 28 cases (16 Broken and 12 Not-Broken). Random oversampling was applied to the training set to mitigate class imbalance by duplicating minority-class observations, bringing the effective training size to 32 with a balanced distribution (16 Broken and 16 Not-Broken). Consequently, the aggregated confusion matrices reflect 32 training instances (including duplicated samples), whereas the remaining cases from the original 35-case dataset belonged to the held-out test set and were evaluated separately without resampling.
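The arithmetic in this paragraph (28 training cases becoming 32 after oversampling) can be reproduced with a minimal sketch of random oversampling. The helper `random_oversample` is hypothetical and uses only the standard library; it illustrates the idea of duplicating minority-class cases at random and is not the implementation used in the study.

```python
import random
from collections import Counter


def random_oversample(labels, seed=0):
    """Return sample indices after duplicating minority-class cases at random."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())  # size of the majority class
    indices = list(range(len(labels)))
    for label, count in counts.items():
        pool = [i for i, lab in enumerate(labels) if lab == label]
        # Draw with replacement until this class reaches the majority size.
        indices.extend(rng.choice(pool) for _ in range(target - count))
    return indices


# Class counts of the training split as reported in the text.
train_labels = ["Broken"] * 16 + ["Not-Broken"] * 12
resampled = random_oversample(train_labels)
resampled_counts = Counter(train_labels[i] for i in resampled)
print(len(resampled), dict(resampled_counts))
```

Only the training data are resampled here; the held-out test cases keep their original class distribution.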

As shown in Table 3 and the aggregate confusion matrices (Figure 7), Random Forest (RF) emerged as the most promising algorithm. The receiver operating characteristic (ROC) curve summarizes a classifier’s performance across decision thresholds by plotting the true-positive rate against the false-positive rate, while the area under the ROC curve (ROC–AUC) quantifies the model’s ability to discriminate between the two classes (ruptured vs. unruptured) [43]. RF achieved a mean ROC–AUC of 0.903 (SD 0.095), indicating strong discrimination and comparatively stable performance across cross-validation folds. Although RF and XGBoost show identical aggregate confusion matrices in Figure 7, Table 3 indicates that RF attains a higher ROC–AUC with lower variability than XGBoost, supporting its selection as the classifier. In addition, RF is an ensemble method that combines predictions from multiple decision trees via majority voting, which generally improves robustness compared with a single classifier.
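To make the ROC–AUC summary concrete, the sketch below computes ROC–AUC as the fraction of (ruptured, unruptured) pairs in which the ruptured case receives the higher score, and then summarizes per-fold values as a mean and standard deviation. All scores and fold values are made-up illustrations, not data from the study, and the function name `roc_auc` is hypothetical.

```python
import statistics


def roc_auc(y_true, scores):
    """ROC-AUC as the probability that a positive outranks a negative (ties count half)."""
    positives = [s for y, s in zip(y_true, scores) if y == 1]
    negatives = [s for y, s in zip(y_true, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes are needed to compute ROC-AUC")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Illustrative labels (1 = ruptured) and predicted probabilities.
y_true = [1, 1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.6, 0.3, 0.2]
auc = roc_auc(y_true, scores)  # 11 of 12 pairs are ranked correctly

# Hypothetical per-fold AUCs, summarized as mean and sample SD.
fold_aucs = [1.0, 0.9, 0.8, 1.0, 0.8]
mean_auc = statistics.mean(fold_aucs)
sd_auc = statistics.stdev(fold_aucs)
print(round(auc, 3), round(mean_auc, 3), round(sd_auc, 3))
```

Reporting the standard deviation alongside the mean is what allows two models with identical aggregate confusion matrices to be distinguished by their fold-to-fold stability.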

3.2. RF’s Results

In this section, we describe the steps taken to improve our model and the corresponding results. As a baseline, Random Forest (RF) was trained and tested with its default settings and without oversampling. The results are shown in Table 4.

The next step applied two additional techniques: random oversampling and hyperparameter tuning with an Optuna study. Random oversampling balanced the classes, since unruptured aneurysms were the minority class (15 unruptured vs. 20 ruptured in the full dataset). The results of RF after random oversampling and Optuna tuning are more balanced and are shown in Table 5.

For further validation of our RF model, we used out-of-fold (OOF) validation, in which each case is predicted by a model that did not see it during training. The OOF accuracy of our model is 0.8438 (84.38%), which suggests that the model performs consistently during cross-validation. A significant drop in accuracy on the test set would indicate overfitting or issues with the data. No such drop was observed: the final test accuracy is 86% (Table 5).
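The out-of-fold procedure can be sketched as follows: every case is predicted by a model fitted on the other folds, and accuracy is computed over all of these predictions. The threshold classifier, the data, and the fold layout are toy assumptions chosen to keep the example self-contained; they stand in for the tuned RF and are not the study's model or data.

```python
def out_of_fold_predictions(features, labels, folds, fit, predict):
    """Predict every sample with a model that never saw it during fitting."""
    oof = [None] * len(labels)
    for held_out in folds:
        held = set(held_out)
        train_idx = [i for i in range(len(labels)) if i not in held]
        model = fit([features[i] for i in train_idx], [labels[i] for i in train_idx])
        for i in held_out:
            oof[i] = predict(model, features[i])
    return oof


# Toy stand-in model (an assumption): threshold halfway between the class means.
def fit_threshold(xs, ys):
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2


def predict_threshold(threshold, x):
    return 1 if x > threshold else 0


# Made-up single-feature data; indices 3 and 7 are deliberately atypical.
features = [0.1, 0.2, 0.3, 0.9, 0.7, 0.8, 0.9, 0.2]
labels = [0, 0, 0, 0, 1, 1, 1, 1]
folds = [[0, 4], [1, 5], [2, 6], [3, 7]]

oof = out_of_fold_predictions(features, labels, folds, fit_threshold, predict_threshold)
oof_accuracy = sum(p == y for p, y in zip(oof, labels)) / len(labels)
print(oof, oof_accuracy)
```

If the OOF predictions cover the 32 resampled training instances, the reported value of 0.8438 would correspond to 27 correct predictions (27/32 = 0.84375); this count is inferred from the arithmetic and is not stated in the text.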

Other metrics used to evaluate the RF model, such as the confusion matrix, the area under the ROC curve (ROC–AUC), and the precision–recall (PR) curve, gave a clear picture of our model’s performance. Precision and recall can be calculated from the confusion matrix, which is shown in Figure 8.

The receiver operating characteristic (ROC) curve visualizes model performance at various threshold values, and the area under the curve (AUC) measures the ability of a classifier to distinguish between classes. The ROC–AUC scores were 0.97 (training), 0.98 (validation), and 0.92 (test). A training ROC–AUC of 0.97 suggests that the model has a strong ability to distinguish between positive and negative classes. A validation ROC–AUC (0.98) that is almost identical to the training value suggests that the model does not overfit the training data. The test ROC–AUC is also high at 0.92, indicating that the model retains a strong ability to distinguish between classes on unseen data (Figure 9).

The precision–recall (PR) curve evaluates a classifier across all decision thresholds, showing the trade-off between recall (how many true positives are found) and precision (how many predicted positives are actually correct). A larger area under this curve means the model maintains both high recall and high precision over many thresholds. High recall comes from avoiding false negatives, and high precision comes from limiting false positives. In Figure 10, we present RF’s precision–recall curve.

In imbalanced problems, PR and especially average precision (AP) are more informative than accuracy. Here, the AP of 0.97 indicates that the model separates ruptured from unruptured aneurysms extremely well across thresholds.
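Average precision can be read as the mean of the precision values obtained each time a further ruptured case is retrieved when cases are ranked by predicted probability. The sketch below implements that definition under the simplifying assumption that no two cases share the same score; the labels and scores are illustrative only, and `average_precision` is a hypothetical helper rather than the scikit-learn function used in practice.

```python
def average_precision(y_true, scores):
    """Average precision: mean precision at the rank of each positive (no tied scores)."""
    total_pos = sum(y_true)
    if total_pos == 0:
        raise ValueError("at least one positive case is required")
    # Rank cases from the highest to the lowest predicted probability.
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    true_positives = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            true_positives += 1
            # Precision at this cut-off; recall increases by 1/total_pos here.
            precision_sum += true_positives / rank
    return precision_sum / total_pos


# Illustrative labels (1 = ruptured) and predicted probabilities.
y_true = [1, 1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.6, 0.3, 0.2]
ap = average_precision(y_true, scores)
print(round(ap, 3))
```

In this toy ranking, one unruptured case is scored above the last ruptured case, which lowers the precision at full recall to 4/5 and the AP to 0.95.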

The classification report is presented in Table 6.

On the test set, sensitivity (TPR) and weighted average sensitivity are 0.75 and 0.86, respectively. Specificity (TNR) and weighted average specificity are 1.00 and 0.89, respectively. This means the model correctly identified 75% of truly ruptured cases (3/4) but missed 25% (1 false negative), while it perfectly ruled out all non-ruptured cases (0 false positives; 3/3 correct).
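The figures in this paragraph follow directly from the test-set counts (3 true positives, 1 false negative, 3 true negatives, 0 false positives). The sketch below recomputes them; the support-weighted averaging of the per-class values is our assumption about how the weighted averages were obtained, chosen because it reproduces the reported 0.86 and 0.89.

```python
# Test-set counts as stated in the text (positive class = Broken).
tp, fn, tn, fp = 3, 1, 3, 0

n_broken = tp + fn      # 4 ruptured cases
n_not_broken = tn + fp  # 3 unruptured cases
total = n_broken + n_not_broken

sensitivity = tp / (tp + fn)  # recall for Broken
specificity = tn / (tn + fp)  # recall for Not-Broken
accuracy = (tp + tn) / total

# Per-class recall is `sensitivity` for Broken and `specificity` for Not-Broken.
weighted_sensitivity = (n_broken * sensitivity + n_not_broken * specificity) / total

# Per-class specificity swaps roles: when Not-Broken is treated as the positive
# class, its specificity equals the sensitivity for Broken.
weighted_specificity = (n_broken * specificity + n_not_broken * sensitivity) / total

print(f"sensitivity={sensitivity:.2f} specificity={specificity:.2f}")
print(f"accuracy={accuracy:.2f}")
print(f"weighted sensitivity={weighted_sensitivity:.2f}")
print(f"weighted specificity={weighted_specificity:.2f}")
```

With only seven test cases, a single reclassified case shifts sensitivity by 25 percentage points, so these values should be read as descriptive rather than precise estimates.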

3.3. Global Interpretation of RF’s Results

The feature importance of RF (optimized model) represents how much each feature contributes to the model’s predictions. Higher importance values indicate a stronger influence on decision-making (Figure 11).

The most important features in our model for the correct discrimination between classes are C.C3 (carotid C3), A.CRP (aneurysmal CRP), C.CRP (parent artery CRP), IR.SH (shape of the aneurysm), C.C4 (parent artery C4), and NECK (aneurysm’s neck width). These features are likely to have the strongest relationship with the target variable (Broken/Not-Broken).

The middle-ranking features include V.IgM, A.C4, C.IgG (various immunoglobulins and complement factors), height (height of the aneurysms), V.C3, V.CRP (vein blood C3 and CRP), C.IgM (carotid blood immunoglobulin M), A.IgA, and V.IgA (aneurysmal and vein blood immunoglobulin A, antibody response). These features are likely to have a moderate correlation or relationship with the target variable.

The lower-importance features, such as AR, A.C3, V.C4, A.IgM, VERTI, MD, SEX (male, female), A.IgG, and C.IgA (aneurysmal and parent-artery immunoglobulin measurements), have minimal influence on prediction.

Summarizing, the results suggest that a combination of immune response markers (C3, CRP, C4) and aneurysm morphology (neck, irregular shape) is the primary driver of aneurysm risk of rupture in our dataset.

3.4. Local Interpretation of RF’s Results

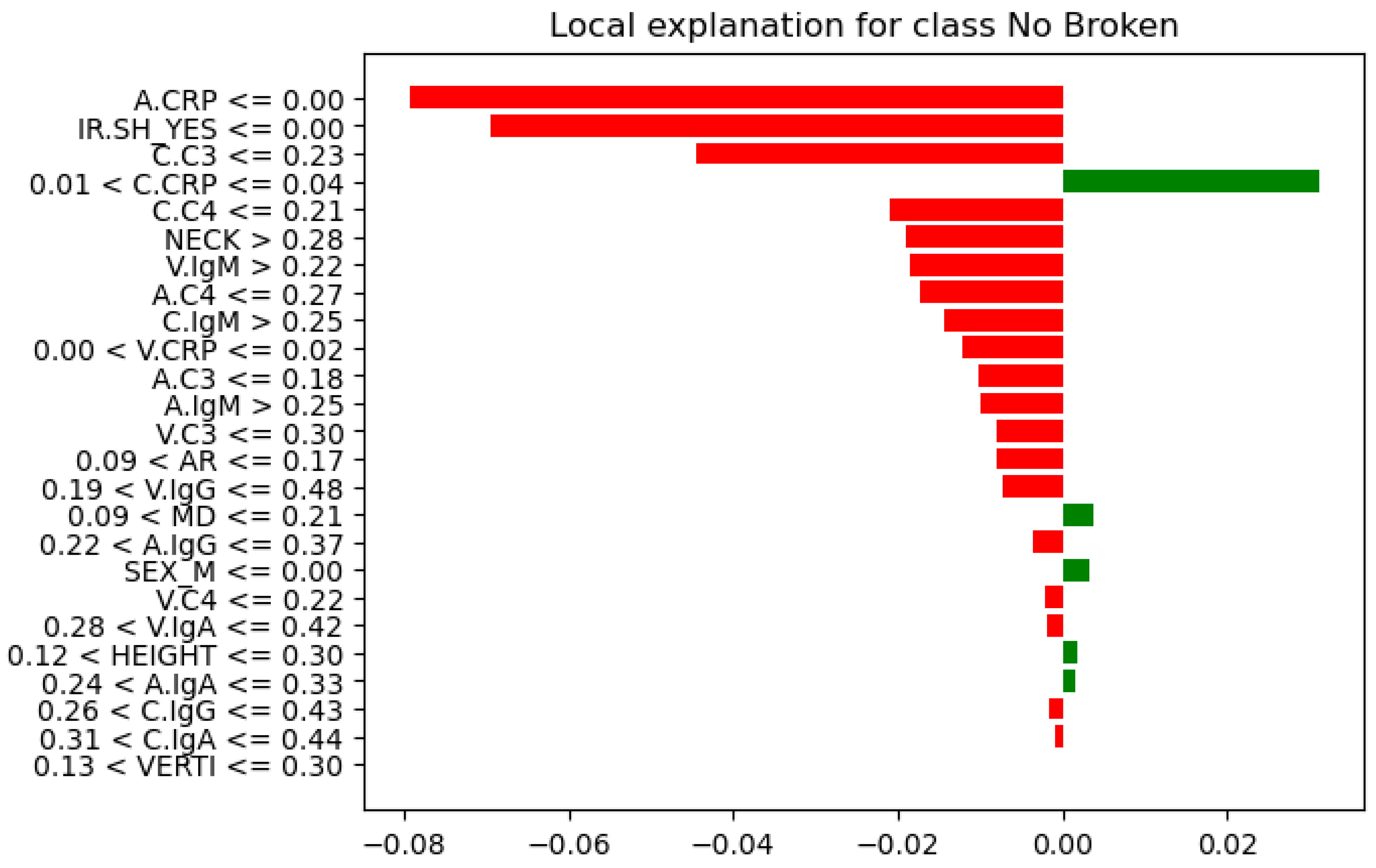

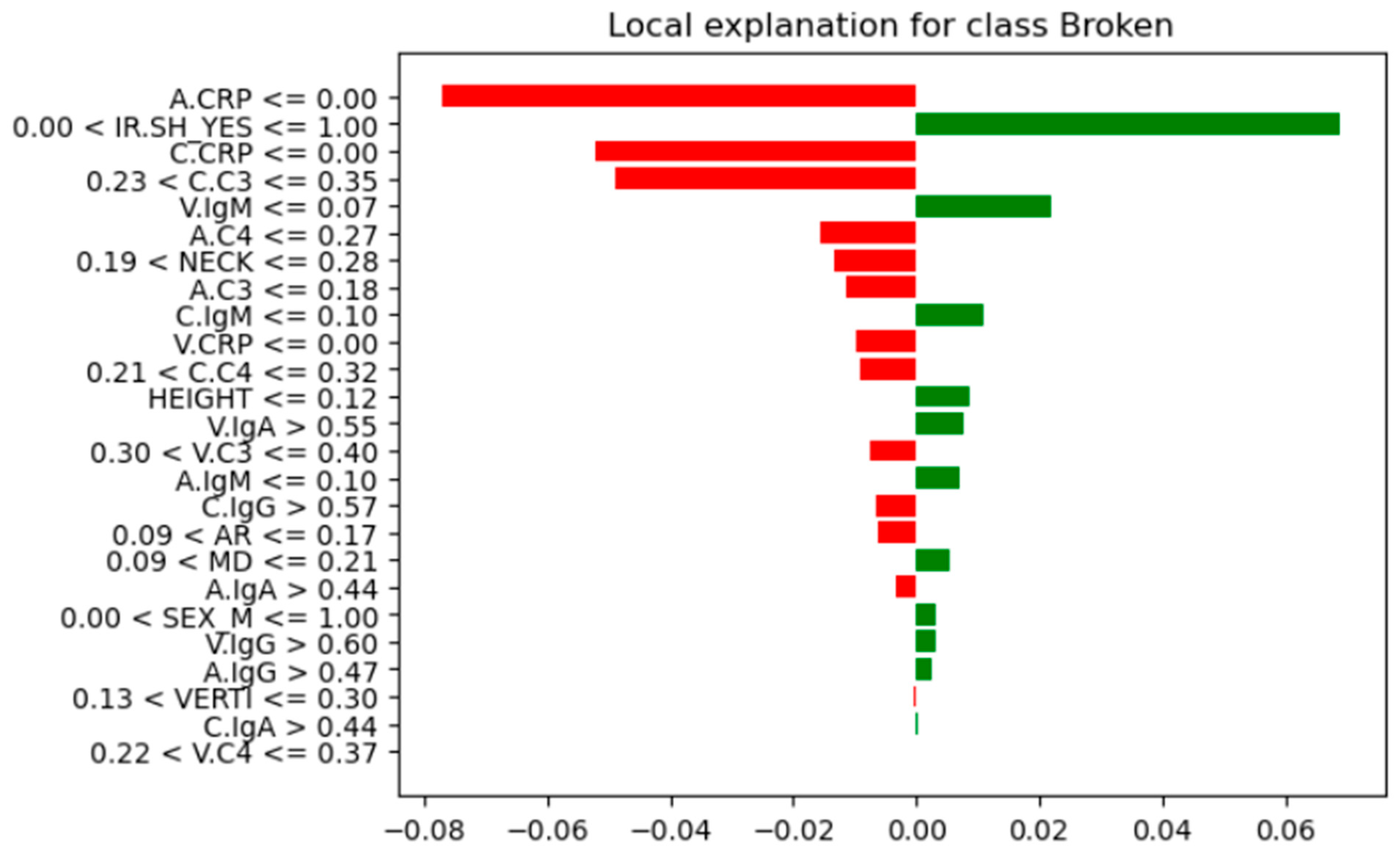

Local interpretation requires a tool that explains the model’s decision boundary in a human-understandable way. For this purpose we used LIME (Local Interpretable Model-agnostic Explanations), an explanation technique that explains the prediction of any classifier in an interpretable and faithful manner by learning an interpretable model locally around the prediction. Instead of providing a global understanding of the model over the entire dataset, LIME focuses on explaining the model’s prediction for individual instances.
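The idea of learning an interpretable model locally around a prediction can be illustrated with a simplified, standard-library sketch: perturb one instance, query the black-box model, weight the perturbed samples by proximity, and fit a weighted linear model whose slopes act as local feature effects. This is not the `lime` package and omits its discretization and regularization steps; the black-box function, feature names, and parameter values below are all hypothetical.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def local_linear_explanation(predict_proba, instance, n_samples=500,
                             scale=0.1, kernel_width=0.25, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = random.Random(seed)
    d = len(instance)
    # Accumulate the weighted normal equations (Z^T W Z) beta = Z^T W y.
    ztz = [[0.0] * (d + 1) for _ in range(d + 1)]
    zty = [0.0] * (d + 1)
    for _ in range(n_samples):
        sample = [v + rng.gauss(0.0, scale) for v in instance]
        dist2 = sum((s - v) ** 2 for s, v in zip(sample, instance))
        weight = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        row = [1.0] + [s - v for s, v in zip(sample, instance)]  # centred offsets
        target = predict_proba(sample)
        for i in range(d + 1):
            zty[i] += weight * row[i] * target
            for j in range(d + 1):
                ztz[i][j] += weight * row[i] * row[j]
    coefs = solve_linear_system(ztz, zty)
    return coefs[0], coefs[1:]  # local intercept, per-feature slopes


# Hypothetical black box: probability rises with scaled CRP and falls with neck width.
def toy_rupture_probability(features):
    crp, neck = features
    return 1.0 / (1.0 + math.exp(-(6.0 * crp - 4.0 * neck)))


intercept, slopes = local_linear_explanation(toy_rupture_probability, [0.3, 0.2])
print(round(intercept, 3), [round(s, 3) for s in slopes])
```

A positive slope means that increasing the feature near this instance pushes the surrogate toward the "Broken" class, and a negative slope pushes it toward "Not-Broken".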

Table 7 presents the final classification (true/false for ‘broken’) from the Random Forest (RF) model, along with the probability score that informed that decision. A probability threshold of 0.5 was applied, meaning instances with a probability of 0.5 or higher are classified as “Broken” (True), while those below are classified as “Not-Broken” (False).

Further analysis of the feature values that contributed most to each classification was needed to identify differences, similarities, and patterns between the Not-Broken and Broken cases, and the LIME explainer was used for this purpose. From these seven instances (Table 7), we present the LIME explanations for three: the instance with index 31, which had the highest probability of being classified as Not-Broken; the instance with index 3, which had the highest probability of being classified as Broken; and the misclassified instance with index 11 (Figure 12, Figure 13, and Figure 14, respectively).

For these seven test-set instances, the LIME explainer identified the following patterns. Across the cases that share common measurements, aneurysmal and carotid CRP (A.CRP, C.CRP) at or near zero consistently aligned with the Not-Broken class, whereas non-zero CRP values pushed toward Broken. C3 and C4 showed a similar pattern: lower levels supported Not-Broken, while higher levels, most prominently venous C3/C4 (V.C3, V.C4) and carotid C3, were frequently associated with Broken. Immunoglobulins were less consistent overall; venous IgA/IgG tended to be higher in Broken, while aneurysmal IgA/IgM could be lower or moderate in Broken, making their direction weaker than that of CRP and complement. AR occasionally appeared high in Not-Broken and was not decisive. HEIGHT tended to be higher in Not-Broken, but its effect was modest. Smaller NECK and smaller VERTI values were characteristic of Broken. SEX (M/F) appeared in both classes with no clear predictive pattern. IR.SH (irregular shape = True) was frequently accompanied by Broken, but it was supportive rather than sufficient on its own. A synopsis of these class differences appears in Table 8.

To contextualize the case-level LIME explanations, we provide a descriptive visualization of the feature distributions for the held-out test instances (Figure 15), highlighting misclassified cases to facilitate interpretation; no statistical inference is drawn due to the small test-set size. In the box plots of features by rupture status (Figure 15), CRP levels (A.CRP, C.CRP, V.CRP) were clearly higher in Broken and remained low in Not-Broken, indicating a strong role in classification. Many theories have been postulated to explain why inflammatory markers such as CRP play a crucial role in aneurysm formation, growth, and rupture [6,7]. Likewise, there was a distinct separation in C3/C4 across sites: lower in Not-Broken and higher in Broken, consistent with immune activation. IgM showed a wider distribution and was not uniformly elevated, whereas IgA and IgG were often higher in Broken. The role of complement activation in aneurysm pathology has been the subject of numerous studies, which provide evidence that immune mechanisms play a major role in rupture risk [5,6]. Wider necks and larger aneurysm dimensions (VERTI, MD, HEIGHT) were more prevalent in Not-Broken, which is consistent with geometric features previously associated with a lower rupture risk [52,53,54]. SEX did not exhibit a distinct classification pattern. The misclassified instance had low CRP, placing it closer to the Not-Broken profile and contributing to the error. Its C3/C4 values were low to moderate, further blurring the boundary, and its IgA/IgG values were extremely high, suggesting the model underweighted these signals relative to CRP/complement.

The correlation matrix (Figure 16), a descriptive statistical tool that describes the strength and direction of the linear relationship between pairs of variables, agrees with both the RF feature importance and the LIME explainer regarding the inflammatory core formed by parent-artery C3 (C.C3) and CRP in the aneurysm sac and parent artery (A.CRP, C.CRP). In the RF feature importance plot (Figure 11), these three markers appear among the strongest overall predictors of rupture. The correlation matrix shows that BROKEN status is clearly positively related to V.C3, C.C3, and A.C3, as well as to CRP at all three sampling sites (correlation coefficients of about 0.58–0.72), indicating that higher complement and CRP levels are associated with ruptured aneurysms in this small test set. Consistently, the LIME explainer, which describes the model’s behavior for each of the seven test cases, frequently highlights rules such as C.C3 > 0.35–0.55 and C.CRP/A.CRP > 0.04 as conditions that push the prediction toward the “Broken” class, whereas values below these ranges support a “Not-Broken” prediction.

The correlation matrix also agrees with the RF feature importance and LIME explainer results for the morphological instability captured by irregular shape (IR.SH) and neck size (NECK). In the RF feature importance plot (Figure 11), IR.SH is the most important non-biochemical feature, and NECK is the next most important geometric feature, just after C.C4. The correlation matrix shows that IR.SH has a moderate positive correlation with BROKEN_YES (~0.47), meaning that aneurysms labeled as irregular are more likely to be broken. NECK, on the other hand, has a strong negative correlation with BROKEN_YES (≈−0.72), indicating that aneurysms with a smaller neck are more often broken in these seven test cases. The LIME plots tell the same story: when IR.SH_YES = 1 it appears high among the influential features and supports the “Broken” prediction, while small neck values (for example, 0.09 < NECK ≤ 0.19 or NECK ≤ 0.28) push the model towards “Broken”, and larger necks push it towards “Not-Broken”.

Furthermore, the correlation matrix agrees with the RF feature importance and LIME explainer results for the role of IgM and the additional contribution of C4 and IgG. In the RF feature importance plot (Figure 11), venous IgM (V.IgM) and, to a lesser degree, carotid IgM (C.IgM), are among the most important features, along with C4 in the parent artery and aneurysm (C.C4, A.C4) and carotid IgG (C.IgG). The correlation matrix shows that BROKEN_YES is strongly negatively correlated with V.IgM and C.IgM (around −0.7), but positively correlated with C4 and C.IgG. This means that ruptured aneurysms in this test set tend to have lower IgM levels in the blood, but higher levels of complement C4 and IgG. LIME supports the same pattern: in cases predicted as Broken, very low IgM values (for example V.IgM ≤ 0.07–0.10 or C.IgM ≤ 0.10–0.17) are among the factors pushing the prediction toward “Broken”, whereas higher IgM values pull several cases towards “Not-Broken”; at the same time, higher C4 and C.IgG values contribute in favor of the “Broken” class.

4. Discussion

In a single-center cohort of 35 saccular intracranial aneurysms (IAs) in 35 patients (≈57% ruptured), we developed an interpretable Random Forest (RF) classifier. The classifier integrates multi-site inflammatory biomarkers (CRP, C3, C4, immunoglobulins) with detailed morphometrics (e.g., NECK width, irregular shape, aspect ratio). Our aim was to classify rupture status using these additional features and to analyze their importance using global and per-instance (local) explanations from the RF classifier. The addition of these features appears to extend beyond the findings of the statistical studies [5,54,55], providing novel insights into the relationship between aneurysm structure and biochemical markers. Model development addressed class imbalance with random oversampling and optimized hyperparameters with Optuna. Across stratified cross-validation (CV), the tuned RF achieved high discrimination (CV ROC–AUC ≈ 0.98 with accuracy ≈ 0.85). Generalization to the held-out test set remained strong (test ROC–AUC ≈ 0.92; accuracy ≈ 0.86; AP = 0.97). Out-of-fold (OOF) accuracy was ~0.84. The test confusion matrix showed one false negative (sensitivity 0.75; specificity 1.00).

Global feature importance, LIME explainer results on the seven test-set instances, and the correlation matrix converged on a consistent ranking of predictors. The dominant contributors were inflammatory and complement markers (particularly parent-artery C3 (C.C3) and CRP in the aneurysm sac and parent artery (A.CRP, C.CRP), followed by C4), alongside morphological instability (irregular shape, IR.SH_YES) and neck diameter (NECK). BROKEN status showed strong positive correlations with V/C/A-CRP and V/C/A-C3, and negative correlations with NECK, while LIME repeatedly identified high C.C3, elevated CRP, irregular shape, and small neck width as key conditions pushing predictions toward the “Broken” class. Many studies have highlighted the importance of these features and reported higher rates of rupture in aneurysms associated with inflammatory markers. CRP, C3, and C4 levels may be indicative of systemic inflammation, which has been associated with vascular remodeling and thus with aneurysm instability. Our results are in agreement with those of various studies [5,6,7].

Our study highlighted the role of circulating IgM relative to C3/C4 and IgG/IgA. In the RF feature importance plot, venous IgM (V.IgM) and, to a lesser extent, carotid IgM (C.IgM), ranked highly among biological predictors, together with C.C4/A.C4 and C.IgG. LIME corroborated this pattern: low IgM values (e.g., V.IgM ≤ 0.07–0.10, C.IgM ≤ 0.10–0.17) frequently contributed to “Broken” predictions, whereas higher IgM levels shifted several instances toward “Not-Broken”, while elevated C4 and IgG supported classification as “Broken”. By contrast, IgA appeared as a modest, context-dependent contributor rather than a primary discriminator. Boulieris et al. also observed that venous, parent-artery, and intra-aneurysmal IgM concentrations are lower in ruptured than in unruptured aneurysms, interpreting this primarily as increased wall deposition of IgM in the former [5]. Other structural features, such as HEIGHT and VERTI, had lower global importance and were generally larger in non-ruptured cases, and AR, despite its established clinical relevance, was overshadowed by inflammatory variables in this dataset. SEX had minimal impact on classification, with similar modeled risk in men and women. The misclassified instance had a low CRP level, which made it more consistent with the Not-Broken class, and its moderate C3/C4 values added to the classification ambiguity. That low CRP/C3/C4 values led to a wrong classification is consistent with these markers being the features the model relies on most. The same instance had exceptionally high IgA and IgG levels, suggesting that the model undervalued their predictive power.

Another interesting finding is that the correlation matrix, RF feature importance plot, and LIME explainer all assign a more prominent discriminative role to aneurysm neck size than to overall size or aspect ratio within an inflammatory context. Classical morphological studies and subsequent reviews [1,56,57,58] emphasize dome size and aspect ratio as major predictors of rupture risk, with neck width receiving comparatively little independent attention. In our study, neck diameter exhibits one of the strongest correlations with aneurysm BROKEN status. Neck width was inversely related to inflammatory activity: aneurysms with smaller necks had higher A.CRP and V/C/A.C3 levels, which may suggest that tighter aneurysm necks are associated with greater inflammatory activity and thus instability. Neck width is also the top-ranked geometric feature in the RF feature importance plot, immediately following the main inflammatory markers. Irregular shape emerges as the most informative morphological predictor once inflammation is accounted for. It is moderately positively correlated with BROKEN and is the most important non-biochemical feature globally. LIME shows that IR.SH regularly appears among the top local drivers of “Broken” predictions, and that specific small-neck ranges (e.g., 0.09 < NECK ≤ 0.19 or NECK ≤ 0.28) further increase rupture probability.

Beyond reproducing known associations between inflammation, complement activation, and rupture status, this study introduces an integrated, interpretable framework that jointly models multi-site inflammatory profiling and aneurysm morphology and explains each prediction at the patient level. Existing clinical and experimental literature typically addresses morphological risk factors (size, aspect ratio, irregular shape) separately from biochemical markers such as CRP, complement components, and immunoglobulins. Our findings instead delineate a composite high-risk phenotype—irregular shape, narrow neck, elevated C3 and CRP across vascular compartments, and reduced circulating IgM—that consistently characterizes aneurysms classified as ruptured in our cohort. This coupled geometry–inflammation profile is visible simultaneously in global RF feature importance, LIME, and empirical correlations, and is not explicitly described as a unified construct in prior work.

Relative to existing machine-learning studies that have focused mainly on morphological, radiomic, or hemodynamic descriptors, our model achieves strong discrimination while adding a rich biological layer. Most prior ML work on intracranial aneurysms has emphasized shape indices, wall shear stress, or imaging-derived radiomics, yielding powerful but predominantly geometry-driven models [8,9,10,11,14,15,16,17,18]. In contrast, our pipeline incorporates multi-site inflammatory biomarkers and complement activation alongside morphology, producing explanations that are explicitly bio-mechanistic (inflammation plus geometry) rather than purely geometric. Furthermore, by using a transparent ensemble (RF) and pairing global importance with LIME, we adhere to recommendations for interpretable medical ML, avoiding fully black-box architectures. Finally, the use of random oversampling, Optuna-based hyperparameter tuning, and performance metrics suited to class imbalance (ROC–AUC, AP), combined with out-of-fold estimates and a held-out test set, aligns with best practices for small, imbalanced clinical datasets and facilitates comparison with both statistical and ML literature.

Taken together, our results indicate that morphology is necessary but not sufficient for reliable rupture discrimination. Morphological features—particularly NECK and IR.SH—contribute consistently and meaningfully to the model, yet the largest shifts in rupture probability occur when adverse geometry co-occurs with elevated CRP/C3/C4. In many LIME profiles, irregular shape or narrow neck in the context of low inflammatory and complement activity produced only modest increases in predicted rupture risk, whereas the same geometry combined with high CRP and C3/C4 yielded substantial probability changes. This pattern provides a plausible explanation for why morphology-only models, even when sophisticated, can misclassify cases where the inflammatory state diverges from shape-based expectations. Biologically, the findings support a biomechano-inflammatory view of aneurysm instability: geometry shapes local hemodynamics, which modulate endothelial function and immune activation, and rupture propensity reflects the joint product of both processes rather than geometry alone. Consequently, integrated models that combine morphology with inflammatory and complement markers appear more appropriate for clinical decision support and for guiding future prospective validation efforts than morphology-only approaches.

Limitations: While our findings offer valuable insights, this study has limitations that should be noted. First, our sample size is limited to 35 patients. This is a direct reflection of the technical challenges and serious risks—such as arterial dissection or aneurysm rupture—involved in sampling blood directly from the carotid artery and the aneurysm sac. Given these constraints, our work is best characterized as a pilot or proof-of-concept study rather than a definitive large-scale analysis.

To make the most of the available data, we used robust validation methods like stratified five-fold cross-validation and oversampling. However, we recognize that these results might not fully represent broader or more diverse populations. Similarly, although we included sex as a variable in our models and found no clear patterns, the study is not large enough (underpowered) to rule out subtle sex-specific biological differences.

Finally, since this was a single-center observational study, we can only report associations rather than clear cause-and-effect. While we used tools like LIME to make our ML models more transparent, the models are still inherently tied to this specific dataset. Future research with larger, multi-center groups will be vital to confirm these early results.