1. Introduction

In horses, the metacarpophalangeal and metatarsophalangeal joints are referred to as the ‘fetlock’. Fetlock fractures are a leading cause of catastrophic musculoskeletal injury in Thoroughbred racehorses, posing serious risks to animal welfare and racing careers and increasing veterinary costs. These injuries vary in severity: simple condylar fractures are often surgically repairable with good outcomes, whereas more complex or comminuted fractures frequently necessitate euthanasia because of the biomechanical complexity of the fetlock joint and the difficulty of stabilizing it under high loading conditions [1,2]. Current diagnostic methods, which rely primarily on radiographic assessment, are frequently limited by image quality, projection variability, and the difficulty of detecting subtle lesions, especially in high-performance animals under stress [3]. Although advanced imaging such as computed tomography (CT) and magnetic resonance imaging (MRI) is increasingly used in specialist settings, these modalities remain less accessible.

Over the last decade, advances in artificial intelligence (AI) and deep learning (DL) have transformed diagnostic imaging in human medicine. Numerous studies have shown that convolutional neural networks (CNNs) and transformer-based models can perform at the human level in fracture detection, imaging modality classification, and anatomical localization [4,5]. Models trained on large-scale radiographic datasets, such as MURA and CheXpert, have demonstrated strong pattern recognition for a wide range of musculoskeletal and thoracic abnormalities [6,7].

Despite these advances, deep learning applications in the veterinary field are still in their early stages. In contrast to human medicine, where large-scale, publicly available radiographic datasets [8] have catalyzed AI development and benchmarking, there are currently no equivalent open datasets or research competitions (e.g., FastMRI and AIROGS Challenge) in veterinary imaging. Equine radiographs (XR), and veterinary imaging data more generally, remain largely absent from public repositories due to privacy concerns, a lack of standardized imaging protocols, and institutional barriers to data sharing. This scarcity of curated datasets has significantly limited progress in applying deep learning to veterinary diagnostics, despite its demonstrated success in human healthcare. Prior veterinary AI research has focused on areas such as lameness detection with wearable/motion sensors, the automated analysis of respiratory sounds, and disease prediction from routine laboratory data [9,10,11]. Furthermore, the distinct anatomical differences between species make it difficult to transfer human-trained models directly into veterinary settings without significant domain adaptation [12].

Transfer learning, which involves repurposing and fine-tuning a model trained on one task or domain for use on another, appears to be a promising approach to closing this data gap. For example, models pretrained on large human imaging cohorts have been successfully adapted to non-human primates with limited species-specific data, improving performance and generalization [13]. However, few studies have investigated this in clinical settings like equine fracture detection.

This study presents a proof-of-concept cross-species deep learning framework for detecting fractures in equine athletes, with initial training based on human radiographic data and performance refined using a curated dataset of equine fetlock radiographs. The architecture combines Vision Transformers (ViTs) and ResNet backbones, as well as a custom loss function, to improve feature learning across anatomical domains. The dataset covers a wide range of clinical conditions, including fracture, post-treatment, and non-fracture, allowing for robust generalization across diverse equine cases.

Beyond equine applications, the model was further validated on feline datasets, highlighting its potential as a foundation for multi-species veterinary diagnostics. The results show strong localization capabilities and diagnostic accuracy, indicating that cross-species transfer learning is viable. This work lays the groundwork for future AI-assisted diagnostics in veterinary medicine, as well as translational models that could inform human clinical tools.

2. Materials and Methods

To develop a robust and generalizable model for cross-species fracture detection, we built a deep learning pipeline that included curated datasets, tailored preprocessing steps, and a hybrid model architecture (Figure 1). This section describes the steps taken for data collection, annotation, model development, transfer learning, training and evaluation. Our methodology emphasizes adaptability across imaging modalities and species, drawing on both human and veterinary medical data.

2.1. Data Collection

This study used a diverse, cross-species image dataset that included equine, feline and human images (Table 1). One hundred equine fetlock imaging cases (67 fracture cases, 33 non-fracture cases) were collated from the published veterinary literature and anonymized radiographs from equine hospital archives. These cases included radiographs (XR), computed tomography (CT) and magnetic resonance imaging (MRI). The images depicted a wide range of conditions, including fracture (acute injury), post-treatment (for example, with implants), and non-fracture (healthy or non-fracture pathology) states. To test the model’s cross-species applicability, an additional 70 feline imaging cases were obtained from the hospital archives. These companion animal datasets added anatomical variation and imaging diversity, allowing for a better evaluation of the model’s generalizability. Furthermore, approximately 4000 human limb radiographs were retrieved from public databases including MURA and CheXpert [6,7]. These data provided a foundation for transfer learning, allowing the model to learn high-level fracture detection features from a large and well-annotated dataset. All datasets were anonymized.

2.2. Preprocessing and Annotation

Prior to training, images were standardized to 224 × 224 pixels and preprocessed to improve diagnostic features. This included histogram equalization to normalize the contrast, Gaussian filtering to reduce noise, and image cropping or masking to isolate the fetlock joint or other relevant anatomical regions utilizing image processing libraries in Python. To increase data diversity and model robustness, synthetic image transformations like rotation, flipping, brightness variation, and zooming were used.

Histogram equalization was applied using OpenCV’s cv2.equalizeHist function on greyscale radiographs to standardize intensity distributions and compensate for differences in scanners, exposure, and equipment settings. This step was applied consistently to all radiographic inputs prior to augmentation and improved the visibility of subtle features like trabecular changes and early fracture lines. Gaussian filtering was used to reduce imaging noise while maintaining structural edge clarity, thereby improving signal quality and reducing distractions that could mislead the model during training.

In addition to improving visual features, preprocessing aimed to streamline and direct the model’s attention to clinically relevant regions. Cropping and masking techniques were used to isolate the fetlock joint or target anatomical area, removing background information and keeping the model focused on the region of interest. Data augmentation techniques, such as random rotations and horizontal flips, were used to simulate real-world variability in imaging conditions, which is especially useful in veterinary datasets with limited sample sizes.
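The contrast normalization and flip augmentation described above can be illustrated with a minimal, dependency-free sketch. It is not the study's code: the text states that OpenCV's cv2.equalizeHist was used, whereas this version re-implements the standard CDF-remapping form of histogram equalization on a nested list, and the function names `equalize_histogram` and `horizontal_flip` are hypothetical.

```python
def equalize_histogram(image):
    """Equalize a non-empty 8-bit greyscale image given as rows of ints (0-255)."""
    flat = [pixel for row in image for pixel in row]

    # Count how often each intensity occurs.
    hist = [0] * 256
    for pixel in flat:
        hist[pixel] += 1

    # Cumulative distribution of intensities.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)

    cdf_min = next(c for c in cdf if c > 0)
    total = len(flat)
    if total == cdf_min:
        # Constant image: there is no contrast to stretch.
        return [row[:] for row in image]

    # Look-up table mapping each old intensity to its equalized value.
    lut = [max(0, round((c - cdf_min) / (total - cdf_min) * 255)) for c in cdf]
    return [[lut[pixel] for pixel in row] for row in image]


def horizontal_flip(image):
    """Mirror each row, one of the augmentations mentioned in the text."""
    return [row[::-1] for row in image]


# Tiny illustrative image (values are made up).
demo = [[50, 50], [100, 200]]
equalized = equalize_histogram(demo)
flipped = horizontal_flip(demo)
```

After equalization the darkest intensity present maps to 0 and the brightest to 255, which is the contrast-stretching effect the preprocessing relies on.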

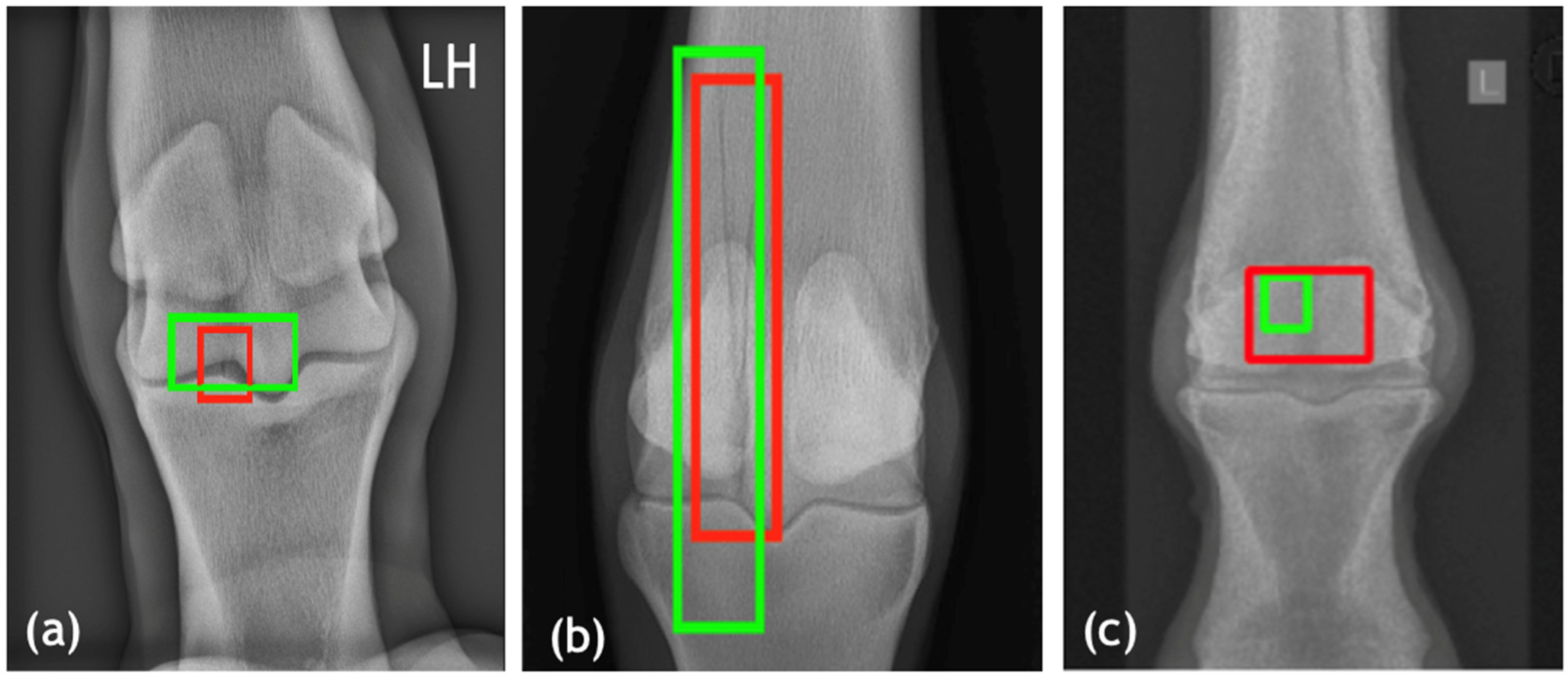

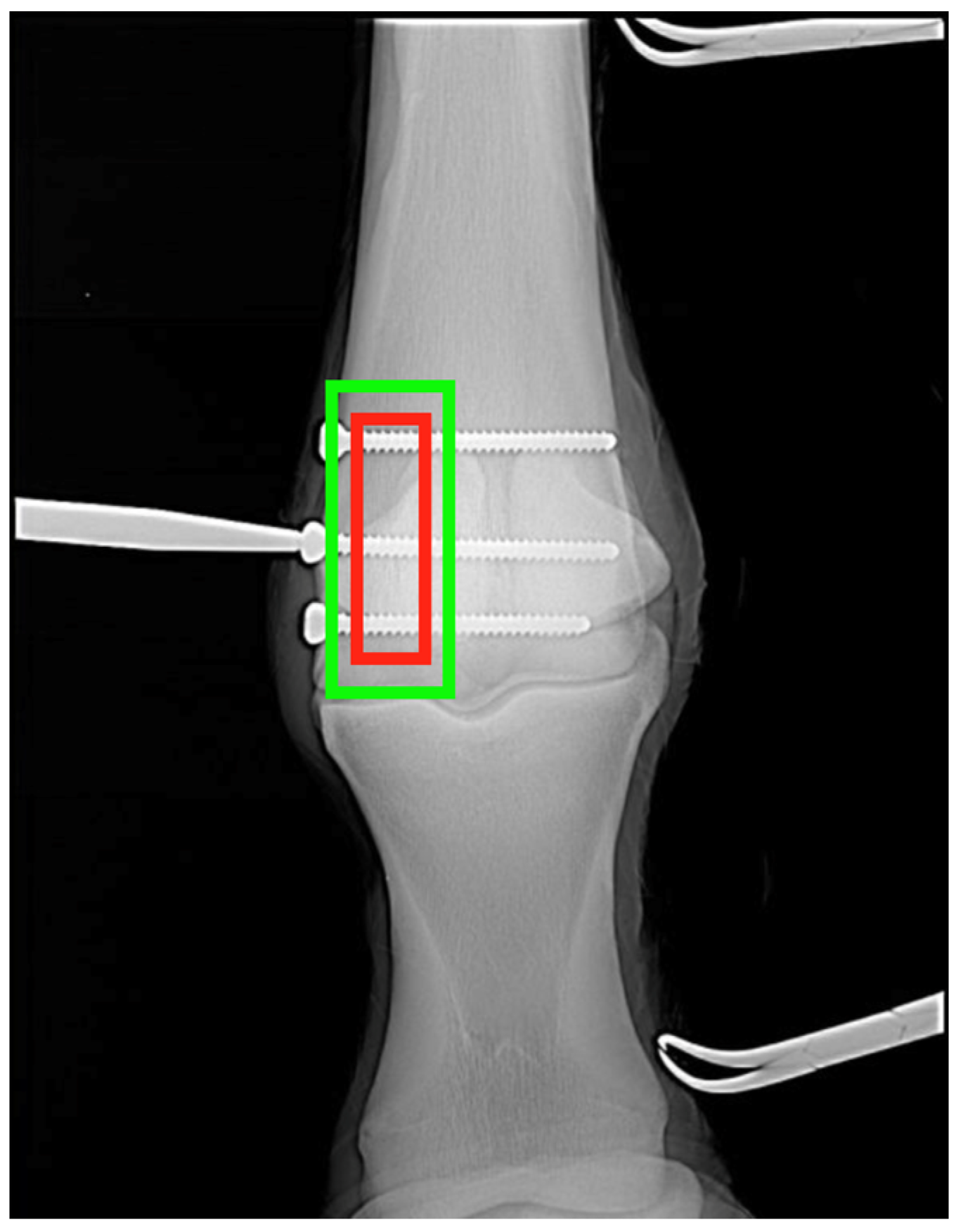

All fracture regions were manually identified and evaluated by experts in veterinary diagnostic imaging (DB and FLD), and the majority of the regions were annotated with bounding boxes to define the areas of interest. Annotations were saved alongside metadata indicating the modality (XR, CT, MRI), anatomical region, projection angle, and fracture type. These annotations were critical for both classification and localization training tasks.

2.3. Model Architecture and Classification Pipeline

The framework developed in this study has a modular, multi-stage architecture that is specifically tailored to the challenges of veterinary imaging, which involves significant anatomical variability and modality heterogeneity. The first stage of the pipeline focuses on imaging modality classification, which is critical for determining the best downstream processing route. For this task, a hybrid Vision Transformer (ViT)–ResNet architecture was employed, combining the global attention and contextual modelling of ViT with the strong local feature extraction of convolutional residual networks.

The ViT component was based on the ViT-Base architecture, following the standard formulation proposed by Dosovitskiy et al. [14]. Input radiographs were divided into non-overlapping patches of size 32 × 32 pixels, each of which was flattened and linearly projected into a 768-dimensional embedding space. Positional encodings were added to preserve spatial information prior to transformer processing. Self-attention was computed using the canonical scaled dot product attention mechanism. Given an input sequence of patch embeddings $X$, query ($Q$), key ($K$), and value ($V$) matrices were obtained via learned linear projections,

$$Q = XW_Q, \quad K = XW_K, \quad V = XW_V,$$

where $W_Q$, $W_K$, and $W_V$ are the learned projection matrices. Attention was then calculated as

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_k}}\right)V,$$

where $d_k$ is the dimensionality of the key vectors.

This standard formulation is included for completeness; the model follows the canonical transformer design and does not introduce modifications to the attention mechanism, consistent with Vaswani et al. [14].
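As a worked illustration of the patching arithmetic and the canonical attention formula, the sketch below computes the patch grid for a 224 × 224 input with 32 × 32 patches and evaluates softmax(QKᵀ/√d_k)V on small hand-written matrices. It uses plain Python lists for readability; the helper names and the toy matrices are assumptions for illustration, not part of the study's implementation.

```python
import math


def patch_grid(image_size=224, patch_size=32):
    """Return (patches per side, total patches) for non-overlapping square patches."""
    per_side = image_size // patch_size
    return per_side, per_side * per_side


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(value - peak) for value in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of row vectors."""
    d_k = len(K[0])
    output = []
    for q in Q:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        output.append([sum(w * v[j] for w, v in zip(weights, V))
                       for j in range(len(V[0]))])
    return output


per_side, n_patches = patch_grid()

# Toy example: a zero query attends equally to both keys,
# so the output is the mean of the two value vectors.
attended = scaled_dot_product_attention(
    Q=[[0.0, 0.0]],
    K=[[1.0, 0.0], [0.0, 1.0]],
    V=[[1.0, 2.0], [3.0, 4.0]],
)
```

With the stated settings the image yields a 7 × 7 grid, i.e., 49 patch tokens, each of which is projected to the 768-dimensional embedding before attention is applied.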

This hybrid model was further improved with adversarial training, which introduces perturbations during learning to increase the model’s robustness to domain shifts and minor input noise, essential when dealing with variable quality veterinary radiographs or scans. To address class imbalance and improve the classification of under-represented modalities (for example, MRI), a custom loss function combining categorical focal loss and standard cross-entropy was employed. Categorical focal loss (Equation (1)) dynamically down-weights easy examples and focuses training on difficult-to-classify cases, increasing model sensitivity to rarer classes.

$$\mathcal{L}_{\mathrm{focal}} = -\sum_{i=1}^{N} \alpha_i \,(1 - p_i)^{\gamma}\, y_i \log(p_i) \qquad (1)$$

where $N$ is the number of classes, $y_i$ denotes the ground truth label for class $i$, $p_i$ is the predicted probability for class $i$, $\alpha_i$ is a weighting factor to balance class importance, and $\gamma$ is the focusing parameter that down-weights well-classified examples to emphasize harder, under-represented cases.
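The following sketch shows one way a combined focal plus cross-entropy loss could be computed for a single sample, assuming the usual focal form −Σ αᵢ(1 − pᵢ)^γ yᵢ log pᵢ. The focusing parameter `gamma=2.0`, uniform class weights, and the 50/50 mixing weight `focal_weight` are assumptions for illustration; the text does not report the values used.

```python
import math


def cross_entropy(y_true, p_pred, eps=1e-12):
    """Standard categorical cross-entropy for one sample (one-hot y_true)."""
    return -sum(y * math.log(max(p, eps)) for y, p in zip(y_true, p_pred))


def categorical_focal_loss(y_true, p_pred, alpha=None, gamma=2.0, eps=1e-12):
    """Focal loss: cross-entropy scaled by alpha_i * (1 - p_i) ** gamma."""
    if alpha is None:
        alpha = [1.0] * len(y_true)  # assumed: equal class weights
    return -sum(a * (1.0 - p) ** gamma * y * math.log(max(p, eps))
                for a, y, p in zip(alpha, y_true, p_pred))


def combined_loss(y_true, p_pred, alpha=None, gamma=2.0, focal_weight=0.5):
    """Weighted sum of focal loss and cross-entropy (mixing weight is assumed)."""
    focal = categorical_focal_loss(y_true, p_pred, alpha, gamma)
    ce = cross_entropy(y_true, p_pred)
    return focal_weight * focal + (1.0 - focal_weight) * ce


# Three modality classes (e.g., XR, CT, MRI); probabilities are made up.
easy = combined_loss([1, 0, 0], [0.90, 0.05, 0.05])  # confident and correct
hard = combined_loss([1, 0, 0], [0.30, 0.40, 0.30])  # uncertain prediction
```

Setting `gamma=0` and unit class weights reduces the focal term to plain cross-entropy, which is a convenient sanity check when tuning the focusing parameter.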

The second module in the architecture was created to handle the view or projection classification of radiographic inputs, which is especially important in equine imaging where dorsopalmar (DP), lateromedial (LM), and oblique views can differ significantly in both appearance and diagnostic interpretation. For this task, a UNI Foundation Model was used. UNI is a lightweight and general-purpose convolutional neural network. This model was trained with active learning, which involved iteratively reintroducing uncertain or misclassified samples into the training set using entropy-based uncertainty sampling (Equation (2)). This approach made better use of a small, annotated dataset and improved generalization across non-standard imaging projections.

$$H(x) = -\sum_{i=1}^{N} p_i(x) \log p_i(x) \qquad (2)$$

where $H(x)$ represents the uncertainty score for the sample $x$, $N$ is the number of classes, and $p_i(x)$ is the predicted probability that sample $x$ belongs to class $i$. Higher entropy values indicate greater uncertainty, and such samples were prioritized for reintroduction into the training set during active learning.
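Entropy-based uncertainty sampling can be sketched as follows: compute the Shannon entropy of each sample's predicted class distribution and pick the highest-scoring samples for the next training round. The function names, the sample identifiers, and the probabilities are hypothetical, and the selection size `k` is an assumption since the text does not report how many samples were reintroduced per round.

```python
import math


def predictive_entropy(probs, eps=1e-12):
    """Shannon entropy (natural log) of a predicted class distribution."""
    return -sum(p * math.log(max(p, eps)) for p in probs)


def select_most_uncertain(predictions, k):
    """Return the ids of the k samples with the highest predictive entropy.

    predictions maps a sample id to its list of class probabilities.
    """
    ranked = sorted(predictions,
                    key=lambda sample_id: predictive_entropy(predictions[sample_id]),
                    reverse=True)
    return ranked[:k]


# Made-up projection-view probabilities (DP, LM, oblique) for three images.
pool = {
    "img_a": [0.98, 0.01, 0.01],  # confident prediction, low entropy
    "img_b": [0.34, 0.33, 0.33],  # near-uniform prediction, high entropy
    "img_c": [0.60, 0.30, 0.10],
}
queried = select_most_uncertain(pool, k=2)
```

Here the near-uniform prediction is ranked first, so it would be prioritized for reintroduction, while the confident prediction is left out.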

The fracture detection network is the pipeline’s third and most important module, responsible for localizing fracture regions across species. A Transformer Autoencoder was used, with a ViT-based encoder capturing global context and a decoder reconstructing and highlighting regions corresponding to structural abnormalities. This design enabled the model to learn spatial relationships in the anatomical structure while also focusing on subtle cues that indicated fractures. To improve interpretability and computational efficiency, the ViT encoder was combined with SqueezeNet, a compact architecture known for maintaining performance with far fewer parameters.

The detection model was trained with a custom localization-aware loss function that combined binary cross-entropy loss for classification (fracture vs. non-fracture) and intersection over union (IoU) loss for bounding box regression (Equation (3)). The IoU component penalizes poor overlap between predicted and ground truth regions, promoting fracture localization with greater accuracy. This combination ensured that the model not only correctly identified fractures but also localized them with high spatial precision, which is required for clinical utility.

$$\mathcal{L} = \mathcal{L}_{\mathrm{BCE}}(y, \hat{y}) + \lambda\, \mathcal{L}_{\mathrm{IoU}}(\hat{B}, B) \qquad (3)$$

where $y$ is the ground truth fracture label, $\hat{y}$ is the predicted probability, $\hat{B}$ denotes the predicted bounding box, and $B$ is the ground truth bounding box. $\mathcal{L}_{\mathrm{BCE}}$ captures classification accuracy (fracture vs. non-fracture), while $\mathcal{L}_{\mathrm{IoU}}$ measures spatial overlap between prediction and ground truth, and $\lambda$ is a weighting factor to balance classification and localization. In practice, $\mathcal{L}_{\mathrm{IoU}}$ is applied only to fracture-positive cases ($y = 1$), since non-fracture images have no bounding box annotation. For non-fracture cases, the model is penalized exclusively by the BCE term, ensuring that bounding boxes are not forced when fractures are absent. This conditional design prevents spurious localization while maintaining accurate classification.

For consistency and to minimize projection-based variability, only dorsopalmar (DP) views were used for fracture localization training and evaluation in the current model, as this projection provided the most consistent anatomical reference across the datasets.

Overall, this modular design, integrating modality recognition, projection standardization, and interpretable localization, demonstrates the feasibility of building scalable, cross-species AI frameworks for veterinary diagnostics. It highlights how a structured, stepwise architecture can support model adaptability across diverse imaging contexts while maintaining clinical interpretability.

A transfer learning framework was used to handle the domain shift between human and veterinary imaging. Initially, the fracture detection model was pretrained on the human dataset, which provided abundant, high-volume image data for foundational training. This phase allowed the model to capture common fracture characteristics like cortical discontinuity, trabecular disruption, and joint misalignment. The pretrained model was then fine-tuned using the equine datasets. To strike a balance between transferability and adaptability, early transformer layers were frozen, and later layers were unfrozen and retrained with veterinary data. This strategy made domain adaptation more efficient, allowing the model to fine-tune its understanding of species-specific anatomical features without overfitting to smaller veterinary datasets.
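The conditional localization-aware loss described above (binary cross-entropy for every image, plus an IoU term only for fracture-positive images) can be sketched for a single sample as follows. Several details are assumptions: boxes are taken as `(x_min, y_min, x_max, y_max)` tuples, the IoU loss is taken as 1 − IoU, and the weighting `lam=1.0` reflects the equal weighting mentioned in the training description. The function names are hypothetical.

```python
import math


def box_iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def bce(y, y_hat, eps=1e-12):
    """Binary cross-entropy for one label y in {0, 1} and probability y_hat."""
    y_hat = min(max(y_hat, eps), 1.0 - eps)
    return -(y * math.log(y_hat) + (1 - y) * math.log(1.0 - y_hat))


def detection_loss(y, y_hat, box_pred=None, box_true=None, lam=1.0):
    """BCE for every sample; IoU penalty only when a fracture is annotated."""
    loss = bce(y, y_hat)
    if y == 1 and box_pred is not None and box_true is not None:
        loss += lam * (1.0 - box_iou(box_pred, box_true))  # assumed form: 1 - IoU
    return loss


# Made-up examples: a positive case with partial overlap, and a negative case
# whose predicted box is ignored because there is no annotation to compare to.
positive = detection_loss(1, 0.9, box_pred=(0, 0, 2, 2), box_true=(1, 1, 3, 3))
negative = detection_loss(0, 0.1, box_pred=(0, 0, 5, 5))
```

Because the IoU term is skipped for non-fracture images, a predicted box on a negative case neither adds nor removes loss, which matches the stated aim of not forcing bounding boxes when fractures are absent.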

To improve clarity and reproducibility, Algorithm 1 summarizes the overall processing flow of the proposed cross-species fracture detection pipeline. The algorithm provides a high-level overview of the sequential steps used during inference and training, including preprocessing, modality classification, projection identification, and fracture localization.

| Algorithm 1: Cross-Species Fracture Detection Pipeline |
| --- |
| 1: Preprocess input image I |
| a. Apply contrast normalization and noise reduction |
| b. Crop or mask region of interest |
| c. Apply data augmentation during training |
| 2: Modality classification |
| a. Extract global and local features using ViT–ResNet |
| b. Predict imaging modality (X-Ray, CT, or MRI) |
| 3: Projection classification (X-Ray only) |
| a. Apply UNI-based classifier |
| b. Predict projection view (dorsopalmar, lateromedial, oblique) |
| 4: Fracture localization (dorsopalmar view only) |
| a. Encode image using ViT-based encoder |
| b. Predict fracture probability ŷ |
| c. Predict bounding box B̂ using localization head |
| 5: Loss computation (training only) |
| a. Compute binary cross-entropy loss for fracture classification |
| b. Compute IoU-based loss for bounding box regression |
| 6: Return final prediction (ŷ, B̂) |
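The routing logic of Algorithm 1 at inference time can be sketched as a small function that chains the stages and stops early when an image is not a radiograph or not a dorsopalmar view. The stage models are passed in as callables; every name here (`run_pipeline`, the labels "XR" and "DP", the lambda stand-ins) is a hypothetical placeholder rather than the study's actual interface.

```python
def run_pipeline(image, preprocess, classify_modality,
                 classify_projection, detect_fracture):
    """Route one image through the stages of Algorithm 1 (inference only)."""
    result = {
        "modality": None,
        "projection": None,
        "fracture_probability": None,
        "bounding_box": None,
    }

    # Step 1: contrast normalization, denoising, cropping (no augmentation).
    prepared = preprocess(image)

    # Step 2: modality classification.
    result["modality"] = classify_modality(prepared)
    if result["modality"] != "XR":
        return result  # projection stage applies to radiographs only

    # Step 3: projection classification.
    result["projection"] = classify_projection(prepared)
    if result["projection"] != "DP":
        return result  # localization is restricted to dorsopalmar views

    # Step 4: fracture probability and bounding box.
    probability, box = detect_fracture(prepared)
    result["fracture_probability"] = probability
    result["bounding_box"] = box
    return result


# Stand-in stage models returning fixed, made-up outputs.
stages = dict(
    preprocess=lambda img: img,
    classify_projection=lambda img: "DP",
    detect_fracture=lambda img: (0.91, (40, 60, 120, 150)),
)
xr_result = run_pipeline("radiograph.png", classify_modality=lambda img: "XR", **stages)
ct_result = run_pipeline("scan.png", classify_modality=lambda img: "CT", **stages)
```

Passing the stages as arguments keeps each one independently replaceable, which mirrors the modular evaluation the pipeline is designed for.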

This modular design allows each stage to be independently evaluated or extended, supporting adaptability across species and imaging contexts.

Each model was trained with PyTorch 2.9.1 on NVIDIA A100 GPUs. The AdamW optimizer was used in the training configuration, with an initial learning rate of 1 × 10⁻⁴ and a cosine annealing scheduler. A batch size of 32 was maintained for 100 training epochs. The combined loss function used binary cross-entropy for classification and an IoU-based regression loss for localization, with equal weighting between the two terms. Within species, the dataset was divided at the case level into 70% for training, 20% for validation, and 10% for testing, ensuring that the test subset remained completely unseen by the model during both training and hyperparameter tuning. All hyperparameters were fixed across experiments to ensure consistency and reproducibility. AdamW was used with a weight decay of . No exhaustive hyperparameter search was conducted due to dataset size constraints; values were selected based on the prior literature and preliminary stability testing.
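Two parts of this configuration lend themselves to a short sketch: the case-level 70/20/10 split and the cosine-annealed learning rate. The code below is illustrative only. The random seed, the minimum learning rate of 0, and the assumption that the schedule spans all 100 epochs without restarts are not stated in the text, and the function names are hypothetical.

```python
import math
import random


def split_cases(case_ids, train_frac=0.7, val_frac=0.2, seed=0):
    """Shuffle unique case ids and split them into train/validation/test lists.

    Splitting by case (not by image) keeps all images of one case in one subset.
    """
    ids = sorted(set(case_ids))
    random.Random(seed).shuffle(ids)
    n_train = round(len(ids) * train_frac)
    n_val = round(len(ids) * val_frac)
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]


def cosine_annealed_lr(epoch, total_epochs=100, base_lr=1e-4, min_lr=0.0):
    """Closed-form cosine annealing from base_lr down to min_lr (assumed 0)."""
    cosine = math.cos(math.pi * epoch / total_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + cosine)


# 100 equine cases, as in the data collection section.
train_ids, val_ids, test_ids = split_cases(range(100))
schedule = [cosine_annealed_lr(epoch) for epoch in (0, 50, 100)]
```

The learning rate starts at 1 × 10⁻⁴, passes through half that value at the midpoint, and decays to the assumed minimum at the final epoch.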

For modalities and projection views, class-specific prediction accuracy was calculated as the proportion of correctly classified samples within each true class (i.e., sensitivity). Evaluations on equine training data were also performed separately for hospital-acquired and literature-derived veterinary images. Performance was evaluated at the image (patch) level using accuracy (overall classification correctness), sensitivity (true positive rate for fracture detection), specificity (true negative rate), and the mean IoU score (overlap between predicted and annotated fracture regions). To avoid overfitting, early stopping was applied based on validation loss. To account for variability arising from dataset heterogeneity and patch-level predictions, model performance was summarized using median values with interquartile ranges (IQRs). Metrics including accuracy, sensitivity, specificity, and intersection over union (IoU) were computed across test samples and reported as the median [IQR]. This approach provides a robust estimate of central tendency while reducing sensitivity to outliers, which is particularly important in small and imbalanced veterinary datasets.
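The evaluation metrics can be sketched from confusion-matrix counts, with the median [IQR] summary computed using Python's standard `statistics` module. The counts and IoU values below are made up for illustration, and the "inclusive" quartile method is an assumption, since the text does not state which quantile convention was used.

```python
import statistics


def classification_metrics(tp, fp, tn, fn):
    """Accuracy, sensitivity (TPR) and specificity (TNR) from confusion counts."""
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }


def median_iqr(values):
    """Return (median, (Q1, Q3)) for a list of per-sample scores."""
    q1, q2, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return q2, (q1, q3)


# Illustrative counts and per-image IoU scores (not results from the study).
metrics = classification_metrics(tp=45, fp=5, tn=40, fn=10)
iou_median, (iou_q1, iou_q3) = median_iqr([0.70, 0.74, 0.78, 0.80, 0.82])
summary = f"{iou_median:.2f} [{iou_q1:.2f}-{iou_q3:.2f}]"
```

Reporting the quartiles alongside the median keeps a single poorly localized image from dominating the summary, which is the motivation given for this choice in small datasets.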

4. Discussion

This study set out to develop and evaluate a modular, deep learning-based pipeline for automated fracture detection in equine fetlock radiographs, addressing a critical diagnostic challenge in veterinary orthopedics. The working hypothesis was that transfer learning from large-scale, annotated human datasets combined with targeted fine-tuning on curated veterinary images could produce a generalizable and high-performing model, even in low-data settings common to veterinary practice. The results of this study not only support this hypothesis but also indicate promising cross-species applicability in anatomically comparable musculoskeletal regions, suggesting that musculoskeletal imaging tasks may be particularly well suited to shared anatomical representations in artificial intelligence models.

From the perspective of existing research, this work fills a conspicuous gap. While deep learning is now widely applied in human musculoskeletal imaging, particularly for fracture detection in wrist, ankle, and hip radiographs, equivalent tools in veterinary medicine remain scarce in the literature. Unlike in human medicine, where large-scale open datasets and community challenges such as MURA and CheXpert have driven benchmarking and accelerated progress, veterinary imaging currently lacks any publicly available datasets or competitions. Equine radiographs, and veterinary imaging more broadly, remain fragmented across literature sources and institutional archives, limiting both reproducibility and model development. Prior efforts in veterinary AI have focused on applications such as lameness detection through gait analysis, sound-based respiratory diagnosis, or disease prediction from laboratory tests [9,10,11]. Few, if any, studies have addressed the automated interpretation of diagnostic imaging in horses despite the fact that catastrophic limb fractures, particularly in the fetlock joint, remain one of the most devastating and costly injuries in equine sports medicine. By targeting this clinical area, our study responds directly to an unmet need and provides a replicable blueprint for broader applications in the field.

In the projection classification task, our findings further support the model’s clinical utility. The accurate identification of imaging projections or views is vital in equine radiology, where dorsopalmar, lateromedial, and oblique views provide non-redundant diagnostic information. The UNI-based projection classifier achieved above 97% validation accuracy overall. However, sensitivity varied across views, with notably lower detection rates for lateromedial (LM) projections. This echoes challenges in human imaging, where certain projections obscure key landmarks or create overlapping anatomical features that hinder both manual and automated diagnosis. Addressing this limitation will require expanded datasets and potentially multi-view fusion approaches in future versions of the pipeline.

Our results demonstrate that a transfer learning approach can overcome the limitations of small, fragmented veterinary datasets. Pretraining on over 4000 human radiographs enabled the model to learn generalized fracture features, such as cortical disruption, joint misalignment, and trabecular discontinuity, before being fine-tuned on only equine images. Most of the equine radiographs were literature-derived, supplemented by anonymized hospital archives, reflecting both the scarcity of open veterinary datasets and the need to collate from diverse sources. The model achieved strong localization performance in equine images, and the results are comparable to those reported in leading human studies. For example, Rajpurkar et al. (2017) reported CheXNet achieving over 90% accuracy for pneumonia detection in chest radiographs using a transfer learning strategy [4]. Lindsey et al. (2018) demonstrated that deep learning models could match radiologist-level performance in wrist fracture detection [5], while Kim and MacKinnon (2018) further confirmed the feasibility of transfer learning approaches for musculoskeletal fracture detection [15]. Similarly, Gale et al. (2017) achieved radiologist-level accuracy for hip fracture detection using deep neural networks [16], and Langerhuizen et al. (2019) systematically reviewed AI in fracture detection, highlighting consistent performance gains across multiple anatomical sites [17]. More recently, Costa da Silva et al. (2023) demonstrated promising AI-assisted fracture classification in equine radiographs [18], and Alam et al. (2025) reported robust localization performance in small animal cross-modal imaging tasks [19]. Our findings suggest that the veterinary domain can benefit similarly, provided that curated data, architectural tuning, and domain-specific adaptation are prioritized.

Importantly, the model demonstrated strong performance on companion animals despite never being explicitly trained on feline images during the equine fine-tuning phase. Prior to fine-tuning on equine radiographs, the pretrained model (originally trained on human data) achieved 94.1% accuracy on human radiographs, which was sustained throughout the additional training, but performed poorly on equine images, with below 50% accuracy at the patch level due to domain and anatomical differences. After veterinary fine-tuning, the model maintained its strong diagnostic capability on human images (94.1% accuracy, 95.2% sensitivity, 92.8% specificity, and a median IoU of 0.79 [0.75–0.82]) while achieving comparable performance on equine datasets. This outcome demonstrates that the adaptation process did not compromise human diagnostic performance; instead, exposure to veterinary data improved generalizability by encouraging the model to learn more universal fracture-related features across species. Comparable performance on feline cases (accuracy = 85.9%; IoU = 0.74 [0.70–0.77]; sensitivity = 87.6% [85.0–89.8]; specificity = 84.2% [81.5–86.7]) underscores the effectiveness of the core hypothesis: that fracture-related radiographic features share enough inter-species consistency to enable transfer learning. While formal hypothesis testing was not performed due to limited sample sizes and the clinical focus of this study, the consistent separation of interquartile ranges across datasets supports the robustness of the observed performance improvements. The framework demonstrates improved performance across species, and the learning paradigm is best described as human-to-veterinary transfer learning with fine-tuning, rather than a fully bidirectional training framework.

These results have broad implications. Clinically, this pipeline could be further trained to serve as a decision support tool for equine veterinarians, radiologists, and racing authorities seeking supportive detection tools. Early warning systems based on AI-generated outputs could prompt further imaging, targeted rest, or pre-emptive treatment, thus enhancing animal welfare and reducing loss of use cases. More broadly, this approach provides a methodological foundation for future AI systems in veterinary imaging where data scarcity, imaging variability, and anatomical diversity have historically limited progress.

While the present framework focuses on fracture localization using dorsopalmar (DP) radiographic views, this design choice was intentional. DP projections provide the most consistent anatomical representation of the fetlock joint and offer a stable basis for localization under the constraints of limited and heterogeneous veterinary datasets. Restricting localization to a single, clinically dominant view reduced variability and contributed to the robustness observed across datasets. Future extensions of this work could explicitly model multiple projections (DP, lateromedial, and oblique) as parallel branches, with their outputs combined at a later decision stage to improve sensitivity in cases where fractures are subtle or partially obscured in a single view. Additionally, projection classification and fracture localization could share intermediate feature representations, enabling the joint learning of view-specific fracture patterns rather than relying on fully sequential decisions. From a clinical perspective, incorporating confidence or uncertainty estimates alongside localisation outputs would further enhance usability by highlighting ambiguous cases that may warrant additional imaging or expert review. Finally, while fine-tuning in this study was performed in a single adaptation step from human to veterinary images, future work could explore staged fine-tuning strategies that progressively adapt anatomical representations. This can potentially improve knowledge retention from large human datasets while optimizing performance for animal specific morphology. Although localization performance was evaluated only on DP views in this study, the learned fracture features are expected to remain partially transferable to other projections; however, quantitative generalization to lateromedial or oblique views will require explicit multi-view training and validation, which we identify as an important direction for future work.

Despite these encouraging results, several important limitations should be acknowledged. First, fracture localization was evaluated only on dorsopalmar (DP) radiographic views. This choice was intentional, because DP views provide the most consistent anatomical representation of the fetlock joint, but it limits the direct generalization of localization performance to lateromedial or oblique projections. Second, sensitivity was lower for lateromedial views during projection classification, highlighting the challenges posed by anatomical overlap and variable imaging protocols. Third, a substantial proportion of equine radiographs were literature-derived, introducing heterogeneity in image quality, acquisition settings, and annotation standards. In addition, the presence of implants, motion artifacts (which are more frequent in equine imaging, as horses are typically not under general anesthesia), and non-standard projections can all affect localization precision. Finally, although modality classification included CT and MRI, fracture detection was optimized for radiographs only. Further validation on larger, multi-institutional datasets and additional fracture types will be required before clinical deployment.

Future research should prioritize four areas. First, expanding annotated veterinary datasets, particularly including pre-fracture and post-treatment cases, would enable the model to detect subtler pathological changes and move beyond fracture detection towards risk stratification. Second, incorporating multi-view learning, where dorsopalmar, lateromedial, and oblique projections are processed in parallel and fused at the decision stage, may improve sensitivity for fractures that are partially obscured in single views. Third, extending the framework to multi-modality learning using CT and MRI volumes could enhance detection in complex or occult injury cases. Fourth, integrating uncertainty estimates and explainable AI tools (e.g., Grad-CAM visualizations) would improve clinical interpretability and support real-world adoption.

While receiver operating characteristic (ROC) analysis is commonly used in classification-based medical imaging studies, it was not included as a primary evaluation metric in this work. The proposed framework focuses on fracture localization using bounding box prediction, for which spatial metrics such as IoU, sensitivity, and specificity provide more clinically meaningful assessment. Moreover, evaluation was performed at the image (patch) level rather than at the case level, limiting the interpretability of ROC curves in this setting. Future work, particularly with larger case-level datasets and multi-view aggregation, will enable robust ROC and AUC analyses and facilitate direct comparison with alternative architectures.