Which Are the Tools Available for Scholars? A Review of Assisting Software for Authors during Peer Reviewing Process

Abstract

1. Introduction

1.1. Research Question and Objectives

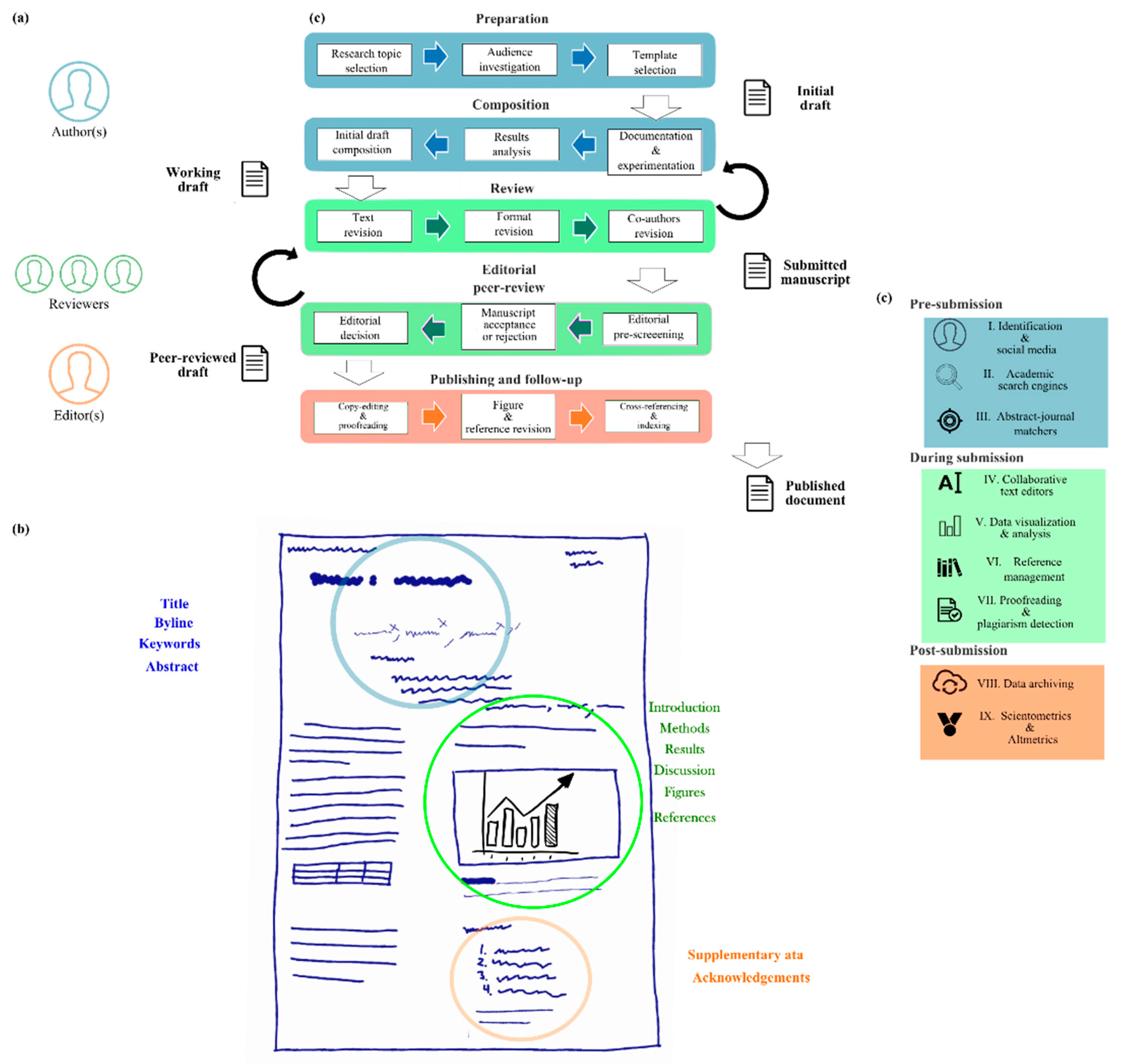

1.2. Peer-Review and the Digital Document

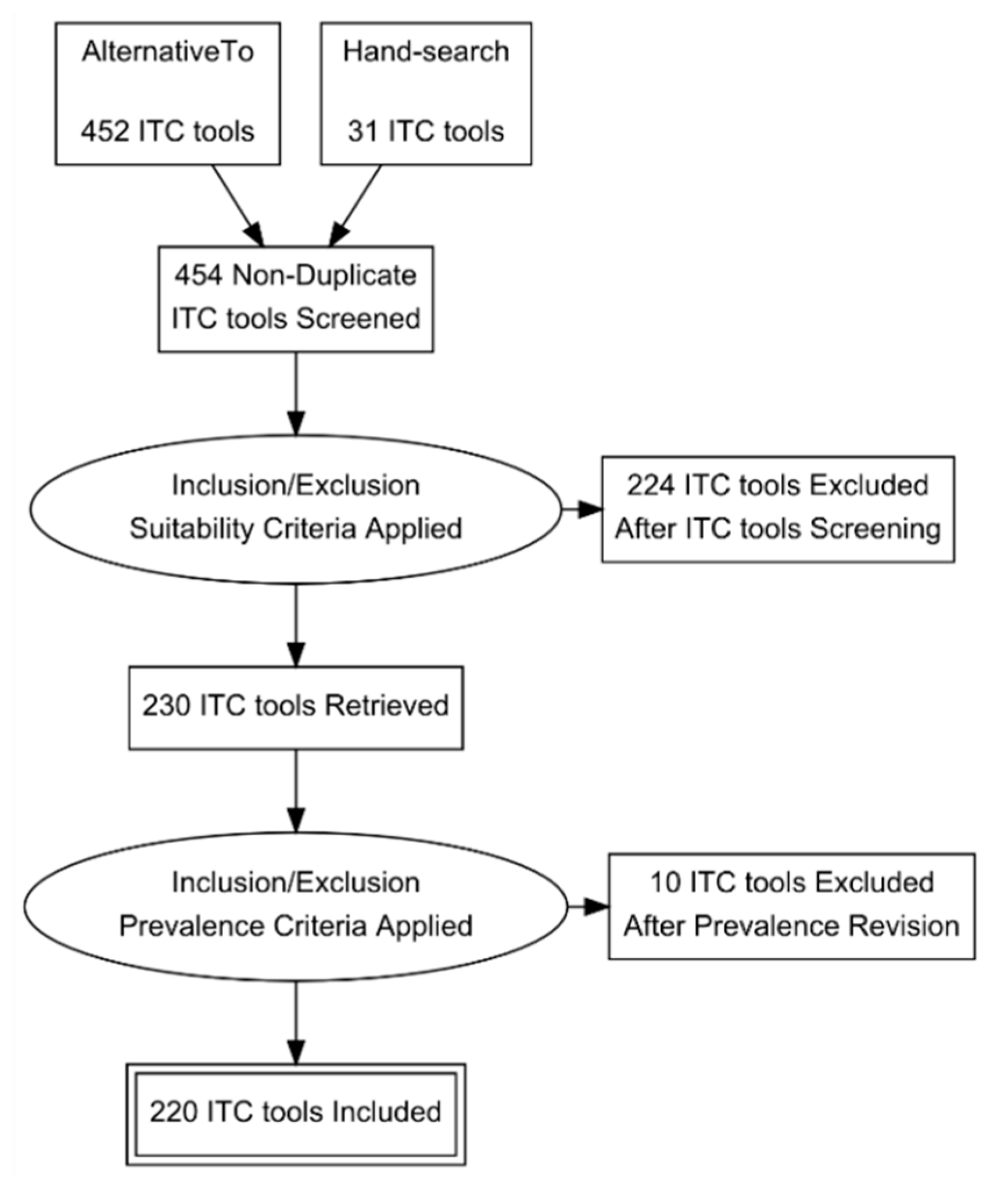

2. Materials and Methods

- Tools that are designed to aid the composition, submission, or review of peer-reviewed documents.

- Tools endorsed or recommended by scholars (i.e., the software found in other compilation reviews), or by research-related institutions.

- Tools that offer direct solutions to improve the author’s experience while peer-reviewing submissions.

2.1. Inclusion and Exclusion Criteria

2.2. Search Strategy

2.3. Data Extraction

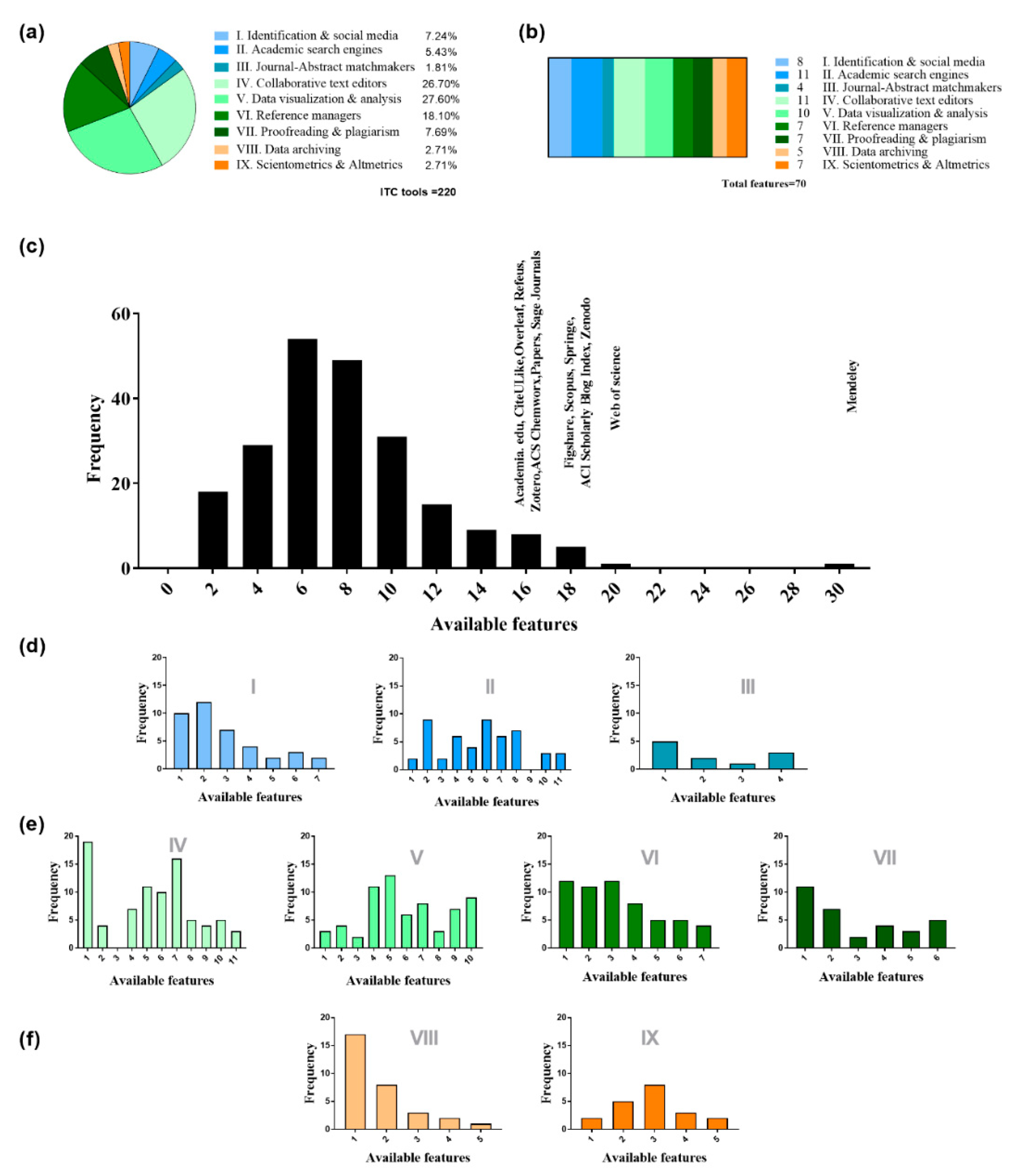

3. Results

3.1. Pre-Submission Tools

3.1.1. Identification and Social Media

3.1.2. Academic Search Engines

3.1.3. Journal-Abstract Matchmakers

3.2. Submission Tools

3.2.1. Collaborative Text Processors

- Enhance the capabilities of Word using complementary software and services (plugins).

- Migrate to a cloud-based online word processing software that expedites the interaction among co-authors and editors.

- Use newer hybrid online platforms developed purposefully for research.

3.2.2. Data Visualization and Analysis

- Editing and analyzing all sorts of data.

- Basic table and chart creation.

- Perform basic statistical tests and multivariate analyses.

- Nonparametric testing.

- Cluster analysis.

- Add-on modules for advanced statistics (GLMM, GLM, logilinear analysis, Bayesian statics, etc), Complex sampling and testing (decision trees, principal components analysis, neural networks, and time series analysis) and forecasting and decision trees (ARIMA modeling, C&RT, and Seasonal decomposition).

3.2.3. Reference Management

- Support for all popular operating systems

- Allow the organization of references in groups or folders.

- File attaching and previsualization capabilities.

- The ability to export and import among several file formats.

- Allow the integration of traditional text processors.

- Database connectivity to facilitate literature search.

- Customization of reference styles.

3.3. Post-Submission Tools

3.3.1. Proofreading and Plagiarism Detection

3.3.2. Data Archiving

3.3.3. Scientometrics and Altmetrics

3.4. Review Result

3.5. Top-Ranked ITC Tools

4. Conclusions

5. Study Limitations

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

References

- Borgman, C.L. Scholarship in the Digital Age: Information, Infrastructure, and the Internet, 1st ed.; The MIT Press: Cambridge, MA, USA, 2010; ISBN 978-0-262-51490-3. [Google Scholar]

- Matthews, J.R.; Matthews, R.W. Successful Scientific Writing: A Step-by-Step Guide for the Biological and Medical Sciences, 4th ed.; Cambridge University Press: Cambridge, UK, 2014; ISBN 978-1-107-69193-3. [Google Scholar]

- McMillan, V.E. Writing Papers in the Biological Sciences, 5th ed.; Bedford/St. Martin’s: Boston, MA, USA, 2011; ISBN 978-0-312-64971-5. [Google Scholar]

- Heard, S.B. The Scientist’s Guide to Writing: How to Write More Easily and Effectively throughout Your Scientific Career; Princeton University Press: Princeton, NJ, USA, 2016; ISBN 978-0-691-17022-0. [Google Scholar]

- Hanauer, S. How to Get Published. Available online: http://www.uta.fi/kirjasto/koulutukset/tutkijakoulutus/elsevier_seminaari_211114/Get%20Published%20Quick%20Guide_updatedurl.pdf (accessed on 12 August 2019).

- Author and Reviewer Tutorials-How to Peer Review | Springer. Available online: https://www.springer.com/gp/authors-editors/authorandreviewertutorials/howtopeerreview (accessed on 12 August 2019).

- Journal Authors. Available online: https://www.elsevier.com/authors/journal-authors (accessed on 12 August 2019).

- PLoS ONE: Accelerating the Publication of Peer-Reviewed Science. Available online: http://journals.plos.org/plosone/s/submission-guidelines (accessed on 12 August 2019).

- Morris, S.; Barnas, E.; LaFrenier, D.; Reich, M. The Handbook of Journal Publishing, 1st ed.; Cambridge University Press: Cambridge, UK; New York, NY, USA, 2013; ISBN 978-1-107-02085-6. [Google Scholar]

- Wager, E.; Kleinert, S. Cooperation Between Research Institutions and Journals on Research Integrity Cases: Guidance from the Committee on Publication Ethics COPE. Acta Inform. Med. 2012, 20, 136. [Google Scholar] [CrossRef] [PubMed]

- Brian Paltridge Learning to review submissions to peer reviewed journals: How do they do it? Int. J. Res. Dev. 2013, 4, 6–18.

- PLoS ONE: Accelerating the Publication of Peer-Reviewed Science. Available online: http://journals.plos.org/plosone/s/reviewer-guidelines (accessed on 12 August 2019).

- Wiley: Wiley-Publons Pilot Program Enhances Peer-Reviewer Recognition. Available online: http://www.wiley.com/WileyCDA/PressRelease/pressReleaseId-116922.html (accessed on 12 August 2019).

- Recommendations for the Conduct, Reporting, Editing, and Publication of Scholarly Work in Medical Journals. Available online: http://www.icmje.org/recommendations (accessed on 6 September 2019).

- Stephen McMinn, H. Library support of bibliographic management tools: A review. Ref. Serv. Rev. 2011, 39, 278–302. [Google Scholar] [CrossRef]

- Nández, G.; Ángel, B. Use of social networks for academic purposes: A case study. Electron. Libr. 2013, 31, 781–791. [Google Scholar] [CrossRef]

- Nedra, I.; Anja, H.C.; Mohamed, B.A. New scientometric indicator for the qualitative evaluation of scientific production. New Libr. World 2015, 116, 661–676. [Google Scholar]

- Akers, K.G.; Sarkozy, A.; Wu, W.; Slyman, A. ORCID Author Identifiers: A Primer for Librarians. Med. Ref. Serv. Q. 2016, 35, 135–144. [Google Scholar] [CrossRef] [PubMed]

- Barnes, C. The Use of Altmetrics as a Tool for Measuring Research Impact. Aust. Acad. Res. Libr. 2015, 46, 121–134. [Google Scholar] [CrossRef]

- Koffel, J.B. Use of Recommended Search Strategies in Systematic Reviews and the Impact of Librarian Involvement: A Cross-Sectional Survey of Recent Authors. PLoS ONE 2015, 10, e0125931. [Google Scholar] [CrossRef] [PubMed]

- Cronin, B.; Sugimoto, C.R. (Eds.) Beyond Bibliometrics: Harnessing Multidimensional Indicators of Scholarly Impact, 1st ed.; The MIT Press: Cambridge, MA, USA, 2014; ISBN 978-0-262-52551-0. [Google Scholar]

- Gingras, Y. Bibliometrics and Research Evaluation: Uses and Abuses; The MIT Press: Cambridge, MA, USA, 2016; ISBN 978-0-262-03512-5. [Google Scholar]

- Benos, D.J.; Bashari, E.; Chaves, J.M.; Gaggar, A.; Kapoor, N.; LaFrance, M.; Mans, R.; Mayhew, D.; McGowan, S.; Polter, A.; et al. The ups and downs of peer review. AJP Adv. Physiol. Educ. 2007, 31, 145–152. [Google Scholar] [CrossRef]

- Grainger, D.W. Peer review as professional responsibility: A quality control system only as good as the participants. Biomaterials 2007, 28, 5199–5203. [Google Scholar] [CrossRef]

- Ware, M.; Mabe, M. An overview of scientific and scholarly journal publishing; International Association of Scientific, Technical and Medical Publishers: Oxford, UK, 2009. [Google Scholar]

- Andy Tattersall For what it’s worth-The open peer review landscape. Online Inf. Rev. 2015, 39, 649–663. [CrossRef]

- Tennant, J.P.; Crane, H.; Crick, T.; Davila, J.; Enkhbayar, A.; Havemann, J.; Kramer, B.; Martin, R.; Masuzzo, P.; Nobes, A.; et al. Ten Hot Topics around Scholarly Publishing. Publications 2019, 7, 34. [Google Scholar] [CrossRef]

- Walker, R.; Rocha da Silva, P. Emerging trends in peer review—A survey. Brain Imaging Methods 2015, 9, 169. [Google Scholar]

- Hojat, M.; Gonnella, J.S.; Caelleigh, A.S. Impartial Judgment by the “Gatekeepers” of Science: Fallibility and Accountability in the Peer Review Process. Adv. Health Sci. Educ. 2003, 8, 75–96. [Google Scholar] [CrossRef]

- Rosenthal, R. The file drawer problem and tolerance for null results. Psychol. Bull. 1979, 86, 638–641. [Google Scholar] [CrossRef]

- Scargle, J.D. Publication Bias (The “File-Drawer Problem”) in Scientific Inference. arXiv 1999, arXiv:Physics/9909033. [Google Scholar]

- Bos, N.; Zimmerman, A.; Olson, J.; Yew, J.; Yerkie, J.; Dahl, E.; Olson, G. From Shared Databases to Communities of Practice: A Taxonomy of Collaboratories. J. Comput. Mediat. Commun. 2007, 12, 652–672. [Google Scholar] [CrossRef]

- Git-A Short History of Git. Available online: https://git-scm.com/book/en/v2/Getting-Started-A-Short-History-of-Git (accessed on 14 August 2019).

- The DOI System. Available online: http://www.doi.org/ (accessed on 14 August 2019).

- Paskin, N. Digital Object Identifier (DOI?) System. In Encyclopedia of Library and Information Sciences, 3rd ed.; CRC Press: Boca Raton, FL, USA, 2009; pp. 1586–1592. ISBN 978-0-8493-9712-7. [Google Scholar]

- Crossref.Org. Available online: www.crossref.org (accessed on 14 August 2019).

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; Group, T.P. Preferred Reporting Items for Systematic Reviews and Meta-Analyses: The PRISMA Statement. PLoS Med. 2009, 6, e1000097. [Google Scholar] [CrossRef]

- Alternative To. Available online: http://alternativeto.net/ (accessed on 12 August 2019).

- García-Gómez, C. ORCID: Un sistema global para la identificación de investigadores. El Prof. Inf. 2012, 21, 210–212. [Google Scholar] [CrossRef]

- Scopus-Search for an Author Profile. Available online: https://www.scopus.com/freelookup/form/author.uri (accessed on 22 January 2018).

- ResearcherID.com. Available online: http://www.researcherid.com.etechconricyt.idm.oclc.org/Home.action (accessed on 22 January 2018).

- arXiv.Org Help-Author Identifiers. Available online: https://arxiv.org/help/author_identifiers (accessed on 22 January 2018).

- ORCID. Available online: https://orcid.org/ (accessed on 22 January 2018).

- Credit where credit is due. Nature 2009, 462, 825. [CrossRef][Green Version]

- Our Mission. Available online: http://orcid.org/about/what-is-orcid/mission (accessed on 12 August 2019).

- Bik, H.M.; Goldstein, M.C. An introduction to social media for scientists. PLoS Biol. 2013, 11, e1001535. [Google Scholar] [CrossRef] [PubMed]

- Thelwall, M.; Kousha, K. Academia.edu: Social network or Academic Network? J. Assoc. Inf. Sci. Technol. 2014, 65, 721–731. [Google Scholar] [CrossRef]

- Ovadia, S. When Social Media Meets Scholarly Publishing. Behav. Soc. Sci. Libr. 2013, 32, 194–198. [Google Scholar] [CrossRef]

- Thelwall, M.; Kousha, K. ResearchGate: Disseminating, communicating, and measuring Scholarship? J. Assoc. Inf. Sci. Technol. 2015, 66, 876–889. [Google Scholar] [CrossRef]

- Lupton, D. ‘Feeling Better Connected’: Academics’ Use of Social Media. Available online: http://apo.org.au/resource/feeling-better-connected-academics-use-social-media (accessed on 12 August 2019).

- Mendeley-Reference Management Software & Researcher Network. Available online: https://www.mendeley.com/ (accessed on 22 January 2018).

- About Us | ResearchGate, the Professional Network for Scientists. Available online: https://www.researchgate.net/about (accessed on 22 January 2018).

- Academia.edu | About. Available online: https://www.academia.edu/about (accessed on 22 January 2018).

- CiteULike: Everyone’s Library. Available online: http://www.citeulike.org/ (accessed on 24 January 2018).

- Yu, M.C.; Wu, Y.C.J.; Alhalabi, W.; Kao, H.Y.; Wu, W.H. ResearchGate: An effective altmetric indicator for active researchers? Comput. Hum. Behav. 2016, 55, 1001–1006. [Google Scholar] [CrossRef]

- Ovadia, S. ResearchGate and Academia.edu: Academic Social Networks. Behav. Soc. Sci. Libr. 2014, 33, 165–169. [Google Scholar] [CrossRef]

- Kintisch, E. Is ResearchGate Facebook for science? Available online: http://sciencemag.org/careers/2014/08/researchgate-facebook-science (accessed on 12 August 2019).

- Menon, V.; Muraleedharan, A. Credit and visibility for peer reviewing: An overlooked aspect of scholarly publication. J. Neurosci. Rural Pract. 2016, 7, 330–331. [Google Scholar] [CrossRef] [PubMed]

- Publons. Available online: http://publons.com/ (accessed on 29 May 2019).

- Citrome, L. Peer review and Publons—Enhancements for the reviewer. Int. J. Clin. Pract. 2016, 70, 364. [Google Scholar] [CrossRef]

- Smith, D.R. Will Publons Popularize the Scientific Peer-Review Process? BioScience 2016, 66, 265–266. [Google Scholar] [CrossRef]

- Abbott, A. Digital Paper: A Manual for Research and Writing with Library and Internet Materials; University of Chicago Press: Chicago, IL, USA; London, UK, 2014; ISBN 978-0-226-16778-7. [Google Scholar]

- Öğrenci, A.S. Why do students prefer search engines over academic databases. In Proceedings of the 2013 International Conference on Information Technology Based Higher Education and Training (ITHET), Antalya, Turkey, 10–12 October 2013; pp. 1–3. [Google Scholar]

- van Dijck, J. Search engines and the production of academic knowledge. Int. J. Cult. Stud. 2010, 13, 574–592. [Google Scholar] [CrossRef]

- Ortega, J.L. Academic Search Engines: A Quantitative Outlook; Chandos Publishing: Oxford, UK, 2014; ISBN 978-1-84334-791-0. [Google Scholar]

- Dukić, D. Use and perceptions of online academic databases among Croatian university teachers and researchers. Libri Int. J. Libr. Inf. Serv. 2014, 64, 173–184. [Google Scholar] [CrossRef]

- Elsevier Journal Finder. Available online: http://journalfinder.elsevier.com/ (accessed on 14 August 2016).

- Springer Journal Suggester. Available online: https://journalsuggester.springer.com/ (accessed on 22 January 2018).

- Journal Finder. Available online: http://rnd.wiley.com/html/journalfinder.html (accessed on 22 January 2018).

- Journal / Author Name Estimator. Available online: http://jane.biosemantics.org/ (accessed on 13 August 2016).

- SciRev—Review the Scientific Review Process. Available online: https://scirev.sc/ (accessed on 12 August 2019).

- Open Access Spectrum Evaluation Tool. Available online: https://library.maastrichtuniversity.nl/the-open-access-spectrum-evaluation-tool/ (accessed on 12 August 2019).

- Beall’s List of Predatory Journals and Publishers. Available online: https://beallslist.weebly.com/ (accessed on 29 May 2019).

- About COPE | Committee on Publication Ethics: COPE. Available online: http://publicationethics.org/about (accessed on 12 August 2019).

- Checklist—Be Informed-LibGuides at Duke University Medical Center. Available online: http://guides.mclibrary.duke.edu/beinformed (accessed on 13 August 2016).

- LaTeX—A Document Preparation System. Available online: https://www.latex-project.org/ (accessed on 21 July 2016).

- Apache OpenOffice Product Description. Available online: https://www.openoffice.org/product/index.html (accessed on 22 January 2018).

- Who Are We? | LibreOffice-Free Office Suite-Fun Project-Fantastic People. Available online: https://www.libreoffice.org/about-us/who-are-we/ (accessed on 22 January 2018).

- Brischoux, F.; Legagneux, P. Don’t Format Manuscripts. Scientist 2009, 23, 24–25. [Google Scholar]

- Southavilay, V.; Yacef, K.; Reimann, P.; Calvo, R.A. Analysis of Collaborative Writing Processes Using Revision Maps and Probabilistic Topic Models. In Proceedings of the Third International Conference on Learning Analytics and Knowledge, Leuven, Belgium, 8–13 April 2013; ACM: New York, NY, USA, 2013; pp. 38–47. [Google Scholar]

- Knauff, M.; Nejasmic, J. An Efficiency Comparison of Document Preparation Systems Used in Academic Research and Development. PLoS ONE 2014, 9, e115069. [Google Scholar] [CrossRef] [PubMed]

- Suwantarathip, O.; Wichadee, S. The Effects of Collaborative Writing Activity Using Google Docs on Students’ Writing Abilities. Turk. Online J. Educ. Technol. TOJET 2014, 13, 148–156. [Google Scholar]

- Google Docs-Create and Edit Documents Online, for Free. Available online: https://www.google.com/docs/about/ (accessed on 12 August 2019).

- Perkel, J.M. Scientific writing: The online cooperative. Nature 2014, 514, 127–128. [Google Scholar] [CrossRef] [PubMed]

- Microsoft Word Online-Work Together on Word Documents. Available online: https://office.live.com/start/Word.aspx (accessed on 12 August 2019).

- Typewrite-Simple, Real-time Collaborative Writing Environment. Available online: https://typewrite.io/ (accessed on 14 August 2019).

- Penflip-Collaborative Writing and Version Control. Available online: https://www.penflip.com/ (accessed on 24 January 2018).

- Etherpad. Available online: http://etherpad.org/ (accessed on 14 August 2019).

- Gobby. Available online: http://gobby.github.io/ (accessed on 24 January 2018).

- ShareLaTeX, Online LaTeX Editor. Available online: https://www.sharelatex.com/ (accessed on 24 January 2018).

- Build Software Better, Together. Available online: https://github.com (accessed on 24 January 2018).

- Overleaf: Real-Time Collaborative Writing and Publishing Tools with Integrated PDF Preview. Available online: https://www.overleaf.com/ (accessed on 12 August 2019).

- Zotero | Home. Available online: https://www.zotero.org/ (accessed on 24 January 2018).

- Figshare-Credit for All Your Research. Available online: https://figshare.com/ (accessed on 24 January 2018).

- arXiv.Org E-Print Archive. Available online: https://arxiv.org/ (accessed on 24 January 2018).

- bioRxiv.Org-the Preprint Server for Biology. Available online: https://www.biorxiv.org/ (accessed on 24 January 2018).

- Modern Visualization for the Data Era. Available online: https://plot.ly (accessed on 24 January 2018).

- Mind the Graph. Available online: https://mindthegraph.com/ (accessed on 13 August 2019).

- IEEE. Available online: https://www.ieee.org/index.html (accessed on 24 January 2018).

- OSA | OSA Publishing. Available online: https://www.osapublishing.org/ (accessed on 2 June 2019).

- MDPI-Publisher of Open Access Journals. Available online: https://www.mdpi.com/ (accessed on 24 January 2018).

- PeerJ. Available online: https://peerj.com/ (accessed on 24 January 2018).

- Scientific Reports. Available online: https://www.nature.com/srep/ (accessed on 24 January 2018).

- Hinsen, K. Platforms for publishing and archiving computer-aided research. F1000Research 2014, 3, 289. [Google Scholar] [CrossRef] [PubMed]

- Write Research Documents Online, Together. | Authorea. Available online: https://www.authorea.com/ (accessed on 12 August 2019).

- Pubmeddev Home-PubMed-NCBI. Available online: https://www.ncbi.nlm.nih.gov/pubmed/ (accessed on 24 January 2018).

- Fidus Writer | a Semantic Word Processor for Academics. Available online: https://www.fiduswriter.org/ (accessed on 24 January 2018).

- Mayr, P.; Momeni, F.; Lange, C. Opening Scholarly Communication in Social Sciences: Supporting Open Peer Review with Fidus Writer. arXiv 2016, arXiv:160102927. [Google Scholar]

- Few, S. Show Me the Numbers: Designing Tables and Graphs to Enlighten; Analytics Press: Burlingame, CA, USA, 2012; ISBN 978-0970601971. [Google Scholar]

- Wellcome Image Awards. Available online: http://www.wellcomeimageawards.org/ (accessed on 13 August 2019).

- Tufte, E.R. The Visual Display of Quantitative Information, 2nd ed.; Graphics Pr: Cheshire, UK, 2001; ISBN 978-0-9613921-4-7. [Google Scholar]

- GIMP. Available online: https://www.gimp.org/ (accessed on 24 January 2018).

- Photo Editor Online-Pixlr.com. Available online: https://pixlr.com/ (accessed on 24 January 2018).

- Sumoware Sumopaint-Online Image Editor. Available online: https://sumopaint.com/ (accessed on 2 June 2019).

- Paint.NET-Free Software for Digital Photo Editing. Available online: https://www.getpaint.net/ (accessed on 24 January 2018).

- Draw Freely | Inkscape. Available online: https://inkscape.org/es/ (accessed on 24 January 2018).

- Vectr-Free Online Vector Graphics Editor. Available online: https:/vectr.com/ (accessed on 24 January 2018).

- GNSI | Guild of Natural Science Illustrators. Available online: https://gnsi.org/ (accessed on 13 August 2019).

- Association of Medical Illustrators. Available online: http://ami.org/ (accessed on 13 August 2019).

- Mellow, G. So You Want to Hire a Science Illustrator. Available online: https://blogs.scientificamerican.com/symbiartic/so-you-want-to-hire-a-science-illustrator/ (accessed on 13 August 2019).

- Microsoft Excel 2016: Programa de Hojas de Cálculo-XLS. Available online: https://products.office.com/es-mx/excel (accessed on 24 January 2018).

- Welcome to Python.Org. Available online: https://www.python.org/ (accessed on 24 January 2018).

- Main Page-MathLab. Available online: http://www.mathlab.mtu.edu/mediawiki/index.php/Main_Page (accessed on 24 January 2018).

- R: The R Project for Statistical Computing. Available online: https://www.r-project.org/ (accessed on 24 January 2018).

- NumPy—NumPy. Available online: http://www.numpy.org/ (accessed on 24 January 2018).

- Hunter, J.D. Matplotlib: A 2D Graphics Environment. Comput. Sci. Eng. 2007, 9, 90–95. [Google Scholar] [CrossRef]

- Matplotlib: Python Plotting—Matplotlib 2.1.1 Documentation. Available online: https://matplotlib.org/ (accessed on 24 January 2018).

- McKinney, W. Python for Data Analysis: Data Wrangling with Pandas, NumPy, and IPython, 1st ed.; O’Reilly Media: Beijing, China, 2012; ISBN 978-1-4493-1979-3. [Google Scholar]

- OriginLab-Origin and OriginPro-Data Analysis and Graphing Software. Available online: https://www.originlab.com/ (accessed on 24 January 2018).

- SigmaPlot-About Us. Available online: http://www.sigmaplot.co.uk/aboutus/aboutus.php (accessed on 24 January 2018).

- Prism-Graphpad.com. Available online: https://www.graphpad.com/scientific-software/prism/ (accessed on 24 January 2018).

- Hilbe, J.M. A Review of Current SPSS Products. Am. Stat. 2003, 57, 310–315. [Google Scholar] [CrossRef]

- Hilbe, J.M. A Review of SPSS 12.01, Part 2. Am. Stat. 2004, 58, 168–171. [Google Scholar] [CrossRef]

- SPSS Statistics-Overview. Available online: https://www.ibm.com/products/spss-statistics (accessed on 13 August 2019).

- SigmaStat | Systat Software, Inc. Available online: https://systatsoftware.com/products/sigmastat/ (accessed on 13 August 2019).

- www.statsoft.com > Products > STATISTICA Features. Available online: http://www.statsoft.com/Products/STATISTICA-Features (accessed on 14 August 2019).

- Paura, L.; Arhipova, I. Advantages and Disadvantages of Professional and Free Software for Teaching Statistics. Inf. Technol. Manag. Sci. 2012, 15, 9–64. [Google Scholar] [CrossRef]

- RStudio. Available online: https://www.rstudio.com/ (accessed on 14 August 2019).

- Zakaria, T.N.T.; Aziz, M.J.A.; Rizan, T.N.; Maasum, T.M. Transformation of L2 writers to correct English: The need for A computer-assisted writing tool. In Proceedings of the 2010 International Symposium on Information Technology, Kuala Lumpur, Malaysia, 15–17 June 2010; Volume 3, pp. 1508–1513. [Google Scholar]

- Aziz, A.; Fook, C.Y.; Alsree, Z. Computational Text Analysis: A More Comprehensive Approach to Determine Readability of Reading Materials. Adv. Lang. Lit. Stud. 2010, 1, 200–219. [Google Scholar] [CrossRef]

- Gilmour, R.; Cobus-Kuo, L.; Ithaca College Library. Reference Management Software: A Comparative Analysis of Four Products. Issues Sci. Technol. Librariansh. 2011, 66, 63–75. [Google Scholar]

- Kali, A. Reference management: A critical element of scientific writing. J. Adv. Pharm. Technol. Res. 2016, 7, 27–29. [Google Scholar] [CrossRef] [PubMed]

- Perkel, J.M. Eight ways to clean a digital library. Nature 2015, 527, 123–124. [Google Scholar] [CrossRef] [PubMed]

- Ullen, V.; Mary, K.; Kessler, J. Citation apps for mobile devices. Ref. Serv. Rev. 2016, 44, 48–60. [Google Scholar] [CrossRef][Green Version]

- BibMe: Free Bibliography & Citation Maker-MLA, APA, Chicago, Harvard. Available online: http://www.bibme.org/ (accessed on 24 January 2018).

- Save Time and Improve your Marks with CiteThisForMe, The No. 1 Citation Tool. Available online: http://www.citethisforme.com (accessed on 24 January 2018).

- EasyBib: The Free Automatic Bibliography Composer. Available online: http://www.easybib.com/ (accessed on 24 January 2018).

- Citavi: Organizar el Conocimiento. Gestión de Referencias Bibliográficas, Organización del Conocimiento y Planificación de Tareas. Available online: https://www.citavi.com/ (accessed on 24 January 2018).

- Colwiz: Free Reference Manager & Research Groups Manager. Available online: https://www.colwiz.com (accessed on 24 January 2018).

- EndNote. Available online: http://endnote.com/ (accessed on 24 January 2018).

- F1000Workspace. Available online: https://f1000.com/work (accessed on 24 January 2018).

- Acceder a RefWorks. Available online: https://www.refworks.com/refworks2/default.aspx?r=authentication::init (accessed on 24 January 2018).

- Alli, A.M.E.T.; Abdulla, H.M.D.; Snasel, V. Overview and comparison of plagiarism detection tools. In Proceedings of the Dateso 2011: Annual International Workshop on DAtabases, TExts, Specifications and Objects, Pisek, Czech Republic, 20–22 April 2011; pp. 61–172. [Google Scholar]

- Bakhtiyari, K.; Salehi, H.; Embi, M.A.; Shakiba, M.; Zavvari, A.; Shahbazi-Moghadam, M.; Ebrahim, N.A.; Mohammadjafari, M. Ethical and Unethical Methods of Plagiarism Prevention in Academic Writing. Int. Educ. Stud. 2014, 7, 52–62. [Google Scholar] [CrossRef]

- Baždarić, K. Plagiarism detection-quality management tool for all scientific journals. Croat. Med. J. 2012, 53, 1–3. [Google Scholar] [CrossRef] [PubMed]

- Eberle, M.E. Paraphrasing, Plagiarism, and Misrepresentation in Scientific Writing. Trans. Kans. Acad. Sci. 2013, 116, 157–167. [Google Scholar] [CrossRef]

- Landau, J.D.; Druen, P.B.; Arcuri, J.A. Methods for helping students avoid plagiarism. Teach. Psychol. 2002, 29, 112–115. [Google Scholar] [CrossRef]

- Mozgovoy, M.; Kakkonen, T.; Cosma, G. Automatic Student Plagiarism Detection: Future Perspectives. J. Educ. Comput. Res. 2010, 43, 511–531. [Google Scholar] [CrossRef]

- Curno, M.J. Challenges to ethical publishing in the digital era. J. Inf. Commun. Ethics Soc. 2016, 14, 4–15. [Google Scholar] [CrossRef]

- Crossref. Org: Similarity Check. Available online: http://www.crossref.org/crosscheck/index.html (accessed on 12 August 2019).

- Turnitin-Home. Available online: http://turnitin.com/en_us/home (accessed on 24 January 2018).

- Plagiarism Detection Software | iThenticate. Available online: http://www.ithenticate.com (accessed on 24 January 2018).

- Jones, K.O. Practical issues for academics using the Turnitin plagiarism detection software; ACM: New York, NY, USA, 2008; p. 50. [Google Scholar]

- Búsqueda y Seguimiento de Plagios: Plagium. Available online: http://www.plagium.com/ (accessed on 24 January 2018).

- Plagiarism Checker Online. Available online: http://plagiarismdetect.org/ (accessed on 24 January 2018).

- Free Online Proofreader: Grammar Check, Plagiarism Detection, and More. Available online: https://www.paperrater.com/ (accessed on 24 January 2018).

- Plagiarism Checker-Free Online Software For Plagiarism Detection. Available online: https://www.duplichecker.com/ (accessed on 24 January 2018).

- Viper Plagiarism Checker. Available online: https://www.scanmyessay.com (accessed on 24 January 2018).

- Plagiarism Detector is Best Free Plagiarism Checker for Students. Available online: https://plagiarismdetector.net/ (accessed on 24 January 2018).

- Plagiarism Checker | Grammarly. Available online: https://www.grammarly.com/plagiarism-checker (accessed on 24 January 2018).

- Mulligan, A.; Hall, L.; Raphael, E. Peer review in a changing world: An international study measuring the attitudes of researchers. J. Am. Soc. Inf. Sci. Technol. 2013, 64, 132–161. [Google Scholar] [CrossRef]

- Greenberg, J.; White, H.C.; Carrier, S.; Scherle, R. A Metadata Best Practice for a Scientific Data Repository. J. Libr. Metadata 2009, 9, 194–212. [Google Scholar] [CrossRef]

- Mayernik, M.S.; Callaghan, S.; Leigh, R.; Tedds, J.; Worley, S. Peer Review of Datasets: When, Why, and How. Bull. Am. Meteorol. Soc. 2015, 96, 191–201. [Google Scholar] [CrossRef]

- Kratz, J.E.; Strasser, C. Researcher Perspectives on Publication and Peer Review of Data. PLoS ONE 2015, 10, e0117619. [Google Scholar] [CrossRef]

- Disciplinary Metadata | Digital Curation Centre. Available online: http://www.dcc.ac.uk/resources/metadata-standards (accessed on 14 August 2019).

- Registry of Research Data Repositories. Available online: http://www.re3data.org/ (accessed on 27 August 2019).

- DataCite. Available online: https://www.datacite.org/ (accessed on 14 August 2019).

- The Repository-Dryad. Available online: https://datadryad.org/pages/repository (accessed on 14 August 2019).

- Zenodo. Available online: www.zenodo.org (accessed on 14 August 2019).

- Thelwall, M.; Kousha, K. Figshare: A universal repository for academic resource sharing? Online Inf. Rev. 2016, 40, 333–346. [Google Scholar] [CrossRef]

- Granovsky, Y.V. Is It Possible to Measure Science? V. V. Nalimov’s Research in Scientometrics. Scientometrics 2001, 52, 127–150. [Google Scholar] [CrossRef]

- Pritchard, A. Statistical Bibliography or Bibliometrics. J. Doc. 1969, 24, 348–349. [Google Scholar]

- Web of Science-Please Sign In to Access Web of Science. Available online: http://login.webofknowledge.com/error/Error?Error=IPError&PathInfo=%2F&RouterURL=http%3A%2F%2Fwww.webofknowledge.com%2F&Domain=.webofknowledge.com&Src=IP&Alias=WOK5 (accessed on 24 January 2018).

- About Google Scholar. Available online: https://scholar.google.com/intl/en/scholar/about.html (accessed on 24 January 2018).

- Garfield, E. Citation indexes for science; a new dimension in documentation through association of ideas. Science 1955, 122, 108–111. [Google Scholar] [CrossRef]

- Mingers, J.; Leydesdorff, L. A review of theory and practice in scientometrics. Eur. J. Oper. Res. 2015, 246, 1–19. [Google Scholar] [CrossRef]

- Torres-Salinas, D.; Cabezas-Clavijo, Á.; Jiménez-Contreras, E. Altmetrics: New Indicators for Scientific Communication in Web 2.0. Comunicar 2013, 21, 53–60. [Google Scholar] [CrossRef]

- Chin Roemer, R.; Borchardt, R. Major Altmetrics Tools. Libr. Technol. Rep. 2015, 51, 11–19. [Google Scholar]

- Priem, J.; Taraborelli, D.; Groth, P.; Neylon, C. Altmetrics: A manifesto. Available online: http://altmetrics.org/manifiesto (accessed on 4 June 2019).

- Thelwall, M.; Haustein, S.; Larivière, V.; Sugimoto, C.R. Do Altmetrics Work? Twitter and Ten Other Social Web Services. PLoS ONE 2013, 8, e64841. [Google Scholar] [CrossRef] [PubMed]

- Costas, R.; Zahedi, Z.; Wouters, P. Do “altmetrics” correlate with citations? Extensive comparison of altmetric indicators with citations from a multidisciplinary perspective: Do “Altmetrics“ Correlate With Citations? J. Assoc. Inf. Sci. Technol. 2015, 66, 2003–2019. [Google Scholar] [CrossRef]

- Papers app. Available online: https://www.papersapp.com/ (accessed on 4 June 2019).

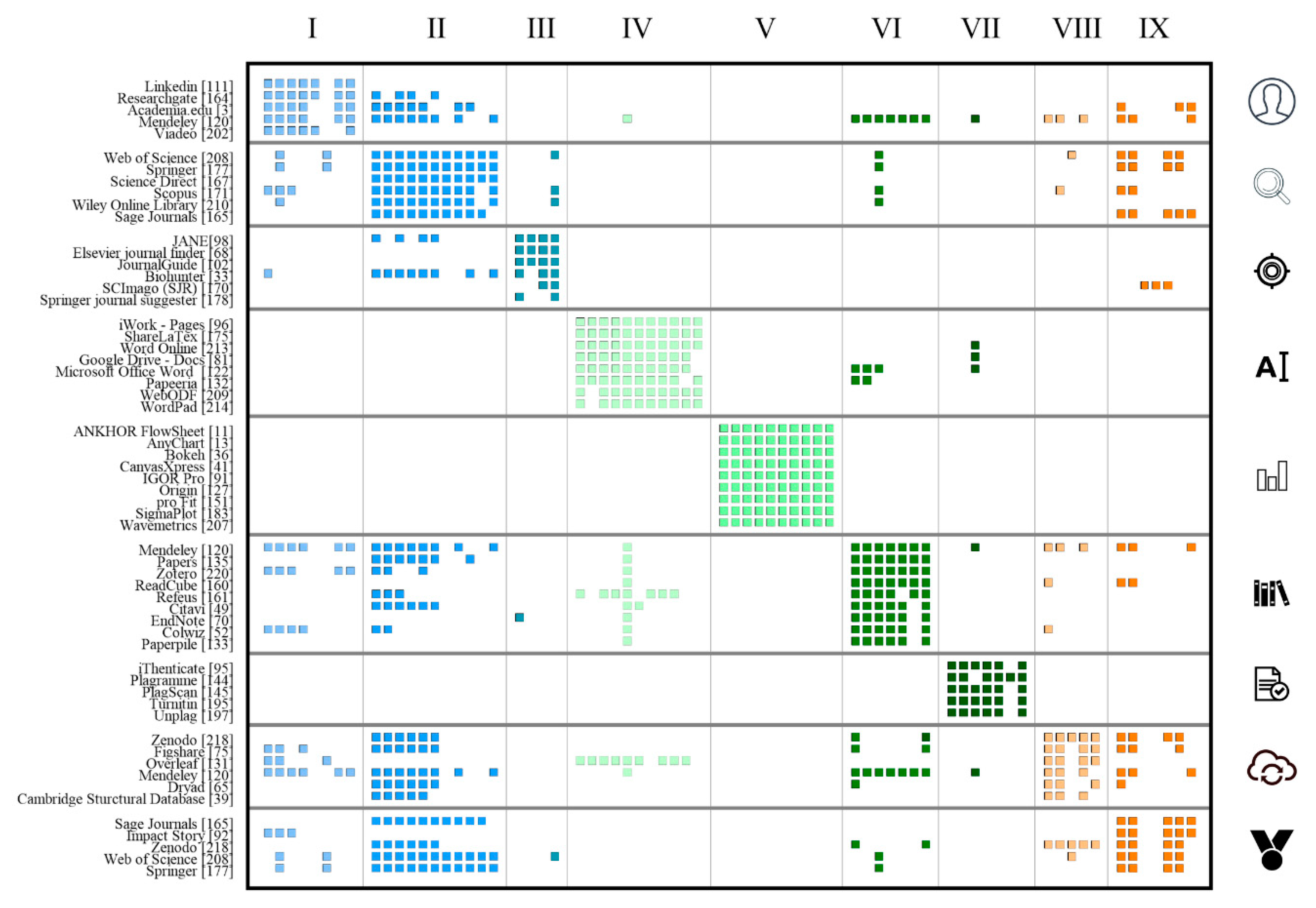

| Category | ITC Function |

|---|---|

| I. Identification and Social media | Pinpoint author’s identity among academics and provide means to relate them to their peers |

| II. Academic search engines | Found and retrieve academic information such as articles, books, and datasets |

| III. Journal-Abstract matchmakers | Suggest submission instances for ongoing research |

| IV. Collaborative text editors | Develop manuscript towards submission on a scholarly instance |

| V. Data visualization and analysis | Process data and generate visual support aids for a manuscript |

| VI. Reference management | Ease citation and manage references within a document |

| VII. Proofreading and plagiarism detection | Revise a document for potential grammar, spelling, and plagiarism issues |

| VIII. Data archiving | Sustain permanent access to scientific and scholarly data |

| IX. Scientometrics and Altmetrics | Track performance of authors and contributions among Academy and Social Media |

| Category | I | II | III | IV | V | VI | VII | IX | Overall Score |

|---|---|---|---|---|---|---|---|---|---|

| Mendeley [120] | 6 | 8 | - | 1 | - | 7 | 1 | 3 | 29/70 |

| Web of Science [208] | 2 | 11 | 1 | - | - | 1 | - | 1 | 20/70 |

| Scopus [171] | 3 | 10 | 1 | - | - | 1 | - | 1 | 18/70 |

| Springer [177] | 2 | 11 | - | - | - | 1 | - | - | 18/70 |

| Figshare [75] | 3 | 6 | - | - | - | 2 | - | 4 | 18/70 |

| ACI Scholarly Blog Index [5] | 4 | 8 | - | - | - | 2 | - | - | 17/70 |

| Zenodo [218] | - | 6 | - | - | - | 2 | - | 5 | 17/70 |

| Refeus [161] | - | 3 | - | 7 | - | 6 | - | - | 16/70 |

| Zotero [220] | 5 | 3 | - | 1 | - | 7 | - | - | 16/70 |

| Academia.edu [3] | 6 | 7 | - | - | - | - | - | - | 16/70 |

| CiteULike [51] | 5 | 8 | - | - | - | 2 | - | 1 | 16/70 |

| Overleaf [131] | 3 | - | - | 9 | - | - | - | 4 | 16/70 |

| Papers [135] | - | 7 | - | 1 | - | 7 | - | - | 15/70 |

| Sage Journals [165] | - | 10 | - | - | - | - | - | - | 15/70 |

| ACS ChemWorx [6] | 4 | 5 | - | 4 | - | 2 | - | - | 15/70 |

| Citavi [49] | - | 6 | - | 2 | - | 6 | - | - | 14/70 |

| Colwiz [52] | 4 | 2 | - | 1 | - | 6 | - | 1 | 14/70 |

| PubChase [152] | 2 | 8 | - | - | - | 3 | - | 1 | 14/70 |

| Authorea [22] | 4 | - | - | 7 | - | 3 | - | - | 14/70 |

| Microsoft Office Word [122] | - | - | - | 10 | - | 3 | 1 | - | 14/70 |

| Bookends [37] | - | 7 | - | - | - | 5 | - | 1 | 13/70 |

| Qiqqa [155] | - | 6 | - | 1 | - | 4 | - | 2 | 13/70 |

| Google Scholar [82] | 2 | 8 | - | - | - | 2 | - | - | 13/70 |

| Wiley Online Library [210] | 1 | 10 | 1 | - | - | 1 | - | - | 13/70 |

| Fidus Writer [74] | - | - | - | 9 | - | 3 | - | - | 12/70 |

| Papeeria [132] | - | - | - | 10 | - | 2 | - | - | 12/70 |

| Word Online [213] | - | - | - | 11 | - | - | 1 | - | 12/70 |

| Biohunter [33] | 1 | 8 | 3 | - | - | - | - | - | 12/70 |

| Software | Primary Function | Platform | Distribution |

|---|---|---|---|

| Mendeley | Reference manager | Desktop/web | Business to Customer |

| Web of Science | Academic search engine | Web | Business to Business |

| Scopus | Academic search engine | Web | Business to Business |

| Springer | Academic search engine | Web | Business to Business |

| Figshare | Data archiving | Web | Business to Business |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Martínez-López, J.I.; Barrón-González, S.; Martínez López, A. Which Are the Tools Available for Scholars? A Review of Assisting Software for Authors during Peer Reviewing Process. Publications 2019, 7, 59. https://doi.org/10.3390/publications7030059

Martínez-López JI, Barrón-González S, Martínez López A. Which Are the Tools Available for Scholars? A Review of Assisting Software for Authors during Peer Reviewing Process. Publications. 2019; 7(3):59. https://doi.org/10.3390/publications7030059

Chicago/Turabian StyleMartínez-López, J. Israel, Samantha Barrón-González, and Alejandro Martínez López. 2019. "Which Are the Tools Available for Scholars? A Review of Assisting Software for Authors during Peer Reviewing Process" Publications 7, no. 3: 59. https://doi.org/10.3390/publications7030059

APA StyleMartínez-López, J. I., Barrón-González, S., & Martínez López, A. (2019). Which Are the Tools Available for Scholars? A Review of Assisting Software for Authors during Peer Reviewing Process. Publications, 7(3), 59. https://doi.org/10.3390/publications7030059