Solve High-Dimensional Reflected Partial Differential Equations by Neural Network Method

Abstract

1. Introduction

2. Approximating Schemes for Reflected PDEs

2.1. Nonlinear Parabolic Reflected PDEs

2.2. From Reflected PDEs to Related Reflected BSDEs

2.3. Discretizing via Two Approaches

3. Numerical Experiments

3.1. Deep C-N Algorithm for Solving High-Dimensional Nonlinear Reflected PDEs

- ( and the same settings in 2 to 4) is a forward iterative procedure, which is determined by approximating scheme (6); this procedure does not contain any parameters that need to be optimized.

- is a forward iterative procedure too, which is characterized by approximating scheme (7). As in the previous step, no parameters need to be optimized in this operation.

- is the key step of the whole computation. Its goal is to approximate the spatial gradients; the weights of the (N − 1) sub-networks are optimized in this step.

- is a forward iterative procedure that yields the neural network’s final output as the unique approximation of , fully characterized by approximating scheme (14).
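The division of labour among the four operations above (parameter-free forward iterations, plus one step that supplies the spatial gradients) can be illustrated with a minimal single-path sketch. It is not an implementation of approximating schemes (6), (7) and (14), which are not reproduced in this section: an explicit Euler step is swapped in for the forward iterations, the state dynamics dX = σ dW are assumed, and the reflection is replaced by a simple projection onto the obstacle. The names `forward_pass`, `grad_fn`, `driver` and `obstacle` are hypothetical; `grad_fn` stands in for the gradient sub-networks and is called at every step for brevity.

```python
import math
import random


def forward_pass(x0, y0, grad_fn, driver, obstacle, T=1.0, N=20, sigma=1.0, seed=0):
    """One sample path of an Euler-type forward pass with an obstacle projection.

    All callables are hypothetical stand-ins, not the paper's schemes:
      grad_fn(n, x)      -> list of floats, role of the n-th gradient sub-network
      driver(t, x, y, z) -> float, nonlinearity of the PDE
      obstacle(t, x)     -> float, lower barrier for the solution
    """
    rng = random.Random(seed)
    dt = T / N
    x, y = list(x0), y0
    for n in range(N):
        t = n * dt
        # Brownian increment, one component per spatial dimension.
        dw = [rng.gauss(0.0, math.sqrt(dt)) for _ in x]
        # Only this call would carry trainable weights in a real network.
        z = grad_fn(n, x)
        # Parameter-free forward step for the value process.
        y = y - driver(t, x, y, z) * dt + sum(zi * dwi for zi, dwi in zip(z, dw))
        # Parameter-free forward step for the state (assumed dX = sigma dW).
        x = [xi + sigma * dwi for xi, dwi in zip(x, dw)]
        # Assumed stand-in for the reflection: project onto the obstacle.
        y = max(y, obstacle(t + dt, x))
    return x, y


# Example call with trivial placeholders: zero gradients, zero driver, flat obstacle.
x_T, y_T = forward_pass(
    [0.0, 0.0], 1.0,
    grad_fn=lambda n, x: [0.0] * len(x),
    driver=lambda t, x, y, z: 0.0,
    obstacle=lambda t, x: 0.5,
)
```

In a trained model, `grad_fn` would be replaced by the sub-networks and the final value `y_T` would enter the loss; the forward steps themselves contain nothing to optimize.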

3.2. Allen–Cahn Equation

3.3. American Options

4. Discussion and Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Number of Iteration Step | Standard | Relative -Approximate Error | Relative -Approximate Error | Mean Value of Loss Function | Standard Deviation of Loss Function | |
|---|---|---|---|---|---|---|
| 0 | 0.4740 | 0.0514 | 7.9775 | 0.9734 | 0.11630 | 0.02953 |
| 1000 | 0.1446 | 0.0340 | 1.7384 | 0.6436 | 0.00550 | 0.00344 |
| 2000 | 0.0598 | 0.0058 | 0.1318 | 0.1103 | 0.00029 | 0.00006 |
| 3000 | 0.0530 | 0.0002 | 0.0050 | 0.0041 | 0.00023 | 0.00001 |
| 4000 | 0.0528 | 0.0002 | 0.0030 | 0.0022 | 0.00020 | 0.00001 |

| Number of Iteration Step | Standard | Relative -Approximate Error | Relative -Approximate Error | Mean Value of Loss Function | Standard Deviation of Loss Function | |
|---|---|---|---|---|---|---|
| 0 | 0.5021 | 0.0791 | 0.2979 | 0.449313 | 0.137191 | 0.043493 |
| 2000 | 0.0659 | 0.0083 | 0.0131 | 0.011521 | 0.000407 | 0.000142 |
| 4000 | 0.0569 | 0.0021 | 0.0002 | 0.000040 | 0.000201 | 0.000027 |
| 6000 | 0.0531 | 0.0002 | 0.0002 | 0.000013 | 0.000118 | 0.000240 |
| 8000 | 0.0529 | 0.0002 | 0.0002 | 0.000156 | 0.000055 | 0.000012 |
| 10,000 | 0.0528 | 0.0001 | 0.0001 | 0.000117 | 0.000030 | 0.000010 |

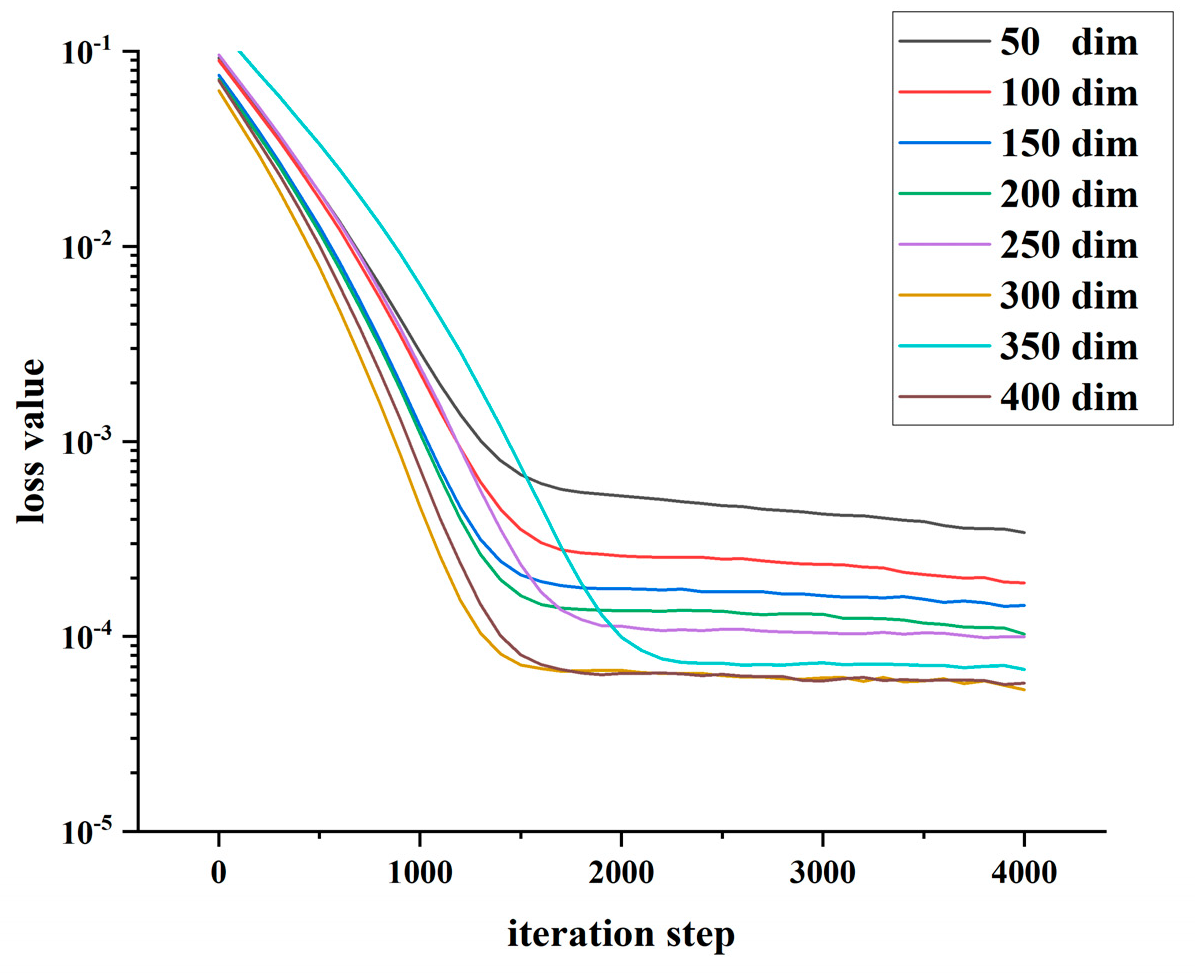

| Dimensions | 50 | 100 | 150 | 200 | 250 | 300 | 350 | 400 |
|---|---|---|---|---|---|---|---|---|
| Deep BSDE | 3.415 × 10⁻⁴ | 1.886 × 10⁻⁴ | 1.44 × 10⁻⁴ | 1.029 × 10⁻⁴ | 9.973 × 10⁻⁵ | 5.330 × 10⁻⁵ | 7.789 × 10⁻⁵ | 5.774 × 10⁻⁵ |
| Deep C-N | 1.095 × 10⁻⁴ | 3.095 × 10⁻⁵ | 1.853 × 10⁻⁵ | 1.435 × 10⁻⁵ | 1.398 × 10⁻⁵ | 1.303 × 10⁻⁵ | 1.337 × 10⁻⁵ | 1.506 × 10⁻⁵ |

| Models | Dimensions | Value | Reference | Relative Error |
|---|---|---|---|---|
| Deep C-N | 5 | 0.10720 | 0.10738 | 0.17% |
| RDBDP | 5 | 0.10657 | 0.10738 | 0.75% |
| Deep BSDE | 5 | NC | 0.10738 | NC |
| Deep C-N | 10 | 0.12687 | 0.12996 | 2.38% |
| RDBDP | 10 | 0.12829 | 0.12996 | 1.29% |
| Deep BSDE | 10 | NC | 0.12996 | NC |
| Deep C-N | 20 | 0.15140 | 0.15100 | 0.27% |
| RDBDP | 20 | 0.14430 | 0.15100 | 4.38% |
| Deep BSDE | 20 | NC | 0.15100 | NC |
| Deep C-N | 40 | 0.16213 | 0.16800 | 3.49% |
| RDBDP | 40 | 0.16167 | 0.16800 | 3.77% |
| Deep BSDE | 40 | NC | 0.16800 | NC |
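The Relative Error column can be checked against the Value and Reference columns. The short sketch below assumes the usual definition |value − reference| / |reference|, expressed in percent; the table itself does not state the formula, and the function name `relative_error` is illustrative. Only the Deep C-N rows are used.

```python
def relative_error(value: float, reference: float) -> float:
    """Relative error in percent, assuming |value - reference| / |reference|."""
    return abs(value - reference) / abs(reference) * 100.0


# Deep C-N rows copied from the table: (dimension, value, reference).
deep_cn_rows = [
    (5, 0.10720, 0.10738),
    (10, 0.12687, 0.12996),
    (20, 0.15140, 0.15100),
    (40, 0.16213, 0.16800),
]

for dim, value, reference in deep_cn_rows:
    print(f"d = {dim}: {relative_error(value, reference):.2f}%")
```

Because the tabulated values are themselves rounded, the recomputed percentages may differ from the table in the last displayed digit.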


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Shi, X.; Zhang, X.; Tang, R.; Yang, J. Solve High-Dimensional Reflected Partial Differential Equations by Neural Network Method. Math. Comput. Appl. 2023, 28, 79. https://doi.org/10.3390/mca28040079
