Approximating the Steady-State Temperature of 3D Electronic Systems with Convolutional Neural Networks

Abstract

1. Introduction

2. Materials and Methods

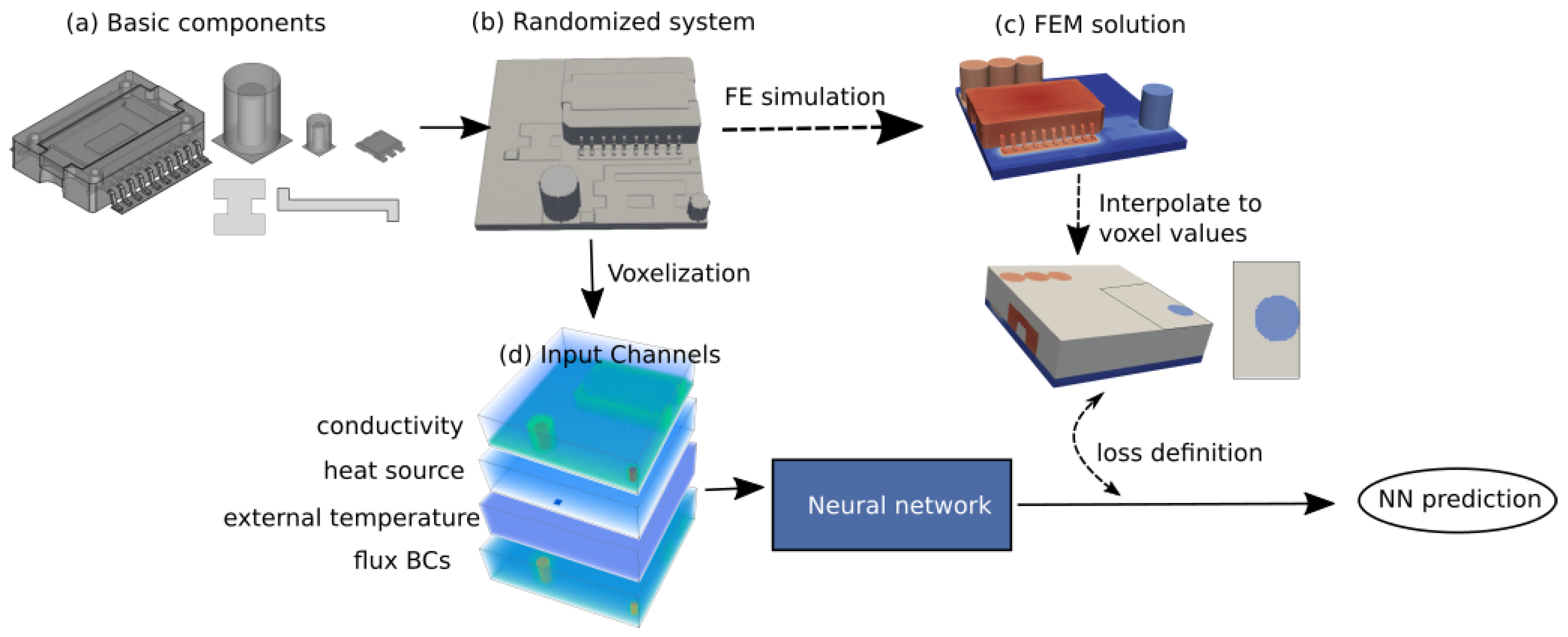

2.1. Dataset Generation

- (1) System generation: For each system, the number and type of basic components were chosen at random, and the components were placed at random locations on a PCB. Material parameters were assigned to the different parts of each component.

- (2) Generation of FEM solutions: A constant temperature was set at the bottom of the PCB. A heat sink on top of the large IC was mimicked by a heat flux boundary condition. All other outer surfaces were modelled as heat flux boundaries to air. For each of the created systems, an external temperature and the heat transfer coefficients to air and for the sink were chosen at random. Heat sources (i.e., electric losses) with random magnitude were assigned to some of the components. The systems were then meshed, and FEM simulations were performed to obtain the temperature solutions.

- (3) Voxelization: During postprocessing, the systems and the FEM solutions were converted to a set of 3D images per system as input for the NN. Four 3D images were created per system, one each for the distribution of a material property, the external temperature, the heat sources, and the heat transfer coefficient.
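The voxelization step above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the axis-aligned box geometry, the `voxelize` function, the dictionary keys, and the choice to write the external temperature and heat transfer coefficient only on surface voxels are all assumptions made for illustration. The fixed bottom temperature and the heat sink are omitted for brevity.

```python
import numpy as np

def voxelize(boxes, grid_shape, voxel_size, t_ext, h_air):
    """Rasterize axis-aligned boxes into four 3D images (hypothetical layout).

    Channel order follows the text: material property, external temperature,
    heat sources, heat transfer coefficient.
    """
    k_img = np.zeros(grid_shape)  # material property (here: conductivity)
    q_img = np.zeros(grid_shape)  # heat sources
    for box in boxes:
        lo = np.rint(np.asarray(box["min"]) / voxel_size).astype(int)
        hi = np.rint(np.asarray(box["max"]) / voxel_size).astype(int)
        region = tuple(slice(a, b) for a, b in zip(lo, hi))
        k_img[region] = box["k"]           # later boxes overwrite earlier ones
        q_img[region] = box.get("q", 0.0)

    # A solid voxel is "interior" if all six face neighbours are solid too.
    solid = k_img > 0
    padded = np.pad(solid, 1)  # pad with False so grid edges count as air
    interior = np.ones(grid_shape, dtype=bool)
    for axis in range(3):
        for shift in (-1, 1):
            interior &= np.roll(padded, shift, axis=axis)[1:-1, 1:-1, 1:-1]
    surface = solid & ~interior

    # Assumption: boundary data is stored only on surface voxels.
    t_img = np.where(surface, t_ext, 0.0)
    h_img = np.where(surface, h_air, 0.0)
    return np.stack([k_img, t_img, q_img, h_img])

# Illustrative system: an FR4 board with one silicon block carrying a heat source.
boxes = [
    {"min": (0, 0, 0), "max": (8, 8, 1), "k": 0.25},
    {"min": (2, 2, 1), "max": (5, 5, 3), "k": 148.0, "q": 10.0},
]
images = voxelize(boxes, grid_shape=(8, 8, 4), voxel_size=1.0, t_ext=25.0, h_air=10.0)
print(images.shape)
```

Stacking the four images along a leading axis gives a channel-first array that a 3D CNN can consume directly.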

2.2. NN Architecture

2.2.1. Properties of Heat Propagation

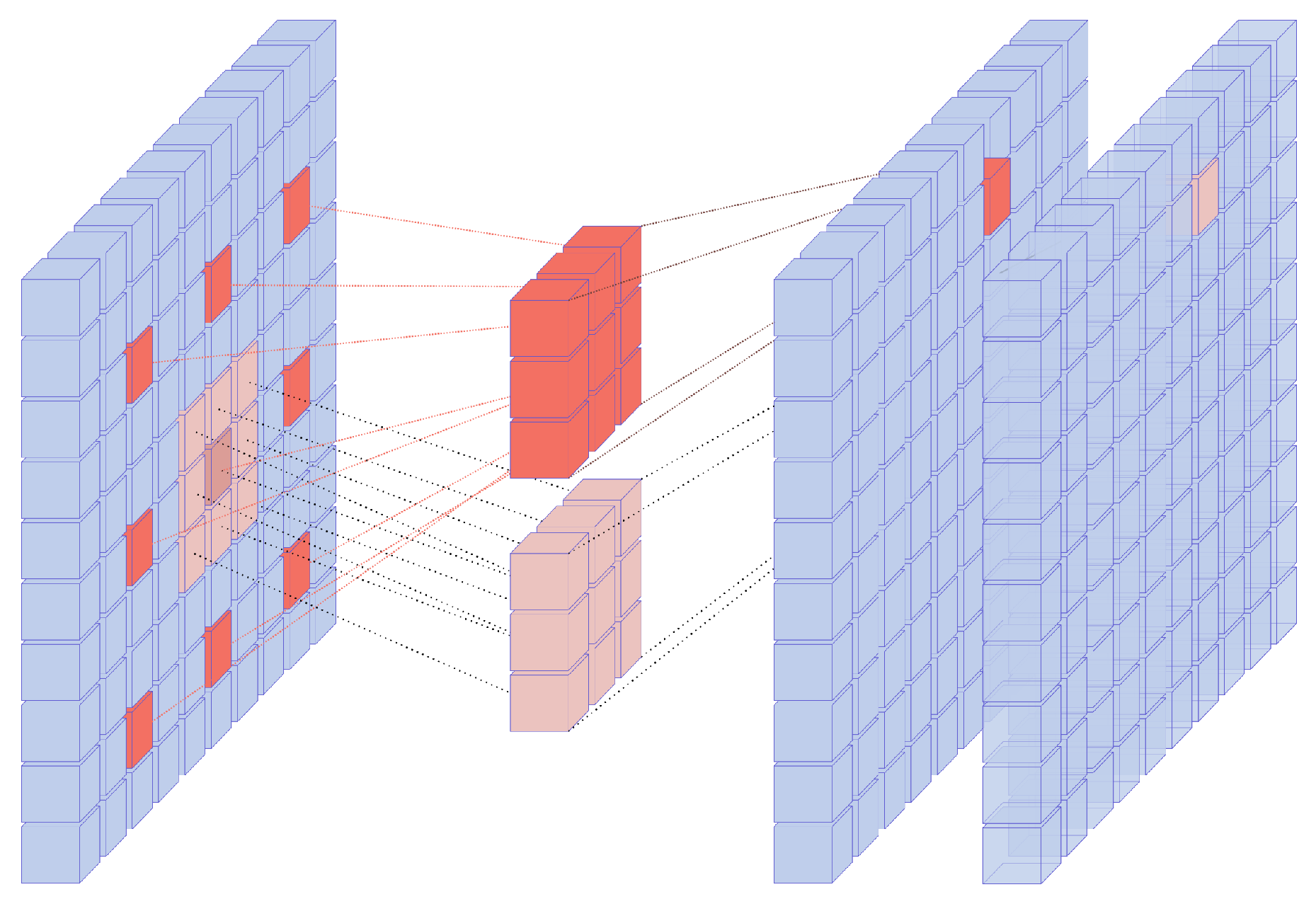

2.2.2. Long-Range Correlations. Fusion Blocks

2.2.3. Choice of Activation Functions

2.2.4. Input to the Network

2.2.5. Network Architecture

- Too many downsampling layers had a damaging effect on the accuracy of the output. Downsampling in CNNs is used to extract useful features from images. In our case, the most relevant features are already part of the input, as discussed above. The main reasons for downsampling in our case are to aggregate long-range effects in addition to the dilation in the fusion blocks, and to reduce the memory requirements. Thus, only two downsampling layers were used.

- As is well known in FCNs, skip connections help avoid the usual checkerboard artifacts in the output. In this work we found that using three additive skip connections led to the best results. We had skip connections from the output of the fusion blocks to the input of the two upsampling layers (transpose convolutions) and the final convolutional layer, respectively.

- An initial depthwise fusion block (depthwise means that channels are not mixed) provides the necessary additional preprocessing of the input data.
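The three design points above (two downsampling layers, three additive skip connections, and a depthwise fusion block) can be sketched in plain NumPy. This is only a structural illustration under stated assumptions. The fusion block is modelled here as a sum of depthwise dilated convolutions, and average pooling and nearest-neighbour upsampling stand in for the learned strided and transpose convolutions. The leaky-ReLU placeholder, the channel counts, and all function names are hypothetical.

```python
import numpy as np

def depthwise_dilated_conv3d(x, kernels, dilation):
    """Filter each channel of x (C, D, H, W) with its own 3x3x3 kernel.

    Channels are never mixed (depthwise); zero padding keeps the spatial size.
    """
    _, D, H, W = x.shape
    p = dilation
    xp = np.pad(x, ((0, 0), (p, p), (p, p), (p, p)))
    out = np.zeros(x.shape, dtype=float)
    for i in range(3):
        for j in range(3):
            for k in range(3):
                window = xp[:, i * p:i * p + D, j * p:j * p + H, k * p:k * p + W]
                out += kernels[:, i, j, k][:, None, None, None] * window
    return out

def fusion_block(x, kernels, dilations=(1, 2, 4)):
    """Assumed fusion block: sum of depthwise convolutions at several dilations."""
    return sum(depthwise_dilated_conv3d(x, kernels[n], d)
               for n, d in enumerate(dilations))

def downsample(x):
    """Stride-2 average pooling (stand-in for a learned strided convolution)."""
    C, D, H, W = x.shape
    return x.reshape(C, D // 2, 2, H // 2, 2, W // 2, 2).mean(axis=(2, 4, 6))

def upsample(x):
    """Nearest-neighbour upsampling (stand-in for a transpose convolution)."""
    return x.repeat(2, axis=1).repeat(2, axis=2).repeat(2, axis=3)

def forward(x, params):
    f0 = fusion_block(x, params["fuse0"])               # full resolution
    f1 = fusion_block(downsample(f0), params["fuse1"])  # 1/2 resolution
    f2 = fusion_block(downsample(f1), params["fuse2"])  # 1/4 resolution
    b = np.maximum(f2, 0.01 * f2)   # placeholder for coarse-level processing
    u1 = upsample(b + f2)           # skip 1: into the first upsampling layer
    u2 = upsample(u1 + f1)          # skip 2: into the second upsampling layer
    # skip 3: into the final layer, a 1x1x1 convolution that mixes channels
    return np.tensordot(params["mix"], u2 + f0, axes=1)

rng = np.random.default_rng(0)
C = 4  # one channel per input image
params = {name: rng.normal(scale=0.1, size=(3, C, 3, 3, 3))
          for name in ("fuse0", "fuse1", "fuse2")}
params["mix"] = rng.normal(size=(1, C))
x = rng.normal(size=(C, 16, 16, 16))
y = forward(x, params)
print(y.shape)
```

With only two downsampling steps the coarsest grid is a quarter of the input resolution per axis, and the additive skips require matching shapes at each level, which is why the channel count is kept constant in this sketch.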

2.2.6. Objective Function and Training Process

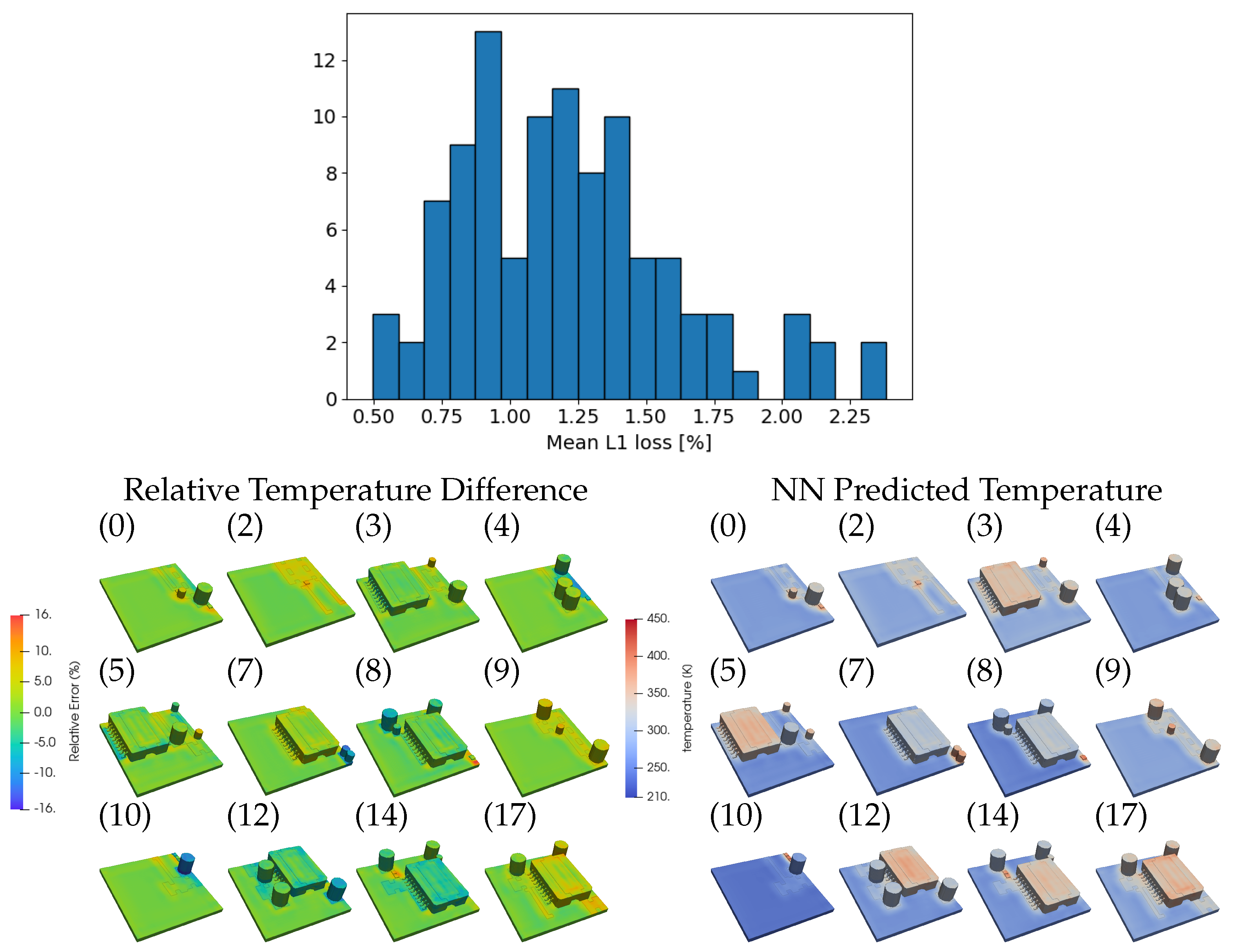

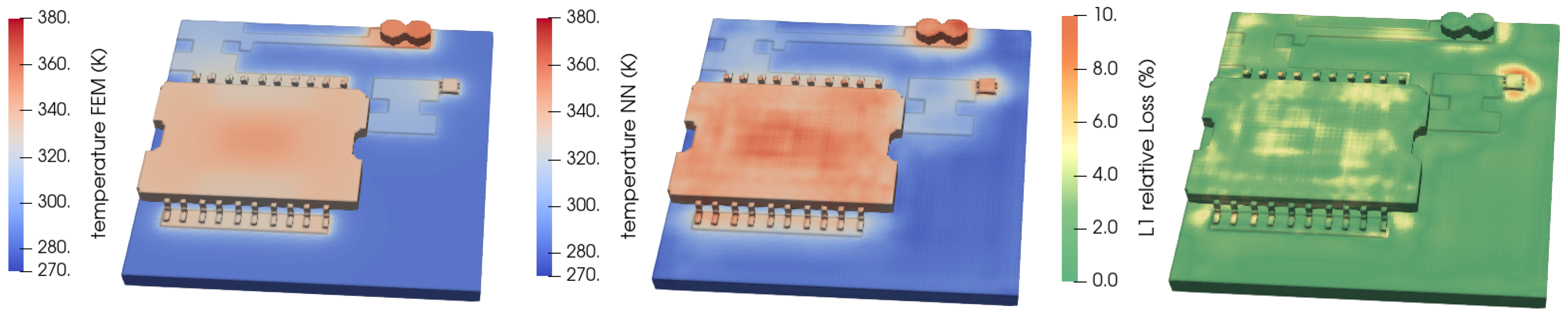

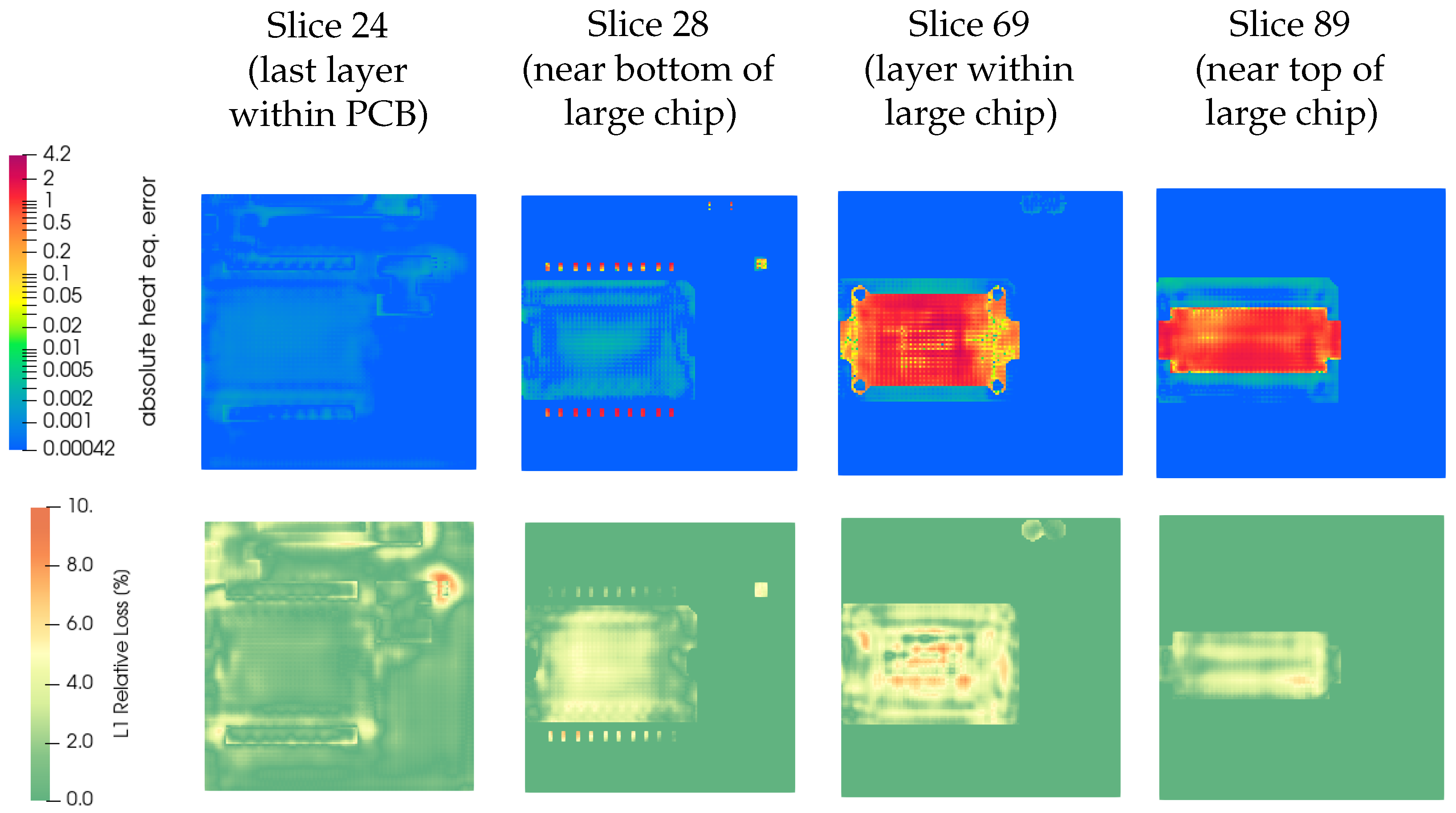

3. Results

Confidence Estimation

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A. System Generation Details

Appendix A.1. System Generation

| Material | Avg. thermal conductivity k (W/(m K)) | Avg. specific heat capacity (J/(kg K)) | Avg. density (kg/m³) |
|---|---|---|---|
| Silicon | 148 | 705 | 2330 |
| Copper | 384 | 385 | 8930 |
| Epoxy | 0.881 | 952 | 1682 |
| FR4 | 0.25 | 1200 | 1900 |
| Al2O3 | 35 | 880 | 3890 |
| Aluminum | 148 | 128 | 1930 |

Appendix A.2. Finite Element Simulations

| Component | Min | Max |
|---|---|---|
| Center of large capacitor | 0.1 | 0.3 |
| Silicon die of large chip | 10 | 19 |
| Silicon die of small chip | 0.1 | 0.5 |
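As a sketch of how the ranges in this table could be turned into random heat source magnitudes, the snippet below draws one value per component between the tabulated minimum and maximum. The uniform distribution, the activation probability `p_active`, and all names are assumptions, since the text only says that magnitudes were random and assigned to some of the components. The values carry whatever unit the table uses.

```python
import numpy as np

# (min, max) per component, copied from the table above.
HEAT_SOURCE_RANGES = {
    "large_capacitor_center": (0.1, 0.3),
    "large_chip_die": (10.0, 19.0),
    "small_chip_die": (0.1, 0.5),
}

def sample_heat_sources(components, rng, p_active=0.5):
    """Assign a random magnitude to a random subset of components.

    p_active is a hypothetical probability that a component dissipates heat;
    the uniform distribution is an assumption as well.
    """
    sources = {}
    for name in components:
        lo, hi = HEAT_SOURCE_RANGES[name]
        if rng.random() < p_active:
            sources[name] = rng.uniform(lo, hi)
    return sources

rng = np.random.default_rng(0)
sources = sample_heat_sources(list(HEAT_SOURCE_RANGES), rng, p_active=1.0)
print(sources)
```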

Appendix A.3. Voxelization

Appendix B. Introduction to ANNs


| NN, GPU Transfer | NN Inference | Total NN | FEM (Single Core) |
|---|---|---|---|
| 0.033 s | 0.002 s | 0.035 s | 160 s |
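Read as a ratio, the timings in this table imply the following speed-up. The symbols are introduced here for illustration only, and the total NN time is taken as the sum of transfer and inference time, which matches the tabulated 0.035 s.

```latex
t_{\mathrm{NN}} = t_{\mathrm{transfer}} + t_{\mathrm{inference}}
               = 0.033\,\mathrm{s} + 0.002\,\mathrm{s} = 0.035\,\mathrm{s},
\qquad
\frac{t_{\mathrm{FEM}}}{t_{\mathrm{NN}}}
  = \frac{160\,\mathrm{s}}{0.035\,\mathrm{s}} \approx 4.6 \times 10^{3}
```

Most of the NN time is the transfer to the GPU. Counting inference alone, the ratio would be 160 s / 0.002 s = 8 × 10⁴.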

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Stipsitz, M.; Sanchis-Alepuz, H. Approximating the Steady-State Temperature of 3D Electronic Systems with Convolutional Neural Networks. Math. Comput. Appl. 2022, 27, 7. https://doi.org/10.3390/mca27010007
