1. Introduction

Due to rising global energy demand and increasing extraction difficulties, late-stage oilfields face depleted formation energy and insufficient fluid supply. Intermittent oil extraction technology, as an effective means of addressing this issue, can significantly improve oil well extraction efficiency and reduce energy consumption costs by periodically controlling the production and shutdown processes of oil wells.

However, traditional pumping control still suffers from several well-known inefficiencies. Specifically, conventional beam-pump (sucker rod) systems exhibit very low energy efficiency, with overall system efficiency as low as 12–23% [1]. Large-scale statistical analysis of nearly 45,000 wells in China further shows that under low daily production conditions, which are typical of late-stage or depleted wells, the normalized energy consumption (kW·h per ton per 100 m lift) increases sharply, meaning that a substantial portion of the input electrical energy is wasted as mechanical losses, friction, unbalanced torque, and pump-off empty pumping [2]. These results quantitatively confirm that traditional continuous pumping control entails substantial energy losses and inefficiencies. Intermittent pumping relies heavily on precise scheduling, as the timing of pump start-up and shutdown directly determines whether liquids are fully extracted, whether the pump runs dry, and overall energy consumption. Any deviation significantly impacts production efficiency and economic benefits, whereas continuous pumping does not exhibit this cyclical dependency. Therefore, determining an optimal intermittent pumping schedule that balances production, energy use, and equipment lifespan remains a major challenge. Recent multi-objective optimization work on low-permeability oil wells further confirms that appropriately tuned production parameters can significantly enhance energy efficiency while maintaining liquid production, highlighting the need to systematically design intermittent control regimes rather than relying solely on empirical rules [3].

Existing studies have provided an important theoretical foundation for the optimization of intermittent oil extraction systems. Liang et al. [4] analyzed liquid level dynamics using power curves and manometer data, and proposed an intermittent extraction method based on liquid level recovery to determine the optimal operating time. However, this method focuses on analyzing single-well production parameters and does not adequately consider complex factors such as geological conditions. Sun et al. [5] utilized data mining techniques to construct a mathematical model of the temporal variation in dynamic liquid level height during intermittent shutdown periods and pumping periods, and combined this with a particle swarm optimization algorithm to achieve intelligent optimization of the intermittent pumping regime, significantly enhancing oil recovery efficiency and economic benefits. However, such data-driven methods often lack deep integration with reservoir physical mechanisms, and the generalization capability and physical interpretability of the models require further enhancement. In the context of gas field development, Cai et al. [6] proposed an optimization strategy for intermittent production wells in the Jingbian Gas Field that jointly considers single-well schedules and staggered multi-well operation under surface-network constraints, thereby improving both well-level production efficiency and gathering system stability. For shale gas wells, Fan et al. [7] established an intermittent optimization framework based on reservoir–wellbore coupling, in which transient two-phase flow and pressure transmission are explicitly modeled to achieve more stable and efficient intermittent production.

Within the broader context of petroleum engineering practice, physically based numerical simulation has become an essential tool for analyzing complex flow processes and guiding optimized engineering design. Li et al. [8] addressed the issue of gas hydrate formation within gas–water multiphase flows in wellbore and subsea gathering systems during deepwater turbidite reservoir development. They established a coupled multiphase flow and heat transfer numerical model for wellbore–subsea pipelines, quantitatively predicting hydrate formation risks in a deepwater gas field in the South China Sea. This provided a basis for wellbore insulation, choke control, and anti-blocking measures in the gathering system. Wu and Ansari [9] developed an integrated numerical simulation framework encompassing CO₂ geological sequestration and hydrogen storage for depleted gas reservoir reuse. The framework systematically assessed formation pressure response, fluid phase evolution, and potential leakage risks under different injection–production scenarios, providing quantitative support for reservoir safety evaluation and long-term capacity prediction. Cao et al. [10] employed three-dimensional numerical modeling coupling crosslinker properties with reservoir geological conditions to investigate fracture propagation morphology and flow capacity evolution during coalbed hydraulic fracturing. Their work elucidated the influence of crosslinker type, formation stress, and temperature on fracturing effectiveness. The aforementioned studies demonstrate that numerical simulation has been extensively applied to diverse engineering challenges, including wellbore flow safety, depleted reservoir reactivation, and unconventional reservoir fracturing. This provides a crucial methodological reference for incorporating physical constraints and mechanism analysis into the optimization of the intermittent pumping regimes discussed herein.

In addition to the above approaches, several studies have employed statistical optimization methods such as Response Surface Methodology (RSM) and Analysis of Variance (ANOVA) to evaluate parameter influence and establish empirical optimization models [11]. RSM enables the efficient exploration of parameter interactions and the identification of optimal regions, whereas ANOVA statistically validates the significance of influencing factors. Nevertheless, these methods depend on simplified assumptions and have limited ability to capture the nonlinear and strongly coupled characteristics of oil well production systems. This further demonstrates the need for a more comprehensive framework that integrates physical mechanisms with data-driven modeling. Moreover, dynamic load-optimized selection charts for flexible ultra-long stroke pumping units in low-yield wells have been developed to better match pumping equipment with operating conditions, further emphasizing the role of quantitative design tools in pumping system optimization [12].

Currently, the optimization of the intermittent pumping regime faces numerous challenges. While traditional physical methods can reveal the underlying mechanisms of oil well production, they struggle with parameter selection and adaptability under complex operating conditions. On the other hand, data-driven methods, though effective at processing large datasets, lack physical constraints, leading to unstable predictions when data are insufficient or well conditions change. On the data-driven side, adaptive fusion-based production forecasting models for unconventional oil and gas wells have demonstrated that combining multiple base learners can markedly improve predictive accuracy and robustness in complex reservoirs [13]. Rapid classification and diagnosis workflows for gas wells driven by production data have further shown that high-frequency production monitoring can effectively support the identification of abnormal operating states and operational decision-making [14]. For artificial lift systems, deep recurrent neural network models have been used to predict and optimize the energy consumption of electrical submersible pump well systems, revealing that sequence-learning algorithms can accurately capture the nonlinear mapping between operating parameters and power usage and thus support energy-saving operation strategies [15]. Existing research often uses physical models and data-driven methods in isolation, failing to leverage their synergistic advantages.

To this end, this work proposes a data–physics dual-driven optimization method. On the physics-driven side, the relationships between parameters in physical processes such as reservoir flow, wellbore flow, and pump efficiency variation are derived from physical formulas. The correlations between the parameters of the physical model and the production parameters are then explored, and solving these correlations yields the key parameters that reflect the essence of oil well production: geological factors (porosity, formation coefficient), physical factors (pump diameter, pump efficiency), and production factors (daily liquid production, water cut, stroke length, stroke frequency, and tubing pressure). In this work, ‘physics-driven parameters’ primarily refer to variables that appear directly in the governing equations of reservoir seepage and wellbore flow. These include formation coefficient and porosity, which reflect the porosity–permeability relationship; formation pressure/casing pressure, which characterize the reservoir energy state; and stroke length, stroke frequency, pump diameter, and pump efficiency, which are constrained by pumping unit kinematics and the empirical relationship between submergence and pump efficiency. Correspondingly, daily liquid production and water cut serve as data-driven indicators characterizing production response. On the data-driven side, we collect extensive historical data from the oil well production process, including liquid production volume, water cut, porosity, formation coefficient, stroke length, stroke frequency, pump diameter, and pump efficiency, among other multi-source information.

Subsequently, the data obtained from data-driven and physics-driven approaches are integrated and jointly fed into the improved CatBoost algorithm model as input. The improved CatBoost algorithm dynamically adjusts the hyperparameters through Bayesian optimization, combining a weight adaptation update mechanism with an attention mechanism. This design helps the model capture nonlinear relationships and handle noise and high-dimensional data. Additionally, the introduction of physical parameters provides the model with robust physical constraints, preventing it from producing unreasonable predictions. It ensures that our model not only possesses strong data processing capabilities but also maintains good physical interpretability and generalization ability. By combining the data–physics dual-driven approach with the improved CatBoost algorithm, our model aims to construct an efficient, precise, and adaptable intermittent oil production regime optimization system, providing strong technical support and decision-making basis for intelligent oilfield development and efficient production.

4. Based on a Data–Physics Dual-Driven Approach

4.1. Physics-Driven Method

According to the basic principles of seepage mechanics, reservoir physics, and reservoir engineering, the oil production rate is related to the flow coefficient $C$, average formation pressure $p_e$, exponent $n$, permeability $k$, wellbore flowing pressure $p_{wf}$, fluid viscosity $\mu$, flow path length $L$, and flow cross-sectional area $A$. These quantities form the physics-driven parameters.

In classical theories of seepage mechanics and reservoir engineering, physical parameters such as average formation pressure, bottomhole flowing pressure, permeability, and flow cross-sectional area are key factors governing the inflow capacity of production wells. To employ a production relationship with a clear engineering basis that facilitates subsequent modeling, we select the classical Fetkovich empirical production equation to describe the inflow performance of a single well [30]. Its form is as follows:

$$Q = C\left(p_e^{2} - p_{wf}^{2}\right)^{n} \tag{1}$$

where $Q$ denotes the wellbore liquid production rate, reflecting the output capacity of the producing well; $p_e$ represents the average formation pressure; $p_{wf}$ denotes the wellbore flowing pressure; $C$ is the flow coefficient; and $n$ is the empirical exponent characterizing nonlinear flow behavior (e.g., $n \approx 1$ typically applies to dissolved gas-driven reservoirs, whereas $n > 1$ is often observed in low-permeability reservoirs). This formulation was first proposed by Fetkovich based on multi-point back-pressure testing and has since been widely adopted in subsequent research and engineering practice to describe the empirical relationship between production well output and pressure.

In addition, Darcy’s law [31] shows that, for single-phase laminar flow in a porous medium, the volumetric flow rate is proportional to the permeability $k$ and the flow cross-sectional area $A$, as well as to the difference between the reservoir pressure and the bottomhole flowing pressure, and inversely proportional to the fluid viscosity $\mu$ and the flow path length $L$. The classical one-dimensional form of Darcy’s law can be written as follows:

$$q = \frac{kA\left(p_e - p_{wf}\right)}{\mu L} \tag{2}$$

where

$k$ is the permeability, which measures the ability of the formation to transmit fluids. The higher the permeability, the greater the formation’s ability to conduct fluid and the larger the flow rate per unit time $q$.

$A$ is the flow cross-sectional area, which refers to the effective area through which the oil flows, such as the area of a seepage channel in a wellbore or formation. As the cross-sectional area increases, the flow rate per unit time increases.

$\mu$ is the fluid viscosity, which measures the internal resistance of the fluid to flow. The greater the viscosity, the greater the resistance to flow and the lower the flow rate.

$L$ is the flow path length, i.e., the distance the fluid travels inside the formation from the start of seepage to the wellbore. In deep reservoirs or highly porous formations, a longer flow path usually means a greater pressure drop and lower production.

The derivation of Equations (1) and (2) is based upon several standard assumptions in seepage mechanics:

- (1) It is assumed that the reservoir contains a single-phase, slightly compressible liquid flow, with the porous medium being homogeneous and isotropic. Consequently, within each pumping cycle, the lithology and fluid properties within the control volume do not undergo significant variation.

- (2) It is assumed that flow is laminar radial flow, rendering Darcy’s law applicable at the study scale.

- (3) Porosity, permeability, and fluid viscosity are treated as near-constant values over short timeframes, whilst capillary and gravitational effects are neglected relative to viscous pressure loss.

- (4) The system is assumed to be in a boundary-controlled (quasi-steady) flow phase, allowing the driving force to be characterized by the average formation pressure $p_e$ and the wellbore flowing pressure $p_{wf}$, with wellbore storage and skin effects lumped into the flow coefficient $C$ in Equation (1).

Therefore, fluid production from wells can be improved by optimizing formation pressure management, lowering bottomhole flowing pressure, increasing permeability, and decreasing fluid viscosity. Furthermore, Khalaf et al. [32] proposed a method for removing wellbore storage effects from pressure curves, based on a stable deconvolution algorithm. This approach addresses the distortion of pressure curves caused by wellbore storage effects, enabling the recovery of more accurate formation pressure response curves even under noisy conditions and variable flow rates. It provides a significant analytical tool for interpreting well testing data in complex operational scenarios.

In summary, the physics-driven section of this work explicitly employs two key seepage equations: firstly, the Fetkovich-type inflow performance relation (Equation (1)), which characterizes the nonlinear pressure–production relationship between average formation pressure, bottomhole flowing pressure, and fluid production rate; secondly, Darcy’s law (Equation (2)), which constrains the combined influence of permeability, flow channel cross-sectional area, viscosity, and seepage path length on flow rate.
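The two relations summarized above can be evaluated numerically. The following is a minimal sketch, assuming the commonly quoted squared-pressure form of the Fetkovich relation and the one-dimensional form of Darcy’s law; the function names and all numerical values are hypothetical and are given in arbitrary but consistent units.

```python
def fetkovich_rate(c, p_e, p_wf, n):
    """Fetkovich-type inflow rate: Q = C * (p_e**2 - p_wf**2)**n."""
    if p_wf > p_e:
        raise ValueError("flowing pressure must not exceed formation pressure")
    return c * (p_e ** 2 - p_wf ** 2) ** n


def darcy_rate(k, area, p_e, p_wf, mu, length):
    """One-dimensional Darcy flow rate: q = k * A * (p_e - p_wf) / (mu * L)."""
    return k * area * (p_e - p_wf) / (mu * length)


# Hypothetical illustration: lowering the flowing pressure raises both rates.
for p_wf in (8.0, 6.0, 4.0):
    q_inflow = fetkovich_rate(c=0.05, p_e=10.0, p_wf=p_wf, n=0.9)
    q_darcy = darcy_rate(k=0.2, area=3.0, p_e=10.0, p_wf=p_wf, mu=1.5, length=40.0)
    print(p_wf, round(q_inflow, 4), round(q_darcy, 4))
```

Both functions are monotonic in the drawdown, which is the qualitative behavior the physics-driven parameters are meant to encode.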

4.2. Data-Driven Method

A total of 19 production parameters were collected, namely “Well Number”, “Intermittent Pumping Type”, “Block”, “Horizon”, “Gas-Oil Ratio”, “Crude Oil Viscosity”, “Stroke Length”, “Stroke Frequency”, “Pump Diameter”, “Pump Efficiency”, “Submergence”, “Daily Oil Production”, “Daily Liquid Production”, “Water Cut”, “Tubing Pressure”, “Porosity”, “Formation Coefficient”, “Flowing Bottom-Hole Pressure”, and “Pay Zone”. Pearson correlation analysis is used to screen the key parameters, revealing the linear correlation between equipment operating parameters and production indicators and providing data support for the optimization of the intermittent pumping regime.

The Pearson correlation coefficient measures the linear correlation between two quantitative variables, and the formula is as follows:

$$r = \frac{\sum_{i=1}^{n}\left(x_i - \bar{x}\right)\left(y_i - \bar{y}\right)}{\sqrt{\sum_{i=1}^{n}\left(x_i - \bar{x}\right)^{2}}\,\sqrt{\sum_{i=1}^{n}\left(y_i - \bar{y}\right)^{2}}}$$

where $n$ is the sample size, i.e., the number of observations; $x_i$ denotes the $i$th observation of the variable $X$; $y_i$ denotes the $i$th observation of the variable $Y$; $\bar{x}$ denotes the mean of the variable $X$, i.e., $\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i$; and $\bar{y}$ denotes the mean of the variable $Y$, i.e., $\bar{y} = \frac{1}{n}\sum_{i=1}^{n} y_i$.

$r$ is the Pearson correlation coefficient, which takes values in the range [−1, 1] and measures the strength and direction of the linear correlation between variable $X$ and variable $Y$. The closer $|r|$ is to 1, the stronger the linear correlation between the two variables; the closer $|r|$ is to 0, the weaker the linear correlation. When $r > 0$, the two variables are positively correlated, i.e., when one variable increases, the other tends to increase; when $r < 0$, the two variables are negatively correlated, i.e., when one variable increases, the other tends to decrease.
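As an illustration of the Pearson definition above, the following minimal sketch computes the coefficient directly from the sums of deviations. The function name and the sample values for stroke length and daily liquid production are hypothetical and are not taken from the field dataset.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    ss_x = sum((xi - mean_x) ** 2 for xi in x)
    ss_y = sum((yi - mean_y) ** 2 for yi in y)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return cov / math.sqrt(ss_x * ss_y)


# Hypothetical sample: stroke length (m) against daily liquid production (t/d).
stroke_length = [2.0, 2.5, 3.0, 3.5, 4.0]
daily_liquid = [1.1, 1.4, 1.6, 2.1, 2.3]
print(pearson_r(stroke_length, daily_liquid))
```

In a screening step, this coefficient would be computed for each candidate parameter against the production indicator, and the parameters with the largest absolute values retained.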

According to the relationships among production parameters, dynamic liquid level, oil production, and geological factors, the factors with the greatest influence on oil production are the production parameters of the pumping unit well [33]. The dynamic liquid level is related to submergence, pump setting depth, stroke length, and stroke frequency [34]. Oil production is related to tubing pressure, water cut, and daily oil production/daily liquid production. Geological factors are related to the porosity and formation coefficient. Meanwhile, physical factors during the pumping process, such as pump diameter and pump efficiency, also affect oil production. Pump efficiency increases with submergence, but only up to a reasonable limit; when this limit is exceeded, pump efficiency decreases instead.

4.3. Data–Physics Dual-Driven

In porous media, the relationship between permeability and porosity can be described by the Kozeny–Carman equation, which relates the permeability $k$ to the porosity $\phi$, the particle diameter, and the surface area. In practice, however, permeability and porosity are often obtained by fitting well logging data, and a linear regression model is used:

$$k = a\phi + b$$

where $a$ is the slope, reflecting the sensitivity of permeability to changes in porosity, and $b$ is the intercept, reflecting the permeability at zero porosity (theoretically 0).

Similarly, according to the pressure gradient equation,

$$\frac{\Delta p}{L} = \frac{\mu q}{kA}$$

where $\Delta p / L$ is the pressure gradient, with $\Delta p = p_e - p_{wf}$. The stroke of the pumping unit directly affects the flow rate per unit time $q$:

$$q = N A S$$

where $N$ is the stroke frequency, $A$ is the flow cross-sectional area, and $S$ is the stroke length.

Substituting the flow rate equation into the pressure gradient equation gives

$$\frac{\Delta p}{L} = \frac{\mu N S}{k}$$

This expression shows that increasing the stroke length increases the displacement per stroke and hence the flow rate per unit time, which raises the pressure gradient. Increasing the stroke frequency raises the working frequency of the pumping unit and directly increases the flow rate, which further raises the pressure gradient and the wellbore flow resistance. Thus, the pressure gradient scales with the stroke length and the stroke frequency:

$$\frac{\Delta p}{L} \propto N S$$

When both the stroke length and the stroke frequency increase, the pressure gradient increases, i.e., the pressure loss per unit length becomes significant. The higher flow velocity caused by a long stroke and a high stroke frequency strengthens the fluid inertia force and raises the flow resistance. When the short-term decay of reservoir energy is expressed in terms of the initial differential pressure $\Delta p_0$ and the flow distance $L$, and the stroke length and stroke frequency relations are substituted into it, a high stroke length and a high stroke frequency are found to accelerate the decay of the differential pressure, so that the bottomhole pressure drops rapidly. If the pressure decays too quickly, the energy of the oil reservoir cannot be replenished in time, which eventually leads to unstable production. Under high-frequency pumping, the flow rate changes significantly, which easily causes pump-off and wellbore fluid accumulation.

Therefore, combining physical parameters with production parameters has better theoretical support.

Based on the calculation using the Pearson correlation coefficient formula, five factors with relatively high correlations were selected: Stroke Length, Daily Liquid Production, Water Cut, Porosity, and Formation Coefficient. The correlation analysis of various factors in the intermittent pumping system is shown in Figure 1.

Considering both physics-driven parameters and data-driven parameters comprehensively, the liquid production is influenced by various production parameters. Finally, a combination of nine parameters is selected: Formation Coefficient, Daily Liquid Production, Porosity, Water Cut, Tubing Pressure, Stroke Length, Stroke Frequency, Pump Diameter, and Pump Efficiency. The correlation analysis diagram of characteristic parameters is shown in Figure 2.

5. Optimization Algorithm for Intermittent Pumping System Driven by Data–Physics Models

Based on the CatBoost regression framework [35], this intermittent pumping regime selection method achieves high-precision prediction of well operation time and intelligent recommendation of intermittent pumping regimes through systematic data preprocessing, feature construction, model training, and automated hyperparameter optimization. Throughout the modeling process, we adhere to the principles of data-driven modeling and engineering usability, and strive to ensure prediction accuracy while improving the model’s stability, generalization ability, and deployability.

5.1. Data Preparation

Through the combination of mathematical modeling and physical mechanism analysis, nine key characteristic parameters that are closely related to the intermittent pumping regime were screened out, namely, formation coefficient, daily liquid production, porosity, water cut, tubing pressure, stroke length, stroke frequency, pump diameter, and pump efficiency. These characteristic variables portray the overall operating status of the wells from three dimensions (geological conditions, process parameters, and equipment performance) and are strongly representative and interpretable. The target variable is set as the “running time” of each round, i.e., the duration of continuous operation after the well is started once, with the aim of accurately predicting the pumping cycle.

In terms of data cleaning, the IQR (interquartile range) method is used to identify and eliminate outlier samples, and records with a high proportion of missing values are deleted to ensure the quality and consistency of the input data. In addition, in order to enhance the adaptability of the model to different operating modes, the original data are divided into two subsets based on the existing “Operating Cycle Classification” field: large cycle (classification labeled as 1) and small cycle (classification labeled as 2). This strategy takes into account the differences in the main factors affecting the operating time under different operating regimes, and modeling them separately helps to improve the model fitting accuracy and avoid data interference between different cycles. In the subsequent modeling, the two subsets are trained and evaluated independently to obtain more targeted optimization suggestions for the intermittent pumping regime.
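The cleaning and splitting steps described above can be sketched as follows. This is an illustrative sketch only: the conventional 1.5 × IQR fence, the choice to apply the IQR rule to the running time, the 30% missing-value threshold, and all names and sample values are assumptions that the text does not specify.

```python
import numpy as np


def iqr_mask(values, k=1.5):
    """True for values inside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, q3 = np.percentile(values, [25, 75])
    iqr = q3 - q1
    return (values >= q1 - k * iqr) & (values <= q3 + k * iqr)


def clean_and_split(features, run_time, cycle_class, max_missing=0.3):
    """Drop sparse records and outliers, then split by cycle class (1 or 2)."""
    # Remove records whose share of missing feature values is too high.
    keep = np.isnan(features).mean(axis=1) <= max_missing
    keep &= ~np.isnan(run_time)
    # Apply the IQR rule to the running time of the remaining records.
    inside = np.zeros_like(keep)
    inside[keep] = iqr_mask(run_time[keep])
    keep &= inside
    subsets = {}
    for label, name in ((1, "large_cycle"), (2, "small_cycle")):
        rows = keep & (cycle_class == label)
        subsets[name] = (features[rows], run_time[rows])
    return subsets


# Hypothetical records: two features per well, running time in hours.
features = np.array([[0.20, 3.0], [0.25, 3.2], [0.30, 2.8], [0.22, 3.1],
                     [0.21, 3.0], [0.28, 2.9], [np.nan, np.nan]])
run_time = np.array([10.0, 11.0, 12.0, 13.0, 100.0, 12.0, 12.0])
cycle_class = np.array([1, 1, 2, 2, 1, 2, 2])
subsets = clean_and_split(features, run_time, cycle_class)
print({name: len(target) for name, (_, target) in subsets.items()})
```

Each subset would then be passed to its own model, in line with the independent training of the large-cycle and small-cycle data.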

5.2. Feature Engineering

To improve the model’s ability to capture complex nonlinear relationships, the feature engineering stage expands the original 9 features with polynomial terms up to third order, generating up to 165 combined features. This operation effectively enhances the model’s ability to express multi-dimensional feature interactions and improves its adaptability to complex working conditions. However, the high-dimensional feature space also increases the amount of redundant information and the risk of overfitting.

In this work, the F-value is introduced as a feature significance index, and the SelectKBest algorithm is used to select the top 15 feature combinations that have a significant effect on the target variable “running time” as the final set of input variables. This retains the nonlinear information while effectively controlling the feature dimension, which improves the generalization performance and computational efficiency of the model. In addition, all features are Z-score standardized to eliminate differences in scale, which further improves the convergence speed and stability of the model during gradient iteration.
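The expansion, F-value ranking, and standardization steps can be illustrated with a NumPy-only sketch. It is a sketch of the idea under stated assumptions rather than the pipeline used in this work: in practice scikit-learn’s `PolynomialFeatures` and `SelectKBest` with `f_regression` are the usual tools. The synthetic data and function names are hypothetical, and standardizing after selection is an assumed ordering. Note that a full expansion of 9 features up to degree 3 yields 219 non-constant terms, 165 of which are pure third-order terms.

```python
from itertools import combinations_with_replacement

import numpy as np


def polynomial_terms(X, degree=3):
    """All monomials of the columns of X up to `degree`, without the constant term."""
    columns, index_sets = [], []
    for d in range(1, degree + 1):
        for idx in combinations_with_replacement(range(X.shape[1]), d):
            columns.append(np.prod(X[:, list(idx)], axis=1))
            index_sets.append(idx)
    return np.column_stack(columns), index_sets


def f_scores(features, y):
    """Univariate regression F-value per column: r^2 / (1 - r^2) * (n - 2)."""
    fc = features - features.mean(axis=0)
    yc = y - y.mean()
    denom = np.sqrt((fc ** 2).sum(axis=0) * (yc ** 2).sum())
    r = (fc * yc[:, None]).sum(axis=0) / np.maximum(denom, np.finfo(float).tiny)
    r2 = np.clip(r ** 2, 0.0, 1.0 - 1e-12)
    return r2 / (1.0 - r2) * (len(y) - 2)


def select_and_scale(features, y, k=15):
    """Keep the k columns with the largest F-value and Z-score standardize them."""
    top = np.argsort(f_scores(features, y))[::-1][:k]
    selected = features[:, top]
    z = (selected - selected.mean(axis=0)) / selected.std(axis=0)
    return z, top


# Synthetic stand-in for the nine screened parameters and the running time.
rng = np.random.default_rng(0)
X = rng.uniform(0.5, 2.0, size=(200, 9))
run_time = X[:, 0] * X[:, 1] ** 2 + 0.1 * rng.normal(size=200)
poly, terms = polynomial_terms(X, degree=3)
X_model, chosen = select_and_scale(poly, run_time, k=15)
print(poly.shape, X_model.shape)
```

Because the F-value depends only on the correlation with the target, it is unaffected by feature scale, so standardizing before or after selection gives the same ranking.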

5.3. Hyperparameter Tuning Based on Bayesian Optimization

In the optimization of the intermittent pumping regime, a Bayesian optimization algorithm is introduced to tune the hyperparameters of the CatBoost model more efficiently. The specific procedure is given in Algorithm 1.

Algorithm 1. Hyperparameter optimization algorithm for the CatBoost model based on Bayesian optimization

Input: initial value ranges of the CatBoost hyperparameters (learning rate, maximum tree depth, number of iterations, L2 regularization factor); objective function (F1 score or AUC value on the validation set); maximum number of iterations; convergence conditions

Procedure: function BayesianOptimization()

1: Randomly select several hyperparameter combinations and evaluate their objective function values to obtain an initial dataset S

2: Initialize the Gaussian process surrogate model GP and fit GP with S

3: for i from 1 to the maximum number of iterations do

4: Use the upper confidence bound (UCB) acquisition function to select the next hyperparameter combination xnext in the hyperparameter space

5: Evaluate the objective function value ynext corresponding to xnext

6: Add (xnext, ynext) to the dataset S

7: Update the Gaussian process surrogate model GP by refitting it with the updated S

8: if the convergence condition is satisfied then

9: break

10: end if

11: end for

Output: optimized hyperparameter combination (optimal learning rate, optimal maximum tree depth, optimal number of iterations, optimal L2 regularization factor)

In this work, the convergence conditions for Bayesian optimization include the following: (1) the improvement in the objective function (F1 or AUC) becomes smaller than a predefined threshold for several consecutive iterations, indicating that further exploration yields negligible benefit; or (2) the predefined maximum number of iterations is reached. These criteria ensure computational efficiency while avoiding unnecessary evaluations.
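The two stopping criteria can be expressed as a small helper. This is a minimal sketch; the function name, the threshold `tol`, the number of consecutive iterations `patience`, and the default `max_iter` are hypothetical values chosen for illustration.

```python
def has_converged(best_scores, tol=1e-3, patience=5, max_iter=100):
    """Decide whether to stop, given the best objective value after each evaluation.

    Stops when the evaluation budget is used up, or when the best value has
    improved by less than `tol` in each of the last `patience` iterations.
    """
    if len(best_scores) >= max_iter:
        return True
    if len(best_scores) <= patience:
        return False
    recent = best_scores[-(patience + 1):]
    gains = [later - earlier for earlier, later in zip(recent, recent[1:])]
    return all(gain < tol for gain in gains)


# Hypothetical history of the best validation score found so far.
history = [0.50, 0.60, 0.70, 0.70, 0.70, 0.70, 0.70, 0.70]
print(has_converged(history))
```

The input is the running best value rather than the raw score of each trial, so an exploratory trial with a poor score does not reset the criterion.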

Hyperparameter tuning based on Bayesian optimization explores the parameter space more efficiently and reduces the risk of falling into local optima by constructing a surrogate model and dynamically adjusting the hyperparameter search direction using prior knowledge and historical evaluation results.

The core of Bayesian optimization lies in constructing a surrogate model to approximate the real objective function. Bayesian optimization has been widely applied as an efficient strategy for hyperparameter tuning in machine learning models [36]. In intermittent pumping regime optimization problems, the objective function is a performance metric of the model on the validation set, such as the F1 score or the AUC value. F1 and AUC are not used as final evaluation metrics of the pumping-time prediction model. Instead, they serve only as auxiliary surrogate signals within the Bayesian optimization process to guide the search toward regions where the model more reliably distinguishes valid from invalid predictions in intermediate classification-based assessments. A Gaussian process is usually chosen as the surrogate model because it captures the uncertainty of the objective function and provides the predicted mean and variance. Initially, we randomly select several hyperparameter combinations to evaluate, obtain the corresponding objective function values, and then use these data points to fit a Gaussian process model.

Next, the acquisition function is selected to determine the next most promising combination of hyperparameters. The acquisition function measures the potential contribution of each possible hyperparameter combination to the optimized objective function. Commonly used acquisition functions include Expected Improvement (EI) and Upper Confidence Bound (UCB), where EI focuses on the degree of improvement that can be achieved over the current optimal solution, and UCB strikes a balance between exploiting known information and exploring unknown regions. In the actual well production environment, there are various uncertainties, such as changes in geological conditions and aging equipment, which affect the optimization of the working regime. UCB reflects this uncertainty through the magnitude of the prediction variance and takes it into account when selecting the sampling point, thus improving the robustness of the optimization scheme to a certain extent. For this reason, we chose the UCB acquisition function.

The Upper Confidence Bound (UCB) acquisition function is defined as follows:

$$\mathrm{UCB}(x) = \mu(x) + k\,\sigma(x)$$

where $\mu(x)$ is the predicted mean of the surrogate model, $\sigma(x)$ is the predicted standard deviation, and $k$ is a tunable parameter controlling the exploration–exploitation balance. The first term, $\mu(x)$, encourages sampling near regions expected to yield high objective values, while the second term, $k\,\sigma(x)$, promotes exploration in regions with large uncertainty. In this way, the UCB criterion selects hyperparameter combinations that are not only potentially optimal but also informative about uncertain areas of the parameter space. This property makes UCB particularly suitable for well production optimization problems, where geological and equipment uncertainties can lead to significant variability in model predictions.

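The Expected Improvement (EI) acquisition function measures the expected improvement that the next sampling point may bring relative to the current optimal value. Assuming that the current optimal value is $f(x^{+})$ and that the Gaussian process predicts the mean at point $x$ as $\mu(x)$ and the variance as $\sigma^{2}(x)$, the formula for EI is

$$\mathrm{EI}(x) = \mathbb{E}\left[\max\left(f(x) - f(x^{+}),\, 0\right)\right]$$

Under the assumption of a Gaussian distribution, the formula for EI can be further expanded as follows:

$$\mathrm{EI}(x) = \left(\mu(x) - f(x^{+}) - \xi\right)\Phi(Z) + \sigma(x)\,\varphi(Z), \qquad Z = \frac{\mu(x) - f(x^{+}) - \xi}{\sigma(x)}$$

where $\xi$ is a parameter that controls the balance between exploration and exploitation (usually set to 0 or a small positive value; with $\xi = 0$ the expression is the closed form of the expectation above), $\Phi$ is the cumulative distribution function (CDF) of the standard normal distribution, and $\varphi$ is the probability density function (PDF) of the standard normal distribution. When $\sigma(x) = 0$, EI reduces to $\max\left(\mu(x) - f(x^{+}) - \xi,\, 0\right)$.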
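Both acquisition functions are straightforward to evaluate once the surrogate supplies a mean and a standard deviation. The following is a minimal sketch for a maximization problem; the function names, the default values of `k` and `xi`, and the two candidate points are hypothetical.

```python
import math


def normal_pdf(z):
    """Probability density function of the standard normal distribution."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def ucb(mu, sigma, k=2.0):
    """Upper confidence bound: predicted mean plus k standard deviations."""
    return mu + k * sigma


def expected_improvement(mu, sigma, best, xi=0.0):
    """Closed-form expected improvement over the current best value."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * normal_cdf(z) + sigma * normal_pdf(z)


# Two hypothetical candidates: one confident, one uncertain.
for mu, sigma in ((0.82, 0.01), (0.78, 0.10)):
    print(ucb(mu, sigma), expected_improvement(mu, sigma, best=0.80))
```

With these illustrative numbers, the uncertain candidate obtains the higher UCB value despite its lower mean, which is the exploration behavior the second term is intended to produce.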

In each iteration, the next hyperparameter combination is selected for evaluation based on the acquisition function. The new hyperparameter combination and its corresponding objective function value are added to the dataset, and the Gaussian process surrogate model is updated. Through continuous iterations, the surrogate model gradually approaches the real objective function and the hyperparameter search direction is refined. This process continues until a predetermined number of iterations is reached or a convergence condition is satisfied.
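The iterative loop described above can be sketched in a few lines of NumPy. This is an illustrative sketch under simplifying assumptions: a single hyperparameter normalized to [0, 1], an RBF kernel with a fixed length scale and unit prior variance, a grid search over the acquisition function, and a toy objective standing in for the validation score of a trained model. None of these choices is taken from this work.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=0.3):
    """Squared-exponential kernel between two 1-D arrays of points."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """Posterior mean and standard deviation of a constant-mean GP."""
    K = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_query)
    y_mean = y_train.mean()
    mu = y_mean + K_s.T @ np.linalg.solve(K, y_train - y_mean)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)
    return mu, np.sqrt(np.clip(var, 0.0, None))


def bayes_opt_ucb(objective, n_init=4, n_iter=15, k=2.0, seed=0):
    """Maximize `objective` on [0, 1] with a GP surrogate and the UCB rule."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(0.0, 1.0, size=n_init)          # initial random design
    y = np.array([objective(v) for v in x])
    grid = np.linspace(0.0, 1.0, 200)               # candidate points
    for _ in range(n_iter):
        mu, sigma = gp_posterior(x, y, grid)        # refit the surrogate
        x_next = grid[np.argmax(mu + k * sigma)]    # UCB acquisition
        x = np.append(x, x_next)
        y = np.append(y, objective(x_next))
    best = int(np.argmax(y))
    return x[best], y[best]


def toy_score(x):
    """Hypothetical validation score with its maximum at x = 0.6."""
    return 0.9 - (x - 0.6) ** 2


x_best, y_best = bayes_opt_ucb(toy_score)
print(x_best, y_best)
```

In a real tuning run, `toy_score` would be replaced by a function that trains the model with the proposed hyperparameters and returns its validation metric, and the one-dimensional grid by a search over the full hyperparameter space.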

Bayesian optimization is used to tune several key hyperparameters of the CatBoost model: the number of iterations, the learning rate, the tree depth, and the regularization parameter. The number of iterations controls the number of boosting rounds; too many may lead to overfitting and too few may lead to underfitting. The learning rate determines how strongly each iteration updates the model; a learning rate that is too large may make training unstable, while one that is too small increases the training time. The tree depth limits the depth of each decision tree; a larger depth increases model complexity and fitting ability, but it can also easily lead to overfitting. The regularization parameter is used to prevent overfitting; this work uses the L2 regularization parameter, which constrains the leaf weights and keeps the model from becoming too complex.
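One way to expose these four hyperparameters to an optimizer is to map a point in the unit hypercube to a parameter dictionary. The sketch below does this under stated assumptions: `iterations`, `learning_rate`, `depth`, and `l2_leaf_reg` are CatBoost parameter names, but the bounds, the log scaling of the learning rate, and the helper `decode` are hypothetical and are not taken from this work.

```python
import math

# Hypothetical search bounds; only the parameter names come from CatBoost.
SEARCH_SPACE = {
    "iterations": (100, 2000),
    "learning_rate": (0.01, 0.3),
    "depth": (4, 10),
    "l2_leaf_reg": (1.0, 10.0),
}
LOG_SCALE = {"learning_rate"}      # searched on a logarithmic scale
INTEGER = {"iterations", "depth"}  # rounded to whole numbers


def decode(unit_point):
    """Map a point in [0, 1]^4 to a hyperparameter dictionary."""
    params = {}
    for u, (name, (low, high)) in zip(unit_point, SEARCH_SPACE.items()):
        u = min(max(u, 0.0), 1.0)  # clip to the unit interval
        if name in LOG_SCALE:
            value = math.exp(math.log(low) + u * (math.log(high) - math.log(low)))
        else:
            value = low + u * (high - low)
        params[name] = int(round(value)) if name in INTEGER else value
    return params


print(decode([0.0, 0.5, 1.0, 0.5]))
```

Searching the learning rate on a logarithmic scale is a common design choice because useful values often span more than one order of magnitude.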

As Table 1 demonstrates, after Bayesian optimization of the hyperparameters, the CatBoost model exhibits markedly enhanced predictive performance on both the fine-sampling and coarse-sampling tasks. This indicates that Bayesian optimization effectively identifies hyperparameter combinations tailored to the distinct sampling regimes, thereby improving the model's fitting capability and generalization capacity. Further analysis reveals discernible patterns in the optimal hyperparameters across sampling regimes: the coarse sampling task performs better with smaller learning rates, shallower tree depths, and higher iteration counts, whereas the fine sampling task favors slightly higher learning rates, deeper tree structures, and fewer iterations. This demonstrates that Bayesian optimization can automatically balance model complexity and training effort according to task characteristics, enabling the model to better capture nonlinear relationships and dynamic variations within oil well production data.

In summary, the introduction of a hyperparameter tuning strategy incorporating Bayesian optimization not only enhances the predictive accuracy of the CatBoost model but also improves its stability and adaptability across different interval pumping regimes. This provides reliable model support for optimizing oil well interval pumping regimes. This optimization strategy offers a scientific basis for subsequent adjustments to production conditions and intelligent decision-making, while also serving as a reference for tuning ensemble learning models in similar oil and gas production forecasting tasks.

5.4. Adaptive Updating of Weights

In traditional ensemble learning methods, the weights of the base classifiers are usually equal or fixed during the training process. However, in the interval pumping regime optimization problem, different base classifiers may not contribute equally to the final prediction result. In order to integrate the advantages of each base classifier more accurately, the improved CatBoost model introduces a dynamic weight adaptive updating mechanism. This mechanism dynamically adjusts the weights of the base classifiers according to their prediction errors on the validation set: the smaller the prediction error of a base classifier, the better its performance and the larger its corresponding weight. The weight update formula is as follows:

where $w_t$ denotes the weight of the $t$-th base classifier and $\varepsilon_t$ denotes the prediction error of the $t$-th base classifier. The prediction error can be measured by metrics such as the mean square error (MSE), which is calculated by the formula

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^{2}$$

where $y_i$ is the true value, $\hat{y}_i$ is the predicted value, and $n$ is the number of samples.
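The relationship between validation error and weight can be illustrated with a small sketch. It computes the MSE of two hypothetical base models and converts the errors into normalized weights. The inverse-error rule used here is only one possible rule that gives smaller errors larger weights; it is an assumption for illustration and is not claimed to be the exact update formula of this work. The model names and data values are likewise made up.

```python
def mse(y_true, y_pred):
    """Mean square error between two equally long sequences."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def inverse_error_weights(errors, eps=1e-12):
    """Assumed rule: weight proportional to 1 / error, normalized to sum to 1."""
    raw = [1.0 / (e + eps) for e in errors]  # eps avoids division by zero
    total = sum(raw)
    return [r / total for r in raw]


# Hypothetical validation targets and predictions of two base models.
y_val = [10.0, 12.0, 9.0]
preds = {
    "model_a": [10.5, 11.5, 9.5],  # close to the targets
    "model_b": [12.0, 14.0, 7.0],  # further from the targets
}

errors = [mse(y_val, p) for p in preds.values()]
weights = inverse_error_weights(errors)
print(dict(zip(preds, errors)))
print(dict(zip(preds, weights)))
```

The model with the lower validation MSE receives the larger share of the total weight, which is the qualitative behavior described above.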

Specifically, assume there are $T$ base classifiers. During training, each base classifier is first trained on the training set, then the prediction error $\varepsilon_t$ of each base classifier is calculated on the validation set, and finally the weights $w_t$ of the base classifiers are updated based on the prediction errors. In the final prediction stage, the prediction results of all base classifiers are weighted and fused, i.e., the prediction result of each base classifier is multiplied by its corresponding weight, and the products are summed to obtain the final prediction result. This weight adaptive update mechanism enables the model to automatically adjust the contribution of each base classifier, ensuring that base classifiers with better performance have a greater influence on the final ensemble prediction. Compared to traditional fixed-weight methods, the mechanism adjusts weights based on the actual performance of the base classifiers, which enhances the model's adaptability and robustness and makes better use of high-performing base classifiers to improve prediction accuracy. Such dynamic weighted ensemble strategies have been shown to significantly improve predictive robustness in complex environments [37]. For the specific algorithm, see Algorithm 2.

| Algorithm 2 CatBoost ensemble prediction algorithm based on adaptive updating of weights |

Input:

training set $D_{train} = \{(x_1, y_1), \ldots, (x_m, y_m)\}$; validation set $D_{val} = \{(x_{v1}, y_{v1}), \ldots, (x_{vn}, y_{vn})\}$; number of base classifiers $T$; error metric: mean square error (MSE)

Procedure: function AdaptiveWeightedEnsemble()

1: Generate a list weights, initialized to an all-0 list of length T

2: for t from 0 to T − 1 do

3: Train the base classifier $C_t$ on the training set

4: Predict the validation set with $C_t$ to obtain the predictions $\hat{y}_{vi}^{(t)}$

5: Calculate the prediction error $\varepsilon_t$ of the base classifier

6: If the mean square error (MSE) is used, $\varepsilon_t = \frac{1}{n}\sum_{i=1}^{n}\left(y_{vi} - \hat{y}_{vi}^{(t)}\right)^{2}$

7: Update weights[t] according to the prediction error $\varepsilon_t$

8: end for

9: Normalize the list weights:

10: Calculate the sum of the weights, $S = \sum_{t=0}^{T-1}$ weights[t]

11: for t from 0 to T − 1 do

12: weights[t] = weights[t] / $S$

13: end for

14: Initialize the final prediction final_prediction = 0

15: for t from 0 to T − 1 do

16: Get the prediction $\hat{y}^{(t)}$ of the base classifier $C_t$

17: Multiply it by weights[t] and accumulate it into final_prediction:

18: final_prediction += $\hat{y}^{(t)}$ × weights[t]

19: end for

Output: final prediction result final_prediction |

When implementing the adaptive updating of weights, it is first necessary to prepare a validation set which is used to evaluate the prediction error of each base classifier. The size of the validation set and the method of selecting it are crucial for the accuracy of the weight update. In general, the validation set should be able to represent the entire data distribution and not overlap with the training and test sets to ensure the objectivity and accuracy of the evaluation results.

When training each base classifier, its prediction results on the validation set need to be recorded and the corresponding prediction error calculated. The error metric can be selected according to the specific problem and data characteristics; in addition to the mean square error (MSE), indicators such as the mean absolute error (MAE) and classification accuracy can also be used. For the interval pumping regime optimization problem, MSE is a commonly used evaluation index because the objective is to predict continuous variables such as the production and water content of the wells.

After the prediction error of each base classifier has been calculated, its weight is updated according to the weight update formula above. The weight update can be understood as a quantitative evaluation of the performance of the base classifiers: base classifiers with better performance are given larger weights and therefore play a greater role in the final ensemble prediction. The frequency of the weight update can be set flexibly according to the training progress and model convergence; the update is generally performed immediately after the training of each base classifier is completed, so that changes in model performance are reflected in a timely manner.

In the final prediction stage, the prediction results of all base classifiers are weighted and fused. The process of weighted fusion can be represented as follows:

$$\hat{y} = \sum_{t=1}^{T} w_t\,\hat{y}_t$$

where $\hat{y}$ denotes the final prediction result, $\hat{y}_t$ denotes the prediction result of the $t$-th base classifier, and $w_t$ denotes the weight of the $t$-th base classifier.
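The weighted fusion step is a plain weighted sum over the base models, applied sample by sample. The sketch below assumes the weights are already normalized; the function name `fuse` and the numbers are illustrative.

```python
def fuse(predictions, weights):
    """Weighted sum of base-model predictions.

    predictions: list of T lists, one per base model, each with n values.
    weights: list of T weights, assumed to be normalized to sum to 1.
    """
    if not predictions or len(predictions) != len(weights):
        raise ValueError("need exactly one weight per base model")
    n = len(predictions[0])
    fused = [0.0] * n
    for preds, w in zip(predictions, weights):
        if len(preds) != n:
            raise ValueError("all base models must predict the same samples")
        for i, p in enumerate(preds):
            fused[i] += w * p  # accumulate the weighted contribution
    return fused


# Two hypothetical base models predicting two samples.
predictions = [[10.0, 20.0], [14.0, 24.0]]
weights = [0.75, 0.25]
print(fuse(predictions, weights))
```

Because the weights sum to 1, each fused value lies between the smallest and largest base-model prediction for that sample.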

In this way, the model can fully utilize the advantages of each base classifier to improve the accuracy and reliability of the prediction results. The introduction of the weight adaptive updating mechanism enables the improved CatBoost model to better adapt to the characteristics and changes in the data when dealing with complex well data, and enhances the model’s generalization ability and stability.

5.5. Dynamic Regulation of Parameters Under the Attention Mechanism

When constructing a multi-level ensemble learning model, the improved CatBoost model introduces an attention mechanism to regulate the parameters of the next level, so that the next-level model makes better use of the information from the previous-level models and learns more purposefully. The core idea of the attention mechanism is to let the model automatically learn the importance of each base classifier output and use it as the weight of the input features of the next-level model. This principle is consistent with the modern formulation of attention mechanisms, where models learn data-dependent importance weights to enhance representation learning [38]. Specifically, prior to the training of the model at level $t$, the outputs of the models at the previous $t - 1$ levels are weighted and fused, with the weights determined by the attention mechanism. The fused results are then used as part of the input features of the level-$t$ model, thus influencing its parameter learning. The formula for the attention weights is as follows:

$$\alpha_{t,j} = \frac{\exp\left(e_{t,j}\right)}{\sum_{k=1}^{t-1}\exp\left(e_{t,k}\right)}$$

where $\alpha_{t,j}$ denotes the attention weight of the level-$t$ model to the $j$-th previous-level model output, and $e_{t,j}$ denotes the un-normalized energy value of the level-$t$ model to the $j$-th previous-level model output. The energy value $e_{t,j}$ is usually generated by a small neural network or a simple linear transformation, which aims to measure the relevance of the previous-level model output to the current-level model's target task.

Taking the multi-level model for optimizing the interval pumping regime as an example, the first level of the model may mainly learn the influence of the basic geological characteristics of the wells on production. These basic geological features include the porosity, permeability, and thickness of the oil formation, which are the basic factors affecting the production capacity of a well. The first-level model outputs preliminary prediction results by learning the relationship between these features and oil well production. The attention weights of the first-level output are then calculated through the attention mechanism; these weights reflect the relevance of the first-level output to the target task of the second-level model.

Next, the weighted outputs of the first-level model are combined with the original input features to form the input features of the second-level model. During learning, the second-level model can pay more attention to the information related to the dynamic production data of the wells, such as daily oil production and changes in water content. These dynamic production data reflect the actual performance of oil wells under different production regimes and are important for predicting the production effect under different interval pumping regimes. In this way, the second-level model can more accurately predict production, water content, and other key indicators under different interval pumping regimes, thus providing a scientific basis for formulating a reasonable regime.

The advantage of the attention mechanism is that it enables the next-level model to focus on the useful information in the previous-level model and to ignore irrelevant or noisy information, thus improving the model's learning ability and generalization performance. At the same time, it realizes a deep fusion of multi-level features and gradually extracts higher-level feature representations, which further improves the model's understanding and prediction of complex oil well data. For the specific algorithm, see Algorithm 3.

| Algorithm 3 Multi-level CatBoost ensemble learning algorithm based on attention mechanism |

Input:

raw well data set $D$; number of model levels $n$; energy calculation module configuration energy_config (e.g., number of neural network layers, parameters, etc.)

Procedure: function AttentionBasedMultiLevelEnsemble(D, n, energy_config)

1: Initialization:

2: Previous-level outputs prev_output = ∅

3: Current input data current_input = D

4: for i from 1 to n do

5: if i == 1 then

6: Train the model at level i: train $M_i$ on current_input

7: Calculate the output of level i: $O_i = M_i(\text{current\_input})$

8: else

9: Build the energy calculation module: initialize the small neural network $E_i$ according to energy_config; the input dimension is the number of features of prev_output, and the output is the scalar energy value $e_{i,j}$ of each previous-level output $O_j$

10: Calculate the attention weights: $\alpha_{i,j} = \exp(e_{i,j}) / \sum_{k=1}^{i-1}\exp(e_{i,k})$

11: Weighted fusion of previous-level outputs: $F_i = \sum_{j=1}^{i-1}\alpha_{i,j}\,O_j$

12: Splice features: current_input = $[D, F_i]$

13: Train the model at level i: train $M_i$ on current_input

14: Calculate the output of level i: $O_i = M_i(\text{current\_input})$

15: Update the previous-level outputs: prev_output = prev_output ∪ $\{O_i\}$

16: end for

Output: the final prediction $O_n$ of the $n$th-level model |

In implementing the attention mechanism, it is first necessary to design an energy computation module that generates the un-normalized energy values $e_{t,j}$ of the previous-level model outputs. This module can be a small neural network or a simple linear transformation. Its central purpose is to measure the relevance of each previous-level model output to the target task of the current-level model. For example, in the interval pumping regime optimization problem, the energy value can be computed by a single-layer neural network whose inputs are the output features of the previous-level model and whose output is a scalar energy value.

After the energy values have been calculated, they are converted into attention weights $\alpha_{t,j}$ by the softmax function:

$$\alpha_{t,j} = \frac{\exp\left(e_{t,j}\right)}{\sum_{k=1}^{t-1}\exp\left(e_{t,k}\right)}$$

The softmax function normalizes the energy values into a probability distribution, which ensures that all attention weights are positive and sum to 1. This makes the weighted fusion process more stable and easier to interpret.

Next, the output of the previous-level model is multiplied by the corresponding attentional weights to obtain a weighted feature representation. Then, these weighted features are spliced with the original input features to form the input features of the second-level model. During the training process, the second-level model is not only able to learn the information of the original input features, but also able to utilize the weighted features of the output of the previous-level model, so as to capture the complex relationships in the data in a more comprehensive way.
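The energy, softmax, fusion, and splicing steps can be sketched for a single sample as follows. The linear energy module, its parameters `w` and `b`, and the helper names are hypothetical stand-ins for the small network mentioned above; in practice the energy parameters would be learned rather than fixed by hand.

```python
import math


def softmax(energies):
    """Numerically stable softmax: subtract the maximum before exponentiating."""
    m = max(energies)
    exps = [math.exp(e - m) for e in energies]
    total = sum(exps)
    return [x / total for x in exps]


def linear_energy(output, w, b):
    """Hypothetical energy module: one linear map from an output vector to a scalar."""
    return sum(wi * oi for wi, oi in zip(w, output)) + b


def attention_features(original, prev_outputs, w, b):
    """Fuse previous-level outputs with attention weights and append them to the inputs.

    original: original feature vector of one sample.
    prev_outputs: list of output vectors, one per previous level.
    Returns the spliced feature vector and the attention weights.
    """
    energies = [linear_energy(o, w, b) for o in prev_outputs]
    alphas = softmax(energies)
    dim = len(prev_outputs[0])
    fused = [sum(a * o[d] for a, o in zip(alphas, prev_outputs)) for d in range(dim)]
    return original + fused, alphas


# One sample with two original features and two previous-level scalar outputs.
features, alphas = attention_features([0.2, 0.5], [[1.0], [3.0]], w=[1.0], b=0.0)
print(alphas)
print(features)
```

Subtracting the maximum energy before exponentiation does not change the resulting weights but avoids overflow when energy values are large.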

During the training process of the multi-level model, each level of the model can adopt a similar attention mechanism to gradually fuse the output information of the previous level of the model. This level-by-level feature fusion allows the model to gradually extract higher-level feature representations to further enhance the understanding and prediction of complex data.

Through the synergy of the weight adaptive updating mechanism and the attention mechanism, the improved CatBoost model is able to better mine the relationships between features, improve prediction accuracy, and enhance the stability and adaptability of the model when dealing with complex oil well data. In practical applications, with a reasonably chosen model structure and parameters, the improved CatBoost model can provide stronger support for optimizing the interval pumping regime of oil wells and help to improve the recovery rate and economic efficiency of oilfields.

6. Optimization Results of the Interval Pumping Regime

In order to comprehensively evaluate the performance of the proposed method, this work selected various classical machine learning models, such as K-Nearest Neighbors (KNN), Naive Bayes, Least Absolute Shrinkage and Selection Operator (Lasso), Random Forests, Support Vector Machines, and XGBoost, as well as combinations of LightGBM with models such as XGBoost and CatBoost, and compared them with the improved CatBoost model (Ours). The experiments focus on two key metrics, the mean absolute error (MAE) and the accuracy of the models, in both large-interval and small-interval pumping scenarios. The MAE reflects the average difference between the predicted value and the true value; the smaller its value, the more accurate the prediction. The accuracy reflects the proportion of correct predictions; a higher accuracy means better model performance. The MAE is defined as follows:

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|y_i - \hat{y}_i\right|$$

where $y_i$ is the true value, $\hat{y}_i$ is the predicted value, and $n$ is the sample size.
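As a small worked example of the mean absolute error, the sketch below evaluates three hypothetical wells. The run-time values are made up for illustration and are not taken from the experiments.

```python
def mae(y_true, y_pred):
    """Mean absolute error between true and predicted values."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical actual and predicted running times (hours) for three wells.
actual = [8.0, 6.0, 10.0]
predicted = [7.5, 6.5, 10.0]
print(mae(actual, predicted))
```

Unlike the MSE, the MAE is expressed in the same unit as the predicted quantity, so it can be read directly as an average deviation in running time.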

6.1. Ablation Study

To validate the effectiveness of each component, we conducted ablation experiments.

The ablation results for both large-interval and small-interval production prediction tasks demonstrate that each proposed component contributes positively to model performance. Starting from the CatBoost baseline, the integration of cubic polynomial feature expansion consistently reduces MAE and increases accuracy, indicating that enhanced nonlinear feature interactions are beneficial for capturing production trends. The addition of F-value feature screening further improves performance by removing redundant variables and reducing noise, which stabilizes the learned model. Introducing the weight adaptation mechanism yields another notable performance gain in both MAE reduction and accuracy improvement. This confirms that differentiating the importance of high-error samples helps the model better handle complex or unstable production patterns. The subsequent attention mechanism further enhances the model’s ability to focus on the most influential multivariate relationships, providing clear incremental improvements in both large-interval and small-interval scenarios. Finally, Bayesian hyperparameter optimization produces the best overall results, demonstrating its effectiveness in finding optimal configurations across different data granularities.

Overall, the trends are consistent across both datasets: each component leads to monotonic, step-wise improvements, and the full model achieves the lowest MAE and highest accuracy. These results validate that the proposed enhancements are not merely heuristic additions but mutually reinforcing modules that substantially strengthen CatBoost’s predictive capability under different interval settings.

6.2. Comparison with Baseline and Ensemble Models

The performance of each model in different interval pumping scenarios is shown in Table 2.

The comparative experimental results with other algorithms are presented in Table 3.

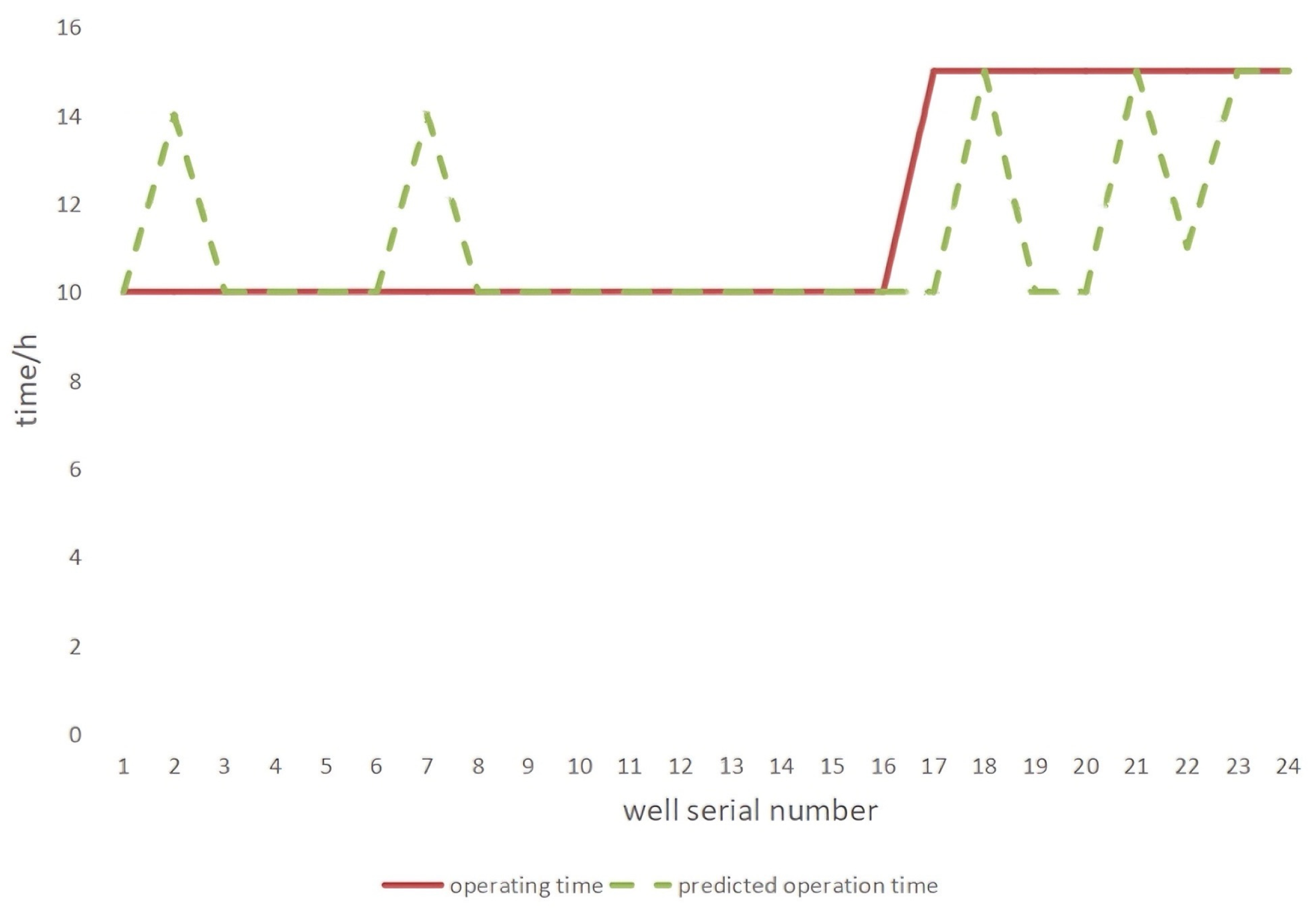

To analyze in detail how well the improved model predicts the running time of each well under different interval pumping scenarios, we plotted the actual running time against the predicted running time for large-interval pumping wells and small-interval pumping wells. These charts show the variation of, and the differences between, the actual and predicted running times.

In the comparison chart for large-interval pumping wells (Figure 3), the correspondence and fluctuation trend between the actual and predicted running times can be observed for each well number, which allows the accuracy of the model in the large-interval pumping scenario to be judged well by well. The comparison chart for small-interval pumping wells (Figure 4) shows the model's running-time prediction for each well number in the small-interval pumping scenario. By comparing the trends of the two line plots, the model's ability to capture and predict the temporal changes in small-interval pumping can be evaluated. These charts provide an intuitive visual reference for model performance evaluation.

In terms of model performance, the improved CatBoost model (Ours) performs well in the comparison experiments against a variety of classical machine learning models and model combinations. In the large-interval pumping scenario, its mean absolute error is only 0.2659, with an accuracy of 0.9805; in the small-interval pumping scenario, the mean absolute error is 1.0100, with an accuracy of 0.9422. This shows that the model is more accurate than the other models in predicting the pumping operation time and recommending the pumping strategy, so it can capture the pumping pattern of oil wells more precisely and provide a reliable basis for actual production.

7. Limitations

Although the proposed data–physics dual-driven model demonstrates strong predictive capability and practical value, several limitations still remain and should be addressed in future work:

- (1) Dependence on data quality and representativeness. Although physical constraints reduce overfitting risk, the data-driven component still relies heavily on the accuracy and completeness of historical production data. Noise, sensor drift, and missing values may degrade model performance, especially in low-frequency or irregularly monitored wells.

- (2) Simplified physical mechanism expressions. The physics-driven part integrates seepage mechanics, pressure relationships, and pump efficiency, but these formulations inevitably simplify complex subsurface processes. Effects such as multiphase flow transitions, formation heterogeneity, wellbore multiphase interactions, and dynamic reservoir coupling are only partially represented, which may limit model applicability in highly heterogeneous reservoirs.

- (3) Computational cost of the enhanced CatBoost model. The improved CatBoost incorporates polynomial features, attention-based multi-level modeling, Bayesian optimization, and weight adaptation mechanisms. These enhancements significantly increase the computational demand of model training, which may make the model unsuitable for low-resource environments and lengthen the training cycles needed before real-time deployment.