1. Introduction

The traditional processing and use of leather date back to around 7000 BCE. However, with the invention of chrome tanning in the mid-19th century, leather production began to industrialize, marking the start of modern leather manufacturing. According to research by International Market Analysis Research and Consulting (IMARC), a company specializing in comprehensive market insights, the global leather industry market was valued at $390.9 billion in 2023, with an estimated compound annual growth rate (CAGR) of 4.8% from 2024 to 2032 [1]. Despite the adoption of alternatives such as synthetic and vegan leather, genuine animal leather remains widely used, with its market estimated at approximately $100 billion in 2023 and an expected growth rate of around 4% per year [2]. The animal hides in use are cow (67%), sheep (12%), pig (11%), goat (10%), and other (0.5%) [3]. Finished leather goods find extensive applications across various industries, including fashion, automotive, furniture, and footwear.

For manufacturers of finished leather hides and products, leather utilization poses significant challenges from business, environmental, and social perspectives. Increasing the utilization of finished leather hides before the cutting process can lead to cost savings, reduced leather waste, decreased inventory requirements, and a lower demand for animal hides. According to the Food and Agriculture Organization of the United Nations (FAO), this high consumption of genuine leather contributes to the death of about 1 billion animals annually worldwide [4].

Leather utilization rates for finished leather vary based on the type of animal hide used and the specific industry or product. This paper focuses on optimizing the defect detection, classification, and segmentation of finished cowhide leather in the automotive industry, which accounts for 17% of the global market for genuine leather products. We surveyed automotive interior suppliers operating in Serbia. In total, 12 international companies that supply industrial leather were surveyed: YanFeng (3), Adient (2), Magna (2), Grammer (1), AutoStop Interiors (2), SMP (1), and Passubio SPA (1).

Key findings relevant to this study indicate that the accuracy rate of quality inspection operators for finished leather hides ranges between 70% and 85%, directly affecting the leather utilization rate, which varies from 50% to 85%. Scrap rates following the cutting process range from 1.5% to 10%, while customer complaints are between 0.5% and 3%. Customer complaints and scrap rates further reduce utilization by an additional 6–10% due to losses in the optimization of post-processing leather hides or recalculations in the automatic pattern nesting for leather. The total number of finished leather hides cut daily by these companies ranges between 120 and 350. The average weight of a leather hide is around 25 kg, and the average purchase price is approximately 35 EUR per hide. Considering all these factors, the result is concerning: each of the surveyed companies produces approximately 3400 kg of leather waste daily, amounting to a loss of around 4700 EUR per day. The cause of such a poor process performance is the human factor and the inability to conduct a consistent inspection of industrial leather.
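The relation between the daily waste mass and the daily loss quoted above can be checked with a short calculation. The sketch below assumes, as a simplification not stated in the survey, that wasted leather is valued at the purchase price in proportion to its mass; the function name is illustrative only.

```python
# Figures quoted in the survey summary above.
AVG_HIDE_WEIGHT_KG = 25.0   # average weight of one hide
AVG_HIDE_PRICE_EUR = 35.0   # average purchase price of one hide
DAILY_WASTE_KG = 3400.0     # reported daily leather waste per company


def waste_cost_eur(waste_kg, hide_weight_kg=AVG_HIDE_WEIGHT_KG,
                   hide_price_eur=AVG_HIDE_PRICE_EUR):
    """Convert wasted mass into hide equivalents and their purchase cost.

    Assumption: waste is priced proportionally to mass at the purchase price.
    """
    hide_equivalents = waste_kg / hide_weight_kg
    return hide_equivalents, hide_equivalents * hide_price_eur


hides, cost = waste_cost_eur(DAILY_WASTE_KG)
print(f"{hides:.0f} hide equivalents, about {cost:.0f} EUR per day")
```

Under this assumption, 3400 kg corresponds to 136 hide equivalents and 4760 EUR, which is consistent with the reported loss of around 4700 EUR per day.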

A comprehensive review [5] of over 100 scientific papers highlights the evolution of defect detection and classification methods for industrial leather, ranging from traditional image-processing to state-of-the-art deep-learning approaches. While methods such as Mask R-CNN and U-Net have demonstrated reasonable accuracy, challenges persist in detecting subtle or complex defects [6,7,8]. Recent advancements, particularly YOLO-based models, have emerged as the preferred solution for their superior speed and accuracy in real-time scenarios [9,10]. However, a gap remains in addressing defects on the flesh side of leather, a critical area on which this study focuses by introducing a dual-side analysis approach that significantly enhances detection performance. The methods applied for defect detection and classification on finished leather include the following [5]:

Traditional image-processing methods achieved an accuracy range of 70% to 85%;

Machine-learning-based methods reported accuracies ranging from 80% to 90%;

Deep-learning approaches demonstrated higher accuracy levels, often exceeding 90%.

Deep-learning techniques, particularly those based on You Only Look Once (YOLO) models, were identified as the most effective for leather defect inspection and classification among the methods analyzed. YOLO models not only provided higher accuracy rates but also improved processing efficiency compared to traditional methods. YOLOv2 and similar architectures have been noted for their real-time processing capabilities and high detection and classification rates, achieving accuracies of around 92% to 95% in some studies [5]. To the best of our knowledge, the versions of YOLO models applied to defect detection and classification range from v1 to v9 [5,6,7]. This study employs the YOLOv11 model, which was released on 30 September 2024 and currently represents the state of the art in YOLO models, for defect detection and classification in industrial leather.

The key contributions of this work include the development of a dual-side analysis approach, which uniquely emphasizes defect visibility on the flesh side, and the implementation of a controlled digitization chamber to standardize environmental variables such as lighting and dust. By leveraging YOLOv11’s enhanced features—such as anchor-free detection and multi-task capabilities—this study achieves significant improvements in detection accuracy, surpassing previous YOLO models. These advancements demonstrate a practical and scalable solution to automate quality control processes, reduce leather waste, and improve industrial sustainability.

4. Results

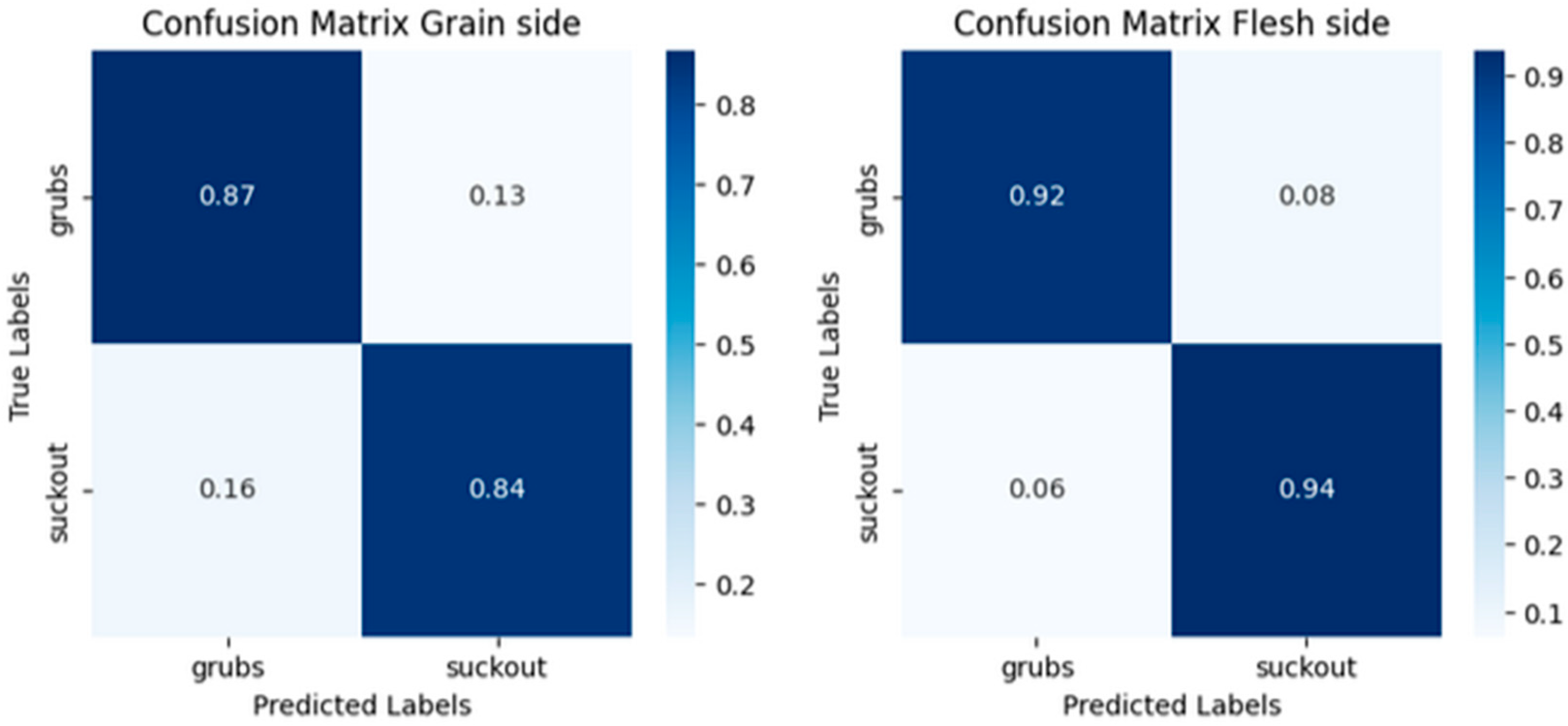

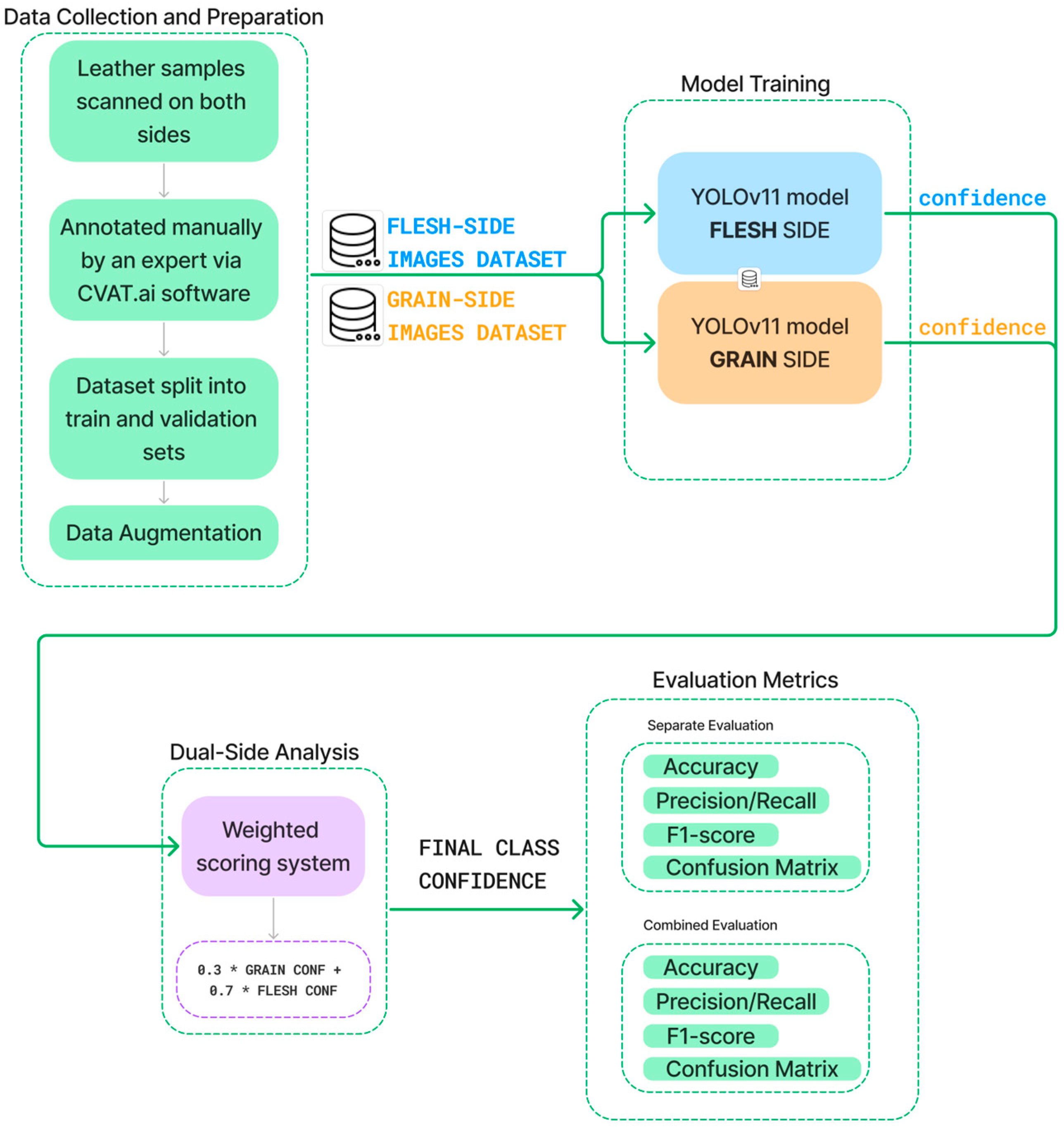

We applied the YOLO model on a dataset of 1200 defective samples from both sides of the leather, focusing on grubs and suckout defects, and the results are shown in Table 3. In the case of detection on the grain side, we achieved a satisfactory accuracy of 85.8% for grubs and 87.1% for suckout. However, for defect detection on the flesh side, we achieved significantly better results, with 93.5% for grubs and 91.8% for suckout.

In terms of classification, we obtained relatively low results for grubs on the grain side at 66.8%, while the suckout classification accuracy was 97.6%. For defect classification on the flesh side, we achieved outstanding results of 98.2% for grubs and 97.6% for suckout.

The results demonstrate a notable improvement in defect detection accuracy on the flesh side of the leather, with an overall accuracy increase from 0.85 to 0.93. The F1-Score improved from 0.86 to 0.93, reflecting a more balanced and effective classification. Precision increased from 0.87 to 0.92, indicating fewer false positives on the flesh side, while recall rose from 0.84 to 0.94, showing a higher rate of correctly identified defects. This improvement across all four metrics suggests that the flesh side provides clearer defect signals, allowing for more accurate and reliable detection. In Figure 5, confusion matrices show the performance of the two detectors.

Table 4 evaluates the performance of the YOLOv11 model using four key metrics: Accuracy, Precision, Recall, and F1-Score. Each metric provides a specific insight into the defect detection and classification performance. Accuracy measures the overall correctness of the predictions by considering both true positives and true negatives, giving a comprehensive view of the model’s reliability. It is calculated as follows:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

Precision highlights the system’s ability to avoid false positives by calculating the proportion of true positive defect detections out of all positive predictions. It is defined as follows:

$$\text{Precision} = \frac{TP}{TP + FP}$$

Recall emphasizes the system’s capability to minimize false negatives by measuring the proportion of actual defects correctly identified. The formula for Recall is as follows:

$$\text{Recall} = \frac{TP}{TP + FN}$$

F1-Score combines Precision and Recall into a harmonic mean, providing a balanced evaluation, especially useful when Precision and Recall show significant differences. The F1-Score is calculated as follows:

$$\text{F1-Score} = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$$

In these formulas, TP (True Positive) represents correctly identified defective samples, TN (True Negative) represents correctly identified non-defective samples, FP (False Positive) represents non-defective samples incorrectly classified as defective, and FN (False Negative) represents defective samples incorrectly classified as non-defective. These metrics collectively offer a comprehensive evaluation of the model’s performance, addressing both its reliability and robustness in defect detection.
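As an illustration of how the four metrics follow from the TP, TN, FP, and FN counts, a minimal sketch is given below. The counts are hypothetical and are not taken from the confusion matrices of this study; the class and function names are likewise illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # defective samples correctly flagged
    tn: int  # non-defective samples correctly passed
    fp: int  # non-defective samples flagged as defective
    fn: int  # defective samples missed


def accuracy(c):
    return (c.tp + c.tn) / (c.tp + c.tn + c.fp + c.fn)


def precision(c):
    # Guard against division by zero when nothing was predicted positive.
    return c.tp / (c.tp + c.fp) if (c.tp + c.fp) else 0.0


def recall(c):
    # Guard against division by zero when there are no actual positives.
    return c.tp / (c.tp + c.fn) if (c.tp + c.fn) else 0.0


def f1_score(c):
    p, r = precision(c), recall(c)
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Hypothetical counts, chosen only to exercise the formulas.
counts = ConfusionCounts(tp=90, tn=85, fp=10, fn=15)
for name, fn_ in [("Accuracy", accuracy), ("Precision", precision),
                  ("Recall", recall), ("F1-Score", f1_score)]:
    print(f"{name}: {fn_(counts):.3f}")
```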

These metrics were selected due to their relevance in assessing the performance for industrial applications, where both the over-detection and under-detection of defects carry critical consequences. Accuracy was chosen to offer a broad evaluation of the model’s effectiveness in correctly classifying all instances. Precision is essential in reducing unnecessary rejections of leather pieces, preventing waste and minimizing operational costs. Recall is equally critical to ensure that no defective pieces are overlooked, which could compromise product quality and lead to customer dissatisfaction. F1-Score was included to offer a holistic view, balancing Precision and Recall in scenarios where both metrics are equally significant. Together, these metrics provide a comprehensive validation of the YOLOv11 model, ensuring its practicality for defect detection in real-world industrial settings.

Furthermore, Figure 6 illustrates the distribution of and relationship between the class probabilities for the flesh side (blue) and the grain side (green). Scores are sorted in ascending order for both the grain and flesh sides to facilitate a direct comparison. The difference between the two distributions is significant, indicating a clear separation in scores between the grain and flesh sides. This separation may contribute to a more nuanced approach to defect detection, where certain defects are more reliably detected on the flesh side, as confirmed by higher confidence scores for the flesh side.

It is also important to note that the grain side shows much greater variability in classification confidence, reinforcing the hypothesis that weighted scoring could significantly enhance accuracy (Table 5). These results highlight differences in the detection scores for the defects grubs and suckout when comparing the flesh side and grain side of leather pieces. For both classes, the flesh-side scores are significantly higher than the grain-side scores, with an average difference of around 28.7% for grubs and 18.2% for suckout. This indicates that these defects are much more discernible on the flesh side than on the grain side. The standard deviation on the flesh side is low, showing that flesh-side scores are consistently high. In contrast, the grain-side scores have a higher standard deviation, reflecting more variability and less consistent detection on the grain side.
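The comparison of per-side score statistics described above can be sketched as follows. The scores are hypothetical placeholders for paired confidence values of the same pieces scanned on both sides, and the difference is assumed to be expressed in percentage points; neither the values nor this convention come from Table 5.

```python
from statistics import mean, stdev

# Hypothetical confidence scores for five pieces, one score per side.
grain_scores = [0.52, 0.71, 0.60, 0.83, 0.45]
flesh_scores = [0.91, 0.93, 0.90, 0.95, 0.92]


def summarize(scores):
    """Mean and sample standard deviation of a list of confidence scores."""
    return {"mean": mean(scores), "std": stdev(scores)}


def mean_difference_pp(flesh, grain):
    """Mean paired difference (flesh minus grain) in percentage points."""
    if len(flesh) != len(grain):
        raise ValueError("scores must be paired per leather piece")
    return 100.0 * mean(f - g for f, g in zip(flesh, grain))


print("grain:", summarize(grain_scores))
print("flesh:", summarize(flesh_scores))
print("mean difference (pp):", round(mean_difference_pp(flesh_scores, grain_scores), 1))
```

A lower standard deviation on one side, as in this toy data, is what motivates giving that side more weight when the two scores are combined.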

The proposed workflow, illustrated in Figure 7, outlines the process for leveraging YOLOv11 to detect and classify defects in finished leather. The methodology begins with the collection and annotation of leather samples scanned on both the grain and flesh sides, ensuring comprehensive defect coverage. The annotated dataset is divided into training, validation, and test sets, with data augmentation applied to enhance model performance. Separate YOLOv11 models are trained for each side, taking advantage of the model’s advanced architecture for defect detection. Results from the two models are combined using a weighted scoring system, prioritizing defects that are more visible on the flesh side. Finally, evaluation metrics, including Accuracy, Precision, Recall, and F1-Score, are used to assess both the individual and combined model performance, demonstrating the scalability and industrial applicability of the approach.
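The dataset division step of the workflow can be sketched as a reproducible split by sample identifier. The 70/20/10 ratio, the seed, and the number of identifiers are assumptions made for illustration; the split actually used in the study is not stated in this section.

```python
import random


def split_dataset(sample_ids, train_pct=70, val_pct=20, seed=0):
    """Shuffle sample IDs reproducibly and split into train/val/test lists.

    Percentages are integers to avoid floating-point rounding surprises;
    whatever remains after train and val goes to the test set.
    """
    ids = list(sample_ids)
    random.Random(seed).shuffle(ids)
    n_train = len(ids) * train_pct // 100
    n_val = len(ids) * val_pct // 100
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]


# Hypothetical identifiers of scanned leather pieces.
piece_ids = [f"piece_{i:04d}" for i in range(600)]
train_ids, val_ids, test_ids = split_dataset(piece_ids)
print(len(train_ids), len(val_ids), len(test_ids))
```

Splitting by piece identifier rather than by image is one way to keep the grain-side and flesh-side scans of the same piece in the same subset, so that the two side-specific models are evaluated on matching pieces.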

5. Discussion

The results of this study demonstrate the effectiveness of using YOLOv11 for defect detection and classification on finished leather, especially when analyzing both the grain and flesh sides. The experiment confirms that defect detection on the flesh side yields a higher accuracy and reliability for certain defects, such as grubs (larval damage) and suckout (cut damage). This outcome supports the hypothesis that examining the flesh side can reveal defects otherwise difficult to detect on the grain side, providing a promising approach for optimizing leather utilization.

Table 6 provides a comparative analysis of various YOLO-based models applied to leather defect detection and classification, highlighting their methodologies, accuracies, advantages, and limitations.

Wang et al. [7] introduced the GEI-YOLOv9 model, which employed several innovative components, including the GhostNetV2 backbone to reduce redundant computations, EMA Attention for enhanced small-scale feature extraction, and the Inner-IOU loss function for faster and more accurate bounding box regression. These enhancements allowed the model to achieve a mean average precision (mAP) of 94.7% for defects such as bubbles, dents, and broken glue on the dataset provided by three leather manufacturers in the Pearl River Delta. Although the model demonstrated high precision and recall, its focus on the grain side limited its ability to detect subtle defects that are more prominent on the flesh side.

Wróbel and Szymczyk [9] utilized the YOLOv5 model for general defect detection. Their approach, which did not focus on categorizing specific defect types, achieved a mean average precision (mAP) of 95%. This simplicity made YOLOv5 an adaptable and computationally efficient choice for real-time applications. However, the lack of categorization in defect classes reduces its suitability for specific industrial requirements, where detailed classification is often necessary. The origin of the dataset in this study is not specified.

Chen et al. [6] conducted an extensive evaluation of YOLOv5, YOLOv6, YOLOv7, and YOLOv8 for detecting various leather defects, including pinholes, scratches, cavities, bacterial wounds, and creases. These models achieved up to an 85.1% accuracy for detecting multiple defect types, showcasing their reliability in diverse scenarios. However, the models faced challenges with detecting smaller defects or irregular shapes and lacked optimization for real-time industrial environments, which limited their applicability in high-speed inspection processes. The origin of the dataset used in this study is a leather production enterprise in Guangdong Province, China.

Thangakumar et al. [10] applied YOLOv8 for precise defect detection and segmentation in pre-finished leather materials. This model achieved a detection accuracy of 92%, demonstrating its effectiveness in handling complex defect shapes and edges. Its focus on pre-finished leather, however, restricted its direct applicability to finished leather inspection, which requires more nuanced detection capabilities.

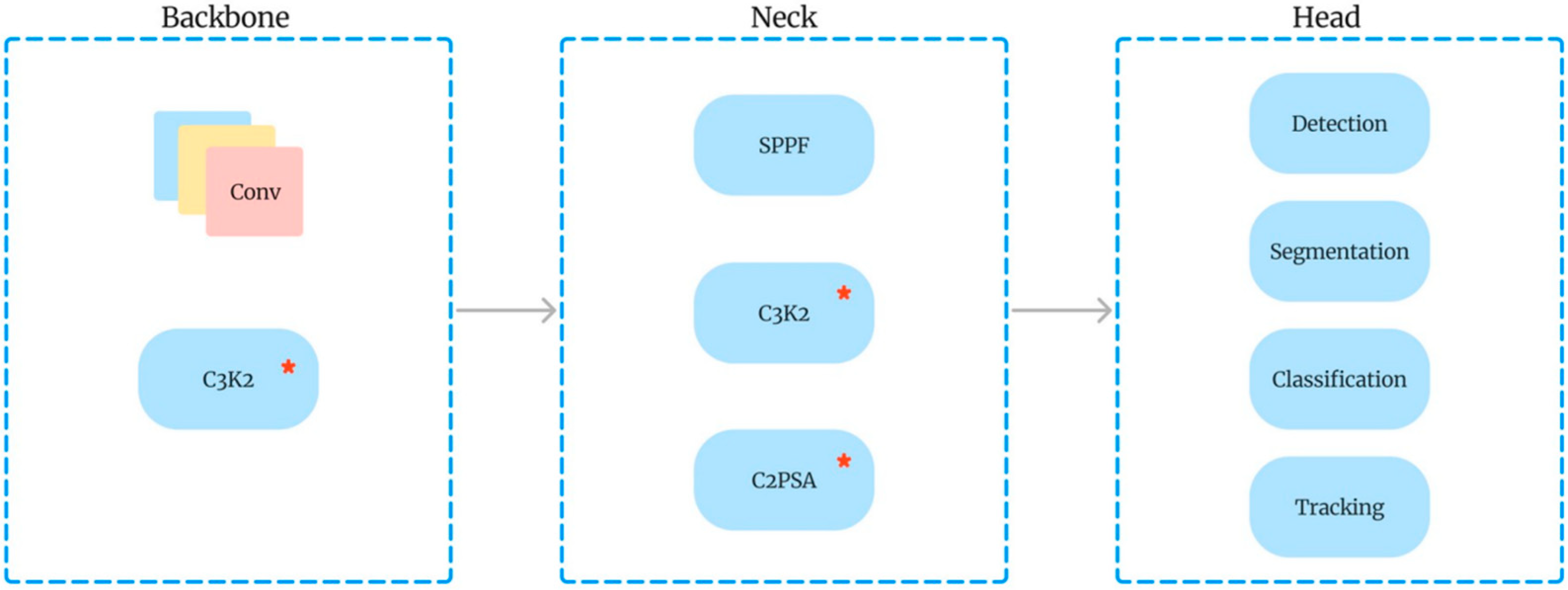

As illustrated in Table 6, the YOLOv11 model offers distinct advantages over earlier iterations, including YOLOv5, YOLOv6, YOLOv7, and YOLOv8. While prior models achieved accuracies of up to 92% for grain-side defect detection [9], YOLOv11 surpasses this with detection accuracies of 96.85% on the flesh side, owing to its novel C3k2 block architecture and optimized detection capabilities [12,13]. Additionally, YOLOv11’s multi-task output supports simultaneous detection, classification, and segmentation, making it a transformative tool for addressing the challenges of industrial defect inspection [10,11]. The earlier models, while achieving respectable accuracies, faced challenges with certain defect types, particularly those involving subtle surface features. The present approach addresses these challenges through several contributions: a dual-side analysis approach that enables detection on both the grain and flesh sides of the leather, a controlled digitization environment that ensures consistency and reduces external variability, and YOLOv11’s anchor-free detection mechanism, which improves both precision and speed.

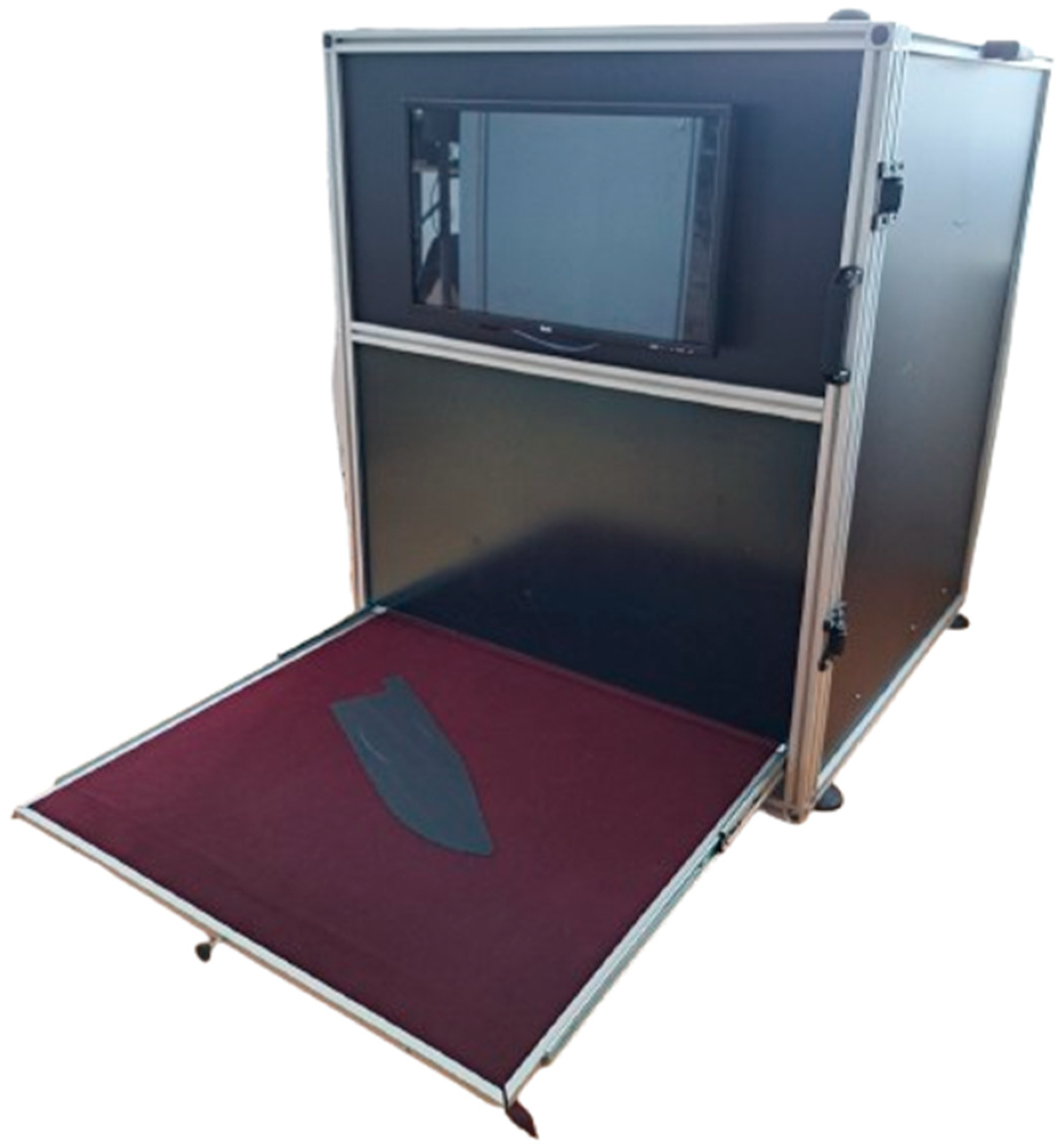

The results highlight YOLOv11’s superior performance, with detection accuracies of 93.5% and 91.8% for grubs and suckout defects on the flesh side, compared to the highest accuracy of 92% reported for YOLOv8 in earlier studies. Additionally, the introduction of a weighted scoring system allows YOLOv11 to leverage the unique strengths of each leather side, offering a tailored and adaptable solution for industrial applications. Unlike prior models, YOLOv11 also incorporates multi-task capabilities, enabling simultaneous detection, classification, and segmentation, further enhancing its utility. These advancements collectively position YOLOv11 as a state-of-the-art model, setting a new benchmark for automated leather defect detection and addressing the limitations of previous approaches.

The specialized environmental setup, consisting of a light chamber equipped with a 20.3-megapixel industrial camera, played a crucial role in enhancing the detection accuracy by minimizing variables like lighting inconsistencies and dust interference, which are common in industrial environments. This controlled setting proved essential for achieving consistent, reliable defect detection, indicating that adopting similar environments could be beneficial in industrial applications.

The two-class weighted scoring system introduced here also proved advantageous, particularly when detection on one side provided better visibility or detail than the other. By assigning greater weight to the flesh side for specific defect types, this scoring method capitalized on the flesh side’s higher and more consistent detection scores, as shown by an average score difference of 28.7% for grubs and 18.2% for suckout. This approach highlights the potential of side-specific detection, where each side of the leather can provide unique data, refining the classification process and potentially reducing waste through more precise grading.
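One possible form of such a weighted scoring rule is a per-class convex combination of the two side-specific confidence scores. The sketch below is an assumption made for illustration: the weights, the decision threshold, and the linear fusion rule itself are hypothetical and are not the values or the exact formula used in this study.

```python
# Hypothetical weight given to the flesh-side score for each defect class;
# the grain-side score receives the complementary weight (1 - w).
FLESH_WEIGHT = {"grubs": 0.7, "suckout": 0.6}


def fuse_scores(defect_class, grain_score, flesh_score, flesh_weight=FLESH_WEIGHT):
    """Weighted average of grain-side and flesh-side confidence scores."""
    w = flesh_weight[defect_class]
    return w * flesh_score + (1.0 - w) * grain_score


def is_defect(defect_class, grain_score, flesh_score, threshold=0.5):
    """Flag a defect when the fused score reaches the (assumed) threshold."""
    return fuse_scores(defect_class, grain_score, flesh_score) >= threshold


# A grubs candidate that is weak on the grain side but strong on the flesh side.
print(fuse_scores("grubs", grain_score=0.4, flesh_score=0.9))
print(is_defect("grubs", grain_score=0.4, flesh_score=0.9))
```

With weights above 0.5, a confident flesh-side detection can outweigh an uncertain grain-side one, which matches the intent of prioritizing the side on which a defect class is more visible.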

The increased precision and recall scores on the flesh side underscore the model’s capability to reduce false positives and false negatives, two critical aspects of automated leather inspection. With precision increasing from 0.87 to 0.92 and recall from 0.84 to 0.94 on the flesh side, these improvements suggest that incorporating flesh-side analysis can significantly reduce inspection errors. Such accuracy has substantial implications for real-world applications, potentially allowing manufacturers to meet stricter quality standards and minimize waste due to misclassification or overlooked defects.

The low detection and classification results on the grain side for grubs and suckout defects are primarily due to the smooth surface texture, which makes subtle defects difficult to discern. The grain side often exhibits surface patterns and glare that can obscure critical features of these defects. For instance, grubs, caused by larval damage, are characterized by uniform circular or elliptical erosion, but these features are often masked by the grain side’s reflective properties. Similarly, suckout defects, which typically appear as linear separations, are less distinguishable against the grain side’s complex texture.

On the flesh side, these defects are much more evident due to its rough texture, which provides better contrast and visibility. Grubs defects appear as clusters of circular or elliptical patterns, while suckout defects are visible as distinct tears or splits, making them easier to classify. These observations, illustrated in Figure 2 and Figure 3, highlight the physical advantages of the flesh side in providing clearer visual cues for defect detection. Additionally, the controlled digitization environment used in this study further enhances the visibility of these features by minimizing glare and ensuring uniform lighting, enabling the YOLOv11 model to achieve a significantly higher detection accuracy on the flesh side.

For this reason, the application of YOLOv11 achieved relatively lower results on the grain side for grubs and suckout defects compared to other studies using YOLO models for different defect classes. However, by applying YOLOv11 to the flesh side, we achieved higher results than previously reported in the literature.

It is essential to recognize that not all defect types may benefit from dual-side analysis. Certain surface characteristics unique to the grain side may not manifest clearly on the flesh side, warranting further research to assess the applicability of this approach across all defect types. Thus, while dual-side analysis shows promise, expanding the classification system to accommodate a wider range of leather defect profiles could enhance its robustness.

The results presented in this study highlight the substantial practical benefits of employing YOLOv11 in the finished leather industry. The proposed approach addresses key challenges in defect detection and classification, offering several advancements that directly enhance operational efficiency, quality control, and sustainability in industrial leather production.

One of the most significant contributions is the high detection accuracy achieved by YOLOv11, particularly on the flesh side of leather, where subtle defects such as suckout and grubs are better detected. With detection accuracies of 91.8% for suckout and 93.5% for grubs, YOLOv11 surpasses traditional manual inspection methods, which typically achieve accuracies of 70–85%. This improved accuracy translates directly to optimized leather utilization, as accurate defect identification allows manufacturers to minimize waste and maximize the usable area of each hide, ultimately improving profitability.

The introduction of dual-side analysis represents another major innovation. Unlike prior approaches that are predominantly focused on the grain side, this study demonstrates the importance of leveraging the flesh side, where certain defects are more visible. By integrating this dual-side methodology, YOLOv11 enhances the comprehensiveness of leather quality inspections.

Furthermore, the system ensures consistency and scalability by automating the inspection process. Automation eliminates human error and ensures uniform defect detection across large-scale production batches, which is crucial for meeting industrial demands. The study also introduces a specialized digitization chamber with controlled lighting and dust elimination, enabling YOLOv11 to operate effectively under real-world conditions. This adaptability ensures the model’s suitability for diverse manufacturing environments.

While the practical benefits of YOLOv11 are clear, potential challenges for implementation must also be acknowledged. Initial investment costs, including the acquisition of digitization chambers, industrial cameras, and computing infrastructure, may pose a barrier for smaller manufacturers. Additionally, the data preparation required for training the models, such as extensive and precise defect annotation, is both time-intensive and technically demanding. Furthermore, while YOLOv11 performs exceptionally well for certain defects, such as those on the flesh side, its effectiveness for certain grain-side defects may require further refinement or supplementary approaches. Finally, integration into existing workflows necessitates staff training and system adaptation, which could introduce temporary delays and additional costs.

Despite these challenges, the findings of this study underscore the transformative potential of YOLOv11 for the finished leather industry. By automating defect detection with unprecedented accuracy and scalability, this approach offers a pathway to enhance productivity, reduce costs, and support sustainability in leather manufacturing.

The results of this study firmly establish YOLOv11 as a robust solution for industrial defect detection, achieving a balanced integration of accuracy and speed. The dual-side analysis approach, coupled with a controlled digitization chamber, represents a significant advancement in detecting subtle defects such as grubs and suckout [6,8]. By enabling precise defect identification and reducing leather waste, this methodology not only enhances operational efficiency but also supports broader sustainability goals [7]. Future work could explore expanding this approach to a wider range of defect types and other industrial applications, reinforcing its potential for a transformative impact across various sectors [10,13].

Recent advances in deep learning and computer vision, as exemplified by YOLOv11, offer a pathway to streamline leather inspection processes, reduce human error, and improve leather utilization. Future studies could expand the range of detectable defects, validate this approach across larger datasets, and adapt the methodology to other stages of leather processing. Practical considerations for industrial applications should also be taken into account, as both sides of the leather would need to be digitized, possibly simultaneously, to avoid errors. A potential solution could involve using a transparent surface for dual-side digitization, although this would require entirely new studies focused on the development of appropriate hardware and environments for digitization.

The results demonstrate that a dataset of approximately 300 samples per defect provides sufficient variability for YOLOv11 to achieve high accuracy levels. Combined with the dual-side analysis and controlled digitization environment, this threshold ensures robust performance across diverse defect types, addressing the inconsistencies noted in prior studies.

6. Conclusions

This work is the result of an experimental study within a scientific innovation project. For the purposes of the experiment, dedicated hardware was developed, and the latest technology of the YOLO model series (YOLOv11) was used for defect recognition and localization on the grain and flesh side of industrial leather. Experimental validation confirmed that the application of YOLO on the flesh side of industrial leather led to the improved detection and classification of defects whose damage is more evident on the flesh side of industrial leather. For the experiment, we used the grubs defect, which is caused by damage inflicted by larvae on the flesh side of industrial leather, and suckout, which results from cuts during the separation of the hide from the animal’s body. The data used for training and validating the experiment were more than sufficient to achieve an appropriate accuracy in detection and classification.

In this study, we addressed critical challenges in leather defect detection and classification by offering novel methodologies and practical solutions tailored to industrial applications. The key contributions of this work are summarized as follows:

Dual-side analysis approach: This study introduces a novel dual-side analysis method, emphasizing defect detection on both the grain and flesh sides of leather. This approach uniquely highlights defects such as grubs and suckout, which are more discernible on the flesh side, improving overall detection rates.

Controlled digitization chamber: A dedicated digitization environment was developed to standardize imaging conditions by controlling variables such as lighting and dust. This innovation ensures consistency and reliability in defect detection, making the methodology robust for industrial-scale applications.

Application of YOLOv11’s advanced features: The study leverages YOLOv11’s enhanced capabilities, including anchor-free detection and multi-task learning for simultaneous detection, classification, and segmentation. These features enable the model to achieve superior accuracy and efficiency, surpassing previous YOLO models and other traditional methods.

Improved detection accuracy and scalability: The proposed approach demonstrates significant improvements in detection accuracy, achieving high performance with a dataset of approximately 300 samples per defect, providing a scalable and practical solution for industrial adoption.

Contribution to sustainability: By automating the quality control process and reducing leather waste through accurate defect detection, this study supports sustainability goals within the leather manufacturing industry.

The primary limitation of this study lies in its inapplicability to other types of defects commonly observed in industrial leather, particularly those that are either absent or minimally visible on the flesh side of the leather. Certain defects on the grain side exhibit unique surface characteristics that do not translate effectively to the flesh side, thereby limiting the scope of the current dual-side analysis. To fully address these challenges and evaluate the broader applicability of the YOLOv11 model, additional experimental studies would be required. Such studies should focus on individual defect types, assessing their distinct characteristics and determining the effectiveness of the YOLOv11 model in automating defect detection across a comprehensive range of industrial leather imperfections. These future investigations would provide a more robust understanding of the model’s potential for universal application in the industrial leather inspection process.

It has been demonstrated that YOLOv11 is highly successful in detecting and classifying defects, even for those that are difficult to classify due to similar manifestations on the surface. By applying a controlled environment in the form of a digitization chamber and using the YOLO model on data obtained in controlled conditions, we have shown that it is possible to implement computer vision in industrial settings, creating the potential to overcome manual labor and reduce leather waste by optimizing utilization in the pre-cutting process of finished leather hides. Furthermore, the results underscore the importance of considering the physical properties of the leather’s grain and flesh sides when developing automated defect detection systems. The superior detection accuracy on the flesh side highlights how surface texture and defect visibility can significantly impact model performance.

This study demonstrates how controlled lighting, optimized imaging conditions, and side-specific analysis can provide scalable solutions for improving quality control in the leather industry. The ability to accurately classify defects in an industrial environment contributes to the possibility of assessing tolerance and meeting different customer standards, which can significantly enhance utilization optimization.

This study highlights the practical implications of applying YOLOv11 in the finished leather industry. The model’s superior defect detection and classification accuracy, combined with its adaptability to industrial conditions, addresses long-standing challenges in quality control. These advancements offer manufacturers a pathway to enhance operational efficiency, reduce waste, and support sustainability, while also emphasizing the importance of adopting advanced AI models for automated quality inspection.