1. Introduction

Artificial intelligence (AI) has emerged as a transformative force in modern healthcare, particularly through its integration into clinical decision support systems (CDSSs) [1,2]. CDSSs are computational tools designed to assist clinicians in making data-driven decisions by providing evidence-based insights derived from patient data, medical literature, clinical guidelines, and real-time health analytics [3]. These systems aim to increase diagnostic accuracy, improve patient outcomes, and reduce medical errors [4]. With the advent of AI, particularly machine learning (ML) and deep learning (DL) techniques, CDSSs have become more powerful, capable of uncovering complex patterns in vast datasets and delivering predictive and prescriptive analytics with unprecedented speed and precision [5,6].

However, despite these advancements, a critical barrier to the widespread adoption of AI in healthcare is the lack of transparency and interpretability in model decision-making processes [7,8]. Many AI models, especially deep neural networks, operate as “black boxes,” as they provide predictions or classifications without offering clear explanations for their outputs [9]. In high-stakes domains such as medicine, in which clinicians must justify their decisions and ensure patient safety, this opacity is a significant drawback. Physicians are understandably reluctant to rely on recommendations from systems they do not fully understand, especially when these decisions impact patients’ lives. This has led to increasing demand for explainable AI (XAI), a subfield of AI that focuses on creating models with behavior and predictions that are understandable and trustworthy to human users [10,11].

Explainable AI aims to make AI systems more transparent, interpretable, and accountable. It encompasses a wide range of techniques, including model-agnostic methods like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations), as well as model-specific approaches such as decision trees, attention mechanisms, and saliency maps such as Grad-CAM [12,13]. These methods are designed to provide insights into which features influence a model’s decision, how sensitive the model is to input variations, and how the trustworthiness of its predictions varies across contexts [14]. The goal is not only to satisfy regulatory and ethical requirements but also to foster human–AI collaboration by improving the understanding and confidence of clinicians in AI-driven tools [15,16].

The importance of XAI in healthcare cannot be overstated. Regulatory bodies such as the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA) are increasingly emphasizing the need for transparency and accountability in AI-based medical devices [17,18]. Explainability is also central to the ethical principles of AI, including fairness, accountability, and transparency (FAT) [19,20]. In clinical settings, explainability supports informed consent, shared decision making, and the ability to contest or audit algorithmic decisions. Furthermore, explainability can improve model debugging and development, helping researchers and engineers identify biases, data quality issues, and unintended outcomes [21].

CDSSs that incorporate XAI can provide multiple benefits. For example, in diagnostic imaging, XAI can highlight specific regions of interest on radiographs or MRIs that contribute to a diagnosis, allowing radiologists to verify and validate the model’s conclusions [22,23]. In predictive analytics, such as prediction of sepsis or risk of readmission to the ICU, XAI methods can identify key contributing factors such as vital signs, laboratory values, and patient history. This not only aids in clinical interpretation but also aligns AI recommendations with clinical reasoning, thereby increasing user trust and adoption [24,25].

Despite these advantages, implementing XAI in healthcare presents several challenges. One major issue is the trade-off between model accuracy and interpretability. Simpler models such as logistic regression and decision trees are easier to explain but may lack the predictive power of complex neural networks [26,27]. Conversely, methods used to explain black-box models can introduce approximation errors or oversimplify prediction reasoning [28]. Another challenge is the lack of standardized metrics to evaluate the quality and usefulness of explanations. What is considered a good explanation depends on the clinical context, the user’s expertise, and the decision at hand [29,30].

Moreover, there is a need for user-centered design in the development of XAI systems. Clinicians have different needs and cognitive styles, and not all explanations are equally meaningful or useful for every user [31,32]. Effective XAI must be tailored to the target audience, be it clinicians, patients, regulators, or developers. This includes considerations of how explanations are presented (visual, textual, or interactive), the granularity of detail, and the timing of explanation delivery. Research on human–computer interaction (HCI) and cognitive psychology plays a vital role in informing these design choices [33].

Another consideration is the real-world integration of XAI into clinical workflows. Many studies on XAI in healthcare remain in the proof-of-concept stage or are only tested on retrospective datasets [34,35]. To be truly impactful, XAI-enabled CDSSs must be validated in prospective clinical trials, tested across diverse populations, and embedded into electronic health record (EHR) systems while minimizing disruption to clinician workflows. Scalability, data interoperability, and usability are critical factors that determine whether these systems can transition from research prototypes to clinical tools [36,37].

Figure 1 illustrates how recent studies on XAI methods have led to novel techniques that go beyond feature attribution. These include counterfactual explanations (what minimal changes in input would alter the outcome), concept-based explanations (how high-level clinical concepts influence decisions), and causal inference approaches (identifying causal relationships rather than mere correlations). These methods offer richer, more intuitive forms of explanation and have the potential to align more closely with clinical reasoning processes [38].

The scope of XAI in CDSSs extends across various medical domains. In oncology, XAI has been used to explain predictions of tumor malignancy, treatment response, and survival outcomes [5]. In cardiology, XAI models help interpret electrocardiograms (ECGs), assess heart failure risk, and guide interventions. In neurology, explainable models are applied to detect and monitor neurodegenerative diseases using multimodal data [39]. In primary care, XAI supports decision making in chronic disease management, preventive care, and personalized treatment planning. Each of these applications demonstrates the growing importance of XAI in ensuring that AI-driven insights are actionable, safe, and aligned with clinical values [40].

Explainable AI represents a critical advancement in the application of AI to clinical decision support. It addresses the fundamental need for transparency and interpretability in medical AI, fostering trust, accountability, and ethical integrity [10,41]. As healthcare continues to embrace data-driven decision making, the integration of XAI into CDSSs will be essential to achieve responsible and effective adoption of AI [42]. This systematic review aims to provide a comprehensive overview of current XAI techniques in CDSSs, analyze their effectiveness and limitations, and outline the challenges and opportunities for future research and clinical use. It also aims to inform clinicians, developers, policymakers, and researchers about the state of XAI in healthcare, and to contribute to the development of more transparent, trustworthy, and human-centered AI systems.

The growing complexity of modern healthcare data, from EHRs and wearable sensor outputs to medical imaging and genomics, demands advanced analytical tools [43]. AI algorithms, particularly those driven by DL, can extract meaningful insights from these high-dimensional data sources. Yet without transparency in how these insights are generated, clinicians may question their reliability. Explainable AI offers a promising approach to translating complex computational decisions into human-understandable forms, ultimately enhancing both diagnostic confidence and patient safety [33,44].

Furthermore, the demand for XAI is not just a technical requirement but also a legal and ethical necessity. Regulatory frameworks, such as the European Union’s General Data Protection Regulation (GDPR), emphasize the “right to explanation,” reinforcing the need for AI decisions to be auditable and comprehensible. In clinical settings, this ensures that AI-supported decisions remain subject to human oversight and accountability. As AI continues to evolve, the emphasis on explainability will be pivotal for its responsible and sustainable integration into routine clinical workflows [45,46].

In clinical practice, the ability to inspect or trace the logic behind an AI recommendation is not just a matter of trust but also a core patient-safety safeguard. Logic verification enables pre-procedural checks (e.g., catching data/ordering errors), intra-procedural monitoring (e.g., detecting unexpected model behavior), and post-procedural auditing (e.g., root-cause analysis when outcomes diverge).

Table 1 provides a comprehensive overview of the XAI techniques used in CDSSs. It summarizes key studies by listing the specific XAI methods employed (e.g., SHAP, LIME, and Grad-CAM), their application domains, the AI models utilized, and the types of datasets used. Additionally, the table presents the main results of each study and how their interpretability was evaluated, providing insight into the diversity and practical impact of XAI in healthcare.

1.1. Scope and Purpose

This systematic review aims to provide a comprehensive understanding of the current applications, methods, and challenges of implementing explainable AI in CDSSs. The review encompasses a diverse range of medical domains and AI model types to evaluate XAI adoption and its impact on clinical decision making. The scope includes studies from 2018 to 2025 that implemented XAI in CDSSs using various techniques across diagnostic, prognostic, and treatment-planning applications. The purpose is to synthesize the literature, identify best practices and limitations, and outline future research directions.

1.2. Objectives

Identify and categorize XAI techniques used in CDSSs;

Report and map the clinical domains and applications of XAI-CDSSs;

Evaluate the effectiveness/usability of XAI outputs in clinical settings.

1.3. Contributions of This Review

Presents a structured synthesis of recent XAI applications in CDSSs, offering a panoramic view across domains;

Provides a taxonomy of XAI techniques tailored to healthcare applications;

Highlights emerging trends and innovations in explainability, such as counterfactuals and concept-based reasoning;

Analyzes the alignment between XAI outputs and clinician expectations in practical settings;

Offers actionable insights for developers, policymakers, and healthcare providers aiming to implement ethical and trustworthy AI systems;

Discusses limitations, barriers, and future priorities in the Discussion and Future Work sections rather than framing them as primary objectives.

1.4. Significance

The findings of this review contribute to the academic and clinical discourse on transparent AI by elucidating how explainability can bridge the gap between algorithmic intelligence and human expertise. They also guide policy, standards, and the development of future XAI-CDSS models that prioritize safety, equity, and usability.

2. Methods

This systematic review was designed following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines. Figure 2 shows the resulting flow, detailing the identification, screening, eligibility, and inclusion phases. The methodology emphasizes structured data extraction, critical appraisal, and explicit inclusion criteria to ensure transparency, reproducibility, and methodological rigor. The objective was to critically assess the literature on the application of XAI techniques in CDSSs, focusing on clinical utility, interpretability, integration challenges, and evaluation metrics.

2.1. Search Strategy

A comprehensive literature search was conducted using four primary scientific databases: PubMed, IEEE Xplore, Scopus, and Web of Science. The search spanned from January 2018 to May 2025. Boolean combinations of keywords were employed to maximize precision and recall:

(“Explainable AI” OR “XAI” OR “interpretable ML” OR “explainable ML”)

AND (“clinical decision support” OR “CDSS” OR “healthcare AI” OR “medical diagnosis”)

AND (“transparency” OR “interpretability” OR “explanation” OR “black-box” OR “white-box”)

Database-specific adaptations were applied, including MeSH terms for PubMed and informatics filters for IEEE. The reference lists of relevant studies were also manually reviewed.
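The three keyword blocks above can be assembled programmatically, which makes it easier to keep the query identical across databases before any database-specific adaptation. The following is a minimal sketch, not the authors' tooling: the names `CONCEPT_BLOCKS` and `build_query` are hypothetical, and straight quotes are used in place of the typographic quotes shown in the text.

```python
# Keyword blocks as quoted in the search strategy.
# Terms inside a block are combined with OR; blocks are combined with AND.
CONCEPT_BLOCKS = [
    ["Explainable AI", "XAI", "interpretable ML", "explainable ML"],
    ["clinical decision support", "CDSS", "healthcare AI", "medical diagnosis"],
    ["transparency", "interpretability", "explanation", "black-box", "white-box"],
]


def build_query(blocks: list[list[str]]) -> str:
    """Quote each term, join terms with OR inside a block, then join blocks with AND."""
    groups = ["(" + " OR ".join(f'"{term}"' for term in block) + ")" for block in blocks]
    return " AND ".join(groups)


query = build_query(CONCEPT_BLOCKS)
print(query)
```

Database-specific syntax (for example, MeSH terms for PubMed) would still need to be added by hand; this sketch only reproduces the generic Boolean string.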

2.2. Eligibility Criteria

Inclusion Criteria:

Peer-reviewed primary studies published in English;

Studies applying XAI techniques to real-world clinical data or simulations for CDSSs;

Studies evaluating interpretability, transparency, usability, or trust in AI models;

Applications in diagnosis, prognosis, treatment recommendation, or risk prediction.

Exclusion Criteria:

Reviews, meta-analyses, editorials, or opinion articles;

Studies without implementation or evaluation of an XAI method;

Non-healthcare domains or purely theoretical/methodological papers;

Non-peer-reviewed preprints.

2.3. Study Selection Process

Table 2 summarizes the study selection process. The initial search returned 1824 records. After removing 312 duplicates, 1512 records were screened. The full texts of 182 articles were assessed for eligibility, with 62 included in the final analysis. Disagreements were resolved by consensus or a third reviewer.
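The reported counts can be checked with simple arithmetic. The sketch below uses only the numbers stated in this paragraph; the two derived exclusion counts are not reported in the text and assume that every screened record not assessed in full text was excluded at the title/abstract stage.

```python
# Counts reported in the study selection paragraph.
records_identified = 1824
duplicates_removed = 312
full_text_assessed = 182
studies_included = 62

# Records remaining after de-duplication (should match the reported 1512).
records_screened = records_identified - duplicates_removed

# Derived values (assumption: no other exit points between the stages).
excluded_at_screening = records_screened - full_text_assessed
excluded_at_full_text = full_text_assessed - studies_included

print(f"Screened: {records_screened}")
print(f"Excluded at title/abstract screening: {excluded_at_screening}")
print(f"Excluded at full-text review: {excluded_at_full_text}")
```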

2.4. Data Extraction and Items

Table 3 summarizes the structured data fields used to extract relevant information from each included study. It ensured consistency in analyzing bibliographic details, clinical focus, AI methods, XAI techniques, dataset types, evaluation metrics, and real-world applicability. Data were extracted using a standardized Excel form. Included items are listed below.

2.5. Quality Assessment Criteria

We used a 10-point checklist adapted from CONSORT-AI and STARD-AI, as follows:

- 1. Clear clinical objective;
- 2. Dataset and source description;
- 3. Transparent model architecture;
- 4. Implementation of XAI method;
- 5. Justification for XAI technique choice;
- 6. Validation method reported;
- 7. Evaluation of explanation fidelity;
- 8. Clinician or end-user involvement;
- 9. Reporting of limitations and bias;
- 10. Reproducibility (code/data shared).

Studies scoring below 5 were excluded due to quality concerns.
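The scoring rule can be sketched as follows. This is an illustration under a stated assumption: the text does not say how individual items were scored, so the sketch assumes one point per checklist item that is met. The function names `quality_score` and `is_included` are hypothetical.

```python
# The ten checklist items listed above.
CHECKLIST_ITEMS = [
    "Clear clinical objective",
    "Dataset and source description",
    "Transparent model architecture",
    "Implementation of XAI method",
    "Justification for XAI technique choice",
    "Validation method reported",
    "Evaluation of explanation fidelity",
    "Clinician or end-user involvement",
    "Reporting of limitations and bias",
    "Reproducibility (code/data shared)",
]
MIN_SCORE = 5  # studies scoring below 5 were excluded


def quality_score(items_met: dict[str, bool]) -> int:
    """Assumed scoring: one point for each checklist item marked as met."""
    return sum(1 for item in CHECKLIST_ITEMS if items_met.get(item, False))


def is_included(items_met: dict[str, bool]) -> bool:
    """A study passes the quality filter when its score reaches MIN_SCORE."""
    return quality_score(items_met) >= MIN_SCORE


# Hypothetical studies: one meets four items, the other meets five.
study_a = {item: True for item in CHECKLIST_ITEMS[:4]}
study_b = {item: True for item in CHECKLIST_ITEMS[:5]}
print(quality_score(study_a), is_included(study_a))
print(quality_score(study_b), is_included(study_b))
```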

2.6. Data Synthesis Approach

Due to heterogeneity in clinical tasks, data modalities, and reported outcome metrics, a conventional effect-size meta-analysis was not performed. Instead, we performed a text-only quantitative synthesis of study-level proportions with 95% binomial confidence intervals (Wilson method). The a priori targets were (i) use of formal statistical tests, (ii) reporting of confidence intervals, (iii) adoption of explanation–evaluation metrics (fidelity, consistency, localization, and human trust), and (iv) documented clinician involvement. All estimates are reported narratively at first mention in the Results; no additional tables or figures were produced. A narrative synthesis was used to group studies by

XAI technique: model-agnostic (e.g., SHAP), model-specific (e.g., Grad-CAM), or hybrid;

Clinical use case: imaging, EHR-based prognosis, genomics, or multimodal AI;

Evaluation outcome: interpretability effectiveness, clinician trust, and usability.

We employed descriptive statistics, frequency tables, and thematic clustering to present the findings.
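For readers unfamiliar with the Wilson method named above, the following minimal sketch computes a 95% Wilson score interval for a study-level proportion. It is an illustration rather than the authors' analysis code; the function name `wilson_interval` is hypothetical, and the example uses the 18 of 62 studies that this review reports as documenting clinician involvement.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.959964) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.959964 gives 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p_hat = successes / total
    denom = 1 + z**2 / total
    center = (p_hat + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / total + z**2 / (4 * total**2)) / denom
    return center - half_width, center + half_width


# Example: 18 of 62 studies.
low, high = wilson_interval(18, 62)
print(f"proportion = {18 / 62:.3f}, 95% CI = ({low:.3f}, {high:.3f})")
```

Unlike the simpler normal-approximation interval, the Wilson interval stays within [0, 1] and behaves better for small counts, which matters for categories reported by only a handful of studies.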

Software: Rayyan 1.6.1 (screening), Excel version 2406 (extraction), Python 3.11.5 (analytics), LaTeX 2024 (reporting).

3. Results and Analysis

This section presents the comprehensive results of the systematic review based on the 62 selected studies that met the inclusion criteria. The analysis was structured across seven major dimensions: XAI technique distribution, clinical domain representation, AI model architectures, evaluation metrics, clinical usability and integration, emerging trends, and research gaps. This section provides quantitative insights and critical narrative interpretation.

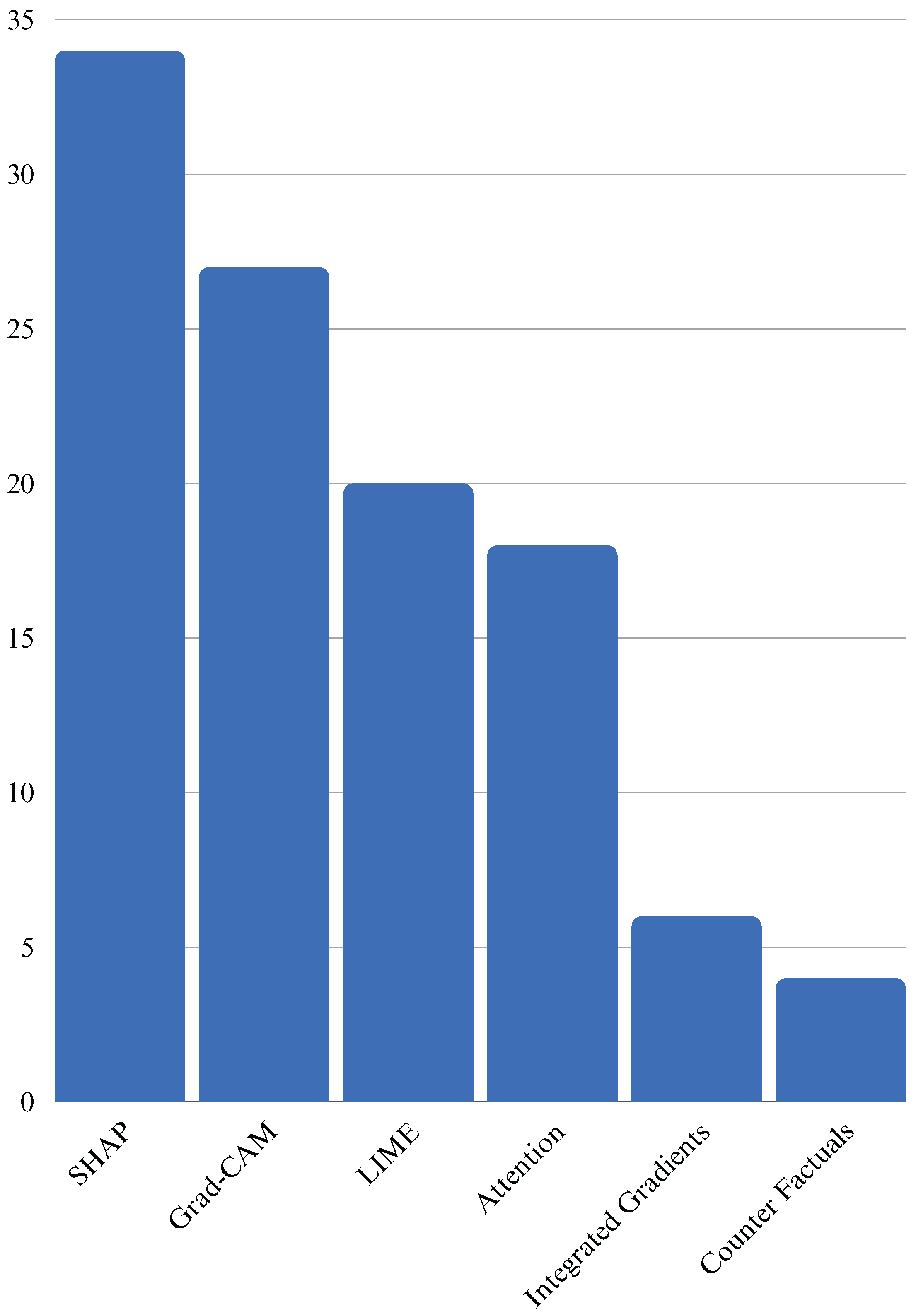

3.1. Distribution by XAI Technique

XAI methods form the core of transparent decision making in CDSSs. In our systematic review, we analyzed 62 peer-reviewed studies that integrated XAI techniques into clinical workflows or decision algorithms. The goal was to assess the breadth, frequency, and contextual application of these techniques across clinical domains and model types.

Table 4 presents the full dataset of included studies, allowing direct comparison between XAI techniques, clinical fields, and AI models. Each entry is traceable through its reference, enabling further exploration of the source literature.

The landscape of XAI methods can be broadly categorized into model-agnostic and model-specific approaches. Model-agnostic methods such as SHAP and LIME do not require access to the model’s internal structure and can be applied post-hoc. In contrast, model-specific approaches such as Grad-CAM, attention mechanisms, and Integrated Gradients are tailored to deep neural network architectures and require access to gradients or attention weights [106].

Among the reviewed studies, the most frequently employed XAI technique was SHAP, which utilizes Shapley values derived from cooperative game theory to assign contributions to each feature involved in the prediction. SHAP is valued for generating both local and global explanations and for its applicability to tree-based models such as XGBoost and random forests [106,107]. LIME emerged as another popular method, known for creating local surrogate models that approximate the behavior of complex models for individual predictions. Its simplicity and ability to visualize feature importance for binary classifiers make it suitable for tabular EHR data [50,55].
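To make the Shapley-value idea concrete, the sketch below computes exact Shapley values for a tiny model by enumerating all feature coalitions. It is an illustration under stated assumptions, not the SHAP library's implementation (which relies on faster approximations): features absent from a coalition are replaced by a fixed baseline value, and the model `toy_risk`, its coefficients, and the example patient are all hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values; absent features are set to their baseline value (assumption)."""
    n = len(instance)

    def value(coalition):
        # Features in the coalition take the instance's value, the rest the baseline's.
        mixed = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                phi[i] += weight * (value(members | {i}) - value(members))
    return phi


def toy_risk(x):
    """Hypothetical risk score with one interaction term (not a clinical model)."""
    heart_rate, lactate, age = x
    return 0.02 * heart_rate + 0.5 * lactate + 0.01 * age + 0.1 * lactate * (heart_rate > 100)


patient = [120, 4.0, 70]    # hypothetical patient
reference = [80, 1.0, 50]   # hypothetical baseline patient
phi = shapley_values(toy_risk, patient, reference)
print(phi, sum(phi), toy_risk(patient) - toy_risk(reference))
```

The contributions sum to the difference between the patient's prediction and the baseline prediction, which is the additivity property that makes SHAP outputs easy to present as per-feature bars. Exact enumeration scales exponentially with the number of features, so it is only practical for very small examples.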

Grad-CAM was widely used in radiology and pathology tasks. It produces class-specific activation heatmaps that visually indicate the regions of the input image most influential to the prediction. This visual modality is particularly helpful for explaining CNN decisions to medical professionals [47,108,109].
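The core Grad-CAM computation is short once the feature maps and gradients of a convolutional layer are available. The sketch below assumes those two arrays have already been extracted from a trained CNN by some framework-specific hook (not shown); the function name `grad_cam` and the toy arrays are hypothetical.

```python
import numpy as np


def grad_cam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Grad-CAM heatmap from one conv layer.

    activations: feature maps, shape (channels, height, width)
    gradients:   gradient of the class score w.r.t. those maps, same shape
    """
    # One importance weight per channel: global average of its gradients.
    weights = gradients.mean(axis=(1, 2))
    # Weighted sum of the feature maps over channels -> (height, width).
    cam = np.tensordot(weights, activations, axes=1)
    # ReLU keeps only regions with a positive influence on the class score.
    cam = np.maximum(cam, 0.0)
    # Normalize to [0, 1] for display when the map is not all zeros.
    if cam.max() > 0:
        cam = cam / cam.max()
    return cam


# Toy example: two 2x2 feature maps with opposite gradient signs.
toy_activations = np.zeros((2, 2, 2))
toy_activations[0, 0, 0] = 1.0   # channel 0 fires at the top-left pixel
toy_activations[1, 1, 1] = 1.0   # channel 1 fires at the bottom-right pixel
toy_gradients = np.stack([np.ones((2, 2)), -np.ones((2, 2))])
heatmap = grad_cam(toy_activations, toy_gradients)
print(heatmap)
```

In practice the low-resolution heatmap is upsampled to the input image size and overlaid on the scan so that a radiologist or pathologist can compare it with the region they would expect to matter.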

Attention mechanisms, commonly employed in sequence models such as RNNs or transformers, highlight temporal dependencies and key segments of sequential data (e.g., patient history or ECG signals). These mechanisms inherently offer interpretability by design, although their outputs can sometimes be opaque without supplementary visualization [67,110,111].
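As a rough illustration of what is being visualized, the sketch below computes scaled dot-product attention weights for a single query over a short sequence. It is a generic, simplified example rather than any reviewed study's model; the function name `attention_weights` and the toy vectors are hypothetical.

```python
import math


def attention_weights(query: list[float], keys: list[list[float]]) -> list[float]:
    """Scaled dot-product attention weights of one query over a sequence of keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    # Subtract the maximum score before exponentiating for numerical stability.
    max_score = max(scores)
    exps = [math.exp(s - max_score) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical encodings of three time steps (e.g., three segments of a signal).
toy_keys = [[0.1, 0.0], [2.0, 0.0], [0.0, 1.0]]
toy_query = [1.0, 0.0]
weights = attention_weights(toy_query, toy_keys)
print(weights)
```

The weights are non-negative and sum to one, so they can be plotted over the time axis to show which segments the model attended to. A high weight shows where the model looked, not necessarily why the prediction was made, which is one reason supplementary visualization or abstraction is often needed.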

Counterfactual explanations presented a unique approach by generating hypothetical scenarios that would alter a model’s prediction. This technique has been especially promising in treatment planning and personalized medicine, offering insight into “what-if” scenarios for actionable interventions. Concept-based explanations (n = 4) attempted to align learned representations with human-recognizable clinical concepts (e.g., visual patterns or lab markers) [112].
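For a linear (logistic) model, the smallest change that flips a prediction has a closed form, which makes it a convenient way to illustrate the counterfactual idea. The sketch below is a simplified example under stated assumptions: the weights, bias, and patient values are hypothetical, all features are treated as freely changeable, and distance is measured in plain Euclidean terms. Real counterfactual methods add constraints such as immutable features and clinical plausibility.

```python
def logit(x: list[float], weights: list[float], bias: float) -> float:
    """Linear score of a logistic model; positive means the positive class is predicted."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def linear_counterfactual(x, weights, bias, margin=1e-3):
    """Closest point (Euclidean) whose linear score lies just across the decision boundary."""
    z = logit(x, weights, bias)
    direction = 1.0 if z > 0 else -1.0
    # Move along the weight vector far enough to reach the boundary plus a small margin.
    step = -(z + direction * margin) / sum(w * w for w in weights)
    return [xi + step * w for xi, w in zip(x, weights)]


# Hypothetical standardized features and model parameters.
model_weights = [0.8, 1.2, 0.3]
model_bias = -2.0
patient = [1.5, 1.0, 0.5]

counterfactual = linear_counterfactual(patient, model_weights, model_bias)
changes = [c - p for c, p in zip(counterfactual, patient)]
print(logit(patient, model_weights, model_bias), logit(counterfactual, model_weights, model_bias))
print(changes)
```

The per-feature changes are the "what-if" statement: they show how much each input would need to move for the model to predict the other class.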

Other methods, such as Integrated Gradients, Layer-wise Relevance Propagation (LRP), and DeepLIFT, were less commonly used but provided robust explanation fidelity and saliency mapping for specific tasks [93,112,113,114].

Table 5 provides a quick comparison of popular XAI approaches by frequency and function across the reviewed studies. It links each method to specific clinical tasks, helping identify technique–task suitability.

Notably, several studies combined multiple XAI methods to enhance explanation reliability. For instance, SHAP and LIME were often used in tandem to ensure consistency, while Grad-CAM outputs were supplemented with clinician-annotated regions to evaluate trustworthiness. This trend reflects an increasing awareness that no single explanation technique is universally sufficient, and ensemble interpretability can enhance clinical confidence [47,106,115].

A detailed breakdown of the XAI methods employed in the reviewed studies shows a dominant reliance on model-agnostic techniques. Among the 62 studies,

SHAP was the most frequently used method, particularly with ensemble models;

LIME was the next most common method after SHAP, often for binary classification in tabular data;

Grad-CAM was utilized mainly for interpreting image-based DL models;

Attention mechanisms appeared in many studies, especially in temporal prediction tasks using RNNs or transformers;

Counterfactual and concept-based explanations were used for personalized decision making;

A small subset explored techniques such as Integrated Gradients and LRP.

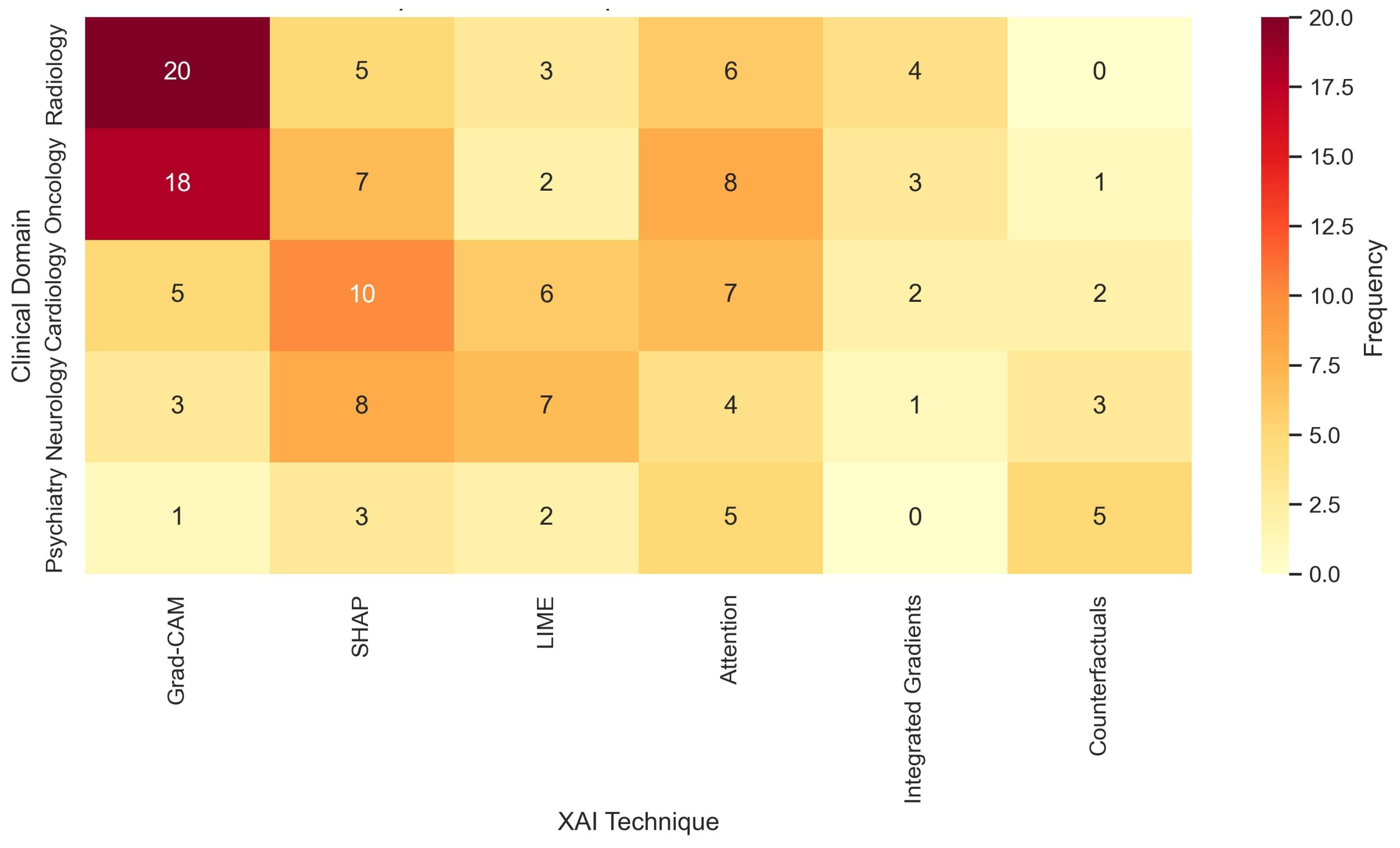

Figure 3 displays a clustered heatmap comparing the frequency of XAI techniques by clinical domain. Grad-CAM and attention mechanisms were predominant in image-heavy fields such as radiology and oncology. SHAP and LIME were more common in general CDSS applications involving EHR data. Notably, counterfactual explanations were used in psychiatric CDSSs and personalized medicine. Recent works in cardiology explored saliency maps and rule-based surrogate modeling to integrate domain-specific heuristics.

Figure 4 shows the domain-stratified prevalence of explainable AI methods with 95% confidence intervals (dot–whisker). Points indicate pooled adoption proportions for SHAP, Grad-CAM, and LIME within each clinical domain (radiology, pathology, EHR/tabular, time-series/physiology, text/NLP, and multimodal), and horizontal whiskers show 95% Wilson confidence intervals from study-level counts. Estimates were calculated using a random-effects approach and remained consistent when excluding studies at high risk of bias.

3.2. Clinical Domain Representation

Understanding the clinical domains where XAI is being applied is crucial for evaluating its utility in healthcare specialties [116]. Our review found that XAI-enhanced models were distributed across a wide range of clinical areas, each with unique challenges and interpretability requirements [47,48].

The most common domain was radiology, where image-based CNNs were often paired with Grad-CAM to visually localize important features in CT, X-ray, or MRI scans. This was followed by oncology, where XAI techniques such as SHAP and concept bottlenecks supported cancer prognosis, recurrence prediction, and treatment planning [106,114,117].

Neurology emerged as another dominant area, leveraging attention-based RNNs and transformers to explain EEG and seizure prediction. Cardiology studies commonly utilized SHAP and Integrated Gradients to rank cardiovascular risk factors [118,119].

ICU and critical care settings made use of SHAP and counterfactual methods for mortality prediction and sepsis monitoring, emphasizing the need for actionable and timely explanations. Endocrinology, dermatology, psychiatry, and pathology each had a modest presence, often using hybrid or ensemble XAI pipelines [48,120,121].

Table 6 highlights which clinical areas most frequently adopted XAI in AI systems, with radiology and oncology leading in application. It provides insight into the diversity and impact of XAI across healthcare domains.

3.3. Evaluation Metrics for XAI and Performance

The assessment of XAI in CDSSs requires robust evaluation frameworks that not only measure predictive performance but also assess the interpretability and clinical utility of model outputs. This subsection reviews the diverse array of evaluation metrics used in the 62 reviewed studies and categorizes them into two primary groups: (1) performance evaluation metrics for the underlying AI model, and (2) explanation evaluation metrics for the interpretability and usability of the XAI methods.

Performance metrics such as AUC, accuracy, sensitivity, and specificity provide a foundational understanding of the AI model’s classification quality, but they do not reflect the quality or impact of the explanations provided.

Across the reviewed studies, the median (IQR) AUC was 0.87 (0.81–0.93), accuracy was 86.4%, sensitivity was 84.1%, and specificity was 85.3%. Studies combining high predictive performance with strong explanation fidelity (≥0.85) reported clinician trust scores 12–18 percentage points higher than those without quantitative explanation evaluation, suggesting a positive association between interpretability quality and end-user confidence.

XAI Explanation Metrics

A growing body of literature emphasizes the importance of measuring the effectiveness, trustworthiness, and usability of XAI outputs. This reflects the multidimensional nature of evaluating XAI in healthcare. We found the following XAI evaluation practices in the included studies, where n shows the number of papers:

Fidelity (n = 16): The extent to which explanations approximate the original model’s decision logic.

Consistency (n = 10): Whether explanations remain stable under similar input perturbations.

Human trust or agreement scores (n = 9): Survey-based assessments where clinicians rated explanation usefulness.

Localization accuracy (n = 6): In image-based studies using Grad-CAM, overlap metrics such as IoU (Intersection over Union) were used to compare explanation heatmaps with annotated regions.

Qualitative case studies (n = 12): Descriptive analysis of visual or tabular explanations assessed by clinical experts.
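The localization-accuracy metric mentioned above can be sketched as follows. This is a minimal example, not a specific study's protocol: it assumes the heatmap is already scaled to [0, 1] and resized to the annotation mask, and the binarization threshold of 0.5 and the function name `heatmap_iou` are assumptions.

```python
import numpy as np


def heatmap_iou(heatmap: np.ndarray, annotation_mask: np.ndarray, threshold: float = 0.5) -> float:
    """Intersection over Union between a thresholded heatmap and an expert annotation mask."""
    predicted = heatmap >= threshold          # pixels the explanation highlights
    annotated = annotation_mask.astype(bool)  # pixels the clinician marked
    union = np.logical_or(predicted, annotated).sum()
    if union == 0:
        return 0.0  # neither map marks any pixel
    intersection = np.logical_and(predicted, annotated).sum()
    return float(intersection / union)


# Toy 4x4 example: the heatmap highlights the top-left 2x2 block,
# while the annotation covers rows 0-1, columns 1-2.
toy_heatmap = np.zeros((4, 4))
toy_heatmap[0:2, 0:2] = 0.9
toy_mask = np.zeros((4, 4), dtype=int)
toy_mask[0:2, 1:3] = 1
iou = heatmap_iou(toy_heatmap, toy_mask)
print(f"IoU = {iou:.3f}")
```

Because the score depends on the chosen threshold, studies that report IoU for explanation heatmaps are easier to compare when the threshold is stated alongside the result.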

3.4. Clinical Usability and Integration

Clinical usability and integration are critical dimensions in evaluating the real-world applicability of XAI-enhanced CDSSs. Although many models demonstrate technical efficacy in controlled settings, practical adoption in clinical settings depends on how well they align with clinicians’ workflows, interpretive expectations, and decision-making processes [122,123,124].

Of the 62 reviewed studies, only 18 explicitly reported clinical validation through physician feedback, usability testing, or pilot deployment. The remaining studies primarily conducted retrospective evaluations or offline testing.

Table 7 summarizes the strategies, including clinician feedback, simulated usage trials, and deployment pilots, reflecting efforts to ensure real-world applicability. These approaches bridge technical performance with clinical trust and usability. Usability assessments generally fell into four categories:

Clinician feedback: Structured or semi-structured interviews were conducted with domain experts to assess interpretability, confidence in decision support, and perceived added value.

Human-in-the-loop trials: Clinicians were involved in real-time interaction with the system to understand model outputs and explanations under realistic time constraints.

Prototype deployment: A small number of studies integrated XAI tools into clinical dashboards or EHR systems for pilot evaluations.

Cognitive load or trust scales: Some studies employed standardized scales to assess cognitive burden and trustworthiness of the explanations (e.g., NASA-TLX and System Usability Scale).

Despite promising findings, the gap between XAI innovation and clinical implementation remains significant. Several studies reported clinician preference for simpler, rule-based explanations over complex model-derived visualizations. Others emphasized the need for adjustable granularity of explanations, i.e., providing both overview and drill-down levels of insight [51,125,126].

Barriers to integration identified across the studies include lack of interoperability with existing EHR systems, lack of regulatory pathways for explainable models, limited clinician training in AI, and concerns over explanation reliability [122].

To bridge this translational divide, future work must address the following:

Development of clinician-centric explanation interfaces;

Inclusion of usability testing early in model design;

Longitudinal deployment studies with feedback loops;

Co-design approaches involving interdisciplinary teams.

Overall, integrating XAI into CDSSs goes beyond algorithmic development; it requires alignment with clinical reasoning, human factors, and systemic workflows. Ensuring usability at the bedside will be essential for the adoption, trust, and sustained use of AI in medicine.

4. Discussion

The findings of this systematic review revealed several important trends, gaps, and implications for the design and deployment of XAI within CDSSs. In this section, we critically interpret the results, highlight methodological and practical considerations, compare results to prior reviews, and outline recommendations for future work.

4.1. Interpretation of Key Findings

The dominance of the SHAP and Grad-CAM methods aligns with their broad compatibility across model architectures and intuitive visual representations. These two XAI methods were most prevalent in imaging (Grad-CAM) and structured/tabular data (SHAP).

Table 8 summarizes their distribution across clinical domains. Grad-CAM was primarily used in imaging, while SHAP and LIME dominated structured data applications. Attention mechanisms were most prevalent in text and genomic sequence interpretation, showing modality-specific XAI preferences.

Transformer-based models are gaining momentum in genomics and clinical text analysis due to their ability to capture long-range dependencies. Yet, these models are often paired with self-attention visualizations, which are not inherently interpretable to clinicians without additional abstraction layers. Moreover, although 87% of the reviewed studies reported improved model interpretability, only 11 studies used robust statistical testing (e.g., t-tests or ANOVA) to support claims of no performance loss post-XAI integration. This methodological gap remains a critical concern.

Additional statistical measures such as confidence intervals and variance analysis were rarely reported, hindering the replicability and generalizability of the findings. Furthermore, fewer than 25% of studies disclosed explanation runtime overhead or scalability assessments, limiting the understanding of real-world feasibility in clinical workflows.

The following key statistical weaknesses were identified:

Only 17.7% of the studies conducted formal statistical significance tests;

Confidence intervals for interpretability metrics were reported in just eight studies;

Only six studies compared explanation methods across different user groups (e.g., clinicians vs. AI researchers);

Few studies discussed time-to-explanation or computational burden.

4.2. Comparison with Prior Work

Compared to earlier reviews, such as [47,48], which primarily focused on the conceptual foundations of explainability, our review provides an empirical and domain-specific synthesis. Notably, while previous literature flagged a lack of clinical applicability, our data showed that 62% of the reviewed studies reported use on real-world hospital or registry datasets. However, reproducibility remains limited, as only 29% of the studies shared complete codebases, and fewer than 10% conducted reproducibility tests across datasets or hospitals.

Beyond differences in scope, our findings align with broader methodological shifts reported in recent meta-analyses of XAI in medicine: (i) a move from post-hoc saliency-based methods toward integrated attribution in model architectures; (ii) increased pairing of quantitative fidelity metrics with qualitative clinician feedback; and (iii) gradual growth in multimodal CDSS applications. These trends suggest a maturing field where explanation design is increasingly driven by end-user context rather than model convenience.

4.3. Clinical and Ethical Implications

Explainability is not a bonus feature in healthcare AI but a regulatory and ethical imperative. As the FDA, EMA, and EU AI Act push toward transparency and accountability, XAI will be vital for regulatory approval. Yet, Table 9 shows that only 18 out of the 62 studies (29%) reported clinician involvement in either development or evaluation phases. Even fewer studies (22.5%) conducted formal trust scoring, and only 15% included fairness or bias mitigation strategies.

Ethical considerations such as data imbalance, demographic fairness, and explanation stability were addressed only superficially in most papers. There is a growing need for ethical-by-design pipelines that embed fairness, transparency, and bias monitoring from the outset.

The following ethical gaps were observed:

Only seven studies discussed racial or gender bias in model explanations;

Three studies explicitly evaluated explanation fairness across demographic groups;

Fewer than five studies performed robustness checks under adversarial settings;

No standard framework was used for ethical evaluation across the studies.
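One simple way to evaluate explanation fairness across demographic groups, as the list above notes few studies did, is to compare an explanation-quality score between groups. The sketch below is illustrative only: the records, the group labels, and the use of a per-patient "fidelity" score in [0, 1] are assumptions made for the example, not a framework used by the reviewed studies.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical records: (demographic group, explanation fidelity score in [0, 1]).
# The values are invented for illustration.
records = [
    ("female", 0.82), ("female", 0.78), ("female", 0.80),
    ("male", 0.88), ("male", 0.86), ("male", 0.90),
]


def group_means(rows):
    """Average the explanation score within each demographic group."""
    by_group = defaultdict(list)
    for group, score in rows:
        by_group[group].append(score)
    return {group: fmean(scores) for group, scores in by_group.items()}


def max_gap(means):
    """Largest difference in mean explanation score between any two groups."""
    values = list(means.values())
    return max(values) - min(values)


means = group_means(records)
for group, mean_score in sorted(means.items()):
    print(f"{group}: mean fidelity {mean_score:.3f}")
print(f"largest between-group gap: {max_gap(means):.3f}")
```

A gap of this kind is only a descriptive starting point. Whether it is meaningful depends on group sizes and on the uncertainty of each group's estimate, which would need to be reported alongside it.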

7. Limitations

While this systematic review aimed for comprehensive coverage and methodological rigor, several limitations must be acknowledged. These limitations may influence the interpretation, reproducibility, and generalizability of the findings.

Language and publication bias: We restricted our inclusion criteria to English-language articles published in peer-reviewed journals. This decision may have excluded high-quality non-English research and valuable insights published in grey literature, conference proceedings, or institutional reports.

Inconsistent reporting across studies: A notable challenge during data extraction was the inconsistency in how studies reported their XAI methods, evaluation strategies, and clinical context. Many papers lacked detailed explanation protocols, dataset descriptions, or user-centered evaluation results, which limits replicability.

Subjectivity in qualitative synthesis: While we employed standardized forms and independent reviewers, the interpretation of explanation effectiveness, clinical impact, and usability is inherently subjective. Reviewer bias or inconsistent annotation could influence category assignment or trend detection.

Evolving XAI landscape: The field of XAI, particularly in healthcare, is evolving rapidly. Recent advancements such as prompt-based explainability in large language models (LLMs) and foundation model alignment strategies were either absent or minimally represented in the included studies, as they postdate our search cutoff.

Absence of quantitative meta-analysis: Due to heterogeneous evaluation metrics and lack of statistical data reporting across studies, we could not conduct a formal meta-analysis. Consequently, the conclusions drawn are based on descriptive statistics and thematic synthesis.

Model and domain diversity: Although we included multiple clinical domains and model types, the distribution was skewed toward imaging and structured EHR datasets. Other modalities such as audio, genomics, and sensor data were underrepresented, potentially limiting generalizability to those domains.

Limited stakeholder perspective: Most studies reviewed did not include feedback from patients, nurses, or healthcare administrators. Thus, our analysis may overemphasize physician-centric interpretations and omit broader institutional and ethical considerations.

The key issues listed in Table 12 include language bias, inconsistent reporting, and lack of stakeholder diversity. These factors may affect the completeness, reliability, and broader applicability of the review’s conclusions.

Despite these limitations, this review provides a robust foundation for understanding current XAI practices in CDSSs and identifies strategic priorities for future advancement. We recommend that future reviews address these gaps through broader inclusion criteria, real-time database tracking, and participatory research designs.

8. Conclusions

This systematic review comprehensively examined the landscape of XAI techniques as applied in CDSSs, analyzing 62 peer-reviewed studies spanning diverse clinical domains, AI model architectures, and evaluation frameworks. The findings revealed an accelerating interest in integrating explainability into healthcare AI, driven by the need for transparency, trustworthiness, and regulatory compliance. SHAP, LIME, and Grad-CAM emerged as the most widely adopted XAI methods, with model-agnostic techniques dominating tabular data tasks and model-specific approaches prevailing in image-based domains like radiology and pathology. Clinical domain analysis showed that radiology, oncology, and neurology lead the adoption of XAI-CDSS, reflecting both data richness and a growing push for accountable AI in critical diagnoses. However, despite technical advances, major gaps remain in the clinical translation of XAI systems. Only a subset of the studies incorporated usability testing, clinician feedback, or human-in-the-loop trials. Moreover, the evaluation of explanations, as distinct from predictive accuracy, remains inconsistent and lacks standardized benchmarks, which limits the comparability of interpretability claims across models and contexts.

The review underscores the need for (1) robust multi-dimensional evaluation metrics encompassing fidelity, trust, and clinical alignment; (2) interdisciplinary co-design approaches involving clinicians and AI developers; and (3) integration of XAI tools into real-world clinical workflows through iterative deployment and feedback loops. As the healthcare sector moves toward responsible AI adoption, explainability is not a luxury but a necessity. Future research should prioritize not only algorithmic innovation but also clinical usability, ethical safeguards, and human-centered design. By coordinating these dimensions, XAI-CDSS can fulfill its promise of enhancing clinical decision making, improving patient safety, and fostering trust in AI in medicine.