GMM Estimation of a Partially Linear Additive Spatial Error Model

Abstract

1. Introduction

2. Model and Estimation

2.1. Model Specification

2.2. Estimation Procedures

- Step 1. Initial estimation of the unknown function . Following the “working independence” idea of Lin and Carroll [21], the correlation structure of can be ignored when we estimate . Next, a local linear estimation method is used to fit the unknown function . Let represent the equivalent kernel for the local linear regression at z; it can be written as , where , , with for kernel function and bandwidth h. Thus, we obtain the initial estimator of : Let be the smoother matrix whose rows are the equivalent kernels; we have

- Step 2. Initial GMM estimation of . Let be an matrix of instrumental variables, where , so that we have the moment conditions E. We now replace in Model (3) by and obtain the corresponding moment functions: where , . Let be a positive definite constant matrix; the initial estimator of is chosen by minimizing the objective function It is easy to see that

- Step 3. Estimation of the parameters and . For notational convenience, let , , ; similarly, we have and , where , , are the ith elements of , , , respectively. Therefore, we get the following equations By squaring (5) and summing, squaring (6) and summing, and multiplying (5) by (6) and summing, we obtain the following equations: Assumption 2 implies . Noting that and , where denotes the trace operator, letting , and taking expectations of Equation (7), we have where , . In general, and are unknown, so we estimate by the two-stage procedure of Kelejian and Prucha [12]. Let and , where is obtained from in the second step, and denote by , , the ith elements of , , , respectively. Thus, the estimators of and can be obtained, respectively, as follows: , . Then, the empirical form of Formula (8) is where can be viewed as a vector of regression residuals. It follows from Formula (9) that the empirical estimator of is given by Based on Formula (10), the nonlinear least squares estimator can also be defined, following Kelejian and Prucha [12]: Kelejian and Prucha [12] proved that both and are consistent estimators of . Hence, it does not matter which one is chosen as the estimator of . In what follows, we consider only as the estimator of .

- Step 4. Final estimation of and . Applying a Cochrane–Orcutt type transformation to Model (3) yields where and . Therefore, we get the final estimator of as follows: where and . Finally, we replace in Formula (4) by and obtain the final estimator of as
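
The four steps above can be sketched in code. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes an Epanechnikov kernel, a 2SLS-type default weight matrix, untransformed instruments in the final step, and a grid search in place of a numerical optimizer for the nonlinear least squares problem. The moment system is written in the standard three-equation form of the Kelejian and Prucha generalized moments estimator with unknowns (lam, lam**2, sig2). The symbols `lam` and `sig2` and all function names (`local_linear_smoother`, `linear_gmm`, `kp_moments`, `kp_nls`, `estimate`) are hypothetical.

```python
import numpy as np

def local_linear_smoother(z, h):
    """Step 1: n x n smoother matrix S; row i is the equivalent kernel at z[i]."""
    z = np.asarray(z, dtype=float)
    n = z.size
    S = np.empty((n, n))
    for i in range(n):
        d = z - z[i]
        # Epanechnikov weights K_h(z_j - z_i); the kernel choice is an assumption
        w = 0.75 * np.clip(1.0 - (d / h) ** 2, 0.0, None) / h
        D = np.column_stack([np.ones(n), d])
        DtW = D.T * w                             # D' diag(w) without forming diag(w)
        S[i] = np.linalg.solve(DtW @ D, DtW)[0]   # intercept row of (D'WD)^{-1} D'W
    return S

def linear_gmm(y, X, H, A=None):
    """Steps 2 and 4: minimise g(b)' A g(b) with g(b) = H'(y - X b)."""
    if A is None:
        A = np.linalg.inv(H.T @ H)                # 2SLS-type weight (assumed default)
    XH = X.T @ H
    return np.linalg.solve(XH @ A @ XH.T, XH @ A @ (H.T @ y))

def kp_moments(u, W):
    """Step 3: sample system G @ [lam, lam**2, sig2] = g built from residuals u."""
    n = u.size
    u1 = W @ u                                    # first spatial lag
    u2 = W @ u1                                   # second spatial lag
    G = np.array([
        [2 * (u @ u1), -(u1 @ u1), n],
        [2 * (u2 @ u1), -(u2 @ u2), np.sum(W * W)],   # np.sum(W * W) equals tr(W'W)
        [u @ u2 + u1 @ u1, -(u1 @ u2), 0.0],
    ]) / n
    g = np.array([u @ u, u1 @ u1, u @ u1]) / n
    return G, g

def kp_nls(G, g, grid=np.linspace(-0.99, 0.99, 1981)):
    """Step 3: nonlinear least squares by grid search over lam, profiling out sig2."""
    best = (np.inf, 0.0, 0.0)
    for lam in grid:
        r = g - G[:, 0] * lam - G[:, 1] * lam ** 2
        sig2 = (G[:, 2] @ r) / (G[:, 2] @ G[:, 2])
        loss = np.sum((r - G[:, 2] * sig2) ** 2)
        if loss < best[0]:
            best = (loss, lam, sig2)
    return best[1], best[2]

def estimate(y, X, z, W, H, h):
    """Chain Steps 1-4 for a single nonparametric component."""
    S = local_linear_smoother(z, h)
    y_t, X_t = y - S @ y, X - S @ X               # partial out the smooth part
    beta0 = linear_gmm(y_t, X_t, H)               # Step 2: initial GMM
    lam, sig2 = kp_nls(*kp_moments(y_t - X_t @ beta0, W))   # Step 3
    y_s = y_t - lam * (W @ y_t)                   # Cochrane-Orcutt type filter
    X_s = X_t - lam * (W @ X_t)
    beta = linear_gmm(y_s, X_s, H)                # Step 4: final GMM
    m_hat = S @ (y - X @ beta)                    # final fit of the smooth part
    return beta, lam, sig2, m_hat
```

Calling `estimate(y, X, z, W, H, h)` with, for example, `H = np.column_stack([X, W @ X])` returns the final coefficient vector, the spatial parameter, the innovation variance, and the fitted nonparametric component. The grid search keeps the sketch dependency-free; a gradient-based optimizer would serve the same purpose.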

- Step 1. The initial estimation of is fixed. Suppose that and are given. For any given , we can fit the unknown function and its derivative by the local linear estimation method: where and . Let ; then represents the smoother matrix for the local linear regression at observation . As in Opsomer and Ruppert [2], the smoother matrix is replaced by , where is an identity matrix and . Therefore, we get

- Step 2. Initial estimation of (). Within the backfitting algorithm, we use the common Gauss–Seidel iteration, which updates one component at a time using the most recent components available (Buja et al. [22]). Let be the lth update of the estimate of . Therefore, we have Iterate the above equation until convergence. We then obtain Hastie and Tibshirani [24] and Opsomer and Ruppert [25] proposed the backfitting estimator of in the bivariate additive model . Following Opsomer and Ruppert [2] and Opsomer [26], we can get the estimators of the additive component functions by solving the following normal equations: Generally, if the matrix is invertible, we may write the above estimators directly as where . Let be a partitioned matrix with an identity matrix as the jth “block” and zeros elsewhere. Then, we have where and . Next, we need the dimensional smoother matrix , which can be derived from the data generated by the model Based on Lemma 2.1 in Opsomer [26], the backfitting estimators converge to a unique solution if for some . In this case, can be rewritten as

- Step 3. Initial GMM estimation of . Let be an matrix of instrumental variables (IV), and ; we have the corresponding moment functions Let be a positive definite constant matrix; we can obtain the initial estimator of by minimizing It is easy to see that

- Step 4. Estimation of the parameter . As in Step 3 for the case , we obtain the estimator of by the two-stage estimation of Kelejian and Prucha [12]. Denoting by the estimator of , we have

- Step 5. Final estimators of and . We apply a Cochrane–Orcutt type transformation to Model (2). Let and . We then obtain the final estimator of where and . Substituting into expression (16), we obtain the final estimators of and , respectively, as
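
The centred smoothers of Step 1 and the Gauss–Seidel backfitting of Step 2 can be sketched as follows. This is an illustration under assumptions, not the authors' code: `centre` implements the replacement of a smoother matrix S by (I - 11'/n)S in the style of Opsomer and Ruppert, and `backfit` cycles through the components using the most recent fits. The names `centre`, `backfit`, `smoothers`, `tol`, and `max_iter` are hypothetical, and the input `r` stands for the response after the linear part has been removed.

```python
import numpy as np

def centre(S):
    """Centred smoother (I - 11'/n) S: subtract the column means of S from every row."""
    return S - S.mean(axis=0, keepdims=True)

def backfit(r, smoothers, tol=1e-10, max_iter=500):
    """Gauss-Seidel backfitting of additive components for the response r.

    smoothers is a list of q centred n x n smoother matrices, one per covariate.
    Returns a (q, n) array whose j-th row is the fitted j-th component.
    """
    n, q = r.size, len(smoothers)
    m = np.zeros((q, n))
    for _ in range(max_iter):
        m_old = m.copy()
        for j in range(q):
            # partial residual: remove every component except the j-th,
            # using the most recent updates already stored in m
            partial = r - m.sum(axis=0) + m[j]
            m[j] = smoothers[j] @ partial
        if np.max(np.abs(m - m_old)) < tol:
            break
    return m
```

At convergence each row satisfies the normal equations, that is, component j equals its smoother applied to the response minus all other components. The loop is only expected to converge when the smoothers meet a contraction condition of the kind cited from Opsomer [26].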

3. Asymptotic Properties

3.1. Assumptions

- (1) The elements of the spatial weight matrix are non-random, and all elements on the primary diagonal satisfy .

- (2) The matrix is nonsingular for all .

- (3) The matrices and are uniformly bounded in both row and column sums in absolute value for all .
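
The conditions on the spatial weight matrix listed above can be checked numerically for a given matrix. The sketch below is illustrative only and rests on assumptions: it takes the matrix in condition (2) to be I - lam * W, as is standard for spatial error models, and it uses a row-normalised contiguity matrix. The names `row_normalise`, `weight_matrix_report`, `C`, and `lam` are hypothetical.

```python
import numpy as np

def row_normalise(C):
    """Zero the diagonal of a nonnegative contiguity matrix and scale rows to sum to one."""
    C = np.array(C, dtype=float)
    np.fill_diagonal(C, 0.0)
    sums = C.sum(axis=1, keepdims=True)
    # rows without neighbours stay all-zero instead of producing a division by zero
    return np.divide(C, sums, out=np.zeros_like(C), where=sums > 0)

def weight_matrix_report(W, lam):
    """Summarise the diagonal, invertibility, and row/column sum norms for one lam."""
    n = W.shape[0]
    B = np.eye(n) - lam * W            # assumed form of the matrix in condition (2)
    B_inv = np.linalg.inv(B)           # raises LinAlgError if B is exactly singular
    return {
        "zero_diagonal": bool(np.all(np.diag(W) == 0.0)),
        "max_row_sum_W": np.abs(W).sum(axis=1).max(),
        "max_col_sum_W": np.abs(W).sum(axis=0).max(),
        "max_row_sum_inv": np.abs(B_inv).sum(axis=1).max(),
        "max_col_sum_inv": np.abs(B_inv).sum(axis=0).max(),
        "condition_number": np.linalg.cond(B),
    }
```

Uniform boundedness is a statement about a sequence of matrices as the sample size grows, so a check at one sample size is only a sanity test. Repeating it over increasing n and several values of `lam` gives a rough picture of whether the sums stay bounded.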

- (1) The covariate is a non-stochastic matrix and has full row rank, and the elements of are uniformly bounded in absolute value.

- (2) The column vectors of covariate are i.i.d. random variables.

- (3) The instrumental variables matrix is uniformly bounded in both row and column sums in absolute value.

- (4) The innovation sequence satisfies for some small δ, where is a positive constant.

- (5) The density of random variable z is positive and uniformly bounded away from zero on its compact support set. Furthermore, both and are bounded on their support sets.

- (1) The kernel function is a bounded and continuous nonnegative symmetric function on its closed support set.

- (2) Let and , where l is a nonnegative integer.

- (3) The second derivative of exists and is bounded and continuous.

- (1) , where is a positive semidefinite matrix.

- (2) .

- (3) .

- (4) .

- (5) .

- (1) The kernel function is a bounded and continuous nonnegative symmetric function on its closed support set.

- (2) Let and , where l is a nonnegative integer.

- (3) The second derivative of exists and is bounded and continuous.

- (1) .

- (2) .

- (3) .

- (4) .

3.2. Asymptotic Properties

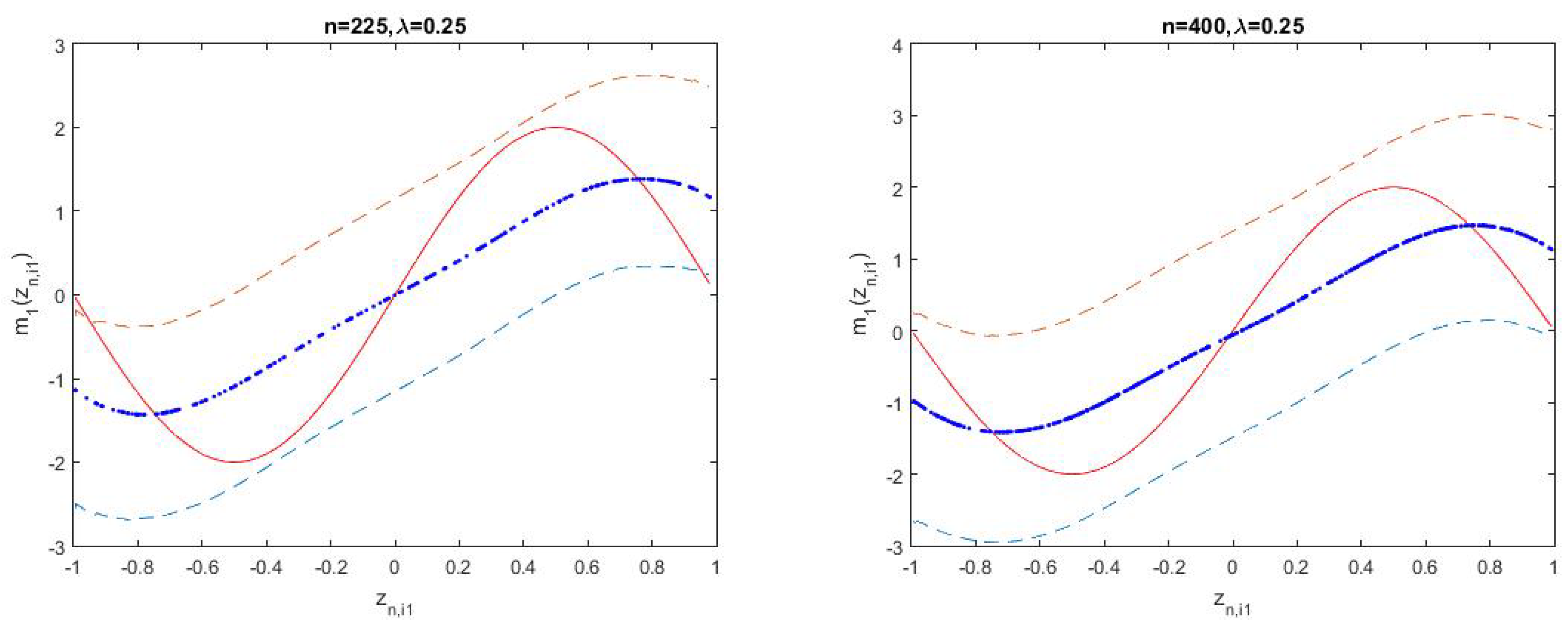

4. Simulation Studies

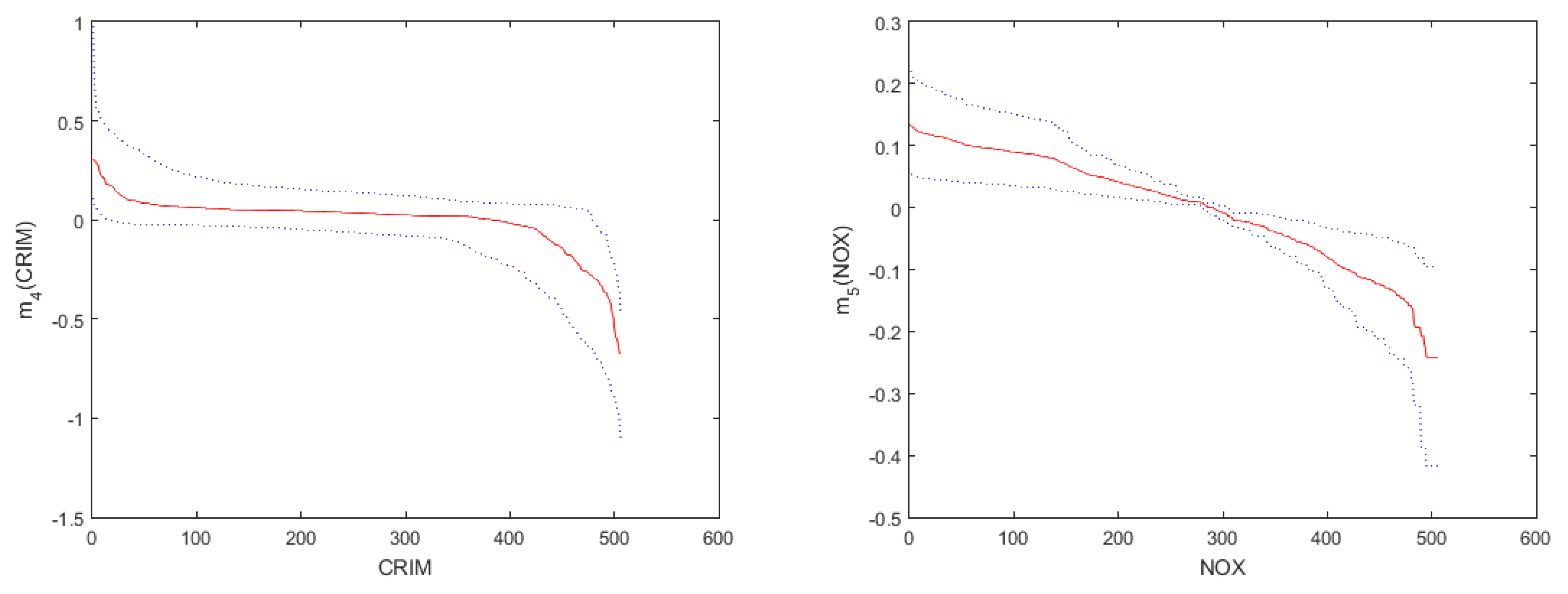

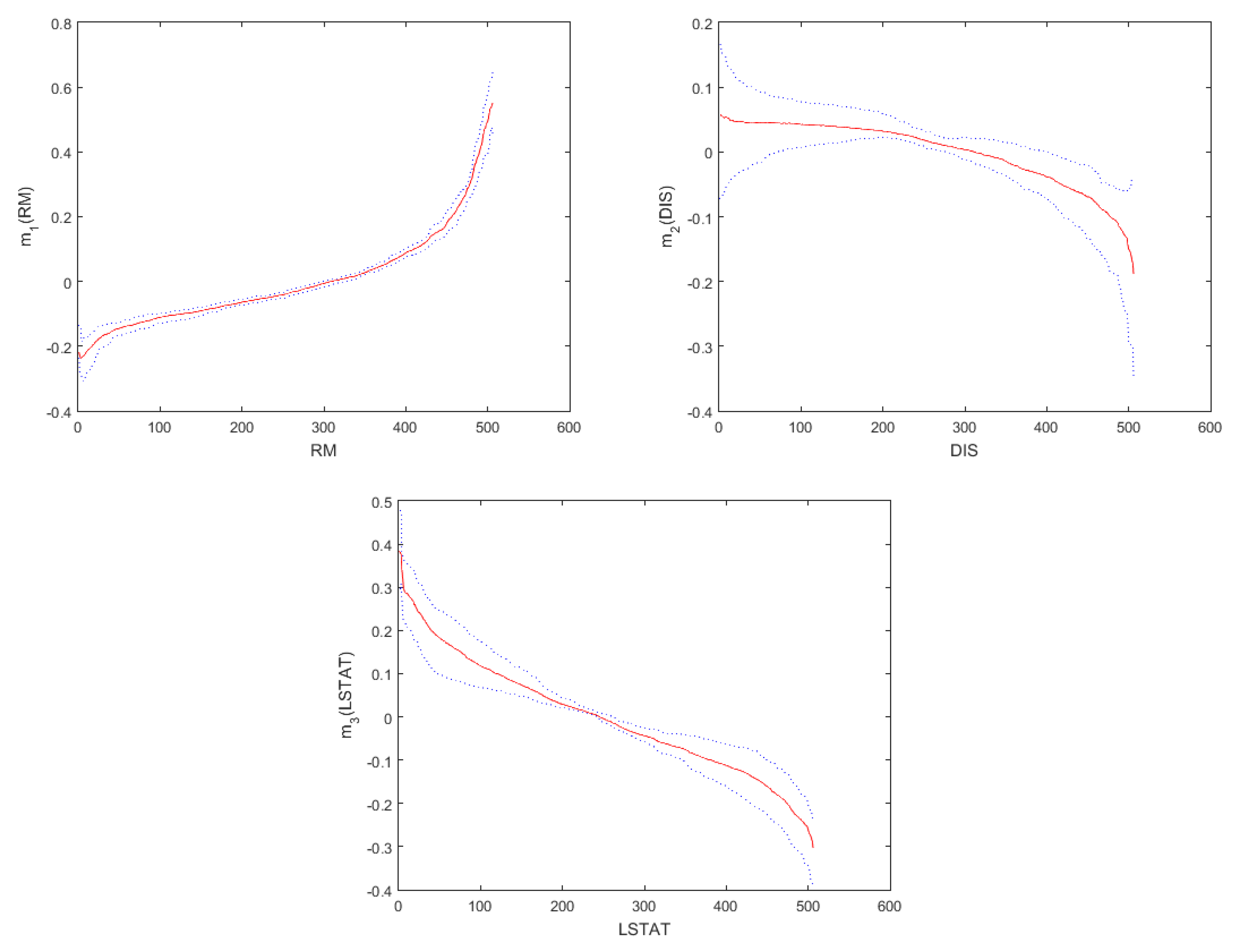

5. A Real Data Example

- MEDV: The median value of owner-occupied homes in USD 1000s.

- LON: Longitude coordinates.

- LAT: Latitude coordinates.

- CRIM: Per capita crime rate by town.

- ZN: Proportion of residential land zoned for lots over 25,000 sq. ft.

- INDUS: Proportion of non-retail business acres per town.

- CHAS: Charles River dummy variable.

- NOX: Nitric oxides concentration.

- RM: Average number of rooms per dwelling.

- AGE: Proportion of owner-occupied units built prior to 1940.

- DIS: Weighted distances to five Boston employment centers.

- RAD: Index of accessibility to radial highways.

- TAX: Full-value property tax per USD 10,000.

- PTRATIO: Pupil–teacher ratio by town.

- B: 1000(Bk − 0.63)^2, where Bk is the proportion of blacks by town.

- LSTAT: Percentage of lower status of the population.

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

References

1. Fan, J.; Gijbels, I. Local Polynomial Modelling and Its Applications; Chapman & Hall: London, UK, 1996; pp. 19–56.

2. Opsomer, J.D.; Ruppert, D. A root-n consistent backfitting estimator for semiparametric additive modeling. J. Comput. Graph. Stat. 1999, 8, 715–732.

3. Manzan, S.; Zerom, D. Kernel estimation of a partially linear additive model. Stat. Probabil. Lett. 2005, 72, 313–332.

4. Zhou, Z.; Jiang, R.; Qiao, W. Variable selection for additive partially linear models with measurement error. Metrika 2011, 74, 185–202.

5. Wei, C.; Luo, Y.; Wu, X. Empirical likelihood for partially linear additive errors-in-variables models. Stat. Pap. 2012, 53, 485–496.

6. Hoshino, T. Quantile regression estimation of partially linear additive models. J. Nonparametr. Stat. 2014, 26, 509–536.

7. Lou, Y.; Bien, J.; Caruana, R.; Gehrke, J. Sparse partially linear additive models. J. Comput. Graph. Stat. 2016, 25, 1126–1140.

8. Liu, R.; Härdle, W.K.; Zhang, G. Statistical inference for generalized additive partially linear models. J. Multivar. Anal. 2017, 162, 1–15.

9. Manghi, R.F.; Cysneiros, F.J.A.; Paula, G.A. Generalized additive partial linear models for analyzing correlated data. Comput. Stat. Data Anal. 2019, 129, 47–60.

10. Li, T.; Mei, C. Statistical inference on the parametric component in partially linear spatial autoregressive models. Commun. Stat. Simul. Comput. 2016, 45, 1991–2006.

11. Anselin, L. Spatial Econometrics: Methods and Models; Kluwer Academic Publisher: Dordrecht, The Netherlands, 1988; pp. 16–28.

12. Kelejian, H.H.; Prucha, I.R. A generalized spatial two-stage least squares procedure for estimating a spatial autoregressive model with autoregressive disturbances. J. Real Estate Financ. Econ. 1998, 17, 99–121.

13. Elhorst, J.P. Spatial Econometrics from Cross-Sectional Data to Spatial Panels; Springer: New York, NY, USA, 2014; pp. 5–23.

14. Su, L.; Jin, S. Profile quasi-maximum likelihood estimation of partially linear spatial autoregressive models. J. Econom. 2010, 157, 18–33.

15. Su, L. Semiparametric GMM estimation of spatial autoregressive models. J. Econom. 2012, 167, 543–560.

16. Sun, Y. Estimation of single-index model with spatial interaction. Reg. Sci. Urban Econ. 2017, 62, 36–45.

17. Cheng, S.; Chen, J.; Liu, X. GMM estimation of partially linear single-index spatial autoregressive model. Spat. Stat. 2019, 31, 100354.

18. Wei, H.; Sun, Y. Heteroskedasticity-robust semi-parametric GMM estimation of a spatial model with space-varying coefficients. Spat. Econ. Anal. 2017, 12, 113–128.

19. Dai, X.; Li, S.; Tian, M. Quantile Regression for Partially Linear Varying Coefficient Spatial Autoregressive Models. Available online: https://arxiv.org/pdf/1608.01739.pdf (accessed on 5 August 2016).

20. Du, J.; Sun, X.; Cao, R.; Zhang, Z. Statistical inference for partially linear additive spatial autoregressive models. Spat. Stat. 2018, 25, 52–67.

21. Lin, X.; Carroll, R.J. Nonparametric function estimation for clustered data when the predictor is measured without/with error. J. Am. Stat. Assoc. 2000, 95, 520–534.

22. Buja, A.; Hastie, T.; Tibshirani, R. Linear smoothers and additive models. Ann. Stat. 1989, 17, 453–510.

23. Härdle, W.; Hall, P. On the backfitting algorithm for additive regression models. Stat. Neerl. 1993, 47, 43–57.

24. Hastie, T.J.; Tibshirani, R.J. Generalized Additive Models; Chapman & Hall: London, UK, 1990; pp. 136–167.

25. Opsomer, J.D.; Ruppert, D. Fitting a bivariate additive model by local polynomial regression. Ann. Stat. 1997, 25, 186–211.

26. Opsomer, J.D. Asymptotic properties of backfitting estimators. J. Multivar. Anal. 2000, 73, 166–179.

27. Fan, J.; Wu, Y. Semiparametric estimation of covariance matrices for longitudinal data. J. Am. Stat. Assoc. 2008, 103, 1520–1533.

28. Zhang, H.H.; Cheng, G.; Liu, Y. Linear or nonlinear? Automatic structure discovery for partially linear models. J. Am. Stat. Assoc. 2011, 106, 1099–1112.

29. Davidson, J. Stochastic Limit Theory: An Introduction for Econometricians; Oxford University Press: Oxford, UK, 1994; pp. 369–373.

30. Fan, J.; Jiang, J. Nonparametric inferences for additive models. J. Am. Stat. Assoc. 2005, 100, 890–907.

| Parameter | True Value | MEAN | SD | MSE | MEAN | SD | MSE |
|---|---|---|---|---|---|---|---|
|  | 0.2500 | 0.2838 | 0.0560 | 0.0043 | 0.2837 | 0.0201 | 0.0015 |
|  | 1.0000 | 1.0067 | 0.1318 | 0.0174 | 1.0020 | 0.1057 | 0.0112 |
|  | 1.5000 | 1.4828 | 0.1256 | 0.0161 | 1.4996 | 0.1071 | 0.0115 |
|  | 0.0100 | 0.0535 | 0.0678 | 0.0065 | 0.0335 | 0.0469 | 0.0028 |
|  | - | 1.1696 | 0.7665 | - | 0.5390 | 0.4070 | - |
|  | - | 3.4316 | 0.4778 | - | 2.9212 | 0.3052 | - |
|  | 0.2500 | 0.2733 | 0.0172 | 0.0008 | 0.2730 | 0.0133 | 0.0007 |
|  | 1.0000 | 0.9974 | 0.0923 | 0.0085 | 0.9934 | 0.0824 | 0.0068 |
|  | 1.5000 | 1.4974 | 0.0905 | 0.0082 | 1.5025 | 0.0823 | 0.0068 |
|  | 0.0100 | 0.0181 | 0.0276 | 0.0008 | 0.0132 | 0.0204 | 0.0004 |
|  | - | 0.5216 | 0.4050 | - | 0.3775 | 0.2905 | - |
|  | - | 2.7251 | 0.2396 | - | 2.3652 | 0.1914 | - |
|  | 0.2500 | 0.2618 | 0.0053 | 0.0002 | 0.2614 | 0.0033 | 0.0001 |
|  | 1.0000 | 1.0013 | 0.0573 | 0.0033 | 0.9979 | 0.0373 | 0.0014 |
|  | 1.5000 | 1.5009 | 0.0546 | 0.0030 | 1.5026 | 0.0409 | 0.0017 |
|  | 0.0100 | 0.0116 | 0.0049 | 2.66E-5 | 0.0113 | 0.0035 | 1.39E-5 |
|  | - | 0.1673 | 0.1303 | - | 0.0858 | 0.0654 | - |
|  | - | 1.5636 | 0.0852 | - | 1.1394 | 0.0473 | - |
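
The MEAN, SD, and MSE columns are linked by the usual decomposition MSE = bias² + variance, which gives a quick consistency check on the reported figures. The helper below is a hypothetical illustration (the name `mse_from_mean_sd` is not from the paper), and it assumes the SD column is the Monte Carlo standard deviation of the estimates, so agreement is expected only up to rounding.

```python
def mse_from_mean_sd(true_value, mean, sd):
    """MSE implied by the decomposition MSE = (mean - true_value)^2 + sd^2."""
    return (mean - true_value) ** 2 + sd ** 2

# First row of the table above: true value 0.2500, MEAN 0.2838, SD 0.0560.
implied = mse_from_mean_sd(0.2500, 0.2838, 0.0560)
print(round(implied, 4))  # 0.0043, matching the reported MSE
```

The same check applied to the second row (true value 1.0000, MEAN 1.0067, SD 0.1318) gives 0.0174, again in line with the table.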

| Parameter | True Value | MEAN | SD | MSE | MEAN | SD | MSE |
|---|---|---|---|---|---|---|---|
|  | 0.5000 | 0.5438 | 0.0341 | 0.0031 | 0.5366 | 0.0209 | 0.0018 |
|  | 1.0000 | 1.0019 | 0.1289 | 0.0166 | 0.9948 | 0.1147 | 0.0132 |
|  | 1.5000 | 1.5015 | 0.1236 | 0.0153 | 1.4997 | 0.1106 | 0.0122 |
|  | 0.0100 | 0.0477 | 0.0652 | 0.0057 | 0.0319 | 0.0434 | 0.0024 |
|  | - | 1.1397 | 0.7554 | - | 0.5453 | 0.4035 | - |
|  | - | 3.4167 | 0.4098 | - | 2.9159 | 0.3232 | - |
|  | 0.5000 | 0.5348 | 0.0208 | 0.0016 | 0.5295 | 0.0129 | 0.0010 |
|  | 1.0000 | 0.9979 | 0.0919 | 0.0085 | 0.9999 | 0.0824 | 0.0068 |
|  | 1.5000 | 1.5027 | 0.0901 | 0.0081 | 1.4992 | 0.0877 | 0.0077 |
|  | 0.0100 | 0.0200 | 0.0309 | 0.0011 | 0.0149 | 0.0228 | 0.0005 |
|  | - | 0.5001 | 0.4017 | - | 0.3653 | 0.2852 | - |
|  | - | 2.7182 | 0.2304 | - | 2.3661 | 0.1948 | - |
|  | 0.5000 | 0.5184 | 0.0049 | 0.0004 | 0.5137 | 0.0028 | 0.0002 |
|  | 1.0000 | 1.0013 | 0.0566 | 0.0032 | 1.0024 | 0.0414 | 0.0017 |
|  | 1.5000 | 1.5005 | 0.0572 | 0.0033 | 1.4998 | 0.0408 | 0.0017 |
|  | 0.0100 | 0.0032 | 0.0043 | 0.0001 | 0.0031 | 0.0031 | 0.0001 |
|  | - | 0.1689 | 0.1238 | - | 0.0872 | 0.0645 | - |
|  | - | 1.5603 | 0.0897 | - | 1.1407 | 0.0501 | - |

| Parameter | True Value | MEAN | SD | MSE | MEAN | SD | MSE |
|---|---|---|---|---|---|---|---|
|  | 0.7500 | 0.7838 | 0.0559 | 0.0043 | 0.7837 | 0.0194 | 0.0015 |
|  | 1.0000 | 0.9926 | 0.1317 | 0.0174 | 0.9894 | 0.1093 | 0.0121 |
|  | 1.5000 | 1.5051 | 0.1252 | 0.0157 | 1.4942 | 0.1070 | 0.0115 |
|  | 0.0100 | 0.0548 | 0.0735 | 0.0074 | 0.0343 | 0.0470 | 0.0028 |
|  | - | 1.2188 | 0.8086 | - | 0.5739 | 0.4374 | - |
|  | - | 3.4220 | 0.4281 | - | 2.9343 | 0.2837 | - |
|  | 0.7500 | 0.7734 | 0.0187 | 0.0009 | 0.7730 | 0.0139 | 0.0007 |
|  | 1.0000 | 0.9945 | 0.0940 | 0.0089 | 1.0052 | 0.0842 | 0.0071 |
|  | 1.5000 | 1.4976 | 0.1030 | 0.0106 | 1.4965 | 0.0853 | 0.0073 |
|  | 0.0100 | 0.0226 | 0.0340 | 0.0013 | 0.0131 | 0.0201 | 0.0004 |
|  | - | 0.5159 | 0.3918 | - | 0.4090 | 0.2959 | - |
|  | - | 2.7176 | 0.2388 | - | 2.3667 | 0.1983 | - |
|  | 0.7500 | 0.7618 | 0.0049 | 0.0002 | 0.7614 | 0.0031 | 0.0001 |
|  | 1.0000 | 1.0010 | 0.0564 | 0.0032 | 0.9997 | 0.0390 | 0.0015 |
|  | 1.5000 | 1.5030 | 0.0538 | 0.0029 | 1.5013 | 0.0402 | 0.0016 |
|  | 0.0100 | 0.0124 | 0.0046 | 2.69E-5 | 0.0103 | 0.0033 | 1.09E-5 |
|  | - | 0.1671 | 0.1265 | - | 0.0818 | 0.0615 | - |
|  | - | 1.5586 | 0.0850 | - | 1.1395 | 0.0471 | - |

|  | Estimate | SD | MSE | Lower Bound | Upper Bound |
|---|---|---|---|---|---|
|  | 0.4689 | 0.0916 | 0.0085 | 0.3797 | 0.5216 |
|  | 0.1625 | 0.0468 | 0.0023 | 0.0708 | 0.2105 |
|  | −0.3455 | 0.0790 | 0.0065 | −0.4336 | −0.1831 |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Chen, J.; Cheng, S. GMM Estimation of a Partially Linear Additive Spatial Error Model. Mathematics 2021, 9, 622. https://doi.org/10.3390/math9060622
