1. Introduction

This paper examines and characterizes the fundamental matrix solution for the

n-vector autonomous linear retarded functional differential equation (RFDE)

with forcing function

and initial function

where

is an

n-vector, the kernel matrix

is an

matrix of Borel measures with support on the interval

, and

f and g belong to function spaces that will be described in Section 2.

We shall consider the case that

where

,

are

matrices over

,

is the Dirac delta function, and

is an integrable

matrix function on the interval

. Consequently, in terms of the Radon–Nikodym theorem,

has an absolutely continuous part

, a discrete singular part with a finite number of point masses (atoms) corresponding to Dirac delta measures, and a zero continuous singular part. It will be evident from the context whether the symbol

A refers to an

matrix over Borel measures, an

matrix over the real numbers, or a matrix function. The terms

and

respectively correspond to discrete delays and distributed delay of the kernel matrix

.

The fundamental solution

satisfies the RFDE

with initial conditions

where

I and

O are respectively the

identity and zero matrices. Note that the fundamental solution

also satisfies the Volterra integro-differential equation

with initial condition

Theorem 1. The fundamental solution is given by

where the convolutional powers of the kernel matrix are defined by

- 1.

,

- 2.

, and

- 3.

for ,

where ∗ denotes the convolution of two matrix measures.

Proof. See the proof of Theorem 2. □

This expression for the fundamental solution in the case

can be found in References [1, 2, 3]. Note that the computation for the fundamental solution

is simplified by the restriction to a finite number of atoms in the discrete singular component and to a zero continuous singular component.
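To make the series concrete, the following minimal sketch treats the simplest scalar special case, assumed here for illustration only: a single discrete delay, x'(t) = b x(t - tau), with no distributed part. In that case the classical method-of-steps formula gives the fundamental solution as a finite sum of truncated powers. The function name `fundamental_solution` and the parameters `b` and `tau` are hypothetical and do not appear in the paper.

```python
import math


def fundamental_solution(t, b, tau):
    """X(t) for the scalar equation x'(t) = b*x(t - tau).

    Assumes X(t) = 0 for t < 0 and X(0) = 1 (illustrative special case).
    Uses X(t) = sum_{k=0}^{floor(t/tau)} b^k (t - k*tau)^k / k!.
    """
    if t < 0:
        return 0.0
    n_terms = int(math.floor(t / tau))
    return sum(
        b**k * (t - k * tau) ** k / math.factorial(k)
        for k in range(n_terms + 1)
    )


# Check the delay equation X'(t) = b*X(t - tau) by a central difference.
b, tau, t, h = -1.0, 1.0, 2.5, 1e-5
derivative = (fundamental_solution(t + h, b, tau)
              - fundamental_solution(t - h, b, tau)) / (2 * h)
residual = derivative - b * fundamental_solution(t - tau, b, tau)
```

Each term switches on only after its delay k*tau has elapsed, which is why the sum is finite for every fixed t.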

The fundamental solution

for the autonomous linear ordinary differential equation (ODE)

is given (see Reference [4]) by the exponential matrix function

Note that the fundamental solution

in Equation (8) for the RFDE is analogous to, and generalizes, the fundamental solution in Equation (9) for the ODE, and that it has the form of a convolutional exponential matrix function.

Equation (4) establishes a relationship between two actors in an RFDE: the fundamental solution

and the kernel matrix

. Let us consider the other actors and their roles.

For

the Laplace transform

(see Reference [5]) of the fundamental solution

is

Definition 1. We define the characteristic matrix , the characteristic determinant , and the resolvent matrix .

We shall show in Section 4.1 that

or alternatively

, so that the fundamental solution

is the inverse Laplace transform of the resolvent matrix

.

The fundamental solution

can also be expressed as an inverse Laplace transform, written as a contour integral in the

z-plane

where contributions to the integral arise from the characteristic roots

with multiplicity

of the characteristic determinant

. We shall show in Section 4 that this gives rise to a spectral representation of the fundamental solution

in terms of the exponential solutions of the RFDE

In the case that all characteristic roots

are simple with multiplicity

, we have

where

with

. The characteristic roots

can be arranged in some appropriate order, such as decreasing real part

or increasing modulus

.

It is known (References [6, 7, 8, 9]) that

is convergent for a range of values of

t, where

. The case

arises in the scalar case in which the exponential solutions are complete and independent (see Reference [10]). Even where convergence is not an issue, we could have

for

and

for

, as shown in the example in

Section 4.3, where

. An open question is to characterize the minimum time

for the equality of

and

given by

. Note that

plays the role of a spectral representation of the fundamental solution

for

.

Figure 1 shows the relationship between the RFDE actors: the kernel matrix

, the fundamental solution

, the characteristic matrix

, and the resolvent matrix

. Note that

Figure 1 shows two different routes to determine the fundamental solution

from the kernel matrix

: (i) the direct route from Equation (8), and (ii) the indirect route through the characteristic matrix, the resolvent matrix, and the Laplace inverse. Depending on circumstances, either route may be preferable.

On its own, the fundamental solution is a representative proxy for the solutions of the RFDE. For example, the fundamental solution has exponential bounds that determine the asymptotic behavior of the RFDE solutions. Also, the fundamental solution combined with a solution representation gives an exact, explicit expression for the solution of an RFDE. However, the explicit form of the fundamental solution does not by itself convey all the information on its properties. It is the diversity of actors and their interplay that brings a variety of perspectives to bear on extracting information on the RFDE.

Remark 1. The right-hand side of the linear autonomous RFDE (1) is expressed as

where , to emphasize the convolution nature of the linear autonomous RFDE (see page 77 of Reference [11]), to highlight the convolutional composition of the fundamental solution, and to reflect the situation that for a linear autonomous RFDE essentially every operation is a convolution.

Remark 2. The use of measures in the kernel matrix of the RFDE in Equation (1) is chosen over the alternatives of (i) distributions and (ii) functions of normalized bounded variation. This choice is mainly a matter of style. Whatever choice is made, the fundamental solution will involve convolutional powers of the kernel matrix. Some monographs dealing with the study of functional differential equations and related Volterra integro-differential equations are References [7, 11, 12, 13, 14, 15, 16, 17, 18].

The remainder of the paper is organized as follows: Section 2 on preliminaries establishes the foundation for the paper. Section 3 applies algebraic graph theory to study RFDEs. It uses a weighted loop-digraph representation of an RFDE to illuminate the system structure, connectivity, and dynamics. The characteristics of the fundamental solution are explored in Section 4. Examples are considered in the main body of the document to elucidate the behavior of the fundamental solution to an RFDE. Section 5 extends the fundamental solution to other functional differential equations. Finally, the conclusions are presented in Section 6.

2. Preliminaries

2.1. Ring of Borel Measures

Consider the set

of Borel measures on

with compact support of the form

with finite number of atoms at

,

,

, and integrable function

.

The addition operator + is given by

and convolution (aka multiplication) operator ∗ is given by

where

It is straightforward to show that is a commutative ring, with additive identity such that , and the multiplicative identity the Dirac delta measure such that .

Definition 2. For define norm .

Define the support as the complement in of the set for all neighborhoods of x that are sufficiently small.

We have the following standard results:

.

.

.

In particular, if , .
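The ring operations can be illustrated on the purely atomic part of such measures. The sketch below is a simplification and not the paper's construction: it assumes measures with no absolutely continuous part, represents each one as a hypothetical dictionary mapping an atom location to its weight, and takes the norm to be the sum of the absolute weights (an assumption standing in for the norm of Definition 2). The names `add`, `conv`, `norm`, and `DELTA` are illustrative.

```python
from collections import defaultdict


def add(mu, nu):
    """Sum of two atomic measures given as {location: weight} dicts."""
    out = defaultdict(float)
    for measure in (mu, nu):
        for location, weight in measure.items():
            out[location] += weight
    return {s: w for s, w in out.items() if w != 0.0}


def conv(mu, nu):
    """Convolution: delta_a * delta_b = delta_(a+b), extended bilinearly."""
    out = defaultdict(float)
    for a, w in mu.items():
        for b, v in nu.items():
            out[a + b] += w * v
    return {s: w for s, w in out.items() if w != 0.0}


def norm(mu):
    """Total variation of an atomic measure (assumed norm)."""
    return sum(abs(w) for w in mu.values())


DELTA = {0.0: 1.0}  # Dirac delta at 0, the multiplicative identity

mu = {0.0: 2.0, 1.0: -1.0}
nu = {0.5: 3.0, 1.0: 1.0}
product = conv(mu, nu)
```

In this restricted setting the convolution is commutative, `DELTA` acts as the identity, the norm is submultiplicative, and the atoms of the product lie in the sum of the two supports.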

2.2. Matrix over Ring of Borel Measures

Definition 3. A matrix over a ring of Borel measures is a array of elements of the ring .

We have matrix addition

and matrix convolution (multiplication)

Note that the kernel matrix , as well as its convolutional powers .

Definition 4. A matrix norm for with addition and multiplication defined conventionally on the ring has the following properties:

- 1.

- 2.

- 3.

- 4.

- 5.

Particular instances of matrix norms are the

-norm

and the Frobenius

-norm

. See Section 5.6 of Reference [19].

2.3. Fundamental Solution

We now turn our attention to the Volterra integro-differential equation of convolutional type satisfied by the fundamental solution for an RFDE

By integrating we obtain the equivalent Volterra integral equation of convolutional type

where the kernel matrix function

with

It is well known that this Volterra integral equation has a Liouville–Neumann series solution in the form

where matrix functions

are defined by

, and

where ∗ denotes a convolution of two matrix functions. This form of the fundamental solution is promulgated in References [14, 15, 20].

Proposition 1. The two forms of the fundamental solution

are equivalent.

Proof. Since

, we have

.

Hence from equality of Laplace transforms

for

. □

Theorem 2. The solution of with exists in a form

The solution is unique and depends continuously on the kernel matrix .

Proof. For

where

we have

so that the series converges absolutely and uniformly, thereby justifying the formal procedures in the following steps:

Hence is a solution.

To show that the solution is unique, suppose that we have two solutions

and

so that for the Volterra integral equation we have

Consequently

for some constant

, arbitrarily small

, and

. From Gronwall’s inequality (see page 24 of Reference [21]) we have

. However, since

is arbitrarily small, we have

and consequently

.

To demonstrate continuous dependence on the kernel matrix

, consider two solutions

and

of

Choose

so that

,

so that

and

so that

. Then

so that by Gronwall’s inequality we have

. □

We have already seen an exponential bound on the interval . A more precise exponential bound is provided by the supremum of the real part of the characteristic roots of the characteristic determinant .

Theorem 3 (Theorem 1.21 of Reference [15]). Let . For with for .

Remark 3. The term fundamental solution has several synonyms in the literature depending on context:

A. Cauchy matrix for the nonautonomous RFDE . See page 51 of Reference [16]. For the autonomous equation .

B. Differential resolvent for the Volterra integro-differential equation . See page 77 of Reference [11].

C. Green’s function or impulse response function for the RFDE with and . In some parts of the literature, for example Reference [16], a Green’s function is associated with a boundary value problem.

Proposition 2. so that the kernel matrix and the fundamental solution commute convolutionally.

Proof.

where the steps involving an infinite sum are justified by uniform convergence. □

2.4. RFDE Solution

Standard choices for the initial function space are:

; h is Lebesgue measurable on , is well defined, , and .

, .

The standard choice for the forcing function space is .

Results on the existence, uniqueness and continuous dependence on the initial data can be found in Reference [22] for function space

and Reference [13] for function space

.

Theorem 4. The representation of the solution for the RFDE in Equation (1) is

Proof. We first consider the case

,

and

. Taking the Laplace transform we obtain

and taking the Laplace inverse, we have

Now consider the case , , .

We have the equivalent equation

with

and

.

This equation has the solution

From linearity, we can combine the two solutions to obtain the complete solution. □

Remark 4. Note that the fundamental solution in combination with the representation of the solution gives an exact expression in explicit form for the solution of an RFDE.

Remark 5. Note that the initial function h and forcing function f enter into the three constituents of the RFDE solution as

- 1.

Initial function point value (with no history) in ,

- 2.

Initial function h (with history and hereditary information) as an integrand in ,

- 3.

Forcing function f as a convolution with the fundamental solution in .

Remark 6. The integration region R for the double integral is

The alternative expressions for the double integral are:

The change in the order of integration in the double integral is justified by the Fubini theorem. The change in the order of the kernel matrix and the fundamental solution is justified since they commute convolutionally.

3. Application of Algebraic Graph Theory

In this section we apply algebraic graph theory to examine and to provide a pictorial rendition of the system structure and system dynamics of an RFDE. We also use the theory to characterize the system connectivity of the RFDE, and to identify its strong and weak components. The main reference for the application of algebraic graph theory to RFDEs is the excellent book [23].

3.1. Weighted Loop-Digraph Representation

We consider a weighted loop-digraph representation for the mathematical objects of the kernel matrix and its convolutional powers . These mathematical objects are prominent aspects of the RFDE and its fundamental solution .

In component form the fundamental solution

is

where

The component form expression for the RFDE is

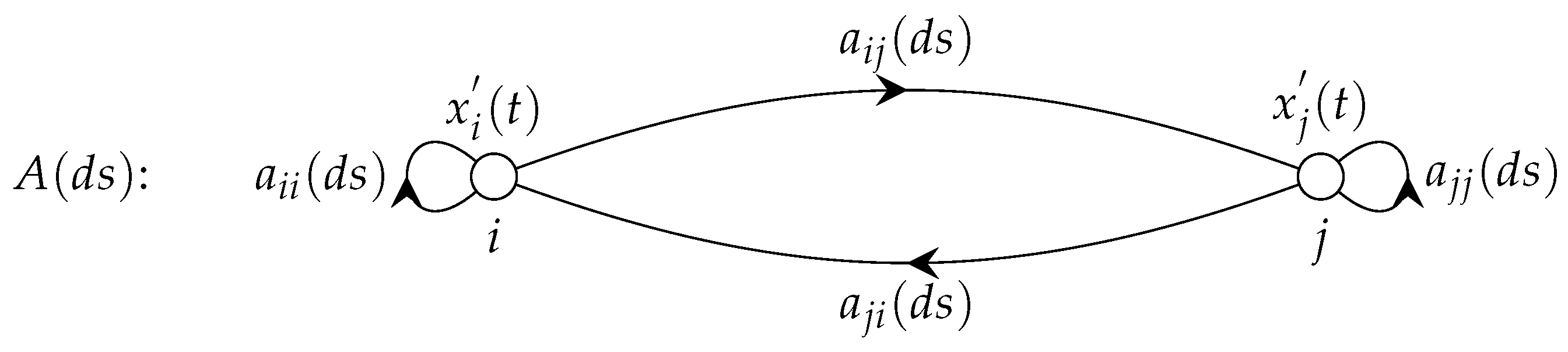

Figure 2 depicts the network structure and interactive systems dynamics for a general RFDE in the form of a weighted loop-digraph, albeit for simplicity focusing only on two nodes

i and

j.

Definition 5. A weighted loop-directed graph or loop-digraph is an ordered triple where V is a set of elements called vertices (or nodes, points), and E is a set of ordered pairs of vertices called directed edges and loops. The directed edges connect two distinct vertices in the given order. Loops connect a specific vertex with itself. W is the weight assigned to each directed edge and loop.

Although for the most part the book [23] focuses on directed graphs without loops and without weights, the applied results in this paper are applicable to directed graphs with loops and weights.

Figure 2 shows a weighted loop-digraph for an

n-vector RFDE. The weights assigned to directed edge

and loop

are Borel measures

of

and

, respectively. By convention the direction on the directed edge

is

i to

j. However, note that in a physical interpretation the influence of node

j on the system dynamics at node

i is represented by the Borel measure

in the opposite direction. This consideration should be borne in mind in interpreting the system dynamics from the loop-digraph.

For the components of the second convolutional power

we have

This has the interpretation that the Borel measure is the sum of convolutions of the Borel measures encountered on the directed edges and loops on a walk of length 2 from vertex i to vertex j. More generally the Borel measure is the sum of convolutions of the Borel measures encountered on the directed edges and loops on a walk of length n from vertex i to vertex j. The Borel measures thus have a straightforward interpretation in the language of algebraic graph theory.
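The walk interpretation can be checked directly in a small case. The sketch below is illustrative only: it assumes purely atomic entries, stored as hypothetical `{delay: weight}` dictionaries, and a made-up 2 x 2 kernel matrix `A` that does not come from the paper. It compares each entry of a convolutional power, computed by matrix convolution, with the sum over all walks of that length of the convolved edge weights.

```python
from itertools import product


def add(mu, nu):
    """Sum of two atomic measures given as {delay: weight} dicts."""
    out = dict(mu)
    for s, w in nu.items():
        out[s] = out.get(s, 0.0) + w
    return {s: w for s, w in out.items() if w != 0.0}


def conv(mu, nu):
    """Convolution of atomic measures: delays add, weights multiply."""
    out = {}
    for a, w in mu.items():
        for b, v in nu.items():
            out[a + b] = out.get(a + b, 0.0) + w * v
    return {s: w for s, w in out.items() if w != 0.0}


def mat_conv(A, B):
    """Matrix convolution: (A * B)_ij = sum_k A_ik * B_kj."""
    n = len(A)
    C = [[{} for _ in range(n)] for _ in range(n)]
    for i, j, k in product(range(n), repeat=3):
        C[i][j] = add(C[i][j], conv(A[i][k], B[k][j]))
    return C


def walk_sum(A, i, j, length):
    """Sum over all walks i -> ... -> j of the convolved edge weights."""
    total = {}
    for middle in product(range(len(A)), repeat=length - 1):
        walk = (i, *middle, j)
        weight = {0.0: 1.0}  # Dirac delta at 0, the convolution identity
        for u, v in zip(walk, walk[1:]):
            weight = conv(weight, A[u][v])
        total = add(total, weight)
    return total


# Hypothetical kernel: loops at both vertices and one directed edge.
A = [[{1.0: 2.0}, {0.5: 1.0}],
     [{}, {1.0: -1.0}]]
A2 = mat_conv(A, A)
A3 = mat_conv(A2, A)
```

A missing edge is the zero measure, so any walk through it contributes nothing, which is how the digraph structure filters the sum.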

The following examples illustrate the concepts.

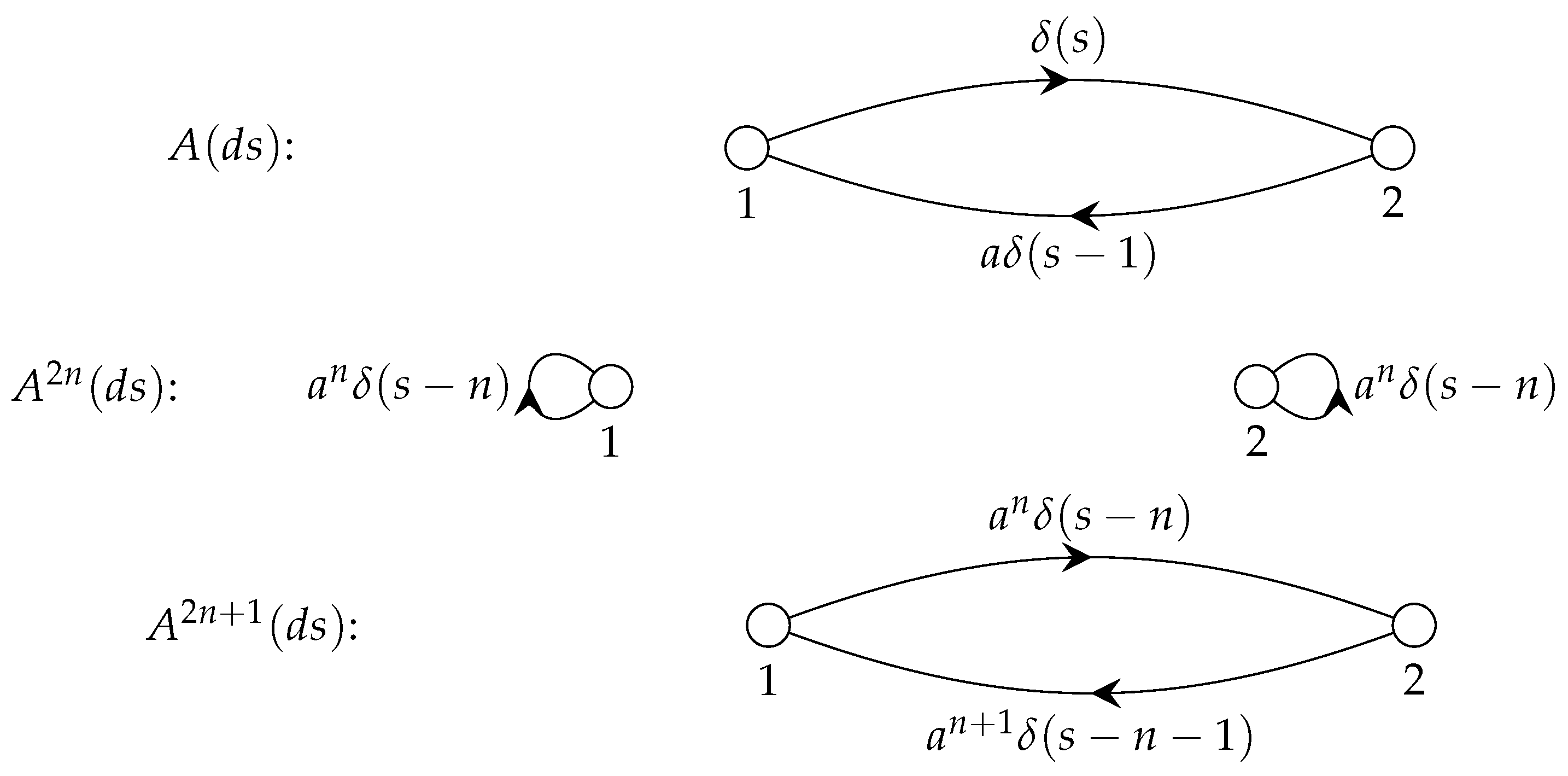

Example 1. The kernel matrix and its convolutional powers are

The fundamental solution is

where

The weighted loop-digraphs for the kernel matrix and its convolutional powers for the RFDE are shown in Figure 3.

Example 2. The kernel matrix and its convolutional powers are

The fundamental solution is

The weighted loop-digraphs for the kernel matrix and its convolutional powers for the RFDE are shown in Figure 4.

3.2. Strong and Weak Connectivity

Notice that in the first example of the previous subsection the two components of the RFDE are interconnected and interact, whereas in the second example they are disconnected and do not interact. The nature of the interaction between the RFDE components is obvious when the number of components is small, but less so when the number is large.

In this subsection we apply material from Chapters 2, 3, 5, and 14 of Reference [23] to characterize the connectivity between RFDE components and to classify the nature of the connectivity. Notice that this approach focuses on the structure of the RFDE, and is not concerned with the dynamics produced by the Borel measures, i.e., the weights on the directed edges and loops.

We start with some definitions.

Definition 6. A path from to is an ordered collection of vertices interspersed with an ordered collection of directed edges .

A semipath from to is an ordered collection of vertices interspersed with an ordered collection of directed edges, one from each pair of directed edges or , or , ⋯, or .

A strict semipath is a semipath that is not a path.

A vertex v is reachable from a vertex u if there is a path from u to v.

Points u and v are 0-connected if they are not joined by a semipath; 1-connected if they are joined by a semipath but not a path; 2-connected if they are joined by a path in one direction but not the other; 3-connected if they are joined by paths in both directions.

A loop-digraph is strongly connected or strong if every two vertices are mutually reachable.

A loop-digraph is unilaterally connected or unilateral if for any two points, at least one is reachable from the other; it is strictly unilateral if it is unilateral but not strong.

A loop-digraph is weakly connected or weak if every two vertices are joined by a semipath; it is strictly weak if it is weak but not unilateral.

A subgraph of a loop-digraph D is a loop-digraph in which the vertices and loops/directed edges are vertices and loops/directed edges of D.

A strong component of a loop-digraph is a maximal strong loop-digraph.

A unilateral component of a loop-digraph is a maximal unilateral loop-digraph.

A weak component of a loop-digraph is a maximal weak loop-digraph.

Theorem 5 (Theorem 3.2 of Reference [23]). Every vertex and every directed edge is contained in exactly one weak component.

Every vertex and every directed edge is contained in at least one unilateral component.

Every vertex is contained in exactly one strong component, and every directed edge is contained in at most one strong component.

We shall now proceed to demonstrate how to decompose a loop-digraph into strong and weak components.

The reachability matrix

is defined as

In terms of the convolutional powers

for

of the kernel matrix

, the reachability matrix

is given by

Other variants of the reachability matrix come into play:

The transpose reachability matrix

is given by

The symmetric reachability matrix

arises from the symmetrized kernel matrix

where

. It is given by

The element-wise product

. It is given by

The following theorem says that the strong components of the RFDE are determined by the elementwise product reachability matrix .

Theorem 6 (Theorem 5.8 of Reference [23]). The strong component containing the vertex is given by the entries of 1 in the ith row (or column) in the elementwise product reachability matrix .

The following theorem says that the weak components of the RFDE are determined by the symmetric reachability matrix .

Theorem 7 (Corollary 5.15a of Reference [23]). The weak component containing the vertex is given by the entries of 1 in the ith row (or column) in the symmetric reachability matrix .

The connectedness matrix is obtained from the reachability matrix as follows:

If and are in the same weak component .

Otherwise .

The connectedness matrix

takes the values

Definition 7. A permutation matrix has exactly one entry in each row and in each column set to . All other entries are 0. A permutation matrix P can be construed as relabelling the vertices in a loop-digraph.

A matrix is said to be decomposable if there exists a permutation matrix such that

where and are square matrices of sizes and respectively, and and are zero matrices of the indicated sizes.

Theorem 8 (Theorem 5.17 of Reference [23]). The following conditions are equivalent.

- 1.

The loop-digraph is disconnected.

- 2.

Its kernel matrix is decomposable.

- 3.

Its reachability matrix is decomposable.

Theorem 9. Suppose that the loop-digraph for an RFDE is disconnected so that the kernel matrix is decomposable in the form

Then the fundamental solution is also decomposable in the form

where and are the fundamental solutions corresponding respectively to the kernel matrices and .

In general, if the loop-digraph for an RFDE has

p weak components, the kernel matrix

can be decomposed into the form

and the fundamental solution can also be decomposed into a corresponding form

where

is the fundamental solution for the kernel matrix

.
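The decomposition procedure of this subsection can be sketched on the 0/1 structure of a kernel matrix. The code below is a minimal illustration under stated assumptions: `ADJ` is a hypothetical 5-vertex adjacency pattern (entry 1 where the corresponding kernel entry is nonzero), not the digraph of any figure in the paper, and the reachability matrix is computed with Warshall's algorithm rather than from the convolutional powers. Strong components are read from the elementwise product of the reachability matrix with its transpose, weak components from the reachability matrix of the symmetrized pattern, and the vertices are then relabelled by weak component.

```python
def reachability(adj):
    """Reflexive-transitive closure of a 0/1 adjacency matrix (Warshall)."""
    n = len(adj)
    reach = [[bool(adj[i][j]) or i == j for j in range(n)] for i in range(n)]
    for k in range(n):
        for i in range(n):
            if reach[i][k]:
                for j in range(n):
                    reach[i][j] = reach[i][j] or reach[k][j]
    return reach


def components(mask):
    """Group vertices by the entries of 1 in each row of a symmetric mask."""
    comps, seen = [], set()
    for i in range(len(mask)):
        if i not in seen:
            comp = [j for j in range(len(mask)) if mask[i][j]]
            comps.append(comp)
            seen.update(comp)
    return comps


def strong_components(adj):
    r, n = reachability(adj), len(adj)
    # Elementwise product of the reachability matrix and its transpose.
    return components([[r[i][j] and r[j][i] for j in range(n)] for i in range(n)])


def weak_components(adj):
    n = len(adj)
    sym = [[adj[i][j] or adj[j][i] for j in range(n)] for i in range(n)]
    return components(reachability(sym))


def permute_by_components(adj, comps):
    """Relabel vertices so that each component occupies consecutive indices."""
    order = [v for comp in comps for v in comp]
    return [[adj[i][j] for j in order] for i in order]


# Hypothetical pattern: edges 0->2, 2->0, 2->4, 1->3 and a loop at 1.
ADJ = [[0, 0, 1, 0, 0],
       [0, 1, 0, 1, 0],
       [1, 0, 0, 0, 1],
       [0, 0, 0, 0, 0],
       [0, 0, 0, 0, 0]]
weak = weak_components(ADJ)
strong = strong_components(ADJ)
blocks = permute_by_components(ADJ, weak)
```

After the relabelling, the pattern is block diagonal with one block per weak component, which is the structural condition under which the fundamental solution decomposes in the same way.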

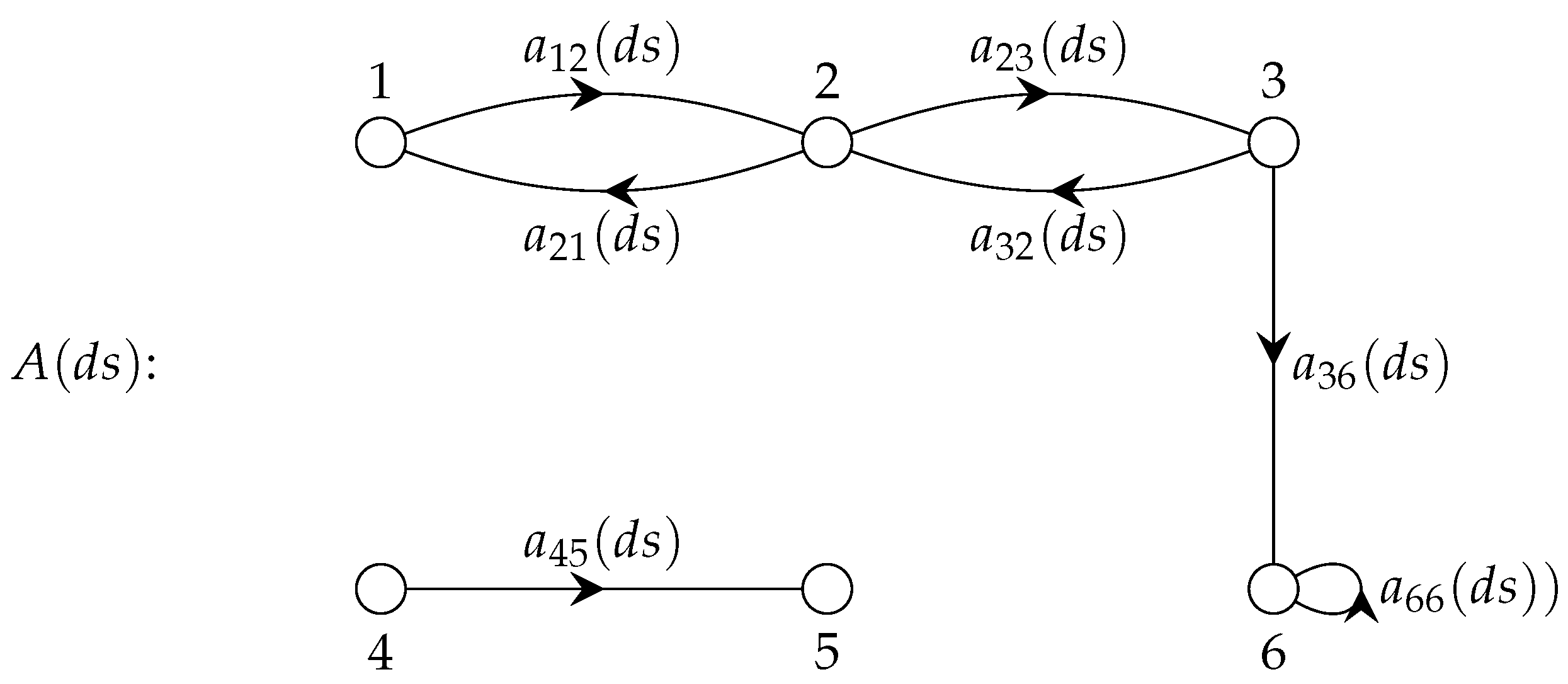

Example 3. To illustrate the results, we consider the RFDE weighted loop-digraph shown in Figure 5. The kernel matrix is

where the dummy variable (ds) is suppressed within the matrix to reduce clutter. The symmetric kernel matrix is

The reachability matrix is

The transpose reachability matrix is

The symmetric reachability matrix is

This yields the weak components and .

The element-wise product reachability matrix is

This yields the strong components , , , and .

The connectedness matrix C is given by

By applying the appropriate permutation matrix, or simply interchanging the rows and columns of the kernel matrix in accordance with the weak components, the kernel matrix is expressed in decomposed form, as follows:

The kernel matrix is

The kernel matrix is expressed succinctly in the decomposed form

where and are respectively and kernel matrices, and and are respectively and zero matrices. The fundamental solution can be expressed in decomposed form

where and are respectively the fundamental solutions for the kernel matrices and .

4. Characteristics of Fundamental Solution

4.1. Fundamental Solution is Laplace Inverse of Resolvent Matrix

The fundamental solution satisfies the Volterra integro-differential equation of convolutional type

Taking the Laplace transform, we have

Consequently, the fundamental solution is the Laplace inverse of the resolvent matrix . Alternatively, the Laplace transform of the fundamental solution is the resolvent matrix .
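The relation between the fundamental solution and the resolvent can be checked numerically in a simple case. The sketch below assumes the scalar single-delay equation x'(t) = b x(t - tau) with b = -1 and tau = 1 (an illustrative choice, not an example from the paper), for which the characteristic function is z - b exp(-z tau). It evaluates the fundamental solution by the classical method-of-steps series and approximates its Laplace transform with a trapezoidal rule on a truncated interval. All function names are hypothetical.

```python
import math

B, TAU = -1.0, 1.0  # assumed scalar example: x'(t) = B * x(t - TAU)


def fundamental_solution(t):
    """Method-of-steps series for X(t); X(t) = 0 for t < 0, X(0) = 1."""
    if t < 0:
        return 0.0
    n_terms = int(math.floor(t / TAU))
    return sum(B**k * (t - k * TAU) ** k / math.factorial(k)
               for k in range(n_terms + 1))


def laplace_numeric(z, t_max=20.0, h=1e-3):
    """Trapezoidal approximation of the integral of X(t) exp(-z t) on [0, t_max]."""
    n = int(round(t_max / h))
    total = 0.0
    for i in range(n + 1):
        t = i * h
        weight = 0.5 if i in (0, n) else 1.0
        total += weight * fundamental_solution(t) * math.exp(-z * t)
    return total * h


def resolvent(z):
    """Reciprocal of the characteristic function z - B*exp(-z*TAU)."""
    return 1.0 / (z - B * math.exp(-z * TAU))


z = 2.0
numeric, exact = laplace_numeric(z), resolvent(z)
```

The two values agree up to the quadrature error, which is controlled here by the step `h` and by the truncation at `t_max`; both are arbitrary choices for the sketch.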

4.2. Tauberian Behavior

From the previous subsection, we have

Assume that all the characteristic roots of the characteristic determinant

have real part less than zero, so that

. Choose

so that

. Then

is integrable and we have

In particular for

we have

4.3. Contour Integral Version of Fundamental Solution

Let us consider the expression for the fundamental solution

in the form of Laplace inverse as a contour integral in the

z-plane

For notational convenience, we put , , and .

To obtain the contribution to the integral of a characteristic root of with multiplicity , we consider the integral over a small circle centered at containing no other characteristic roots of .

Following the approach in Reference [10], we have

We have that

, the reciprocal function of

, is given by

where

, and

for

is obtained recursively from

The residue for the characteristic root

is the coefficient of

corresponding to

. It is given by

The matrix coefficient

is given by

For example , and for .

We have arrived at the following theorem:

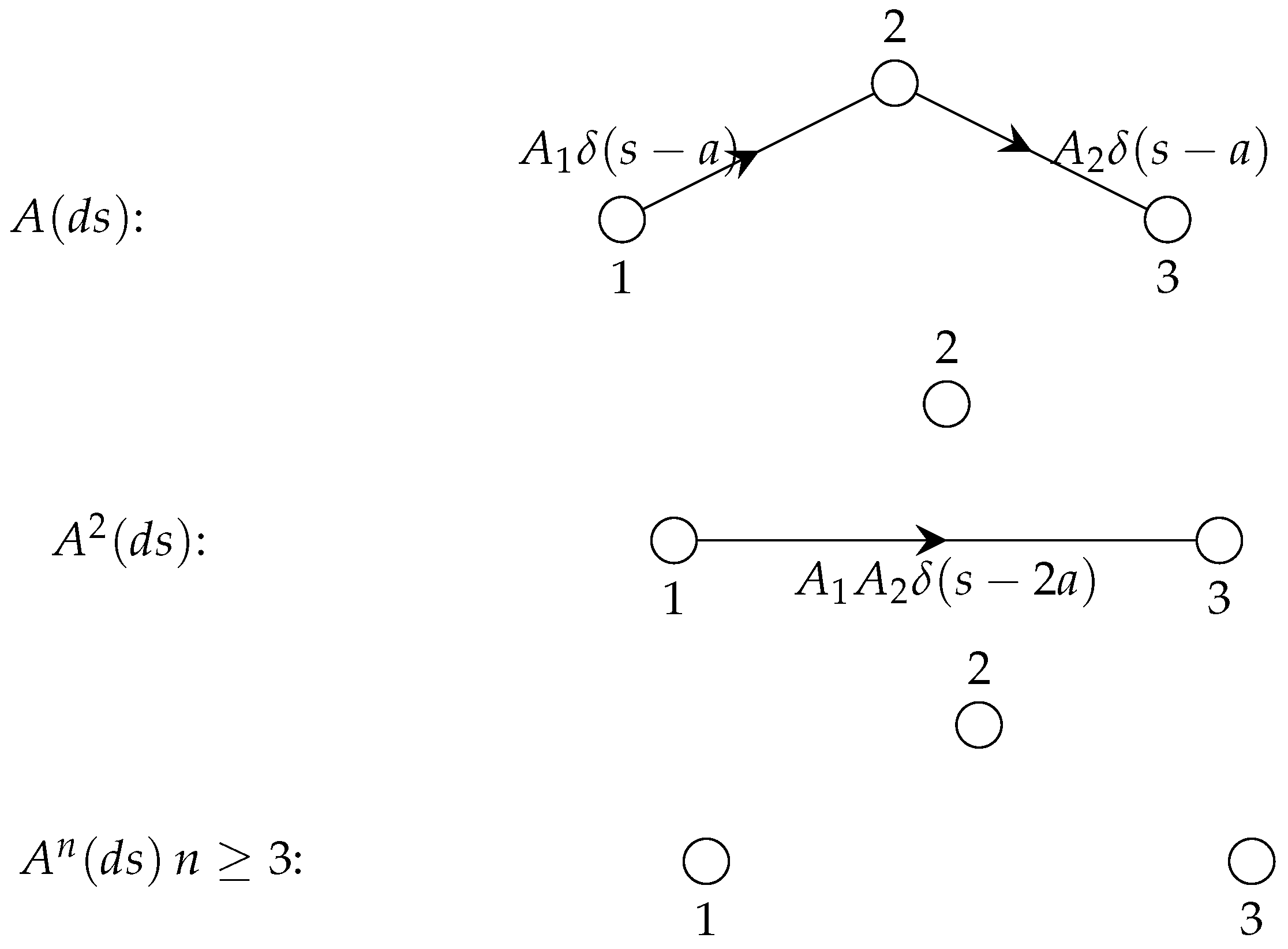

Theorem 10. Let be the characteristic roots of the characteristic determinant with multiplicity . The version of the fundamental solution arising from a contour integral has the form of a spectral decomposition of exponential solutions

where

In the case that all characteristic roots are simple with multiplicity we have

where . For the scalar case , so that .

Example 4. for so that is a nilpotent matrix with index 3. The weighted loop-digraphs for the kernel matrix and its convolutional powers for the RFDE are shown in Figure 6. The fundamental matrix is .

The contribution to the contour integral is

The contour integral version of the fundamental solution is given by

Note for this specific example that for , but that for . As this example has only a finite number of terms in and , convergence is not a factor in the discrepancy. The issue is that is compelled to contain truncated powers of t to reflect delay activations, whereas by its nature is compelled to contain untruncated powers of t.

An open question is to characterize the minimum time

for the equality of

and

given by

. The matter has been encountered and considered in the literature (see References [6, 7, 8, 9]) with values of

ranging from

to

.
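For the scalar case, where the exponential solutions are complete, the spectral form can be compared numerically with the series form. The following sketch assumes the scalar equation x'(t) = b x(t - tau) with b = -1 and tau = 1, chosen for illustration because all its characteristic roots are simple and occur in complex-conjugate pairs. The roots of z - b exp(-z tau) are found by Newton's method started from the standard asymptotic approximation of the Lambert W branches, and each simple root contributes exp(lambda t) / (1 + tau lambda). Function names and the number of roots retained are hypothetical choices.

```python
import cmath
import math

B, TAU = -1.0, 1.0  # assumed scalar example with no real characteristic roots


def series_solution(t):
    """Method-of-steps series for the fundamental solution X(t), t >= 0."""
    n_terms = int(math.floor(t / TAU))
    return sum(B**k * (t - k * TAU) ** k / math.factorial(k)
               for k in range(n_terms + 1))


def characteristic_root(k):
    """Root of z - B*exp(-z*TAU) in the upper half-plane, indexed by k >= 0."""
    x = B * TAU                      # w = z*TAU solves w*exp(w) = x
    l1 = cmath.log(x) + 2j * math.pi * k
    l2 = cmath.log(l1)
    w = l1 - l2 + l2 / l1            # asymptotic starting guess
    for _ in range(50):              # Newton iteration on w*exp(w) - x
        step = (w - x * cmath.exp(-w)) / (1 + w)
        w -= step
        if abs(step) < 1e-15:
            break
    return w / TAU


def spectral_solution(t, n_pairs=30):
    """Sum of residues exp(lambda*t)/(1 + TAU*lambda) over conjugate pairs."""
    total = 0.0
    for k in range(n_pairs):
        lam = characteristic_root(k)
        total += 2.0 * (cmath.exp(lam * t) / (1 + TAU * lam)).real
    return total


roots = [characteristic_root(k) for k in range(30)]
gap = abs(spectral_solution(5.0) - series_solution(5.0))
```

The denominator 1 + tau lambda is the derivative of the characteristic function at a root, simplified using the root equation. The truncation at 30 conjugate pairs is adequate at t = 5 because the terms decay rapidly with the root index, but it would need many more terms for t close to 0.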

4.4. Semigroup Relations

The fundamental solution

for the ODE

satisfies the semigroup relation

. The generalization for the fundamental solution

for an RFDE

is as follows:

Proof. This follows from the representation of the solution with and the consideration that the translate of a solution of an autonomous linear RFDE is also a solution. □

Remark 7. A related result is found in Reference [24].

Remark 8. It follows from the representation of solutions that the double integral has the following equivalent forms (see Equation (58)):

4.5. Semigroup of Solution Operators

In this subsection we consider the role played by the fundamental solution in solution operators mapping the initial function h to the solution in the function spaces and .

The solution

is given by

where

Remark 9. Note that a solution operator has to accommodate two aspects of the initial function h: (i) the initial value acted on by the fundamental solution , and (ii) the initial function h as an integrand with kernel .

For the function space

we use the notation

and

, where

The

solution operator

is given by

The

solution operator

is given by

The solution operator

on the function space

is a strongly continuous semigroup of linear operators for

satisfying: (i)

, (ii)

for

, and (iii)

. See References [22, 25, 26, 27]. Likewise for the solution operator

on the function space

. See References [8, 13, 15].

4.6. Picard Iteration

Definition 8. The Picard iteration for the fundamental solution based on its Volterra integral equation is

Theorem 12. The Picard iteration is given by

Proof. Arguing by induction, it suffices to show that

The first equation in the proof follows from a change in dummy integration variables, and the second from the definition of . The second equation in the theorem follows from . □
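The Picard iteration can be illustrated numerically in a scalar special case. The sketch below assumes the single-delay equation x'(t) = b x(t - tau), for which the Volterra integral equation reduces to X(t) = 1 + b times the integral of X over [0, t - tau] for t >= tau, and it assumes the iteration starts from the constant function 1. The integrals are approximated by a cumulative trapezoidal rule on a uniform grid, and the iterates are compared with partial sums of truncated powers. The function names, the grid step, and the starting iterate are assumptions made for the illustration.

```python
import math


def picard_iterates(b, tau, t_max, h, n_iter):
    """Grid values of Picard iterates X_0, ..., X_n_iter on [0, t_max]."""
    n = int(round(t_max / h))
    shift = int(round(tau / h))          # the delay measured in grid steps
    x = [1.0] * (n + 1)                  # assumed starting iterate X_0 = 1
    iterates = [x]
    for _ in range(n_iter):
        cumulative = [0.0] * (n + 1)     # trapezoidal integral of x on [0, t_i]
        for i in range(1, n + 1):
            cumulative[i] = cumulative[i - 1] + 0.5 * h * (x[i - 1] + x[i])
        x = [1.0 + (b * cumulative[i - shift] if i >= shift else 0.0)
             for i in range(n + 1)]
        iterates.append(x)
    return iterates


def partial_sum(t, b, tau, m):
    """Sum over k = 0..m of b^k (t - k*tau)_+^k / k!."""
    return sum(b**k * max(t - k * tau, 0.0) ** k / math.factorial(k)
               for k in range(m + 1))


b, tau, t_max, h = -1.0, 1.0, 4.0, 0.01
iterates = picard_iterates(b, tau, t_max, h, n_iter=4)
errors = [max(abs(x_i - partial_sum(i * h, b, tau, m))
              for i, x_i in enumerate(iterate))
          for m, iterate in enumerate(iterates)]
```

In this example each iteration adds exactly one more term of the series, so the iteration reaches the fundamental solution on [0, t_max] after finitely many steps, up to the quadrature error of the grid.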