1. Introduction

Shape analysis of curves in $\mathbb{R}^n$ is important in many applications, including computer vision and medical imaging. Using the landmark representation of objects, Dryden et al. [1] studied the joint shape and size features of objects. However, the over-abundance of digital data, especially image data, is prompting the need for a different kind of shape analysis. In particular, the representation of shapes as elements of infinite-dimensional Riemannian manifolds with a given metric is currently of interest and has important applications [2,3]. More recently, Srivastava et al. [4] presented a special representation of curves, called the square-root velocity function, or SRVF, under which a specific elastic metric becomes an $\mathbb{L}^2$ metric, which simplifies the shape analysis. This approach was analyzed by Kurtek et al. [5] in different scenarios, corresponding to different combinations of physical properties of the curves: shape, size, location and orientation. When the metric used in these infinite-dimensional spaces is invariant with respect to scaling, translation, rotation and reparameterization, the Riemannian manifold that represents the space of curves is known as the shape space and, following the notation of [5], will be denoted as $\mathcal{S}_1$. However, in many applications, other features such as orientation or size (scale) also play important roles and need to be incorporated into the underlying framework. A prime example is medical imaging, where the size of the anatomical structure of interest can provide important diagnostic information. When the curve length (size) is also considered, the feature space is called the shape and size (or shape and scale) space and will be denoted as $\mathcal{S}_2$ [5]. Two other spaces, denoted as $\mathcal{S}_3$ and $\mathcal{S}_4$, also take into account changes in the orientation of the curves [5]. In this work, we focus on the shape space $\mathcal{S}_1$ and the shape and size space $\mathcal{S}_2$, which are, in general, completely different infinite-dimensional Riemannian manifolds. Two curves are different elements of $\mathcal{S}_2$ if their shapes or their scales are different, whereas they are different elements of $\mathcal{S}_1$ only if their shapes are different.

It seems natural, however, that the distance between two curves in $\mathcal{S}_2$ should be related to the distance between these same curves in $\mathcal{S}_1$. We could then discern whether the distance between the curves in $\mathcal{S}_2$ is due mainly to their difference in size or to their difference in shape.

In this sense, in [6], the Sobolev-type metric given in [3] for the shape space of planar closed curves is extended to the space of all planar closed curves. The resulting metric induces a decomposition of the space of closed planar curves into a product of three components: centroid translations, scale changes and curves in the shape space.

In this approach, we will consider representations of curves in $\mathbb{R}^n$ by square-root velocity functions (SRVFs). Using these representations, we will consider two feature spaces studied in [5]: the shape space $\mathcal{S}_1$ and the shape and size space $\mathcal{S}_2$. The metric in $\mathcal{S}_1$ will be the same as in [5]; however, we propose a new metric in $\mathcal{S}_2$, which is completely different from the metric considered in [5]. This metric has the property that $\mathcal{S}_2$ can be decomposed into a product of two components, $\mathcal{S}_1\times\mathbb{R}$, where the second factor is related to the length (size) of the curve.

The outline of the paper is as follows. In Section 2, we review the SRVF representation of curves and the standard elastic metrics. In Section 3, we introduce the new metric in $\mathcal{S}_2$. The mean shape and the geodesics with this new metric are introduced in Section 4 and Section 5, respectively. A comparison of the proposed metric and the standard elastic metric is carried out in Section 6 in a controlled setting with simulated curves, where we show the advantages of our proposal. We propose a procedure to detect outlier contours in $\mathcal{S}_2$ using the SRVF representation; to the best of our knowledge, this is the first time this problem has been addressed with nearest-neighbor (NN) techniques. This procedure is introduced in Section 7. Moreover, so far, outlier detection (in the multivariate context) in anthropometry has only been used as a cleaning technique, for correcting or removing the outliers before analyzing the data [7,8]. However, outliers convey very valuable information in the footwear design process: they indicate which kinds of feet differ most from the rest and could therefore cause fitting problems if the footwear design is not appropriate. In Section 8, our proposal is applied to a novel data set of foot contours. Finally, Section 9 contains the conclusions.

2. Classical Spaces of Curves in $\mathbb{R}^n$ for the SRVF Representation

In this section, we review some results from [9]. In particular, we consider the SRVF representation of curves in $\mathbb{R}^n$ and summarize the main results for the shape space, $\mathcal{S}_1$, and for the shape and size space, $\mathcal{S}_2$, with the standard elastic metrics.

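Let $\beta:[0,1]\to\mathbb{R}^n$ be a parameterized curve that is absolutely continuous on $[0,1]$. The square-root velocity function (SRVF) of $\beta$ is defined as the function $q:[0,1]\to\mathbb{R}^n$ given by:

$$q(t) = \begin{cases}\dfrac{\dot\beta(t)}{\sqrt{|\dot\beta(t)|}}, & \text{if } \dot\beta(t)\neq 0,\\[4pt] 0, & \text{if } \dot\beta(t) = 0.\end{cases}$$

As can be seen in [9], this representation exists even where $\dot\beta(t) = 0$.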

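For every $q\in\mathbb{L}^2([0,1],\mathbb{R}^n)$ (denoted $\mathbb{L}^2$ hereafter), there is a curve $\beta$ (unique up to translation) such that the given $q$ is the SRVF of that $\beta$. In fact,

$$\beta(t) = \beta(0) + \int_0^t q(s)\,|q(s)|\,ds.$$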

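If a curve $\beta$ is of length one, then $\|q\| = 1$, since $\|q\|^2 = \int_0^1 |q(t)|^2\,dt = \int_0^1 |\dot\beta(t)|\,dt$ is the length of $\beta$. Furthermore, the hypersphere $\mathbb{S}_\infty = \{q\in\mathbb{L}^2 : \|q\| = 1\}$ is a Hilbert manifold.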
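The SRVF map, its inverse and the identity $\|q\|^2 = L(\beta)$ can be checked numerically on a sampled curve. The sketch below is a minimal Python/NumPy illustration. It assumes that curves are sampled on a grid of $[0,1]$ and that derivatives and integrals are approximated by finite differences and the trapezoidal rule. The function names (`srvf`, `curve_from_srvf`, `l2_norm`) are hypothetical and are not taken from [9].

```python
import numpy as np


def trapz(y, t):
    """Trapezoidal approximation of int y(t) dt on the grid t."""
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(t)))


def srvf(beta, t):
    """Discrete SRVF q = beta' / sqrt(|beta'|) of a curve sampled as beta[k] = beta(t[k]).

    beta has shape (N, n) and t has shape (N,). Where the speed vanishes,
    q is set to 0, as in the definition of the SRVF.
    """
    dbeta = np.gradient(beta, t, axis=0, edge_order=2)  # finite-difference velocity
    speed = np.linalg.norm(dbeta, axis=1)
    q = np.zeros_like(dbeta)
    moving = speed > 1e-12
    q[moving] = dbeta[moving] / np.sqrt(speed[moving])[:, None]
    return q


def curve_from_srvf(q, t, beta0):
    """Inverse map beta(t) = beta(0) + int_0^t q(s)|q(s)| ds (cumulative trapezoid)."""
    integrand = q * np.linalg.norm(q, axis=1)[:, None]  # equals beta'(t)
    steps = 0.5 * (integrand[1:] + integrand[:-1]) * np.diff(t)[:, None]
    return beta0 + np.vstack([np.zeros(q.shape[1]), np.cumsum(steps, axis=0)])


def l2_norm(q, t):
    """||q|| = (int_0^1 |q(t)|^2 dt)^(1/2); its square is the length of beta."""
    return np.sqrt(trapz(np.sum(q ** 2, axis=1), t))


# Usage: a unit circle has length 2*pi, so ||q||^2 should be close to 2*pi.
t = np.linspace(0.0, 1.0, 2001)
beta = np.stack([np.cos(2 * np.pi * t), np.sin(2 * np.pi * t)], axis=1)
q = srvf(beta, t)
print(l2_norm(q, t) ** 2)                                    # close to 2*pi
print(np.abs(curve_from_srvf(q, t, beta[0]) - beta).max())   # small reconstruction error
```

The translation lost by the SRVF is restored through the `beta0` argument, which plays the role of $\beta(0)$.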

One way to study the shape and size (scale) space of open curves is to consider $\mathbb{L}^2$ as a pre-shape space with the usual inner product. To take care of the rotation and reparameterization of the curve $\beta$, we recall that a rotation is an element of $SO(n)$, the special orthogonal group of $n\times n$ matrices, and a reparameterization is an element of $\Gamma = \{\gamma:[0,1]\to[0,1] \mid \gamma(0)=0,\ \gamma(1)=1,\ \gamma \text{ is a diffeomorphism}\}$.

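The action of a reparameterization $\gamma\in\Gamma$ transforms the curve $\beta$ into the curve $\beta\circ\gamma$. Hence, by the definition of the SRVF of a curve, we define the action of $\Gamma$ on $\mathbb{L}^2$ by

$$(q,\gamma) = (q\circ\gamma)\sqrt{\dot\gamma}.$$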

Likewise, the action of $SO(n)$ on $\mathbb{L}^2$ is just

$$(O,q) = Oq.$$

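We shall denote the combined action by $(O,\gamma)\cdot q = O\,(q\circ\gamma)\sqrt{\dot\gamma}$. The orbit of a function $q\in\mathbb{L}^2$ is

$$[q] = \{O\,(q\circ\gamma)\sqrt{\dot\gamma} \mid O\in SO(n),\ \gamma\in\Gamma\}.$$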
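Because $\int_0^1 |q(\gamma(t))|^2\,\dot\gamma(t)\,dt = \int_0^1 |q(s)|^2\,ds$ and rotations preserve lengths, the combined action $(O,\gamma)\cdot q$ preserves the $\mathbb{L}^2$ norm. This is why it maps $\mathbb{S}_\infty$ to itself. The following is a small numerical check. It assumes that $q$ is available as a vectorized Python function. The warping $\gamma(t) = (e^{at}-1)/(e^{a}-1)$ and the test function are hypothetical illustrative choices, and any element of $\Gamma$ would do.

```python
import numpy as np


def trapz(y, t):
    """Trapezoidal approximation of int y(t) dt on the grid t."""
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(t)))


def act(q_fun, O, gamma, dgamma, t):
    """Combined action (O, gamma).q = O (q o gamma) sqrt(gamma'), sampled on the grid t."""
    q_warped = q_fun(gamma(t))                        # (N, n) samples of q(gamma(t))
    return (q_warped @ O.T) * np.sqrt(dgamma(t))[:, None]


def sq_norm(q, t):
    """Squared L2 norm of a sampled function of shape (N, n)."""
    return trapz(np.sum(q ** 2, axis=1), t)


A_WARP = 2.0  # hypothetical warping parameter


def gamma(t):
    """Illustrative diffeomorphism of [0, 1] with gamma(0) = 0 and gamma(1) = 1."""
    return np.expm1(A_WARP * t) / np.expm1(A_WARP)


def dgamma(t):
    return A_WARP * np.exp(A_WARP * t) / np.expm1(A_WARP)


def q_fun(s):
    """Hypothetical SRVF in R^2, evaluated at the points s."""
    return np.stack([np.cos(3 * s), s ** 2], axis=1)


phi = 0.7
O = np.array([[np.cos(phi), -np.sin(phi)], [np.sin(phi), np.cos(phi)]])
t = np.linspace(0.0, 1.0, 4001)
print(sq_norm(q_fun(t), t), sq_norm(act(q_fun, O, gamma, dgamma, t), t))  # nearly equal
```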

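If we consider the metric in $\mathbb{L}^2$ given by the usual inner product

$$\langle q_1,q_2\rangle = \int_0^1 \langle q_1(t),q_2(t)\rangle\,dt, \qquad (5)$$

the feature space of interest is

$$\mathcal{S}_2 = \mathbb{L}^2/(SO(n)\times\Gamma),$$

and the distance in $\mathcal{S}_2$ is

$$d_{\mathcal{S}_2}([q_1],[q_2]) = \inf_{O\in SO(n),\,\gamma\in\Gamma}\big\|q_1 - O\,(q_2\circ\gamma)\sqrt{\dot\gamma}\big\|.$$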

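Then, the geodesic between $q_1$ and the optimally rotated and reparameterized version of $q_2$, which is denoted as $q_2^* = O^*(q_2\circ\gamma^*)\sqrt{\dot\gamma^*}$, where $(O^*,\gamma^*)$ attains the infimum above, is

$$\alpha(\tau) = (1-\tau)\,q_1 + \tau\,q_2^*,\qquad \tau\in[0,1].$$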
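For sampled SRVFs, the distance in $\mathcal{S}_2$ and the straight-line geodesic can be sketched as follows. This is only a partial implementation under a stated simplification. The infimum is taken over rotations only, using the closed-form Procrustes solution (SVD of $\int_0^1 q_1(t)\,q_2(t)^\top dt$). The optimization over $\Gamma$, usually done by dynamic programming, is omitted. All function names are hypothetical.

```python
import numpy as np


def trapz_weights(t):
    """Weights w such that sum_k w[k] f(t[k]) is the trapezoidal rule on the grid t."""
    return 0.5 * (np.diff(t, prepend=t[0]) + np.diff(t, append=t[-1]))


def inner(q1, q2, t):
    """L2 inner product <q1, q2> = int_0^1 <q1(t), q2(t)> dt."""
    return float(np.sum(trapz_weights(t) * np.sum(q1 * q2, axis=1)))


def optimal_rotation(q1, q2, t):
    """O in SO(n) maximizing <q1, O q2> (Procrustes); reparameterization is NOT optimized."""
    A = (q1 * trapz_weights(t)[:, None]).T @ q2       # A = int q1(t) q2(t)^T dt
    U, _, Vt = np.linalg.svd(A)
    S = np.eye(A.shape[0])
    S[-1, -1] = np.sign(np.linalg.det(U @ Vt))        # enforce det(O) = +1
    return U @ S @ Vt


def dist_S2_rot(q1, q2, t):
    """Rotation-only approximation of d_S2([q1],[q2]); also returns the aligned q2*."""
    O = optimal_rotation(q1, q2, t)
    q2_star = q2 @ O.T                                 # rows are O q2(t)
    diff = q1 - q2_star
    return np.sqrt(max(inner(diff, diff, t), 0.0)), q2_star


def geodesic_S2(q1, q2_star, tau):
    """Straight line alpha(tau) = (1 - tau) q1 + tau q2* in L2."""
    return (1.0 - tau) * q1 + tau * q2_star


t = np.linspace(0.0, 1.0, 501)
q1 = np.stack([np.cos(3 * np.pi * t), np.sin(2 * np.pi * t) + t], axis=1)
phi = 1.1
O0 = np.array([[np.cos(phi), -np.sin(phi)], [np.sin(phi), np.cos(phi)]])
q2 = q1 @ O0.T                      # rows are O0 q1(t): same orbit as q1
d, q2_star = dist_S2_rot(q1, q2, t)
print(d)                            # close to 0
midpoint = geodesic_S2(q1, q2_star, 0.5)
```

In the usage example, $q_2$ is a rotated copy of $q_1$, so the two lie in the same orbit and the distance vanishes.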

On the other hand, the shape space (without considering the size (scale) of the curves) is

$$\mathcal{S}_1 = \mathbb{S}_\infty/(SO(n)\times\Gamma).$$

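The distance in $\mathcal{S}_1$ is given by

$$d_{\mathcal{S}_1}([q_1],[q_2]) = \inf_{O\in SO(n),\,\gamma\in\Gamma}\cos^{-1}\big(\langle q_1, O\,(q_2\circ\gamma)\sqrt{\dot\gamma}\rangle\big),$$

and the geodesic between $[q_1]$ and $[q_2]$ is

$$\alpha(\tau) = \frac{1}{\sin\theta}\Big(\sin\big((1-\tau)\theta\big)\,q_1 + \sin(\tau\theta)\,q_2^*\Big),\qquad \tau\in[0,1],$$

where $\theta = d_{\mathcal{S}_1}([q_1],[q_2])$.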
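On $\mathbb{S}_\infty$, the distance becomes an arc length and the geodesic becomes a great-circle arc. The following minimal sketch assumes that both SRVFs have already been scaled to unit norm. It also assumes that $q_2$ has already been optimally rotated and reparameterized, for instance with a Procrustes step plus dynamic programming for $\Gamma$. Only the formulas for $\theta$ and $\alpha(\tau)$ are illustrated, and the function names are hypothetical.

```python
import numpy as np


def inner(q1, q2, t):
    """Trapezoidal L2 inner product of two sampled SRVFs of shape (N, n)."""
    w = 0.5 * (np.diff(t, prepend=t[0]) + np.diff(t, append=t[-1]))
    return float(np.sum(w * np.sum(q1 * q2, axis=1)))


def to_sphere(q, t):
    """Scale q to unit L2 norm, i.e., project it onto the hypersphere S_inf."""
    return q / np.sqrt(inner(q, q, t))


def arc_distance(q1, q2_star, t):
    """theta = arccos <q1, q2*> for unit-norm q1 and aligned unit-norm q2*."""
    return float(np.arccos(np.clip(inner(q1, q2_star, t), -1.0, 1.0)))


def sphere_geodesic(q1, q2_star, t, tau):
    """Great-circle arc alpha(tau) from q1 (tau = 0) to q2* (tau = 1)."""
    theta = arc_distance(q1, q2_star, t)
    if theta < 1e-12:                      # identical points: constant path
        return q1.copy()
    return (np.sin((1 - tau) * theta) * q1 + np.sin(tau * theta) * q2_star) / np.sin(theta)


t = np.linspace(0.0, 1.0, 501)
q1 = to_sphere(np.stack([np.cos(3 * np.pi * t), np.sin(2 * np.pi * t) + t], axis=1), t)
q2 = to_sphere(np.stack([np.ones_like(t), t ** 2], axis=1), t)   # assumed already aligned
theta = arc_distance(q1, q2, t)
mid = sphere_geodesic(q1, q2, t, 0.5)
print(theta, inner(mid, mid, t), arc_distance(q1, mid, t))  # mid has unit norm, lies at theta/2
```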

3. A New Metric in the Shape and Size Space of Curves in $\mathbb{R}^n$

When $\mathcal{S}_2$ is considered as the shape and size space, it is difficult to distinguish whether the distance between two shapes $[q_1]$ and $[q_2]$ is due to the difference in shape or to the difference in size between the corresponding curves $\beta_1$ and $\beta_2$. We are therefore going to consider another shape and size space for curves, which will be isometric to $\mathcal{S}_1\times\mathbb{R}$ with an appropriate product metric.

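Instead of considering in $\mathbb{L}^2$ the usual $\mathbb{L}^2$-metric given in Equation (5), if $q\in\mathbb{L}^2\setminus\{0\}$, for any two vectors $u,v$ in $T_q(\mathbb{L}^2\setminus\{0\})\cong\mathbb{L}^2$, we will consider the following metric to endow $\mathbb{L}^2\setminus\{0\}$ with a Riemannian structure,

$$\langle\langle u,v\rangle\rangle_q = \frac{\langle u,v\rangle}{R(q)^2},$$

where

$$R(q) = \|q\| = \left(\int_0^1 |q(t)|^2\,dt\right)^{1/2}.$$

The case $R(q) = 0$ is excluded, which means that curves of length 0 are not considered in our space.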

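From this metric, we will endow $\mathbb{L}^2\setminus\{0\}$ (Theorem 1) with a Riemannian structure in such a way that it is isometric to $\mathbb{S}_\infty\times\mathbb{R}$. This isometry will be exported (Theorem 2) to an isometry between $\mathcal{S}_2$ and $\mathcal{S}_1\times\mathbb{R}$.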

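Therefore, we obtain a new metric with the property that $\mathcal{S}_2$ can be decomposed into a product of two components, $\mathcal{S}_1\times\mathbb{R}$, where the second factor is related to the length (size) of the curve. This new metric is associated with a distance function (see Corollary 3) given by

$$d([q_1],[q_2]) = \sqrt{d_{\mathcal{S}_1}\big([\pi(q_1)],[\pi(q_2)]\big)^2 + \big(\ln R(q_1)-\ln R(q_2)\big)^2},$$

where $\pi(q) = q/R(q)$. This distance is invariant under rotations and rescaling, in the sense that $d([Oq_1],[Oq_2]) = d([q_1],[q_2])$ and $d([\lambda q_1],[\lambda q_2]) = d([q_1],[q_2])$ for any $O$ in $SO(n)$ and any $\lambda>0$.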

3.1. An Isometry between $\mathbb{L}^2\setminus\{0\}$ and $\mathbb{S}_\infty\times\mathbb{R}$

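We begin this section by defining a function $F$ which will provide an isometry between $\mathbb{L}^2\setminus\{0\}$ and $\mathbb{S}_\infty\times\mathbb{R}$. We consider the smooth map

$$F:\mathbb{L}^2\setminus\{0\}\to\mathbb{S}_\infty\times\mathbb{R},\qquad F(q) = \left(\frac{q}{R(q)},\ \ln R(q)\right).$$

Observe that $F$ is well defined for any $q\in\mathbb{L}^2\setminus\{0\}$, where $R(q)>0$. The function $F$ has an immediate smooth inverse given by

$$F^{-1}(p,s) = e^{s}\,p,\qquad (p,s)\in\mathbb{S}_\infty\times\mathbb{R}.$$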

The Functions R, π and F and Their Properties

Now we state some properties of the function $R$; of the map $\pi:\mathbb{L}^2\setminus\{0\}\to\mathbb{S}_\infty$, $\pi(q) = q/R(q)$, that is, the natural projection given by normalizing an SRVF by its norm; and of the function $F$:

Proposition 1. Properties of the functions R, π and F:

- 1.

Given a curve $\beta$ and its SRVF $q$, $R(q)$ is the square root of the length of the curve $\beta$.

- 2.

- 3.

- 4.

Namely, the rotation group commutes with the projection π.

- 5.

- 6.

Given , and then

- 7.

F is smooth and admits a smooth inverse, with a never-vanishing differential map.

From the properties of π, R and F, we can conclude the following diffeomorphisms.

Corollary 1. From the function F we have that

- 1.

- 2.

Since , then

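As already mentioned at the beginning of the section, if $q\in\mathbb{L}^2\setminus\{0\}$, the tangent space at $q$ can be identified with $\mathbb{L}^2$ itself,

$$T_q(\mathbb{L}^2\setminus\{0\})\cong\mathbb{L}^2,$$

and for any two vectors $u,v$ in $T_q(\mathbb{L}^2\setminus\{0\})$ we will use the following metric to endow $\mathbb{L}^2\setminus\{0\}$ with a Riemannian structure,

$$\langle\langle u,v\rangle\rangle_q = \frac{\langle u,v\rangle}{R(q)^2}.$$

Therefore, using $F^{-1}$, we can pull back the metric $\langle\langle\cdot,\cdot\rangle\rangle$ to $\mathbb{S}_\infty\times\mathbb{R}$ by the differential map $dF^{-1}$, in order to endow $\mathbb{S}_\infty\times\mathbb{R}$ with a Riemannian structure in such a way that $(\mathbb{L}^2\setminus\{0\},\langle\langle\cdot,\cdot\rangle\rangle)$ is isometric to it.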

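Theorem 1. There is an isometry

$$F:\big(\mathbb{L}^2\setminus\{0\},\langle\langle\cdot,\cdot\rangle\rangle\big)\to\mathbb{S}_\infty\times\mathbb{R}$$

given by $F(q) = \big(\pi(q),\ln R(q)\big)$, where the usual product metric is considered in $\mathbb{S}_\infty\times\mathbb{R}$.

Proof. We have shown in Corollary 1 that $F$ is a diffeomorphism; therefore, we only have to prove that the pullback $(F^{-1})^*\langle\langle\cdot,\cdot\rangle\rangle$ of the metric $\langle\langle\cdot,\cdot\rangle\rangle$ is the usual product metric in $\mathbb{S}_\infty\times\mathbb{R}$.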

Given two vectors in

the pullback

is given by

But

because

. Therefore, for any two vectors

Now, first of all, we need to prove that in the tangent space to

at

,

is orthogonal to

. In order to do that, consider two vectors

and

and two curves

, such that

Then,

with

. Likewise,

where

. Hence,

because

for any

.

Similarly if

,

and if

□
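A finite-dimensional numerical check of the identity behind Theorem 1 can make the construction concrete. The sketch below assumes the metric $\langle\langle u,v\rangle\rangle_q = \langle u,v\rangle/R(q)^2$ and the map $F(q) = (q/R(q), \ln R(q))$. It replaces $\mathbb{L}^2$ by $\mathbb{R}^m$ with the Euclidean inner product, as a stand-in for a discretized SRVF. It then compares $\langle\langle u,v\rangle\rangle_q$ with the product metric evaluated on $dF_q(u)$ and $dF_q(v)$, where the differential is approximated by central finite differences. The helper names are hypothetical.

```python
import numpy as np


def F(q):
    """F(q) = (q / R(q), ln R(q)) with R(q) = ||q||."""
    r = np.linalg.norm(q)
    return q / r, np.log(r)


def F_inv(p, s):
    """Inverse map F^{-1}(p, s) = e^s p."""
    return np.exp(s) * p


def new_metric(q, u, v):
    """<<u, v>>_q = <u, v> / R(q)^2."""
    return float(u @ v) / float(q @ q)


def product_metric_of_dF(q, u, v, h=1e-6):
    """<dpi(u), dpi(v)> + d(ln R)(u) * d(ln R)(v), with dF from central differences."""
    def dF(w):
        (p_plus, s_plus), (p_minus, s_minus) = F(q + h * w), F(q - h * w)
        return (p_plus - p_minus) / (2 * h), (s_plus - s_minus) / (2 * h)

    dp_u, ds_u = dF(u)
    dp_v, ds_v = dF(v)
    # the sphere carries the metric induced by the ambient inner product
    return float(dp_u @ dp_v) + ds_u * ds_v


rng = np.random.default_rng(0)
q, u, v = rng.normal(size=(3, 50))
print(new_metric(q, u, v), product_metric_of_dF(q, u, v))  # agree up to finite-difference error
```

The agreement reflects the decomposition $\langle d\pi_q(u), d\pi_q(v)\rangle = \langle u,v\rangle/R(q)^2 - \langle u,q\rangle\langle v,q\rangle/R(q)^4$ and $d(\ln R)_q(u) = \langle u,q\rangle/R(q)^2$, whose cross terms cancel.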

Since we are using the usual product metric in $\mathbb{S}_\infty\times\mathbb{R}$, we conclude the following result:

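Corollary 2. Let $d$ denote the distance function in $\mathbb{L}^2\setminus\{0\}$; then

$$d(q_1,q_2) = \sqrt{d_{\mathbb{S}_\infty}\big(\pi(q_1),\pi(q_2)\big)^2 + \big(\ln R(q_1)-\ln R(q_2)\big)^2},$$

where $d_{\mathbb{S}_\infty}(p_1,p_2) = \cos^{-1}\langle p_1,p_2\rangle$ is the usual distance in $\mathbb{S}_\infty$. From this explicit expression of the distance, it is easy to see that the metric is invariant under the action of reparameterizations and rotations.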

Proposition 2. The group $SO(n)\times\Gamma$ acts isometrically on $\big(\mathbb{L}^2\setminus\{0\},\langle\langle\cdot,\cdot\rangle\rangle\big)$.

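Proof. We need to prove that

$$d\big((O,\gamma)\cdot q_1,(O,\gamma)\cdot q_2\big) = d(q_1,q_2),\qquad O\in SO(n),\ \gamma\in\Gamma.$$

By Proposition 1, we know that $R\big((O,\gamma)\cdot q\big) = R(q)$ (and $\pi\big((O,\gamma)\cdot q\big) = (O,\gamma)\cdot\pi(q)$). Hence, the proposition follows because $SO(n)\times\Gamma$ acts by isometries on $\mathbb{S}_\infty$:

$$d_{\mathbb{S}_\infty}\big((O,\gamma)\cdot p_1,(O,\gamma)\cdot p_2\big) = d_{\mathbb{S}_\infty}(p_1,p_2).$$

□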

3.2. The Isometry between $\mathcal{S}_2$ and $\mathcal{S}_1\times\mathbb{R}$

Using the isometry given by Theorem 1, an isometry between $\mathcal{S}_2$ and $\mathcal{S}_1\times\mathbb{R}$ can be constructed, as stated in the following theorem:

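Theorem 2. The isometry $F$ can be exported to an isometry $\tilde F:\mathcal{S}_2\to\mathcal{S}_1\times\mathbb{R}$ by using the commutative diagram formed by $F$ and the quotient maps $\mathbb{L}^2\setminus\{0\}\to\mathcal{S}_2$ and $\mathbb{S}_\infty\times\mathbb{R}\to\mathcal{S}_1\times\mathbb{R}$. Namely,

$$\tilde F([q]) = \big([\pi(q)],\ \ln R(q)\big)$$

for any $[q]\in\mathcal{S}_2$.

Proof. From Proposition 2, we know that $SO(n)\times\Gamma$ acts by isometries on $\mathbb{L}^2\setminus\{0\}$. Therefore, since the action of the group on $\mathbb{L}^2\setminus\{0\}$ and on $\mathbb{S}_\infty\times\mathbb{R}$ is by isometries, and bearing in mind the diffeomorphisms in Corollary 1, we obtain the result. □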

The isometry of the above theorem can be used to obtain the expression of the new distance function.

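Corollary 3. Let $d$ denote the distance function in $\mathcal{S}_2$ when the isometry of Theorem 2 is considered; then

$$d([q_1],[q_2]) = \sqrt{d_{\mathcal{S}_1}\big([\pi(q_1)],[\pi(q_2)]\big)^2 + \big(\ln R(q_1)-\ln R(q_2)\big)^2}.$$

In the following proposition, we prove that $d$ is a well-defined distance function.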

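Proposition 3. Let $d$ denote the distance function in $\mathcal{S}_2$ when the isometry of Theorem 2 is considered; then

- 1.

$d([q_1],[q_2])\geq 0$ for all $[q_1],[q_2]\in\mathcal{S}_2$.

- 2.

$d([q_1],[q_2]) = 0$ if and only if $[\pi(q_1)] = [\pi(q_2)]$ and $R(q_1) = R(q_2)$.

- 3.

For any $[q_1],[q_2]\in\mathcal{S}_2$, $d([q_1],[q_2]) = d([q_2],[q_1])$.

- 4.

$d([q_1],[q_3])\leq d([q_1],[q_2]) + d([q_2],[q_3])$ for all $[q_1],[q_2],[q_3]\in\mathcal{S}_2$.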

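Proof. Most of the statements of the proposition follow directly from the definition and the properties of $d_{\mathcal{S}_1}$ and $R$. We shall prove the triangle inequality for the sake of completeness. Let us denote by $\mathbf{v}_{ij}\in\mathbb{R}^2$ the vectors given by

$$\mathbf{v}_{ij} = \Big(d_{\mathcal{S}_1}\big([\pi(q_i)],[\pi(q_j)]\big),\ \big|\ln R(q_i)-\ln R(q_j)\big|\Big),$$

so that $d([q_i],[q_j]) = \|\mathbf{v}_{ij}\|$. Then, by applying the triangle inequality for $d_{\mathcal{S}_1}$ and for $|\cdot|$ componentwise (all components are non-negative), followed by the triangle inequality in $\mathbb{R}^2$,

$$d([q_1],[q_3]) = \|\mathbf{v}_{13}\| \leq \|\mathbf{v}_{12}+\mathbf{v}_{23}\| \leq \|\mathbf{v}_{12}\| + \|\mathbf{v}_{23}\| = d([q_1],[q_2]) + d([q_2],[q_3]).$$

□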
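The distance of Corollary 3 adds a shape term and a size term in quadrature. Both the distance and the share of $d^2$ due to shape can therefore be computed from sampled SRVFs. The sketch below assumes the size term $|\ln R(q_1)-\ln R(q_2)|$. As a simplification, it aligns $\pi(q_2)$ to $\pi(q_1)$ over rotations only (Procrustes) and omits the optimization over $\Gamma$. The function names are hypothetical. The usage example checks the scale invariance stated for $d$: multiplying both curves by the same factor multiplies their SRVFs by its square root and leaves $d$ unchanged.

```python
import numpy as np


def trapz_weights(t):
    """Trapezoidal-rule weights on the grid t."""
    return 0.5 * (np.diff(t, prepend=t[0]) + np.diff(t, append=t[-1]))


def inner(q1, q2, t):
    """L2 inner product of two sampled SRVFs of shape (N, n)."""
    return float(np.sum(trapz_weights(t) * np.sum(q1 * q2, axis=1)))


def best_rotation(p1, p2, t):
    """Procrustes rotation O maximizing <p1, O p2> (reparameterization not optimized)."""
    U, _, Vt = np.linalg.svd((p1 * trapz_weights(t)[:, None]).T @ p2)
    S = np.eye(p1.shape[1])
    S[-1, -1] = np.sign(np.linalg.det(U @ Vt))      # enforce det(O) = +1
    return U @ S @ Vt


def new_distance(q1, q2, t):
    """Return d, the shape term and the size term, with d^2 = d_shape^2 + d_size^2."""
    R1, R2 = np.sqrt(inner(q1, q1, t)), np.sqrt(inner(q2, q2, t))
    p1, p2 = q1 / R1, q2 / R2                        # pi(q1), pi(q2) on S_inf
    p2 = p2 @ best_rotation(p1, p2, t).T
    d_shape = float(np.arccos(np.clip(inner(p1, p2, t), -1.0, 1.0)))
    d_size = abs(np.log(R1) - np.log(R2))
    return float(np.hypot(d_shape, d_size)), d_shape, d_size


def shape_share(d_shape, d_size):
    """Percentage of d^2 due to shape (undefined when both terms are 0)."""
    return 100.0 * d_shape ** 2 / (d_shape ** 2 + d_size ** 2)


t = np.linspace(0.0, 1.0, 501)
q1 = np.stack([np.cos(3 * np.pi * t), np.sin(2 * np.pi * t) + t], axis=1)
q2 = np.stack([np.ones_like(t), t ** 2], axis=1)
d, d_shape, d_size = new_distance(q1, q2, t)
# Multiplying both curves by 50 multiplies their SRVFs by sqrt(50): d is unchanged.
d_scaled = new_distance(np.sqrt(50) * q1, np.sqrt(50) * q2, t)[0]
print(d, d_scaled, shape_share(d_shape, d_size))
```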

5. Geodesics

Moreover, we can use the isometry given by Theorem 2 to provide an explicit expression for the geodesics in $\mathcal{S}_2$.

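Corollary 4. Any geodesic in $\mathbb{L}^2\setminus\{0\}$ is obtained as

$$F^{-1}\big(\alpha(\tau),\ a\tau+b\big) = e^{a\tau+b}\,\alpha(\tau),$$

where $\alpha$ is a geodesic in $\mathbb{S}_\infty$ and $a,b\in\mathbb{R}$. Therefore, any geodesic in $\mathcal{S}_2$ can be written as

$$\big[e^{a\tau+b}\,\alpha(\tau)\big],$$

with $a,b\in\mathbb{R}$ and $\alpha$ a geodesic in $\mathcal{S}_1$.

For any $p$ and $q$ in $\mathcal{S}_2$, the above corollary allows us to obtain the geodesic segment (with respect to $d$) joining $p$ and $q$. Namely, we want a geodesic curve (in the new metric) $c:[0,1]\to\mathcal{S}_2$ such that $c(0) = p$ and $c(1) = q$. By using the corollary, we only have to consider the geodesic segment in $\mathbb{R}$,

$$s(\tau) = (1-\tau)\ln R(p) + \tau\ln R(q),$$

with $s(0) = \ln R(p)$ and $s(1) = \ln R(q)$, and the geodesic segment in $\mathcal{S}_1$ joining $[\pi(p)]$ and $[\pi(q)]$, i.e.,

$$\alpha(\tau) = \frac{1}{\sin\theta}\Big(\sin\big((1-\tau)\theta\big)\,\pi(p) + \sin(\tau\theta)\,\pi(q)^*\Big),\qquad \theta = d_{\mathcal{S}_1}\big([\pi(p)],[\pi(q)]\big),$$

where $\pi(q)^*$ denotes the element of $[\pi(q)]$ optimally aligned with $\pi(p)$. Therefore, the geodesic segment joining $p$ and $q$ is

$$c(\tau) = e^{s(\tau)}\,\alpha(\tau) = R(p)^{1-\tau}R(q)^{\tau}\,\alpha(\tau),\qquad \tau\in[0,1].$$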

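This geodesic segment in $\mathcal{S}_2$ can be understood as a family of deformations of curves in the following sense. If we have two curves $\beta_1$ and $\beta_2$, we obtain two points $[q_1]$ and $[q_2]$ in $\mathcal{S}_2$, with $R(q_1)^2 = L(\beta_1)$ and $R(q_2)^2 = L(\beta_2)$, where $L$ denotes the length of a curve. The geodesic segment $\alpha$ in $\mathcal{S}_1$ joining $[\pi(q_1)]$ and $[\pi(q_2)]$ is a family of length-one curves which can be labeled with $\tau\in[0,1]$,

$$\tilde\beta_\tau(t) = \int_0^t \alpha(\tau)(s)\,\big|\alpha(\tau)(s)\big|\,ds.$$

Finally, we obtain the family of curves

$$\beta_\tau = L(\beta_1)^{1-\tau}L(\beta_2)^{\tau}\,\tilde\beta_\tau,\qquad \tau\in[0,1].$$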
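Once $\pi(q_2)$ has been aligned with $\pi(q_1)$, the geodesic of the new metric only needs the great-circle arc in $\mathcal{S}_1$ and a geometric interpolation of the sizes. The following minimal sketch assumes an already aligned pair of sampled SRVFs (the alignment step is not shown), a trapezoidal $\mathbb{L}^2$ inner product, and the formula $c(\tau) = R(q_1)^{1-\tau}R(q_2)^{\tau}\alpha(\tau)$. The function name is hypothetical.

```python
import numpy as np


def inner(q1, q2, t):
    """Trapezoidal L2 inner product of two sampled SRVFs of shape (N, n)."""
    w = 0.5 * (np.diff(t, prepend=t[0]) + np.diff(t, append=t[-1]))
    return float(np.sum(w * np.sum(q1 * q2, axis=1)))


def new_metric_geodesic(q1, q2_aligned, t, tau):
    """c(tau) = R(q1)^(1-tau) R(q2)^tau alpha(tau), alpha the arc between pi(q1) and pi(q2)."""
    R1, R2 = np.sqrt(inner(q1, q1, t)), np.sqrt(inner(q2_aligned, q2_aligned, t))
    p1, p2 = q1 / R1, q2_aligned / R2
    theta = float(np.arccos(np.clip(inner(p1, p2, t), -1.0, 1.0)))
    if theta < 1e-12:                              # same shape: only the size changes
        alpha = p1
    else:
        alpha = (np.sin((1 - tau) * theta) * p1 + np.sin(tau * theta) * p2) / np.sin(theta)
    return R1 ** (1 - tau) * R2 ** tau * alpha


t = np.linspace(0.0, 1.0, 501)
q1 = np.stack([np.cos(3 * np.pi * t), np.sin(2 * np.pi * t) + t], axis=1)
q2 = 3.0 * np.stack([np.ones_like(t), t ** 2], axis=1)   # assumed already aligned with q1
c_half = new_metric_geodesic(q1, q2, t, 0.5)
# The curve for tau = 0.5 has length R(c)^2 = sqrt(L(beta_1) L(beta_2)).
print(inner(c_half, c_half, t), np.sqrt(inner(q1, q1, t) * inner(q2, q2, t)))
```

The length of the intermediate curves therefore follows a geometric interpolation between $L(\beta_1)$ and $L(\beta_2)$, consistent with the size term of $d$ being a difference of logarithms.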

6. Application to a Simulated Data Set

In order to check the performance of the new metric (Equation (13)), we have simulated several curves with different shapes and sizes. In particular, we have simulated ten 3D cylindrical spirals and ten circumferences. All the spirals have different shapes and the same length, and all the circumferences have the same shape but different lengths.

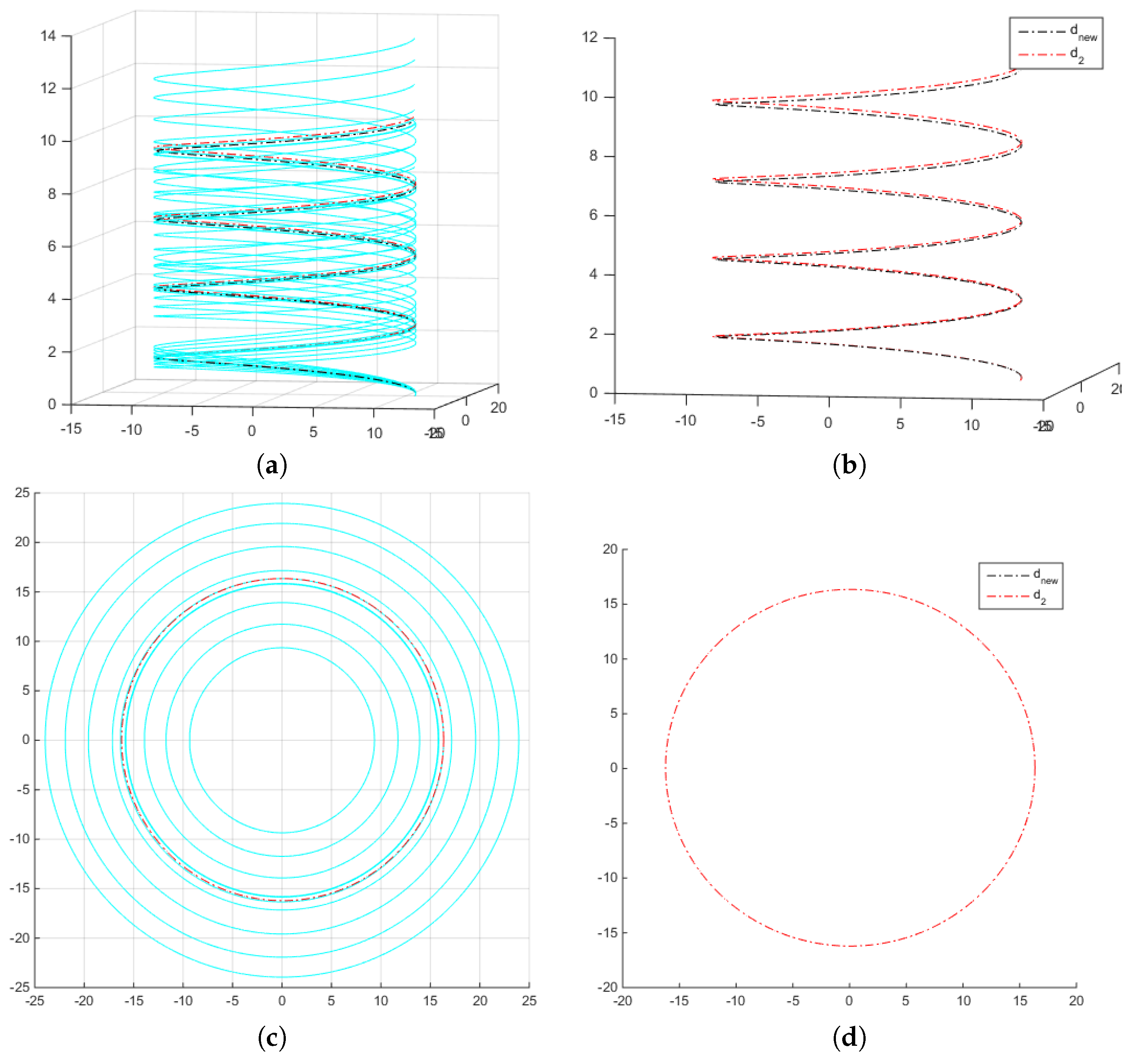

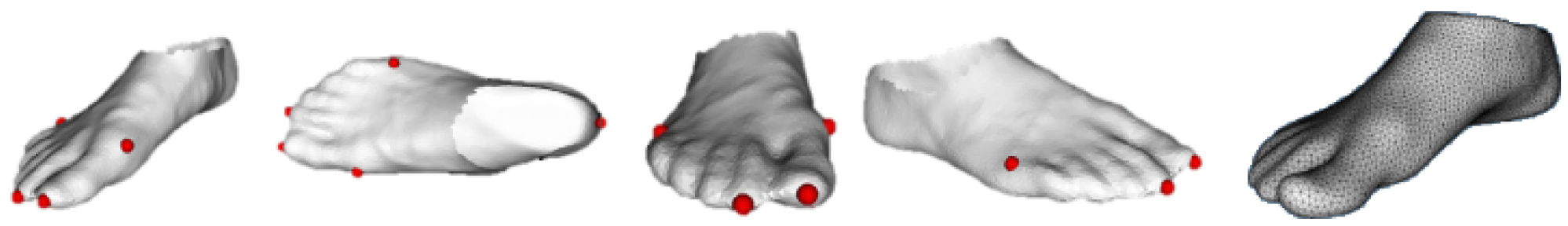

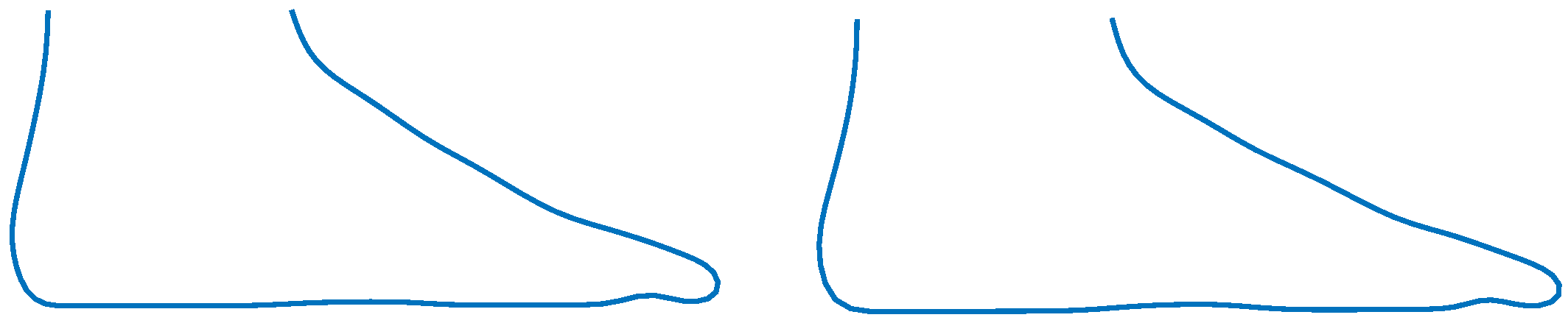

Figure 1a,b shows the simulations obtained.

The Karcher means of the ten spirals and of the ten circumferences are computed with the new metric (the distance $d$, Equation (18)) and with the distance proposed by [5] in the shape and size space $\mathcal{S}_2$. These means are shown in Figure 2, where the original curves are plotted in light blue, the Karcher mean obtained with $d$ is plotted in black, and the Karcher mean obtained with the distance $d_{\mathcal{S}_2}$ is plotted in red. As can be seen in Figure 2a,b, there is a very slight difference between the two means of the ten spirals. Figure 2c,d show that the means coincide in the case of the circumferences.

An example comparing the geodesics obtained with $d$ and $d_{\mathcal{S}_2}$ can be seen in Figure 3; there are no great differences between them.

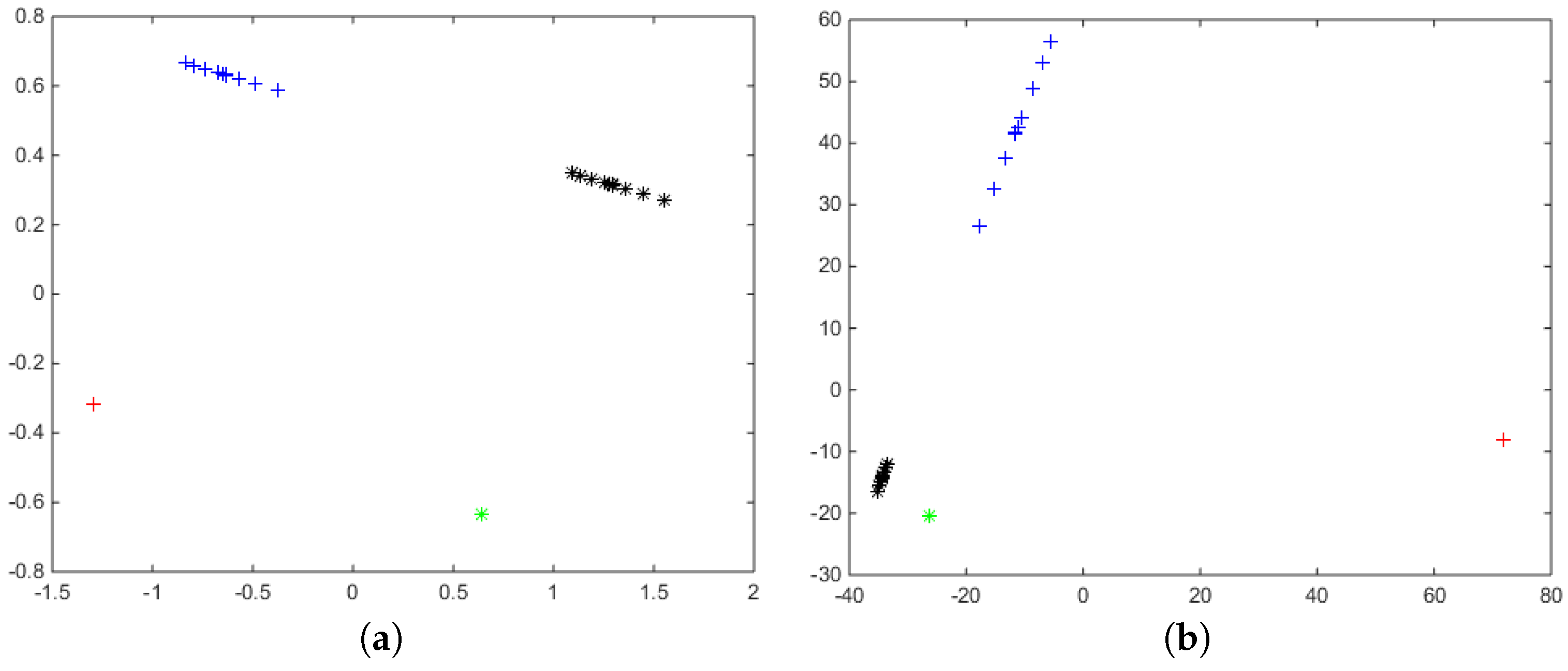

Finally, the distance matrices between the twenty curves are computed using both metrics and, in order to compare the performance of $d$ and $d_{\mathcal{S}_2}$, a multidimensional scaling (MDS) analysis [13] has been carried out. The MDS algorithm is a descriptive data reduction procedure that displays the information contained in an $N\times N$ distance matrix $D$ between $N$ objects in a low-dimensional space, such that the between-object distances are preserved as well as possible. For each distance matrix $D$, the method looks for a set of orthogonal variables such that the Euclidean distances between the elements with respect to these variables are as close as possible to the distances given in the original matrix $D$. In Figure 4, MDS has been applied to the distance matrices computed with both metrics. In both graphics (Figure 4a,b), the black points represent the ten spirals shown in Figure 1a, and the green points represent the ten circumferences shown in Figure 1b.
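The text does not specify which MDS variant was used. As an illustration only, the sketch below implements classical (Torgerson) MDS, which double-centers the matrix of squared distances and keeps the eigenvectors of the $p$ largest eigenvalues. Applied to a distance matrix computed with $d$ or $d_{\mathcal{S}_2}$, it would return low-dimensional coordinates of the kind plotted in such figures. The usage example uses Euclidean toy points, for which classical MDS reproduces the input distances exactly.

```python
import numpy as np


def classical_mds(D, p=2):
    """Classical (Torgerson) MDS: N x p coordinates whose Euclidean distances approximate D."""
    D = np.asarray(D, dtype=float)
    N = D.shape[0]
    J = np.eye(N) - np.ones((N, N)) / N          # centering matrix
    B = -0.5 * J @ (D ** 2) @ J                   # double-centered Gram matrix
    eigvals, eigvecs = np.linalg.eigh(B)          # eigenvalues in ascending order
    top = np.argsort(eigvals)[::-1][:p]
    # negative eigenvalues (non-Euclidean D) are clipped to zero
    return eigvecs[:, top] * np.sqrt(np.clip(eigvals[top], 0.0, None))


def pairwise(X):
    """Matrix of Euclidean distances between the rows of X."""
    return np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)


rng = np.random.default_rng(0)
X = rng.normal(size=(20, 2))
Y = classical_mds(pairwise(X), p=2)
print(np.abs(pairwise(Y) - pairwise(X)).max())  # close to 0 for Euclidean input
```

For distances between curves, $B$ can have negative eigenvalues. Clipping them keeps the coordinates real, at the price of an approximate rather than exact representation.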

As can be seen, there are slight differences between the MDS representations of the two metrics. If we perform a k-means cluster analysis with k = 2 from the distance matrices obtained with $d$ and $d_{\mathcal{S}_2}$, the two groups are perfectly recovered in both cases. We also recover the two groups if we apply DBSCAN [14,15].

If we rescale the twenty figures by multiplying them by 50 and consider the twenty resulting curves together with the twenty original ones, we can compute the distance matrices between the 40 curves again with $d$ and $d_{\mathcal{S}_2}$. The MDS representation of these distance matrices can be found in Figure 5. In both graphics, the initial spirals are plotted in green and the circumferences in black, while their scaled versions are plotted in red and blue, respectively.

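Figure 5 shows one important difference between the performance of the two metrics. By definition, $d$ is invariant to changes of scale, i.e., the distance $d$ between two curves does not change when both are multiplied by the same factor (here, 50). This equality does not hold for $d_{\mathcal{S}_2}$, for which the distance between curves increases with the scaling factor.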

If we perform a k-means cluster analysis with k = 4 from the distance matrices, the four groups are recovered with $d$. With $d_{\mathcal{S}_2}$, however, the distance between the scaled circumferences increases with respect to the distance between the initial circumferences. As a result, the group of large circumferences is split into two clusters, while the initial (short) curves (spirals and circumferences) are merged into a single cluster (Figure 6). In contrast, the DBSCAN algorithm applied to both distance matrices recovers the four initial groups in both cases.

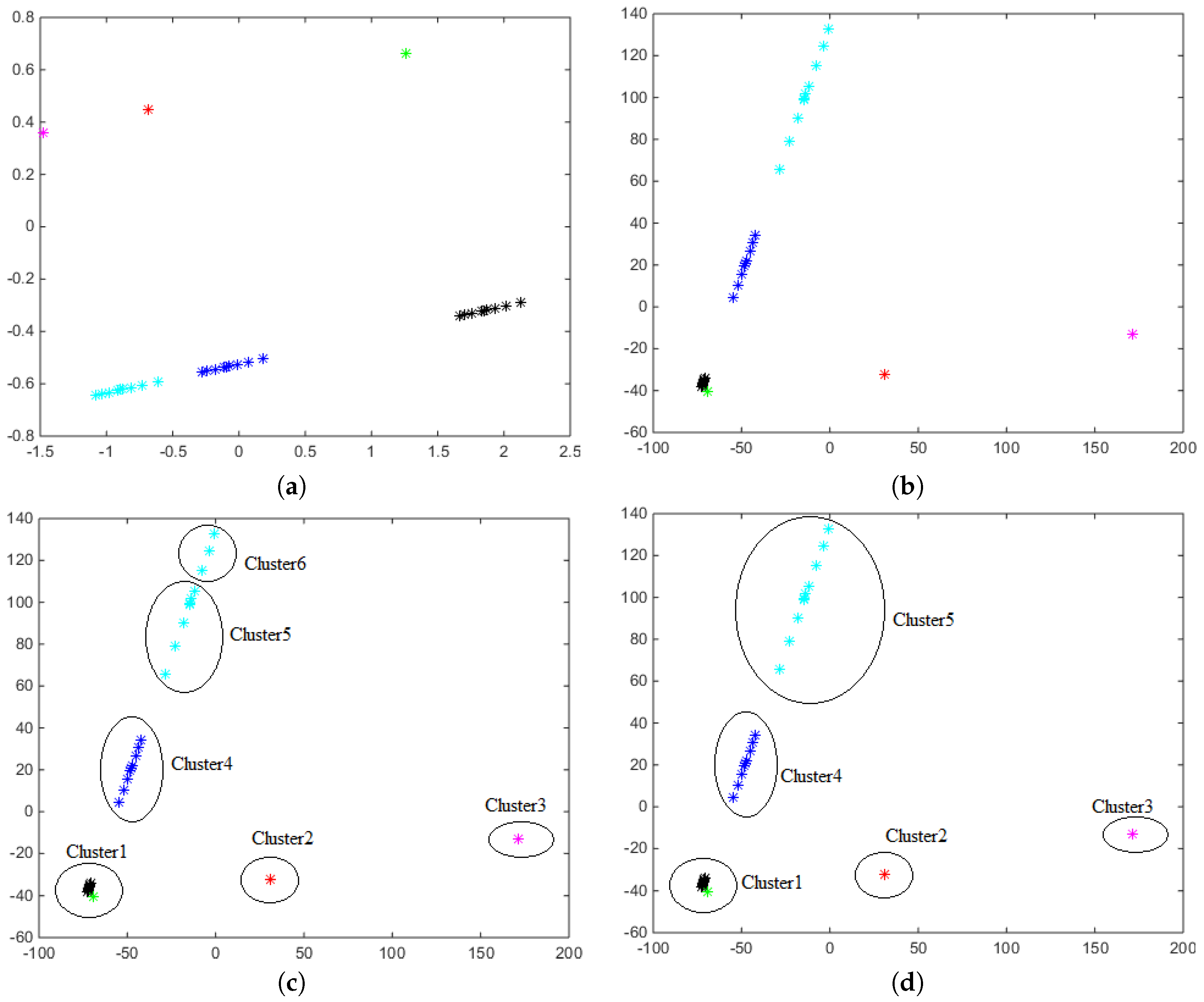

As a third step of the simulation study, let us consider a broader data set with the initial spirals, the circumferences, their two scaled versions and two new rescaled sets. The distance matrices between the 60 curves are computed with $d$ and $d_{\mathcal{S}_2}$, and the MDS representation of these distance matrices can be found in Figure 7.

A k-means cluster analysis with k = 6, as well as the DBSCAN algorithm, applied to the distance matrix obtained with $d$ recovers the 6 simulated groups. However, the DBSCAN algorithm applied to the distance matrix obtained with $d_{\mathcal{S}_2}$ provides 5 clusters on this data set: it joins the initial (short) curves (spirals and circumferences) in a single cluster and distinguishes the other groups (Figure 7d). The k-means algorithm with k = 5 provides the same result, but if the k-means algorithm is applied with k = 6 clusters, the set of the largest circumferences is split into two groups (Figure 7c). Once again, it can be clearly seen that the distance $d_{\mathcal{S}_2}$ between shapes increases with the scaling factor.

7. Detection of Outliers

Although there is a variety of nearest-neighbor techniques for outlier detection for different types of data in any metric space (see [16] for a detailed explanation), they have not been fully exploited in the shape and size space of curves. Some of the main references detect outliers with box-plots of the distances to the median, such as [12,17], and, more recently, with the method based on elastic depths proposed by [18].

We propose a technique for outlier detection based on the proposed distance. Nearest-neighbor techniques are very popular in the classic multivariate case due to their good results, conceptual simplicity and interpretability [19]. We apply this idea to the shape and size space of curves. The k-NN anomaly detection algorithm searches for the k nearest neighbors, i.e., the k closest curves, of every element in the database and calculates the average distance to these k neighbors. In the multivariate case, the Euclidean distance is used, but here we use the proposed distance to find the neighbors. This procedure returns outlier scores; as usual, the highest score denotes the highest degree of outlierness. One way to make a binary decision about whether or not to label a point as an outlier is to draw a box-plot of the outlier scores and to consider the points detected as outliers by the box-plot as anomalies.
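The k-NN scoring and box-plot rule described above can be sketched from any precomputed distance matrix, for instance one filled with the proposed distance between all pairs of curves. This is a minimal illustration under two assumptions: the upper-whisker multiplier of 1.5 and the restriction to high scores (since large scores mean high outlierness). The text does not state which box-plot settings were used. The Euclidean toy data in the usage example only stand in for curves, and the function names are hypothetical.

```python
import numpy as np


def knn_outlier_scores(D, k):
    """Mean distance from each element to its k nearest neighbors (itself excluded)."""
    D = np.asarray(D, dtype=float)
    N = D.shape[0]
    scores = np.empty(N)
    for i in range(N):
        others = np.delete(D[i], i)              # drop the zero self-distance
        scores[i] = np.sort(others)[:k].mean()
    return scores


def boxplot_outliers(scores, whisker=1.5):
    """Indices whose score exceeds the upper box-plot fence Q3 + whisker * IQR."""
    q1, q3 = np.percentile(scores, [25, 75])
    return np.flatnonzero(scores > q3 + whisker * (q3 - q1))


rng = np.random.default_rng(1)
X = np.vstack([rng.normal(size=(50, 2)), [[8.0, 8.0]]])   # 50 inliers + 1 planted outlier
D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
scores = knn_outlier_scores(D, k=5)
flagged = boxplot_outliers(scores)
print(flagged)   # includes index 50, the planted outlier
```

Only the distance matrix enters the procedure. Replacing the Euclidean `D` by a matrix of distances between curves changes nothing else in the code.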

We compare our procedure with those introduced in [12,17,18] using the data sets of open curves used in [12,17], which are available from [20]. For Example 1 in [17], formed by 70 spirals, Ref. [12] found 6 outliers and Ref. [17] also found 6 outliers (2 scale outliers and 4 mild shape outliers). Ref. [18] found 4 outliers (3 due to amplitude and 1 due to phase) with the recommended value of the box-plot multiplier, k = 2, and 9 outliers with the classical value, k = 1.5. With our methodology, we detect 8 outliers, and the results are stable: we obtain the same outliers with k = 5, 10 or 15 neighbors. We have also computed the square of the distance in Equation (17) and, for each outlier, the percentage of this squared distance that is due to shape and the percentage that is due to size. The percentages of contribution due to shape for the 8 outliers are 21%, 29%, 34%, 35%, 41%, 50%, 50% and 74%. For Example 3 in [17], formed by 176 fiber tracts in the human brain extracted from a diffusion tensor magnetic resonance image (DT-MRI), we detect the same 11 outliers that were detected by [12,17]. All of them are due to shape, with a percentage of contribution due to shape of 90%. By contrast, [18] with k = 2 returned 62 outliers (all 62 due to amplitude, 23 of which are also outliers due to phase); i.e., 35% of the points of the sample are considered outliers.

9. Conclusions

We have proposed a new metric in the shape and size space $\mathcal{S}_2$ that, unlike previous proposals, allows us to distinguish whether the distance between two shapes $[q_1]$ and $[q_2]$ is due to the difference in shape or to the difference in size between the corresponding curves $\beta_1$ and $\beta_2$. It has been compared with the metric proposed by [5] in a simulation study. There, our proposal performed better when curves of very different sizes were analyzed together. We have also shown other advantages of the new metric, such as its invariance to changes of scale.

For the first time, we have also proposed a procedure based on the distance $d$ and NN techniques for finding outlier curves in $\mathcal{S}_2$. We have applied it to a novel industrial data set. The foot outliers found from their contours can help shoe designers improve their designs to provide customers with a better fit.

In future work, regarding the theory, closed curves could be considered and an appropriate metric defined for them. Furthermore, the new metric could be used in other statistical problems besides outlier detection, such as classification or clustering. It could also be used in problems where curves in $\mathcal{S}_2$ have never been used before, such as archetype analysis [29] or archetypoid analysis [30]. Finally, regarding the footwear application, the outlier procedure with the new metric could be applied to other kinds of foot contours, such as the ball girth, and, of course, to other fields of application.