On the Duality of Discrete and Periodic Functions

Abstract

1. Introduction

2. Notation

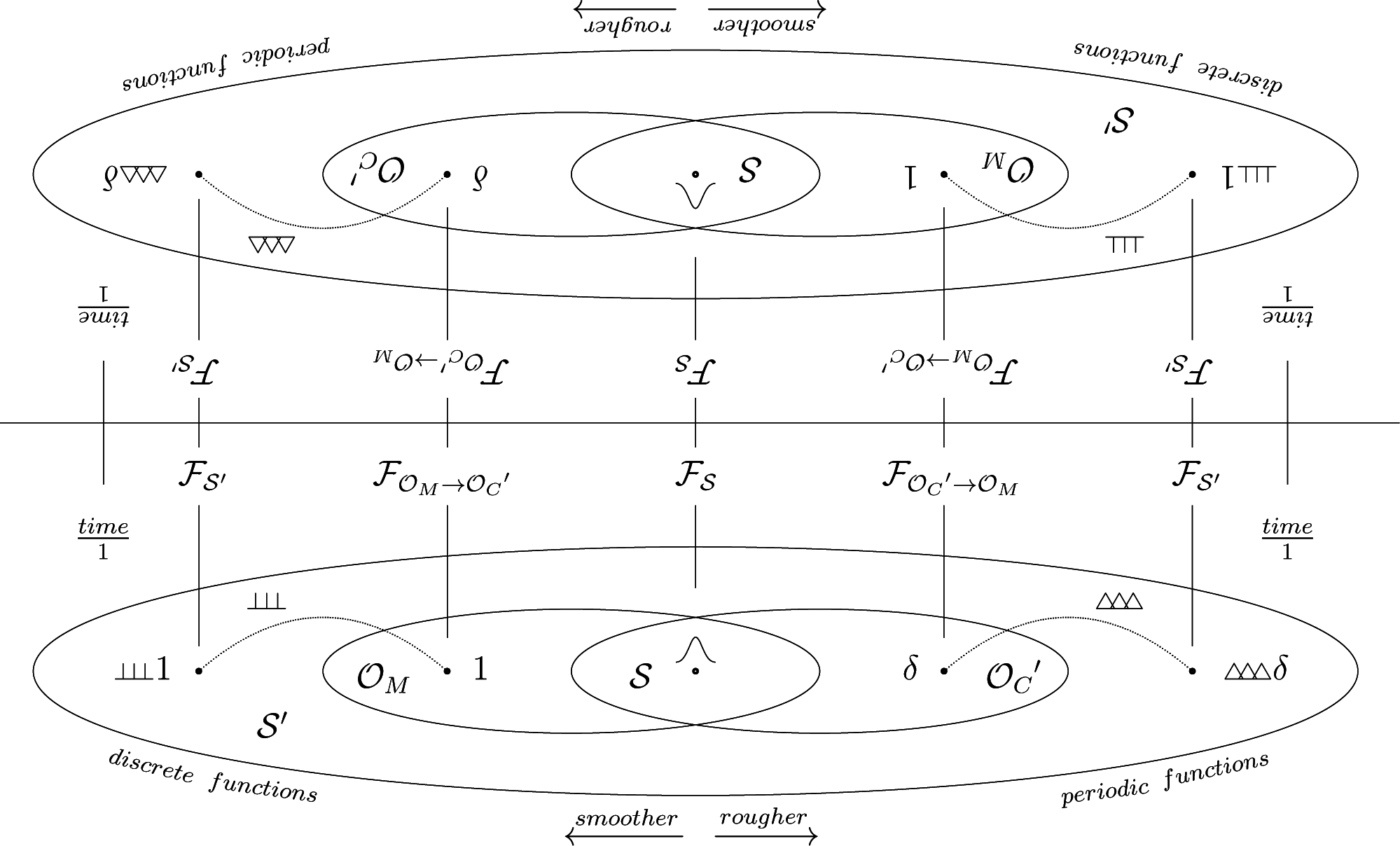

3. Idea

3.1. Distribution Theory

3.2. Applications

3.3. Generality

4. Definitions

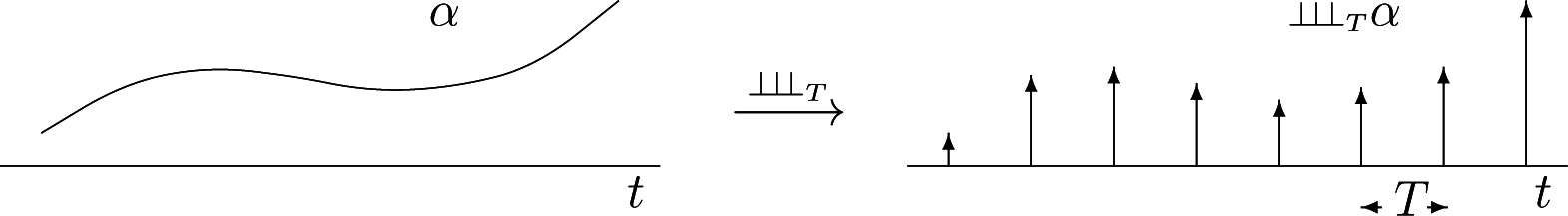

4.1. Discretization

4.2. Periodization

5. Motivation

5.1. Fourier Series and Fourier Transform

5.2. Fourier Series of Periodized Functions

5.3. Versions of Poisson Summation Formulas

6. Calculation Rules

7. The Discretization-Periodization Theorem

7.1. Unitary Increments

7.2. Arbitrary Increments

8. Conclusions

9. Outlook

Conflicts of Interest

Appendix

A. Proof

B. Summary

Acknowledgments

References

© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).

Fischer, J.V. On the Duality of Discrete and Periodic Functions. Mathematics 2015, 3, 299-318. https://doi.org/10.3390/math3020299
