2. Differential Game and Viability Kernels

Consider a differential game with the autonomous dynamics

$$\dot{x} = f(x, u, v), \quad u \in P, \quad v \in Q. \qquad (1)$$

Here, $x \in \mathbb{R}^n$ is the state vector, $u$ and $v$ are the control parameters of the first and second players, respectively, and $P$ and $Q$ are compact sets of the corresponding dimensions. We suppose that $f$ satisfies the standard requirements: continuity in $x$, $u$, and $v$; the Lipschitz condition in $x$; and a growth condition that ensures the extendability of solutions to any time interval.

Based on the conflict control system (1), consider, for any $v \in Q$, the differential inclusion

$$\dot{x} \in \operatorname{co}\{f(x, u, v) : u \in P\}. \qquad (2)$$

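Definition 1 (u-stability property [3]). Let $T$ be an arbitrary time instant. A set $W \subset (-\infty, T] \times \mathbb{R}^n$ is said to be u-stable on the interval $(-\infty, T]$ if for any initial position $(t_*, x_*) \in W$, any time instant $t^* \in [t_*, T]$, and any $v \in Q$, there exists a solution $x(\cdot)$ to the differential inclusion (2) with the initial state $x(t_*) = x_*$ such that $(t^*, x(t^*)) \in W$.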

Definition 2 (viability property [2]). A set $K \subset \mathbb{R}^n$ is said to be viable if for any $x_0 \in K$ and any $v \in Q$ there exists a solution $x(\cdot)$ to the differential inclusion (2) with the initial state $x(0) = x_0$ such that $x(t) \in K$ for all $t \ge 0$.

Definition 3 (viability kernel [2]). For a given compact set $G \subset \mathbb{R}^n$, the largest subset of $G$ with the viability property is called the viability kernel of $G$.

The following assertions follow from Definitions 1–3:

- (1)

The closure of a u-stable (viable) set is a u-stable (viable) set.

- (2)

The union of any family of u-stable (viable) sets is a u-stable (viable) set.

- (3)

If $W$ is a u-stable closed set, then for any initial position $(t_*, x_*) \in W$ and any $v \in Q$, there exists a solution $x(\cdot)$ to the differential inclusion (2) with the initial state $x(t_*) = x_*$ such that $(t, x(t)) \in W$ for all $t \in [t_*, T]$.

The first assertion follows from the compactness and semi-continuity of the solution sets of differential inclusions (see, e.g., [13]); notice that the solution set of Equation (2) depends Lipschitz continuously on the initial state. The second assertion follows directly from the definition of u-stability (viability). The third assertion can be proven by considering ever finer subdivisions of the interval $[t_*, T]$, constructing, step by step, solutions of Equation (2) that satisfy the assertion at the nodes of the subdivisions, and using the compactness of the solution sets of Equation (2).

Assertion (3) shows that Definition 2 is a special case of Definition 1 in which $T$ is arbitrary and $W = (-\infty, T] \times K$. Assertions (1) and (2) ensure the existence of the viability kernel of any compact set $G$ that has at least one viable subset. Thus, the following problem can be considered.

Problem. Given a compact set $G \subset \mathbb{R}^n$, it is required to construct the viability kernel of $G$.

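To solve this problem, fix an arbitrary $T$ and introduce the set $N = (-\infty, T] \times G$. Due to Assertions (1) and (2), there exists a closed set $W \subset N$ that is maximal among the subsets of $N$ that are u-stable on the interval $(-\infty, T]$. For any time instant $t \le T$, denote

$$W(t) = \{x \in \mathbb{R}^n : (t, x) \in W\}.$$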

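Remark 1. The family $\{W(t)\}_{t \le T}$ has the property of monotonicity: $W(t_1) \subset W(t_2)$ for $t_1 \le t_2 \le T$. Indeed, if $W$ is a u-stable set, then, for any $\Delta > 0$, the set defined by the cross-sections $W(t - \Delta)$, $t \le T$, is also u-stable, because the dynamics are autonomous. Therefore, the maximal u-stable set $W$ must have the required monotonicity property.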

These facts follow immediately from the above definitions; their proofs are left to the reader.

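Proposition 1. Let $\{X_k\}$ be a sequence of nonempty compact sets such that $X_k \subset X_j$ if $k \ge j$, and let $X = \bigcap_k X_k$. Then $X \neq \emptyset$, and $X_k$ converges to $X$ in the Hausdorff metric.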

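Proof. First, $X$ is nonempty as the intersection of nonempty nested compact sets. Second, since $X \subset X_k$ for all $k$, it remains to show that for any $\varepsilon > 0$ the inclusion $X_k \subset X^{\varepsilon}$ holds for all sufficiently large $k$. Assume that this is not the case. Then, there exists an $\varepsilon > 0$ such that $\operatorname{cl}(X_k \setminus X^{\varepsilon}) \neq \emptyset$ for all $k$. Here, the upper index $\varepsilon$ denotes the $\varepsilon$-neighborhood of sets, and the symbol “cl” denotes the closure operation. The sets $\operatorname{cl}(X_k \setminus X^{\varepsilon})$, $k = 1, 2, \ldots$, are compact and nested. Therefore, there exists a point $x \in \bigcap_k \operatorname{cl}(X_k \setminus X^{\varepsilon})$; this point belongs to $X$ but lies at a distance of at least $\varepsilon$ from $X$, which is a contradiction. ☐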
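The Hausdorff distance used in Proposition 1 and in the convergence statements below can be computed directly for finite point sets. The following Python sketch is only an illustration: the function `hausdorff` and the nested family are hypothetical, chosen so that $X_1 \supset X_2 \supset \dots \supset X_{11} = X$ and the distance from $X_k$ to $X$ is (up to rounding) $1/k$, which tends to zero as Proposition 1 predicts.

```python
import math

def hausdorff(a, b):
    """Hausdorff distance between two nonempty finite point sets in R^n (points as tuples)."""
    def excess(x, y):
        # largest distance from a point of x to the set y
        return max(min(math.dist(p, q) for q in y) for p in x)
    return max(excess(a, b), excess(b, a))

# Hypothetical nested family on the real line:
# X_k = X ∪ {1 + 1/j : k <= j <= 10}, so that X_1 ⊃ X_2 ⊃ ... ⊃ X_11 = X.
X = [(i / 10,) for i in range(11)]
family = [X + [(1 + 1 / j,) for j in range(k, 11)] for k in range(1, 12)]
dists = [hausdorff(X_k, X) for X_k in family]  # about 1/k for k <= 10, and 0 for k = 11
```

Since every $X_k$ contains $X$, only the excess of $X_k$ over $X$ contributes here, exactly as in the proof above.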

Theorem 1. If $W(t) \neq \emptyset$ for any $t \le T$, then the Hausdorff limit $K = \lim_{t \to -\infty} W(t)$ exists and coincides with the viability kernel of $G$. Otherwise, there is no viable subset of $G$.

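Proof. Denote $K = \bigcap_{t \le T} W(t)$ for brevity. If for any $t \le T$ the set $W(t)$ is nonempty, then $K$ is also nonempty as the intersection of nonempty nested compact sets. Let us show that $K$ is viable in the sense of Definition 2. Choose arbitrary $x_0 \in K$, $v \in Q$, and $\tau > 0$. By Proposition 1 and the monotonicity of the family $\{W(t)\}$ (Remark 1), for any $\varepsilon > 0$, there exists $t_\varepsilon \le T$ such that

$$W(t) \subset K^{\varepsilon}, \quad t \le t_\varepsilon. \qquad (3)$$

The condition $x_0 \in K$ implies the inclusion $x_0 \in W(t_\varepsilon - \tau)$. By virtue of the u-stability of the set $W$ (see Assertion (3)), there exists a solution $x(\cdot)$ of Equation (2) with the initial state $x(t_\varepsilon - \tau) = x_0$ such that $x(t) \in W(t)$ for all $t \in [t_\varepsilon - \tau, T]$. Owing to the autonomy of the differential inclusion (2), we conclude that there exists a solution $y(\cdot)$ of Equation (2) such that $y(0) = x_0$ and

$$y(s) \in W(t_\varepsilon - \tau + s) \subset K^{\varepsilon}, \quad s \in [0, \tau].$$

Thus, for arbitrarily small $\varepsilon > 0$ and arbitrarily large $\tau > 0$, there exists a solution of Equation (2) that starts at $x_0$ and stays in $K^{\varepsilon}$ on $[0, \tau]$. Since the solution set of Equation (2) is compact, this gives a solution $x(\cdot)$ such that $x(0) = x_0$ and $x(t) \in K$ for all $t \ge 0$. Thus, we have proven the viability property of $K$, and relation (3), together with the inclusion $K \subset W(t)$, proves the Hausdorff convergence of $W(t)$ to $K$ as $t \to -\infty$.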

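Assume now that $K'$ is a viable set such that $K' \subset G$. As was noted in the comment to Definition 2, the viability property of $K'$ means the u-stability property of the set $(-\infty, T] \times K'$. Since $(-\infty, T] \times K' \subset N$ and $W$ is the maximal u-stable set belonging to $N$, we have $(-\infty, T] \times K' \subset W$. Thus, $K' \subset W(t)$ for all $t \le T$, and therefore, $K' \subset K$. This proves Theorem 1. ☐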

3. Approximation

A direct implementation of Theorem 1 for finding the viability kernel requires precise computation of the sets $W(t)$ for $t \le T$. If, for example, the step-by-step backward procedure from [9] related to the dynamic programming method is used, then the computational error grows without bound as $t \to -\infty$.

The algorithm proposed in this paper uses the idea of decreasing the step of the backward procedure simultaneously with the passage to the limit as time goes to infinity. The algorithm is as follows:

Here, $S$ is the closed unit ball in $\mathbb{R}^n$, $L$ is the Lipschitz constant of $f$ with respect to $x$, and

Theorem 2. Let the family be defined by Equation (4), where the sequence is such that and .

If for any , then the Hausdorff limit, , exists, and

Otherwise, there is no viable subset of G.
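To illustrate why the step of the backward procedure is decreased while the iteration proceeds, consider a hypothetical one-player toy problem on the real line: $\dot{x} = x + u$, $|u| \le 1$, $G = [-2, 2]$. Its viability kernel is $[-1, 1]$: for $|x| \le 1$ the control $u = -x$ keeps the state at rest, while for $|x| > 1$ the modulus $|x|$ increases under any admissible control. The sketch below is not Equation (4): it assumes an explicit Euler step and an inflation radius $r = c\delta^2$ standing in for the ball term, and it exploits the fact that in this example every set of the family is an interval. With a constant step $\delta$, this iteration stalls at the larger interval $[-(1 + c\delta), 1 + c\delta]$; with the steps $\delta_k = 1/(k+1)$, which tend to zero while their sum diverges, the bias disappears in the limit.

```python
def toy_kernel(a, b, deltas, c=1.0):
    """Hypothetical decreasing-step backward procedure for x' = x + u, |u| <= 1, on G = [a, b].

    Each step keeps the points x of the current interval from which some control u sends
    the Euler point x + delta*(x + u) into the current interval inflated by r = c*delta**2.
    Returns the final interval, or None if it becomes empty.
    """
    for delta in deltas:
        r = c * delta * delta
        # x + delta*(x + u) ranges over [(1+delta)x - delta, (1+delta)x + delta];
        # this range meets [a - r, b + r] exactly when lo <= x <= hi
        lo = (a - r - delta) / (1 + delta)
        hi = (b + r + delta) / (1 + delta)
        a, b = max(a, lo), min(b, hi)  # intersect with the current interval
        if a > b:
            return None
    return a, b

steps = [1 / (k + 1) for k in range(10_000)]
print(toy_kernel(-2.0, 2.0, steps))  # an interval slightly larger than [-1, 1]
print(toy_kernel(2.0, 3.0, steps))   # None: every trajectory starting in [2, 3] leaves it
```

The second call mirrors the alternative in Theorem 2: when some set of the family becomes empty, the constraint set has no viable subset.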

Before starting the proof of Theorem 2, we prove three auxiliary lemmas. The first lemma establishes a discrete u-stability of the family generated by Equation (4). Assume, just for the simplicity of subsequent calculations, that for all . This requirement allows us to avoid considering terms of the form in the proof of Lemma 1, which reduces the amount of calculation.

Lemma 1. Let l and s be integers such that . Let be fixed. If , then there exists a solution, , of Equation (2) with , such that , where , .

We first prove the following two auxiliary propositions.

Proposition 2. Let and . If , then for any solution, , of the differential inclusion (2) with the initial state , there exists a solution, , with the initial state , such that

Proof of Proposition 2. The proof follows immediately from the Filippov–Gronwall inequality obtained in [14], Theorem 1. ☐
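The Filippov–Gronwall construction behind Proposition 2 can be imitated numerically: given one solution, the second solution at every instant uses the admissible velocity closest to the velocity of the first one. The Python sketch below assumes a hypothetical scalar inclusion $\dot{x} \in \sin x + [-1, 1]$, whose right-hand side is Lipschitz with constant $L = 1$ in the Hausdorff metric, and an explicit Euler discretization; the function name and the control are illustrative only. It records the largest ratio $|x(t) - y(t)| / (e^{Lt}|x_0 - y_0|)$, which the discrete analog of the estimate, $(1 + h)^k \le e^{kh}$, keeps at or below one.

```python
import math

def filippov_pair(x0, y0, controls, h):
    """Euler sketch of Filippov's selection for the hypothetical inclusion x' in sin(x) + [-1, 1].

    `controls` drives the given solution x(.) through x' = sin(x) + u with |u| <= 1.
    The second solution y(.) starts at y0 and, at every step, uses the admissible
    velocity in F(y) = [sin(y) - 1, sin(y) + 1] closest to the current velocity of x(.).
    Returns the final states and the largest ratio |x - y| / (e^t |x0 - y0|).
    """
    x, y, t, worst = x0, y0, 0.0, 0.0
    for u in controls:
        vx = math.sin(x) + u
        vy = min(max(vx, math.sin(y) - 1.0), math.sin(y) + 1.0)  # projection onto F(y)
        x, y, t = x + h * vx, y + h * vy, t + h
        worst = max(worst, abs(x - y) / (math.exp(t) * abs(x0 - y0)))
    return x, y, worst

h = 0.01
controls = [math.cos(3 * k * h) for k in range(500)]
x_end, y_end, ratio = filippov_pair(0.0, 0.01, controls, h)
```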

Proposition 3. Let , and be fixed. If , then there is a solution, , of Equation (2) with the initial condition , such that , where

Proof of Proposition 3. Choose and . Let . Then, there exists a point, , satisfying . By the definition of , there are a point and vectors and , , such that:

Using the Lipschitz property of the right-hand side of Equation (2), choose a vector, , to provide the inequality . Denote and calculate:

The technical assumption , , simplifies the last estimate as follows:

Let be the nearest point to for every . Obviously, the function is continuous and, due to the Lipschitz property of , satisfies the following inequality:

Suppose is a solution of the differential equation with the initial state . Then:

The last equation and the estimate (6) yield the inequality:

and, with Equation (5), it holds that:

By Proposition 2, there exists a solution, , of Equation (2) with the initial state , such that:

Then:

and therefore:

Proposition 3 is proven. ☐

Proof of Lemma 1. Let be chosen. Setting and applying Proposition 3, we construct a solution, , of Equation (2) that satisfies the conditions:

It is easy to estimate to obtain the inequality:

where

This proves Lemma 1. ☐

Lemma 2. If for all , then the set is nonempty and possesses the viability property.

Proof. If for all , then K is nonempty as the intersection of nonempty nested compact sets. Let and be arbitrary. For any , one can choose a sufficiently large integer k satisfying the following conditions:

(a) (see Proposition 1);

(b) for all .

Choose , such that

Since , it holds that . Hence, for any , there exist an integer and a point, , such that . Using the Lipschitz continuity of the solution set of Equation (2) (see Proposition 2), one can choose the value of ξ so small that for any solution, , with , there exists a solution, , with , satisfying . By virtue of Condition (b) and the choice of and s, there exists , such that

Set and . By Lemma 1, there exists a solution, , of Equation (2) with and , where . With Condition (b), it holds that

Taking into account the obvious inequality , we have:

where

Condition (a) implies the inclusion , and therefore:

where

Using the choice of ξ, we obtain the existence of a solution, , of Equation (2) with and , where

Since ε is arbitrary and the solution set of Equation (2) is compact, there exists a solution, , such that

This proves Lemma 2. ☐

Lemma 3. If a set has the viability property, then for all .

Proof. Define the following sequence:

where:

One can easily check the following properties of :

- (a)

- (b)

For any point and any vector , there is a solution, , of Equation (2), such that and

- (c)

If , but , then there exists such that for any solution, , of Equation (2) with it holds that .

Arguing by induction, one can easily prove the maximality of in the following sense: for any family, , with Properties (a) and (b), the inclusion holds for any . On the other hand, the viability property of implies that the family obtained by replicating has Properties (a), except for , and (b). Hence, for . To complete the proof, it remains to check the inclusion for all . To do this, we prove the following proposition.

Proposition 4. For arbitrary , and any solution, , of the differential inclusion with the initial state , there exists a vector, , such that:

Proof of Proposition 4. Let be the nearest point to for any . It is evident that the function is measurable, and the following inequality holds:

This implies the estimate:

where:

Taking into account the obvious inequality:

we obtain the desired estimate, which proves Proposition 4. ☐

Thus, Lemma 3 is also proven. ☐

Proof of Theorem 2. Define K as in Lemma 2, i.e., as the intersection of the sets generated by Equation (4). If for all , then K is nonempty and viable by Lemma 2.

Let us show the maximality of K. To this end, consider an arbitrary viable set, . Lemma 3 says that , and therefore:

This proves the maximality of K.

Let us now prove the Hausdorff convergence. Take an arbitrary and use Proposition 1 to choose an integer k such that . Taking and accounting for Lemma 3 yields the relations:

which prove the convergence of to K in the Hausdorff metric.

Notice that if there exists a viable set , then, for every , the condition implies that (these sets are defined by Equation (7)). Hence, for all . As was noted in the proof of Lemma 3, the inclusion holds for any . Therefore, for any . Thus, the case , for some , contradicts the existence of viable subsets of G. Theorem 2 is proven. ☐

Remark 2. For a linear differential game with the dynamics

relations (4) take the following form:

Here, E is the identity matrix, and the sign “$\ominus$” denotes the geometric difference. If the sets $G$, $P$, and $Q$ are convex polyhedra, and D is the unit cube in $\mathbb{R}^n$ (any bounded polyhedron containing the closed unit ball is appropriate), then all sets produced by Equation (8) are polyhedra, too. These formulas were used as the basis for a computer algorithm. A computer program developed by the authors implements Equation (8) for state-space dimensions up to three.
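Relations (8) combine Minkowski sums and geometric differences $A \ominus B = \{x : x + B \subset A\}$ of polyhedra. For the simplest polyhedra, axis-aligned boxes (the cube D is one of them), both operations reduce to coordinatewise interval arithmetic, as the following Python sketch shows. The `Box` type and the function names are hypothetical; general convex polyhedra require support-function or linear-programming machinery, which is not shown here.

```python
from typing import List, Optional, Tuple

Box = List[Tuple[float, float]]  # one (low, high) interval per coordinate

def minkowski_sum(a: Box, b: Box) -> Box:
    """A + B = {x + y : x in A, y in B}; for boxes, the endpoints add coordinatewise."""
    return [(al + bl, ah + bh) for (al, ah), (bl, bh) in zip(a, b)]

def geometric_difference(a: Box, b: Box) -> Optional[Box]:
    """A ⊖ B = {x : x + B ⊂ A}; coordinatewise, al - bl <= x_i <= ah - bh."""
    diff = [(al - bl, ah - bh) for (al, ah), (bl, bh) in zip(a, b)]
    if any(lo > hi for lo, hi in diff):
        return None  # the geometric difference is empty
    return diff

G = [(-2.0, 2.0), (-1.0, 1.0)]
B = [(-0.5, 0.5), (-0.5, 0.5)]  # hypothetical: 0.5 times the cube [-1, 1]^2
inner = geometric_difference(G, B)
```

For boxes, the geometric difference undoes the sum whenever it is nonempty, $(A \ominus B) + B = A$; for general convex sets, only the inclusion $(A \ominus B) + B \subset A$ holds.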

Consider the case where the right-hand side of Equation (2) does not depend on v. In this case, Definition 2 coincides with the usual definition of viability (see [2]), and the sets appearing in Theorem 1 can be found as follows:

where:

Thus, the following theorem holds.

Theorem 3. If for any , then the Hausdorff limit, , exists, and:

Otherwise, there are no viable subsets of G.

The approximation theorem is now formulated as follows.

Theorem 4. Let

where S is the unit ball, is the Lipschitz constant of the right-hand side of the differential inclusion (2), and:

Suppose the sequence satisfies the conditions and . If for all , then the Hausdorff limit, , exists, and:

Otherwise, there are no viable subsets of G.

4. Numerical Scheme

The idea of the numerical method is to represent the viability kernel as a level set of an appropriate function. Assume that the constraint set G is described by the relation

$$G = \{x \in \mathbb{R}^n : g(x) \le 0\},$$

where g is a Lipschitz continuous function. It is required to construct a function V such that:

Define the Hamiltonian of the inclusion (2) as follows:

Let be a Lipschitz function satisfying the following conditions:

- (i)

for all ;

- (ii)

for any point and any function , such that attains a local minimum at , the following inequality holds: .

Proposition 5. The function

has the property:

Notice that Condition (i) provides the embedding of the level sets of V into the corresponding level sets of g. Condition (ii) provides the u-stability of the functions (see [15,16]) and, therefore, the u-stability of the function V. The operation “inf” provides the minimality of the resulting function, i.e., the maximality of its level sets. Thus, Proposition 5 is valid.

Unfortunately, a direct application of this proposition to the computation of V is impossible, because verifying Condition (ii) is algorithmically very difficult. On the other hand, Theorem 1 shows that the function V can be computed as , where is the value function of the differential game with the Hamiltonian and the objective functional ; see [16]. This remark allows us to use the numerical methods developed for constructing time-dependent value functions in differential games with state constraints (see [15,16]).

Let us outline the numerical methods of [15,16] and show how they should be adapted to our purposes.

Consider the following finite difference scheme. Let be the backward time step and the space discretization steps. Set . For any continuous function , define:

Denote by:

the restrictions of and g to the grid.

Let be an interpolation operator that maps grid functions to continuous functions and satisfies the estimate:

for any smooth function ϕ. Here, is the restriction of ϕ to the grid, the point-wise maximum norm, the Hessian matrix of ϕ, and C a constant independent of ϕ and of the grid steps.

Notice that Estimate (10) is typical for interpolation operators (see, e.g., [17]). Roughly speaking, interpolation operators reconstruct the values and gradients of interpolated functions, and therefore, the expected error is of second order in the grid steps, as in Estimate (10).

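As an example, consider a multilinear interpolation operator constructed in the following way (see [15]).

Let $m \in \{0, 1, \ldots, 2^n - 1\}$ be an integer and $(j_1(m), \ldots, j_n(m))$ the binary representation of m, $m = \sum_{i=1}^{n} j_i(m)\, 2^{i-1}$, so that each $j_i(m)$ is either zero or one. Thus, each multi-index $(j_1(m), \ldots, j_n(m))$ represents a vertex of the unit cube in $\mathbb{R}^n$, and m counts the vertices. Introduce the following functions:

$$\phi_m(\xi) = \prod_{i=1}^{n} \Bigl[\, j_i(m)\, \xi_i + \bigl(1 - j_i(m)\bigr)(1 - \xi_i) \Bigr], \quad \xi \in [0, 1]^n. \qquad (11)$$

Notice that the i-th factor in the product (11) is either $\xi_i$ or $1 - \xi_i$, depending on the value of $j_i(m)$.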

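Consider a point $x \in \mathbb{R}^n$. Denote by $x_i^-$ the lower and by $x_i^+$ the upper grid points of the i-th axis such that $x_i^- \le x_i \le x_i^+$. Let $w_m$, $m = 0, \ldots, 2^n - 1$, be the values of a grid function $w$ at the vertices of the n-brick $[x_1^-, x_1^+] \times \cdots \times [x_n^-, x_n^+]$ (the vertices are ordered in the same way as the vertices of the unit n-cube above). The multilinear interpolation of $w$ at $x$ is:

$$\mathcal{I}[w](x) = \sum_{m=0}^{2^n - 1} w_m\, \phi_m(\xi), \qquad \xi_i = \frac{x_i - x_i^-}{x_i^+ - x_i^-}, \quad i = 1, \ldots, n.$$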
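The construction of the multilinear interpolation operator can be sketched in Python as follows: the weight of the vertex with number m is the product over the axes of $\xi_i$ or $1 - \xi_i$, selected by the i-th binary digit of m. The function name and the convention that the least significant bit refers to the first axis are assumptions made for this illustration. The sketch reproduces bilinear functions exactly, and for $w(x) = x^2$ on a cell of width $h$ its midpoint error is $h^2/4$, in line with the second-order behavior expressed by Estimate (10).

```python
def multilinear(lower, upper, values, x):
    """Multilinear interpolation on the n-brick with corners `lower` and `upper`.

    values[m] is the grid value at the vertex whose i-th coordinate equals upper[i]
    when the i-th binary digit of m is 1 and lower[i] otherwise (digit 0 is the
    least significant bit and refers to the first axis; this ordering is an assumption).
    """
    n = len(lower)
    xi = [(x[i] - lower[i]) / (upper[i] - lower[i]) for i in range(n)]  # local coordinates in [0, 1]
    total = 0.0
    for m, w in enumerate(values):
        weight = 1.0
        for i in range(n):
            weight *= xi[i] if (m >> i) & 1 else 1.0 - xi[i]
        total += w * weight
    return total

# Bilinear test function f(x, y) = 1 + 2x + 3y + 4xy on the brick [0, 1] x [0, 2]
corner_values = [1.0, 3.0, 7.0, 17.0]  # vertices (0, 0), (1, 0), (0, 2), (1, 2)
center_value = multilinear([0.0, 0.0], [1.0, 2.0], corner_values, [0.5, 1.0])  # f(0.5, 1) = 7

# Second-order error for w(x) = x**2 on a cell of width h = 0.1: about h**2 / 4 at the midpoint
midpoint_error = multilinear([0.0], [0.1], [0.0, 0.01], [0.05]) - 0.05 ** 2
```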

Let be a sequence of positive reals such that and . Consider the following grid scheme:

Notice that is a continuous function, which is restricted to the grid and then compared with the grid function .

Relations (9) and (12) can be interpreted as follows. The shift, , of the argument of the function in Equation (9) means the opposite shift of the level sets of ; compare with Equation (4). The minimum over f means the union of the level sets of , and the maximum over v results in the intersection of the level sets. The subtraction of the value means adding the ball to the level sets. Moreover, the maximum in Equation (12) means the intersection of the level sets with the constraint set G. Therefore, the numerical scheme (12) implements relation (4) in the language of level sets.
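The level-set reading of the scheme can be tried out in one space dimension. The Python sketch below follows the verbal description above, $V_{\text{new}}(x) = \max\bigl(g(x), \max_v \min_u \mathcal{I}[V](x + \delta f(x, u, v)) - \gamma\bigr)$, but it is not a transcription of (12): it assumes hypothetical dynamics $\dot{x} = x + u + v$ with $P$ replaced by the finite set $\{-1, 0, 1\}$ and $Q = \{0\}$, piecewise-linear interpolation clamped at the ends of the grid, a constant step $\delta = 0.1$, and $\gamma = \delta^2$. The constraint set $G = [-2, 2]$ is given by $g(x) = |x| - 2$, and the zero sublevel set of the result approximates the viability kernel $[-1, 1]$ of this example.

```python
import math

def interp1(x0, h, values, y):
    """Linear interpolation on the uniform grid x0 + i*h; y is clamped to the grid region."""
    s = min(max((y - x0) / h, 0.0), len(values) - 1.0)
    i = min(int(math.floor(s)), len(values) - 2)
    t = s - i
    return (1.0 - t) * values[i] + t * values[i + 1]

def scheme_step(xs, h, V, g, f, us, vs, delta, gamma):
    """V_new(x) = max(g(x), max_v min_u I[V](x + delta*f(x, u, v)) - gamma) at every node x."""
    new = []
    for i, x in enumerate(xs):
        # min over u: union of level sets; max over v: their intersection
        worst_v = max(min(interp1(xs[0], h, V, x + delta * f(x, u, v)) for u in us) for v in vs)
        new.append(max(g[i], worst_v - gamma))  # max with g: intersection with G
    return new

# Hypothetical 1-D example: x' = x + u + v, u in {-1, 0, 1}, v = 0, G = [-2, 2]
h = 1 / 64
xs = [-4 + i * h for i in range(513)]
g = [abs(x) - 2 for x in xs]
V = g[:]
for _ in range(30):
    V = scheme_step(xs, h, V, g, lambda x, u, v: x + u + v, (-1.0, 0.0, 1.0), (0.0,), 0.1, 0.01)
kernel = [x for x, val in zip(xs, V) if val <= 0]  # approximate viability kernel
```

The grid region $[-4, 4]$ is deliberately taken larger than $G$, in the spirit of the remark below that this type of scheme needs extended grid regions.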

Consider another numerical grid scheme (see [16]) that approximately implements the limit . Introduce the following upwind operator:

where are the components of f, and:

Notice that the new operator, F, can be applied directly to a grid function and returns a grid function.

The numerical scheme is now of the form:

Notice that the application of the algorithm (13) requires the relation for all ℓ (recall that M is the bound of $|f|$); see [15,16]. On the other hand, numerical experiments show a very useful property of this method: the noise usually coming from the boundary of the grid region is absent, so the grid region need not be much larger than the region where the solution is sought. The algorithm (12) does not possess this property, so larger grid regions are necessary in that case. However, algorithm (12) admits larger steps, , which can compensate for the necessary extent of the region.
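In one space dimension the upwind idea can be checked directly: the first-order upwind estimate of $V$ at the shifted point $x + \delta f$ uses the forward difference when $f > 0$ and the backward difference when $f < 0$. When $\delta |f| \le h$, this estimate coincides with linear interpolation and is a convex combination of neighboring grid values, which is the usual reason for a CFL-type restriction; we read the relation required above as a condition of this kind, although its exact form in [15,16] may differ. The Python sketch uses hypothetical function names and omits the minimum and maximum over the controls and the summation over the components of f that the operator F involves.

```python
def upwind_value(V, i, f, delta, h):
    """First-order upwind estimate of V(x_i + delta*f) on a uniform 1-D grid with step h."""
    fp, fm = max(f, 0.0), min(f, 0.0)  # positive and negative parts of the velocity
    return V[i] + delta * (fp * (V[i + 1] - V[i]) + fm * (V[i] - V[i - 1])) / h

def linear_interp_value(V, i, f, delta, h):
    """Linear interpolation of V at x_i + delta*f, valid when delta*|f| <= h."""
    s = delta * f / h
    if s >= 0:
        return (1 - s) * V[i] + s * V[i + 1]
    return (1 + s) * V[i] - s * V[i - 1]

def cfl_ok(f_bound, delta, h):
    """Both formulas use nonnegative weights (a monotone scheme) iff delta * f_bound <= h."""
    return delta * f_bound <= h

V = [0.0, 1.0, 4.0, 9.0, 16.0]  # samples of x**2 at x = 0, 1, 2, 3, 4 (grid step h = 1)
estimate = upwind_value(V, 2, 3.0, 0.2, 1.0)  # upwind value at x = 2 + 0.2 * 3
```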

5. Examples

Example 1. The first example illustrates Theorem 3 (the case of one player). Let the differential inclusion be of the form:

where is the state vector, S the unit ball of , and a positive real number. Let , where . According to Theorem 3, consider the differential inclusion in reverse time (using the symmetry of S):

Using the function as a Lyapunov function, one can easily see that no solution of Equation (14) leaves G. Therefore,

i.e., is the attainable set of System (14) at the time instant τ. A calculation shows that the support function of is given by the formula:

Thus, Theorem 3 yields that

Example 2. The second example illustrates Theorem 2 and the application of the grid algorithm (13). Consider a pendulum with a moving suspension point. The dynamics of the object are described by the system:

Here, θ is the deflection angle, l the length, m the mass, the gravitational acceleration, u the torque applied to the pendulum at the suspension point (control), and and the vertical and horizontal accelerations of the suspension point, respectively (disturbances). The following values of the parameters and bounds on the control and disturbances are chosen:

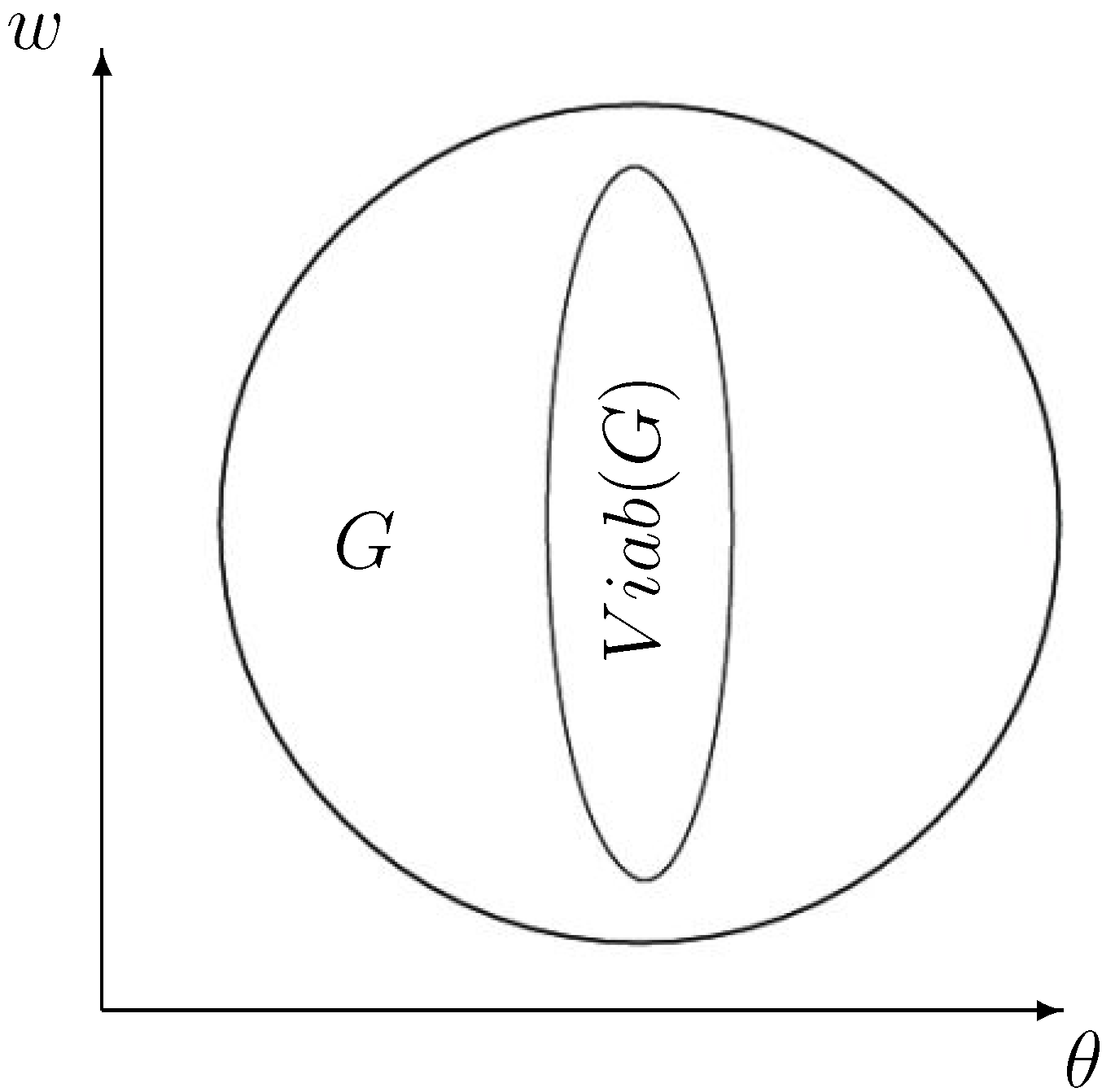

The constraint set G is the unit disk given by the relation , and the sequence is chosen as . The grid is formed by dividing the region into square cells.

Test runs of the algorithm (13) show that:

which is the stopping criterion. The runtime on a laptop with six threads is approximately 1 min.

Figure 1 shows the viability kernel obtained as

Figure 1. The viability kernel for problem (15) with the data described above.
