Music Genre Classification Based on VMD-IWOA-XGBOOST

Abstract

1. Introduction

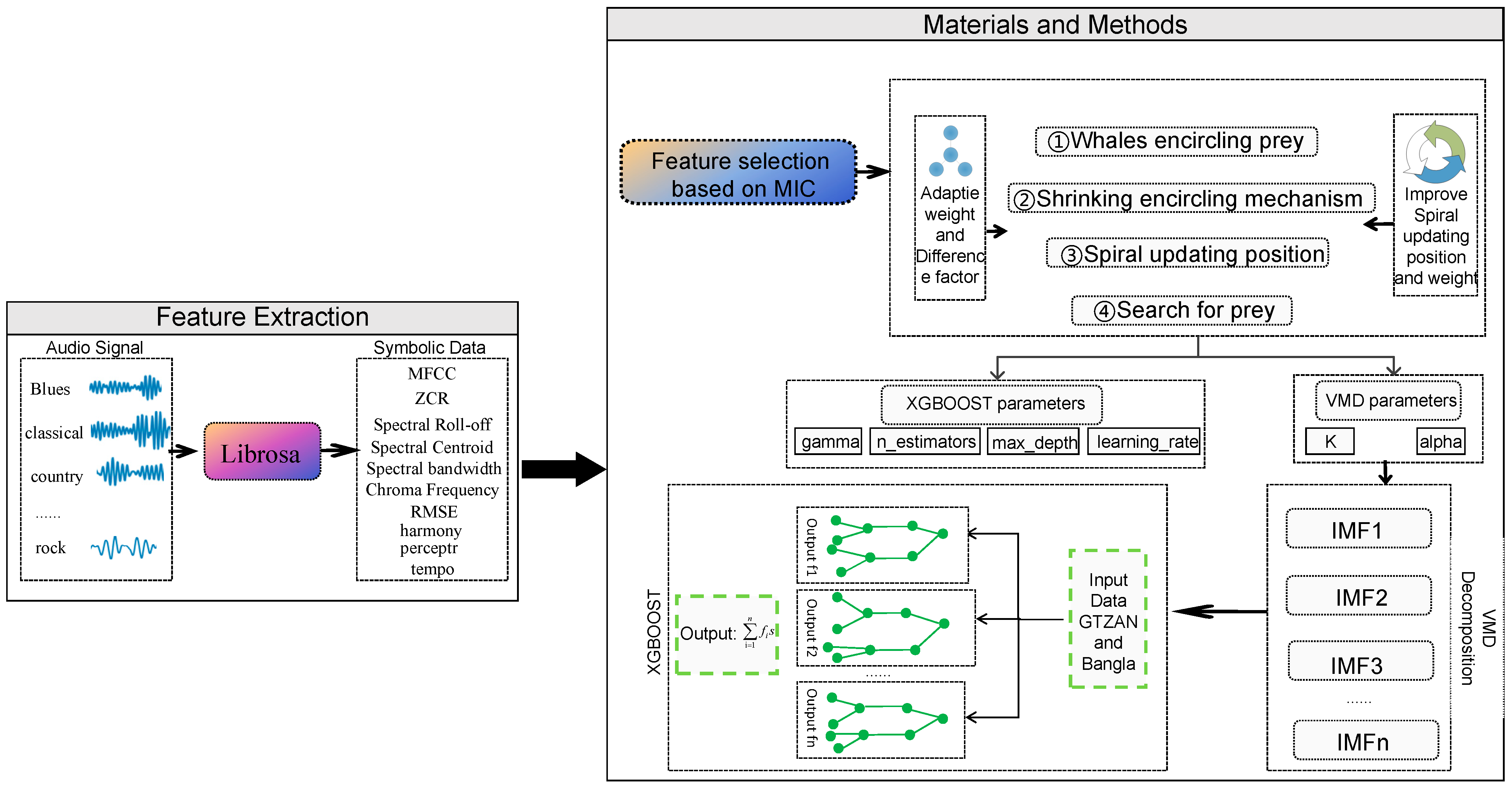

- A hybrid VMD-IWOA-XGBOOST model is proposed for music genre classification. MIC is used to select the features most strongly correlated with the genre label, VMD is used to extract the key information from these features, an Improved Whale Optimization Algorithm (IWOA) is proposed to tune the parameter settings, and XGBOOST is used as the classification model.

- An IWOA is proposed for parameter optimization. The IWOA refines the search process, the contracting-encircling mechanism, and the spiral position update; comparative analysis reveals its superiority.

2. Methodology

2.1. Feature Extraction

- (1)

- The zero-crossing rate (ZCR) is the rate at which the sign of a signal changes, i.e., the proportion of adjacent samples between which the signal passes from negative to positive or vice versa [26]. The ZCR is an important feature in speech recognition and music information retrieval, and it is defined as follows: $Z = \frac{1}{T-1} \sum_{t=1}^{T-1} \mathbb{1}\{s_t s_{t-1} < 0\}$ (1), where $s$ is the signal of length $T$, and the indicator function $\mathbb{1}\{A\}$ assigns a value of 1 when $A$ is true, and 0 otherwise.

- (2)

- The spectral centroid is a key physical parameter describing the timbre of a sound signal. It is the magnitude-weighted average frequency within a given frequency band, i.e., the center of gravity of the STFT magnitude spectrum, and it therefore summarizes how frequency and energy are distributed in the signal. It represents the brightness of the signal spectrum. It is defined as follows: $C_t = \frac{\sum_{n=1}^{N} M_t[n] \cdot n}{\sum_{n=1}^{N} M_t[n]}$, where $M_t[n]$ is the magnitude of the DFT (discrete Fourier transform) at frame $t$ and frequency bin $n$.

- (3)

- Spectral roll-off is the frequency below which a set percentage (typically 85%) of the total spectral magnitude of a frame is concentrated. It is another attribute used to characterize the spectral shape. Roll-off points are discriminative indicators in audio signals and help to distinguish sounds, including the timbre of different instruments. They are typically combined with other descriptors, such as MFCCs, the zero-crossing rate, and bandwidth measures, to improve audio processing tasks. The calculation formula is provided below: $\sum_{n=1}^{R_t} M_t[n] = 0.85 \sum_{n=1}^{N} M_t[n]$, where $R_t$ is the roll-off bin of frame $t$ and $M_t[n]$ is the DFT magnitude at frame $t$ and frequency bin $n$.

- (4)

- Spectral bandwidth refers to a fundamental parameter in signal processing and spectroscopy, representing the range of frequencies encompassed by a signal or a spectral distribution. It is calculated with the following formula:

- (5)

- Chroma frequency indicates the energy of each pitch class in a musical signal, providing a discriminative measure in cases where musical segments are otherwise very similar.

- (6)

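- The root-mean-square energy (RMSE) characterizes the energy of a signal. The energy is expressed in Equation (5), while its root-mean-square value is shown in Equation (6): $E = \sum_{n=1}^{N} |x(n)|^2$ (5), $\mathrm{RMSE} = \sqrt{\frac{1}{N} \sum_{n=1}^{N} |x(n)|^2}$ (6), where $x(n)$ denotes the discrete-time signal and $N$ is the number of samples.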

- (7)

- Most parameters of the Mel-frequency cepstral coefficients (MFCCs) are related to the amplitude of the frequency components. MFCCs are an important feature of audio signals and are used for rapid speech recognition [27]. Their equation is as follows:

- (8)

- A signal can be separated into harmonic and percussive components. The harmonic component reveals more horizontal, or pitch-dependent, changes in the spectrogram, whereas the percussive component shows more vertical, or time-dependent, changes. These features are generally obtained using a fast Fourier transform (FFT).

- (9)

- Tempo is a fundamental aspect of music theory and analysis, denoting the rate or speed at which a musical piece progresses, typically measured in beats per minute (BPM).
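Several of the frame-level features described above can be computed directly from a signal frame and its magnitude spectrum. The following is a minimal sketch using NumPy, not the authors' extraction pipeline: the function name `frame_features`, the use of a single frame without windowing, and the second-order (magnitude-weighted standard deviation) form of the spectral bandwidth are assumptions made for illustration.

```python
import numpy as np

def frame_features(frame, sample_rate, rolloff_percent=0.85):
    """Illustrative frame-level features (hypothetical helper, not from the paper)."""
    frame = np.asarray(frame, dtype=float)

    # Zero-crossing rate: fraction of adjacent sample pairs with opposite signs.
    zcr = float(np.mean(frame[1:] * frame[:-1] < 0))

    # Root-mean-square energy of the frame.
    rms = float(np.sqrt(np.mean(frame ** 2)))

    # One-sided magnitude spectrum and the frequency (Hz) of each bin.
    magnitude = np.abs(np.fft.rfft(frame))
    freqs = np.fft.rfftfreq(len(frame), d=1.0 / sample_rate)
    total = magnitude.sum()
    if total == 0:  # silent frame: spectral features are undefined, return zeros
        return {"zero_crossing_rate": zcr, "rms": rms, "spectral_centroid": 0.0,
                "spectral_bandwidth": 0.0, "spectral_rolloff": 0.0}

    # Spectral centroid: magnitude-weighted mean frequency.
    centroid = float(np.sum(freqs * magnitude) / total)

    # Spectral bandwidth (assumed second-order form): weighted spread around the centroid.
    bandwidth = float(np.sqrt(np.sum(magnitude * (freqs - centroid) ** 2) / total))

    # Spectral roll-off: lowest frequency at which the cumulative magnitude
    # reaches the chosen share (85% by default) of the total magnitude.
    cumulative = np.cumsum(magnitude)
    rolloff = float(freqs[np.searchsorted(cumulative, rolloff_percent * total)])

    return {"zero_crossing_rate": zcr, "rms": rms, "spectral_centroid": centroid,
            "spectral_bandwidth": bandwidth, "spectral_rolloff": rolloff}
```

For a pure tone, the centroid and roll-off both sit at the tone frequency and the bandwidth is close to zero, which makes a convenient sanity check before applying the function to real audio frames.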

2.2. The Maximal Information Coefficient

- (1)

- First, the samples of the two variables $X$ and $Y$ are arranged in ascending order. An $x \times y$ grid $G$ is then defined as a partition of the sorted samples, in which the values of $X$ are partitioned into $x$ parts and the values of $Y$ are partitioned into $y$ parts; some cells are allowed to be empty.

- (2)

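- The probability distribution over all cells of the grid $G$ is derived. The maximum mutual information obtained over all grids of this size is $I^*(D, x, y) = \max_G I(D|_G)$, and the corresponding entry of the characteristic matrix is $M(D)_{x,y} = I^*(D, x, y) / \log \min\{x, y\}$, with the mutual information given by Equation (8): $I(D|_G) = \sum_{i=1}^{x} \sum_{j=1}^{y} p(i, j) \log \frac{p(i, j)}{p(i)\, p(j)}$, $p(i, j) = \frac{n_{ij}}{n}$ (8), where $p(i, j)$ represents the joint probability of $X$ and $Y$ within cell $(i, j)$ of grid $G$; $p(i)$ and $p(j)$ denote the marginal distributions of $X$ and $Y$, respectively; $n_{ij}$ is the number of samples falling in the $i$th row and the $j$th column of the grid $G$; and $n$ is the total number of samples.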

- (3)

- Since different grids $G$ lead to different probability distributions $D|_G$, the maximal information coefficient (MIC) of the variables $X$ and $Y$ is obtained by exhaustively searching the characteristic matrix for the optimal grid: $\mathrm{MIC}(X, Y) = \max_{xy < B} \frac{\max_G I(D|_G)}{\log \min\{x, y\}}$, where $D|_G$ represents the probability distribution of all elements within the grid $G$, and $B$ is the maximum grid size for the exhaustive search.
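The three steps above can be sketched in a few lines of NumPy. This is a simplified illustration rather than the exact MIC procedure: instead of optimizing the cell boundaries of each grid, it only tries equal-frequency partitions, so it gives a lower bound on the true MIC. The function names, the rank-based binning, and the default bound of $n^{0.6}$ cells are assumptions made for the sketch.

```python
import numpy as np

def grid_mutual_information(x, y, nx, ny):
    """Mutual information of (x, y) on an nx-by-ny equal-frequency grid."""
    n = len(x)
    # Rank the samples (ascending order) and cut the ranks into equal-sized parts.
    x_bins = np.argsort(np.argsort(x)) * nx // n
    y_bins = np.argsort(np.argsort(y)) * ny // n
    counts = np.zeros((nx, ny))
    np.add.at(counts, (x_bins, y_bins), 1)      # n_ij: samples per grid cell
    p_xy = counts / n                           # joint distribution over cells
    p_x = p_xy.sum(axis=1, keepdims=True)       # marginal of X (rows)
    p_y = p_xy.sum(axis=0, keepdims=True)       # marginal of Y (columns)
    mask = p_xy > 0                             # empty cells contribute nothing
    return float(np.sum(p_xy[mask] * np.log(p_xy[mask] / (p_x @ p_y)[mask])))

def approximate_mic(x, y, max_cells=None):
    """Largest normalized mutual information over all grids with nx * ny < max_cells."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if max_cells is None:
        max_cells = len(x) ** 0.6               # assumed default for the bound B
    best = 0.0
    nx = 2
    while nx * 2 < max_cells:
        ny = 2
        while nx * ny < max_cells:
            mi = grid_mutual_information(x, y, nx, ny)
            best = max(best, mi / np.log(min(nx, ny)))
            ny += 1
        nx += 1
    return best
```

With this sketch, a feature that is a monotone function of the target scores close to 1 and an independent feature scores close to 0, which is the behavior the feature-screening step relies on.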

2.3. Variational Mode Decomposition

- (1)

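- The analytic signal of each mode is obtained by the Hilbert transform, which also yields its one-sided spectrum. The analytic signal of each decomposed mode component $u_k$ at time $t$ is: $\left(\delta(t) + \frac{j}{\pi t}\right) * u_k(t)$, where $\delta(t)$ is the unit-pulse function and $*$ denotes convolution.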

- (2)

- The analytic signal of each IMF component is multiplied by an exponential term tuned to the estimated center frequency, so that the spectrum of each decomposed IMF component is shifted to the corresponding frequency band: $\left[\left(\delta(t) + \frac{j}{\pi t}\right) * u_k(t)\right] e^{-j\omega_k t}$, where $\delta(t) + \frac{j}{\pi t}$ is the Hilbert transform operator and $e^{-j\omega_k t}$ is the correction factor.

- (3)

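- The constrained variational problem is constructed from the demodulated signal above by taking its partial derivative with respect to $t$ and estimating the bandwidth from the squared $L^2$ norm, as shown below: $\min_{\{u_k\}, \{\omega_k\}} \left\{ \sum_{k} \left\| \partial_t \left[ \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) \right] e^{-j\omega_k t} \right\|_2^2 \right\}$ subject to $\sum_{k} u_k(t) = f(t)$, where $\{u_k\}$ is the set of IMF components of the decomposition, $\{\omega_k\}$ denotes the set of center frequencies of the mode components, $\partial_t$ denotes the partial derivative with respect to $t$, $\delta(t)$ denotes the unit-pulse function, $*$ denotes the convolution operation, $f(t)$ denotes the original signal, and $\|\cdot\|_2$ denotes the $L^2$ norm.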

- (4)

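- To transform the constrained variational problem into an unconstrained one, the Lagrange multiplier $\lambda$ and the quadratic penalty factor $\alpha$ are introduced, so that the original problem becomes that of finding the saddle point of the augmented Lagrangian, which has the following expression: $L(\{u_k\}, \{\omega_k\}, \lambda) = \alpha \sum_{k} \left\| \partial_t \left[ \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) \right] e^{-j\omega_k t} \right\|_2^2 + \left\| f(t) - \sum_{k} u_k(t) \right\|_2^2 + \left\langle \lambda(t), f(t) - \sum_{k} u_k(t) \right\rangle$, where $\lambda$ denotes the Lagrange multiplier, $\alpha$ denotes the quadratic penalty factor, and $\langle \cdot, \cdot \rangle$ denotes the inner product.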

- (5)

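- The optimal solution of the constrained variational model is found by updating $u_k$, $\omega_k$, and $\lambda$ in the frequency domain using the alternating direction method of multipliers (ADMM). The update equations are shown below: $\hat{u}_k^{n+1}(\omega) = \frac{\hat{f}(\omega) - \sum_{i \neq k} \hat{u}_i(\omega) + \hat{\lambda}(\omega)/2}{1 + 2\alpha(\omega - \omega_k)^2}$, $\omega_k^{n+1} = \frac{\int_0^{\infty} \omega \left| \hat{u}_k^{n+1}(\omega) \right|^2 d\omega}{\int_0^{\infty} \left| \hat{u}_k^{n+1}(\omega) \right|^2 d\omega}$, $\hat{\lambda}^{n+1}(\omega) = \hat{\lambda}^{n}(\omega) + \tau \left( \hat{f}(\omega) - \sum_{k} \hat{u}_k^{n+1}(\omega) \right)$, with the termination condition $\sum_{k} \frac{\left\| \hat{u}_k^{n+1} - \hat{u}_k^{n} \right\|_2^2}{\left\| \hat{u}_k^{n} \right\|_2^2} < \varepsilon$ (17), where $n$ is the number of iterations; $\hat{u}_k(\omega)$, $\hat{f}(\omega)$, and $\hat{\lambda}(\omega)$ are the Fourier transforms of $u_k(t)$, $f(t)$, and $\lambda(t)$, respectively; $\hat{u}_k^{n+1}(\omega)$ is the Wiener filtering of each IMF component after the Fourier transform; $\tau$ is the noise tolerance limit; $\omega_k^{n+1}$ is the center frequency of the $k$th mode component at the $(n+1)$th iteration; and $\varepsilon$ is the set threshold of convergence accuracy. Using Equations (12)–(14), $\hat{u}_k$, $\omega_k$, and $\hat{\lambda}$ are continuously updated until the termination condition of Equation (17) is satisfied; then, the iteration is terminated.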
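The frequency-domain updates in step (5) translate almost line by line into code. The sketch below is a simplified illustration and not the authors' implementation: it works on the non-negative half of the spectrum via `numpy.fft.rfft`, omits the signal mirroring used in reference VMD implementations, measures frequency in cycles per sample, initializes the center frequencies uniformly, and adds a small constant to denominators to avoid division by zero. The function name `vmd_sketch` and all default values are assumptions.

```python
import numpy as np

def vmd_sketch(signal, num_modes, alpha=2000.0, tau=0.0, tol=1e-7, max_iter=500):
    """Simplified VMD: returns (modes, center_frequencies in cycles/sample)."""
    signal = np.asarray(signal, dtype=float)
    n_samples = len(signal)
    f_hat = np.fft.rfft(signal)                  # one-sided spectrum of f
    freqs = np.fft.rfftfreq(n_samples)           # normalized frequencies, 0 .. 0.5
    u_hat = np.zeros((num_modes, len(freqs)), dtype=complex)
    omega = 0.5 * np.arange(num_modes) / num_modes   # assumed uniform initialization
    lam_hat = np.zeros(len(freqs), dtype=complex)

    for _ in range(max_iter):
        u_prev = u_hat.copy()
        for k in range(num_modes):
            # Wiener-filter update of mode k against the residual of the other modes.
            others = u_hat.sum(axis=0) - u_hat[k]
            u_hat[k] = (f_hat - others + lam_hat / 2) / (
                1 + 2 * alpha * (freqs - omega[k]) ** 2)
            # Center frequency: power-weighted mean frequency of the updated mode.
            power = np.abs(u_hat[k]) ** 2
            omega[k] = np.sum(freqs * power) / (np.sum(power) + 1e-12)
        # Dual ascent on the multiplier; tau = 0 disables exact reconstruction.
        lam_hat = lam_hat + tau * (f_hat - u_hat.sum(axis=0))
        # Relative change of all modes, used as the termination condition.
        change = sum(
            np.sum(np.abs(u_hat[k] - u_prev[k]) ** 2)
            / (np.sum(np.abs(u_prev[k]) ** 2) + 1e-12)
            for k in range(num_modes))
        if change < tol:
            break

    modes = np.fft.irfft(u_hat, n=n_samples, axis=1)  # back to the time domain
    return modes, omega
```

On a sum of two well-separated sinusoids, this sketch recovers one sinusoid per mode and center frequencies close to the true ones. The number of modes and the penalty factor `alpha` are the two VMD parameters that have to be chosen in advance.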

2.4. Improved Whale Optimization Algorithm

- (1)

- Adaptive weighting

- (2)

- Variable helix position

- (3)

- Differential variance scale factor

2.5. XGBOOST

2.6. The Proposed VMD-IWOA-XGBOOST Model

3. Experiment

3.1. Data Set

3.2. Evaluation Criteria

3.3. Parameter Settings

3.4. Experiment Results

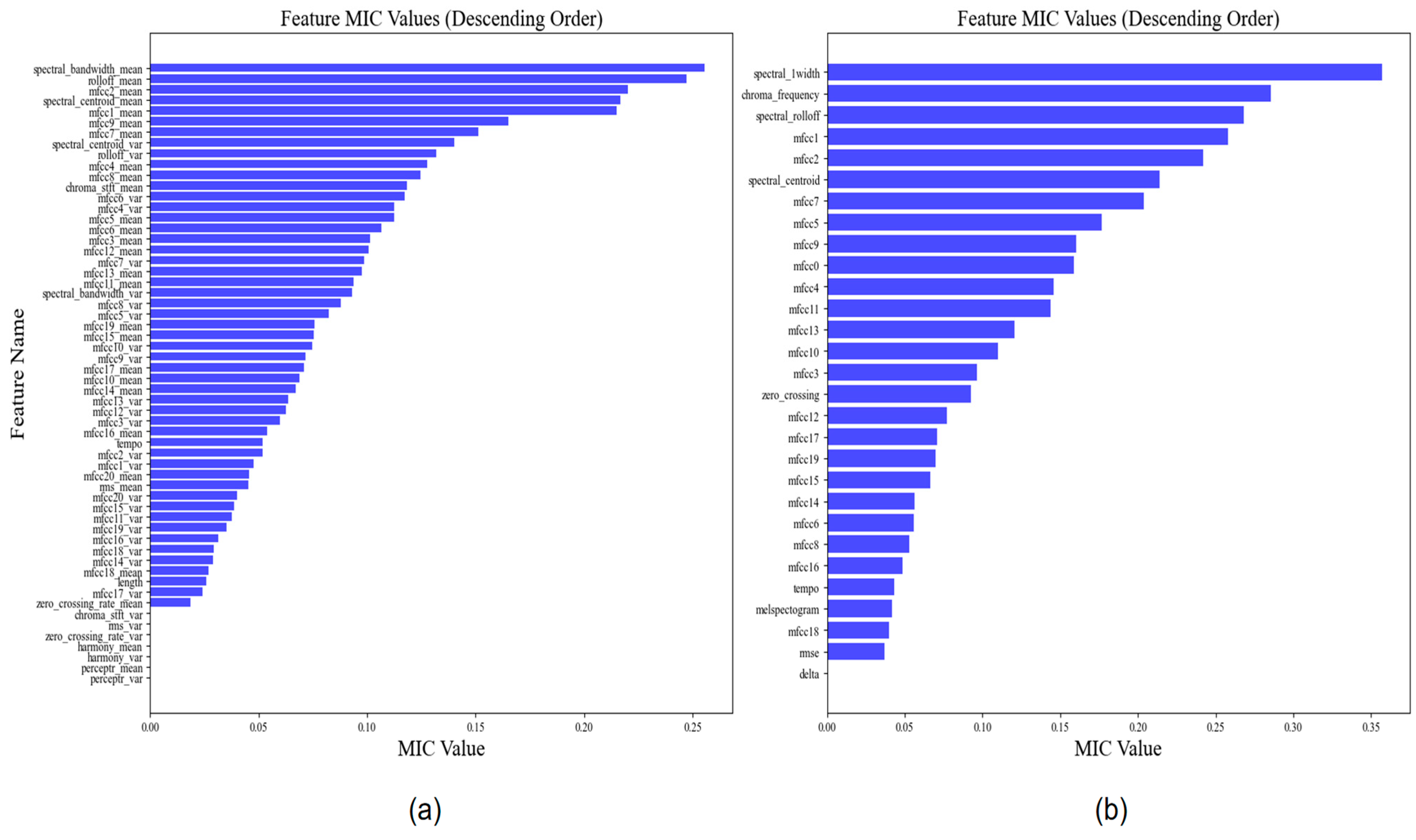

3.4.1. Feature Selection Results

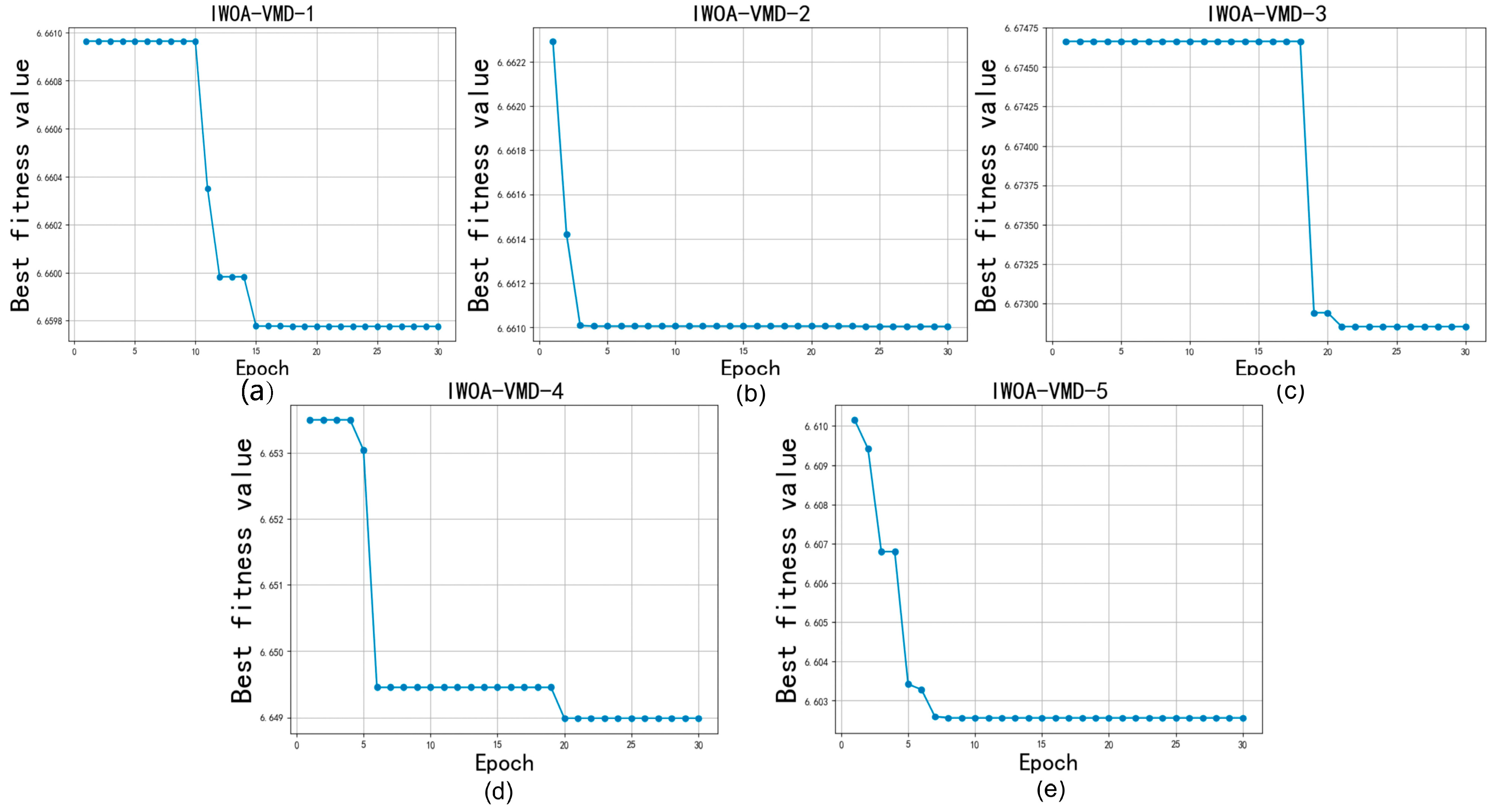

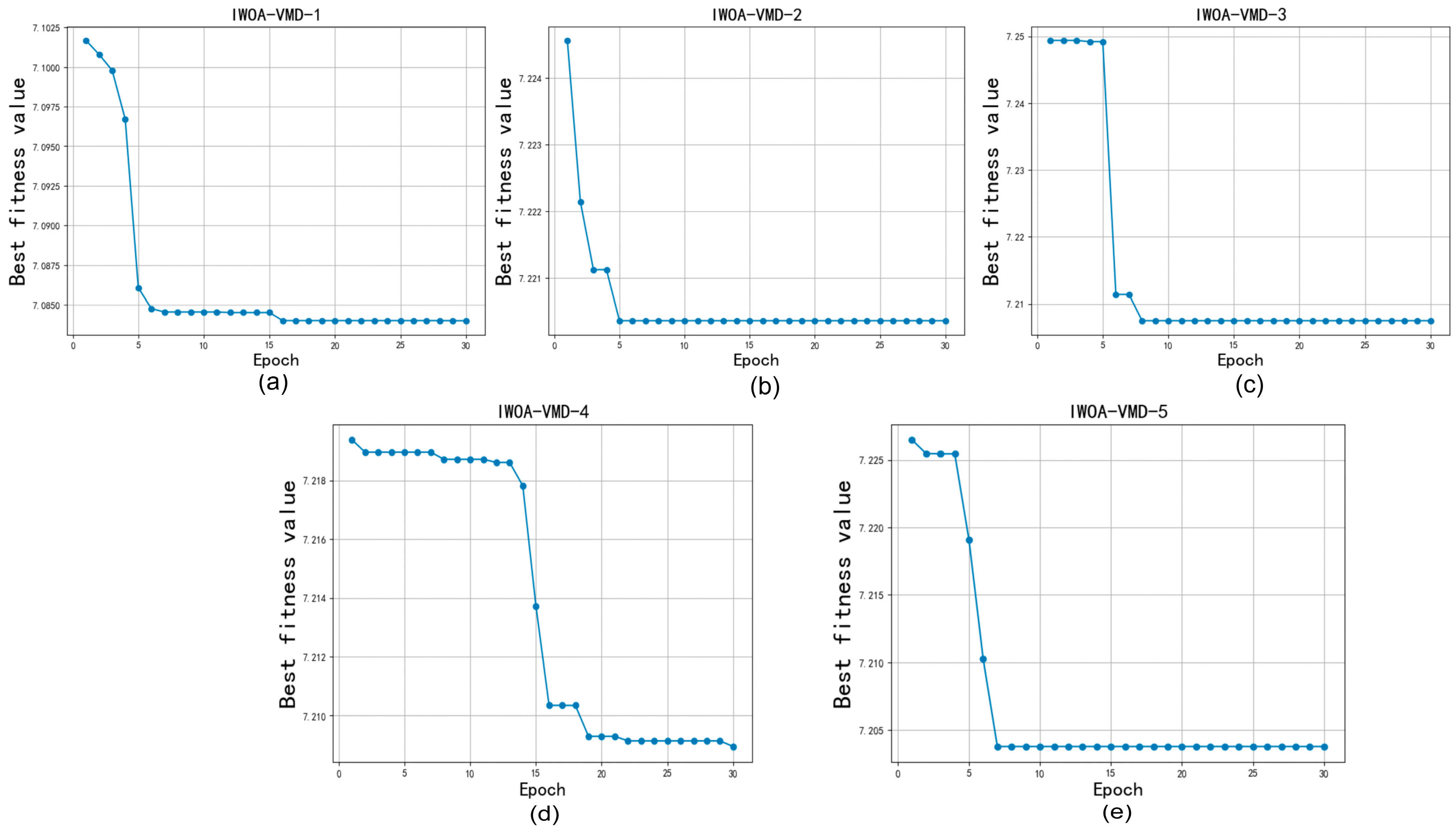

3.4.2. Decomposition Results

3.4.3. Analysis of Classification Results

4. Conclusions

- A hybrid VMD-IWOA-XGBOOST model is proposed for music genre classification. MIC is used to select the five most highly correlated features, the signal decomposition technique VMD is used to extract the key information from these features, the IWOA is used for parameter optimization, and XGBOOST is used as the classification model.

- An IWOA is developed for parameter optimization by refining the search process, the contracting-encircling mechanism, and the spiral position update. Comparative analysis reveals that the IWOA outperforms the WOA in terms of four evaluation metrics.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Campobello, G.; Dell’Aquila, D.; Russo, M.; Segreto, A. Neuro-genetic programming for multigenre classification of music content. Appl. Soft Comput. 2020, 94, 106488.

- Oramas, S.; Barbieri, F.; Nieto, O.; Serra, X. Multimodal deep learning for music genre classification. Trans. Int. Soc. Music. Inf. Retr. 2018, 1, 4–21.

- Xie, C.; Song, H.; Zhu, H.; Mi, K.; Li, Z.; Zhang, Y.; Cheng, J.; Zhou, H.; Li, R.; Cai, H. Music genre classification based on res-gated CNN and attention mechanism. Multimed. Tools Appl. 2023, 83, 13527–13542.

- Qiu, L.; Li, S.; Sung, Y. DBTMPE: Deep Bidirectional Transformers-Based Masked Predictive Encoder Approach for Music Genre Classification. Mathematics 2021, 9, 530.

- Nag, S.; Basu, M.; Sanyal, S.; Banerjee, A.; Ghosh, D. On the application of deep learning and multifractal techniques to classify emotions and instruments using Indian Classical Music. Phys. A Stat. Mech. Its Appl. 2022, 597, 127261.

- Costa, Y.M.; Oliveira, L.S.; Silla, C.N. An evaluation of Convolutional Neural Networks for music classification using spectrograms. Appl. Soft Comput. 2017, 52, 28–38.

- Yu, Y.; Luo, S.; Liu, S.; Qiao, H.; Liu, Y.; Feng, L. Deep attention based music genre classification. Neurocomputing 2020, 372, 84–91.

- Cheng, Y.-H.; Kuo, C.-N. Machine Learning for Music Genre Classification Using Visual Mel Spectrum. Mathematics 2022, 10, 4427.

- Almazaydeh, L.; Atiewi, S.; Al Tawil, A.; Elleithy, K. Arabic music genre classification using deep convolutional neural networks (CNNS). Comput. Mater. Contin. 2022, 72, 5443–5458.

- Costa, Y.M.G.; Oliveira, L.S.; Koerich, A.L.; Gouyon, F.; Martins, J.G. Music genre classification using LBP textural features. Signal Process. 2012, 92, 2723–2737.

- Gan, J. Music Feature Classification Based on Recurrent Neural Networks with Channel Attention Mechanism. Mob. Inf. Syst. 2021, 2021, 7629994.

- Kumaraswamy, B. Optimized deep learning for genre classification via improved moth flame algorithm. Multimed. Tools Appl. 2022, 81, 17071–17093.

- Wang, H.; Siti, S.; Chen, Z.; Shan, Q.; Ren, L. An intelligent music genre analysis using feature extraction and classification using deep learning techniques. Comput. Electr. Eng. 2022, 100, 107978.

- Tian, R.; Yin, R.; Gan, F. Music sentiment classification based on an optimized CNN-RF-QPSO model. Data Technol. Appl. 2023, 57, 719–733.

- Li, J.; Han, L.; Wang, Y.; Yuan, B.; Yuan, X.; Yang, Y.; Yan, H. Combined angular margin and cosine margin softmax loss for music classification based on spectrograms. Neural Comput. Appl. 2022, 34, 10337–10353.

- Chudy, M.; Nawrocka-Wysocka, A.; Łukasik, E.; Kuśmierek, E.; Parkoła, T. Incorporating symbolic representations of traditional music into a digital library. In Proceedings of the 10th International Conference on Digital Libraries for Musicology (DLfM ’23), Milan, Italy, 10 November 2023; Association for Computing Machinery: New York, NY, USA, 2023; pp. 30–34.

- Tzanetakis, G.; Ermolinskyi, A.; Cook, P. Pitch histograms in audio and symbolic music information retrieval. J. New Music Res. 2003, 32, 143–152.

- Karydis, I. Symbolic Music Genre Classification Based on Note Pitch and Duration. In Advances in Databases and Information Systems. ADBIS 2006; Lecture Notes in Computer Science; Manolopoulos, Y., Pokorný, J., Sellis, T.K., Eds.; Springer: Berlin/Heidelberg, Germany, 2006; Volume 4152.

- McKay, C.; Fujinaga, I. Automatic genre classification using large high-level musical feature sets. In Proceedings of the 5th International Symposium on Music Information Retrieval, Barcelona, Spain, 10–14 October 2004; pp. 1–6.

- Valverde-Rebaza, J.; Soriano, A.; Berton, L.; de Oliveira, M.C.F.; De Andrade Lopes, A. Music Genre Classification Using Traditional and Relational Approaches. In Proceedings of the 2014 Brazilian Conference on Intelligent Systems, Sao Paulo, Brazil, 18–22 October 2014; pp. 259–264.

- Lee, J.; Lee, M.; Jang, D.; Yoon, K. Korean Traditional Music Genre Classification Using Sample and MIDI Phrases. KSII Trans. Internet Inf. Syst. 2018, 12, 1869–1886.

- Qiu, L.; Li, S.; Sung, Y. 3D-DCDAE: Unsupervised Music Latent Representations Learning Method Based on a Deep 3D Convolutional Denoising Autoencoder for Music Genre Classification. Mathematics 2021, 9, 2274.

- Cheng, Y.-H.; Chang, P.-C.; Kuo, C.-N. Convolutional Neural Networks Approach for Music Genre Classification. In Proceedings of the 2020 International Symposium on Computer, Consumer and Control (IS3C), Taichung City, Taiwan, 13–16 November 2020; pp. 399–403.

- Folorunso, S.O.; Afolabi, S.A.; Owodeyi, A.B. Dissecting the genre of Nigerian music with machine learning models. J. King Saud Univ.-Comput. Inf. Sci. 2022, 34, 6266–6279.

- Tzanetakis, G.; Cook, P. Musical genre classification of audio signals. IEEE Trans. Speech Audio Process. 2002, 10, 293–302.

- Al Mamun, M.A.; Kadir, I.; Rabby, A.S.A.; Al Azmi, A. Bangla Music Genre Classification Using Neural Network. In Proceedings of the 2019 8th International Conference System Modeling and Advancement in Research Trends (SMART), Moradabad, India, 22–23 November 2019; pp. 397–403.

- Abou-Abbas, L.; Tadj, C.; Fersaie, H.A. A fully automated approach for baby cry signal segmentation and boundary detection of expiratory and inspiratory episodes. J. Acoust. Soc. Am. 2017, 142, 1318.

- Reshef, D.N.; Reshef, Y.A.; Finucane, H.K.; Grossman, S.R.; McVean, G.; Turnbaugh, P.J.; Lander, E.S.; Mitzenmacher, M.; Sabeti, P.C. Detecting Novel Associations in Large Data Sets. Science 2011, 334, 1518–1524.

- Dragomiretskiy, K.; Zosso, D. Variational Mode Decomposition. IEEE Trans. Signal Process. 2014, 62, 531–544.

- Mirjalili, S.; Lewis, A. The Whale Optimization Algorithm. Adv. Eng. Softw. 2016, 95, 51–67.

- Adilaxmi, M.; Bhargavi, D.; Phaneendra, K. Numerical Solution of Singularly Perturbed Differential-Difference Equations using Multiple Fitting Factors. Commun. Math. Appl. 2019, 10, 681–691.

- Chen, T.; Guestrin, C. XGBoost: A Scalable Tree Boosting System. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD ’16), San Francisco, CA, USA, 13–17 August 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 785–794.

| Model | Parameters | Values |
|---|---|---|
| BP | epoch, batch_size | 10,000, 512 |
| LSTM | epoch, batch_size | 10,000, 512 |
| AdaBoost | n_estimators, learning_rate | 100, 0.01 |
| GBDT | n_estimators, learning_rate, max_depth | 100, 0.01, 5 |
| XGBOOST | gamma, n_estimators, learning_rate, max_depth | 0, 100, 0.01, 5 |
| RF | n_estimators, max_depth, min_samples_leaf | 100, 5, 2 |
| WOA-XGBOOST | gamma, n_estimators, learning_rate, max_depth | [0, 10], [50, 5000], [0.01, 0.5], [1, 20] |
| VMD-IWOA-XGBOOST | K, alpha, gamma, n_estimators, learning_rate, max_depth | [3, 100], [100, 25,000], [0, 10], [50, 5000], [0.01, 0.5], [1, 20] |
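The search ranges listed for VMD-IWOA-XGBOOST in the table above define the space that a population-based optimizer explores. A common way to handle such a space is to let each candidate position live in the unit hypercube and decode it into concrete parameter values before training. The sketch below illustrates this idea only; the names `SEARCH_SPACE` and `decode_position`, the unit-cube encoding, and the choice of which parameters are rounded to integers are assumptions, not details taken from the paper.

```python
# Search ranges from the parameter table; the integer/float split is an assumption.
SEARCH_SPACE = {
    "K": (3, 100, int),                  # number of VMD modes
    "alpha": (100, 25000, float),        # VMD quadratic penalty factor
    "gamma": (0.0, 10.0, float),         # XGBoost minimum split loss
    "n_estimators": (50, 5000, int),     # number of boosting rounds
    "learning_rate": (0.01, 0.5, float),
    "max_depth": (1, 20, int),
}

def decode_position(position):
    """Map a position in [0, 1]^6 (one coordinate per parameter) to parameter values."""
    if len(position) != len(SEARCH_SPACE):
        raise ValueError("position must have one coordinate per parameter")
    params = {}
    for value, (name, (low, high, kind)) in zip(position, SEARCH_SPACE.items()):
        clipped = min(max(float(value), 0.0), 1.0)   # keep candidates inside the bounds
        scaled = low + clipped * (high - low)
        params[name] = int(round(scaled)) if kind is int else float(scaled)
    return params
```

For example, `decode_position([0.0] * 6)` returns the lower bound of every range, and coordinates that drift outside the unit interval during the search are clipped back to the nearest bound.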

| Data | Features | Weight |
|---|---|---|
| GTZAN | spectral_bandwidth_mean | 0.2556 |
| | rolloff_mean | 0.2473 |
| | mfcc2_mean | 0.2201 |
| | spectral_centroid_mean | 0.2165 |
| | mfcc1_mean | 0.2150 |
| | mfcc9_mean | 0.1650 |
| | mfcc7_mean | 0.1512 |
| | spectral_centroid_var | 0.1403 |
| | rolloff_var | 0.1318 |
| | mfcc4_mean | 0.1275 |
| | mfcc8_mean | 0.1244 |
| | chroma_stft_mean | 0.1183 |
| | mfcc6_var | 0.1172 |
| | mfcc4_var | 0.1125 |
| | mfcc5_mean | 0.1123 |
| | mfcc6_mean | 0.1066 |
| | mfcc3_mean | 0.1012 |
| | mfcc12_mean | 0.1006 |
| | mfcc7_var | 0.0984 |
| | mfcc13_mean | 0.0973 |
| | mfcc11_mean | 0.0938 |
| | spectral_bandwidth_var | 0.0929 |
| | mfcc8_var | 0.0876 |
| | mfcc5_var | 0.0823 |
| | mfcc19_mean | 0.0755 |
| | mfcc15_mean | 0.0754 |
| | mfcc10_var | 0.0747 |
| | mfcc9_var | 0.0714 |
| | mfcc17_mean | 0.0709 |
| | mfcc10_mean | 0.0688 |
| | mfcc14_mean | 0.0672 |
| | mfcc13_var | 0.0636 |
| | mfcc12_var | 0.0624 |
| | mfcc3_var | 0.0598 |
| | mfcc16_mean | 0.0539 |
| | tempo | 0.0519 |
| | mfcc2_var | 0.0518 |
| | mfcc1_var | 0.0475 |
| | mfcc20_mean | 0.0456 |
| | rms_mean | 0.0450 |
| | mfcc20_var | 0.0401 |
| | mfcc15_var | 0.0387 |
| | mfcc11_var | 0.0377 |
| | mfcc19_var | 0.0352 |
| | mfcc16_var | 0.0313 |
| | mfcc18_var | 0.0294 |
| | mfcc14_var | 0.0290 |
| | mfcc18_mean | 0.0270 |
| | length | 0.0256 |
| | mfcc17_var | 0.0241 |
| | zero_crossing_rate_mean | 0.0184 |
| | chroma_stft_var | 0 |
| | rms_var | 0 |
| | zero_crossing_rate_var | 0 |
| | harmony_mean | 0 |
| | harmony_var | 0 |
| | perceptr_mean | 0 |
| | perceptr_var | 0 |
| Bangla | spectral_bandwidth | 0.3569 |
| | chroma_frequency | 0.2854 |
| | spectral_rolloff | 0.2682 |
| | mfcc1 | 0.2579 |
| | mfcc2 | 0.2421 |
| | spectral_centroid | 0.2141 |
| | mfcc7 | 0.2038 |
| | mfcc5 | 0.1764 |
| | mfcc9 | 0.1603 |
| | mfcc0 | 0.1586 |
| | mfcc4 | 0.1456 |
| | mfcc11 | 0.1437 |
| | mfcc13 | 0.1204 |
| | mfcc10 | 0.1099 |
| | mfcc3 | 0.0965 |
| | zero_crossing | 0.0923 |
| | mfcc12 | 0.0771 |
| | mfcc17 | 0.0710 |
| | mfcc19 | 0.0697 |
| | mfcc15 | 0.0664 |
| | mfcc14 | 0.0564 |
| | mfcc6 | 0.0555 |
| | mfcc8 | 0.0528 |
| | mfcc16 | 0.0483 |
| | tempo | 0.0434 |
| | melspectogram | 0.0415 |
| | mfcc18 | 0.0396 |
| | rmse | 0.0370 |
| | delta | 0 |

| Data | Model | Accuracy | MCC | Macro-Precision | Macro-Recall | Macro-F1-Score |
|---|---|---|---|---|---|---|
| GTZAN | AdaBoost | 0.335 | 0.265 | 0.276 | 0.319 | 0.271 |
| | BP | 0.625 | 0.588 | 0.648 | 0.639 | 0.641 |
| | LSTM | 0.645 | 0.660 | 0.661 | 0.653 | 0.647 |
| | GBDT | 0.640 | 0.601 | 0.649 | 0.661 | 0.642 |
| | RF | 0.655 | 0.620 | 0.687 | 0.673 | 0.659 |
| | XGBOOST | 0.665 | 0.630 | 0.678 | 0.686 | 0.665 |
| | WOA-XGBOOST | 0.785 | 0.760 | 0.787 | 0.796 | 0.790 |
| | VMD-IWOA-XGBOOST | 0.855 | 0.844 | 0.854 | 0.866 | 0.855 |
| Bangla | AdaBoost | 0.438 | 0.339 | 0.427 | 0.447 | 0.405 |
| | BP | 0.647 | 0.583 | 0.637 | 0.638 | 0.636 |
| | LSTM | 0.679 | 0.643 | 0.645 | 0.669 | 0.667 |
| | GBDT | 0.679 | 0.616 | 0.677 | 0.676 | 0.673 |
| | RF | 0.653 | 0.585 | 0.652 | 0.652 | 0.648 |
| | XGBOOST | 0.689 | 0.618 | 0.674 | 0.675 | 0.672 |
| | WOA-XGBOOST | 0.767 | 0.720 | 0.759 | 0.759 | 0.759 |
| | VMD-IWOA-XGBOOST | 0.785 | 0.742 | 0.782 | 0.782 | 0.780 |
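The five metrics reported in the table above can all be derived from a multi-class confusion matrix. The following sketch shows one standard way to compute them; it is not the authors' evaluation code, and it assumes that rows index the true classes, columns index the predicted classes, and macro-F1 is the unweighted mean of the per-class F1 scores.

```python
import numpy as np

def classification_metrics(confusion):
    """Accuracy, multi-class MCC, and macro precision/recall/F1 from a confusion matrix."""
    confusion = np.asarray(confusion, dtype=float)
    total = confusion.sum()
    diag = np.diag(confusion)                 # correctly classified samples per class
    true_counts = confusion.sum(axis=1)       # samples per true class (rows)
    pred_counts = confusion.sum(axis=0)       # samples per predicted class (columns)

    # Per-class scores; classes with an empty denominator get a score of 0.
    precision = np.divide(diag, pred_counts, out=np.zeros_like(diag),
                          where=pred_counts > 0)
    recall = np.divide(diag, true_counts, out=np.zeros_like(diag),
                       where=true_counts > 0)
    pr_sum = precision + recall
    f1 = np.divide(2 * precision * recall, pr_sum, out=np.zeros_like(diag),
                   where=pr_sum > 0)

    # Multi-class Matthews correlation coefficient.
    numerator = diag.sum() * total - np.dot(pred_counts, true_counts)
    denominator = np.sqrt((total ** 2 - np.dot(pred_counts, pred_counts))
                          * (total ** 2 - np.dot(true_counts, true_counts)))
    mcc = numerator / denominator if denominator > 0 else 0.0

    return {
        "accuracy": float(diag.sum() / total),
        "mcc": float(mcc),
        "macro_precision": float(precision.mean()),
        "macro_recall": float(recall.mean()),
        "macro_f1": float(f1.mean()),
    }
```

Unlike accuracy, the MCC uses every cell of the confusion matrix, so it stays informative when the classes are imbalanced; this is why it is usually reported alongside the macro-averaged scores.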

| Data | Model | Running Time (s) | p-Values |
|---|---|---|---|
| GTZAN | AdaBoost | 6.675 | 0.000 |
| | BP | 329.710 | 0.027 |
| | LSTM | 1422.501 | 0.010 |
| | GBDT | 90.445 | 0.003 |
| | RF | 4.588 | 0.003 |
| | XGBOOST | 4.742 | 0.011 |
| | WOA-XGBOOST | 2382.100 | 0.350 |
| | VMD-IWOA-XGBOOST | 1017.203 | / |
| Bangla | AdaBoost | 6.125 | 0.000 |
| | BP | 448.431 | 0.556 |
| | LSTM | 2104.9 | 0.041 |
| | GBDT | 55.12 | 0.044 |
| | RF | 4.322 | 0.001 |
| | XGBOOST | 5.235 | 0.037 |
| | WOA-XGBOOST | 2879 | 0.376 |
| | VMD-IWOA-XGBOOST | 1854.5 | / |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Gan, R.; Huang, T.; Shao, J.; Wang, F. Music Genre Classification Based on VMD-IWOA-XGBOOST. Mathematics 2024, 12, 1549. https://doi.org/10.3390/math12101549