1. Introduction

A Massive Open Online Course (MOOC) is usually a free online course that is open globally to anyone who wishes to enroll. It typically offers downloadable video and text-based instructional content, quizzes, assignments, and forums through an online platform. MOOCs are accessible to anyone who plans to take a course or to study a particular subject area [1]. MOOCs differ from most online courses in that they are normally time-based, so that cohorts of students complete the course over the same time period.

Two pioneers of online learning in Canada, George Siemens and Stephen Downes, created and taught the first course that could be classified as a MOOC [1]. In 2008, Siemens and Downes taught the course “Connectivism and Connected Knowledge (CCK08)” to 25 students at the University of Manitoba. ‘Connectivism’ is an educational theory proposed by George Siemens that highlights the importance of connections between people and knowledge [2]. CCK08 was a traditional fee-paying course, but Siemens and Downes decided to open it to anyone who wished to join online [2]. About 2200 additional students joined the course online.

Between 2008 and 2012, MOOCs neither caught the attention of mainstream media nor found much adoption among educational institutions [3]. The course credited with sparking the explosion in use of the term “MOOC” was “CS 271: Introduction to Artificial Intelligence”. This course was taught in 2012 by Sebastian Thrun, a professor at Stanford University, and Peter Norvig, the Director of Research at Google [4]. CS 271 was a regular credit-bearing course at Stanford University, but Thrun and Norvig used a learning management system (LMS) to host short videos, quizzes, tests, and discussion boards for people who wanted access to the course materials. Eventually, anyone interested in the course, students and non-students alike, had access to the content [5]. Participants were not required to create and maintain active student-student and student-tutor connections [6]. By the time the course ended, there were 30 regular students and about 160,000 online participants [7]. CS 271 greatly popularized MOOCs, which led to the creation of a range of MOOC platforms such as Coursera, Udacity, and edX [8].

CCK08 and CS 271 influenced the categorization of MOOCs into two major types: the cMOOC and the xMOOC, respectively [1]. The cMOOC (exemplified by CCK08) was created based on the learning theory of Connectivism, whose principles were developed by George Siemens [9]. Connectivist theory proposes that people learn through active connections with others; through these interactions, knowledge is transferred and learning is achieved. There is no tutor as such; the course instructor serves only as a moderator who ensures that the course meets the objectives for which it was designed [9]. cMOOCs can be considered extensions of Personal Learning Environments (PLEs) and Personal Learning Networks (PLNs) [10].

The xMOOC (exemplified by CS 271) falls into the cognitive-behaviorist pedagogical classification; first and foremost, it is an existing course made available online [11]. It resembles the traditional pedagogical model: one or more instructors transmit knowledge via downloadable videos and text files. In addition, the course contains quizzes, assignments, and online group forums. Popular MOOC platforms such as Coursera and edX offer xMOOCs, which has made xMOOCs more popular than cMOOCs [12].

Despite the huge growth in the number of MOOCs and a proportionate growth in enrolment numbers, low participation in MOOCs after enrolment, as well as low course completion, has been widely criticized [2]. Leading MOOC platforms such as Coursera and edX have typical completion rates of less than 13 percent of those who registered for a course before it started [13]. Several MOOCs have completion rates as low as 4 or 5 percent [13]. For example, the University of Edinburgh ran six MOOCs in 2013, with over 600,000 students registering for the courses. After the courses had ended, Statements of Accomplishment (which show that the learner has seen most or all of the MOOC content) were given out to only 30,000 students, which represented 12 percent of the total number of students who registered [2]. In another instance, 12,725 students registered for Duke University’s Bioelectricity MOOC. Of these, only 7761 watched a video and only 3658 took at least one quiz, while 313 passed the course with a certificate, a dropout rate of about 97 percent [13]. A study by Zhang and Yuan [14] of 79,186 users and 39 courses on the Chinese XuetangX MOOC platform showed dropout rates of between 66.09% and 92.93%. Between 2012 and 2015, more than 25 million people worldwide enrolled in MOOCs; however, reports indicate that only a small percentage of them, approximately 10%, completed the courses [15].
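The funnel figures quoted above for Duke University’s Bioelectricity MOOC [13] can be checked with a few lines of arithmetic. The sketch below computes the share of registrants who reached each stage and the resulting dropout rate; the stage labels are descriptive names chosen for this illustration, not terms from the cited source.

```python
# Engagement funnel for the Duke Bioelectricity MOOC, using the figures cited in the text.
# Stage labels are illustrative names chosen for this sketch.
funnel = [
    ("registered", 12725),
    ("watched a video", 7761),
    ("took at least one quiz", 3658),
    ("earned a certificate", 313),
]

registered = funnel[0][1]
for stage, count in funnel:
    # Share of all registrants who reached this stage of the funnel.
    print(f"{stage:<24} {count:>6}  {count / registered:6.1%}")

# Completion = certificates / registrations; dropout is its complement.
completion_rate = funnel[-1][1] / registered
dropout_rate = 1 - completion_rate
print(f"completion rate: {completion_rate:.1%}, dropout rate: {dropout_rate:.1%}")
```

Measured against registrations, the completion rate is about 2.5 percent, which corresponds to a dropout rate of about 97.5 percent, in line with the "about 97 percent" quoted above.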

Generally, MOOC completion can be described as performing all the activities necessary to earn a certificate [2]; however, MOOC completion remains a controversial topic. Some authors have argued that people enroll in MOOCs for various reasons, not primarily to complete them, so completion cannot be measured only by whether a student performs all the tasks required to earn a certificate. For instance, Porter [2] argues that the issue of MOOC completion must be re-examined by investigating the expectations and priorities of the target group of participants before analyzing completion figures [2]. Zheng et al. [16] also argue that there are four general types of student motivation for joining MOOCs: fulfilling current needs, preparing for the future, satisfying curiosity, and connecting with people; hence, course completion may not be the ultimate aim of joining a MOOC [16].

Some authors have also argued that students’ intrinsic motivation should not be seen as the only important factor influencing MOOC usage and subsequent completion; course design is also an important factor. For instance, Porter [2] states that the design of the learning environment in online educational systems is important in influencing usage; it encompasses the design of the learning platform as well as the form of support and encouragement given to the learner [2]. Encouragement could take the form of weekly emails reminding learners of their progress in the course and highlighting the progress made by their peers [2]. Cole [17] also argues that, to improve learner retention, online learning materials should be designed properly [17].

The MOOC is a form of online learning, which offers many benefits and opportunities to both tutors and learners. To harness these opportunities, some lecturers in selected universities in Ghana have tried to engage students in using MOOCs to improve their learning. However, in line with international trends, the implementation of MOOCs has seen poor participation and usage. As the literature suggests, poor participation in MOOCs after enrolment, as well as low completion rates, is a source of concern.

A review of the literature revealed that little research has been done in the area of MOOC usage; for instance, there is little exploratory work on intention to use MOOCs and actual MOOC usage. This study aims to address the paucity of research on MOOC adoption and usage, and specifically to probe the peculiar factors that account for them in universities in Ghana. There is very little research on MOOC usage in African countries; hence, this study gives academics a platform for a cross-cultural evaluation of the application of certain theories and models in research.

1.1. Literature Review

The aim of the study was to identify the factors that influence the adoption and use of MOOCs as an online educational technology to support student learning. Technology adoption is therefore an essential aspect of the study’s framework. A review of the literature indicated that there are several models and theories of technology adoption, such as the Theory of Reasoned Action (TRA), the Theory of Planned Behavior (TPB), Social Cognitive Theory (SCT), the Technology Acceptance Model (TAM), the Extended Technology Acceptance Model (TAM2), and the Unified Theory of Acceptance and Use of Technology (UTAUT). The current study adapted UTAUT as its theoretical framework; the reasons for this choice are explained below.

The Unified Theory of Acceptance and Use of Technology (UTAUT) model was developed by Venkatesh, Morris, Davis and Davis in 2003 [18] to address the limitations of the Technology Acceptance Model (TAM) and other popular models used in the study of information systems adoption. Their goal was to create a unified model by merging different models of technology adoption as well as results from several researchers in the area [19]. Venkatesh et al. [18] identified and studied eight previously established models:

Theory of Reasoned Action (TRA)—Ajzen & Fishbein (1980)

Technology Acceptance Model (TAM)—Davis (1989)

Motivational model (MM)—Davis et al. (1992)

Theory of Planned Behaviour (TPB)—Ajzen (1985)

C-TAM-TPB—a model combining TAM and the Theory of Planned Behaviour (TPB)—Taylor and Todd (1995)

Model of Personal Computer Utilization (MPCU)—Thompson et al. (1991)

Innovation Diffusion Theory (IDT)—Rogers (1983 and 2003)

Social Cognitive Theory (SCT)—Compeau & Higgins (1995) [19]

Venkatesh et al. [18] used longitudinal data from four organizations over a six-month period to empirically compare the eight models in both voluntary and mandatory settings. The outcome of the study was the UTAUT model. UTAUT posits that four constructs play a significant role in determining user acceptance and usage behavior, namely performance expectancy, effort expectancy, social influence, and facilitating conditions [18]. The researchers also identified moderating factors that significantly influence the resolve to use technology, and Venkatesh et al. [18] explored the effect of these moderating variables on technology use. These moderating factors are gender, age, experience, and voluntariness of use [18].

According to Venkatesh et al. [18], the variance in intention to use explained by the contributing models ranged from 17 to 53%. UTAUT was found to explain more variance in intention to use than any of the eight individual models [18]. The researchers also found that the moderating factors significantly improved the predictive power of the eight models, except the Motivational Model and Social Cognitive Theory. UTAUT is one of the most powerful and thorough theories for explaining IT adoption, largely because it integrates as many as eight theories [20].

Once an appropriate model for technology adoption and use had been identified for the study, it was critical to establish how extensively this model had been used to study technology use in e-learning settings. UTAUT was originally created to assess technology adoption in organizational settings, but a review of the literature showed that it has been applied in the context of e-learning and has been beneficial in understanding technology use in various e-learning settings. The following paragraphs discuss e-learning studies that used UTAUT as a technology adoption model.

Dečman [21] conducted a study to determine the impact of the UTAUT variables on the intention to use an e-learning system in a mandatory setting. The study used factor analysis and structural equation modeling for data validation. UTAUT was shown to be an appropriate model for examining technology adoption in an e-learning setting. Social influence and performance expectancy were shown to significantly influence intention to use the technology. Results also showed no significant influence of students’ previous education or gender on the model fit [21].

A study by Wang et al. [22] on mobile learning (m-learning) usage showed that performance expectancy, effort expectancy, social influence, perceived playfulness, and self-management of learning were all significant determinants of behavioral intention to use m-learning. The study used a framework based on UTAUT, extended with the perceived playfulness and self-management of learning constructs. A survey was conducted, and the data were analyzed using structural equation modeling [22].

Using UTAUT as the theoretical framework, Pynoo et al. [23] studied secondary school teachers’ acceptance of a digital learning environment (DLE). The study revealed that the main predictors of DLE acceptance were performance expectancy and social influence from superiors, while effort expectancy and facilitating conditions were not significant [23].

Juinn & Tan [24] conducted a study in Taiwan using UTAUT as a theoretical lens to investigate Taiwanese college students’ acceptance of English e-learning websites. They found that effort expectancy had a positive effect on behavioral intention. Their study also revealed that facilitating conditions had a direct effect on the use of English e-learning websites [24]. In the area of open access educational content, Dulle [25] conducted a study in Tanzanian universities using UTAUT. The study revealed that effort expectancy is a key determinant of researchers’ behavioral intention to use open access educational content. Dulle [25] also found that facilitating conditions significantly affect researchers’ actual usage of open access educational content [25].

While UTAUT focuses on technology adoption in general, additional factors need to be taken into account when considering the adoption and usage of e-learning systems such as MOOCs. Further review of the literature showed that several other factors influence the adoption and use of e-learning systems. As several researchers have extended UTAUT to study technology adoption in the context of e-learning, this study follows suit and extends UTAUT with variables found to be significant in e-learning adoption and use.

The following variables, which several studies have found to significantly influence e-learning adoption and use, will be used to extend UTAUT: instructional quality, computer self-efficacy, and system quality. Instructional quality refers to students’ perceptions of the effectiveness of teaching methods and the overall quality of the course design. It includes the student’s views on the skills of the instructor as well as the quality of the information provided. Instructor characteristics and teaching materials have a positive effect on perceived usefulness, which in turn has a positive effect on intention to use e-learning. The design of learning content has a positive effect on perceived ease of use, which in turn has a positive effect on intention to use e-learning [26]. Instructional quality is a significant positive predictor of students’ satisfaction in an online course [27]. E-learning effectiveness in a Blackboard e-learning system was found to be influenced by the quality of multimedia instruction [28].

System quality refers to the perceived overall quality of the e-learning system. It is derived from the Information Systems Success Model [29]. System quality influences system usage intention and subsequent system usage [29]. Users’ intention to continue using online learning platforms is determined by satisfaction, which is in turn determined by perceived system quality [30]. System quality is positively related to intention to continue using online learning systems [31]. Perceived system quality influences students’ behavioral intentions to use online learning course websites [32].

Self-efficacy can be described as a person’s subjective judgment of his or her ability to execute certain behaviors or obtain certain results in the future. Computer self-efficacy has a significant effect on behavioral intention to use e-learning [33]. Self-efficacy is significantly and positively related to students’ overall satisfaction with a self-paced online course [27]. Computer self-efficacy affects students’ behavioral intentions to use online learning course websites [32].

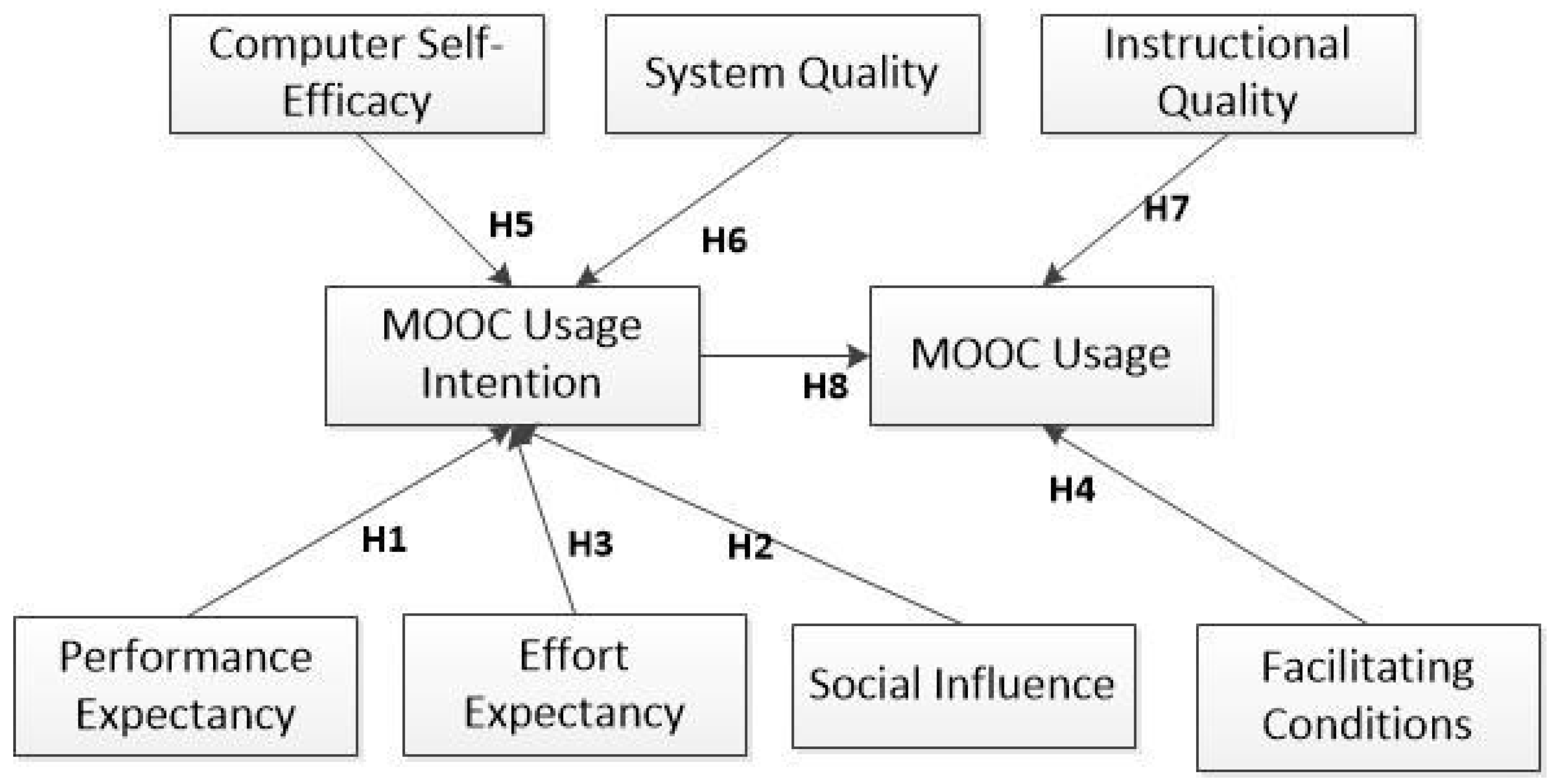

From the literature review, the following UTAUT variables were used to construct the research model: performance expectancy, effort expectancy, social influence, facilitating conditions, behavioral intention, and usage behavior. The following additional variables are used to extend UTAUT: instructional quality, computer self-efficacy, and system quality.

1.2. Research Model and Hypothesis Development

Current research has shown that performance expectancy, effort expectancy, social influence, and facilitating conditions have significant effects on intention to use e-learning systems [21,22,23,24,25]. Additionally, based on the research by Artino [27], DeLone and McLean [29], Ramayah et al. [31], and Chang and Tung [32], we argue that computer self-efficacy, system quality, and instructional quality also have a significant effect on the use of e-learning systems.

The hypotheses are stated below.

Performance Expectancy: Performance expectancy describes an individual’s perceptions about the potential of a particular technology to perform different activities [15]. UTAUT proposes that performance expectancy has a direct influence on behavioral intention to use a particular technology.

In line with theory and the findings of Dečman [21], Wang et al. [22], and Pynoo et al. [23], we posit that:

Hypothesis 1. Performance expectancy has a significant effect on students’ intentions to use MOOCs.

Social Influence: Venkatesh et al. [18] defined social influence as the degree to which an individual perceives that important others believe he or she should use a technology [15]. Social influence has a direct effect on behavioral intention to use a particular technology and is moderated by gender, age, experience, and voluntariness of use [15].

In line with theory and the findings of Dečman [21], Wang et al. [22], and Pynoo et al. [23], we posit that:

Hypothesis 2. Social influence has a significant effect on students’ intentions to use MOOCs.

Effort Expectancy: Effort expectancy is defined as the degree of ease associated with the use of technology [15]. UTAUT proposes that effort expectancy has a direct influence on behavioral intention to use a particular technology, moderated by gender, age, and experience [15].

In line with theory and the findings of Dečman [21], Wang et al. [22], Pynoo et al. [23], Juinn & Tan [24], and Dulle [25], we posit that:

Hypothesis 3. Effort expectancy has a significant effect on students’ intentions to use MOOCs.

Facilitating Conditions: Facilitating conditions refer to the degree to which users believe that the necessary organizational and technical infrastructure exists to support the use of technology [15]. According to Venkatesh et al. [18], facilitating conditions influence usage behavior directly and are moderated by age and experience [15].

In line with theory and the findings of Dečman [21], Wang et al. [22], Pynoo et al. [23], Juinn & Tan [24], and Dulle [25], we posit that:

Hypothesis 4. Facilitating conditions have a significant effect on students’ MOOC usage behavior.

Computer Self-efficacy: Self-efficacy can be defined as a person’s subjective judgment of his or her ability to execute certain behaviors or obtain certain results in the future. Computer self-efficacy therefore refers to self-efficacy with regard to the use of computers. Studies by Artino [27] and Chang and Tung [32] showed that computer self-efficacy significantly influenced e-learning system usage. Artino [27] adopted and expanded TAM, while Chang and Tung [32] combined innovation diffusion theory (IDT) and the technology acceptance model (TAM) in their studies [24,29]. Malek and Karim [34] conducted a study in Saudi Arabian government universities and found that computer self-efficacy significantly influences students’ intention to use e-learning [34].

In line with the findings above we posit that:

Hypothesis 5. Computer self-efficacy has a significant effect on students’ intentions to use MOOCs.

System quality: System quality refers to the perceived overall quality of the e-learning system. This construct is derived from the Information Systems Success Model [35]. System quality influences system usage intention and subsequent system usage [26]. Chiu et al. [30] conducted research on students in a Taiwanese university and found that user continuance intention is determined by satisfaction, which is in turn determined by perceived system quality [27]. Ramayah et al. [31] explored quality factors in the intention to continue using an e-learning system in Malaysia. Their study showed that service quality, information quality, and system quality were positively related to intention to continue usage [36]. Chang and Tung [32] also state that perceived system quality influences students’ behavioral intention to use online learning course websites [29].

In line with theory and the findings above we posit that:

Hypothesis 6. System quality has a significant effect on MOOC usage intention.

Instructional quality: Instructional Quality refers to students’ views of the effectiveness of teaching methods and the overall quality of the course design. Instructional quality includes the student’s perceptions of the skills of the instructor as well as the quality of information delivered.

Instructor characteristics and quality of teaching materials were found by Lee et al. [26] to have a positive influence on perceived usefulness, which in turn had a positive influence on intention to use e-learning. Lee et al. [26] surveyed 250 undergraduate students at a university in South Korea for the study. The design of learning content had a positive effect on perceived ease of use, which in turn had a positive effect on intention to use e-learning [26].

Artino [27] states that instructional quality is a significant positive predictor of students’ satisfaction. Liaw [28] conducted a study on students’ usage of a Blackboard e-learning system at the University of Central Taiwan. His findings showed that e-learning effectiveness can be influenced by the quality of multimedia instruction.

In line with the findings above we posit that:

Hypothesis 7. Instructional quality has a significant effect on students’ MOOC usage behavior.

Intention to Use and Usage Behaviour: MOOC usage intention refers to a student’s predetermined decision to use the MOOC in the near future. Usage intention is theorized to result in usage behavior. Several theories and models have proposed a direct influence of behavioral intention on usage behavior, for instance TAM [37], TPB [38], UTAUT [18], and UTAUT2 [39].

Several studies have also confirmed the influence of behavioral intention on usage behavior, including Ain et al. [40], Dečman [21], Chiu & Wang [41], Pynoo et al. [23], and Wang et al. [22].

In line with theory and the findings above we posit that:

Hypothesis 8. Students’ intention to use MOOCs has a significant effect on students’ MOOC usage behavior.

The proposed research model is shown in Figure 1.

3. Results

Consistent with the two-step approach for assessing structural equation models recommended by Chin [50], we first evaluated the measurement model for reliability and validity and then tested the structural paths between the variables in the proposed model. SmartPLS 3 was used both to evaluate the reliability and validity of the measurement model and to test the structural model.

Measurement model assessment: The measurement model was assessed based on reliability, convergent validity, and discriminant validity. Construct reliability was assessed using Cronbach’s α. Table 1 shows that all Cronbach’s α values are higher than the threshold of 0.7 [51]. It can therefore be concluded that the measurement model exhibits good reliability.
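For reference, Cronbach’s α for a construct measured by k items is α = k/(k - 1) · (1 - Σσᵢ² / σ_X²), where σᵢ² is the variance of item i and σ_X² is the variance of the summed scale. The sketch below assumes a respondents × items matrix of scores for a single construct; the function name and the Likert responses are hypothetical and are not this study’s data.

```python
import numpy as np


def cronbach_alpha(items) -> float:
    """Cronbach's alpha for a (respondents x items) score matrix of one construct."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                         # number of items in the construct
    item_vars = items.var(axis=0, ddof=1)      # sample variance of each item
    total_var = items.sum(axis=1).var(ddof=1)  # sample variance of the summed scale
    return k / (k - 1) * (1 - item_vars.sum() / total_var)


# Hypothetical 5-point Likert responses (6 respondents x 3 items), for illustration only.
scores = np.array([
    [4, 5, 4],
    [3, 3, 4],
    [5, 5, 5],
    [2, 3, 2],
    [4, 4, 5],
    [3, 2, 3],
])
alpha = cronbach_alpha(scores)
print(f"alpha = {alpha:.3f}, above 0.7 threshold: {alpha > 0.7}")
```

For these made-up responses, α is 0.91, which would clear the 0.7 threshold used above.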

Convergent validity of the measurement model was evaluated following the recommendation of Henseler et al. [52] that the average variance extracted (AVE) for each latent construct should be greater than 0.5 [53]. Table 2 shows that the AVE values for all constructs are higher than the 0.5 threshold. We can therefore conclude that the measurement model exhibits good convergent validity.
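With standardized outer loadings λᵢ for the n indicators of a construct, AVE = (Σλᵢ²)/n, the average share of indicator variance explained by the construct. A minimal sketch, assuming the loadings have already been estimated; the function name and the loading values are hypothetical.

```python
import numpy as np


def average_variance_extracted(loadings) -> float:
    """AVE: mean of the squared standardized outer loadings of one construct."""
    lam = np.asarray(loadings, dtype=float)
    return float(np.mean(lam ** 2))


# Hypothetical standardized loadings for a three-indicator construct (not study data).
loadings = [0.82, 0.76, 0.88]
ave = average_variance_extracted(loadings)
print(f"AVE = {ave:.3f}, above 0.5 threshold: {ave > 0.5}")
```

Here AVE is about 0.675. Note that every loading must be reasonably high (roughly 0.71 or more, since 0.71² ≈ 0.5) for the average to clear 0.5 comfortably.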

Discriminant validity was assessed using the Fornell-Larcker criterion, which states that the AVE of each latent construct should be greater than the construct’s highest squared correlation with any other construct [54]. Table 2 shows that the square root of the AVE for each construct is greater than its correlations with the other constructs. Based on these results, we conclude that the measurement model exhibits good discriminant validity.
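The two forms of the Fornell-Larcker criterion used above are equivalent: AVEᵢ > rᵢⱼ² holds exactly when √AVEᵢ > |rᵢⱼ|. The sketch below checks the square-root form for every pair of constructs. It assumes a vector of AVEs and a construct correlation matrix; the function name and the numbers are hypothetical, not the values in Table 2.

```python
import numpy as np


def fornell_larcker_ok(ave, corr) -> bool:
    """True if sqrt(AVE) of every construct exceeds its absolute correlation
    with every other construct (equivalently, AVE > squared correlation)."""
    sqrt_ave = np.sqrt(np.asarray(ave, dtype=float))
    corr = np.asarray(corr, dtype=float)
    n = len(sqrt_ave)
    for i in range(n):
        for j in range(n):
            # Diagonal entries are self-correlations (1.0) and are skipped.
            if i != j and abs(corr[i, j]) >= sqrt_ave[i]:
                return False
    return True


# Hypothetical AVEs and latent construct correlations for three constructs.
ave = [0.67, 0.61, 0.72]
corr = np.array([
    [1.00, 0.55, 0.48],
    [0.55, 1.00, 0.62],
    [0.48, 0.62, 1.00],
])
print(fornell_larcker_ok(ave, corr))
```

With these values the criterion holds. Raising any correlation involving the second construct above √0.61 ≈ 0.78 would make the check fail.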

Structural Model Assessment: After confirming the validity and reliability of the measurement model, we assessed the structural model based on the sign, magnitude, and significance of the path coefficient of each hypothesized path. Bootstrapping was performed to determine the significance of each estimated path. To determine the explanatory power of the structural model, its ability to predict the endogenous constructs was assessed using the coefficient of determination R². Results for the structural model assessment are presented in Table 3.
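In SmartPLS, bootstrapping resamples respondents with replacement and re-estimates the full PLS model on each resample. The resulting spread of each path coefficient gives a standard error and a t-statistic. The sketch below shows only the resampling logic. It assumes the latent variable scores are already available and are held fixed across resamples, and it estimates the paths by least squares on standardized scores. The function names, `X`, `y`, and the simulated data are all hypothetical.

```python
import numpy as np


def standardized_paths(X, y):
    """Least-squares coefficients after z-scoring predictors and outcome
    (a simplified stand-in for PLS inner-model path estimation)."""
    Xz = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    yz = (y - y.mean()) / y.std(ddof=1)
    beta, *_ = np.linalg.lstsq(Xz, yz, rcond=None)
    return beta


def bootstrap_paths(X, y, n_boot=5000, seed=0):
    """Resample respondents with replacement; return the point estimates,
    bootstrap standard errors, and t-statistics of each path."""
    rng = np.random.default_rng(seed)
    n = len(y)
    est = standardized_paths(X, y)
    draws = np.empty((n_boot, X.shape[1]))
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)  # one bootstrap sample of respondents
        draws[b] = standardized_paths(X[idx], y[idx])
    se = draws.std(axis=0, ddof=1)
    return est, se, est / se


# Simulated scores for illustration only: y depends on x1 but not on x2.
rng = np.random.default_rng(42)
X = rng.normal(size=(300, 2))
y = 0.5 * X[:, 0] + rng.normal(size=300)
est, se, t = bootstrap_paths(X, y, n_boot=1000)
for name, b, s, tt in zip(["x1", "x2"], est, se, t):
    print(f"{name}: beta={b:.3f} SE={s:.3f} t={tt:.2f}")
```

A path is usually judged significant at the 5% level (two-tailed) when |t| exceeds about 1.96. In this simulation that holds clearly for `x1`, which carries a real effect.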

Performance Expectancy was found to have a significant positive effect on MOOC Usage Intention (β = 0.318, p < 0.001), which provides support for H1. However, Social Influence was found not to have a significant effect on MOOC Usage Intention (β = 0.078, p = 0.129), so H2 is not supported. Contrary to expectation, Effort Expectancy was found not to have a significant effect on MOOC Usage Intention (β = 0.084, p = 0.103), so H3 is not supported. Facilitating Conditions was found to have a significant positive effect on MOOC usage (β = 0.378, p < 0.001), which provides support for H4. Computer Self-Efficacy was found to have a significant positive effect on MOOC Usage Intention (β = 0.103, p = 0.026), which provides support for H5. System Quality was also found to have a significant positive effect on MOOC Usage Intention (β = 0.318, p < 0.001), which provides support for H6. Instructional Quality was found to have a significant positive effect on MOOC usage (β = 0.359, p < 0.001), which provides support for H7. As expected, MOOC Usage Intention was found to have a significant positive effect on MOOC usage (β = 0.354, p < 0.001), which provides support for H8.
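The decision rule applied to these path estimates can be written out explicitly. The sketch below records the reported β and p values and lists the supported hypotheses. The text does not state the significance level used, so a conventional 0.05 level is assumed here, and `results` and `ALPHA` are names introduced only for this illustration.

```python
# Structural path estimates as reported in the text: hypothesis -> (path, beta, p).
# p values reported as 0.000 are stored as 0.0 (i.e., p < 0.001).
results = {
    "H1": ("Performance Expectancy -> MOOC Usage Intention", 0.318, 0.000),
    "H2": ("Social Influence -> MOOC Usage Intention", 0.078, 0.129),
    "H3": ("Effort Expectancy -> MOOC Usage Intention", 0.084, 0.103),
    "H4": ("Facilitating Conditions -> MOOC Usage", 0.378, 0.000),
    "H5": ("Computer Self-Efficacy -> MOOC Usage Intention", 0.103, 0.026),
    "H6": ("System Quality -> MOOC Usage Intention", 0.318, 0.000),
    "H7": ("Instructional Quality -> MOOC Usage", 0.359, 0.000),
    "H8": ("MOOC Usage Intention -> MOOC Usage", 0.354, 0.000),
}

ALPHA = 0.05  # assumed significance level; not stated explicitly in the text

# A hypothesis counts as supported when its path is significant at ALPHA.
supported = [h for h, (_, _, p) in results.items() if p < ALPHA]
for h, (path, beta, p) in results.items():
    verdict = "supported" if h in supported else "not supported"
    print(f"{h}: {path:<48} beta={beta:.3f} p={p:.3f} -> {verdict}")
print(f"{len(supported)} of {len(results)} hypotheses supported")
```

This yields six supported hypotheses (H1 and H4 to H8), matching the count given in the Discussion. All six significant paths are also positive.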

In all, the proposed model accounted for 75.8 percent of the variance in MOOC Usage and 77.4 percent of the variance in MOOC Usage Intention (R² of 0.758 and 0.774, respectively). To assess model fit in PLS, we used the standardized root mean square residual (SRMR). The SRMR value for the model was 0.093; a value less than 0.08 is generally considered a good fit [55]. Although the SRMR value does not meet the good-fit threshold recommended by Hu and Bentler [55], the reliability, validity, and R² measures suggest that the model is able to explain the relationships in the hypothesized paths.
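SRMR is the square root of the mean squared difference between the observed correlations and those implied by the model. The minimal sketch below assumes both correlation matrices are available and averages over the p(p + 1)/2 unique elements of the lower triangle, diagonal included. Conventions differ slightly between software packages. The function name and the matrices are hypothetical.

```python
import numpy as np


def srmr(observed_corr, implied_corr) -> float:
    """Root mean square of residual correlations over the lower triangle
    (diagonal included), i.e. the p(p+1)/2 unique elements."""
    S = np.asarray(observed_corr, dtype=float)
    Sigma = np.asarray(implied_corr, dtype=float)
    rows, cols = np.tril_indices(S.shape[0])
    resid = S[rows, cols] - Sigma[rows, cols]
    return float(np.sqrt(np.mean(resid ** 2)))


# Hypothetical observed and model-implied correlation matrices (not study data).
observed = np.array([[1.00, 0.50, 0.40],
                     [0.50, 1.00, 0.30],
                     [0.40, 0.30, 1.00]])
implied = np.array([[1.00, 0.45, 0.40],
                    [0.45, 1.00, 0.42],
                    [0.40, 0.42, 1.00]])
value = srmr(observed, implied)
print(f"SRMR = {value:.3f}, below 0.08: {value < 0.08}")
```

In this toy case, SRMR is about 0.053. Most of that comes from the single large residual (0.30 observed vs. 0.42 implied). This shows how a few poorly reproduced correlations can push SRMR above the 0.08 guideline.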

4. Discussion

The current study sought to investigate the factors that influence MOOC usage by students in selected tertiary institutions in Ghana. The study was motivated by the fact that lecturers who assigned MOOCs to their students reported a general lack of interest and poor usage of the MOOCs. As stated earlier, low participation in MOOCs after enrolment, as well as low course completion, has been widely criticized [2].

We approached the study from a technology adoption perspective; the findings of our study show that MOOC usage intention is influenced by computer self-efficacy, performance expectancy, and system quality. The study also showed that MOOC usage is influenced by facilitating conditions, instructional quality, and MOOC usage intention.

Six of the eight hypotheses were supported, which largely supports our research model. A review of the literature reveals a paucity of research on MOOC adoption, usage, and continuance intention compared with e-learning in general.

Our study supports studies conducted by Artino [27], Chang and Tung [32], and Malek and Karim [34], which show that computer self-efficacy influences students’ usage of e-learning systems. This points to the importance of relevant skills in the use of computing devices for the effective use of MOOCs. We therefore propose that lecturers ensure that students who lack the necessary skills in using computing devices are given training that enables them to use MOOCs and other e-learning systems effectively.

Consistent with work by Ain et al. [40] on Learning Management System use by students in Malaysia, and by Al-Shafi et al. [56] on e-government services adoption [56], the current study did not support the relationship established in the UTAUT model between effort expectancy and usage intention. In the study by Ain et al. [40], effort expectancy was found not to have a significant influence on Learning Management System use by students. This may be because students place more importance on usefulness and on learning from the MOOC than on the effort required to learn. Unlike traditional technology systems, which are often designed to improve efficiency and hence reduce effort, learning is seen as an activity that requires effort. Tools that support the process are used not necessarily to reduce effort but rather to improve the effectiveness of learning.

The study also did not support the relationship established in the UTAUT model between social influence and usage intention. This result is consistent with findings by Magsamen-Conrad et al. [57], who found that social influence has no significant effect on tablet use intentions. The implication is that students feel they do not need the support of their social circle to be motivated to use the MOOC. It could also mean that students perceive that lecturers and the university as a whole will not support them in the use of MOOCs, since the social influence construct was measured with items that considered perceived help from lecturers and support from the university. We propose that lecturers in the universities encourage students to use MOOCs, assure them of their willingness to help, and check on their progress. Universities should provide students with the needed resources, such as good internet connectivity and computer labs, to encourage students to participate in MOOCs.

As was the case in studies conducted by Dečman [21], Wang et al. [22], Pynoo et al. [23], and Juinn and Tan [24], facilitating conditions were found to have a significant influence on MOOC usage. Universities must therefore provide the necessary structures and resources to promote the use of MOOCs by students. Lecturers should, where possible, incorporate the use of MOOCs into their teaching. Universities must also provide controlled internet access to students and set up libraries or laboratories where students can access computers for the purposes of e-learning.

Consistent with Lee et al. [26], the study showed that instructional quality has a significant relationship with MOOC usage. MOOC providers must therefore strive to provide a platform that gives personalized feedback, addresses individual differences, and motivates students while avoiding information overload. The MOOC platform must also create real-life context, encourage social interaction, provide hands-on activities, and encourage more student reflection [58]. This means the right pedagogical approach must be adopted in designing the instructional materials. MOOCs tend to be based on the cognitive/social-behaviorist pedagogical approach [59,60]. As a result, following the recommendations of Alzaghoul [61], the following need to be considered in designing instructional content: learners need to be informed of the learning outcomes of the online course; the online lesson must include tests at particular sections to check the learner’s level of understanding of the material; the learning materials must be sequenced properly to promote learning; and finally, learners must be given feedback so they can monitor their progress and take corrective action if needed [61].

Our study also supports studies conducted by Dečman [21], Wang et al. [22], and Pynoo et al. [23], which show that performance expectancy has a significant effect on usage intention. This suggests that students believe consistent participation in the MOOC can positively affect their academic performance. We propose that lecturers and the university management as a whole find ways of motivating students to participate in MOOCs, for example through consistent encouragement from lecturers, posting MOOC information on student notice boards, forming MOOC clubs, and appointing MOOC champions. Lastly, system quality was found to have a significant influence on MOOC usage intention. This is consistent with work by Chang and Tung [32] and Ramayah et al. [31], and reinforces the need for MOOC designers to ensure that MOOCs are of good quality. They can achieve this by ensuring that the site loads quickly, is easy to use and navigate, and is visually attractive, and that mobile access is supported through responsive design.