To achieve our objective, the students’ experience during their learning process, with and without the use of the LoT@UNED platform, is presented, analyzed, and discussed in detail.

4.1. Participants and Materials

The current study was conducted with the second generation of IoT laboratories (that is, the LoT@UNED platform) in the undergraduate cybersecurity subject taught at UNED in the second semester of the 2017–2018 academic year. The number of students enrolled in the subject was 238. Among the respondents (54.2% of students), 115 out of 129 were male and 14 out of 129 were female, as observed in Table 3 for the 2017–2018 academic year. Some of the results will be analyzed and discussed in comparison with the data gathered in the previous 2016–2017 academic year, when a traditional approach was employed (with a distance methodology). For this reason, Table 3 also shows the corresponding demographic data for the 2016–2017 academic year.

From the academic performance point of view, in the 2017–2018 academic year only 57% of students passed the course, and 72% of students completed all the evaluation activities throughout the course. By comparison, 246 students were enrolled in the subject during 2016–2017; 64.6% of them passed the course, and 68% completed all the evaluation activities. The students’ academic goals, in terms of competences and evaluations, are given in the cybersecurity context in a previous section.

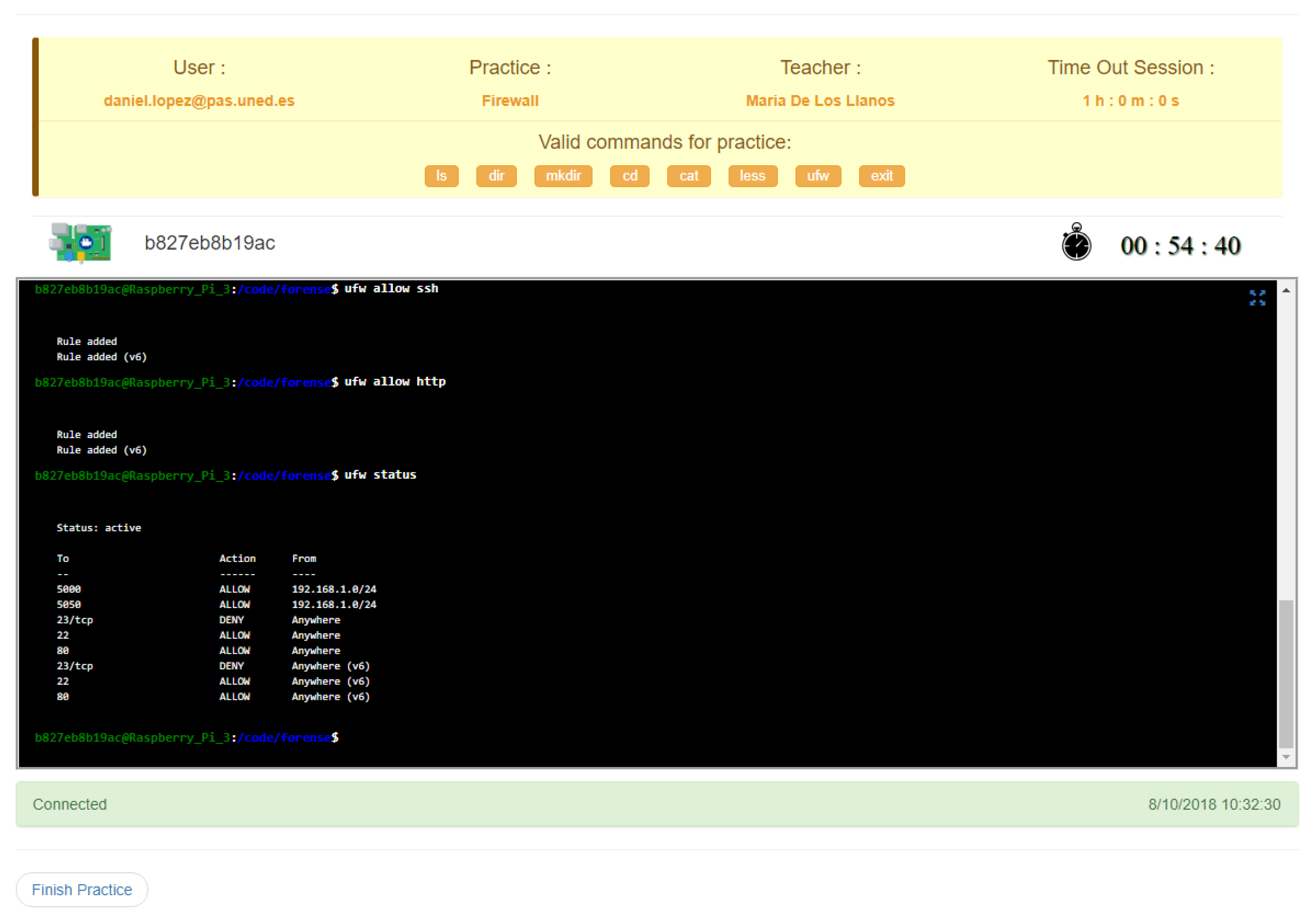

As previously described, the purpose of this experience was the implementation of a firewall within the system by executing a series of configuration rules. To achieve this, students had to log in, book a session, and solve the practical activity. The maximum time slot for each session was one hour. Students could take as many sessions as they considered necessary to solve the activity. The period to complete the activity was two months, but the deadline was extended by two more weeks.

4.2. Instruments and Data Collection Procedures

When students enrolled in the subject, they were asked to fill in a questionnaire covering occupation, demographic information, and other factors related to the participants in the experience, in order to build student profiles. This questionnaire asked for the students’ sex and age, their occupation, their familiarity with cybersecurity, and whether they were open to using the LoT@UNED platform. More details are given in Table 3.

After that, students accessed the theoretical and practical resources hosted in the learning platform. Each practical activity started and ended within a particular period of the course. Once a practical activity started, students were provided with a guide, a PDF document, and a dedicated YouTube video-lesson, linked from the virtual space. The video-lessons on the YouTube platform provided us with significant statistics, such as the duration of the students’ views. The practical activity was graded by lecturers after assessing the students’ reports and the trace of the sessions recorded by the LoT@UNED platform.

In addition, students were encouraged to answer an opinion survey after the period of the practical activity. The format of this questionnaire was based on the UTAUT methodology [43,44,45] for evaluating the user acceptance of a new technology.

At the end of the subject, students took a face-to-face exam in order to pass the subject. Both activity and exam scores were compared to determine whether there was an improvement in the global learning process.

Therefore, the interactions of students with the LoT@UNED platform were recorded and stored for statistical analysis. These include learning and device data:

Downloaded and accessed content. All interactions of students with video lessons and documents were recorded for the study.

Opinion survey to measure the users’ acceptance of technology.

Student marks/scores, from both practical activities and the face-to-face exam.

Device data, composed of log files from IoT devices, to calculate an effort estimation of students.

The LoT@UNED platform records each command issued by a student with the corresponding timestamp. Thus, lecturers can estimate the effective time spent in each session. This report is available for both lecturer and student.
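
The effective-time estimate described above could be computed along the following lines. This is a minimal sketch and not the platform's actual implementation: the ISO timestamp format, the function name `effective_minutes`, and the 5-minute idle threshold are assumptions made only for illustration.

```python
from datetime import datetime, timedelta

def effective_minutes(timestamps, idle_threshold=timedelta(minutes=5)):
    """Sum the gaps between consecutive commands, ignoring long idle gaps.

    `timestamps` is a list of ISO-8601 strings (assumed log format).
    Gaps longer than `idle_threshold` (an assumed cut-off) count as idle time.
    """
    times = sorted(datetime.fromisoformat(t) for t in timestamps)
    active = timedelta()
    for previous, current in zip(times, times[1:]):
        gap = current - previous
        if gap <= idle_threshold:
            active += gap
    return active.total_seconds() / 60

# Hypothetical session: four commands, with one 20-minute pause.
session = [
    "2018-04-10T10:00:00",
    "2018-04-10T10:02:00",
    "2018-04-10T10:22:00",
    "2018-04-10T10:25:00",
]
print(effective_minutes(session))  # 5.0
```

Here the 20-minute pause is discarded, so only the 2-minute and 3-minute gaps count as effective work.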

The comparison between both course editions is given in Table 4. Several indicators (materials, structure, student outcomes, and communication tools) are summarized for each academic year, with and without the use of the LoT@UNED platform.

4.3. Data Analysis

This analysis is based on a mixed research approach, known as the sequential explanatory design method [46,47]. A sequential explanatory design method is considered a legitimate, stand-alone research design in engineering education, since it combines the strengths of both qualitative and quantitative features [48,49]. This research method is composed of two phases: a quantitative phase, followed by a qualitative phase [50]. For the quantitative one, data about the interactions of students with the LoT@UNED platform, surveys, and so on, were collected and then statistically analyzed using parametric and non-parametric techniques. For each quantitative indicator, a corresponding qualitative criterion is defined in terms of the hypotheses.

In order to validate the hypotheses considered in this research study, Table 5 presents a set of qualitative evaluation criteria based on the selected indicators, the corresponding quantitative indicators, and the source of the information used to gather these qualitative and quantitative indicators.

First, it is possible to know whether the use of the LoT@UNED platform improves the learning results and student engagement (defined by H1) by comparing the results obtained from the evaluation questionnaire in the second semester of the 2016–2017 academic year with those of the second semester of the 2017–2018 academic year. Furthermore, in relation to student engagement, we expect to have an indicator that shows this engagement after the inclusion of real IoT devices in the course.

Since our students are immersed in a distance education environment, an approximate way to know whether the IoT technology gains students’ attention is to analyze their access to the course materials and their answers to the final survey. As the students are provided with online videos hosted on YouTube, this platform offers us a detailed range of statistics related to viewer retention and the number of views of each video.

In order to estimate the students’ effort in using a new technology in the subject, it is necessary to know the time that students need to complete an activity session properly. In particular, the ratio of time spent to total time gives us a quantitative approximation of this effort. Using this information, it is possible to compare the data of the activity hosted in the LoT@UNED platform with the previous case. Thus, we know whether students needed a greater effort, as proposed in the H2 hypothesis.
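
As an illustration of the spent time/total time ratio, the following sketch aggregates the ratio over the sessions of one student. The function name and the sample values are hypothetical; the 60-minute slot follows the maximum session length stated earlier.

```python
def student_effort(spent_minutes_per_session, slot_minutes=60):
    """Ratio of effective time to booked time over all sessions of one student.

    `spent_minutes_per_session` holds the effective minutes of each session;
    `slot_minutes` is the booked length of a session (one hour by default).
    """
    if slot_minutes <= 0:
        raise ValueError("slot_minutes must be positive")
    total_booked = slot_minutes * len(spent_minutes_per_session)
    if total_booked == 0:
        return 0.0
    return sum(spent_minutes_per_session) / total_booked

# Hypothetical student with four one-hour sessions.
print(student_effort([45, 30, 60, 15]))  # 0.625
```

A value close to 1 would mean that almost the whole booked slot was spent issuing commands, while a low value would point to long idle periods within the session.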

Finally, it is also interesting to know whether academic performance improves at the global course level. From this, we can assess the impact of using WoT in courses with specific educational competences in cybersecurity. The classic model based on predictable data (static vectors or arrays of properties) is replaced by one based on non-predictable data, which come from the IoT things in real time. This way, it is possible to study and analyze the students’ response in terms of global scores, which are used as indicators to evaluate the H3 hypothesis. In this case, we compare the results obtained in the 2016–2017 and 2017–2018 academic years for the same activity.

For every quantitative indicator, a normality test was performed. When the data were consistent with a normal distribution, a t-test (paired or two-sample) was executed. All tests were conducted using an alpha level of 0.05.
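
The decision rule above can be sketched as a small helper. It assumes the usual reading of a normality test (normality is not rejected when the p-value exceeds alpha). The non-parametric fallbacks named in the code are assumptions, since the text does not state which non-parametric techniques were used, and the p-values in the example are hypothetical.

```python
def choose_test(p_normal_a, p_normal_b, paired=False, alpha=0.05):
    """Pick a comparison test from the normality-test p-values of two samples."""
    both_normal = p_normal_a > alpha and p_normal_b > alpha
    if both_normal:
        return "paired t-test" if paired else "two-sample t-test"
    # Assumed non-parametric fallbacks; the text does not name specific ones.
    return "Wilcoxon signed-rank test" if paired else "Mann-Whitney U test"

# Hypothetical normality p-values for two course editions.
print(choose_test(0.30, 0.45))  # two-sample t-test
print(choose_test(0.30, 0.01))  # Mann-Whitney U test
```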

4.4. Results and Discussion

In order to investigate whether the LoT@UNED platform had a positive impact on the students’ learning engagement and outcomes, the students’ effort by using the platform, and their academic performance when compared with a traditional approach, the gathered results are analyzed and discussed, both from a quantitative and qualitative point of view, according to the previously defined hypotheses.

Hypothesis 1 (H1). The use of the LoT@UNED platform improves the learning engagement of students and their learning outcomes.

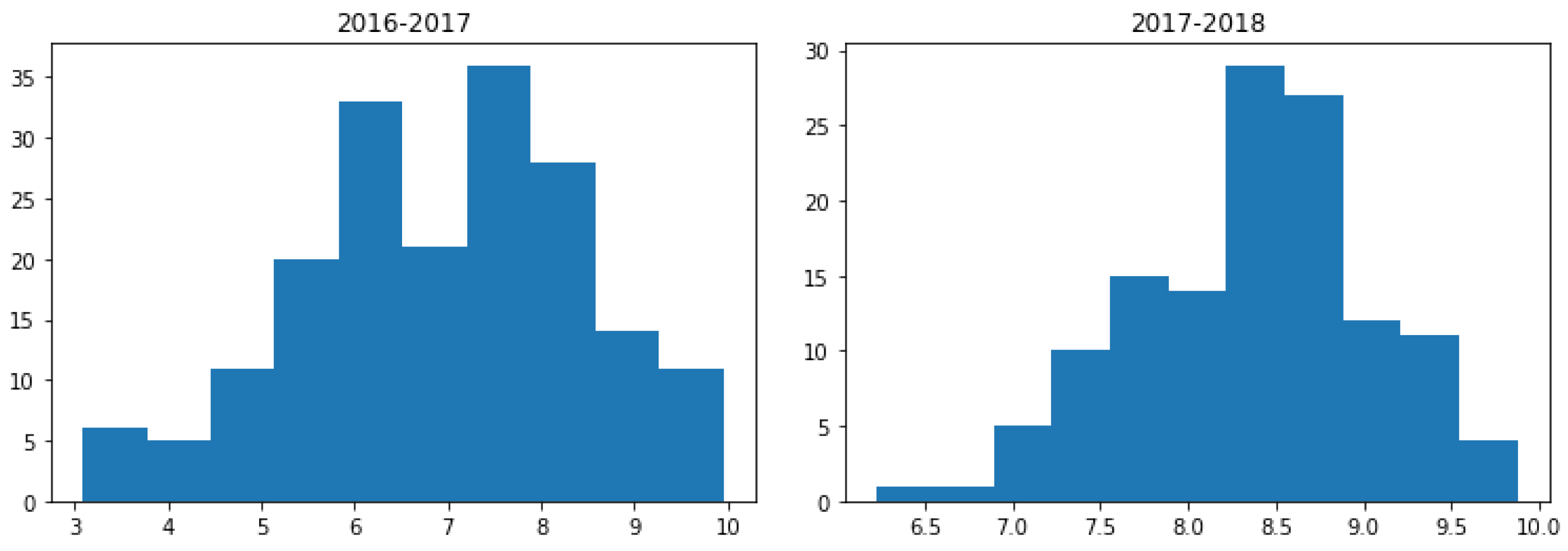

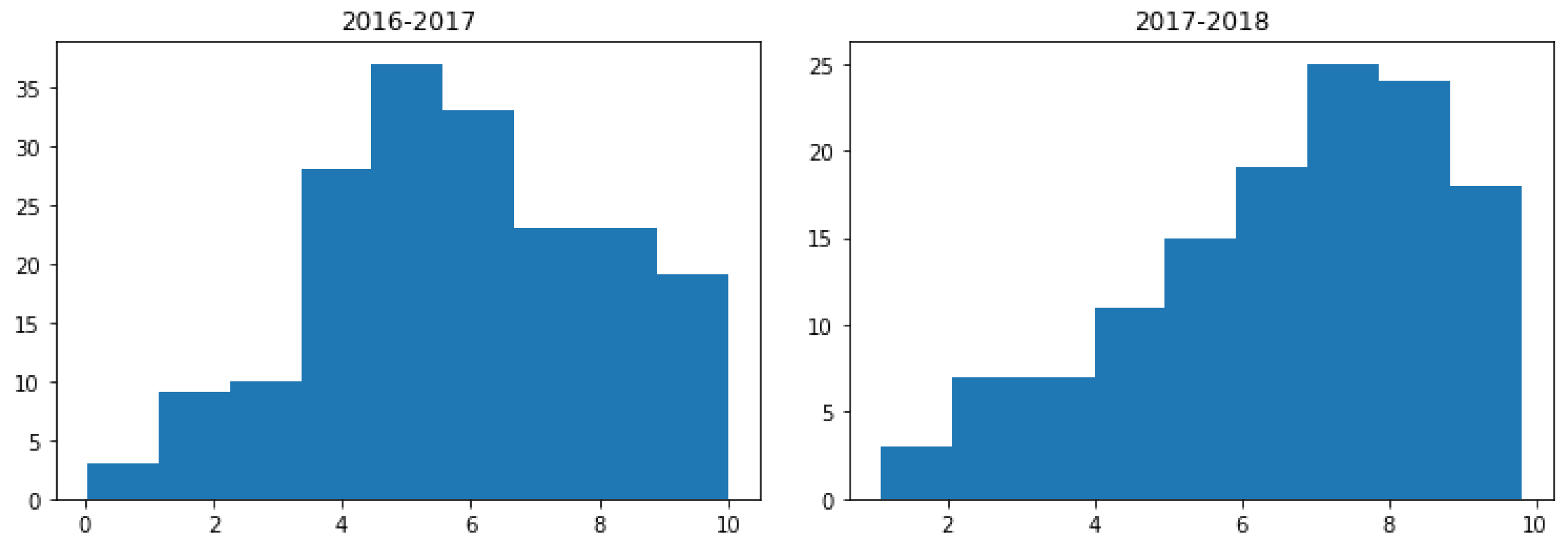

For the analysis of the students’ learning outcomes, a Shapiro-Wilk test [51] of normality was performed to examine the distribution of the scores achieved by students in both the 2016–2017 and 2017–2018 academic years, together with the mean (M) and standard deviation (SD) values. The obtained scores were (M = 7, SD = 1.86) and (M = 8.4, SD = 0.71), respectively, an increase of more than one point in the mean. See Figure 6, where the histograms represent the data distribution for both academic years. The vertical axis represents the number of students in each bin, and the horizontal axis shows the grade bins.

The results of these tests indicate that the data may come from a normally distributed population (W = 0.927, p-value = 0.05 for the 2016–2017 academic year; W = 0.729, p-value = 0.176 for the 2017–2018 academic year). For this reason, a paired t-test [52] was used to compare the scores before and after the introduction of the LoT@UNED platform in the subject. The results of this test (t = , p-value ) indicated that there is a statistically significant difference between the student scores of both academic years. For this reason, it can be concluded that students improved their comprehension of how a firewall is configured.

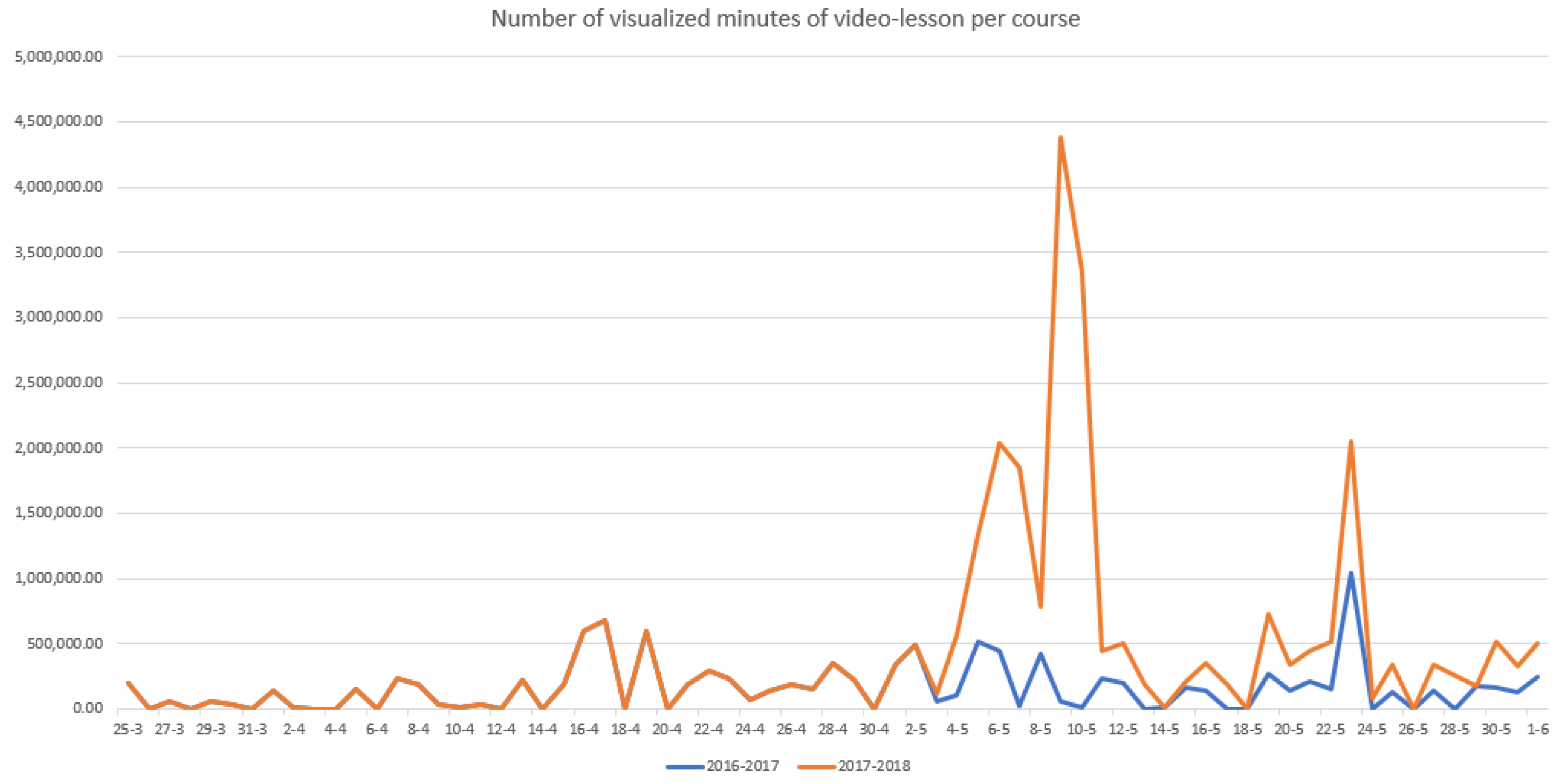

In order to measure learning engagement, the “virtual” participation of students in the related resources was analyzed. Again, the 2016–2017 and 2017–2018 academic years are compared, in order to test the benefits of using (or not using) the LoT@UNED platform. As described before, the supporting video-lessons were hosted on the YouTube platform, since it offers us detailed statistics about the viewing of each video (see Table 6 for further details). For instance, the mean time for which a video was watched is higher for the 2017–2018 academic year, 39.9% (8:18 out of 14:37 min) instead of 32% (5:13 out of 20:50 min), due to student interest. Views, visitors, and interactions also increased. It is worth remarking that only 2% of the views took place outside of the aLF platform.
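
A retention percentage of this kind is the mean watch time divided by the video duration. The sketch below shows the computation for times given as minutes:seconds strings. The helper names and the sample values are hypothetical and are not taken from Table 6.

```python
def to_seconds(mmss):
    """Convert a 'minutes:seconds' string into seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)

def retention(mean_view, duration):
    """Share of the video watched on average, as a percentage."""
    return 100 * to_seconds(mean_view) / to_seconds(duration)

# Hypothetical video: viewers watch 2:30 of a 10:00 lesson on average.
print(retention("2:30", "10:00"))  # 25.0
```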

A Shapiro-Wilk test of normality was also performed on the video-lesson views. The test results indicated a normal distribution of data in the multimedia resources (W = 0.785, p-value = 0.01 for the 2016–2017 academic year; W = 0.40, p-value = 0.028 for the 2017–2018 academic year). Therefore, a t-test can be used to determine whether there was a statistically significant difference between the views of the video-lessons. The t-test results (t = −1.027, p-value = 0.003 for multimedia resources) can be used to conclude that there was a significant difference between them. As observed in Figure 7, there was a clear increase in the average minutes that students spent watching video-lessons, from 5:13 min (32% of the video duration) during 2016–2017 to 8:18 min (39.9% of the video duration) during 2017–2018. Thus, students viewed the video-lessons more, with higher viewer retention, when the LoT@UNED platform was used for practical activities. In conclusion, there was higher interest in the multimedia resources.

From the detailed quantitative results, it can be concluded that the H1 hypothesis was proved. The students’ learning outcomes were also analyzed through the qualitative features of an opinion survey about their satisfaction with LoT@UNED for the configuration of a firewall, in terms of perceived usefulness, effort needed to learn (estimated effort), attitude, social influence, ease of access, and intention to use. This discussion is addressed later.

Hypothesis 2 (H2). Student effort, by using the LoT@UNED platform, is higher than with a classic approach.

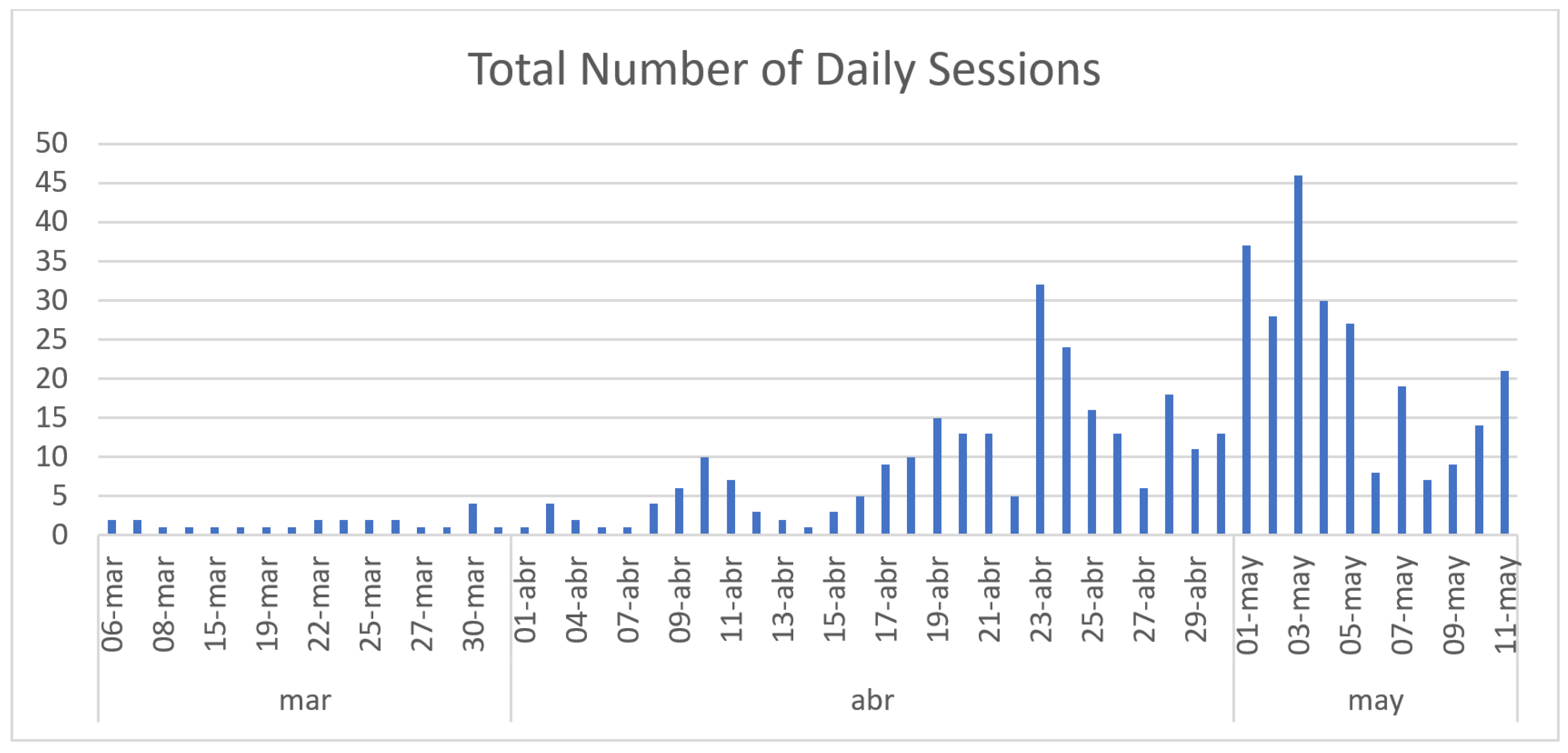

The overall time (in hours) that students spent on the firewall configuration was collected for both the 2016–2017 and 2017–2018 academic years. Therefore, we can use these data to obtain the mean values and analyze their significance.

During the 2016–2017 academic year, according to the information hosted in the virtual platform of UNED (aLF), the average session lasted almost an hour (M = 59 min, SD = 14), with only one session per student. This was recorded in the SSH access logs of the remote virtual machine used to carry out the A2 task of the practical case. On the other hand, during the 2017–2018 academic year, the LoT@UNED platform registered 520 sessions, with an average of 8 daily sessions and 4 sessions per student. As observed in Figure 8, the volume of sessions was concentrated in the final period of the subject, in some cases even reaching more than 40 daily sessions. From these exploratory values, we can conclude that students spent more time doing the practical activity in the 2017–2018 academic year than in the 2016–2017 academic year.
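
Session figures such as these (sessions per day, sessions per student, and the busiest day) can be derived from booking records as in the following sketch. The record layout, a `(student_id, date)` tuple, and the sample data are assumptions for illustration only.

```python
from collections import Counter

def summarize_sessions(sessions):
    """Count sessions per day and per student from (student_id, date) records."""
    per_day = Counter(day for _, day in sessions)
    per_student = Counter(student for student, _ in sessions)
    mean_per_student = len(sessions) / len(per_student) if per_student else 0.0
    busiest_day, busiest_count = per_day.most_common(1)[0] if per_day else (None, 0)
    return per_day, mean_per_student, busiest_day, busiest_count

# Hypothetical booking records: (student id, ISO date).
records = [
    ("s1", "2018-05-28"), ("s2", "2018-05-28"),
    ("s1", "2018-05-30"), ("s2", "2018-05-30"), ("s3", "2018-05-30"),
    ("s3", "2018-05-31"),
]
per_day, mean_per_student, busiest_day, busiest_count = summarize_sessions(records)
print(mean_per_student, busiest_day, busiest_count)  # 2.0 2018-05-30 3
```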

It should be highlighted that the recorded times relate only to the previously described A2 task. The A1 and A3 tasks were not measured, although we estimate that the times used in our computations are only about one third of the total time spent. This estimation allows us to plan the activity and the subsequent conclusions phase.

First, a Shapiro-Wilk test of normality was performed to examine the distribution of the estimated times (in hours) spent per student on the A2 task during 2016–2017 and 2017–2018, respectively. The test results showed that the data may have come from a normally distributed population: W = 0.952, p-value = 0.261 for 2016–2017; and W = 0.946, p-value = 0.590 for 2017–2018. For this reason, a t-test was used to compare the times in both periods. These results (p-value < 0.003, t = −2.65) indicated that there was a statistically significant difference between the minutes spent per student on the A2 task in the 2016–2017 academic year (M = 59, SD = 14) and the 2017–2018 academic year (M = 240, SD = 6.24).
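
For readers who want to see how a two-sample t statistic is obtained from raw times, the sketch below computes Welch's variant, which does not assume equal variances. The choice of Welch's test is an assumption, since the text does not say which t-test variant was applied, and the sample data are hypothetical. Obtaining the p-value additionally requires the t distribution, for example through a statistics package.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(sample_a, sample_b):
    """Return Welch's t statistic and its approximate degrees of freedom."""
    n_a, n_b = len(sample_a), len(sample_b)
    # Squared standard errors of each sample mean (sample variance / n).
    se_a = variance(sample_a) / n_a
    se_b = variance(sample_b) / n_b
    t = (mean(sample_a) - mean(sample_b)) / sqrt(se_a + se_b)
    # Welch-Satterthwaite approximation of the degrees of freedom.
    df = (se_a + se_b) ** 2 / (se_a ** 2 / (n_a - 1) + se_b ** 2 / (n_b - 1))
    return t, df

# Hypothetical minutes per student in two course editions.
t, df = welch_t([50, 60, 70], [200, 240, 280])
print(round(t, 2), round(df, 2))
```

A negative t indicates that the first sample has the lower mean, which matches the direction reported above for the two academic years.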

The H2 hypothesis states that student effort was greater when IoT things were employed. This hypothesis was formulated by taking into account that the use of unknown technologies leads students to spend more time assimilating them, as detailed in Figure 8. According to the recorded logs of LoT@UNED, students spent more sessions preparing the activity, due to the open nature of its definition. Thus, they explored several ways of solving it. In the previous course, by contrast, they had to focus their attention on preparing the activity context (a network of virtual machines to run the firewall), and they did not explore multiple solutions. Using the proposed LoT@UNED platform, students had more time available to practice directly with the firewall and its configuration options, instead of preparing the activity context. Although students spent more time doing the activity during the 2017–2018 academic year, their main effort was concentrated on the activity objectives.

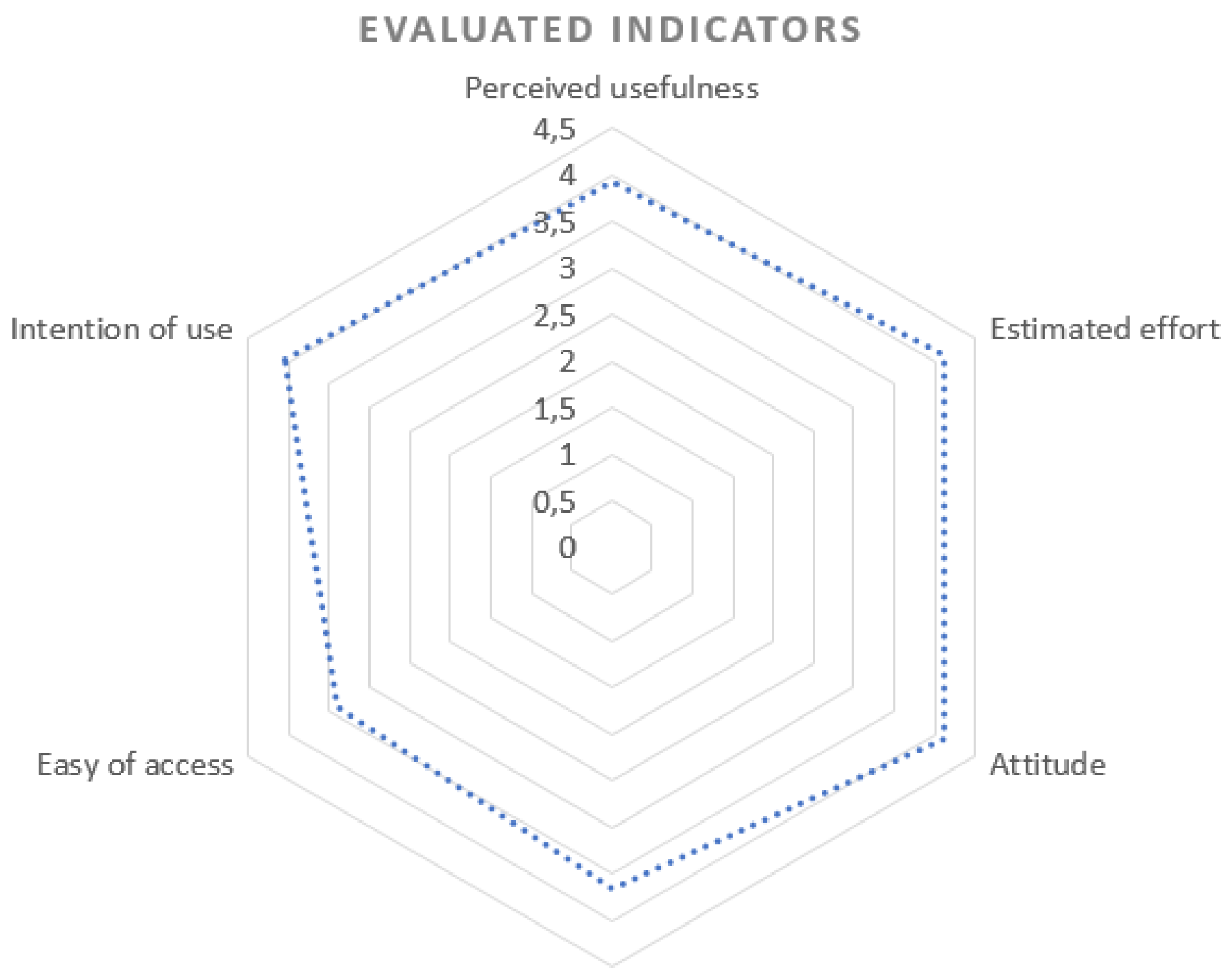

Additionally, students were asked their opinion about the LoT@UNED platform. The survey was a questionnaire including four choice questions. Each statement was rated on a five-point Likert-type scale, ranging from (1) “strongly disagree” to (5) “strongly agree”. A summary of the results of this survey is presented in Table 7. These questions focused on the following indicators:

Perceived usefulness by the students using the platform and their experience.

Perceived effort needed to learn how to use the platform according to students’ opinion; that is, the ease of use of the tools involved in the taken experience.

Attitude towards the technological solution used, assessing whether the students perceive that using the system is beneficial or not for the intended objectives.

Social influence, trying to reflect how the students’ opinion is perceived by other classmates and teachers about the experience.

Perceived ease of access and perceived availability of educational resources during the experience.

Intention of access; in other words, the students’ perspective about similar experiences supported by the same platform for other experiences.

According to the results of the opinion survey (see Figure 9), students did not perceive that the platform increases the effort of performing the activity. Almost 50% of students perceived that the platform is easy to use and that it helps to improve the performance of the activity. Students did not perceive a social factor influencing their opinion about the LoT@UNED platform and the presented experience. Almost 80% of the students perceived the experience as useful or strongly useful for their learning. More than 47% of the students were willing to use the LoT@UNED platform in other activities, even in other subjects.
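
Percentages like these can be obtained from the five-point answers by counting the responses at or above a given level. The following sketch assumes that "agreement" means a score of 4 or 5. That threshold, the function name, and the sample answers are illustrative assumptions, not the survey data.

```python
def share_agreeing(responses, threshold=4):
    """Percentage of Likert responses (1-5) at or above `threshold`."""
    valid = [r for r in responses if 1 <= r <= 5]
    if not valid:
        return 0.0
    return 100 * sum(r >= threshold for r in valid) / len(valid)

# Hypothetical answers to one statement from ten students.
usefulness = [5, 4, 4, 3, 5, 4, 2, 4, 5, 4]
print(share_agreeing(usefulness))  # 80.0
```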

However, according to these results, the perceived ease of access and perceived availability of resources were not so satisfactory from the students’ point of view. This indicator may have been affected by several access problems suffered during the activity. As the activity deadline approached, more students tried to access the platform. The closer the end of the activity, the higher the load on the platform compared with the rest of the course, as depicted in Figure 8. In specific periods of time, no Raspberry Pi was free to perform the activity. As a consequence, some students had to wait in order to access the platform. Therefore, students perceived this as a lack of resource availability.

In conclusion, the H2 hypothesis is not valid according to the students’ opinion gathered from the survey, although initially it could be interpreted as proven according to our statistical analysis of the log data. A deeper understanding of the time that students spent within the LoT@UNED platform has helped us to see that students were checking different options to solve the practical case, but they did not perceive this as a greater effort.

Hypothesis 3 (H3). Student academic performance is better with the LoT@UNED platform, in contrast to a classic approach.

The analysis of student academic performance was done by comparing the scores achieved by the two selected groups of students. As 70% of the final grade corresponds to the final face-to-face exam, a specific question dedicated to the three practical cases/activities was included in it. This question is useful to detect cheating behaviors and, also, to increase the final score of students who had successfully performed the practical activities during the period of the subject.

A Shapiro-Wilk test of normality was performed to examine the distribution of the scores for both the 2016–2017 and 2017–2018 academic years. The test results showed that the data may come from a normally distributed population: W = 0.9, p-value = 0.059 for 2016–2017; and W = 0.90, p-value = 0.015 for 2017–2018. Taking this assumption as valid, a t-test was conducted to compare scores for the two academic years. The results (p-value = 0.004, t = 0.75) indicated that there is a statistically significant difference between the scores in 2016–2017 (M = 6.02, SD = 2.63) and 2017–2018 (M = 7.17, SD = 2.60). See Figure 10, where the histograms represent the data distribution for both academic years. The vertical axis represents the number of students in each bin, and the horizontal axis shows the grade bins.

Thus, the H3 hypothesis was proved, and so it can be concluded that students’ academic performance was slightly better when they used the LoT@UNED platform, in contrast to the “classic” approach.