1. Introduction

Notably valuable efforts have focused on helping people with special needs. Daily routine, which is trivial for most of us, is a real survival problem for groups of people with special needs and abilities, especially in a society with the bad habit of pushing such people to the side. A modern pedestrian navigation system for blind and visually impaired people has been presented in [1]. BlindHelper primarily enhances the ability of a blind or visually impaired person (BVI) to navigate efficiently to desired destinations without the aid of guides. The BlindHelper system has been implemented as a smartphone application which interacts with a small embedded system responsible for reading simple user controls, high-accuracy global positioning system (GPS) tracking of pedestrian mobility in real time, and identifying near-field obstacles and traffic light status along the route. This information is communicated to the smartphone application, which in turn issues voice navigation instructions or undertakes further actions to help the user. An early BlindHelper prototype was presented at the 2016 Association for Computing Machinery (ACM) Pervasive Technologies Related to Assistive Environments (PETRA) Conference, winning the Best Innovation Paper Award among the set of PETRA 2016 papers.

In this work, we build on the experience from BlindHelper and present Blind MuseumTourer, a system for indoor interactive autonomous navigation for BVI and groups (e.g., pupils) in museums. The application is executed on Android smartphones and tablets to implement a voice-instructed, self-guided navigation service inside museum exhibition halls and ancillary spaces. The developed concept also applies to other indoor navigation use cases, such as in hospitals, shopping malls, airports, train stations, public services and municipality buildings, office buildings, university buildings, hotel resorts, passenger ships, etc. The presented application can be easily customized depending on the use case. The museum use case application comprises an accurate indoor positioning system using proximity sensors at the exhibits and unobtrusive assistive tactile route indicators marked on the floor of museum rooms (conforming to international standards for assistive tactile walking). In the near future, the application will comprise an indoor positioning system for completely free travel inside indoor spaces exploiting Bluetooth low energy (BLE) beacons fitted around the space. A preliminary pilot prototype has already been developed and validated by blindfolded sighted users, and is currently under fine tuning and evaluation with the collaboration of the Tactual Museum of the Lighthouse for the Blind of Greece [2], towards the implementation of a brand new “best practice” regarding cultural voice-guided tours targeting BVI visitors. The Tactual Museum [3], one of the 4–5 museums of its kind worldwide, was founded in 1984, realizing an excellent new way of approaching the ancient Greek civilization through the ability to touch and feel the exhibits not only for blind but for sighted people as well. The exhibits in the Tactual Museum are exact replicas of the originals which are displayed in the Museums of Greece. A key objective will be to render museums completely accessible to blind and visually impaired people, using the proposed technology and implementing a success story which can eventually be sustainably replicated to all Greek museums using accessible acoustic and tactual routes.

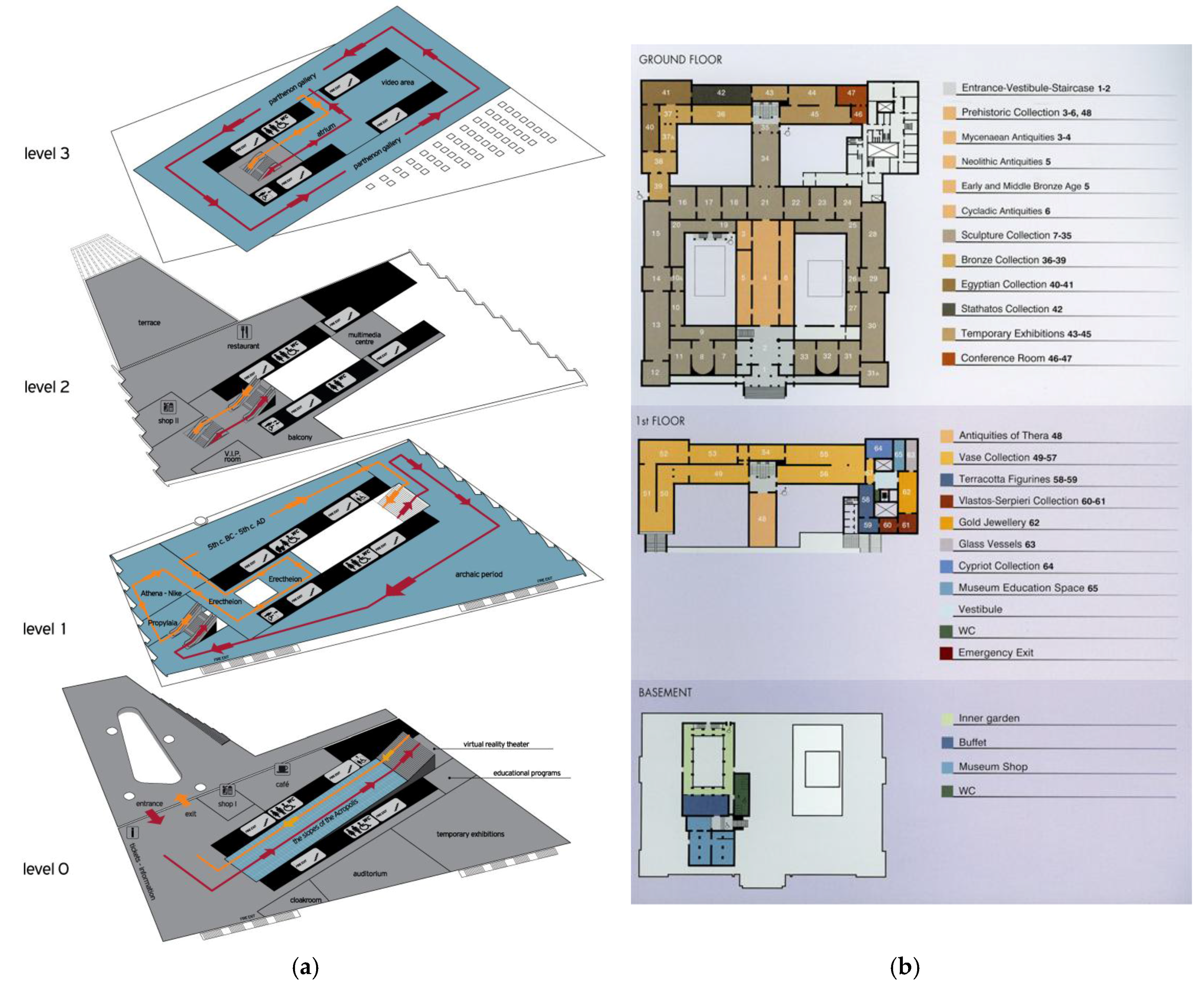

Subsequent pilots will follow up with the National Archaeological Museum [4], the largest archaeological museum in Greece, with more than 11,000 exhibits, providing a panorama of Greek civilization from the beginnings of Prehistory to Late Antiquity, and the Acropolis Museum [5], focused on the findings of the archaeological site of the Acropolis of Athens, exhibiting nearly 4000 objects over an area of 14,000 square meters. Both museums are particularly interested in integrating interdisciplinary research in personalization and adaptivity, digital storytelling, interaction methodologies, and narrative-oriented mobile and mixed reality technologies [6].

Both BlindHelper (now renamed Blind RouteVision) and MuseumTourer, state-of-the-art navigation applications for BVI, and other IndoorGuides to come (see Section 6), comprise the MANTO BlindEscort Apps (in ancient Greek mythology, Manto was a daughter and blind escort of famous blind seer Tiresias). The MANTO applications aim to resolve the accessibility problems of BVI during pedestrian transportation and navigation in outdoor and indoor spaces. Independent living makes a key contribution to the social and professional inclusion, education and cultural edification and quality of life of BVI. MANTO apps aim to provide an unparalleled aid to BVI all over the world, so that they can walk outdoors safely and experience self-guided indoor navigation, including tours in museums. In parallel, the effort behind MANTO Blind MuseumTourer aims to enable and train cultural organizations to host and be accessible to people with such disabilities. These efforts will contribute decisively towards breaking social exclusion and address BVI at all ages. The development of the MANTO blind escort applications is supported by the Greek RTDI State Aid Action RESEARCH-CREATE-INNOVATE of the National Operational Programme Competitiveness, Entrepreneurship and Innovation 2014–2020 in the framework of the MANTO project, with the participation of the Lighthouse for the Blind of Greece. The Lighthouse for the Blind of Greece (Greece has approximately 25,000 blind persons), founded in 1946, is a non-profit philanthropic organization offering social, cultural and educational activities to the BVI community free of charge, including sheltered workshops and offering jobs to blind people.

The rest of the paper, starting from a rich literature review of blind indoor navigation systems, presents the application functionality with emphasis on the integrated indoor positioning system. It concludes with a preliminary system validation through blindfolded sighted user tests and a valuable discussion regarding key concerns in blind indoor navigation, outlining the strengths of the presented solution.

2. Review of Blind Navigation Systems

2.1. Blind Outdoor Navigation

Since the GPS system was introduced, there have been many attempts to integrate it into a navigation-assistance system for blind and visually impaired people. Loadstone GPS is an early effort for satellite navigation for blind and visually impaired users that started in 2004 and is available in open source [7]. It runs on the Symbian OS and on Nokia devices with the S60 platform and utilizes a GPS tracker, a screen reader application and the OpenStreetMap project. A similar project is LoroDux, developed in JavaME, also using data imported from the OpenStreetMap project [8]. Relevant products on modern platforms include Mobile Geo running on Windows Mobile smartphones, including a screen reader and integrating technology from former Braille navigation products [9]. The same company has also developed a similar application for the iOS platform, Seeing Eye GPS [10]. Features unique to blind users include a simple menu structure, automatic announcement of intersections and points of interest, and routes with heads-up announcements for approaching turns. It uses Foursquare and Google Places for points of interest and Google Maps for street info. Another application on the iOS platform is BlindSquare, using crowdsourced data [11]. It uses Foursquare for points of interest and OpenStreetMap for street info.

Besides these commercial systems, several other research efforts have delivered relevant outcomes. Reference [12] proposes a tele-guidance system based on the idea that a blind pedestrian can be assisted by spoken instructions from a remote caregiver who receives a live video stream from a camera carried by the BVI. Reference [13] describes a microprocessor-based system replacing the Braille keyboard with speech technology and introducing a joystick for direction selection and an ultrasonic sensor for obstacle detection. The reported location accuracy is 5 m. Another approach of similar age, based on a microprocessor with synthetic speech output and featuring an obstacle detection system using ultrasound, is presented in [14]. This system provides information to the user about urban walking routes to point out what decisions to make and the nearest obstacles. Another well-known older system is Drishti [15], employing a “wearable” Pentium computer module and wired headset (quite weighty and intrusive nowadays), IBM’s ViaVoice vocal communication, and GIS database and Mapserver. It uses Differential-GPS (DGPS) as its outdoors location system and an ultrasound positioning system to provide the precise indoor location. Navigation in all these systems ([13,14,15]) relies on the GIS-based model described in [16], which reports a detailed experiment on guidance. A valuable analysis of problems during the process of blind navigation by means of tele-assistance can be found in [17]. A substantial number of problems are related to navigation instructions. These findings can serve as a basis to improve the training for visually impaired people to make the wayfinding process more efficient.

2.2. Blind Indoor Navigation

This section presents a literature review of blind indoor navigation systems, highlighting representative and impressive research outcomes in the field. Research outcomes are classified into categories according to the type of work and technology employed.

2.2.1. Requirement Analysis, Surveys and Future Directions

Several papers deal with user requirement analysis of indoor navigation applications which help blind people navigate public buildings independently. Reference [18] outlines the needs and challenges for indoor wayfinding and navigation faced by BVIs based on the findings from several years of needs assessment conducted with relevant experts and BVIs. Reference [19] defines a set of criteria for evaluating the success of a potential navigation device. The requirements analysis has been broken down into the subcategories of positioning accuracy, robustness, seamlessness of integration with varying environments and the nature of information that is outputted to a BVI user. This framework is applied to many existing navigation solutions for the BVI, drawing upon the notable achievements that have been made thus far and the crucial issues that remain unresolved or are yet to receive attention. It was found that these key issues, which existing designs fail to overcome, can be attributed to the need for a new focus and user-centred design attitude, one which incorporates universal design, recognises the uniqueness of its audience and understands the challenges associated with the system/device’s intended environment. Reference [20] additionally discusses a thorough user requirement analysis for multimodal applications that can be installed on mobile phones, which has been carried out with blind users, while [21] identifies a number of research issues that could facilitate the large scale deployment of indoor navigation systems.

Reference [22] highlights some of the navigational technologies available to blind individuals to support independent travel. The focus here is on blind navigation in large-scale, unfamiliar environments, but the technology discussed can also be used in well-known spaces. Reference [23] provides a comparative survey among portable/wearable obstacle detection/avoidance systems to inform the research community and users about the capabilities of these systems and about the progress in assistive technology for visually impaired people.

Interesting directions for future research related to indoor wayfinding and navigation tools to assist BVIs are discussed in [24]. Localization techniques incorporating a variety of sensors and crowdsourcing, user interfaces, landmark lists, accessibility instructions, floor plan representations and route planning will be enhanced in the short term. The grander vision for accessible navigation solutions will certainly involve a more systematic change in the general structure of urban environments, including building smart cities and ubiquitous assistive robotics technology. Smartphones and other mobile devices will be the primary modalities for BVI navigation accessibility.

2.2.2. RFID/NFC and Multimodal RFID Systems

Several systems exploit the concept of setting up a Radio Frequency IDentification (RFID) information grid for blind navigation and wayfinding in buildings and indoor environments [25,26,27].

The Blind Interactive Guide System (BIGS) [28] uses an RFID-based indoor positioning system to acquire the current location information of the user. The system consists of a smart floor and a portable terminal unit. Each tile of the floor has a passive RFID tag which transmits a unique ID number. The portable terminal unit is equipped with an RFID reader as an input device so that BIGS can get the current location information of the user. Using the preinstalled map of the target floor, the BVI can navigate to the final destination.
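The smart-floor idea can be illustrated with a minimal sketch, assuming a preinstalled map that links each tag ID to a tile of a regular grid. The tag IDs, the tile size and the function names below are hypothetical and are not taken from BIGS.

```python
from typing import Dict, Tuple

TILE_SIZE_M = 0.5  # assumed tile edge length in meters (hypothetical value)

# Hypothetical preinstalled floor map: tag ID -> (column, row) of the tile.
TAG_TO_TILE: Dict[str, Tuple[int, int]] = {
    "TAG-0001": (0, 0),
    "TAG-0002": (1, 0),
    "TAG-0003": (1, 1),
}


def tile_center(tag_id: str) -> Tuple[float, float]:
    """Return the (x, y) center of the tile whose tag was just read."""
    col, row = TAG_TO_TILE[tag_id]
    return ((col + 0.5) * TILE_SIZE_M, (row + 0.5) * TILE_SIZE_M)


def offset_to(current_tag: str, destination_tag: str) -> Tuple[float, float]:
    """Return the (dx, dy) displacement from the current tile to the destination."""
    x0, y0 = tile_center(current_tag)
    x1, y1 = tile_center(destination_tag)
    return (x1 - x0, y1 - y0)
```

Each tag read yields a position directly from the lookup table, so positioning errors do not accumulate between reads.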

The project “Ways4all” [29] uses passive RFID tags to identify indoor routes and barriers for BVI, as well as a tactile guidance system. At all strategic spots inside the building (entrance, platforms, intersections) a passive RFID tag will be placed into the tactile guidance system. These RFID tags send their unique code through an RFID reader to the user’s smartphone. The smartphone reads the code and sends it on to an RFID database server where all the tags together with some additional information are saved as location points. On the smartphone the routing information will be sent in an acoustic way to the blind person.

The PERCEPT system [30] provides enhanced perception of the indoor environment using passive RFID tags deployed in the environment, a custom-designed handheld unit and a smartphone carried by the user, and a server that generates and stores the building information and the RFID tag deployment. When a user, equipped with the PERCEPT glove and a smartphone, enters a multistory building equipped with the PERCEPT system, s/he scans the destination at the kiosk located at the building entrance. The PERCEPT system directs the user to the chosen destination using landmarks (e.g., rooms, elevator, etc.). The PERCEPT-II follow up system [31] allows the user to carry only a smartphone and exploits near field communication (NFC) tags on existing signage at specific landmarks in the environment (e.g., doors, stairs, elevators etc.). The users obtain audial navigation instructions when they touch the NFC tags using their phone.

Reference [32] presents a smart robot (SR) for the BVI equipped with an RFID reader, GPS, and analog compass as input devices to obtain location and orientation. The SR can guide the user to a predefined destination, or create a new route on-the-fly for later use. The SR reaches the destination by avoiding obstacles using ultrasonic and infrared sensor inputs. The SR also provides user feedback through a speaker and vibrating motors on the glove.

The SmartVision system [33] proposes the development of an electronic white cane that helps moving around, in both indoor and outdoor environments, providing contextualized geographical information using RFID technology. The objective of using RFID technology is to correct the GPS error in the case of outdoor positioning, since each tag cluster is appropriately georeferenced, and to correct the Wi-Fi location error in the case of indoor positioning. This also allows the user to receive warnings and information relative to each specific point where the RFID tags are planted. The SmartVision system works autonomously in a mini laptop, carried by the user, loading and updating the information required for the navigation/orientation from the GIS server through an Internet connection.

RSNAVI is an RFID-based, context-aware, indoor navigation system for the blind [34]. The system uses 4D modelling of buildings: 3D building and object geometry, plus the status of all sensors in time. To improve the navigation process, RSNAVI uses semantic-rich interior models to describe not only the position and shape of all objects, but also their characteristics. The algorithm for route planning uses multi-parametric optimization to obtain the optimal route for the BVI. The system allows automatic re-routing when the blind user deviates from the route or if it detects a change of status of the sensors, for example blocking access to a room due to a fire alarm.
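Re-routing after a sensor status change can be sketched as a shortest-path search that skips blocked nodes. The sketch below uses plain Dijkstra search over a single edge cost as a stand-in; it is not the multi-parametric optimization of RSNAVI, and the graph, node names and weights are hypothetical.

```python
import heapq
from typing import Dict, FrozenSet, List, Optional, Tuple

Graph = Dict[str, Dict[str, float]]


def shortest_path(
    graph: Graph,
    start: str,
    goal: str,
    blocked: FrozenSet[str] = frozenset(),
) -> Optional[Tuple[float, List[str]]]:
    """Dijkstra search that ignores nodes currently flagged as blocked."""
    queue: List[Tuple[float, str, List[str]]] = [(0.0, start, [start])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, weight in graph.get(node, {}).items():
            if neighbor in visited or neighbor in blocked:
                continue
            heapq.heappush(queue, (cost + weight, neighbor, path + [neighbor]))
    return None  # no accessible route remains


# Hypothetical floor graph with walking distances in meters.
FLOOR: Graph = {
    "entrance": {"hall": 5.0, "corridor": 3.0},
    "corridor": {"room": 4.0},
    "hall": {"room": 4.0},
    "room": {},
}
```

Calling `shortest_path(FLOOR, "entrance", "room")` uses the corridor, while passing `blocked=frozenset({"corridor"})`, for example after a fire alarm, yields the longer route through the hall.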

Reference [35] presents a sophisticated system which accurately locates persons indoors by fusing inertial navigation system (INS) techniques with active RFID technology. The authors present a tight Kalman filter (KF)-based INS/RFID integration, using the residuals between the INS-predicted reader-to-tag ranges and the ranges derived from a generic received signal strength (RSS) path-loss model. The presented approach further includes other drift reduction methods such as zero velocity updates (ZUPTs) at foot stance detections, zero angular-rate updates (ZARUs) when the user is motionless, and heading corrections using magnetometers. The integrated INS+RFID methodology eliminates the typical drift of inertial measurement unit (IMU)-alone solutions (approximately 1% of the total traveled distance), resulting in typical positioning errors along the walking path (no matter its length) of approximately 1.5 m.
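The range residual that drives such an integration can be sketched as follows, assuming the common log-distance path-loss model RSS(d) = RSS(1 m) − 10·n·log10(d). The reference power, the path-loss exponent and the function names are illustrative assumptions, not values from [35], and the Kalman filter itself is omitted.

```python
import math
from typing import Tuple


def rss_to_range(
    rss_dbm: float,
    rss_at_1m_dbm: float = -50.0,   # assumed calibration value
    path_loss_exponent: float = 2.0,  # assumed free-space-like exponent
) -> float:
    """Invert the log-distance path-loss model to get a range in meters."""
    return 10 ** ((rss_at_1m_dbm - rss_dbm) / (10.0 * path_loss_exponent))


def range_residual(
    ins_position: Tuple[float, float],
    tag_position: Tuple[float, float],
    rss_dbm: float,
) -> float:
    """RSS-derived range minus the range predicted from the INS position."""
    predicted = math.dist(ins_position, tag_position)
    measured = rss_to_range(rss_dbm)
    return measured - predicted
```

A residual near zero means the INS position agrees with the RSS measurement; a large residual is what a filter would use to pull the drifting INS estimate back towards the tag geometry.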

2.2.3. Infrared, Visible Light and Commercial Ultra-Wideband (UWB) Systems

Reference [36] presents a system which determines the user’s trajectory, locates possible obstacles on that route, and offers navigation information to the user. The system’s main components are an augmented white cane with various embedded infrared lights, two infrared cameras (embedded in a Wiimotes unit), a computer running a software application that receives via Bluetooth the user’s position and movement detected by the Wiimotes and processes information about the obstacles in the area, and a smartphone that delivers the navigation information to the user through voice messages.

Reference [37] describes the construction of a micro PC based portable personal navigation device which utilizes a commercial ultra-wideband (UWB) asset tracking system. The device provides the current position, useful contextual wayfinding information based on static and dynamic descriptions of the indoor environment, and directions to a destination, in order to greatly improve access and independence for people with low vision.

Reference [38] presents an indoor navigation system for BVI using visible light communication that makes use of LED lights and a geomagnetic sensor integrated into a smartphone.

Reference [39] presents the design of a cellphone-based active indoor wayfinding system for BVI, including a small wearable infrared (IR) receiver device and IR transmitter wall modules retrofitted at specific locations in the building. These sensors transmit the unique IR tags corresponding to their location perpendicular to the direction of the motion of the user. Using floor plan files, the system provides step-by-step directions to the destination from any location in the building.

2.2.4. Magnetic Systems

Reference [40] describes the development and evaluation of a prototype magnetic navigation system consisting of a wireless magnetometer, placed at the user’s hip, streaming magnetic readings to a smartphone processing location algorithms. Human trials were conducted to assess the efficacy of the system by studying route-following performance with blind and sighted subjects using the navigation system for real-time guidance. It is well known that environments within steel frame structures are subject to significant magnetic distortions. Many of these distortions are persistent and have sufficient strength and spatial characteristics to allow their use as the basis for a location technology.

In reference [41], the authors collected an extensive data set of 2000 measurements by employing a mobile phone with a built-in magnetometer. Using the measured magnetic fields, they can identify landmarks and guideposts, distinguish rooms and corridors, and create magnetic maps of building floors.

2.2.5. 3D Space Sensing and Augmented Reality Systems

Reference [42] presents a system detecting changes in a 3D space based on fusing range data and image data captured by cameras and creating a 3D representation of the surrounding space. This 3D representation of the space and its changes are mapped onto a 2D vibration array placed on the chest of the blind user. The degree of vibration offers a sensing of the 3D space and its changes to the user.

Reference [43] presents a vision-based indoor location positioning system using augmented reality. The proposed system automatically recognizes a location from image sequences of the indoor environment, and it realizes augmented reality by seamlessly overlaying the user’s view with location information. To recognize a location, the authors pre-constructed an image database and location model, which consists of locations and paths between locations, of an indoor environment. Location is recognized by using prior knowledge of the layout of the indoor environment.

Reference [44] presents an indoor tracking model which manipulates the erratic and unstable received signal strength indicator (RSSI) signal to deliver stable and precise position information in an indoor environment. A sensor receives RSSI signals from at least three designated sensors and predicts the location on the basis of trilateration. The nature of a limited indoor space is such that there is signal fluctuation and noise in radio-frequency transmission between the sensors. Considering this issue, the authors proposed an accuracy refinement algorithm to filter out noise in the received RSSI signals. To display useful 3D information, an additional feature is required in conjunction with the user’s position to illustrate the view of the user. A digital magnetic compass is used to determine the orientation of the target in real-time and a sensor wirelessly transmits digital magnetic compass (DMC) data to a receiver.
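Trilateration from three anchors can be sketched by subtracting the first circle equation from the other two, which leaves a 2×2 linear system in the unknown position. The median filter below is only a simple stand-in for noise suppression; it is not the accuracy refinement algorithm of [44], and all names are hypothetical.

```python
import statistics
from typing import Sequence, Tuple

Point = Tuple[float, float]


def smooth_rssi(samples: Sequence[float]) -> float:
    """Suppress outliers in a window of RSSI readings (median as a stand-in)."""
    return statistics.median(samples)


def trilaterate(anchors: Sequence[Point], distances: Sequence[float]) -> Point:
    """Solve for (x, y) given three anchor positions and their ranges."""
    (x1, y1), (x2, y2), (x3, y3) = anchors
    d1, d2, d3 = distances
    # Subtracting circle 1 from circles 2 and 3 gives A @ [x, y] = b.
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is not unique")
    # Cramer's rule for the 2x2 system.
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return (x, y)
```

In practice the ranges would first be derived from the filtered RSSI values through a path-loss model, and more than three anchors would be combined by least squares.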

Reference [45] introduces a novel approach of utilizing the floor plan map posted on buildings to acquire a semantic plan. The visually extracted landmarks such as room numbers, doors, etc., act as a parameter to infer the waypoints to each room. The paper demonstrates the possibilities of augmented reality (AR) as a blind user interface to perceive the physical constraints of the real world using haptic and voice augmentation. The haptic belt vibrates to direct the user towards the travel destination based on the metric localization at each step. Moreover, the travel route is presented using voice guidance, which is achieved by accurate estimation of the user’s location and confirmed by extracting the landmarks, based on landmark localization. The results show that it is feasible to assist a blind user to travel independently by providing the constraints required for safe navigation with user-oriented augmented reality.

Reference [46] developed a sensor module that can be handled like a flashlight by a blind user and can be used for searching tasks within the three-dimensional environment. By pressing keys, inquiries concerning object characteristics, position, orientation and navigation can be sent to a connected portable computer, or to a federation of data servers providing models of the environment. Finally, these inquiries are acoustically answered over a text-to-speech engine.

Reference [47] presents a novel wearable RGBD (red, green, blue and depth)-camera-based navigation system for the BVI. The system is composed of a smartphone user interface, a glass-mounted RGBD camera device, a real-time navigation algorithm, and a haptic feedback system. In order to extract the orientational information of the blind users, the navigation algorithm performs real-time six-degrees-of-freedom (6-DOF) feature-based visual odometry using a glass-mounted RGBD camera as an input device. The navigation algorithm also builds a 3D voxel map of the environment and analyzes 3D traversability. A path planner integrates information from the egomotion estimation and mapping and generates a safe and efficient path to a waypoint delivered to the haptic feedback system. The haptic feedback system, consisting of four micro-vibration motors, is designed to guide the visually impaired user along the computed path and to minimize cognitive load.

Reference [48] proposes an ego-motion tracking method that utilizes Google Glass visual and inertial sensors for wearable blind navigation. The authors introduce a visual sanity check to select accurate visual estimations by comparing visually-estimated rotation with measured rotation by a gyroscope. The movement trajectory is recovered through the adaptive fusion of visual estimations and inertial measurements employing a multirate extended Kalman filter, where the visual estimation outputs motion transformation between consecutive image captures, and inertial sensors measure translational acceleration and angular velocities.
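The idea of the visual sanity check can be sketched for a single rotation axis: integrate the gyroscope rate between two image captures and accept the visual estimate only if the two rotations agree. The tolerance, the single-axis simplification and the function name are assumptions made for illustration, not details from [48].

```python
from typing import Sequence


def passes_sanity_check(
    visual_rotation_deg: float,
    gyro_rates_dps: Sequence[float],
    dt_s: float,
    tolerance_deg: float = 2.0,  # assumed acceptance threshold
) -> bool:
    """Compare the visually estimated yaw change with the integrated gyro yaw."""
    # Rectangular integration of angular rate samples (degrees per second).
    gyro_rotation_deg = sum(rate * dt_s for rate in gyro_rates_dps)
    return abs(visual_rotation_deg - gyro_rotation_deg) <= tolerance_deg
```

Visual estimates that fail the check would simply be withheld from the fusion filter, so that only the inertial prediction is used for that interval.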

Reference [49] presents an indoor navigation wearable system based on visual marker recognition and ultrasonic obstacle perception used as an audio assistance for BVIs. Visual markers identify the points of interest in the environment. A map lists these points and indicates the distance and direction between closer points, building a virtual path. The blind users wear glasses built with sensors such as an RGB camera, ultrasonic sensor, magnetometer, gyroscope, and accelerometer, enhancing the amount and quality of the available information. The user navigates freely in the prepared environment identifying the location markers. Based on the origin point information or the location point information and on the gyro sensor value, the path to the next marker (target) is calculated.

The EU Horizon 2020 Sound of Vision project [50] implements a non-invasive hardware and software system to assist BVIs by creating and conveying an auditory representation of the surrounding environment (indoor/outdoor) to a blind person continuously and in real time, without the need for predefined tags/sensors located in the surroundings. The main focus of the project is on design and implementation of optimum algorithms for the generation of a 3D model of the environment and for rendering the model using spatial sound signals.

Reference [51] presents a novel mobile wearable context-aware indoor maps and navigation system with obstacle avoidance for the blind. The system includes an indoor map editor and an app on a Google Tango device. The indoor map editor parses spatial semantic information from a building architectural model, and represents it as a high-level semantic map to support context awareness. An obstacle avoidance module detects objects in front using a depth sensor. Based on the ego-motion tracking within the Tango, integrating an RGB-depth camera with the capability of 6-DOF ego-motion visual-inertial odometry (VIO) tracking and feature-based localization, localization alignment on the semantic map, and obstacle detection, the system automatically generates a safe path to a desired destination. A speech–audio interface delivers user input, guidance and alert cues in real-time using a priority-based mechanism to reduce the user’s cognitive load.

Travi-Navi [52] is another sophisticated vision-guided navigation system that enables a self-motivated user to easily bootstrap and deploy indoor navigation services on his/her smartphone, without comprehensive indoor localization systems or even the availability of floor maps. Travi-Navi records high-quality images during the course of a guider’s walk on the navigation paths, collects a rich set of sensor readings, and packs them into a navigation trace. The followers track the navigation trace, get prompt visual instructions and image tips, and receive alerts when they deviate from the correct paths. Travi-Navi also finds shortcuts whenever possible. The evaluation results show that Travi-Navi can track and navigate users with timely instructions, typically within a four-step offset, and detect deviation events within nine steps.

Reference [53] introduces a validation framework for an indoor navigation system for BVI users. The validation framework includes the following three main components: (1) virtual reality-based simulation that simulates a BVI user traversing and interacting with the physical environment, developed using Unity game engine; (2) generation of action codes that emulate the avatar movement in the virtual environment, developed using a natural language processing parser of the navigation instructions; and (3) accessible user interface, which enables the user to access the navigation instructions. A case study illustrates the use of the validation tool using the PERCEPT system [26].

2.2.6. Map Matching

Reference [54] presents a wearable navigation system for BVI in unknown indoor and outdoor environments. This system will map and track the position of the pedestrian during the exploration of an unknown environment. In order to build this system, the well-known simultaneous localization and mapping (SLAM) from mobile robotics will be implemented. A similar approach is used in the enhanced RFID system presented in [32]. Once a map is created, the user can be guided efficiently by a route-selecting method. The user will be equipped with a short range laser, an inertial measurement unit (IMU), a wearable computer for data processing and audio bone headphones. The purpose is to gather contextual information to aid the user in navigating with a white cane.

Reference [55] presents an indoor navigation system with map-matching capabilities in real-time on a smartphone. This work presents a map-matching algorithm based on a new reduced-particle filter in order to use these maps later for real-time applications without an expensive laser ranger but relying only on the dual inertial system. It can be used with both pre-processed SLAM maps or with already available maps.

Reference [56] develops path-planning and path-following algorithms for use in an indoor navigation model used to assist BVI within unfamiliar indoor environments and used to reduce the required accuracy of the underlying positioning and tracking system.

The objective of the research in [57] was the implementation of a specific data model and navigation routines for indoor applications. The use of map-matching algorithms in order to enhance the navigation performance is absolutely necessary for indoor applications. The association between the estimated position given by the system and the location on the map database provided improved information to the user.

Reference [58] is another approach for developing indoor navigation systems for BVIs using building information modeling (BIM). BIM provides rich semantic information on all building elements, objects and users located in the building, and allows the extraction of information about the topology of a specified part of the building, which is used by an algorithm for coarse-to-fine pathfinding. The proposed BIM can help solve existing problems in the area of indoor navigation for BVI.

2.2.7. WiFi Multimodal Systems

Reference [59] presents an indoor navigation assistance system combining WiFi and vision information for moving human detection and localization. This combination offers some benefits in comparison with single technology systems such as setup cost, computational time and accuracy. Experimental results show that the proposed technologies are suitable for navigation assistance for visually impaired people. However, so far, the accuracy of the localization solution (1.71 m with reliability of 90%) is insufficient in real applications.

Reference [60] presents a navigation structure for self-localization of an autonomous mobile device by fusing pedestrian dead reckoning and WiFi signal strength measurements. WiFi and inertial navigation systems (INS) are used for positioning and attitude determination in a wide range of applications. Over the last few years, a number of low-cost inertial sensors have become available. Although these sensors exhibit large errors, WiFi measurements can be used to correct the drift that weakens navigation based on them. Conversely, INS sensors complement the WiFi positioning system, as they provide high-accuracy, real-time navigation. A structure based on a Kalman filter and a particle filter is proposed, which fuses the heterogeneous information coming from those two independent technologies. Finally, the benefits of the proposed architecture are evaluated and compared with the pure WiFi and INS positioning systems.

Reference [61] presents an indoor positioning system designed around the requirements of the BVI in terms of accuracy, reliability and interface design. The system runs locally on a mid-range smartphone and relies at its core on a Kalman filter that fuses the information of all the sensors available on the phone (Wi-Fi chipset, accelerometers and magnetic field sensor). Each part of the system is tested separately, as is the quality of the final solution. The system managed a 35% improvement compared to the most advanced Wi-Fi-only algorithm.

2.2.8. Dead Reckoning Systems

Reference [62] describes the construction and evaluation of an inertial dead reckoning navigation system that provides real-time auditory guidance along mapped routes. Inertial dead reckoning is a navigation technique coupling step counting together with heading estimation to compute changes in position at each step. The research described here outlines the development and evaluation of a novel navigation system that utilizes information from the mapped route to limit the problematic error accumulation inherent in traditional dead reckoning approaches. The prototype system consists of a wireless inertial sensor unit, placed at the user’s hip, which streams readings to a smartphone processing a navigation algorithm. Pilot human trials were conducted assessing system efficacy by studying route-following performance with blind and sighted subjects using the navigation system with real-time guidance, versus offline verbal directions.

The proposed system in [63] is based on the inertial measurement unit, which is infrastructure-free and robust. This work investigates the kinematic characteristics of walking. The step frequency detection algorithm and the step length estimation method are developed. Moreover, an effective positioning correction algorithm has been proposed to improve locating accuracy.

2.2.9. Generation of Indoor Navigation Instructions

The complexity and diversity of indoor environments bring significant challenges to the automatic generation of navigation instructions for BVIs. Reference [64] introduces a user-centric, graph-based solution for cane users that takes into account the blind users’ cognitive ability and mobility patterns. The generated graph describes all possible actions in the environment. Each action is assigned a weight, which represents the cognitive load required to cross the specific link. The path is represented by a linked list. Before it is translated into verbal sentences, it is segmented into pieces so that each segment fits into one instruction. The translation method simply concatenates different prepared sentence patterns. The system has been tested successfully with respect to the efficiency of the instruction generation algorithm, the correctness of the generated paths, and the quality of the navigation instructions.

The system presented in [65] generates shoreline-based optimal paths, including a series of recognizable landmarks and detailed instructions, which enable the visually impaired to navigate to their destinations by listening to the instructions on their smartphones. The paths and instructions are generated on a computer installed on an indoor kiosk where the visually impaired user enters his or her destination, and then the generated instructions are wirelessly transferred to the user’s smartphone.

3. The Blind RouteVision Outdoor Blind Navigation Application Experience

Blind RouteVision, formerly BlindHelper, is an earlier application addressing the autonomous safe pedestrian outdoor navigation of BVI. Building upon very precise positioning, it aims to provide an accessibility, independent living, digital escort and safe walking aid for BVI demonstrating unparalleled state-of-the-art functionality. The application operates on Android smartphones, exploiting Google Maps to implement a voice-guided navigation service. In the third quarter of 2016, Android managed to capture a record 88% of the global market, according to Strategy Analytics. These figures reveal not only that the vast majority of people are using Android devices, but also fierce competition between Android smartphone manufacturers, which pushes the product price, including the smartphone cost, below that of any other development platform. In the future, the application will be available for the iOS platform as well, as the perception of iOS superiority in accessibility applications is quite strong in the blind community, although it is also disputed at the same time. From the development viewpoint, however, iOS does not present any clear technical advantage over Android which would benefit the presented application. On the other hand, especially in the past, more blind accessibility applications were available on the iOS platform and therefore many BVIs own iPhone devices. Given these considerations, the cost of the aid is the prevailing factor for the majority of BVIs in Greece, who would prefer an Android version unless they already use some assistive iOS accessibility applications. This was clearly stated in discussions with members of the managing board of the Lighthouse for the Blind of Greece.

The smartphone application is supported by the following external components:

An external embedded device integrating a low power Atmega328p microcontroller. The microcontroller is the cornerstone of the embedded application, as it runs the code responsible for the reception of the geographic coordinates of the moving person, the handling of the keypad and user commands, the sending of application data to the Android application via Bluetooth, as well as measuring the object distance in real time along the route of the visually impaired person. The right choice of microcontroller is a definitive step towards system implementation, with an impact on the total cost and future upgrades of the embedded application.

A high-precision GPS receiver based on the u-blox NEO-6M chip, exploiting up to 16 satellites and leading to a location accuracy better than 0.4 m in the demanding context of pedestrian navigation for visually impaired people. Our trials measured deviations in the order of 10 m, which are critical for pedestrian mobility, between the locations reported by the smartphone GPS tracker and the corresponding real geographic coordinates, while the external GPS tracker deviations were less than 0.4 m, receiving signal from 11 satellites.

A simple hex keypad (4 × 4 matrix) with dual button functionality to allow the BVI to interact easily with the app to select routes and other functions among 32 available offerings.

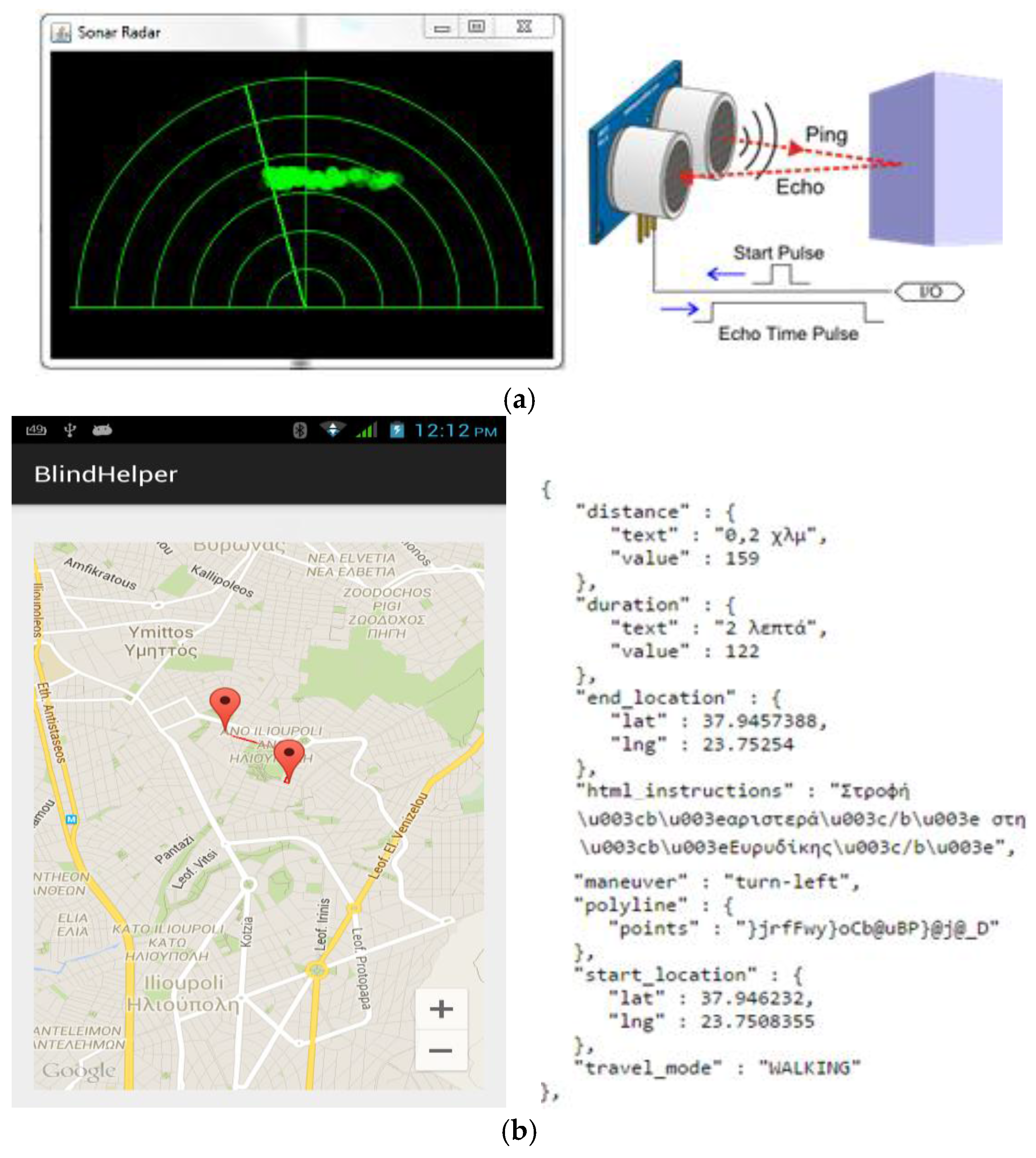

A sonar sensor (HC-SR04) mounted on a servomechanism able to quickly direct the sonar beam across a viewing angle, similar to a radar view function, for the real-time recognition and bypassing of obstacles in the near field along the route of the BVI (see Figure 1a). To minimize the annoying frequent issuing of unnecessary sonar information, the implemented radar view functionality continuously calculates successive measurements and reports only those objects in collision trajectory which are stepwise approached by the BVI. Walking persons can be recognized among fixed obstacles via relevant velocity calculations. In case of a fixed obstacle identified by the radar in collision trajectory towards the BVI, the system issues avoidance commands considering the width of the obstacle (e.g., “Obstacle at 2 m. Move 1.5 m to the right to avoid it”). The sonar/radar function is able to reliably guide the user to walk at a safe distance parallel to building walls along his/her route as well as at a safe distance from parked cars along the pavement. Near-ground obstacles and abnormalities (e.g., curbs, potholes etc.) cannot be easily handled by sonar. The BVI can instead sense such ground obstacles through his/her white cane.
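The filtering of sonar reports described above can be illustrated with a minimal sketch. It is not the firmware of the embedded device: the function names, the speed of sound constant and the 0.3 m/s tolerance are assumptions made for illustration. The idea is that a fixed obstacle closes in at roughly the walking speed of the user, whereas a walking person closes in at a clearly different speed, and an object that is not getting closer is not reported at all.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumption: air at roughly 20 °C


def echo_to_distance_m(echo_time_s: float) -> float:
    """One-way distance for a round-trip ultrasonic echo time."""
    return echo_time_s * SPEED_OF_SOUND_M_S / 2.0


def closing_speed_m_s(prev_m: float, curr_m: float, dt_s: float) -> float:
    """Positive when the object got closer between two successive sweeps."""
    return (prev_m - curr_m) / dt_s


def classify_target(prev_m: float, curr_m: float, dt_s: float,
                    user_speed_m_s: float, tolerance_m_s: float = 0.3) -> str:
    """Classify an echo from two successive distance measurements."""
    closing = closing_speed_m_s(prev_m, curr_m, dt_s)
    if closing <= 0.0:
        return "not approaching"  # would not be reported to the user
    if abs(closing - user_speed_m_s) <= tolerance_m_s:
        return "fixed obstacle"   # approached only because the user walks
    return "moving object"        # e.g., another pedestrian


print(classify_target(3.0, 2.5, 0.5, user_speed_m_s=1.0))
```

In a real deployment the tolerance would have to be tuned against sonar noise and the servo sweep period.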

A Bluetooth module (EGBT-046S based on the CSR BC417 radio chip) interfaced to the microcontroller via a universal asynchronous receiver-transmitter (UART) interface at 9600 baud, which sends the application data from the microcontroller to the smartphone application through serial communication.

Route navigation (see Figure 1b) is accomplished as follows: The Android application reads the microcontroller’s Bluetooth packet with the start and destination location coordinates. Next, the navigation instructions are downloaded in JavaScript Object Notation (JSON) format and an asynchronous parser method decodes the streets and calls the voice navigation method with the HTML instructions, which speaks the navigation using a text-to-speech method to convert the string type instruction to a voice command (e.g., “At 32 m head east on Mavrommateon toward Mezonos → BVI instruction: Head towards the 10 o’clock position. At 56 m slight right onto Thrasivoulou. BVI instruction: Head towards the 1 o’clock position. At 200 m turn left onto Evridikis. Turn left. At 100 m turn right toward Mavili. Turn right. At 44 m turn right. Turn right. Take the stairs. Destination will be on the left. BVI instruction: Head towards the 10 o’clock position.”). Google Maps navigation instructions typically contain details that must be memorized and processed by the user, imposing a significant cognitive and memory load which, unlike for sighted users, can be difficult for a blind user. Therefore, a concern was to simplify the navigation instructions and reduce the cognitive load for the BVI.
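As a rough illustration of the decoding step, the following sketch turns a directions response into plain sentences ready for text-to-speech. It is written in Python for brevity, not in the Android code of the application. The `routes`/`legs`/`steps` nesting and the `html_instructions` and `distance.value` fields follow the layout of the Google Directions API response as we understand it; the sample data and the function name `spoken_steps` are hypothetical.

```python
import html
import json
import re

TAG_RE = re.compile(r"<[^>]+>")  # matches HTML tags such as <b>...</b>

# Hypothetical response fragment, shaped like a directions reply.
SAMPLE = json.dumps({
    "routes": [{"legs": [{"steps": [
        {"distance": {"value": 32},
         "html_instructions":
             "Head <b>east</b> on <b>Mavrommateon</b> toward <b>Mezonos</b>"},
        {"distance": {"value": 56},
         "html_instructions": "Slight <b>right</b> onto <b>Thrasivoulou</b>"},
    ]}]}]
})


def spoken_steps(directions_json: str) -> list:
    """Return one plain-text sentence per navigation step."""
    data = json.loads(directions_json)
    phrases = []
    for leg in data["routes"][0]["legs"]:
        for step in leg["steps"]:
            # Strip markup, decode entities and collapse whitespace.
            text = html.unescape(TAG_RE.sub("", step["html_instructions"]))
            text = " ".join(text.split())
            text = text[:1].lower() + text[1:]
            phrases.append(f"At {step['distance']['value']} m {text}")
    return phrases


for phrase in spoken_steps(SAMPLE):
    print(phrase)
```

Each resulting string could then be handed to the text-to-speech engine, optionally followed by a simplified clock-position instruction for the BVI.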

Additional application features include a configurator to define a list of destinations which can be selected through the keypad, app synchronization with traffic lights, weather information to help with dressing appropriately (selected through the keypad and retrieved by the Android application via a web service), dialing and answering phone calls, emergency notification of family and carers about the current position of the BVI, and the exploitation of dynamic telematics information regarding public transportation timetables and bus stops for building composite routes, which may include public transportation segments in addition to pedestrian segments. The configurator allows an assistant person to easily configure and store destination locations of interest in the application for navigation purposes and to assign destinations and other useful functionality to the keypad buttons. A future work item is to provide a personalized system which can be adapted to the particular needs of the individual users. Furthermore, an innovative and challenging application component is currently under development to provide real-time visual information to the BVI along the route exploiting machine- and deep-learning technology.

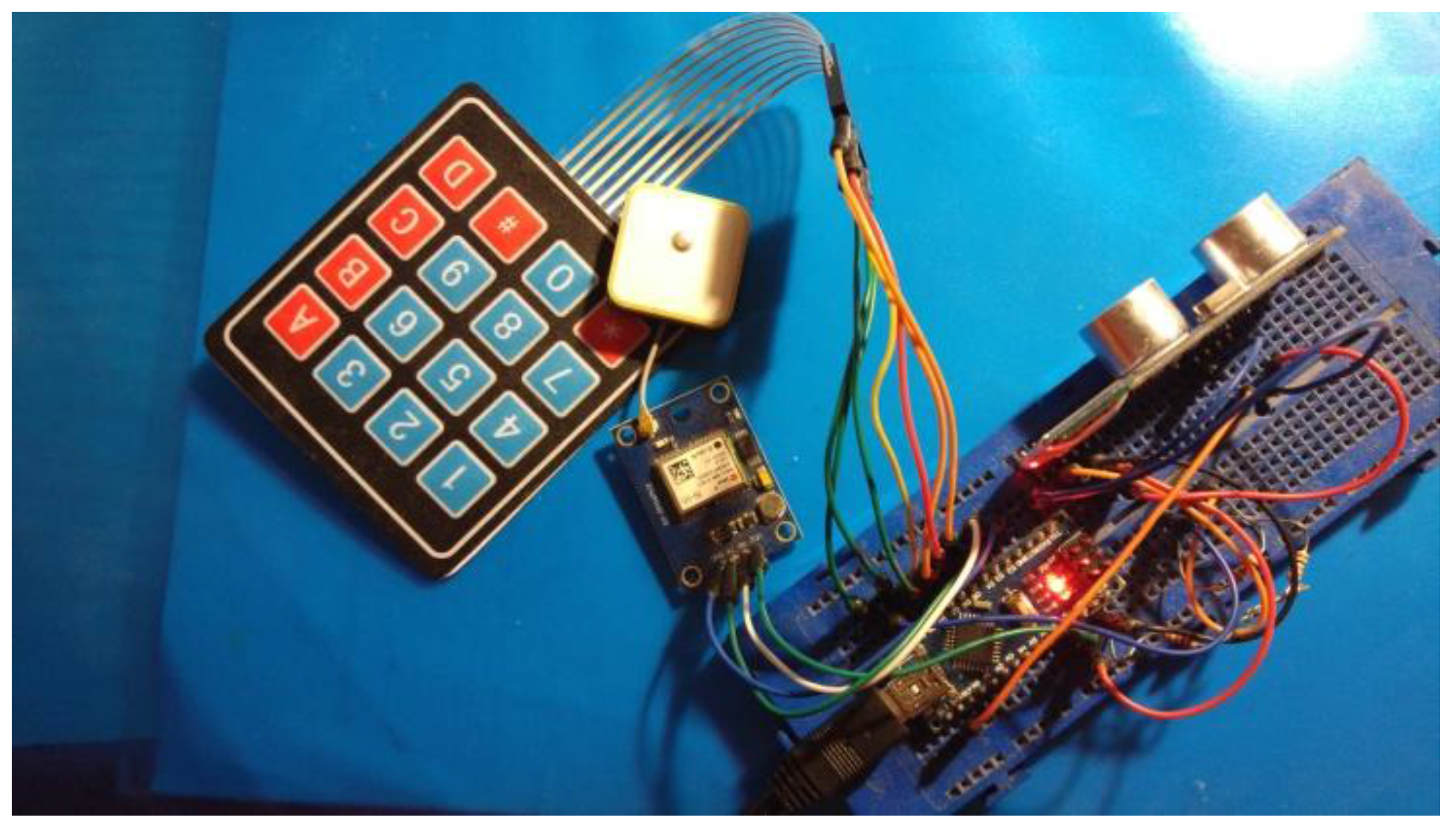

The Blind RouteVision embedded device is a small wearable device meeting the requirement for easy device portability. Currently, a few prototypes (see Figure 2) have been given to the Lighthouse for the Blind of Greece in order to evaluate the system with real users and receive valuable feedback for system functionality improvement. The bill of materials (BOM) cost of the prototype external embedded device is less than $30 (samples price), confirming the absence of financial obstacles in the way of the presented development. The pre-product embedded system is assembled on a small, simple to produce, two-layer printed circuit board (PCB), which is housed in a plastic PCB enclosure, mounting the keypad on the outer surface and with proper openings required for the operation of the sonar sensor.

4. Indoor Positioning System

The operation of the Blind MuseumTourer system relies on a reliable indoor navigation component that will help the BVI implement a self-guided visit across the museum. The system will be able to identify in real-time the position of the BVI in the internal space and guide the user towards the next exhibit along the guide route. When the exhibit is reached, it is presented orally to the user.

Different solutions to the problem of indoor positioning and navigation have been proposed which often prove not reliable enough [66]. The satellite GPS system used for outdoor navigation cannot be a reliable solution for indoor navigation due to the significant attenuation of the satellite signal inside buildings. However, several proposed solutions adopt a similar geometric position-detection method and try to detect the current user location considering the received strength at the user device of the wireless radio frequency (RF) signals from multiple transmitters installed in the internal space. Due to the reflections of the wireless signals in the internal space, a reliable solution to the problem of indoor location detection for autonomous navigation is hard and highly complicated, and quite often the deviation of the calculated location from the real location makes the solution unreliable.

4.1. WLAN-Based Location Determination

Several research efforts have proposed GPS-like solutions to the indoor location detection problem employing multiple WiFi access points. Many such solutions exploit a feature of smart-phone/tablet devices, which calculates the signal strength received at the device from WLAN transmitters operating in the internal space. Both the Android and iOS frameworks provide relevant system calls. Simple implementations in this context usually fail to achieve very good location detection accuracy and normally calculate the current distance of the user device from a WiFi transmitter, considering the WiFi connection, the media access control (MAC) address and the RSSI (received signal strength) indication of the smart-phone/tablet. Knowledge of that distance overlaid on a map-making of the internal space can help estimate a most-likely current user location. The current RSSI value is cross-checked in real time against offline measured normalized signal power level values, stored in a database, across various distances from the transmitter and locations of the indoor place. Using multiple access points can help improve location detection accuracy.
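To make the relation between RSSI and distance concrete, a common textbook choice is the log-distance path loss model. The text above does not state which model such implementations use, so the model itself, the reference power at 1 m and the path loss exponent in the sketch below are assumptions that would need to be replaced by values calibrated on site.

```python
def rssi_to_distance_m(rssi_dbm: float,
                       rssi_at_1m_dbm: float = -40.0,
                       path_loss_exponent: float = 2.5) -> float:
    """Estimate the transmitter distance from a single RSSI reading.

    Log-distance model: rssi = rssi_at_1m - 10 * n * log10(d),
    solved here for the distance d in metres.
    """
    return 10 ** ((rssi_at_1m_dbm - rssi_dbm) / (10.0 * path_loss_exponent))


print(rssi_to_distance_m(-65.0))  # a weaker signal maps to a larger distance
```

Because indoor reflections make single readings noisy, such an estimate is only a starting point, which is why the offline-measured values stored in a database are used for cross-checking.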

In the context of the aforementioned solutions, a few research efforts have demonstrated that it is feasible to achieve adequate location detection accuracy (e.g., [67]), but most implementations employ complex methodologies and require computationally intensive calculations in the applied mathematical models and methods. The generic outline of such solutions is the following:

A dense radio map is made for the indoor space, including processing of various models which consider the RSS from various transmitters in all radio map positions.

During the online operation of the application the current user location is detected using the signal power level values received at the user device from a few access points operating in the indoor space. These signals comprise a received signal strength vector which is best matched in terms of Euclidean distance with a pre-calculated RSSI vector indicating a point on the radio map.

Briefly, the process of implementing the solution comprises the following tasks:

The radio map stores/represents the distribution of the signal power level received from the WLAN access points at every sampling position in the room which is included in the radio map (e.g., a radio map for a 40 m × 50 m indoor space in which the distance between neighboring points is 2 m yields a grid consisting of 500 sampling positions).

Using clustering techniques, the positions in the radio map are clustered according to the coverage range of the WLAN access points, thus reducing the computational requirements.

A discrete space estimator is implemented that returns the radio map position which most likely matches the current user position dynamically represented by an RSS vector.

Using an auto-regressive correlation model, the correlation between successive power level samples from the same WLAN access point is recognized. The aim is to improve the accuracy of the discrete space estimator using the average value of N correlated samples.

A continuous space estimator is implemented which takes as input the estimated discrete position (one of the radio map positions) and outputs a more accurate estimation of the real user position in the continuous space.

A small-scale compensator is implemented to manipulate small-scale variations in the wireless channel.
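The core of the tasks listed above can be sketched in a few lines. This is a simplified illustration, not the method of the cited works: the radio map is a plain dictionary, the correlation handling is reduced to averaging N samples, and the continuous space estimator is approximated by an inverse-distance weighted centroid of the k closest radio map positions, which is an assumption on our part. Clustering and the small-scale compensator are omitted.

```python
import math
from statistics import mean


def average_samples(samples):
    """Average N successive RSS vectors, one value per access point."""
    return [mean(column) for column in zip(*samples)]


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def discrete_estimate(radio_map, rss):
    """Radio map position whose stored RSS vector is closest to rss."""
    return min(radio_map, key=lambda pos: euclidean(radio_map[pos], rss))


def continuous_estimate(radio_map, rss, k=3):
    """Weighted centroid of the k best-matching radio map positions."""
    nearest = sorted(radio_map,
                     key=lambda pos: euclidean(radio_map[pos], rss))[:k]
    weights = [1.0 / (euclidean(radio_map[p], rss) + 1e-6) for p in nearest]
    total = sum(weights)
    x = sum(w * p[0] for w, p in zip(weights, nearest)) / total
    y = sum(w * p[1] for w, p in zip(weights, nearest)) / total
    return (x, y)


# Hypothetical radio map: (x, y) in metres -> RSS in dBm from two APs.
RADIO_MAP = {
    (0.0, 0.0): [-40.0, -70.0],
    (2.0, 0.0): [-50.0, -60.0],
    (4.0, 0.0): [-60.0, -50.0],
}
rss = average_samples([[-49.0, -61.0], [-51.0, -59.0]])
print(discrete_estimate(RADIO_MAP, rss), continuous_estimate(RADIO_MAP, rss, k=2))
```

With hundreds of sampling positions, restricting the search to the cluster covered by the currently visible access points keeps the nearest-neighbor search cheap.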

In conclusion, a reliable WLAN location determination component in support of the Blind MuseumTourer system/application requires extensive preparation actions and trials involving the museums for which the application will be available. Under the assumption that the museum administration gives the necessary permissions for the installation of multiple WLAN access points across appropriate indoor spots and the realization of extensive measurements in the indoor space required to build the radio map, as well as that installation is safe for public health, a WLAN-based solution can accurately determine the position of a moving person [68]. Therefore, it is feasible to implement a system for the autonomous navigation and self-guidance of BVI inside museums using a smartphone which will vocally and accurately guide the user towards the exhibits.

Evidently, the implementation of a reliable WLAN-based location determination system is a challenging project. A rough estimation of the setup effort required only for carrying out the measurements necessary to create a relatively dense radio map for a 30 × 45 m² indoor room involving 600 sampling positions, in which the distance between neighboring points in the grid is 1.5 m, could easily count 1–2 person weeks for just one museum hall. Creating a radio map covering an entire typical museum, such as those which will be addressed by Blind MuseumTourer [3, 4, 5], could easily involve an effort which may well exceed 3–4 person months. The implementation of a simpler and still-reliable WLAN-based system should be feasible considering other parameters such as predetermined, transparent to the public, linear tactile indicators mounted on the floor for BVI, user step counting, positioning information from proximity sensors installed in the indoor space at exhibits, entrances/exits, columns and other guidance spots (see next section).

4.2. Location Detection Using Beacons and Surface Mounted Tactile Guiding Indicators

This section presents an indoor location detection technique which exploits beacons as proximity sensors at the museum exhibits and other guidance spots, along with tactile linear routes mounted on the floor of the museum rooms. A BVI who wishes to have an interactive autonomous navigation inside the museum can easily detect and follow these predetermined routes using the white cane. The marking of the linear blind navigation routes on the floors is according to the inclusive design and implementation guidelines issued by the public administration services for the facilitation of pedestrian movement and the daily routine of people with special needs. These guidelines conform to the international standards for assistive tactile walking through surface indicators (TWSI) and help a BVI travel independently [69, 70]. The guiding indications (such as strips, crossings, warnings and endings) which are surface mounted on the floor of the museum rooms are transparent and non-invasive to the rest of museum visitors and neither affect nor hinder at all their movement.

Figure 3 depicts the main standardized TWSI patterns and suitable simplified indoor guiding strip and warning stud implementations as a tactile orientation system for BVI [71].

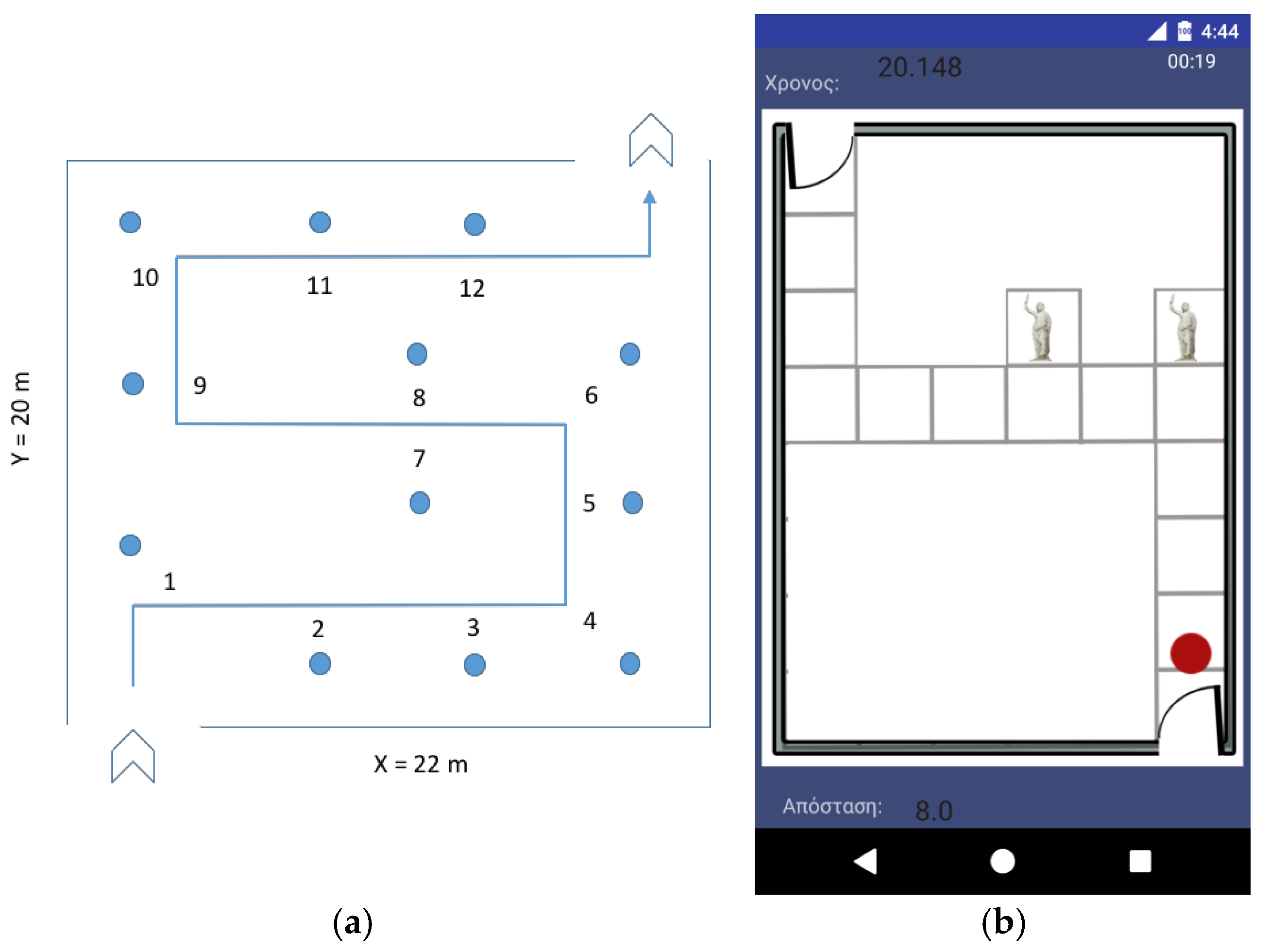

Figure 4a illustrates a typical floor plan of a museum room with an assistive tactile guiding route surface mounted on the floor for autonomous blind navigation/guidance, room dimensions, the positions of the exhibits, as well as the sequence of the exhibits and corresponding vocal presentation during the visit. Similar floor plans of all museum rooms will be designed and integrated with the smartphone application. The lengths of the rectilinear segments of the route are calculated in analogy with the x/y dimensions of the room. Proximity sensors at the exhibits inform the Blind MuseumTourer smartphone application about the current location of the user when an exhibit is approached and vocal presentation follows. The application interoperates with low cost Bluetooth beacons transmitting a small amount of data (the exhibit ID) over a short distance using the Bluetooth low energy (BLE) protocol [72]. The smartphone application receives the beacon signals and determines the user location along the autonomous guidance route. At the same time, besides the beacon-signaled user location, which acts as a discrete space estimator, the application continuously performs inertial dead-reckoning calculations (BVI step counting and corresponding distance calculations, as well as heading and turn recognition) using the smartphone accelerometer. Acting as a continuous space estimator, dead reckoning helps calculate the user trajectory and walked distance and determine the user position in the continuous indoor space. In many cases, a beacon can represent multiple exhibits, for instance exhibits No. 7 and 8 in Figure 4. Figure 4b depicts the corresponding implementation of the concept in an Android activity (see details in Section 5).

BLE beacons usually do not interfere with other wireless networks and/or medical devices. However, undesired interference may occur in the case of multiple WiFi signals, as BLE and WiFi share the same 2.4 GHz frequency band. This potential problem can be avoided by configuring the WLAN access points to use channels 1 and 6–12 only, while Bluetooth uses the rest of the available channels in a uniform manner (frequency hopping).

Whenever the user enters a museum room, the event is detected by the application, which loads the corresponding floor map and initiates the navigation process in the indoor space, such as in Figure 4. The user can interrupt the self-guided tour anytime to dial a call or to proceed to the restroom, the canteen/cafeteria, an information/emergency desk, or the exit. In the latter case, the application guides the user via voice navigation instructions towards the point of interest. The guided tour remains on pause until the user returns to the interruption point following the corresponding vocal instructions to continue the tour.

The Blind MuseumTourer system logs the self-guided tour details for future reference. For instance, at the end of the visit it may make the tour data available to the group leader with the user’s permission, in case the visit to the museum is made by a group of BVI followers (e.g., pupils) with a guide in charge.

The implementation of a reliable indoor location determination system using BLE beacons and assistive tactile linear route surface indicators on the floor of museum rooms enhanced with inertial dead-reckoning calculations is feasible and simpler than the alternative solutions. The initial system prototype integrates an indoor location determination component implemented according to the aforementioned specifications.

5. Blind MuseumTourer Application Functionality

5.1. Application Activities and User Interaction

The Blind MuseumTourer application runs on an Android smartphone. An iOS version will be made available in the near future. The use of headphones is recommended. Since drowning out the nearby ambient sounds can be life-threatening in some cases, especially in outdoor navigation, it is advised to use bone conduction headphones that leave the BVI user’s ears unobstructed, so that the user remains aware of the surrounding environment. When typical headphones are used, it is advised to use a mono headphone, leaving one ear open to the traffic and ambient sounds.

The application is initiated through either a voice command or a widget on the smartphone screen. A welcome splash screen is presented and the application reads a welcome message such as the following, which at the same time informs the user how to interact with the application:

“Welcome to the museum self-guided tour application!

- For your convenience, you may interrupt the guided tour anytime by double tapping at the upper screen to move to the restroom, the cafeteria, or the exit, to talk to the help desk or make a phone call.
- Anytime you wish to go back to the previous menu, double tap at the bottom screen.
- To select an option double-tap the respective left, middle, or right section at the centre screen.
- To hear a selection, please single-tap at the respective screen section.”

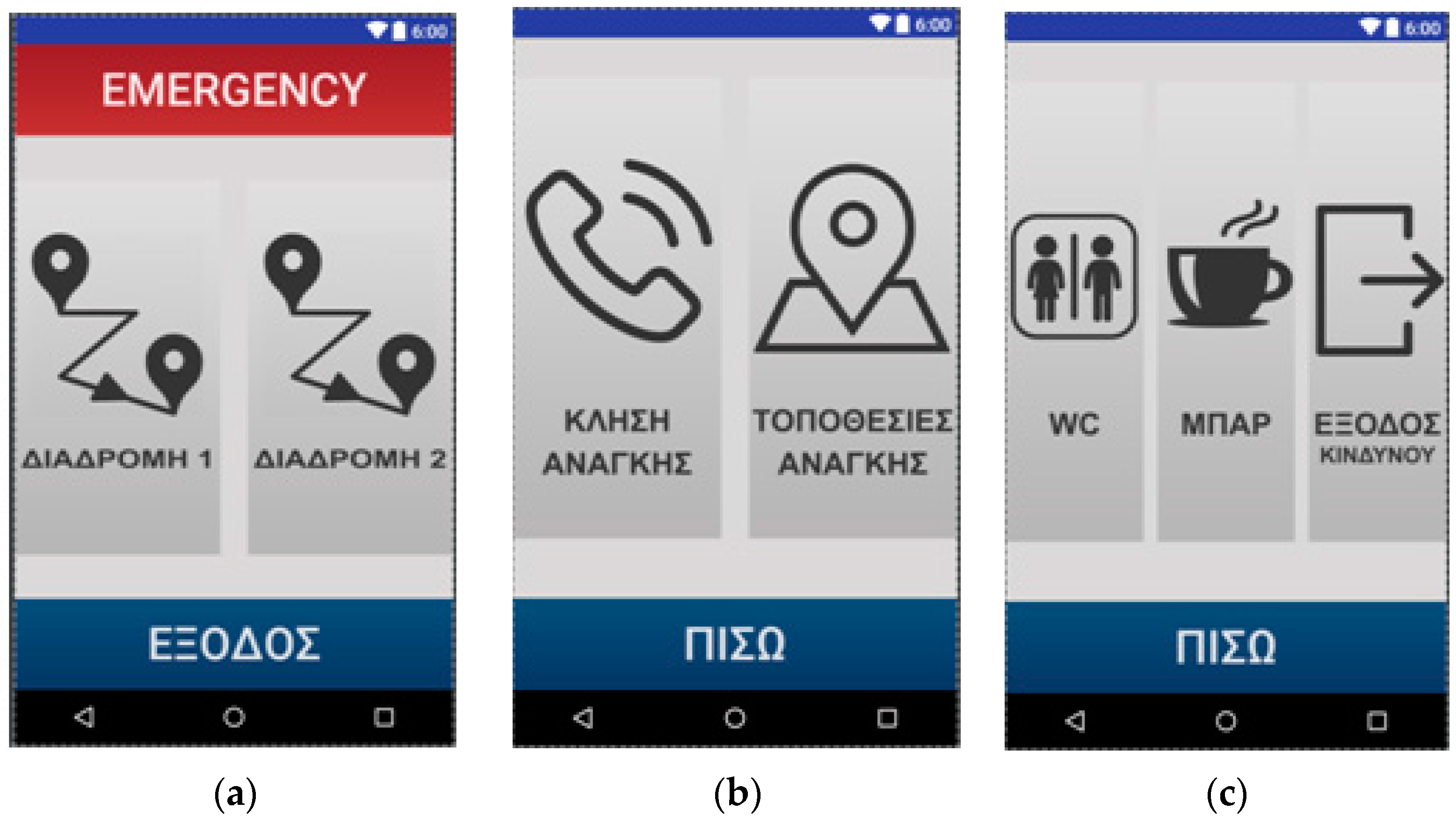

The application functionality is presented below. First-time activation by a user will run a configuration activity in which the user should declare some personal details. The main activity will run next, presenting vocally four options to the user, dividing the smartphone touchscreen into four large sections, which can be easily pointed to by the BVI user: “Routes” 1–2, “Help”, and “Back” (Figure 5a). The user interacts with the application through a single tap to hear a selection, a double tap to confirm a selection, or voice. Selecting a route initiates the self-guided tour activity (Figure 5b). This activity handles the dynamic navigation inside the museum rooms in real-time, determines the user location during the self-guided tour and presents the exhibits approached by the user along the tour route. The help option, available anytime, allows the user to talk/find the way to the help desk (Figure 5c), or find the way to the restroom, to the canteen/cafeteria, or to the exit (Figure 5d), following the voice navigation instructions. Depending on the number of options offered by the application’s activity screens, the smartphone touchscreen is divided into respective sections (Figure 5c presents a bottom “back” touch section and left and right areas in the rest of the touchscreen; Figure 5d divides the major touchscreen into three vertical areas corresponding to the three available options).

Figure 6 depicts indicative summary floor plans with exhibition routes at the National Archaeological Museum and at the Acropolis Museum. The Blind MuseumTourer application integrates an indoor positioning system using beacons and unobtrusive tactile route indicators on the floor, enhanced with inertial dead-reckoning functionality to improve navigation accuracy and reliability, according to the principles presented in Section 4.2.

5.2. Inertial Dead Reckoning (IDR) Calculations

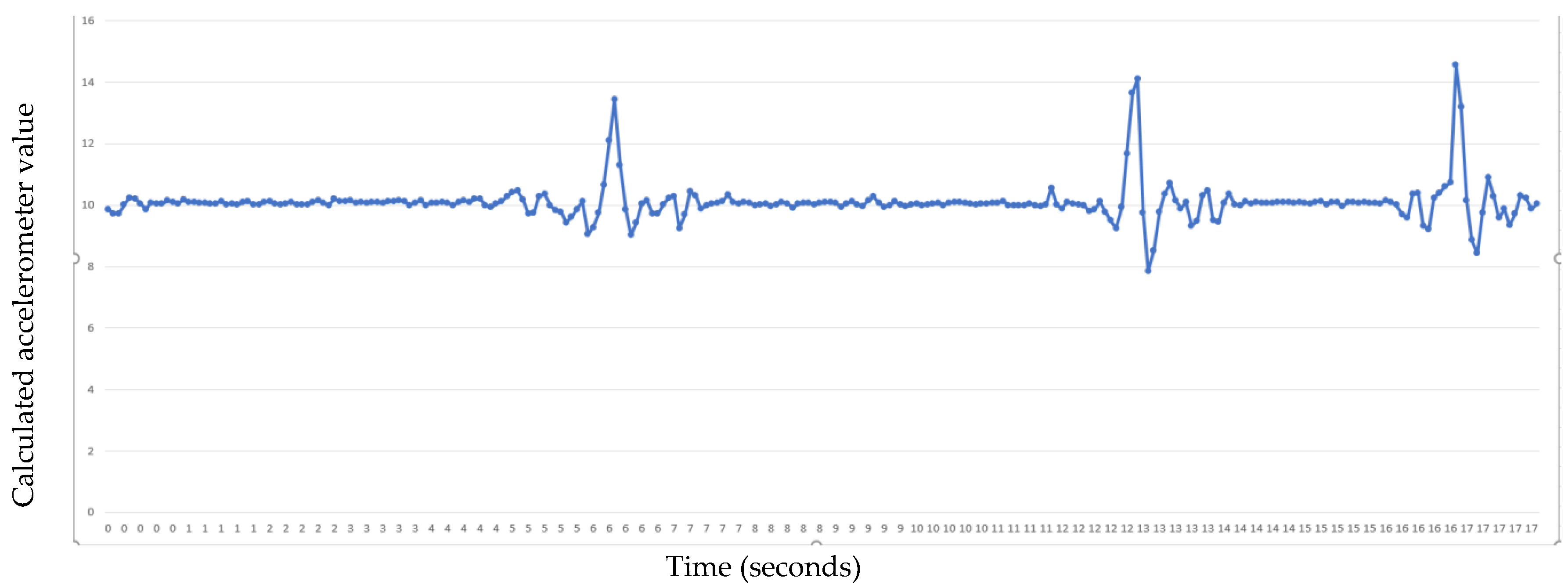

Besides the exploitation of a set of beacons as proximity sensors regarding points of interest (POIs) in the area, the Blind MuseumTourer application relies additionally on a step metering mechanism to implement precise positioning and a reliable system, which is crucial for BVI. Using the integrated accelerometer of the smartphone device, continuously sampling its x, y, z values (see Figure 7), the system continuously calculates and monitors the average BVI stride length (distance from the heel of one foot to the heel of the other foot when taking a step) and pace along route segments of a known distance (e.g., between successive exhibits) in order to issue precise navigation instructions. Before starting the assisted self-guided tour, initial calculations can be performed precisely along a predetermined short route segment. The accelerometer sensor can report motion start and stop accurately and in real time. As the distance between successive exhibits along the navigation route is known, the application notifies the BVI before reaching the next exhibit, announcing the number of remaining steps until the next POI. This way the BVI can be guided more effectively and accurately, through the combined use of beacons and step metering functionality, than with typical positioning solutions relying on the exclusive use of beacons.
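A minimal sketch of the step metering idea follows. It is only an illustration under stated assumptions: steps are counted as upward threshold crossings of the acceleration magnitude, the 11.0 m/s² threshold is a made-up value, and the function names are hypothetical. The stride length is calibrated on a segment of known length and then used to announce the remaining steps to the next POI.

```python
import math


def count_steps(samples, threshold=11.0):
    """Count upward crossings of the acceleration magnitude (m/s^2)."""
    steps, above = 0, False
    for ax, ay, az in samples:
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if magnitude > threshold and not above:
            steps += 1       # rising edge: one new step detected
            above = True
        elif magnitude < threshold:
            above = False    # re-arm the detector for the next peak
    return steps


def stride_length_m(known_distance_m, steps_counted):
    """Average stride over a calibration segment of known length."""
    if steps_counted <= 0:
        raise ValueError("at least one step is needed for calibration")
    return known_distance_m / steps_counted


def remaining_steps(segment_length_m, steps_taken, stride_m):
    """Steps still needed to reach the next POI on the current segment."""
    remaining_m = max(0.0, segment_length_m - steps_taken * stride_m)
    return math.ceil(remaining_m / stride_m)


stride = stride_length_m(5.0, 10)         # calibration walk: 5 m in 10 steps
print(remaining_steps(9.0, 4, stride))    # after 4 steps of a 9 m segment
```

Recomputing the stride on every segment between two beacons would let the estimate follow changes in the user's pace during the tour.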

During the BVI self-guided tour, the step counting and associated distance calculation starts as soon as the user starts walking and is paused when the user stops at an exhibit, where the application reads a recorded message presenting information about the exhibit. Following a spoken presentation session, the application guides the user towards the next exhibit until the self-guided tour is ended or interrupted by the user.

The application further monitors the user turns to verify whether the user has acted according to the turn instructions. This mechanism relies on sensor fusion using the accelerometer and a rotation vector. Sensor fusion reports azimuth, pitch and roll values, with the first two values used to detect the user turn. Finally, using the smartphone magnetometer sensor, the integrated inertial dead-reckoning mechanism detects the user heading in real time through the orientation of the user device relative to the Earth’s magnetic north.
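Turn verification from successive azimuth readings can be sketched as follows. The sketch uses the azimuth only and assumes it is expressed in degrees, increasing clockwise from magnetic north; the 45° decision threshold and the function names are illustrative assumptions, not values taken from the application.

```python
def heading_change_deg(before_deg: float, after_deg: float) -> float:
    """Smallest signed rotation from before to after, in (-180, 180].

    Positive values mean a clockwise (right) turn.
    """
    diff = (after_deg - before_deg + 180.0) % 360.0 - 180.0
    return 180.0 if diff == -180.0 else diff


def classify_turn(before_deg: float, after_deg: float,
                  threshold_deg: float = 45.0) -> str:
    change = heading_change_deg(before_deg, after_deg)
    if change >= threshold_deg:
        return "right"
    if change <= -threshold_deg:
        return "left"
    return "straight"


def turn_matches_instruction(instructed: str, before_deg: float,
                             after_deg: float) -> bool:
    """True when the detected turn agrees with the issued instruction."""
    return classify_turn(before_deg, after_deg) == instructed


print(turn_matches_instruction("right", 350.0, 80.0))
```

The modulo arithmetic handles the wrap-around at north, so a change from 350° to 80° is read as a 90° right turn and not as a 270° left turn.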

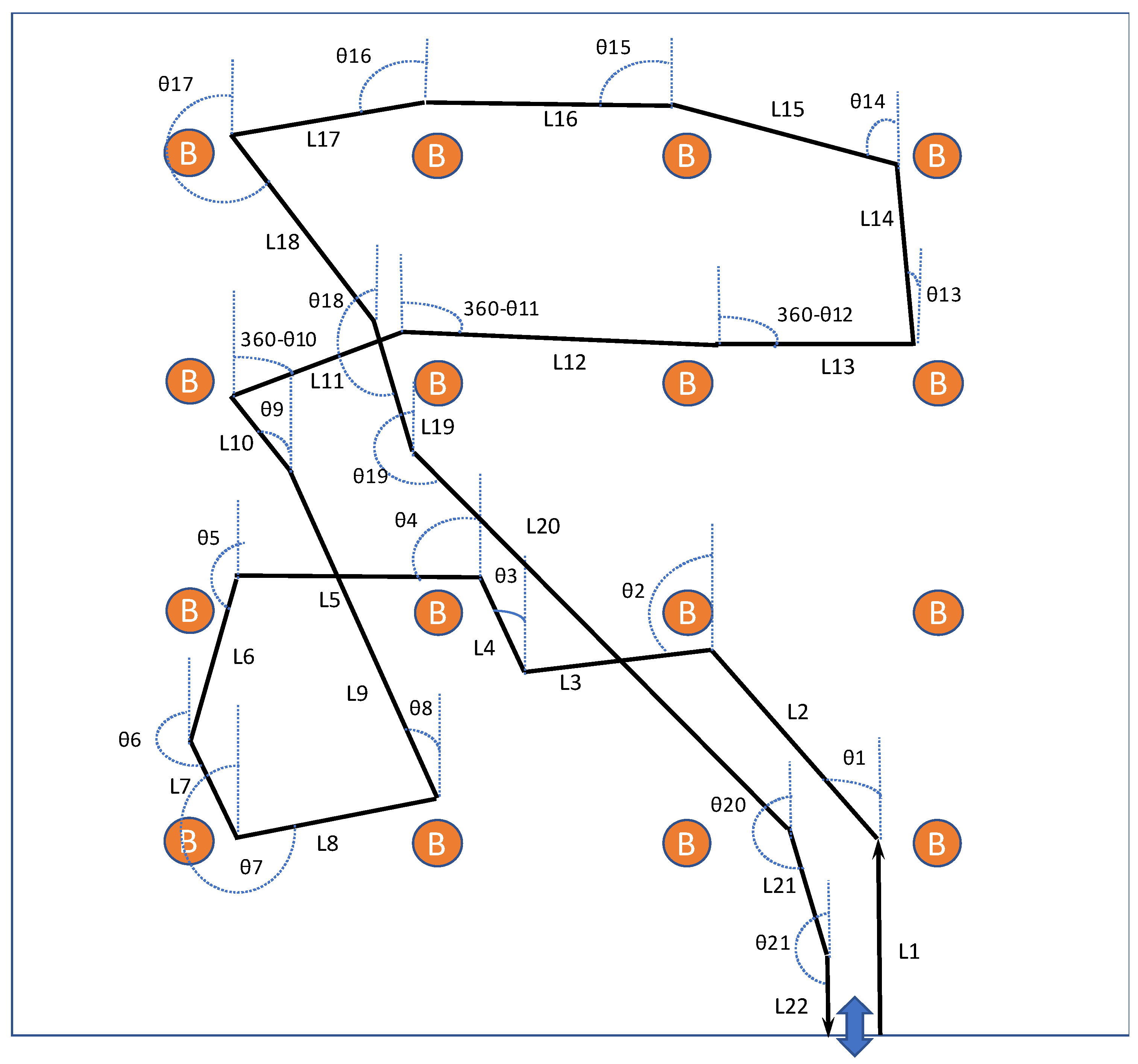

Figure 8 is a schematic depiction of the tracking capability of our hybrid indoor positioning mechanism relying on inertial dead reckoning and exploiting BLE beacons deployed in the indoor environment. Assuming the generic problem of BVI free travel inside the indoor environment, the developed location tracking mechanism can determine the motion trajectory of the BVI and represent it as a sequence of rectilinear segments, closely approximating how blind and visually impaired persons walk. The lengths of the rectilinear segments are estimated using the integrated accelerometer, as explained, while their orientations, shown in the figure relative to the y-axis, can be determined using the integrated magnetometer. Magnetic north is assumed in the direction of the y-axis. In order to enhance the accuracy and reliability of the inertial dead reckoning positioning mechanism, beacons can be exploited for the verification and adjustment of the continuous real-time calculations, as well as for the dynamic parameter valuation and continuous calibration of the tracking mechanism during the execution of the indoor navigation application.
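The hybrid tracking idea can be illustrated with a short sketch. Each rectilinear segment is given by a length and a heading measured clockwise from the y-axis (magnetic north), and a detected beacon is used to pull a drifted estimate back inside its detection range. The correction rule, the 3 m range in the example and all names are assumptions for illustration; the actual calibration logic of the application may differ.

```python
import math


def advance(position, length_m, heading_deg):
    """Move along one rectilinear segment; heading is clockwise from +y."""
    x, y = position
    theta = math.radians(heading_deg)
    return (x + length_m * math.sin(theta), y + length_m * math.cos(theta))


def track(start, segments):
    """Accumulate (length_m, heading_deg) segments into a trajectory."""
    path = [start]
    for length_m, heading_deg in segments:
        path.append(advance(path[-1], length_m, heading_deg))
    return path


def correct_with_beacon(estimate, beacon_pos, beacon_range_m):
    """Clamp a dead-reckoning estimate to the range of a detecting beacon."""
    dx = estimate[0] - beacon_pos[0]
    dy = estimate[1] - beacon_pos[1]
    dist = math.hypot(dx, dy)
    if dist <= beacon_range_m:
        return estimate                  # consistent with the beacon
    scale = beacon_range_m / dist        # project onto the range circle
    return (beacon_pos[0] + dx * scale, beacon_pos[1] + dy * scale)


path = track((0.0, 0.0), [(4.0, 0.0), (3.0, 90.0)])  # 4 m north, 3 m east
print(path[-1])
print(correct_with_beacon((10.0, 0.0), (0.0, 0.0), 3.0))
```

Resetting the estimate at every beacon sighting bounds the drift that dead reckoning would otherwise accumulate over a long tour.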

5.3. Indoor Space Map and Positioning

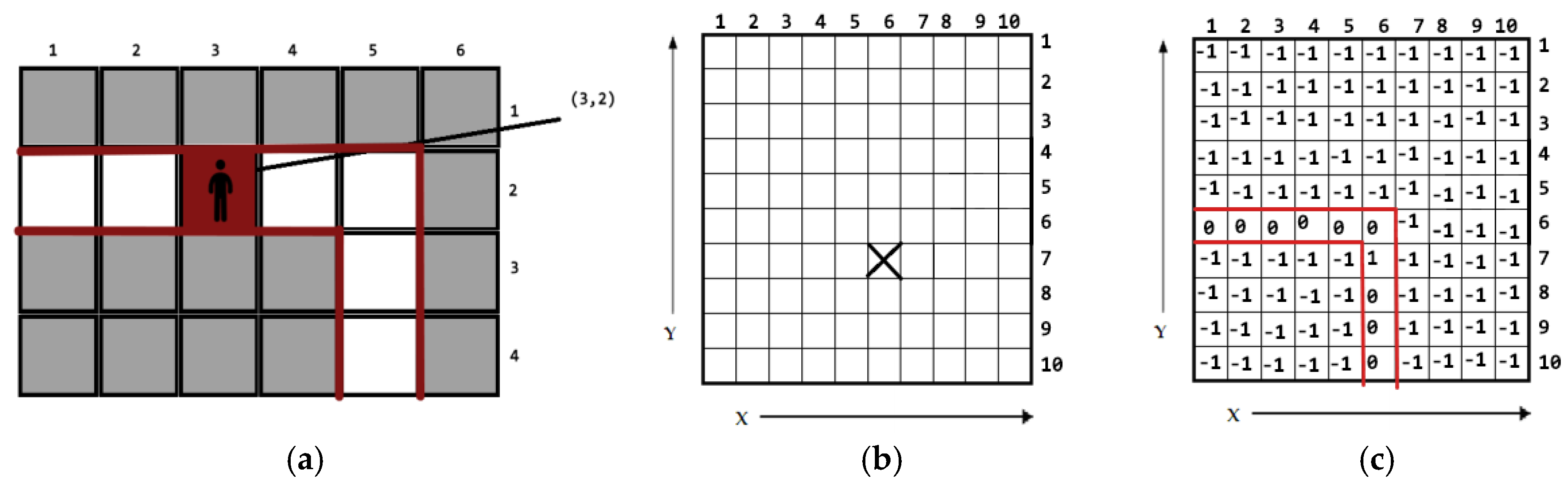

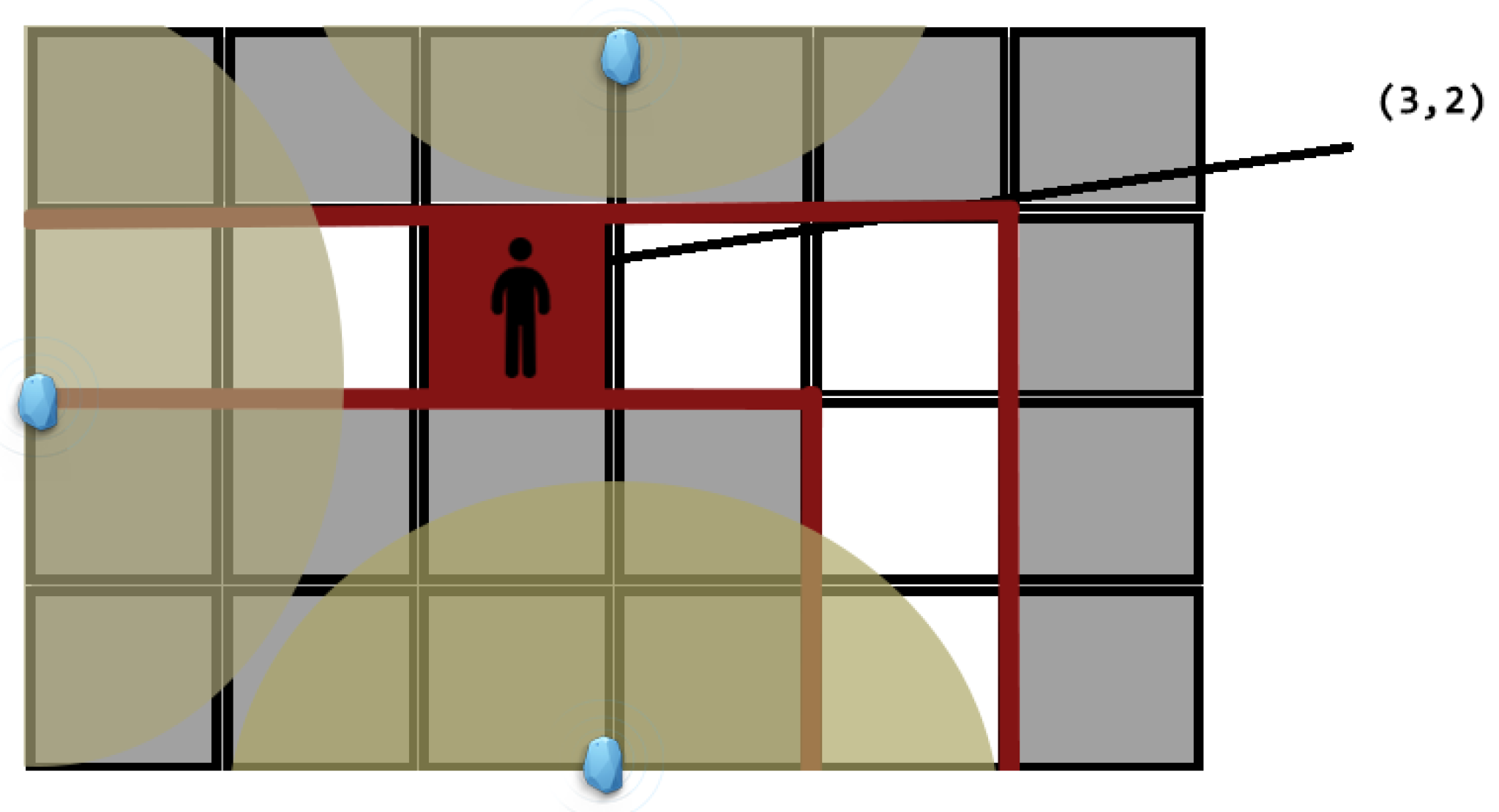

The Blind MuseumTourer smartphone application uses two-dimensional tile maps to represent the indoor spaces and museum halls (see Figure 9). The tile area is set to 1.5 × 1.5 m². Each tile has its own x, y coordinates on the map. The current user position in the room, estimated through the combined beacon proximity and dead reckoning calculations, corresponds to a certain tile, marked red and associated with a true Boolean flag, on the room’s tile map. Moving to a neighboring tile turns the previous tile color white and its Boolean value false. For tiles which are far from the assistive tactile path, which are normally not accessible to the BVI, the flag is set to −1 instead of a Boolean value.
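The tile bookkeeping described above can be sketched as a small class. The three states mirror the flags in the text (current tile, free path tile, inaccessible tile), encoded here as integers; the class name, its methods and the decision to ignore estimates that fall on inaccessible tiles are illustrative assumptions.

```python
TILE_SIZE_M = 1.5                      # tile edge, as stated in the text
INACCESSIBLE, FREE, OCCUPIED = -1, 0, 1


class TileMap:
    def __init__(self, cols, rows, path_tiles):
        # Only tiles on or near the tactile path are accessible.
        self.state = {
            (c, r): FREE if (c, r) in path_tiles else INACCESSIBLE
            for c in range(cols) for r in range(rows)
        }
        self.current = None

    @staticmethod
    def tile_at(x_m, y_m):
        """Tile indices containing a position given in metres."""
        return (int(x_m // TILE_SIZE_M), int(y_m // TILE_SIZE_M))

    def update_position(self, x_m, y_m):
        """Mark the tile under the new estimate and clear the previous one."""
        tile = self.tile_at(x_m, y_m)
        if self.state.get(tile, INACCESSIBLE) == INACCESSIBLE:
            return self.current        # keep the last valid tile
        if self.current is not None:
            self.state[self.current] = FREE
        self.state[tile] = OCCUPIED
        self.current = tile
        return tile


room = TileMap(cols=4, rows=3, path_tiles={(0, 0), (1, 0), (2, 0)})
room.update_position(0.5, 0.5)
print(room.update_position(2.0, 0.2))
```

Keeping the last valid tile when an estimate lands off the path is one simple way to absorb positioning noise; a real implementation might instead snap to the nearest path tile.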

Figure 10 depicts a subset of an indoor guide map with three beacons installed along an assistive tactile path for use by a BVI. The yellowish circular disks illustrate potential user positions that can be detected by the beacons. Through proper calibration using a set of transmit and receive power measurements, the application is able to report highly accurate positions (1 cm error) at distances up to 3 m from a beacon. If two or more beacons detect the user, the nearest beacon is weighted more heavily than the more distant ones when estimating the current user position.
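The weighting of nearer beacons can be sketched as below. The text does not specify the calibration model or the weighting rule, so this example assumes the common log-distance path-loss model for converting received power to distance and an inverse-distance weighted centroid. The default values for `tx_power_dbm` and `path_loss_exponent` are illustrative placeholders that would come from calibration.

```python
def rssi_to_distance(rssi_dbm, tx_power_dbm=-59.0, path_loss_exponent=2.0):
    """Estimate distance in meters from received signal strength.

    tx_power_dbm is the calibrated RSSI at 1 m; both defaults are
    assumed values, not figures from the paper.
    """
    return 10 ** ((tx_power_dbm - rssi_dbm) / (10.0 * path_loss_exponent))


def weighted_position(readings):
    """Inverse-distance weighted centroid of beacon positions.

    readings: list of ((x, y), distance_m); nearer beacons weigh more.
    """
    weights = [1.0 / max(distance, 0.01) for _, distance in readings]
    total = sum(weights)
    x = sum(w * pos[0] for w, (pos, _) in zip(weights, readings)) / total
    y = sum(w * pos[1] for w, (pos, _) in zip(weights, readings)) / total
    return x, y
```

With one beacon 1 m away at (0, 0) and another 3 m away at (4, 0), the estimate lands at x = 1, closer to the nearer beacon than the midpoint.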

Figure 4b illustrates a simple example of the implemented concepts, depicting a room tile map, an assistive tactile path with turns and points of interest (e.g., exhibits, help desk, exit etc.). The time on the upper left part of the screen reports the estimated time in seconds until the user reaches the next exhibit depending on his/her average speed. The value reported on the upper right is the total elapsed application time. The value reported on the lower left part of the screen is the distance in meters until the next exhibit.

6. Blind IndoorGuide: A Complete Solution for Blind Indoor Navigation

This section briefly discusses the evolution of Blind MuseumTourer into a generic IndoorGuide application and provides a brief technical insight into the navigation guidance process. The concepts and corresponding implementation presented in this paper, exploiting surface-mounted assistive tactile indicators, beacons as proximity sensors to POIs, inertial calculations and smartphone functionality, can be applied in a similar way to address the problem of blind indoor navigation in many other indoor guidance use cases. BVI public indoor guidance needs can be addressed in hospitals, shopping malls, airports, train stations, public and municipality buildings, office buildings, university campus buildings, hotel resorts, passenger ships, etc. The presented application for indoor navigation can be easily modified and customized into a specialized Blind IndoorGuide app, depending on the use case, and can assist a BVI in self-guided navigation inside complex buildings with hundreds of POIs. The main difference between a Blind IndoorGuide application addressing a particular use case and MuseumTourer is the integration of a simple and efficient destination/POI selection mechanism, which triggers the application to issue proper way-finding instructions from the current user position to the selected destination POI using a shortest path routing algorithm. In this way, the BVI can navigate to a sequence of indoor POIs among a large available set of POIs.
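The text does not name the shortest path routing algorithm, so the sketch below uses Dijkstra's algorithm as one reasonable choice. The graph layout (POIs as nodes, tactile path lengths in meters as edge weights) and the POI names in the usage note are hypothetical.

```python
import heapq


def shortest_path(graph, start, goal):
    """Dijkstra over a dict graph: {node: {neighbor: length_m}}.

    Returns (path, total_length_m), or None when goal is unreachable.
    """
    dist = {start: 0.0}
    prev = {}
    heap = [(0.0, start)]
    visited = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in visited:
            continue
        visited.add(node)
        if node == goal:
            break
        for neighbor, length in graph.get(node, {}).items():
            candidate = d + length
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                prev[neighbor] = node
                heapq.heappush(heap, (candidate, neighbor))
    if goal not in dist:
        return None
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return path, dist[goal]
```

For a small map with edges entrance-A (5 m), A-B (4 m), entrance-B (12 m) and B-exit (3 m), the route from the entrance to the exit goes through A and B with a total length of 12 m.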

Figure 11 illustrates the floor plan of the Department of Emergency Incidents of Evangelismos Hospital, the largest Greek hospital in Athens. It additionally depicts an overlay drawing with navigation paths and POIs (red line segments and dots respectively), including connectors to other floors, handled by our Blind HospitalGuide application. The application is able to guide the BVI between any two points (red dots) across the navigation map. Evangelismos, like any large hospital, comprises numerous departments and dozens of medical units (nursing, diagnosis, surgical etc.) arranged across many floors. This is just an example of what a typical complex floor plan and navigation path with many POIs inside a large building or complex indoor environment looks like, and the required complexity addressed by a Blind IndoorGuide application. Connectors to other floors are used to interconnect floor navigation graphs during runtime depending on the destination POI selected by the user, thus enabling navigation across the whole building.
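Interconnecting floor navigation graphs through connectors can be sketched as follows. This is an assumed data layout, not the Blind HospitalGuide implementation: each floor is a dictionary graph, nodes in the merged graph are keyed by `(floor, name)`, and each connector carries an illustrative traversal cost.

```python
def link_floors(floor_graphs, connectors):
    """Merge per-floor graphs into one building graph.

    floor_graphs: {floor: {node: {neighbor: length_m}}}
    connectors: list of ((floor_a, node_a), (floor_b, node_b), cost_m)
    Nodes in the result are (floor, node) tuples.
    """
    merged = {}
    for floor, graph in floor_graphs.items():
        for node, neighbors in graph.items():
            edges = merged.setdefault((floor, node), {})
            for neighbor, length in neighbors.items():
                edges[(floor, neighbor)] = length
    # Connectors (stairs, lifts) join floors in both directions.
    for end_a, end_b, cost in connectors:
        merged.setdefault(end_a, {})[end_b] = cost
        merged.setdefault(end_b, {})[end_a] = cost
    return merged
```

Only the floors needed for the selected destination have to be passed in, which matches the idea of linking floor graphs at runtime.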

7. System Evaluation

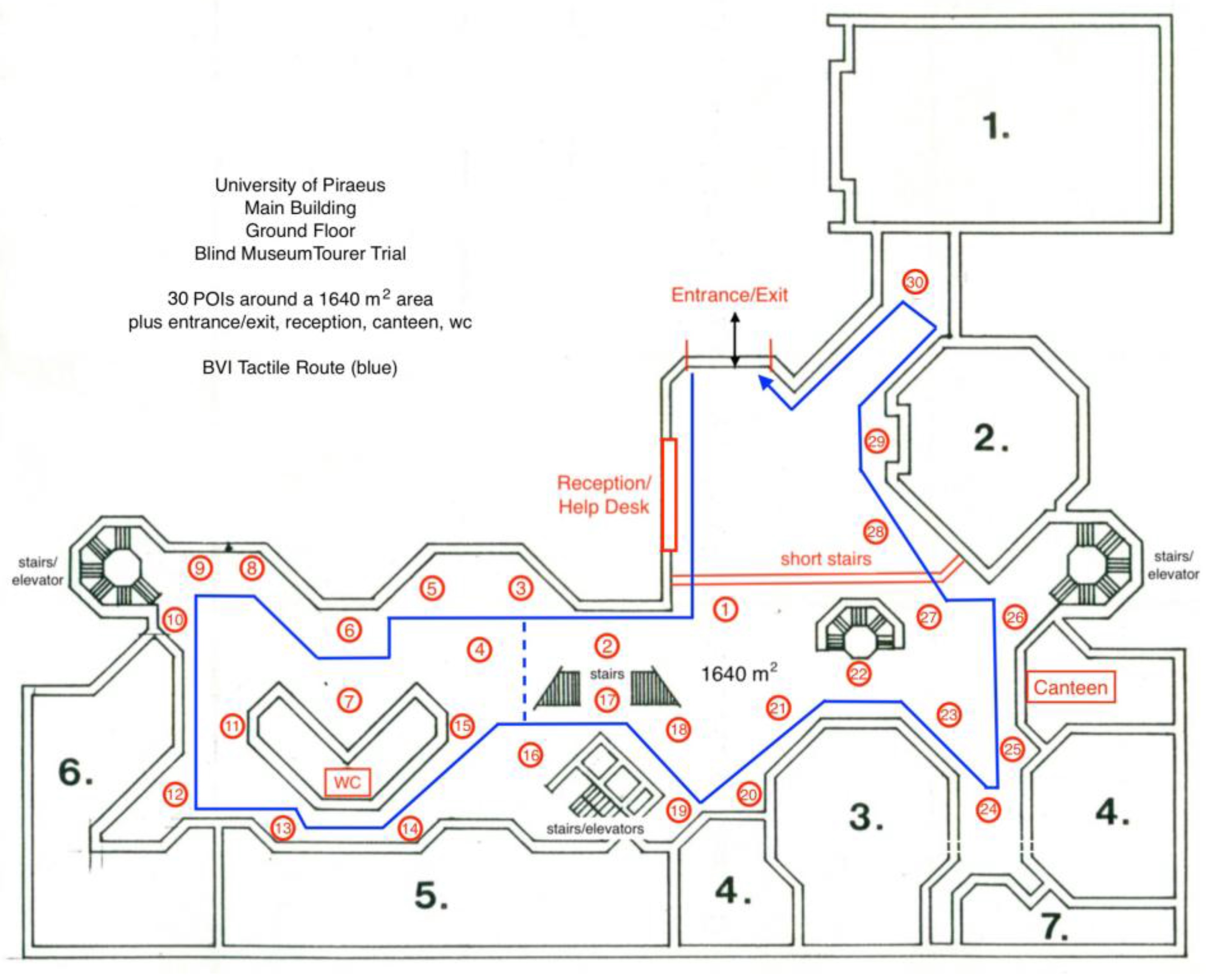

Successful tests of the presented system were performed with three blindfolded sighted users in the ground floor area of the University of Piraeus’ main building (Figure 12). The users were given a Blind MuseumTourer smartphone and a cane at the entrance of the building. Guidelines on how to use the application and the surface-mounted indicators were provided by the trial staff, and the blindfolded users were left to navigate around the 1640 m² space following a predetermined tour between several POIs (30 POIs representing museum exhibits plus another four POIs: entrance/exit, reception/helpdesk, canteen/cafeteria and rest room), guided by the Blind MuseumTourer smartphone application. The users were also able to interrupt the tour at any time by asking the application to guide them to a specific place (any of the four non-exhibit POIs) and subsequently either resume the tour from the interruption point or move to the exit. In that case, the application can exploit two shortcuts available in the tour ring (between POIs No. 3 and 16, as well as across the short stairs) to shorten the path to the requested POI. At the end of the tour, when the blindfolded users had reached the exit, they were asked to evaluate three critical factors of the indoor navigation application: reliability, instruction efficiency and ease of use. All users gave excellent grades in all aspects. Besides this important subjective evaluation, the trial additionally evaluated the inertial dead reckoning localization error per path segment between successive POIs.

The problem of blind users possibly colliding during self-guided navigation is resolved either by enhancing the Blind MuseumTourer system with the external sonar device of the Blind RouteVision outdoor navigation system (see Section 3) and mixing the sonar warnings with the navigation instructions, or by implementing server-side software and additional functionality in the smartphone application to monitor in real time the positions of all blind persons navigating in the place at the same time. In the latter case, the Blind MuseumTourer application keeps reporting the current BVI position to the application server, while reading the positions of other BVIs along the navigation path. When a collision with the blind person in front or behind is imminent, the application promptly warns the user. Alternatively, a sonar unit such as the Smart Guide developed by the Lighthouse for the Blind of Greece [73] can be used in parallel with the Blind MuseumTourer application to ensure collisions along the navigation path are avoided.
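The server-assisted variant can be illustrated with a short proximity check. This sketch assumes that the server returns the positions of other users as map coordinates in meters; the function name and the 2 m warning distance are hypothetical.

```python
import math


def users_too_close(my_position, others, warn_distance_m=2.0):
    """Return (user_id, distance_m) for users within the warning distance.

    others: {user_id: (x, y)} as read from the application server.
    warn_distance_m is an assumed threshold, not a value from the paper.
    """
    close = []
    for user_id, (x, y) in others.items():
        distance = math.hypot(x - my_position[0], y - my_position[1])
        if distance <= warn_distance_m:
            close.append((user_id, distance))
    # Nearest users first, so the most urgent warning is issued first.
    return sorted(close, key=lambda item: item[1])
```

A non-empty result would trigger a spoken warning; telling users in front from users behind would additionally require the user heading.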

Table 1 summarizes the measurements logged by the application during the trial. The length of the trial tour ring was 246.2 m. The table lists the path segment lengths (in meters) between successive POIs, as well as the average time (in seconds) required by the blindfolded users to walk the distance between successive POIs. The average total travel time assuming no stops at POIs was 1177 s (19 min and 37 s). When reaching an exhibit POI, the user stops for 30 s to hear the narration and touch the exhibit. Therefore, another 900 s is added to the average total time of the self-guided tour, bringing it to approximately 35 min. Path segments between successive POIs that contain surface-mounted warning signs for a direction change understandably take longer to travel than straight segments of the same length.
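The tour timing above can be checked with a few lines of arithmetic. The figures are taken from the text; the variable names are chosen here for illustration.

```python
WALK_TIME_S = 1177      # average travel time with no stops
EXHIBIT_COUNT = 30      # exhibit POIs on the tour
STOP_TIME_S = 30        # narration and touch time per exhibit
TOUR_LENGTH_M = 246.2   # length of the trial tour ring

stop_total_s = EXHIBIT_COUNT * STOP_TIME_S        # 900 s
tour_total_s = WALK_TIME_S + stop_total_s         # 2077 s
minutes, seconds = divmod(tour_total_s, 60)       # 34 min 37 s
average_speed_m_s = TOUR_LENGTH_M / WALK_TIME_S   # about 0.21 m/s

print(f"{tour_total_s} s = {minutes} min {seconds} s")
print(f"average walking speed: {average_speed_m_s:.2f} m/s")
```

The total of 2077 s corresponds to 34 min 37 s, which the text rounds to 35 min.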

Table 2 illustrates an extract of the navigation instructions during the self-guided tour.

As already stated, the trial evaluated the inertial dead reckoning localization error (in centimeters) per path segment between successive POIs. To this end, specific measurement functionality was implemented in the Blind MuseumTourer application, subtracting the localization information calculated by Inertial Dead Reckoning (IDR) from the beacon-based one for each segment. Although surface-mounted warnings signaling a direction change distort the distance calculated from the user steps, our IDR mechanism achieves a lower than average error rate on path segments that include such warnings by exploiting the smartphone’s integrated gyroscope. The gyroscope triggers the IDR mechanism to re-initialize its calculations after a direction change, so that error accumulates only until the next exhibit is reached. The resulting error is much smaller and typically corresponds to the fraction of the last straight segment over the total path length between the successive POIs containing the direction change warning sign. This yields a 2.53% average error rate for our IDR mechanism, which compares favorably with the typical IDR error rates (5–10%) reported in the literature.
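The per-segment error measurement can be sketched as below. The text does not give the exact formulas, so this is one plausible reading: the error rate of a segment is the absolute difference between the IDR and beacon-based lengths relative to the beacon-based length, the average is an unweighted mean over segments, and re-initialization after a turn scales the error by the share of the last straight part. All names and the sample numbers in the usage note are hypothetical and are not trial data.

```python
def segment_error_percent(idr_length_m, beacon_length_m):
    """Relative IDR error for one segment, in percent (assumed definition)."""
    return abs(beacon_length_m - idr_length_m) / beacon_length_m * 100.0


def mean_error_percent(segments):
    """Unweighted mean error over (idr_length_m, beacon_length_m) pairs."""
    errors = [segment_error_percent(idr, ref) for idr, ref in segments]
    return sum(errors) / len(errors)


def error_after_reinit(last_straight_m, segment_length_m, base_error_percent):
    """Error share when IDR restarts after a direction change.

    Only the last straight part of the segment accumulates drift, so the
    error is scaled by its fraction of the whole segment (one reading of
    the text, not a confirmed formula).
    """
    return base_error_percent * last_straight_m / segment_length_m
```

As an illustration with made-up values, a 4% error on an 8 m segment whose last straight part is 2 m long would shrink to 1% after re-initialization.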

8. Discussion

Unlike outdoor navigation, which exploits GPS technology to accurately resolve the problem of dynamic location determination and modern geo-information systems for routing determination, such as OpenStreetMap, Google Maps and Apple Maps services, there is no global solution to the indoor navigation problem. Especially regarding the problem of blind outdoor pedestrian navigation, there are several high-precision and reliable research and commercial systems available. Besides the accuracy issue, ease of use is another critical factor for system adoption by the BVI.

Regarding indoor navigation, the main problems to be tackled relate to both concerns of blind navigation, namely positioning and mapping. On the one hand, the accuracy of the positioning system is often unsatisfactory, with slight or major deviations from the real position coordinates causing problems for BVI users. On the other hand, unlike outdoor map services exploiting satellite Earth observation technology, the indoor environment maps of indoor navigation applications are created on a per-case basis. This requires considerable effort, involving time-consuming setup work by staff and on-site visits to the indoor environments for the collection of map-related measurements.

Nowadays, several technologies or technology combinations achieve very precise indoor positioning (see Section 2.2 and Section 4.1), such as RFID and various other landmark/POI-understanding techniques, or WiFi systems combined with inertial dead reckoning, sophisticated WLAN location determination, 3D space sensing and augmented reality systems, etc. Most such systems either entail an increased cost of indoor navigation equipment for the BVI user (e.g., RFID reader, mobile robot, specialized camera units, etc.) or require timely high-performance computations (e.g., 3D space representation and augmented reality systems). Furthermore, the established role of smartphones in indoor navigation applications is undisputed nowadays. Their PC-like computational performance, integrated camera and inertial sensors, touch user interface, microphone, speaker, and speech recognition functionality, which enhances accessibility, all packed into one small wearable device, make them a compelling candidate device for blind indoor navigation applications.

Taking into account the observations made in the previous discussion, as well as the uncompromising requirement for ease of use in blind indoor navigation applications, we proposed and implemented a competitive system demonstrating the following advantages over other state-of-the-art solutions:

Achieving exceptional indoor positioning accuracy by combining assistive tactile walking surface indicators (TWSIs) according to the International Organization for Standardization ISO 23599:2012 standard, inertial dead reckoning functionality, and BLE beacons as proximity sensors to POIs inside the indoor space.

The adoption of TWSIs has the advantage of giving the BVI a strong feeling of safety and protection during indoor navigation.

The adoption of BLE beacons over passive RFID tags to implement the positioning of POIs through close proximity identification allows the formation of one-to-many active–passive relations between an indoor space and its BVI users at a firm unitary cost which sums up the cost of the set of beacons installed in the indoor space. The alternative of implementing one-to-many passive–active relations between an indoor space and its BVI users entails a multiple total cost proportional to the number of BVIs using the blind indoor navigation application (e.g., requirement for an individual RFID reader per BVI).

Our system emphasizes the decisive role of the smartphone as an enabling device in the context of blind indoor navigation applications, interacting via Bluetooth with the indoor environment beacons that represent the POI positions. It showcases that no additional specialized user equipment is required to adequately address the application. The only requirement is to secure the smartphone in a belt around the waist so that it constantly follows the body orientation. BVIs holding their white cane in the right hand can secure the device on their left side, and vice versa for left-handed BVIs, so that they can easily interact with the touchscreen using their free hand. This approach was presented to blind users at the Lighthouse for the Blind of Greece and was well received.

Besides the key importance of positioning accuracy and system reliability, our work emphasizes the importance of another key requirement for BVI adoption, which is “ease of use” through proper acoustic and touch interfaces. Unfortunately, several state-of-the-art applications disregard or do not focus enough on this critical issue. Any state-of-the-art indoor navigation application should be typically easy to use.

Finally, a successful blind indoor navigation application further relies decisively on the required indoor space mapping in the system context. Our system resolves the typical trade-off regarding indoor positioning systems between the quality of positioning accuracy and the complexity and cost of the manual indoor mapping process in favor of the simplification of the floor mapping process, while at the same time not compromising system positioning accuracy and reliability.

Future work on the Blind MuseumTourer and other IndoorGuide applications will focus on the optimization of our inertial dead-reckoning mechanism (see Figure 8) and BVI acoustic user interface (see Table 2), including simple POI selection, as well as an extensive and insightful system validation with blind users. Following the pilot project with the Tactual Museum, Blind MuseumTourer will be configured and proof tested at the National Archaeological Museum and at the Acropolis Museum. In addition, several Blind IndoorGuide use cases will be implemented and evaluated in the future, such as addressing BVI indoor navigation in hospitals and other public indoor environments (see

Section 6 and