Mitigating Wind Induced Noise in Outdoor Microphone Signals Using a Singular Spectral Subspace Method

Abstract

1. Introduction

2. Rationale

3. The Method

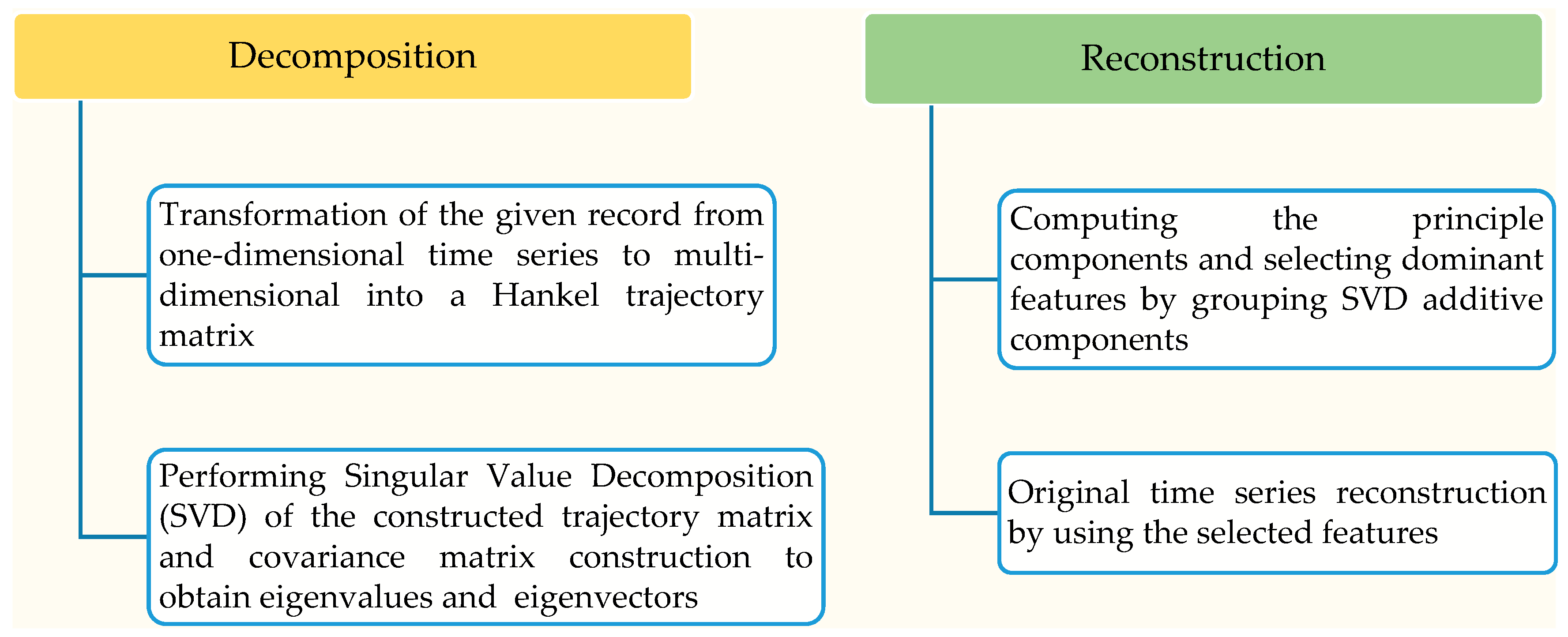

3.1. Overview

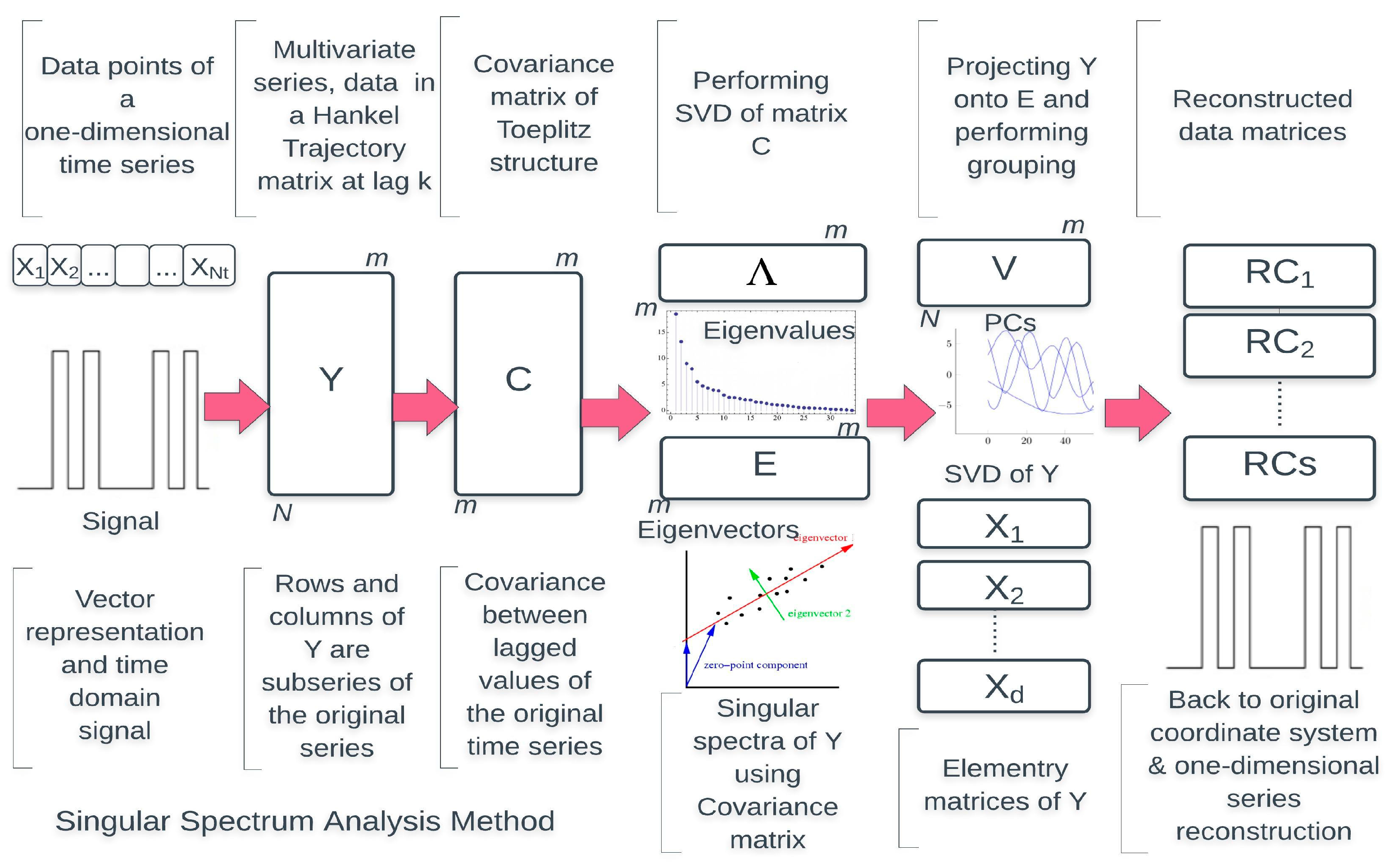

3.2. The SSA Theory

3.3. Mathematical Formulation of the SSA Method

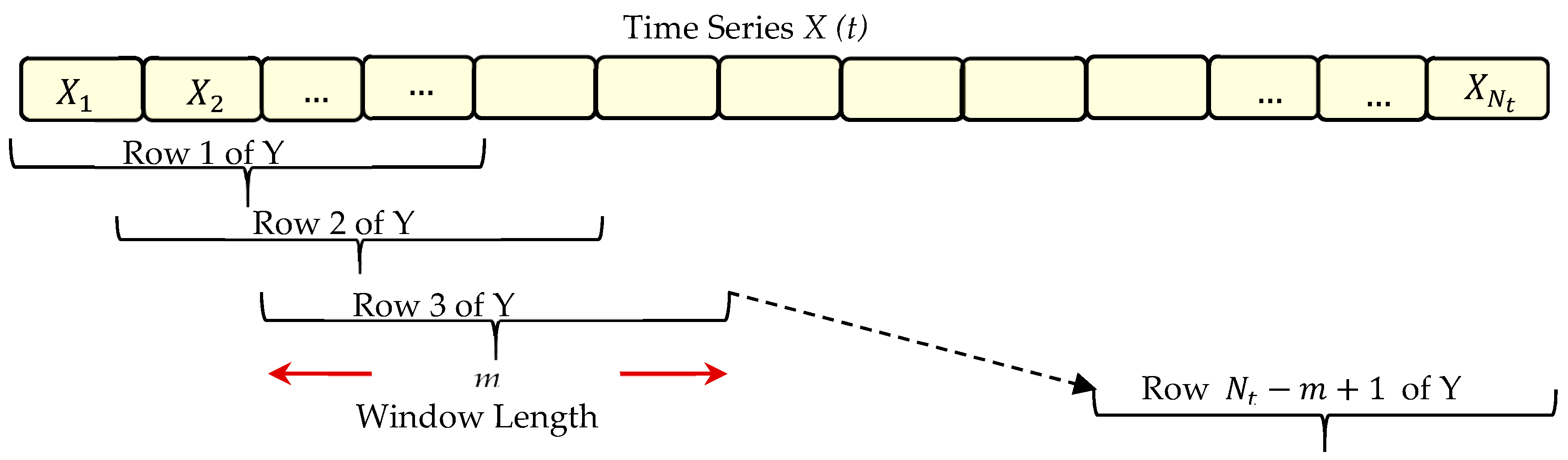

3.3.1. Embedding Process

- Step One: Vector Representation

  In practice, SSA is a nonparametric spectral method based on embedding a given time series $\{X(t): t = 1, \ldots, N_t\}$ in a vector space. The vector $X$, whose entries are the data points of the time series sampled at regular intervals, fully defines and describes that series [27,30,40]. Consider a real-valued time series $X(t) = (x_1, x_2, \ldots, x_{N_t})$ of length $N_t$ with data points $x_1, x_2, \ldots, x_{N_t}$. This series can be represented as a column vector, as shown in Equation (1):

  $$X = (x_1, x_2, \ldots, x_{N_t})^{T} \tag{1}$$

  This column vector is the original time series at zero lag (i.e., when there is no delay, $k = 0$). For a given window length $m$, the lag $k$ ranges from 0 to $m - 1$ in steps of one lag, as in Equation (2):

  $$k = 0, 1, 2, \ldots, m - 1 \tag{2}$$

  Hence, the window length $m$ must be chosen suitably, since it fixes the lags $k$ needed to construct a new matrix according to delay coordinates. In SSA jargon, this matrix is called the “embedded time series” or trajectory matrix and is denoted by $Y$. The window length is also called the embedding dimension, and it represents the number of time-series elements in each snapshot [27,30,33,40].

  The whole SSA procedure depends on the selection of this parameter and on the grouping criteria. These two aspects are key to reconstructing a noise-free series from a noisy one. Different rank-one matrices obtained from the SVD can be selected and grouped so that they are processed separately. If the groups are properly partitioned, they reflect different components of the original time record [27,40].

- Step Two: Trajectory Matrix Construction

  The trajectory matrix contains the original time series in the first column and, in each subsequent column, a copy of that series shifted by one further lag. From Equation (2), the column vector shown in Equation (1) corresponds to $k = 0$. As explained in [31], delay coordinates give a total of $m$ column vectors. These vectors have the same size as the first column vector but are shifted by one lag each, at $k = 1$, $k = 2$, up to $k = m - 1$. In this first method, the last rows are padded with zeros according to the delay.

  In the second method, the snapshots of the time series are arranged as row vectors and the last rows are not padded with zeros [27,40]. As an example, assume an embedding dimension $m = 4$, so that, according to Equation (2), only the lags $k = 0$, 1, 2 and 3 are considered. In this case, a trajectory matrix $Y$ of size $N \times m$ is constructed as in Equation (4). Figure 4 depicts a time series represented under this assumption.

  The coordinates of the phase space can be defined by using lagged copies of a single time series [33]. The trajectory matrix corresponds to a sliding window of size $m$ that moves along the time series [12,40]. Since successive windows overlap by $m - 1$ samples, as shown in Figure 1, and $k$ takes the values given by Equation (2), the number of rows of $Y$ that can be filled with values of $x$, denoted by $N$, is given by Equation (3):

  $$N = N_t - m + 1 \tag{3}$$

  where $N_t$ is the number of data points, $m$ is the window length and $m - 1$ is the overlap.

  As explained in [33], when only the rows of $Y$ that can be filled with values of $x$ according to Equation (3) are considered, the snapshots of a given record are the vectors $(x_1, x_2, x_3, x_4)$, $(x_2, x_3, x_4, x_5)$, up to $(x_{N_t-3}, x_{N_t-2}, x_{N_t-1}, x_{N_t})$. Hence, the trajectory matrix is constructed by arranging the snapshots as row vectors, and only $N_t - m + 1$ rows can be filled with values of $x$, as in Equation (4):

  $$Y = \frac{1}{\sqrt{N}} \begin{pmatrix} x_1 & x_2 & x_3 & x_4 \\ x_2 & x_3 & x_4 & x_5 \\ \vdots & \vdots & \vdots & \vdots \\ x_{N_t-3} & x_{N_t-2} & x_{N_t-1} & x_{N_t} \end{pmatrix} \tag{4}$$

  where the scalar prefactor is the convenient normalisation (here taken as $1/\sqrt{N}$, which makes $Y^{T}Y$ an average over the $N$ snapshots).

  The constructed trajectory matrix includes the complete record of patterns that have occurred within a window of length $m$. To generalise, assume that $x_t$ is a given time series with $t = 1, 2, 3, \ldots, N_t$. The augmented or trajectory matrix is then constructed as in Equation (5):

  $$Y = [Y_1 : Y_2 : \cdots : Y_m] = (y_{ij}) = \begin{pmatrix} x_1 & x_2 & \cdots & x_m \\ x_2 & x_3 & \cdots & x_{m+1} \\ \vdots & \vdots & \ddots & \vdots \\ x_N & x_{N+1} & \cdots & x_{N_t} \end{pmatrix} \tag{5}$$

  where, up to the normalisation of Equation (4), the column vectors of this matrix are $Y_j = (x_j, x_{j+1}, \ldots, x_{j+N-1})^{T}$, the entries are $y_{ij} = x_{i+j-1}$, $1 \le i \le N$, and $1 \le j \le m$.

  The arrangement of the entries of the trajectory matrix depends on the lag, and the matrix has dimensions $N$ by $m$. The trajectory matrix and its transpose are linear maps between the spaces $\mathbb{R}^{m}$ and $\mathbb{R}^{N}$ [40]. Two important properties of the trajectory matrix are stated in [49]. The first is that both the rows and the columns of $Y$ are subseries of the original series. The second is that $Y$ has equal elements on its anti-diagonals, which makes it a Hankel matrix (i.e., all the elements along the diagonal $i + j = \text{const}$ are equal).
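The second construction method described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function name `trajectory_matrix`, the toy series and the optional $1/\sqrt{N}$ scaling are assumptions made for the example.

```python
import numpy as np

def trajectory_matrix(x, m, normalise=True):
    """Arrange the length-m snapshots of x as the rows of an N x m matrix.

    Hypothetical helper for illustration; N = N_t - m + 1 as in Equation (3).
    """
    x = np.asarray(x, dtype=float)
    n_t = x.size
    if not 1 <= m <= n_t:
        raise ValueError("window length m must satisfy 1 <= m <= len(x)")
    n_rows = n_t - m + 1
    # Every window of length m, one per row; copy to get a writable array.
    y = np.lib.stride_tricks.sliding_window_view(x, m).copy()
    if normalise:
        y /= np.sqrt(n_rows)  # assumed normalisation factor 1/sqrt(N)
    return y

# Toy series x_1, ..., x_7 = 1, ..., 7 with embedding dimension m = 4.
x = np.arange(1.0, 8.0)
Y = trajectory_matrix(x, m=4, normalise=False)
print(Y.shape)
print(Y)

# Hankel property: entry (i, j) depends only on i + j.
is_hankel = all(Y[i, j] == x[i + j]
                for i in range(Y.shape[0]) for j in range(Y.shape[1]))
print(is_hankel)
```

For this seven-point series and $m = 4$, Equation (3) gives $N = 4$ rows, the first being $(1, 2, 3, 4)$ and the last $(4, 5, 6, 7)$. Each row is a snapshot and each column is a lagged copy of the series.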

3.3.2. Covariance Matrix Construction

3.3.3. Computing the Eigenvalues and Eigenvectors

- Finding the Eigenmodes

  Finding the eigenvalues and eigenvectors, the so-called eigenmodes, rests on the fundamental question of eigenvector decomposition: for what values of $\lambda$ is the matrix $A - \lambda I$ singular? Questions of singularity can be answered with determinants [33,40]. Using determinants, the question becomes: for what values of $\lambda$ is the determinant of the matrix $A - \lambda I$ equal to zero? This is expressed in Equation (8):

  $$\det(A - \lambda I) = 0 \tag{8}$$

  This is called the characteristic equation of the matrix $A$, and the values of $\lambda$ that make $A - \lambda I$ singular are called eigenvalues [33]. The eigenvalues of $A$ can therefore be found by solving the characteristic equation.

  For each of these special values there is a corresponding set of vectors, called the eigenvectors of $A$, which satisfy Equation (9):

  $$A e = \lambda e \tag{9}$$

  Equation (9) can be written as $(A - \lambda I)e = 0$. The vector $e$ represents the set of eigenvectors corresponding to $\lambda$.

  Geometrically, the eigenvectors can be considered as the axes of a new coordinate system. Any nonzero scalar multiple of an eigenvector is also an eigenvector of $A$ [27]. In other words, all vectors of the form $\alpha e$ (where $\alpha$ is a scalar) form an eigen-subspace that is one-dimensional and spanned by $e$. Only the scale of the eigenvector changes, while its direction remains unchanged. The decomposition is simplified by the fact that $A$ is usually symmetric with real coefficients. The eigenvalues and eigenvectors can be seen as a way to express the variability of a set of data [50].

- Diagonal Form of the Covariance Matrix

  The covariance matrix $C$ is an $n$ by $n$ symmetric matrix with $n$ linearly independent eigenvectors $e_k$, $k = 1, \ldots, n$. Hence a matrix $E$, whose columns are the eigenvectors of $C$, can be constructed so that it satisfies Equation (10):

  $$E^{-1} C E = \Lambda \tag{10}$$

  The product on the left-hand side of Equation (10) is called the diagonal form of $C$, and $\Lambda$ is a diagonal matrix whose nonnegative entries are the eigenvalues of $C$.

  The eigenvectors of $C$ must be linearly independent for $C$ to be diagonalisable in this way. The matrix $E$ is not unique, because each eigenvector can be multiplied by a nonzero constant and remain an eigenvector [33].

- Spectral Decomposition

  Assume that $C$ is a real symmetric matrix, so that $C = C^{T}$. In this case, every eigenvalue of $C$ is real, and if all eigenvalues are distinct, their corresponding eigenvectors are orthogonal. The real, symmetric matrix $C$ can therefore be diagonalised by an orthogonal matrix $E$ whose columns are the orthonormal eigenvectors of $C$ [44]. This is the principal theorem needed here: a real symmetric matrix $C$ is diagonalised by a matrix $E$ with orthonormal columns.

  Since $E^{-1} = E^{T}$ and $E^{T}E = I$, Equation (10) can be rewritten as:

  $$E^{T} C E = \Lambda \tag{11}$$

  The matrices $\Lambda$ and $E$ can be written as:

  $$\Lambda = \begin{pmatrix} \lambda_1 & 0 & \cdots & 0 \\ 0 & \lambda_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \lambda_n \end{pmatrix}, \qquad E = [e_1 : e_2 : \cdots : e_n] \tag{12}$$

  The matrix $\Lambda$ is symmetric, with entries $\lambda_k$ along the leading diagonal for $k = 1, \ldots, n$, and $e_k$ is the corresponding normalised column eigenvector for $\lambda_k$. The eigenvector matrix consists of a set of column vectors with entries $e_k^{j}$ that represent the $j$th component of the $k$th eigenvector. Because the matrices are kept square, $1 \le j \le n$ and $1 \le k \le n$. The diagonal matrix $\Lambda$ consists of the ordered values $\lambda_1 \ge \lambda_2 \ge \cdots \ge \lambda_n \ge 0$ [21,22,27].
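The diagonal form in Equation (10) and the orthogonality of $E$ can be checked numerically. The sketch below is illustrative only: it uses a synthetic noisy sinusoid, a window length of 8, and assumes the lag-covariance matrix is formed as $C = Y^{T}Y$ from a $1/\sqrt{N}$-normalised trajectory matrix, since the definition of $C$ is not reproduced in this section.

```python
import numpy as np

# Synthetic test series (assumption for illustration): noisy sinusoid.
rng = np.random.default_rng(0)
x = np.sin(0.2 * np.arange(200)) + 0.3 * rng.standard_normal(200)

m = 8                                   # window length (illustrative choice)
N = x.size - m + 1
Y = np.lib.stride_tricks.sliding_window_view(x, m) / np.sqrt(N)
C = Y.T @ Y                             # assumed m x m covariance matrix

# eigh is intended for real symmetric matrices; it returns real eigenvalues
# in ascending order and orthonormal eigenvectors as the columns of E.
eigvals, E = np.linalg.eigh(C)
eigvals, E = eigvals[::-1], E[:, ::-1]  # reorder so lambda_1 >= lambda_2 >= ...

Lam = E.T @ C @ E                       # diagonal form of C

print(np.allclose(E.T @ E, np.eye(m)))          # E is orthogonal
print(np.allclose(Lam, np.diag(eigvals)))       # E^T C E is diagonal

# A scaled eigenvector is still an eigenvector with the same eigenvalue.
e1 = E[:, 0]
scaled_ok = np.allclose(C @ (2.5 * e1), eigvals[0] * (2.5 * e1))
print(scaled_ok)
```

Using `numpy.linalg.eigh` rather than a general eigen-solver exploits the symmetry of $C$ and guarantees real eigenvalues, which matches the assumptions of the spectral decomposition above.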

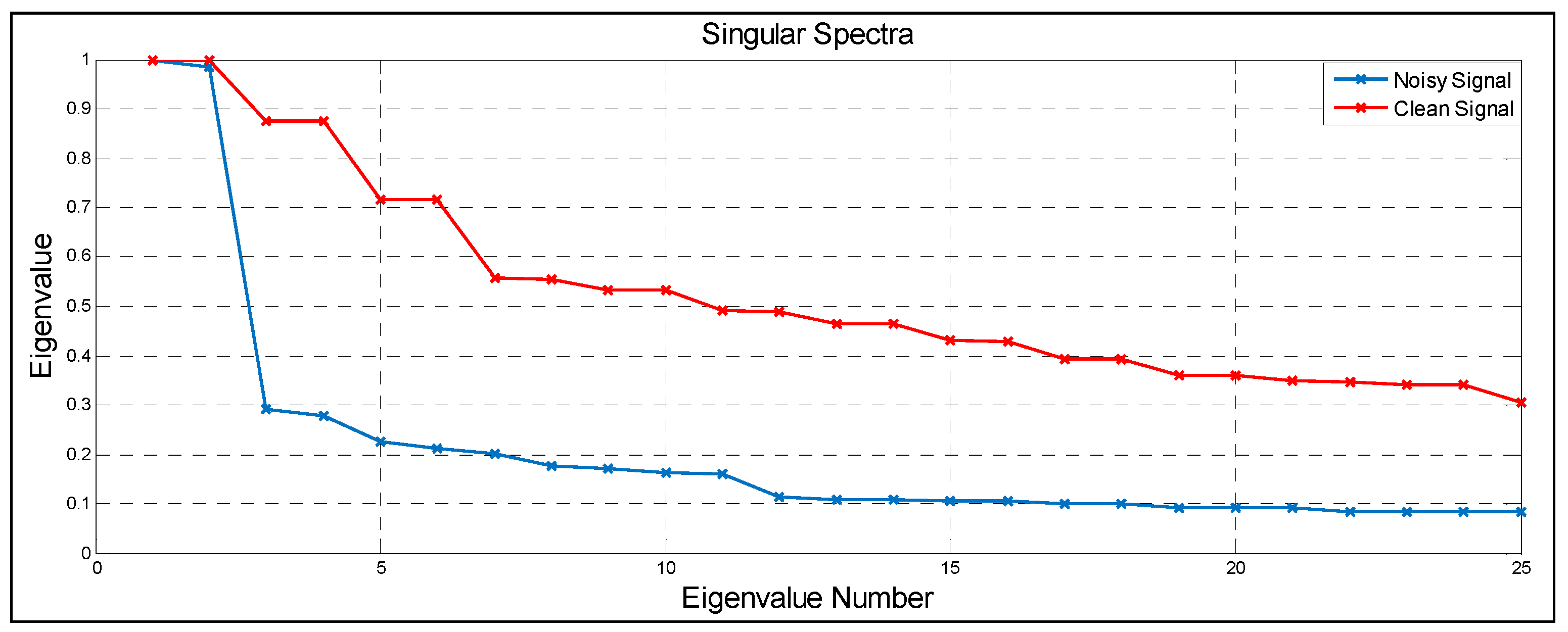

- The Singular Spectrum

  The square roots of the eigenvalues of the matrix $C$ are called the singular values of the trajectory matrix [27,28,33]. These ordered singular values are referred to collectively as the singular spectrum. From the singular value decomposition (SVD), the trajectory matrix can be written as:

  $$Y = U S E^{T} \tag{13}$$

  where the columns of $U$ and $E$ are the left and right singular vectors of $Y$, and $S$ is a diagonal matrix of singular values.

  The singular spectrum of $Y$ thus consists of the square roots of the eigenvalues of $C$, and the singular vectors are identical to the eigenvectors given in the matrix $E$ [33]. The decomposition of $C$ can also be obtained by substituting Equation (13) into the form $C = Y^{T}Y$, which yields $C = E S U^{T} U S E^{T}$. Since $U^{T}U = I$, we find:

  $$C = E S^{2} E^{T} \tag{14}$$

  Because the decomposition is unique, it follows that $\Lambda = S^{2}$. The right singular vectors of $Y$ are the eigenvectors of $C$, and the left singular vectors of $Y$ are the eigenvectors of the matrix $YY^{T}$ [27,40]. Importantly, the number of eigenvalues is equal to the window length $m$, and so is the number of associated eigenvectors contained in $E$ [33].
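The relation $\Lambda = S^{2}$ between the singular values of $Y$ and the eigenvalues of $C$ can be verified directly. The following sketch again assumes $C = Y^{T}Y$ with a $1/\sqrt{N}$-normalised trajectory matrix; the random test series and the window length of 6 are arbitrary choices for illustration.

```python
import numpy as np

# Arbitrary test series and window length (assumptions for illustration).
rng = np.random.default_rng(1)
x = rng.standard_normal(300)
m = 6
N = x.size - m + 1

Y = np.lib.stride_tricks.sliding_window_view(x, m) / np.sqrt(N)
C = Y.T @ Y                                   # assumed covariance matrix

# Thin SVD: Y = U @ diag(s) @ Et, with s sorted in descending order.
U, s, Et = np.linalg.svd(Y, full_matrices=False)

# Eigenvalues of C, reordered to descending for comparison.
eigvals = np.linalg.eigvalsh(C)[::-1]

print(s.size == m)                                  # m singular values
print(np.allclose(s**2, eigvals))                   # Lambda = S^2
print(np.allclose(C, Et.T @ np.diag(s**2) @ Et))    # C = E S^2 E^T
```

Computing the SVD of $Y$ directly avoids forming $C$ explicitly, which may be numerically preferable, while the eigendecomposition of the small $m \times m$ matrix $C$ can be cheaper when $N$ is much larger than $m$.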

3.3.4. Principal Components

3.3.5. Reconstruction of the Time Series

4. The SSA Algorithm

4.1. Description of the SSA Algorithm

4.1.1. Signal Decomposition

4.1.2. Computing the Diagonal Matrix C

4.1.3. Performing SVD of Matrix C

4.1.4. Computing the Principal Components and Performing Grouping

4.1.5. Reconstructing the One-Dimensional Series

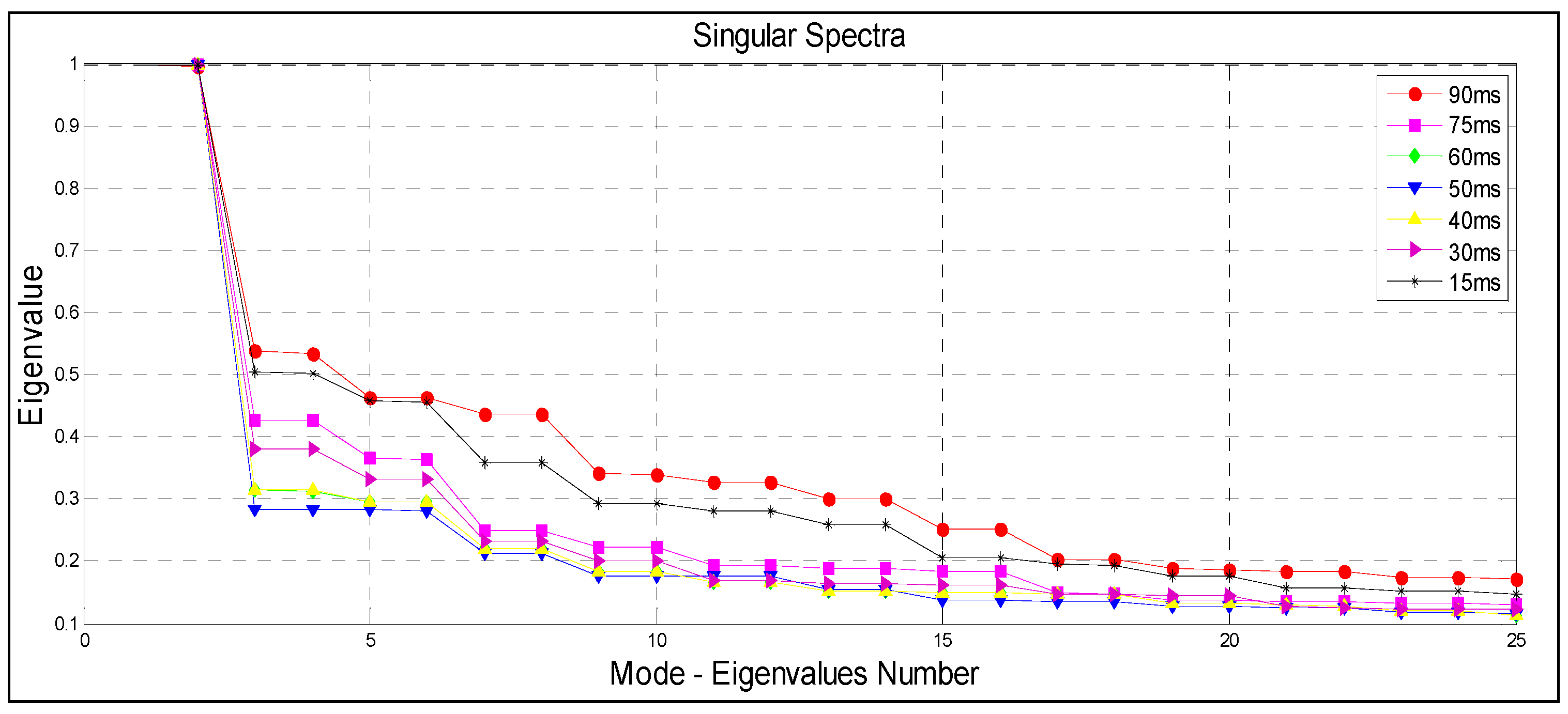

4.2. Parameters of the SSA Algorithm

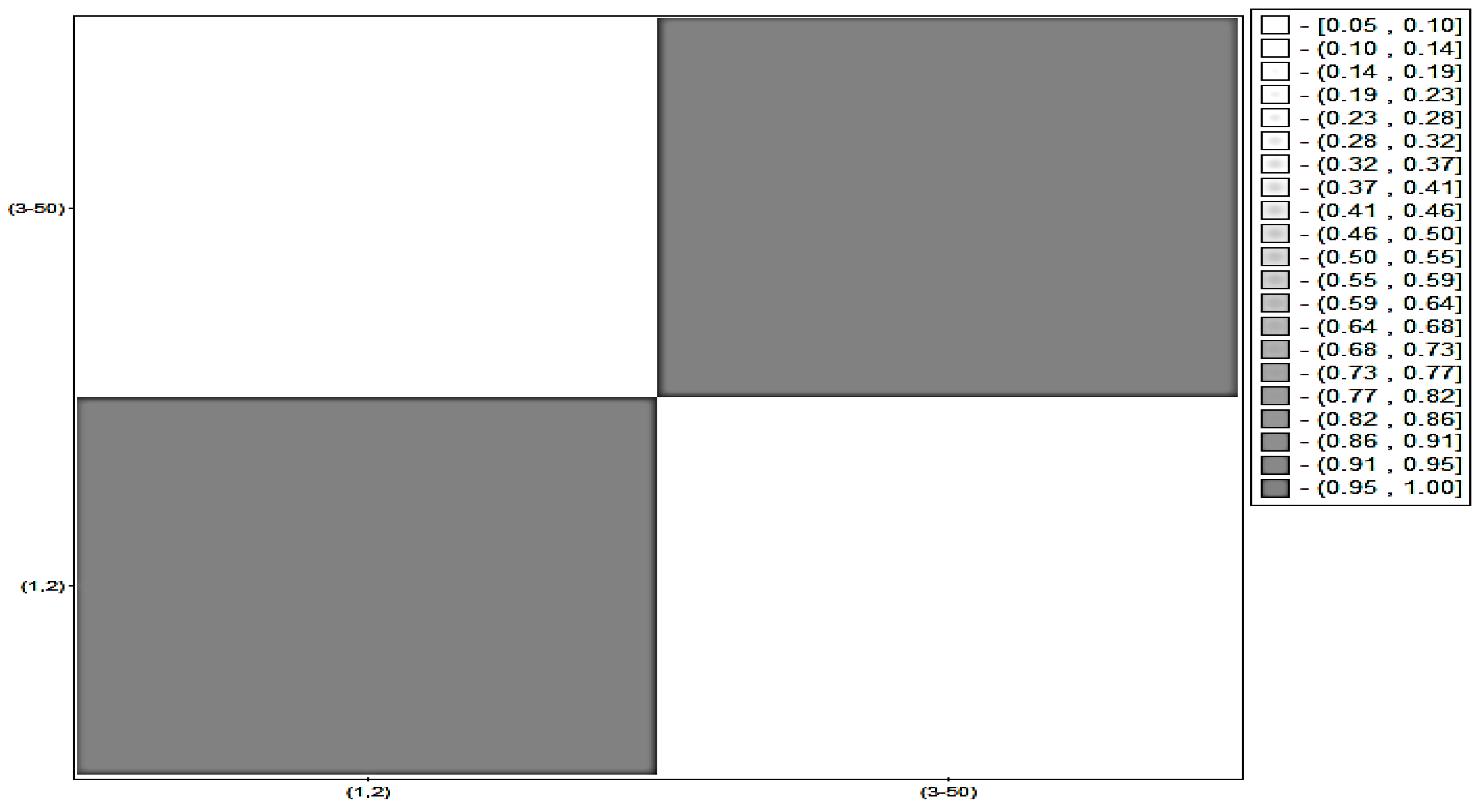

4.3. Grouping and Separability

5. Experimental Procedure

5.1. Description of Experiments

5.2. Dataset

5.3. Window Length Optimisation

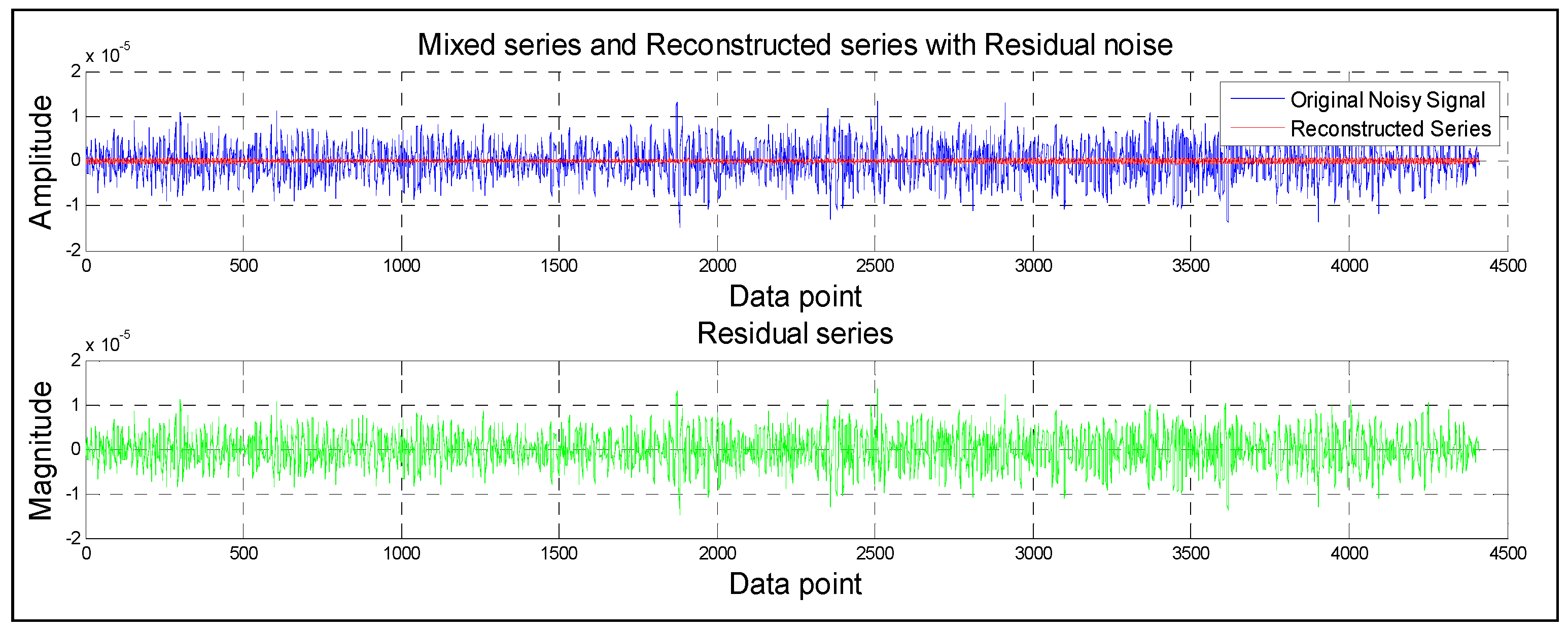

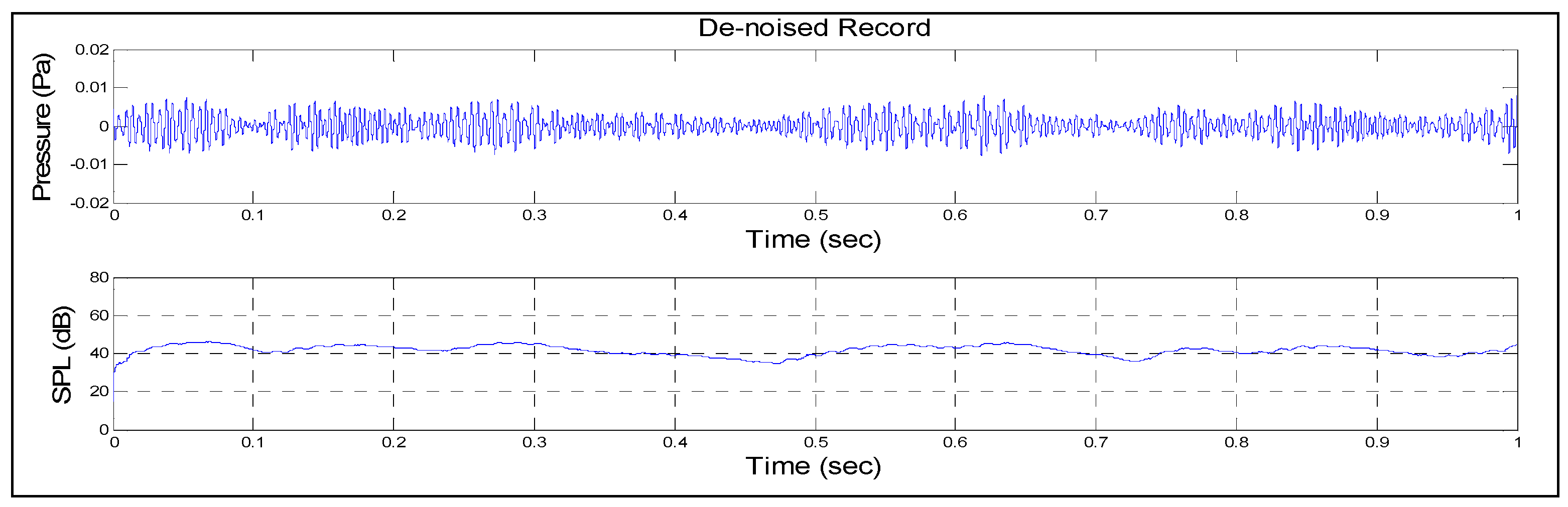

6. Results

7. Discussion and Conclusions

Author Contributions

Conflicts of Interest

References

1. Glowacz, A.; Glowacz, W.; Glowacz, Z.; Kozik, J. Early fault diagnosis of bearing and stator faults of the single-phase induction motor using acoustic signals. Measurement 2018, 113, 1–9.

2. Glowacz, A.; Glowacz, Z. Diagnosis of stator faults of the single-phase induction motor using acoustic signals. Appl. Acoust. 2017, 117, 20–27.

3. Jena, D.P.; Panigrahi, S.N. Automatic gear and bearing fault localization using vibration and acoustic signals. Appl. Acoust. 2015, 98, 20–33.

4. O’Brien, R.J.; Fontana, J.M.; Ponso, N.; Molisani, L. A pattern recognition system based on acoustic signals for fault detection on composite materials. Eur. J. Mech. A Solids 2017, 64, 1–10.

5. Głowacz, A.; Głowacz, Z. Recognition of rotor damages in a DC motor using acoustic signals. Bull. Polish Acad. Sci. Tech. Sci. 2017, 65, 187–194.

6. Glowacz, A. Diagnostics of Rotor Damages of Three-Phase Induction Motors Using Acoustic Signals and SMOFS-20-EXPANDED. Arch. Acoust. 2016, 41, 507–515.

7. Glowacz, A. Fault diagnostics of acoustic signals of loaded synchronous motor using SMOFS-25-EXPANDED and selected classifiers. Teh. Vjesn. 2016, 23, 1365–1372.

8. Caesarendra, W.; Kosasih, B.; Tieu, A.K.; Zhu, H.; Moodie, C.A.S.; Zhu, Q. Acoustic emission-based condition monitoring methods: Review and application for low speed slew bearing. Mech. Syst. Signal Process. 2016, 72–73, 134–159.

9. Mika, D.; Józwik, J. Normative Measurements of Noise at CNC Machines Work Stations. Adv. Sci. Technol. Res. J. 2016, 10, 138–143.

10. Józwik, J. Identification and Monitoring of Noise Sources of CNC Machine Tools by Acoustic Holography Methods. Adv. Sci. Technol. Res. J. 2016, 10, 127–137.

11. Fukuda, K. Noise reduction approach for decision tree construction: A case study of knowledge discovery on climate and air pollution. In Proceedings of the 2007 IEEE Symposium on Computational Intelligence and Data Mining, Honolulu, HI, USA, 1 March–5 April 2007.

12. Yang, B.; Dong, Y.; Yu, C.; Hou, Z. Singular Spectrum Analysis Window Length Selection in Processing Capacitive Captured Biopotential Signals. IEEE Sens. J. 2016, 16, 7183–7193.

13. Ma, L.; Milner, B.; Smith, D. Acoustic environment classification. ACM Trans. Speech Lang. Process. 2006, 3, 1–22.

14. Chu, S.; Narayanan, S.; Kuo, C. Environmental sound recognition using MP-based features. In Proceedings of the 2008 IEEE International Conference on Acoustics, Speech and Signal Processing, Las Vegas, NV, USA, 31 March–4 April 2008.

15. Nemer, E.; Leblanc, W. Single-microphone wind noise reduction by adaptive postfiltering. In Proceedings of the Applications of Signal Processing to Audio and Acoustics, New Paltz, NY, USA, 18–21 October 2009; pp. 177–180.

16. Luzzi, S.; Natale, R.; Mariconte, R. Acoustics for smart cities. In Proceedings of the Annual Conference on Acoustics (AIA-DAGA), Merano, Italy, 18–21 March 2013.

17. Slabbekoorn, H. Songs of the city: Noise-dependent spectral plasticity in the acoustic phenotype of urban birds. Anim. Behav. 2013, 85, 1089–1099.

18. Schmidt, M.N.; Larsen, J.; Hsiao, F.-T. Wind Noise Reduction using Non-Negative Sparse Coding. In Proceedings of the 2007 IEEE Workshop on Machine Learning for Signal Processing, Thessaloniki, Greece, 27–29 August 2007; pp. 431–436.

19. Schoellhamer, D.H. Singular spectrum analysis for time series with missing data. Geophys. Res. Lett. 2001, 28, 3187–3190.

20. King, B.; Atlas, L. Coherent modulation comb filtering for enhancing speech in wind noise. In Proceedings of the 2008 International Workshop on Acoustic Echo and Noise Control (IWAENC 2008), Seattle, WA, USA, 14–17 September 2008.

21. Harmouche, J.; Fourer, D.; Auger, F.; Borgnat, P.; Flandrin, P. The Sliding Singular Spectrum Analysis: A Data-Driven Non-Stationary Signal Decomposition Tool. IEEE Trans. Signal Process. 2017, 66, 251–263.

22. Eldwaik, O.; Li, F.F. Mitigating wind noise in outdoor microphone signals using a singular spectral subspace method. In Proceedings of the Seventh International Conference on Innovative Computing Technology (INTECH 2017), Luton, UK, 16–18 August 2017.

23. Hassani, H. A Brief Introduction to Singular Spectrum Analysis. 2010. Available online: https://www.researchgate.net/publication/267723014_A_Brief_Introduction_to_Singular_Spectrum_Analysis (accessed on 25 January 2018).

24. Jiang, J.; Xie, H. Denoising Nonlinear Time Series Using Singular Spectrum Analysis and Fuzzy Entropy. Chin. Phys. Lett. 2016, 33.

25. Qiao, T.; Ren, J.; Wang, Z.; Zabalza, J.; Sun, M.; Zhao, H.; Li, S.; Benediktsson, J.A.; Dai, Q.; Marshall, S. Effective Denoising and Classification of Hyperspectral Images Using Curvelet Transform and Singular Spectrum Analysis. IEEE Trans. Geosci. Remote Sens. 2017, 55, 119–133.

26. Hassani, H.; Zokaei, M.; von Rosen, D.; Amiri, S. Does noise reduction matter for curve fitting in growth curve models? Comput. Methods 2009, 96, 173–181.

27. Golyandina, N.; Shlemov, A. Semi-nonparametric singular spectrum analysis with projection. Stat. Interface 2015, 10, 47–57.

28. Hassani, H.; Soofi, A.; Zhigljavsky, A. Predicting daily exchange rate with singular spectrum analysis. Nonlinear Anal. Real World Appl. 2010, 11, 2023–2034.

29. Xu, X.; Zhao, M.; Lin, J. Detecting weak position fluctuations from encoder signal using singular spectrum analysis. ISA Trans. 2017, 71, 440–447.

30. García Plaza, E.; Núñez López, P.J. Surface roughness monitoring by singular spectrum analysis of vibration signals. Mech. Syst. Signal Process. 2017, 84, 516–530.

31. Claessen, D.; Groth, A. A Beginner’s Guide to SSA. 2002. Available online: http://environnement.ens.fr/IMG/file/DavidPDF/SSA_beginners_guide_v9.pdf (accessed on 25 January 2018).

32. Moskvina, V.; Zhigljavsky, A. An Algorithm Based on Singular Spectrum Analysis for Change-Point Detection. Commun. Stat. Simul. Comput. 2003, 32, 319–352.

33. Elsner, J.B.; Tsonis, A.A. Singular Spectrum Analysis: A New Tool in Time Series Analysis; Springer: New York, NY, USA, 2013.

34. Ghodsi, M.; Hassani, H.; Sanei, S.; Hicks, Y. The use of noise information for detection of temporomandibular disorder. Biomed. Signal Process. 2009, 4, 79–85.

35. Hu, H.; Guo, S.; Liu, R.; Wang, P. An adaptive singular spectrum analysis method for extracting brain rhythms of electroencephalography. PeerJ 2017, 5, e3474.

36. Lakshmi, K.; Rao, A.R.M.; Gopalakrishnan, N. Singular spectrum analysis combined with ARMAX model for structural damage detection. Struct. Control Heal. Monit. 2017, 24, 1–21.

37. Alonso, F.; del Castillo, J.; Pintado, P. Application of singular spectrum analysis to the smoothing of raw kinematic signals. J. Biomech. 2005, 38, 1085–1092.

38. Traore, O.I.; Pantera, L.; Favretto-Cristini, N.; Cristini, P.; Viguier-Pla, S.; Vieu, P. Structure analysis and denoising using Singular Spectrum Analysis: Application to acoustic emission signals from nuclear safety experiments. Measurement 2017, 104, 78–88.

39. Hassani, H.; Heravi, S.; Zhigljavsky, A. Forecasting European industrial production with singular spectrum analysis. Int. J. Forecast. 2009, 25, 103–118.

40. Chu, M.T.; Lin, M.M.; Wang, L. A study of singular spectrum analysis with global optimization techniques. J. Glob. Optim. 2013, 60, 551–574.

41. Golyandina, N.; Nekrutkin, V.; Zhigljavsky, A. Analysis of Time Series Structure: SSA and Related Techniques; CRC Press: Boca Raton, FL, USA, 2001.

42. Launonen, I.; Holmström, L. Multivariate posterior singular spectrum analysis. Stat. Methods Appl. 2017, 26, 361–382.

43. Golyandina, N.E.; Lomtev, M.A. Improvement of separability of time series in singular spectrum analysis using the method of independent component analysis. Vestn. St. Petersbg Univ. Math. 2016, 49, 9–17.

44. Hassani, H. Singular spectrum analysis: Methodology and comparison. J. Data Sci. 2007, 5, 239–257.

45. Clifford, G.D. Singular Value Decomposition & Independent Component Analysis for Blind Source Separation. Biomed. Signal Image Process. 2005, 44, 489–499.

46. Ghil, M.; Allen, M.R.; Dettinger, M.D.; Ide, K.; Kondrashov, D.; Mann, M.E.; Robertson, A.W.; Saunders, A.; Tian, Y.; Varadi, F.; et al. Advanced spectral methods for climatic time series. Rev. Geophys. 2002, 40, 1003.

47. Patterson, K.; Hassani, H.; Heravi, S. Multivariate singular spectrum analysis for forecasting revisions to real-time data. J. Appl. 2011, 38.

48. Maddirala, A.; Shaik, R.A. Removal of EOG Artifacts from Single Channel EEG Signals using Combined Singular Spectrum Analysis and Adaptive Noise Canceler. IEEE Sens. J. 2016, 16, 8279–8287.

49. Golyandina, N.; Korobeynikov, A. Basic singular spectrum analysis and forecasting with R. Comput. Stat. Data Anal. 2014, 71, 934–954.

50. Vautard, R.; Ghil, M. Singular spectrum analysis in nonlinear dynamics, with applications to paleoclimatic time series. Physica D 1989, 35, 395–424.

51. Moore, J.; Glaciology, A. Singular spectrum analysis and envelope detection: Methods of enhancing the utility of ground-penetrating radar data. J. Glaciol. 2006, 52, 159–163.

52. Vautard, R.; Ghil, M. Interdecadal oscillations and the warming trend in global temperature time series. Nature 1991, 350, 324–327.

53. Alexandrov, T. A Method of Trend Extraction Using Singular Spectrum Analysis. 2009. arXiv.org e-print archive. Available online: https://arxiv.org/abs/0804.3367 (accessed on 25 January 2018).

54. Rodrigues, P.C.; Mahmoudvand, R. The benefits of multivariate singular spectrum analysis over the univariate version. J. Frankl. Inst. 2017, 355, 544–564.

55. Rukhin, A.L. Analysis of Time Series Structure SSA and Related Techniques. Technometrics 2002, 44, 290.

56. Hassani, H.; Mahmoudvand, R. Multivariate Singular Spectrum Analysis: A General View and New Vector Forecasting Approach. Int. J. Energy Stat. 2013, 1, 55–83.

57. Hansen, B.; Noguchi, K. Improved short-term point and interval forecasts of the daily maximum tropospheric ozone levels via singular spectrum analysis. Environmetrics 2017, 28, e2479.

58. Golyandina, N.; Shlemov, A. Variations of singular spectrum analysis for separability improvement: Non-orthogonal decompositions of time series. Stat. Interface 2015, 8, 277–294.

59. Pan, X.; Sagan, H. Digital image clustering algorithm based on multi-agent center optimization. J. Digit. Inf. Manag. 2016, 14, 8–14.

60. Secco, E.L.; Deters, C.; Wurdemann, H.A.; Lam, H.K.; Seneviratne, L.; Althoefer, K. A K-Nearest Clamping Force Classifier for Bolt Tightening of Wind Turbine Hubs. J. Intell. Comput. 2016, 7, 18–30.

| Objective measure for evaluating the method | Before | After | Difference |
|---|---|---|---|
| SNR (dB) | 0 | 9.47 | 9.47 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Eldwaik, O.; Li, F.F. Mitigating Wind Induced Noise in Outdoor Microphone Signals Using a Singular Spectral Subspace Method. Technologies 2018, 6, 19. https://doi.org/10.3390/technologies6010019
