1. Introduction

Modern artificial intelligence (AI) systems are increasingly being integrated into nearly every aspect of human activity, from medicine and autonomous vehicles to finance and industrial automation [1, 2, 3]. Despite remarkable advances achieved through machine learning (ML) and deep learning (DL), all AI systems remain inherently prone to errors. These errors represent a critical obstacle to the safe, trustworthy, and effective deployment of AI in real-world contexts where accuracy, robustness, and reliability are paramount [4, 5]. Understanding the sources of these errors and developing mechanisms to mitigate them is thus a major focus of current AI research.

In real-world machine learning practice, the classical assumption of independent and identically distributed (IID) data is frequently violated [6, 7, 8, 9]. According to this assumption, data samples are drawn independently from a fixed probability distribution over the joint space of inputs and outputs. However, in realistic environments, this assumption is overly restrictive and rarely holds. Data evolve over time (through aging, sensor degradation, or changing external conditions), and novel data patterns often emerge that differ from those seen during training [10, 11]. Such deviations result in distribution shifts, concept drift, and domain adaptation challenges [12, 13].

Recent research has emphasized that the hypothesis of a stationary distribution is an extremely strong and often unrealistic simplification [9]. To address this, auxiliary concepts like covariate shift and dataset drift have been introduced, relaxing the IID assumption and enabling adaptive learning mechanisms [8, 14]. Nonetheless, the IID framework remains central to statistical learning theory and continues to shape the mathematical underpinnings of modern ML systems [15].

Another fundamental source of error in AI systems is data uncertainty, arising from the fact that training datasets cannot encompass the full diversity of possible real-world situations [16, 17]. Two primary forms of uncertainty are commonly recognized: aleatoric uncertainty, stemming from inherent noise or random measurement and labeling errors in the data, and epistemic uncertainty, reflecting the model’s lack of knowledge or limited generalization beyond its training set [16, 18]. Furthermore, selection bias, which occurs when training data do not accurately represent the target environment, leads to systematic performance degradation in deployment [19, 20].

A related and particularly insidious issue is shortcut learning (or spurious correlation learning) [21]. In this case, models exploit superficial patterns in the training data that happen to correlate with the target labels, enabling them to achieve high test accuracy without capturing the true underlying causal relationships [22]. As a result, such systems perform well on benchmarks but fail catastrophically when faced with even minor changes in input distribution, as shown in studies on dataset bias, adversarial shifts, and out-of-distribution (OOD) generalization [23].

In many cases, AI system errors are opaque and difficult to interpret. The inexplicability of errors complicates both diagnosis and correction. These failures may arise from software faults, design flaws, unexpected human–AI interactions, or adversarial attacks. In particular, research on adversarial examples has shown that small, seemingly insignificant perturbations to input data can cause dramatic and unpredictable failures in even highly accurate deep models [24, 25, 26]. This fragility highlights fundamental weaknesses in how neural networks represent information and generalize to unseen data [27].

Error correction has long been a core principle of learning algorithms. Classical perceptron models rely on error-driven weight updates, and their multilayer successors rely on backpropagation to iteratively minimize errors, forming the foundation of modern deep learning [2, 28]. In reinforcement learning (RL), the concept of error correction extends to the continuous improvement of an agent’s policy through interaction with its environment [29]. However, in model-based RL, discrepancies inevitably arise between the learned model of the environment and the true dynamics of the real world, leading to suboptimal or unsafe behavior [30, 31].

A common response to AI errors is systematic retraining of the model to incorporate newly observed cases. However, this approach suffers from several critical drawbacks:

Preserving existing skills requires retraining on the full dataset.

Retraining demands substantial computational resources and time.

New errors may be introduced during retraining.

Retaining prior knowledge (avoiding catastrophic forgetting) is not guaranteed.

These limitations make retraining unsuitable for real-time or safety-critical error correction in dynamic environments [32]. An alternative approach is the use of AI correctors, external modules or algorithms that complement existing AI systems by diagnosing errors and producing corrected outputs [33, 34, 35]. Correctors have key advantages: they are flexible, modular, and reversible, allowing the base AI system to remain unchanged and easily restorable if necessary. Recent work has explored correctors as a foundation for more resilient, interpretable, and self-healing AI architectures capable of maintaining reliability under non-stationary and uncertain conditions [36].

Most traditional error correction methods rely on iterative retraining on large datasets to avoid introducing new errors. However, this process is computationally intensive and unsuitable for real-time systems. In contrast, the corrector method performs non-iterative correction using one or a few labeled examples and is particularly effective in high-dimensional spaces [34, 36, 37].

An AI corrector requires a small labeled error set, used to train a binary classifier that distinguishes between normal and erroneous states. This reduces the problem to learning with few examples, where the error class is sparsely represented [38, 39]. Such learning challenges are addressed by one-shot and few-shot learning, which aim to generalize from minimal data [38]. Modern approaches employ meta-learning and transfer learning, where models pre-trained on related tasks acquire generalizable meta-skills that enable rapid adaptation to new conditions [40, 41, 42].

The success of learning from few examples often relies on either dimensionality reduction or the blessing of dimensionality [37, 43, 44, 45].

Based on these principles, Gorban et al. [36] proposed the concept of AI correctors, built around a binary classifier that identifies high-risk states without retraining the base system. Training uses two classes: normal operation and erroneous states.

The correction algorithm operates as follows. The corrector first collects the input data, internal signals, and output values from the base classifier. Using these signals, it applies stochastic partitioning to determine whether the current situation corresponds to normal operation or exhibits a high probability of error. When a potentially erroneous state is detected, the corrector invokes an auxiliary classifier or regressor to generate an adjusted output, thereby compensating for the identified error. As new error types arise, the corrector can be rapidly updated through one-shot learning without full system retraining. For complex applications, a multi-corrector architecture may be employed, where several specialized correctors handle different error categories under the coordination of a central dispatcher.

Our work presents a novel algorithm for post-processing and error correction in object detectors constructed using the Viola–Jones framework enhanced with a modified census transform. The proposed method improves robustness and accuracy under data-limited conditions through image partitioning with a fixed-aspect-ratio sliding window and a census transform that compares each pixel intensity with the mean value within a rectangular neighborhood. Training samples for false-negative and false-positive correctors are selected using dual Intersection-over-Union (IoU) thresholds and probabilistic sampling. The corrector models are trained within a one- and few-shot learning paradigm based on high-dimensional separability principles and features extracted from the detector’s cascade stages. Decision boundaries are optimized using Fisher’s criterion with adaptive thresholding to ensure zero false acceptance. Experimental results demonstrate that the proposed correction scheme significantly improves detection accuracy by effectively compensating for classifier errors, particularly in scenarios with scarce training data.

The main contributions of this work are (i) a corrector architecture for modified Census-based Viola–Jones detectors that operates in a high-dimensional feature space and can be trained in a one-/few-shot regime; (ii) a principled feature construction and clustering scheme that exploits high-dimensional separability to build cluster-wise Fisher correctors with zero false acceptance on the correction set; (iii) an experimental study on two challenging railway datasets demonstrating that the proposed correctors significantly improve Precision and/or Recall under severe data limitations, with minimal computational overhead.

2. Detector Correction for Object Detection in Images

A biomorphic system for semantic analysis was developed that uses individual cascade detectors for each concept in its vocabulary, employing strong classifiers [57] based on non-local binary patterns [58]. The detectors are organized sequentially using a multi-stage detection technique, in which each detector uses a cascade of connected strong classifiers.

2.1. Object Detection Using a Cascade Detector

The cascade detector algorithm [57] has proven to be an effective method for detecting objects (e.g., faces) in images by using cascades of weak classifiers trained on a set of simple features. The algorithm implements the idea of a sliding window, where each image fragment is classified as containing an object (1) or not (0). Feature cascades are organized as a truncated binary tree, with the response (0) of any strong classifier interpreted as “not an object,” and “an object” otherwise. The main stages of the detector algorithm can be described as follows.

Detector Feature Space. The classical version of the detector [57] uses Haar features, which are calculated from differences in the sums of intensities over rectangular regions. The developed algorithm uses modified Census features instead. A weak classifier is obtained from this encoding: each binary pattern code is assigned a weak classifier response, determined by comparing the distributions of responses on the training database.

In the classical Viola–Jones detector [57], Haar-like features computed from rectangular intensity differences are used as inputs to weak classifiers. In our implementation we replace Haar features with modified Census features inspired by [58]. For each pixel, the standard Census transform encodes a local neighborhood by comparing each neighbor to the center pixel and forming a binary pattern. In the modified version, we compare each neighbor to the mean intensity within a rectangular neighborhood rather than to the center pixel. This change makes the descriptor less sensitive to local noise at a single pixel and to small illumination fluctuations, while preserving the strong invariance of Census-like encodings to monotonic intensity changes. The resulting binary patterns are then aggregated over predefined regions and mapped to weak classifier responses {0, 1} based on their empirical distributions on the training set.
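To make the difference from the standard Census transform concrete, the following is a minimal sketch of a mean-referenced code. It assumes a 3×3 neighborhood and a 9-bit code in row-major order; the neighborhood size, the bit order, and the function names `modified_census_code` and `modified_census_transform` are illustrative assumptions, not details taken from the implementation described here.

```python
def modified_census_code(patch):
    """Return the 9-bit code of a 3x3 patch (a list of three rows).

    Assumption: each pixel is compared with the mean of the whole patch,
    and the bits are packed in row-major order (top-left is the highest bit).
    """
    pixels = [value for row in patch for value in row]
    if len(pixels) != 9:
        raise ValueError("expected a 3x3 patch")
    mean = sum(pixels) / 9.0
    code = 0
    for value in pixels:
        # The bit is 1 when the pixel is brighter than the neighborhood mean.
        code = (code << 1) | (1 if value > mean else 0)
    return code


def modified_census_transform(image):
    """Compute the code for every interior pixel of a 2-D list of intensities."""
    height, width = len(image), len(image[0])
    codes = []
    for y in range(1, height - 1):
        row_codes = []
        for x in range(1, width - 1):
            patch = [image[y + dy][x - 1:x + 2] for dy in (-1, 0, 1)]
            row_codes.append(modified_census_code(patch))
        codes.append(row_codes)
    return codes


bright_center = [[10, 10, 10], [10, 100, 10], [10, 10, 10]]
# An increasing affine change of intensity (2 * v + 5) leaves the code unchanged.
rescaled = [[2 * v + 5 for v in row] for row in bright_center]
print(modified_census_code(bright_center), modified_census_code(rescaled))
```

Because every comparison is made against the neighborhood mean, a single noisy pixel shifts the reference only by one ninth of its deviation, which is the robustness argument made in the paragraph above.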

Cascade Structure of the Detector. The cascade consists of a sequence of stages, where each stage quickly discards most of the negatives, leaving for further processing only those fragments that may contain the target object. If at least one stage rejects a fragment, further calculations for it are not performed. The “strong classifier” is created using the AdaBoost algorithm [59], which combines features and weak classifiers. Its binary output, 0 or 1, is determined during training by minimizing recognition errors on the training database.
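The early-rejection logic can be sketched as follows. This is a simplified illustration, assuming that each stage is a callable returning 0 or 1 and that a strong classifier is a thresholded weighted vote of weak classifiers in the AdaBoost style; the names `make_strong_classifier` and `run_cascade` and the toy weights are hypothetical.

```python
def make_strong_classifier(weak_classifiers, alphas, threshold):
    """Build a strong classifier as a thresholded weighted vote of weak ones."""
    def strong(fragment):
        score = sum(a * h(fragment) for h, a in zip(weak_classifiers, alphas))
        return 1 if score >= threshold else 0
    return strong


def run_cascade(stages, fragment):
    """Return (decision, number_of_stages_evaluated) for one fragment."""
    for index, stage in enumerate(stages, start=1):
        if stage(fragment) == 0:
            # Rejected: the remaining stages are never evaluated.
            return 0, index
    return 1, len(stages)


# Toy example: a "fragment" is a single number, and the stages get stricter.
stage_1 = make_strong_classifier([lambda f: int(f > 0)], [1.0], 0.5)
stage_2 = make_strong_classifier(
    [lambda f: int(f > 10), lambda f: int(f > 15)], [0.6, 0.4], 0.5
)
stages = [stage_1, stage_2]

print(run_cascade(stages, -5))   # rejected by the first stage
print(run_cascade(stages, 5))    # rejected by the second stage
print(run_cascade(stages, 20))   # accepted by all stages
```

The second return value shows why the cascade is fast: most background fragments stop after one stage, so the cost of later stages is paid only for a small share of candidates.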

Image Scanning. The detector uses a sliding window that moves across the image with specified horizontal and vertical steps. The image dimensions, the window dimensions, and the two steps together determine the coordinates of the upper-left corner of each window.

For each window, features are calculated, and a binary classification is performed using a cascade architecture of strong classifiers, where “1” means object detection, and “0” indicates no object. This procedure allows the image to be divided into multiple overlapping or non-overlapping fragments, each of which is used for classification.
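The scanning grid described above can be enumerated with a few lines of code. The sketch below assumes that windows start at the image origin and must fit entirely inside the image; the function name `window_corners` and all parameter names are illustrative and do not come from the original notation.

```python
def window_corners(image_w, image_h, win_w, win_h, step_x, step_y):
    """List the upper-left corners (x, y) of all windows that fit in the image."""
    if step_x <= 0 or step_y <= 0:
        raise ValueError("steps must be positive")
    return [
        (x, y)
        for y in range(0, image_h - win_h + 1, step_y)
        for x in range(0, image_w - win_w + 1, step_x)
    ]


corners = window_corners(image_w=10, image_h=6, win_w=4, win_h=4,
                         step_x=3, step_y=2)
print(corners)
```

Windows overlap whenever a step is smaller than the corresponding window side, and become non-overlapping when the steps equal the window dimensions.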

Scaling and Aspect Ratio. To account for objects of different sizes, the detector is applied at multiple scales, and the aspect ratio of the window can be arbitrary, allowing for adaptation to different object types and shooting conditions. The scheme used (Figure 1) ensures high performance, as most windows are quickly discarded early in the cascade, and detailed checking is performed only on potentially relevant fragments.

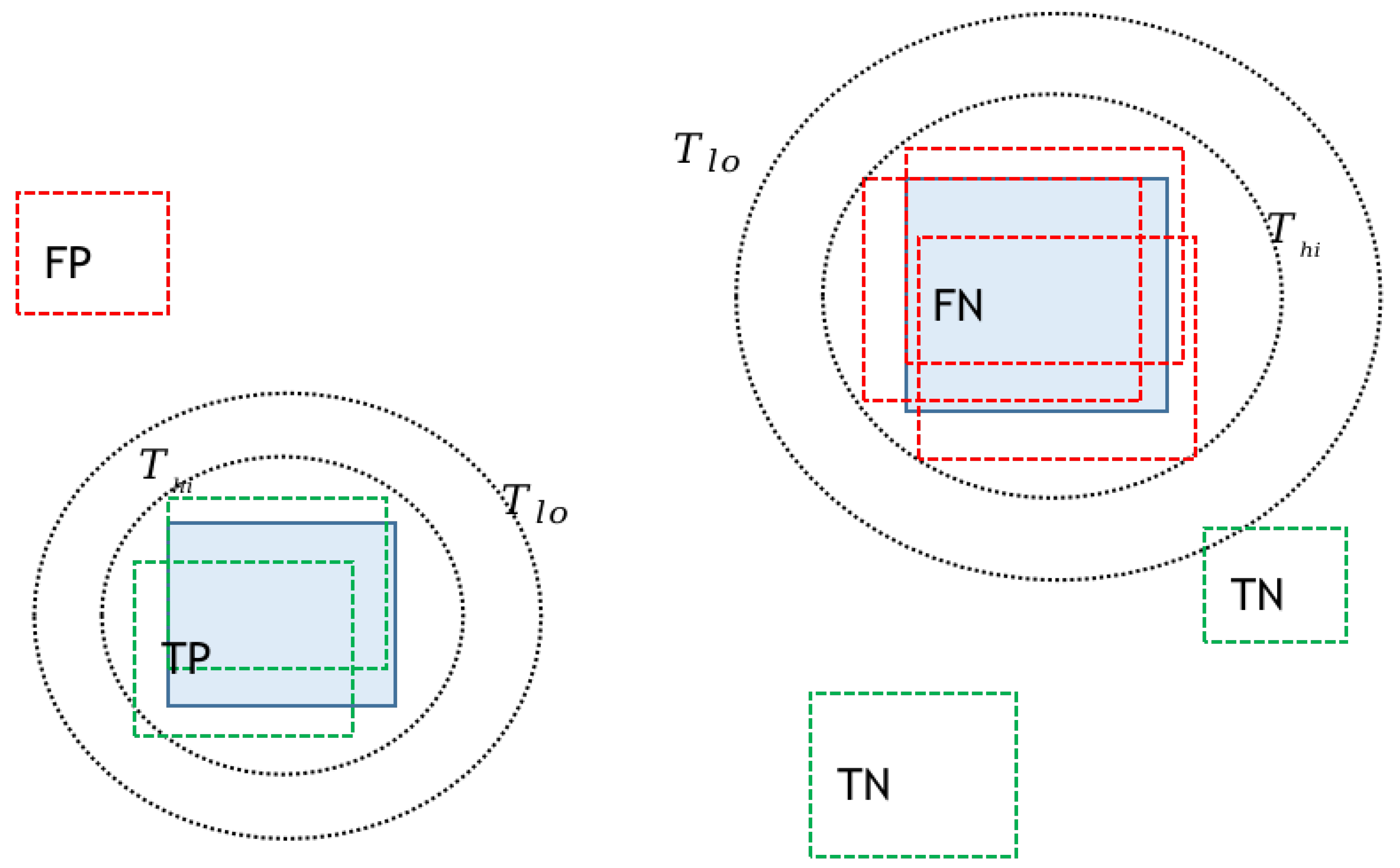

Statement of the Detector Correction Problem. The detector can make two types of errors:

False Negative (FN): The object is present, but the detector returned 0.

False Positive (FP): The object is absent, but the detector returned 1.

To improve detection accuracy, two types of correctors are introduced:

FN Corrector: Trained on fragments where the detector misses the object (FN). The features used are data obtained only from the first K detector stages, as well as the average intensity values within a fragment, calculated using the modified Census transform.

FP Corrector: Trained on false positives (FPs) and correctly identified positives (TP). It uses features similar to those for the FN corrector, but takes all detector stages into account.

A training scheme (Figure 2) was developed to create and apply correctors during detector operation. The scheme in Figure 2 allows correctors to be generated even when the labeling of input images is incomplete. Fragments used for training are accumulated in the training procedure’s internal database and are used once the required threshold number of examples is reached.

For the FN corrector, we use features extracted only from the first K stages of the cascade. This choice is motivated by an empirical analysis of where FN-related candidates are rejected within the cascade. Figure 3 shows the cumulative share of rejected FN-related candidates as a function of the cascade stage number; the curve is averaged over all digit detectors obtained in our experiments. The vast majority of false-negative events occur within the first three stages, while the contribution of later stages is comparatively small. At the same time, computing features from all cascade stages for every fragment would significantly reduce the overall speed of the system, as it would require evaluating the entire cascade for all candidates, thus negating the efficiency benefits of the early-rejection mechanism. Restricting the FN corrector to the first three stages therefore provides a good compromise: it covers the vast majority of FN-related errors while keeping the feature computation cost low.

2.2. Selecting Fragments for Building Correctors

For training correctors, fragments are selected based on how well they match the labeling G. The Intersection-over-Union (IoU) metric is used as the measure of matching:

$$\mathrm{IoU}(R, G) = \frac{|R \cap G|}{|R \cup G|},$$

where R is a rectangular fragment and G is the corresponding labeling (Figure 4).
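For axis-aligned rectangles, the IoU measure reduces to a few comparisons. The sketch below assumes rectangles are given as `(x1, y1, x2, y2)` tuples with `x1 < x2` and `y1 < y2`; this coordinate convention and the function name `iou` are assumptions made for illustration.

```python
def iou(r, g):
    """Intersection over Union of two rectangles (x1, y1, x2, y2)."""
    inter_w = max(0, min(r[2], g[2]) - max(r[0], g[0]))
    inter_h = max(0, min(r[3], g[3]) - max(r[1], g[1]))
    intersection = inter_w * inter_h
    area_r = (r[2] - r[0]) * (r[3] - r[1])
    area_g = (g[2] - g[0]) * (g[3] - g[1])
    union = area_r + area_g - intersection
    return intersection / union if union > 0 else 0.0


print(iou((0, 0, 2, 2), (1, 1, 3, 3)))  # overlap of 1 over a union of 7
print(iou((0, 0, 2, 2), (0, 0, 2, 2)))  # identical rectangles
print(iou((0, 0, 1, 1), (5, 5, 6, 6)))  # disjoint rectangles
```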

Selecting examples for the FN corrector. Fragment

R is considered a detector error and is selected for training the FN corrector according to the following rule:

Error-free fragments (TN) are selected with a predetermined probability

, provided that for any

the following condition is met:

The selection probability is needed to reduce the number of error-free fragments in cases where the number of labeled fragments is much smaller than the number of background ones, and to balance the database for training the corrector (Equations (3) and (4)).

Selecting Examples for the FP Corrector. Two types of fragments are used to construct the FP corrector. The first type consists of false positives, which are determined using the intersection threshold with the labeling:

The second type consists of true positives, used as positive examples for the corrector. They are also included in the training set with a predetermined probability. A visualization of the fragment selection process for constructing correctors according to the rules in Equations (3)–(6) is shown in Figure 5.
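The selection logic can be illustrated with a short sketch. Since the exact inequalities are not reproduced here, the code assumes one plausible reading: a fragment counts as matching an object when its best IoU is at least an upper threshold, as background when its best IoU is below a lower threshold, and is ignored in between. The thresholds `t_low` and `t_high`, the probability `keep_prob`, and the function `select_fragment` are hypothetical names for this illustration only.

```python
import random


def iou(r, g):
    """Intersection over Union of two rectangles (x1, y1, x2, y2)."""
    iw = max(0, min(r[2], g[2]) - max(r[0], g[0]))
    ih = max(0, min(r[3], g[3]) - max(r[1], g[1]))
    inter = iw * ih
    union = (r[2] - r[0]) * (r[3] - r[1]) + (g[2] - g[0]) * (g[3] - g[1]) - inter
    return inter / union if union > 0 else 0.0


def select_fragment(fragment, detector_output, labels,
                    t_low, t_high, keep_prob, rng):
    """Return 'FN', 'FP', 'TN', 'TP', or None if the fragment is not used."""
    best = max((iou(fragment, g) for g in labels), default=0.0)
    if detector_output == 0 and best >= t_high:
        return "FN"                       # object present, detector missed it
    if detector_output == 1 and best < t_low:
        return "FP"                       # background accepted by the detector
    if detector_output == 0 and best < t_low:
        # Error-free background is subsampled to keep the classes balanced.
        return "TN" if rng.random() < keep_prob else None
    if detector_output == 1 and best >= t_high:
        # Correct detections are subsampled in the same way.
        return "TP" if rng.random() < keep_prob else None
    return None                           # ambiguous overlap: skipped


rng = random.Random(0)
labels = [(0, 0, 10, 10)]
print(select_fragment((0, 0, 10, 10), 0, labels, 0.3, 0.7, 1.0, rng))
print(select_fragment((20, 20, 30, 30), 1, labels, 0.3, 0.7, 1.0, rng))
```

Using two thresholds leaves a band of partially overlapping fragments unassigned, so that neither corrector is trained on examples whose label is ambiguous.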

Training samples for the correctors are collected until their sizes reach predetermined thresholds for the FN and FP correctors, respectively.

2.3. Construction of Correctors

The correctors FN and FP receive as input the combined feature vector for each fragment R and generate a binary response about the fragment’s membership in the sets being corrected. This is achieved by using an algorithm developed for high-dimensional spaces, which constructs a separating hyperplane based on an analysis of the distinguishability of classes (errors and correct solutions).

Using the theoretical justification for the possibility of applying the Fisher criterion to the problem of constructing a corrector for a base classifier, a general algorithm for obtaining correctors (Algorithm 1) is presented, which constructs a separating hyperplane between the set of errors X and the set of correct solutions Y.

| Algorithm 1 Corrector Construction Algorithm. |

- Require: Sets X and Y; number of clusters k; number of principal components m; thresholds; Fisher discriminants; centroid; matrices H and W.
- Ensure: Fisher discriminants and thresholds for each cluster.
- 1: Compute the centroid of set X.
- 2: Center the data by subtracting the centroid.
- 3: Extract principal components from the centered data.
- 4: Select the m components with the largest eigenvalues of the covariance matrix.
- 5: Project the centered sets into the principal component space and construct the transition matrix H.
- 6: Construct the whitening matrix W.
- 7: Partition the transformed error set into k clusters using the k-means algorithm; obtain the cluster centroids.
- 8: For each cluster, compute the Fisher discriminant and the corresponding threshold.
- 9: return the Fisher discriminants and thresholds.

Algorithm 1 transforms the data into a multicluster feature space, where the task is to minimize errors in each cluster. To evaluate the resulting feature space, distributions of measurements in the studied cluster were constructed.

Once the corrector is built on the training data, it is applied as described in Algorithm 2:

| Algorithm 2 Correction Algorithm. |

- Require: Input vector z; centroid; cluster centroids; threshold vector; Fisher discriminants; transition matrices H and W.
- Ensure: Corrected output classification.
- 1: Centralize the input by subtracting the centroid.
- 2: Apply the transformation matrices H and W.
- 3: Determine the closest cluster t among the cluster centroids using Euclidean distance.
- 4: Classify the sample as belonging to the corrected (error) set if the Fisher discriminant of cluster t passes the threshold of that cluster.
- 5: return Correction decision for input z.
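The construction and application steps can be put together in a compact NumPy sketch. It is an illustration under stated assumptions rather than the implementation used in the experiments: PCA is fitted on the pooled centered data, k-means is a plain Lloyd iteration on the whitened error set, a sample is flagged when its Fisher score exceeds the cluster threshold, and the threshold is the largest score among correct examples plus a tiny numerical margin. The names `build_corrector`, `apply_corrector`, and `assign` are hypothetical.

```python
import numpy as np


def assign(points, centers):
    """Index of the nearest center (Euclidean) for every point."""
    d2 = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return d2.argmin(axis=1)


def build_corrector(X, Y, k=2, m=2, n_iter=20, seed=0):
    """Fit cluster-wise Fisher correctors; X holds errors, Y correct cases."""
    rng = np.random.default_rng(seed)
    centroid = X.mean(axis=0)                          # centroid of X
    Xc, Yc = X - centroid, Y - centroid                # centering
    # Assumption: principal components come from the pooled centered data.
    eigvals, eigvecs = np.linalg.eigh(np.cov(np.vstack([Xc, Yc]), rowvar=False))
    top = np.argsort(eigvals)[::-1][:m]                # m largest eigenvalues
    H = eigvecs[:, top]                                # projection matrix
    W = np.diag(1.0 / np.sqrt(eigvals[top] + 1e-12))   # whitening matrix
    Xw, Yw = Xc @ H @ W, Yc @ H @ W

    # Lloyd k-means on the whitened error set (requires k <= len(X)).
    centers = Xw[rng.choice(len(Xw), size=k, replace=False)]
    for _ in range(n_iter):
        labels = assign(Xw, centers)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = Xw[labels == j].mean(axis=0)
    labels = assign(Xw, centers)

    # One Fisher discriminant and one threshold per error cluster.
    S_y = np.atleast_2d(np.cov(Yw, rowvar=False))
    mean_y = Yw.mean(axis=0)
    w_list, theta_list = [], []
    for j in range(k):
        E = Xw[labels == j]
        if len(E) == 0:                                # empty cluster never fires
            w_list.append(np.zeros(m))
            theta_list.append(np.inf)
            continue
        S_e = np.atleast_2d(np.cov(E, rowvar=False)) if len(E) > 1 else np.zeros((m, m))
        w = np.linalg.solve(S_e + S_y + 1e-6 * np.eye(m), E.mean(axis=0) - mean_y)
        top_score = float((Yw @ w).max())
        # Small margin guards against floating-point ties at the threshold.
        theta_list.append(top_score + 1e-9 * max(1.0, abs(top_score)))
        w_list.append(w)
    return {"centroid": centroid, "H": H, "W": W, "centers": centers,
            "w": w_list, "theta": theta_list}


def apply_corrector(model, z):
    """Return True if input z is assigned to the error set."""
    zw = (np.asarray(z, dtype=float) - model["centroid"]) @ model["H"] @ model["W"]
    t = int(((model["centers"] - zw) ** 2).sum(axis=1).argmin())
    return bool(zw @ model["w"][t] > model["theta"][t])


# Synthetic check: correct cases around the origin, two distant error groups.
rng = np.random.default_rng(1)
Y = rng.normal(size=(200, 5))
X = np.vstack([
    rng.normal(scale=0.1, size=(20, 5)) + np.array([10.0, 0, 0, 0, 0]),
    rng.normal(scale=0.1, size=(20, 5)) + np.array([0, 10.0, 0, 0, 0]),
])
model = build_corrector(X, Y, k=2, m=2)
flagged_correct = sum(apply_corrector(model, y) for y in Y)
flagged_errors = sum(apply_corrector(model, x) for x in X)
print(flagged_correct, flagged_errors)
```

By construction, no example from the correct set used for fitting is flagged, whichever cluster it is routed to, because every cluster threshold lies above the scores of all correct examples.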

To construct correctors, features extracted from image fragments by the detector are used. The features are the average segment intensities calculated using the modified Census transform. The size of the input vector for the corrector is determined by the number of features in each cascade and the number of cascades; each component is the average intensity of nine segments of weak classifier k at the m-th cascade in fragment R.

The FN corrector uses data obtained from the first K detector stages. The FP corrector uses features obtained from all detector stages.

Training samples for the correctors are collected until the minimum sizes of the FN and FP training sets are reached. The correctors are thus trained on a sufficient number of examples, which reduces the risk of overfitting and supports generalization.

To improve the accuracy of the FN and FP correctors, errors are further divided into groups (clusters), since errors arising during detector operation often have different origins and occupy different regions of the feature space. Clustering allows the specific characteristics of errors to be taken into account and a separate hyperplane to be constructed for each type of error. Suppose we have a set of detector errors (FN or FP), represented as a set of feature vectors:

where each feature

is a vector of feature values obtained from the fragment corresponding to the detection error.

The goal of pre-processing is to partition the set

into a predetermined number of clusters

K:

This partitioning (Equation (9)) is performed using the K-means algorithm [60], which minimizes the within-cluster variance.

After partitioning the errors into clusters, a separate separating hyperplane is constructed for each resulting cluster, which separates the errors of this cluster from the set of all positive (correct) examples.

For each cluster, a separate discrimination problem is solved using the Fisher criterion.

2.4. Fisher’s Rule for Decision Making

The correction algorithm is based on Fisher’s rule for linear discrimination using bases [

61]. Let there be two classes: an error class (e.g., FN or FP) with feature distributions

and a class of correct fragments with distributions

. The optimal weight vector

w for the linear discriminant is determined by maximizing the Fisher criterion:

The linear decision is made according to the following function:

where

b is the bias. The class is determined by the rule:

where

is the threshold.

When constructing correctors for working with a detector under conditions of imbalanced classes, the key requirement is to achieve a zero false acceptance rate (FAR) on the selected data, since the number of fragments into which the image is divided significantly exceeds the number of fragments containing the target object. To achieve this, the threshold is chosen such that no example from a class that is not an error is mistakenly classified as an error for the given dataset. Formally, let

be the set of erroneous fragments (

for the FN corrector and

for the FP corrector). Then, the threshold selection condition is written as

If condition (13) is not satisfied, then additional weight adjustments are made or a more stringent threshold is selected to ensure the required zero false acceptance rate.
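The effect of this threshold rule is easy to see on one-dimensional discriminant scores. The sketch below assumes that larger scores indicate errors and that the threshold is placed at the largest score among correct examples; the orientation of the inequality, the optional `margin`, and the function names are assumptions for illustration.

```python
def zero_far_threshold(correct_scores, margin=0.0):
    """Smallest threshold that flags none of the given correct examples."""
    return max(correct_scores) + margin


def flag_errors(scores, threshold):
    """Flag a sample as an error when its score is strictly above the threshold."""
    return [score > threshold for score in scores]


correct_scores = [0.1, 0.4, 0.3]   # projections of correct fragments
error_scores = [0.2, 0.9, 0.5]     # projections of erroneous fragments

threshold = zero_far_threshold(correct_scores)
false_acceptances = sum(flag_errors(correct_scores, threshold))
corrected = sum(flag_errors(error_scores, threshold))
print(threshold, false_acceptances, corrected)
```

The price of a zero false acceptance rate is visible in the example: the error with score 0.2 lies inside the range of correct scores and is left uncorrected, which is why clustering the errors before building the discriminants matters.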

2.5. Computational Complexity

Let n denote the dimensionality of the feature vector used by a corrector after PCA projection and whitening. At test time, evaluating a corrector for a single fragment requires only a small number of matrix–vector multiplications and dot products: projection to the PCA subspace, whitening, computation of distances to a few cluster centers, and evaluation of the corresponding Fisher discriminants. All these operations scale linearly with n, so the inference complexity of the corrector is $O(n)$ per fragment. This linear-time overhead is negligible compared to the cost of evaluating thousands of weak classifiers in the underlying modified Census-based Viola–Jones cascade.

In contrast, training a corrector involves estimating covariance matrices and solving small eigenvalue problems in the PCA space. These steps scale quadratically with the feature dimension, i.e., the training complexity is $O(n^2)$. Since training is performed offline and only on a relatively small correction set, this quadratic dependence is acceptable and, in fact, reflects an explicit design trade-off: we deliberately restrict ourselves to linear-time inference and moderate training in order to maintain the high speed of the base recognition system. Many recent few-shot and model-rectification methods rely on deep backbones and iterative gradient-based adaptation at test time, which introduces significantly higher computational overhead than our lightweight linear operations.

3. Experimental Results

To evaluate the effectiveness of the proposed approach, experiments were conducted on two datasets (databases):

The first database contains images of railway tank cars, in which areas containing digital tank car identifiers are detected. The database consists of 1153 images of railcars with marked identifiers. The second database contains images of numbers on railcars. The database consists of 1067 images of identifiers with marked digits [0…9] on each. Both databases were downloaded from the Kaggle website [62].

The railway tank car dataset contains 1153 full images of tank cars with annotated rectangular regions corresponding to identifier areas. Image heights range from 480 to 1080 pixels, with substantial variability in viewing angles, background clutter (tracks, surrounding cars, infrastructure), and illumination (day/night, weather conditions). Object sizes range from 12% to 80% of the image height. The second dataset contains 1067 cropped identifier images with bounding boxes for individual digits 0–9. In this case, the background is less cluttered but the digits exhibit strong variations in font, size, and contrast, as well as occlusions and motion blur. These characteristics make both datasets challenging for classical cascade detectors and representative of industrial inspection scenarios.

For each dataset, we employ a repeated random splitting strategy with a 5:4:1 ratio between training, correction, and test subsets. Concretely, all images are first randomly partitioned into 10 approximately equal folds. For each of the 10 experimental runs, we then randomly select one fold as the test set, four folds as the correction set, and the remaining five folds as the training set. Thus, in every run the test subset is strictly independent from both the training and correction subsets used to train the detector and the correctors, while across the 10 runs, every image appears in the test set at least once, which allows us to accumulate statistics over the entire dataset.
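One run of this splitting procedure can be sketched as follows. The code assumes that images are identified by integer indices and that folds are formed by striding over a shuffled list; the function name `make_split` and the use of a per-run seed are illustrative assumptions.

```python
import random


def make_split(image_ids, seed):
    """Return (train, correction, test) lists in a 5:4:1 fold ratio."""
    rng = random.Random(seed)
    ids = list(image_ids)
    rng.shuffle(ids)
    folds = [ids[i::10] for i in range(10)]   # ten nearly equal folds
    order = list(range(10))
    rng.shuffle(order)                        # random role for each fold
    test = list(folds[order[0]])
    correction = [x for f in order[1:5] for x in folds[f]]
    train = [x for f in order[5:] for x in folds[f]]
    return train, correction, test


train, correction, test = make_split(range(1153), seed=0)
print(len(train), len(correction), len(test))
```

Within a run the three subsets are disjoint by construction, so detector training, corrector training, and evaluation never share an image.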

The correction set is used only to collect fragments on which the detector makes errors. Since the base detector does not fail on all images, only a fraction of fragments in the correction folds actually correspond to false negatives or false positives. The correctors are therefore trained on a subset of objects extracted from the correction folds—namely, on those fragments where the detector produces errors. This reflects the practical regime in which only relatively few error examples are available and motivates the one-/few-shot nature of our corrector construction. We report mean Precision and Recall (and, where appropriate, standard deviations) over the 10 runs to account for variability due to random splits.

Each database is randomly split into three parts in a 5/4/1 ratio for the corresponding samples:

The training set is used to build the cascade detector.

The test set is used exclusively to evaluate the performance of the algorithm (before and after applying correctors).

The correction set is used to obtain training sets and build the FN and FP correctors.

The splitting experiment is repeated 10 times to reduce the dependence on uneven distribution of test and training data.

The experiments use standard metrics characterizing the performance of the object detector, Precision and Recall:

$$\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad \mathrm{Recall} = \frac{TP}{TP + FN},$$

where TP is the number of correctly detected objects; FP is the number of false positives; and FN is the number of missed objects. To determine whether a detection is true or false, a threshold of 0.5 was used for IoU, and the results for each split were averaged.
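As a small illustration of how the two metrics are computed and averaged over splits, consider the sketch below. The counts are made-up numbers chosen for easy arithmetic, not results from the experiments, and the function names are hypothetical.

```python
def precision_recall(tp, fp, fn):
    """Precision and Recall from counts of true/false positives and misses."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def mean_over_runs(counts_per_run):
    """Average Precision and Recall over several (tp, fp, fn) triples."""
    pairs = [precision_recall(*counts) for counts in counts_per_run]
    n = len(pairs)
    return sum(p for p, _ in pairs) / n, sum(r for _, r in pairs) / n


print(precision_recall(tp=90, fp=10, fn=30))
print(mean_over_runs([(90, 10, 30), (60, 20, 20)]))
```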

Table 1 shows that, for digit detection, the FN corrector increases Recall from 0.94 to 0.98 while causing only a minor decrease in Precision (0.92 → 0.91). This indicates that the corrector successfully recovers a substantial fraction of previously missed digits with almost no additional false alarms. For identifier detection, the FP corrector exhibits a different trade-off: Precision improves dramatically from 0.36 to 0.65, whereas Recall slightly decreases from 0.98 to 0.94. In practice, this behaviour is desirable in industrial railway monitoring, where false alarms (spurious identifiers) incur high manual verification costs, while missing a small fraction of identifiers can be tolerated if downstream systems include redundancy (e.g., multiple frames per car or additional OCR checks).

The detector trained on the first part of the database was first applied to the test set without correctors, and metrics were calculated. Then, based on the errors (FN and FP) obtained on the correction set, the corresponding correctors were trained. Subsequently, the same test set was evaluated again with the trained correctors to assess their effectiveness. For digit detection, 10 different detectors were built, each localizing the corresponding digit in the image. The final results present the average metrics of all digit detectors (Table 1).

To assess how stable the correctors’ quality is with respect to the thresholds on fragment intersection with the markup, the corresponding dependences were constructed for the two corrector types under study (Figure 6a). Note that at high threshold values, the corrector lacks examples to construct the separating surface, which is related to the selected step of the sliding fragment window. Fragments become less variable, which impairs the corrector’s generalization to other images.

Figure 6 further analyses the dependence of corrector quality on the IoU thresholds used for fragment selection. For the FN corrector (Figure 6a), increasing the threshold initially improves Recall but eventually leads to performance degradation when the pool of available positive fragments becomes too small. A similar pattern is observed for the FP corrector in Figure 6b: higher threshold values reduce the diversity of background fragments, which harms generalization and leads to unstable Recall. These observations highlight the importance of balancing the strictness of IoU thresholds against the need for diverse training data when constructing correctors.

Table 1 should be interpreted in the context of the sequential use of the two detectors for semantic scene analysis. In the first stage, an identifier detector localizes wagon identifier regions; in the second stage, a digit detector is applied only within these regions. For the identifier stage, false negatives are critical, since missing an identifier discards the whole wagon instance; for the digit stage, both false negatives and false positives directly influence the correctness of the final identifier string. The proposed correctors modify this pipeline in a complementary manner. For identifiers, the FP corrector substantially increases Precision (from 0.36 to 0.65) with a moderate decrease in Recall (from 0.98 to 0.94). For digits, the FN corrector increases Recall (from 0.94 to 0.98) with a negligible change in Precision (from 0.92 to 0.91). As a result, the effective Recall of the two-stage chain “identifier → digits”, approximated by the product of stage recalls, remains essentially unchanged (about 0.92 both before and after applying the correctors), while the fraction of incorrectly recognized digits in the test set decreases.

From the computational viewpoint, the sequential configuration with corrected detectors leads to a significant reduction in the number of fragments processed by the second stage. Increasing identifier Precision from 0.36 to 0.65 implies that the expected number of candidate identifier regions per true identifier decreases by about 45%. Since the computational cost of the digit detector is approximately proportional to the number of regions it processes, this reduction in candidates results in a decreased workload for the second stage and an overall per-frame processing time reduction on the order of 37%, while practically preserving the same end-to-end Recall of the semantic pipeline.
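The figures quoted for the two-stage chain can be checked with a few lines of arithmetic. The sketch uses only the Precision and Recall values reported in the text and assumes, as the text does, that chain Recall is approximated by the product of stage recalls and that the number of candidate regions per true identifier is the reciprocal of Precision; the function names are hypothetical.

```python
def chain_recall(identifier_recall, digit_recall):
    """Approximate Recall of the identifier -> digits chain."""
    return identifier_recall * digit_recall


def candidates_per_true(precision):
    """Expected number of candidate regions per true identifier."""
    return 1.0 / precision


recall_before = chain_recall(0.98, 0.94)   # no correctors
recall_after = chain_recall(0.94, 0.98)    # FP and FN correctors applied
reduction = 1.0 - candidates_per_true(0.65) / candidates_per_true(0.36)

print(round(recall_before, 4), round(recall_after, 4))
print(round(candidates_per_true(0.36), 2), round(candidates_per_true(0.65), 2))
print(round(reduction, 3))
```

The product of recalls is the same before and after because the two stages exchange their Recall values, and the candidate count falls from roughly 2.8 to roughly 1.5 regions per true identifier, which is the reduction of about 45% stated above.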

As the

threshold increases, the Recall characteristic of the FP corrector suffers, while the Precision characteristic improves (Figure 6b).

The experimental results (Table 1, Figure 6) on the selected databases demonstrate the advantage of using correctors to improve detector performance. The FN corrector significantly increases Recall, which corresponds to a reduction in the number of missed objects. The FP corrector effectively suppresses false positives, increasing Precision.

4. Discussion

This study proposes an approach for implementing correction algorithms that leverage the blessing of dimensionality in high-dimensional feature spaces. The proposed method enhances the accuracy and performance of existing detectors while maintaining computational efficiency. The corrector architecture offers several advantages that make it suitable for a wide range of applications. First, it enables the learning of new error classes without reconstructing or retraining the underlying model—an essential capability for autonomous and adaptive systems where rapid error correction is critical. Second, the design is computationally economical: once trained, the correctors introduce minimal processing overhead, as mapping new measurements to their corresponding clusters is considerably faster than retraining the base algorithm.

Promising applications of the proposed architecture include scenarios where the core model must rapidly adapt to new or rare events. In computer vision, correctors can be trained to recognize novel object types or detect production defects immediately after the first occurrence. In cybersecurity, they can identify emerging attack patterns or anomalous behaviors from single instances, enabling the generation of targeted filters for characteristic threat signals. In natural language processing, correctors may refine model outputs incrementally, adapting to new slang, misspellings, or linguistic variations as they appear.

The architecture also supports integration into multi-corrector systems, enabling the simultaneous handling of multiple error types. In such configurations, a dispatcher first assigns each input to a corresponding error cluster and routes it to the appropriate elementary corrector. Each corrector operates independently on its designated error class, ensuring modularity and scalability. As new error types emerge, additional modules can be trained on minimal data without affecting existing components.
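The dispatcher-based configuration described above can be illustrated with a minimal sketch. It assumes a nearest-centroid dispatcher and linear elementary correctors; the class `MultiCorrector`, the helper names, and the toy centroids and weights are hypothetical and are not taken from the experiments.

```python
import math

def nearest_cluster(x, centroids):
    """Return the index of the centroid closest to x (Euclidean distance)."""
    return min(range(len(centroids)), key=lambda i: math.dist(x, centroids[i]))

class MultiCorrector:
    """Routes each feature vector to the elementary corrector of its cluster."""

    def __init__(self):
        self.centroids = []   # one centroid per known error cluster
        self.correctors = []  # correctors[i] handles cluster i

    def add_corrector(self, centroid, corrector):
        # New modules are appended; existing ones are left untouched.
        self.centroids.append(centroid)
        self.correctors.append(corrector)

    def is_error(self, x):
        if not self.centroids:
            return False  # no correctors trained yet
        i = nearest_cluster(x, self.centroids)
        return self.correctors[i](x)

def linear_corrector(w, threshold):
    """Elementary corrector: flags x as an error when w.x exceeds the threshold."""
    return lambda x: sum(wi * xi for wi, xi in zip(w, x)) > threshold

# Toy two-cluster system with hypothetical parameters.
system = MultiCorrector()
system.add_corrector([0.0, 0.0], linear_corrector([1.0, 0.0], 0.5))
system.add_corrector([10.0, 10.0], linear_corrector([0.0, 1.0], 10.5))
print(system.is_error([1.0, 0.0]))    # routed to cluster 0: 1.0 > 0.5
print(system.is_error([10.0, 10.0]))  # routed to cluster 1: 10.0 > 10.5 fails
```

Adding a module only appends a centroid and a corrector, which mirrors the modularity argument: previously trained correctors are not modified.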

Further architectural improvements may focus on identifying high-dimensional feature spaces that satisfy the conditions of stochastic separation theorems, facilitating the use of classical linear discriminants and hyperplane-based separation methods. Another promising direction involves combining correctors with novelty detection mechanisms—first identifying inputs that deviate from the training distribution, and then selectively activating correction modules. This integration could enhance overall stability and reduce unnecessary correction activations.

The requirement of zero false acceptance rate (FAR = 0) is enforced on the finite correction set by choosing conservative thresholds for the Fisher discriminants. While this is desirable from a safety perspective, it inevitably introduces a form of overfitting: in truly open-world deployments, previously unseen background patterns may still pass through the corrector. In practice, the trade-off between FAR and the false rejection rate (FRR) can be controlled by relaxing the thresholds using a separate validation set or by combining the correctors with explicit novelty detection mechanisms.
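The effect of a conservative threshold can be sketched on one-dimensional discriminant scores. The example below assumes that scores are oriented so that larger values are more object-like, that a detection is accepted when its score exceeds the threshold, and that a false acceptance is a background fragment that passes; the scores themselves are hypothetical and serve only to illustrate the trade-off.

```python
def far_frr(object_scores, background_scores, threshold):
    """FAR and FRR for the rule: accept a detection when score > threshold."""
    far = sum(s > threshold for s in background_scores) / len(background_scores)
    frr = sum(s <= threshold for s in object_scores) / len(object_scores)
    return far, frr

def zero_far_threshold(background_scores, margin=0.0):
    """Smallest threshold (plus optional margin) rejecting all known background."""
    return max(background_scores) + margin

# Hypothetical discriminant scores on a small correction set.
objects = [2.1, 1.7, 0.9, 2.5, 1.2]
background = [-0.8, 0.3, 1.0, -1.5]

t = zero_far_threshold(background)
print(t, far_frr(objects, background, t))   # 1.0 (0.0, 0.2)

# Relaxing the threshold trades false rejections for false acceptances.
print(far_frr(objects, background, 0.5))    # (0.25, 0.0)
```

In this toy case FAR = 0 costs one rejected true object out of five, whereas the relaxed threshold recovers it at the price of one accepted background fragment; in practice the relaxed value would be chosen on a separate validation set, as noted above.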

Compared to recent paradigms such as out-of-distribution (OOD) detection, test-time adaptation, and model rectification, our approach adopts a modular view: instead of modifying the base detector parameters at test time, we attach external corrector modules that operate in a high-dimensional feature space. This design avoids catastrophic forgetting and allows new error types to be incorporated via one-/few-shot training of additional modules, at the cost of maintaining a dedicated correction set and potentially multiple correctors. We believe that integrating high-dimensional correctors with OOD detectors or lightweight test-time adaptation could further improve robustness in highly non-stationary environments.