1. Introduction

There is a constantly-increasing demand for smart and innovative applications that take advantage of increased computation and communication capabilities of new technologies. These applications increase the quality of life, making daily life easy and comfortable. A system that supports the daily lives of people needs to be reliable and long-lasting [1]. The idea of moving towards smart technologies is an inevitable path because the sensors have become inexpensive and relatively easier to deploy compared to the past. Moreover, with the evolution of Internet of Things (IoT) [2], different sensors can be connected with each other, forming a wireless network that is capable of collecting frequent data with increased communication capabilities. The fusion of data collected by the same or different sensors has led to the discovery of several smart applications, as data can be used to explain a lot of patterns.

Nowadays, we have a lot of smart applications that leverage IoT infrastructure [3] and provide different utilities, such as smart maintenance, smart space, autonomous vehicle driving, smart health, smart home, etc. All of these innovations have been developed considering the activities and behavior of users or people in the surrounding environment. It is necessary to identify human-related features, gestures or activities to develop or design smart applications where we need to make decisions in real-time with minimum delay. As a result, several research studies [4,5] focused on identifying human activities in different environments. Some domains, such as smart space, smart home, and smart health, can highly benefit from activity detection in smart environments. Such activity detection should rely on hardware setup, continuous data collection, and accurate and real-time machine learning methods.

In the smart space domain, detecting human presence is an important task to track people [6], detect their activities [1], provide services such as health-care [7], or increase safety and security [8]. For example, detecting activities such as falling can be helpful in medical applications such as elderly care for independent living, which can improve life expectancy [9]. Additionally, real-time monitoring of certain activities might increase the safety of an environment, preventing dangerous situations, accidents, or theft. A common way to perform this task is to use audio/video data [8,10]. This method can provide accurate information, but leads to serious security/privacy concerns and high computational overhead. Other studies track wearable device usage (e.g., phones, watches, etc.) to locate people [4]. However, this also leads to privacy issues and creates a high dependency on people (i.e., users have to carry these devices all the time). Another method uses ambient sensors [11,12], e.g., infrared or ultrasonic sensors, but the outcomes of these can be highly unreliable due to sensor quality. A recent study [1] showed that activity detection of a single person can be achieved with ambient sensors. However, that work does not show how this can be extended to multiple people. Thus, it becomes an important issue to differentiate people in a smart space (without identifying them) when using ambient sensors.

To address the issues above, we propose a system using low-cost, low-resolution thermal sensors to track the number of people in an indoor environment, and to understand their real-time static activities in the form of position tracking. The low-resolution nature of the sensors provides protection against privacy issues, and their ambient nature requires no active participation from the user, hence making our framework non-intrusive. We first built a smart environment with these ambient sensors and then performed two inter-dependent tasks through our intelligent system framework: (1) estimating the number of people; and (2) tracking their positions in terms of static activities of different people in the environment.

We studied different algorithms to accurately detect the number of people in the environment, as well as the effects of where to place the ambient sensors in the environment. We show that, by using the connected component algorithm and placing the sensor on the ceiling of the environment, we can achieve up to 100% accuracy performing this task.

After accurately estimating the number of people, next we extend our framework to detect the static activities of the different people in the smart environment. We first perform efficient pre-processing on the collected ambient thermal data, including normalization and resizing, and then feed the data into well-known machine learning methods. We tested the efficiency of our framework (including the hardware and software setup) by detecting four static activities. We evaluated our results using well-known metrics such as precision, recall, F1-score, and accuracy. Our results show that we can achieve up to 97.5% accuracy when detecting activities, with up to 100% class-wise precision and recall rates. Our framework can be very beneficial to several applications such as health-care, surveillance, and home automation, without causing any discomfort or privacy issues for the users.

3. Estimating the Number of People

This section presents the first functionality of our proposed system: estimating the number of people in a smart space. More specifically, we include the hardware setup, data collection, detection algorithms, and finally the results and evaluation of our framework.

3.1. Hardware Setup

We use low-resolution MLX90621 thermal sensors [

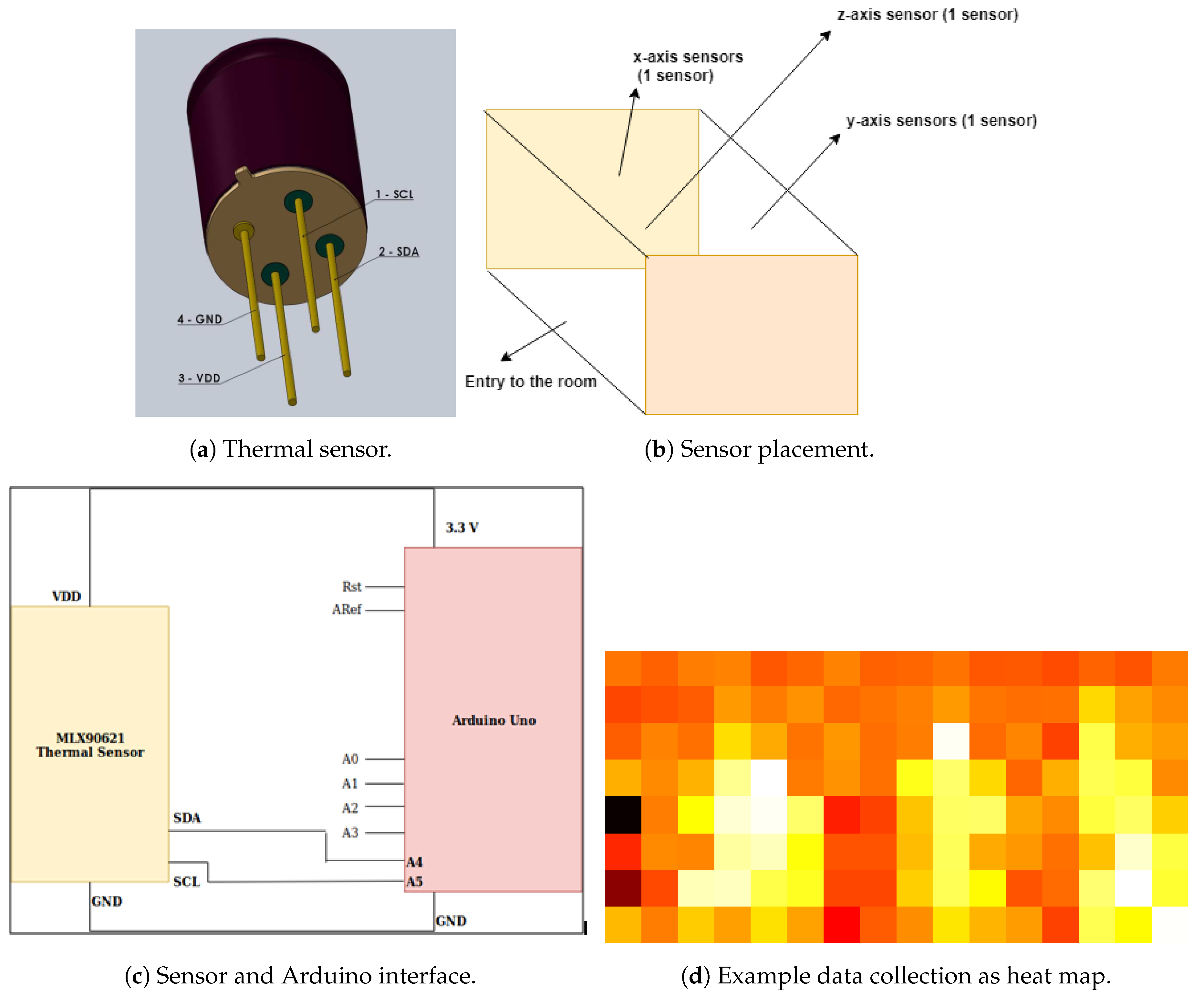

49] for data collection. This is a four-legged sensor, as shown in

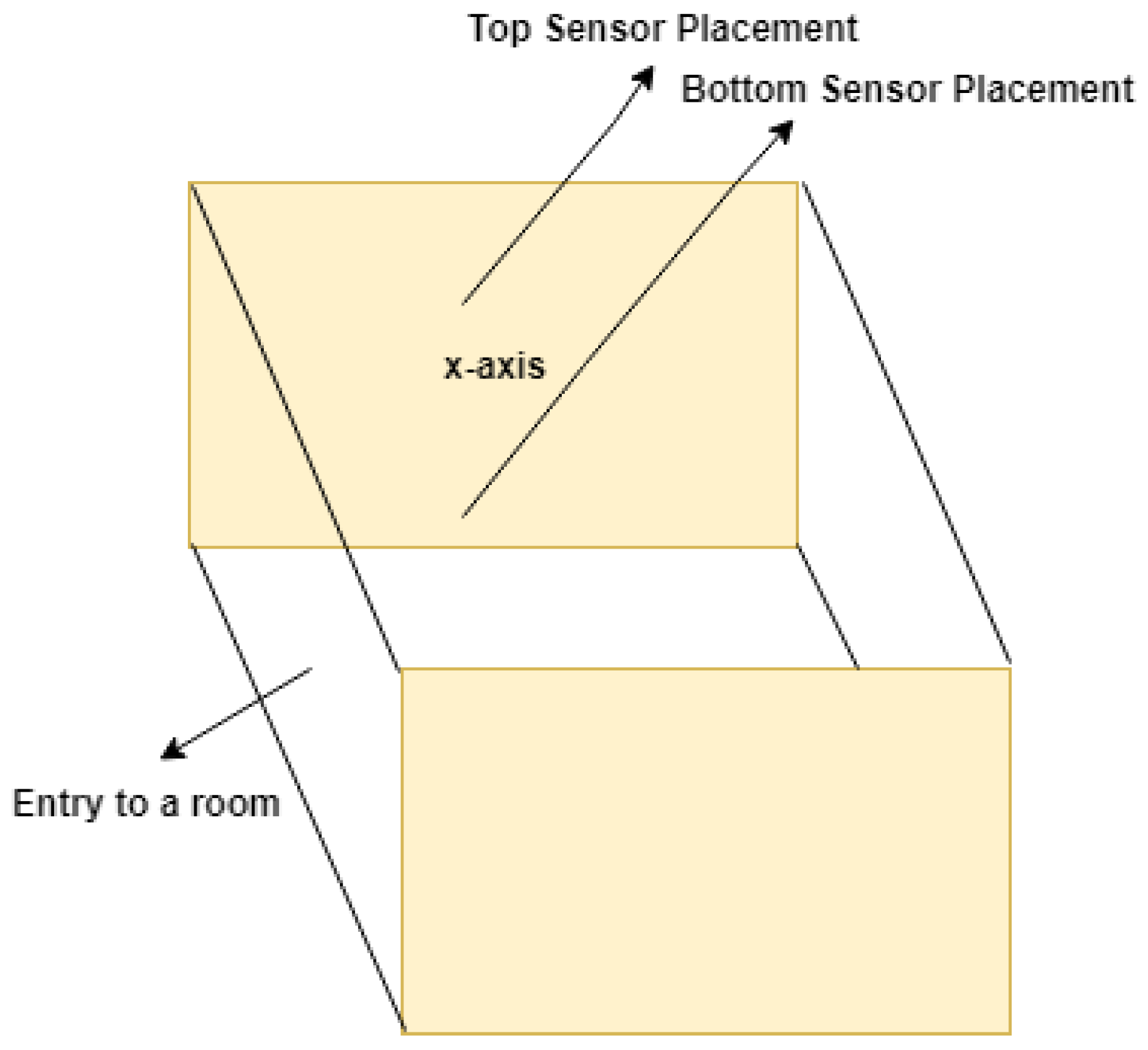

Figure 1a. The pins are: (1) SDA, sending/receiving data over serial data line; (2) SCL, serial clock line generating synchronous clock signal; (3) GND, provides GROUND power connection; and (4) VDD, voltage supply of 3.3 V for I2C data transfer. We deployed one sensor on three different walls of our smart space testbed over three different experiments. The three sensors were deployed on each of the walls of a 2.5-m × 2.75-m room, as shown in

Figure 1b.

The sensor is interfaced with an Arduino Uno for data collection (schematic diagram in Figure 1c), which is connected to the PC via USB. The hardware setup uses a sequential approach, i.e., the data from the sensor are collected by the Arduino Uno before they are transferred to the PC. The thermal sensors have a field of view of 125° × 25° (125° horizontal and 25° vertical). These sensors follow the I2C communication protocol, i.e., the data are transmitted serially, bit by bit, to the Arduino. In our setup, the Arduino acts as the I2C master, generating the clock signal on the SCL line, and the thermal sensor acts as the slave device, returning its data over the SDA line. The data are divided into messages and each message is divided into frames (including start/stop bits, address frame, read/write bit, 8-bit data, and ACK/NACK bit).

3.2. Data Collection

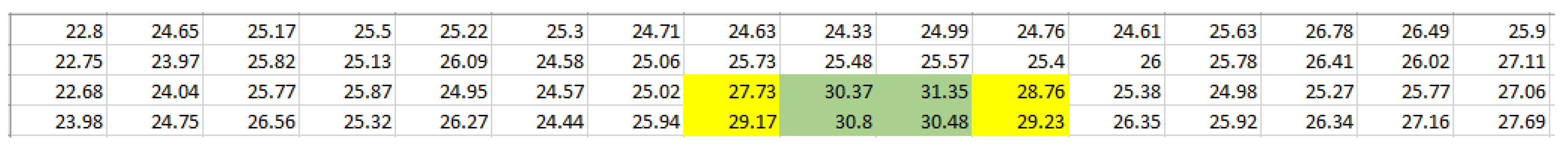

The data collected by Arduino are transmitted to a PC for finding the number of people. For a single reading, the thermal sensor returns 64 (4 × 16) values in °C. The temperature, when no one is present, is between 22 and 25 °C. The temperature varies between 27 and 32 °C when a human is present.

To study the effect of sensor placement, we placed three thermal sensors at different locations in the smart environment we set up: one on the ceiling, one on a side wall, and one on the wall adjacent to the second sensor. The pictorial representation of this setup can be seen in Figure 1b, with sensors placed in the x-, y- and z-directions, forming a 3D setup. Here, the z-axis represents the ceiling, while the x-axis and y-axis are the side walls. These sensors capture temperature data in the environment. The data are captured by running a Python script, which saves the data as a csv file and also as heat map images. The data in a csv file have two columns: a timestamp and 64 (4 × 16) pixel values separated by spaces. Each row in the csv file represents a data point at a single instance of time. The sampling rate is 4 frames/s, i.e., 1 data sample/s, because each frame captures 16 temperature values. Since a thermal sensor captures four frames in a second, it captures 64 temperature values, which correspond to a single data point. Each row of the csv file can also be represented as a single heat map image, as in Figure 1d.
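As a minimal sketch of how one logged row could be turned back into a 4 × 16 temperature grid, the snippet below parses a single line of text. The exact file layout is an assumption: it supposes a comma between the timestamp and the values column, spaces between the 64 values, and row-major ordering (each group of 16 values is one sensor frame). The function name `parse_row` is hypothetical and not part of the original script.

```python
def parse_row(row, rows=4, cols=16):
    """Split one logged line into (timestamp, rows x cols grid of floats).

    Assumed layout (not confirmed by the source): "timestamp,v1 v2 ... v64",
    with the values stored in row-major order.
    """
    timestamp, values = row.strip().split(",", 1)
    temps = [float(v) for v in values.split()]
    if len(temps) != rows * cols:
        raise ValueError(f"expected {rows * cols} values, got {len(temps)}")
    # Cut the flat list into `rows` consecutive chunks of `cols` values.
    grid = [temps[r * cols:(r + 1) * cols] for r in range(rows)]
    return timestamp, grid
```

The returned grid is a plain list of lists, so the counting algorithms in Section 3.3 can index it as `grid[row][column]`.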

3.3. Algorithms

In this section, we introduce two different algorithms to estimate the number of people in an environment using the heat map images: window size algorithm and connected component algorithm.

3.3.1. Window Size Algorithm

This approach selects a window, e.g., 1 × 2, 2 × 3, etc., smaller than 4 × 16 (the resolution of the thermal sensor). In this approach, we use the data that have been saved in a CSV (comma separated values) file. The algorithm iterates over each 4 × 16 data point window by window, and calculates the average of the temperature values in each window. To illustrate the concept of window size, consider a single data point with 64 temperature values, as in Figure 2. We reshape this data point to a 4 × 16 array and select a window size of 2 × 3. We then iterate over the temperature values in the form of a window with the selected size. We obtain the range for rows and columns from Equations (1) and (2), respectively:

During the iteration, we keep the row ranges of the windows mutually exclusive (non-overlapping), but move across the columns sequentially. The idea here is that, when there is a person in the environment, some of the window average values will be higher compared to the rest. We choose a threshold value to compare the window average values against (please see below). The algorithm then reports the number of people as the number of windows whose average is greater than the threshold. The algorithm is shown in Algorithm 1.

Algorithm 1 Window size algorithm.

Require: CSV file

Ensure: Maximum number of people in each image

1: Calc. …
2: …
3: …
4: …
5: for … do
6:   for … do
7:     …
8:     …
9:     …
10:     …
11:     for … do
12:       for … do
13:         Calc. …
14:       end for
15:     end for
16:     Calc. …
17:     if … then
18:       Calc. …
19:     end if
20:     Calc. …
21:   end for
22: end for
23: max_count_people(List_count)

In Figure 1d, we can observe that the values highlighted in green are higher compared to the rest of the numbers. Thus, while iterating with a window size of 2 × 3, this green highlighted region might give an average value larger than the threshold value twice: once when we take the values of this region along with the values highlighted in yellow on the left side, and once when we take them along with the values highlighted in yellow on the right side. We can avoid such a problem by carefully selecting the threshold value of the algorithm. After performing extensive evaluations, we selected a temperature threshold of 29.96 °C, which gives the highest accuracy in our experiments. The biggest problem with a poorly chosen threshold value is incorrect estimation of the number of people present in the environment.
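The counting step described in this section can be sketched in a few lines of Python. This is an illustrative reading of the text rather than the authors' implementation: it assumes that windows do not overlap vertically (the row index advances by the window height), that they slide one column at a time horizontally, and that every window whose mean exceeds the threshold counts as one person. The function name `count_hot_windows` is hypothetical; the default window size and threshold are the values reported in the text.

```python
def count_hot_windows(grid, win_rows=2, win_cols=3, threshold=29.96):
    """Count windows whose mean temperature exceeds `threshold`.

    `grid` is a list of rows (e.g., 4 rows of 16 temperatures in deg C).
    Assumption: row bands are mutually exclusive, columns slide by one.
    """
    n_rows, n_cols = len(grid), len(grid[0])
    count = 0
    for r in range(0, n_rows - win_rows + 1, win_rows):   # non-overlapping row bands
        for c in range(0, n_cols - win_cols + 1):         # sliding columns
            window = [grid[r + i][c + j]
                      for i in range(win_rows)
                      for j in range(win_cols)]
            if sum(window) / len(window) > threshold:
                count += 1
    return count
```

With a 23 °C background and a single 2 × 3 warm region at 31 °C, this sketch counts one window. Widening the same warm region to four columns makes two adjacent windows exceed the threshold, which reproduces the double-counting problem discussed above.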

3.3.2. Connected Component Algorithm

This approach does not require selecting a window size, which is an advantage over the previous method. We first crop the images to remove all the white noise, and convert them to grayscale images, where pixel values range from 0 to 255. We then convert the grayscale images into binary images, where each pixel value is either 0 or 1 (1 if the value is greater than a threshold, and 0 otherwise). Then, we find the number of people as the number of distinct connected components in the binary images. To achieve this, we scan the binary images and group pixels into components based on pixel connectivity. Adjacent pixels are connected if they both have value 1. There are different kinds of connectivity: (1) four-way connectivity checks the top, bottom, left and right pixels; and (2) eight-way connectivity checks the diagonals in addition to the four-way neighbors. We use the eight-way connectivity check in our framework. If pixels connect with each other, they are given the same label; when a pixel with value 1 is evaluated for the first time and is not connected to an already labeled pixel, we assign it a new label. At the end, the number of people is the number of distinct labels. The algorithm is shown in Algorithm 2.

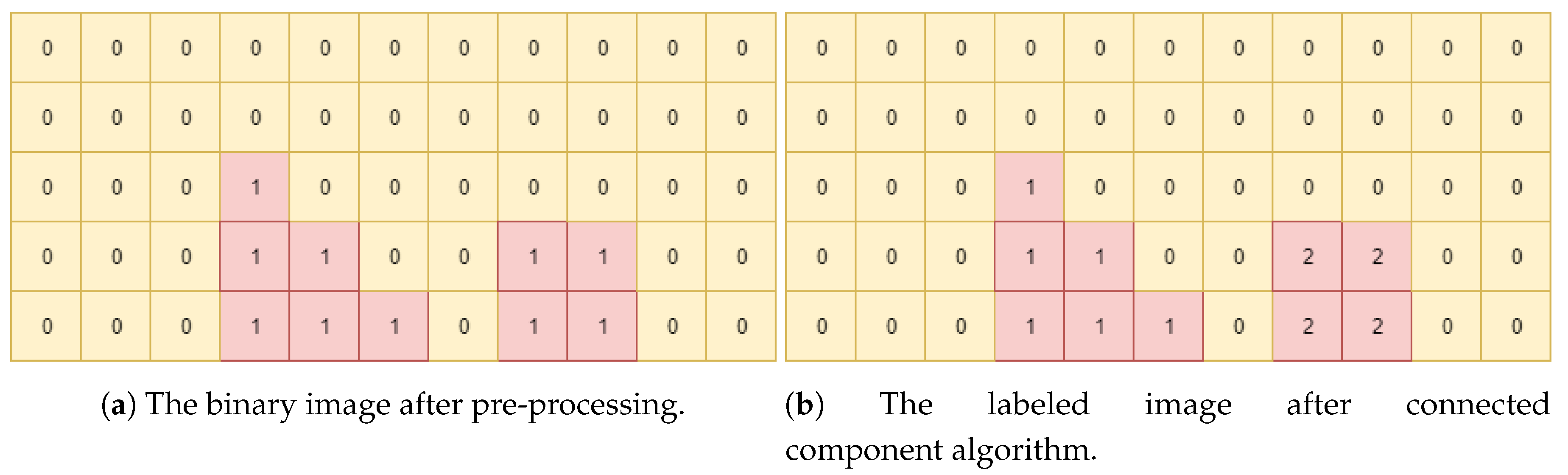

To show how the algorithm works visually, we present an example in Figure 3. In Figure 3a, we can see the two values 0 and 1. The intended semantics is that if the pixel value is “1”, then a person is present; otherwise, no one is present. For this algorithm, we use eight-way connectivity. The algorithm starts iterating over each pixel in Figure 3a. It checks the first pixel value, which is “0”, and gives it a label “0”, as in Figure 3b. Next, the algorithm checks the second pixel, which also has a value of “0”. However, before assigning it any label, it verifies whether any of the diagonal, top, bottom, left and right pixels have a value of “0” and whether any of these pixels have been labeled previously. In this example, the left pixel has the same value and has already been labeled. Thus, the first and second pixels are connected and grouped, and the second pixel is also assigned the label “0”. Similarly, the algorithm checks all other pixel values. When it reaches the third row and fourth column, it observes that the pixel value is “1”, which is different from the other labeled pixels. Now, by using eight-way connectivity, it checks whether the surrounding pixels have the same intensity. The pixel below the third-row, fourth-column pixel has the same value, but it has not been labeled yet. Thus, the third-row, fourth-column pixel gets a label of “1”, as shown in Figure 3b.

Similarly, when the algorithm reaches the fourth row, eighth column, it observes that the pixel value is “1”, but none of the connected pixels have been labeled. Hence, it gets a label of “2”, as shown in Figure 3b. Thus, to get the number of people in an environment, we run a loop over the labels obtained from the connected component algorithm. For each image, we select the label with the maximum value as the number of people in the room.
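The labeling procedure above can be illustrated with a short, dependency-free Python sketch. It is not the authors' code: it uses a breadth-first flood fill instead of the raster-scan bookkeeping walked through in the example, and it labels only foreground pixels (background pixels keep label 0), so the largest label equals the number of components. The helper names `binarize` and `count_components` are hypothetical; the default threshold of 182 is the value reported in Section 3.4.2.

```python
from collections import deque


def binarize(gray, threshold=182):
    """Map grayscale values (0-255) to 1 if above `threshold`, else 0."""
    return [[1 if v > threshold else 0 for v in row] for row in gray]


def count_components(binary):
    """Label 8-connected groups of 1-pixels; return (count, label grid)."""
    n_rows, n_cols = len(binary), len(binary[0])
    labels = [[0] * n_cols for _ in range(n_rows)]
    next_label = 0
    for r in range(n_rows):
        for c in range(n_cols):
            if binary[r][c] != 1 or labels[r][c] != 0:
                continue  # background pixel, or already part of a component
            next_label += 1
            labels[r][c] = next_label
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                # Visit the eight neighbours (the centre is skipped because
                # it is already labeled).
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < n_rows and 0 <= nx < n_cols
                                and binary[ny][nx] == 1
                                and labels[ny][nx] == 0):
                            labels[ny][nx] = next_label
                            queue.append((ny, nx))
    return next_label, labels
```

Because eight-way connectivity is used, two warm pixels that touch only diagonally are merged into one component, which matters at this resolution, where a person may cover only a handful of pixels.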

Algorithm 2 Connected component algorithm.

Require: Heat map images

Ensure: Maximum number of people in each image

1: …
2: …
3: …
4: for … in fig do
5:   Calc. …
6:   Calc. …
7:   Calc. …
8:   Calc. …
9:   …
10:   Calc. …
11: end for
12: …
13: for … in … do
14:   …
15:   …
16:   …
17:   for … do
18:     for … do
19:       if … != 0 and … not in … then
20:         Calc. …
21:         Calc. …
22:         Calc. …
23:       end if
24:     end for
25:   end for
26:   Calc. …
27: end for
28: Calc. …

3.4. Results

In our experiments, we evaluated the sensor placement on three axes and two algorithms, where the number of people (2–3) and their activities in the smart space (sitting vs. standing) varied. For each experiment, we report: (1) the number of data points per experiment; (2) how many times each algorithm can accurately detect the number of people; and (3) the resulting accuracy.

3.4.1. Window Size Algorithm

Table 1, Table 2 and Table 3 show the results of the window size algorithm, with two people sitting, two people standing, and three people sitting, respectively. In all experiments, the temperature threshold was 29.96 °C, with window size 2 × 3 (we show why we choose this size below). The first row in each table shows the number of data points (each data point includes 64 (4 × 16) temperature values), the other rows show how many people were detected by the window size algorithm, and the last row is the accuracy.

We collected 315 and 608 data points for the two-people cases (sitting vs. standing). The maximum accuracy when two people were sitting was observed with the z-axis sensor, 93.9%. The accuracy dropped to 17% for x-axis and 42.56% for y-axis placement (Table 1). The accuracy when two people were standing was also the best with the z-axis sensor (98.35% in Table 2). In both cases, the y-axis sensor gave better accuracy than the x-axis sensor. This is because, in our experiments, the two people were sitting and standing in front of the y-axis sensor facing each other, whereas they might have been blocking each other from the point of view of the x-axis sensor. Next, we increased the number of people to three, all sitting, with 458 data points. Table 3 shows that, in this case, the z-axis gave the best overall accuracy of 96.06%.

The problem with this approach is that, if a person moves slightly, the temperature values in other pixels increase. If the window size and threshold are not chosen accordingly, the results can be very inaccurate, as in Table 4, where the window size is 1 × 4. This experiment was done when two people were sitting on chairs. It can be observed that the average accuracy was much lower compared to the best case. The window size would therefore have to be recalculated to account for different numbers of people and activities, making the algorithm less suitable for dynamic environments.

3.4.2. Connected Component Algorithm

We used the same dataset (number of data points for different number of people/activities) as in the previous case, with threshold value 182 to convert grayscale images to binary images (i.e., if the pixel value was greater than 182, the binary pixel value was 1). We achieved 100% accuracy when we had two and three people (Table 5, Table 6 and Table 7), using the z-axis. In addition, as shown in Table 5, Table 6 and Table 7, the x-axis and y-axis sensors gave much higher accuracy than with the window size method, indicating better performance for the connected component method, not only with the z-axis but also with the other axes. Moreover, this method does not require selection of a window size, which makes it versatile and more suitable for dynamic environments. Thus, we next used the connected component algorithm with z-axis sensor placement to find the number of people in our experimental setup.

4. System Framework for Position Tracking and Static Activity Detection

This section introduces our system framework to track the position of multiple people in a smart space and detect their static activities. This section builds on the previous one, which finds the number of people. Using that information, we demonstrate how we process the raw heat map images and then use machine learning on them. This section includes our hardware setup (and how it differs from the previous section), data collection, data pre-processing, and finally the machine learning algorithms that use the pre-processed data. The output of our framework is the estimated static activity (an activity that a user performs while staying in the same location) taking place in the environment. In our work, we consider four static activities: standing, sitting on chair, sitting on ground, and lying on ground.

4.1. Hardware Setup

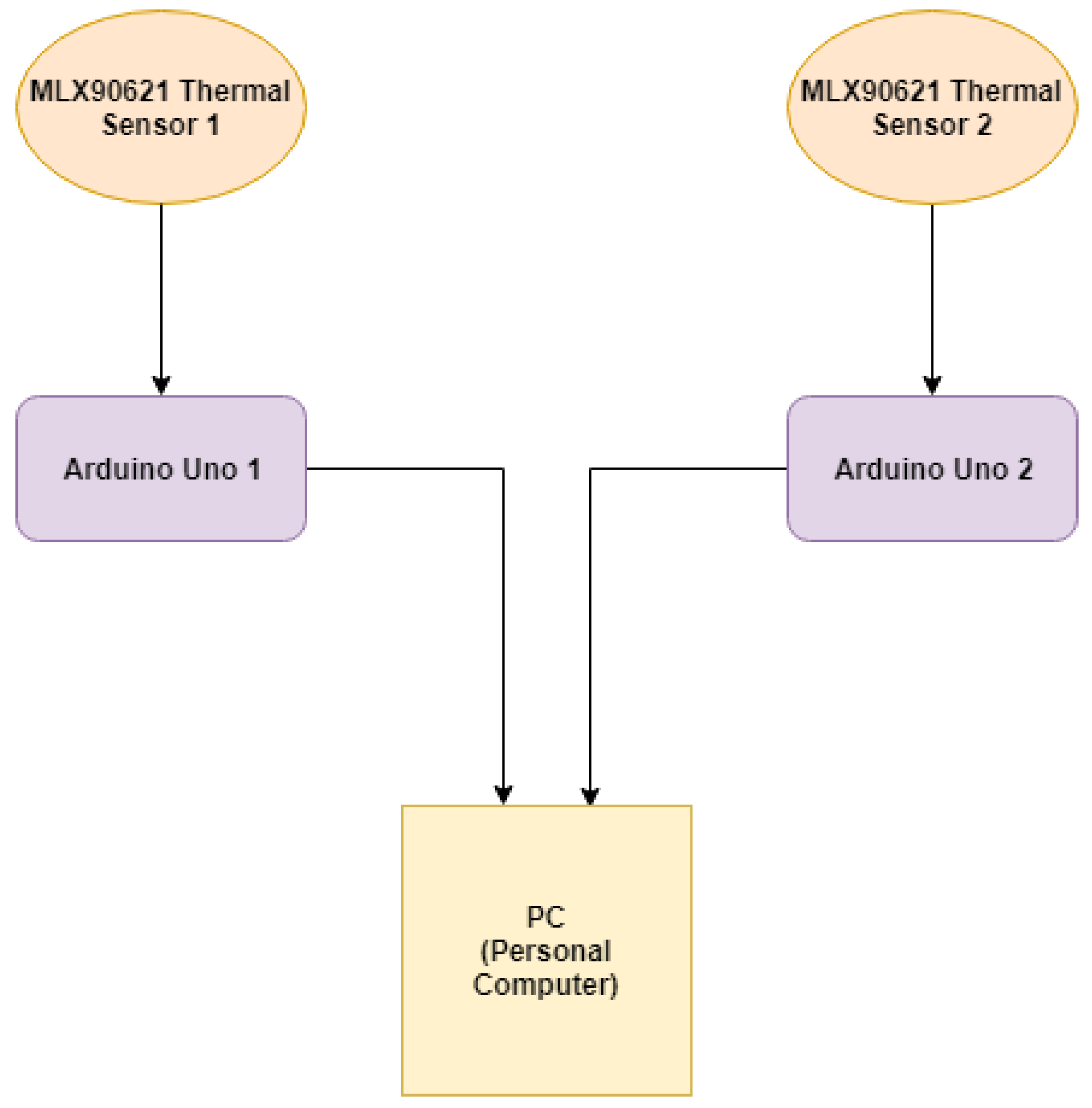

Our system setup uses the same low-resolution thermal sensor as before (4 × 16 MLX90621 thermal sensor [49]), with two changes: (1) the placement of the thermal sensors; and (2) instead of one sensor, we use two of them to increase the field of view (FoV). The thermal sensors use the I2C communication protocol to transfer the sensed data to two separate Arduino Unos. The block diagram in Figure 4 shows the sequential process in which the data are collected by the two sensors and transferred to the two Arduino Unos, respectively. The data from both sensors are appended and saved on a PC.

The pictorial representation of the hardware setup in the smart space is shown in Figure 5. In this figure, we can observe that two sensors are placed one beneath the other in our smart room setup, with dimensions 2.5 m × 2.75 m. The two sensors are placed on the x-axis of the 3D environment to increase the field of view (FoV) vertically. This is crucial to get more data points to accurately capture the activities.

4.2. Data Collection

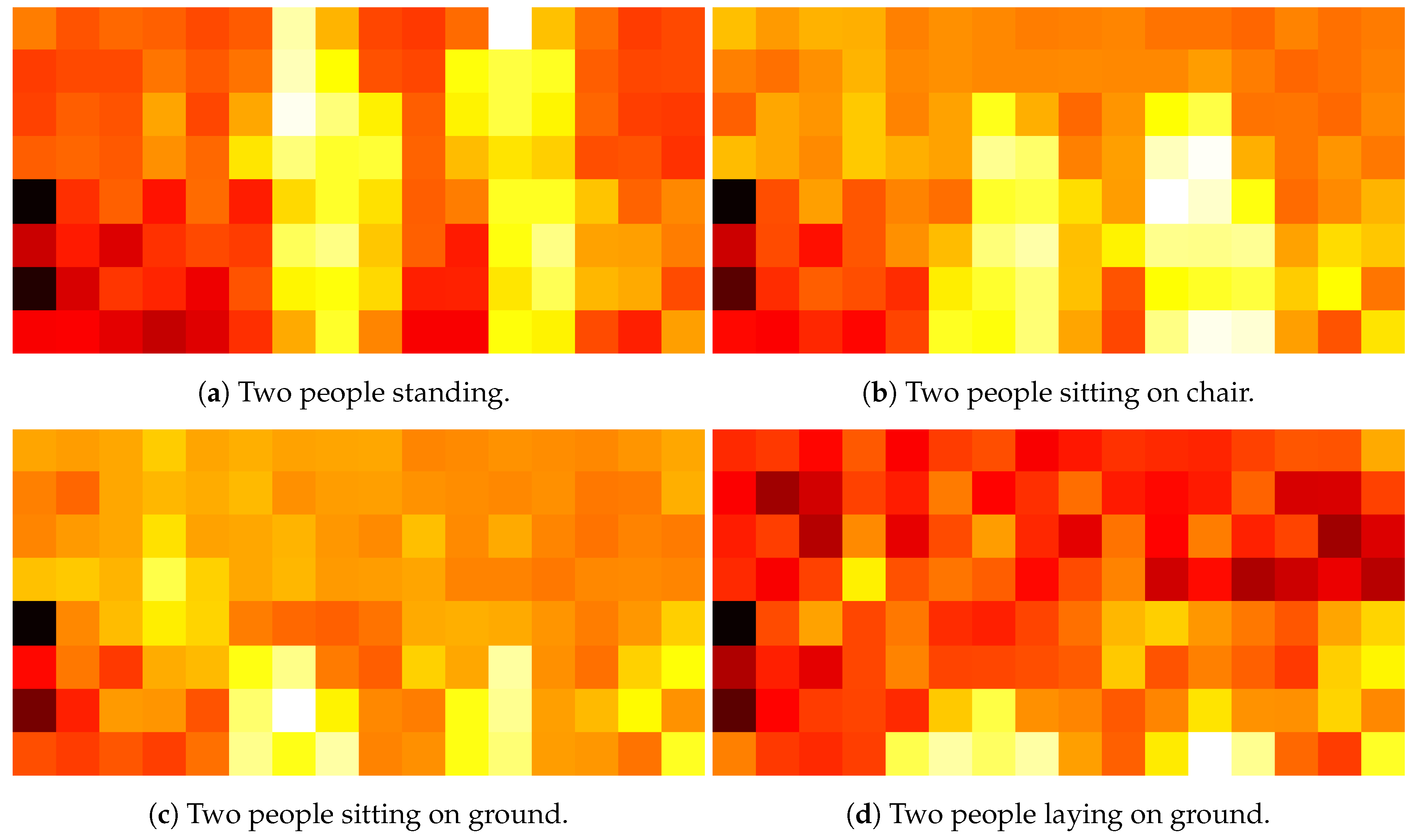

We collected data using two MLX90621 thermal sensors. The thermal data were then transmitted to two separate Arduinos. A Python script, based on multiprocessing, captured the data and stored them in the form of heat maps (as in the previous section) and a CSV file on the PC. The two sensors were placed on the x-axis one below the other so that their readings could be appended vertically to increase the field of view (FoV) from 4 × 16 to 8 × 16. This means that, for a single reading, the combination of the two sensors returns 128 temperature values (in °C). Example heat maps corresponding to different activities are shown in Figure 6.

The data were saved as heat maps and also logged in a CSV file. The CSV file had two columns, one for the timestamp and the other for the 128 (8 × 16) temperature values. The temperature was high in regions where a human presence was detected. The data captured by the two thermal sensors at a particular instance of time were represented either by a single heat map image or by a single row of the CSV file.

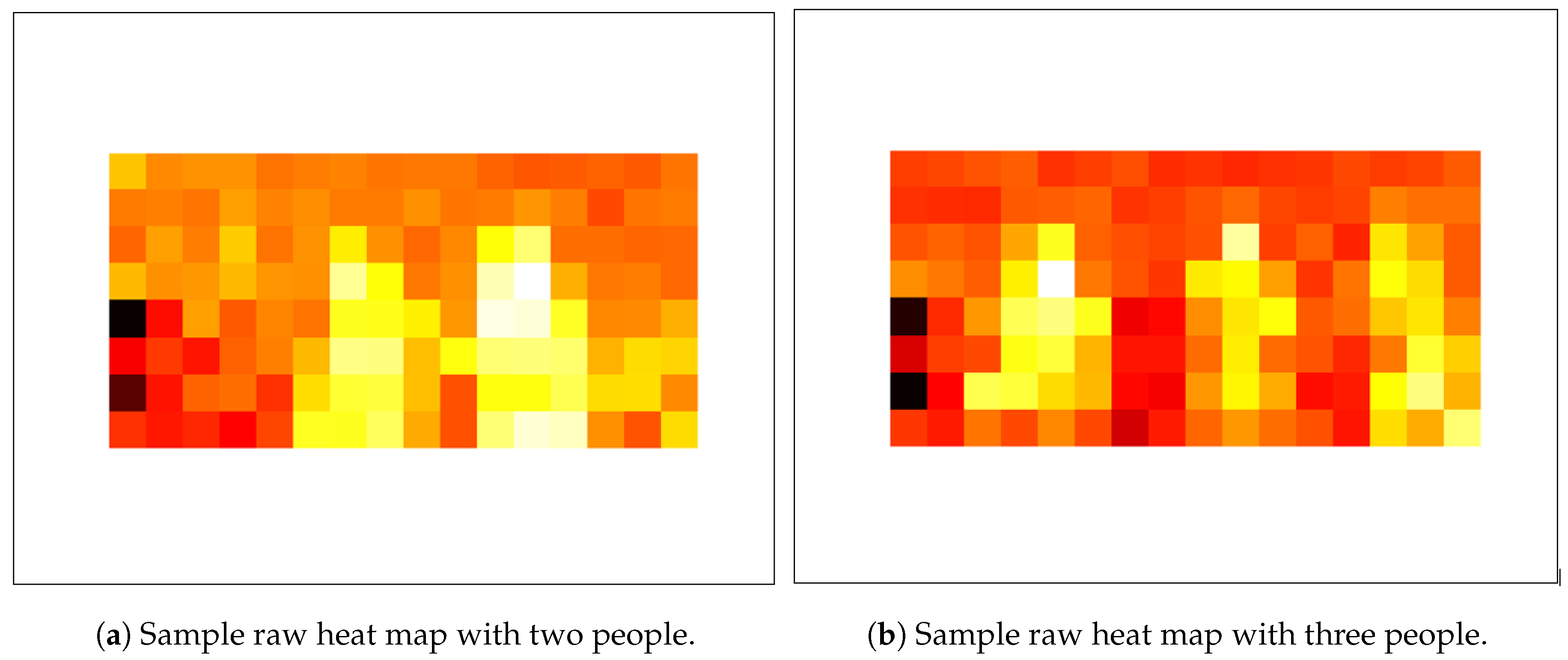

4.3. Data Pre-Processing

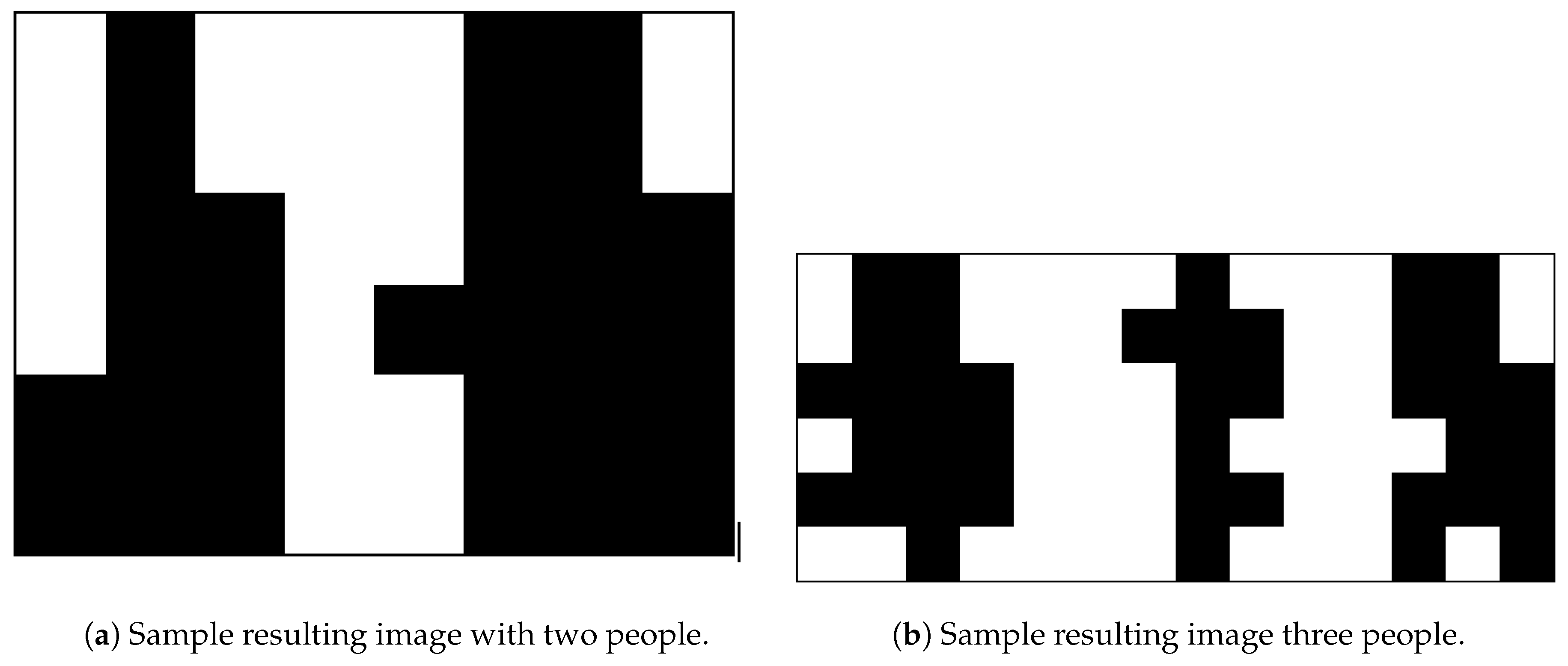

The data collected from the two MLX90621 sensors were saved as raw data on the PC. By raw data, we mean the primary data obtained directly from the source. Our eventual task was to detect the activities of different people in our smart space. However, we cannot predict the activities directly from the primary data, as they contain external or internal noise. We need to pre-process the data before giving them to any prediction algorithm so that we can remove unwanted noise from the dataset. If we do not perform this task on the primary dataset, there might be ambiguity in the results, which would decrease the overall model performance. The steps we took to pre-process the raw heat map images include: white noise removal, background subtraction, extracting multiple regions of interest (RoI), separating each RoI, resizing each RoI, and finally converting the image to pixel values. Next, we go over each step in detail, using the two sample images in Figure 7, one with two people (Figure 7a) and the other with three people (Figure 7b).

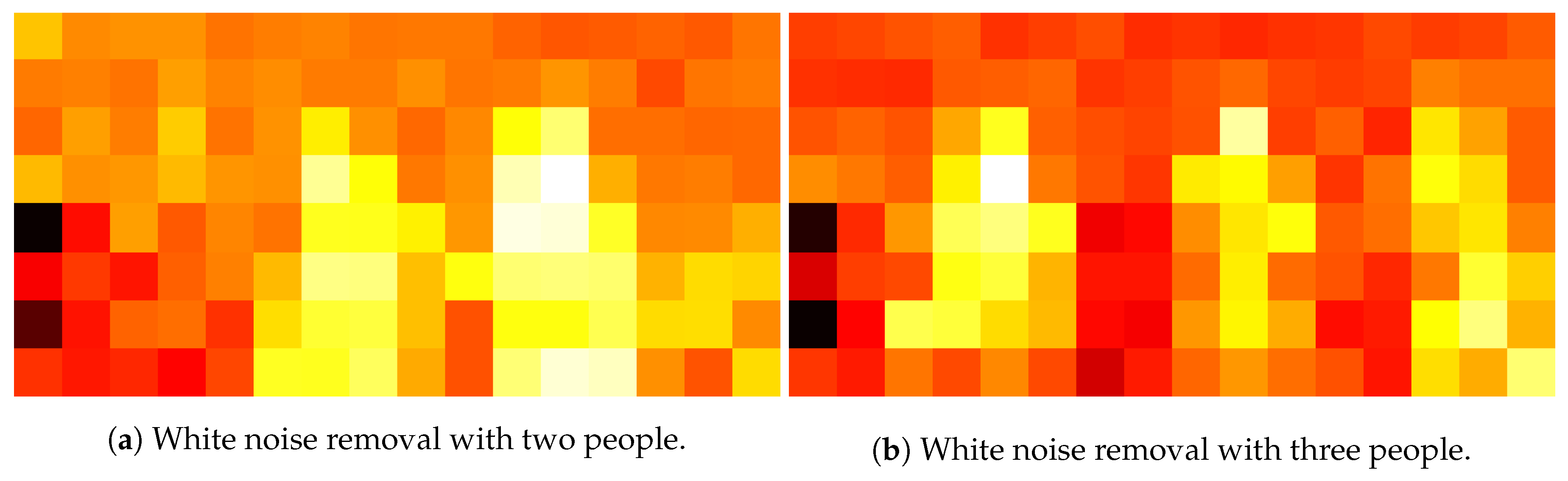

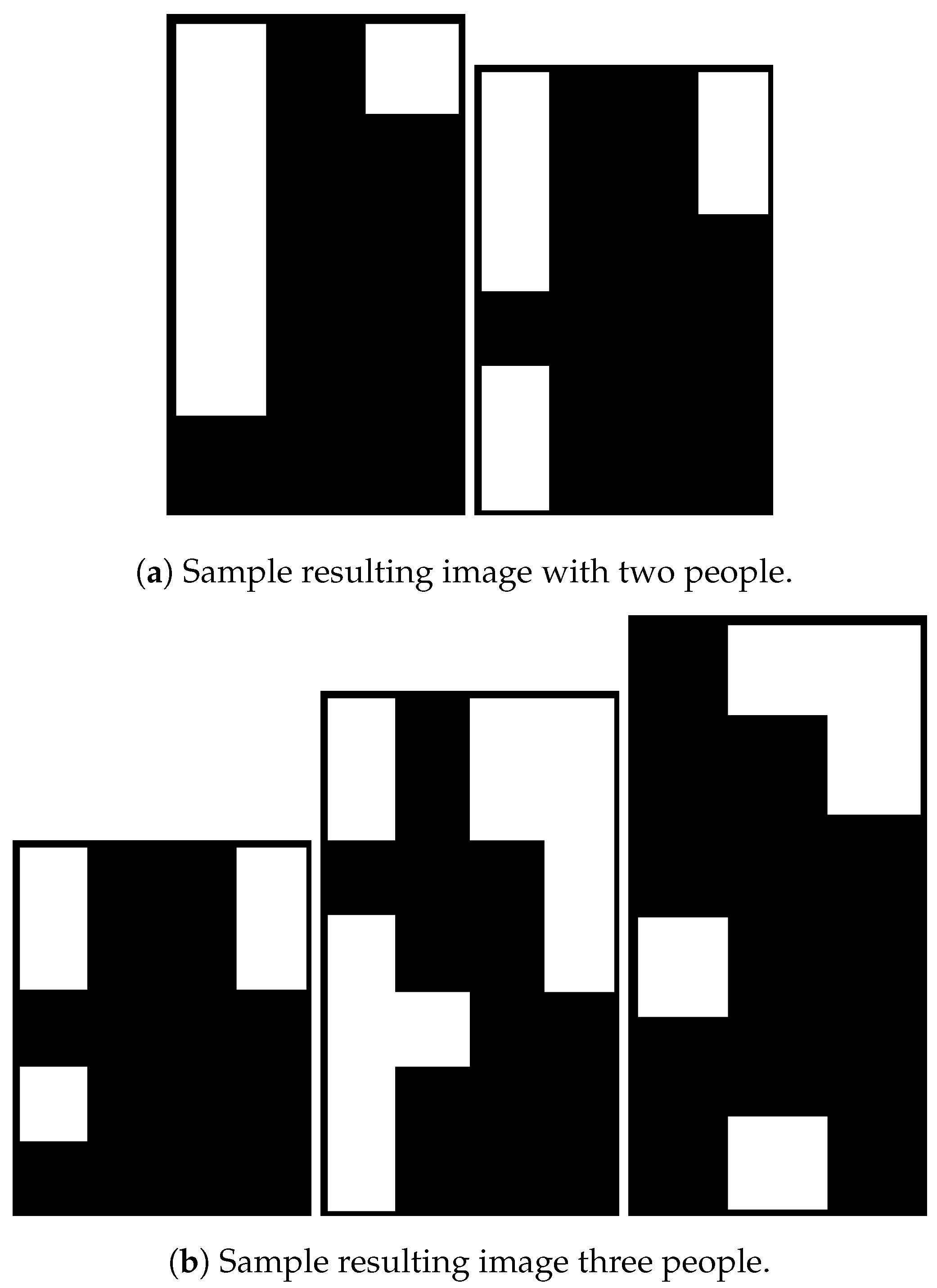

White Noise Removal: The raw heat map images from the sensors are cropped in this step. Cropping a heat map image removes the unwanted white noise outside the border of the heat map. After the white noise removal, the heat map images obtained are as in Figure 8.

Background Subtraction: We collected and saved data in separate images when there were no people in the environment, to find how the empty space would look. Then, we converted both the heat map images containing people and the heat map images without people to grayscale, and compared each pair of corresponding pixel values in the two grayscale images. If the pixel values were not equal, we set the final pixel value to the pixel value of the grayscale image obtained when people were present in the environment; otherwise, we set the same pixel value. The new heat map images obtained after background subtraction were saved as separate images.
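The pixel-wise comparison in this step might look like the following sketch, which operates on grayscale images stored as lists of rows. Two details are assumptions, since the text does not specify them: pixels that match the empty-room image are replaced by a constant `background_value` (0 here), and an optional `tolerance` (hypothetical, default 0, i.e., exact comparison as in the text) is provided because real sensor readings rarely repeat exactly.

```python
def subtract_background(occupied, empty, background_value=0, tolerance=0):
    """Keep pixels that differ from the empty-room image, blank the rest.

    `occupied` and `empty` are same-sized grayscale images (lists of rows).
    A pixel is kept when it differs from the empty-room pixel by more than
    `tolerance`; otherwise it is set to `background_value` (an assumption).
    """
    return [
        [p if abs(p - e) > tolerance else background_value
         for p, e in zip(occ_row, empty_row)]
        for occ_row, empty_row in zip(occupied, empty)
    ]
```

The output has the same shape as the inputs, so it can be passed directly to the thresholding and region-extraction steps that follow.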

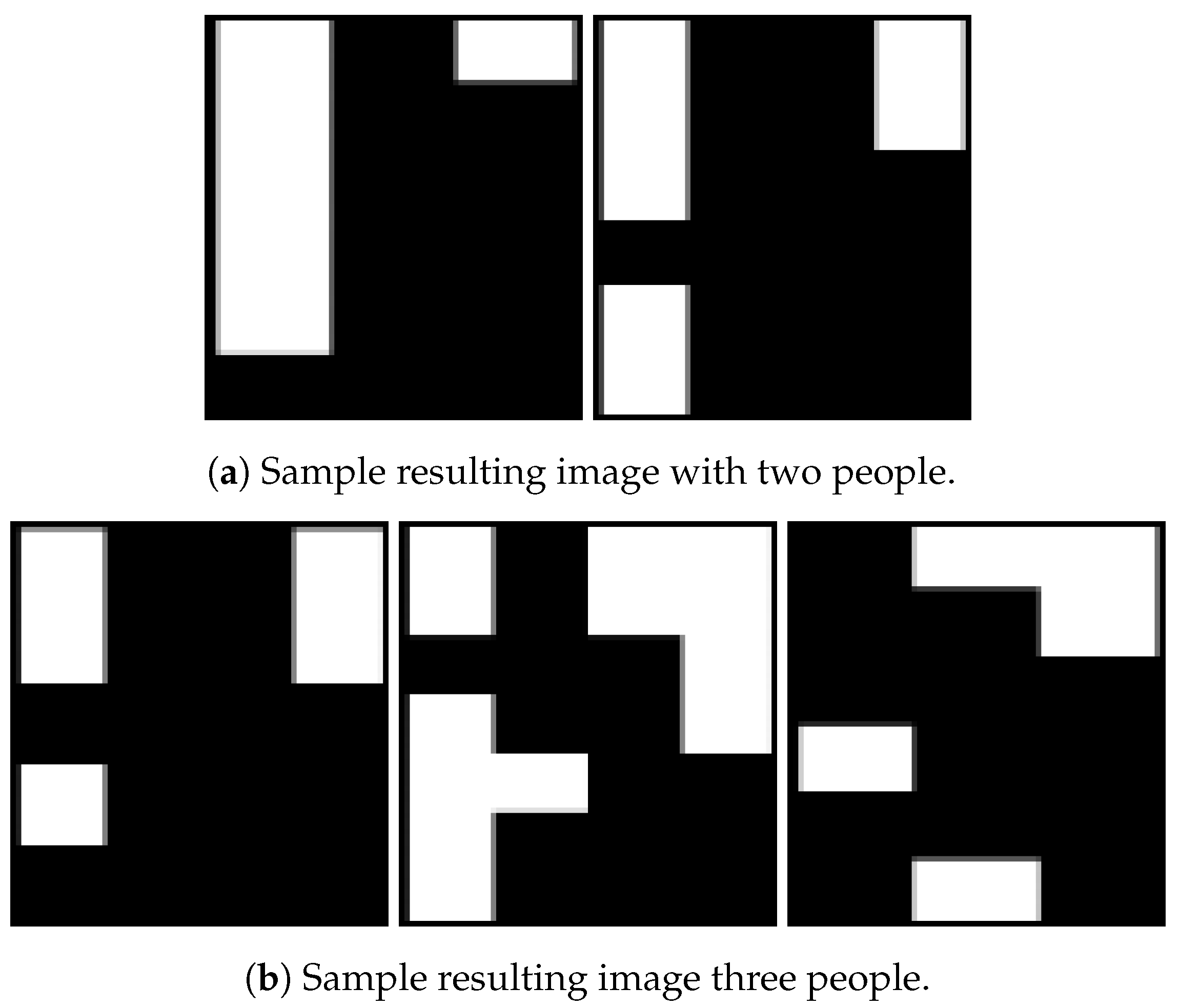

Extracting Multiple RoIs: The background subtraction process yielded images from which the unwanted background noise was removed. It also returned the list of final pixel values, which were converted from an array/list to images. Thus, each image had multiple regions of interest that represent the activities performed by different people in a single frame. The unwanted background was cropped and only the required blobs were retained in this step.

Separating Each RoI: To separate each RoI, each image was converted to a grayscale image and then a threshold was applied to convert it to a binary image (1 for the required region and 0 otherwise). The images after background subtraction, extracting the required RoIs of images, and converting to binary images are shown in Figure 9. As we obtained binary images, the next step was to find the borders of the required RoIs and then draw contours around them. We iterated over each contour to get the x- and y-coordinate values along with the length and breadth of the required image. Finally, we extracted the image by slicing the original image with the help of the coordinates (x and y), length and breadth values. Sample resulting separate images are shown in Figure 10a,b.
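To make the slicing step concrete, the sketch below derives one bounding box per blob and crops the original image with it. It swaps in a simple technique for illustration: instead of tracing contours as described above (the contour routine used by the authors is not named in the text), it flood-fills each 8-connected blob and records its extent, which yields the same kind of (x, y, width, height) rectangle. The function names `bounding_boxes` and `crop` are hypothetical.

```python
def bounding_boxes(binary):
    """Return one (x, y, width, height) box per 8-connected blob of 1-pixels."""
    n_rows, n_cols = len(binary), len(binary[0])
    seen = [[False] * n_cols for _ in range(n_rows)]
    boxes = []
    for r in range(n_rows):
        for c in range(n_cols):
            if binary[r][c] != 1 or seen[r][c]:
                continue
            seen[r][c] = True
            stack = [(r, c)]
            min_r = max_r = r
            min_c = max_c = c
            while stack:
                y, x = stack.pop()
                # Grow the box to include the pixel being visited.
                min_r, max_r = min(min_r, y), max(max_r, y)
                min_c, max_c = min(min_c, x), max(max_c, x)
                # Push every unvisited foreground neighbour (8-connectivity).
                for ny in range(max(0, y - 1), min(n_rows, y + 2)):
                    for nx in range(max(0, x - 1), min(n_cols, x + 2)):
                        if binary[ny][nx] == 1 and not seen[ny][nx]:
                            seen[ny][nx] = True
                            stack.append((ny, nx))
            boxes.append((min_c, min_r, max_c - min_c + 1, max_r - min_r + 1))
    return boxes


def crop(image, box):
    """Slice the sub-image described by box = (x, y, width, height)."""
    x, y, w, h = box
    return [row[x:x + w] for row in image[y:y + h]]
```

Each cropped sub-image then corresponds to one person, which is why the number of data points grows with the number of people in the frame.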

Resizing Each RoI: The next task was to resize all the images so that they had the same resolution. This is a necessary step because all the input features to any predictive algorithm should have the same size. The images in Figure 10a,b show that the extracted images can have variable sizes. Hence, in this step, we stored the length and width of each image in separate lists and computed the average of each list. If the length (width) of an image was less than the average length (width) of all the images, it was scaled up; if it was more, it was scaled down. The resulting sample images are shown in Figure 11a,b.
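A minimal sketch of this resizing rule is given below. The interpolation method is an assumption, since the text does not state which one was used: nearest-neighbour sampling is chosen here only because it is the simplest to write without external libraries. The helper names `average_size` and `resize_nearest` are hypothetical.

```python
def average_size(images):
    """Return the (height, width) averaged over all images, rounded to ints."""
    heights = [len(img) for img in images]
    widths = [len(img[0]) for img in images]
    avg_h = max(1, round(sum(heights) / len(heights)))
    avg_w = max(1, round(sum(widths) / len(widths)))
    return avg_h, avg_w


def resize_nearest(image, new_h, new_w):
    """Scale `image` up or down to new_h x new_w by nearest-neighbour sampling."""
    old_h, old_w = len(image), len(image[0])
    return [
        # Map each target pixel back to the source pixel it falls on.
        [image[r * old_h // new_h][c * old_w // new_w] for c in range(new_w)]
        for r in range(new_h)
    ]
```

Calling `resize_nearest(img, *average_size(images))` for every extracted RoI gives all samples the same number of pixels, so each one can be flattened into a feature vector of identical length.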

Converting the Image to Pixel Values: In this step, all resized images were converted to pixels and stored in a list. We iterated over the pixel list and stored each pixel value of an image to a CSV file.

4.4. Machine Learning Algorithms

The final pixel arrays, from Step 6, were the main input of the machine learning algorithms to detect human activities. In our framework, we tested several machine learning algorithms to find the best-performing method for our problem and hardware setup. Since our input data are labeled, we used supervised algorithms. These algorithms included: (1) logistic regression; (2) support vector machine; (3) k-nearest neighbor; (4) decision tree; and (5) random forest. We chose these methods as they are among the most well-known methods that are applicable to applications similar to ours. The input labels to these algorithms were the activities we detected. For all experiments presented below, we used the labels shown in Table 8. In the next section, we list the experiment/algorithm parameters for the methods we used, give detailed information about the data we used, and present the activity detection performance along with a timing analysis of all methods.

5. Results and Evaluation

This section presents the results of our multiple people position tracking framework along with our evaluation of the results. We organize the section into different parts, where we discuss how we collected data, how many data points we obtained for different static activities, the performance metrics we used for evaluation, the results for two-people and three-people static activity detection separately, the timing analysis for activity detection, and finally a discussion that summarizes the experiments and the results.

5.1. Data Collection and Performance Metrics

We collected data in our smart space, which has dimensions of 2.5 m × 2.75 m. We processed the collected raw data as detailed in Section 4.3, and labeled the data as shown in Table 8. Then, we fed the final processed data and labels to the machine learning algorithms listed in Section 4.4. Our data collection included four static activities (as shown in Table 8), with two or three people. Due to the size of our smart environment, we did not pursue experiments with more people. Table 9 shows the number of data points per activity for different numbers of people in our experiments. We refer to this table in the remaining part of the results section.

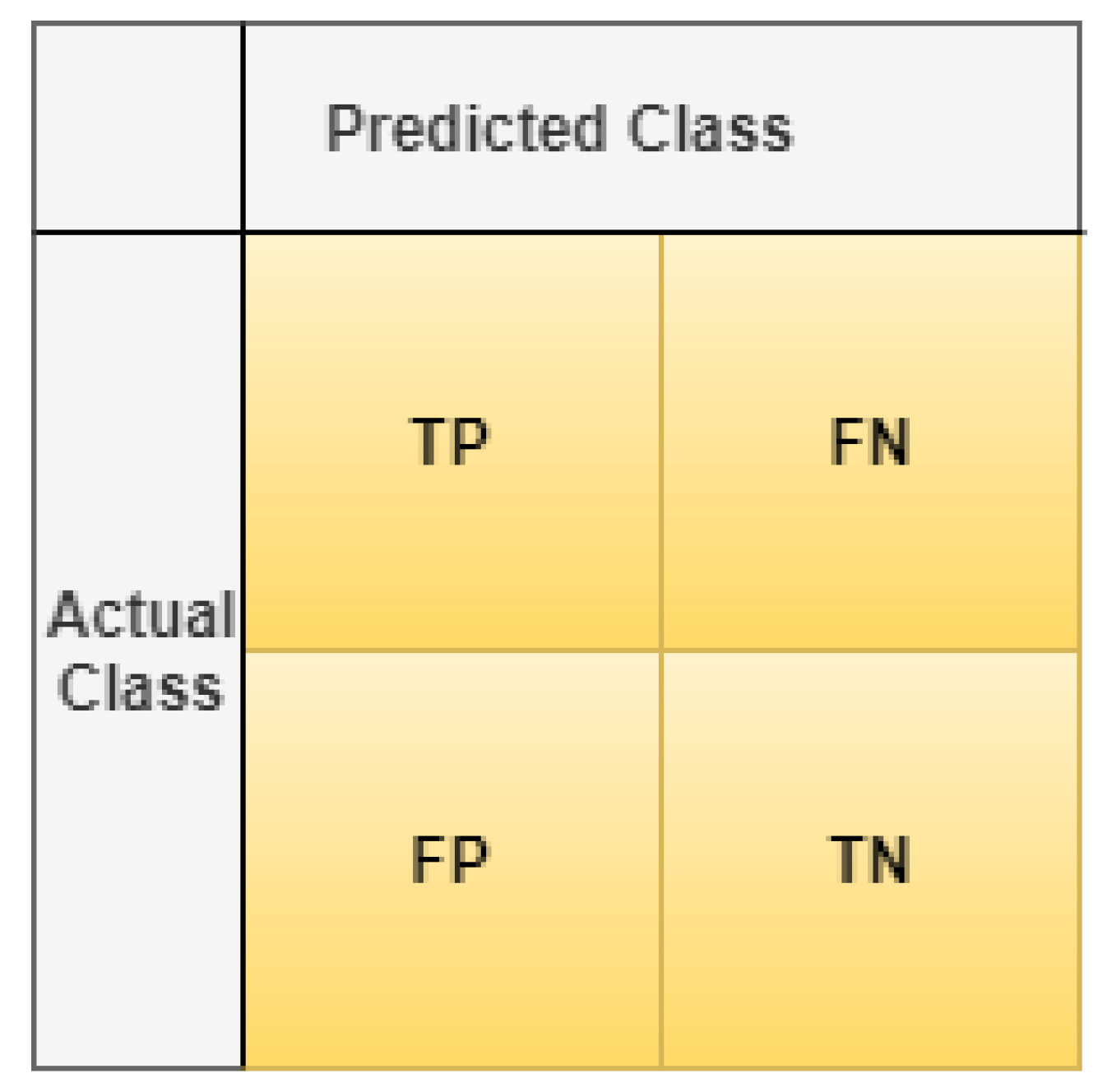

We applied five different machine learning algorithms (logistic regression, support vector machine, k-nearest neighbor, decision tree, and random forest) on the collected data to find the performed activities in the smart space. Since the problem we considered is essentially a classification problem, we leveraged the confusion matrix representation to demonstrate the results. An example confusion matrix representation is shown in Figure 12. A confusion matrix in a classification problem is used to assess the performance of the classification model in terms of how correctly the classes are determined. In more detail, a confusion matrix tells us information about:

TP: True Positive (when predicted value is correctly predicted to be positive)

FP: False Positive (when predicted value is falsely predicted to be positive)

FN: False Negative (when predicted value is falsely predicted to be negative)

TN: True Negative (when predicted value is correctly predicted to be negative).

Based on the confusion matrix structure, we report the performance using the following metrics:

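$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

$$\text{Precision} = \frac{TP}{TP + FP}$$

$$\text{Recall/Sensitivity} = \frac{TP}{TP + FN}$$

$$\text{Specificity} = \frac{TN}{TN + FP}$$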

In our results, we use class-wise metrics as they provide more insight into the model performance. Below, we also include the overall model accuracy for further comparison among different machine learning methods. Next, we show detailed results for the two-people and three-people experiments separately.
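The class-wise metrics can be read off a multi-class confusion matrix as sketched below. The orientation is an assumption (rows are actual classes, columns are predicted classes), and the function names are hypothetical. The F1-score, which is used in the following sections, is computed as the harmonic mean of precision and recall.

```python
def class_metrics(cm, k):
    """Precision, recall, specificity and F1-score for class index `k`.

    `cm[i][j]` counts samples of actual class i predicted as class j
    (row/column orientation is an assumption of this sketch).
    """
    total = sum(sum(row) for row in cm)
    tp = cm[k][k]
    fn = sum(cm[k]) - tp                   # actual k, predicted otherwise
    fp = sum(row[k] for row in cm) - tp    # predicted k, actually otherwise
    tn = total - tp - fn - fp
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"precision": precision, "recall": recall,
            "specificity": specificity, "f1": f1}


def overall_accuracy(cm):
    """Fraction of samples on the diagonal of the confusion matrix."""
    total = sum(sum(row) for row in cm)
    return sum(cm[i][i] for i in range(len(cm))) / total
```

For a four-activity problem, `class_metrics` would be called once per label in Table 8, while `overall_accuracy` gives a single number per model.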

5.2. Results of Two People Experiments

Data: The overall data collected for two people performing the different static activities of sitting on chair, standing, sitting on ground, and lying on ground are shown in Table 9. The data after pre-processing had separate Regions of Interest (RoIs), doubling the number of data points compared to the original dataset.

Performance: We took the data for two people, as shown in Table 9, and shuffled them before splitting them into training and test datasets. We did the split at a ratio of 70:30, where 70% of the data were used for training and the remaining 30% for testing. We first show the confusion matrices of each method in Table 10 to demonstrate how well each method achieves correct classification. In these tables, the labels are the same as in Table 8 and the confusion matrix structure (actual classes vs. predicted classes) is the same as in Figure 12. The diagonal entries in these tables have high values, which suggests that we have more TPs and TNs, increasing the model accuracy. We can also see that all algorithms other than random forest have more false positives and false negatives than random forest. The false positives and false negatives are represented by the non-diagonal cells. Table 10 suggests that random forest performs better than the other algorithms, as it results in fewer FPs and FNs. To support this numerically, we next present the precision, recall, and F1-score of each method in Table 11, Table 12 and Table 13, respectively.
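The shuffle-and-split step described above can be sketched with the standard library alone. The function name `shuffle_split` and the fixed `seed` are hypothetical choices made here for reproducibility; the text does not state how the shuffling was seeded or which library was used.

```python
import random


def shuffle_split(samples, labels, train_fraction=0.7, seed=0):
    """Shuffle samples and labels together, then split them train/test."""
    indices = list(range(len(samples)))
    random.Random(seed).shuffle(indices)           # reproducible shuffle
    cut = int(round(train_fraction * len(indices)))
    train_idx, test_idx = indices[:cut], indices[cut:]
    train_x = [samples[i] for i in train_idx]
    train_y = [labels[i] for i in train_idx]
    test_x = [samples[i] for i in test_idx]
    test_y = [labels[i] for i in test_idx]
    return train_x, train_y, test_x, test_y
```

Shuffling indices rather than the two lists separately keeps every pixel vector paired with its activity label.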

Analysis: From the above results, we can observe that the random forest algorithm provided the best results, as its F1-score was greater than 95% for each activity. The F1-score is a better parameter to observe than model accuracy because it is the harmonic mean of precision and recall, whereas model accuracy only counts the correct outcomes (predicted and actual labels are the same). We can also see that random forest had a high precision rate when compared to the other algorithms. Recall for SVM was higher for sitting on ground and sitting on chair, which resulted in a higher F1-score for SVM for these activities. However, when we observe the recall rate and F1-score of SVM for the standing activity, we see that they were about 6% and 4% lower than those of random forest, respectively.

Model accuracy is only a reliable indicator when we have an almost equal number of false positives and false negatives. Since the F1-score considers both the precision and the recall rate, it is a better metric to analyze the class-wise performance on the dataset.

From the F1-score results in Table 13, we observe that random forest had 98.32%, 96.80%, 99.68% and 95.69% F1-score for sitting on chair, standing, sitting on ground, and lying on ground, respectively. Although SVM had an F1-score of 98.41% for sitting on chair and 99.86% for sitting on ground, it was not the best-performing algorithm, because its F1-score for the other two activities (standing and lying on ground) was clearly lower than that of random forest. In summary, SVM had a slightly (less than 1%) better F1-score for sitting on chair and sitting on ground, whereas random forest gave a better result by at least 4% for standing and lying on ground. This is the reason we consider random forest as the highest-performance algorithm to detect the activities of two people in a smart space.

5.3. Results of Three People Experiments

Data: The structure of this section is the same as that of the previous one, except for the dataset used (i.e., the three-people dataset in

Table 9 was used). After pre-processing, the data had separate Regions of Interest (RoIs), tripling the number of data points relative to the original dataset, as we had three people in the experiments.

Performance: As in the previous section, we first shuffled the dataset and then split the shuffled data with a 70:30 train vs. test ratio. Next, we present the confusion matrix of each method, which shows how often each method classifies correctly, in

Table 14. The confusion matrix setup is the same as in the previous section (labels and actual vs. predicted class placement). We can again see that the diagonal entries are larger than the non-diagonal entries, i.e., there are more TPs and TNs than FPs and FNs. As in the previous case, the random forest method had smaller non-diagonal entries, suggesting better performance than the other methods. To quantify this, we next show the class-wise precision, recall, and F1-score values of each method in

Table 15,

Table 16 and

Table 17, respectively.
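The shuffle-then-split step described above can be sketched as follows. This is an illustration under stated assumptions rather than the authors' code: the function name, the fixed seed, and the placeholder data are all hypothetical.

```python
import random


def shuffle_and_split(samples, labels, train_fraction=0.7, seed=0):
    """Shuffle samples and labels together, then split them into train and test parts."""
    if len(samples) != len(labels):
        raise ValueError("samples and labels must have the same length")
    order = list(range(len(samples)))
    random.Random(seed).shuffle(order)  # seeded so the split is reproducible
    cut = int(round(train_fraction * len(order)))
    train_idx, test_idx = order[:cut], order[cut:]
    x_train = [samples[i] for i in train_idx]
    y_train = [labels[i] for i in train_idx]
    x_test = [samples[i] for i in test_idx]
    y_test = [labels[i] for i in test_idx]
    return x_train, x_test, y_train, y_test


# Hypothetical data: 10 feature vectors with placeholder class labels.
samples = [[i, i + 1] for i in range(10)]
labels = [i % 4 for i in range(10)]
x_train, x_test, y_train, y_test = shuffle_and_split(samples, labels)
```

Shuffling indices rather than the data itself keeps each sample paired with its label, and the seed makes the 70:30 split repeatable across runs.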

Analysis: Examining the results, we conclude that the random forest method performed the best because it gave the highest precision and recall rates for each activity when compared with the other models. The same holds for the F1-score. In summary, unlike the previous case, random forest achieved better values than the other methods on every performance metric for each activity, making it the clear best performer in the three-people experiments.

5.4. Overall Model Accuracy

Up to this point, we have discussed the results in terms of class-wise performance metrics for the two- and three-people experiments in our smart space setup. Next, we look into the overall model accuracy for the same experiments.

Table 18 shows the overall model accuracy (across all classes instead of class-wise) for each machine learning algorithm we tested. We can observe from the table that random forest performed the best for detecting static activities of both two and three people.

Table 18 also shows that the decision tree model gave good results for two people, but it still falls short of the random forest method, which performed well for both two- and three-people static activity detection. Moreover, the per-class results show that the decision tree method did not perform well for every activity.

Overall model accuracy is not a sufficient parameter for evaluating a model because it does not report results for each class. For this reason, per-class results are better parameters for verifying the performance of a supervised machine learning model: the higher the per-class F1-score, the better the model.
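A small worked example shows how overall accuracy can hide weak per-class behavior. The confusion matrix below is hypothetical and deliberately imbalanced. It is not taken from the paper's tables, and the function names are illustrative.

```python
def overall_accuracy(matrix):
    """Fraction of all samples that lie on the diagonal of the confusion matrix."""
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[k][k] for k in range(len(matrix)))
    return correct / total


def class_f1(matrix, k):
    """F1-score of class k, written as 2*TP / (2*TP + FP + FN)."""
    tp = matrix[k][k]
    fp = sum(row[k] for row in matrix) - tp
    fn = sum(matrix[k]) - tp
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical matrix (rows: actual classes, columns: predicted classes).
matrix = [[95, 0],
          [4, 1]]
acc = overall_accuracy(matrix)  # 96 of 100 samples are correct
f1_minor = class_f1(matrix, 1)  # only 1 of the 5 minority samples is found
```

The overall accuracy is 0.96, yet the F1-score of the second class is only 1/3, which is the kind of discrepancy that per-class metrics expose.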

5.5. Timing Analysis

This section discusses the time taken by the different machine learning algorithms to train and test the models. For a model to be efficient in practice, a small test time matters most: training a model is a one-time procedure, whereas a prediction has to be made for each new data point in real-time.

Table 19 shows the train and test times for each method. The decision tree and random forest models had low train and test times, as they consider the best feature rather than all features while predicting the labels. k-NN had the largest test time, followed by SVM. Thus, these algorithms should not be considered for predicting the activities of multiple people in real-time applications where timing is critical. Moreover, logistic regression had a very large training time of 27.86 s. Overall, the decision tree and random forest models had very low train and test times, making them more suitable for real-time applications.
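Train and test times of this kind can be measured with a simple wall-clock harness. The sketch below is a hypothetical illustration, not the authors' measurement code. The helper name, the toy 1-nearest-neighbour stand-in model, and the data are all assumptions.

```python
import time


def time_train_and_test(fit, predict, x_train, y_train, x_test):
    """Return (model, predictions, train_seconds, test_seconds) for the given callables."""
    start = time.perf_counter()
    model = fit(x_train, y_train)
    train_seconds = time.perf_counter() - start

    start = time.perf_counter()
    predictions = [predict(model, x) for x in x_test]
    test_seconds = time.perf_counter() - start
    return model, predictions, train_seconds, test_seconds


# Toy 1-nearest-neighbour classifier, used only as a stand-in model.
def fit_1nn(x_train, y_train):
    return list(zip(x_train, y_train))  # "training" just stores the data


def predict_1nn(model, x):
    def sq_dist(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    # Every prediction scans all stored training samples.
    return min(model, key=lambda pair: sq_dist(pair[0], x))[1]


x_train = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
y_train = ["standing", "standing", "lying on ground"]
x_test = [[0.2, 0.1], [4.8, 5.1]]
model, predictions, train_s, test_s = time_train_and_test(
    fit_1nn, predict_1nn, x_train, y_train, x_test)
```

The stand-in model also hints at why a brute-force nearest-neighbour method tends to be slow at test time: training only stores the data, while each prediction has to compare the new point against every stored sample.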

6. Conclusions

With the advancements in technology and the widespread adoption of the Internet of Things, smart applications have become prevalent. These smart applications leverage heterogeneous sensor deployment, real-time data collection, processing, and user feedback, using machine learning algorithms. Applications include smart health, smart home automation, safety/security/surveillance, etc. In almost all of these applications, understanding human behavior in a given smart space is of utmost importance. Existing studies usually achieve this task with multimedia-based or wearable-based solutions. Despite their effectiveness, these methods pose a significant risk to user privacy by exposing identities, cause user discomfort by placing devices on the users' bodies, or lead to a high computational burden due to the high volume of data.

In this paper, we present a system setup that involves a hardware deployment along with the necessary software framework to accurately and non-intrusively detect the number of people in a smart environment and track their positions in terms of their static activities, where an activity is performed without changing location. Because we use only low-resolution ambient sensors, with no multimedia resources or wearable sensors, we preserve user privacy without causing user discomfort. We first devise methods to find the number of people in an indoor space. We show that algorithm selection should be done very carefully; with the right choice, we can achieve up to 100% accuracy when detecting multiple user presence. Our experiments also show that sensor placement has an important effect on algorithm performance: placing the sensor on the ceiling of the room leads to the best performance.

Having accurately found the number of people, we next extend our framework to find the static activities of the people in the environment using ambient data, namely thermal images of the smart environment. We first collect the data, then process it in several steps (including normalization, resizing, converting to grayscale, etc.), and finally give the processed data to classification algorithms to efficiently find the activity class of an image containing multiple people. We show that we can obtain up to 97.5% accuracy when detecting static activities, with up to 100% class-wise precision and recall rates. Our framework can be very beneficial to several applications, such as health-care, surveillance, and home automation, without causing any discomfort or privacy issues for the users.