Cooperative Optimization of UAVs Formation Visual Tracking

Abstract

1. Introduction

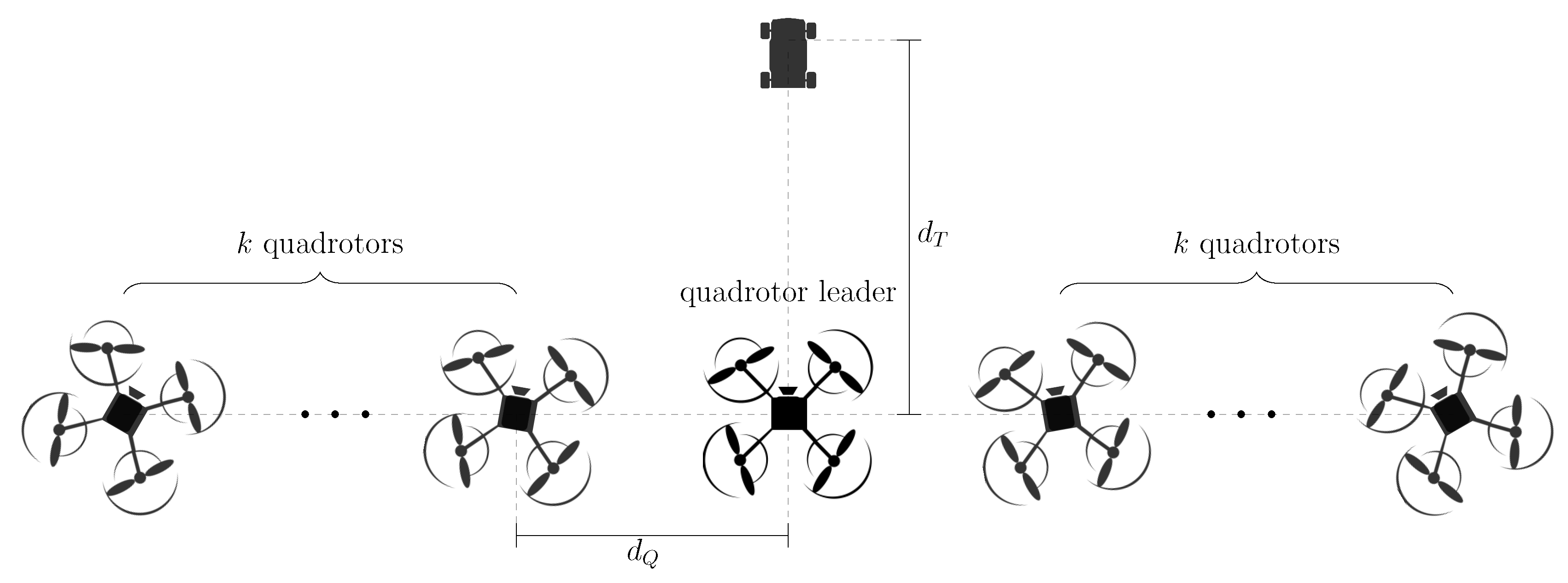

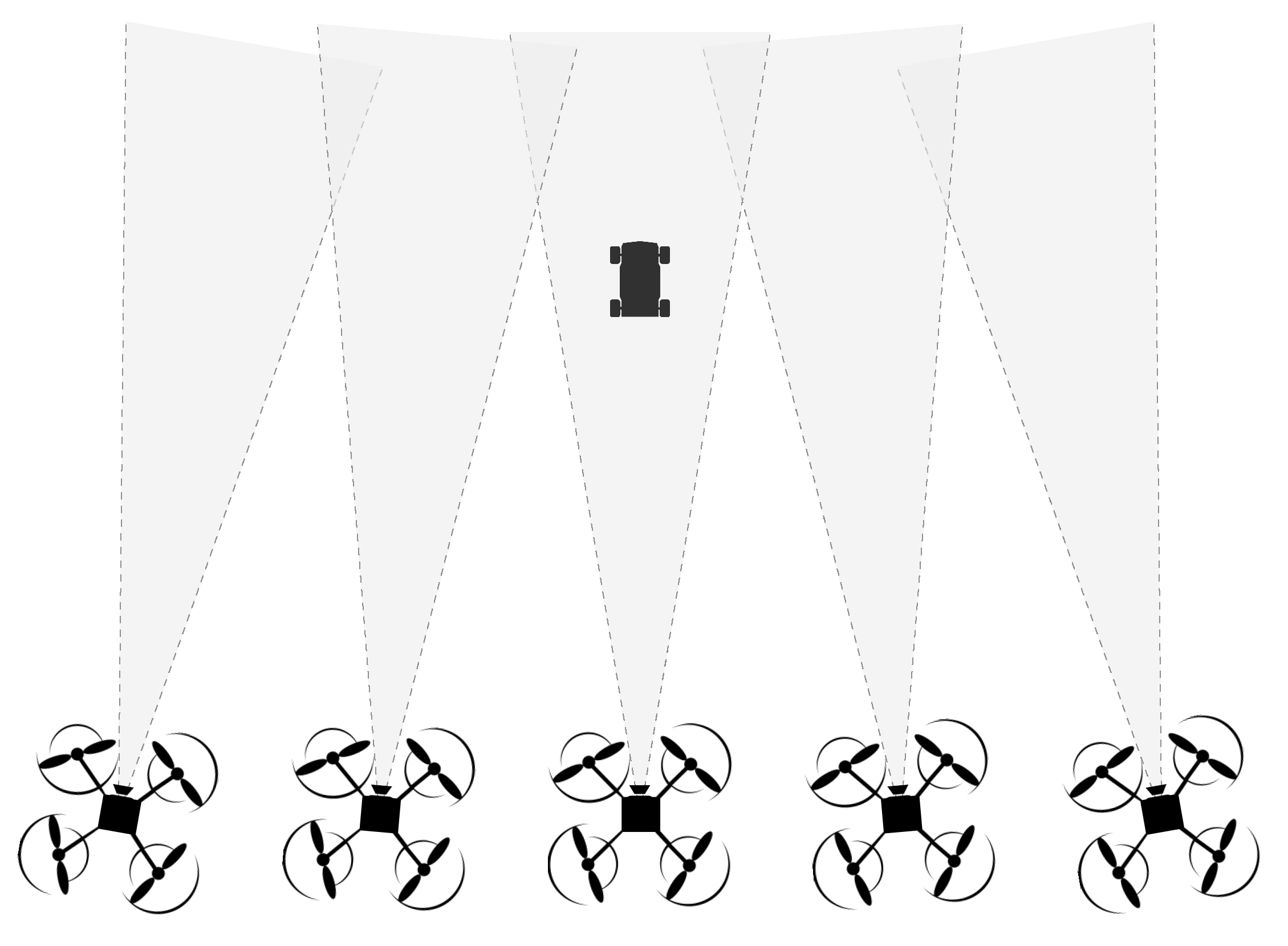

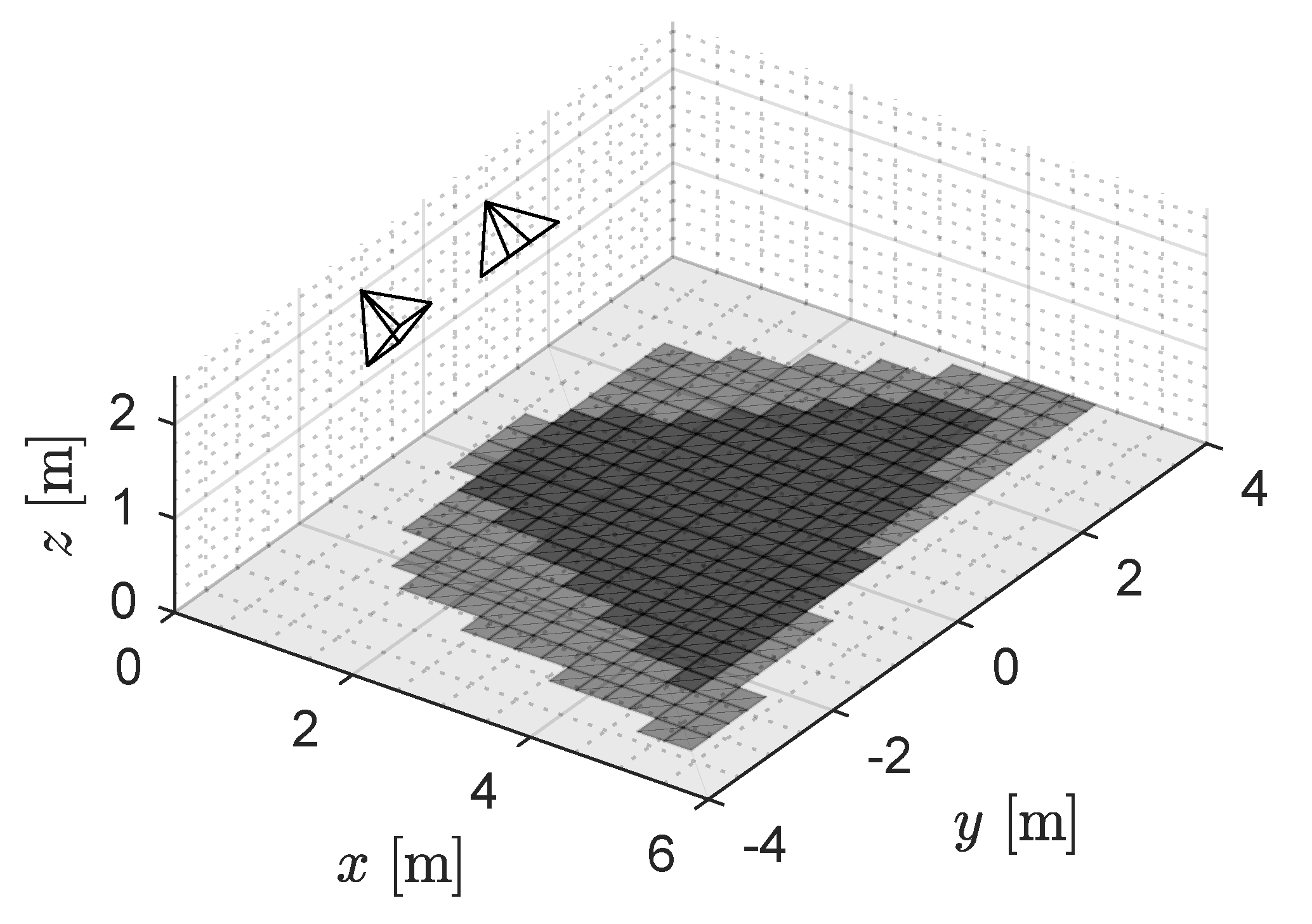

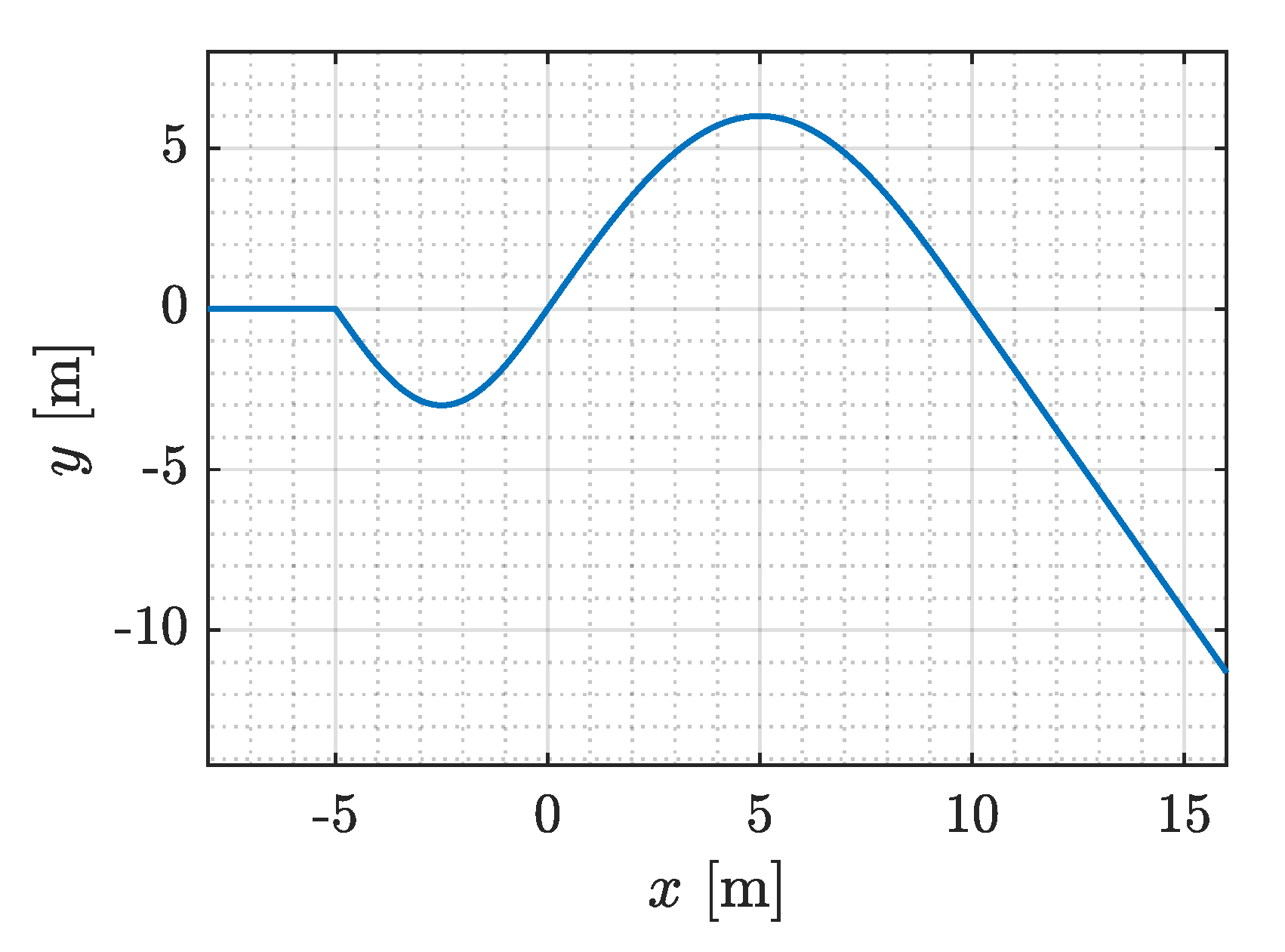

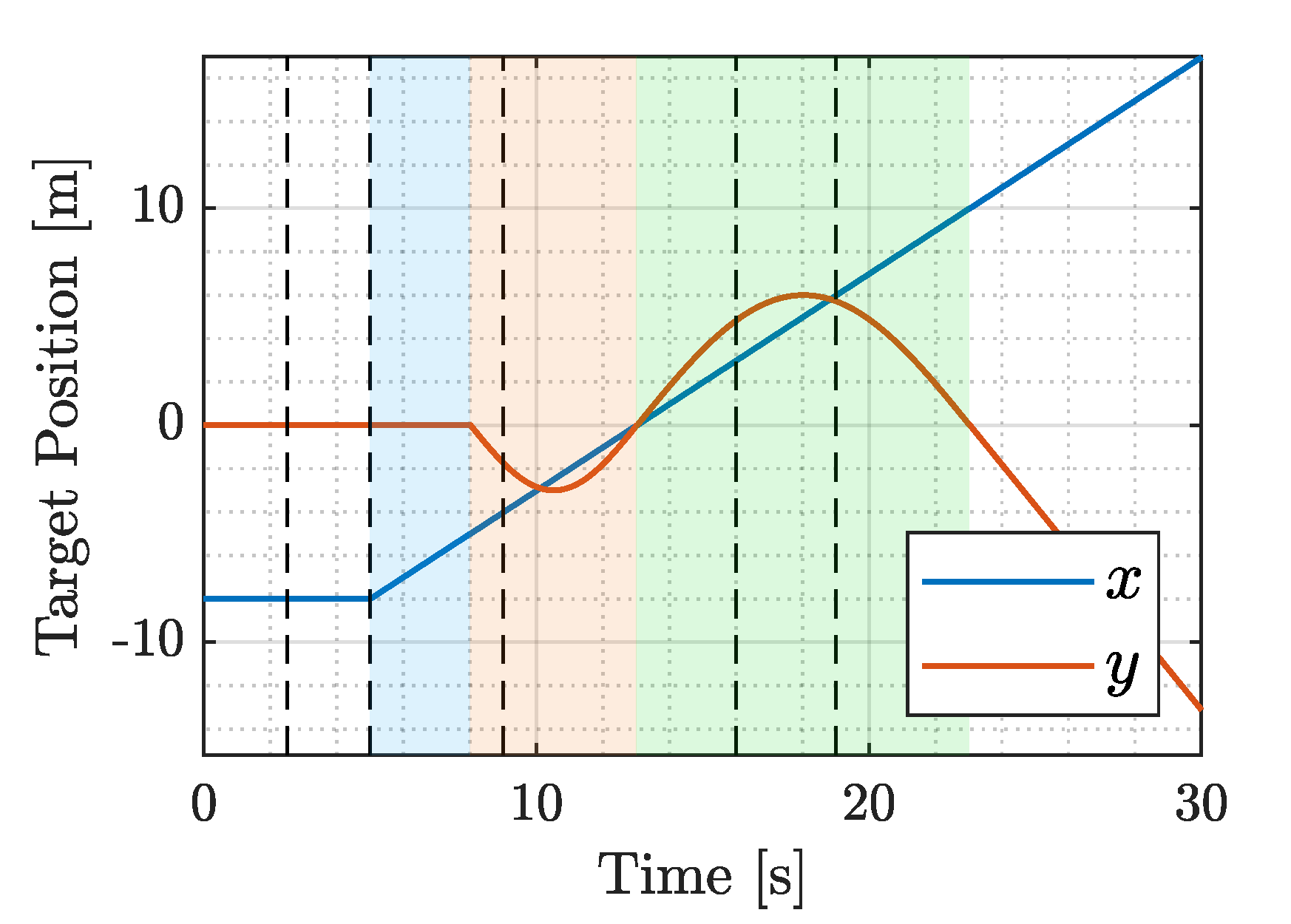

2. Scenario Description

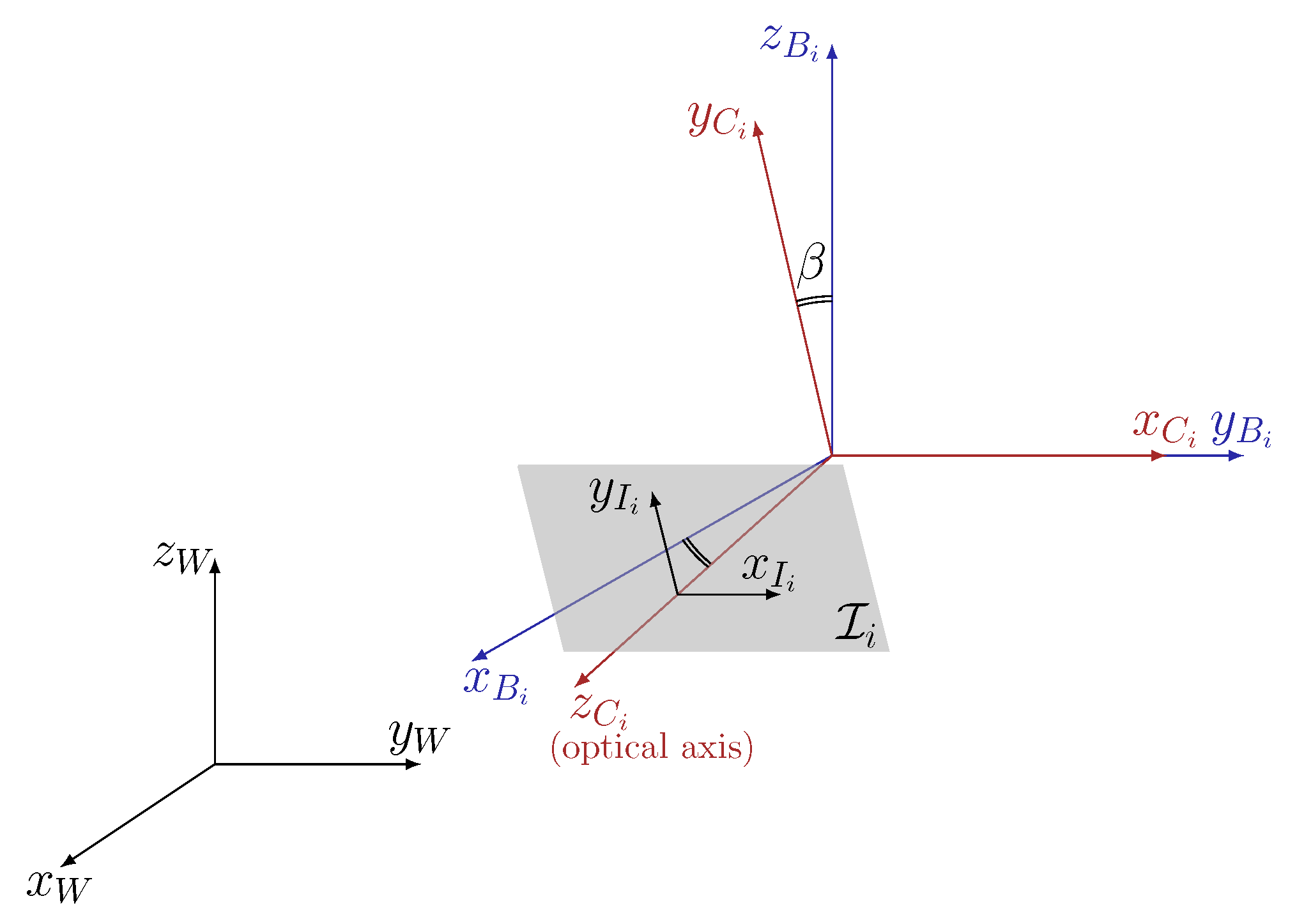

2.1. Quadrotor Model

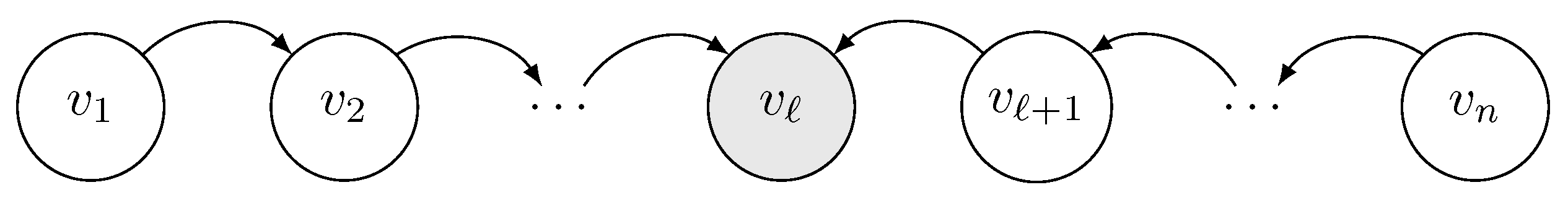

2.2. Formation Model

2.3. Problem Statement

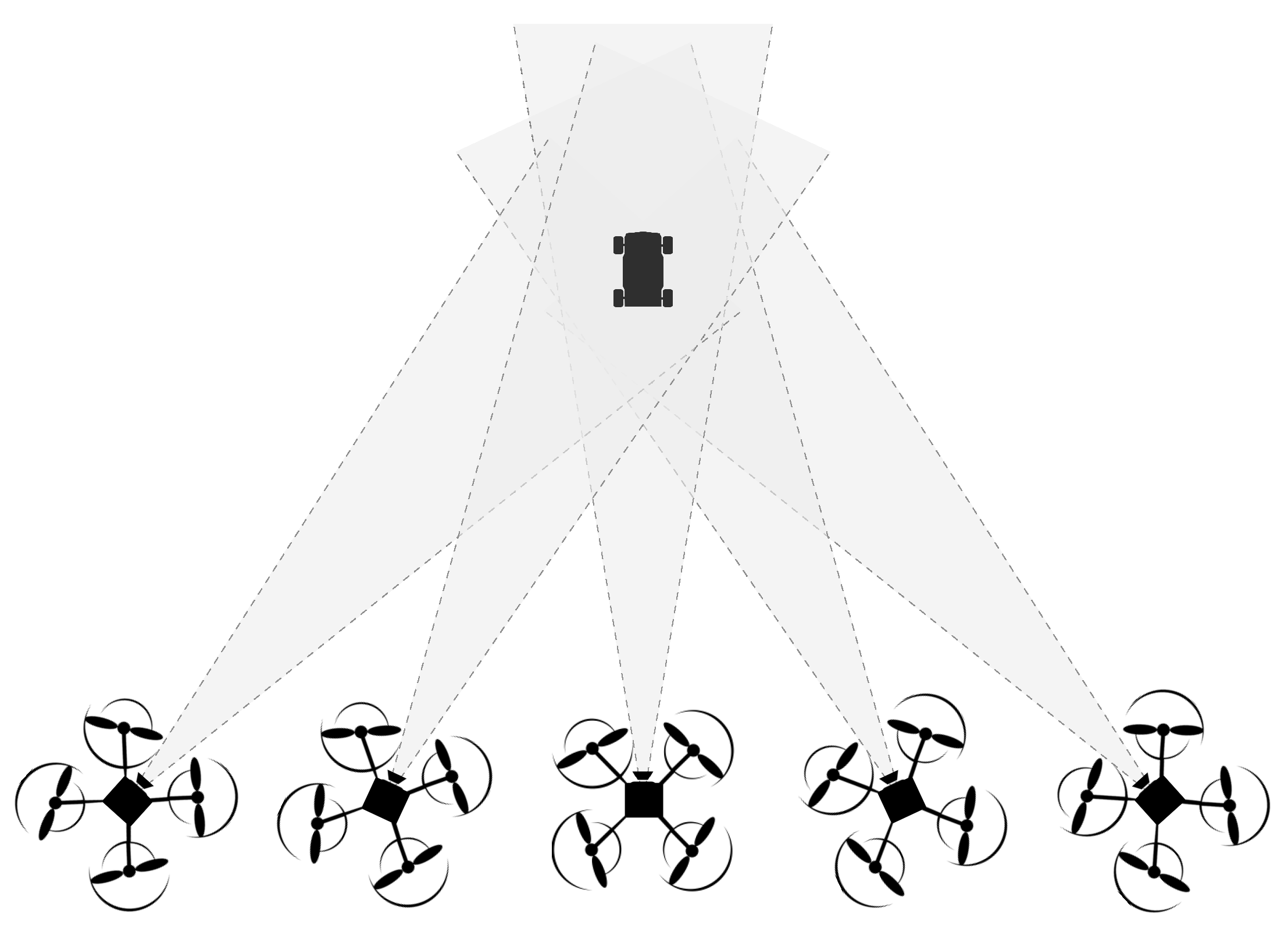

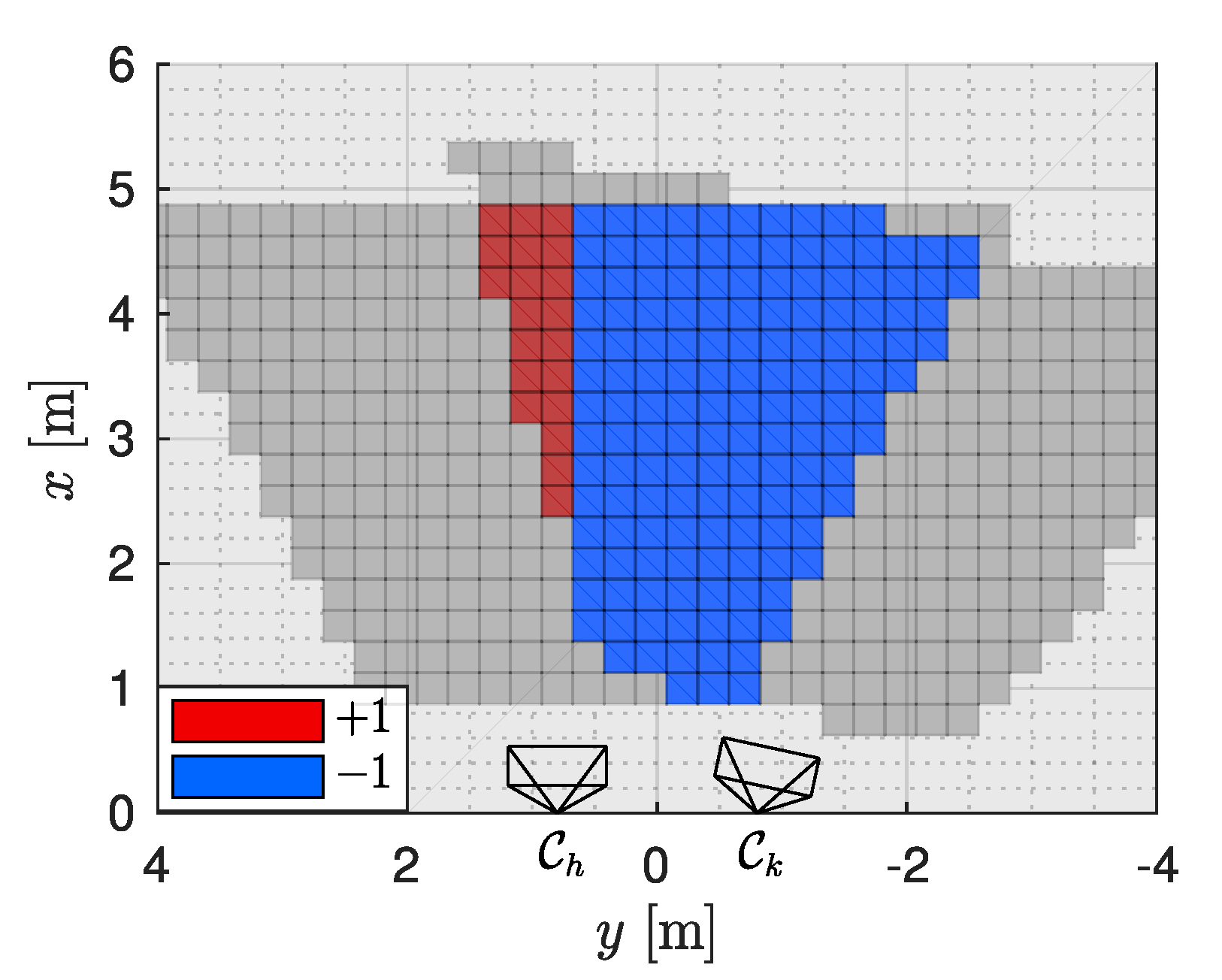

- Assuming that the target position is estimated through triangulation algorithms, the quality of the target position estimate is related to the number of cameras that are able to see the target [45]. If the fields of view (f.o.v.) of all the cameras overlap, i.e., if their optical axes point towards the target, each camera can contribute visual information about the target, and the quality of the estimate increases. In light of this, we assume that the more the camera f.o.v. overlap, the more likely it is that the target is simultaneously visible from a larger number of cameras.

- On the other hand, the target loss probability decreases as the area of the total formation f.o.v., corresponding to the monitored region, increases. Indeed, when the covered area is minimal, the target may rapidly drift out of the view of every camera. Conversely, if the views overlap less, a target lost by one camera is more likely to fall into the view of another camera that is looking in a slightly different direction.
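The trade-off described in the two points above can be illustrated with a rough numerical sketch. It is not the formulation used in this paper: it assumes, purely for illustration, that each camera footprint on the ground is a disc of equal radius, and it estimates areas by counting samples on a regular grid. The names `footprint_areas` and `spread_centers`, the radius, and the grid parameters are all hypothetical.

```python
def footprint_areas(centers, radius, half_width=20.0, step=0.5):
    """Estimate the total (union) and shared (intersection) footprint areas.

    Assumption for illustration only: every camera footprint is a disc of
    the given radius centred at one of `centers` on the ground plane.
    """
    n = int(round(2 * half_width / step)) + 1
    r2 = radius * radius
    union_cells = 0
    common_cells = 0
    for j in range(n):
        x = -half_width + j * step
        for k in range(n):
            y = -half_width + k * step
            # Number of footprints that contain this sample.
            hits = sum(1 for cx, cy in centers
                       if (x - cx) ** 2 + (y - cy) ** 2 <= r2)
            if hits > 0:
                union_cells += 1      # seen by at least one camera
            if hits == len(centers):
                common_cells += 1     # seen by every camera
    cell_area = step * step
    return union_cells * cell_area, common_cells * cell_area


def spread_centers(spread):
    """Three hypothetical footprint centres, pushed apart by `spread`."""
    return [(-spread, 0.0), (spread, 0.0), (0.0, spread)]


for spread in (0.0, 2.0, 4.0):
    total, shared = footprint_areas(spread_centers(spread), radius=5.0)
    print(f"spread={spread}: total area ~ {total:.1f}, shared area ~ {shared:.1f}")
```

As the footprints are pushed apart, the total monitored area grows while the region seen by all cameras shrinks, which is the tension between estimation quality and target loss probability described above.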

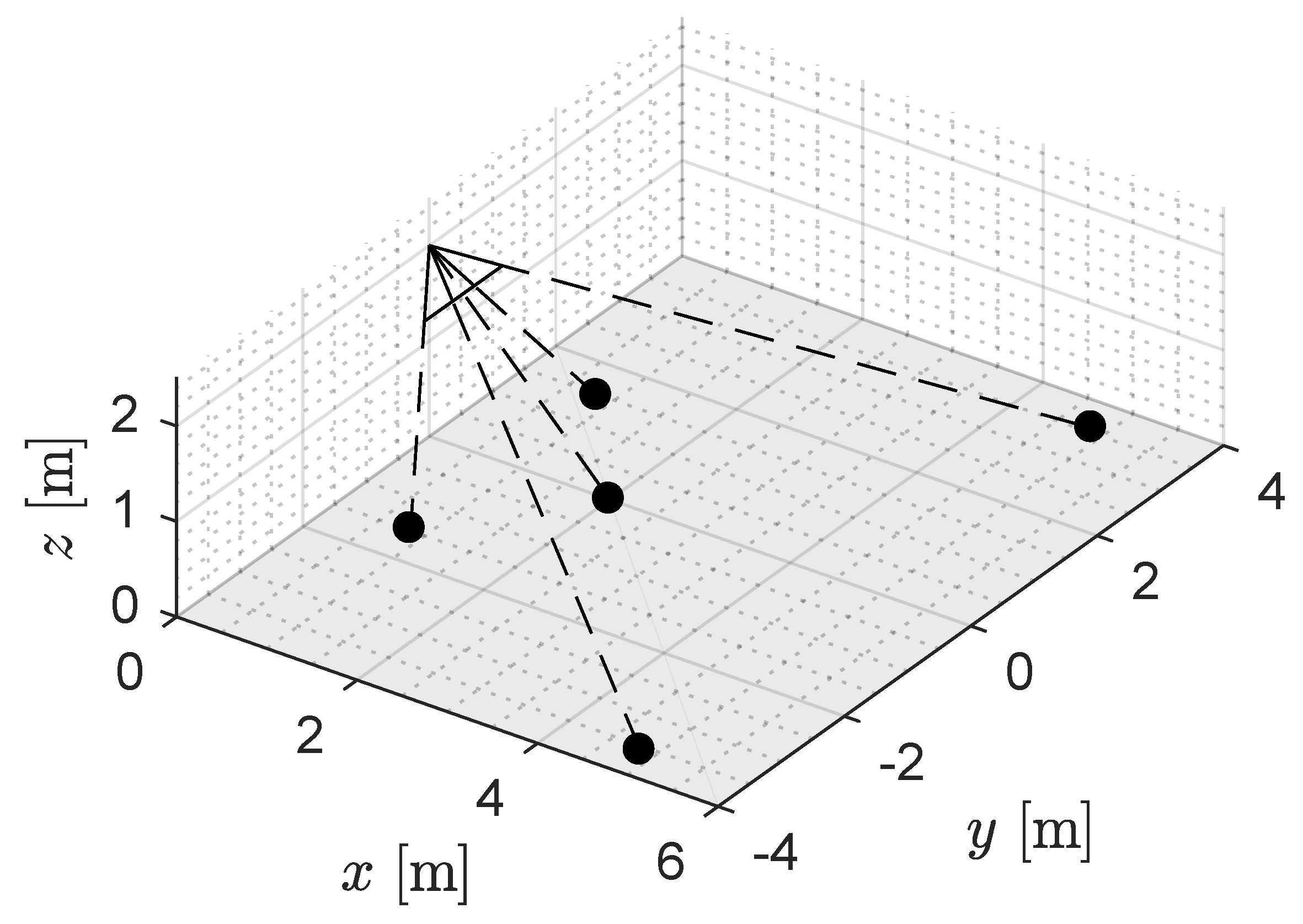

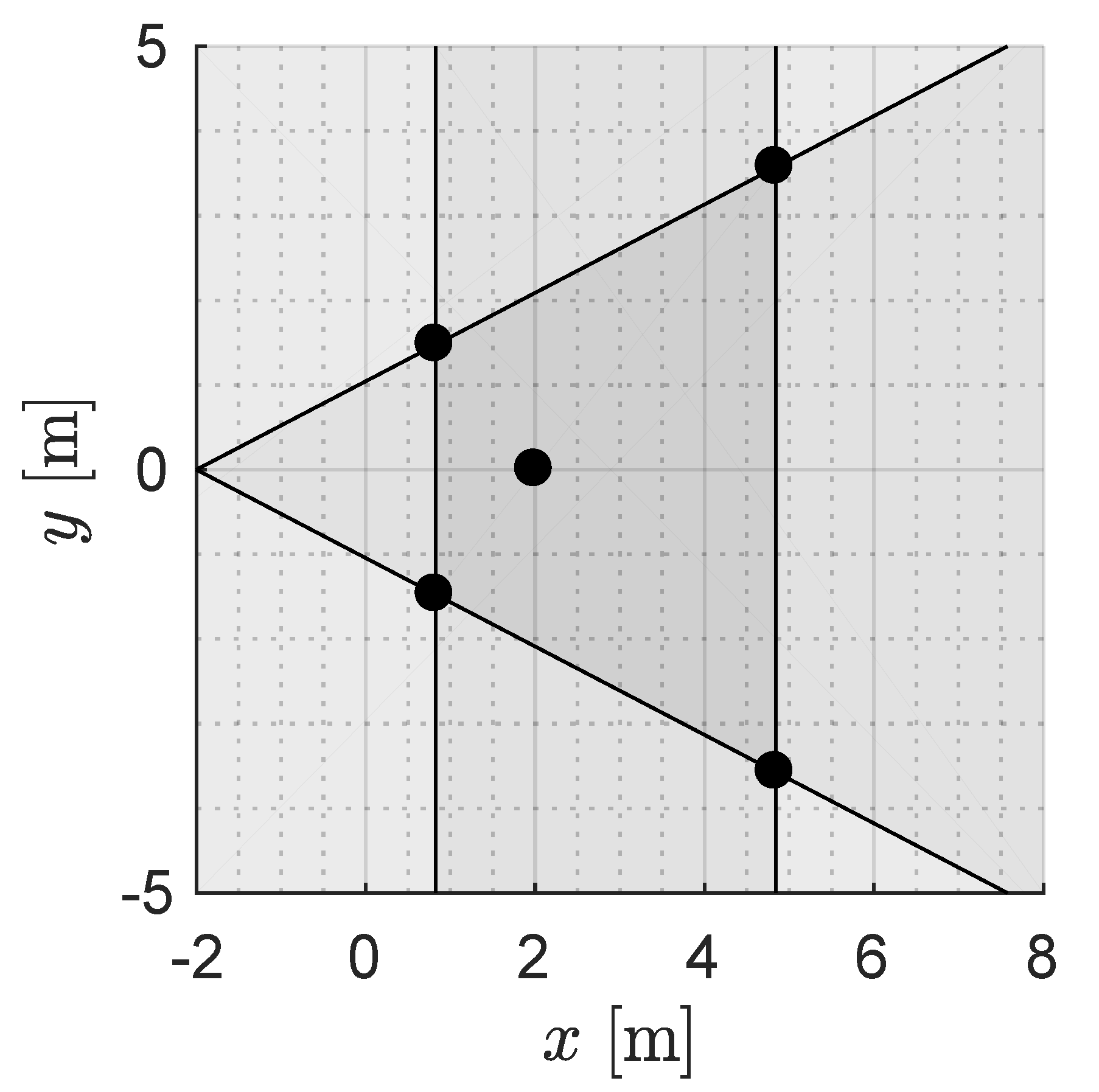

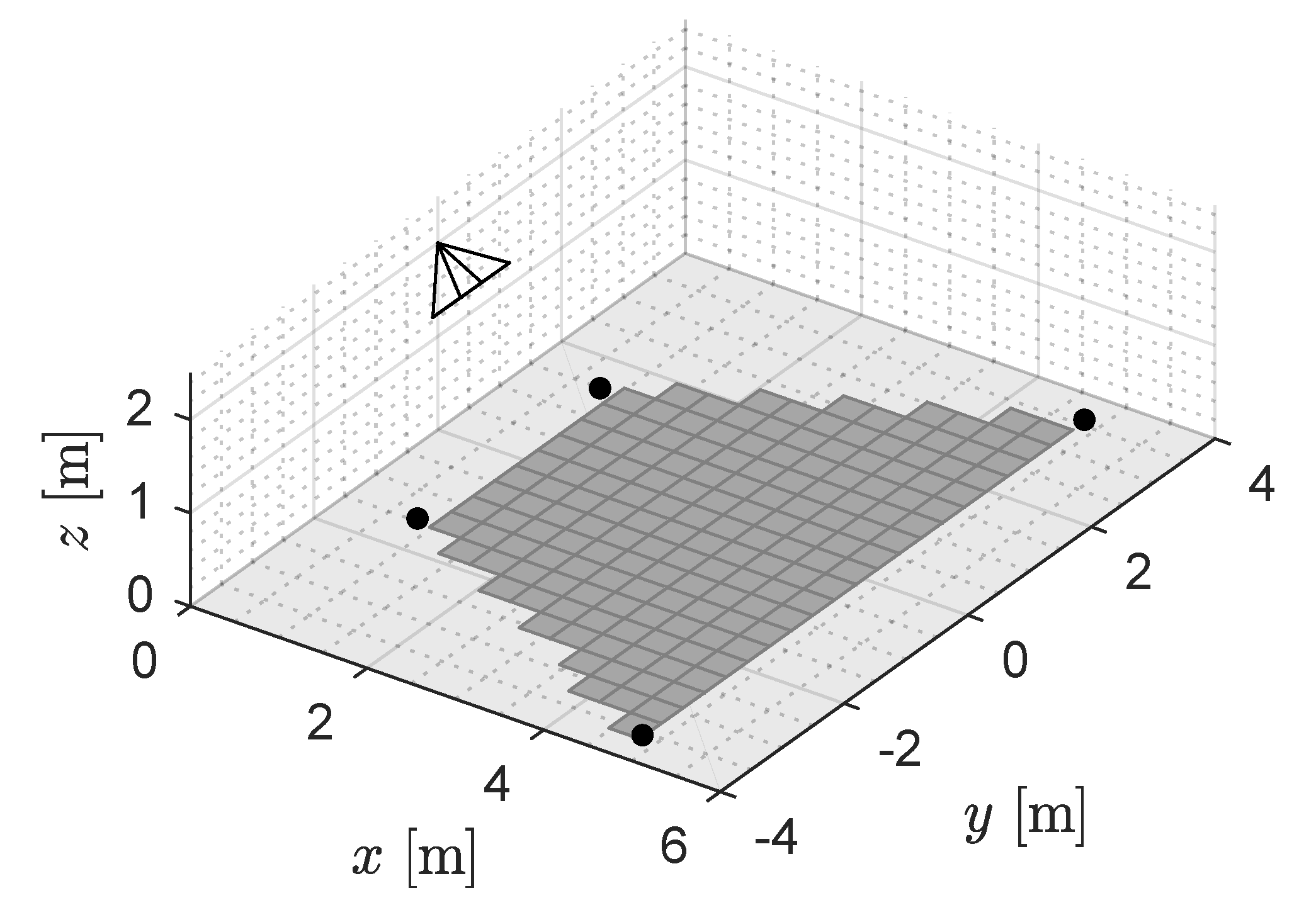

3. Computation of the Formation Total f.o.v.

3.1. Single Camera f.o.v. Approximation

- each entry refers to a point of the sampled plane, i.e., a point whose position in the world frame is identified by a corresponding vector;

- any non-zero entry indicates that the corresponding sample is visible from the i-th camera, while a zero entry means that it is not visible. More formally, if condition (7) holds, the entry is set to a non-zero value; otherwise, it is set to zero. The definition of this non-zero value may be context-dependent: although the easiest choice is a constant, spatial information can be incorporated in this value, as will be explained later.
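A minimal sketch of such a per-camera visibility grid is given below. Since condition (7) is not reproduced here, the sketch substitutes a simple cone test as an assumption: a sample counts as visible when the angle between the camera optical axis and the ray from the camera to the sample does not exceed a given half-angle. The function name `visibility_grid`, its parameters, and the constant `weight` are hypothetical and chosen only for illustration.

```python
import math


def visibility_grid(cam_pos, optical_axis, half_angle, xs, ys,
                    plane_z=0.0, weight=1.0):
    """Return grid[j][k] = weight if sample (xs[j], ys[k], plane_z) is visible.

    Assumption: visibility is decided by a cone test around the optical
    axis, used here as a stand-in for the paper's condition (7).
    """
    ax, ay, az = optical_axis
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    ax, ay, az = ax / norm, ay / norm, az / norm   # unit optical axis
    cos_limit = math.cos(half_angle)
    cx, cy, cz = cam_pos

    grid = []
    for x in xs:
        row = []
        for y in ys:
            rx, ry, rz = x - cx, y - cy, plane_z - cz
            dist = math.sqrt(rx * rx + ry * ry + rz * rz)
            if dist == 0.0:
                # Sample coincides with the camera centre: treat as not visible.
                row.append(0.0)
                continue
            cos_angle = (rx * ax + ry * ay + rz * az) / dist
            row.append(weight if cos_angle >= cos_limit else 0.0)
        grid.append(row)
    return grid


# Hypothetical setup: camera hovering 10 m above the origin, looking
# straight down, with a 45 degree half-angle; 1 m sampling of the plane z = 0.
xs = ys = [float(v) for v in range(-20, 21)]
grid = visibility_grid((0.0, 0.0, 10.0), (0.0, 0.0, -1.0),
                       math.radians(45.0), xs, ys)
visible_samples = sum(1 for row in grid for value in row if value > 0)
print("visible samples:", visible_samples)
```

Replacing the constant `weight` with a function of the sample position would be one way to encode spatial information in the non-zero entries, as the text suggests.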

3.2. Formation Total f.o.v. Approximation

4. Optimization of Quadrotors Attitude

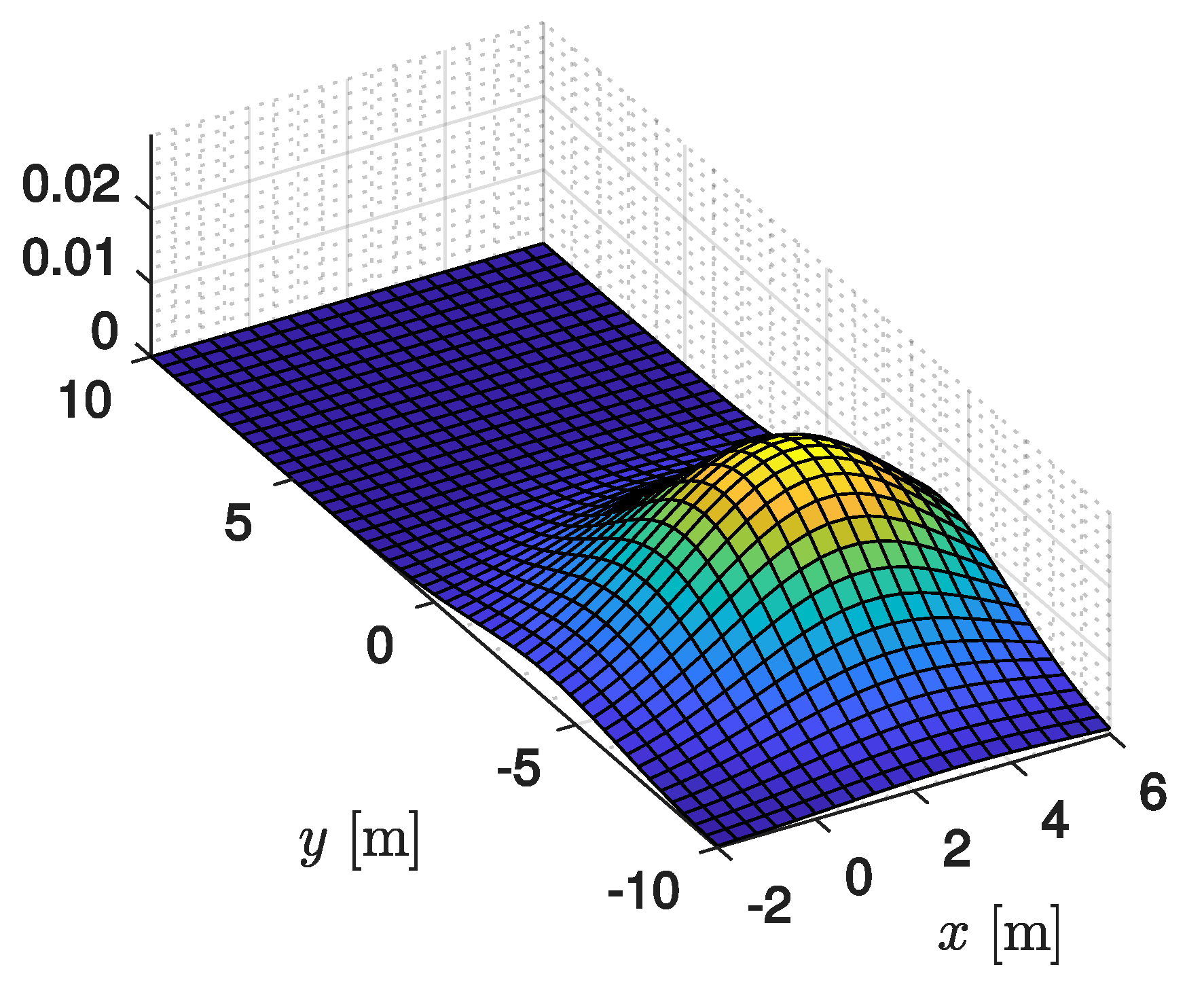

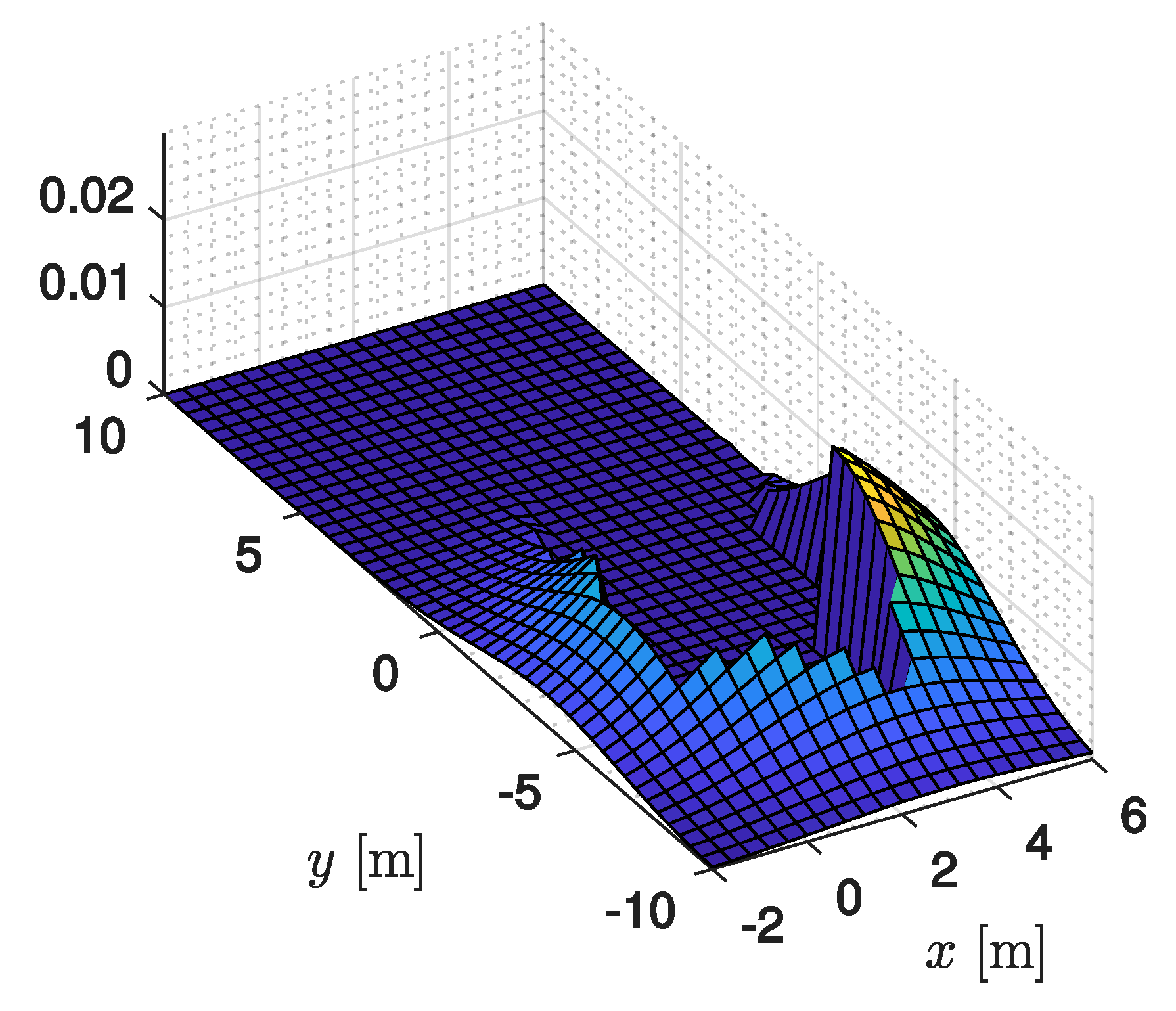

4.1. Minimization of Target Position Estimation Error

4.2. Target Loss Probability Definition

4.3. Regulated Solution

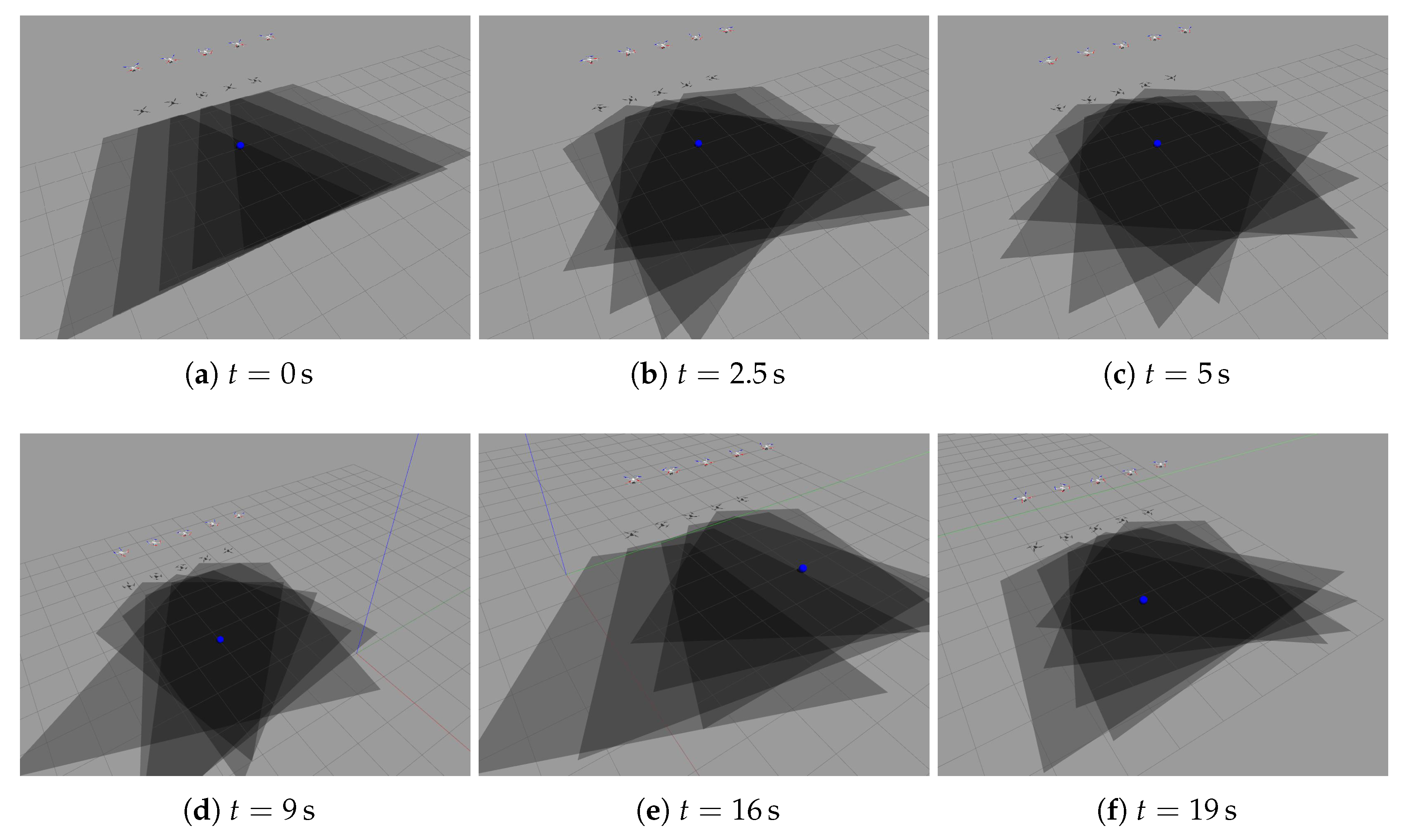

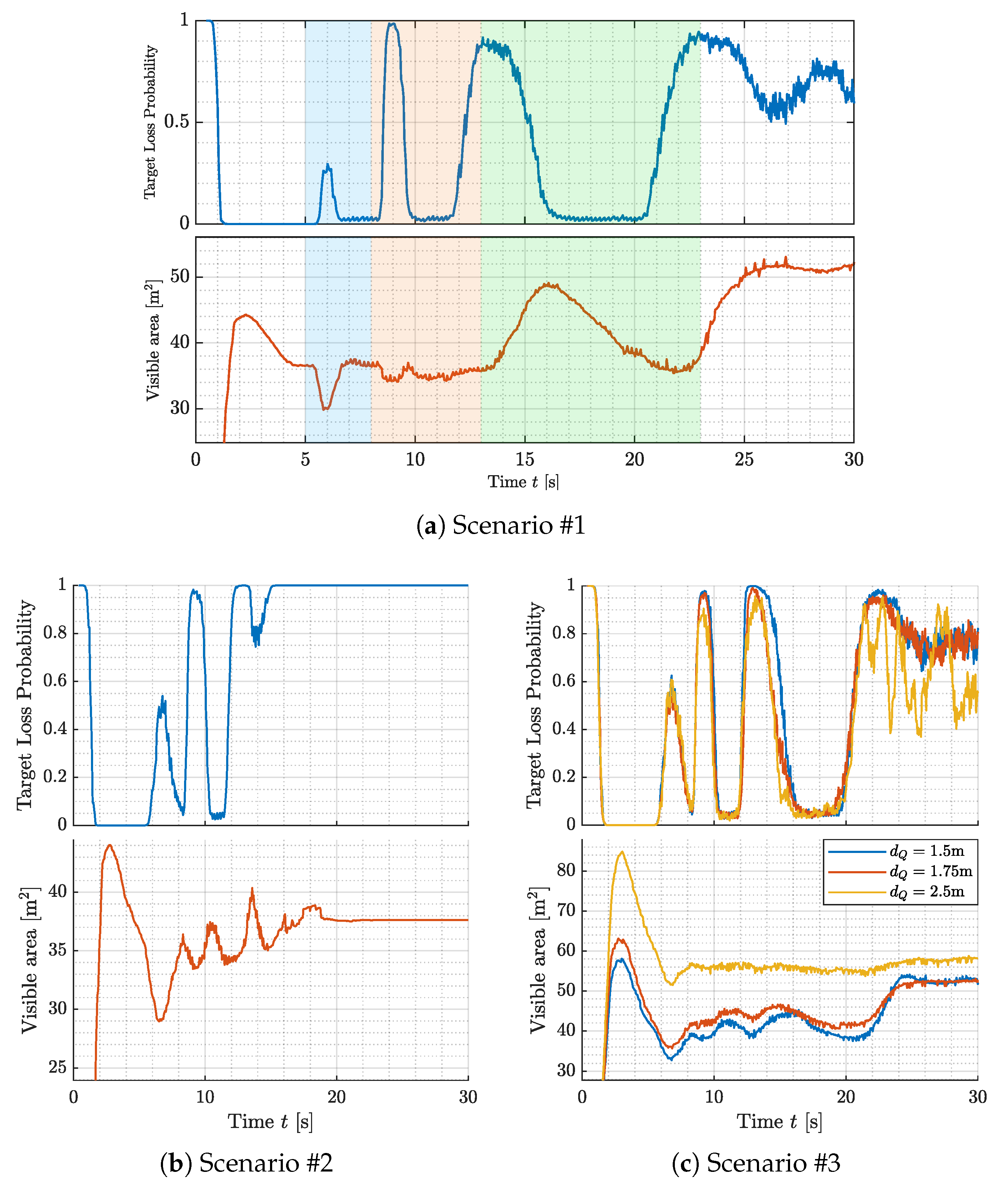

5. Simulation Results

Comparative Experiments

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Nonami, K.; Kendoul, F.; Suzuki, S.; Wang, W.; Nakazawa, D. Autonomous Flying Robots: Unmanned Aerial Vehicles and Micro Aerial Vehicles; Springer Science & Business Media: Berlin, Germany, 2010.

- Austin, R. Unmanned Aircraft Systems: UAVS Design, Development and Deployment; John Wiley & Sons: Hoboken, NJ, USA, 2011; Volume 54.

- Gupte, S.; Mohandas, P.I.T.; Conrad, J.M. A survey of quadrotor unmanned aerial vehicles. In Proceedings of the 2012 IEEE Southeastcon, Orlando, FL, USA, 15–18 March 2012; pp. 1–6.

- Mahony, R.; Kumar, V.; Corke, P. Multirotor aerial vehicles: Modeling, estimation, and control of quadrotor. IEEE Robot. Autom. Mag. 2012, 19, 20–32.

- Kumar, V.; Michael, N. Opportunities and challenges with autonomous micro aerial vehicles. Int. J. Robot. Res. 2012, 31, 1279–1291.

- Valavanis, K.P.; Vachtsevanos, G.J. Handbook of Unmanned Aerial Vehicles; Springer: Berlin, Germany, 2015.

- Zaheer, Z.; Usmani, A.; Khan, E.; Qadeer, M.A. Aerial surveillance system using UAV. In Proceedings of the 2016 Thirteenth International Conference on Wireless and Optical Communications Networks (WOCN), Hyderabad, Telangana State, India, 21–23 July 2016; pp. 1–7.

- Sun, J.; Li, B.; Jiang, Y.; Wen, C.Y. A camera-based target detection and positioning UAV system for search and rescue (SAR) purposes. Sensors 2016, 16, 1778.

- Al-Kaff, A.; Gómez-Silva, M.J.; Moreno, F.M.; de la Escalera, A.; Armingol, J.M. An Appearance-Based Tracking Algorithm for Aerial Search and Rescue Purposes. Sensors 2019, 19, 652.

- Khamseh, H.B.; Janabi-Sharifi, F.; Abdessameud, A. Aerial manipulation—A literature survey. Robot. Auton. Syst. 2018, 107, 221–235.

- Ruggiero, F.; Lippiello, V.; Ollero, A. Aerial manipulation: A literature review. IEEE Robot. Autom. Lett. 2018, 3, 1957–1964.

- Sahingoz, O.K. Mobile networking with UAVs: Opportunities and challenges. In Proceedings of the 2013 International Conference on Unmanned Aircraft Systems (ICUAS), Atlanta, GA, USA, 28–31 May 2013; pp. 933–941.

- Hou, Z.; Wang, W.; Zhang, G.; Han, C. A survey on the formation control of multiple quadrotors. In Proceedings of the 2017 14th International Conference on Ubiquitous Robots and Ambient Intelligence (URAI), Jeju, South Korea, 28 June–1 July 2017; pp. 219–225.

- Chung, S.J.; Paranjape, A.A.; Dames, P.; Shen, S.; Kumar, V. A survey on aerial swarm robotics. IEEE Trans. Robot. 2018, 34, 837–855.

- Liu, Y.; Bucknall, R. A survey of formation control and motion planning of multiple unmanned vehicles. Robotica 2018, 36, 1019–1047.

- Gu, J.; Su, T.; Wang, Q.; Du, X.; Guizani, M. Multiple moving targets surveillance based on a cooperative network for multi-UAV. IEEE Commun. Mag. 2018, 56, 82–89.

- Tan, Y.H.; Lai, S.; Wang, K.; Chen, B.M. Cooperative control of multiple unmanned aerial systems for heavy duty carrying. Ann. Rev. Control 2018, 46, 44–57.

- Li, B.; Jiang, Y.; Sun, J.; Cai, L.; Wen, C.Y. Development and testing of a two-UAV communication relay system. Sensors 2016, 16, 1696.

- Kanistras, K.; Martins, G.; Rutherford, M.J.; Valavanis, K.P. Survey of unmanned aerial vehicles (UAVs) for traffic monitoring. In Handbook of Unmanned Aerial Vehicles; Springer: Dordrecht, The Netherlands, 2015; pp. 2643–2666.

- Yanmaz, E. Event detection using unmanned aerial vehicles: Ordered versus self-organized search. In International Workshop on Self-Organizing Systems; Springer: Berlin, Germany, 2009; pp. 26–36.

- Zhao, J.; Xiao, G.; Zhang, X.; Bavirisetti, D.P. A Survey on Object Tracking in Aerial Surveillance. In International Conference on Aerospace System Science and Engineering; Springer: Berlin, Germany, 2018; pp. 53–68.

- Schwager, M.; Julian, B.J.; Rus, D. Optimal coverage for multiple hovering robots with downward facing cameras. In Proceedings of the 2009 IEEE International Conference on Robotics and Automation, Kobe, Japan, 12–17 May 2009; pp. 3515–3522.

- Doitsidis, L.; Weiss, S.; Renzaglia, A.; Achtelik, M.W.; Kosmatopoulos, E.; Siegwart, R.; Scaramuzza, D. Optimal surveillance coverage for teams of micro aerial vehicles in GPS-denied environments using onboard vision. Auton. Robots 2012, 33, 173–188.

- Saska, M.; Chudoba, J.; Přeučil, L.; Thomas, J.; Loianno, G.; Třešňák, A.; Vonásek, V.; Kumar, V. Autonomous deployment of swarms of micro-aerial vehicles in cooperative surveillance. In Proceedings of the 2014 International Conference on Unmanned Aircraft Systems (ICUAS), Orlando, FL, USA, 27–30 May 2014; pp. 584–595.

- Mavrinac, A.; Chen, X. Modeling coverage in camera networks: A survey. Int. J. Comput. Vis. 2013, 101, 205–226.

- Ganguli, A.; Cortés, J.; Bullo, F. Maximizing visibility in nonconvex polygons: Nonsmooth analysis and gradient algorithm design. SIAM J. Control Optim. 2006, 45, 1657–1679.

- Chevet, T.; Maniu, C.S.; Vlad, C.; Zhang, Y. Voronoi-based UAVs Formation Deployment and Reconfiguration using MPC Techniques. In Proceedings of the 2018 International Conference on Unmanned Aircraft Systems (ICUAS), Dallas, TX, USA, 12–15 June 2018; pp. 9–14.

- Munguía, R.; Urzua, S.; Bolea, Y.; Grau, A. Vision-based SLAM system for unmanned aerial vehicles. Sensors 2016, 16, 372.

- Schmuck, P.; Chli, M. Multi-UAV collaborative monocular SLAM. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 3863–3870.

- Robin, C.; Lacroix, S. Multi-robot target detection and tracking: Taxonomy and survey. Auton. Robots 2016, 40, 729–760.

- Evans, M.; Osborne, C.J.; Ferryman, J. Multicamera object detection and tracking with object size estimation. In Proceedings of the 2013 10th IEEE International Conference on Advanced Video and Signal Based Surveillance, Krakow, Poland, 27–30 August 2013; pp. 177–182.

- Xu, B.; Bulan, O.; Kumar, J.; Wshah, S.; Kozitsky, V.; Paul, P. Comparison of early and late information fusion for multi-camera HOV lane enforcement. In Proceedings of the 2015 IEEE 18th International Conference on Intelligent Transportation Systems, Las Palmas, Spain, 15–18 September 2015; pp. 913–918.

- Coates, A.; Ng, A.Y. Multi-camera object detection for robotics. In Proceedings of the 2010 IEEE International Conference on Robotics and Automation, Anchorage, AK, USA, 3–7 May 2010; pp. 412–419.

- Del Rosario, J.R.B.; Bandala, A.A.; Dadios, E.P. Multi-view multi-object tracking in an intelligent transportation system: A literature review. In Proceedings of the 2017 IEEE 9th International Conference on Humanoid, Nanotechnology, Information Technology, Communication and Control, Environment and Management (HNICEM), Manila, Philippines, 1–3 December 2017; pp. 1–4.

- Poiesi, F.; Cavallaro, A. Distributed vision-based flying cameras to film a moving target. In Proceedings of the 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015; pp. 2453–2459.

- Pounds, P.; Mahony, R.; Corke, P. Modelling and control of a large quadrotor robot. Control Eng. Pract. 2010, 18, 691–699.

- Michael, N.; Mellinger, D.; Lindsey, Q.; Kumar, V. The GRASP multiple micro-UAV testbed. IEEE Robot. Autom. Mag. 2010, 17, 56–65.

- Kalabić, U.; Gupta, R.; Di Cairano, S.; Bloch, A.; Kolmanovsky, I. MPC on Manifolds with an Application to SE(3). In Proceedings of the 2016 American Control Conference (ACC), Boston, MA, USA, 6–8 July 2016; pp. 7–12.

- Garcia, G.A.; Kim, A.R.; Jackson, E.; Kashmiri, S.S.; Shukla, D. Modeling and flight control of a commercial nano quadrotor. In Proceedings of the 2017 International Conference on Unmanned Aircraft Systems (ICUAS), Miami, FL, USA, 13–16 June 2017; pp. 524–532.

- Lee, T.; Leok, M.; McClamroch, N.H. Geometric tracking control of a quadrotor UAV on SE(3). In Proceedings of the 49th IEEE Conference on Decision and Control (CDC), Atlanta, GA, USA, 15–17 December 2010; pp. 5420–5425.

- Lee, T. Geometric tracking control of the attitude dynamics of a rigid body on SO(3). In Proceedings of the 2011 American Control Conference, San Francisco, CA, USA, 29 June–1 July 2011; pp. 1200–1205.

- Ma, Y.; Soatto, S.; Kosecka, J.; Sastry, S.S. An Invitation to 3-D Vision: From Images to Geometric Models; Springer Science & Business Media: Berlin, Germany, 2012; Volume 26.

- Schiano, F.; Franchi, A.; Zelazo, D.; Giordano, P.R. A rigidity-based decentralized bearing formation controller for groups of quadrotor UAVs. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, South Korea, 9–14 October 2016; pp. 5099–5106.

- Kamthe, A.; Jiang, L.; Dudys, M.; Cerpa, A. Scopes: Smart cameras object position estimation system. In European Conference on Wireless Sensor Networks; Springer: Berlin, Germany, 2009; pp. 279–295.

- Masiero, A.; Cenedese, A. On triangulation algorithms in large scale camera network systems. In Proceedings of the 2012 American Control Conference (ACC), Montreal, QC, Canada, 27–29 June 2012; pp. 4096–4101.

- Fu, X.; Liu, K.; Gao, X. Multi-UAVs communication-aware cooperative target tracking. Appl. Sci. 2018, 8, 870.

- Lissandrini, N.; Michieletto, G.; Antonello, R.; Galvan, M.; Franco, A.; Cenedese, A. Cooperative Optimization of UAVs Formation Visual Tracking. 2019. Available online: https://youtu.be/MXV0cQ4qmRk (accessed on 25 June 2019).

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lissandrini, N.; Michieletto, G.; Antonello, R.; Galvan, M.; Franco, A.; Cenedese, A. Cooperative Optimization of UAVs Formation Visual Tracking. Robotics 2019, 8, 52. https://doi.org/10.3390/robotics8030052