1. Introduction

E-mail remains the most common form of online business correspondence and is still a growing and effective communication tool for most enterprises and individuals [1]. E-mail is also an integral part of the personal Internet experience [2]. For example, an E-mail account (or E-mail address) is almost always required to register on websites, including social networking sites, instant messaging and other types of Internet services. E-mail has therefore become fully integrated into our daily lives and business activities. According to the Radicati Group’s statistics and projections [1], more than 281 billion E-mails are sent and received worldwide every day, and this number is expected to increase by 18.5 per cent over the next four years. In 2018, more than half of the world’s population used E-mail, with more than 3.8 billion users. From these figures, an average user sends and receives about 74 E-mails per day (281 billion divided by 3.8 billion), which also reveals the problem of E-mail overload.

The problem of E-mail overload has not been solved for nearly half a century [3]. Most users have numerous E-mails that they never read or do not reply to in time, which leads to E-mail management issues, predominantly a messy and overwhelming mailbox [3]. Enterprises still maintain and manage customer resources using E-mail because they use it to handle users’ feedback and consultation [2]. Specifically, the customer service centres of some organisations receive hundreds of thousands of E-mails from customers every day. Although the staff in these centres are highly trained, there is striking similarity among this large volume of E-mails. Staff need to spend a lot of time replying to them, which results in a high labour cost for enterprises. Individual E-mail users also suffer from E-mail overload. The development of the information age brings various benefits to our daily life; however, alongside its convenience, the attendant problems are equally persistent.

For E-mail systems, E-mail overload was proclaimed a ‘universal problem’ [3]. As a formal means of communication, E-mail is ‘central’ [4], ‘ubiquitous’ [5] and ‘indispensable’ [6]. Whittaker and Sidner [3] showed that E-mail users tend to leave an increasing volume of unread or unanswered messages every day. These messages accumulate into the messy and overwhelming mailbox described above.

Researchers believe that NLP techniques using machine learning and deep learning algorithms have a significant role in reducing the time and labour wasted on repeated E-mail responses, and they focus on developing E-mail systems with intelligent response functions. However, research gaps remain both in reusing old E-mails through information retrieval and in predictive, generation-based responses built on neural networks. Even Gmail, now one of the best at responding intelligently to E-mails, uses technology based on word-level predictions. Most E-mails, however, are long texts; even the shortest E-mails are usually more than two sentences long. Research in this area still lacks the ability to predict and generate E-mail replies consisting of multiple sentences. This is the motivation for our research, which proposes a novel E-mail management system (EMS).

Our research objective is to present an intelligent E-mail response solution for individuals or corporate departments (such as service centres or help desks) that receive a large number of similar E-mails every day. This study contributes to improving existing models for relevant practical usage scenarios. Our analysis identified three essential models that can be applied to reduce E-mail overload. The TF-IDF model was applied in E-mail systems in 2017 [7], but with a few limitations, such as unlabelled E-mails and too much noise in the datasets. A holistic analysis of the benefits of the Doc2Vec model has not been done before. The GRU-Sent2Vec hybrid model combines information generation and retrieval; it is an innovative model and is proposed here for the first time. The contributions of this study therefore cover all three aspects. The paper makes the following key contributions:

Current popular methods are limited to short-text prediction at the word level. For example, chatbots can predict the next sentence based on the last sentence. However, E-mails are long texts, and if the content of the reply can be predicted from the received E-mail, the work efficiency of E-mail users will improve, particularly in customer services in both the public and private sectors. In this paper, Sent2Vec is combined with GRU to construct a novel hybrid model that makes predictions at the sentence level rather than the word level. It should be noted, however, that the models in this research are not limited to long-text prediction; they are also suited to short-text scenarios, such as chatbots used for automatic replies in a chat room.

Using mapping rules to improve the training corpus. The E-mail dataset is processed using a logical matching method, which matches sent and received E-mails and uses them as a reference for answering new E-mails. This study expands the sample diversity of the public E-mail dataset, and the processed dataset will be published on GitHub (https://github.com/fxyfeier) for further research.

By creating user interfaces to implement the core functions, we make our research directly applicable to many enterprise customer service departments. This intelligent E-mail reply suggestion system allows users to choose between an intelligent reply function and a direct reply, and gives them the opportunity to review automatically generated replies before they are sent.

We evaluate the performance of Doc2Vec and GRU-Sent2Vec against TF-IDF, and carry out a human-based evaluation to validate our results.

The rest of the paper is structured as follows. Section 2 presents a literature review of the relevant approaches. Section 3 describes the design and methodology of our proposed approach. Section 4 presents the implementation and evaluation of our SEMS. Finally, Section 5 concludes the paper.

3. Design and Methodology

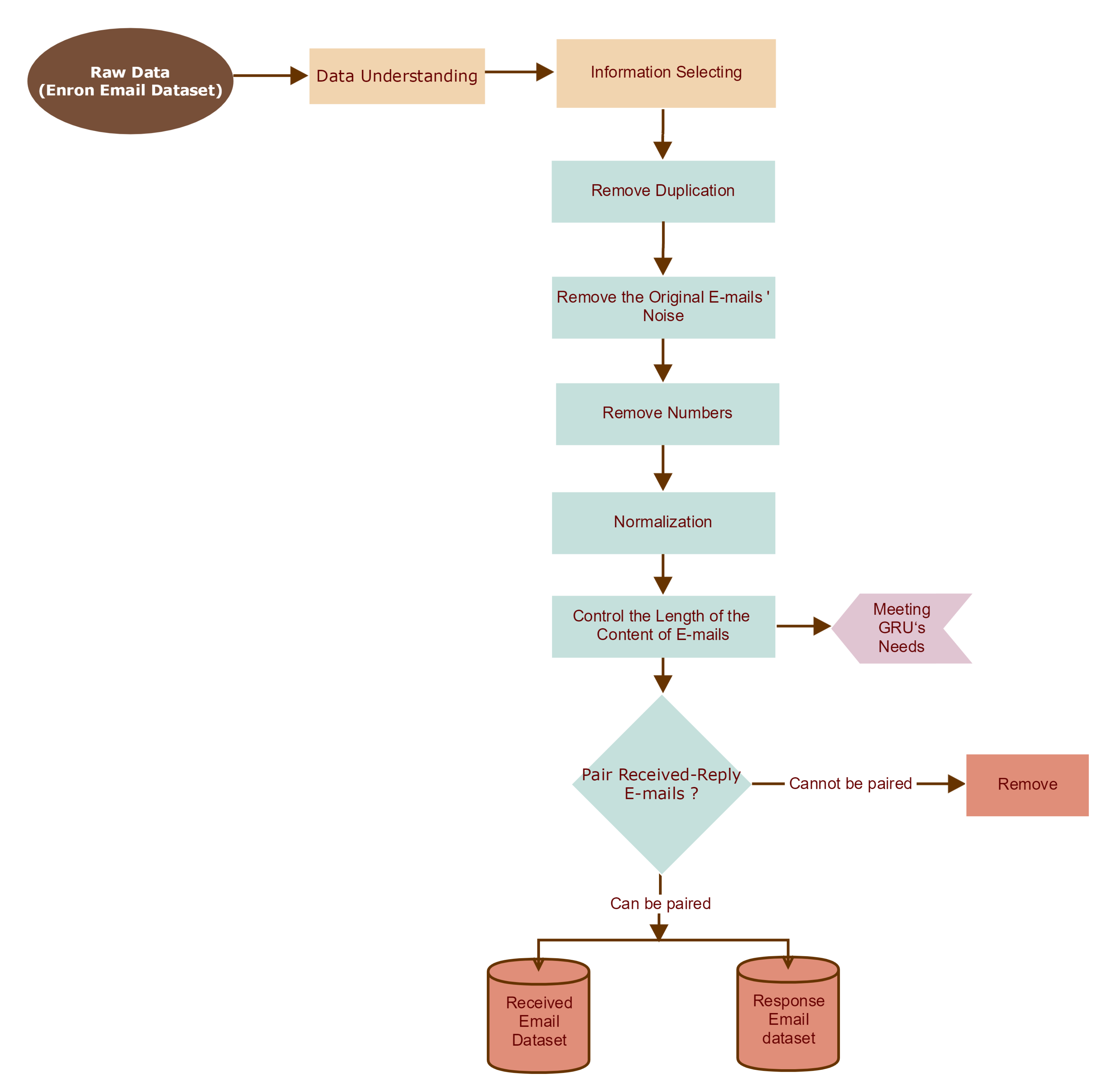

The system framework of this experiment is shown in Figure 1. Initially, we executed a series of text preprocessing steps on the original E-mail dataset. From the processed E-mails, we kept only the matching E-mail pairs (namely received E-mail–reply E-mail), so that we could obtain the relationships between the received E-mails and reply E-mails, and put them into two databases. The next step is to train our three models using the results of text processing. Once model training is complete, when a new E-mail is received the SEMS client offers users two options: to reply directly, or to use the smart reply function. Regardless of whether users reply directly or modify the response suggestions generated by the models, after replying, the newly received E-mail is paired with the sent E-mail. Finally, the results are stored in the two databases in preparation for the models’ future learning.

3.1. TF-IDF Based Model

Figure 2 presents the workflow design for TF-IDF modelling. Firstly, after general dataset processing (which is described in the next section), the Received E-mail Dataset and the Response E-mail Dataset are generated. These datasets are then processed further to suit each model. Data processing for TF-IDF includes word lower-casing, word tokenisation, stop word removal and word stemming. In the modelling process, vocabulary building is a way to tokenise a collection of text documents, index each known word, and encode new documents using the same index set.

After building the corpus vocabulary and a sparse matrix, the next step is to compute the IDF value and TF-IDF value of each word in the given corpus as well as in a new document. For a new document, we only need to calculate its TF-IDF vector relative to the entire corpus. By taking the dot product with all other document vectors, we can calculate the cosine similarity between the new document and all documents in the corpus, and so rank similar documents for retrieval.

Calculating IDF and TF-IDF Scores: After building the corpus vocabulary and a sparse matrix with CountVectorizer, TfidfTransformer was used to compute the IDF value and TF-IDF value of each word in the given corpus as well as in a new document. It should be noted that if specific words do not appear in the training corpus, their TF-IDF values are 0.

Calculating Similarity Scores: Euclidean normalisation is also applied in the Scikit-learn library. For a new document, we only needed to calculate its TF-IDF vector relative to the entire corpus. Because the vectors are normalised, the dot product with each document vector gives the cosine similarity between the new document and every document in the corpus, so that we could rank similar documents for retrieval.
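The retrieval steps above can be sketched in plain Python. This is a minimal illustration and not the experiment's code: it omits stop word removal and stemming, all function names are hypothetical, and it assumes raw term counts with the smoothed IDF weighting idf(t) = ln((1 + n) / (1 + df(t))) + 1 followed by Euclidean normalisation, which is the default behaviour of Scikit-learn's TfidfTransformer.

```python
import math
from collections import Counter


def tokenize(text):
    """Lower-case and split on whitespace (a stand-in for the full pipeline)."""
    return text.lower().split()


def build_idf(corpus_tokens):
    """Smoothed IDF per vocabulary term: ln((1 + n) / (1 + df)) + 1."""
    n_docs = len(corpus_tokens)
    doc_freq = Counter(term for tokens in corpus_tokens for term in set(tokens))
    return {term: math.log((1 + n_docs) / (1 + df)) + 1.0
            for term, df in doc_freq.items()}


def tfidf_vector(tokens, idf):
    """L2-normalised sparse TF-IDF vector; out-of-vocabulary terms get weight 0."""
    counts = Counter(t for t in tokens if t in idf)
    weights = {t: c * idf[t] for t, c in counts.items()}
    norm = math.sqrt(sum(w * w for w in weights.values()))
    return {t: w / norm for t, w in weights.items()} if norm else {}


def cosine(u, v):
    """For unit-length vectors the dot product equals the cosine similarity."""
    if len(u) > len(v):
        u, v = v, u
    return sum(w * v.get(t, 0.0) for t, w in u.items())


def rank(query, corpus):
    """Return (similarity, document index) pairs, most similar first."""
    corpus_tokens = [tokenize(doc) for doc in corpus]
    idf = build_idf(corpus_tokens)
    doc_vectors = [tfidf_vector(tokens, idf) for tokens in corpus_tokens]
    query_vector = tfidf_vector(tokenize(query), idf)
    scores = [(cosine(query_vector, vec), i) for i, vec in enumerate(doc_vectors)]
    return sorted(scores, key=lambda s: (-s[0], s[1]))


# Toy "received E-mail" corpus (invented for illustration).
received = [
    "please send me the meeting agenda",
    "the gas contract needs your signature",
    "can we move the meeting to friday",
]
ranking = rank("where is the agenda for the meeting", received)
best_score, best_index = ranking[0]
print(received[best_index], round(best_score, 3))
```

In a reply-suggestion setting, the index of the best-matching received E-mail would then be used to look up its paired response.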

3.2. Doc2Vec Based Model

Figure 3 illustrates the training workflow based on the Doc2Vec model. Data processing is similar to that for TF-IDF.

Before training the model, we needed to tag each document (E-mail) in the corpus. To get the correlations between words in the document, we embedded the document’s ID with the words in the document. After building vocabulary, inferring vectors is the way to transfer the new documents to vectors. Using them makes it easy to calculate similarity scores among documents.

Tag Each E-mail: Before training the model, we needed to tag each document (E-mail) in the corpus. To get the correlations between words in the document, we embedded the document’s ID with the words in the document.

Building Vocabulary: In the Gensim library, Word2VecVocab creates a vocabulary for the model. In addition to recording all unique words, this object provides additional functionality such as creating a Huffman tree (the more frequent the words, the closer they are to the root of the tree) and eliminating uncommon words.

Inferring Vector: After training the model, the infer_vector function can infer vectors for new documents.

Calculating Similarity Scores: The built-in most_similar function in Gensim is used to calculate the similarity of document vectors.
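The remark that frequent words sit closer to the root of the Huffman tree can be checked with a small standard-library sketch. It is independent of Gensim's internal implementation; `huffman_depths` is a hypothetical helper that builds a Huffman tree from word counts and reports the depth of each word.

```python
import heapq
from collections import Counter
from itertools import count


def huffman_depths(word_counts):
    """Return {word: depth in the Huffman tree} for a word-frequency table."""
    tie = count()  # tie-breaker so heapq never has to compare the dict payloads
    heap = [(freq, next(tie), {word: 0}) for word, freq in word_counts.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        # Merge the two least frequent subtrees; every word in them moves one level down.
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        merged = {w: d + 1 for w, d in left.items()}
        merged.update({w: d + 1 for w, d in right.items()})
        heapq.heappush(heap, (f1 + f2, next(tie), merged))
    return heap[0][2]


tokens = "the meeting is on friday the agenda for the meeting is attached".split()
counts = Counter(tokens)
depths = huffman_depths(counts)
for word, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(f"{word:10s} count={counts[word]} depth={depth}")
```

Rare words end up deepest in the tree, which is why the same vocabulary pass can also be used to discard them.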

3.3. GRU-Sent2Vec Hybrid Model

The essential part of the GRU-Sent2Vec hybrid model is to feed pre-trained sentence vectors into the embedding layer of the GRU network so that they are trained together. The design idea is to combine an information generation model with an information retrieval model, using both GRU and Sent2Vec. In this design (Figure 4), on the one hand we expect to generate long-text reply E-mails; on the other hand, we recognise that our training dataset is too small for a deep neural network. It is a novel attempt at an intelligent E-mail generation system. The Sent2Vec model is built to train a vector for each sentence. The training process and principles are similar to those of Doc2Vec; the difference is that the ID of the document is replaced with the ID of the sentence. We mapped each sentence into a 150-dimensional feature space and added an attention layer, which allows the decoder to focus on certain parts of the input sequence, improving the decoder’s capability and preventing the loss of valuable information. To achieve better convergence, we used the teacher forcing method in iterative training.

Unlike the previous two models, instead of a simple normalisation process, we again used the Sent2Vec model to match similar sentences in the sentence list of the original training dataset. With a small sample set, the intention is to ensure that the results generated by the GRU model are always valid.
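One way to read this matching step is that each vector produced by the generator is replaced with the closest sentence from the training sentence list, so the reply always consists of real sentences. The sketch below illustrates that idea under stated assumptions: it uses toy 3-dimensional vectors instead of the 150-dimensional Sent2Vec space, cosine similarity as the matching criterion, and hypothetical names throughout.

```python
import math


def cosine(u, v):
    """Cosine similarity between two dense vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def snap_to_sentences(predicted_vectors, sentence_vectors, sentences):
    """Replace each generated vector with the nearest known training sentence."""
    reply = []
    for vec in predicted_vectors:
        best = max(range(len(sentences)),
                   key=lambda i: cosine(vec, sentence_vectors[i]))
        reply.append(sentences[best])
    return reply


# Toy sentence list and vectors (invented for illustration).
sentences = [
    "Thanks for your message.",
    "The report is attached.",
    "See you on Friday.",
]
sentence_vectors = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

# Pretend these came from the decoder of the generation model.
predicted = [[0.9, 0.2, 0.1], [0.1, 0.3, 0.8]]
reply = snap_to_sentences(predicted, sentence_vectors, sentences)
print(" ".join(reply))
```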

4. Implementation and Evaluation

4.1. Data Preparation

Figure 5 shows the workflow of the data pre-processing. The purpose of this process is to produce a generic dataset for our three models.

The Enron E-mail Dataset (https://www.cs.cmu.edu/~enron/) is a suitable training dataset available online. This dataset is composed of a large number of E-mails in TXT form. The first step is to convert all the E-mails into a CSV file with the information we need: the sending date, sender E-mail address, receiver E-mail address, E-mail subject and E-mail content.

From the data overview (Figure 6), it can be seen that the total number of rows in the dataset is 517,401, among which there are 498,214 messages with titles; the most frequent title is ‘RE:’, appearing 6477 times. Meanwhile, in the content column of the E-mail dataset, nearly half of the content is duplicated. The reason is that if employee A sends an E-mail to employee B, the E-mail in A’s outbox is the same as the E-mail received in B’s inbox. Duplicate messages should therefore be removed first, leaving unique messages for further processing. This step compares the title and the content at the same time, to avoid removing messages that have the same content but were not sent by the same person.
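The duplicate-removal step can be sketched as follows. The field names `subject` and `content` are assumptions about the CSV columns, and whitespace trimming before comparison is an illustrative choice rather than something the text specifies.

```python
def drop_duplicates(messages):
    """Keep the first message for each (title, content) combination."""
    seen = set()
    unique = []
    for msg in messages:
        # Title and content are compared together, as described above.
        key = (msg["subject"].strip(), msg["content"].strip())
        if key not in seen:
            seen.add(key)
            unique.append(msg)
    return unique


# The same message appears in A's outbox and in B's inbox (invented data).
messages = [
    {"subject": "Gas contract", "content": "Please review the draft."},
    {"subject": "Gas contract", "content": "Please review the draft."},
    {"subject": "Lunch", "content": "Please review the draft."},
]
unique = drop_duplicates(messages)
print(len(messages), "->", len(unique))
```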

The next step is to remove noise from the dataset, such as numbers, symbols, and the duplicated past responses quoted in E-mails, because such non-useful information leads to poor model training results.
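A minimal sketch of such a cleaning function is shown below. The patterns are assumptions made for illustration: quoted history is taken to start at an "Original Message" separator or to be prefixed with ">", and basic sentence punctuation is kept so that sentences can still be separated later.

```python
import re

# Assumed marker for quoted history; real mailboxes may use other separators.
QUOTE_MARKER = re.compile(r"-{2,}\s*Original Message\s*-{2,}", re.IGNORECASE)


def clean_body(text):
    """Strip quoted past responses, numbers and symbols from an E-mail body."""
    # Keep only the text before the first quoted-message separator.
    text = QUOTE_MARKER.split(text, maxsplit=1)[0]
    # Drop lines quoted with a leading ">".
    lines = [ln for ln in text.splitlines() if not ln.lstrip().startswith(">")]
    text = " ".join(lines)
    text = re.sub(r"\d+", " ", text)               # numbers
    text = re.sub(r"[^A-Za-z\s.,!?']", " ", text)  # symbols other than basic punctuation
    return re.sub(r"\s+", " ", text).strip()       # collapse whitespace


raw = (
    "Call me at 555-1234 @ noon!\n"
    "> old text\n"
    "-----Original Message-----\n"
    "From: Bob"
)
cleaned = clean_body(raw)
print(cleaned)
```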

The last but vital step is pairing received and response E-mails, as these pairs are the basic training input for all three models. To pair E-mails, we logically filtered messages using the sending time, the title, and the names of the senders and receivers, then selected the E-mails most likely to relate to each other and placed them into two separate databases (the Received E-mail Dataset and the Response E-mail Dataset). The result after processing is shown in Figure 7. In total, we obtained 19,871 pairs of E-mails, each with a content length of 1 to 30 sentences (as shown in Figure 8).
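The pairing logic might look like the sketch below. The exact rules are not given in the text, so everything here is an assumption: a reply must come later than the received message, swap sender and receiver, carry a "RE:" prefix on the same normalised title, and arrive within a hypothetical seven-day window. Field names are also hypothetical.

```python
import re
from datetime import datetime, timedelta

PREFIX = re.compile(r"^\s*(?:(?:re|fw|fwd)\s*:\s*)+", re.IGNORECASE)


def normalise_subject(subject):
    """Remove reply/forward prefixes so 'RE: X' and 'X' compare equal."""
    return PREFIX.sub("", subject).strip().lower()


def pair_emails(messages, max_delay=timedelta(days=7)):
    """Return (received, reply) pairs matched on time, title and correspondents."""
    ordered = sorted(messages, key=lambda m: m["date"])
    used = set()
    pairs = []
    for i, received in enumerate(ordered):
        for j in range(i + 1, len(ordered)):
            reply = ordered[j]
            if reply["date"] - received["date"] > max_delay:
                break  # later messages are even further away in time
            if j in used:
                continue
            same_people = (reply["sender"] == received["receiver"]
                           and reply["receiver"] == received["sender"])
            is_reply = re.match(r"\s*re\s*:", reply["subject"], re.IGNORECASE)
            same_title = (normalise_subject(reply["subject"])
                          == normalise_subject(received["subject"]))
            if same_people and is_reply and same_title:
                pairs.append((received, reply))
                used.add(j)
                break  # take the earliest matching reply
    return pairs


messages = [
    {"date": datetime(2001, 5, 1, 9, 0), "sender": "alice@example.com",
     "receiver": "bob@example.com", "subject": "Gas contract",
     "content": "Can you confirm the volumes?"},
    {"date": datetime(2001, 5, 1, 11, 30), "sender": "bob@example.com",
     "receiver": "alice@example.com", "subject": "RE: Gas contract",
     "content": "Confirmed, see attached."},
    {"date": datetime(2001, 5, 2, 8, 0), "sender": "carol@example.com",
     "receiver": "alice@example.com", "subject": "Lunch",
     "content": "Are you free today?"},
]
pairs = pair_emails(messages)
received_set = [p[0]["content"] for p in pairs]   # Received E-mail Dataset
response_set = [p[1]["content"] for p in pairs]   # Response E-mail Dataset
```

Keeping the two lists aligned by position preserves the received-response relationship that the models rely on.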

After general data processing, we need to process the data further to fit the different models. The data processing methods for the TF-IDF and Doc2Vec models are similar, including word lowercasing, word tokenisation, stop word removal and word stemming. Processing data for the GRU model, however, requires more effort. We need to separate each sentence in order to generate a sentence dictionary in which each sentence has a unique index, and then apply the Sent2Vec model to train the sentence vectors, which are used as the embedding layer of the GRU model. This processing therefore includes text standardisation, sentence tokenisation, generating the sentence list, building the Sent2Vec model, mapping sentences and preparing the embedding layer.
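The sentence dictionary for the GRU model can be illustrated with a short sketch. The splitting rule (a naive regular expression on sentence-final punctuation), the lower-casing, and the reservation of index 0 for padding are all assumptions made for the example, as are the function names.

```python
import re


def split_sentences(text):
    """Naive sentence tokeniser: split after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def build_sentence_index(emails):
    """Map each distinct sentence to a unique index and encode every E-mail."""
    index = {}
    encoded = []
    for body in emails:
        ids = []
        for sentence in split_sentences(body):
            key = sentence.lower()
            if key not in index:
                index[key] = len(index) + 1  # 0 is kept free for padding (assumption)
            ids.append(index[key])
        encoded.append(ids)
    return index, encoded


emails = [
    "Thanks for the update. I will review it today.",
    "Thanks for the update. See you Friday!",
]
index, encoded = build_sentence_index(emails)
print(encoded)
```

Each E-mail becomes a sequence of sentence indices, which is the form an embedding layer built from sentence vectors would consume.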

4.2. Parameter Tuning

Following the design flow of the three models in the previous section, we trained each model and used recall@K to self-evaluate them. Here we only perform parameter tuning for the TF-IDF and Doc2Vec models. We do not consider the GRU-Sent2Vec model because of its limitations, which are discussed later.

This process randomly selects 200 documents from the training corpus as queries, infers vectors for these documents and compares them with the vectors in the training corpus. The self-evaluation is based on the similarity between each query and the identical document in the corpus.

We treat the test dataset consisting of these 200 documents as new data and evaluate the models’ responses to them. The expected result is that, for each of the 200 test queries, the same document in the training set is retrieved in either the first position or one of the first three positions. The formula is expressed as Equation (1):
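Read this way, the measure is the share of queries whose own source document appears among the top K retrieved documents, that is, recall@K = (number of queries with the source document in the top K) / (number of queries). The sketch below implements that reading; it is an interpretation of the description above, and the function name and toy rankings are hypothetical.

```python
def recall_at_k(rankings, true_ids, k):
    """Fraction of queries whose true document id appears in the top-k ranking."""
    hits = sum(1 for ranked, truth in zip(rankings, true_ids) if truth in ranked[:k])
    return hits / len(true_ids)


# Four toy queries: each ranking lists document ids, most similar first.
rankings = [
    [3, 1, 2],
    [0, 2, 1],
    [2, 0, 1],
    [1, 3, 0],
]
true_ids = [3, 1, 0, 0]

recall_1 = recall_at_k(rankings, true_ids, k=1)
recall_3 = recall_at_k(rankings, true_ids, k=3)
print(recall_1, recall_3)
```

With 200 queries drawn from the training corpus, `true_ids` would simply be the corpus positions of the sampled documents.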

We find that the most influential parameter for this model is the N-gram size (Table 1). The higher the value of N, the higher the accuracy (Recall rate@1), although N does not seem to have much impact on the top-three recall rate. Meanwhile, however, the training time increases exponentially. Since our training dataset is not large, we ignore the time cost and select Ngram = (1, 4) as the model parameter for TF-IDF. The results are shown in Table 1.
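To see why a wider N-gram range enlarges the feature space, and with it the training cost, the sketch below lists the word n-grams that a range of (1, 4) produces for one short message. It is a plain-Python illustration; `word_ngrams` is a hypothetical helper and not the vectoriser used in the experiments.

```python
def word_ngrams(tokens, min_n=1, max_n=4):
    """Return all contiguous word n-grams with min_n <= n <= max_n."""
    grams = []
    for n in range(min_n, max_n + 1):
        for i in range(len(tokens) - n + 1):
            grams.append(" ".join(tokens[i:i + n]))
    return grams


tokens = "please send the meeting agenda today".split()
unigrams = word_ngrams(tokens, 1, 1)
up_to_four = word_ngrams(tokens, 1, 4)
print(len(unigrams), len(up_to_four))
```

For this six-word message the number of features per document triples when moving from unigrams to the (1, 4) range, and longer n-grams are also far less likely to repeat across documents, so the vocabulary grows quickly.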

Compared with TF-IDF, the Doc2Vec model has more parameters. Eleven parameter combinations were selected to conduct eleven rounds of training for the model (M1–M11). The test results are shown in Table 2 and Figure 9.

After all the tests, we find that a vector size of 150, a window value of 5 and 1500 epochs are most suitable for the training dataset. The results also indicate that the Negative Sampling training method is generally superior to the Hierarchical Softmax training method. Within the Negative Sampling training mode, M3 with the Distributed Memory (DM) architecture has the highest top-3 recall rate and a reasonable top-1 recall rate, while M9 with the Distributed Bag of Words (DBOW) architecture has the highest accuracy (Recall rate@1). To further confirm our parameter selection, we compare the similarity of the 200 documents tested by the two groups (Figure 9), and find that M9 is more stable than M3. The training time of M9 is also highly competitive.

4.3. Setup for Human Evaluation

Among the various methods for evaluating the effects of Natural Language Generation (NLG), there are some methods for automatic evaluation, such as NIST, BLEU, and ROUGE. After comparing various assessment methods, Belz and Reiter [28] concluded that automated evaluation methods have great potential, but that the best way to evaluate NLG models is through human assessment. Moreover, our training dataset contains untagged information, and we could not find a uniform standard or a unified model to evaluate these three models simultaneously.

Since there is not much similarity between E-mails in the dataset (Enron E-mail Dataset), it is difficult to evaluate the functional performance and learning capabilities of the three models. To compare the effects of these models, we design the test data from the training data according to the following rules: change the entity nouns (such as time, place and name); change the sentence order of the paragraph; add some information to the E-mail; delete some information from the E-mail; change the expression of the sentence.

We use two criteria to evaluate the performance of the three models. In the first case, we compare the final best responses given by the three models. In other words, we select only the responses of the top-1 related E-mails extracted by the Doc2Vec-based and TF-IDF-based models, together with the predicted response generated by the GRU-Sent2Vec hybrid model, to compare the final results of our experiment. In the second case, we compare only the two information retrieval models. After the first four rounds of training on new test E-mails, the fifth designed E-mail enters the two models as a query. The two models may then extract the four similar E-mails with different performance. We list the top five similar E-mails extracted by each model with their corresponding similarity scores, and five participants from different academic fields then choose, based on subjective judgement, the model whose suggestions match the query most closely.

4.4. SEMS Client Implementation

To display the results and analyse the experimental effect intuitively, we designed a simple client for visualisation. Users can choose either to reply directly or to use and modify intelligent suggestions. If the latter is chosen, clicking the button on the robot in Figure 10 presents three suggested responses from each of the three models.

4.5. Results and Discussion

4.5.1. Comparison of the Three Models

Figure 11 shows an example that is taken from topic 1 of the experimental results from the three models. As we mentioned earlier, these three models use two different methods. Therefore, in the first stage of human evaluation, we make subjective selections on the final response suggestions, which are also the final results of our experiment. We select the top 1 response from two information retrieval models as their best response suggestions. Also, the one predictive response generated by the GRU-Sent2Vec hybrid model is treated as the object of evaluation for this model.

After several rounds of training, the three models each suggested responses to five new E-mails. The overall result of the first round of evaluation is shown in Figure 12. In this subjective evaluation, five participants chose the relevant answers for each new E-mail in a multiple-choice process. The results show that TF-IDF and Doc2Vec, the two information retrieval models, performed better than the information generation model.

The GRU-Sent2Vec hybrid model is not ideal for several reasons. Although we anticipated this result at the beginning, the main reason is the quality of our training corpus. First, for deep neural networks, learning relatively accurate feature rules requires vast datasets. The total number of sentences in our training dataset is 99,431, which is not sufficient. Second, the average number of repeated sentences in the training dataset is only 2.3 (Figure 13), while the majority of sentences appear only once. Such a low rate of sentence repetition can hardly provide adequate learning information for deep neural networks.

We expect that, given the right environment, the GRU-Sent2Vec hybrid model can predict and generate a series of sentences as the output according to input sentences. Figure 14 presents its workflow design.

4.5.2. Comparison of the Two Information Retrieval Models

Comparison of Learning Ability: First, we compared the learning ability of the Doc2Vec and TF-IDF Models. Since our training dataset initially did not contain many similar E-mails, we conducted five rounds of training on the models using five similar test E-mails designed and generated for each topic in the previous section. If at the end of each round of training, the model could always find the similar E-mails learned in previous rounds from the database for new E-mails, it meant that the model had the ability to learn new information. The specific process is as follows:

We tested it by simply replacing the names of five randomly selected E-mails from the original training dataset. Both models found the five related original E-mails in the first most similar ranking.

We put the first round of E-mails together with the responses of corresponding designs into the training set, and then we modified the five selected topic E-mails by changing the order of the sentences. In the second round of testing, the two models found 10 related E-mails in the first two most similar rankings including the original 5 E-mails and the 5 E-mails put into the training set after the first round.

We put the E-mails from the second round of E-mail modification into the training set together with the replies from the corresponding designs, and then we modified the 5 selected topic E-mails by adding some information. The results of the third round of testing showed that, in the first 3 most similar rankings, TF-IDF found 14 related E-mails while Doc2Vec found all 15 related E-mails, comprising the original 5 E-mails and the 10 E-mails put into the training set after the first two rounds.

We put the E-mails from the third round of E-mail modification into the training set together with the replies from the corresponding designs, and then we modified the 5 selected topic E-mails by deleting some information. In the fourth round of tests, TF-IDF found 18 related E-mails in the first 5 most similar rankings, while Doc2Vec found 19, out of the original 5 E-mails and the 15 E-mails put into the training set after the first three rounds.

We put the E-mails from the fourth round of design into the training set together with the replies from the corresponding designs, and then we modified the 5 selected topic E-mails by changing the expression of some information. The results of the fifth round of testing showed that, in the first 4 most similar rankings, TF-IDF found 19 related E-mails and Doc2Vec found 23, out of the original 5 E-mails and the 20 E-mails put into the training set after the first four rounds.

After five rounds of training, the results of each round reflect the models’ learning abilities. The Doc2Vec model showed a more stable learning ability, especially in the fifth round: when the semantic expression was changed, it resolved the information better than the TF-IDF model. This indicates that Doc2Vec is capable of extracting semantic correlations between words in an article. The results are shown in Figure 15.
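The counts reported for the five rounds can be turned into per-round hit rates with a few lines of Python. The numbers are taken directly from the round descriptions above; only the variable names are new.

```python
# (round, related E-mails to find, found by TF-IDF, found by Doc2Vec), as reported above
rounds = [
    (1, 5, 5, 5),
    (2, 10, 10, 10),
    (3, 15, 14, 15),
    (4, 20, 18, 19),
    (5, 25, 19, 23),
]

hit_rates = {
    rnd: {"tfidf": tfidf / total, "doc2vec": doc2vec / total}
    for rnd, total, tfidf, doc2vec in rounds
}

for rnd, rates in hit_rates.items():
    print(f"round {rnd}: TF-IDF {rates['tfidf']:.0%}, Doc2Vec {rates['doc2vec']:.0%}")
```

In the fifth round, where the wording of the E-mails was changed, this gives 76% for TF-IDF against 92% for Doc2Vec, which is the gap the discussion above refers to.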

Comparison of Effect: After five rounds of training, five participants subjectively evaluated the accuracy of the two information retrieval models and compared the similarity scores. Figure 16 presents five new test E-mails representing the five topics. For each topic, the five E-mails with the highest similarity were extracted from the training dataset by each of the two models. The five participants then compared the results extracted by the two models under the five topics and selected between them in a single-selection process, giving twenty-five selections in total.

From the results of the selection data given by the five participants, we concluded that the effect of the Doc2Vec model was significantly better than that of the TF-IDF model from the perspective of subjective evaluation.

5. Conclusions

This paper presents a Smart Email Management System (SEMS), a software application and design solution. SEMS is based on machine learning and deep learning technologies and improves the effectiveness of people who must handle the practical problems of using E-mail in daily life and corporate business. First, we designed a novel GRU-Sent2Vec hybrid model that predicts responses at the sentence level, going beyond the limits of word-level prediction. Although the quality of the training corpus limits its effect, the results are informative and have the potential to guide further development for industrial applications. The evaluation of the effects has a subjective component, so we designed a set of human evaluation questionnaires. The results of the questionnaire survey showed that this research project has significant application value.

Our experimental models gave coherent and reasonable responses after analysing newly received E-mails. However, there are still many limitations and challenges that we plan to address in future. The first limitation is the training dataset. Unlike E-mail datasets from help desks or customer service centres, which contain many similar inquiries from customers, our experimental dataset has very little similarity between E-mails because the E-mails were collected from Enron employees. In future, we plan to approach some customer service centres to enrich our dataset. The second limitation is the computing power required to run machine learning or deep learning algorithms. The cost of training our models, especially the GRU model, was very high. In future, we plan to minimise our training cost.