Robustness of Rhythmic-Based Dynamic Hand Gesture with Surface Electromyography (sEMG) for Authentication

Abstract

1. Introduction

- Password-based: convenient because input devices are readily available (e.g., keyboard, touchscreen), but passwords can be difficult to memorize and are easily attacked by impostors.

- Token-based: each token is unique and changes over time, which also makes it inconvenient, as a token can only be used once and a new one is required for the next access.

- Key-based: unlike a token, a key does not change, and unlike a password, it does not require memorization. However, it is inconvenient because it must be carried around in either physical or digital form.

- Static biometrics: a physical trait unique to a person, e.g., fingerprint or iris. It is robust, convenient, and requires no memorization. However, once stolen, it cannot be easily reset.

- Dynamic biometrics: a person's behavior, e.g., gait or gesture. It may require some memorization, but after some repetition it can become embedded in muscle memory [10]. It is considered less robust because it changes over time with the person's behavior, but this can also be an advantage, as it makes the trait difficult for impostors to mimic.

2. Methodology

2.1. Equipment and Technology

2.2. Comparison with Other Biometric Modalities

2.3. Data Capturing

2.4. Data Processing and Analyzing

3. Results

- training data set from a different scenario than the testing data set (e.g., sitting scenario for training, with either standing or walking scenario for testing),

- comparisons between single fitting and multiple fittings,

- comparisons between no rotation and virtual rotation, and

- mimicking other participants' gestures.

3.1. Different Scenario Training and Testing

- Training = Sitting scenario; Testing = Standing scenario.

- Training = Sitting scenario; Testing = Walking scenario.

- Training = Standing scenario; Testing = Sitting scenario.

- Training = Standing scenario; Testing = Walking scenario.

- Training = Walking scenario; Testing = Sitting scenario.

- Training = Walking scenario; Testing = Standing scenario.
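The six combinations above are every ordered pair of distinct scenarios drawn from sitting, standing, and walking. A minimal Python sketch of how such cross-scenario splits could be generated follows; the dictionary layout (`samples_by_scenario`), the function name, and the placeholder data are hypothetical and not taken from the authors' implementation.

```python
from itertools import permutations

SCENARIOS = ("Sitting", "Standing", "Walking")

def cross_scenario_splits(samples_by_scenario):
    """Yield (train_scenario, test_scenario, train, test) for every ordered
    pair of distinct scenarios: train on one posture, test on another."""
    for train_s, test_s in permutations(SCENARIOS, 2):
        yield (train_s, test_s,
               samples_by_scenario[train_s], samples_by_scenario[test_s])

# Hypothetical placeholder data: one list of recordings per scenario.
data = {s: [f"{s.lower()}_{i}" for i in range(3)] for s in SCENARIOS}
for train_s, test_s, train, test in cross_scenario_splits(data):
    print(f"Training = {train_s} scenario; Testing = {test_s} scenario")
```

`itertools.permutations` emits the pairs in the same order as the list above, so each split maps directly to one bullet.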

3.2. One-Time Fitting with Multiple Similar Gestures versus Multiple Fittings with Single Similar Gesture

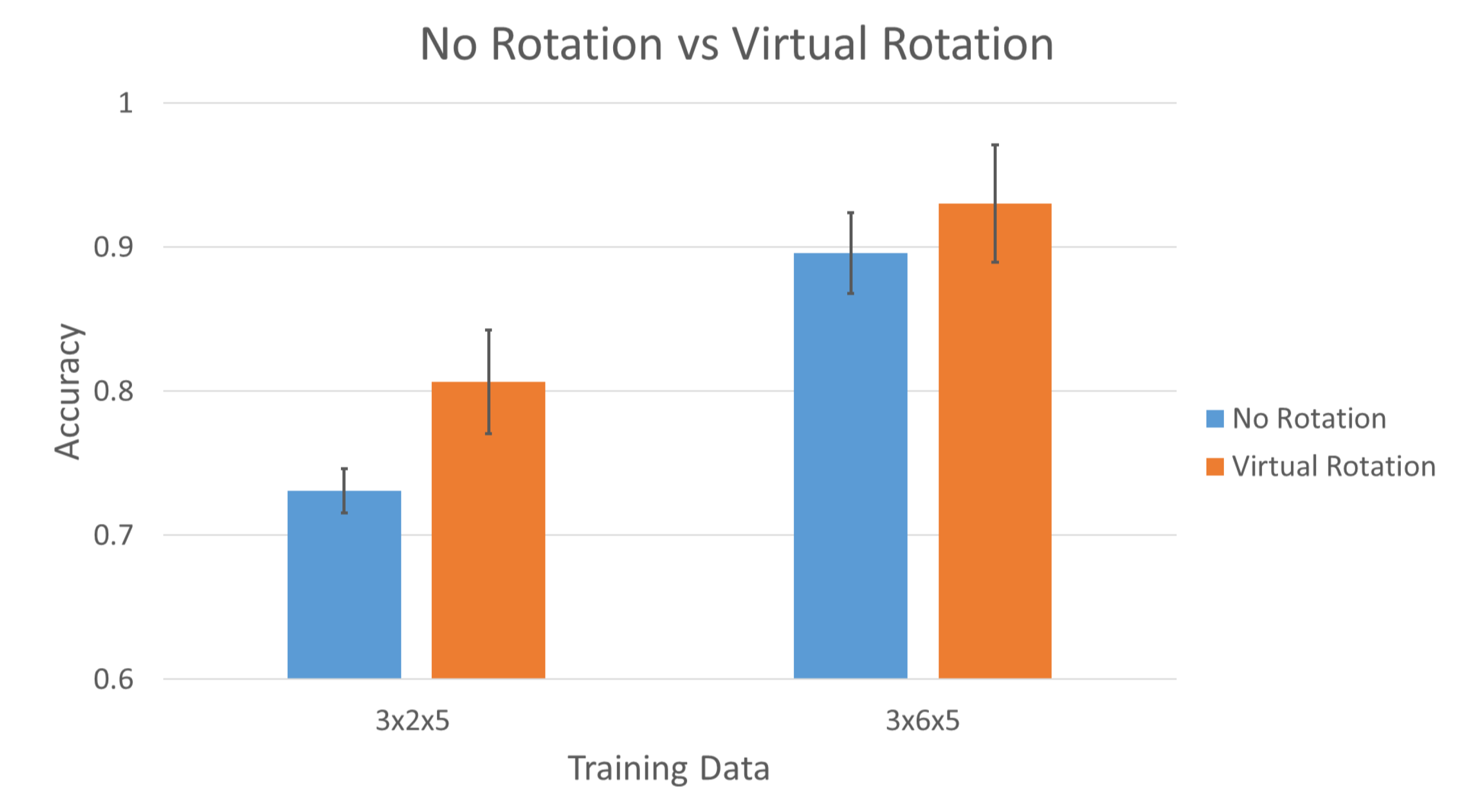
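Reading the summary table's Scenarios × Fittings × Gestures notation, the two training designs compared here are 3 × 1 × 5 (one armband fitting, five similar gestures) and 3 × 5 × 1 (five fittings, one gesture each). Both contain 15 training samples, so the comparison isolates variation across fittings rather than the amount of data. The sketch below makes that explicit with hypothetical (scenario, fitting, gesture) index tuples; this reading of the factors is an interpretation of the table header, not a description of the authors' code.

```python
from itertools import product

def training_indices(n_scenarios, fittings, gestures):
    """Enumerate hypothetical (scenario, fitting, gesture) index tuples
    for one training design, e.g. 3 x 1 x 5 or 3 x 5 x 1."""
    return list(product(range(n_scenarios), fittings, gestures))

def distinct_fittings(indices):
    """Count how many separate armband fittings a design draws from."""
    return len({fitting for _, fitting, _ in indices})

# One-time fitting with multiple similar gestures: 3 x 1 x 5.
one_time = training_indices(3, fittings=[0], gestures=range(5))
# Multiple fittings with a single similar gesture: 3 x 5 x 1.
multiple = training_indices(3, fittings=range(5), gestures=[0])

print(len(one_time), distinct_fittings(one_time))   # 15 1
print(len(multiple), distinct_fittings(multiple))   # 15 5
```

With the same amount of training data, the summary table reports higher accuracy for the multiple-fitting design (0.8723 versus 0.7377).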

3.3. Virtual Rotation versus No Rotation

- Training = Session 1; Testing = Session 2.

- Training = Session 2; Testing = Session 1.

- Training = Sessions 2, 3, 4; Testing = Session 1.

- Training = Sessions 1, 3, 4; Testing = Session 2.

- Training = Sessions 1, 2, 4; Testing = Session 3.

- Training = Sessions 1, 2, 3; Testing = Session 4.
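Both groups above follow a leave-one-session-out pattern: each session is held out for testing once while the remaining sessions form the training set. The number of splits this produces (2 for two sessions, 4 for four) is consistent with the Cross Validation column of the summary table. The sketch below assumes recordings are grouped in a hypothetical `samples_by_session` dictionary keyed by session number; the two-fittings-per-session placeholder data is also hypothetical, and this is an illustration rather than the authors' implementation.

```python
def session_splits(samples_by_session):
    """Leave-one-session-out: hold out each session once for testing and
    train on the concatenated recordings of all remaining sessions."""
    sessions = sorted(samples_by_session)
    for held_out in sessions:
        train_ids = [s for s in sessions if s != held_out]
        train = [x for s in train_ids for x in samples_by_session[s]]
        yield train_ids, held_out, train, samples_by_session[held_out]

# Hypothetical data: two fittings per session, labeled "s<session>f<fitting>".
four = {s: [f"s{s}f{f}" for f in (1, 2)] for s in (1, 2, 3, 4)}
for train_ids, held_out, train, test in session_splits(four):
    print(f"Training = Sessions {train_ids}; Testing = Session {held_out}")
```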

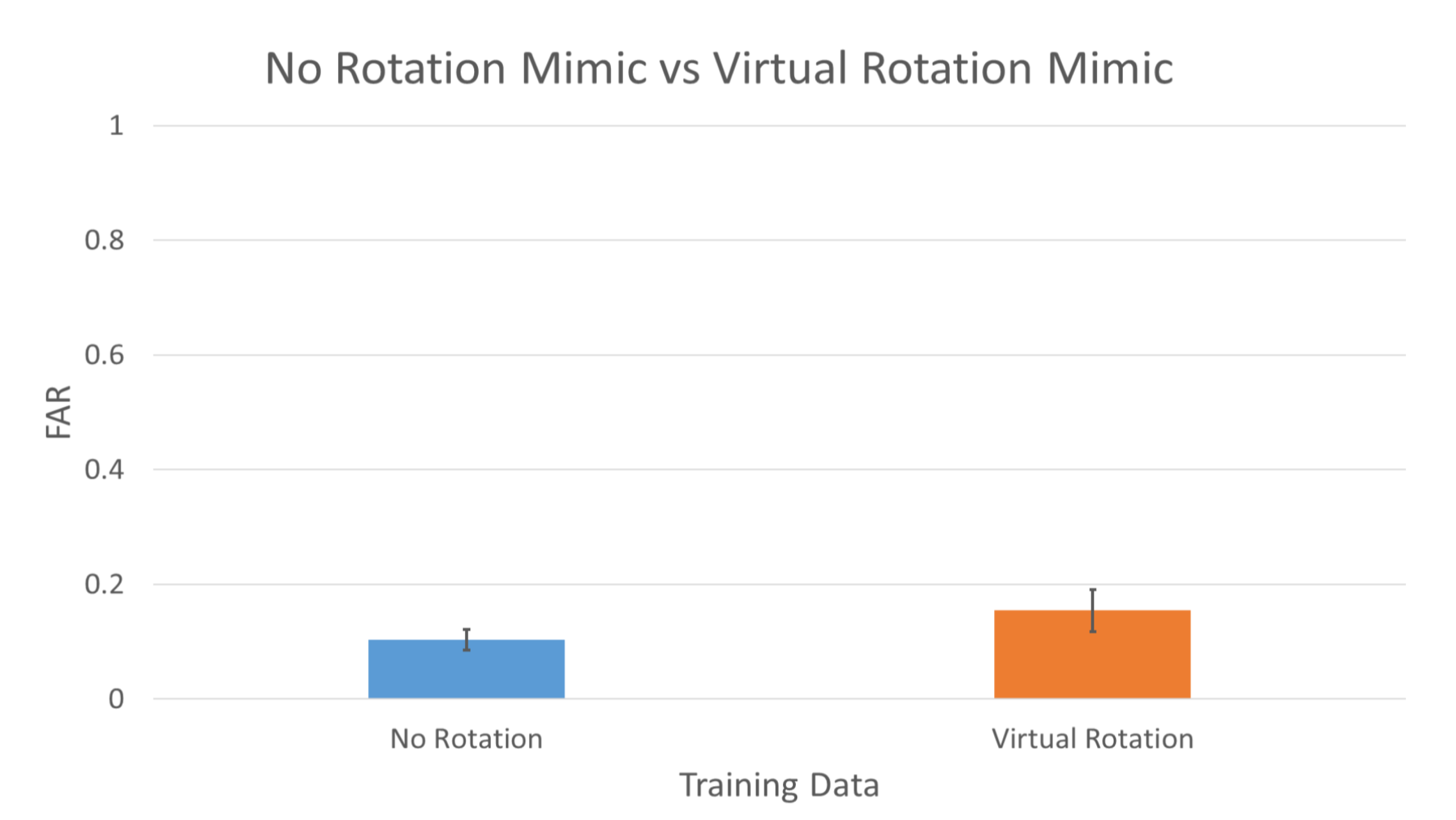
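The text does not describe how virtual rotation is computed. One plausible interpretation, stated here purely as an assumption, is that it simulates the armband being worn rotated around the forearm: for electrodes arranged in a ring, rotating the band by k electrode positions approximately shifts the channel order circularly by k. The sketch below implements that circular-shift augmentation in plain Python; `rotate_channels`, `augment_with_rotations`, the list-of-frames layout, and the 8-channel example are all hypothetical.

```python
def rotate_channels(sample, k):
    """Circularly shift the channel order of one recording by k positions.

    ASSUMPTION: `sample` is a list of frames, each a list of per-channel
    values from electrodes arranged in a ring, so shifting channel order
    approximates wearing the armband rotated by k electrodes."""
    n = len(sample[0])
    k %= n  # normalise, so a negative k rotates the other way
    return [frame[-k:] + frame[:-k] for frame in sample]

def augment_with_rotations(samples):
    """Expand each recording into all of its circular shifts (shift 0 is the
    original), so training covers every simulated armband placement."""
    return [rotate_channels(s, k) for s in samples for k in range(len(s[0]))]

# Hypothetical 8-channel recording with two frames.
rec = [[1, 2, 3, 4, 5, 6, 7, 8], [10, 20, 30, 40, 50, 60, 70, 80]]
print(rotate_channels(rec, 1)[0])  # [8, 1, 2, 3, 4, 5, 6, 7]
```

If virtual rotation works along these lines, it makes the classifier more tolerant of armband placement. The summary table is consistent with that trade-off: rotation raises accuracy in the session experiments but also raises FAR against mimicked gestures (0.1545 versus 0.1038).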

3.4. Virtual Rotation Mimic versus No Rotation Mimic

- Training = All Genuine User Data + Impostor Mimic Data 2, 3, 4; Testing = Impostor Mimic Data 1.

- Training = All Genuine User Data + Impostor Mimic Data 1, 3, 4; Testing = Impostor Mimic Data 2.

- Training = All Genuine User Data + Impostor Mimic Data 1, 2, 4; Testing = Impostor Mimic Data 3.

- Training = All Genuine User Data + Impostor Mimic Data 1, 2, 3; Testing = Impostor Mimic Data 4.
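In this protocol, all genuine user data stays in training while the four impostor mimic sets rotate, with one held out per split. Because the held-out set contains only impostor attempts, only FAR can be measured, which is consistent with the N/A entries for Accuracy and FRR in the mimic rows of the summary table. The sketch below assumes a classifier produces one accept/reject decision per mimic attempt; `mimic_splits`, `mimic_far`, and the placeholder data are hypothetical.

```python
def mimic_splits(genuine, mimic_sets):
    """Genuine user data always trains; each impostor mimic set is held out
    once for testing while the other mimic sets join the training data."""
    ids = sorted(mimic_sets)
    for held_out in ids:
        train = list(genuine) + [x for i in ids if i != held_out
                                 for x in mimic_sets[i]]
        yield held_out, train, mimic_sets[held_out]

def mimic_far(accepted):
    """FAR on an impostor-only test set: accepted attempts / all attempts."""
    return sum(accepted) / len(accepted)

# Hypothetical data: two genuine recordings and four mimic sets.
sets = {i: [f"mimic{i}"] for i in (1, 2, 3, 4)}
for held_out, train, test in mimic_splits(["genuine_a", "genuine_b"], sets):
    print(f"Testing = Impostor Mimic Data {held_out}; training size = {len(train)}")

# Example: 1 of 5 mimic attempts wrongly accepted.
print(mimic_far([True, False, False, False, False]))  # 0.2
```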

3.5. Summary of Results

4. Discussion

5. Materials

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| EMG | electromyography |
| sEMG | surface electromyography |
| FAR | false acceptance rate |
| FRR | false rejection rate |
| TAR | true acceptance rate |

References

- Liu, C.; Clark, G.D.; Lindqvist, J. Where Usability and Security Go Hand-in-Hand: Robust Gesture-Based Authentication for Mobile Systems. In Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 6–11 May 2017; pp. 374–386.

- Ometov, A.; Bezzateev, S.; Mäkitalo, N.; Andreev, S.; Mikkonen, T.; Koucheryavy, Y. Multi-Factor Authentication: A Survey. Cryptography 2018, 2, 1.

- Harini, N.; Padmanabhan, T.R. 2CAuth: A New Two Factor Authentication Scheme Using QR-Code. Int. J. Eng. Technol. 2013, 5, 1087–1094.

- Abhishek, K.; Roshan, S.; Kumar, P.; Ranjan, R. A Comprehensive Study on Multifactor Authentication Schemes. In Advances in Intelligent Systems and Computing; Springer: Berlin, Germany, 2013; Volume 177, pp. 561–568.

- Ogbanufe, O.; Kim, D.J. Comparing Fingerprint-Based Biometrics Authentication versus Traditional Authentication Methods for e-Payment. Decis. Support Syst. 2018, 106, 1–14.

- Porubsky, J. Biometric Authentication in M-Payments: Analysing and Improving End-Users’ Acceptability. Master’s Thesis, Luleå University of Technology, Luleå, Sweden, 2020.

- Normalini, M.K.; Ramayah, T. Biometrics Technologies Implementation in Internet Banking Reduce Security Issues? Procedia Soc. Behav. Sci. 2012, 65, 364–369.

- Porwik, P. The Biometric Passport. The Technical Requirements and Possibilities of Using. In Proceedings of the 2009 International Conference on Biometrics and Kansei Engineering, Cieszyn, Poland, 25–28 June 2009; pp. 65–69.

- Malčík, D.; Drahanský, M. Anatomy of Biometric Passports. J. Biomed. Biotechnol. 2012, 2012, 490362.

- Zaharis, A.; Martini, A.; Kikiras, P.; Stamoulis, G. User Authentication Method and Implementation Using a Three-Axis Accelerometer. Lect. Notes Inst. Comput. Sci. Soc. Telecommun. Eng. 2010, 45, 192–202.

- O’Gorman, L. Comparing Passwords, Tokens, and Biometrics for User Authentication. Proc. IEEE 2003, 91, 2021–2040.

- Janakiraman, R.; Sim, T. Keystroke Dynamics in a General Setting. Lect. Notes Comput. Sci. 2007, 4642, 584–593.

- Ho, J.; Kang, D.K. Sequence Alignment of Dynamic Intervals for Keystroke Dynamics Based User Authentication. In Proceedings of the 2014 Joint 7th International Conference on Soft Computing and Intelligent Systems (SCIS) and 15th International Symposium on Advanced Intelligent Systems, Kitakyushu, Japan, 3–6 December 2014; pp. 1433–1438.

- Wong, A.M.H.; Furukawa, M.; Ando, H.; Maeda, T. Dynamic Hand Gesture Authentication Using Electromyography (EMG). In Proceedings of the 2020 IEEE/SICE International Symposium on System Integration (SII), Honolulu, HI, USA, 12–15 January 2020; pp. 300–304.

- Visconti, P.; Gaetani, F.; Zappatore, G.A.; Primiceri, P. Technical Features and Functionalities of Myo Armband: An Overview on Related Literature and Advanced Applications of Myoelectric Armbands Mainly Focused on Arm Prostheses. Int. J. Smart Sens. Intell. Syst. 2018, 11, 1–25.

- Merletti, R.; Farina, D. Analysis of Intramuscular Electromyogram Signals. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2009, 367, 357–368.

- Schofield, J.R. Electrocardiogram Signal Quality Comparison Between a Dry Electrode and a Standard Wet Electrode over a Period of Extended Wear. Master’s Thesis, Cleveland State University, Cleveland, OH, USA, 2012.

- Laferriere, P.; Lemaire, E.D.; Chan, A.D.C. Surface Electromyographic Signals Using Dry Electrodes. IEEE Trans. Instrum. Meas. 2011, 60, 3259–3268.

- Ives, J.C.; Wigglesworth, J.K. Sampling Rate Effects on Surface EMG Timing and Amplitude Measures. Clin. Biomech. 2003, 18, 543–552.

- Phinyomark, A.; Scheme, E. A Feature Extraction Issue for Myoelectric Control Based on Wearable EMG Sensors. In Proceedings of the IEEE Sensors Applications Symposium (SAS), Seoul, Korea, 12–14 March 2018; pp. 1–6.

- Wong, A.M.H.; Kang, D.K. Stationary Hand Gesture Authentication Using Edit Distance on Finger Pointing Direction Interval. Sci. Program. 2016, 2016, 427980.

- Kuo, A.D.; Donelan, J.M. Dynamic Principles of Gait and Their Clinical Implications. Phys. Ther. 2010, 90, 157–174.

- El-abed, M.; Charrier, C.; Rosenberger, C. Evaluation of Biometric Systems. In New Trends and Developments in Biometrics; Yang, J., Xie, S.J., Eds.; IntechOpen: London, UK, 2012; pp. 149–169.

- Roslan, N.I.; Salimin, N.; Idayu Mat Roh, N.S.; Anini Mohd Rashid, N. Possible Conditions of FTE and FTA in Fingerprint Recognition System and Countermeasures. In Proceedings of the 2018 4th International Conference on Computer and Information Sciences (ICCOINS), Kuala Lumpur, Malaysia, 13–14 August 2018; pp. 1–5.

- Hariri, W. Efficient Masked Face Recognition Method during the COVID-19 Pandemic. Research Square. 2020. Available online: https://www.researchsquare.com/article/rs-39289/v2 (accessed on 12 December 2020).

- Anwar, A.; Raychowdhury, A. Masked Face Recognition for Secure Authentication. arXiv 2020, arXiv:2008.11104.

- Connor, P.; Ross, A. Biometric Recognition by Gait: A Survey of Modalities and Features. Comput. Vis. Image Underst. 2018, 167, 1–27.

- Bartuzi, P.; Tokarski, T.; Roman-Liu, D. The Effect of the Fatty Tissue on EMG Signal in Young Women. Acta Bioeng. Biomech. 2010, 12, 87–92.

- Ptaszkowski, K.; Wlodarczyk, P.; Paprocka-Borowicz, M. The Relationship between the Electromyographic Activity of Rectus and Oblique Abdominal Muscles and Bioimpedance Body Composition Analysis—A Pilot Observational Study. Diabetes Metab. Syndr. Obes. Targets Ther. 2019, 12, 2033–2040.

- Marasco, E.; Ross, A. A Survey on Antispoofing Schemes for Fingerprint Recognition Systems. ACM Comput. Surv. 2014, 47.

- Ducray, B. Authentication by Gesture Recognition: A Dynamic Biometric Application. Ph.D. Thesis, Royal Holloway, University of London, London, UK, 2017.

- Hitchcock, D.C. Evaluation and Combination of Biometric Authentication. Master’s Thesis, University of Florida, Gainesville, FL, USA, 2003.

- Engelsma, J.J.; Cao, K.; Jain, A.K. Learning a Fixed-Length Fingerprint Representation. IEEE Trans. Pattern Anal. Mach. Intell. 2019.

- Niwa, M.; Okada, S.; Sakaguchi, S.; Azuma, K.; Iizuka, H.; Ando, H.; Maeda, T. Detection and Transmission of “Tsumori”: An Archetype of Behavioral Intention in Controlling a Humanoid Robot. In Proceedings of the 20th International Conference on Artificial Reality and Telexistence (ICAT), University of South Australia, Adelaide, Australia, 1–3 December 2010; pp. 193–196.

- Peralta, D.; Triguero, I.; Sanchez-Reillo, R.; Herrera, F.; Benitez, J.M. Fast Fingerprint Identification for Large Databases. Pattern Recognit. 2014, 47, 588–602.

- Sanchez, A.J.; Romero, L.F.; Tabik, S.; Medina-Pérez, M.A.; Herrera, F. A First Step to Accelerating Fingerprint Matching Based on Deformable Minutiae Clustering. Lect. Notes Comput. Sci. 2018, 11160, 361–371.

- Qu, X.; Wei, T.; Peng, C.; Du, P. A Fast Face Recognition System Based on Deep Learning. In Proceedings of the 2018 11th International Symposium on Computational Intelligence and Design (ISCID), Hangzhou, China, 8–9 December 2018; Volume 1, pp. 289–292.

- Matsumoto, T.; Matsumoto, H. Impact of Artificial Gummy Fingers on Fingerprint Systems. Opt. Secur. Counterfeit Deterrence Tech. 2002, 4677, 275–289.

- Yang, W.; Wang, S.; Hu, J.; Zheng, G.; Valli, C. Security and Accuracy of Fingerprint-Based Biometrics: A Review. Symmetry 2019, 11, 141.

| Comparisons | Fingerprint (Static) | Face Recognition (Static) | Gait (Dynamic) | Camera-Based Hand Gesture (Dynamic) | Rhythmic Hand Gesture with EMG (Dynamic) |
|---|---|---|---|---|---|
| Gesture Performing Time | Short: 1 s or less | Short: 1 s or less | Long: a few seconds to minutes | Medium: 1 to 5 s | Medium: 1 to 5 s |
| Failure to Acquire (FTA) | High due to skin condition | High due to facial accessories | High due to type of clothing | Medium due to field of view and obstruction | Low, but can be affected by body fat |
| Prone to Mimicking | Medium, as it leaves latent prints | Easy, as it can be observed | Easy to observe but requires training | Easy to observe but requires training | Difficult, as it gives few cues |
| Resettable | No | No | Yes | Yes | Yes |
| Amount of Data | Small: a few kilobytes | Medium: kilobytes to megabytes | Large: up to gigabytes with frequent updates | Large: up to gigabytes with frequent updates | Large: up to gigabytes with frequent updates |

| Training (Scenarios × Fittings × Gestures) | Testing (Scenarios × Fittings × Gestures) | Rotation | Cross Validation | Accuracy | FAR | FRR |
|---|---|---|---|---|---|---|
| Sit × 8 × 5 | Stand × 8 × 5 | No | No | 0.9360 | 0.0057 | 0.0640 |
| Sit × 8 × 5 | Walk × 8 × 5 | No | No | 0.8692 | 0.0111 | 0.1308 |
| Stand × 8 × 5 | Sit × 8 × 5 | No | No | 0.9138 | 0.0078 | 0.0862 |
| Stand × 8 × 5 | Walk × 8 × 5 | No | No | 0.8831 | 0.0099 | 0.1169 |
| Walk × 8 × 5 | Sit × 8 × 5 | No | No | 0.8869 | 0.0098 | 0.1131 |
| Walk × 8 × 5 | Stand × 8 × 5 | No | No | 0.8990 | 0.0085 | 0.1010 |
| 3 × 1 × 5 | 3 × 2 × 5 | No | No | 0.7377 | 0.0217 | 0.2623 |
| 3 × 5 × 1 | 3 × 2 × 5 | No | No | 0.8723 | 0.0106 | 0.1277 |
| 3 × 2 × 5 | 3 × 2 × 5 | No | 2 | 0.7308 ± 0.0154 | 0.0224 ± 0.0013 | 0.2692 ± 0.0154 |
| 3 × 2 × 5 | 3 × 2 × 5 | Yes | 2 | 0.8066 ± 0.0361 | 0.0161 ± 0.0030 | 0.1934 ± 0.0361 |
| 3 × 6 × 5 | 3 × 2 × 5 | No | 4 | 0.8960 ± 0.0280 | 0.0087 ± 0.0023 | 0.1041 ± 0.0280 |
| 3 × 6 × 5 | 3 × 2 × 5 | Yes | 4 | 0.9301 ± 0.0406 | 0.0058 ± 0.0034 | 0.0698 ± 0.0407 |
| Genuine 3 × 8 × 5 + Impostor 3 × 1 × 5 | Mimic 1 × 1 × 5 | No | 4 | N/A | 0.1038 ± 0.0179 | N/A |
| Genuine 3 × 8 × 5 + Impostor 3 × 1 × 5 | Mimic 1 × 1 × 5 | Yes | 4 | N/A | 0.1545 ± 0.0365 | N/A |
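The rates in the table can be computed from verification decision counts: FAR is the fraction of impostor attempts accepted, FRR the fraction of genuine attempts rejected, and TAR = 1 − FRR. In every row that reports both, Accuracy + FRR equals 1 up to rounding, which suggests the reported accuracy is the genuine acceptance rate (TAR); the text does not state this explicitly. The sketch below computes these rates and formats per-fold results as mean ± standard deviation. The function names and counts are hypothetical, and the use of the sample standard deviation (`statistics.stdev`) is an assumption, since the text does not say which estimator was used.

```python
from statistics import mean, stdev

def rates(genuine_accepted, genuine_total, impostor_accepted, impostor_total):
    """Return (TAR, FAR, FRR) from verification decision counts."""
    tar = genuine_accepted / genuine_total                    # genuine accepted
    far = impostor_accepted / impostor_total                  # impostors accepted
    frr = (genuine_total - genuine_accepted) / genuine_total  # genuine rejected
    return tar, far, frr

def summarize(per_fold):
    """Format per-fold values as 'mean ± sample standard deviation'."""
    return f"{mean(per_fold):.4f} ± {stdev(per_fold):.4f}"

# Hypothetical counts: 93 of 100 genuine and 1 of 200 impostor attempts accepted.
print(rates(93, 100, 1, 200))   # (0.93, 0.005, 0.07)
print(summarize([0.90, 0.80]))  # 0.8500 ± 0.0707
```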

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Wong, A.M.H.; Furukawa, M.; Maeda, T. Robustness of Rhythmic-Based Dynamic Hand Gesture with Surface Electromyography (sEMG) for Authentication. Electronics 2020, 9, 2143. https://doi.org/10.3390/electronics9122143
