This section reviews the squeeze-and-excitation (SE) and self-attention (SA) modules and describes how we apply them to a 3D CNN. We also analyze the importance of active movement as one way of exploiting both short-term and long-term temporal information.

3.1. Proposed SE Module

SE is a module that enhances channel interdependencies at a low computational cost. An SE module improves feature representation by explicitly modeling the interconnections between channels, so that the network becomes more sensitive to informative features. More specifically, we give the network access to global information and recalibrate filter responses in two steps, squeeze and excitation, before passing them to the next transformation.

To exploit channel dependencies, we first consider the signal in each channel of the output features. Since each convolutional filter operates only within a local receptive field and cannot use information outside this region, we apply a global spatial squeeze operation. We use global average pooling to squeeze each channel into a single numeric value. The formula of the squeeze operation is:

$$z_c = F_{sq}(u_c) = \frac{1}{H \times W \times S} \sum_{i=1}^{H} \sum_{j=1}^{W} \sum_{k=1}^{S} u_c(i, j, k) \tag{1}$$

where the transformation output, $U = [u_1, u_2, \ldots, u_C]$, is a collection of local descriptors that are expressive for the entire video. $H$, $W$, and $S$ represent height, width, and sequence length (number of frames), respectively. Bias terms are omitted to simplify the notation. To use the information aggregated in the squeeze operation, we follow it with a second operation, excitation, which aims to fully capture the channel-wise dependencies:

$$s = F_{ex}(z, \mathbf{W}) = \sigma(\mathbf{W}_2 \, \delta(\mathbf{W}_1 z)) \tag{2}$$

where $\delta$ represents the ReLU activation function, $\sigma$ is the sigmoid function, $F_{ex}$ is the excitation operation, $\mathbf{W}_1 \in \mathbb{R}^{\frac{C}{r} \times C}$, and $\mathbf{W}_2 \in \mathbb{R}^{C \times \frac{C}{r}}$.
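The squeeze and excitation steps described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the `(C, H, W, S)` array layout, the random weights, the tensor sizes, and the function names `squeeze` and `excite` are all hypothetical.

```python
import numpy as np

def squeeze(u):
    """Global average pooling over height, width and sequence: (C, H, W, S) -> (C,)."""
    return u.mean(axis=(1, 2, 3))

def excite(z, w1, w2):
    """Two bias-free FC layers: ReLU after the first, sigmoid after the second."""
    hidden = np.maximum(w1 @ z, 0.0)               # ReLU, shape (C // r,)
    return 1.0 / (1.0 + np.exp(-(w2 @ hidden)))    # sigmoid gate, shape (C,)

# Hypothetical sizes; r is the channel reduction number.
rng = np.random.default_rng(0)
C, H, W, S, r = 32, 7, 7, 16, 16
u = rng.standard_normal((C, H, W, S))
w1 = rng.standard_normal((C // r, C)) * 0.1        # assumed random weights
w2 = rng.standard_normal((C, C // r)) * 0.1

z = squeeze(u)        # one descriptor per channel
s = excite(z, w1, w2) # one gate in (0, 1) per channel
```

Each entry of `s` lies strictly between 0 and 1, so it acts as a soft gate on the corresponding channel rather than a hard selection.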

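Equations (1) and (2) present methods operating on channels. For a sequence:

$$s = F_{ex}(z, \mathbf{W}) = \sigma(\mathbf{W}_2 \, \delta(\mathbf{W}_1 z)) \tag{3}$$

where $\mathbf{W}_1 \in \mathbb{R}^{\frac{S}{d} \times S}$ and $\mathbf{W}_2 \in \mathbb{R}^{S \times \frac{S}{d}}$.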
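To make the cost of the two excitation variants concrete, the short sketch below counts the FC weights for the channel and sequence cases. It assumes, as in the standard SE design, that the first FC layer reduces the dimension by the reduction number and the second restores it, with biases omitted. The channel count `C = 64` and the helper name `se_fc_weights` are hypothetical; the 16-frame sequence and the reduction values `r = 16` and `d = 2` are the ones reported in this section.

```python
def se_fc_weights(n, reduction):
    """Weights in two bias-free FC layers mapping n -> n // reduction -> n."""
    hidden = n // reduction
    return n * hidden + hidden * n

C, S = 64, 16   # hypothetical channel count; 16-frame input sequence
r, d = 16, 2    # reduction numbers for channel and sequence

channel_weights = se_fc_weights(C, r)    # 64*4 + 4*64 = 512
sequence_weights = se_fc_weights(S, d)   # 16*8 + 8*16 = 256
```

Under these assumptions both parts add only a few hundred weights per block, which is consistent with the low computational cost claimed for SE.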

In contrast to the original SE for a 2D CNN [

16], we consider not only the channel but also the number of frames (

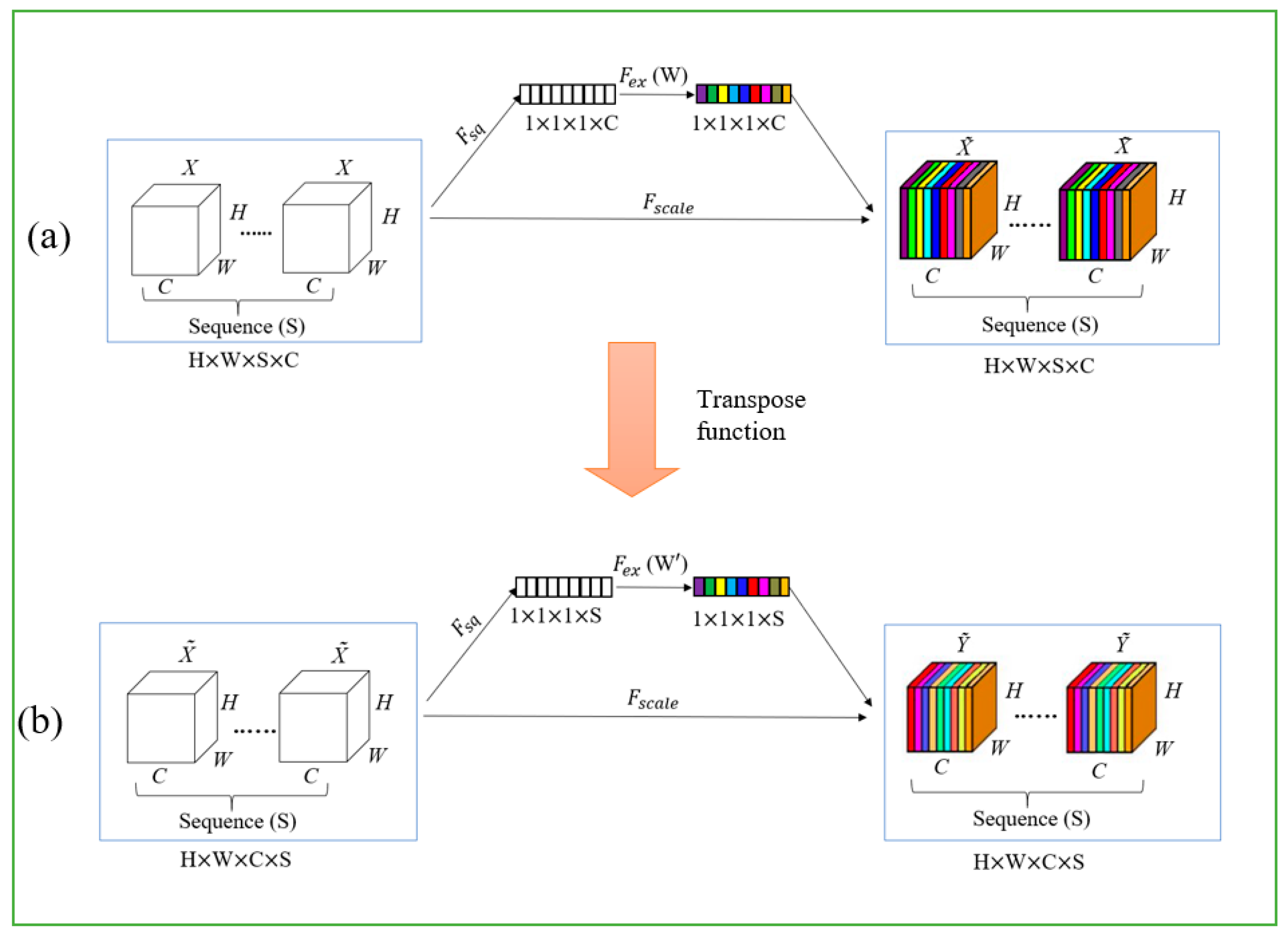

Figure 2). We consider the concatenation of channels and sequences after each layer in our network, which makes our module more effective and allows us to achieve better efficiency.

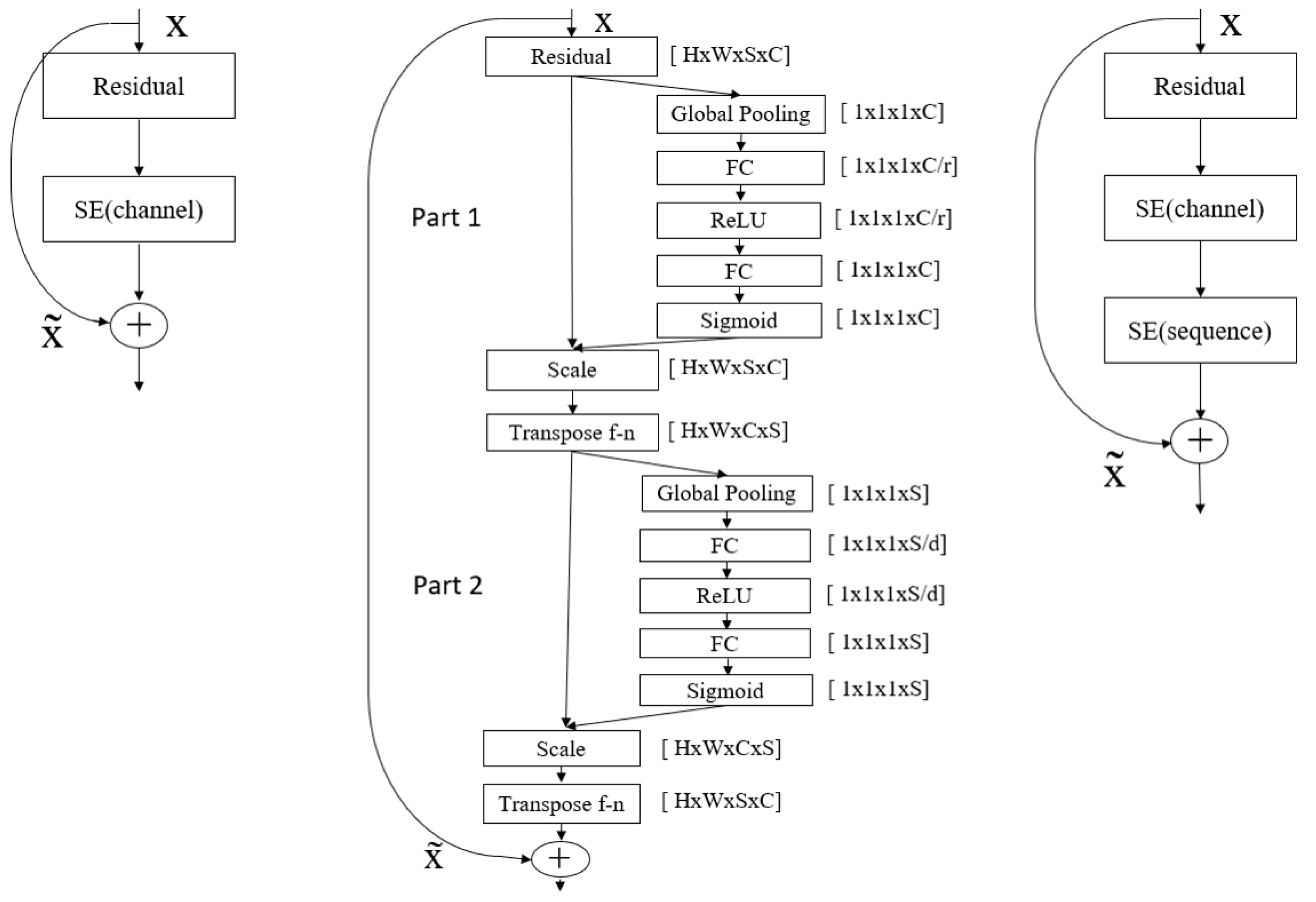

Figure 3 (left) demonstrates the SE module for image-based action recognition, and Figure 3 (middle) represents the SE (channel) and SE (sequence) parts of the proposed method for video-based action recognition, which consider both the channels and the sequence of frames. Figure 3 (right) is an abstract block diagram of Figure 3 (middle), which consists of two parts. Each part consists of global pooling, two fully connected (FC) layers, ReLU, and sigmoid. In the first part, we apply the SE block to the channels. When we implement the SE operation, we set all the dimensions (width, height, and sequence) to 1 except for the channel dimension, so SE operates only on the channels. The output of each SE block for channels ($\tilde{x}_c$) is obtained by rescaling with the activation $s$:

$$\tilde{x}_c = F_{scale}(u_c, s_c) = s_c \cdot u_c \tag{4}$$

where $F_{scale}(u_c, s_c)$ is a channel-wise multiplication of the scalar $s_c$ and the feature map $u_c$.

In the second part, to operate on the sequence, we swap the channel and sequence dimensions using the transpose function. Then the same process is applied as in the first part, but this time for the sequence. The output of the SE block for the sequence is obtained by:

$$\tilde{x}_s = F_{scale}(u_s, s_s) = s_s \cdot u_s \tag{5}$$

where $F_{scale}(u_s, s_s)$ is a sequence-wise multiplication of the scalar $s_s$ and the feature map $u_s$.

In the end, we again apply the transpose function to restore the original order of width, height, sequence, and channels. The transpose is needed so that subsequent operations can continue: the output of the SE block must have the same layout as its input. Then, we add the output of the transpose function to the initial X to obtain the final output. The reduction numbers (d and r) are hyper-parameters that allow us to vary the capacity and computational cost of the SE blocks in the network. To provide a good balance between performance and computational cost, we conducted experiments with the SE block for different r values. Setting r = 16 (for channel) and d = 2 (for sequence) gave us the best trade-off between performance and complexity. Note that by applying squeeze-and-excitation to both channel and sequence, we can consider both long- and short-term action correlations adaptively.
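The two-part block described above (channel part, transpose, sequence part, transpose back, addition with the input) can be sketched in NumPy as follows. This is an illustrative sketch rather than the authors' code. It assumes a `(C, H, W, S)` layout, that the sequence part takes the output of the channel part as its input, and that `np.swapaxes` plays the role of the transpose function. The names `se_gate` and `se_channel_sequence`, the tensor sizes, and the random weights are hypothetical.

```python
import numpy as np

def se_gate(x, w1, w2):
    """Squeeze over all axes except the first, then FC -> ReLU -> FC -> sigmoid."""
    z = x.reshape(x.shape[0], -1).mean(axis=1)
    hidden = np.maximum(w1 @ z, 0.0)
    return 1.0 / (1.0 + np.exp(-(w2 @ hidden)))

def se_channel_sequence(x, wc1, wc2, ws1, ws2):
    """x has shape (C, H, W, S); the result has the same shape."""
    # Part 1: channel-wise rescaling.
    s_c = se_gate(x, wc1, wc2)                    # (C,)
    out = x * s_c[:, None, None, None]
    # Part 2 (assumed to follow part 1): swap channel and sequence axes
    # and reuse the same routine, now gating frames instead of channels.
    out_t = np.swapaxes(out, 0, 3)                # (S, H, W, C)
    s_s = se_gate(out_t, ws1, ws2)                # (S,)
    out_t = out_t * s_s[:, None, None, None]
    # Swap back so the layout matches the input, then add the input.
    return np.swapaxes(out_t, 0, 3) + x

# Hypothetical sizes with the reported reduction numbers r = 16 and d = 2.
rng = np.random.default_rng(0)
C, H, W, S, r, d = 32, 4, 4, 16, 16, 2
x = rng.standard_normal((C, H, W, S))
wc1 = rng.standard_normal((C // r, C)) * 0.1
wc2 = rng.standard_normal((C, C // r)) * 0.1
ws1 = rng.standard_normal((S // d, S)) * 0.1
ws2 = rng.standard_normal((S, S // d)) * 0.1
y = se_channel_sequence(x, wc1, wc2, ws1, ws2)
```

Because the block returns an array with the same shape and axis order as its input, it can be dropped between existing layers without changing the rest of the network.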

3.2. Self-Attention (SA) Module

Self-attention is a module that calculates the response at a position as a weighted sum of the features at all positions. The main idea of self-attention is to help convolutions capture long-range, full-level interconnections throughout the image domain. At each position, a network equipped with a self-attention module can relate small details to fine details in other areas of the image [20,21,22].
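The weighted-sum idea can be illustrated for a single feature map with the NumPy sketch below. It is a generic dot-product self-attention written for illustration, not necessarily the exact formulation used in [20,21,22] or in this work: the query, key, and value projections (`wq`, `wk`, `wv`), the softmax normalization, the sizes, and the function name `self_attention` are assumptions.

```python
import numpy as np

def self_attention(x, wq, wk, wv):
    """x: (C, N) features at N positions. Returns (output, attention map)."""
    q, k, v = wq @ x, wk @ x, wv @ x               # (Cq, N), (Cq, N), (Cv, N)
    scores = q.T @ k                               # (N, N): position i vs. position j
    scores -= scores.max(axis=1, keepdims=True)    # numerical stability
    attn = np.exp(scores)
    attn /= attn.sum(axis=1, keepdims=True)        # softmax: each row sums to 1
    # out[:, i] = sum_j attn[i, j] * v[:, j], a weighted sum over all positions
    return v @ attn.T, attn

# Hypothetical sizes: a 7 x 7 feature map flattened to N = 49 positions.
rng = np.random.default_rng(0)
C, N = 8, 49
x = rng.standard_normal((C, N))
wq = rng.standard_normal((C // 2, C)) * 0.1
wk = rng.standard_normal((C // 2, C)) * 0.1
wv = rng.standard_normal((C, C)) * 0.1
out, attn = self_attention(x, wq, wk, wv)
```

Every output position mixes information from all `N` positions, which is what lets the module relate details in distant areas of the image.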

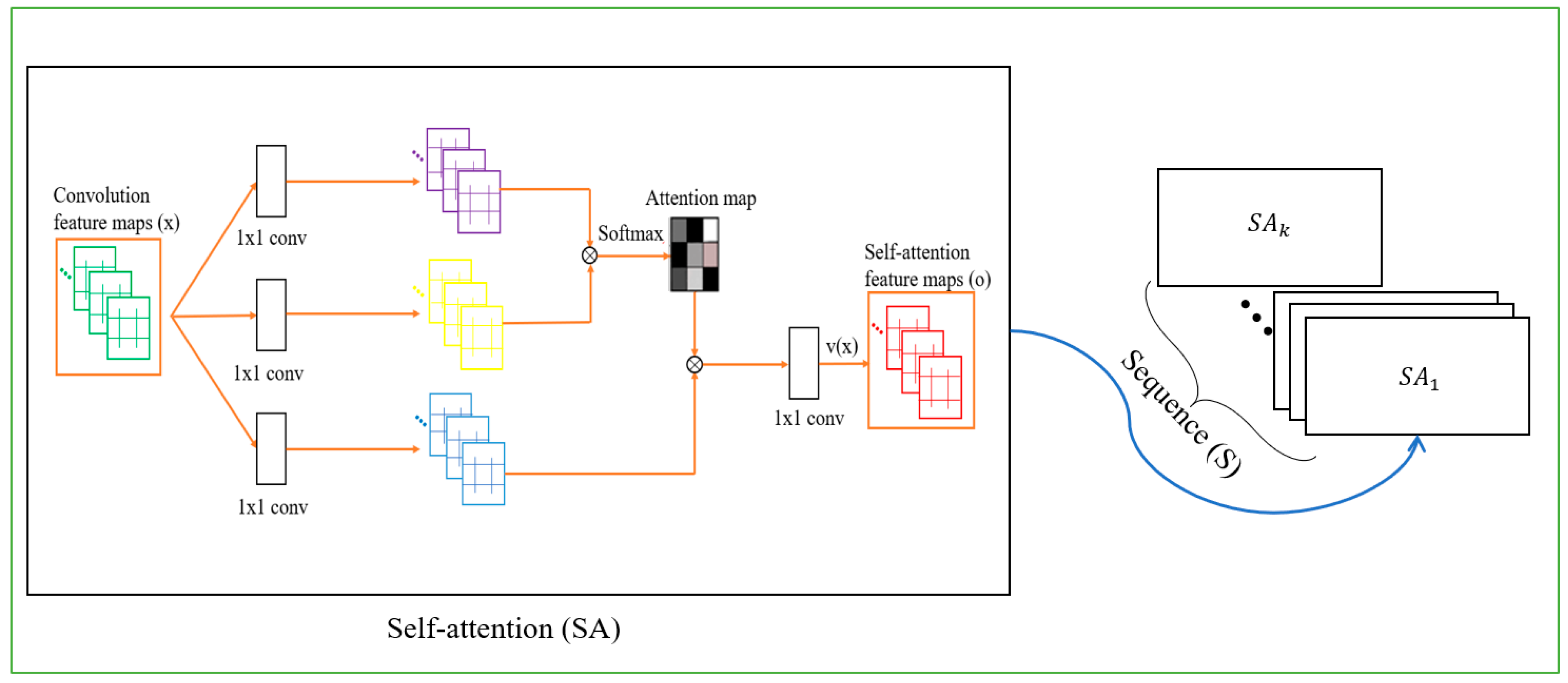

Our task in this experiment is to extend the SA idea to a 3D CNN; more concretely, we implement self-attention for multiple frames (a sequence). Unlike [23], where the SA module is implemented on a single image, we examine a multi-frame module for video frames. Since we apply the SA block to every single image in a sequence (16 or 64 frames), the SA module brings more benefit than in the single-frame case, and the overall performance is higher.
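Applying the same attention to every frame of a sequence can be written compactly as a batched operation. The sketch below is one possible reading of the per-frame description above, not the authors' implementation: the `(S, C, N)` layout, the projection weights shared across frames, the sizes, and the function name `framewise_self_attention` are assumptions.

```python
import numpy as np

def framewise_self_attention(x, wq, wk, wv):
    """x: (S, C, N). Applies the same attention independently to each of the S frames."""
    q = np.einsum("dc,scn->sdn", wq, x)            # queries, (S, Cq, N)
    k = np.einsum("dc,scn->sdn", wk, x)            # keys,    (S, Cq, N)
    v = np.einsum("ec,scn->sen", wv, x)            # values,  (S, Cv, N)
    scores = np.einsum("sdi,sdj->sij", q, k)       # (S, N, N), one map per frame
    scores = scores - scores.max(axis=-1, keepdims=True)
    attn = np.exp(scores)
    attn = attn / attn.sum(axis=-1, keepdims=True) # softmax over positions j
    return np.einsum("sij,sej->sei", attn, v)      # (S, Cv, N)

# Hypothetical sizes: 16 frames, 8 channels, 7 x 7 positions per frame.
rng = np.random.default_rng(0)
S, C, N = 16, 8, 49
x = rng.standard_normal((S, C, N))
wq = rng.standard_normal((C // 2, C)) * 0.1
wk = rng.standard_normal((C // 2, C)) * 0.1
wv = rng.standard_normal((C, C)) * 0.1
out = framewise_self_attention(x, wq, wk, wv)
```

In this sketch the attention maps are computed per frame, so changing one frame leaves the outputs of the other frames untouched; any coupling across frames would have to come from the surrounding 3D convolutions.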

Most videos in UCF-101 and HMDB51 involve interactions between humans and items. While the previous methods focused only on a single action [23], a sequence cannot always present the interaction exactly. We implemented self-attention for sequential actions to better understand the interactions of humans and items, since the environment around humans is also an important part of defining actions. Since we consider a 3D CNN with multiple frames, the SA module helps to capture all images to make a better prediction based on the action aspects in the images, and helps us use this knowledge to connect all frames logically.

Figure 4 demonstrates our self-attention module for a 3D CNN. Compared to the basic self-attention module, we concatenate these operations sequentially and embed to our SA module for the 3D CNN, as shown in

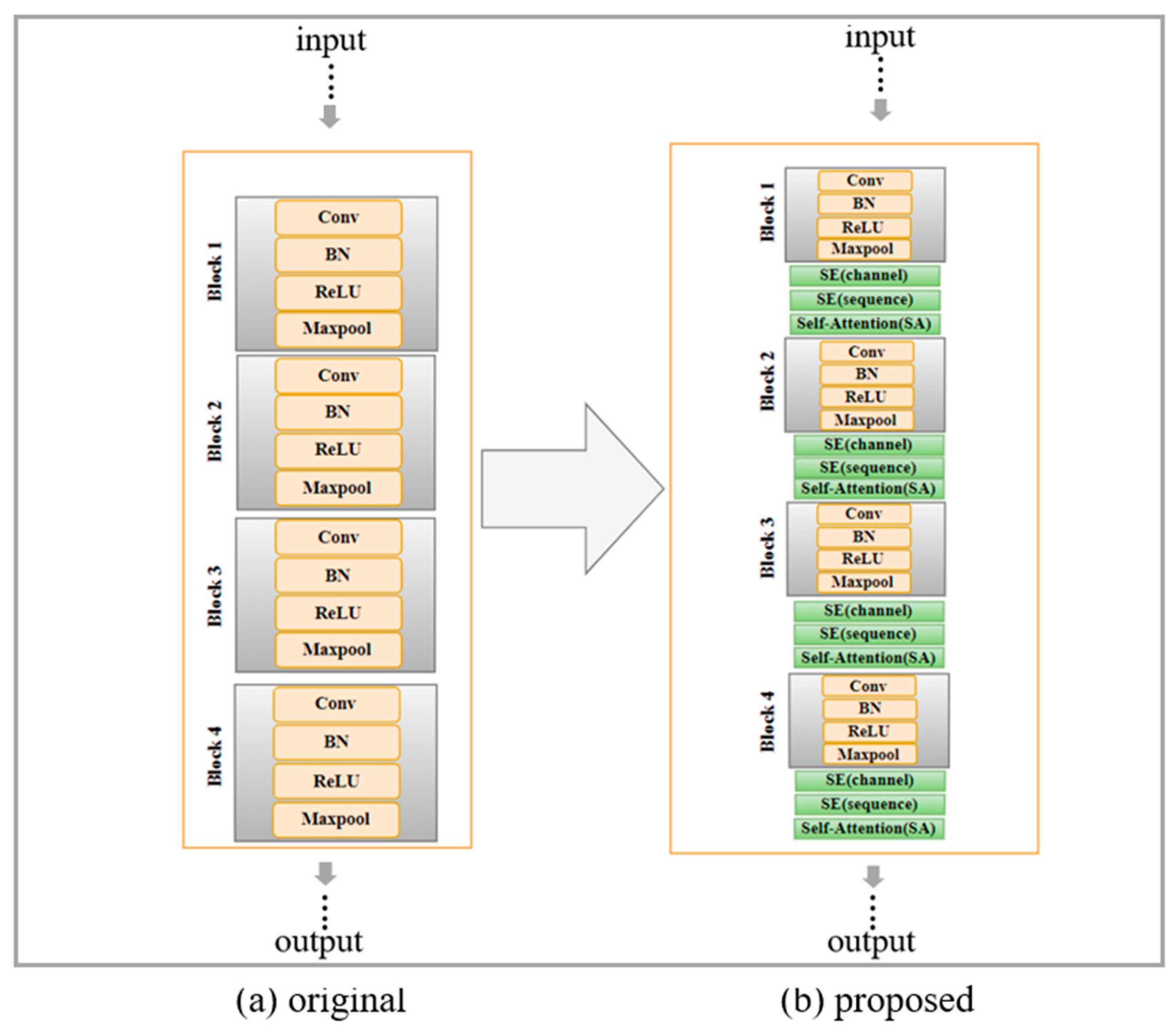

Figure 1.