PatchTST Coupled Reconstruction RFE-PLE Multitask Forecasting Method Based on RCMSE Clustering for Photovoltaic Power

Abstract

1. Introduction

1.1. Current Status and Analysis of PV Power Forecasting Research

1.2. Study Contribution and Paper Layout

- Multi-frequency feature grouping: Some existing PV power time series studies employ CEEMDAN decomposition to address multi-frequency mode mixing. However, the handling of the decomposed subsequences varies, and no unified approach has been established. We introduce “CEEMDAN decomposition + RCMSE–K-means clustering”, which transforms the multi-frequency mixing problem into independently grouped feature clusters quantified by fluctuation complexity, thereby avoiding the undifferentiated treatment of heterogeneous IMFs in traditional methods.

- Unified modeling of long- and short-term dependencies: Existing studies often focus on LSTM for short-term dependencies or Transformer for long-term dependencies, which can lead to imbalances between long-term and short-term features. Here, a patching mechanism with an improved segmentation strategy is introduced. High-frequency IMFs (large RCMSE) are processed with small time-scale patches, while low-frequency IMFs (small RCMSE) use large time-scale patches, enabling simultaneous adaptation to both long-term and short-term temporal dependencies.

- RFE–PLE multi-task forecasting framework: RFE is used to remove redundant features that may interfere with the multi-task prediction of each power subsequence. High-frequency and low-frequency subsequence forecasting tasks are assigned to dedicated private and shared expert networks, while inter-expert interactions are considered, resulting in more balanced predictions across all subsequences.

- Validation on multi-season, multi-parameter, and high-frequency disturbance datasets: Experiments on the publicly available Alice Springs dataset demonstrate that the proposed method outperforms neural networks, SVM, LSTM, GRU, and common MMoE MTL models in terms of the mean absolute error (MAE) and root mean square error (RMSE), providing a novel approach for PV power forecasting research.

2. Coupled Feature Extraction and Feature Selection of Decomposed Power Subsequences

2.1. Decomposition and Aggregation of Power Data

2.1.1. CEEMDAN Decomposition of Power Signals

2.1.2. K-Means Clustering Based on RCMSE

- (1) Multiscale decomposition

- (2) Calculation of the sample entropy

- (3) Composite strategy

- (4) K-means clustering
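The four steps above can be illustrated with a minimal pure-Python sketch. It is not the paper's implementation: it assumes the standard RCMSE definition (match counts pooled over all coarse-graining offsets before taking the logarithm), an embedding dimension `m = 2`, a tolerance of 0.15 times the standard deviation of the original series, and synthetic series standing in for IMFs. The function names, the scale set, and the farthest-first K-means initialization are all illustrative choices.

```python
import math
import random


def coarse_grain(x, tau, offset):
    """Step (1): average non-overlapping windows of length tau, starting at `offset`."""
    n = (len(x) - offset) // tau
    return [sum(x[offset + j * tau: offset + (j + 1) * tau]) / tau for j in range(n)]


def match_counts(y, m, r):
    """Step (2): count template pairs within tolerance r for lengths m and m + 1."""
    n = len(y) - m
    count_m = count_m1 = 0
    for i in range(n):
        for j in range(i + 1, n):
            if max(abs(y[i + k] - y[j + k]) for k in range(m)) <= r:
                count_m += 1
                if abs(y[i + m] - y[j + m]) <= r:
                    count_m1 += 1
    return count_m, count_m1


def rcmse(x, tau, m=2, r_factor=0.15):
    """Step (3): pool the counts over all tau offsets, then take -ln(A / B)."""
    mean = sum(x) / len(x)
    r = r_factor * math.sqrt(sum((v - mean) ** 2 for v in x) / len(x))
    total_m = total_m1 = 0
    for offset in range(tau):
        b, a = match_counts(coarse_grain(x, tau, offset), m, r)
        total_m += b
        total_m1 += a
    if total_m == 0 or total_m1 == 0:
        return float("inf")  # entropy is undefined when no matches are found
    return -math.log(total_m1 / total_m)


def rcmse_features(x, scales=(1, 2, 3)):
    """One RCMSE value per scale; the scale set is an assumption."""
    return [rcmse(x, tau) for tau in scales]


def sq_dist(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, iters=20):
    """Step (4): plain K-means with a deterministic farthest-first initialization."""
    centers = [list(points[0])]
    while len(centers) < k:
        far = max(points, key=lambda p: min(sq_dist(p, c) for c in centers))
        centers.append(list(far))
    labels = []
    for _ in range(iters):
        labels = [min(range(k), key=lambda c: sq_dist(p, centers[c])) for p in points]
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centers[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Synthetic stand-ins for IMFs: two irregular (noise-like) and two smooth series.
rng = random.Random(0)
series = [
    [rng.uniform(-1, 1) for _ in range(300)],
    [rng.uniform(-1, 1) for _ in range(300)],
    [math.sin(2 * math.pi * i / 100) for i in range(300)],
    [math.sin(2 * math.pi * i / 80 + 1.0) for i in range(300)],
]
features = [rcmse_features(s) for s in series]
labels = kmeans(features, k=2)
print(labels)
```

In this toy setting the noise-like series receive larger RCMSE values than the smooth ones, so the two groups end up in different clusters, mirroring the high-frequency and low-frequency grouping described in the text.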

2.2. PatchTST–BiLSTM Coupled Reconstruction

2.2.1. PatchTST Encoder: Local Perception and Temporal Enhancement

- (1) Patch segmentation mechanism and input structure

- (2) Patch embedding mapping and temporal information incorporation

- (3) Multi-head self-attention mechanism and global dependency modeling

- (4) Patch representation output and dimensionality reduction
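The patch segmentation in step (1) can be sketched as follows. This is a simplified illustration rather than the paper's code: it splits a look-back window into overlapping patches of length `patch_len` with step `stride`, and it omits the end padding used in the original PatchTST formulation. The `PATCH_CONFIG` values are hypothetical and only show the idea of giving high-frequency clusters shorter patches than low-frequency clusters.

```python
def make_patches(series, patch_len, stride):
    """Split a 1-D series into overlapping patches (no end padding)."""
    if patch_len > len(series):
        raise ValueError("patch_len must not exceed the series length")
    n_patches = (len(series) - patch_len) // stride + 1
    return [series[i * stride: i * stride + patch_len] for i in range(n_patches)]


# Hypothetical (patch_len, stride) settings per RCMSE cluster.
PATCH_CONFIG = {
    "high_frequency": (4, 2),   # large RCMSE: short patches for local fluctuations
    "low_frequency": (16, 8),   # small RCMSE: long patches for slow trends
}

window = list(range(96))  # placeholder look-back window of 96 time steps
for cluster, (patch_len, stride) in PATCH_CONFIG.items():
    patches = make_patches(window, patch_len, stride)
    print(cluster, len(patches), "patches of length", patch_len)
```

Each patch is then treated as one input token for the encoder, so a shorter patch length yields more tokens with finer temporal resolution.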

2.2.2. BiLSTM Decoder: Bidirectional Coupling and Feature Reconstruction

3. Modeling Process

3.1. Model Framework

1. Multiscale decomposition and initial decoupling

2. PatchTST-based reconstruction and secondary decoupling

3. RFE–PLE MTL prediction

3.2. RFE–PLE Multi-Task Predictive Model

- (1) Incorporation of a wrapper-based feature selection mechanism to optimize the multi-task input structure

- (2) Integration of Transformer encoders to enhance expert output representation
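To make the expert and gate interaction in PLE concrete, the following sketch shows a single gating step in the style of the customized gate control module from the original PLE paper: a task-specific gate produces softmax weights over that task's private experts and the shared experts, and the task representation is the weighted sum of their outputs. Everything here is an illustrative assumption: the experts are plain linear maps standing in for the Transformer-enhanced experts described above, and the weights are hand-picked rather than learned.

```python
import math


def softmax(z):
    """Numerically stable softmax over a list of scores."""
    top = max(z)
    exps = [math.exp(v - top) for v in z]
    total = sum(exps)
    return [v / total for v in exps]


def linear(weights, x):
    """Apply a weight matrix (list of rows) to the vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def gated_task_output(x, private_experts, shared_experts, gate_weights):
    """Fuse private and shared expert outputs for one task.

    gate_weights has one row per expert (private experts first, then shared).
    """
    experts = private_experts + shared_experts
    outputs = [linear(w, x) for w in experts]
    gate = softmax(linear(gate_weights, x))
    fused = [
        sum(g * out[d] for g, out in zip(gate, outputs))
        for d in range(len(outputs[0]))
    ]
    return fused, gate


# Toy example: one private expert (identity) and one shared expert (doubling).
x = [1.0, 2.0]
private = [[[1.0, 0.0], [0.0, 1.0]]]
shared = [[[2.0, 0.0], [0.0, 2.0]]]
gate_w = [[0.0, 0.0], [0.0, 0.0]]  # zero gate scores give uniform weights
fused, gate = gated_task_output(x, private, shared, gate_w)
print(fused, gate)
```

With zero gate scores the two experts are weighted equally; in a trained model each task's gate would learn how much to rely on shared versus private experts, which is the mechanism intended to limit negative transfer between subsequence forecasting tasks.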

4. Case Study

4.1. Numerical Example

4.2. Analysis of the Effectiveness of the Proposed Predictive Model

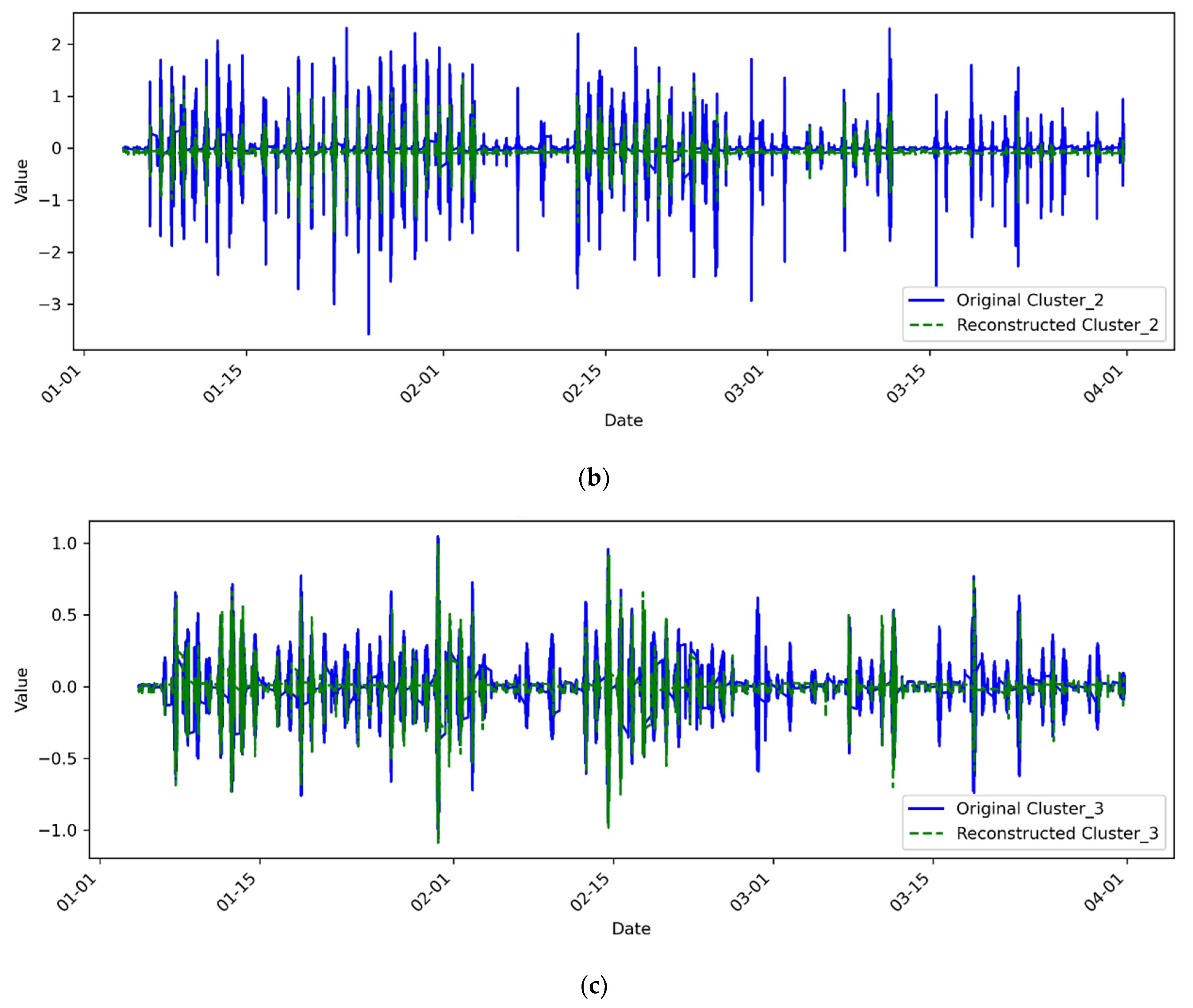

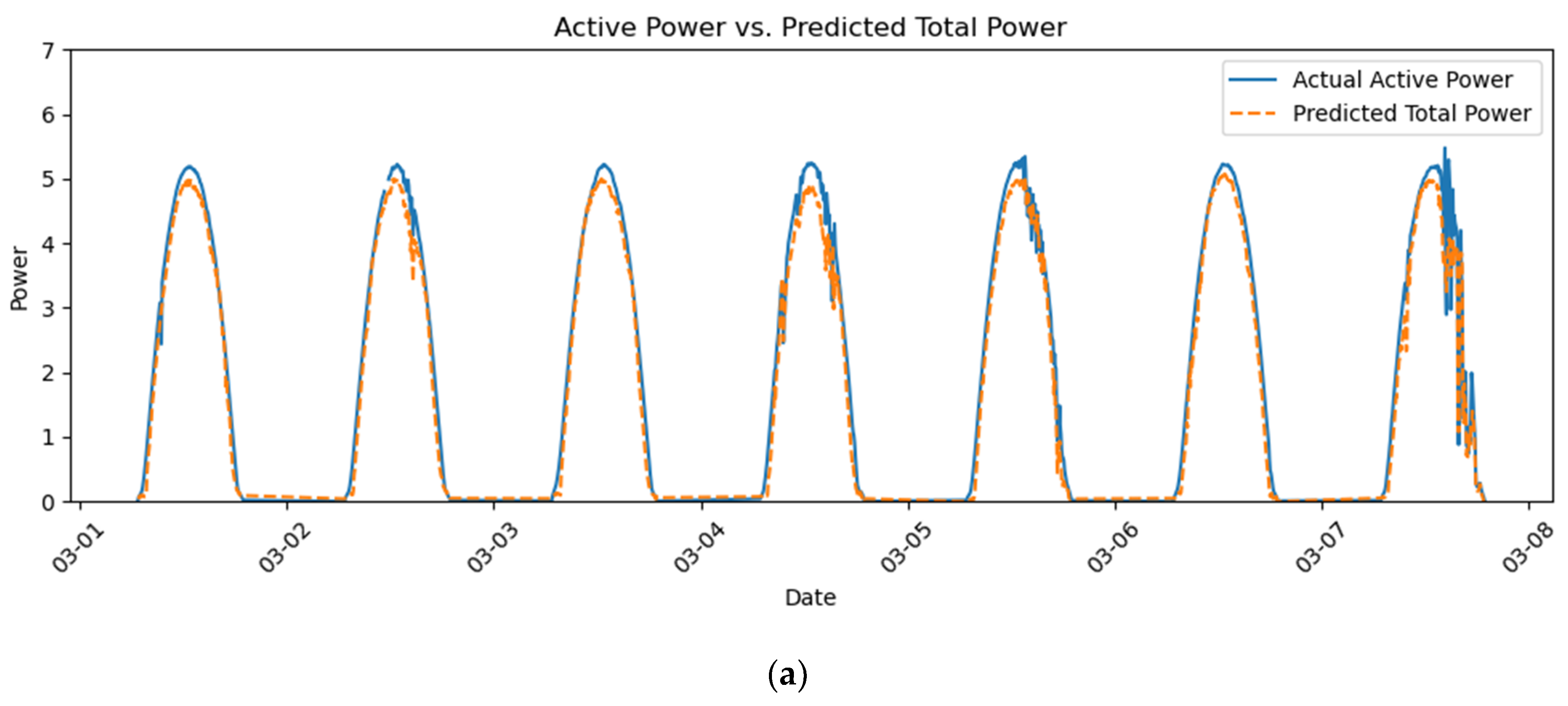

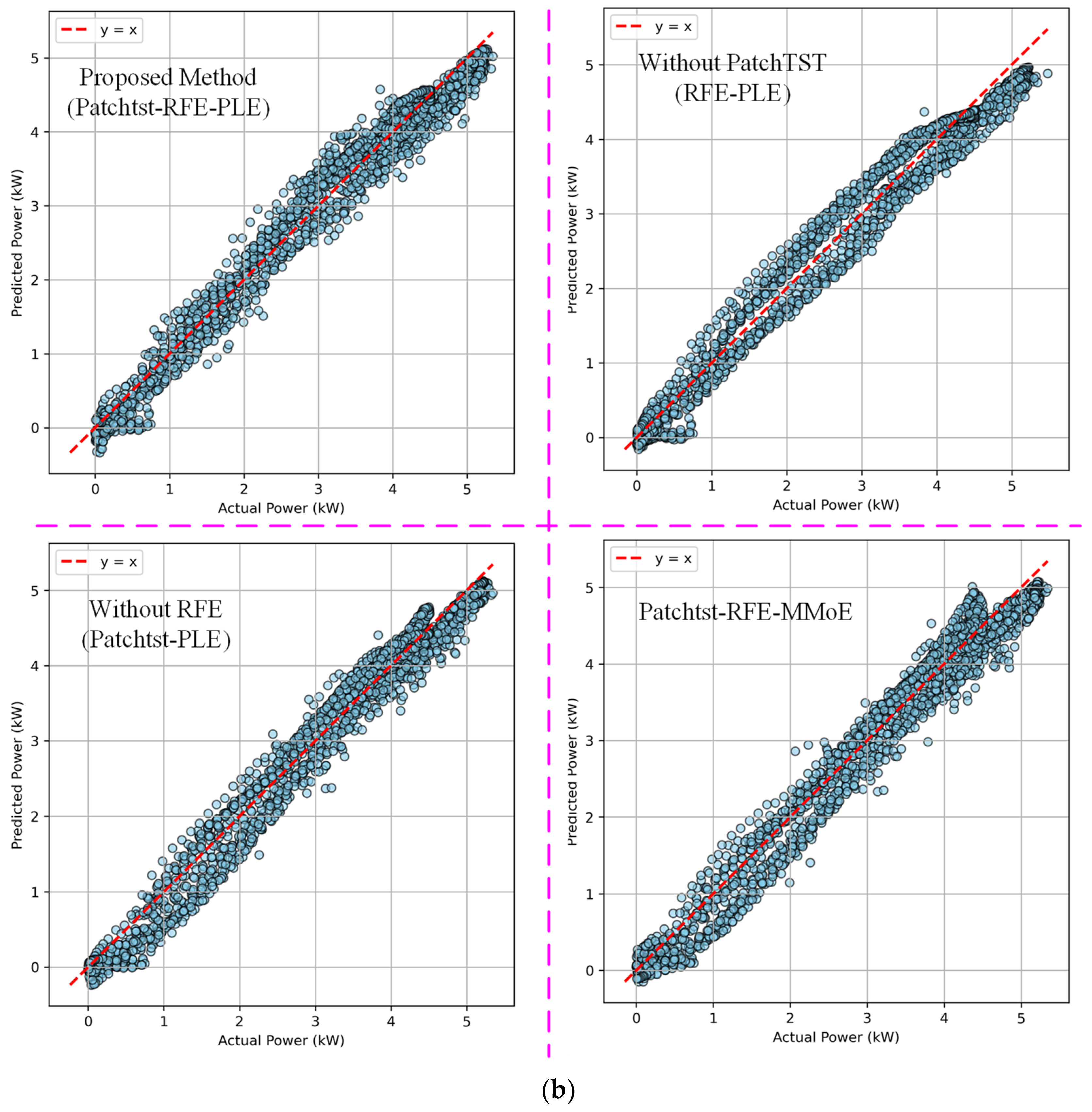

4.2.1. Comparative Analysis of the PatchTST Reconstruction Model

4.2.2. PLE Experiments Using Reconstructed Power Subsequences Without RFE Feature Selection

- (1)

- Experimental design

- (2)

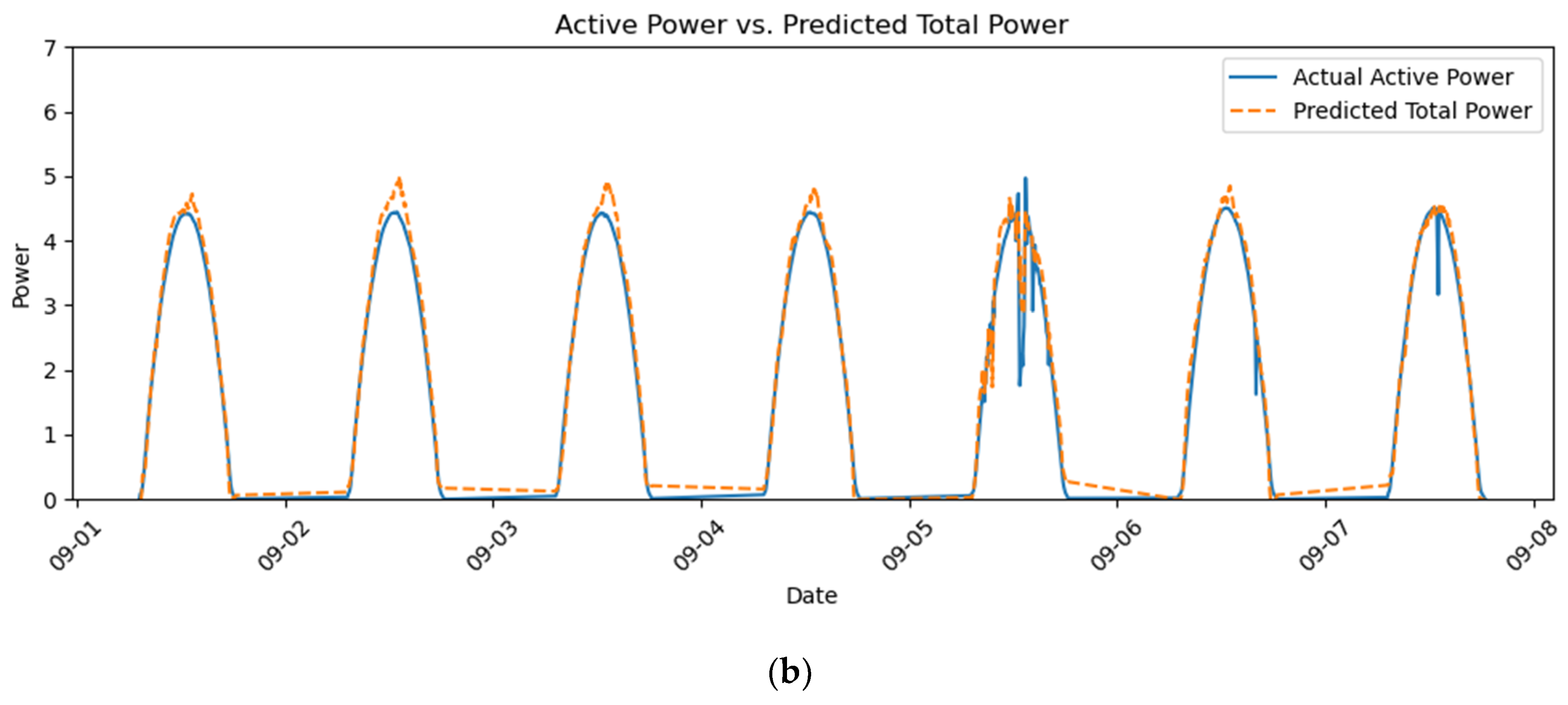

- Experimental results and effectiveness analysis

4.2.3. Replacing the RFE-PLE with the Original MMoE Model

- (1) Experimental design

- (2) Experimental results and effectiveness analysis

4.2.4. Experimental Results Arising from Use of the Proposed Model

4.2.5. Summary of Vertical Comparative Experiments

4.3. Comparison Between the Proposed Model and Other Models

5. Conclusions

- By employing CEEMDAN decomposition and RCMSE clustering, the power series can be initially decoupled, which avoids the undifferentiated treatment of multiscale components in traditional approaches. On this basis, the PatchTST-BiLSTM reconstruction enhances the joint modeling of local disturbances and long-term dependencies, capturing the potential coupling relationships among multi-frequency components. Furthermore, the RFE-PLE framework, which integrates feature selection with hierarchical expert collaboration, significantly alleviates negative transfer in MTL.

- Empirical results on the Alice Springs PV power station dataset demonstrate a significant improvement in forecasting accuracy. Compared with the raw data, the introduction of PatchTST reconstruction reduces the average MAE from 0.23 to 0.22 (a reduction of 6.27%) and the RMSE from 0.30 to 0.29 (a reduction of 3.34%). With the addition of RFE-based feature selection, the average RMSE decreases from 0.31 to 0.29 (a reduction of 5.13%).

- Comparative experiments further validate the superiority of the proposed method. Relative to the MMoE framework, the RFE-PLE structure reduces the average MAE by 7.05% and the RMSE by 7.50%. In the CEEMDAN decomposition scenario, the proposed method achieves an average MAE of 0.22 and RMSE of 0.29, which are 45.9% and 44.6% lower than those of the MTL-Attention-LSTM model, respectively. It also outperforms Random Forest (MAE 0.32, RMSE 0.40) and MIMO (MAE 0.38, RMSE 0.48).
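For reference, the error metrics and the reduction rate quoted above can be computed as in the short sketch below. It uses the standard definitions of MAE and RMSE and assumes the reduction rate is the relative decrease `(before - after) / before * 100`; the function names are illustrative. Note that applying this formula to the two-decimal values shown in the tables does not reproduce the reported percentages exactly, presumably because the reported rates were computed from unrounded errors.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def reduction_rate(before, after):
    """Relative error reduction in percent (assumed definition)."""
    return (before - after) / before * 100.0


# Rounded average MAE values for MMoE and RFE-PLE taken from the comparison table.
print(round(reduction_rate(0.24, 0.22), 2))
```

The printed value is based on rounded inputs, so it should be read as an approximation of the reported 7.05% rather than a recomputation of it.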

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| PV | Photovoltaic |
| PLE | Progressive Layered Extraction |
| MMoE | Multi-gate Mixture-of-Experts |
| MTL | Multi-task Learning |
| RFE | Recursive Feature Elimination |
| CEEMDAN | Complete Ensemble Empirical Mode Decomposition with Adaptive Noise |
| STL | Seasonal-trend Decomposition Procedure based on Loess |
| VMD | Variational Mode Decomposition |
| RCMSE | Refined Composite Multiscale Entropy |
| K-Means | K-Means Clustering Algorithm |
| PatchTST | Patch Time Series Transformer |
| BiLSTM | Bidirectional Long Short-Term Memory |
| MLP | Multi-layer Perceptron |
| MAE | Mean Absolute Error |
| RMSE | Root Mean Square Error |
| R² | Coefficient of Determination |
| IMF | Intrinsic Mode Function |

References

- Ferkous, K.; Guermoui, M.; Menakh, S.; Bellaour, A.; Boulmaiz, T. A novel learning approach for short-term photovoltaic power forecasting—A review and case studies. Eng. Appl. Artif. Intell. 2024, 133, 108502.

- Khouili, O.; Hanine, M.; Louzazni, M.; Flores, M.A.; Villena, E.G.; Ashraf, I. Evaluating the impact of deep learning approaches on solar and photovoltaic power forecasting: A systematic review. Energy Strategy Rev. 2025, 59, 101735.

- Alcañiz, A.; Grzebyk, D.; Ziar, H.; Isabella, O. Trends and gaps in photovoltaic power forecasting with machine learning: A comprehensive review. Energy Rep. 2023, 9, 447–471.

- Sarmas, E.; Spiliotis, E.; Stamatopoulos, E.; Marinakis, V.; Doukas, H. Short-term photovoltaic power forecasting using meta-learning and numerical weather prediction independent LSTM models. Renew. Energy 2023, 216, 118997.

- Mayer, M.J.; Gróf, G. Extensive comparison of physical models for photovoltaic power forecasting. Appl. Energy 2021, 283, 116239.

- Hu, Z.; Gao, Y.; Ji, S.; Mae, M.; Imaizumi, T. Improved multistep ahead photovoltaic power prediction model based on LSTM and self-attention with weather forecast data. Appl. Energy 2024, 359, 122709.

- Zhu, R.; Li, T.; Tang, B. Research on short-term photovoltaic power generation forecasting model based on multi-strategy improved squirrel search algorithm and support vector machine. Sci. Rep. 2024, 14, 14348.

- Yang, M.; Zhao, M.; Liu, D.; Ma, M.; Su, X. Improved random forest method for ultra-short-term prediction of the output power of a photovoltaic cluster. Front. Energy Res. 2021, 9, 749367.

- Ağır, T.T. Estimation of daily photovoltaic power one day ahead with hybrid Deep Learning and Machine Learning models. Energy Sci. Eng. 2025, 13, 1478–1491.

- Yu, J.; Li, X.; Yang, L.; Li, L.; Huang, Z.; Shen, K.; Yang, X.; Yang, X.; Xu, Z.; Zhang, D.; et al. Deep learning models for PV power forecasting: Review. Energies 2024, 17, 3973.

- Dimitriadis, C.N.; Passalis, N.; Georgiadis, M.C. A deep learning framework for photovoltaic power forecasting in multiple interconnected countries. Sustain. Energy Technol. Assess. 2025, 77, 104330.

- Mouloud, L.A.; Kheldoun, A.; Oussidhoum, S.; Alharbi, H.; Alotaibi, S.; Alzahrani, T.; Agajie, T.F. Seasonal quantile forecasting of solar photovoltaic power using Q-CNN-GRU. Sci. Rep. 2025, 15, 27270.

- Wang, J.; Zhang, Z.; Xu, W.; Li, Y.; Niu, G. Short-term photovoltaic power forecasting using a Bi-LSTM neural network optimized by hybrid algorithms. Sustainability 2025, 17, 5277.

- Zhu, H.; Wang, Y.; Wu, J.; Zhang, X. A regional distributed photovoltaic power generation forecasting method based on grid division and TCN-BiLSTM. Renew. Energy 2026, 256, 123935.

- Ren, X.; Zhang, F.; Sun, Y.; Liu, Y. A Novel dual-channel temporal convolutional network for photovoltaic power forecasting. Energies 2024, 17, 698.

- Kim, J.; Obregon, J.; Park, H.; Jung, J.-Y. Multi-step photovoltaic power forecasting using transformer and recurrent neural networks. Renew. Sustain. Energy Rev. 2024, 200, 114479.

- Zhao, X. A novel digital-twin approach based on transformer for photovoltaic power prediction. Sci. Rep. 2024, 14, 26661.

- Liu, Y.; Wang, J.; Song, L.; Liu, Y.; Shen, L. Enhanced short-term PV power forecasting via a hybrid modified CEEMDAN-jellyfish search-optimized BiLSTM model. Energies 2025, 18, 3581.

- Zhai, C.; He, X.; Cao, Z.; Abdou-Tankari, M.; Wang, Y.; Zhang, M. Photovoltaic power forecasting based on VMD-SSA transformer: Multidimensional analysis of dataset length, weather mutation and forecast accuracy. Energy 2025, 324, 135971.

- Guermoui, M.; Fezzani, A.; Mohamed, Z.; Rabehi, A.; Ferkous, K.; Bailek, N.; Bouallit, S.; Riche, A.; Bajaj, M.; Mohammadi, S.A.D.; et al. An analysis of case studies for advancing photovoltaic power forecasting through multi-scale fusion techniques. Sci. Rep. 2024, 14, 6653.

- Han, S.; Qiao, Y.; Yan, J.; Liu, Y.; Li, L.; Wang, Z. Mid-to-long term wind and photovoltaic power generation prediction based on copula function and long short term memory network. Appl. Energy 2019, 239, 181–191.

- Gao, X.; Guo, W.; Mei, C.; Sha, J.; Guo, Y.; Sun, H. Short-term wind power forecasting based on SSA-VMD-LSTM. Energy Rep. 2023, 9 (Suppl. S10), 335–344.

- Agga, A.; Abbou, A.; Labbadi, M.; El Houm, Y.; Ou Ali, I.H. CNN-LSTM: An efficient hybrid deep learning architecture for predicting short-term photovoltaic power production. Electr. Power Syst. Res. 2022, 208, 107908.

- Abdelkader, D.; Fouzi, H.; Khaldi, B.; Sun, Y. Graph neural network-based spatiotemporal prediction of photovoltaic power: A comparative study. Neural Comput. Appl. 2025, 37, 4769–4795.

- Bashir, T.; Wang, H.; Tahir, M.; Zhang, Y. Wind and solar power forecasting based on hybrid CNN-ABiLSTM, CNN-Transformer-MLP models. Renew. Energy 2025, 239, 122055.

- Chai, M.; Xia, F.; Hao, S.; Peng, D.; Cui, C.; Liu, W. PV power prediction based on LSTM with adaptive hyperparameter adjustment. IEEE Access 2019, 7, 115473–115486.

- Yu, Y.; Niu, T.; Wang, J.; Jiang, H. Intermittent solar power hybrid forecasting system based on pattern recognition and feature extraction. Energy Convers. Manag. 2023, 277, 116579.

- Sun, S.; Wang, S.; Zhang, G.; Zheng, J. A decomposition-clustering-ensemble learning approach for solar radiation forecasting. Sol. Energy 2018, 163, 189–199.

- Sun, H.; Cui, Q.; Wen, J.; Kou, L.; Ke, W. Short-term wind power prediction method based on CEEMDAN-GWO-Bi-LSTM. Energy Rep. 2024, 11, 1487–1502.

- Khan, A.H.H.; Wang, Y.C. Drift-diffusion modeling-guided interface optimization in BaHfS3 chalcogenide perovskite solar cells. Sol. Energy Mater. Sol. Cells 2026, 294, 113889.

- Hu, T.; Mo, Z.; Zhang, Z. Multi-task pointwise mutual information learning for bearing remaining useful life cross-domain imbalanced regression. IEEE Internet Things J. 2025, 15, 30415–30425.

- Tang, H.; Liu, J.; Zhao, M.; Gong, X. Progressive layered extraction: A novel multi-task learning model for personalized recommendations. In Proceedings of the 14th ACM Conference on Recommender Systems, Online, 22–26 September 2020; pp. 269–278.

- DKASC Solar Data Portal. Alice Springs Photovoltaic Generation Data. 2018. Available online: https://dkasolarcentre.com.au/download?Location=yulara (accessed on 12 August 2025).

- National Solar Radiation Database. Available online: https://nsrdb.nrel.gov/ (accessed on 12 August 2025).

- Xu, H.; Wu, Q.; Wen, J.; Yang, Z. Joint bidding and pricing for electricity retailers based on multi-task deep reinforcement learning. Int. J. Electr. Power Energy Syst. 2022, 138, 107897.

- Wang, Y.; Zhang, D.; Wulamu, A. A multitask learning model with multiperspective attention and its application in recommendation. Comput. Intell. Neurosci. 2021, 2021, 8550270.

- Zheng, R.; Chen, J.; Ma, M.; Huang, L. Fused acoustic and text encoding for multimodal bilingual pretraining and speech translation. In Proceedings of the 38th International Conference on Machine Learning, PMLR, Online, 18–24 July 2021; Volume 139, pp. 12736–12746. Available online: https://proceedings.mlr.press/v139/zheng21a.html (accessed on 12 August 2025).

- Jiang, P.; Nie, Y.; Wang, J.; Huang, X. Multivariable short-term electricity price forecasting using artificial intelligence and multi-input multi-output scheme. Energy Econ. 2023, 117, 106471.

- Lodhi, E.; Dahmani, N.; Bukhari, S.M.S.; Gyawali, S.; Thapa, S.; Qiu, L.; Zafar, M.H.; Akhtar, N. Enhancing microgrid forecasting accuracy with SAQ-MTCLSTM: A self-adjusting quantized multi-task ConvLSTM for optimized solar power and load demand predictions. Energy Convers. Manag. X 2024, 24, 100767.

- Liu, M.; Wang, X.; Zhong, Z. Ultra-Short-term photovoltaic power prediction Based on BiLSTM with wavelet decomposition and dual attention mechanism. Electronics 2025, 14, 306.

- Zhu, M.; Liu, J.; Ji, J. Electrocardiogram Signal Classification Based on Bidirectional LSTM and Multi-Task Temporal Attention. J. Comput. Sci. Technol. 2025, 40, 1401–1413.

| Prediction | Error | Without PatchTST | With PatchTST | Reduction Rate (%) |
|---|---|---|---|---|
| 9 stable days (3.1, 3.2, 3.3, 3.6, 9.1, 9.2, 9.3, 9.4, 9.6) | MAE (kW) | 0.21 | 0.20 | 4.57 |
| | RMSE (kW) | 0.28 | 0.28 | 0 |
| 5 volatile days (3.4, 3.5, 3.7, 9.5, 9.7) | MAE (kW) | 0.27 | 0.25 | 8.48 |
| | RMSE (kW) | 0.31 | 0.31 | 0 |
| Average | MAE (kW) | 0.23 | 0.22 | 6.27 |
| | RMSE (kW) | 0.30 | 0.29 | 3.34 |

| Prediction | Error | Without RFE | With RFE | Reduction Rate (%) |
|---|---|---|---|---|
| 9 stable days (3.1, 3.2, 3.3, 3.6, 9.1, 9.2, 9.3, 9.4, 9.6) | MAE (kW) | 0.20 | 0.20 | 0 |
| | RMSE (kW) | 0.28 | 0.28 | 0 |
| 5 volatile days (3.4, 3.5, 3.7, 9.5, 9.7) | MAE (kW) | 0.26 | 0.24 | 6.39 |
| | RMSE (kW) | 0.35 | 0.31 | 11.53 |
| Average | MAE (kW) | 0.22 | 0.22 | 2.19 |
| | RMSE (kW) | 0.31 | 0.29 | 5.13 |

| Prediction | Error | MMoE | RFE-PLE | Reduction Rate (%) |
|---|---|---|---|---|
| 9 stable days (3.1, 3.2, 3.3, 3.6, 9.1, 9.2, 9.3, 9.4, 9.6) | MAE (kW) | 0.21 | 0.20 | 4.12 |
| | RMSE (kW) | 0.29 | 0.28 | 3.73 |
| 5 volatile days (3.4, 3.5, 3.7, 9.5, 9.7) | MAE (kW) | 0.27 | 0.24 | 10.12 |
| | RMSE (kW) | 0.35 | 0.31 | 11.83 |
| Average | MAE (kW) | 0.24 | 0.22 | 7.05 |
| | RMSE (kW) | 0.32 | 0.29 | 7.50 |

| Prediction Methods | MAE (Spring) | MAE (Autumn) | MAE (Average) | RMSE (Spring) | RMSE (Autumn) | RMSE (Average) |
|---|---|---|---|---|---|---|
| SVR | 0.63 | 0.43 | 0.53 | 0.89 | 0.59 | 0.74 |
| Random Forest | 0.35 | 0.28 | 0.32 | 0.45 | 0.35 | 0.40 |
| Shared_LSTM [35] (2022) | 1.24 | 1.01 | 1.13 | 1.39 | 1.14 | 1.27 |
| MMoE_LSTM_Attention [36] (2021) | 0.61 | 0.21 | 0.41 | 0.69 | 0.26 | 0.48 |
| Shared_Transformer [37] (2021) | 0.41 | 0.17 | 0.29 | 0.46 | 0.21 | 0.34 |
| MIMO [38] (2023) | 0.39 | 0.36 | 0.38 | 0.47 | 0.48 | 0.48 |
| MTL_CNN_LSTM [39] (2024) | 0.30 | 0.33 | 0.32 | 0.39 | 0.41 | 0.40 |
| BiLSTM_Attention [40] (2025) | 1.11 | 0.65 | 0.88 | 1.23 | 0.78 | 1.01 |
| MTL_Attention_LSTM [41] (2025) | 0.47 | 0.35 | 0.41 | 0.57 | 0.48 | 0.53 |
| Proposed | 0.24 | 0.20 | 0.22 | 0.31 | 0.27 | 0.29 |

| Different Stations (Proposed) | MAE (Spring) | MAE (Autumn) | MAE (Average) | RMSE (Spring) | RMSE (Autumn) | RMSE (Average) |
|---|---|---|---|---|---|---|
| 52-Site_33-REC | 0.16 | 0.17 | 0.16 | 0.20 | 0.20 | 0.20 |
| 56-Site_30-Q-CELLS | 0.18 | 0.17 | 0.18 | 0.22 | 0.21 | 0.22 |
| 93-Site_8-Kaneka | 0.28 | 0.13 | 0.21 | 0.34 | 0.18 | 0.26 |
| 91-Site_1A-Trina | 0.24 | 0.20 | 0.22 | 0.31 | 0.27 | 0.29 |


© 2025 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Qu, Y. PatchTST Coupled Reconstruction RFE-PLE Multitask Forecasting Method Based on RCMSE Clustering for Photovoltaic Power. Electronics 2025, 14, 4613. https://doi.org/10.3390/electronics14234613
