Abstract

The accelerated development of mobile networks and applications has led to an exponential growth of resources, which causes problems such as information disorientation and information overload. Recommendation systems (RSs), which provide individualized services, are one practical approach to easing these problems. Video recommendation is one of the most critical recommendation services. However, achieving satisfactory video recommendation on sparse data is difficult, and the cold start problem further exacerbates this challenge. Recent state-of-the-art works attempted to address this problem by exploiting user and item information from other perspectives. However, the significance of user and item information varies across applications. This paper proposes an autoencoder model that improves recommendation performance by utilizing attribute information, and applies the proposed algorithm to video recommendation. In the proposed model, we first extract user features and video features by simultaneously combining user attribute and video category information. Then, we integrate the attention mechanism into the extracted features to generate the vital features. Finally, we incorporate the user and item latent factors to generate the probability matrix and define the user-item rating matrix using the factorized probability matrix. Experimental results on two public datasets demonstrate that the proposed model effectively improves video recommendation quality compared with state-of-the-art methods.

1. Introduction

Recommendation systems (RSs) [1,2], which aim to predict the ratings that users might give to items according to historical data, have been applied in many fields, such as social networking, news, information retrieval, courses [3], movies [4], music [5], and knowledge services [6]. With the rapid development of information science, more and more applications continuously generate large-scale data, leading to an information explosion [7]. These data can bring considerable convenience to our daily lives, but they also cause problems such as information disorientation and information overload. Moreover, extracting valuable information from large-scale data quickly and efficiently has become a significant challenge [8]. RSs [9] have proven to be effective tools for extracting useful information from chaotic application data and have attracted extensive attention from academia and industry. As a result, many state-of-the-art methods have been proposed and many practical applications have been developed. Collaborative filtering is one of the most widely used recommendation algorithms. It identifies a group of users similar to the current user by analyzing historical behavior data and then determines the recommendation content on the basis of that group's preferences. RSs have also been successfully applied in e-commerce. With the rapid development of mobile networks and mobile services, user and item data are split into different blocks and stored on different devices. However, most current studies focus on web-based applications whose data are stored in the same database. How to design recommendation algorithms for this split-data scenario is a new challenge.
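The neighborhood idea behind collaborative filtering can be illustrated with a small user-based sketch. This is a minimal example, not part of MAAMF, assuming cosine similarity over co-rated items and a similarity-weighted average of the top-k neighbours' ratings; the function names and the convention that 0 marks an unrated item are illustrative choices.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two users over items both have rated (0 = unrated)."""
    common = [t for t in range(len(a)) if a[t] > 0 and b[t] > 0]
    if not common:
        return 0.0
    dot = sum(a[t] * b[t] for t in common)
    norm_a = math.sqrt(sum(a[t] ** 2 for t in common))
    norm_b = math.sqrt(sum(b[t] ** 2 for t in common))
    return dot / (norm_a * norm_b)


def predict_user_based(ratings, user, item, k=2):
    """Predict ratings[user][item] from the k most similar users who rated the item."""
    neighbours = [
        (cosine_similarity(ratings[user], ratings[other]), other)
        for other in range(len(ratings))
        if other != user and ratings[other][item] > 0
    ]
    neighbours.sort(reverse=True)  # most similar users first
    top = neighbours[:k]
    weight_sum = sum(abs(sim) for sim, _ in top)
    if weight_sum == 0:
        return None  # no usable neighbours for this item
    return sum(sim * ratings[other][item] for sim, other in top) / weight_sum


# Rows are users, columns are videos; 0 marks a missing rating.
ratings = [
    [5, 4, 0, 1],
    [5, 4, 4, 1],
    [1, 2, 1, 5],
]
print(predict_user_based(ratings, user=0, item=2, k=1))  # nearest neighbour only
print(predict_user_based(ratings, user=0, item=2, k=2))
```

With k=1 the prediction copies the most similar user's rating; with k=2 the dissimilar third user pulls the estimate down, which shows why the choice of neighbourhood matters.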

Traditional recommendation algorithms based on shallow learning cannot capture the deep features of users and items, because they have only one hidden layer and rely on manually designed features; consequently, their capability is limited in the context of big data. With the commercialization of big data, cloud computing, and other technologies, more and more data are being recorded and stored on the Internet. These data include multi-source heterogeneous information, such as video [10], audio, text, graphics, and images [11], which encapsulates rich background information about users and items and their individualized demands [12,13,14]. How to use such multi-source heterogeneous auxiliary information in RSs to alleviate the data sparsity and cold start problems has become one of the hottest topics in academic research [15,16]. As a vital branch of artificial intelligence, deep learning has shown outstanding performance in fields such as image processing, natural language processing (NLP), and speech recognition, which opens new directions for RSs [17]. Deep learning can automatically extract features from massive user and item data and obtain deep feature representations of users and items. It can also integrate multi-source heterogeneous data through feature fusion to obtain a distributed feature representation [18]. When deep learning is combined with traditional recommendation algorithms, recommendation quality can be improved significantly. Google proposed the Wide & Deep model [19], which jointly trains a linear model and a deep neural network to combine the advantages of memorization and generalization in recommendation; this model has been highly influential. Recommendation algorithms based on autoencoders have also been extensively studied in the recommendation field.
For example, AutoRec [20] is a classic early application of deep learning to recommendation that combines autoencoders with collaborative filtering. ACAE [21] is a recent model that fuses an attention mechanism into an autoencoder to make recommendations, but it does not use auxiliary information. SASRec [22] is a sequential model based on self-attention; it exploits the temporal order of user actions to identify relevant items in the user's history and predict the next item, with impressive results. In [23], Tal et al. proposed two models, DARIA and SARAH, which use the user's personalized historical information and item attribute information to assist recommendation through a dual attention mechanism, and obtained good experimental results.

In this research, we propose a multi-head attention autoencoder matrix factorization (MAAMF) model. Its core is the multi-head attention autoencoder (MAA), an autoencoder fused with a multi-head self-attention mechanism that mines the auxiliary information of users and items; the MAA outputs are combined with probability matrix factorization to realize rating prediction. Autoencoders with a dual multi-head self-attention mechanism effectively extract the hidden factors of user attribute information and video category information and focus on the vital information on the user side and the video side, thus improving the interpretability of the deep learning-based recommendation model. Incorporating probability matrix factorization to achieve rating prediction effectively alleviates the data sparsity and cold start problems. The main contributions of this paper are summarized as follows:

- (1) It utilizes the user's attribute information and the video's category information to reveal the latent factors of users and videos, respectively, and combines them with probability matrix factorization to realize rating prediction.

- (2) It explores the role of the self-attention mechanism and the multi-head self-attention mechanism in mining the hidden factors of side information.

- (3) We carried out extensive experiments on two public datasets, extracting features from user attribute information only, from item category information only, and from user and item side information simultaneously through the self-attention mechanism and through the multi-head self-attention mechanism, to illustrate the effectiveness of the proposed MAAMF model.

2. Related Work

2.1. Matrix Factorization

Matrix factorization [24] is one of the most frequently used recommendation algorithms, and it played a central role in the well-known Netflix Prize competition. Matrix factorization has attracted considerable attention due to its high recommendation accuracy, strong scalability, and ease of integrating side information. Common matrix factorization methods include SVD [25] and NMF [26]. These methods predict ratings by decomposing a high-dimensional sparse rating matrix into low-dimensional matrices whose product approximates the original rating matrix. EigenRec [27] realizes top-N recommendation by constructing a low-dimensional representation of item-item similarity and combining it with the classic PureSVD algorithm. In [28,29,30], Liu et al. discussed the evolution from traditional matrix factorization to modern RSs that use neural networks for multi-objective learning [31]. The abovementioned recommendation methods rely only on the user's attribute information or only on the category information of the videos. This paper aims to use user attribute cues and video category cues together to predict ratings accurately; hence, the self-attention and multi-head self-attention mechanisms are introduced to mine the hidden factors of side information from users and videos.
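The decomposition described above can be sketched as a plain matrix factorization trained with stochastic gradient descent on the observed entries only. This is a minimal illustration, assuming a squared-error loss with L2 regularization; the learning rate, regularization strength, latent dimension, and epoch count are arbitrary illustrative values, not settings taken from SVD, NMF, or this paper.

```python
import math
import random


def factorize(ratings, k=2, lr=0.01, reg=0.02, epochs=2000, seed=0):
    """Approximate ratings as U V^T using only observed (non-zero) entries."""
    rng = random.Random(seed)
    n_users, n_items = len(ratings), len(ratings[0])
    U = [[rng.gauss(0.0, 0.5) for _ in range(k)] for _ in range(n_users)]
    V = [[rng.gauss(0.0, 0.5) for _ in range(k)] for _ in range(n_items)]
    observed = [(i, j, r) for i, row in enumerate(ratings)
                for j, r in enumerate(row) if r > 0]
    for _ in range(epochs):
        rng.shuffle(observed)
        for i, j, r in observed:
            err = r - sum(U[i][f] * V[j][f] for f in range(k))
            for f in range(k):
                u_f, v_f = U[i][f], V[j][f]
                # SGD step on (r - u.v)^2 + reg * (|u|^2 + |v|^2); factor 2 folded into lr
                U[i][f] += lr * (err * v_f - reg * u_f)
                V[j][f] += lr * (err * u_f - reg * v_f)
    return U, V


def training_rmse(ratings, U, V):
    errors = [(r - sum(a * b for a, b in zip(U[i], V[j]))) ** 2
              for i, row in enumerate(ratings) for j, r in enumerate(row) if r > 0]
    return math.sqrt(sum(errors) / len(errors))


ratings = [
    [5, 3, 0, 1],
    [4, 0, 0, 1],
    [1, 1, 0, 5],
    [1, 0, 0, 4],
    [0, 1, 5, 4],
]
U, V = factorize(ratings)
print(round(training_rmse(ratings, U, V), 3))
# A missing entry is filled in by the dot product of the learned factors.
print(sum(a * b for a, b in zip(U[1], V[2])))
```

Only observed ratings enter the loss, which is what lets a low-rank product approximate a very sparse matrix and fill in the missing cells.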

2.2. Collaborative Filtering

With the advancement of information technology, side information about users or videos, for instance, user or item attribute information, user portrait information, social network information, and comment information, is recorded and stored. The features of users and videos, as well as user preferences, are hidden in these big data. How to extract useful information from these data to improve recommendation accuracy and reduce the cold start and data sparsity problems is a current research hotspot. Numerous studies use text comment information to assist recommendation. For instance, ConvMF [32], DeepCoNN [33], D-Attn [34], ANR [35], CARL [36], DAML [37], and other models merge all comments of a user or item into a long document and then build a model on it to assist recommendation. Meanwhile, NARRE [38], TARMF [39], and MPCN [40] model each individual comment of the user or item to obtain per-comment features, which are then combined to form the features of the user or item. Moreover, many studies use social network information to assist recommendation, such as [41,42,43,44], or combine social network and text information, such as [14,45]. Extracting hidden information from large-scale data is the main challenge faced by RSs. In [46], Anastasiu et al. put forward a research plan on how to find nearest neighbors in big data and how to extend latent factor models. In [47], Sardianos et al. and in [48], David et al. surveyed various aspects of large-scale social network-based RSs, summarized their challenges and open problems, and discussed specific solutions. Current research shows that multi-task learning and ensemble learning have clear advantages and are powerful means of solving the multi-source information fusion problem [49,50].

In video recommendation, however, intuition suggests that people of different genders, age groups, occupations, and regions have distinct preferences. Similarly, many video websites classify videos by category, and a preference such as children liking cartoons can be captured through categories; most people prefer one or more categories of video. In [51], Zhao et al. applied a deep learning-based recommendation model to industrial video recommendation: they proposed a large-scale multi-objective ranking system, applied it to next-video recommendation, and achieved good results. In [52], Chen et al. assisted memory-based collaborative filtering by fusing video category information, which improved the accuracy, coverage, precision, and recall of prediction, but their method did not consider the impact of user side information on video recommendation. Using the side information of users and videos simultaneously for ensemble learning is a vital way to improve the accuracy of video recommendation. Recently, knowledge graphs [53,54], which have achieved significant success in natural language processing, have also been introduced as side information for recommendation algorithms. Although existing studies have made progress, to the best of our knowledge no work has jointly considered users' attribute information and videos' category information on an online platform to predict ratings.

3. Proposed MAAMF Model

This section first introduces the structure of the proposed deep hybrid rating prediction model MAAMF, which integrates the dual multi-head self-attention mechanism. Autoencoders with the multi-head self-attention mechanism determine the latent factors of the user side and the item side, and these factors are then integrated into probability matrix factorization to achieve rating prediction. The principle of the MAAMF model is then explained in detail. Finally, the MAAMF model is optimized through maximum a posteriori estimation. Table 1 summarizes the symbols used in this article.

Table 1.

Summary of symbols.

3.1. MAAMF Model Structure

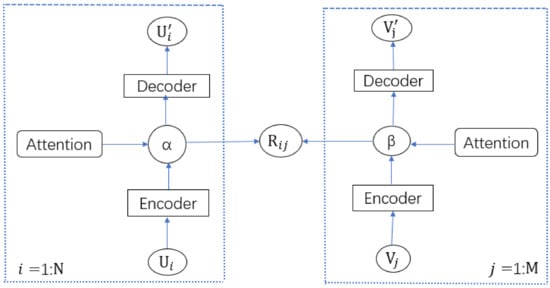

Figure 1 shows the structure of the deep hybrid rating prediction model MAAMF fused with the dual multi-head self-attention mechanism. In the figure, N is the number of users, M is the number of videos, R is the user-video rating matrix, and $R_{ij}$ is the rating of the i-th user on the j-th video. $A_i$ is the side information of the i-th user, such as the user's ratings and attribute information, and $C_j$ is the side information of the j-th video, such as the video's ratings and category information. α is the compressed representation of $A_i$, and β is the compressed representation of $C_j$. λu is the user regularization parameter and λv is the item regularization parameter. Decompressing α and β through the autoencoder fused with the multi-head attention mechanism yields new approximate representations of the user and video side information, respectively. The purpose of MAAMF is to integrate MAA into the PMF framework and achieve rating prediction through PMF and two MAAs.

Figure 1.

MAAMF model structure.

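This study aims to identify the latent matrices of users and videos, $U \in \mathbb{R}^{k \times N}$ and $V \in \mathbb{R}^{k \times M}$, where k is the dimension of the latent factors, to reconstruct the rating matrix R. The conditional distribution of the observed ratings is

$$p(R \mid U, V, \sigma^2) = \prod_{i=1}^{N} \prod_{j=1}^{M} \left[\mathcal{N}\left(R_{ij} \mid U_i^{\top} V_j, \sigma^2\right)\right]^{I_{ij}} \qquad (1)$$

where $\mathcal{N}(x \mid \mu, \sigma^2)$ is the probability density function of the Gaussian distribution with mean $\mu$ and variance $\sigma^2$, and $I_{ij}$ is the indicator function, which equals 1 if user i rated video j and 0 otherwise.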

3.2. MAAMF Model Principle

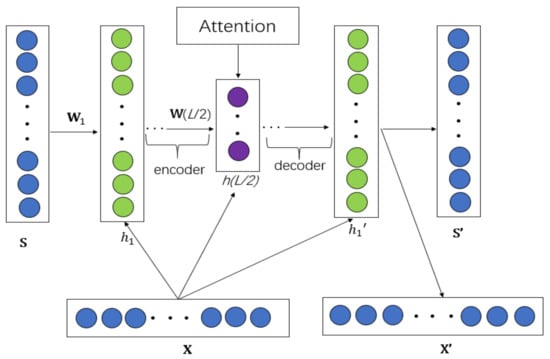

The core of the MAAMF model is the MAA module: two MAA modules obtain the latent factors of users and videos from user side information and video side information, respectively. The MAA module is shown in Figure 2. In the figure, S represents the side information, specifically user attribute information or video category information in this model, and X represents user or item rating information. S' and X' represent the decompressed (reconstructed) side information and rating information, respectively. h represents a hidden layer, and L is the number of hidden layers; h1 is the first hidden layer and h(L/2) is the (L/2)-th hidden layer. W represents the weight parameters; W1 is the weight parameter of the first layer and W(L/2) is that of the (L/2)-th layer.

Figure 2.

MAA module.

The input layer takes the preprocessed side information tensor as the input of the neural network. In this study, the user side input is the concatenation of the user's rating vector and the user's attribute features, and the video side input is the concatenation of the video's rating vector and the video's category information.

The encoding layer contains four fully connected layers whose widths shrink layer by layer from 400 to 200 to 100 to 50. Each fully connected layer is followed by batch normalization, then the ReLU activation function, and finally dropout to prevent overfitting.

The multi-head attention layer selects vital features. The multi-head attention mechanism [55] first applies linear transformations to the queries Q, keys K, and values V and then feeds the results to scaled dot-product attention; this is done h times, once per head, hence the name multi-head. For the i-th head, $W_i^Q$, $W_i^K$, and $W_i^V$ are the parameter matrices of Q, K, and V in the i-th transformation, and $W^O$ is the output weight matrix, as shown in Equations (2) and (3), where $d_k$ is the dimension of the keys. The parameters are not shared among heads; the h scaled dot-product attention results are concatenated and linearly transformed, and the result is used as the output of multi-head attention:

$$\mathrm{head}_i = \mathrm{Attention}\left(Q W_i^Q, K W_i^K, V W_i^V\right), \quad \mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V \qquad (2)$$

$$\mathrm{MultiHead}(Q, K, V) = \mathrm{Concat}\left(\mathrm{head}_1, \ldots, \mathrm{head}_h\right) W^O \qquad (3)$$

The multi-head attention mechanism is built on self-attention. Self-attention relates each input to all other inputs, and its computation consists mainly of matrix multiplications. Three linear transformations are applied to the output of the embedding layer to obtain a query matrix Q, a key matrix K, and a value matrix V. The dot products between the queries and the keys give scores, which are divided by the square root of the key dimension $d_k$ and normalized with softmax into weights; the weighted average of the value matrix V under these weights is the scaled dot-product attention. In practice, several self-attention mechanisms are run in parallel to form multi-head attention: each head uses its own projection matrices to weight the input values, and the weighted results of all heads are concatenated. Because each head can learn a different weighting pattern, the concatenation captures relationships among the inputs, including those between distant inputs, that a single head might miss.
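Scaled dot-product and multi-head self-attention can be illustrated with a small forward pass. This is a sketch under stated assumptions: the input X is treated as a short sequence of feature vectors (how MAA arranges its encoded features into such a sequence is not specified in the text, so that step is hypothetical), the projection matrices are drawn at random instead of being learned, and all helper names are illustrative.

```python
import math
import random


def matmul(a, b):
    """Multiply matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V, returning outputs and attention weights."""
    d_k = len(K[0])
    scores = matmul(Q, transpose(K))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, V), weights


def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0 / math.sqrt(rows)) for _ in range(cols)]
            for _ in range(rows)]


def multi_head_self_attention(X, num_heads, d_k, rng):
    """Each head has its own W_i^Q, W_i^K, W_i^V; heads are concatenated, then W^O."""
    d_model = len(X[0])
    heads = []
    for _ in range(num_heads):
        W_q = random_matrix(d_model, d_k, rng)
        W_k = random_matrix(d_model, d_k, rng)
        W_v = random_matrix(d_model, d_k, rng)
        out, _ = scaled_dot_product_attention(
            matmul(X, W_q), matmul(X, W_k), matmul(X, W_v))
        heads.append(out)
    # Concatenate the head outputs position by position: shape (T, num_heads * d_k)
    concat = [sum((head[t] for head in heads), []) for t in range(len(X))]
    W_o = random_matrix(num_heads * d_k, d_model, rng)
    return matmul(concat, W_o)


rng = random.Random(0)
X = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(4)]  # 4 positions, 8 features
Y = multi_head_self_attention(X, num_heads=2, d_k=4, rng=rng)
print(len(Y), len(Y[0]))  # 4 8
```

Every row of attention weights is a probability distribution over all input positions, so a single head already sees every input; using several heads gives several independent weightings whose concatenation is projected back to the model width.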

The decoding layer mirrors the encoding layer, decompressing layer by layer from 50 to 100 to 200 to 400. Likewise, each fully connected layer is followed by batch normalization, then the ReLU activation function, and finally dropout to prevent overfitting.
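The symmetric encoder and decoder can be sketched as a forward pass through fully connected blocks, each applying batch normalization, ReLU, and dropout. This is an illustrative sketch, not the authors' Keras implementation: weights are random (He-style initialization), batch normalization always uses the statistics of the current batch with no learned scale or shift, the multi-head attention layer between encoder and decoder is omitted, and the final linear layer that maps back to the input width is an assumption.

```python
import math
import random

ENCODER_WIDTHS = [400, 200, 100, 50]  # compression 400 -> 200 -> 100 -> 50
DECODER_WIDTHS = [100, 200, 400]      # decompression 50 -> 100 -> 200 -> 400


def init_dense(n_in, n_out, rng):
    """Random weights (n_in x n_out) with He-style scaling, zero biases."""
    scale = math.sqrt(2.0 / n_in)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_out)] for _ in range(n_in)]
    return weights, [0.0] * n_out


def dense(batch, layer):
    weights, bias = layer
    cols = list(zip(*weights))
    return [[sum(x * w for x, w in zip(row, col)) + b for col, b in zip(cols, bias)]
            for row in batch]


def batch_norm(batch, eps=1e-5):
    """Normalize each feature over the batch (no learned gamma/beta in this sketch)."""
    n = len(batch)
    columns = list(zip(*batch))
    means = [sum(col) / n for col in columns]
    variances = [sum((v - m) ** 2 for v in col) / n for col, m in zip(columns, means)]
    return [[(v - m) / math.sqrt(var + eps) for v, m, var in zip(row, means, variances)]
            for row in batch]


def relu(batch):
    return [[max(0.0, v) for v in row] for row in batch]


def dropout(batch, rate, rng, training):
    """Inverted dropout: zero units with probability `rate`, rescale the survivors."""
    if not training or rate == 0.0:
        return batch
    keep = 1.0 - rate
    return [[v / keep if rng.random() < keep else 0.0 for v in row] for row in batch]


def build_autoencoder(input_dim, rng):
    widths = [input_dim] + ENCODER_WIDTHS + DECODER_WIDTHS
    hidden = [init_dense(a, b, rng) for a, b in zip(widths, widths[1:])]
    output = init_dense(widths[-1], input_dim, rng)  # assumed linear reconstruction layer
    return hidden, output


def forward(batch, model, rate, rng, training=True):
    """Return (50-dim code, reconstruction); each block is FC -> BN -> ReLU -> dropout."""
    hidden, output = model
    h, code = batch, None
    for depth, layer in enumerate(hidden):
        h = dropout(relu(batch_norm(dense(h, layer))), rate, rng, training)
        if depth == len(ENCODER_WIDTHS) - 1:
            code = h  # bottleneck, where the attention layer would act
    return code, dense(h, output)


rng = random.Random(0)
side_info = [[rng.random() for _ in range(30)] for _ in range(4)]  # 4 samples, 30 features
model = build_autoencoder(30, rng)
code, reconstruction = forward(side_info, model, rate=0.2, rng=rng)
print(len(code[0]), len(reconstruction[0]))  # 50 30
```

Passing `training=False` disables dropout, which is how the same network would be used when producing latent vectors for prediction.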

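In traditional PMF, the prior over the user latent model is a zero-mean spherical Gaussian distribution with variance $\sigma_U^2$, as shown in Equation (4):

$$p(U \mid \sigma_U^2) = \prod_{i=1}^{N} \mathcal{N}\left(U_i \mid 0, \sigma_U^2 I\right) \qquad (4)$$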

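Different from the traditional PMF model, we assume that the user latent vector is generated by the MAA module with internal weights $W_u$ from the side information $A_i$ of the i-th user, perturbed by Gaussian noise $\epsilon_i$, where $\mathrm{MAA}(W_u, A_i)$ denotes the latent vector output by the MAA module. This gives Equation (5):

$$U_i = \mathrm{MAA}(W_u, A_i) + \epsilon_i, \quad \epsilon_i \sim \mathcal{N}\left(0, \sigma_U^2 I\right) \qquad (5)$$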

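For the internal weights $W_u$ of the user-side MAA, we place a zero-mean Gaussian prior, given in Equation (6):

$$p(W_u \mid \sigma_{W_u}^2) = \prod_{w \in W_u} \mathcal{N}\left(w \mid 0, \sigma_{W_u}^2\right) \qquad (6)$$

where $\sigma_{W_u}^2$ is its variance and the product runs over every weight w in $W_u$. The conditional distribution of the user latent model is then written as

$$p(U \mid W_u, A, \sigma_U^2) = \prod_{i=1}^{N} \mathcal{N}\left(U_i \mid \mathrm{MAA}(W_u, A_i), \sigma_U^2 I\right) \qquad (7)$$

where A represents the side information of the users, the latent vector $\mathrm{MAA}(W_u, A_i)$ obtained by the MAA module is used as the mean of the Gaussian distribution, $\sigma_U^2$ is its variance, I is the identity matrix, and N is the number of users.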

Similarly, Equation (8) gives the prior probability density function of the internal weights $W_v$ of the video-side MAA, and Equation (9) gives the conditional distribution of the video latent model. Here $\sigma_{W_v}^2$ is the variance of the weight prior, C represents the side information of the videos, the latent vector $\mathrm{MAA}(W_v, C_j)$ obtained by the MAA module is used as the mean of the Gaussian distribution, $\sigma_V^2$ is its variance, I is the identity matrix, and M is the number of videos:

$$p(W_v \mid \sigma_{W_v}^2) = \prod_{w \in W_v} \mathcal{N}\left(w \mid 0, \sigma_{W_v}^2\right) \qquad (8)$$

$$p(V \mid W_v, C, \sigma_V^2) = \prod_{j=1}^{M} \mathcal{N}\left(V_j \mid \mathrm{MAA}(W_v, C_j), \sigma_V^2 I\right) \qquad (9)$$

3.3. Model Optimization

The variables U, V, $W_u$, and $W_v$ are optimized by maximum a posteriori estimation. In Equation (10), A represents the user side information, C the video side information, and R the rating matrix; U and V are the user and video latent factors, i and j index users and videos, N is the number of users, M is the number of videos, $W_u$ and $W_v$ are the internal weights of the two MAA modules, and each $\sigma^2$ is the variance of the corresponding Gaussian distribution:

$$p(U, V, W_u, W_v \mid R, A, C, \sigma^2, \sigma_U^2, \sigma_V^2, \sigma_{W_u}^2, \sigma_{W_v}^2) \propto p(R \mid U, V, \sigma^2)\, p(U \mid W_u, A, \sigma_U^2)\, p(W_u \mid \sigma_{W_u}^2)\, p(V \mid W_v, C, \sigma_V^2)\, p(W_v \mid \sigma_{W_v}^2) \qquad (10)$$

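Taking the logarithm of both sides of Equation (10) gives Equation (11):

$$\log p(U, V, W_u, W_v \mid R, A, C, \sigma^2, \sigma_U^2, \sigma_V^2, \sigma_{W_u}^2, \sigma_{W_v}^2) = \log p(R \mid U, V, \sigma^2) + \log p(U \mid W_u, A, \sigma_U^2) + \log p(W_u \mid \sigma_{W_u}^2) + \log p(V \mid W_v, C, \sigma_V^2) + \log p(W_v \mid \sigma_{W_v}^2) + \text{const} \qquad (11)$$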

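Substituting Equations (1), (6), (7), (8), and (9) into Equation (11), negating both sides, multiplying by $\sigma^2$ (which does not change the minimizer), and dropping constant terms gives the loss in Equation (12), where $\mathrm{MAA}(W, \cdot)$ is the latent vector output by the corresponding MAA module:

$$\mathcal{L}(U, V, W_u, W_v) = \sum_{i=1}^{N} \sum_{j=1}^{M} \frac{I_{ij}}{2} \left(R_{ij} - U_i^{\top} V_j\right)^2 + \frac{\lambda_u}{2} \sum_{i=1}^{N} \left\lVert U_i - \mathrm{MAA}(W_u, A_i) \right\rVert^2 + \frac{\lambda_{W_u}}{2} \sum_{w \in W_u} w^2 + \frac{\lambda_v}{2} \sum_{j=1}^{M} \left\lVert V_j - \mathrm{MAA}(W_v, C_j) \right\rVert^2 + \frac{\lambda_{W_v}}{2} \sum_{w \in W_v} w^2 \qquad (12)$$

where $\lambda_u = \sigma^2 / \sigma_U^2$, $\lambda_v = \sigma^2 / \sigma_V^2$, $\lambda_{W_u} = \sigma^2 / \sigma_{W_u}^2$, and $\lambda_{W_v} = \sigma^2 / \sigma_{W_v}^2$.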
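The loss in Equation (12) can be evaluated directly once the MAA outputs are available. The sketch below assumes those outputs have already been computed and are passed in as plain vectors (`maa_users[i]` standing in for the MAA output for user i, `maa_videos[j]` for video j), that the network weights are supplied as flat lists, and that a zero entry in R marks an unobserved rating; the function name is illustrative.

```python
def map_loss(R, U, V, maa_users, maa_videos, weights_u, weights_v,
             lam_u, lam_v, lam_wu, lam_wv):
    """Negative log posterior of Equation (12), up to additive constants."""
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    # Squared error on observed ratings only (I_ij = 1 where R_ij > 0)
    fit = sum(0.5 * (r - dot(U[i], V[j])) ** 2
              for i, row in enumerate(R) for j, r in enumerate(row) if r > 0)
    # Latent vectors are pulled toward the MAA outputs
    user_term = 0.5 * lam_u * sum(sq_dist(u, m) for u, m in zip(U, maa_users))
    video_term = 0.5 * lam_v * sum(sq_dist(v, m) for v, m in zip(V, maa_videos))
    # L2 penalty on the MAA weights, coming from the Gaussian weight priors
    weight_term = (0.5 * lam_wu * sum(w * w for w in weights_u)
                   + 0.5 * lam_wv * sum(w * w for w in weights_v))
    return fit + user_term + video_term + weight_term


R = [[2, 0],
     [0, 3]]
U = [[1.0], [1.0]]
V = [[2.0], [3.0]]
print(map_loss(R, U, V, U, V, [], [], 1.0, 1.0, 1.0, 1.0))  # 0.0
print(map_loss(R, U, V, [[0.0], [1.0]], V, [], [], 2.0, 1.0, 1.0, 1.0))  # 1.0
```

In the second call the ratings are still fitted exactly, so the whole loss comes from the first user's latent vector deviating from its MAA output, scaled by λu/2.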

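We use the coordinate descent method to minimize $\mathcal{L}$ in Equation (12), iteratively optimizing one group of latent variables while fixing the remaining parameters. With V, $W_u$, and $W_v$ fixed, Equation (12) is a quadratic function of U, so setting its derivative with respect to $U_i$ to zero gives a closed-form update; the same operation on V gives the update for $V_j$:

$$U_i \leftarrow \left(V I_i V^{\top} + \lambda_u I_k\right)^{-1} \left(V R_i + \lambda_u \mathrm{MAA}(W_u, A_i)\right) \qquad (13)$$

$$V_j \leftarrow \left(U I_j U^{\top} + \lambda_v I_k\right)^{-1} \left(U R_j + \lambda_v \mathrm{MAA}(W_v, C_j)\right) \qquad (14)$$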

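where $I_i$ and $I_j$ are diagonal matrices whose diagonal entries are the indicators $I_{ij}$ of user i and video j, $R_i$ and $R_j$ are the rating vectors of user i and video j (unobserved entries set to zero), $I_k$ is the identity matrix of the latent dimension k, and $\lambda_u$ and $\lambda_v$ are balance parameters. Equation (13) shows that the user latent vector $U_i$ is updated with $\mathrm{MAA}(W_u, A_i)$, the MAA output for user i, acting as a prior mean weighted by $\lambda_u$; Equation (14) shows the same role of $\mathrm{MAA}(W_v, C_j)$ for the video latent vector $V_j$. However, $W_u$ and $W_v$ cannot be updated in closed form like U and V, because they enter the loss through the nonlinear MAA architecture. When U and V are held fixed, the loss reduces to a regularized weighted squared error function of the weights, given in Equations (15) and (16), which can be minimized by training the MAA networks with backpropagation:

$$\mathcal{E}(W_u) = \frac{\lambda_u}{2} \sum_{i=1}^{N} \left\lVert U_i - \mathrm{MAA}(W_u, A_i) \right\rVert^2 + \frac{\lambda_{W_u}}{2} \sum_{w \in W_u} w^2 + \text{const} \qquad (15)$$

$$\mathcal{E}(W_v) = \frac{\lambda_v}{2} \sum_{j=1}^{M} \left\lVert V_j - \mathrm{MAA}(W_v, C_j) \right\rVert^2 + \frac{\lambda_{W_v}}{2} \sum_{w \in W_v} w^2 + \text{const} \qquad (16)$$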

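By optimizing U, V, $W_u$, and $W_v$, we finally obtain the predicted rating of user i on video j in Equation (17):

$$\hat{R}_{ij} \approx \mathbb{E}\left[R_{ij} \mid U_i, V_j, \sigma^2\right] = U_i^{\top} V_j \qquad (17)$$

where $\mathbb{E}[\cdot]$ denotes the expected value of the rating.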
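The closed-form updates in Equations (13) and (14) and the prediction in Equation (17) can be sketched as follows. This minimal version assumes the MAA outputs are fixed vectors supplied by the caller (in the full procedure they would be refreshed after retraining the MAA weights on Equations (15) and (16), which is not shown), stores each latent vector as a row, treats zero ratings as unobserved, and uses a small hand-written linear solver; all function names are illustrative.

```python
def solve(A, b):
    """Solve A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(n):
            if r != col:
                factor = M[r][col] / M[col][col]
                for c in range(col, n + 1):
                    M[r][c] -= factor * M[col][c]
    return [M[r][n] / M[r][r] for r in range(n)]


def update_latent(ratings_row, others, prior_mean, lam):
    """(sum_j I_j o_j o_j^T + lam I)^-1 (sum_j I_j r_j o_j + lam * prior_mean)."""
    k = len(prior_mean)
    A = [[lam if a == b else 0.0 for b in range(k)] for a in range(k)]
    rhs = [lam * m for m in prior_mean]
    for r, o in zip(ratings_row, others):
        if r > 0:  # indicator: only observed ratings contribute
            for a in range(k):
                rhs[a] += r * o[a]
                for b in range(k):
                    A[a][b] += o[a] * o[b]
    return solve(A, rhs)


def coordinate_descent(R, V, maa_users, maa_videos, lam_u, lam_v, sweeps=10):
    """Alternate Equation (13) for every user and Equation (14) for every video."""
    R_T = [list(col) for col in zip(*R)]
    U = None
    for _ in range(sweeps):
        U = [update_latent(R[i], V, maa_users[i], lam_u) for i in range(len(R))]
        V = [update_latent(R_T[j], U, maa_videos[j], lam_v) for j in range(len(R_T))]
    return U, V


def predict(U, V, i, j):
    """Equation (17): the predicted rating is U_i^T V_j."""
    return sum(a * b for a, b in zip(U[i], V[j]))


R = [[2, 4],
     [3, 6]]
U, V = coordinate_descent(R, V=[[1.0], [1.0]], maa_users=[[0.0], [0.0]],
                          maa_videos=[[0.0], [0.0]], lam_u=1e-6, lam_v=1e-6)
print(round(predict(U, V, 1, 1), 3))  # 6.0
```

A user with no observed ratings receives exactly the MAA output as its latent vector, which is how the side information addresses the cold start case.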

4. Experimental Results and Discussion

In this section, two public datasets from the video domain are used to verify the proposed MAAMF model. First, the datasets and evaluation metrics are described. Then, the experimental environment and basic parameter settings are introduced, and the baseline models are briefly described. Finally, the best results obtained through experimental tuning are analyzed and compared with the baseline models.

4.1. Datasets and Evaluation Indicators

To evaluate the performance of the MAAMF model, experiments were conducted on the public MovieLens dataset [56], one of the most widely used open datasets in video recommendation. User ratings of films range from 1 to 5. The experiments use two subsets, ML-100k and ML-1m, whose statistics are shown in Table 2: ML-100k contains on the order of 0.1 million ratings, and ML-1m on the order of 1 million ratings.

Table 2.

Statistics of the two preprocessed datasets.

Prediction accuracy measures a recommendation model's ability to predict user behavior and is the most important offline evaluation metric for recommendation algorithms. This research aims to improve the accuracy of rating prediction, so the root mean square error (RMSE) and mean absolute error (MAE) are used to evaluate the MAAMF model. For a user u and video v in the test set T, $r_{uv}$ is the actual rating of user u for video v, $\hat{r}_{uv}$ is the rating predicted by the recommendation model, and $|T|$ is the number of ratings in the test set. The definitions are given in Equations (18) and (19), respectively:

$$\mathrm{RMSE} = \sqrt{\frac{1}{|T|} \sum_{(u, v) \in T} \left(r_{uv} - \hat{r}_{uv}\right)^2} \qquad (18)$$

$$\mathrm{MAE} = \frac{1}{|T|} \sum_{(u, v) \in T} \left| r_{uv} - \hat{r}_{uv} \right| \qquad (19)$$
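RMSE and MAE translate directly into code. The sketch below assumes the test-set ratings and the model's predictions are given as two aligned lists; the example numbers are made up for illustration.

```python
import math


def rmse(actual, predicted):
    """Root mean square error over aligned lists of ratings."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("need two non-empty lists of equal length")
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mae(actual, predicted):
    """Mean absolute error over aligned lists of ratings."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("need two non-empty lists of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


actual = [4, 3, 5]           # hypothetical test-set ratings
predicted = [3.5, 3.0, 4.0]  # hypothetical model predictions
print(mae(actual, predicted))             # 0.5
print(round(rmse(actual, predicted), 4))  # 0.6455
```

Because squaring weights large errors more heavily, RMSE is always at least as large as MAE on the same predictions.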

4.2. Experimental Software and Hardware Environment and Parameter Settings

The experiments were carried out in the integrated development platform PyCharm Community Edition 2020.1.1 x64 on Windows 10, using Python 3.7 and the Keras 2.3.1 deep learning library. The hardware environment is an Intel Core i7-4790K 4.00 GHz CPU with a GeForce GTX 1080 GPU and 32 GB of RAM.

The video side has 18 categories; an empty category is added to form 19 categories. If a video has no category, its 19th column is set to 1; otherwise, 1 is marked in the corresponding column. If a video belongs to more than one category, 1 is marked in each corresponding column, yielding the category representation matrix of the videos. The side information on the user side consists of the user's ID, gender, age, occupation, and postcode, which are transformed into a vector through one-hot encoding. The data are split into 80% for training, 10% for cross-validation, and 10% for testing. The dimension of the latent factors is 50.
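The preprocessing described above can be sketched as follows. This is a minimal illustration, assuming categories are given as integer indices 0 to 17, user attributes arrive as a dictionary with hypothetical field names, and the 80/10/10 split is a random shuffle of rating records; none of these names come from the MovieLens files or the authors' code.

```python
import random

NUM_CATEGORIES = 18
EMPTY_CATEGORY = NUM_CATEGORIES  # index of the added 19th "no category" column
USER_FIELDS = ("user_id", "gender", "age", "occupation", "postcode")  # hypothetical keys


def category_vector(category_ids):
    """Multi-hot vector of length 19; the last column flags videos with no category."""
    vec = [0] * (NUM_CATEGORIES + 1)
    if not category_ids:
        vec[EMPTY_CATEGORY] = 1
    for c in category_ids:
        vec[c] = 1
    return vec


def user_vector(user, vocabularies):
    """Concatenate one-hot encodings of each user attribute."""
    vec = []
    for field in USER_FIELDS:
        vec += [1 if user[field] == value else 0 for value in vocabularies[field]]
    return vec


def split_80_10_10(records, seed=0):
    """Shuffle and split into training, cross-validation, and test sets."""
    records = list(records)
    random.Random(seed).shuffle(records)
    n_train = int(0.8 * len(records))
    n_valid = int(0.1 * len(records))
    return (records[:n_train],
            records[n_train:n_train + n_valid],
            records[n_train + n_valid:])


vocab = {"user_id": [1, 2], "gender": ["F", "M"], "age": [18, 25],
         "occupation": ["artist", "doctor"], "postcode": ["10001", "94110"]}
user = {"user_id": 2, "gender": "F", "age": 25,
        "occupation": "doctor", "postcode": "10001"}
print(category_vector([]))
print(user_vector(user, vocab))
print([len(part) for part in split_80_10_10(range(100))])  # [80, 10, 10]
```

The multi-hot category vector and the concatenated one-hot user vector are the kind of side information that would be concatenated with rating vectors to form the MAA inputs.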

4.3. Baseline Models

Six well-known baseline models, PMF, SVD, aSDAE, R-ConvMF, PHD, and DUPIA, are compared with the proposed MAAMF model. They are briefly described as follows:

PMF [24]: Probabilistic matrix factorization is a widely known collaborative filtering model. It treats rating prediction from a probabilistic perspective and assumes that ratings follow a Gaussian distribution.

SVD [25]: The singular value decomposition model is a prominent matrix factorization model that uses rating information to achieve rating prediction.

aSDAE [57]: aSDAE is a two-tower model that extracts the hidden factors of the auxiliary information on the user side and the item side through two SDAEs. The item side information is encoded with a bag-of-words model as the input of the deep learning model.

R-ConvMF [58]: R-ConvMF is a document context-aware hybrid recommendation model. It integrates a CNN into the PMF framework to capture contextual information and uses item statistics to model Gaussian noise differently.

PHD [59]: PHD is a hybrid model that uses an autoencoder to extract user-side hidden factors and a convolutional neural network to extract item-side text hidden factors, which are then integrated into the PMF framework to achieve rating prediction.

DUPIA [60]: The DUPIA model builds on the PHD model and adds a self-attention mechanism to the CNN on the item side.

4.4. Analysis of Experimental Results

In this part, we first explore the influence of different parameters on the experimental results and then compare the proposed MAAMF model with the baseline models.

4.4.1. Influence of Balance Parameters on Experimental Results

Table 3 and Table 4 report RMSE and MAE on the two public datasets under different combinations of λu and λv, with the best results shown in bold. The MAAMF model achieves its best results with λu = 8, λv = 50 on ML-100k and λu = 3, λv = 120 on ML-1m. This indicates that an appropriate combination of λu and λv plays a vital role in balancing user-side and item-side information, and that tuning these hyperparameters strongly affects RMSE and MAE.

Table 3.

Influence of λu and λv on RMSE and MAE on ML-100k.

Table 4.

Influence of λu and λv on RMSE and MAE on ML-1m.

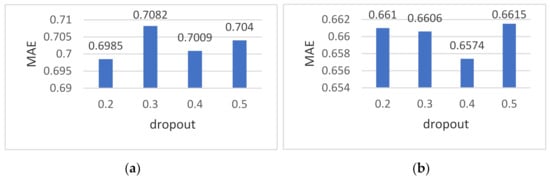

4.4.2. Influence of Different Dropouts on the Experimental Results

Dropout is one of the most effective and commonly used regularization methods in neural networks. It reduces the risk of overfitting by randomly discarding some of a layer's output features during training. Figure 3a,b show that ML-100k achieves its optimal MAE of 0.6985 with a dropout rate of 0.2, and ML-1m achieves its optimal MAE of 0.6574 with a dropout rate of 0.4. By reducing overfitting, dropout regularization significantly improved the model's generalization ability.

Figure 3.

(a) Description of MAE values under different dropouts on the ML-100k dataset; (b) Description of MAE values under different dropouts on the ML-1m dataset.

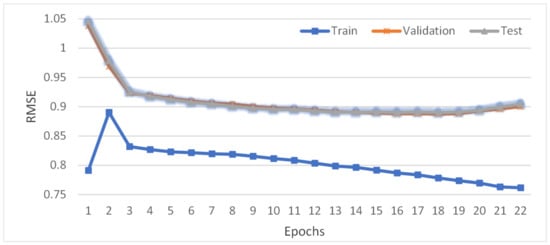

4.4.3. Changes of RMSE Value with the Number of Iterations

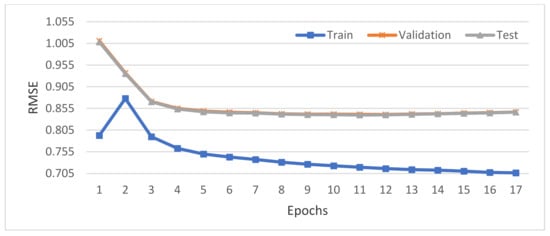

In the experiment, training is terminated once the RMSE on the test set has increased more than five times during the iteration process. Figures 4 and 5 show that the RMSE values on the cross-validation set and the test set are very close. The RMSE on the training set increased once and then decreased continuously, whereas the RMSE on the cross-validation and test sets first decreased and then slowly increased.
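The stopping rule can be sketched as a small training loop that monitors an RMSE value after every iteration. This assumes the rule counts every increase of the monitored RMSE over the previous iteration (not necessarily consecutive ones) and stops once the count exceeds five; `run_epoch` is a hypothetical callable that trains for one iteration and returns the monitored RMSE.

```python
def train_with_early_stopping(run_epoch, max_epochs=200, max_increases=5):
    """Stop once the monitored RMSE has risen more than `max_increases` times."""
    history, best = [], float("inf")
    increases = 0
    for _ in range(max_epochs):
        score = run_epoch()
        if history and score > history[-1]:
            increases += 1
        history.append(score)
        best = min(best, score)
        if increases > max_increases:
            break
    return history, best


# Scripted RMSE curve standing in for real training (illustrative numbers).
curve = iter([1.00, 0.95, 0.92, 0.93, 0.92, 0.94, 0.95, 0.96, 0.97, 0.98, 0.99])
history, best = train_with_early_stopping(lambda: next(curve), max_epochs=11)
print(len(history), best)  # 10 0.92
```

The loop stops at the sixth increase, so the last scripted value is never consumed, while the best RMSE seen so far is kept for reporting.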

Figure 4.

Changes of the RMSE of the training set, cross-validation set, and test set under ML-100k with the number of iterations.

Figure 5.

Changes of the RMSE of the training set, cross-validation set, and test set under ML-1m with the number of iterations.

4.4.4. Total Results of the Experiment

Table 5 shows the RMSE results of the proposed model and six well-known RS models on the two public datasets. MAAMF-U uses only user attribute information to assist recommendation, and MAAMF-I uses only item category information. MAAMF-UI-S uses both user and item information fused through the self-attention mechanism, and MAAMF-UI-M uses both fused through the multi-head self-attention mechanism. The results of SVD and aSDAE show that if the auxiliary information contains little hidden information, or the deep learning model is not trained well, classic recommendation models may outperform deep learning-based ones. Comparing MAAMF-UI-S and MAAMF-UI-M with the other models shows that fusing the attention mechanism lets the model focus on the vital parts of the auxiliary information.

Table 5.

RMSE result of different models in the two shared datasets. Results of the state-of-the-art models are underlined, and the optimal result of our model is marked in bold.

Compared with the most advanced baseline, DUPIA, MAAMF-UI-S improves RMSE on ML-100k by 3.46%, whereas DUPIA improves on PHD by only 1.70% on the same dataset. On ML-1m, MAAMF-UI-M improves RMSE by 0.47% over DUPIA, whereas DUPIA improves on PHD by 0.31%. The comparison of MAAMF-U and MAAMF-I shows that different auxiliary information affects the results distinctly: in this study, recommendation assisted by item category information outperforms recommendation assisted by user attributes, with MAAMF-I improving RMSE on ML-100k by 1.24% over MAAMF-U.

Among the well-known recommendation algorithms, PHD and DUPIA, which use user and item auxiliary information simultaneously, follow the same design idea as MAAMF-UI-S and MAAMF-UI-M and perform strongly on the larger ML-1m dataset. This suggests that integrating multi-source information of users and items simultaneously and expanding the number of training samples are promising future research directions. That MAAMF-UI-S achieves a lower RMSE than MAAMF-UI-M on ML-100k may result from the relatively small scale of ML-100k, on which deep learning algorithms can be unstable. Comparing PHD, the best deep learning-based baseline, with MAAMF, the best model based on the neural attention mechanism, the RMSE values of MAAMF are 5.10% and 0.78% better on ML-100k and ML-1m, respectively.

In addition, we performed a one-tailed paired t-test on the results of the DUPIA and MAAMF-UI-M models under different training data percentages on the ML-1m dataset. The results of the two models differ significantly; the p-values are reported in Table 6. These results show that our model outperforms the state-of-the-art model.
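For reference, the statistic behind a one-tailed paired t-test can be computed as below. The RMSE values are hypothetical placeholders, not the paper's results; the sketch returns only the t statistic and degrees of freedom, and the p-value would then be read from the t distribution (for example, SciPy's `scipy.stats.ttest_rel` accepts an `alternative` argument for one-sided tests in recent versions).

```python
import math
import statistics


def paired_t_statistic(baseline, proposed):
    """t statistic for H1: mean(baseline - proposed) > 0 (proposed has lower RMSE)."""
    if len(baseline) != len(proposed) or len(baseline) < 2:
        raise ValueError("need at least two paired observations")
    diffs = [b - p for b, p in zip(baseline, proposed)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    return mean_diff / (sd_diff / math.sqrt(n)), n - 1


# Hypothetical RMSE values at four training-data percentages.
baseline_rmse = [0.90, 0.88, 0.87, 0.86]
proposed_rmse = [0.89, 0.86, 0.86, 0.84]
t, df = paired_t_statistic(baseline_rmse, proposed_rmse)
print(round(t, 2), df)
```

Pairing the two models at each training-data percentage removes the variation shared by both, so the test looks only at the per-setting differences.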

Table 6.

The p-value of the one-tailed paired t-test between the DUPIA model and the MAAMF-UI-M model.
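The p-values of Table 6 are not reproduced here. To illustrate the procedure only, the sketch below runs a one-tailed paired t-test in plain Python on hypothetical RMSE values: the lists `dupia_rmse` and `maamf_rmse` are placeholders, not the paper's results. The alternative hypothesis is that the baseline's RMSE exceeds the proposed model's, and the upper-tail p-value is obtained by integrating the Student's t density numerically with Simpson's rule. Where SciPy is available, `scipy.stats.ttest_rel(a, b, alternative="greater")` performs the same test.

```python
import math
import statistics


def t_upper_tail(t: float, df: int, steps: int = 2000) -> float:
    """P(T > t) for Student's t with df degrees of freedom.

    Uses symmetry, P(T > t) = 0.5 - integral_0^t pdf(x) dx, with the integral
    evaluated by Simpson's rule (steps must be even)."""
    coef = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))

    def pdf(x: float) -> float:
        return coef * (1 + x * x / df) ** (-(df + 1) / 2)

    h = t / steps
    total = pdf(0.0) + pdf(t)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)  # Simpson weights 4, 2, 4, ...
    return 0.5 - total * h / 3


def one_tailed_paired_t_test(baseline, proposed):
    """Test H1: mean(baseline - proposed) > 0, i.e. the proposed model has lower RMSE."""
    diffs = [b - p for b, p in zip(baseline, proposed)]
    n = len(diffs)
    t = statistics.mean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))
    return t, n - 1, t_upper_tail(t, n - 1)


# Hypothetical RMSE values at four training percentages (placeholders only).
dupia_rmse = [0.90, 0.88, 0.86, 0.85]
maamf_rmse = [0.89, 0.87, 0.86, 0.84]
t, df, p = one_tailed_paired_t_test(dupia_rmse, maamf_rmse)
print(f"t = {t:.3f}, df = {df}, one-tailed p = {p:.4f}")
```

The test is paired because both models are evaluated on the same training percentages, so the per-split differences, rather than the two raw samples, carry the comparison.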

The overall experimental results show that both user attribute information and video category information affect rating prediction. Mining latent factors on the user side and the item side simultaneously assists recommendation more effectively. In addition, different pieces of auxiliary information differ in importance, and paying more attention to the more important information improves the recommendation outcome. These findings support future research on a unified multi-source information fusion framework.

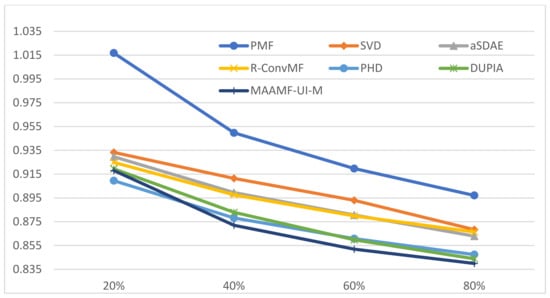

4.4.5. Comparison of Experimental Results under Different Percentages

Finally, we study the effect of the training data percentage on RMSE. The results are shown in Figure 6. The proposed MAAMF model achieves the best result at every training percentage except 20%. Apart from PMF and SVD, all models in the figure apply deep learning to recommendation. Models that incorporate deep learning outperform the traditional recommendation models, but the source of auxiliary information and the algorithm used to extract latent factors strongly affect the results. The figure also shows that, for each model, the RMSE decreases, and rating-prediction accuracy therefore increases, as the percentage of training data grows. The RMSE curves of aSDAE and R-ConvMF cross, as do those of PHD and MAAMF-UI-M, which indicates that the robustness of deep-learning-based recommendation models still needs to be improved.

Figure 6.

Comparison of the influence of different training data percentages of different models on RMSE results.
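The protocol behind Figure 6 can be sketched as follows, assuming the split is a uniform random sample of the observed rating triples (the text does not state how it was drawn). The rating data are hypothetical, and a global-mean predictor stands in for the compared models; `split_by_percentage` and `rmse` are illustrative helpers, not code from the paper.

```python
import math
import random


def split_by_percentage(ratings, train_pct, seed=42):
    """Randomly assign train_pct of the observed ratings to training, the rest to test."""
    shuffled = list(ratings)
    random.Random(seed).shuffle(shuffled)
    cut = round(train_pct * len(shuffled))
    return shuffled[:cut], shuffled[cut:]


def rmse(predicted, actual):
    """Root-mean-square error over paired predictions and true ratings."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    return math.sqrt(sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual))


# Hypothetical (user, item, rating) triples standing in for MovieLens data.
ratings = [(u, i, 1 + (u * 7 + i * 3) % 5) for u in range(10) for i in range(8)]
for pct in (0.2, 0.4, 0.6, 0.8):
    train, test = split_by_percentage(ratings, pct)
    mean = sum(r for _, _, r in train) / len(train)  # global-mean baseline predictor
    score = rmse([mean] * len(test), [r for _, _, r in test])
    print(f"{pct:.0%} training data: test RMSE = {score:.3f}")
```

Fixing the seed keeps each split reproducible, so different models can be compared on identical training and test sets at every percentage.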

In this experiment, we examined the influence of the regularization parameters λu and λv and of dropout on the results, and tracked the RMSE on the training, cross-validation, and test sets over different numbers of iterations. A comparison between the proposed model and well-known baseline models demonstrates the feasibility of MAAMF. The models are compared by their results under different training data percentages. PMF and SVD are classic collaborative filtering recommendation algorithms; aSDAE, R-ConvMF, and PHD are recommendation algorithms based on deep learning; and DUPIA and MAAMF are recommendation algorithms based on the neural attention mechanism.
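To show where λu and λv enter, the sketch below fits a plain probabilistic matrix factorization with stochastic gradient descent, assuming the textbook PMF objective of Salakhutdinov and Mnih (squared error plus Gaussian-prior penalties on user and item factors). It leaves out the autoencoder and attention components that MAAMF combines with the factorization, and the toy ratings, learning rate, and rank are hypothetical.

```python
import random


def predict(u, v):
    """Predicted rating as the inner product of user and item factors."""
    return sum(a * b for a, b in zip(u, v))


def pmf_objective(ratings, U, V, lambda_u, lambda_v):
    """PMF negative log-posterior up to constants:
    1/2 sum (r_ij - u_i.v_j)^2 + lambda_u/2 sum ||u_i||^2 + lambda_v/2 sum ||v_j||^2."""
    fit = 0.5 * sum((r - predict(U[i], V[j])) ** 2 for i, j, r in ratings)
    reg_u = 0.5 * lambda_u * sum(x * x for row in U for x in row)
    reg_v = 0.5 * lambda_v * sum(x * x for row in V for x in row)
    return fit + reg_u + reg_v


def train_pmf(ratings, n_users, n_items, k=4, lambda_u=0.1, lambda_v=0.1,
              lr=0.02, epochs=200, seed=0):
    """SGD on pmf_objective; as is common, the regularization gradient is
    applied at every observed rating (an approximation of the full gradient)."""
    rng = random.Random(seed)
    U = [[rng.gauss(0.0, 0.1) for _ in range(k)] for _ in range(n_users)]
    V = [[rng.gauss(0.0, 0.1) for _ in range(k)] for _ in range(n_items)]
    data = list(ratings)
    for _ in range(epochs):
        rng.shuffle(data)
        for i, j, r in data:
            err = r - predict(U[i], V[j])
            for f in range(k):
                u_f, v_f = U[i][f], V[j][f]
                U[i][f] += lr * (err * v_f - lambda_u * u_f)
                V[j][f] += lr * (err * u_f - lambda_v * v_f)
    return U, V


# Hypothetical toy (user, item, rating) triples, not MovieLens data.
toy = [(0, 0, 5), (0, 1, 3), (0, 2, 4), (1, 0, 4), (1, 2, 5),
       (2, 1, 2), (2, 2, 1), (2, 3, 5), (3, 0, 1), (3, 3, 4)]
U0, V0 = train_pmf(toy, 4, 4, epochs=0)  # initial factors only
U, V = train_pmf(toy, 4, 4)
print(pmf_objective(toy, U0, V0, 0.1, 0.1), "->", pmf_objective(toy, U, V, 0.1, 0.1))
```

Larger values of λu and λv shrink the factors toward zero, trading training fit for robustness on sparse data, which is the trade-off the parameter study above explores.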

The overall experimental results and the comparisons under different training percentages show how recommendation models have steadily evolved: from using rating data alone, to combining rating data with auxiliary information, to focusing on the most important parts of that auxiliary information. The experiments show that side information improves the accuracy of rating prediction and that different kinds of side information affect the predictions to different degrees. Making full and effective use of multi-source heterogeneous data therefore better supports recommendation. In addition, the success of MAAMF demonstrates the importance of user attribute information and video category information in RSs, which previous research has ignored.

5. Conclusions

In this study, we proposed an autoencoder model fused with a multi-head self-attention mechanism that mines user and item auxiliary information and combines it with probabilistic matrix factorization to achieve rating prediction. The autoencoder with the dual multi-head self-attention mechanism effectively extracts the latent factors of the users' basic attribute information and the item category information, and attends to the vital information on both the user side and the item side, thereby improving the performance of deep-learning-based recommendation. Experimental results on two public datasets, ML-100k and ML-1m, demonstrate the effectiveness of the proposed MAAMF model. Exploring more latent factors in auxiliary information is a vital way to improve recommendation quality. Furthermore, the temporal dimension of auxiliary information is another important factor affecting recommendation; for example, most people prefer to pay attention to the latest movies. How to better utilize temporal information is the focus of future research. We have also noticed that different types of auxiliary information influence one another. Future studies should therefore examine how to effectively integrate the features of each type of auxiliary information in RSs.
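The attention component summarized above can be illustrated with a minimal multi-head self-attention over a small set of feature embeddings, following the scaled dot-product formulation of Vaswani et al. This is a sketch under stated assumptions, not the MAAMF architecture: the three "fields" (for example, gender, age, and occupation embeddings of one user) and all projection weights are random placeholders, whereas a trained model learns them, and the surrounding autoencoder is omitted.

```python
import math
import random


def matmul(a, b):
    """Multiply an m x n matrix by an n x p matrix (lists of rows)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(s - m) for s in row]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows, cols, rng):
    """Hypothetical stand-in for learned weights or embeddings."""
    scale = 1.0 / math.sqrt(rows)
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def multi_head_self_attention(x, n_heads, rng):
    """Scaled dot-product self-attention over the rows of x (n fields x d dims).

    Returns the n x d output and one n x n attention matrix per head."""
    n, d = len(x), len(x[0])
    if d % n_heads:
        raise ValueError("embedding size must be divisible by n_heads")
    d_k = d // n_heads
    head_outputs, attention_maps = [], []
    for _ in range(n_heads):
        q = matmul(x, random_matrix(d, d_k, rng))  # queries
        k = matmul(x, random_matrix(d, d_k, rng))  # keys
        v = matmul(x, random_matrix(d, d_k, rng))  # values
        scores = [[sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d_k) for kj in k] for qi in q]
        weights = [softmax(row) for row in scores]  # how much each field attends to the others
        attention_maps.append(weights)
        head_outputs.append(matmul(weights, v))
    # Concatenate the heads field by field, then apply the output projection.
    concat = [[val for head in head_outputs for val in head[i]] for i in range(n)]
    return matmul(concat, random_matrix(d, d, rng)), attention_maps


rng = random.Random(0)
fields = random_matrix(3, 8, rng)  # hypothetical embeddings of three attribute fields
output, maps = multi_head_self_attention(fields, n_heads=2, rng=rng)
print(len(output), len(output[0]), [round(sum(row), 6) for row in maps[0]])
```

Each row of an attention matrix is a probability distribution over the fields, which is how such a mechanism can assign more weight to the more informative pieces of auxiliary information.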

Author Contributions

Conceptualization, Q.L., C.D.; methodology, Q.L.; software, C.D., K.L.; validation, C.D., J.S. and Q.L.; formal analysis, J.S., Q.L.; investigation, C.D.; resources, J.S., K.L.; data curation, J.S., K.L.; writing—original draft preparation, C.D.; writing—review and editing, Q.L.; visualization, C.D.; supervision, J.S.; project administration, Q.L.; funding acquisition, J.S., Q.L. All authors have read and agreed to the published version of the manuscript.

Funding

This research was financially supported by the National Natural Science Foundation of China (61807012, 62077021, 61807013), the Humanity and Social Science Youth Foundation of the Ministry of Education of China (20YJC880083), the Strategic Research Projects of the Ministry of Education of China (2020JYKX04), and the Fundamental Research Funds for the Central Universities (CCNU20QN027, CCNU20ZN007).

Institutional Review Board Statement

The presented research was conducted according to the code of ethics of the University of Minnesota.

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

Data of our study are available upon request.

Conflicts of Interest

The authors declare that there is no conflict of interest regarding the publication of this paper.

References

- Ban, Y.; Lee, K. How the Multiplicity of Suggested Information Affects the Behavior of a User in a Recommender System. Electronics 2021, 10, 741.

- Krichene, W.; Rendle, S. On Sampled Metrics for Item Recommendation. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Virtual Event, CA, USA, 6–10 July 2020; ACM: New York, NY, USA, 2020; pp. 1748–1757.

- Lin, Y.; Feng, S.; Zeng, W.; Lin, F.; Liu, Y.; Wu, P. Adaptive course recommendation in MOOCs. Knowl. Based Syst. 2021, 224, 107085.

- Jin, J.; Qin, J.; Fang, Y.; Du, K.; Zhang, W.; Yu, Y.; Zhang, Z.; Smola, A.J. An Efficient Neighborhood-based Interaction Model for Recommendation on Heterogeneous Graph. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Virtual Event, CA, USA, 6–10 July 2020; ACM: New York, NY, USA, 2020; pp. 75–84.

- Paul, D.J.; Kundu, S. A survey of music recommendation systems with a proposed music recommendation system. In Emerging Technology in Modelling and Graphics. Advances in Intelligent Systems and Computing; Springer: New York, NY, USA, 2020; Volume 937.

- Li, Z.; Liu, H.; Zhang, Z.; Liu, T.; Xiong, N.N. Learning Knowledge Graph Embedding with Heterogeneous Relation Attention Networks. IEEE Trans. Neural Netw. Learn. Syst. 2021, 10, 1–13.

- Warren, J. Big Data: Principles and Best Practices of Scalable Realtime Data Systems; Manning Publications: Shelter Island, NY, USA, 2015.

- Adomavicius, G.; Tuzhilin, A. Toward the next generation of recommender systems: A survey of the state-of-the-art and possible extensions. IEEE Trans. Knowl. Data Eng. 2005, 17, 734–749.

- Li, D.; Liu, H.; Zhang, Z.; Lin, K.; Fang, S.; Li, Z.; Xiong, N.N. CARM: Confidence-aware recommender model via review representation learning and historical rating behavior in the online platforms. Neurocomputing 2021, 455, 283–296.

- Liu, T.; Li, Y.; Liu, H.; Zhang, Z.; Liu, S. RISIR: Rapid Infrared Spectral Imaging Restoration Model for Industrial Material Detection in Intelligent Video Systems. IEEE Trans. Ind. Inf. 2019, 1.

- Liu, T.; Liu, H.; Li, Y.; Zhang, Z.; Liu, S. Efficient Blind Signal Reconstruction with Wavelet Transforms Regularization for Educational Robot Infrared Vision Sensing. IEEE/ASME Trans. Mechatron 2019, 24, 384–394.

- Sun, Z.; Guo, Q.; Yang, J.; Fang, H.; Guo, G.; Zhang, J.; Burke, R. Research commentary on recommendations with side information: A survey and research directions. Electron. Commer. Res. Appl. 2019, 37, 100879.

- Ravanifard, R.; Buntine, W.; Mirzaei, A. Recommending content using side information. Appl. Intell. 2021, 51, 3353–3374.

- Liu, T.; Wang, Z.; Tang, J.; Yang, S.; Huang, G.Y.; Liu, Z. Recommender systems with heterogeneous side information. In Proceedings of the World Wide Web Conference (WWW ’19), San Francisco, CA, USA, 13–17 May 2019; pp. 3027–3033.

- Zhang, S.; Yao, L.; Sun, A.; Tay, Y. Deep Learning Based recommender system: A survey and new perspectives. ACM Comput. Surv. 2019, 52, 1–38.

- Batmaz, Z.; Yurekli, A.; Bilge, A.; Kaleli, C. A review on deep learning for recommender systems: Challenges and remedies. Artif. Intell. Rev. 2019, 52, 1–37.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Zhang, G.; Liu, Y.; Jin, X. A survey of autoencoder-based recommender systems. Front. Comput. Sci. 2020, 14, 430–450.

- Cheng, H.T.; Koc, L.; Harmsen, J.; Shaked, T.; Chandra, T.; Aradhye, H.; Anderson, G.; Corrado, G.; Chai, W.; Ispir, M.; et al. Wide & Deep Learning for Recommender Systems. In Proceedings of the 1st Workshop on Deep Learning for Recommender Systems, Boston, MA, USA, 15 September 2016; ACM: New York, NY, USA, 2016; pp. 7–10.

- Sedhain, S.; Menon, A.K.; Sanner, S.; Xie, L. AutoRec: Autoencoders meet collaborative filtering. In Proceedings of the 24th international conference on World Wide Web, Florence, Italy, 18–22 May 2015; pp. 111–112.

- Chen, S.; Wu, M. Attention collaborative autoencoder for explicit recommender systems. Electronics 2020, 9, 1716.

- Kang, W.C.; McAuley, J. Self-attentive sequential recommendation. In Proceedings of the 2018 IEEE International Conference on Data Mining (ICDM), Singapore, 17–20 November 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 197–206.

- Tal, O.; Liu, Y.; Huang, J.; Yu, X.; Aljbawi, B. Neural Attention Frameworks for Explainable Recommendation. IEEE Trans. Knowl. Data Eng. 2021, 33, 2137–2150.

- Koren, Y.; Bell, R.; Volinsky, C. Matrix factorization techniques for recommender systems. Computer 2009, 42, 30–37.

- Sarwar, B.; Karypis, G.; Konstan, J.; Riedl, J. Application of dimensionality reduction in recommender system—A case study. In Proceedings of the WebKDD-2000 Workshop, Boston, MA, USA, 20 August 2000.

- Lee, D.D.; Seung, H.S. Learning the parts of objects by non-negative matrix factorization. Nature 1999, 401, 788–791.

- Nikolakopoulos, A.N.; Kalantzis, V.; Gallopoulos, E.; Garofalakis, J.D. Eigenrec: Generalizing puresvd for effective and efficient top-n recommendations. Knowl. Inf. Syst. 2018, 58, 59–81.

- Koutrika, G. Modern recommender systems: From computing matrices to thinking with neurons. In Proceedings of the International Conference on Management of Data, Houston, TX, USA, 10–15 June 2018; pp. 1651–1654.

- Shen, X.; Yi, B.; Liu, H.; Zhang, W.; Zhang, Z.; Liu, S.; Xiong, N. Deep variational matrix factorization with knowledge embedding for recommendation system. IEEE Trans. Knowl. Data Eng. 2021, 33, 1906–1918.

- Yi, B.; Shen, X.; Liu, H.; Zhang, Z.; Zhang, W.; Liu, S.; Xiong, N. Deep matrix factorization with implicit feedback embedding for recommendation system. IEEE Trans. Knowl. Data Eng. 2019, 15, 4591–4601.

- Liu, T.; Liu, H.; Li, Y.; Chen, Z.; Zhang, Z.; Liu, S. Flexible FTIR Spectral Imaging Enhancement for Industrial Robot Infrared Vision Sensing. IEEE Trans. Ind. Inf. 2020, 16, 544–554.

- Kim, D.; Park, C.; Oh, J.; Lee, S.; Yu, H. Convolutional matrix factorization for document context-aware recommendation. In Proceedings of the 10th ACM Conference on Recommender Systems, Boston, MA, USA, 15–19 September 2016; ACM: New York, NY, USA, 2016; pp. 233–240.

- Zheng, L.; Noroozi, V.; Yu, P.S. Joint deep modeling of users and items using reviews for recommendation. In Proceedings of the Tenth ACM International Conference on Web Search and Data Mining, Cambridge, UK, 6–10 February 2017; ACM: New York, NY, USA, 2017; pp. 425–434.

- Seo, S.; Huang, J.; Yang, H.; Liu, Y. Interpretable convolutional neural networks with dual local and global attention for review rating prediction. In Proceedings of the Eleventh ACM Conference on Recommender Systems, Como, Italy, 27–31 August 2017; ACM: New York, NY, USA, 2017; pp. 297–305.

- Wu, L.; Quan, C.; Li, C.; Wang, Q.; Zheng, B. A context-aware user-item representation learning for item recommendation. IEEE Trans. Knowl. Data Eng. 2017, 37, 1–29.

- Chin, J.Y.; Zhao, K.; Joty, S.; Cong, G. ANR: Aspect-based neural recommender. In Proceedings of the 27th ACM International Conference on Information and Knowledge Management, CIKM, Torino, Italy, 22–26 October 2018; ACM: New York, NY, USA, 2018; pp. 147–156.

- Liu, D.; Li, J.; Du, B.; Chang, J.; Gao, R. DAML: Dual Attention Mutual Learning between Ratings and Reviews for Item Recommendation. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Anchorage, AK, USA, 4–8 August 2019; ACM: New York, NY, USA, 2019; pp. 344–352.

- Chen, C.; Zhang, M.; Liu, Y.; Ma, S. Neural attentional rating regression with review-level explanations. In Proceedings of the 2018 World Wide Web Conference, Lyon, France, 23–27 April 2018; pp. 1583–1592.

- Lu, Y.; Dong, R.; Smyth, B. Coevolutionary recommendation model: Mutual learning between ratings and reviews. In Proceedings of the 2018 World Wide Web Conference, Lyon, France, 23–27 April 2018; pp. 773–782.

- Tay, Y.; Luu, A.T.; Hui, S.C. Multi-pointer co-attention networks for recommendation. In Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, London, UK, 19–23 August 2018; ACM: New York, NY, USA, 2018; pp. 2309–2318.

- Huang, X.; Liao, G.; Xiong, N.; Vasilakos, A.; Lan, T. A survey of context-aware recommendation schemes in event-based social networks. Electronics 2020, 9, 1583.

- Guo, G.; Zhang, J.; Yorke-Smith, N. TrustSVD: Collaborative filtering with both the explicit and implicit influence of user trust and of item ratings. In Proceedings of the Twenty-Ninth AAAI Conference on Artificial Intelligence, Austin, TX, USA, 25–30 January 2015; pp. 123–129.

- Zhu, Z.; Wang, J.; Caverlee, J. Improving top-K recommendation via joint collaborative autoencoders. In Proceedings of the World Wide Web Conference (WWW ’19), San Francisco, CA, USA, 13–17 May 2019; pp. 3482–3483.

- Park, C.; Kim, D.H.; Oh, J.; Yu, H. TRecSo: Enhancing top-k recommendation with social information. In Proceedings of the WWW (Companion Volume), Montréal, QC, Canada, 11–15 April 2016; pp. 89–90.

- Chen, C.; Zheng, X.; Wang, Y.; Hong, F.; Lin, Z. Context-aware collaborative topic regression with social matrix factorization for recommender systems. In Proceedings of the Twenty-Eighth AAAI Conference on Artificial Intelligence (AAAI-14), Québec City, QC, Canada, 27–31 July 2014; AAAI Press: Palo Alto, CA, USA, 2014; pp. 9–15.

- Anastasiu, D.C.; Christakopoulou, E.; Smith, S.; Sharma, M.; Karypis, G. Big Data and Recommender Systems. 2016. Available online: https://conservancy.umn.edu/handle/11299/215998 (accessed on 2 June 2021).

- Sardianos, C.; Tsirakis, N.; Varlamis, I. A Survey on the Scalability of Recommender Systems for Social Networks; Springer: Cham, Switzerland, 2018.

- Eirinaki, M.; Gao, J.; Varlamis, I.; Tserpes, K. Recommender Systems for Large-Scale Social Networks: A review of challenges and solutions. Future Gener. Comput. Syst. FGCS 2018, 78, 413–418.

- Zhu, N.; Cao, J.; Lu, X.; Gu, Q. Leveraging pointwise prediction with learning to rank for top-N recommendation. World Wide Web 2021, 24, 375–396.

- Liu, H.; Fang, S.; Zhang, Z.; Li, D.; Lin, K.; Wang, J. MFDNet: Collaborative Poses Perception and Matrix Fisher Distribution for Head Pose Estimation. IEEE Trans. Multimed. 2021, 1.

- Zhao, Z.; Hong, L.; Wei, L.; Chen, J.; Nath, A.; Andrews, S.; Kumthekar, A.; Sathiamoorthy, M.; Yi, X.; Chi, E. Recommending what video to watch next: A multitask ranking system. In Proceedings of the 13th ACM Conference on Recommender Systems, Copenhagen, Denmark, 16–20 September 2019; ACM: New York, NY, USA, 2019; pp. 43–51.

- Chen, L.; Yuan, Y.; Yang, J.; Zahir, A. Improving the prediction quality in memory-based collaborative filtering using categorical features. Electronics 2021, 10, 214.

- Zhang, Z.; Li, Z.; Liu, H.; Xiong, N.N. Multi-scale Dynamic Convolutional Network for Knowledge Graph Embedding. IEEE Trans. Knowl. Data Eng. 2021, 1–10.

- Li, Z.; Liu, H.; Zhang, Z.; Liu, T.; Shu, J. Recalibration Convolutional Networks for Learning Interaction Knowledge Graph Embedding. Neurocomputing 2021, 427, 118–130.

- Vaswani, A.; Shazeer, N.; Parmar, N.; et al. Attention is all you need. In Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 5998–6008.

- Harper, F.M.; Konstan, J.A. The MovieLens datasets: History and context. ACM Trans. Interact. Intell. Syst. 2016, 5, 1–19.

- Dong, X.; Yu, L.; Wu, Z. A hybrid collaborative filtering model with deep structure for recommender systems. In AAAI; AAAI Press: San Francisco, CA, USA, 2017; pp. 1309–1315.

- Kim, D.; Park, C.; Oh, J.; Yu, H. Deep hybrid recommender systems via exploiting document context and statistics of items. Inf. Sci. 2017, 417, 72–87.

- Liu, J.; Wang, D.; Ding, Y. PHD: A probabilistic model of hybrid deep collaborative filtering for recommender systems. In Proceedings of the Asian Conference on Machine Learning, Seoul, Korea, 15–17 November 2017; PMLR: Seoul, Korea; Volume 77, pp. 224–239.

- Xz, A.; Hl, A.; Xc, B.; Zhong, J.; Wang, D. A novel hybrid deep recommendation system to differentiate user’s preference and item’s attractiveness. Inf. Sci. 2020, 519, 306–316.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).