Abstract

Network analytic methods that are ubiquitous in other areas, such as systems neuroscience, have recently been used to test network theories in psychology, including intelligence research. The network or mutualism theory of intelligence proposes that the statistical associations among cognitive abilities (e.g., specific abilities such as vocabulary or memory) stem from causal relations among them throughout development. In this study, we used network models (specifically LASSO) of cognitive abilities and brain structural covariance (grey and white matter) to simultaneously model brain–behavior relationships essential for general intelligence in a large (behavioral, N = 805; cortical volume, N = 246; fractional anisotropy, N = 165) developmental (ages 5–18) cohort of struggling learners (CALM). We found that mostly positive, small partial correlations pervade our cognitive, neural, and multilayer networks. Moreover, using community detection (Walktrap algorithm) and calculating node centrality (absolute strength and bridge strength), we found convergent evidence that subsets of both cognitive and neural nodes play an intermediary role ‘between’ brain and behavior. We discuss implications and possible avenues for future studies.

1. Introduction

General intelligence, or g (Spearman 1904), captures cognitive ability across a variety of domains and predicts a wide range of important life outcomes, such as educational and occupational achievement (Hegelund et al. 2018), and mortality (Calvin et al. 2011). In recent years, methods from network analysis have shed new light on both the cognitive abilities that make up general intelligence (van der Maas et al. 2017), as well as the brain systems purported to support these abilities (Girn et al. 2019; Seidlitz et al. 2018). For instance, the mutualism model (van der Maas et al. 2006), inspired by ecosystem models of prey–predator relations, states that the positive manifold (Spearman 1904), rather than existing in final form since birth, emerges gradually from the positive interactions among different cognitive abilities (e.g., reasoning and vocabulary) over time (see Kievit et al. 2017; Kievit et al. 2019). Hence, the positive manifold (and, thus, general intelligence) can arise even from originally weakly correlated cognitive faculties. Therefore, according to the mutualism model (also see van der Maas et al. 2017), general intelligence can be conceptualized as a complex dynamical system. This paradigm allows us to evaluate general intelligence using the statistical tools of network science (Barabási 2016) to estimate the relationships among elements of the system(s) under investigation (Fried 2020; Fried and Robinaugh 2020).

For example, innovations in network psychometrics (Epskamp et al. 2018) have led to a rapid increase in the popularity of behavioral network analysis, especially in psychopathology (Borsboom 2017; Robinaugh et al. 2019). In this framework, psychological constructs (e.g., mental disorders such as depression) are theorized as complex systems, whereby relationships (edges) between nodes (e.g., item responses on a questionnaire) are estimated using weighted partial correlation networks. The use of partial correlations enables the determination of conditional dependencies among variables, after controlling for the associations with every other node in the network (Epskamp et al. 2018).

This approach has also recently been used to analyze cross-sectional data on general intelligence. For instance, both Kan et al. (2019) (N = 1800; age range: 16–89 years) as well as Schmank et al. (2019) (N = 1112; age range: 12–90 years) used a network model approach to analyze data from the WAIS-IV cognitive battery (Wechsler 2008). Model fit to the pattern of intelligence scores was more consistent with the network model than a latent variable approach (g factor). Furthermore, Mareva and Holmes (2020), in two separate samples, one the same group of struggling learners as studied here (CALM) but with fewer participants (N = 350), no neuroimaging data, and including tasks not analyzed in this study (e.g., motor speed and tower achievement), observed links between cognitive abilities and learning, especially between mathematics skills and more “domain-general” faculties such as backward digit span and matrix reasoning. Although mutualism is an inherently dynamical theory, therefore requiring longitudinal data to adequately assess, these results are compatible with a cross-sectional interpretation of mutualism’s assumption of (mostly) positive associations among cognitive abilities. Moreover, it must be noted that latent variable (Kline 2015) and network models should not solely be compared using goodness-of-fit indices, but should instead be judged based on “theory compatibility” (see Schmank et al. 2021) and the proposed “data-generating mechanism” (van Bork et al. 2019).

In addition to psychology, network analysis methods have been widely used in neuroscience to describe the relations among brain regions, ushering in the field of network neuroscience (Bassett and Sporns 2017; Fornito et al. 2016). Rather than focusing on individual brain regions in isolation, the brain is conceived (similarly to network psychometrics and mutualism) as a complex system of interconnected networks that facilitate behavioral functions, ranging from sensorimotor control to learning. In this light, several influential studies have revealed pervasive properties of brain networks, such as small-world topology (Bassett and Bullmore 2006, 2017), modularity (Meunier et al. 2010; Sporns and Betzel 2016), and hubs (Sporns et al. 2007; van den Heuvel and Sporns 2013), which are nodes (e.g., individual brain regions) that share many connections with other nodes within the brain. Together, these organizational properties of brains enable an economical trade-off between minimizing wiring cost and maximizing efficiency (e.g., information transfer) that enables adaptive behavior (Bullmore and Sporns 2012).

Although network approaches have provided unique insights within cognitive neuroscience as well as psychology (e.g., psychopathology and intelligence), few studies have integrated them into a so-called multilayer network paradigm (Bianconi 2018), which models the relationships among variables simultaneously across time (e.g., days, weeks, months, and years) and/or levels of organization (e.g., behavior and brain variables). Two studies have recently pushed this boundary. First, Hilland et al. (2020) examined the relations between brain structure (cortical thickness and volume) and depression symptoms. They found (via a partial correlation network model) that certain clusters of brain regions (cingulate, fusiform gyrus, hippocampus, and insula) were conditionally dependent with a subset of depression symptoms (crying, irritability, and sadness). Second, in 172 male autistic participants (ages 10–21 years), Bathelt et al. (2020) used “network-based regression” to estimate the relationship between the unique variance of both the autism symptom network and functional brain connectivity (resting-state fMRI). Moreover, they applied Bayesian network analysis to create a directed acyclic graph between autism symptoms’ sub-scores and their neural correlates. They found that communication and social behavior were predicted by their respective resting-state fMRI neural correlates (termed “Comm Brain” and “Social Brain”, respectively).

This study builds on these findings and the recent studies mentioned above, by combining a network psychometrics approach to understand individual differences in cognitive ability (general intelligence) with brain structural covariance networks derived from grey matter cortical volume and white matter fractional anisotropy. In doing so, we created a network of networks, which differs from multiplex (same nodes, different edge types across layers) and multi-slice (same nodes and edge types over time such as in fMRI time-series data) networks (see Bianconi 2018, p. 81, Fig. 4.1). The advantages of applying this approach are three-fold and complementary. First, it places the brain and behavior, which often do not map onto each other in a simple and reductionistic one-to-one fashion, into the same analytical paradigm (network analysis using partial correlations). This allows for simultaneous estimations and easier visualizations of potential causal links between cognition and structural brain properties, which to our knowledge, has only been performed in a similar way in two other studies, one involving depression (Hilland et al. 2020), the other in autism (Bathelt et al. 2020). Second, it enables the use of centrality estimates (i.e., strength) and community detection algorithms (i.e., Walktrap) to tease apart major clusters of cognitive abilities and brain regions, which could help to pinpoint potential intervention targets (e.g., using cognitive training and/or transcranial magnetic stimulation). Lastly, it aids in establishing a coherent framework for theory building, which has been lacking in both the neuroscience (Levenstein et al. 2020) and psychological (Fried 2020) literature. This is accomplished by treating both the brain (algorithmic) and behavior (computational) as equally important levels of analysis to study (Marr and Poggio 1976), and attempting to more directly translate findings from one level to the other. 
Ultimately, the hope is that relations between brain–behavior nodes can help identify candidate targets (e.g., nodes that ‘bridge’ the brain and cognition) for future interventions in developmental samples of struggling learners, in particular individuals considered ‘low-performing’ on cognitive ability tasks (e.g., students struggling to learn in school).

2. Materials and Methods

2.1. Participants

The present cross-sectional sample (behavioral, N = 805; cortical volume, N = 246; fractional anisotropy, N = 165; age range: 5 to 18 years) was obtained from the Centre for Attention, Learning and Memory (CALM) located in Cambridge, UK (Holmes et al. 2019). This developmental cohort consists of children and adolescents recruited by referral for perceived difficulties in attention, memory, language, reading, and/or mathematics. A formal diagnosis was neither required nor an exclusion criterion. Exclusion criteria included any known significant and uncorrected problems in vision or hearing, and/or being a non-native English speaker.

Cognitive data were obtained on a one-to-one basis by an examiner in a designated child-friendly testing room. The tasks analyzed in this study comprised a comprehensive array of standardized assessments of cognitive ability, including crystallized intelligence (peabody picture vocabulary test, spelling, single-word reading, and numerical operations), fluid intelligence (matrix reasoning), and working memory (forward and backward digit recall, Mr. X, dot matrix, and following instructions). See Table 1 for task descriptions, relevant citations, and summary statistics (Note: from raw cognitive task scores).

Table 1.

List, descriptions, and summary statistics (mean, standard deviation, range, and percentage of missing data) of cognitive assessments used in this study from the CALM sample. Note, task descriptions (except following instructions) are taken directly or paraphrased from Simpson-Kent et al. (2020).

Participants were allotted regular breaks throughout each session. When necessary, testing was split into two separate sessions for participants who did not complete the assessments in a single sitting. A subset of participants also underwent MRI scanning (see below for details). It should be noted that, when compared to age-matched controls, CALM sample participants tend to score lower than their peers. For example, a recent study (Simpson-Kent et al. 2020) compared the CALM cohort with the NKI-Rockland Sample (see Nooner et al. 2012) to assess how cognition and its white matter correlates (fractional anisotropy) differed from childhood to adolescence. In terms of cognitive performance, CALM reliably scored lower than the NKI-Rockland Sample, a ‘typically’ developing cohort, on tasks of crystallized and fluid intelligence (see Level I of Figure 2 from Simpson-Kent et al. 2020). For more information about CALM and its procedures, see http://calm.mrc-cbu.cam.ac.uk/ (accessed on 8 June 2021).

2.2. Structural Neuroimaging: Cortical Volume (CV) and Fractional Anisotropy (FA)

CALM neuroimaging data were obtained at the MRC Cognition and Brain Sciences Unit, Cambridge, UK. Scans were acquired on a Siemens 3 T Tim Trio system (Siemens Healthcare, Erlangen, Germany) via a 32-channel quadrature head coil. T1-weighted volume scans were acquired using a whole brain coverage 3D magnetization-prepared rapid acquisition gradient echo (MPRAGE) sequence, with 1 mm isotropic image resolution. The following parameters were used: Repetition time (TR) = 2250 ms; Echo time (TE) = 3.02 ms; Inversion time (TI) = 900 ms; Flip angle = 9 degrees; Voxel dimensions = 1 mm isotropic; GRAPPA acceleration factor = 2. Diffusion-Weighted Images (DWI) were acquired using a Diffusion Tensor Imaging (DTI) sequence with 64 diffusion gradient directions, with a b-value of 1000 s/mm², plus one image acquired with a b-value of 0. Relevant parameters include: TR = 8500 ms, TE = 90 ms, and voxel dimensions = 2 mm isotropic.

We undertook several procedures to ensure adequate MRI data quality and minimize potential biases due to subject movement. All children in CALM were trained to lie still inside a realistic mock scanner prior to their scan. All T1-weighted images and FA maps were examined by an expert to remove low-quality scans. Moreover, only data with a maximum between-volume displacement below 3 mm were included in the analyses.

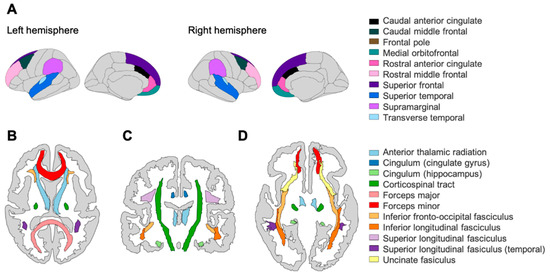

As our grey matter metric, we use region-based cortical volume (CV in mm³, N = 246, averaged across contralateral homologues), based on the Desikan–Killiany atlas (Desikan et al. 2006) and defined as the volume of grey matter between the outer edge of cortical grey matter and the boundary with subcortical white matter (Fischl and Dale 2000). Tissue classification and anatomical labelling were performed on the basis of the T1-weighted scan using FreeSurfer v5.3.0 software, which is documented and freely available for download online (http://surfer.nmr.mgh.harvard.edu/, accessed on 8 June 2021). The technical details of these procedures are described in prior publications (Dale et al. 1999; Fischl et al. 1999, 2002). FreeSurfer morphology output statistics were computed for each ROI, and also included cortical thickness and surface area (see Supplementary Materials Figures S5 and S6 for analyses involving these two metrics). Based on a recent meta-analysis on functional and structural correlates of intelligence (Basten et al. 2015), as well as a previous longitudinal analysis of the UK Biobank sample (see Kievit et al. 2018), we included a subset of 10 cortical volume regions in this study: caudal anterior cingulate (CAC), caudal middle frontal gyrus (CMF), frontal pole (FP), medial orbitofrontal cortex (MOF), rostral anterior cingulate gyrus (RAC), rostral middle frontal gyrus (RMF), superior frontal gyrus (SFG), superior temporal gyrus (STG), supramarginal gyrus (SMG), and transverse temporal gyrus (TTG).

From a subset of our neuroimaging data (see Simpson-Kent et al. 2020), we also calculated fractional anisotropy (FA, N = 165), a proxy for white matter integrity (Wandell 2016). We included 10 regions using the Johns Hopkins University DTI-based white matter tractography atlas (see Hua et al. 2008): anterior thalamic radiations (ATR), corticospinal tract (CST), cingulate gyrus (CING), cingulum (hippocampus) (CINGh), forceps major (FMaj), forceps minor (FMin), inferior fronto-occipital fasciculus (IFOF), inferior longitudinal fasciculus (ILF), superior longitudinal fasciculus (SLF), and uncinate fasciculus (UNC).

All steps to compute regional CV estimates and FA maps were implemented using NiPyPe v0.13.0 (see https://nipype.readthedocs.io/en/latest/, accessed on 8 June 2021). Diffusion-weighted images were pre-processed to create a brain mask based on the b0-weighted image (FSL BET; Smith 2002) and to correct for movement and eddy current-induced distortions (eddy; Graham et al. 2016). The diffusion tensor model was then fitted, and fractional anisotropy (FA) maps were calculated using dtifit. Images with a between-image displacement > 3 mm were then excluded from subsequent analysis steps. These steps were completed using FSL v5.0.9. To extract FA values for major white matter tracts, FA images were registered to the FMRIB58 FA template in MNI space using a sequence of rigid, affine, and symmetric diffeomorphic image registration (SyN). This was implemented in ANTs v1.9 (Avants et al. 2008). For all participants, visual inspection indicated good image registration. Binary masks from a probabilistic white matter atlas (thresholded at > 50% probability) in the same space were applied to extract FA values.
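To make the FA metric concrete, the following minimal Python sketch computes fractional anisotropy from the three eigenvalues of a fitted diffusion tensor, using the standard textbook formula. It is an illustration only and not the dtifit implementation; the function name `fractional_anisotropy` and the example eigenvalues are hypothetical.

```python
from math import sqrt


def fractional_anisotropy(l1, l2, l3):
    """Standard FA formula from the three diffusion tensor eigenvalues."""
    denominator = l1 * l1 + l2 * l2 + l3 * l3
    if denominator == 0:
        # No diffusion signal at all: treat the voxel as isotropic.
        return 0.0
    numerator = (l1 - l2) ** 2 + (l2 - l3) ** 2 + (l3 - l1) ** 2
    return sqrt(0.5 * numerator / denominator)


# Illustrative eigenvalues only (not values from the CALM data).
fa_isotropic = fractional_anisotropy(1.0, 1.0, 1.0)  # equal diffusion in all directions
fa_single_axis = fractional_anisotropy(1.0, 0.0, 0.0)  # diffusion along one axis only
```

FA therefore ranges from 0 (fully isotropic diffusion) to 1 (diffusion restricted to a single axis), which is why it is commonly read as a proxy for white matter organization.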

We used these region-based measures to study brain structural covariance (Alexander-Bloch et al. 2013), which has been used in cross-sectional and longitudinal designs of cognitive ability in childhood and adolescence (e.g., Solé-Casals et al. 2019; see Kievit and Simpson-Kent 2021 for a recent review of longitudinal studies). Emerging theoretical proposals emphasize the role of networks of brain areas in producing intelligent behavior (e.g., Parieto-Frontal Integration Theory (P-FIT), Jung and Haier 2007; and the Network Neuroscience Theory of Human Intelligence, Barbey 2018) rather than individual regions-of-interest (ROIs) in isolation (e.g., primarily the prefrontal cortex). As stated above, we selected 10 grey matter and 10 white matter ROIs based upon combined evidence from a recent meta-analysis (Basten et al. 2015) on associations between functional and structural ROIs and cognitive ability, which further extended the P-FIT theory, and from more recent work performed in two large cohorts. The first was a longitudinal analysis of the UK Biobank sample (grey matter, Kievit et al. 2018). The second used the same (cross-sectional) developmental cohort as that studied here, although with a smaller behavioral sample size (cognitive data, N = 551; white matter, N = 165, same fractional anisotropy data as the present study; no grey matter data used; see Simpson-Kent et al. 2020). See Figure 1 for illustrations of ROIs analyzed in this study.

Figure 1.

(A) Grey matter ROIs based on the Desikan–Killiany atlas (cortical volume, N = 246) in the left and right hemispheres. White matter ROIs based on the Johns Hopkins University atlas (fractional anisotropy, N = 165) in (B) transverse plane (superior), (C) coronal plane, and (D) transverse plane (inferior). Note that the frontal pole is not visible in these planes.

2.3. Network Estimation Methods

All statistical analyses and plots were completed using R (R Core Team 2020) version 3.6.3 (“Holding the Windsock”). Network estimation was performed using the packages bootnet (version 1.4.3, Epskamp and Fried 2020), igraph (version 1.2.6, Amestoy et al. 2020), qgraph (version 1.6.5, Epskamp et al. 2020), and networktools (version 1.2.3, Jones 2020). We used these tools to estimate weighted partial correlation networks, which allowed determination of conditional dependencies among our cognitive and neural variables. For example, in a multilayer network, any partial correlation between node A (e.g., matrix reasoning) and node B (e.g., the caudal anterior cingulate) is one that remains after controlling for the associations of A and B with every other node in the network (e.g., other cognitive abilities and cortical volume ROIs). To estimate these networks, we applied Gaussian Graphical Models (Pearson correlations) using regularization (graphical lasso, see Friedman et al. 2008) with a threshold tuning parameter of 0.5 and pairwise deletion to account for missingness. These methods have been widely used to generate sparser networks by penalizing more complex models, thus decreasing the risk of potentially spurious (e.g., false positive) connections and enabling simpler visualization and interpretation of conditional dependencies between nodes (Epskamp and Fried 2018). We hypothesized that our results would show positive partial correlations (in line with mutualism theory) both within cognitive (e.g., as observed in Mareva and Holmes 2020; Schmank et al. 2019) and within neural measures (single-layer networks), as well as between brain–behavior variables in the multilayer networks.
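The idea of a partial correlation as a conditional dependency can be illustrated with a short Python sketch. It derives unregularized partial correlations from a correlation matrix by inverting it and standardizing the off-diagonal elements of the resulting precision matrix. This is a simplification under stated assumptions: it omits the graphical lasso penalty applied in the present analyses (which were run in R), and the helper names and the three-variable correlation matrix are hypothetical.

```python
from math import sqrt


def invert(matrix):
    """Invert a square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(matrix)
    # Augment the matrix with the identity: [M | I] -> [I | M^-1].
    aug = [[float(v) for v in row] + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def partial_correlations(corr):
    """Partial correlation of i and j given all other variables."""
    theta = invert(corr)  # precision matrix
    n = len(corr)
    return [[1.0 if i == j else -theta[i][j] / sqrt(theta[i][i] * theta[j][j])
             for j in range(n)]
            for i in range(n)]


# Hypothetical correlations among three variables A, B, C, in which the
# A-C correlation (0.25) is fully explained by B (0.5 * 0.5).
toy_corr = [
    [1.00, 0.50, 0.25],
    [0.50, 1.00, 0.50],
    [0.25, 0.50, 1.00],
]
toy_pcor = partial_correlations(toy_corr)
```

In this toy case the marginal A–C correlation is 0.25, but the A–C partial correlation is zero because B accounts for it entirely, so no edge would be drawn between A and C. Regularization goes one step further by shrinking small, non-zero partial correlations to exactly zero.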

Note that age was included as a node in the estimation procedures (i.e., edge weights, centrality, network stability, and community detection) of all partial correlation networks but was not included in the visualizations of our networks and centrality plots, or in network descriptive statistics (i.e., mean, median, and range of edge weights). For a comparison of the use of age (i.e., included in estimation, regressed out beforehand, or removed from the dataset prior to network estimation), see the Supplementary Material Tables S2 and S3.

2.4. Node Strength Centrality (Single-Layer Networks)

To assess the statistical interconnectedness or connectivity of cognitive and neural nodes relative to their neighbors within our single-layer networks, we estimated node strength, a weighted degree centrality measure calculated by summing the absolute partial correlation coefficients (edge weights) between a node and all other nodes it connects to within the network. Note that our brain structural covariance networks involve ROIs that are not necessarily anatomically connected, preventing certain inferences such as information flow. Nodes were classified as central if their strength z-score was at least one standard deviation above the mean (z ≥ 1). We do not discuss or interpret negative centrality values for our single-layer networks.
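The strength calculation and the one-standard-deviation rule described above can be sketched in a few lines of Python. This is an illustrative sketch rather than the qgraph code used in the analyses; the function names, node labels, and edge weights are hypothetical, and the z-scores assume the sample standard deviation (n − 1 denominator).

```python
from statistics import mean, stdev


def node_strength(weights):
    """Sum of absolute edge weights attached to each node (diagonal ignored)."""
    n = len(weights)
    return [sum(abs(weights[i][j]) for j in range(n) if j != i) for i in range(n)]


def z_scores(values):
    """Standardize values using the sample standard deviation."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def central_nodes(labels, weights, cutoff=1.0):
    """Labels of nodes whose strength z-score is at or above the cutoff."""
    scores = z_scores(node_strength(weights))
    return [label for label, z in zip(labels, scores) if z >= cutoff]


# Hypothetical symmetric partial correlation matrix for four nodes.
toy_labels = ["A", "B", "C", "D"]
toy_weights = [
    [0.0, 0.5, 0.3, 0.2],
    [0.5, 0.0, 0.0, 0.0],
    [0.3, 0.0, 0.0, -0.1],
    [0.2, 0.0, -0.1, 0.0],
]
toy_strength = node_strength(toy_weights)
toy_central = central_nodes(toy_labels, toy_weights)
```

Because absolute values are summed, the negative C–D edge contributes to strength in the same way as a positive edge of equal magnitude. In this toy network only node A exceeds the cutoff.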

2.5. Community Detection and Bridge Strength Centrality (Multilayer Networks)

In our multilayer networks, we applied the Walktrap community detection algorithm (Pons and Latapy 2005) to determine in a data-driven manner whether clustering, or grouping, of nodes (e.g., cognitive and/or neural) occurred. The Walktrap algorithm assesses how strongly related nodes are to each other. Such relatedness can arise because nodes A and B are similar, or because nodes A and B are different but node A has a strong impact on node B (see “Topological overlap and missing nodes” of Fried and Cramer 2017). The algorithm takes recursive random walks between node pairs and classifies communities according to how densely connected these parts are within the network (wherever the random walks become ‘trapped’). Walktrap is widely used in the network psychometrics literature and, in a Monte Carlo simulation study, was shown to outperform other algorithms (e.g., InfoMap) for sparse count networks (e.g., those used in diffusion tensor imaging), although it must be noted that this result was for networks made up of 500 nodes or more (Gates et al. 2016). We also calculated the maximum modularity index value (Q), which estimates the robustness of the community partition (Newman 2006). We interpreted values of 0.5 or above as evidence for reliable grouping.
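For readers unfamiliar with the modularity index, the following Python sketch computes Q for a given partition of a weighted, undirected network using Newman's formula. It does not implement the Walktrap algorithm itself and only scores a partition that is already known. Taking absolute edge weights is an assumption made here for simplicity, and the function name and toy network are hypothetical.

```python
def modularity(weights, membership):
    """Newman's modularity Q for a weighted, undirected network.

    weights: symmetric matrix of edge weights (absolute values are used,
             which is an assumption of this sketch).
    membership: community label of each node, in the same order as weights.
    """
    n = len(weights)
    a = [[abs(weights[i][j]) if i != j else 0.0 for j in range(n)] for i in range(n)]
    strength = [sum(row) for row in a]
    two_m = sum(strength)  # twice the total edge weight
    if two_m == 0:
        raise ValueError("network has no edges")
    q = 0.0
    for i in range(n):
        for j in range(n):
            if membership[i] == membership[j]:
                # Observed weight minus the weight expected by chance.
                q += a[i][j] - strength[i] * strength[j] / two_m
    return q / two_m


# Hypothetical network: two triangles (nodes 0-2 and 3-5) joined by one edge.
toy_edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
toy_adjacency = [[0.0] * 6 for _ in range(6)]
for i, j in toy_edges:
    toy_adjacency[i][j] = toy_adjacency[j][i] = 1.0

q_two_groups = modularity(toy_adjacency, [0, 0, 0, 1, 1, 1])
q_one_group = modularity(toy_adjacency, [0, 0, 0, 0, 0, 0])
```

Placing all nodes in a single community always gives Q = 0, whereas the two-triangle partition gives a clearly positive value, reflecting more within-community weight than expected by chance.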

Instead of traditional absolute strength, we calculated bridge strength, a novel weighted degree centrality measure originally developed to study comorbidity between mental disorders (see Jones et al. 2019 for overview). Bridge strength centrality sums the absolute value of every edge that connects one node (e.g., matrix reasoning) in one pre-assigned community (e.g., cognition) to another node (e.g., caudal anterior cingulate) in another pre-assigned community (e.g., brain). Recent simulation work has shown that the method can reliably recover true structures of bridge nodes in both directed and undirected networks (Jones et al. 2019). Rather than relying on straightforward ‘brain’ or ‘behavior’ assignments to classify nodes, we pre-assigned communities for bridge strength calculation based on results from the Walktrap algorithm.

The presence of bridges between communities (e.g., relations between nodes from topologically distinct clusters such as cognition vs. brain) might suggest the existence of intermediate endophenotypes (Fornito and Bullmore 2012; Kievit et al. 2016), and could identify nodes (both cognitive and neural) that might one day guide intervention studies. Nodes were classified as central if their bridge strength z-score was at least one standard deviation above the mean (z ≥ 1). We do not discuss or interpret negative centrality z-score values for our multilayer networks.
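Bridge strength as defined above can be sketched in Python as follows. This is a minimal illustration and not the networktools implementation; the function name, community labels, and edge weights are hypothetical and do not correspond to estimates from the CALM data.

```python
def bridge_strength(weights, membership):
    """Sum of absolute edge weights linking each node to nodes in OTHER communities."""
    n = len(weights)
    return [
        sum(abs(weights[i][j]) for j in range(n)
            if j != i and membership[j] != membership[i])
        for i in range(n)
    ]


# Hypothetical four-node network with two pre-assigned communities.
toy_nodes = ["MR", "Read", "CAC", "STG"]
toy_communities = ["cognition", "cognition", "brain", "brain"]
toy_network = [
    # MR    Read   CAC    STG
    [0.00, 0.30, 0.10, -0.05],  # MR
    [0.30, 0.00, 0.00, 0.20],   # Read
    [0.10, 0.00, 0.00, 0.40],   # CAC
    [-0.05, 0.20, 0.40, 0.00],  # STG
]
toy_bridge = dict(zip(toy_nodes, bridge_strength(toy_network, toy_communities)))
```

Within-community edges (MR–Read and CAC–STG) are ignored, so a node can have high overall strength but low bridge strength. In this toy network STG has the largest bridge strength because it connects to both cognitive nodes.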

2.6. Node Centrality Stability (Single and Multilayer Networks)

Lastly, we quantified the reliability of our centrality estimates for all single-layer (absolute strength of cognitive and brain structural covariance nodes) and multilayer networks (bridge strength). We estimated the correlation stability (CS) coefficient, defined as the maximum proportion of the sample that can be dropped while retaining, with 95% probability, a correlation of at least 0.7 between the rank order of centrality in the network estimated on the full sample and that in the network estimated on the smaller subsample (based on 2000 bootstraps). A CS value of 0.5 or above was considered stable (Epskamp et al. 2018). Also using bootstrapping, we determined the stability of the edge-weight coefficients, but present these results in the Supplementary Material Figures S2–S4.
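The logic of the CS coefficient can be illustrated with a simplified Python sketch. It assumes that the case-dropping bootstrap has already been run and that, for each proportion of dropped cases, the correlations between subsample and full-sample centrality are available. The function name, input format, and toy values are hypothetical, and the procedure may differ in detail from the bootnet implementation used in the analyses.

```python
def cs_coefficient(correlations_by_drop, min_cor=0.7, quantile=0.95):
    """Largest drop proportion at which at least `quantile` of the bootstrap
    correlations still reach `min_cor`.

    correlations_by_drop: dict mapping the proportion of cases dropped to the
    list of correlations between subsample and full-sample centrality.
    Proportions are checked in increasing order, stopping at the first failure.
    """
    cs = 0.0
    for proportion in sorted(correlations_by_drop):
        cors = correlations_by_drop[proportion]
        share_retained = sum(c >= min_cor for c in cors) / len(cors)
        if share_retained >= quantile:
            cs = proportion
        else:
            break
    return cs


# Toy bootstrap results (20 subsamples per drop proportion, invented values).
toy_bootstrap = {
    0.25: [0.90] * 20,               # all 20 correlations reach 0.7
    0.50: [0.80] * 19 + [0.60],      # 19 of 20 (95%) reach 0.7
    0.75: [0.80] * 15 + [0.50] * 5,  # only 15 of 20 (75%) reach 0.7
}
toy_cs = cs_coefficient(toy_bootstrap)
```

Here half of the sample can be dropped before the centrality ordering becomes unreliable, which would sit exactly at the 0.5 threshold for stability.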

3. Results

3.1. Single-Layer Network Models (Cognitive, Cortical Volume, and Fractional Anisotropy)

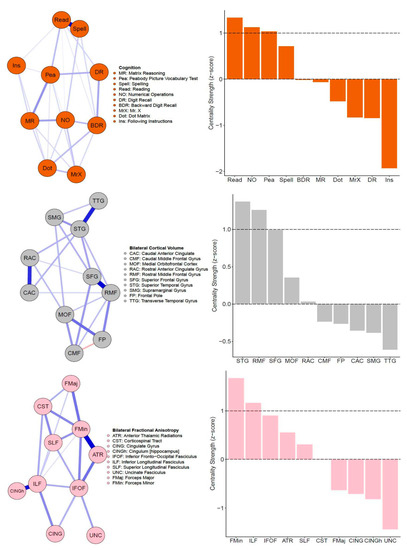

The regularized partial correlation (PC) network for the CALM cognitive data is shown in Figure 2 (top left). This network shows that all partial correlations are positive, and most have small magnitude (mean PC = 0.08, median PC = 0.07, PC range = 0–0.63). One edge (between reading and spelling) was an outlier (PC = 0.63, all others are between 0 and 0.27), likely due to close content overlap (verbal ability). Regarding centrality, three nodes emerged as strong (positive z-score at or greater than one standard deviation above the mean): (in descending order of centrality strength) reading, numerical operations, and peabody picture vocabulary test (Figure 2, top right). Overall, centrality estimates were stable, indicated by a high correlation stability (CS) coefficient of 0.75, revealing that at least 75% of the sample could be dropped while maintaining a correlation of 0.7 with the original sample at 95% probability.

Figure 2.

Single-layer partial correlation networks. Top: Network visualization (spring layout, left) of CALM cognitive data (N = 805). Centrality estimates (z-scores) of all cognitive tasks (right). Middle: Network visualization (spring layout, left) of CALM cortical volume data (N = 246). Centrality estimates (z-scores) of all cortical volume nodes (right). Bottom: Network visualization (spring layout, left) of CALM fractional anisotropy data (N = 165). Centrality estimates (z-scores) of all fractional anisotropy nodes (right). Dashed lines in centrality plots indicate mean strength and one standard deviation above the mean.

Next, we estimated the partial correlation network among the 10 grey matter regions shown in Figure 1A. All edge weights (mean PC = 0.09, median PC = 0, PC range = −0.15–0.52) of the cortical volume network (Figure 2, middle left) were positive, apart from one negative path (caudal middle frontal gyrus and frontal pole, PC = −0.15). This negative path might be due to the frontal pole correlating surprisingly weakly with other grey matter nodes and displaying a steeper decrease with age (see Figure 3 below and Supplementary Figure S1). Two ROIs emerged as central (in descending order of centrality strength): superior temporal gyrus and rostral middle frontal gyrus (Figure 2, middle right). Similar to the cognitive network, cortical volume centrality was stable (CS coefficient = 0.52), indicating that about 52% of the sample could be dropped while maintaining a correlation of centrality estimates above 0.7 with the full sample. This finding is despite the lower sample size compared to the behavioral data (N = 805 for behavior vs. N = 246 for cortical volume).

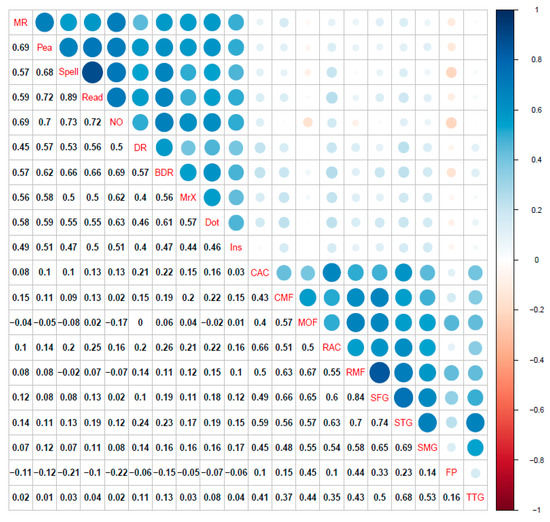

Figure 3.

(Top) Correlation plot for cognitive raw scores and bilateral cortical volume ROIs. (Middle) Correlation plot for cognitive raw scores and bilateral fractional anisotropy ROIs. (Bottom) Correlation plot for bilateral cortical volume and bilateral fractional anisotropy ROIs. All coefficients shown are Pearson correlations. Blue represents positive correlations while red signifies negative correlations among variables. Size of circles indicates the magnitude of the association (e.g., larger circle = higher correlation). Correlations calculated using pairwise complete observations. Abbreviations: matrix reasoning (MR), peabody picture vocabulary test (Pea), spelling (Spell), single word reading (Read), numerical operations (NO), digit recall (DR), backward digit recall (BDR), Mr. X (MrX), dot matrix (Dot), following instructions (Ins), caudal anterior cingulate (CAC), caudal middle frontal gyrus (CMF), medial orbital frontal cortex (MOF), rostral anterior cingulate gyrus (RAC), rostral middle frontal gyrus (RMF), superior frontal gyrus (SFG), superior temporal gyrus (STG), supramarginal gyrus (SMG), frontal pole (FP), transverse temporal gyrus (TTG), anterior thalamic radiations (ATR), corticospinal tract (CST), cingulate gyrus (CING), cingulum (hippocampus) (CINGh), inferior fronto-occipital fasciculus (IFOF), inferior longitudinal fasciculus (ILF), superior longitudinal fasciculus (SLF), uncinate fasciculus (UNC), forceps major (FMaj), and forceps minor (FMin).

Finally, similar to the cognitive and the grey matter covariance networks, the fractional anisotropy network (Figure 2, bottom left) has only positive partial correlations, with all edge weights varying between small and moderate values: mean PC = 0.08, median PC = 0, and PC range = 0–0.44. Two white matter ROIs displayed centrality (Figure 2, bottom right). These included (in descending order) the forceps minor and inferior longitudinal fasciculus. Fractional anisotropy centrality was moderately stable (CS coefficient = 0.44), indicating that about 44% of the sample could be removed while maintaining a 0.7 association with 95% probability. This lower stability is possibly due to the much lower sample size (N = 165) compared to the cognitive (N = 805) and grey matter (N = 246) networks.

3.2. Bridging the Gap: Multilayer Networks

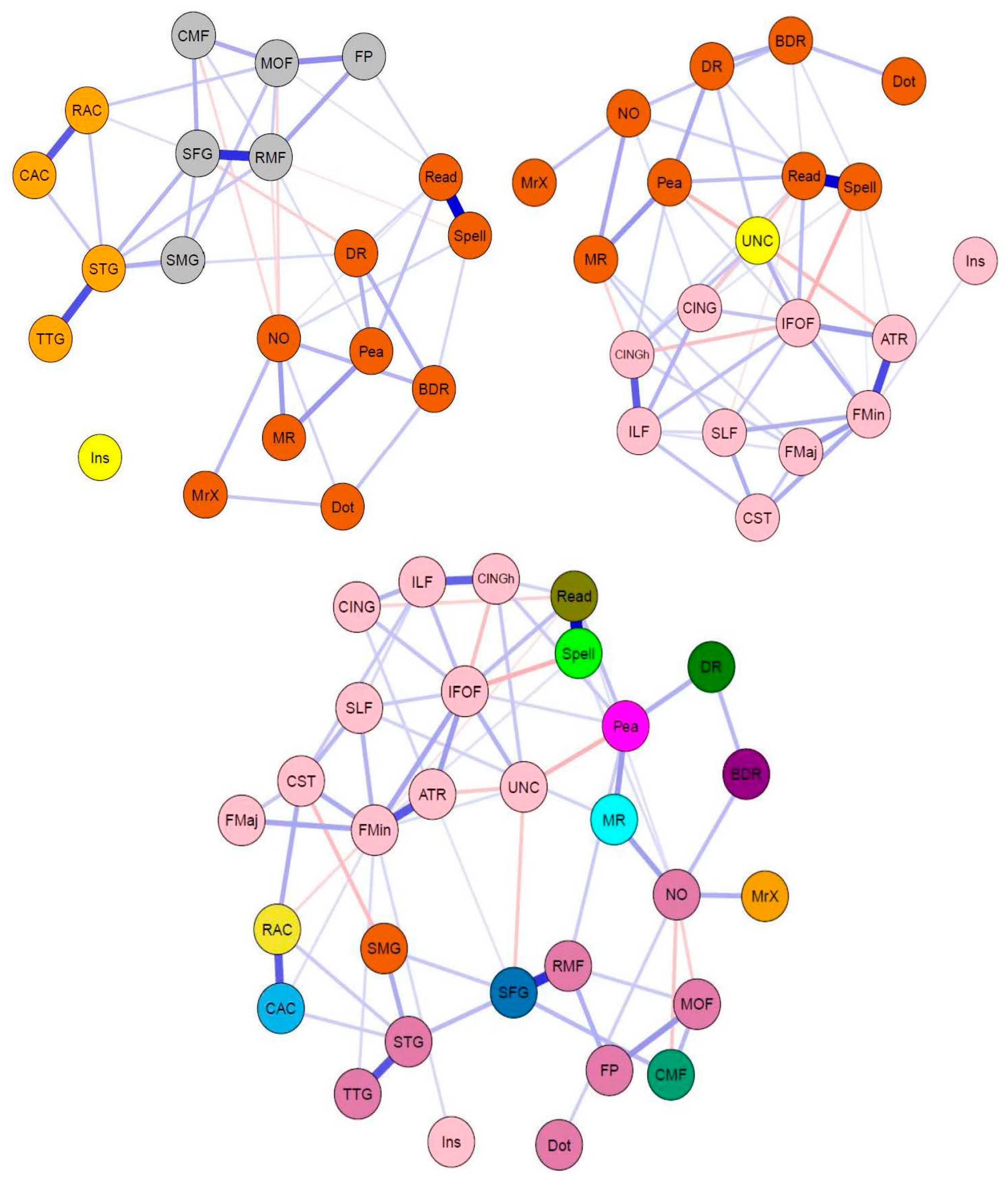

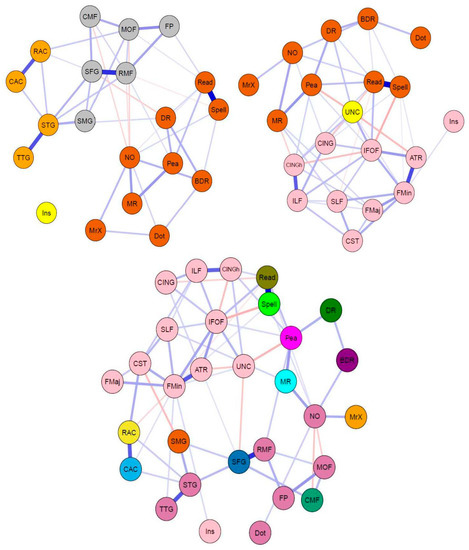

For a correlation plot of cognitive tasks and neuroimaging measures, see Figure 3. The regularized partial correlation network analyses for the CALM multilayer networks data are shown in Figure 4. Consistent with the pattern found in the single-layer networks, the cognitive and grey matter multilayer network (top left of Figure 4) edges are mostly positive, with small to moderate weights (mean PC = 0.04, median PC = 0, PC range = −0.12–0.64). Comparably, the cognitive and white matter multilayer network (Figure 4, top right) had similar edge weight estimates (mean PC = 0.04, median PC = 0, PC range = −0.2–0.65). Finally, combining all measures together (tri-layer network consisting of cognition, grey and white matter, bottom center of Figure 4) produced a network with similar characteristics to the bi-layer networks (mean PC = 0.02, median PC = 0, PC range = −0.2–0.66). For the bi-layer networks, the Walktrap algorithm produced either three (cognition-white matter) or four (cognition-grey matter) clusters that consisted entirely of cognitive or neural nodes, except for following instructions (Ins), which was either separated from the other cognitive nodes (cognition-grey matter network, Q = 0.56, indicating strong modularity) or grouped with a neural node (forceps minor of the cognition-white matter network, Q = 0.39, indicating moderate modularity). The result for the tri-layer network (Q = 0.25, indicating weak modularity) was more complex, with a total of 15 communities (Figure 4, bottom center; note, age was found to be in a community by itself but is not shown in the figure).

Figure 4.

Network visualizations (spring layout) of partial correlation multilayer networks for CALM data. Colors indicate groups determined by the Walktrap algorithm (see above). (Top) Bi-layer networks consisting of cognition and grey matter (top left), and cognition and white matter (top right). (Bottom) Tri-layer network consisting of cognition, grey matter, and white matter (center).
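As an illustration of how a modularity value Q can be obtained for a given partition, the sketch below implements the standard weighted (Newman) modularity for an undirected network. It is not the code used for the reported values: the function name `modularity` is hypothetical, and taking absolute edge weights is an assumption made here so that negative partial correlations do not enter the formula with a negative sign.

```python
import numpy as np


def modularity(weights, communities):
    """Weighted modularity Q of a partition of an undirected network.

    weights:     symmetric (n x n) matrix of edge weights
    communities: length-n sequence of community labels
    Assumption: absolute edge weights are used.
    """
    w = np.abs(np.asarray(weights, dtype=float))
    np.fill_diagonal(w, 0.0)
    labels = np.asarray(communities)
    strength = w.sum(axis=1)           # weighted degree of each node
    total = strength.sum()             # equals 2m (each edge counted twice)
    same = labels[:, None] == labels[None, :]
    expected = np.outer(strength, strength) / total
    return float(((w - expected) * same).sum() / total)


# Toy example: two disconnected pairs of nodes.
toy = np.array([[0, 1, 0, 0],
                [1, 0, 0, 0],
                [0, 0, 0, 1],
                [0, 0, 1, 0]], dtype=float)
q_split = modularity(toy, [0, 0, 1, 1])   # pairs as separate communities
q_single = modularity(toy, [0, 0, 0, 0])  # everything in one community
```

Larger Q indicates that more edge weight falls within communities than would be expected from node strengths alone; placing all nodes in one community gives Q = 0.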

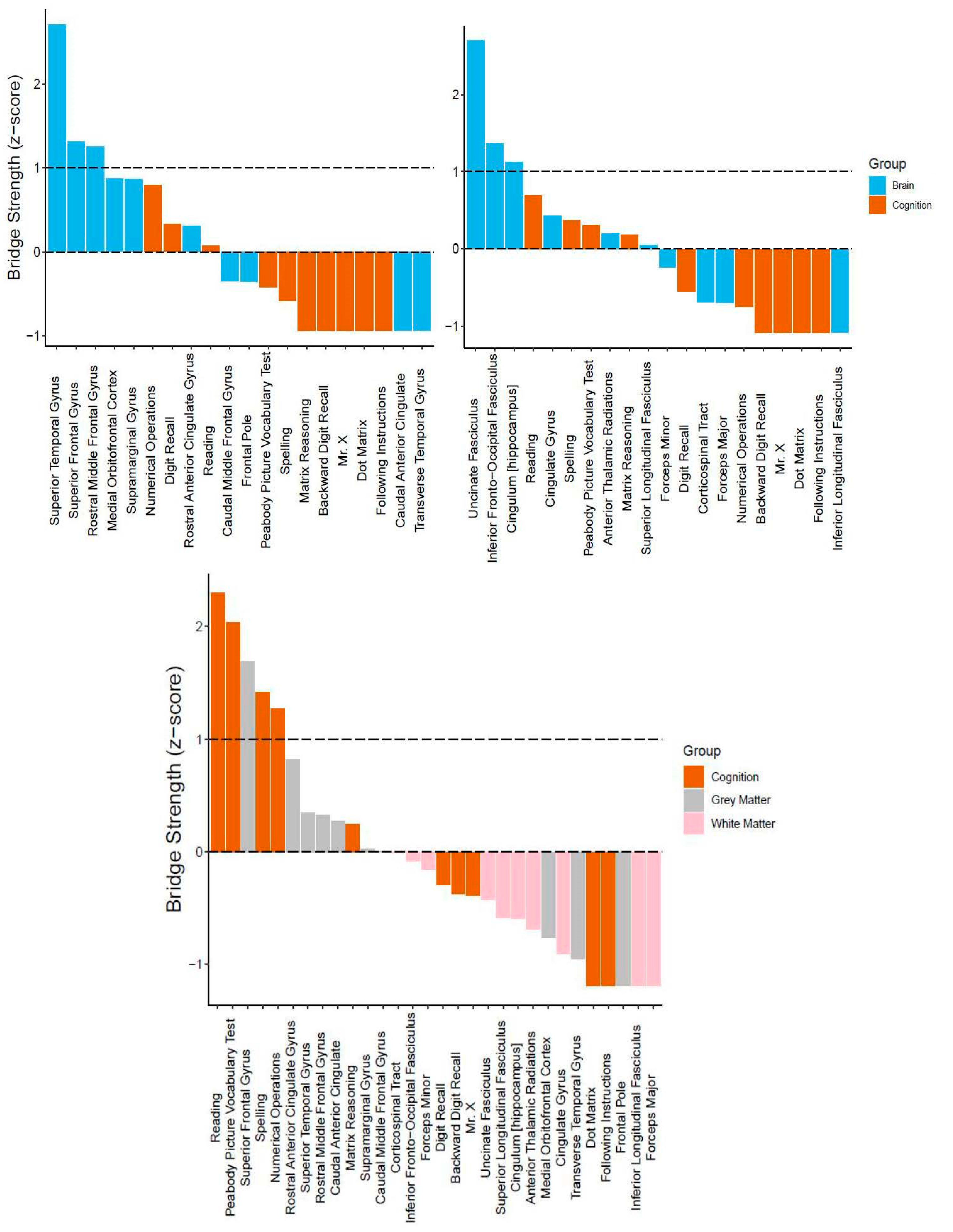

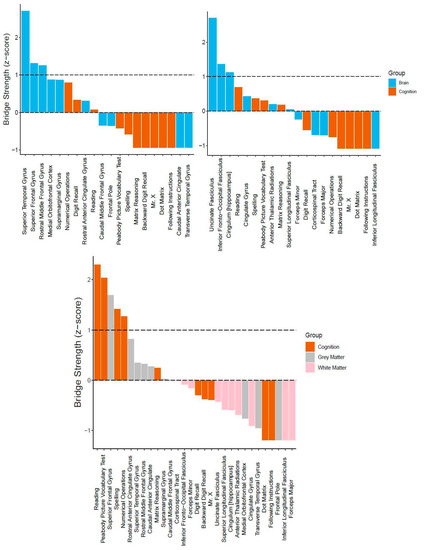

Regarding centrality, we report bridge strength (Figure 5). In the cognitive-grey matter network, three bridge nodes emerged (in descending order: superior temporal gyrus, superior frontal gyrus, and rostral middle frontal gyrus; Figure 5, top left). In terms of stability, the CS coefficient was 0.20, indicating that the bridge strength estimates were unstable under bootstrapping conditions. In the cognitive-white matter bi-layer network, three nodes (in descending order: uncinate fasciculus, inferior frontal-occipital fasciculus, and hippocampal cingulum) emerged as possible bridge nodes (Figure 5, top right). These centrality estimates had a CS coefficient of 0.13, once again suggesting that the bridge strength estimates were unstable. Lastly, for the tri-layer network, five nodes displayed positive bridge strength equal to or greater than one standard deviation above the mean (Figure 5, bottom center). These included (in descending order): reading, Peabody Picture Vocabulary Test, superior frontal gyrus, spelling, and numerical operations. In contrast to the bi-layer networks, the tri-layer network bridge strength estimates were moderately stable (CS coefficient = 0.44).

Figure 5.

Bridge centrality estimates (z-scores) for multilayer networks. (Top) Bi-layer networks consisting of cognition and grey matter (top left), and cognition and white matter (top right). (Bottom) Tri-layer network consisting of cognition, grey matter, and white matter (center). Dashed lines indicate mean strength and one standard deviation above the mean.
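The bridge strength values and the one standard deviation cutoff described above can be sketched as follows. This is an illustrative reconstruction rather than the analysis code: bridge strength is taken to be the sum of a node's absolute edge weights to nodes in other communities, the function names are hypothetical, and standardizing with the sample standard deviation (`ddof=1`) is an assumption.

```python
import numpy as np


def bridge_strength(weights, communities):
    """Sum of absolute edge weights from each node to nodes assigned
    to a different community."""
    w = np.abs(np.asarray(weights, dtype=float))
    np.fill_diagonal(w, 0.0)
    labels = np.asarray(communities)
    other = labels[:, None] != labels[None, :]
    return (w * other).sum(axis=1)


def flag_bridge_nodes(weights, communities, cutoff=1.0):
    """Z-score bridge strength and flag nodes at or above the cutoff
    (assumption: sample standard deviation, ddof=1)."""
    raw = bridge_strength(weights, communities)
    z = (raw - raw.mean()) / raw.std(ddof=1)
    return z, z >= cutoff


# Toy network: nodes 0-1 in community "A", nodes 2-3 in community "B".
toy = np.zeros((4, 4))
toy[0, 1] = toy[1, 0] = 0.5    # within-community edge (ignored)
toy[0, 2] = toy[2, 0] = 0.3    # between-community edge
toy[1, 3] = toy[3, 1] = -0.2   # negative between-community edge
toy[2, 3] = toy[3, 2] = 0.4    # within-community edge (ignored)
raw = bridge_strength(toy, ["A", "A", "B", "B"])
z_scores, is_bridge = flag_bridge_nodes(toy, ["A", "A", "B", "B"])
```

Because only between-community edges contribute, a node with many cross-community connections can reach a high bridge strength even when each individual edge is small.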

4. Discussion

4.1. Summary of Main Findings

In this study, we used network analysis (partial correlations) to examine the neurocognitive structure of general intelligence in a childhood and adolescent cohort of struggling learners (CALM). For our single-layer networks (Figure 2), we found that cognitive, grey matter, and white matter networks contained mostly (if not all) positive partial correlations. Moreover, in all single-layer networks, at least two nodes emerged as more central than others (as indexed by node strength equal to or greater than one standard deviation above the mean), with centrality estimates that varied in stability from moderately to highly reliable. In the cognitive network, these included verbal ability (specifically reading and Peabody Picture Vocabulary Test) and crystallized intelligence (i.e., numerical operations). In the structural brain networks (grey matter cortical volume and white matter fractional anisotropy), two nodes passed the centrality threshold in both the grey matter network (superior temporal gyrus and rostral middle frontal gyrus) and the white matter network (forceps minor and inferior longitudinal fasciculus). Furthermore, we extended previous approaches by integrating networks of structural brain data with a cognitive network, forming bi- and tri-layer networks (Figure 4). In doing so, we observed multiple (both positive and negative) partial correlations between brain and behavior variables. Using bridge strength as a metric, we found that, in our bi-layer networks, only neural nodes harbored significant connections across communities (defined by the Walktrap algorithm) and levels of organization (Figure 5, top). In contrast, in the tri-layer network, we found that mostly cognitive nodes connected across different communities (Figure 5, bottom).
Overall, our results suggest which behavioral and neural variables might exert greater influence on, or be more influenced by, other nodes, and which potentially serve as bridges between the brain and cognition within general intelligence. However, the literature on drawing inferences from networks about the most likely consequences of intervening on the network is complex and rapidly changing (e.g., Dablander and Hinne 2019; Henry et al. 2020; Levine and Leucht 2016).

4.2. Interpretation of Network Models and Community Detection Analyses

For the cognitive network, each node corresponded to a single cognitive task (e.g., matrix reasoning), while partial correlations (weighted edges) between nodes were interpreted as compatible with (possible) causal consequences of interactions among cognitive abilities during development. The existence of only positive edges in our cognitive network would be expected under a mutualistic perspective (i.e., interactions among cognitive variables), although longitudinal analyses are needed to further substantiate this claim. Mutualism, which at its essence is a network theory of general intelligence (van der Maas et al. 2006), hypothesizes that the positive manifold and general intelligence (Spearman 1904) emerge from causal interactions among abilities rather than a general latent factor (Fried 2020; Kan et al. 2019). Hence, cognition is viewed as a complex system derived from the dynamic relations of specific abilities that become more intertwined over development. Initially, it was surprising that two of the three most central nodes (i.e., reading and Peabody Picture Vocabulary Test) relate to verbal ability rather than to abilities such as fluid intelligence and working memory (matrix reasoning and forward and backward digit recall), which are traditionally viewed as causal influences on cognitive development (Cattell 1971). However, an emerging body of literature suggests that verbal ability plays a crucial role in cognitive development (e.g., in the relation between reading and working memory before 4th grade; Peng et al. 2018; Zhang and Joshi 2020), as well as in driving the emergence of reasoning (Kievit et al. 2019; also see Gathercole et al. 1999).

As for our neural networks (here, grey matter cortical volume and white matter fractional anisotropy), each node corresponded to a single ROI. Importantly, we did not interpret weighted edges as an index of direct connectivity. Instead, the presence of strong associations between these ROIs would be compatible with the hypothesis of coordinated development (see Alexander-Bloch et al. 2013), whereby certain brain regions correlate more strongly with each other than with more peripheral regions over time (e.g., childhood to late adolescence), as well as with the notion of “rich” (van den Heuvel and Sporns 2011) and “diverse” (Bertolero et al. 2017) clubs that enable local and global integration. The most central grey matter node, the superior temporal gyrus, has been implicated in verbal reasoning (e.g., Khundrakpam et al. 2017). Regarding white matter, the two strongest nodes (forceps minor and inferior longitudinal fasciculus), while not anatomically close, represent long-range connections (see de Mooij et al. 2018) and have been linked to mathematical ability (Navas-Sánchez et al. 2014) and visuospatial working memory (Krogsrud et al. 2018).

Finally, we integrated both domains (cognitive abilities and brain metrics) into combined multilayer networks (cognition-grey matter, cognition-white matter, and cognition-grey and white matter). Doing so allowed us to attempt comparison and integration simultaneously across explanatory levels within the same analytical paradigm (network analysis) and statistical metrics (partial correlations, centrality, and community detection). From this analysis, three observations immediately stood out. First, there were multiple partial correlations between cognitive and neural nodes (especially in the cognitive-white matter and cognitive-grey matter and white matter networks). Second, compared to the single-layer networks, the multilayer networks have more negative partial correlations. Together, these two findings further suggest that associations between the brain and cognition are complex, as they defy straightforward (e.g., only positive and/or one-to-one) relationships and interpretations. However, it should be noted that causality becomes even more difficult to determine in networks incorporating multiple levels of organization (e.g., cognition and structural brain covariance), for example because of conditioning on colliders (see Rohrer (2018) for an overview of interpretations of correlations in graphical causal models in observational data). Finally, we found a peculiar role for the cognitive task following instructions (Ins) within all multilayer networks. For example, in the cognitive-grey matter network, Ins had no partial correlations with any other nodes, while in both the cognitive-white matter and tri-layer networks (cognition, grey and white matter), Ins correlated only with the forceps minor (FMin), a neural node, and not with any of the cognitive variables.
This might suggest that following instructions, traditionally a working memory task and often analyzed using structural equation modeling, may have distinct psychometric properties (e.g., one-to-one mapping) when compared to other cognitive tasks when modeled through network science approaches, and/or when adjusted for all shared correlations.

Further inspection of bridge strength centrality showed an interesting pattern: setting aside the one standard deviation cutoff, the neural nodes are stronger than the cognitive variables within the multilayer networks, despite there being an equal number of cognitive nodes for each brain metric. This is possibly due to the large number of edges that the grey and white matter regions share with both cognitive and other neural nodes. In other words, because bridge strength sums inter-network correlations, neural nodes with a larger number of connections (partial correlations) across explanatory levels display greater bridge strength.

In other ways, the multilayer networks differed. First, in the tri-layer network, four of the five central nodes were cognitive variables, while, in the bi-layer networks, the central nodes were neural ROIs. Three of these central cognitive nodes in the tri-layer network (reading, Peabody Picture Vocabulary Test, and numerical operations) were also central in the single-layer cognitive network. This further suggests the importance of mathematical and verbal ability in understanding the cognitive neuroscience of general intelligence. Second, the fact that cognitive nodes were central only in the tri-layer network suggests that grey and white matter, while related, possibly reveal unique information about cognition when analyzed together.

4.3. Limitations of the Current Study

This study contains several limitations that require caution when interpreting the results. First and foremost, these findings are based on cross-sectional data. While adequate to help tease apart individual differences in cognition between people, cross-sectional data cannot be used to elucidate differences in changes within individuals over time, such as during development. Therefore, longitudinal analyses are needed before attempting to make strong inferences about the dynamics of these networks. Reiterating this point, a recent study using intelligence data (Schmiedek et al. 2020) found that a cross-sectional analysis of the g factor of cognitive ability was unable to capture within-person changes in cognitive abilities over time. This finding further stresses the necessity to integrate cross-sectional (between-person) differences and longitudinal (within-person) changes when studying cognition.

Moreover, the CALM cohort is an atypical sample (Holmes et al. 2019), with participants who consistently score lower on measures of attention, learning, and/or memory than age-matched controls (see Figure 2 (Level I) of Simpson-Kent et al. 2020 for comparison to a typically developing sample). As a result, these analyses would need to be replicated in additional (ideally larger) samples with different cognitive profiles before our results can be generalized. This shortcoming is echoed by the low stability estimates found for the centrality values in the bi-layer networks, which might be due to the differences in sample size between the neural data (grey matter, N = 246; white matter, N = 165) and cognition (N = 805). While these discrepancies could affect the statistical power of our results, the amount of neural data used in the present study is considerably larger than the sample sizes commonly used in standard neuroimaging studies (Poldrack et al. 2017). However, given that the tri-layer network showed moderate bridge strength stability but weak modularity, and that the Walktrap algorithm produced 15 communities in a network containing only 31 nodes (including age), we strongly suggest that our results be interpreted with caution and advise that future studies analyze neuroimaging data from larger cohorts (e.g., ABCD study, Casey et al. 2018).

Lastly, we re-ran our analyses to test the sensitivity of our main findings (e.g., positive partial correlations and central nodes) to potential outliers (defined as ±4 standard deviations). Doing so did not severely alter the partial correlation weights between nodes in our networks (see Supplementary Material Table S1 for detailed comparisons). It must be restated that our data come from an atypical sample, which might influence brain metrics even with rigorous quality control procedures. Nevertheless, our data support brain–behavior ‘bridges’ in general intelligence.
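A sensitivity check of this kind can be sketched as below. The ±4 standard deviation rule comes from the text; everything else is an assumption for illustration: the function name `mask_outliers` is hypothetical, deviations are measured from each variable's own mean, and flagged values are set to missing, since the text does not state how flagged values were treated.

```python
import numpy as np


def mask_outliers(data, n_sd=4.0):
    """Return a copy of `data` (cases x variables) in which values more
    than `n_sd` sample standard deviations from their column mean are
    replaced by NaN (assumption: flagged values are treated as missing)."""
    values = np.asarray(data, dtype=float)
    center = np.nanmean(values, axis=0)
    spread = np.nanstd(values, axis=0, ddof=1)
    z = (values - center) / spread
    cleaned = values.copy()
    cleaned[np.abs(z) > n_sd] = np.nan
    return cleaned


# Toy data: the first column has one extreme case, the second has none.
toy = np.column_stack([
    np.concatenate([np.zeros(99), [1000.0]]),
    np.arange(100, dtype=float),
])
toy_clean = mask_outliers(toy)
```

The networks would then be re-estimated on the cleaned data and the edge weights compared with those from the full data.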

4.4. Future Directions toward Theory Building in Cognitive Neuroscience

Our finding that verbal abilities, rather than fluid intelligence or working memory, might play a more pivotal role in the development of cognitive ability fits with the gradual progression in schooling. For example, before children can successfully be taught more advanced subjects (e.g., history, reading comprehension, etc.), they must first become competent in basic language faculties. In other words, it may be that verbal skills (e.g., reading and spelling) facilitate performance on abstract tests, even in the absence of direct knowledge-based task demands. Recent evidence supports this notion and suggests that verbal ability, particularly reading and vocabulary in relation to working memory and reasoning, might drive early cognitive development (Kievit et al. 2019; Peng et al. 2018; Zhang and Joshi 2020). Therefore, future studies could further examine whether greater verbal ability in early development facilitates greater acquisition of higher-level cognitive skills by lowering computational demands in working memory.

Moreover, in this context, the fact that the numerical operations task was also found to be central (tri-layer network only) should be expected since mathematics (e.g., arithmetic) also involves symbol manipulation. In terms of mutualism (van der Maas et al. 2006), future models (ideally in longitudinal samples) could test whether language and other symbolic abilities show progressively higher reciprocal associations during early development compared to other abilities until more complex cognition (i.e., fluid reasoning and working memory) develops in later childhood (also see Kievit et al. 2019; Peng et al. 2018).

We argue that future studies should aim to incorporate data from different scales, not only temporal (e.g., development) but also levels of organization (e.g., brain and behavior). Furthermore, results from different levels can more easily be interpreted if these datasets are analyzed and interpreted using a unified quantitative and conceptual framework, such as network science. Last, and perhaps most important, cognitive neuroscientists must formulate mechanistic (e.g., Bertolero et al. 2018) and generative models (for instance, Akarca et al. 2020) to gain further insights from the past and help guide future controlled experiments.

One proposal attempting to explain general intelligence using network neuroscience is the Network Neuroscience Theory of Human Intelligence (NNTHI, Barbey 2018). Barbey argues that general intelligence arises from the dynamic small-world topology of the brain, which permits transitions between “regular” or “easy-to-reach” network states (needed to access prior knowledge for specific abilities) and “random” or “difficult-to-reach” network states (required to integrate information for broad abilities), as in network control theory (see Gu et al. 2015). Together, this constrained flexibility allows the brain to adapt to novel cognitive domains (e.g., in abstract reasoning) while still preserving access to previously learned skills (e.g., from schooling).

Evidence supporting the NNTHI has been inconclusive so far (Girn et al. 2019). However, two recent studies, although not directly testing the NNTHI, have shed light on the network neuroscience of cognition. Bertolero et al. (2018) found that a mechanistic model in which “connector hubs” (diverse club nodes, see Bertolero et al. 2017) regulate the activity of their neighboring communities to be more modular, while maintaining the capability of “task-appropriate information integration across communities”, significantly predicted higher cognitive performance on various tasks, including language and working memory. Furthermore, in the same sample studied here, Akarca et al. (2020) applied a generative network modeling approach to simulate the growth of brain network connectomes, finding that it is possible to simulate structural networks with statistical properties mirroring the spatial embedding of those observed. The parameters of these generative models were shown to correlate with neuroimaging measures not used to train the models (including grey matter measures) and with cognitive performance (including vocabulary and mathematics), and to relate to gene expression in the cortex.

Together, these studies point the field toward a better mechanistic understanding of the development of human brain structure, function, and their relationship with cognitive ability. Researchers must not shy away from but rather embrace the complexity of the brain and cognition (see Fried and Robinaugh 2020 for a similar argument for mental health research). Intelligence is a complex system—to understand it, we must treat it as such.

Supplementary Materials

The following are available online at https://www.mdpi.com/article/10.3390/jintelligence9020032/s1.

Author Contributions

Conceptualization, I.L.S.-K. and R.A.K.; methodology, I.L.S.-K., E.I.F., and S.M.; validation, all authors (including D.A. and E.T.B.), except the CALM Team; formal analysis, I.L.S.-K., E.I.F., and S.M.; investigation, I.L.S.-K.; data curation, the CALM Team and D.A.; writing—original draft preparation, I.L.S.-K. and R.A.K.; writing—review and editing, all authors, except the CALM Team; visualization, I.L.S.-K., D.A., and S.M.; supervision, R.A.K.; project administration, R.A.K.; funding acquisition, R.A.K. All authors have read and agreed to the published version of the manuscript.

Funding

I.L.S.-K. is supported by the Cambridge Trust, E.I.F. did not receive funding for this project, S.M. is funded by the Medical Research Council PhD Studentship, and D.A. by the Medical Research Council Doctoral Training Partnership Studentship (Project Code: SUAH/012; Award: RG86932) and the Cambridge Trust. E.T.B. is supported by an NIHR Senior Investigator award. This project also received funding from the European Union’s Horizon 2020 research and innovation programme (grant agreement number 732592). R.A.K. is supported by the Wellcome Trust (Grant No. 107392/Z/15/Z), the UK Medical Research Council SUAG/014 RG91365, and the Hypatia Fellowship by Radboud University.

Institutional Review Board Statement

Ethical approval was granted by the National Health Service (NHS) Health Research Authority NRES Committee East of England, REC approval reference 13/EE/0157, IRAS 127675.

Informed Consent Statement

Written informed consent was provided by parents/caregivers with verbal assent given by children.

Data Availability Statement

Due to privacy issues, we cannot share the data.

Acknowledgments

The Centre for Attention Learning and Memory (CALM) research clinic is based at and supported by funding from the MRC Cognition and Brain Sciences Unit, University of Cambridge. The Principal Investigators are Joni Holmes (Head of CALM), Susan Gathercole (Chair of CALM Management Committee), Duncan Astle, Tom Manly, Kate Baker, and Rogier Kievit. Data collection is assisted by a team of researchers and PhD students at the CBU that includes Joe Bathelt, Giacomo Bignardi, Sarah Bishop, Erica Bottacin, Lara Bridge, Annie Bryant, Sally Butterfield, Elizabeth Byrne, Gemma Crickmore, Edwin Dalmaijer, Fanchea Daily, Tina Emery, Laura Forde, Grace Franckel, Delia Fuhrmann, Andrew Gadie, Sara Gharooni, Jacalyn Guy, Erin Hawkins, Agniezska Jaroslawska, Amy Johnson, Jonathon Jones, Silvana Mareva, Elise Ng-Cordell, Sinead O’Brien, Cliodhna O’Leary, Joseph Rennie, Ivan Simpson-Kent, Roma Siugzdaite, Tess Smith, Stepheni Uh, Francesca Woolgar, and Mengya Zhang. The authors wish to thank the many professionals working in children’s services in the South-East and East of England for their support, and the children and their families for giving up their time to visit the clinic. We also want to acknowledge Joe Bathelt, whose neuroimaging pipeline enabled the construction of the white matter (fractional anisotropy) data for Simpson-Kent et al. (2020), which were used again in the current study.

Conflicts of Interest

E.T.B. is a member of the scientific advisory board of Sosei Heptares.

References

- Akarca, Danyal, Petra E. Vértes, Edward T. Bullmore, the CALM Team, and Duncan E. Astle. 2020. A Generative Network Model of Neurodevelopment. BioRxiv.

- Alexander-Bloch, Aaron, Jay N. Giedd, and Ed Bullmore. 2013. Imaging Structural Co-Variance between Human Brain Regions. Nature Reviews Neuroscience 14: 322–36.

- Alloway, Tracy P. 2007. Automated Working Memory Assessment (AWMA). London: Harcourt Assessment.

- Amestoy, Patrick R., Adelchi Azzalini, Tamas Badics, Gregory Benison, Adrian Bowman, Walter Böhm, Keith Briggs, Jeroen Bruggeman, Juergen Buchmueller, Carter T. Butts, et al. 2020. Igraph: Network Analysis and Visualization (Version 1.2.6). Available online: https://CRAN.R-project.org/package=igraph (accessed on 11 June 2021).

- Avants, Brian B., Charles L. Epstein, Murray Grossman, and James C. Gee. 2008. Symmetric Diffeomorphic Image Registration with Cross-Correlation: Evaluating Automated Labeling of Elderly and Neurodegenerative Brain. Medical Image Analysis 12: 26–41.

- Barabási, Albert-László. 2016. Network Science. Cambridge: Cambridge University Press. Available online: http://networksciencebook.com/ (accessed on 11 June 2021).

- Barbey, Aron K. 2018. Network Neuroscience Theory of Human Intelligence. Trends in Cognitive Sciences 22: 8–20.

- Bassett, Danielle Smith, and Ed Bullmore. 2006. Small-World Brain Networks. The Neuroscientist 12: 512–23.

- Bassett, Danielle S., and Edward T. Bullmore. 2017. Small-World Brain Networks Revisited. The Neuroscientist 23: 499–516.

- Bassett, Danielle S., and Olaf Sporns. 2017. Network Neuroscience. Nature Neuroscience 20: 353–64.

- Basten, Ulrike, Kirsten Hilger, and Christian J. Fiebach. 2015. Where Smart Brains Are Different: A Quantitative Meta-Analysis of Functional and Structural Brain Imaging Studies on Intelligence. Intelligence 51: 10–27.

- Bathelt, Joe, Hilde M. Geurts, and Denny Borsboom. 2020. More than the Sum of Its Parts: Merging Network Psychometrics and Network Neuroscience with Application in Autism. BioRxiv.

- Bertolero, Max A., B. T. Thomas Yeo, and Mark D’Esposito. 2017. The Diverse Club. Nature Communications 8: 1277.

- Bertolero, Maxwell A., B. T. Thomas Yeo, Danielle S. Bassett, and Mark D’Esposito. 2018. A Mechanistic Model of Connector Hubs, Modularity and Cognition. Nature Human Behaviour 2: 765–77.

- Bianconi, Ginestra. 2018. Multilayer Networks: Structure and Function. Oxford: Oxford University Press.

- Borsboom, Denny. 2017. A Network Theory of Mental Disorders. World Psychiatry 16: 5–13.

- Bullmore, Ed, and Olaf Sporns. 2012. The Economy of Brain Network Organization. Nature Reviews Neuroscience 13: 336–49.

- Calvin, Catherine M., Ian J. Deary, Candida Fenton, Beverly A. Roberts, Geoff Der, Nicola Leckenby, and G. David Batty. 2011. Intelligence in Youth and All-Cause-Mortality: Systematic Review with Meta-Analysis. International Journal of Epidemiology 40: 626–44.

- Casey, B. J., Tariq Cannonier, May I. Conley, Alexandra O. Cohen, Deanna M. Barch, Mary M. Heitzeg, Mary E. Soules, Theresa Teslovich, Danielle V. Dellarco, Hugh Garavan, et al. 2018. The Adolescent Brain Cognitive Development (ABCD) Study: Imaging Acquisition across 21 Sites. Developmental Cognitive Neuroscience 32: 43–54.

- Cattell, Raymond B. 1971. Abilities: Their Structure, Growth, and Action. Oxford: Houghton Mifflin.

- Dablander, Fabian, and Max Hinne. 2019. Node Centrality Measures Are a Poor Substitute for Causal Inference. Scientific Reports 9: 1–13.

- Dale, Anders M., Bruce Fischl, and Martin I. Sereno. 1999. Cortical Surface-Based Analysis: I. Segmentation and Surface Reconstruction. NeuroImage 9: 179–94.

- de Mooij, Susanne M. M., Richard N. A. Henson, Lourens J. Waldorp, and Rogier A. Kievit. 2018. Age Differentiation within Gray Matter, White Matter, and between Memory and White Matter in an Adult Life Span Cohort. The Journal of Neuroscience 38: 5826–36.

- Desikan, Rahul S., Florent Ségonne, Bruce Fischl, Brian T. Quinn, Bradford C. Dickerson, Deborah Blacker, Randy L. Buckner, Anders M. Dale, R. Paul Maguire, Bradley T. Hyman, et al. 2006. An Automated Labeling System for Subdividing the Human Cerebral Cortex on MRI Scans into Gyral Based Regions of Interest. NeuroImage 31: 968–80.

- Dunn, Lloyd M., and Douglas M. Dunn. 2007. PPVT-4: Peabody Picture Vocabulary Test. London and San Antonio: Pearson Assessments.

- Epskamp, Sacha, and Eiko I. Fried. 2018. A Tutorial on Regularized Partial Correlation Networks. Psychological Methods 23: 617–34.

- Epskamp, Sacha, and Eiko I. Fried. 2020. Bootnet: Bootstrap Methods for Various Network Estimation Routines (Version 1.4.3). Available online: https://CRAN.R-project.org/package=bootnet (accessed on 11 June 2021).

- Epskamp, Sacha, Denny Borsboom, and Eiko I. Fried. 2018. Estimating Psychological Networks and Their Accuracy: A Tutorial Paper. Behavior Research Methods 50: 195–212.

- Epskamp, Sacha, Giulio Costantini, Jonas Haslbeck, Adela Isvoranu, Angelique O. J. Cramer, Lourens J. Waldorp, Verena D. Schmittmann, and Denny Borsboom. 2020. Qgraph: Graph Plotting Methods, Psychometric Data Visualization and Graphical Model Estimation (Version 1.6.5). Available online: https://CRAN.R-project.org/package=qgraph (accessed on 11 June 2021).

- Fischl, Bruce, and Anders M. Dale. 2000. Measuring the Thickness of the Human Cerebral Cortex from Magnetic Resonance Images. Proceedings of the National Academy of Sciences 97: 11050–55.

- Fischl, Bruce, Martin I. Sereno, and Anders M. Dale. 1999. Cortical Surface-Based Analysis. II: Inflation, Flattening, and a Surface-Based Coordinate System. NeuroImage 9: 195–207.

- Fischl, Bruce, David H. Salat, Evelina Busa, Marilyn Albert, Megan Dieterich, Christian Haselgrove, and Andre van der Kouwe. 2002. Whole Brain Segmentation: Automated Labeling of Neuroanatomical Structures in the Human Brain. Neuron 33: 341–55.

- Fornito, Alex, and Edward T. Bullmore. 2012. Connectomic Intermediate Phenotypes for Psychiatric Disorders. Frontiers in Psychiatry 3: 1–15.

- Fornito, Alex, Andrew Zalesky, and Edward Bullmore. 2016. Fundamentals of Brain Network Analysis. London, San Diego, Cambridge, and Oxford: Academic Press.

- Fried, Eiko I. 2020. Lack of Theory Building and Testing Impedes Progress in The Factor and Network Literature. Psychological Inquiry 31: 271–88.

- Fried, Eiko I., and Angélique O. J. Cramer. 2017. Moving Forward: Challenges and Directions for Psychopathological Network Theory and Methodology. Perspectives on Psychological Science 12: 999–1020.

- Fried, Eiko I., and Donald J. Robinaugh. 2020. Systems All the Way down: Embracing Complexity in Mental Health Research. BMC Medicine 18: 205.

- Friedman, Jerome, Trevor Hastie, and Robert Tibshirani. 2008. Sparse Inverse Covariance Estimation with the Graphical Lasso. Biostatistics 9: 432–41.

- Gates, Kathleen M., Teague Henry, Doug Steinley, and Damien A. Fair. 2016. A Monte Carlo Evaluation of Weighted Community Detection Algorithms. Frontiers in Neuroinformatics 10: 45.

- Gathercole, Susan E., Elisabet Service, Graham J. Hitch, Anne-Marie Adams, and Amanda J. Martin. 1999. Phonological Short-Term Memory and Vocabulary Development: Further Evidence on the Nature of the Relationship. Applied Cognitive Psychology 13: 65–77.

- Gathercole, Susan E., Emily Durling, Matthew Evans, Sarah Jeffcock, and Sarah Stone. 2008. Working Memory Abilities and Children’s Performance in Laboratory Analogues of Classroom Activities. Applied Cognitive Psychology 22: 1019–37.

- Girn, Manesh, Caitlin Mills, and Kalina Christoff. 2019. Linking Brain Network Reconfiguration and Intelligence: Are We There Yet? Trends in Neuroscience and Education 15: 62–70.

- Graham, Mark S., Ivana Drobnjak, and Hui Zhang. 2016. Realistic Simulation of Artefacts in Diffusion MRI for Validating Post-Processing Correction Techniques. NeuroImage 125: 1079–94.

- Gu, Shi, Fabio Pasqualetti, Matthew Cieslak, Qawi K. Telesford, Alfred B. Yu, Ari E. Kahn, John D. Medaglia, Jean M. Vettel, Michael B. Miller, Scott T. Grafton, et al. 2015. Controllability of Structural Brain Networks. Nature Communications 6: 1–10.

- Hegelund, Emilie Rune, Trine Flensborg-Madsen, Jesper Dammeyer, and Erik Lykke Mortensen. 2018. Low IQ as a Predictor of Unsuccessful Educational and Occupational Achievement: A Register-Based Study of 1,098,742 Men in Denmark 1968–2016. Intelligence 71: 46–53. [Google Scholar] [CrossRef]

- Henry, Teague R., Donald Robinaugh, and Eiko I. Fried. 2020. On the Control of Psychological Networks. PsyArXiv. [Google Scholar] [CrossRef]

- Hilland, Eva, Nils I. Landrø, Brage Kraft, Christian K. Tamnes, Eiko I. Fried, Luigi A. Maglanoc, and Rune Jonassen. 2020. Exploring the Links between Specific Depression Symptoms and Brain Structure: A Network Study. Psychiatry and Clinical Neurosciences 74: 220–21. [Google Scholar] [CrossRef]

- Holmes, Joni, Annie Bryant, Susan Elizabeth Gathercole, and the CALM Team. 2019. Protocol for a Transdiagnostic Study of Children with Problems of Attention, Learning and Memory (CALM). BMC Pediatrics 19: 1–11.

- Hua, Kegang, Jiangyang Zhang, Setsu Wakana, Hangyi Jiang, Xin Li, Daniel S. Reich, Peter A. Calabresi, James J. Pekar, Peter C.M. van Zijl, and Susumu Mori. 2008. Tract Probability Maps in Stereotaxic Spaces: Analyses of White Matter Anatomy and Tract-Specific Quantification. NeuroImage 39: 336–47.

- Jones, Payton. 2020. Networktools: Tools for Identifying Important Nodes in Networks (Version 1.2.3). Available online: https://CRAN.R-project.org/package=networktools (accessed on 11 June 2021).

- Jones, Payton J., Ruofan Ma, and Richard J. McNally. 2019. Bridge Centrality: A Network Approach to Understanding Comorbidity. Multivariate Behavioral Research, 1–15.

- Jung, Rex E., and Richard J. Haier. 2007. The Parieto-Frontal Integration Theory (P-FIT) of Intelligence: Converging Neuroimaging Evidence. Behavioral and Brain Sciences 30: 135.

- Kan, Kees-Jan, Han L. J. van der Maas, and Stephen Z. Levine. 2019. Extending Psychometric Network Analysis: Empirical Evidence against g in Favor of Mutualism? Intelligence 73: 52–62.

- Khundrakpam, Budhachandra S., John D. Lewis, Andrew Reid, Sherif Karama, Lu Zhao, Francois Chouinard-Decorte, and Alan C. Evans. 2017. Imaging Structural Covariance in the Development of Intelligence. NeuroImage 144: 227–40.

- Kievit, Rogier A., and Ivan L. Simpson-Kent. 2021. It’s About Time: Towards a Longitudinal Cognitive Neuroscience of Intelligence. In The Cambridge Handbook of Intelligence and Cognitive Neuroscience. Cambridge: Cambridge University Press.

- Kievit, Rogier A., Simon W. Davis, John Griffiths, Marta M. Correia, Cam-CAN, and Richard N. Henson. 2016. A Watershed Model of Individual Differences in Fluid Intelligence. Neuropsychologia 91: 186–98.

- Kievit, Rogier A., Ulman Lindenberger, Ian M. Goodyer, Peter B. Jones, Peter Fonagy, Edward T. Bullmore, and Raymond J. Dolan. 2017. Mutualistic Coupling Between Vocabulary and Reasoning Supports Cognitive Development During Late Adolescence and Early Adulthood. Psychological Science 28: 1419–31.

- Kievit, Rogier A., Delia Fuhrmann, Gesa Sophia Borgeest, Ivan L. Simpson-Kent, and Richard N. A. Henson. 2018. The Neural Determinants of Age-Related Changes in Fluid Intelligence: A Pre-Registered, Longitudinal Analysis in UK Biobank. Wellcome Open Research 3: 38.

- Kievit, Rogier A., Abe D. Hofman, and Kate Nation. 2019. Mutualistic Coupling between Vocabulary and Reasoning in Young Children: A Replication and Extension of the Study by Kievit et al. (2017). Psychological Science 30: 1245–52.

- Kline, Rex B. 2015. Principles and Practice of Structural Equation Modeling, 4th ed. New York: Guilford Press.

- Krogsrud, Stine K., Anders M. Fjell, Christian K. Tamnes, Håkon Grydeland, Paulina Due-Tønnessen, Atle Bjørnerud, Cassandra Sampaio-Baptista, Jesper Andersson, Heidi Johansen-Berg, and Kristine B. Walhovd. 2018. Development of White Matter Microstructure in Relation to Verbal and Visuospatial Working Memory—A Longitudinal Study. PLoS ONE 13: e0195540.

- Levenstein, Daniel, Veronica A. Alvarez, Asohan Amarasingham, Habiba Azab, Richard C. Gerkin, Andrea Hasenstaub, and Ramakrishnan Iyer. 2020. On the Role of Theory and Modeling in Neuroscience. arXiv:2003.13825. Available online: http://arxiv.org/abs/2003.13825 (accessed on 11 June 2021).

- Levine, Stephen Z., and Stefan Leucht. 2016. Identifying a System of Predominant Negative Symptoms: Network Analysis of Three Randomized Clinical Trials. Schizophrenia Research 178: 17–22.

- Mareva, Silvana, and Joni Holmes. 2020. Network Models of Learning and Cognition in Typical and Atypical Learners. September. OSF Preprints.

- Marr, David, and Tomaso Poggio. 1976. From Understanding Computation to Understanding Neural Circuitry. May. Available online: https://dspace.mit.edu/handle/1721.1/5782 (accessed on 11 June 2021).

- Meunier, David, Renaud Lambiotte, and Edward T. Bullmore. 2010. Modular and Hierarchically Modular Organization of Brain Networks. Frontiers in Neuroscience 4: 200.

- Navas-Sánchez, Francisco J., Yasser Alemán-Gómez, Javier Sánchez-Gonzalez, Juan A. Guzmán-De-Villoria, Carolina Franco, Olalla Robles, Celso Arango, and Manuel Desco. 2014. White Matter Microstructure Correlates of Mathematical Giftedness and Intelligence Quotient. Human Brain Mapping 35: 2619–31.

- Newman, Mark E. J. 2006. Modularity and Community Structure in Networks. Proceedings of the National Academy of Sciences 103: 8577–82.

- Nooner, Kate Brody, Stanley J. Colcombe, Russell H. Tobe, Maarten Mennes, Melissa M. Benedict, Alexis L. Moreno, and Laura J. Panek. 2012. The NKI-Rockland Sample: A Model for Accelerating the Pace of Discovery Science in Psychiatry. Frontiers in Neuroscience 6: 152.

- Peng, Peng, Marcia Barnes, CuiCui Wang, Wei Wang, Shan Li, H. Lee Swanson, William Dardick, and Sha Tao. 2018. A Meta-Analysis on the Relation between Reading and Working Memory. Psychological Bulletin 144: 48–76.

- Poldrack, Russell A., Chris I. Baker, Joke Durnez, Krzysztof J. Gorgolewski, Paul M. Matthews, Marcus R. Munafò, Thomas E. Nichols, Jean-Baptiste Poline, Edward Vul, and Tal Yarkoni. 2017. Scanning the Horizon: Towards Transparent and Reproducible Neuroimaging Research. Nature Reviews Neuroscience 18: 115–26.

- Pons, Pascal, and Matthieu Latapy. 2005. Computing Communities in Large Networks Using Random Walks. In Computer and Information Sciences—ISCIS 2005. Edited by Pinar Yolum, Tunga Güngör, Fikret Gürgen and Can Özturan. Lecture Notes in Computer Science. Berlin/Heidelberg: Springer, pp. 284–93.

- R Core Team. 2020. R: A Language and Environment for Statistical Computing. Vienna: R Foundation for Statistical Computing. Available online: https://www.R-project.org (accessed on 11 June 2021).

- Robinaugh, Donald J., Ria H. A. Hoekstra, Emma R. Toner, and Denny Borsboom. 2019. The Network Approach to Psychopathology: A Review of the Literature 2008–2018 and an Agenda for Future Research. Psychological Medicine 50: 353–66.

- Rohrer, Julia M. 2018. Thinking Clearly About Correlations and Causation: Graphical Causal Models for Observational Data. Advances in Methods and Practices in Psychological Science 1: 27–42.

- Schmank, Christopher J., Sara Anne Goring, Kristof Kovacs, and Andrew R. A. Conway. 2019. Psychometric Network Analysis of the Hungarian WAIS. Journal of Intelligence 7: 21.

- Schmank, Christopher J., Sara Anne Goring, Kristof Kovacs, and Andrew R. A. Conway. 2021. Investigating the Structure of Intelligence Using Latent Variable and Psychometric Network Modeling: A Commentary and Reanalysis. Journal of Intelligence 9: 8.

- Schmiedek, Florian, Martin Lövdén, Timo von Oertzen, and Ulman Lindenberger. 2020. Within-Person Structures of Daily Cognitive Performance Differ from between-Person Structures of Cognitive Abilities. PeerJ 8: e9290.

- Seidlitz, Jakob, František Váša, Maxwell Shinn, Rafael Romero-Garcia, Kirstie J. Whitaker, Petra E. Vértes, Konrad Wagstyl, Paul Kirkpatrick Reardon, Liv Clasen, Siyuan Liu, et al. 2018. Morphometric Similarity Networks Detect Microscale Cortical Organization and Predict Inter-Individual Cognitive Variation. Neuron 97: 231–47.

- Simpson-Kent, Ivan L., Delia Fuhrmann, Joe Bathelt, Jascha Achterberg, Gesa Sophia Borgeest, and Rogier A. Kievit. 2020. Neurocognitive Reorganization between Crystallized Intelligence, Fluid Intelligence and White Matter Microstructure in Two Age-Heterogeneous Developmental Cohorts. Developmental Cognitive Neuroscience 41: 100743.

- Smith, Stephen M. 2002. Fast Robust Automated Brain Extraction. Human Brain Mapping 17: 143–55.

- Solé-Casals, Jordi, Josep M. Serra-Grabulosa, Rafael Romero-Garcia, Gemma Vilaseca, Ana Adan, Núria Vilaró, Núria Bargalló, and Edward T. Bullmore. 2019. Structural Brain Network of Gifted Children Has a More Integrated and Versatile Topology. Brain Structure and Function 224: 2373–83.

- Spearman, Charles. 1904. ‘General Intelligence’, Objectively Determined and Measured. The American Journal of Psychology 15: 201–92.

- Sporns, Olaf, and Richard F. Betzel. 2016. Modular Brain Networks. Annual Review of Psychology 67: 613–40.

- Sporns, Olaf, Christopher J. Honey, and Rolf Kötter. 2007. Identification and Classification of Hubs in Brain Networks. PLoS ONE 2: e1049.

- van Bork, Riet, Mijke Rhemtulla, Lourens J. Waldorp, Joost Kruis, Shirin Rezvanifar, and Denny Borsboom. 2019. Latent Variable Models and Networks: Statistical Equivalence and Testability. Multivariate Behavioral Research, 1–24.

- van den Heuvel, Martijn P., and Olaf Sporns. 2011. Rich-Club Organization of the Human Connectome. Journal of Neuroscience 31: 15775–86.

- van den Heuvel, Martijn P., and Olaf Sporns. 2013. Network Hubs in the Human Brain. Trends in Cognitive Sciences 17: 683–96.

- van der Maas, Han L. J., Conor V. Dolan, Raoul P. P. P. Grasman, Jelte M. Wicherts, Hilde M. Huizenga, and Maartje E. J. Raijmakers. 2006. A Dynamical Model of General Intelligence: The Positive Manifold of Intelligence by Mutualism. Psychological Review 113: 842–61.

- van der Maas, Han L. J., Kees-Jan Kan, Maarten Marsman, and Claire E. Stevenson. 2017. Network Models for Cognitive Development and Intelligence. Journal of Intelligence 5: 16.

- Wandell, Brian A. 2016. Clarifying Human White Matter. Annual Review of Neuroscience 39: 103–28.

- Wechsler, David. 2005. Wechsler Individual Achievement Test, 2nd ed. London: Pearson.

- Wechsler, David. 2008. Wechsler Adult Intelligence Scale, 4th ed. San Antonio: The Psychological Corporation.

- Wechsler, David. 2011. Wechsler Abbreviated Scales of Intelligence, 2nd ed. London: Pearson.

- Zhang, Shuai, and R. Malatesha Joshi. 2020. Longitudinal Relations between Verbal Working Memory and Reading in Students from Diverse Linguistic Backgrounds. Journal of Experimental Child Psychology 190: 104727.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).