1. Introduction

It is widely accepted that diagnostic decisions need to be based on strong assessment practices. There are several indicators suggesting that the diagnostic process can be challenging when a standardized, norm-referenced test is used to assess students from diverse backgrounds. One is the disproportionate number of students from diverse backgrounds qualifying for special education services under a variety of disability categories (

Harry and Klingner 2014). This problem has been described as being “among the most longstanding and intransigent issues in the field” (

Skiba et al. 2008, p. 264). Although multiple factors contribute to the diagnostic process, some researchers have focused their attention on a type of test (e.g., cognitive measures) used to inform their diagnostic decisions (e.g.,

Cormier et al. 2014;

Styck and Watkins 2013). However, even as researchers focus on one type of test, there is a myriad of potential contributing factors that they examine. For example, some researchers have investigated potential differences in the linguistic environments of students from diverse backgrounds compared to those of the majority, which are better represented in a test’s normative sample (

Ortiz et al. 2012). Regardless of their focus, one of the limitations of many of these studies is that the group of interest is defined as culturally and linguistically diverse students, which is very broad. More recently,

Ortiz (

2019) has argued for a narrower focus on English learners (EL), so recommendations for practice can be applied more appropriately by considering a single attribute, such as their language abilities.

English learner (EL) has frequently been used as the primary descriptor for the group of students who are likely going to struggle to demonstrate their true abilities on a standardized measure that is normed using an English-speaking population (

Ortiz 2019). EL specifically refers to students who are non-native English speakers with acquired proficiency in a second language (

Ortiz 2019). At times, the term EL is confused with bilingual. This confusion is problematic because the word bilingual does not necessarily imply that English is the second language acquired by the student. EL is more appropriate given that the students within this group can fall at various points along the English proficiency continuum (

Ortiz 2019). Furthermore, any given level of English proficiency does not negate the fact that these students are either continuing to develop their proficiency skills in English or are working towards doing so (

Ortiz 2019). To improve accuracy in diagnostic decisions, psychologists and other professionals conducting assessments must take a comprehensive approach to understand both the examinee and test characteristics that may influence test performance for EL students when they are administered standardized, norm-referenced tests.

1.1. Assessing the Abilities of EL Students

The valid assessment of EL students often poses a significant challenge for psychologists. Although a number of broad and specific professional standards exist, best practices are still unclear. For example, Standard 9.9 from the

Standards for Educational and Psychological Testing (

AERA et al. 2014) emphasizes the requirement of having a “sound rationale and empirical evidence, when possible, for concluding that […] the validity of interpretations based on the scores will not be compromised” (p. 144). The

Standards further stress that when a test is administered to an EL student, “the test user should investigate the validity of the score interpretations for test-takers with limited proficiency in the language of the test” (p. 145). The commentary associated with this specific standard highlights the responsibility of the psychologist in ensuring that language proficiency is minimized as a factor in the interpretation of the test scores.

If practicing psychologists were to consult the available evidence, they would need to weigh multiple variables and attempt to infer the extent to which a particular student’s assessment data is influenced by such variables. To date, some studies have suggested varying degrees of observed score attenuation for linguistically diverse students (e.g.,

Cormier et al. 2014;

Kranzler et al. 2010;

Tychanska 2009). Other studies have reported that the rate of differentiation between groups is no better than chance (

Styck and Watkins 2013). Regardless of the underlying causal variable, these studies only suggest that practitioners should be cautious when interpreting test results. Thus, the extant literature does not provide specific recommendations or strategies for generating or testing hypotheses that would help practitioners better understand which test or examinee characteristics influence observed test score performance. Nonetheless, the two key areas initially identified and described by

Flanagan et al. (

2007)—test characteristics and examinee characteristics—continue to be focal areas of interest, as researchers attempt to develop approaches to assessing EL students that are in line with best practices.

1.1.1. Test Characteristics

For decades, the content, administration, scoring, and interpretation of tests—essentially, tests’ psychometric properties—were highly criticized as producing biased scores for EL students (see

Reynolds 2000, for a comprehensive review). Years of research produced arguments (e.g.,

Helms 1992) and counterarguments (e.g.,

Brown et al. 1999;

Jensen 1980) with respect to this controversy. Although there was some evidence of bias in the measures used in previous decades, test developers appear to have taken note of the limitations of previous editions and, as a result, contemporary measures have significantly reduced the psychometric biases between groups (

Reynolds 2000).

Despite these advances within the test development process, researchers have continued to investigate potential issues. For example,

Cormier et al. (

2011) were the first to quantify the linguistic demand of the directions associated with the administration of a standardized measure. Their goal was to examine the relative influence of this variable across a measure’s individual tests (i.e., subtests); if there was considerable variability among a measure’s individual tests, then linguistic demand might be a meaningful variable for practitioners to consider when selecting a battery for assessing EL students. They accomplished this goal by using an approach suggested by Dr. John “Jack” Carroll, a prominent scholar who was not only a major contributor to the initial Cattell-Horn-Carroll (CHC) Theory of Intelligence, but who also had a passion for psycholinguistics. The methodology involved using text readability formulas to approximate the linguistic demand of the test directions associated with the 20 tests from the Woodcock-Johnson Tests of Cognitive Abilities, Third Edition (WJ III;

Woodcock et al. 2001). A subsequent study (

Cormier et al. 2016) investigated the linguistic demand of test directions across two editions of the same cognitive battery—the WJ III and the Woodcock-Johnson Tests of Cognitive Abilities, Fourth Edition (WJ IV COG;

Schrank et al. 2014a). Eventually,

Cormier et al. (

2018) identified several test outliers across commonly used cognitive test batteries by applying the methodology used by

Cormier et al. (

2011). Although this series of studies appears to have produced meaningful recommendations to practitioners with respect to test selection, the extent to which test directions have a significant influence on the actual performance of examinees remains unknown.
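To make the readability-based approach more concrete, the sketch below computes one widely used readability index, the Flesch-Kincaid Grade Level, for two sets of test directions. It is illustrative only: this passage does not state which readability formulas were applied in the studies above, the syllable counter is a crude vowel-group heuristic, and the sample directions are invented rather than taken from any published test.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count groups of consecutive vowels.

    This is an assumption made for illustration, not a dictionary lookup.
    """
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid Grade Level:
    0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59


# Hypothetical directions, invented for illustration only.
short_directions = "Look at the picture. Point to the one that is the same."
long_directions = (
    "I am going to say a series of words, and after I have finished, "
    "I would like you to repeat them in exactly the same order."
)

grade_short = flesch_kincaid_grade(short_directions)
grade_long = flesch_kincaid_grade(long_directions)
print(f"short: {grade_short:.1f}, long: {grade_long:.1f}")
```

Applied to each test's directions, an index of this kind yields one value per test, which can then be compared across the tests in a battery.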

1.1.2. Examinee Characteristics

Standardized measures are constructed based on the presumption that the students who are assessed using the measures possess a normative level of English proficiency (

Flanagan et al. 2007). Under this presumption, the average examinee would be able to understand test directions, produce verbal responses, or otherwise use their English language skills at a level that is consistent with their peers (

Flanagan et al. 2007). However, as noted by

Ortiz (

2019), there is likely to be a continuum of language levels within an EL population. Unfortunately, researchers have not focused on the level of English language proficiency as a way of differentiating performance on standardized measures. As a result, much of the research completed to date only provides information on general group-level trends that may or may not be observed by practitioners

after they have administered a battery of standardized measures. One of the potential reasons for the lack of consistency may be related to the population of interest being defined as culturally

and linguistically diverse, instead of focusing on a specific, measurable student characteristic, such as English language proficiency. As a result, practitioners may be reluctant to incorporate additional measures into their assessment batteries when testing EL students because: (a) there is no clear definition of the type of student these recommendations would apply to; and (b) the evidence regarding the validity of patterns of performance on standardized measures is, at best, still mixed (e.g.,

Styck and Watkins 2013). Thus, there appears to be a need to examine the influence of both test and examinee characteristics together to better understand the impact of these characteristics on test performance when standardized measures are used.

1.2. Current Study

A review of the literature has led to the conclusion that researchers have, perhaps, overlooked the potential of considering examinee characteristics as they attempt to produce empirically based recommendations for the assessment of EL students. Moreover, the data produced by

Cormier et al. (

2018) provide a quantification of the linguistic demands of many commonly used measures of cognitive abilities. Taken together, it is now possible to investigate test and examinee characteristics together, to understand their relative contributions to performance on standardized measures. Consequently, we sought to answer the following research question: What are the relative contributions of test characteristics (i.e., the linguistic demand of test directions) and examinee characteristics (i.e., oral English language skills) to performance on a standardized measure of cognitive abilities?

3. Results

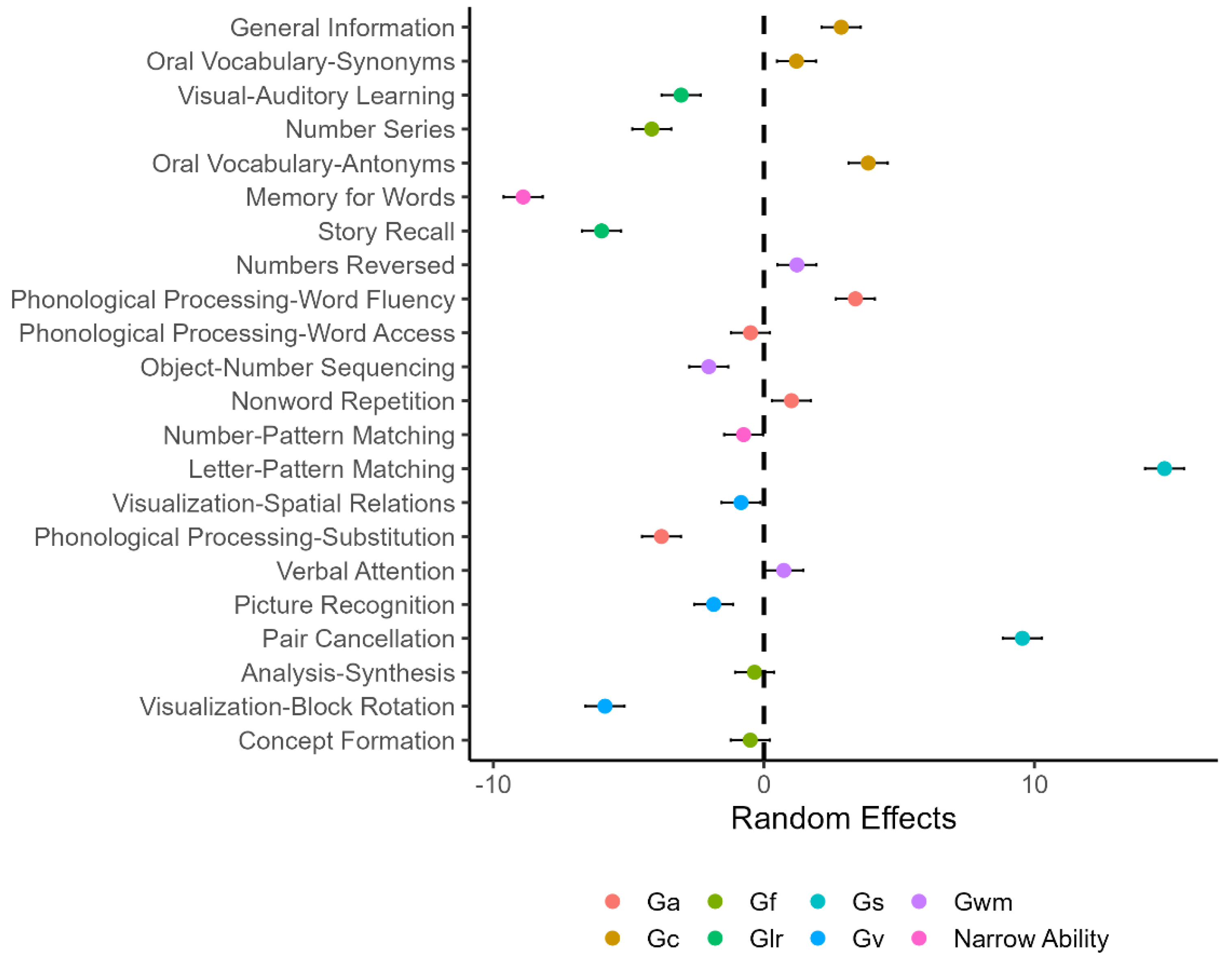

The results of the mixed-effects models are summarized in

Table 2 and

Table 3. The variance estimates for Model 1 indicated that there was considerable variation in the students’ scores at both levels 1 and 2. The random effect estimates in

Figure 1 essentially represent the differences between the average scores for the tests and the average score across all tests (i.e., vertical, dashed line). In addition, the WJ IV COG tests on the

y-axis are sorted in descending order by the linguistic demand of the test directions. Relative to the overall average score, the average score for Memory for Words was the lowest, whereas the average score for Letter-Pattern Matching was the highest.

Figure 1 shows that although the Gc tests (i.e., Comprehension-Knowledge) seem to have higher linguistic demand than the remaining tests, there is no systematic pattern in terms of the relationship between the average scores and the linguistic demand of their test directions.

Figure 1 also indicates that the WJ IV COG tests differ regarding students’ performance on the tests. Thus, test- and student-level predictors can be used to further explain the variation among the tests.
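As a rough formalization of Model 1, an intercept-only mixed-effects model with a random effect for tests can be written as below. This is a sketch under assumptions: the notation is introduced here for illustration, and the authors' exact specification is not given in this passage.

```latex
\[
y_{ij} = \gamma_{0} + u_{j} + e_{ij},
\qquad u_{j} \sim N(0, \tau^{2}),
\qquad e_{ij} \sim N(0, \sigma^{2})
\]
```

Here y_ij is the score of student i on test j, gamma_0 is the average score across all tests (the dashed line in Figure 1), u_j is the deviation of test j from that average (the random effect estimates plotted in Figure 1), and e_ij is the remaining student-level (level 1) variation.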

The first model with fixed-effect predictors was Model 2.

Table 2 shows that both the GIA scores and age were significant, positive predictors of student performance on individual cognitive tests. This result was anticipated given that all the abilities measured by the WJ IV COG are expected to continue to develop at an accelerated rate within the age range of the sample used for this study (see

McGrew et al. 2014, p. 137). Similarly, the GIA score is expected to have a positive relationship with individual cognitive tests because it is the composite of the theoretical constructs (i.e., first-order factors) underlying the individual tests in the WJ IV COG. The GIA score is interpreted as a robust measure of statistical or psychometric

g.

It should be noted that there are renewed debates regarding what intelligence test global composite scores (e.g., GIA, Wechsler Full-Scale IQ) represent. Briefly, the finding of a statistical or psychometric

g factor is one of the most robust findings over the last 100+ years in psychology (

Wasserman 2019). However, recent statistical and theoretical advances using network science methods (viz., psychometric network analysis; PNA) have suggested that psychometric

g is only a necessary mathematical convenience and a statistical abstraction. The reification of the

g factor in psychometrics is due, in large part, to the conflation of psychometric and theoretical

g and has contributed to the theory crises in intelligence research (

Fried 2020). Researchers using contemporary cognitive theories of intelligence (e.g., dynamic mutualism; process overlap theory; wired intelligence) have shown valid alternative non-latent trait common cause factor explanations of the positive manifold of intelligence tests. Furthermore, the previously mentioned period of 100+ years of research regarding general intelligence has also robustly demonstrated that there is yet no known biological or cognitive process theoretical basis of psychometric

g (

Barbey 2018;

Detterman et al. 2016;

Kovacs and Conway 2019;

van der Maas et al. 2019;

Protzko and Colom 2021). Therefore, in this paper, the GIA score is interpreted to reflect an emergent property that is a pragmatic statistical proxy for psychometric

g, and not a theoretical individual differences latent trait characteristic of people. This conceptualization of the GIA score is similar to the emergent property of an engine’s horsepower: an emergent property index that summarizes the efficiency of the complex interaction of engine components (i.e., interacting brain networks), in the absence of a “horsepower” component or factor (i.e., theoretical or psychological

g). Given that this debate is still unresolved after 100+ years of research, the GIA cluster was left in the analysis to acknowledge the possibility that theoretical or psychological

g may exist and to recognize the strong pragmatic predictive powers of such a general proxy. Leaving GIA out of the analysis would have reflected a strong “there is no

g” position (

McGrew et al. 2022); retaining it reflects the authors’ recognition that many intelligence scholars still maintain a belief in the existence of an elusive underlying biological brain-based common cause mechanism. More importantly, the inclusion of GIA recognizes the overwhelming evidence of the robust pragmatic predictive power of psychometric

g. As such, the inclusion of age and the GIA scores in the model allowed us to control for their effects when including additional variables. The inclusion of age and the GIA scores also explained a large amount of variance at the student level (level 1), reducing the level-1 unexplained variance from 573.39 to 265.72 (i.e., a reduction of approximately 54%).
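The size of this reduction follows directly from the two level-1 variance estimates reported above. As a worked check (the subscripts "before" and "after" are labels introduced here for illustration, not the authors' notation):

```latex
\[
\frac{\sigma^{2}_{\text{before}} - \sigma^{2}_{\text{after}}}{\sigma^{2}_{\text{before}}}
= \frac{573.39 - 265.72}{573.39}
= \frac{307.67}{573.39} \approx 0.537,
\qquad
\frac{\sigma^{2}_{\text{after}}}{\sigma^{2}_{\text{before}}}
= \frac{265.72}{573.39} \approx 0.463
\]
```

That is, roughly 46% of the original level-1 variance remains unexplained once age and the GIA score are included, and roughly 54% is accounted for.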

When the variable

test directions and its interaction with age were added to the model (Model 3), the variance estimate for level 2 (i.e., tests) remained relatively unchanged. This finding was mainly because

test directions was not a significant predictor of the variation in the subtest scores. An interesting finding was that despite the variable

test directions not being a significant predictor of cognitive test performance, its interaction with age was a statistically significant, negative predictor of cognitive test performance. This finding indicates that the impact of

test directions was larger for younger students who are expected to have lower language proficiency compared with older students. This trend—

test directions not being a significant predictor of individual cognitive test performance, but its interaction with age being a significant predictor of individual cognitive test performance—continued as the two additional variables were added to the model (see Models 4, 5, and 6 in

Table 2). Models 4 and 5 showed that both

oral expression and

listening comprehension were statistically significant, positive predictors of individual cognitive test performance, after controlling for the effects of age and general intelligence.
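One way to read the interaction term is through a simple-slope expression. The equation below is a generic sketch, not the authors' fitted model: the coefficient symbols are introduced here for illustration, the other predictors and the random effects are omitted, and how age was scaled or centered is not stated in this section.

```latex
\[
\widehat{\text{score}} = b_{0} + b_{1}\,\text{age} + b_{2}\,\text{directions}
  + b_{3}\,(\text{age} \times \text{directions})
\quad\Longrightarrow\quad
\frac{\partial\,\widehat{\text{score}}}{\partial\,\text{directions}} = b_{2} + b_{3}\,\text{age}
\]
```

With a negative b_3, the estimated effect of the linguistic demand of test directions is not constant but shifts downward as age increases. This is how the interaction can be statistically significant even when the coefficient for test directions alone (b_2) is not.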

Another important finding was that when both

oral expression and

listening comprehension were included in the model (Model 6), the two predictors remained statistically significant. The standardized beta coefficients produced from Models 4 and 5 suggest that oral expression and listening comprehension were stronger predictors of cognitive test performance than students’ age, but relatively weaker predictors compared with the GIA score (see

Table 3). When these four predictors (age, GIA, oral expression, and listening comprehension) were used together in the final model (Model 6), the GIA remained the strongest predictor, followed by listening comprehension (receptive language ability), oral expression (expressive language ability), and age, based on the standardized coefficients. The standardized beta coefficients also provided additional information regarding the negligible contribution of test directions within the models.
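For readers less familiar with standardized coefficients, the sketch below shows how they place predictors measured on different scales on a common footing. It uses ordinary least squares on simulated data as a simplified stand-in. The study itself used mixed-effects models, and the variable names, effect sizes, and data below are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500

# Simulated, hypothetical predictors on deliberately different scales.
age = rng.uniform(6, 19, n)      # years
gia = rng.normal(100, 15, n)     # standard-score metric
score = 2.0 * age + 0.8 * gia + rng.normal(0, 10, n)


def ols(X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Least-squares coefficients with an intercept column prepended."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef


def zscore(a: np.ndarray) -> np.ndarray:
    """Center each column and scale it to unit sample standard deviation."""
    return (a - a.mean(axis=0)) / a.std(axis=0, ddof=1)


X = np.column_stack([age, gia])
raw = ols(X, score)[1:]          # unstandardized slopes (intercept dropped)

# Route 1: rescale each raw slope by sd(x) / sd(y).
beta_rescaled = raw * X.std(axis=0, ddof=1) / score.std(ddof=1)

# Route 2: z-score every variable first, then refit.
beta_zscored = ols(zscore(X), zscore(score))[1:]

print("raw slopes (age, GIA):   ", raw.round(3))
print("standardized (age, GIA): ", beta_rescaled.round(3))
```

In this simulated example the raw slope for age is larger than the raw slope for GIA, yet the ordering reverses once both are standardized. This illustrates why the relative importance of predictors is usually judged from standardized rather than raw coefficients.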

4. Discussion

The results of this study represent an integration of multiple pieces of empirical research completed over several years and across numerous studies. When examined in isolation, examinee characteristics and test characteristics (e.g., test directions) both appeared to play meaningful roles in the administration and interpretation of cognitive tests for students. However, the integration of these potential influences into a single model has led to a surprising finding: the influence of examinee characteristics appears to eliminate the contribution of this test characteristic (i.e., the linguistic demand of test directions) to test performance. In addition, language ability, particularly receptive language ability, has a stronger influence than age on cognitive test performance. This last point highlights the importance of considering language abilities when assessing students’ cognitive abilities.

4.1. Examinee versus Test Characteristics

There appears to be increasing evidence that previous claims related to test characteristics (e.g.,

Cormier et al. 2011) no longer apply to contemporary tests of cognitive abilities. For example, the observed lack of a relationship between test directions and performance on the WJ IV in our study appears to draw a parallel with comments made by Cormier, Wang, and Kennedy, as they observed a “reduction in the relative verbosity of the test directions” when comparing the most recent version of the Wechsler Intelligence Scale for Children (WISC;

Wechsler 2014) to the previous version (

Wechsler 2003). These findings, in addition to the generation of clear guidelines for test development (e.g.,

AERA et al. 2014), together support the notion that large-scale, standardized measures include greater evidence of validity for the diverse population from which they are normed. This, in turn, likely contributes to increased fairness for the students who are assessed using these tests.

Despite the advances in test development, considerable challenges in assessing EL students remain for psychologists. One such challenge is assessing the cognitive abilities of the growing number of students who are considered ELs; limited English proficiency can lead to linguistically biased test results, which would lead to a misrepresentation of the examinee’s true cognitive abilities. To eliminate this potential source of bias, psychologists testing EL students could consider examinee characteristics

before administering a standardized measure of cognitive ability. This idea is not new. More than a decade ago,

Flanagan et al. (

2007) noted the critical need for psychologists to collect information regarding students’ level of English proficiency, and the level of English required for the student to be able to comprehend test directions, formulate and communicate responses, or otherwise use their English language abilities within the testing process. Nonetheless, the results of our study provide an

empirical basis in support of this broad recommendation.

4.2. Assessing English Language Abilities

The primary reason for assessing an examinee’s English language skills is to determine if the examinee has receptive and expressive language skills that are comparable to the measure’s normative sample. However, relying on one’s clinical judgment when assessing an examinee’s expressive and receptive language abilities is not likely to lead to positive outcomes. If practitioners only rely on their own judgment to determine the examinee’s receptive and expressive language abilities, this could lead to either an under- or over-estimation of these abilities. An under-estimation could occur if the examiner deviates from the standardized administration because they do not believe that the examinee has understood the directions. Thus, the linguistic demand of the actual, standardized test directions is potentially reduced. An over-estimation may occur if the examiner disregards the influence of the examinee’s language abilities during testing and the results are interpreted the same for all examinees, regardless of their language abilities. In either case, an examiner who relies on their own judgment introduces unnecessary error into the assessment process. Therefore, especially in the context of testing EL students, practitioners should collect data on the receptive and expressive language abilities of examinees, so they can more accurately and reliably consider the potential influence of these variables on test performance.

Testing both expressive and receptive language abilities is critical for several other reasons. First, the results of the current study suggest that both make unique contributions to cognitive test performance. Second, a student’s receptive and expressive language abilities are not always at the same level of proficiency. Moreover, although a student’s conversational level of English language proficiency could be perceived to be relatively consistent with that of their peers, their level of academic language proficiency may not be sufficient to fully benefit from classroom instruction or to understand test directions to the same extent as a native English speaker (

Cummins 2008).

Some practitioners may have concerns regarding the additional testing time required to administer, score, and interpret performance on language ability tests.

Flanagan et al. (

2013) addressed this concern well, as they explained:

Irrespective of whether test scores ultimately prove to have utility or not, practitioners must endeavor to ascertain the extent to which the validity of any obtained test scores may have been compromised prior to and before any interpretation is offered or any meaning assigned to them.

(p. 309)

Therefore, not only would this process be consistent with the aforementioned standards, but it would also lead to recommendations that are better informed and tailored to individual examinee characteristics.

4.3. Limitations and Future Research

This study was the first to integrate multiple sources of influence on test performance using a large, representative sample of the United States school-age population. However, the study was not without limitations, some of which may inform future efforts to continue with this line of inquiry. First, although the large, representative sample used in this study contained a wide range of language ability levels, it did not contain many EL students. Continuing to investigate the extent to which examinee characteristics matter, particularly within a well-defined sub-population of EL students, would better inform best practices with respect to the assessment of EL students.

The influence of examiner characteristics was noted as one of the three potential contributors to test performance. However, only examinee characteristics and test characteristics were included in the models produced for this study. Although examiner characteristics likely precede the psychoeducational assessment process with respect to assessing one’s ability to engage in culturally sensitive practices and mastering the various aspects of test administration, scoring, and interpretation, their potential influence on test performance for EL students could be the focus of future research.