1. Introduction

When designing, analyzing, optimizing, or solving direct or inverse problems in aerodynamics, flow fields are typically modeled using CFD solvers. However, CFD simulations are usually computationally expensive, requiring significant memory and long simulation times. These limitations restrict the ability to explore the design space and prevent interactive design. In recent years, the application of deep learning [1,2,3,4] and data-driven methods has attracted considerable interest due to their potential for faster flow prediction and reduced computational costs compared to traditional CFD methods [5,6], especially for inverse problems [7].

An approximation model for the Navier–Stokes equations in aerodynamics was proposed for real-time prediction of non-uniform steady laminar flows using convolutional neural networks (CNNs) [8]. Machine learning was combined with flux limiting for property-preserving subgrid-scale modeling within flux-limited finite-volume methods for the one-dimensional shallow-water equations. Numerical fluxes of a conservative target scheme were fitted to coarse-mesh averages of a monotone fine-grid discretization, with a neural network parametrizing the subgrid-scale components.

Numerical simulations were conducted for a laminar mixed-convection problem in a lid-driven square cavity containing two internal rectangular blocks, oriented vertically or horizontally [9]. CFD results were then used to train and test an artificial neural network (ANN) to predict new thermal behavior cases due to mixed convection, reducing computational time.

Physics-informed neural networks (PINNs), which integrate data-driven and physics-driven modules for numerical simulation modeling, including diffusion, flow, and phase-transition problems, were investigated in [10]. This study explored the underlying physical laws embedded in data and extended PINN applications to multi-physics coupled systems, addressing inverse problems for governing equations of phase, temperature, and flow fields, thereby enabling parameter inversion under multi-physical conditions.

The Large Language Model Meta AI (Llama) 3 was investigated for predicting fluid flows with varying dynamical complexities [11]. Results demonstrated that the Llama 3 model can be applied to fluid dynamics problems with minimal engineering adaptation and without fine-tuning pre-trained weights, achieving improved accuracy and robustness compared to conventional fully connected or recurrent neural networks with comparable capacity and training data.

It has been shown that strict error bounds exist when approximating the incompressible Navier–Stokes equations with PINNs [12], and that the underlying PDE residual can be made arbitrarily small using tanh neural networks with two hidden layers. The total error is estimated based on the training error, network size, and number of quadrature points.

A review in [13] explored the integration of CFD and artificial intelligence (AI) for modeling multiphase flows and thermochemical systems, which involve nonlinear interactions, complex geometries, and high computational costs. Despite this promise, AI-enhanced CFD still faces challenges, as many AI models rely heavily on empirical data rather than physics-based simulations, limiting generalizability and physical consistency.

CNNs have been applied to design linear parameter-varying approximations of incompressible Navier–Stokes equations [14]. Considering potentially low-dimensional parameterizations, the use of deep neural networks (DNNs) in a semi-discrete PDE context was discussed and compared to approaches based on proper orthogonal decomposition.

A neural-network-based method for obtaining analytical-function solutions of the Navier–Stokes equations was proposed in [15], consisting of two parts: the first satisfies the boundary conditions with no adjustable parameters, and the second ensures that the governing equations are satisfied within the domain while the boundary conditions remain intact. The second part involves a neural network whose parameters are determined to minimize the resulting approximation error.

Integrated computational heuristics were applied to heat transfer and thermal radiation problems in two-phase magnetohydrodynamic flows with nanoparticles, combining neural networks for accurate approximation with global optimization via genetic algorithms and local refinement using sequential quadratic programming [16].

A reduced-order model exploiting PINNs for solving inverse problems in Navier–Stokes equations was presented in [17]. In [18], a numerical method was developed coupling PDE solutions via machine-learning approaches: PDEs are solved in subdomains, while ANNs are trained to couple solutions across interfaces, yielding full-domain solutions.

A combination of machine learning and flux limiting for subgrid-scale modeling in flux-limited finite-volume methods for the 1D shallow-water equations was also proposed [19], fitting conservative scheme fluxes to coarse-mesh averages of monotone fine-grid discretizations.

Simulations of turbulent flows remain limited by the inability of heuristics and supervised learning to model near-wall dynamics [20]. Scientific multi-agent reinforcement learning (SciMARL) was introduced for discovering wall models for LES. In SciMARL, discretization points act as cooperating agents that learn LES closure models from limited data, generalizing to extreme Reynolds numbers and unseen geometries. This approach reduces computational cost by several orders of magnitude while reproducing key flow quantities.

A diffusion model utilizing high-fidelity training data was proposed in [21], enabling reconstruction of high-fidelity fields from low-fidelity or randomly sampled inputs. Physics-informed conditioning based on known PDEs further enhances accuracy when available.

Accurate approximation of the convective term in nonlinear advection equations is critical, as it strongly affects solution quality [22,23]. This is especially important for finite-volume discretizations on arbitrary unstructured meshes, where only second-order schemes are typically achievable, often highly dissipative due to cell irregularity [24,25].

Modern CAE software suites, including LOGOS-Aerohydro [26,27], employ finite-volume discretizations for advection equations. Therefore, developing neural-network-based convective term approximation schemes using one-dimensional problems as a testbed is a reasonable starting point.

Research on basic convective flow discretization schemes on unstructured meshes is presented in [22,24,25]. Dissipative properties of second-order central-difference and upwind schemes on hexahedral and tetrahedral meshes are investigated in [24], showing that central-difference schemes with artificial viscosity are required for stability. In [22], a blended central-upwind scheme is described, activating central differences where stable and necessary for resolving large-scale vortices, while first-order upwind is used near walls or in vortex-free regions.

A survey of high-order, low-dissipation schemes is presented in [28], including variants of NVD (Normalized Variable Diagram) schemes constrained by the convection boundedness criterion (CBC) [29]. Generalizations of NVD schemes for unstructured meshes are described in [30]. While CBC ensures monotonicity, it introduces additional diffusion [29].

Analysis shows that no existing approximation scheme guarantees accuracy across all conditions. Neural-network-based deep reinforcement learning schemes appear promising. Research applying deep machine learning to CFD has increased recently [31], focusing on turbulent-flow modeling [32,33,34], accuracy improvement on coarse grids [35,36], and overall simulation optimization [37,38,39].

Reinforcement learning (RL), including Deep Reinforcement Learning (DRL), offers a framework to optimize numerical schemes by maximizing long-term accuracy metrics over simulation trajectories [40,41]. This approach can, in principle, discover new dependencies between key dynamic parameters such as the Courant number, the transported scalar gradient, and the scalar itself, enabling acceleration of simulations by increasing the admissible time step.

The novelty of this work is the use of deep reinforcement learning for local flux reconstruction in a conservative finite-volume solver, rather than learning global time-stepping operators as in Bar-Sinai et al. (2019) [42] and Kochkov et al. (2021) [43]. This approach preserves discrete conservation, enables stable high-CFL operation, and integrates seamlessly with standard finite-volume methods, providing a pathway for extension to complex geometries. Our goal is to increase the time step while preserving or improving solution accuracy, thereby reducing the number of integration steps and computational time. We demonstrate the method on a scalar transport problem, comparing the neural-network scheme against classical first-order (UD) and second-order (LUD) finite-volume schemes as well as the analytical solution, across a range of Courant numbers. Results show that the RL-based scheme can improve accuracy by factors of 2–50, even at higher CFL numbers.

Regarding the “Warp-DG” work [44], we share a strong interest in hybrid methods. However, our goal is to advance the classical Finite Volume Method (FVM), which remains the cornerstone of industrial CAE simulation. The proposed approach enables the integration of intelligent approximations into existing solvers without altering their core architecture, ensuring practical applicability. Therefore, our work does not compete with the Discontinuous Galerkin (DG) method but rather offers an alternative pathway for enhancing the efficiency of FVM, particularly for long-duration simulations.

This study is intentionally restricted to a one-dimensional benchmark to establish a baseline feasibility framework. Extensions to multidimensional flows and more complex transport problems are reserved for future work, as they require additional model development and training.

2. Main Equations and Discretization Schemes for Convective Flow

The simplified form of the advection equation without the diffusion term, sources, or sinks is written as follows [45]:
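In commonly used notation, which is assumed here rather than taken from [45], this equation can be written for a transported scalar φ and velocity components u_i, with summation over the repeated index i implied:

```latex
\frac{\partial \varphi}{\partial t}
  + \frac{\partial \left( u_i \varphi \right)}{\partial x_i} = 0
```

The first term is the time derivative and the second is the convective term.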

Discretization of Equation (1) on an arbitrary unstructured mesh is most appropriately performed using the finite volume method [46], which is optimal for the numerical solution of computational fluid dynamics problems [26,27]. A simplified view of the interaction between mesh cells is shown in Figure 1.

Here, k is the set of faces of cell P consisting of the set of internal faces and the set of external faces . The neighboring cell across the internal face is denoted as M. is the area vector of face k, where i is the component index of the vector. The vector drawn from the center of cell P to the center of cell M across the face is denoted as , and the vector drawn from the center of P to the center of face as , where is the radius vector. The flow through the face is denoted by f and its direction is indicated by an arrow.

Time discretization of Equation (1) (the first term) can be performed using any available scheme, either explicit or implicit. For simplicity, the Euler scheme is used for time discretization below, and time indices are omitted where their values are evident. To perform the spatial discretization of Equation (1), we integrate it over the volume of cell P and convert the volume integral of the convective term (the second term in (1)) into an integral over the cell surface:

To approximate the convective term on an arbitrary unstructured mesh using the finite-volume discretization of the governing equations, it is written as follows:

where the flux factor is the volumetric flux through face k. The value of the transported quantity on the face is determined by the applied convective term discretization scheme, which will be discussed in the next section.

The numerical dissipation of the discretization is affected most strongly by the scheme used for the convective terms [24].

Choosing the optimal discretization scheme for the convective term is among the key challenges in modeling viscous incompressible fluid flows using unstructured meshes. The scheme should, on the one hand, have low dissipation, i.e., generate as little numerical diffusion as possible, and on the other hand, ensure stable computation. There are many discretization schemes applicable to arbitrary unstructured meshes [22,24,28,29,30,45,47]. Among them, several schemes can be shortlisted as having the “highest applicability rating” for solving practical problems, namely Upwind Differences (UD) [45], Linear Upwind Differences (LUD) [45], the QUICK scheme [24], Central Differences (CD) [22,28,47], the NVD (Normalized Variable Diagram) schemes [24,29,30], and hybrid schemes (combinations of the above with the upwind scheme to increase monotonicity).

These schemes differ in the way the value of the transported quantity is reconstructed onto the face, and therefore, their dissipative properties differ as well. The reconstruction algorithm for the presented schemes is approximately the same. A brief description of the most commonly used first- and second-order schemes, namely UD, LUD, and CD, will be given below.

The UD scheme is a first-order scheme that is stable on unstructured meshes but exhibits high numerical diffusion [45]:

Here, the face value is the scalar value interpolated onto the common face between cells P and M.

The LUD scheme is similar to the UD scheme but uses linear interpolation to reconstruct the value onto the face [45]:

Here, the two distances are those from the centers of cells P and M to the common face k. The LUD scheme also suffers from numerical diffusion, but to a much lesser extent than the UD scheme. Its main disadvantage is the occurrence of nonphysical oscillations in areas with sharp gradients.
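As an illustration, the UD and LUD reconstructions can be sketched in Python for a one-dimensional uniform mesh with flow in the positive x direction. This is a minimal sketch and not the implementation used in this work, which is written in C++. The function names are hypothetical, and the LUD variant shown is the common textbook form that extrapolates from the upwind cell with a backward-difference gradient, which may differ in detail from the formulation in [45].

```python
def face_value_ud(phi, i):
    """Upwind differences: the face between cells i and i+1 takes the
    value of the upwind cell i (velocity assumed positive)."""
    return phi[i]


def face_value_lud(phi, i, dx):
    """Linear upwind differences (textbook form, assumed here): extrapolate
    from the upwind cell i to the face using the upwind-side gradient."""
    grad_upwind = (phi[i] - phi[i - 1]) / dx   # backward difference in cell i
    return phi[i] + grad_upwind * 0.5 * dx     # the face lies dx/2 downstream


# Cell-centered values of a linear profile on a mesh with dx = 1
phi = [0.0, 1.0, 2.0, 3.0]
ud = face_value_ud(phi, 1)          # value of cell 1
lud = face_value_lud(phi, 1, 1.0)   # extrapolated value on the face
```

For this linear profile, LUD recovers the exact face value 1.5, whereas UD returns the cell value 1.0; this first-order error is the source of the stronger numerical diffusion of UD.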

The CD scheme is the least dissipative, but it is absolutely unstable [22,28,47], as its use in the presence of large gradients leads to oscillations in the solution field. This is especially true for finite-volume discretization of the Navier–Stokes equations on arbitrary unstructured meshes consisting of randomly shaped polyhedra, where designing a scheme with an accuracy above second order is essentially impossible.

Figure 2 shows an example of how three numerical schemes differ in their numerical diffusion.

Hybrid schemes are linear combinations of high- and low-order schemes [45], which leads to increased monotonicity of the solution. A hybrid scheme based on the CD scheme, for example, can be written as follows:

where the weighting coefficient is the blending factor. The scope of the present paper is limited to the schemes where the blending factor stays constant throughout the whole computational domain. Hybrid schemes partially mitigate the disadvantages of first- and second-order schemes but do not eliminate them in principle, and their effectiveness depends on manually selected coefficients and conditions of a specific task.
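A constant-factor blend of this kind can be sketched as follows. The sketch assumes that the blending factor (called `gamma` here) weights the central-difference value and that 1 − gamma weights the upwind value; the opposite convention is equally common. The function names and the sign convention for the flux are hypothetical.

```python
def face_value_cd(phi_P, phi_M, d_P, d_M):
    """Central differences: linear interpolation between the two cell
    centers, weighted by their distances d_P and d_M to the face."""
    return (phi_P * d_M + phi_M * d_P) / (d_P + d_M)


def face_value_hybrid(phi_P, phi_M, d_P, d_M, flux, gamma):
    """Blend of CD and first-order upwind with a constant factor gamma.
    flux >= 0 is taken to mean flow from cell P to cell M (assumption)."""
    upwind = phi_P if flux >= 0.0 else phi_M
    central = face_value_cd(phi_P, phi_M, d_P, d_M)
    return gamma * central + (1.0 - gamma) * upwind


pure_upwind = face_value_hybrid(1.0, 3.0, 0.5, 0.5, flux=1.0, gamma=0.0)
pure_central = face_value_hybrid(1.0, 3.0, 0.5, 0.5, flux=1.0, gamma=1.0)
blended = face_value_hybrid(1.0, 3.0, 0.5, 0.5, flux=1.0, gamma=0.5)
```

With gamma = 0 the sketch reduces to UD and with gamma = 1 to CD, so a single manually chosen constant trades monotonicity against dissipation.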

The results of calculations using the aforementioned schemes depend on both the dynamic parameters of transport and the geometric parameters of mesh cells, and the more complex the scheme, the higher the number of these parameters and their interdependencies. Taking this into account, one may attempt to construct an interpolation scheme based on a trained neural network that calculates the interpolated value from the relationship between the dynamic and geometric parameters of neighboring cells. The neural network can be trained to predict face values that bring the numerical solution close to the reference solution at each computational step. The reference solution can be either an analytical solution or a numerical solution obtained using a classical discretization scheme. To accelerate the training process, the neural network can be pre-trained to reproduce the face values of a solution obtained using a classical approximation scheme. Here, the LUD scheme is used for this purpose.

An important aspect of the proposed approach is the preservation of the discrete conservation property inherent to the finite-volume formulation. In the present method, the neural network is not used to directly predict fluxes independently for each control volume. Instead, the network reconstructs the value of the transported scalar at the cell face based on the local information from the neighboring cells. The convective flux through the face is then computed using the standard finite-volume expression, i.e., as the product of the face value and the volumetric flux through the face. For internal faces shared by two adjacent cells P and M, the same reconstructed face value is used in the flux computation for both cells. As a result, the flux contribution appears in the discrete balance equations of the two cells with opposite signs, which ensures that the amount of scalar leaving one control volume through a face exactly enters the neighboring control volume through the same face. Consequently, the local and global conservation properties of the finite-volume method are preserved.

From this perspective, the neural network replaces only the interpolation procedure used to estimate the scalar value at the face, while the conservative flux balance structure of the finite-volume discretization remains unchanged. This is conceptually similar to classical convective schemes such as UD or LUD, where the face value is reconstructed from neighboring cell values, but the conservative formulation of the numerical flux is retained.

To verify this property in practice, the total scalar quantity in the computational domain was monitored during the simulations. The results show that the total scalar mass remains constant up to numerical precision, confirming that the neural reconstruction does not violate the discrete conservation law.
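The conservation argument can be checked with a short sketch on a periodic one-dimensional mesh. The code below is an illustration under stated assumptions rather than the solver used in this work: `reconstruct` is a hypothetical stand-in for any face-value operator (a classical scheme or a trained network), and the nonlinear formula inside `toy_reconstruct` is arbitrary.

```python
import math


def advect_step(phi, u, dt, dx, reconstruct):
    """One explicit Euler finite-volume step on a periodic 1D mesh (u > 0).
    face[i] is the single value on the face between cell i and cell i+1;
    it is used by both cells, with opposite signs in their balances."""
    n = len(phi)
    face = [reconstruct(phi[i - 1], phi[i], phi[(i + 1) % n]) for i in range(n)]
    flux = [u * f for f in face]
    return [phi[i] - dt / dx * (flux[i] - flux[i - 1]) for i in range(n)]


def toy_reconstruct(phi_upup, phi_up, phi_down):
    """Arbitrary nonlinear face value; stands in for a neural network."""
    return phi_up + 0.1 * math.tanh(phi_down - phi_upup)


n, u, dx = 50, 1.0, 0.02
dt = 0.4 * dx / u                      # Courant number 0.4
phi = [1.0 + math.sin(2.0 * math.pi * (i + 0.5) * dx) for i in range(n)]
total_before = sum(phi) * dx
for _ in range(100):
    phi = advect_step(phi, u, dt, dx, toy_reconstruct)
total_after = sum(phi) * dx
drift = abs(total_after - total_before)   # expected at round-off level
```

Because every face flux is subtracted from one cell and added to its neighbor, the sum telescopes and the total is preserved regardless of how accurate the reconstruction is; conservation and accuracy are separate properties.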

It should be emphasized that the term high-CFL in the present work refers to the admissible Courant number range within explicit finite-volume discretizations. The proposed neural network modifies only the spatial reconstruction of the convective flux, while the time integration scheme remains explicit. Consequently, the goal of the method is not to remove CFL limitations entirely, as is often achieved with implicit time-integration schemes, but rather to extend the practical CFL range at which explicit convective discretizations maintain acceptable accuracy and stability.

Since the neural network acts as a face-value reconstruction operator, similar to classical schemes such as UD or LUD, it is in principle independent of the choice of time-integration method. Therefore, the proposed reconstruction could potentially be incorporated into both explicit and implicit finite-volume solvers, although the present study focuses on the explicit case as a proof-of-concept.

3. Constructing the Convective Scheme Using Deep Reinforcement Learning

According to (4) and (5), the following data are involved in interpolation using a neural network in convective term approximation schemes: the scalar values in neighboring cells P and M, as well as the gradient of the scalar quantity depending on the flow direction.

In addition to the parameters mentioned above, the accuracy of convective term discretization depends on the Courant number. Therefore, Equations (4) and (5) can be refined by introducing additional parameters, namely the time step Δt and the Courant number CFL in cells P and M, respectively. Although Δt and CFL are mathematically related through the local flow velocity and cell size, in the present study both parameters were provided separately as inputs to the neural network. This choice was made as an initial experimental simplification to allow the network to independently learn the sensitivity of the convective term to both the absolute time step and the non-dimensional Courant number. In other words, this setup enables the network to capture potential nonlinear interactions between the step size and CFL that may affect stability and accuracy.

We acknowledge that a more rigorous formulation could use only one of these parameters, computing the other internally; however, the current approach served as a practical proof-of-concept and facilitated faster convergence during early-stage training. Future work will refine the input parameterization to reduce redundancy and improve theoretical consistency while preserving the observed generalization and performance gains.

Thus, based on the defined input data, the first layer of the neural network has six input neurons. The network predicts a single value, the scalar value on the face, so the output layer contains only one neuron (see Figure 3).

According to theory [48], a network with one hidden layer, where neuron values are computed as values of a continuously differentiable function, can approximate any continuous dependency. In addition, a deep architecture is not always better than a single-layer one because complicating the model can lead to overfitting, deterioration of generalizing ability, and excessive computational costs, whereas for a simple dependency, a single-layer network is often enough for a more stable and accurate result. Therefore, to simplify the structure, we use a neural network with one hidden layer. The number of neurons in the hidden layer should be greater than the number of input neurons and several times smaller than the number of training dataset examples [48] (the training dataset contains about 2250 examples).

During numerical experiments, networks with different numbers of neurons were tested, starting from 10 neurons up to 25, with a step of 5 neurons. It was shown that networks with 20 or more neurons have acceptable training accuracy. Therefore, for further numerical experiments, we will use a network consisting of 20 neurons.

Thus, the neural network used is a perceptron (6; 20; 1) with one hidden layer [49]: 6 neurons in the input layer, 20 neurons in the hidden layer, and 1 neuron in the output layer.

It is believed that a neural network using the activation function sin(x) is better at extrapolating data beyond the training range [50,51].
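For concreteness, the forward pass of a (6; 20; 1) perceptron with a sine activation can be sketched in plain Python. This is an illustrative sketch, not the C++ class used in this work: the class name, the weight initialization, the use of the sine in the hidden layer only, the linear output neuron, and the order of the six inputs are all assumptions.

```python
import math
import random


class FaceValueMLP:
    """Perceptron with 6 inputs, 20 sine-activated hidden neurons, 1 output."""

    def __init__(self, n_in=6, n_hidden=20, seed=0):
        rng = random.Random(seed)
        # Small random weights; the initialization scheme is an assumption
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)]
                   for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b2 = 0.0

    def n_parameters(self):
        """Total number of weights and biases."""
        return (sum(len(row) for row in self.w1) + len(self.b1)
                + len(self.w2) + 1)

    def forward(self, x):
        """Hidden layer: sin(W1 x + b1); output layer: linear."""
        hidden = [math.sin(sum(w * xi for w, xi in zip(row, x)) + b)
                  for row, b in zip(self.w1, self.b1)]
        return sum(w * h for w, h in zip(self.w2, hidden)) + self.b2


net = FaceValueMLP()
# Assumed input order: phi_P, phi_M, gradient, time step, CFL_P, CFL_M
phi_face = net.forward([1.0, 0.8, -0.2, 0.01, 0.5, 0.5])
```

With these sizes the network has 161 trainable parameters, which is small compared with the roughly 2250 training examples.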

A standard Multilayer Perceptron (MLP) requires supervised learning, which necessitates a pre-existing dataset of “input parameters → target flux values.” However, in the problem we address, such reference data are a priori unavailable, as there is no single “correct” value for the convective flux at an arbitrary boundary face. The objective of our work is not to approximate a pre-defined function but to find an optimal discretization scheme that ensures the physical consistency of the solution throughout the entire simulation. This constitutes a policy optimization problem, which is naturally addressed with reinforcement learning methods. These methods allow for the maximization of a long-term reward, such as numerical stability and accuracy at large time steps. We employ reinforcement learning to train the neural network, leveraging the fact that the values of the passive scalar in the grid cells are known, which enables the formulation of a scoring function.

Reinforcement learning is performed using the Deep Deterministic Policy Gradient (DDPG) algorithm [49,52] and, separately, the genetic algorithm (GA) [53]. Both methods train the same neural network model, which acts as an individual in the genetic algorithm and as the actor in the DDPG algorithm.

The training incorporates simulation of a series of problems with different characteristic CFL numbers.

To evaluate the suitability of the model, the following function is introduced, which is suitable for both implemented learning algorithms:

Here, t is the simulation time, k is the index of the internal face, n is the number of internal faces, T is the final simulation time, and Δt is the time step.

To form local rewards, pointwise convergence of the simulated scalar values in the cells with the corresponding reference values is considered. The reward should increase as the absolute difference grows smaller, so this difference enters with a negative sign.

The face for which the neural network determines the face value is shared by two cells, P and M, so the average difference from these two cells is calculated as:

where the two terms are the values in cells P and M, respectively.

Thus, the reward function for internal face k at time t is calculated as follows:

As the neural network is trained, the value of the reward function should increase and tend toward 0, and the numerical solution obtained with the neural scheme will approach the reference solution.
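A minimal sketch of this reward is given below. It assumes that the per-cell difference is the absolute deviation from the reference value and that the overall score is a plain sum of local rewards over internal faces and time steps; any normalization or weighting used in the fitness function is omitted, and all names are hypothetical.

```python
def local_reward(phi_P, phi_M, ref_P, ref_M):
    """Reward for one internal face: minus the mean absolute deviation of
    the two adjacent cells from their reference values (at most 0)."""
    return -0.5 * (abs(phi_P - ref_P) + abs(phi_M - ref_M))


def total_reward(numerical, reference):
    """Sum of local rewards over all time steps and internal faces.
    numerical[t][i] and reference[t][i] are cell values at step t; face k
    is taken to lie between cells k and k+1 (1D, non-periodic)."""
    total = 0.0
    for phi, ref in zip(numerical, reference):
        for k in range(len(phi) - 1):
            total += local_reward(phi[k], phi[k + 1], ref[k], ref[k + 1])
    return total


exact = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]    # reference values at two steps
approx = [[0.0, 1.0, 0.0], [0.0, 0.5, 0.5]]   # smeared numerical values
score_perfect = total_reward(exact, exact)
score_approx = total_reward(approx, exact)
```

In this toy case the perfect solution scores 0 and the smeared one scores −0.75, so a less negative score indicates a solution closer to the reference.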

The neural network class and the training methods were implemented by the authors in C++.

Problem Description

Let us consider the advection problem for a scalar quantity transported by a constant velocity field along a one-dimensional channel of given length. The initial distribution of the scalar in the channel is described by a prescribed function (see Figure 4).

For advection Equation (1) with the passive scalar quantity defined as above, there exists an analytical solution:

The solution is parameterized by the wave number, the angular frequency, and the amplitude. The computational domain is divided into cells of a single fixed size.

Let us introduce the variable , after which the scalar distribution shifts by one cell along the direction of velocity , i.e., .

The training of the artificial neural network, which is used as the convective term approximation scheme for solving Equation (1), was carried out by simulating the problem up until the time . Training was also performed at different Courant numbers (i.e., for different time steps of the problem) ranging from 0.01 to 0.5 with a step of 0.01.
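The setup can be illustrated with a short sketch. It assumes a sinusoidal traveling wave, which is consistent with the amplitude, wave number, and angular frequency named above but is not confirmed by the text. The velocity, cell size, and wave parameters are placeholder values, `tau` is taken to be the cell-crossing time inferred from its description, and all names are hypothetical.

```python
import math

U = 1.0                      # constant velocity (placeholder value)
DX = 0.01                    # cell size (placeholder value)
AMPLITUDE = 1.0              # placeholder value
WAVE_NUMBER = 2.0 * math.pi  # placeholder value
OMEGA = WAVE_NUMBER * U      # the profile is carried at speed U unchanged


def exact_solution(x, t):
    """Traveling-wave solution of the pure advection equation (assumed form)."""
    return AMPLITUDE * math.sin(WAVE_NUMBER * x - OMEGA * t)


tau = DX / U                 # time in which the profile shifts by one cell

# Training Courant numbers 0.01, 0.02, ..., 0.50 and the matching time steps
cfl_values = [round(0.01 * j, 2) for j in range(1, 51)]
time_steps = [cfl * DX / U for cfl in cfl_values]

# After a time tau, the value seen at x has moved exactly one cell downstream
shift_error = abs(exact_solution(0.3 + DX, 0.2 + tau) - exact_solution(0.3, 0.2))
```

The range from 0.01 to 0.5 with a step of 0.01 gives 50 Courant numbers, so each trained network is evaluated on 50 simulations that differ only in the time step.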

The following sections present a comparative study of the numerical solution of the problem using the neural network as the approximation scheme, in comparison with the first-order accuracy scheme UD and the second-order scheme LUD. The analysis is carried out for various parameters falling outside the range of the training data.

Notably, the parameter indicating the scalar transport time, at which the spatial distribution is evaluated, exceeds the value used during the neural network training and equals seconds. This allows us to assess the stability and adaptability of the proposed approach when solving problems with extended temporal characteristics.

4. Numerical Experiments

This section presents the results of a comprehensive study on the effectiveness of “neural” schemes for approximating the convective term in the scalar transport equation. The focus is on comparing different approaches to training artificial neural networks: the genetic algorithm and the deep reinforcement learning algorithm DDPG, as well as evaluating the accuracy of the obtained solutions.

A series of numerical experiments is conducted to assess the potential of the neural network approach. A key component is the verification against an analytical solution, enabling a quantitative evaluation of the “neural” scheme’s accuracy against the first-order UD and second-order LUD schemes. The experiments further analyze the generalization ability and robustness of the trained networks to input parameter variations. The obtained results provide a foundation for conclusions on the scheme’s prospects and help identify directions for further research.

4.1. Genetic Algorithm

The parameters of the algorithm are as follows:

Each generation consists of a population of 20 individuals;

The selection operator chooses the top 5 individuals of the current generation and also retains the top 5 individuals of all time;

Crossover occurs between the top 5 individuals of the current generation and the top 5 of all time;

Mutation is applied to every weight of all individuals, where each weight is modified by adding a random number drawn from a normal distribution. The standard deviation of the distribution decreases by a factor of 1.01 each time the fitness of a new individual differs by 10% or more from the best fitness of all time and increases by a factor of 1.01 otherwise.
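The mutation rule in the last item can be sketched as follows. The sketch assumes that the 10% threshold is measured relative to the magnitude of the best fitness of all time; the function names and default arguments are hypothetical.

```python
import random


def mutate(weights, sigma, rng):
    """Add zero-mean Gaussian noise with standard deviation sigma to every weight."""
    return [w + rng.gauss(0.0, sigma) for w in weights]


def adapt_sigma(sigma, fitness, best_fitness, factor=1.01, threshold=0.10):
    """Shrink sigma when the new individual's fitness differs from the
    all-time best by 10% or more; grow it otherwise."""
    if abs(fitness - best_fitness) >= threshold * abs(best_fitness):
        return sigma / factor
    return sigma * factor


rng = random.Random(42)
child = mutate([0.1, -0.2, 0.3], sigma=0.05, rng=rng)
sigma_far = adapt_sigma(0.05, fitness=-1200.0, best_fitness=-1000.0)   # 20% away
sigma_near = adapt_sigma(0.05, fitness=-1050.0, best_fitness=-1000.0)  # 5% away
```

Under this rule, offspring that land far from the best known fitness narrow the search, while offspring close to it widen the search again.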

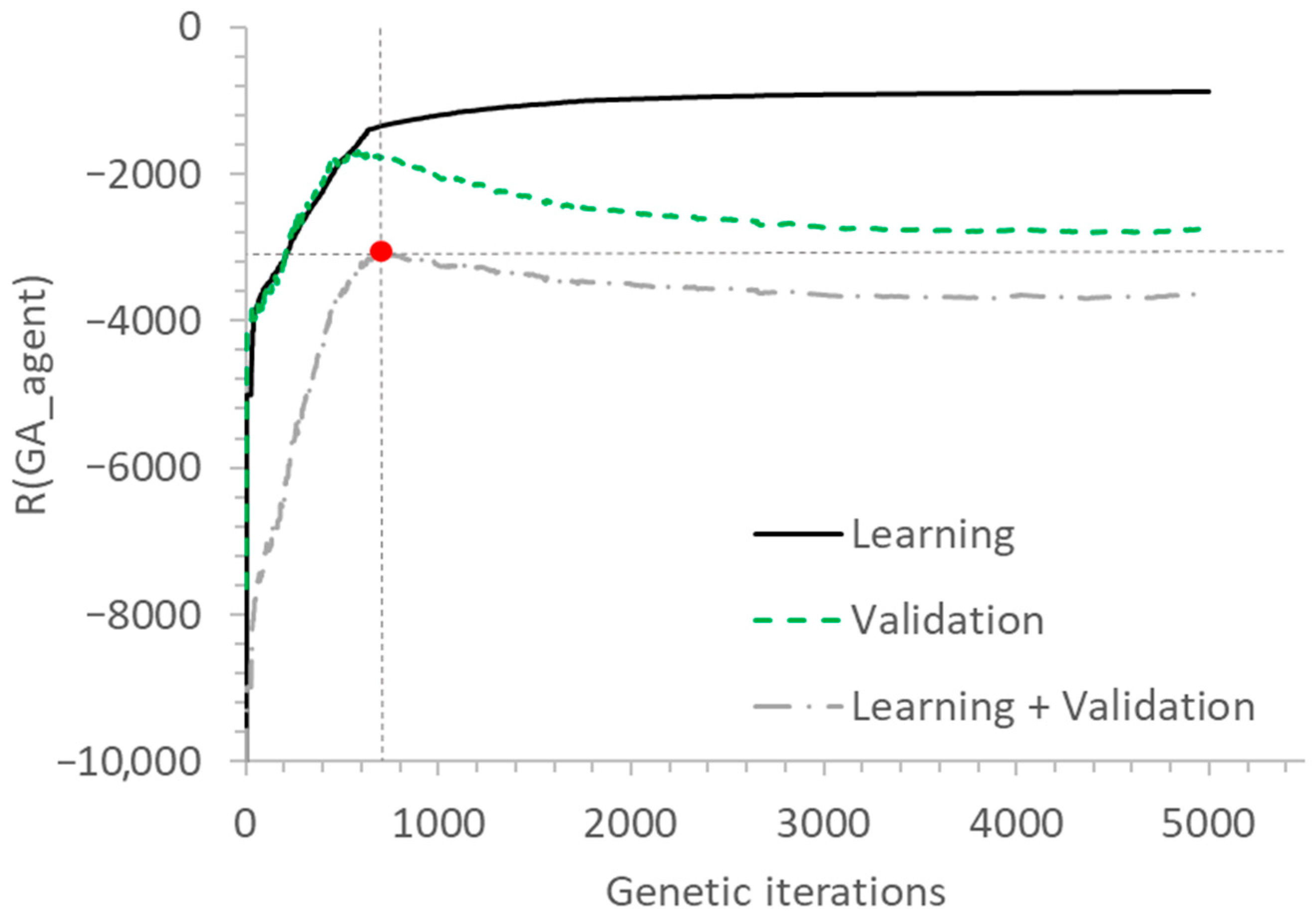

The training process of the neural network continues until a specified accuracy is reached or the maximum number of generations is exceeded. A characteristic feature is that as the target value of the fitness function (7), R(GA_agent) = 0, is approached, the magnitude of changes in the neural network parameters decreases, which slows down the training process. In the context of the considered problem, where the artificial neural network is used as a convective term approximation scheme for solving Equation (1), training was stopped after 5000 generations, since the value of the loss function during further training remained within ±1% of its average value taken over the last 10 generations (see Figure 5). This stopping criterion is based on achieving an acceptable balance between solution accuracy and computational cost.

The Learning plot in Figure 5 shows the fitness value R(GA_agent) as a function of the current step of the genetic algorithm; at each step, the plotted value is the fitness of the best neural network found up to that step. The fitness function (7) of the best agent reached the value of R(GA_agent) = −878.

Similarly, the Validation plot shows the fitness value of the best neural network from the Learning plot obtained by solving problems with Courant number values CFL = 0.5, 0.6, 0.7, 0.8, 0.9. The values above 0.5 were not used in the training process; CFL = 0.5 was included because it is the largest value and the upper boundary of the training dataset. This plot reflects the degree of overfitting of the neural network [48,49], i.e., the phenomenon of the trained neural network losing its generalization ability [48,54] and being unable to perform approximation on the data outside the training set.

The third plot, Learning + Validation, obtained by aggregating the first two, is also presented. This combined plot was used to select the best-performing neural network.

The fitness function (7) of the selected agent reached a value of R(GA_agent) = −1326 on the Learning plot (according to Equation (8)) at the 739th step of genetic algorithm training. Considering that each generation includes 20 agents (neural networks) and each agent solves the problem 50 times per generation, the total number of problem solutions is 739 × 20 × 50 = 739,000.

For comparison, if we evaluate the solution obtained using the LUD scheme, the fitness function for this approximation scheme is approximately R(LUD_agent) = −11,000. Neural network training slows down as the fitness function (7) increases, while the number of problem solutions grows.

The time required to solve the problem using classical convective term approximation schemes in the numerical experiment coincides with the time required to solve the same problem using the neural network-based approximation scheme.

However, training the neural network takes about 7 h on a single Intel Core i7@2.50 GHz CPU (Intel Corporation, Santa Clara, CA, USA). This significant time cost is due to the nature of the genetic algorithm, in which the training time of the neural network is orders of magnitude greater than the time required to solve the original problem, which turns out to be the primary shortcoming of the method.

The neural scheme was implemented in C++ (in-house code) and compiled using Microsoft Visual Studio 2022. The choice of a CPU-based implementation was deliberate and motivated by the need to ensure compatibility with widely used industrial CFD software packages, such as ANSYS Fluent, LOGOS-Aerohydro, STAR-CCM+, and OpenFOAM, which predominantly rely on CPU-based high-performance computing architectures. Although GPU acceleration has recently been introduced in some of these tools, CPU-based implementations remain the de facto standard in industrial and legacy CFD workflows. Since our goal is the eventual integration of this method into existing production-level solvers that lack native GPU support, it was methodologically essential to develop and validate our approach within the same computational environment. This ensures that the performance gains we demonstrate are directly relevant and transferable to real-world engineering applications.

4.2. DDPG Training

As a deterministic policy gradient algorithm, DDPG learns a policy that maps states to specific, precise actions from a continuous space. This contrasts with stochastic policy methods (e.g., PPO), which learn a probability distribution over actions. DDPG combines ideas from value-based (DQL [49,55]) and policy-based (DPG [49,56]) methods, belonging to the actor-critic class [49].

The agent’s action is selected by an artificial neural network called the actor. The actor follows a policy-based approach and learns to act by directly estimating the optimal policy and maximizing the reward through gradient ascent.

On the other hand, the chosen action can be evaluated by a second neural network—the critic. The critic uses a value-based approach and learns to assess the value of different state-action pairs.

As a result of combining the actor and critic, we use two separate neural networks. The role of the actor network is to determine the optimal action in a given state. The critic, by evaluating the expected return, assesses the action generated by the actor.

The algorithm works as follows:

1. The actor performs actions (the neural network predicts scalar values on internal faces);

2. The problem is solved using the selected actions (step 1 is repeated at each time step of the simulation);

3. Based on the complete solution of the problem, the actor’s actions are evaluated (the actor’s actions are rewarded, and evaluation functions Q(a,s) are derived based on the rewards for each action a selected in state s at the first time step; the overall solution evaluation is performed as well);

4. Based on the evaluations obtained, the critic network is trained via supervised learning, performing one step of gradient descent [48];

5. The gradient of the critic’s evaluation function with respect to the action is calculated. This gradient is used to train the actor network by performing one step of gradient ascent (in the direction that increases the evaluation function of the action);

6. The DDPG algorithm cycle (steps 1–5) is repeated until the optimal solution is obtained.

The content of Figure 6 is as follows: the environment state s (a vector of input parameters) is fed into both the actor and critic networks. The actor network outputs an action a, which, together with s, is input into the critic network. The critic then outputs a value Q(a,s), evaluating the choice of action a for the environment state s.

The idea of DDPG training is that the critic learns to predict the evaluation of different actions for different environment states, i.e., it turns into a continuous function with an extreme value corresponding to the highest evaluation for each state. Since Q(a,s) depends on a, gradient ascent can be used to find such actions a that maximize Q(a,s). Then, knowing the correct actions a, it is easy to train the actor network to output these same actions a.
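The gradient-ascent idea in this paragraph can be illustrated with a toy critic. This is a minimal sketch, not the network used in the paper: the closed-form `q_value` (peaking at a = 0.5·s), the scalar state, the finite-difference gradient, and the learning rate are all assumptions chosen to keep the example short.

```python
def q_value(a, s):
    """Toy critic (assumption): for every state s it peaks at a = 0.5 * s."""
    return -(a - 0.5 * s) ** 2


def dq_da(a, s, eps=1e-6):
    """Central finite-difference estimate of dQ/da at (a, s)."""
    return (q_value(a + eps, s) - q_value(a - eps, s)) / (2.0 * eps)


def improve_action(a, s, lr=0.1, steps=200):
    """Move the action along +dQ/da, i.e. plain gradient ascent on Q."""
    for _ in range(steps):
        a += lr * dq_da(a, s)
    return a


state = 2.0
initial_action = 0.0
best_action = improve_action(initial_action, state)
print(best_action, q_value(best_action, state))
```

In DDPG the same gradient is not applied to the action directly but is propagated through the actor's weights, so that the actor learns to output the improved action for that state.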

The direct training of the critic is performed by using the fitness functions R(agent) defined earlier as reference evaluations Q*(a,s), which we want the critic to learn. Although the same evaluation Q*(a,s) = R(agent) is used for different actions and states, this does not affect the training process, since all these actions and states correspond to the same configuration of the actor network.
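One supervised critic update of this kind might look as follows. The sketch assumes a linear critic with a scalar state (the paper uses a neural network with 6 inputs per face), a reference value rescaled by 1/1000 for numerical convenience, and hypothetical sample values; none of these choices come from the paper.

```python
def critic(w, s, a):
    """Linear toy critic Q(a, s) = w0*s + w1*a + w2 (assumption)."""
    return w[0] * s + w[1] * a + w[2]


def mse(w, samples, target):
    """Mean squared error between critic outputs and the shared target."""
    return sum((critic(w, s, a) - target) ** 2 for s, a in samples) / len(samples)


def critic_gd_step(w, samples, target, lr=0.05):
    """One gradient-descent step on the mean squared error."""
    n = len(samples)
    grad = [0.0, 0.0, 0.0]
    for s, a in samples:
        err = critic(w, s, a) - target
        grad[0] += 2.0 * err * s / n
        grad[1] += 2.0 * err * a / n
        grad[2] += 2.0 * err / n
    return [wi - lr * gi for wi, gi in zip(w, grad)]


# Hypothetical (state, action) pairs collected with one actor configuration.
episode = [(0.2, 0.21), (0.5, 0.48), (0.9, 0.93)]
q_star = -1.326  # the same reference Q* = R(agent) for every pair, rescaled by 1/1000
w_before = [0.0, 0.0, 0.0]
w_after = critic_gd_step(w_before, episode, q_star)
print(mse(w_before, episode, q_star), mse(w_after, episode, q_star))
```

Every pair in the episode shares the same target, mirroring the statement that a single R(agent) labels all actions and states produced by one actor configuration.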

Based on the information available regarding the face (6 input neurons), which represents the environment state s, the actor network determines the scalar value on that face. The same information, along with the predicted value, is used by the critic network to produce an evaluation of that prediction. Thus, the critic captures how the evaluation depends on the actor network configuration (its weights), while also acting as the criterion for a correct problem solution.

This method is highly sensitive to the fine-tuning of algorithm parameters. The effectiveness of training largely depends on how well the critic approximates the dependence of the evaluation function on the action, since the gradient used to guide the actor toward the optimal strategy is derived from this dependence. The exploration noise parameter also contributes significantly: it is a relatively small random value added to the actor’s prediction (not exceeding 10% of the prediction value).
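A bounded exploration noise of this kind can be sketched as below. The text only states that the noise stays within 10% of the prediction; the uniform distribution and the function name `add_exploration_noise` are assumptions made for illustration.

```python
import random


def add_exploration_noise(prediction, max_fraction=0.10, rng=random):
    """Perturb the actor's prediction by at most max_fraction of its magnitude.

    Assumption: the noise is drawn uniformly; the paper only bounds its size.
    """
    noise = rng.uniform(-max_fraction, max_fraction) * abs(prediction)
    return prediction + noise


rng = random.Random(0)  # seeded generator for a reproducible demonstration
face_value = 0.8        # hypothetical actor prediction on one face
noisy_value = add_exploration_noise(face_value, rng=rng)
print(face_value, noisy_value)
```

Because the noise scales with the prediction, a zero prediction receives no perturbation, which may or may not be desirable in practice.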

It was not possible to achieve optimal tuning of the algorithm parameters during the timeframe of the present paper, which led to slower training of the actor network. As a result, the actor failed to reach the optimal evaluation R(GA_agent) = −1326 achieved by the genetic algorithm.

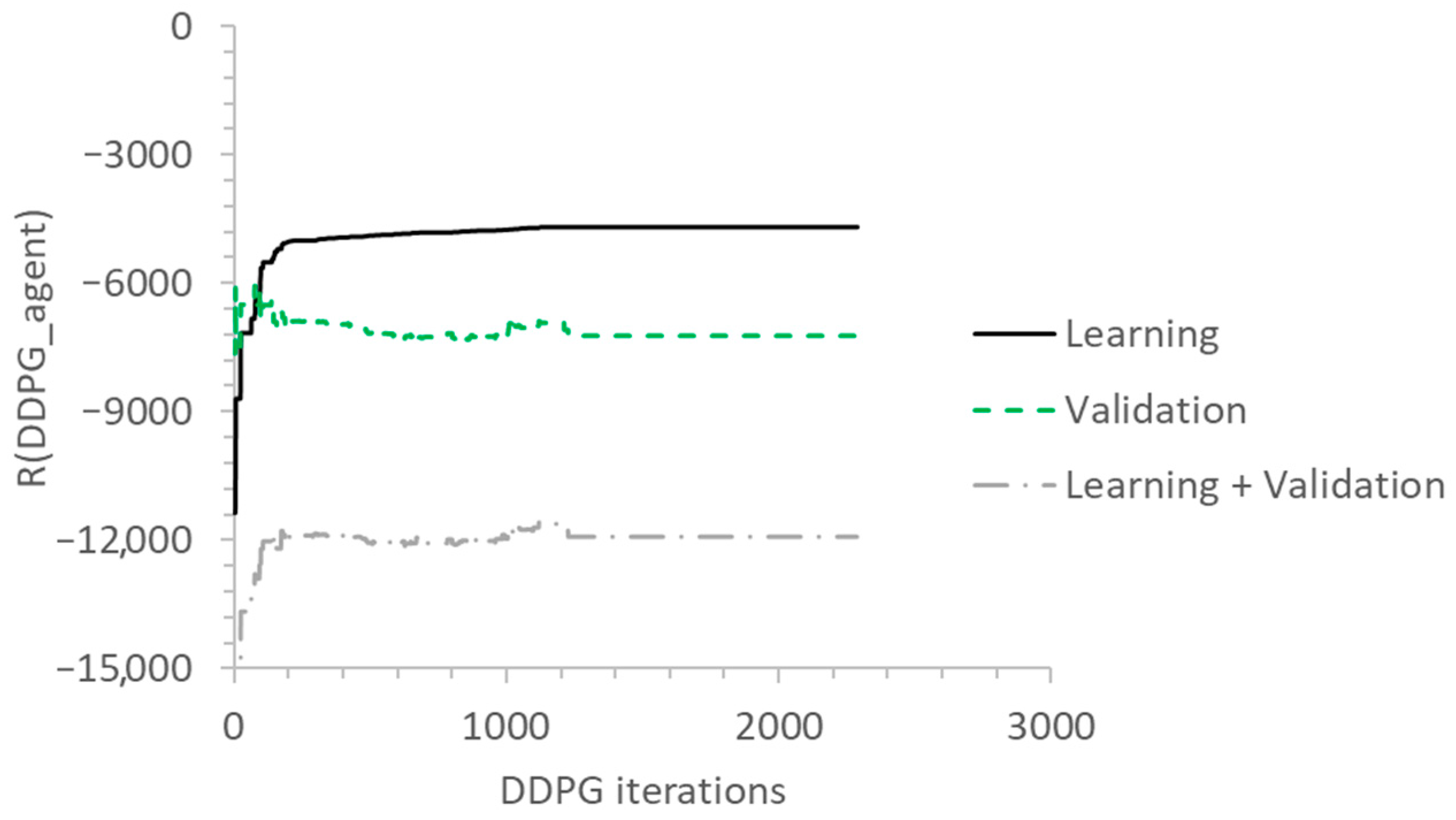

Despite the mentioned difficulties, the DDPG training process showed a positive trend in terms of the neural network parameter updates, as illustrated in Figure 7. The total training duration was about 2000 generations, with the problem being solved 50 times per generation considering time steps, resulting in approximately 2000 × 50 = 100,000 problem solutions. The fitness function value (7) for the actor reached R(DDPG_agent) = −4690.

One key reason for this shortfall is that DDPG, in its classical formulation, relies on continuous action spaces and gradient-based policy updates, which assume relatively smooth and well-behaved reward landscapes. In CFD problems, especially when optimizing discretization schemes, the reward function (e.g., accuracy of the numerical solution) is highly nonlinear and often discontinuous due to numerical instabilities and abrupt changes in solution error with small variations in the scheme parameters. This leads to poor gradient estimates and unstable training.

Additionally, DDPG requires dense feedback from the environment to propagate meaningful gradients. In our setup, computing the reward involves running a full numerical solver, which is computationally expensive and produces noisy feedback due to the discrete nature of grid resolution and time-stepping. As a result, the algorithm struggles to converge within a reasonable number of training episodes.

Finally, CFD discretization problems inherently involve global constraints (e.g., CFL conditions, stability limits) that cannot be easily encoded in the standard DDPG framework. While DDPG excels in control tasks with continuous, bounded actions, enforcing stability constraints in a purely gradient-driven manner is challenging, often resulting in actions that violate physical feasibility or lead to divergence of the numerical solution.

These limitations justify the choice of a genetic algorithm in the present study. Evolutionary methods are inherently more robust to noisy, discontinuous, and constrained objective functions, allowing effective training of the neural convective term approximation even in early proof-of-concept 1D cases. Future work will explore modifications to the DDPG algorithm that address these challenges, including hybrid gradient-evolutionary approaches and physics-informed reward shaping.

4.3. Comparison of Simulation Results with the Analytical Solution

The most effective neural network trained using the genetic algorithm was selected as the reference “neural” scheme. The training process for both methods was carried out under fixed parameters: computational domain size l = 101 m, final advection time T = 5 s, computational domain cell size Δx = 1 m, advection rate u0 = 1 m/s, amplitude φ0 = 1, and Courant number values CFL ∈ [0.01, 0.5] with a step of 0.01. To ensure the reliability of the results, the experimental verification process included testing the performance of the “neural” scheme under various values of these parameters as follows:

The analytical solution (9) is compared with the results obtained using the LUD scheme, the UD scheme, and the “neural” scheme. To illustrate the features of scalar quantity distribution along the one-dimensional channel, graphical dependencies are presented (see Figure 8) for various Courant numbers: CFL = 0.01, 0.1, 0.5, and 0.7 under fixed parameters Δx = 1 m, u0 = 1 m/s, and φ0 = 1.0. Since the scalar distribution is periodic, the plots only show the distribution near the center of the channel over a width equal to the wavelength of the distribution λ.
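The Courant numbers quoted above translate directly into time steps and step counts. The sketch below assumes the usual definition CFL = u0·Δt/Δx (not restated in this section) and uses the training parameters T = 5 s, Δx = 1 m, u0 = 1 m/s; the function names are ours.

```python
def time_step(cfl, dx=1.0, u0=1.0):
    """Time step implied by a Courant number, assuming CFL = u0 * dt / dx."""
    return cfl * dx / u0


def n_steps(cfl, t_final=5.0, dx=1.0, u0=1.0):
    """Number of explicit time steps needed to reach t_final (rounded)."""
    return round(t_final / time_step(cfl, dx, u0))


# Training sweep: CFL from 0.01 to 0.5 with a step of 0.01.
training_cfls = [round(0.01 * k, 2) for k in range(1, 51)]

for cfl in (0.01, 0.1, 0.5):
    print(f"CFL = {cfl}: dt = {time_step(cfl)} s, steps = {n_steps(cfl)}")
print(len(training_cfls), "Courant numbers in the training sweep")
```

The sweep contains 50 values, which would be consistent with the 50 problem solutions per agent quoted for training, although that correspondence is our reading rather than something the text states.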

An analysis of the graphs shows that the neural scheme yields the best results for Courant numbers above approximately 0.5, as reflected in all tables starting from Table 1. The LUD scheme shows noticeable error, with the solutions starting to diverge significantly from the analytical solution at CFL ≥ 0.1, as demonstrated in Table 2. The UD scheme works adequately for the stated problem only around CFL = 1.0 due to the explicit nature of the solution but diverges significantly at larger or smaller CFL values, so it is excluded from further comparison. The neural network solution also starts diverging at CFL ≥ 0.5 but does so several times more slowly than the LUD scheme, as demonstrated in Table 1, Table 2 and Table 3.
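The special role of CFL = 1.0 for the UD scheme can be seen from the textbook explicit first-order upwind update. The sketch below is that textbook scheme on a periodic grid, not the paper's in-house implementation; the grid size, the sine profile, and the function names are assumptions.

```python
import math


def upwind_step(phi, cfl):
    """One explicit first-order upwind step for u0 > 0 on a periodic grid.

    phi[i - 1] wraps around for i = 0, which gives the periodic boundary.
    """
    return [phi[i] - cfl * (phi[i] - phi[i - 1]) for i in range(len(phi))]


def advect(phi, cfl, steps):
    """Apply the upwind update repeatedly."""
    for _ in range(steps):
        phi = upwind_step(phi, cfl)
    return phi


n_cells = 100  # assumption: 100 periodic cells, wavelength of 20 cells
phi0 = [math.sin(2.0 * math.pi * i / 20) for i in range(n_cells)]

# Same physical time (5 cell widths of travel) at two Courant numbers.
exact_shift = advect(phi0, cfl=1.0, steps=5)
smeared = advect(phi0, cfl=0.5, steps=10)

print("peak at CFL = 1.0:", max(exact_shift))
print("peak at CFL = 0.5:", max(smeared))
```

At CFL = 1.0 each step copies the upstream cell value, so the profile is shifted without distortion; at CFL = 0.5 the same scheme visibly damps the amplitude through numerical diffusion.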

To quantify the accuracy of the solutions, the integral error Δφ for each approximation method was calculated using Equation (10), where n is the number of cells.

The results presented are obtained at fixed flow velocity u0 = 1 m/s and oscillation amplitude φ0 = 1 and are summarized in Table 1, Table 2 and Table 3.

What is of interest here is the behavior of the neural scheme at higher Courant number values, where the LUD scheme fails with an error Δφ above 10%. This value range for the discussed problem is CFL > 0.1.

The analysis of the results presented in Table 1, Table 2 and Table 3 shows that the neural network-based solutions converge more closely to the analytical solution than the LUD scheme at higher Courant numbers.

However, from Table 1 it is clear that the LUD scheme demonstrates a smaller integral error Δφ (Figure 9) than the neural scheme on finer grids and at lower Courant numbers:

Although the LUD scheme shows better accuracy in some cases at low CFLs (particularly, on finer meshes not used in training), this is not critical, as the primary goal of the neural network is to improve simulation efficiency. The neural scheme makes it possible to maintain solution accuracy at higher CFLs, enabling simulations with larger time steps or coarser meshes. The possibility of using reinforcement learning to improve scalar transport modeling accuracy is confirmed by the obtained neural scheme, which can be considered an efficient implementation of this concept as supported by the data in Table 1, Table 2 and Table 3.

After that, it seems reasonable to investigate the neural network’s responsiveness to flow velocity and oscillation amplitude values falling outside the training data range.

Let us first obtain the results for varying amplitude values φ0 at the following parameter settings: Δx = 1 m and u0 = 1 m/s. The results for the amplitude used in training can be seen in Table 2 above.

It can be seen that increasing the amplitude of the scalar quantity distribution leads to higher integral errors in the neural scheme. The amplitude variations cause changes to scalar values in the cells and the respective gradients acting as inputs for the neural network. Since these values differ significantly from the ones used in training, the neural network’s response to these values turns out to be unpredictable, which is to be expected. However, it can be seen from Table 4, Table 5 and Table 6 that the convergence is maintained upon reducing the CFLs, which means that the generalization capability has still developed. One may attempt to alleviate this shortcoming via normalization of scalar distribution values.

Let us then obtain the results for varying advection rates u0 at a fixed cell size Δx = 1 m.

It can be seen from Table 7 that the neural network-based scheme still outperforms LUD in terms of approximation. However, we can also see that the error increases when the simulation is performed with the data falling outside the training range used for neural network fine-tuning. Still, the analysis of the results shows that the neural network-based convection term approximation scheme demonstrates generalization ability even with these datasets.

Notably, the neural scheme ensures higher accuracy compared to the conventional method at higher Courant numbers (CFL > 0.1), even with flow velocity and oscillation amplitude deviating significantly from the training data ranges.

A formal Fourier or von Neumann analysis was not performed in the present study because the neural reconstruction introduces a nonlinear and state-dependent discretization operator. Instead, dispersive effects were evaluated qualitatively through long-time advection tests of periodic scalar distributions.

5. Conclusions

The goal of this paper was to investigate the feasibility of using neural networks, trained via reinforcement learning, to improve the numerical solution of the scalar transport equation using the finite-volume method. Unlike prior approaches focused on coarse-grid accuracy, our method aims to increase the time step while preserving or improving solution accuracy, thereby reducing the total number of integration steps and accelerating computations.

Experimental results show that the neural-network-based convective term approximation outperforms the classical second-order LUD scheme in both accuracy and computational efficiency. Specifically, for CFL numbers above 0.1, the neural scheme maintains high accuracy, while LUD requires much smaller time steps to achieve comparable results. In some cases, the neural scheme at CFL = 0.5 achieves accuracy that LUD cannot reach even at CFL = 0.001, demonstrating a potential speedup of orders of magnitude.
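The scale of this potential speedup can be estimated from step counts alone. As a rough sketch, assuming the usual definition CFL = u0·Δt/Δx and a comparable cost per time step for both schemes (as reported for the numerical experiment above), the number of steps N needed to reach the final time T scales inversely with the Courant number:

$$
N = \frac{T}{\Delta t} = \frac{T\,u_0}{\mathrm{CFL}\,\Delta x},
\qquad
\frac{N_{\mathrm{LUD}}}{N_{\mathrm{neural}}}
= \frac{\mathrm{CFL}_{\mathrm{neural}}}{\mathrm{CFL}_{\mathrm{LUD}}}
= \frac{0.5}{0.001} = 500 .
$$

This ratio is only an estimate of the reduction in time steps on the same mesh; it does not account for the training cost.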

Among the reinforcement learning strategies explored, the genetic algorithm was effective, whereas the classical DDPG formulation did not converge to satisfactory solutions. This limitation is likely due to the sparse and delayed reward structure of the convective term optimization problem, which challenges standard actor-critic methods and necessitates further investigation into RL design and hyperparameter tuning.

Overall, this study establishes a proof of concept for neural-network-based convective term approximation, demonstrating that combining RL-trained neural networks with classical finite-volume schemes can enable larger time steps, improve accuracy, and accelerate CFD simulations. Future work will focus on extending the approach to higher-dimensional problems and optimizing both training and inference efficiency.