Enhancing Short-Term Wind Energy Forecasting with XGBoost and Conformal Prediction for Robust Uncertainty Quantification

Abstract

1. Introduction

1.1. Research Motivation

1.2. Literature Review

1.2.1. Evolution of Forecasting Methods: From Statistics to Machine Learning

1.2.2. XGBoost and Hyperparameter Optimisation in Wind Forecasting

1.2.3. Uncertainty Quantification and the Role of PCR

1.2.4. Summary of the Literature and Research Gap

1.3. Contributions and Research Highlights

- Wind energy time series have two characteristics that underpin our hybrid model: (i) nonlinear transitions between regimes, driven by atmospheric stability constraints, which the tree-based model addresses; and (ii) linear trend components during stable atmospheric regimes, which PLAQR addresses. The hybrid model is therefore not heuristic. It follows from the physical insight that wind energy production operates on multiple scales: nonlinear atmospheric processes control transitions between regimes, whereas linear relationships dominate during stable operation. PLAQR captures this hierarchical structure by allowing tree-based models to divide the feature space into regions where linear relationships hold.

- The stochastic process of wind generation is heteroscedastic and non-stationary, which makes conventional parametric uncertainty analysis difficult to apply. Conformal prediction is well suited to this setting because it is distribution-free: its coverage guarantee does not require knowledge of the true data-generating process. In wind energy prediction, where the distribution varies with weather and seasonal patterns, this means the prediction intervals attain the nominal coverage regardless of the true error distribution, provided the calibration and test observations are exchangeable.

- Rather than a heuristic combination, PLAQR delivers calibrated probabilistic predictions backed by the finite-sample coverage guarantee that the conformal framework provides for prediction sets. Incorporating tree-based nonlinearities improves point prediction accuracy (lower RMSE) and directional correctness (higher POCID) while preserving valid uncertainty estimates, as verified by Probability Integral Transform (PIT) histograms. This addresses the inherent trade-off between sharpness and calibration in probabilistic forecasting.

- The improvement in performance when the training share increases from 80% to 85% is not only empirical; it also reflects the consistency properties of both the ensemble technique and conformal prediction. As the data volume increases, tree-based techniques can distinguish finer regimes, and the non-asymptotic guarantees of the conformal framework become sharper.

- The proposed modelling framework offers a template for renewable energy forecasting, addressing the key challenge of producing both point forecasts and uncertainty measures for a non-standard data-generating process. The underlying principles of the approach, namely regime-based hybrid modelling and distribution-free uncertainty quantification, can be applied to other areas of renewable energy.

2. Models

2.1. eXtreme Gradient Boosting

2.1.1. Additive Learning

2.1.2. Loss Function

2.1.3. Regularisation

2.2. Principal Component Regression

2.3. Quantile Regression

2.4. Partial Linear Additive Quantile Regression Framework for Forecast Combination

- $\hat{y}_{t}^{\mathrm{XGB}}$ be the point forecast from an XGBoost model at time $t$.

- $\hat{y}_{t}^{\mathrm{PCR}}$ be the point forecast from a PCR model at time $t$.

- $y_{t}$ be the actual realized value.
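As a rough illustration of how two point forecasts can be combined at a chosen quantile level, the sketch below evaluates the pinball (check) loss of Koenker and Bassett and grid-searches a single convex weight between the XGBoost and PCR forecasts. This is a deliberate simplification and not the PLAQR estimator itself, which also allows nonparametric additive terms. The function names, the convex-weight restriction, the grid search, and the toy numbers are all assumptions made for illustration.

```python
def pinball_loss(actual, predicted, tau):
    """Mean pinball (check) loss at quantile level tau in (0, 1)."""
    total = 0.0
    for y, q in zip(actual, predicted):
        u = y - q
        # Under-prediction is weighted by tau, over-prediction by (1 - tau).
        total += tau * u if u >= 0 else (tau - 1.0) * u
    return total / len(actual)


def combine(f_xgb, f_pcr, w):
    """Convex combination w * XGBoost + (1 - w) * PCR (illustrative only)."""
    return [w * a + (1.0 - w) * b for a, b in zip(f_xgb, f_pcr)]


def best_weight(actual, f_xgb, f_pcr, tau, n_grid=20):
    """Grid-search the weight w in [0, 1] that minimises the pinball loss."""
    candidates = [i / n_grid for i in range(n_grid + 1)]
    return min(
        candidates,
        key=lambda w: pinball_loss(actual, combine(f_xgb, f_pcr, w), tau),
    )


# Toy data (hypothetical, not taken from the paper).
actual = [10.0, 12.0, 9.0, 14.0, 11.0]
xgb_forecast = [10.0, 12.0, 9.0, 14.0, 11.0]   # a perfect forecast
pcr_forecast = [11.0, 11.0, 11.0, 11.0, 11.0]  # a flat forecast

# With a perfect first forecast, the median-level weight goes entirely to it.
w_median = best_weight(actual, xgb_forecast, pcr_forecast, tau=0.5)
```

In practice the weights would be estimated on a validation set by a quantile regression solver rather than by grid search; the grid is used here only to keep the example dependency-free.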

2.5. Evaluation Metrics

2.5.1. Root Mean Square Error

2.5.2. Mean Absolute Error

2.5.3. Mean Absolute Scaled Error

2.5.4. Mean Bias Error

2.5.5. Prediction of Change in Direction
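The five point-forecast metrics named in Section 2.5 can be sketched in a few lines. The definitions below follow the usual textbook forms and are assumptions wherever conventions differ: MBE is taken as forecast minus actual, MASE is scaled by the in-sample one-step naive MAE, and POCID counts a hit when the forecast and the actual series move in the same direction. The toy numbers are hypothetical.

```python
import math


def rmse(actual, forecast):
    """Root mean square error."""
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))


def mae(actual, forecast):
    """Mean absolute error."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def mbe(actual, forecast):
    """Mean bias error; sign convention (forecast - actual) is an assumption."""
    return sum(f - a for a, f in zip(actual, forecast)) / len(actual)


def mase(actual, forecast, train, m=1):
    """MAE scaled by the in-sample MAE of the lag-m naive forecast."""
    scale = sum(abs(train[i] - train[i - m]) for i in range(m, len(train))) / (len(train) - m)
    return mae(actual, forecast) / scale


def pocid(actual, forecast):
    """Percentage of steps where forecast and actual change in the same direction."""
    hits = sum(
        1
        for t in range(1, len(actual))
        if (forecast[t] - forecast[t - 1]) * (actual[t] - actual[t - 1]) > 0
    )
    return 100.0 * hits / (len(actual) - 1)


# Toy data (hypothetical).
y_train = [1.0, 2.0, 4.0, 7.0]
y_test = [3.0, 5.0, 4.0, 6.0]
y_hat = [2.0, 6.0, 5.0, 5.0]
scores = {
    "RMSE": rmse(y_test, y_hat),
    "MAE": mae(y_test, y_hat),
    "MBE": mbe(y_test, y_hat),
    "MASE": mase(y_test, y_hat, y_train),
    "POCID": pocid(y_test, y_hat),
}
```

A MASE above 1, as reported in the results tables, indicates that under this definition the forecast errors are larger on average than those of the in-sample naive benchmark.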

2.6. Conformal Prediction

2.6.1. Mathematical Framework

Nonconformity Measure

Calibration

- Compute nonconformity scores for all points in the calibration set:

- For a desired miscoverage rate $\alpha$, calculate the critical quantile $q$ from the empirical distribution of the scores:

Prediction Interval
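The calibration and interval steps can be sketched as a split conformal procedure. The sketch assumes the absolute residual as the nonconformity score and the standard finite-sample quantile index $\lceil (n+1)(1-\alpha) \rceil$; neither choice is confirmed by the text reproduced here, and the function names and toy data are hypothetical.

```python
import math


def conformal_quantile(scores, alpha):
    """Return the ceil((n + 1) * (1 - alpha))-th smallest nonconformity score.

    If that index exceeds n, the calibration set is too small for the
    requested level and the interval is unbounded.
    """
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf
    return sorted(scores)[k - 1]


def split_conformal_interval(y_cal, pred_cal, pred_new, alpha):
    """Symmetric intervals pred +/- q built from absolute calibration residuals."""
    scores = [abs(y - p) for y, p in zip(y_cal, pred_cal)]
    q = conformal_quantile(scores, alpha)
    return [(p - q, p + q) for p in pred_new]


# Toy calibration data (hypothetical).
y_cal = [10.0, 12.0, 9.0, 14.0, 11.0, 13.0, 8.0, 15.0, 10.0]
pred_cal = [11.0] * 9
cal_scores = [abs(y - p) for y, p in zip(y_cal, pred_cal)]

q80 = conformal_quantile(cal_scores, alpha=0.2)
intervals = split_conformal_interval(y_cal, pred_cal, [12.0], alpha=0.2)
```

Because the interval half-width is a single constant $q$, this basic variant produces intervals of equal width for every forecast; adaptive widths require a scaled or quantile-based score.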

2.6.2. Coverage Guarantee

2.7. Prediction Interval Evaluation Metrics

2.7.1. Prediction Interval Coverage Probability

2.7.2. Mean Prediction Interval Width

2.7.3. Coverage Width-Based Criterion

2.7.4. Probability Integral Transform Histogram

2.7.5. Diebold–Mariano Test

3. Results

3.1. Exploratory Data Analysis

3.1.1. Data Source

3.1.2. Data Characteristics

- difLag1 is the first-order hourly difference in the wind energy produced time series:

- difLag2 is the second-order hourly difference in the wind energy produced time series:

- difLag12 is the difference from half a day prior:

- difLag24 is the daily seasonal difference:

- Hour represents the hour of the day (0 to 23).

- Day represents the day of the week.

- noltrend is the estimated nonlinear trend component of the wind energy produced time series. This component was extracted through seasonal and trend decomposition using Loess.
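The lagged-difference and calendar covariates listed above could be built along the following lines. The sketch assumes each `difLagK` variable is the lag-K difference $y_t - y_{t-K}$; for `difLag2` this is an assumption, since "second-order" could also denote a twice-applied first difference. `Day` is encoded with Monday = 0, which is likewise an assumption. The `noltrend` component is not reproduced because it requires an STL decomposition. Helper names and the toy series are hypothetical.

```python
from datetime import datetime, timedelta


def lag_difference(series, lag):
    """Return y_t - y_{t-lag}; the first `lag` positions have no value (None)."""
    head = [None] * lag
    tail = [series[t] - series[t - lag] for t in range(lag, len(series))]
    return head + tail


def build_features(timestamps, energy):
    """Assemble the differenced and calendar covariates as a dict of lists."""
    features = {f"difLag{k}": lag_difference(energy, k) for k in (1, 2, 12, 24)}
    features["Hour"] = [ts.hour for ts in timestamps]    # 0 to 23
    features["Day"] = [ts.weekday() for ts in timestamps]  # Monday = 0 (assumed)
    return features


# Toy hourly series (hypothetical): 30 hours starting on a Monday at midnight.
start = datetime(2024, 1, 1, 0, 0)
timestamps = [start + timedelta(hours=i) for i in range(30)]
energy = [float(i * i) for i in range(30)]
features = build_features(timestamps, energy)
```

Rows whose lagged value is unavailable (the first 24 hours here) would normally be dropped before model fitting.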

3.1.3. Summary Statistics

3.2. Data Processing

3.2.1. Dataset Description

3.2.2. Missing Values

3.2.3. Relationship Between Variables

3.2.4. Variable Importance

3.3. Choosing Number of Components

3.4. Selecting Number of Components

3.5. Results of the Diebold–Mariano Tests

3.6. Probability of Change in Direction and Fitness Tests

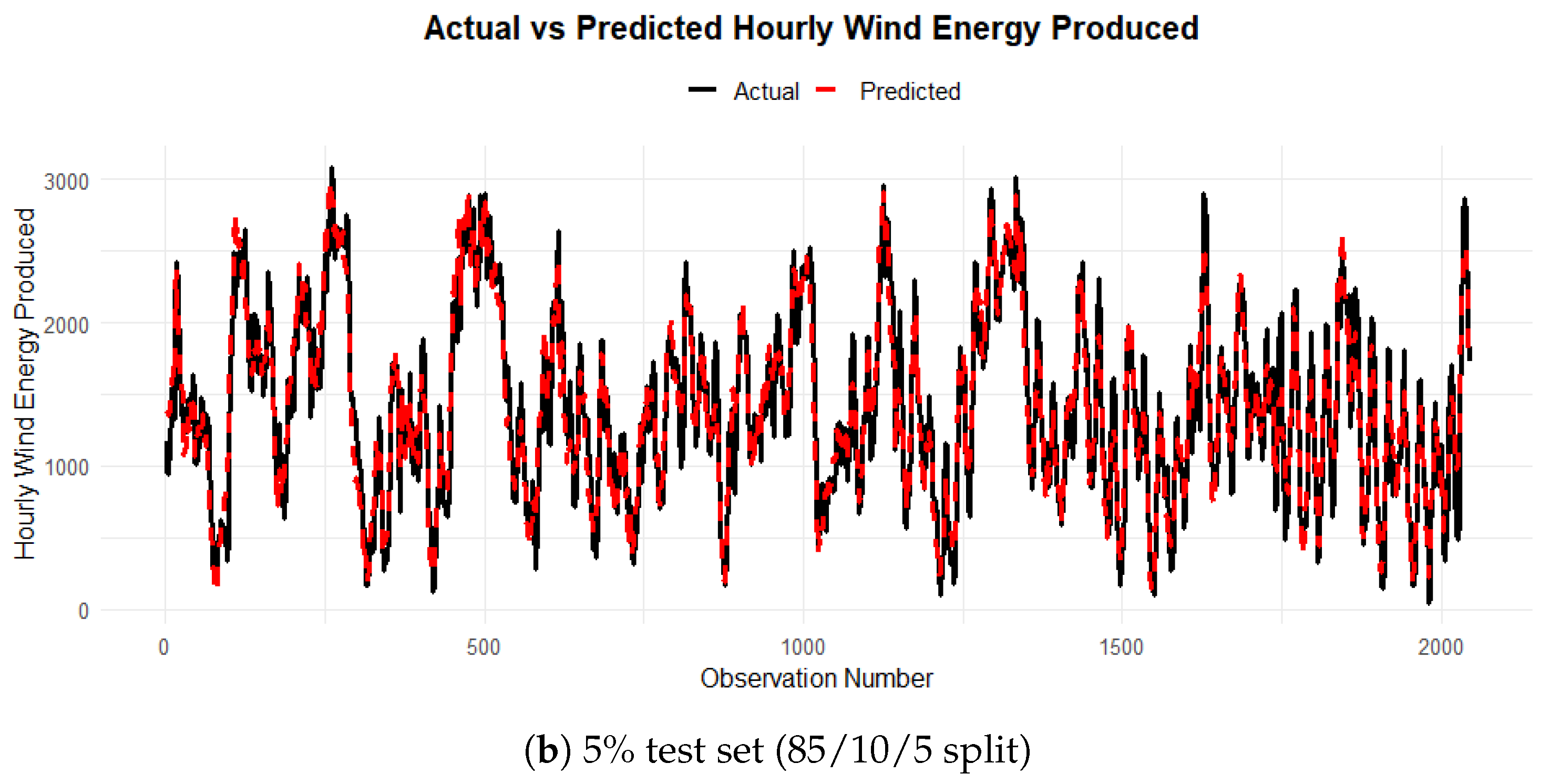

3.6.1. XGBoost and PCR (80% Training, 10% Validation and 10% Test)

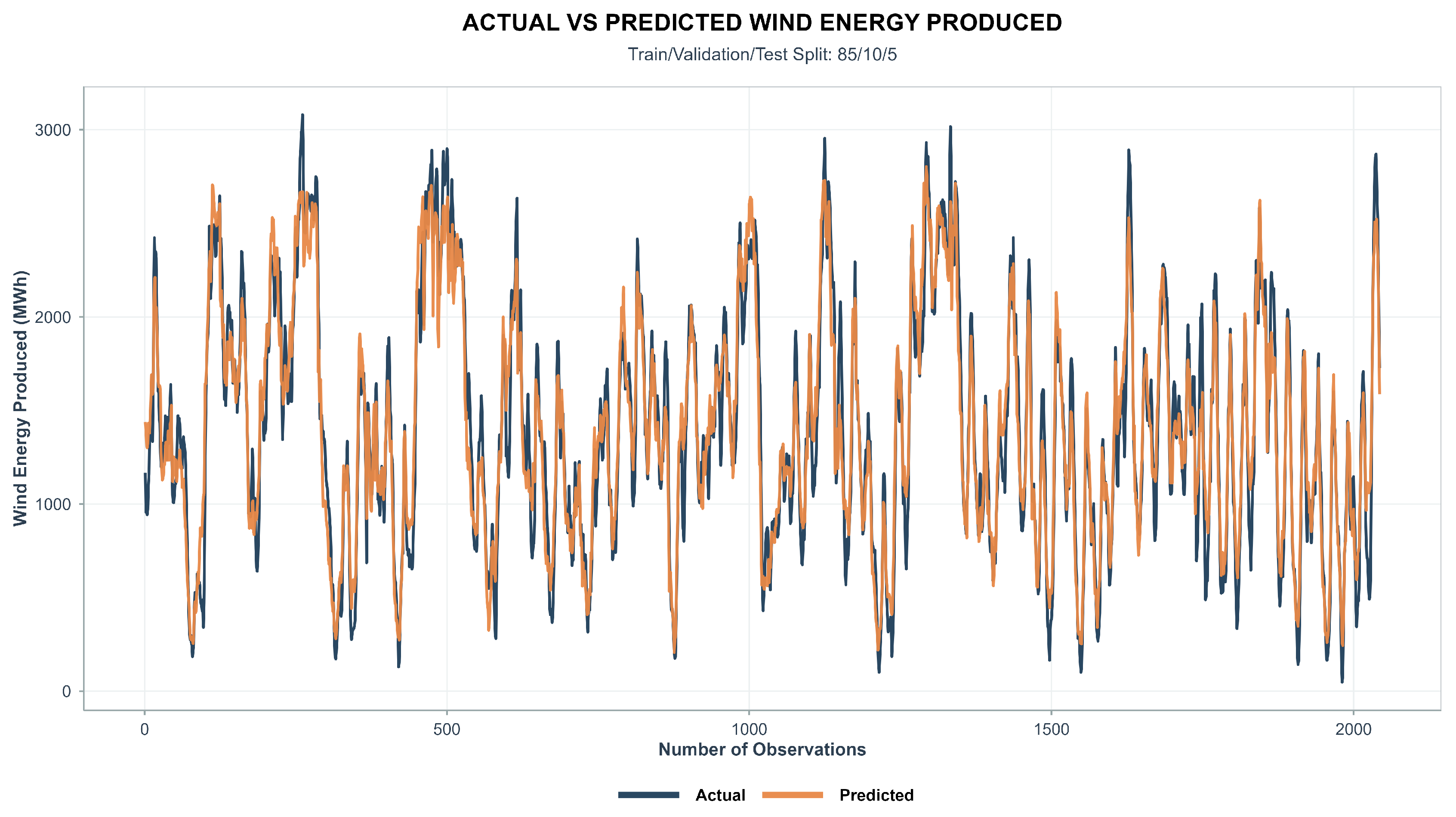

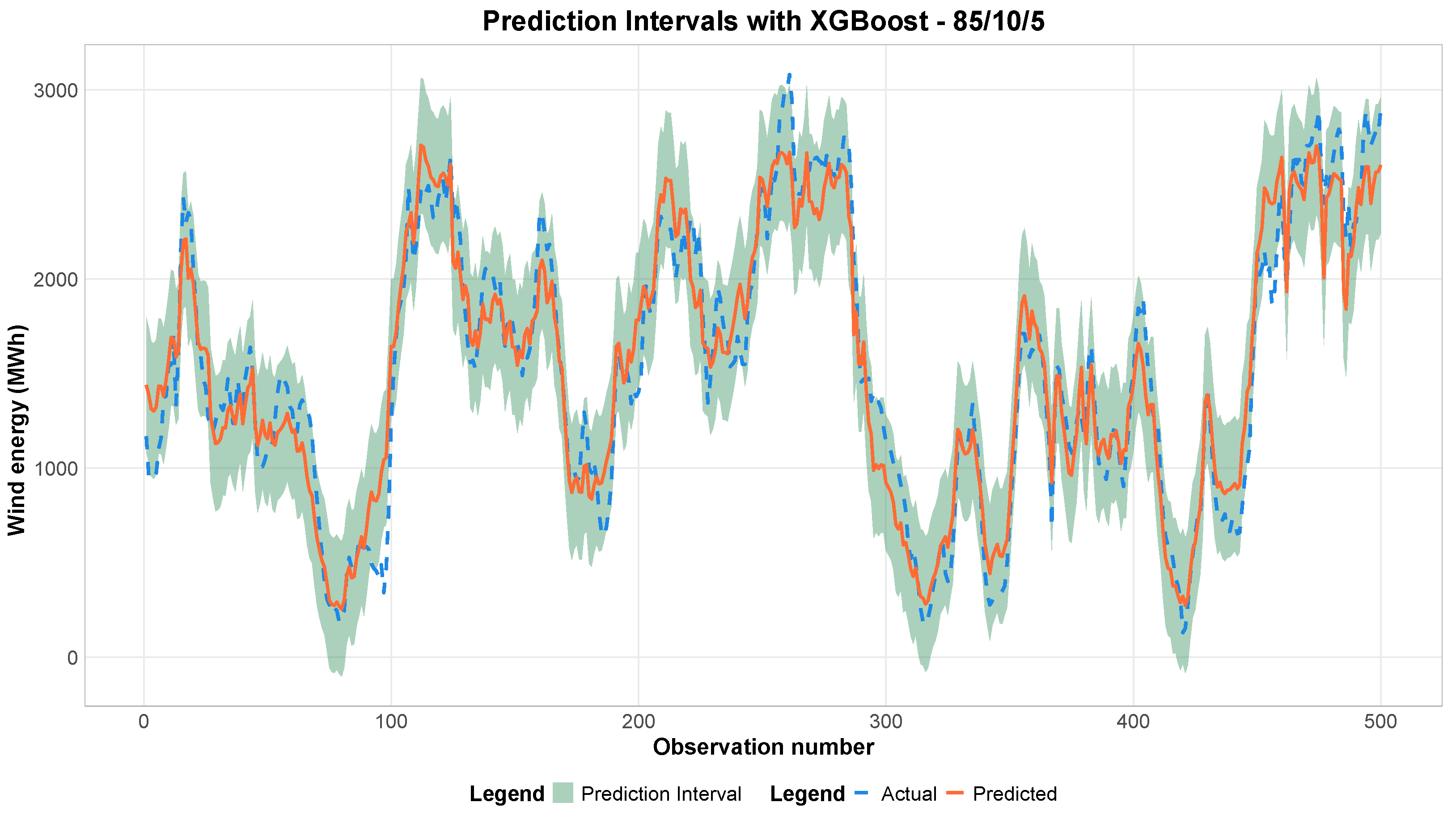

3.6.2. XGBoost and PCR (85% Training, 10% Validation and 5% Test)

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ANN | Artificial Neural Networks |
| ARIMA | AutoRegressive Integrated Moving Average |
| BH-XGBoost | Bayesian Hyperparameter-optimised XGBoost |
| Boost-LR | Boosting with Linear Regression |
| CNN GRU | Convolutional Neural Network and Gated Recurrent Unit |
| CWC | Coverage Width-based Criterion |
| GBM | Gradient Boosting Machines |
| GPR | Gaussian Process Regression |
| KDJ | Stochastic Oscillator |
| KNN | K-Nearest Neighbors |
| LSTM | Long Short-Term Memory |
| MACD | Moving Average Convergence and Divergence |
| MAE | Mean Absolute Error |
| MASE | Mean Absolute Scaled Error |
| MBE | Mean Bias Error |
| MLP ANN | Multi-Layer Perceptron Artificial Neural Network |
| MPIW | Mean Prediction Interval Width |
| NMAE | Normalised Mean Absolute Error |
| NN | Neural Networks |
| PCA | Principal Component Analysis |
| PCR | Principal Component Regression |
| PICP | Prediction Interval Coverage Probability |
| PIT | Probability Integral Transform |
| RF | Random Forest |
| RMSE | Root Mean Square Error |
| SVM | Support Vector Machines |
| XGBoost | eXtreme Gradient Boosting |

Appendix A. Supplementary Plots

References

1. Behabtu, H.A.; Vafaeipour, M.; Kebede, A.A.; Berecibar, M.; Van Mierlo, J.; Fante, K.A.; Messagie, M.; Coosemans, T. Smoothing Intermittent Output Power in Grid-Connected Doubly Fed Induction Generator Wind Turbines with Li-Ion Batteries. Energies 2023, 16, 7637.

2. Kim, D.; Hur, J. Short-term probabilistic forecasting of wind energy resources using the enhanced ensemble method. Energy 2018, 157, 211–226.

3. Foley, A.M.; Leahy, P.G.; Marvuglia, A.; McKeogh, E.J. Current methods and advances in forecasting of wind power generation. Renew. Energy 2012, 37, 1–8.

4. Ekinci, G.; Ozturk, H.K. Forecasting Wind Farm Production in the Short, Medium, and Long Terms Using Various Machine Learning Algorithms. Energies 2025, 18, 1125.

5. Zheng, Y.; Guan, S.; Guo, K.; Zhao, Y.; Ye, L. Technical indicator enhanced ultra-short-term wind power forecasting based on long short-term memory network combined XGBoost algorithm. IET Renew. Power Gener. 2025, 19, e12952.

6. Giebel, G.; Brownsword, R.; Kariniotakis, G.; Denhard, M.; Draxl, C. The State-of-the-Art in Short-Term Prediction of Wind Power: A Literature Overview, 2nd ed.; ANEMOS.plus: Crete, Greece, 2011.

7. Liu, Z.; Guo, H.; Zhang, Y.; Zuo, Z. A Comprehensive Review of Wind Power Prediction Based on Machine Learning: Models, Applications, and Challenges. Energies 2025, 18, 350.

8. Lei, M.; Shiyan, L.; Chuanwen, J.; Hongling, L.; Yan, Z. A review on the forecasting of wind speed and generated power. Renew. Sustain. Energy Rev. 2009, 13, 915–920.

9. Park, S.; Jung, S.; Lee, J.; Hur, J. A short-term forecasting of wind power outputs based on gradient boosting regression tree algorithms. Energies 2023, 16, 1132.

10. Lahouar, A.; Slama, J.B.H. Hour-ahead wind power forecast based on random forests. Renew. Energy 2017, 109, 529–541.

11. Chen, T.; Guestrin, C. XGBoost: A Scalable Tree Boosting System. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; ACM: New York, NY, USA, 2016; pp. 785–794.

12. Xiong, X.; Guo, X.; Zeng, P.; Zou, R.; Wang, X. A short-term wind power forecast method via XGBoost hyper-parameters optimization. Front. Energy Res. 2022, 10, 905155.

13. García-Puente, B.; Rodríguez-Hurtado, A.; Santos, M.; Sierra-García, J. Evaluation of XGBoost vs. other Machine Learning models for wind parameters identification. Renew. Energy Power Qual. J. 2023, 21, 388–393.

14. Sunku, V.S.R.P.; Namboodiri, V.; Mukkamala, R. The Short-Term Wind Power Forecasting by Utilizing Machine Learning and Hybrid Deep Learning Frameworks. Probl. Reg. Energetics 2025, 1, 1–11.

15. Ahmed, U.; Muhammad, R.; Abbas, S.S.; Aziz, I.; Mahmood, A. Short-term wind power forecasting using integrated boosting approach. Front. Energy Res. 2024, 12, 1401978.

16. Vovk, V.; Gammerman, A.; Shafer, G. Algorithmic Learning in a Random World; Springer: Berlin/Heidelberg, Germany, 2005.

17. Angelopoulos, A.N.; Bates, S. A gentle introduction to conformal prediction and distribution-free uncertainty quantification. arXiv 2021, arXiv:2107.07511.

18. Dheur, V. Distribution-Free and Calibrated Predictive Uncertainty in Probabilistic Machine Learning. Ph.D. Thesis, UMONS—University of Mons [Faculté des Sciences], Mons, Belgium, 2025.

19. Angelopoulos, A.N.; Barber, R.F.; Bates, S. Theoretical Foundations of Conformal Prediction. arXiv 2025, arXiv:2411.11824.

20. Kavzoglu, T.; Teke, A. Advanced hyperparameter optimization for improved spatial prediction of shallow landslides using extreme gradient boosting (XGBoost). Bull. Eng. Geol. Environ. 2022, 81, 201.

21. Ponkumar, G.; Jayaprakash, S.; Kanagarathinam, K. Advanced machine learning techniques for accurate very-short-term wind power forecasting in wind energy systems using historical data analysis. Energies 2023, 16, 5459.

22. Zhao, X.; Li, Q.; Xue, W.; Zhao, Y.; Zhao, H.; Guo, S. Research on Ultra-Short-Term Load Forecasting Based on Real-Time Electricity Price and Window-Based XGBoost Model. Energies 2022, 15, 7367.

23. Friedman, J.H. Stochastic gradient boosting. Comput. Stat. Data Anal. 2002, 38, 367–378.

24. Chen, T.; He, T.; Benesty, M.; Khotilovich, V.; Tang, Y.; Cho, H.; Chen, K.; Mitchell, R.; Cano, I.; Zhou, T.; et al. Xgboost: Extreme gradient boosting. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016.

25. Koenker, R.W.; Bassett, G. Regression Quantiles. Econometrica 1978, 46, 33–50.

26. Hoshino, T. Quantile regression estimation of partially linear additive models. J. Nonparametr. Stat. 2014, 26, 509–536.

27. Fallahtafti, A.; Aghaaminiha, M.; Akbarghanadian, S.; Weckman, G.R. Forecasting ATM Cash Demand Before and During the COVID-19 Pandemic Using an Extensive Evaluation of Statistical and Machine Learning Models. SN Comput. Sci. 2022, 3, 164.

28. Stocker, M.; Małgorzewicz, W.; Fontana, M.; Taieb, S.B. A Gentle Introduction to Conformal Time Series Forecasting. arXiv 2025, arXiv:2511.13608.

29. Khosravi, A.; Nahavandi, S.; Creighton, D.; Atiya, A.F. Comprehensive review of neural network-based prediction intervals and new advances. IEEE Trans. Neural Netw. 2011, 22, 1341–1356.

30. Gneiting, T.; Balabdaoui, F.; Raftery, A.E. Probabilistic forecasts, calibration and sharpness. J. R. Stat. Soc. Ser. B Stat. Methodol. 2007, 69, 243–268.

31. Diebold, F.; Mariano, R. Comparing predictive accuracy. J. Bus. Econ. Stat. 1995, 13, 253–265.

32. Triacca, U. Comparing Predictive Accuracy of Two Forecasts. 2018. Available online: https://www.lem.sssup.it/phd/documents/Lesson19.pdf (accessed on 17 September 2025).

33. Mevik, B.H.; Wehrens, R.; Liland, K.H. Introduction to the pls Package. 2015. Available online: https://cran.r-project.org/web/packages/pls/vignettes/pls-manual.html (accessed on 23 August 2025).

34. Nowotarski, J.; Weron, R. Computing electricity spot price prediction intervals using quantile regression and forecast averaging. Comput. Stat. 2015, 30, 791–803.

35. Mpfumali, P.; Sigauke, C.; Bere, A.; Mulaudzi, S. Day Ahead Hourly Global Horizontal Irradiance Forecasting—Application to South African Data. Energies 2019, 12, 3569.

| Reference | Methodology Type | Forecasting Horizon | Uncertainty Quantification | Key Performance Metrics | Main Limitations |
|---|---|---|---|---|---|
| [6] | Statistical (ARIMA, Persistence) | Short-term | Not addressed | Qualitative review | Struggle with nonlinear relationships between wind power and weather variables |
| [8] | Machine Learning (ANNs, SVMs) | Short-term | Not addressed | Comparative review | Early-stage ML applications, limited uncertainty quantification |
| [10] | Random Forest | Hour-ahead | Not addressed | Accuracy improvements demonstrated | Point predictions only, no uncertainty estimates |
| [2] | Ensemble methods (temporal and geographical ensembles) | Short-term | Probabilistic forecasting with analogue ensemble methods | Improved uncertainty estimation | Complex implementation, computational intensity |
| [12] | XGBoost with Bayesian hyperparameter optimisation (BH-XGBoost) | Short-term | Not addressed | Superior performance vs. SVM, KELM, LSTM in all test conditions | Point predictions only, no uncertainty quantification |
| [20] | Advanced optimisation algorithms | Not specified | Not addressed | Improved model performance | Focus on optimisation rather than uncertainty |
| [9] | Gradient Boosting Machine (GBM) | Short-term (15-min intervals) | Not addressed | NMAE: 5.15% | Point predictions only, limited to specific temporal resolution |
| [13] | XGBoost vs. SVR, GPR, NN | Short-term | Not addressed | XGBoost most effective for short-term predictions | No uncertainty quantification |
| [21] | LightGBM, RF, CatBoost, XGBoost | Very short-term | Not addressed | MAE, MSE, RMSE, R-squared comparisons | Point predictions only |
| [15] | Boost-LR (XGBoost, CatBoost, RF + Linear Regression) | Short-term | Not addressed | MAE improvements: 31.42%, 32.14%, 27.55% | Ensemble improves accuracy but lacks uncertainty intervals |
| [5] | XGBoost + LSTM + Technical Indicators (KDJ, SO, MACD) | Ultra-short-term | Not addressed | NMAE: 0.0396; Processing time: 550 s | Computational complexity, no uncertainty quantification |
| [4] | XGBoost, RF, ANNs, KNN, MLP | Medium to long-term | Not addressed | Superior stability and accuracy vs. statistical methods | Focus on accuracy, not forecast reliability |
| [14] | CNN-GRU vs. XGBoost, RF | Day-ahead | Statistical validation (Diebold–Mariano test) | Deep learning marginally better; XGBoost competitive | Hypothesis testing rather than operational uncertainty quantification |
| [7] | XGBoost, RF, LSTM vs. traditional | Comprehensive review | Not addressed | ML superiority demonstrated | Review format, no empirical uncertainty analysis |
| [18] | Machine Learning + Conformal Prediction | Various | Conformal prediction | Quantifiable uncertainty intervals | Not specifically applied to wind energy forecasting |

| Summary | Value |
|---|---|
| Minimum | 19.8 |
| First Quartile (Q1) | 568.4 |
| Median (Q2) | 903.0 |
| Third Quartile (Q3) | 1306.8 |
| Maximum | 3102.2 |
| Mean | 982.5 |
| Skewness | 0.7557 |
| Kurtosis | 3.3057 |

| Variables | Importance (80% Train) | Importance (85% Train) |
|---|---|---|
| noltrend | 0.6637 | 0.6670 |
| difLag12 | 0.2309 | 0.2223 |
| difLag24 | 0.0605 | 0.0508 |
| Hour | 0.0199 | 0.0302 |
| difLag2 | 0.0161 | 0.0198 |
| difLag1 | 0.0075 | 0.0082 |
| Day | 0.0015 | 0.0016 |

| Model/Set | Evaluation Metrics | Optimal Rounds = 143 | nrounds = 500 | nrounds = 1000 |
|---|---|---|---|---|
| 80% train, 10% validation, 10% test | MASE | 1.3284 | 1.4037 | 1.4188 |
| | RMSE | 182.4441 | 193.5536 | 195.9357 |
| | MAE | 144.1475 | 152.3166 | 153.9614 |
| | MBE | 0.0677 | −7.1082 | −7.9733 |

| Model/Set | Evaluation Metrics | Optimal Rounds = 152 | nrounds = 500 | nrounds = 1000 |
|---|---|---|---|---|
| 85% train, 10% validation, 5% test | MASE | 1.2551 | 1.3146 | 1.3421 |
| | RMSE | 182.781 | 192.4822 | 197.4568 |
| | MAE | 143.5088 | 150.3099 | 153.4492 |
| | MBE | −3.7311 | −17.5414 | −12.7386 |

| Category | Evaluation Metrics | 80%/10%/10% split | 85%/10%/5% split |
|---|---|---|---|
| Point forecasts | MASE | 1.3822 | 1.3224 |
| | RMSE | 189.0324 | 190.2073 |
| | MAE | 149.9833 | 144.6136 |
| | MBE | −3.4655 | −4.1088 |
| Prediction Intervals | PICP | 0.9275 | 0.9364 |
| | MPIW | 654.7578 | 693.342 |
| | CWC | 2669.333 | 2064.1 |
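The three interval metrics in the table above can be sketched as follows. PICP and MPIW use their standard definitions. For CWC, the sketch assumes a Khosravi-style penalty, MPIW × (1 + γ·exp(−η(PICP − μ))), with γ = 1 only when PICP falls below the nominal level μ. The values μ = 0.95 and η = 50 are not stated in the text reproduced here; they are assumptions chosen because they reproduce the tabulated CWC values to within the rounding of the reported PICP.

```python
import math


def picp(actual, lower, upper):
    """Share of observations that fall inside their prediction interval."""
    inside = sum(1 for y, lo, hi in zip(actual, lower, upper) if lo <= y <= hi)
    return inside / len(actual)


def mpiw(lower, upper):
    """Mean width of the prediction intervals."""
    return sum(hi - lo for lo, hi in zip(lower, upper)) / len(lower)


def cwc(mpiw_value, picp_value, mu=0.95, eta=50.0):
    """Coverage width-based criterion (assumed form; mu and eta are assumptions)."""
    gamma = 1.0 if picp_value < mu else 0.0  # penalise only under-coverage
    return mpiw_value * (1.0 + gamma * math.exp(-eta * (picp_value - mu)))


# Consistency check against the reported PICP and MPIW for the two splits.
cwc_80 = cwc(654.7578, 0.9275)
cwc_85 = cwc(693.342, 0.9364)

# Toy intervals (hypothetical) to exercise picp and mpiw.
toy_picp = picp([1.0, 2.0, 3.0, 4.0], [0.0, 0.0, 4.0, 3.0], [2.0, 1.0, 5.0, 5.0])
toy_mpiw = mpiw([0.0, 0.0, 4.0, 3.0], [2.0, 1.0, 5.0, 5.0])
```

Under this assumed form, the 85% split scores a lower CWC despite its wider intervals because its coverage is closer to the nominal level, which shrinks the exponential penalty.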

| Metric | XGBoost | PCR | Winner |
|---|---|---|---|
| MSE | 34,081.89 | 35,733.24 | XGBoost |
| RMSE | 184.61 | 189.03 | XGBoost |
| MAE | 145.22 | 149.98 | XGBoost |
| MAPE (%) | 13.58 | 14.27 | XGBoost |

Diebold–Mariano Test

| Null Hypothesis | Test Statistic | p-Value | Mean Loss Differential | Result |
|---|---|---|---|---|
| M1 = M2 | −3.182 | 0.0015 | −1651.345 | Not equally accurate |
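As a sanity check on the table above, the reported mean loss differential matches the difference between the XGBoost and PCR mean squared errors listed in the accuracy comparison. This assumes squared-error loss and that M1 denotes XGBoost and M2 denotes PCR, which the sign of the differential suggests but the text reproduced here does not state.

```latex
\bar{d} \;=\; \frac{1}{n}\sum_{t=1}^{n}\left(e_{1,t}^{2} - e_{2,t}^{2}\right)
       \;=\; \mathrm{MSE}_{\mathrm{XGB}} - \mathrm{MSE}_{\mathrm{PCR}}
       \;=\; 34{,}081.89 - 35{,}733.24
       \;=\; -1651.35
```

A negative $\bar{d}$ together with a p-value of 0.0015 indicates that, under these assumptions, the first model has significantly lower squared-error loss than the second.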

| Model | MASE | RMSE | MSE | MAE | MBE | POCID (%) | Fitness |
|---|---|---|---|---|---|---|---|
| fplaqrTest10 | 1.2993 | 179.2473 | 32,129.60 | 140.9943 | −0.7070 | 71.1487 | 34.2805 |
| f1XG | 1.3284 | 182.4441 | 33,285.86 | 144.1475 | 0.0677 | 70.7078 | 33.7562 |
| f3PCR | 1.3822 | 189.0324 | 35,733.24 | 149.9833 | −3.4655 | 71.7120 | 33.6014 |

| Model | MASE | RMSE | MSE | MAE | MBE | POCID (%) | Fitness |
|---|---|---|---|---|---|---|---|
| fplaqrTest5 | 1.2168 | 178.8877 | 32,000.81 | 139.1227 | 0.8072 | 74.2409 | 35.8077 |
| f2XG | 1.2551 | 182.7810 | 33,408.91 | 143.5088 | −3.7311 | 72.9677 | 34.8014 |
| f4PCR | 1.3224 | 190.2073 | 36,178.81 | 151.2035 | −4.1088 | 73.3105 | 34.2373 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Nthangeni, R.I.; Sigauke, C.; Ravele, T.; Tshisikhawe, T.H. Enhancing Short-Term Wind Energy Forecasting with XGBoost and Conformal Prediction for Robust Uncertainty Quantification. Computation 2026, 14, 56. https://doi.org/10.3390/computation14030056
