1. Introduction

Major advances in the phonetic sciences over the last decades have contributed to a better description of the variety of speech sounds in the world's languages and to the extension of new methodologies to less common languages and varieties, leading to a better understanding of spoken language in general. Speech sounds are neither strictly sequential nor isolated: sequences of consonants and vowels are produced in a temporally overlapping way, with coarticulatory differences in timing being language specific and varying according to syllable type (simplex, complex), syllable position (Marin and Pouplier [1] for timing in English, Cunha [2,3] for European Portuguese (EP)), and many other factors. Because of coarticulation with adjacent sounds, articulators that are not relevant (i.e., noncritical) for the production of the analysed sound can be activated. In this regard, noncritical articulators can entail gestures that are not actively involved in a specific articulation (e.g., a bilabial gesture for /p/) but show anticipatory movement influenced by the following vowel.

Despite these advances, our knowledge of how the sounds of the world's languages are articulated is still fragmentary, and is particularly limited regarding temporal organization, coarticulation, and dynamic aspects. These limitations hinder advances in speech production theories, such as Articulatory Phonology, as well as in language teaching, speech therapy, and technological applications. More knowledge, particularly regarding temporal organization and dynamic aspects, is essential for technologies such as articulatory and audiovisual synthesis. Recent developments show that articulatory synthesis is worth revisiting as a research tool [4,5], as part of text-to-speech (TTS) systems, or as the basis for articulatory-based audiovisual speech synthesis [6].

Some articulatory-based phonological descriptions of speech sounds have appeared for different languages, boosted by increased access to direct measures of the vocal tract, such as electromagnetic articulography (EMA) or magnetic resonance imaging (MRI). For EP, there are some segmental descriptions using EMA [7] and MRI (static and real-time, for example, Reference [8]), and some work on onset coordination using EMA [2,3]. An initial description of EP adopting the Articulatory Phonology framework has also been proposed [7]. However, these descriptions analysed a reduced set of images or participants and need to be revised and improved.

Recent advances in the collection and processing of real-time MRI (RT-MRI) [9,10] promise to improve existing descriptions by providing high temporal and spatial resolution along with automated extraction of the relevant structures (for example, the vocal tract [11,12]). The huge amounts of collected data are only manageable through automatic, data-driven approaches. For scenarios in which such amounts of data need to be tackled (e.g., RT-MRI, EMA), the community has made an effort to contribute methods to extract and analyse features of interest [11,13,14,15,16]. To determine the most important articulators for each specific sound, that is, articulator criticality, several authors have proposed data-driven methods, for example, References [17,18,19,20,21,22]. Of particular interest, the statistical method proposed by Jackson and Singampalli [19] considers the positions of the EMA pellets as representative of the articulators and uses a statistical approach to determine the critical articulators for each phone.
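To give a flavour of what such a statistical approach can look like, the following minimal sketch ranks tract variables for one phone by how strongly the phone-specific distribution departs from the distribution over all frames. It assumes, for illustration only, independent 1D Gaussians and a Kullback-Leibler divergence as the score; the function names, the synthetic data, and the omission of correlations, iteration, and stopping criteria are all simplifications and do not reproduce the procedure of Reference [19].

```python
import numpy as np


def gaussian_kl(mu_p, var_p, mu_q, var_q):
    """KL divergence KL(p || q) between 1D Gaussians (element-wise)."""
    return 0.5 * (np.log(var_q / var_p) + (var_p + (mu_p - mu_q) ** 2) / var_q - 1.0)


def rank_variables(phone_frames, all_frames, names):
    """Rank variables by the divergence of the phone from the grand distribution."""
    grand_mu, grand_var = all_frames.mean(axis=0), all_frames.var(axis=0)
    phone_mu, phone_var = phone_frames.mean(axis=0), phone_frames.var(axis=0)
    scores = gaussian_kl(phone_mu, phone_var, grand_mu, grand_var)
    order = np.argsort(scores)[::-1]  # highest divergence first
    return [(names[i], float(scores[i])) for i in order]


# Synthetic, purely illustrative data: three variables, one hypothetical phone.
rng = np.random.default_rng(0)
names = ["LIPS", "TT", "TB"]
all_frames = rng.normal(0.0, 1.0, size=(2000, 3))
phone_frames = rng.normal(0.0, 1.0, size=(200, 3))
# For this phone the lips are tightly constrained around a displaced position.
phone_frames[:, 0] = rng.normal(-2.0, 0.1, size=200)

ranking = rank_variables(phone_frames, all_frames, names)
print(ranking[0][0])  # the variable with the largest divergence
```

In this toy setting the constrained variable is ranked first, while the unconstrained ones score close to zero.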

The authors’ previous work [23,24,25] explored computational methods initially proposed for EMA [19,26] to determine the critical articulators for EP phones from real-time MRI data at 14 and 50 fps, demonstrating the applicability of the methods to MRI data and presenting interesting, albeit preliminary, results. To further pursue this topic, this work presents first results towards critical variable (articulator) determination considering a representation of the vocal tract aligned with Articulatory Phonology and the Task Dynamics framework [27]. It extends previous work [25], further confirming our previous findings, by: (a) considering more articulatory data, both increasing it for previously considered speakers and adding a novel speaker; (b) improving the computation of tract variables (particularly the velum); (c) adding analysis details for all speakers (e.g., regarding the correlation among tract variables); and (d) providing a more in-depth exploration of individual tract variable components (e.g., constriction degree or velopharyngeal passage).

The remainder of this document is organized as follows: Section 2 and Section 3 provide a brief overview of relevant background and related work; Section 4 provides an overall description of the methods adopted to acquire, annotate, process, and revise articulatory data obtained from RT-MRI of the vocal tract to determine critical articulators; Section 5 presents the outcomes of the main stages of the critical articulator determination obtained considering tract variables aligned with the Task Dynamics framework, and these are discussed in Section 6; finally, Section 7 highlights the main contributions of this work and proposes routes for future efforts.

2. Background: Articulatory Phonology, Gestures and Critical Tract Variables

Speech sounds are not clearly defined static target configurations. Their production involves complex spatiotemporal trajectories of the vocal tract articulators, from the start of the movement until the release and back (e.g., in bilabial /p/, both lips move until the closure that produces the bilabial and then open again). This whole movement is a so-called articulatory gesture. Instead of phonological features, dynamic gestures are the units of speech in Articulatory Phonology [27,28] and define each particular sound. Gestures are therefore, on the one hand, the physically tractable movements of articulators, which are highly variable, depending, for example, on context and speaking rate, and, on the other hand, the invariant representations of motor commands for individual phones in the minds of speakers. In other words, they are both instructions to achieve the formation (and release) of a constriction at some place in the vocal tract (for example, an opening of the lips) and abstract phonological units with a distinctive function [27].

Since the vocal tract is contiguous, more articulators are activated simultaneously than the intended ones. Consequently, it is important to differentiate between the actively activated (critical) articulators and the less activated or passive ones. For example, in the production of alveolar sounds such as /t/ or /l/, the tongue tip needs to move up to the alveolar region (critical articulator) and, simultaneously, the tongue back and tongue body also show some movement, since they are all connected. For laterals, for example, the tongue body may also have a secondary importance in their production [29,30]. Some segments can be defined based on only one or two gestures: bilabials are defined based on the lip trajectories, whereas laterals, as mentioned before, are more complex and may include tongue tip and tongue body gestures.

Gestures are spatiotemporal entities, structured with a duration and a cycle. The cycle begins with the movement’s onset, continues with the movement toward the target (which may or may not be reached), then proceeds to the release, where the movement away from the constriction begins, and ends with the offset, where the articulators cease to be under active control of the gesture. Individual gestures are combined to form segments, consonant clusters, syllables, and words.

Gestures are specified by a set of tract variables and their constriction location and degree. Tract variables are related to the articulators and include Lips (LIPS), Tongue Tip (TT), Tongue Body (TB), Velum (VEL) and Glottis (GLO). Constriction location specifies the place of the constriction in the vocal tract and can assume the values labial, dental, alveolar, postalveolar, palatal, velar, uvular and pharyngeal. Constriction degree includes closed (for stops), critical (for fricatives), and narrow, mid and wide (approximants and vowels). For example, a possible specification for the alveolar stop /t/ in terms of gestures is Tongue Tip [constriction degree: closed, constriction location: alveolar] [31].
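The specification above maps naturally onto a small data structure. The sketch below is only one possible encoding, written for illustration: the class and field names are hypothetical, and making the location optional (so that a gesture may be given without a place) is an assumption of this sketch rather than part of the framework as described here.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class TractVariable(Enum):
    LIPS = "Lips"
    TT = "Tongue Tip"
    TB = "Tongue Body"
    VEL = "Velum"
    GLO = "Glottis"


class Degree(Enum):
    CLOSED = "closed"      # stops
    CRITICAL = "critical"  # fricatives
    NARROW = "narrow"
    MID = "mid"
    WIDE = "wide"


LOCATIONS = ("labial", "dental", "alveolar", "postalveolar",
             "palatal", "velar", "uvular", "pharyngeal")


@dataclass(frozen=True)
class Gesture:
    tract_variable: TractVariable
    degree: Degree
    location: Optional[str] = None  # assumption: location may be left unspecified

    def __post_init__(self):
        # Reject constriction locations outside the listed inventory.
        if self.location is not None and self.location not in LOCATIONS:
            raise ValueError(f"unknown constriction location: {self.location}")


# The example from the text: alveolar stop /t/.
T_STOP = Gesture(TractVariable.TT, Degree.CLOSED, "alveolar")
print(T_STOP)
```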

The tract variables involved in the critical gestures are considered critical tract variables and the involved articulators the critical articulators.

The Articulatory Phonology approach has been incorporated into a computational model by Haskins Laboratories researchers [32,33]. It is composed of three main processes, in sequence: (1) the Linguistic Gestural Model, responsible for transforming the input into a gestural score (a set of discrete, concurrently active gestures); (2) the Task Dynamic Model [32,33], which calculates the articulatory trajectories given the gestural score; and (3) the Articulatory Synthesizer, capable of obtaining, from the articulators’ trajectories, the global vocal tract shape and, ultimately, the speech waveform.
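As a rough illustration of the second stage, task dynamics is commonly described as driving each tract variable towards its gestural target with critically damped second-order (mass-spring) dynamics. The sketch below simulates a single tract variable under that description; the function name, unit mass, stiffness value, time step, and the lip aperture example are illustrative assumptions, and the actual model additionally handles gesture activation, blending, and the mapping to articulators.

```python
import math


def simulate_tract_variable(z0, target, stiffness=100.0, duration=1.0, dt=0.001):
    """Integrate z'' = -k (z - target) - b z' with critical damping (unit mass)."""
    damping = 2.0 * math.sqrt(stiffness)  # b = 2 * sqrt(k) gives critical damping
    z, v = z0, 0.0
    trajectory = [z]
    for _ in range(int(duration / dt)):
        a = -stiffness * (z - target) - damping * v
        v += a * dt  # semi-implicit Euler step
        z += v * dt
        trajectory.append(z)
    return trajectory


# Hypothetical example: lip aperture closing from 10 (arbitrary units) to 0.
trajectory = simulate_tract_variable(z0=10.0, target=0.0)
print(round(trajectory[0], 3), round(trajectory[-1], 3))
```

With these settings the variable approaches its target smoothly within the simulated second, which is the qualitative behaviour a gestural target specification is meant to produce.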

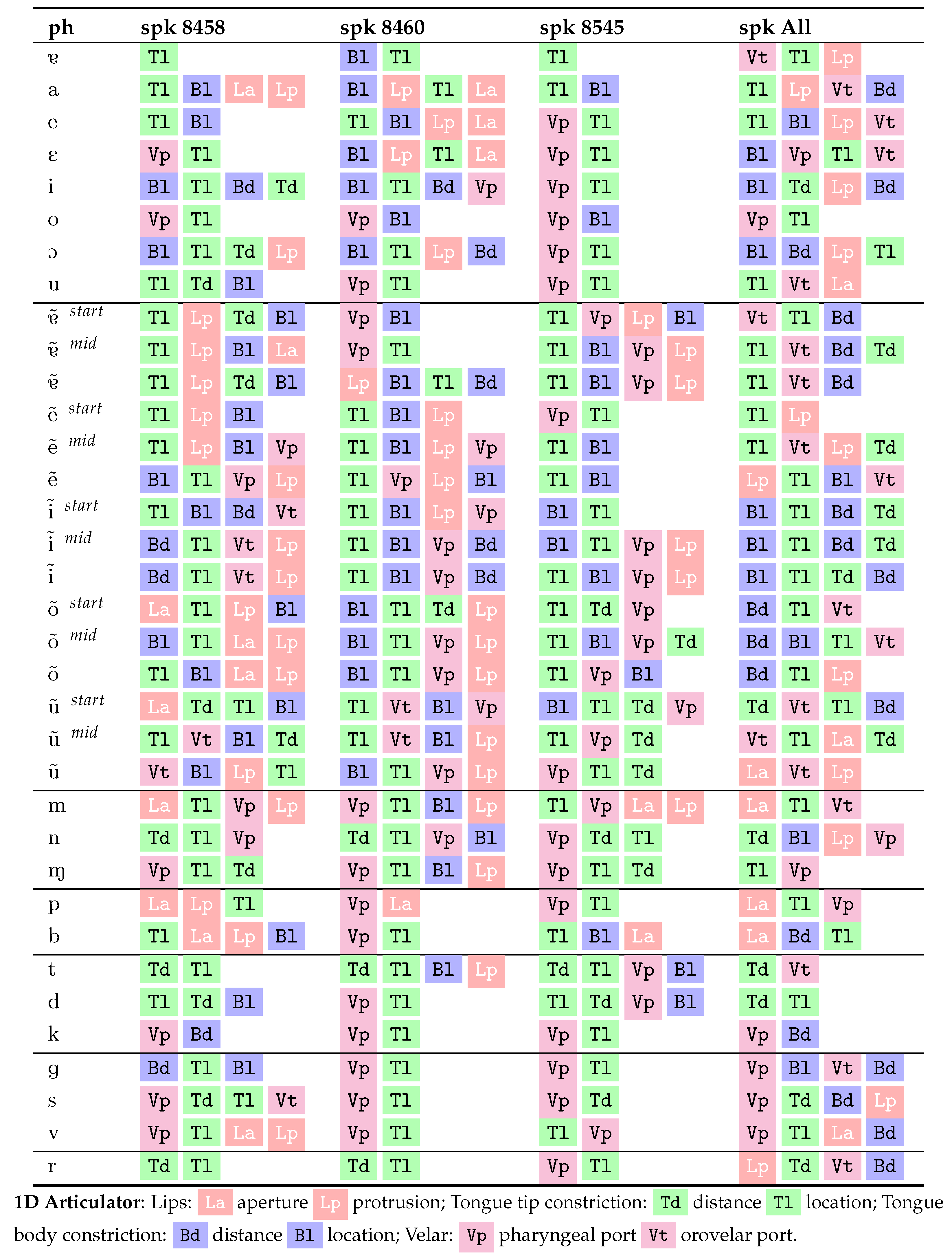

6. Discussion

When comparing our preliminary work [25] with the results presented here, several aspects are worth noting. First, a novel speaker was considered (8545, in the third column of Table 4 and Table 5), and the obtained results are consistent with those for the previously analysed speakers. Second, the larger number of data samples considered for speaker 8460, entailing a larger number of samples per phone and including more phonetic contexts, made some of the results more consistent with those of speaker 8458, as previously hypothesized [25]. Third, including one additional speaker in the normalized speaker did not disrupt the overall previous findings for the critical variable (articulator) analysis.

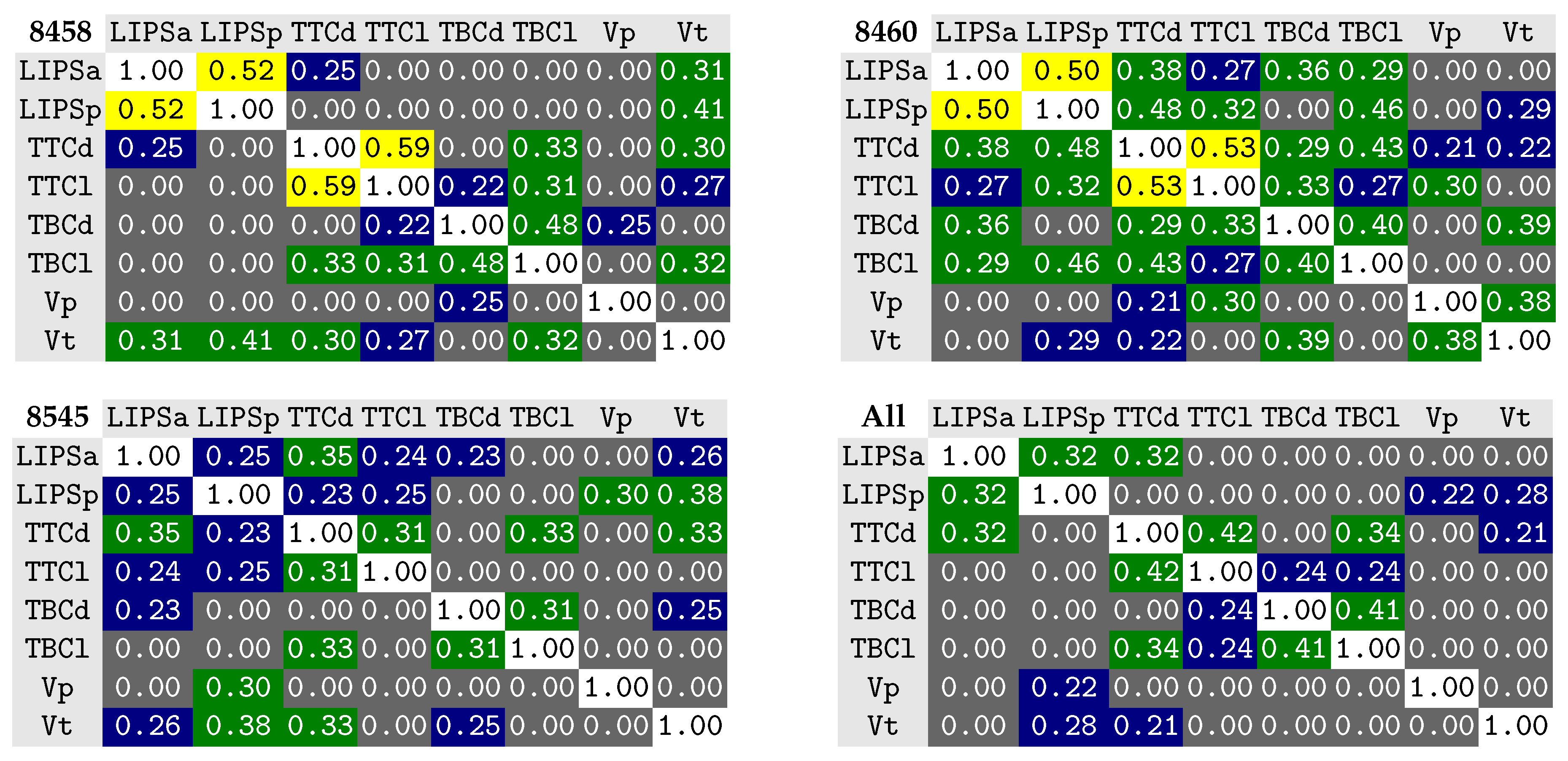

Concerning the 1D correlation among the different variable dimensions (see Figure 6), the variables are, overall, more decorrelated than in previous approaches considering landmarks over the vocal tract (e.g., see Silva et al. [24]), and the decorrelation has been further improved by the novel representation for the velar data considered in this work. The larger amount of data, in comparison with our first testing of tract variables aligned with Articulatory Phonology [25], resulted in an even smaller number of correlations. Speaker 8545 and the normalized speaker do not show any correlation worth noting.
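A correlation analysis of this kind can be sketched as follows. The example assumes a plain Pearson correlation (the measure actually used may differ), uses synthetic data in which one dependency is deliberately injected, and adopts an arbitrary reporting threshold of 0.5; only the component names are taken from Figure 6.

```python
import numpy as np

NAMES = ["LIPSa", "LIPSp", "TTCd", "TTCl", "TBCd", "TBCl", "Vp", "Vt"]


def correlated_pairs(data, names, threshold=0.5):
    """Return the correlation matrix and the pairs with |r| >= threshold."""
    corr = np.corrcoef(data, rowvar=False)  # columns are variables
    pairs = []
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            if abs(corr[i, j]) >= threshold:
                pairs.append((names[i], names[j], round(float(corr[i, j]), 2)))
    return corr, pairs


# Synthetic frames: independent components, except TTCl, which is made to
# depend on TTCd to mimic a degree/location coupling.
rng = np.random.default_rng(1)
data = rng.normal(size=(500, len(NAMES)))
data[:, 3] = 0.8 * data[:, 2] + 0.6 * rng.normal(size=500)

corr, pairs = correlated_pairs(data, NAMES)
print(pairs)
```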

The mild/weak correlations observed for the lips (protrusion vs. aperture) and tongue tip constriction (location vs. degree) are probably due to a bias introduced by the characteristics of the considered corpus. Regarding the tongue tip, mild correlations between TTCl and TTCd may appear because the strongest constrictions typically happen at the highest location angle.

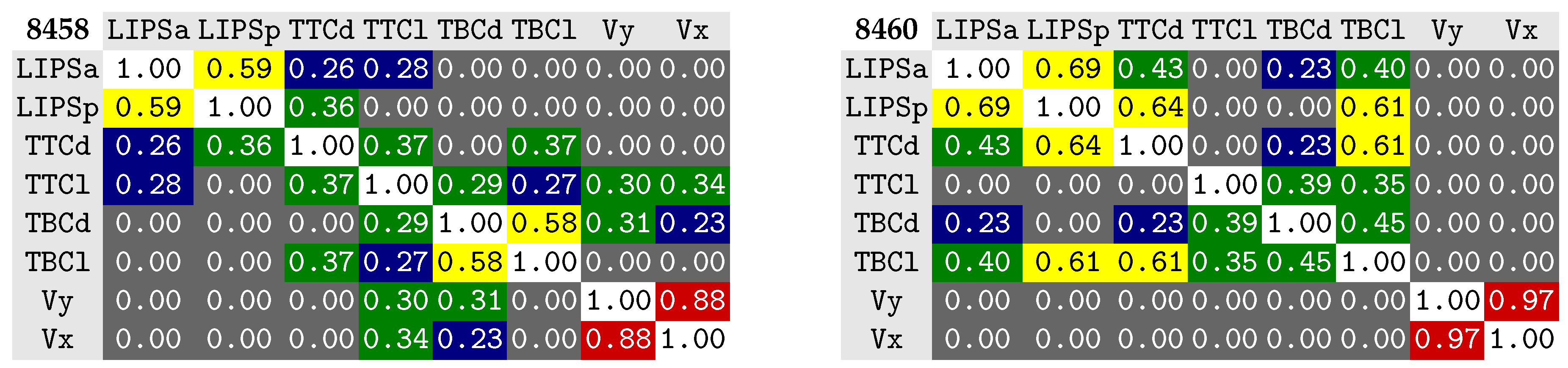

The correlations observed in our previous work (refer to Figure 7), for speaker 8460, between the lips and the tongue body and tongue tip have disappeared with the larger number of data samples considered, as hypothesized [25].

6.1. Individual Tract Variable Components

The analysis of critical articulators treating each tract variable dimension as an independent variable is much more prone to being affected by the number of data samples considered [19]. Therefore, while a few interesting results can be observed for some phones and speakers, some notable trends are not phonologically meaningful, such as the tongue tip constriction location (Tl) appearing prominently for the nasal vowels. Because the normalized speaker considers more data, some improvements are expected here when compared to the individual speakers and, indeed, it shows several promising results. Our discussion will therefore mostly concern the normalized data. At first glance, the tongue body (Bl and Bd) appears as critical in a prominent position for many of the vowels, as expected. The lip aperture (La) appears as critical for all bilabial segments (/p/, /b/, and /m/). The tongue tip constriction degree (Td) appears for the alveolars /n/, /t/ (with Vt) and /d/, the latter also with Tl, which seems to assert tighter conditions on tongue tip positioning for /d/. The velopharyngeal passage (Vp) appears as critical for the velar sounds /k/ (with Bd) and /g/ (with Bl, Vt, and Bd), probably because of some readjustments in the soft palate region preceding the velum. It also appears for the labiodental /v/ (with La) and for M, which makes sense, since the latter concerns the nasal tail.

Concerning the lips, it is solely lip aperture (La) that appears as critical for /u/ and its nasal counterpart, while lip protrusion (Lp) appears across several of the vowels. This might be a similar effect to what we have previously observed for the velum: an articulator may appear as critical in those cases where it is in a more fixed position during the articulation of a sound. The velum, for instance, tends to appear more prominently for oral vowels since, at the middle of their production, it is closed, while, at the end of a nasal vowel, it can be open to different extents. Therefore, Lp may appear as critical not because the sound entails protrusion, but because the amount of observed protrusion does not vary much across the different occurrences.
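This effect can be made concrete under the assumption, adopted here only for illustration, that criticality is scored with a Kullback-Leibler divergence between a phone-specific Gaussian and the grand Gaussian of a single tract variable component (the exact measure used by the method may differ). Writing $\mu_p, \sigma_p$ for the mean and standard deviation of the component over the occurrences of a phone and $\mu_g, \sigma_g$ for the corresponding grand statistics:

```latex
D_{\mathrm{KL}}\!\left(\mathcal{N}(\mu_p,\sigma_p^2)\,\middle\|\,\mathcal{N}(\mu_g,\sigma_g^2)\right)
  = \ln\frac{\sigma_g}{\sigma_p}
  + \frac{\sigma_p^2 + (\mu_p - \mu_g)^2}{2\sigma_g^2}
  - \frac{1}{2}
```

Even when the phone mean coincides with the grand mean ($\mu_p = \mu_g$), the expression reduces to $\ln(\sigma_g/\sigma_p) + \sigma_p^2/(2\sigma_g^2) - 1/2$, which is zero for $\sigma_p = \sigma_g$ and grows without bound as $\sigma_p \to 0$. A component can thus score highly purely because it varies little across occurrences, without being displaced from its average position.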

Given the restricted number of speakers and occurrences, one aspect that seems interesting, and should foster further analysis, is the appearance of the orovelar (Vt) and not the velopharyngeal (Vp) passage as critical for nasal vowels. This does not diminish the relevance of velum opening, but points out that the extent of the orovelar passage is more stable across occurrences and, hence, more critical. Additionally, it is also relevant to note that, for /ũ/ and its oral counterpart, the tongue body constriction does not appear as critical, as happens, for example, with /õ/. Since the velopharyngeal passage and the tongue body constriction do not appear as critical (only the orovelar passage does), this may hint that any variation of velar aperture across occurrences is compensated by tongue adjustments to keep the oral passage [42,43]. Also of note is the absence of Vt for the more fronted vowel /ĩ/ and its oral counterpart. Given the fronted position of the tongue, Vt is large and more variable, since its variation is not as limited by velar opening as it is for the back vowels.

One example that shows a different behavior between the tongue and velum is /g/, where both Vp and Vt are determined as critical and coincide with Bl and Bd, hinting that both the velum and tongue body are in a very fixed position along the occurrences of /g/. A similar result can also notably be observed for /k/.

Overall, Tl is still widely present (as with the individual speakers), mostly in disagreement with current phonological descriptions for EP. This should motivate further analysis considering more data (speakers and phonetic contexts) and different alternatives for the computation of the tongue tip constriction.

6.2. Critical Tract Variables

Overall, and considering that the corpus is prone to strong coarticulation effects, the obtained results closely follow our preliminary results presented in Silva et al. [25] and are mostly in accordance with previous descriptions considering Articulatory Phonology [7].

The TB is determined as the most critical articulator for most vowels, in accordance with the descriptions available in the literature. The appearance of V as a critical articulator for some oral vowels, earlier than for nasals, is aligned with previous outcomes of the method [19,23,24]. This is probably due to a more stable position of V at the middle of oral vowels (the selected frame) than at the different stages selected for the nasal vowels, for which it appears mostly in fourth place, possibly due to the conservative stopping criteria adopted to avoid phones without any reported critical articulator. It is also relevant to note that if, for instance, some of the nasal vowels are preceded by a nasal consonant, this affects velum position during the initial phase of the vowel, which will have an incomplete movement towards closure [44]. This might explain why V does not appear as critical in the first frame (start) of some nasal vowels (typically referred to as the oral stage [45]), since the velum is not in a stable position. The lips correctly appear, with some prominence, for the back rounded vowels /u/ and /o/ and their nasal counterparts, but the appearance of this articulator for unrounded low vowels, probably due to the limitations of the corpus, does not allow any conclusion for this articulator.

Regarding consonants, for /d/, /t/, /s/ and /r/, as expected, T is identified as the most critical articulator, although, for /s/, it disappears in the normalized speaker. The bilabials /p/, /b/ and /m/ correctly present L as the most critical articulator, and this is also observed for /v/, along with the expected prominence of V, except for speaker 8460. For /m/, V also appears, along with L, as expected. For /p/, the tongue tip appears as critical for two of the speakers, probably due to coarticulatory reasons, but disappears in the normalized speaker, which exhibits L and V, as expected. For /k/, V and TB are identified as the most critical articulators. Finally, for M, which denotes the nasal tail, it makes sense to have V as critical. The appearance of L in the normalized speaker is unexpected, since it does not appear for any of the other speakers.

By gathering the normalized data for the three speakers in speaker ALL, the method provided lists of critical articulators that are, overall, more succinct, cleaner, and closer to the expected outcomes when compared to the literature [7], even considering a simple normalization method.

This seems to indicate that the amount of data considered has a relevant impact on the outcomes. While this is to be expected, the amount of data seems to have a stronger effect than in previous approaches using more variables [24], probably due to the smaller number of dimensions representing the configuration for each phone.

These good results, obtained with a very simple normalization approach gathering the data for three speakers, may hint that the selected tract variables are not strongly prone to the influence of differences in articulator shape among speakers, as was the case when we considered landmarks over the tongue. Instead, they depict the outcomes of the relation between parts of the vocal tract, for example, the tongue and hard palate (constriction). Nevertheless, some cases where the normalized speaker failed to follow the trend observed for the individual speakers, as alluded to above (for example, for M), hint at the need to further improve the data normalization method.
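The normalization itself is not detailed in this section, so the following is only a hypothetical stand-in showing what a very simple scheme could look like: each speaker's tract variable components are z-scored independently and the results are pooled into a single "normalized speaker". The function name and the synthetic values are illustrative; only the per-speaker sample sizes are taken from Table 2.

```python
import numpy as np


def normalize_and_pool(per_speaker):
    """Z-score each speaker's frames per component, then stack all speakers."""
    pooled = []
    for frames in per_speaker.values():
        mu = frames.mean(axis=0)
        sd = frames.std(axis=0)
        pooled.append((frames - mu) / sd)  # removes speaker-specific offset and scale
    return np.vstack(pooled)


# Synthetic frames with speaker-specific offsets and scales (eight components).
rng = np.random.default_rng(2)
sizes = {"8458": 870, "8460": 750, "8545": 853}  # sample sizes as in Table 2
per_speaker = {
    spk: rng.normal(loc=5.0 * k, scale=1.0 + k, size=(n, 8))
    for k, (spk, n) in enumerate(sizes.items())
}

pooled = normalize_and_pool(per_speaker)
print(pooled.shape)
```

A scheme like this only aligns first- and second-order statistics, which is one reason why a more elaborate normalization might be needed for cases such as M.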

Author Contributions

Conceptualization, S.S. and A.T.; methodology, S.S. and A.T.; software, S.S. and N.A.; validation, S.S., N.A. and C.C.; formal analysis, S.S.; investigation, S.S., C.C., N.A., A.T., J.F. and A.J.; resources, A.T., C.C., S.S., J.F. and A.J.; data curation, S.S., C.C. and N.A.; writing–original draft preparation, S.S., A.T. and C.C.; writing–review and editing, A.T., S.S. and C.C.; visualization, S.S. and N.A.; supervision, A.T. and S.S.; project administration, S.S. and A.T.; funding acquisition, A.T., S.S. and C.C. All authors have read and agreed to the published version of the manuscript.

Funding

This research is partially funded by the German Federal Ministry of Education and Research (BMBF, with the project ‘Synchronic variability and change in European Portuguese’, 01UL1712X), by IEETA Research Unit funding (UID/CEC/00127/2019), by Portugal 2020 under the Competitiveness and Internationalization Operational Program, and the European Regional Development Fund through project SOCA – Smart Open Campus (CENTRO-01-0145-FEDER-000010) and project MEMNON (POCI-01-0145-FEDER-028976).

Acknowledgments

We thank all the participants for their time and voice and Philip Hoole for the scripts for noise suppression.

Conflicts of Interest

The authors declare no conflict of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript, or in the decision to publish the results.

References

- Marin, S.; Pouplier, M. Temporal organization of complex onsets and codas in American English: Testing the predictions of a gestural coupling model. Mot. Control 2010, 14, 380–407.

- Cunha, C. Portuguese lexical clusters and CVC sequences in speech perception and production. Phonetica 2015, 72, 138–161.

- Cunha, C. Die Organisation von Konsonantenclustern und CVC-Sequenzen in zwei portugiesischen Varietäten. Ph.D. Thesis, Ludwig Maximilian University of Munich, München, Germany, July 2012.

- Xu, A.; Birkholz, P.; Xu, Y. Coarticulation as synchronized dimension-specific sequential target approximation: An articulatory synthesis simulation. In Proceedings of the 19th International Congress of Phonetic Sciences, Melbourne, Australia, 5–9 August 2019.

- Alexander, R.; Sorensen, T.; Toutios, A.; Narayanan, S. A modular architecture for articulatory synthesis from gestural specification. J. Acoust. Soc. Am. 2019, 146, 4458–4471.

- Silva, S.; Teixeira, A.; Orvalho, V. Articulatory-based Audiovisual Speech Synthesis: Proof of Concept for European Portuguese. In Proceedings of the Iberspeech 2016, Lisbon, Portugal, 23–25 November 2016; pp. 119–126.

- Oliveira, C. From Grapheme to Gesture. Linguistic Contributions for an Articulatory Based Text-To-Speech System. Ph.D. Thesis, University of Aveiro, Aveiro, Portugal, 2009.

- Martins, P.; Oliveira, C.; Silva, S.; Teixeira, A. Velar movement in European Portuguese nasal vowels. In Proceedings of the Iberspeech 2012, Madrid, Spain, 21–23 November 2012; pp. 231–240.

- Scott, A.D.; Wylezinska, M.; Birch, M.J.; Miquel, M.E. Speech MRI: Morphology and function. Phys. Med. 2014, 30, 604–618.

- Lingala, S.G.; Sutton, B.P.; Miquel, M.E.; Nayak, K.S. Recommendations for real-time speech MRI. J. Magn. Reson. Imaging 2016, 43, 28–44.

- Silva, S.; Teixeira, A. Unsupervised Segmentation of the Vocal Tract from Real-Time MRI Sequences. Comput. Speech Lang. 2015, 33, 25–46.

- Labrunie, M.; Badin, P.; Voit, D.; Joseph, A.A.; Frahm, J.; Lamalle, L.; Vilain, C.; Boë, L.J. Automatic segmentation of speech articulators from real-time midsagittal MRI based on supervised learning. Speech Commun. 2018, 99, 27–46.

- Lammert, A.C.; Proctor, M.I.; Narayanan, S.S. Data-driven analysis of realtime vocal tract MRI using correlated image regions. In Proceedings of the Eleventh Annual Conference of the International Speech Communication Association, Chiba, Japan, 26–30 September 2010.

- Chao, Q. Data-Driven Approaches to Articulatory Speech Processing. Ph.D. Thesis, University of California, Merced, CA, USA, May 2011.

- Black, M.P.; Bone, D.; Skordilis, Z.I.; Gupta, R.; Xia, W.; Papadopoulos, P.; Chakravarthula, S.N.; Xiao, B.; Segbroeck, V.M.; Kim, J.; et al. Automated evaluation of non-native English pronunciation quality: Combining knowledge-and data-driven features at multiple time scales. In Proceedings of the Sixteenth Annual Conference of the International Speech Communication Association, Dresden, Germany, 6–10 September 2015.

- Silva, S.; Teixeira, A. Quantitative systematic analysis of vocal tract data. Comput. Speech Lang. 2016, 36, 307–329.

- Kim, J.; Toutios, A.; Lee, S.; Narayanan, S.S. A kinematic study of critical and non-critical articulators in emotional speech production. J. Acoust. Soc. Am. 2015, 137, 1411–1429.

- Sepulveda, A.; Castellanos-Domínguez, G.; Guido, R.C. Time-frequency relevant features for critical articulators movement inference. In Proceedings of the 20th European Signal Processing Conference (EUSIPCO), Bucharest, Romania, 27–31 August 2012.

- Jackson, P.J.; Singampalli, V.D. Statistical identification of articulation constraints in the production of speech. Speech Commun. 2009, 51, 695–710.

- Ananthakrishnan, G.; Engwall, O. Important regions in the articulator trajectory. In Proceedings of the 8th International Seminar on Speech Production (ISSP’08), Strasbourg, France, 8–12 December 2008; pp. 305–308.

- Ramanarayanan, V.; Segbroeck, M.V.; Narayanan, S.S. Directly data-derived articulatory gesture-like representations retain discriminatory information about phone categories. Comput. Speech Lang. 2016, 36, 330–346.

- Prasad, A.; Ghosh, P.K. Information theoretic optimal vocal tract region selection from real time magnetic resonance images for broad phonetic class recognition. Comput. Speech Lang. 2016, 39, 108–128.

- Silva, S.; Teixeira, A.J. Critical Articulators Identification from RT-MRI of the Vocal Tract. In Proceedings of the INTERSPEECH, Stockholm, Sweden, 20–24 August 2017.

- Silva, S.; Teixeira, A.; Cunha, C.; Almeida, N.; Joseph, A.A.; Frahm, J. Exploring Critical Articulator Identification from 50Hz RT-MRI Data of the Vocal Tract. In Proceedings of the INTERSPEECH, Graz, Austria, 15–19 September 2019.

- Silva, S.; Cunha, C.; Teixeira, A.; Joseph, A.; Frahm, J. Towards Automatic Determination of Critical Gestures for European Portuguese Sounds. In Proceedings of the International Conference on Computational Processing of the Portuguese Language, Évora, Portugal, 2–4 March 2020.

- Jackson, P.J.; Singampalli, V.D. Statistical identification of critical, dependent and redundant articulators. J. Acoust. Soc. Am. 2008, 123, 3321.

- Goldstein, L.; Byrd, D.; Saltzman, E. The role of vocal tract gestural action units in understanding the evolution of phonology. In Action to Language via Mirror Neuron System; Cambridge University Press: Cambridge, UK, 2006; pp. 215–249.

- Browman, C.P.; Goldstein, L. Gestural specification using dynamically-defined articulatory structures. J. Phon. 1990, 18, 299–320.

- Proctor, M. Towards a gestural characterization of liquids: Evidence from Spanish and Russian. Lab. Phonol. 2011, 2, 451–485.

- Recasens, D. A cross-language acoustic study of initial and final allophones of /l/. Speech Commun. 2012, 54, 368–383.

- Teixeira, A.; Oliveira, C.; Barbosa, P. European Portuguese articulatory based text-to-speech: First results. In Proceedings of the International Conference on Computational Processing of the Portuguese Language, Aveiro, Portugal, 8–10 September 2008.

- Saltzman, E.L.; Munhall, K.G. A dynamical approach to gestural patterning in speech production. Ecol. Psychol. 1989, 1, 333–382.

- Nam, H.; Goldstein, L.; Saltzman, E.; Byrd, D. TADA: An enhanced, portable Task Dynamics model in MATLAB. J. Acoust. Soc. Am. 2004, 115, 2430.

- Oliveira, C.; Teixeira, A. On gestures timing in European Portuguese nasals. In Proceedings of the ICPhS, Saarbrücken, Germany, 6–10 August 2007; pp. 405–408.

- Almeida, N.; Silva, S.; Teixeira, A.; Cunha, C. Collaborative Quantitative Analysis of RT-MRI. In Proceedings of the 12th International Seminar on Speech Production (ISSP), Providence, RI, USA, 14–18 December 2020.

- Uecker, M.; Zhang, S.; Voit, D.; Karaus, A.; Merboldt, K.D.; Frahm, J. Real-time MRI at a resolution of 20 ms. NMR Biomed. 2010, 23, 986–994.

- Browman, C.P.; Goldstein, L. Some notes on syllable structure in articulatory phonology. Phonetica 1988, 45, 140–155.

- Feng, G.; Castelli, E. Some acoustic features of nasal and nasalized vowels: A target for vowel nasalization. J. Acoust. Soc. Am. 1996, 99, 3694–3706.

- Teixeira, A.; Vaz, F. European Portuguese nasal vowels: An EMMA study. In Proceedings of the Seventh European Conference on Speech Communication and Technology, Aalborg, Denmark, 3–7 September 2001.

- Rao, M.; Seth, S.; Xu, J.; Chen, Y.; Tagare, H.; Príncipe, J.C. A test of independence based on a generalized correlation function. Signal Process. 2011, 91, 15–27.

- Johnson, R.A.; Wichern, D.W. Applied Multivariate Statistical Analysis; McGraw-Hill: New York, NY, USA, 2007.

- Cunha, C.; Silva, S.; Teixeira, A.; Oliveira, C.; Martins, P.; Joseph, A.A.; Frahm, J. On the Role of Oral Configurations in European Portuguese Nasal Vowels. In Proceedings of the INTERSPEECH 2019, Graz, Austria, 15–19 September 2019.

- Carignan, C. Covariation of nasalization, tongue height, and breathiness in the realization of F1 of Southern French nasal vowels. J. Phon. 2017, 63, 87–105.

- Teixeira, A.; Vaz, F.; Príncipe, J.C. Nasal Vowels After Nasal Consonants. In Proceedings of the 5th Seminar on Speech Production: Models and Data, Bavaria, Germany, 1–4 May 2000.

- Parkinson, S. Portuguese nasal vowels as phonological diphthongs. Lingua 1983, 61, 157–177.

- Mitra, V.; Nam, H.; Espy-Wilson, C.Y.; Saltzman, E.; Goldstein, L. Retrieving tract variables from acoustics: A comparison of different machine learning strategies. IEEE J. Sel. Top. Signal Process. 2010, 4, 1027–1045.

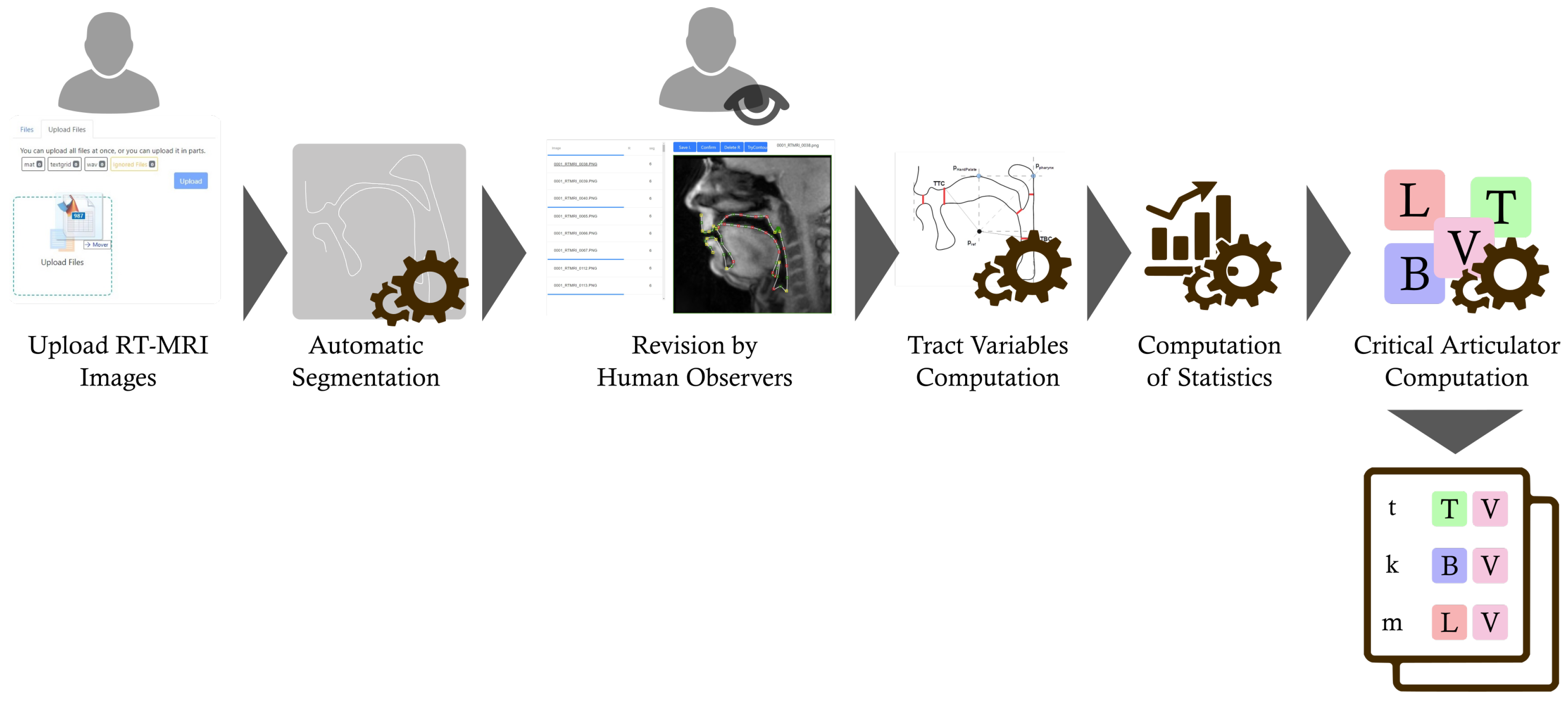

Figure 1.

Overall steps of the method to determine the critical articulators from real-time MRI (RT-MRI) images of the vocal tract. After MRI acquisition and audio annotation, the data are uploaded to our speech studies platform, under development [35], and their processing and analysis are carried out, resulting in a list of critical tract variables per phone. Refer to the text for additional details.

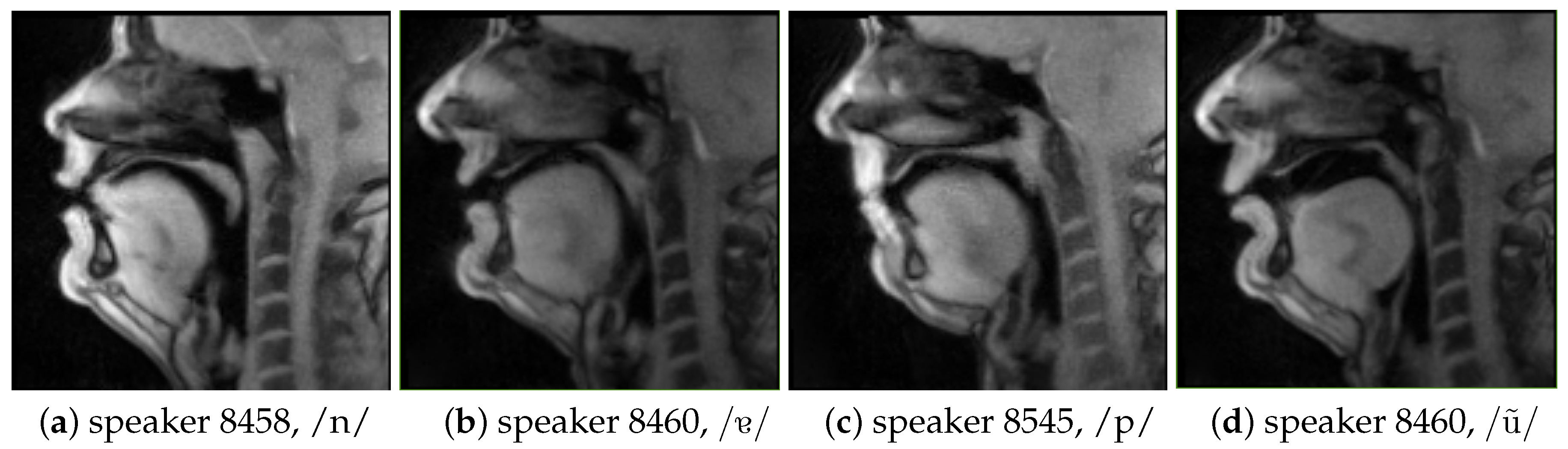

Figure 2.

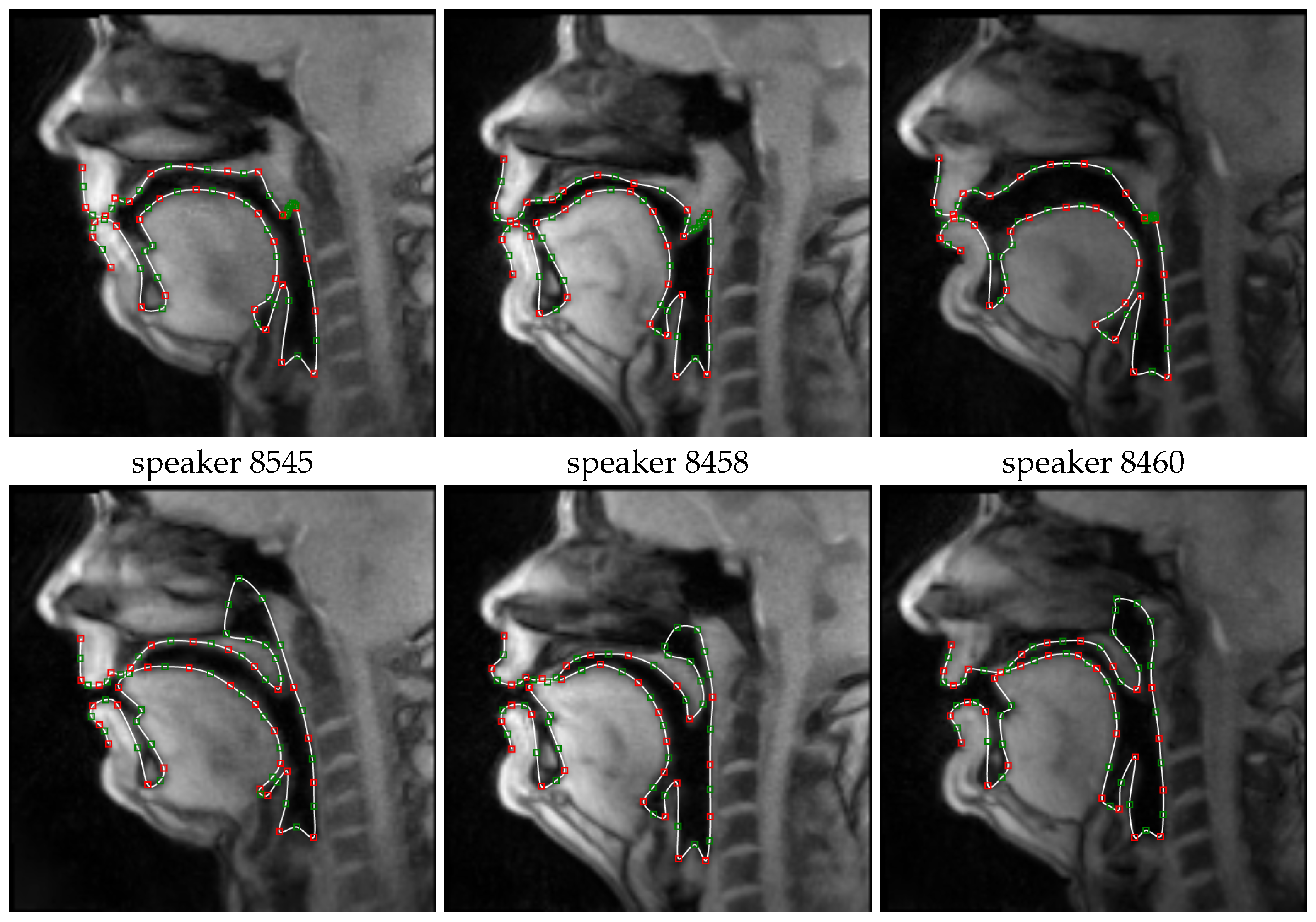

Illustrative examples of midsagittal real-time MRI images of the vocal tract, for different speakers and sounds.

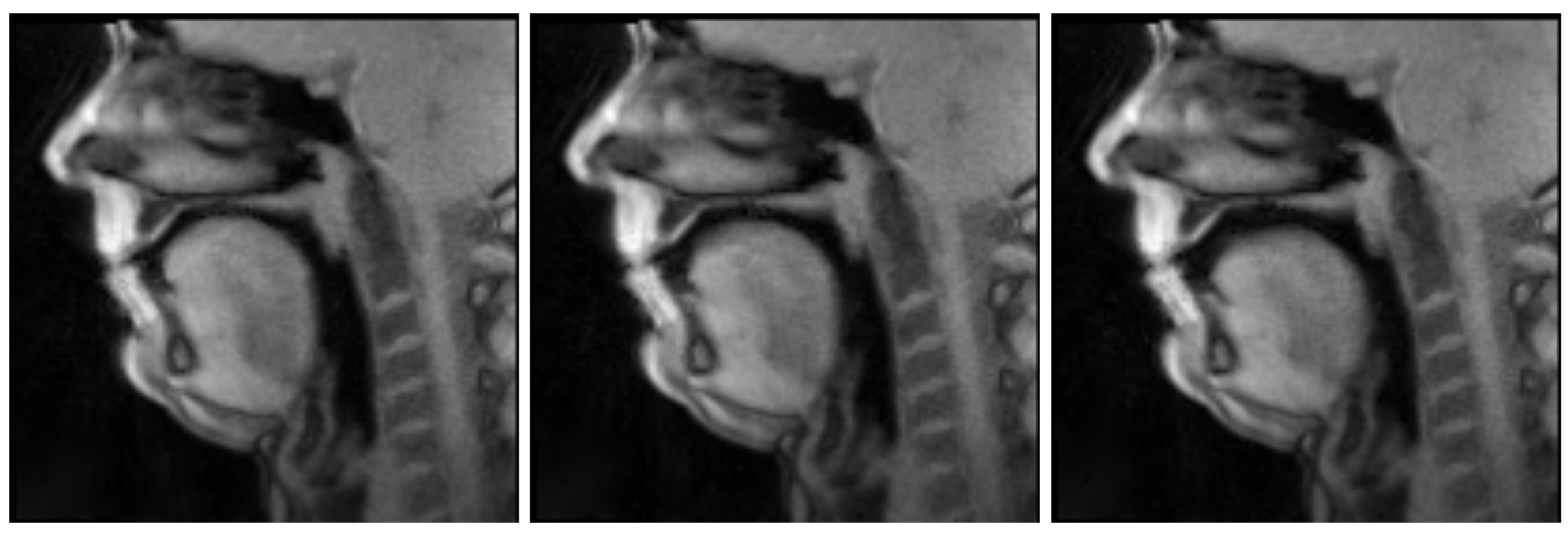

Figure 3.

Midsagittal real-time MRI image sequence of speaker 8545 articulating /p/ as in the nonsense word [pɐnetɐ]. The images have been automatically identified considering the corresponding time interval annotated based on the audio recorded during the acquisition. Note the closed lips throughout and their opening, in the last frame, to produce the following /ɐ/.

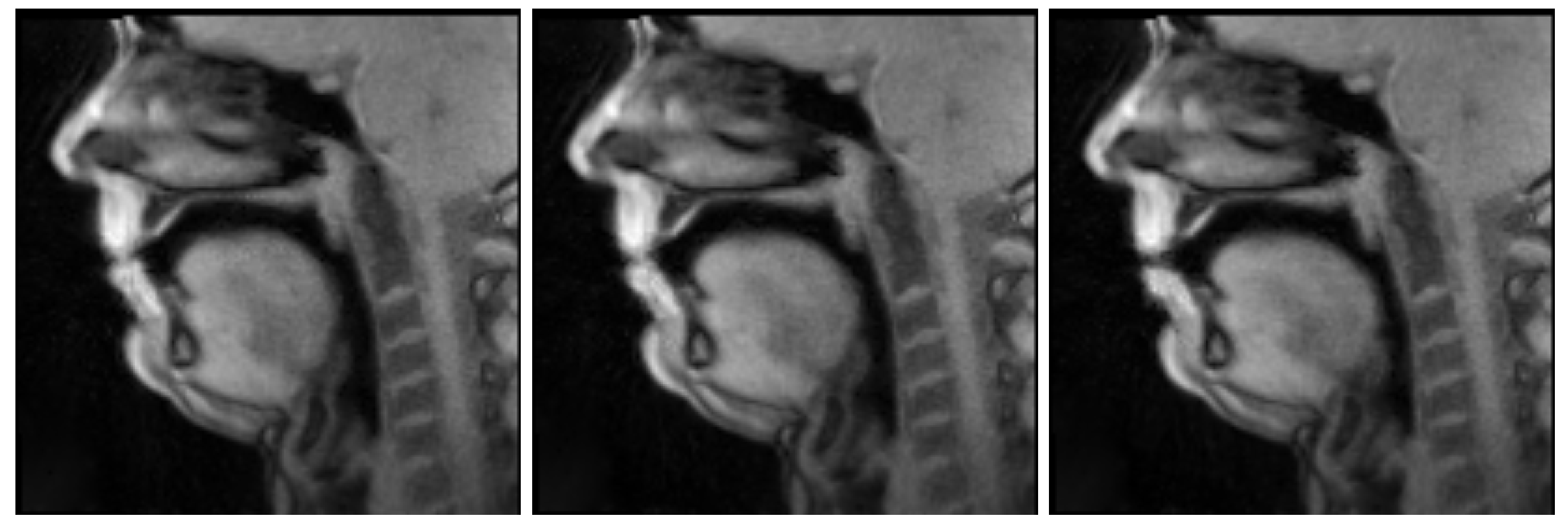

Figure 4.

Illustrative examples of the automatically segmented vocal tract contours represented over the corresponding midsagittal real-time MRI images for three speakers uttering /p/, on the top row, and /n/ on the bottom row.

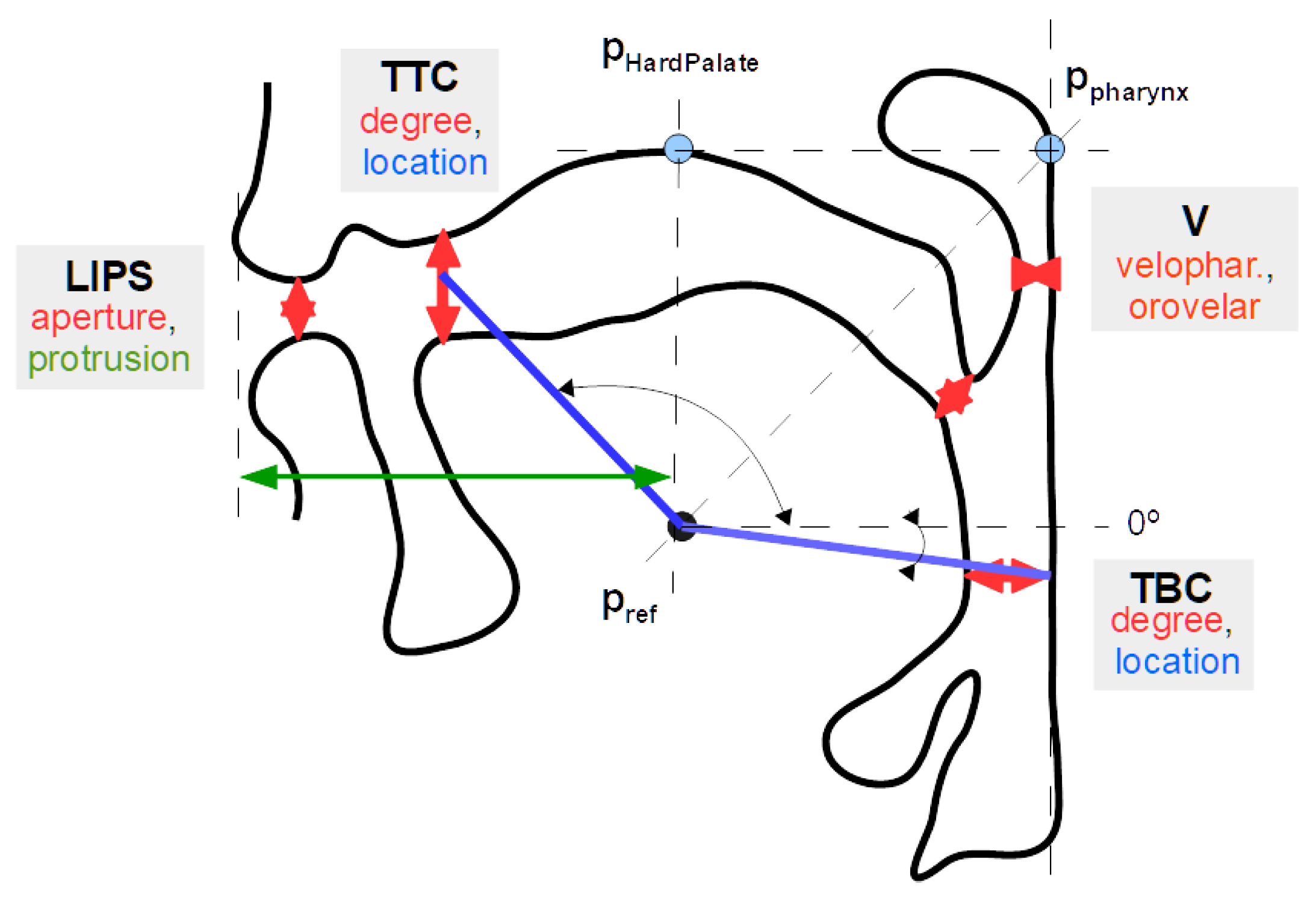

Figure 5.

Illustrative vocal tract representation depicting the main aspects of the considered tract variables: tongue tip constriction (TTC, defined by degree and location); tongue body constriction (TBC, defined by degree and location), computed considering both the pharyngeal wall and hard palate; velum (V, defined by the extent of the velopharyngeal and orovelar passages); and lips (LIPS, defined by aperture and protrusion). The point is used as a reference for computing constriction angular locations. Please refer to the text for further details.
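As a geometric illustration of how a constriction could be characterized by degree and angular location, the sketch below takes two contours as point lists, uses the minimum point-to-point distance as the degree, and measures the angle of the constriction midpoint with respect to a reference point. The function name, the midpoint convention, the angle convention, and the toy coordinates are all assumptions of this sketch; the actual computation is described in the text of the methods.

```python
import numpy as np


def constriction(tongue, wall, reference):
    """Return (degree, location in degrees) for two contours given as (n, 2) arrays."""
    tongue = np.asarray(tongue, dtype=float)
    wall = np.asarray(wall, dtype=float)
    reference = np.asarray(reference, dtype=float)
    # Pairwise distances between every tongue point and every wall point.
    dists = np.linalg.norm(tongue[:, None, :] - wall[None, :, :], axis=2)
    i, j = np.unravel_index(np.argmin(dists), dists.shape)
    degree = dists[i, j]
    # Angle of the midpoint of the narrowest passage, seen from the reference point.
    dx, dy = (tongue[i] + wall[j]) / 2.0 - reference
    location = np.degrees(np.arctan2(dy, dx))
    return float(degree), float(location)


# Toy contours (arbitrary units): the narrowest passage is directly above the reference.
tongue = [(0.0, 1.0), (1.0, 1.5), (2.0, 1.0)]
palate = [(0.0, 3.0), (1.0, 2.0), (2.0, 3.0)]
degree, location = constriction(tongue, palate, reference=(1.0, 0.0))
print(degree, location)
```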

Figure 6.

Correlation among the different components of the considered tract variables (1D correlation) for the three speakers 8458, 8460 and 8545, and for the speaker gathering the normalized data. Tract variables for 1D correlation: LIPSa: lip aperture; LIPSp: lip protrusion; TTCd: tongue tip constriction distance; TTCl: tongue tip constriction location; TBCd: tongue body constriction distance; TBCl: tongue body constriction location; Vp: velar port distance; Vt: orovelar port distance.

Figure 7.

Correlation matrices for previous results [25] considering Articulatory Phonology aligned tract variables for two of the speakers also considered in this work (8458 and 8460). In this previous work, we considered fewer data samples per speaker and represented the velum by the x and y coordinates of a landmark positioned at its back. Please refer to Figure 6 for the corresponding matrices obtained in the current work. Tract variables for 1D correlation (previous work): LIPSa: lip aperture; LIPSp: lip protrusion; TTCd: tongue tip constriction distance; TTCl: tongue tip constriction location; TBCd: tongue body constriction distance; TBCl: tongue body constriction location; Vx: velar landmark x; Vy: velar landmark y.

Table 1.

Summary of the criteria used for selecting the representative frame for particular phones.

| Class | Phone (SAMPA) | Criterion |
|---|---|---|
| Oral vowels | 6, a, e, E, i, o, O, u | midpoint |
| Nasal vowels | , , , , | three classes were created, taking the first, middle, and final frames |
| Nasal consonants | m, n | [m], frame with minimum inter-lip distance; [n], midpoint |
| Stops | p, b, k, d, g, t | [p] and [b], frame with minimum inter-lip distance; [k], [d], [g], and [t], midpoint |
| Fricatives | s, v | midpoint |
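The criteria in Table 1 can be read as a small selection rule applied to the frames of each annotated phone interval. The Python sketch below illustrates that rule. The function name `select_frames`, the `nasal_vowel` flag, and the per-frame `lip_distances` input are hypothetical names introduced for illustration and are not taken from the original processing pipeline. For intervals with an even number of frames, the midpoint is assumed here to be the frame at index `n // 2`.

```python
# Phones whose representative frame is the one with minimum inter-lip distance
# (bilabials in Table 1).
MIN_LIP_PHONES = {"p", "b", "m"}


def select_frames(phone, lip_distances, nasal_vowel=False):
    """Return the indices of the representative frame(s) of one phone interval.

    phone: SAMPA label of the phone.
    lip_distances: one inter-lip distance per frame of the interval
        (hypothetical input; only used for the bilabials).
    nasal_vowel: True when the phone is a nasal vowel (hypothetical flag).
    """
    n = len(lip_distances)
    if n == 0:
        raise ValueError("the phone interval contains no frames")
    mid = n // 2  # assumed midpoint convention
    if phone in MIN_LIP_PHONES:
        # Frame with the smallest inter-lip distance (first one on ties).
        return [min(range(n), key=lambda i: lip_distances[i])]
    if nasal_vowel:
        # Three classes: first, middle, and final frames.
        return [0, mid, n - 1]
    # All remaining phones: midpoint of the interval.
    return [mid]


frames_p = select_frames("p", [3.0, 1.0, 0.2, 0.9])
frames_a = select_frames("a", [2.0, 2.1, 2.3, 2.2, 2.0])
frames_nasal = select_frames("6~", [2.0, 2.1, 2.3, 2.2, 2.0], nasal_vowel=True)
```

Returning a list in every case keeps the nasal vowels, which contribute three frames, consistent with the single-frame phones.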

Table 2.

Summary of the computed statistics for each landmark and corresponding notation as in Reference [19].

| Grand Stats | Not. | Comment |
|---|---|---|
| grand mean | M | all selected frames |
| grand variance | | all selected frames |
| total sample size | N | Spk 8458: 870; Spk 8460: 750; Spk 8545: 853; Spk All: 2473 |
| corr. matrix | | keeping statistically significant and strong correlations ( and ) |
| Phone Stats | Not. | Comment |
| mean | | frames selected for each phone |
| variance | | frames selected for each phone |
| sample size | | variable among phones |
| corr. matrix | | regardless of significance and magnitude |

Table 3.

Canonical correlation for pairs of the chosen tract variables (2D canonical correlation) for the three speakers (8458, 8460, and 8545) and for the speaker gathering the normalized data.

| Tract variable pair | 8458 | | 8460 | | 8545 | | All | |
|---|---|---|---|---|---|---|---|---|
| | 0.26 | 0.00 | 0.51 | 0.00 | 0.43 | 0.00 | 0.37 | 0.00 |
| | 0.00 | 0.00 | 0.47 | 0.31 | 0.25 | 0.00 | 0.00 | 0.00 |
| | 0.44 | 0.00 | 0.37 | 0.00 | 0.50 | 0.00 | 0.37 | 0.00 |
| | 0.36 | 0.00 | 0.47 | 0.00 | 0.33 | 0.00 | 0.42 | 0.00 |
| | 0.33 | 0.21 | 0.38 | 0.25 | 0.34 | 0.00 | 0.32 | 0.00 |
| | 0.32 | 0.30 | 0.49 | 0.00 | 0.36 | 0.00 | 0.30 | 0.00 |
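For two tract variables with two components each (e.g., LIPS with aperture and protrusion), the canonical correlations are the square roots of the eigenvalues of $S_{xx}^{-1} S_{xy} S_{yy}^{-1} S_{yx}$, a 2 × 2 matrix whose eigenvalues have a closed form. The Python sketch below illustrates this computation with sample covariances; it is not the authors' implementation, applies no significance filtering, and all function names are hypothetical.

```python
from math import sqrt


def _cov(u, v):
    """Sample covariance of two equally long sequences."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (n - 1)


def _cov_block(a, b):
    """2x2 covariance block between the components of a and b."""
    return [[_cov(p, q) for q in b] for p in a]


def _inv2(m):
    """Inverse of a 2x2 matrix."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular covariance block")
    return [[d / det, -b / det], [-c / det, a / det]]


def _mul2(p, q):
    """Product of two 2x2 matrices."""
    return [[sum(p[i][k] * q[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def canonical_correlations(x, y):
    """Return the two canonical correlations, largest first.

    x, y: pairs of sample lists, one list per tract variable component.
    """
    sxx, syy, sxy = _cov_block(x, x), _cov_block(y, y), _cov_block(x, y)
    syx = [[sxy[j][i] for j in range(2)] for i in range(2)]  # transpose
    m = _mul2(_mul2(_inv2(sxx), sxy), _mul2(_inv2(syy), syx))
    # Eigenvalues of a 2x2 matrix from its trace and determinant.
    half_trace = (m[0][0] + m[1][1]) / 2
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    disc = sqrt(max(half_trace * half_trace - det, 0.0))
    eigenvalues = (half_trace + disc, half_trace - disc)
    # Clamp to [0, 1] to absorb rounding errors before the square root.
    return tuple(sqrt(min(max(e, 0.0), 1.0)) for e in eigenvalues)


x = ([1, 2, 3, 4, 5, 6], [2, 1, 4, 3, 6, 5])
y = ([3, 5, 7, 9, 11, 13], [1, -1, 2, 0, 1, -2])  # y[0] = 2 * x[0] + 1
r1, r2 = canonical_correlations(x, y)
```

Because the first component of `y` is an exact linear function of the first component of `x`, the first canonical correlation in this toy example is 1 up to rounding.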

Table 4.

Critical articulators for the different phones and speakers. Each component of the considered tract variables is treated as an articulator (1D analysis). For brevity, only four articulators are presented for phones yielding a list of more than four critical articulators. The order of the articulators reflects their determined importance. To save space, the T and C are omitted from the tract variable names; for example, TBCd becomes Bd.

Table 5.

Critical articulators for the different phones and speakers. Each tract variable is treated as an articulator (2D analysis). The order of the articulators, for each phone, reflects their importance. The two rightmost columns present the critical articulators determined from the normalized data gathered for all speakers (spk All) and a characterization of EP sounds based on the principles of Articulatory Phonology as found in Oliveira [7]. To save space, the T and C are omitted from the tract variable names; for example, TBC becomes B.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).