Mobile Applications for Resting Tremor Assessment in Parkinson’s Disease: A Systematic Review

Abstract

1. Introduction

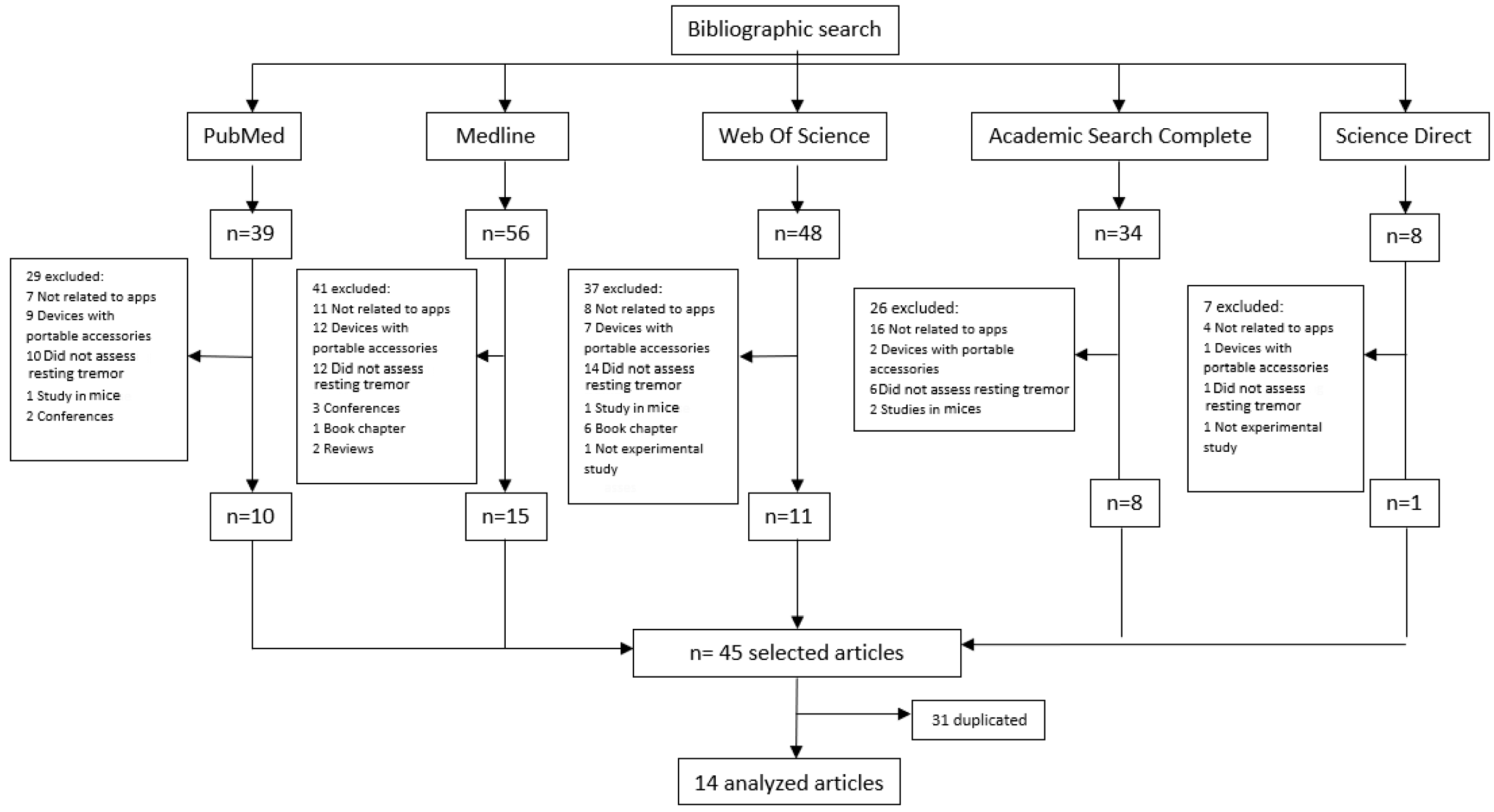

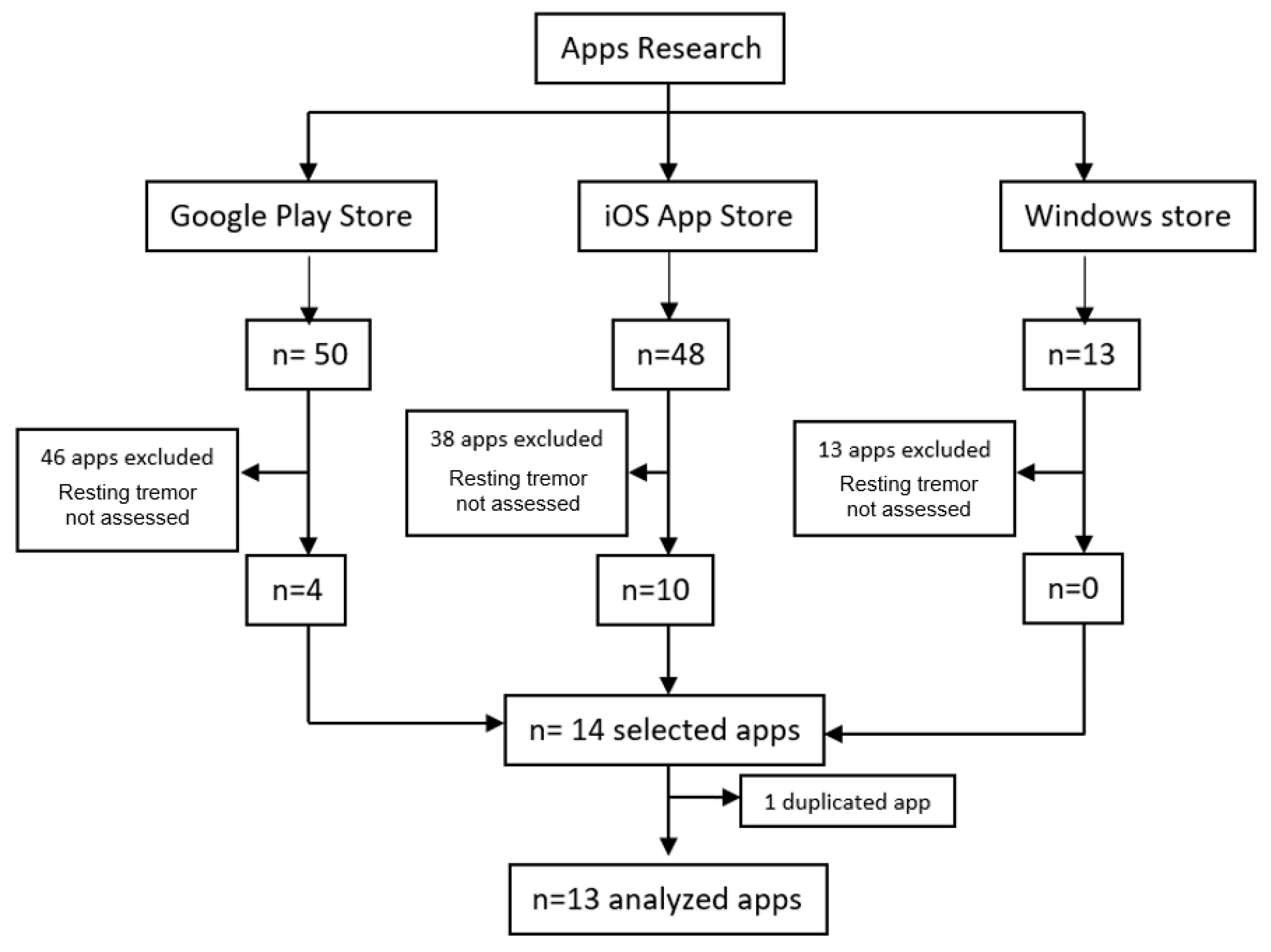

2. Materials and Methods

2.1. Design

2.2. Search Strategy

2.3. Eligibility Criteria

2.4. Extracting Information and Managing Data

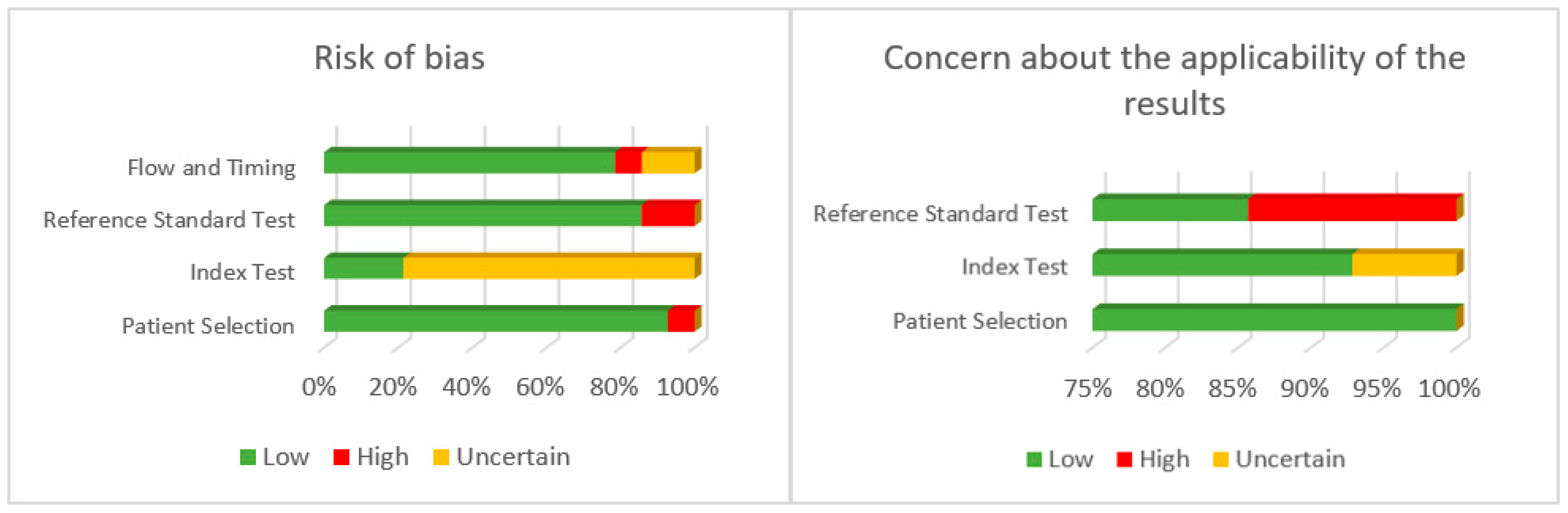

2.5. Assessing the Quality of Evidence

3. Results

3.1. Participants

3.2. Apps in Databases

3.3. App Markets

3.4. Quality of Evidence

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Kalia, L.V.; Lang, A.E. Parkinson’s disease. Lancet 2015, 386, 896–912.

2. Obeso, J.A.; Stamelou, M.; Goetz, C.G.; Poewe, W.; Lang, A.E.; Weintraub, D.; Burn, D.; Halliday, G.M.; Bezard, E.; Przedborski, S.; et al. Past, present, and future of Parkinson’s disease: A special essay on the 200th Anniversary of the Shaking Palsy. Mov. Disord. 2017, 32, 1264–1310.

3. Sveinbjornsdottir, S. The clinical symptoms of Parkinson’s disease. J. Neurochem. 2016, 139, 318–324.

4. Gövert, F.; Becktepe, J.; Deuschl, G. The new tremor classification of the International Parkinson and Movement Disorder Society: Update on frequent tremors. Nervenarzt 2018, 89, 376–385.

5. Hallett, M. Parkinson’s disease tremor: Pathophysiology. Park. Relat. Disord. 2012, 18, 85–86.

6. Elble, R.; Bain, P.; Forjaz, M.J.; Haubenberger, D.; Testa, C.; Goetz, C.G.; Leentjens, A.F.G.; Martinez-Martin, P.; Traon, A.P.; Post, B.; et al. Task force report: Scales for screening and evaluating tremor: Critique and recommendations. Mov. Disord. 2013, 28, 1793–1800.

7. Ondo, W.; Hashem, V.; LeWitt, P.A.; Pahwa, R.; Shih, L.; Tarsy, D.; Zesiewicz, T.; Elble, R. Comparison of the Fahn-Tolosa-Marin Clinical Rating Scale and the Essential Tremor Rating Assessment Scale. Mov. Disord. Clin. Pract. 2017, 5, 60–65.

8. Forjaz, M.J.; Ayala, A.; Testa, C.M.; Bain, P.G.; Elble, R.; Haubenberger, D.; Rodriguez-Blazquez, C.; Deuschl, G.; Martínez-Martín, P. Proposing a Parkinson’s disease-specific tremor scale from the MDS-UPDRS. Mov. Disord. 2015, 30, 1139–1143.

9. Shaw, T.; McGregor, D.; Brunner, M.; Keep, M.; Janssen, A.; Barnet, S. What is eHealth? Development of a Conceptual Model for eHealth: Qualitative Study with Key Informants. J. Med. Internet Res. 2017, 19, e324.

10. Sociedad Digital en España: 2020–2021. Available online: https://www.fundaciontelefonica.com/cultura-digital/publicaciones/sociedad-digital-en-espana-2020-2021/730/ (accessed on 20 March 2022).

11. La Revolución del mHealth en Salud: De las Apps al Dato de Salud Integrado. Available online: https://www.ehcos.com/la-revolucion-del-mhealth-en-salud/ (accessed on 20 March 2022).

12. Linares-del Rey, M.; Vela-Desojo, L.; Cano-de la Cuerda, R. Mobile phone applications in Parkinson’s disease: A systematic review. Neurología 2017, 34, 38–54.

13. Sánchez-Rodríguez, M.T.; Collado-Vázquez, S.; Martín-Casas, P.; Cano-de la Cuerda, R. Neurorehabilitation and apps: A systematic review of mobile applications. Neurología 2015, 33, 313–326.

14. Velseboer, D.C.; Broeders, M.; Post, B.; van Geloven, N.; Speelman, J.D.; Schmand, B.; de Haan, R.J.; de Bie, R.M.; CARPA Study Group. Prognostic factors of motor impairment, disability, and quality of life in newly diagnosed PD. Neurology 2013, 80, 627–633.

15. Estévez-Martín, S.; Cambronero, M.E.; García-Ruiz, Y.; Llana, L. Mobile Applications for People with Parkinson’s Disease: A Systematic Search in App Stores and Content Review. J. Univers. Comput. Sci. 2019, 25, 740–763.

16. Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. BMJ 2021, 372, n71.

17. Hughes, A.J.; Daniel, S.E.; Kilford, L.; Lees, A.J. Accuracy of clinical diagnosis of idiopathic Parkinson’s disease: A clinic-pathological study of 100 cases. J. Neurol. Neurosurg. Psychiatry 1992, 55, 181–184.

18. Whiting, P.F.; Rutjes, A.W.S.; Westwood, M.E.; Mallett, S.; Deeks, J.J.; Reitsma, J.B.; Leeflang, M.M.G.; Sterne, J.A.C.; Bossuyt, P.M.M.; QUADAS-2 Group. QUADAS-2: A revised tool for the quality assessment of diagnostic accuracy studies. Ann. Intern. Med. 2011, 155, 529–536.

19. Recomendaciones para el Diseño, Uso y Evaluación de Apps de Salud. Available online: http://www.calidadappsalud.com/recomendaciones/ (accessed on 24 March 2022).

20. Araújo, R.; Tábuas-Pereira, M.; Almendra, L.; Ribeiro, J.; Arenga, M.; Negrão, L.; Matos, A.; Morgadinho, A.; Januário, C. Tremor Frequency Assessment by iPhone® Applications: Correlation with EMG Analysis. J. Park. Dis. 2016, 6, 717–721.

21. Barrantes, S.; Egea, A.J.S.; Rojas, H.A.G.; Martí, M.J.; Compta, Y.; Valldeoriola, F.; Mezquita, E.S.; Tolosa, E.; Valls-Solè, J. Differential diagnosis between Parkinson’s disease and essential tremor using the smartphone’s accelerometer. PLoS ONE 2017, 12, e0183843.

22. Van Brummelen, E.M.J.; Ziagkos, D.; De Boon, W.M.I.; Hart, E.P.; Doll, R.J.; Huttunen, T.; Kolehmainen, P.; Groeneveld, G.J. Quantification of tremor using consumer product accelerometry is feasible in patients with essential tremor and Parkinson’s disease: A comparative study. J. Clin. Mov. Disord. 2020, 7, 4–7.

23. Chen, O.Y.; Lipsmeier, F.; Phan, H.; Prince, J.; Taylor, K.I.; Gossens, C.; Lindemann, M.; de Vos, M. Building a Machine-Learning Framework to Remotely Assess Parkinson’s Disease Using Smartphones. IEEE Trans. Biomed. Eng. 2020, 67, 3491–3500.

24. Chronowski, M.; Kłaczyński, M.; Dec-Cwiek, M.; Porębska, K.; Sawczyńska, K. Speech and Tremor Tester—Monitoring of Neurodegenerative Diseases using Smartphone Technology. Diagnostyka 2020, 2, 31–39.

25. Fraiwan, L.; Khnouf, R.; Mashagbeh, A.R. Parkinson’s disease hand tremor detection system for mobile application. J. Med. Eng. Technol. 2016, 40, 127–134.

26. Garcia-Magarino, I.; Medrano, C.; Plaza, I.; Olivan, B. A smartphone-based system for detecting hand tremors in unconstrained environments. Pers. Ubiquitous Comput. 2016, 20, 959–971.

27. Kassavetis, P.; Saifee, T.A.; Roussos, G.; Drougkas, L.; Kojovic, M.; Rothwell, J.C.; Edwards, M.J.; Bhatia, K.P. Developing a Tool for Remote Digital Assessment of Parkinson’s Disease. Mov. Disord. Clin. Pract. 2015, 3, 59–64.

28. Kostikis, N.; Hristu-Varsakelis, D.; Arnaoutoglou, M.; Kotsavasiloglou, C. A Smartphone-Based Tool for Assessing Parkinsonian Hand Tremor. IEEE J. Biomed. Health Inform. 2015, 19, 1835–1842.

29. Kuosmanen, E.; Wolling, F.; Vega, J.; Kan, V.; Nishiyama, Y.; Harper, S.; Van Laerhoven, K.; Hosio, S.; Ferreira, D. Smartphone-Based Monitoring of Parkinson Disease: Quasi-Experimental Study to Quantify Hand Tremor Severity and Medication Effectiveness. JMIR Mhealth Uhealth 2020, 8, e21543.

30. Lipsmeier, F.; Taylor, K.I.; Kilchenmann, T.; Wolf, D.; Scotland, A.; Schjodt-Eriksen, J.; Cheng, W.-Y.; Fernandez-Garcia, I.; Siebourg-Polster, J.; Jin, L.; et al. Evaluation of Smartphone-Based Testing to Generate Exploratory Outcome Measures in a Phase 1 Parkinson’s Disease Clinical Trial. Mov. Disord. 2018, 33, 1287–1297.

31. Motolese, F.; Magliozzi, A.; Puttini, F.; Rossi, M.; Capone, F.; Karlinski, K.; Stark-Inbar, A.; Yekutieli, Z.; Di Lazzaro, V.; Marano, M. Parkinson’s Disease Remote Patient Monitoring During the COVID-19 Lockdown. Front. Neurol. 2020, 11, 567413.

32. Pan, D.; Dhall, R.; Lieberman, A.; Petitti, D.B. A mobile cloud-based Parkinson’s disease assessment system for home-based monitoring. JMIR Mhealth Uhealth 2015, 3, e29.

33. Woods, A.M.; Nowostawski, M.; Franz, E.A.; Purvis, M. Parkinson’s disease and essential tremor classification on mobile device. Pervasive Mob. Comput. 2014, 13, 1–12.

34. Tosin, M.H.S.; Goetz, C.G.; Luo, S.; Choi, D.; Stebbins, G.T. Item Response Theory Analysis of the MDS-UPDRS Motor Examination: Tremor vs. Nontremor Items. Mov. Disord. 2020, 35, 1587–1595.

35. Raciti, L.; Nicoletti, A.; Mostile, G.; Bonomo, R.; Dibilio, V.; Donzuso, G.; Sciacca, G.; Cicero, C.E.; Luca, A.; Zappia, M. Accuracy of MDS-UPDRS section IV for detecting motor fluctuations in Parkinson’s disease. Neurol. Sci. 2019, 40, 1271–1273.

36. Zayas-García, S.; Cano-De-La-Cuerda, R. Mobile applications related to multiple sclerosis: A systematic review. Rev. Neurol. 2018, 67, 473–483.

37. Mobile Medical Applications. Guidance for Industry and Food and Drug Administration Staff. Available online: http://www.fda.gov/downloads/MedicalDevices/DeviceRegulationandGuidance/GuidanceDocuments/UCM263366.pdf (accessed on 24 March 2022).

38. Belloch Ortí, C. Evaluación de las Aplicaciones Multimedia: Criterios de Calidad. Available online: https://www.uv.es/bellochc/pdf/pwtic4.pdf (accessed on 24 April 2022).

39. Meulendijk, M.; Meulendijks, J.; Paul, A.; Edwin, N.; Mattijs, E. What concerns users of medical apps? Exploring non functional requirements of medical mobile applications. In Proceedings of the European Conference on Information Systems (ECIS), Tel Aviv, Israel, 9–11 June 2014.

| Search | Results |
|---|---|
| PUBMED (Advanced), Search 1 | |
| #1 “Parkinson Disease” [Mesh] | 76,971 |
| #2 “Mobile Applications” [Mesh] | 10,081 |
| #3 “Tremor” [Mesh] | 10,406 |
| #4 #1 AND #2 AND #3 | 3 |
| Filters: 2011–2021 | 3 |
| Total | 3 |
| PUBMED (Advanced), Search 2 | |
| #1 “Parkinson Disease” [TA] | 13,991 |
| #2 “Parkinson” [TA] | 125,152 |
| #3 #1 OR #2 | 125,152 |
| #4 “Mobile Applications” [TA] | 2739 |
| #5 “App” [TA] | 37,469 |
| #6 “Smartphone” [TA] | 17,921 |
| #7 #4 OR #5 OR #6 | 51,979 |
| #8 “Tremor” [TA] | 22,518 |
| #9 “Rest tremor” [TA] | 556 |
| #10 “Tremor Parkinson” [TA] | 80 |
| #11 #8 OR #9 OR #10 | 22,518 |
| #12 #3 AND #7 AND #11 | 38 |
| Filters: 2011–2021 | 38 |
| Total | 38 |
| MEDLINE/EBSCO (Advanced) | |
| S1 “Parkinson” [AB] | 1,077,993 |
| S2 “Tremor” [AB] | 22,569 |
| S3 “Mobile Applications” [AB] | 1582 |
| S4 “App” [AB] | 36,617 |
| S5 “Smartphone” [AB] | 18,495 |
| S6 S3 OR S4 OR S5 | 50,754 |
| S7 S1 AND S2 AND S6 | 56 |
| Filters: 2011–2021 | 56 |
| Total | 56 |
| WEB OF SCIENCE (Advanced) | |
| #1 Smartphone [Topic] | 42,768 |
| #2 Mobile application [Topic] | 129,396 |
| #3 App [Topic] | 62,309 |
| #4 Parkinson [Topic] | 149,640 |
| #5 Tremor [Topic] | 31,585 |
| #6 #1 OR #2 OR #3 | 213,036 |
| #7 #6 AND #4 AND #5 | 81 |
| Filters: 2011–2021, Articles | 48 |
| Total | 48 |
| ACADEMIC SEARCH PREMIER/EBSCO (Advanced) | |
| #1 Smartphone OR App OR Mobile applications | 126,614 |
| #2 Parkinson | 90,117 |
| #3 Tremor | 40,406 |
| #4 #1 AND #2 AND #3 | 35 |
| Filters: 2011–2021 | 34 |
| Total | 34 |
| SCIENCE DIRECT (Advanced) | |
| #1 (“Smartphone” OR “App” OR “Mobile application”) AND “Parkinson” AND “Tremor” [Title, abstract or author-specified keywords] | 8 |
| Filters: 2011–2021 | 8 |
| Total | 8 |
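PubMed Search 2 combines three OR-blocks with AND (#12 = #3 AND #7 AND #11). As a reproducibility aid, the sketch below rebuilds that Boolean string and an NCBI E-utilities `esearch` URL limited to 2011–2021 publication dates. It is an illustration under assumptions, not the authors' procedure: the helper names are hypothetical, and the field tag is a parameter that defaults to the `[TA]` reported in the table (PubMed documents `[tiab]` for Title/Abstract, whereas `[ta]` is its journal-title tag). Counts retrieved today will differ from those obtained in 2022.

```python
from urllib.parse import urlencode

# NCBI E-utilities search endpoint.
ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def or_block(terms, tag):
    """Quote each term, attach the same field tag, and join them with OR."""
    return "(" + " OR ".join(f'"{t}"[{tag}]' for t in terms) + ")"


def search2_query(tag="TA"):
    """Rebuild PubMed Search 2 (#3 AND #7 AND #11); tag is an assumption."""
    parkinson = or_block(["Parkinson Disease", "Parkinson"], tag)           # #3
    mobile = or_block(["Mobile Applications", "App", "Smartphone"], tag)    # #7
    tremor = or_block(["Tremor", "Rest tremor", "Tremor Parkinson"], tag)   # #11
    return " AND ".join([parkinson, mobile, tremor])


def esearch_url(term, start=2011, end=2021):
    """Build an esearch URL restricted by publication date (pdat)."""
    params = {
        "db": "pubmed",
        "term": term,
        "datetype": "pdat",
        "mindate": str(start),
        "maxdate": str(end),
        "retmode": "json",
    }
    return ESEARCH + "?" + urlencode(params)


query = search2_query()
print(query)
print(esearch_url(query))
```

Fetching the URL (for example with `urllib.request.urlopen`) returns JSON whose `esearchresult.count` field holds the number of matching records.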

| Author and Year | Participants | Method | Results |
|---|---|---|---|
| Araújo et al., 2016 [20] | n = 22 (12 PD, 9 ET, 1 HT) | Three apps (“LiftPulse”, “iSeismometer”, “StudyMyTremor”) were tested on an iPhone strapped to the patient’s hand while needle EMG was recorded from the muscles most involved in upper-limb (UL) tremor. | All three apps correlated well with needle EMG, with statistically significant results. Results were very similar across the three apps, although “LiftPulse” showed the highest correlation. |
| Barrantes et al., 2017 [21] | n = 52 (17 PD, 16 ET, 7 undiagnosed, 12 healthy participants) | Tremor data were collected with the “SensoryLog” app, with the phone strapped to the hand, for 30 s at rest and 30 s with the arms outstretched at 90° of shoulder flexion, while seated. | Part 1: patients with tremor were distinguished from healthy participants with 83.3% specificity and 97.96% sensitivity. Part 2: in discriminating PD from ET, 27 of 34 patients were correctly classified (84.38% accuracy). |
| Brummelen et al., 2020 [22] | n = 20 (10 PD, 10 ET) | Tremor was measured simultaneously with a laboratory accelerometer and with consumer devices (iPhone, iPod, Apple Watch®) running two apps (“Make Helsinki app” and “Centre for Human Drug Research app”), with the device held in the hand. | Tremor frequency peaks were similar between the laboratory accelerometer and the consumer devices in both PD and ET. Devices placed more distally recorded greater tremor amplitude. |
| Chen et al., 2020 [23] | n = 72 (37 PD, 35 healthy participants) | A mobile app (“Roche PD Mobile Application v1”) collected data on gait, balance, dexterity, voice, and resting and postural tremor, with the aim of building a computational model that classifies participants as having PD or not and grades its severity. Participants performed the app’s tasks every day for 17 days, holding the phone in the hand during data collection. | Resting tremor and dexterity were the most important features for differentiating PD patients from healthy participants. The model achieved an accuracy of 0.972, a specificity of 0.971, and a sensitivity of 0.973, and correlated well with the MDS-UPDRS. Dexterity, gait, and resting tremor were the most relevant features overall. |
| Chronowski et al., 2020 [24] | Healthy participants and PD patients (49 samples) | An app on a smartphone strapped to the patient’s hand collected voice data as well as resting and intention tremor data to discriminate PD patients from healthy participants. | The app distinguished PD patients from healthy participants with 85% accuracy, but aspects of its interface need to be improved to make data analysis easier. |
| Fraiwan et al., 2016 [25] | n = 42 (21 PD, 21 healthy participants) | Data were collected with the “Android Mobile” app, with the phone strapped to the patient’s hand; the app transmitted the smartphone’s accelerometer data to a computer for analysis. Resting tremor was recorded for 30 s. | The app and data analysis system identified PD patients from resting tremor with 95% accuracy, 95% sensitivity, 95% specificity, and a kappa coefficient of 0.90. |
| García-Magariño et al., 2016 [26] | Study 1: n = 21 PD (11 with tremor); Study 2: n = 3 PD (1 with tremor) | An app (“Hand Trembling detector App”) was designed to detect hand tremor during activities of daily living (ADL). Study 1: participants carried the smartphone running the app in their pocket while performing ADL. Study 2: participants carried the smartphone for several hours per day. | Study 1: the app detected tremor with 95.83% sensitivity, 99.51% specificity, and 99.41% accuracy. Study 2: the app discriminated between tremor and normal movements during ADL with high sensitivity and specificity. |
| Kassavetis et al., 2015 [27] | n = 14 (patients with dopamine transporter deficit) | A smartphone app measured tremor (rest, postural, and action) and bradykinesia to correlate them with the MDS-UPDRS. Participants were assessed in the off-medication state with the phone resting on the supinated palm. | Resting tremor, postural tremor, and bradykinesia correlated significantly with the MDS-UPDRS, whereas action tremor did not (partly owing to the characteristics of the sample). |
| Kostikis et al., 2015 [28] | n = 45 (25 PD, 20 healthy participants) | A smartphone app (also available in a web version) measured resting and postural hand tremor, with the phone strapped to the participant’s hand. | 82% of participants with PD and 90% of healthy participants were correctly identified. Gyroscope data yielded better sensitivity and specificity than accelerometer data. |
| Kuosmanen et al., 2020 [29] | n = 13 PD | The “Sentient Tracking of Parkinson’s” (STOP) app measured tremor through a game in which participants held the phone on the palm of the hand for 13 s. The goal was to determine whether the app could detect and quantify tremor and capture differences in tremor with and without medication. | The app detected tremor and quantified its severity. Measurements correlated significantly with the tremor items of UPDRS part III. No difference in tremor was found between the medicated and unmedicated conditions. |
| Lipsmeier et al., 2018 [30] | n = 79 (44 PD, 35 healthy participants) | A mobile app (“Roche PD Mobile Application v1”) collected data on resting tremor, bradykinesia, rigidity, postural instability, gait, and voice through 30 s tests and while participants carried the phone all day. Two experiments were performed, one lasting 6 months and the other 6 weeks. Resting tremor data were collected with the phone on the palm of the hand. | The app discriminated PD patients from healthy participants, with excellent reliability for tremor and moderate to good reliability for the other features, and showed moderate to good test–retest reliability. Results correlated significantly with the MDS-UPDRS, except for the voice item. The app also detected other phenomena in PD patients. Adherence was 61% in the long experiment and 100% in the short experiment. |
| Motolese et al., 2020 [31] | n = 54 PD | During the COVID-19 lockdown, patients were asked to use the “EncephaLog Home™” mobile app at least twice a week for 3 weeks to monitor symptoms, including tremor. | 83.3% of participants used the app at least once; 53.7% showed average conformity with the app, and 29.6% were very satisfied with it. Adherence was 38.7%. 18.5% had their PD treatment changed on request for clinical reasons; all of these were routine adjustments of ongoing medications, and none was driven by app outcomes, given the observational nature of the study. |
| Pan et al., 2015 [32] | n = 40 PD | The “PD Dr” mobile app was developed to detect the motor symptoms of PD (tremor and gait difficulties). Participants performed a 5 min test with the phone strapped to the hand. | Resting tremor detection had a sensitivity of 0.77 and an accuracy of 0.82. Test results correlated strongly with disease severity. |
| Woods et al., 2014 [33] | n = 32 (14 PD, 18 ET) | An app designed specifically for the study assessed resting tremor with the phone held in the hand, as well as during attention and distraction tasks, to discriminate between PD and ET. | 92% of patients were correctly classified. |
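Several included studies summarize classification performance as sensitivity, specificity, accuracy, and, in one case, Cohen’s kappa. All four follow from a 2 × 2 table of index-test decisions against the reference diagnosis. The minimal sketch below computes them; the class name and the counts in the example are hypothetical and do not correspond to any included study.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """2 x 2 table: index test (app) versus reference diagnosis."""
    tp: int  # PD classified as PD
    fn: int  # PD classified as non-PD
    tn: int  # non-PD classified as non-PD
    fp: int  # non-PD classified as PD

    @property
    def n(self) -> int:
        return self.tp + self.fn + self.tn + self.fp

    def sensitivity(self) -> float:
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        return self.tn / (self.tn + self.fp)

    def accuracy(self) -> float:
        return (self.tp + self.tn) / self.n

    def kappa(self) -> float:
        """Cohen's kappa: observed agreement corrected for chance agreement."""
        po = self.accuracy()
        # Chance agreement from the marginals of reference and test.
        p_pd = ((self.tp + self.fn) / self.n) * ((self.tp + self.fp) / self.n)
        p_non = ((self.tn + self.fp) / self.n) * ((self.tn + self.fn) / self.n)
        pe = p_pd + p_non
        return (po - pe) / (1 - pe)


# Hypothetical counts: 20 PD and 20 controls.
c = Confusion(tp=18, fn=2, tn=15, fp=5)
print(c.sensitivity(), c.specificity(), c.accuracy(), round(c.kappa(), 2))
# sensitivity 0.9, specificity 0.75, accuracy 0.825, kappa 0.65
```

Unlike sensitivity and specificity, accuracy depends on the proportion of PD cases in the sample, which matters when comparing studies with different case mixes (PD versus healthy participants, or PD versus ET).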

| Name | Operating System | Price | Users | Brief Description |
|---|---|---|---|---|
| cloudUPDRS | Android (Google Play) | Free | Professionals and patients participating in the study | App to measure gait, tremor, and reaction time. The test can be performed in full or in parts. |
| MyTremorApp | Android (Google Play) | Free | Professionals and patients | App to measure hand tremor and bradykinesia. |
| ParkinsonAI | Android (Google Play)/iOS | Free | Patients | App to measure tremor and posture. It also records medical history and suggests exercises and diets for symptom control. |
| Tremor Measurement | Android (Google Play) | EUR 1.39 | Professionals | App to assess tremor amplitude and frequency. |
| Cepha | iOS | Free | Professionals and patients | App to measure dysphonia and resting, action, and postural tremor. It was created to distinguish essential tremor from PD in a study that the developers do not cite. |
| CYPD | iOS | Free | Professionals and patients | App to monitor symptoms and medication effectiveness; this information is sent to the clinician. It can also be used with an Apple Watch®. |
| Parkinson’s LifeKit | iOS | EUR 3.99 | Patients | App to manage PD. It offers cognitive and motor tests (voice, tapping, and tremor), a personal diary, medication reminders, and result graphs to track variations in symptoms. |
| Patana AI | iOS | Free | Professionals | App to evaluate tremor, posture, and movement. |
| StudyMyTremor | iOS | EUR 3.99 | Professionals and patients | App to evaluate tremor amplitude and frequency. Data can be logged in a calendar for comparison over time. |
| Tremor Analysis | iOS | Free | Professionals and patients | App to assess tremor frequency. It can be customized by choosing which parameters to measure and can also be used with an Apple Watch®. |
| Tremor Measurer | iOS | EUR 1.99 | Professionals | App to assess tremor quantitatively. |
| Tremor Measurer Lite | iOS | Free | Professionals | App to assess tremor quantitatively. |
| TREMOR12 | iOS | Free | Professionals | App to measure tremor parameters for later analysis. Updated for use with the Apple Watch®. |
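Several of the apps above, and several included studies, report tremor frequency and amplitude derived from the phone’s accelerometer. The apps’ algorithms are not described here, so the following is only a generic sketch of one common approach: remove the gravity offset, take a windowed FFT, and report the spectral peak within an assumed tremor band together with the RMS acceleration. The function name, the 3–12 Hz band (chosen to cover parkinsonian resting tremor, typically around 4–6 Hz), and the use of RMS acceleration as the amplitude measure are all assumptions; apps may instead report displacement or combine axes.

```python
import numpy as np


def tremor_features(acc, fs, band=(3.0, 12.0)):
    """Return (peak frequency in Hz, RMS amplitude) of one accelerometer axis.

    acc  : samples in m/s^2 (single axis, assumed)
    fs   : sampling rate in Hz
    band : assumed tremor band (Hz) in which the spectral peak is searched
    """
    x = np.asarray(acc, dtype=float)
    x = x - x.mean()  # remove the gravity / DC offset
    # Hann window reduces spectral leakage before the real FFT.
    spectrum = np.abs(np.fft.rfft(x * np.hanning(len(x))))
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    peak_hz = float(freqs[in_band][np.argmax(spectrum[in_band])])
    rms = float(np.sqrt(np.mean(x ** 2)))  # RMS of the offset-free signal
    return peak_hz, rms


# Synthetic example: 5 Hz tremor on top of gravity, 30 s sampled at 100 Hz.
fs = 100.0
t = np.arange(3000) / fs
trace = 9.81 + 0.2 * np.sin(2 * np.pi * 5.0 * t)
peak_hz, rms = tremor_features(trace, fs)
print(f"peak {peak_hz:.2f} Hz, RMS {rms:.3f} m/s^2")  # peak 5.00 Hz, RMS 0.141 m/s^2
```

Restricting the peak search to a band keeps slow voluntary movements and high-frequency noise from being reported as tremor; the frequency resolution is the sampling rate divided by the number of samples (here 1/30 Hz).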

| Article (App) | Risk of Bias: Patient Selection | Risk of Bias: Index Test | Risk of Bias: Reference Standard Test | Risk of Bias: Flow and Timing | Applicability Concerns: Patient Selection | Applicability Concerns: Index Test | Applicability Concerns: Reference Standard Test |
|---|---|---|---|---|---|---|---|
| Araújo et al., 2016 [20] (“LiftPulse”, “iSeismometer”, “StudyMyTremor”) | L | U | L | L | L | L | L |
| Barrantes et al., 2017 [21] (“SensoryLog”) | L | U | L | L | L | L | L |
| Brummelen et al., 2020 [22] (“Make Helsinki app”, “Centre for Human Drug Research app”) | L | U | L | L | L | L | L |
| Chen et al., 2020 [23] (“Roche PD Mobile Application v1”) | L | U | L | L | L | U | L |
| Chronowski et al., 2020 [24] | L | U | L | H | L | L | L |
| Fraiwan et al., 2016 [25] (“Android Mobile” app) | L | L | H | U | L | L | H |
| García-Magariño et al., 2016 [26] (“Hand Trembling detector App”) | H | U | H | U | L | L | H |
| Kassavetis et al., 2015 [27] | L | L | L | L | L | L | L |
| Kostikis et al., 2015 [28] | L | U | L | L | L | L | L |
| Kuosmanen et al., 2020 [29] (“Sentient Tracking of Parkinson’s” (STOP)) | L | U | L | L | L | L | L |
| Lipsmeier et al., 2018 [30] (“Roche PD Mobile Application v1”) | L | L | L | L | L | L | L |
| Motolese et al., 2020 [31] (“EncephaLog Home™”) | L | U | L | L | L | L | L |
| Pan et al., 2015 [32] (“PD Dr”) | L | U | L | L | L | L | L |
| Woods et al., 2014 [33] | L | U | L | L | L | L | L |

L, low; U, unclear; H, high.
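A per-domain tally makes the pattern in the QUADAS-2 ratings easier to read; for example, the index-test risk-of-bias domain is the one most often rated unclear. The sketch below transcribes the table’s ratings (L = low, U = unclear, H = high) into a dictionary and counts them per domain. The data structure and names are illustrative choices, and the transcription should be rechecked against the table before any reuse.

```python
from collections import Counter

# Domain order follows the table columns: four risk-of-bias (RoB) domains,
# then three applicability-concern (AC) domains.
DOMAINS = (
    "RoB: patient selection", "RoB: index test", "RoB: reference standard",
    "RoB: flow and timing", "AC: patient selection", "AC: index test",
    "AC: reference standard",
)

# One character per domain, transcribed from the QUADAS-2 table (L/U/H).
RATINGS = {
    "Araújo 2016": "LULLLLL",
    "Barrantes 2017": "LULLLLL",
    "Brummelen 2020": "LULLLLL",
    "Chen 2020": "LULLLUL",
    "Chronowski 2020": "LULHLLL",
    "Fraiwan 2016": "LLHULLH",
    "García-Magariño 2016": "HUHULLH",
    "Kassavetis 2015": "LLLLLLL",
    "Kostikis 2015": "LULLLLL",
    "Kuosmanen 2020": "LULLLLL",
    "Lipsmeier 2018": "LLLLLLL",
    "Motolese 2020": "LULLLLL",
    "Pan 2015": "LULLLLL",
    "Woods 2014": "LULLLLL",
}


def tally(ratings):
    """Count L/U/H ratings in each domain across all studies."""
    return {d: Counter(r[i] for r in ratings.values()) for i, d in enumerate(DOMAINS)}


def all_low(ratings):
    """Studies rated low in every risk-of-bias and applicability domain."""
    return sorted(name for name, r in ratings.items() if set(r) == {"L"})


for domain, counts in tally(RATINGS).items():
    print(f"{domain:26s} L={counts['L']:2d} U={counts['U']:2d} H={counts['H']:2d}")
print("Low in all domains:", ", ".join(all_low(RATINGS)))
```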

| App | Relevance | Accessibility | Design | Usability/Testing | Audience Adequacy | Transparency | Authorship | Revisions | Contents and Sources of Information | Risk Management | Technical Support | E-Commerce | Bandwidth | Publicity | Privacy and Data Protection | Logical Security | Compliance with Recommendations (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| cloudUPDRS | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | 87.5% |
| MyTremorApp | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 93.75% |
| ParkinsonAI | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 87.5% |
| Tremor Measurement | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 87.5% |
| Cepha | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | 87.5% |
| CYPD | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | 81.25% |
| Parkinson’s LifeKit | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 87.5% |
| Patana AI | ✕ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | 75% |
| StudyMyTremor | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 93.75% |
| Tremor Analysis | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 87.5% |
| Tremor Measurer | ✕ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | 75% |
| Tremor Measurer Lite | ✕ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | 75% |
| TREMOR12 | ✓ | ✓ | ✓ | NE | ✓ | ✓ | ✓ | ✓ | ✕ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 87.5% |

Criteria are grouped as follows: Design and Relevance (Relevance, Accessibility, Design, Usability/Testing, Audience Adequacy); Information Quality and Security (Transparency, Authorship, Revisions, Contents and Sources of Information, Risk Management); Provision of Services (Technical Support, E-Commerce, Bandwidth, Publicity); Confidentiality and Privacy (Privacy and Data Protection, Logical Security).
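The compliance column is consistent with a simple ratio: criteria met (✓) divided by all 16 criteria, so a criterion marked NE counts as not met (for example, 14 of 16 gives 87.5%). This reading is inferred from the values in the table rather than stated in it. The sketch below encodes each row as a 16-character string using a hypothetical Y/N/E scheme (Y = ✓, N = ✕, E = NE) and recomputes the percentages.

```python
# The 16 criteria in table order.
CRITERIA = (
    "Relevance", "Accessibility", "Design", "Usability/Testing", "Audience Adequacy",
    "Transparency", "Authorship", "Revisions", "Contents and Sources of Information",
    "Risk Management", "Technical Support", "E-Commerce", "Bandwidth", "Publicity",
    "Privacy and Data Protection", "Logical Security",
)

# Hypothetical encoding: Y = met, N = not met, E = NE; one character per criterion.
CHECKLIST = {
    "cloudUPDRS": "YYYEYYYYYYYNYYYY",
    "MyTremorApp": "YYYEYYYYYYYYYYYY",
    "ParkinsonAI": "YYYEYYYYNYYYYYYY",
    "Tremor Measurement": "YYYEYYYYNYYYYYYY",
    "Cepha": "YYYEYYYYYYYNYYYY",
    "CYPD": "YYYEYYYYNYYNYYYY",
    "Parkinson's LifeKit": "YYYEYYYYNYYYYYYY",
    "Patana AI": "NYYEYYYNYYYNYYYY",
    "StudyMyTremor": "YYYEYYYYYYYYYYYY",
    "Tremor Analysis": "YYYEYYYYNYYYYYYY",
    "Tremor Measurer": "NYYEYYYYNYYNYYYY",
    "Tremor Measurer Lite": "NYYEYYYYNYYNYYYY",
    "TREMOR12": "YYYEYYYYNYYYYYYY",
}


def compliance(marks: str) -> float:
    """Percentage of all criteria met; NE (E) is counted as not met."""
    if len(marks) != len(CRITERIA) or set(marks) - set("YNE"):
        raise ValueError("expected one Y/N/E mark per criterion")
    return 100.0 * marks.count("Y") / len(CRITERIA)


# List apps from highest to lowest compliance.
for app, marks in sorted(CHECKLIST.items(), key=lambda kv: -compliance(kv[1])):
    print(f"{app:22s} {compliance(marks):6.2f}%")
```

Counting NE as unmet keeps the denominator fixed at 16 for every app, which makes the percentages directly comparable; excluding NE from the denominator would instead yield 93.33% for an app such as cloudUPDRS (14 of 15).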

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Moreta-de-Esteban, P.; Martín-Casas, P.; Ortiz-Gutiérrez, R.M.; Straudi, S.; Cano-de-la-Cuerda, R. Mobile Applications for Resting Tremor Assessment in Parkinson’s Disease: A Systematic Review. J. Clin. Med. 2023, 12, 2334. https://doi.org/10.3390/jcm12062334

Moreta-de-Esteban P, Martín-Casas P, Ortiz-Gutiérrez RM, Straudi S, Cano-de-la-Cuerda R. Mobile Applications for Resting Tremor Assessment in Parkinson’s Disease: A Systematic Review. Journal of Clinical Medicine. 2023; 12(6):2334. https://doi.org/10.3390/jcm12062334

Chicago/Turabian Style: Moreta-de-Esteban, Paloma, Patricia Martín-Casas, Rosa María Ortiz-Gutiérrez, Sofía Straudi, and Roberto Cano-de-la-Cuerda. 2023. "Mobile Applications for Resting Tremor Assessment in Parkinson’s Disease: A Systematic Review" Journal of Clinical Medicine 12, no. 6: 2334. https://doi.org/10.3390/jcm12062334

APA Style: Moreta-de-Esteban, P., Martín-Casas, P., Ortiz-Gutiérrez, R. M., Straudi, S., & Cano-de-la-Cuerda, R. (2023). Mobile Applications for Resting Tremor Assessment in Parkinson’s Disease: A Systematic Review. Journal of Clinical Medicine, 12(6), 2334. https://doi.org/10.3390/jcm12062334