A Novel Hybrid Mental Spelling Application Based on Eye Tracking and SSVEP-Based BCI

Abstract

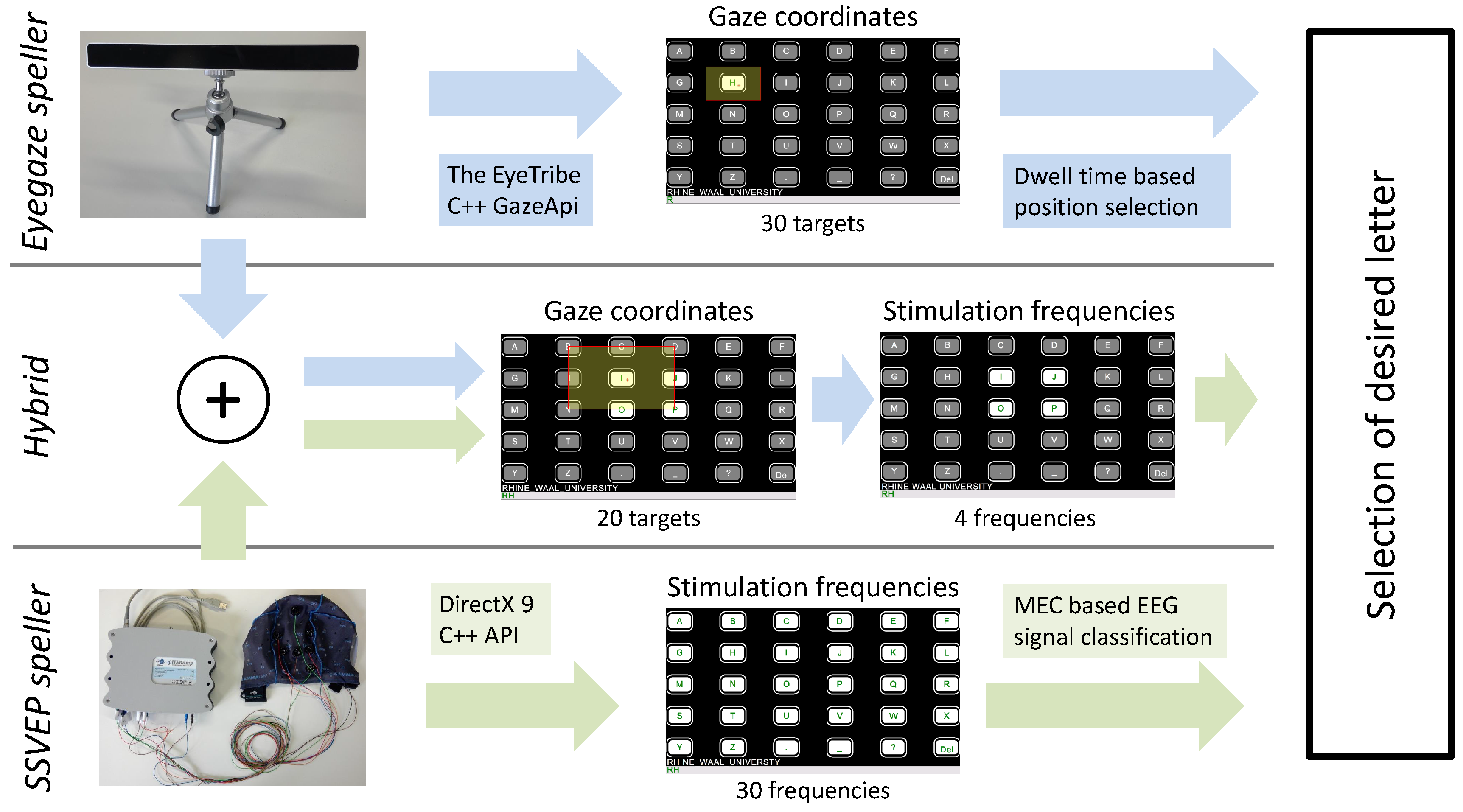

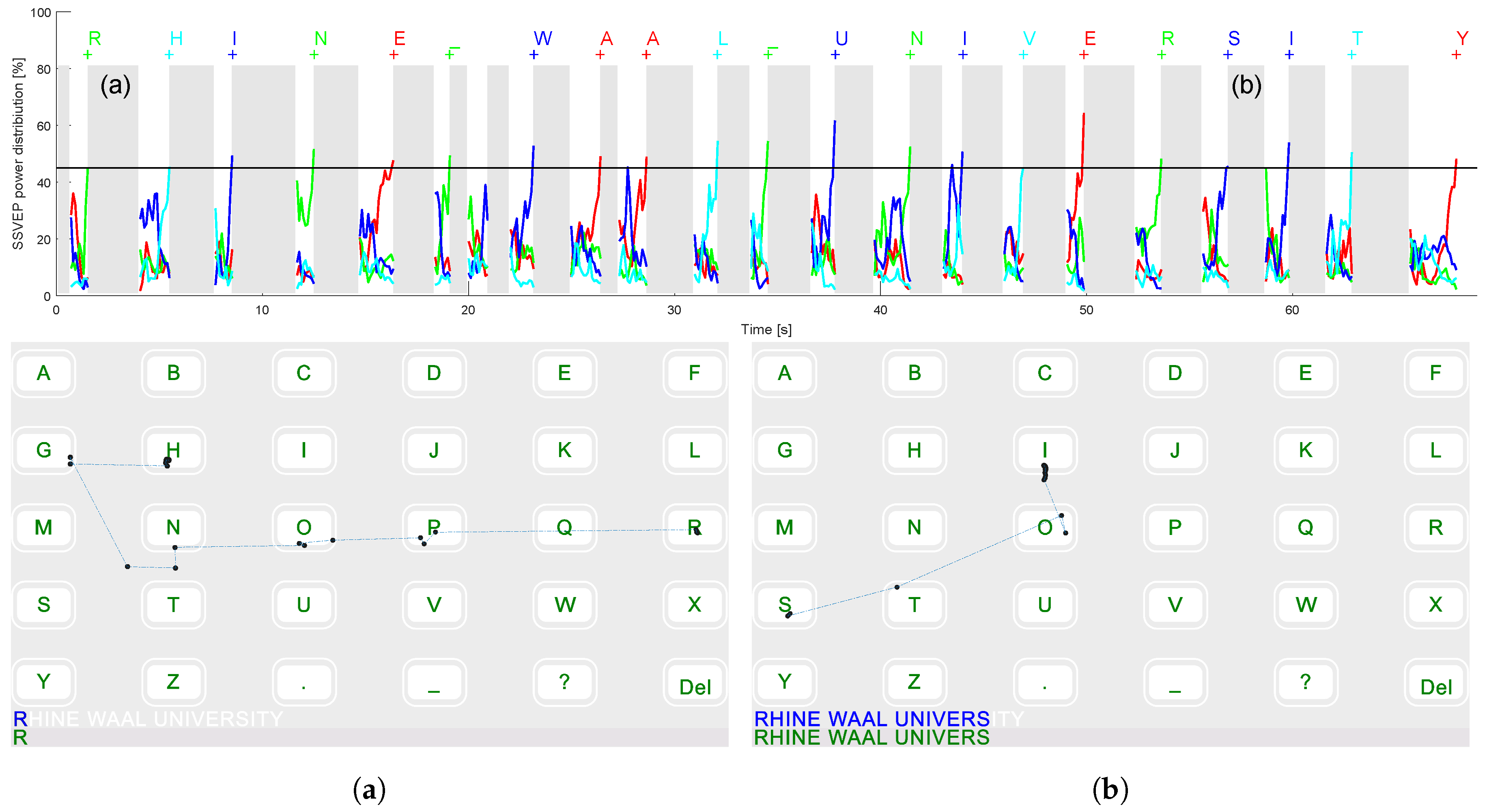

1. Introduction

- Because eye tracking data are not used to activate letters, the Midas touch problem is circumvented.

- Gaze shifting phases are dynamic, ensuring that EEG data are considered only while the target object is fixated.

- Only four SSVEP stimuli need to be distinguished, resulting in high classification accuracy.

- Little precision is required from the eye tracking device, allowing a low-cost hardware solution.
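The gating idea in the points above can be illustrated with a minimal sketch. It assumes, purely for illustration, that the screen is divided into rectangular boxes, that gaze and EEG samples arrive in lockstep, and that a gaze shift simply clears the EEG buffer. The names `Box`, `fixated_box`, and `gate_eeg` are hypothetical and do not come from the original software; the coarse box test reflects the low precision demanded of the eye tracker, and the gaze only decides which EEG samples are kept, never which letter is activated.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Box:
    """Axis-aligned screen region (hypothetical layout, in pixels)."""
    name: str
    x: float
    y: float
    width: float
    height: float

    def contains(self, gx: float, gy: float) -> bool:
        return (self.x <= gx < self.x + self.width
                and self.y <= gy < self.y + self.height)


def fixated_box(gaze: Tuple[float, float],
                boxes: Sequence[Box]) -> Optional[Box]:
    """Return the box that contains the gaze point, or None."""
    gx, gy = gaze
    for box in boxes:
        if box.contains(gx, gy):
            return box
    return None


def gate_eeg(eeg_samples: Sequence[float],
             gaze_samples: Sequence[Tuple[float, float]],
             boxes: Sequence[Box]) -> Tuple[Optional[Box], List[float]]:
    """Keep only the EEG samples recorded while the gaze rests on one box.

    Whenever the gaze moves to a different box (or off all boxes), the
    buffer is cleared, so a later SSVEP classifier would only ever see
    data from the currently fixated box.
    """
    current: Optional[Box] = None
    buffer: List[float] = []
    for eeg, gaze in zip(eeg_samples, gaze_samples):
        box = fixated_box(gaze, boxes)
        if box != current:      # gaze shift: discard stale EEG data
            current = box
            buffer = []
        if box is not None:     # accumulate only during fixation
            buffer.append(eeg)
    return current, buffer
```

In a complete system the returned buffer would be passed to an SSVEP classifier that distinguishes the four stimuli inside the fixated box; that step is omitted here.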

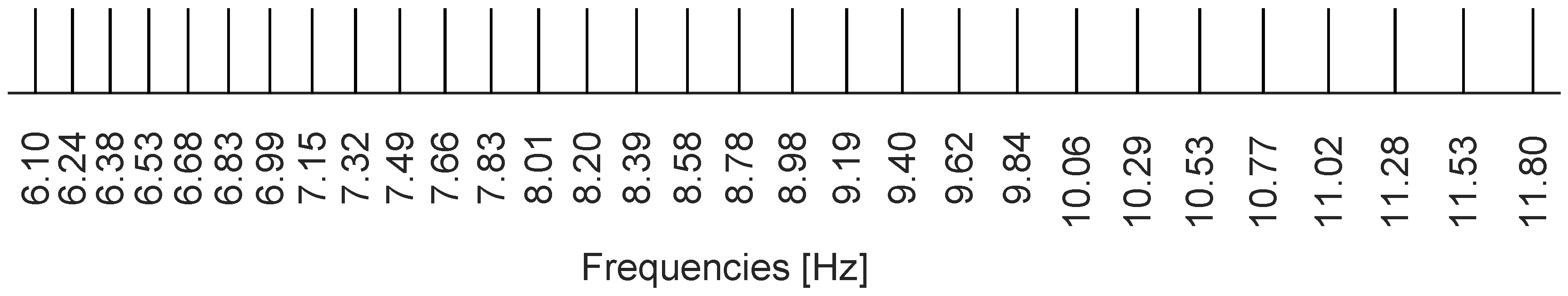

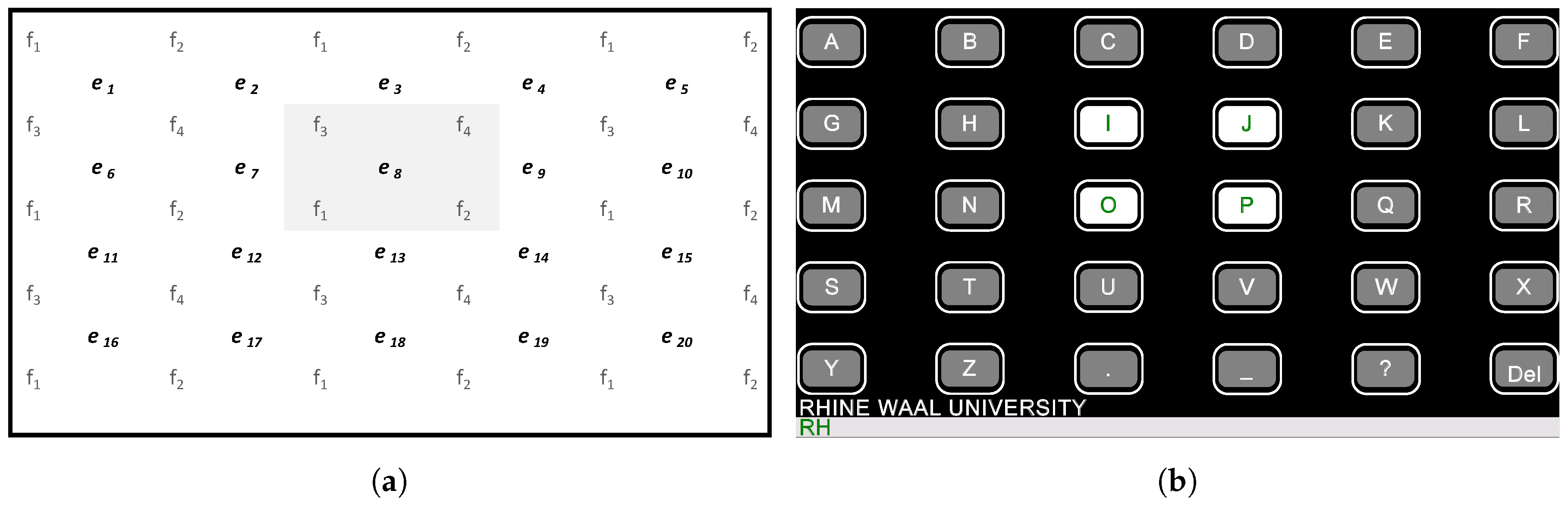

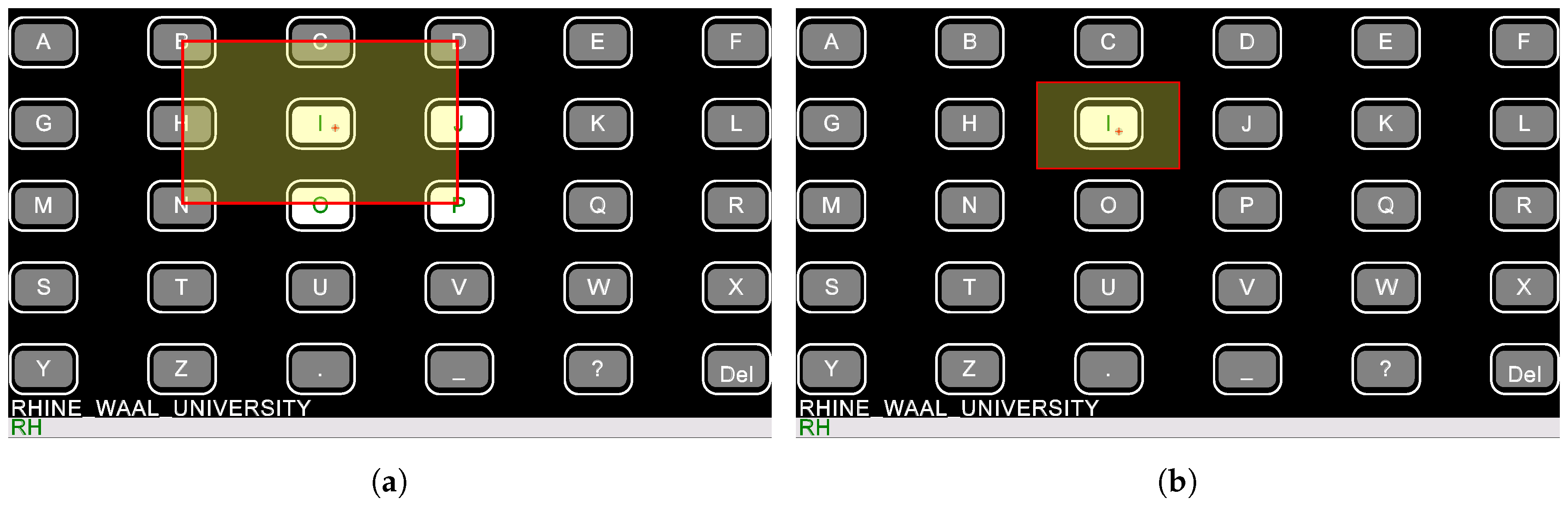

2. Experimental Section

2.1. Participants

2.2. Hardware

2.3. Signal Processing

2.4. Software

2.5. Experimental Setup

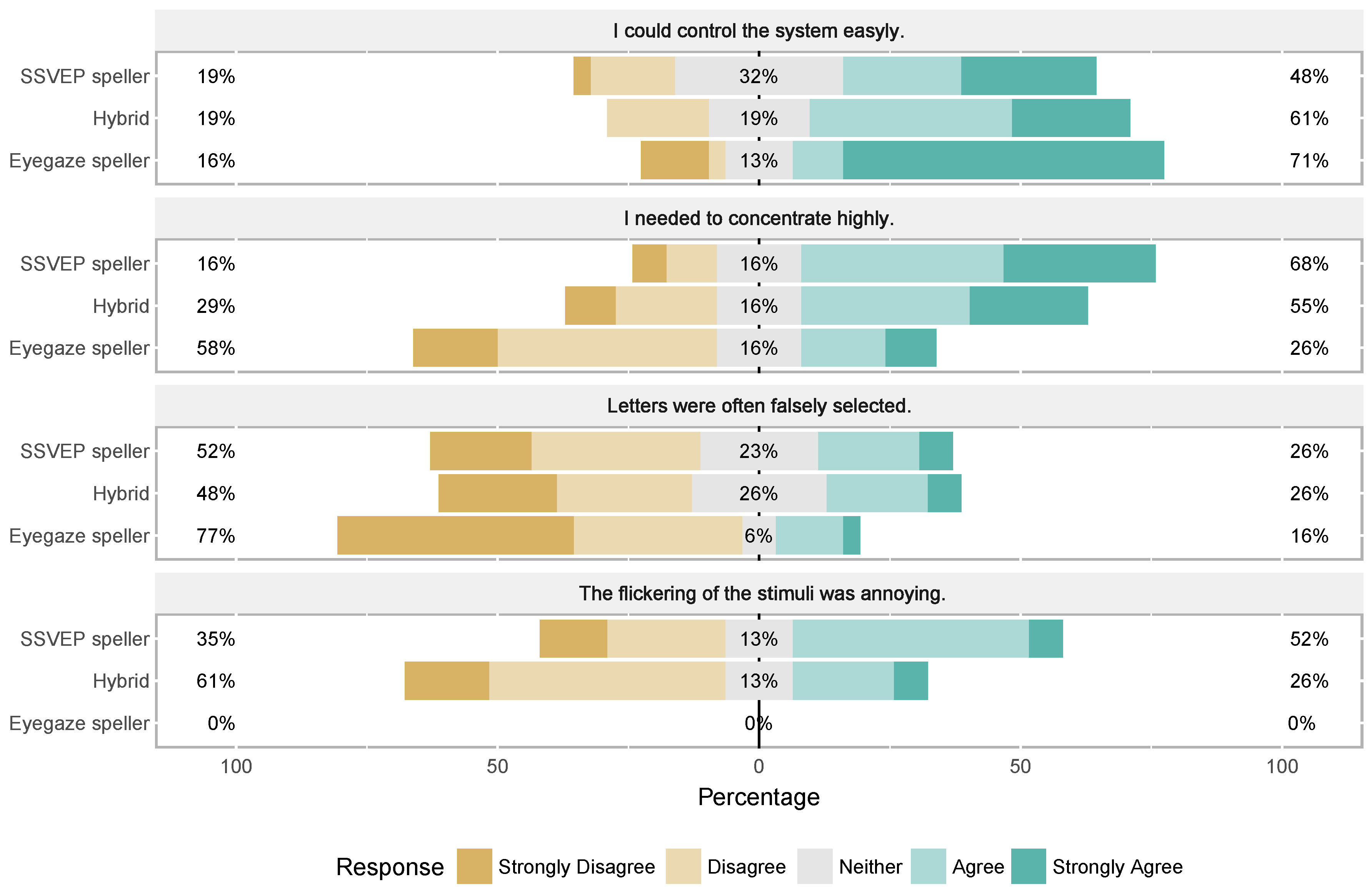

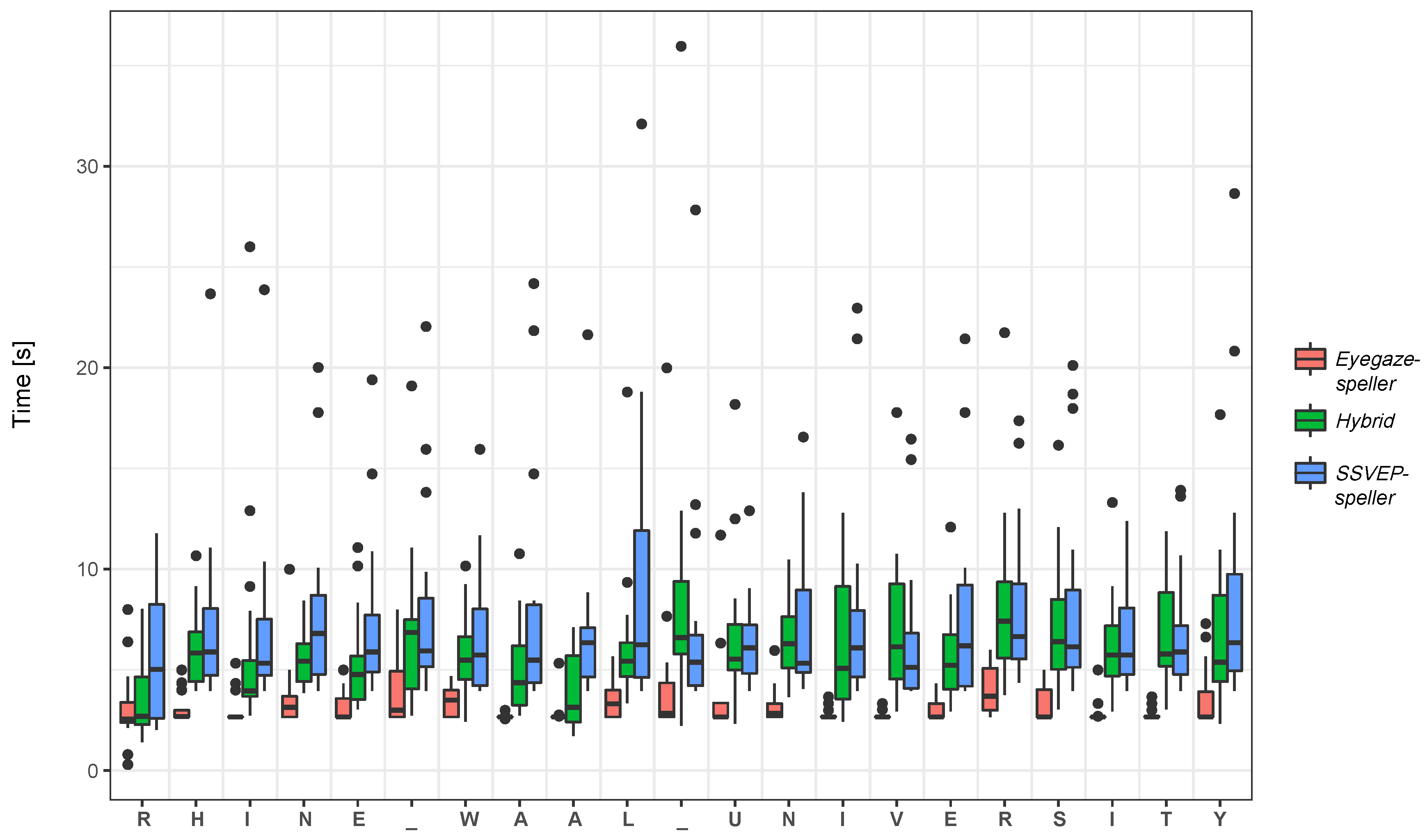

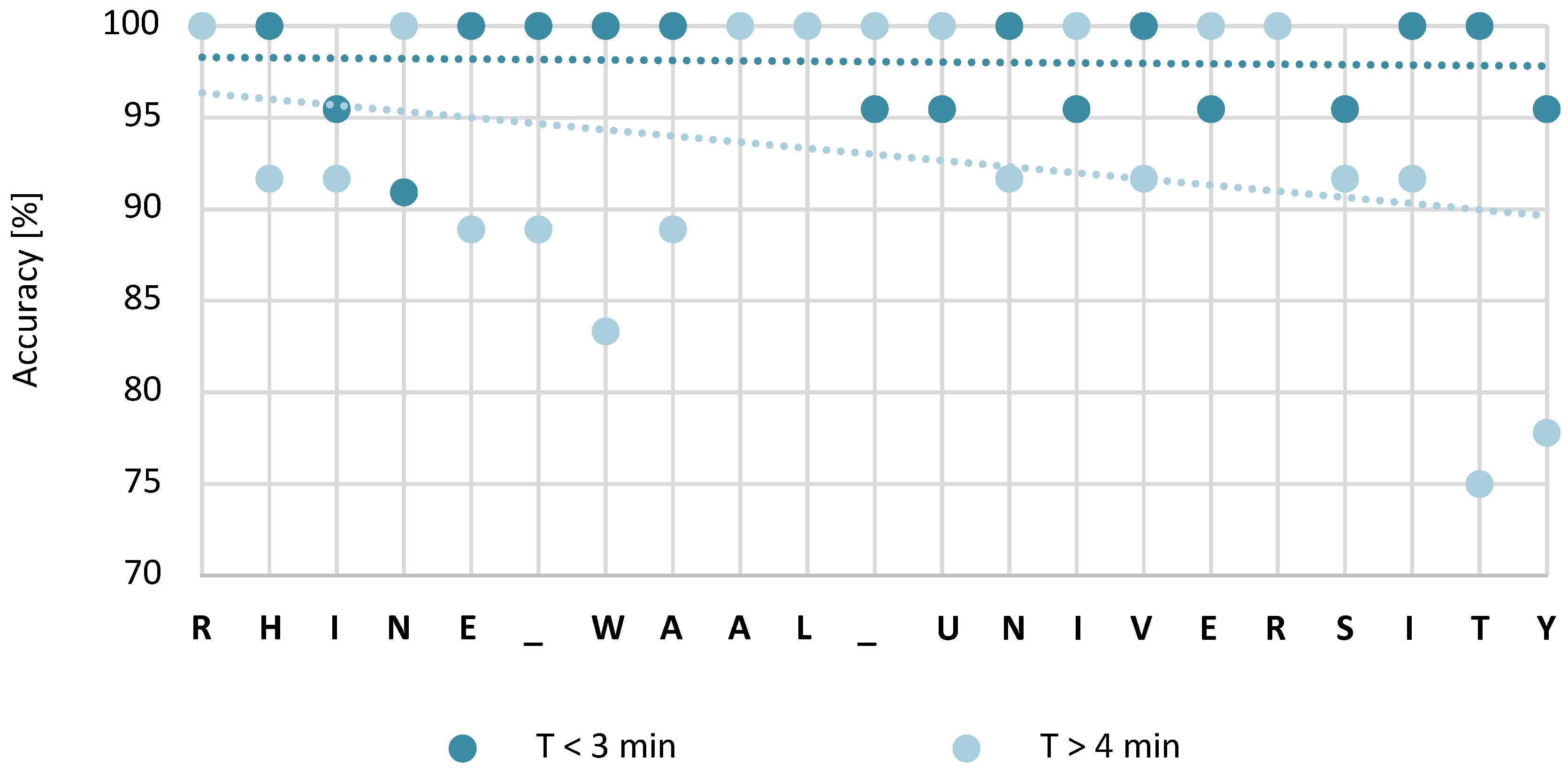

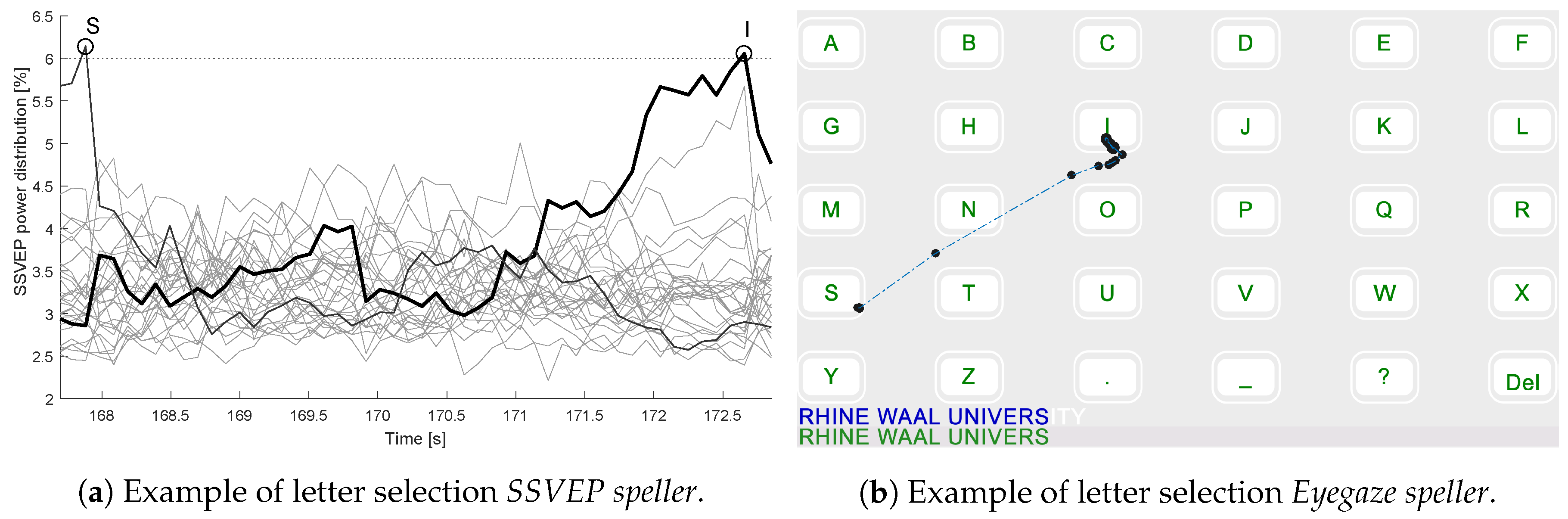

3. Results

4. Discussion

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

| System | No. of Target Characters | No. of Steps for Character Selection | No. of Classes Eye Tracking | Visual Angle Eye Target | No. of Classes SSVEP | Min. Difference between Frequencies | Min. Time Character Selection |
|---|---|---|---|---|---|---|---|
| SSVEP speller | 30 | 1 | - | - | 30 | 0.14 Hz | 2.031 s |
| Eyegaze speller | 30 | 1 | 30 | | - | - | 1.000 s |
| Hybrid | 30 | 2 | 20 | | 4 | 0.67 Hz | 1.813 s |

| Subject # | SSVEP speller: Time (s) | SSVEP speller: Acc. (%) | SSVEP speller: ITR (bpm) | Hybrid: Time (s) | Hybrid: Acc. (%) | Hybrid: ITR (bpm) | Eyegaze speller: Time (s) | Eyegaze speller: Acc. (%) | Eyegaze speller: ITR (bpm) |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 184.133 | 81.82 | 35.91 | 162.906 | 92.00 | 37.90 | 62.867 | 100.00 | 98.35 |
| 2 | 188.906 | 78.38 | 36.47 | 112.531 | 88.89 | 55.62 | 64.796 | 100.00 | 95.42 |
| 3 | - | - | - | 208.508 | 88.89 | 30.02 | 107.111 | 86.21 | 59.43 |
| 4 | - | - | - | 321.648 | 100.00 | 19.22 | - | - | - |
| 5 | 91.711 | 100.00 | 67.41 | 67.9453 | 100.00 | 91.00 | 56.063 | 100.00 | 110.28 |
| 6 | 431.641 | 73.33 | 17.36 | 113.953 | 100.00 | 54.26 | 60.125 | 100.00 | 102.83 |
| 7 | 200.180 | 83.87 | 32.39 | 110.602 | 100.00 | 55.37 | 70.890 | 95.65 | 86.39 |
| 8 | 114.359 | 100.00 | 54.06 | 117.914 | 100.00 | 52.43 | 61.242 | 100.00 | 100.96 |
| 9 | 156.914 | 95.65 | 39.03 | 185.758 | 92.00 | 33.24 | 75.563 | 95.65 | 81.05 |
| 10 | 276.859 | 86.21 | 22.99 | - | - | - | - | - | - |
| 11 | - | - | - | 494.406 | 72.34 | 15.47 | 108.010 | 100.00 | 57.24 |
| 12 | - | - | - | 677.828 | 81.82 | 9.76 | 64.492 | 100.00 | 95.87 |
| 13 | - | - | - | 175.094 | 86.21 | 36.35 | 78.000 | 92.00 | 79.16 |
| 14 | 138.531 | 100.00 | 44.63 | 138.734 | 95.65 | 44.14 | 63.477 | 100.00 | 97.40 |
| 15 | 218.258 | 100.00 | 28.33 | - | - | - | - | - | - |
| 16 | 195.609 | 100.00 | 31.61 | 120.352 | 95.65 | 50.88 | 62.359 | 100.00 | 99.15 |
| 17 | 436.516 | 86.21 | 14.58 | 186.266 | 95.65 | 32.88 | 82.875 | 100.00 | 74.60 |
| 18 | 92.652 | 95.65 | 66.12 | 108.773 | 92.00 | 56.76 | - | - | - |
| 19 | 145.336 | 95.65 | 42.14 | 239.383 | 82.76 | 24.76 | - | - | - |
| 20 | 148.891 | 92.00 | 41.47 | 116.289 | 86.21 | 54.73 | 75.766 | 100.00 | 81.60 |
| 21 | - | - | - | 388.477 | 76.92 | 18.11 | 85.211 | 92.00 | 72.46 |
| 22 | 247.406 | 86.21 | 25.73 | 203.633 | 88.88 | 30.74 | 70.891 | 100.00 | 87.21 |
| 23 | 165.445 | 80.65 | 39.63 | 107.758 | 92.00 | 57.30 | 57.992 | 100.00 | 106.61 |
| 24 | 1536.760 | 63.83 | 4.04 | 635.375 | 66.15 | 14.36 | 58.703 | 100.00 | 105.32 |
| 25 | 231.156 | 85.19 | 25.10 | 239.281 | 88.89 | 26.16 | 71.805 | 100.00 | 86.10 |
| 26 | 125.227 | 100.00 | 49.37 | 138.531 | 100.00 | 44.63 | 73.836 | 100.00 | 83.74 |
| 27 | - | - | - | 220.80 | 95.65 | 27.74 | 67.378 | 100.00 | 91.76 |
| 28 | 253.297 | 95.65 | 24.18 | 306.008 | 81.82 | 21.61 | 63.172 | 100.00 | 97.87 |
| 29 | 155.695 | 100.00 | 39.71 | 490.242 | 74.42 | 14.97 | - | - | - |
| 30 | 268.125 | 95.65 | 22.84 | 157.219 | 92.00 | 39.27 | 91.914 | 92.00 | 67.17 |
| 31 | 228.21 | 100.00 | 27.09 | 232.27 | 86.21 | 27.40 | - | - | - |
| 32 | 146.047 | 100.00 | 42.33 | 128.375 | 100.00 | 48.16 | 111.475 | 88.89 | 56.15 |
| Mean | 255.115 | 91.04 | 34.98 | 230.229 | 89.77 | 37.51 | 73.840 | 97.70 | 86.96 |
| SD | 275.114 | 9.78 | 14.55 | 155.121 | 8.90 | 17.66 | 15.622 | 4.05 | 15.33 |
| Literacy rate (%) | 75.00 | | | 90.63 | | | 78.13 | | |
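The ITR (bpm) columns in the per-subject table can be cross-checked with the standard information transfer rate formula of Wolpaw et al. The sketch below is an illustration, not the authors' code. It assumes N = 30 selectable targets for all three systems. It also assumes command counts inferred from the table rather than stated in it: 21 commands for an error-free run, and 33 commands (27 correct) for a run with 81.82% accuracy. The function names `bits_per_selection` and `itr_bpm` are hypothetical.

```python
import math


def bits_per_selection(n_targets: int, accuracy: float) -> float:
    """Bits conveyed by one selection (Wolpaw formula).

    B = log2(N) + P*log2(P) + (1 - P)*log2((1 - P) / (N - 1))
    """
    if n_targets < 2:
        raise ValueError("n_targets must be at least 2")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    bits = math.log2(n_targets)
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits


def itr_bpm(n_targets: int, accuracy: float,
            n_commands: int, time_s: float) -> float:
    """Information transfer rate in bits per minute."""
    if time_s <= 0:
        raise ValueError("time_s must be positive")
    return bits_per_selection(n_targets, accuracy) * n_commands * 60.0 / time_s


if __name__ == "__main__":
    # Subject 5, SSVEP speller: 100% accuracy, 91.711 s, assumed 21 commands.
    print(round(itr_bpm(30, 1.0, 21, 91.711), 2))
    # Subject 1, SSVEP speller: assumed 27 of 33 commands correct, 184.133 s.
    print(round(itr_bpm(30, 27 / 33, 33, 184.133), 2))
```

Under these assumptions the two calls give roughly 67.41 bpm and 35.91 bpm, which agrees with the tabulated values for subjects 5 and 1. This suggests, but does not prove, that the table was computed this way.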

| System | Accuracy (%) | ITR (bpm) | Char/Min | Time (s) |
|---|---|---|---|---|
| SSVEP speller | 90.81 (8.89) | 35.78 (13.36) | 8.65 (2.62) | 206.894 (96.051) |
| Hybrid | 93.87 (5.57) | 46.13 (15.75) | 10.60 (3.17) | 150.781 (56.637) |
| Eyegaze speller | 98.46 (3.27) | 89.60 (14.14) | 18.78 (2.23) | 70.950 (13.659) |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Stawicki, P.; Gembler, F.; Rezeika, A.; Volosyak, I. A Novel Hybrid Mental Spelling Application Based on Eye Tracking and SSVEP-Based BCI. Brain Sci. 2017, 7, 35. https://doi.org/10.3390/brainsci7040035
