Induced and Evoked Brain Activation Related to the Processing of Onomatopoetic Verbs

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

2.2. Stimuli

- Has the process implied by the verb anything to do with liquids?

- Is the process implied by the verb performed with the mouth?

- Is the process implied by the verb performed with an electric tool?

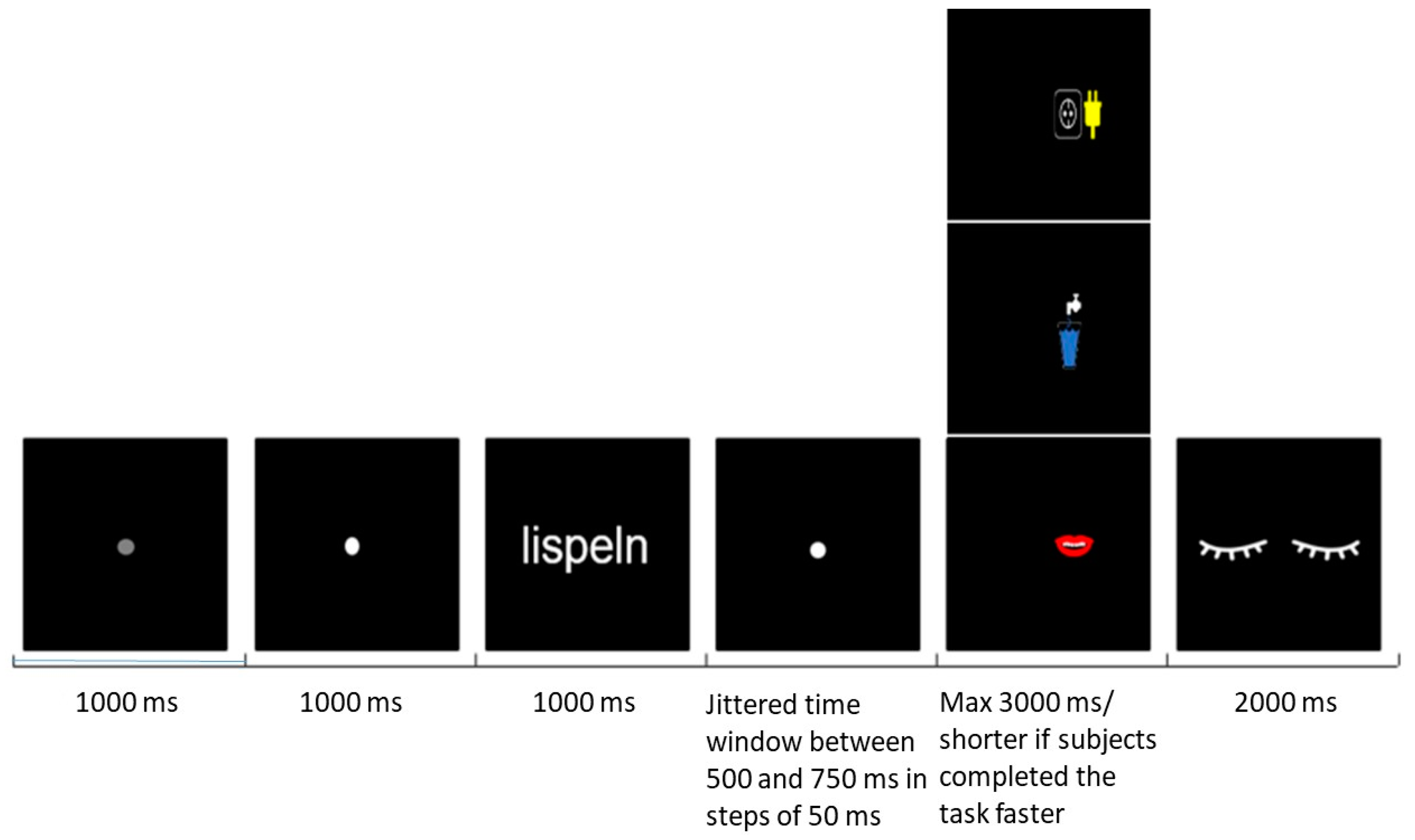

2.3. Procedures

2.4. Data Acquisition and Analysis

2.5. MEG Data Pre-Processing

2.6. Time–Frequency Representations and Event-Related Field Analysis

2.7. Statistics

3. Results

3.1. Behavioural Results

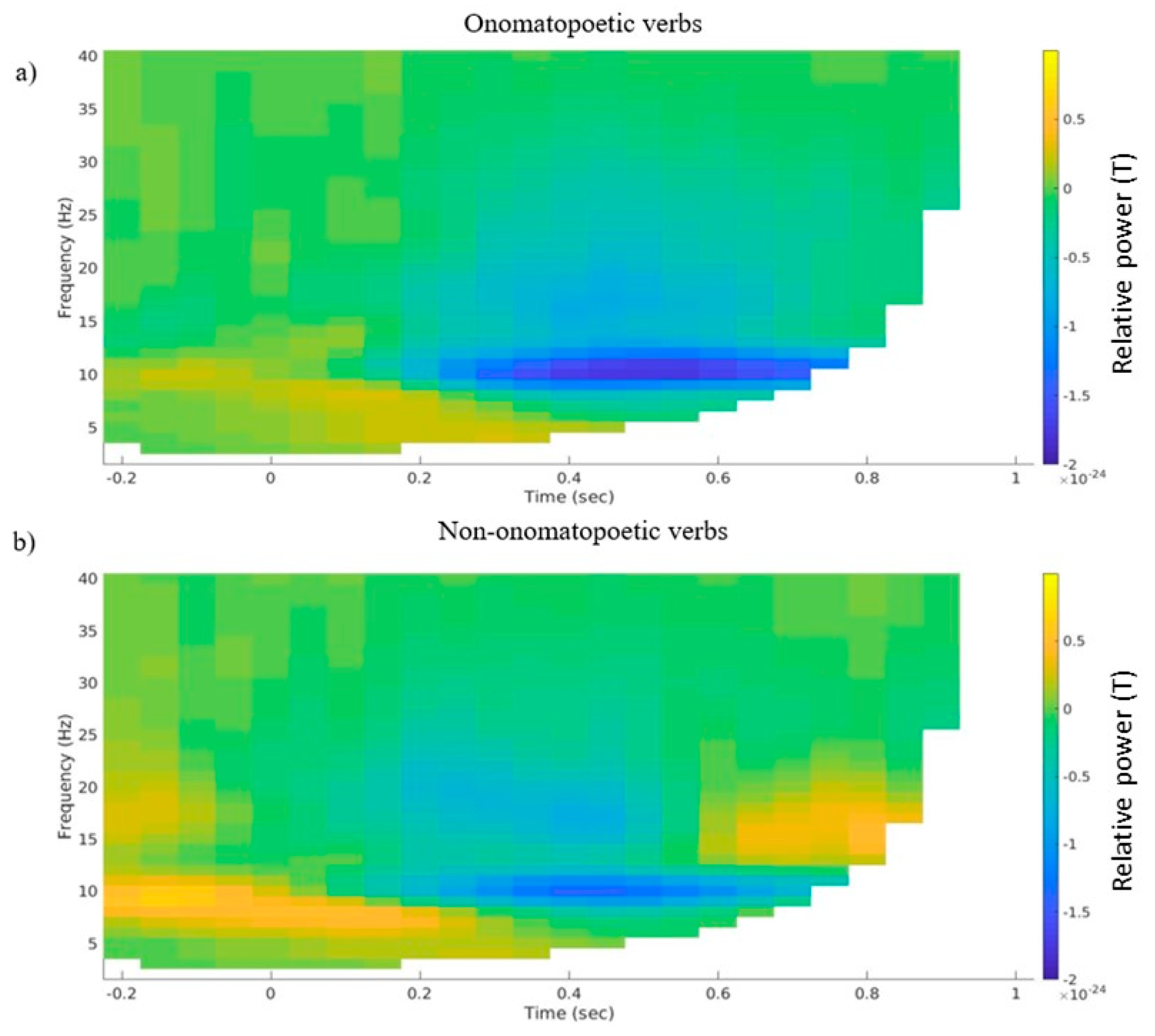

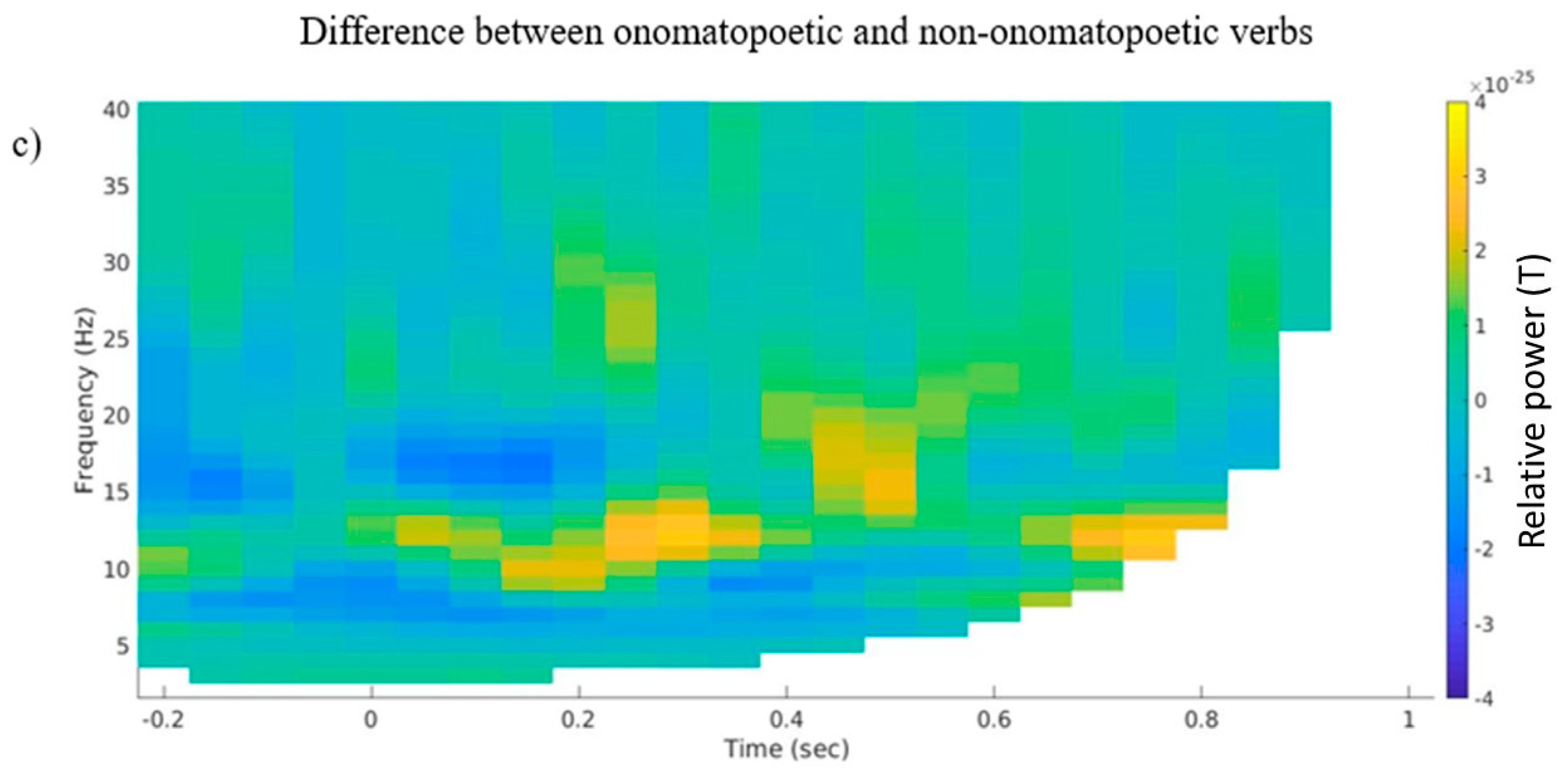

3.2. Time–Frequency Representations

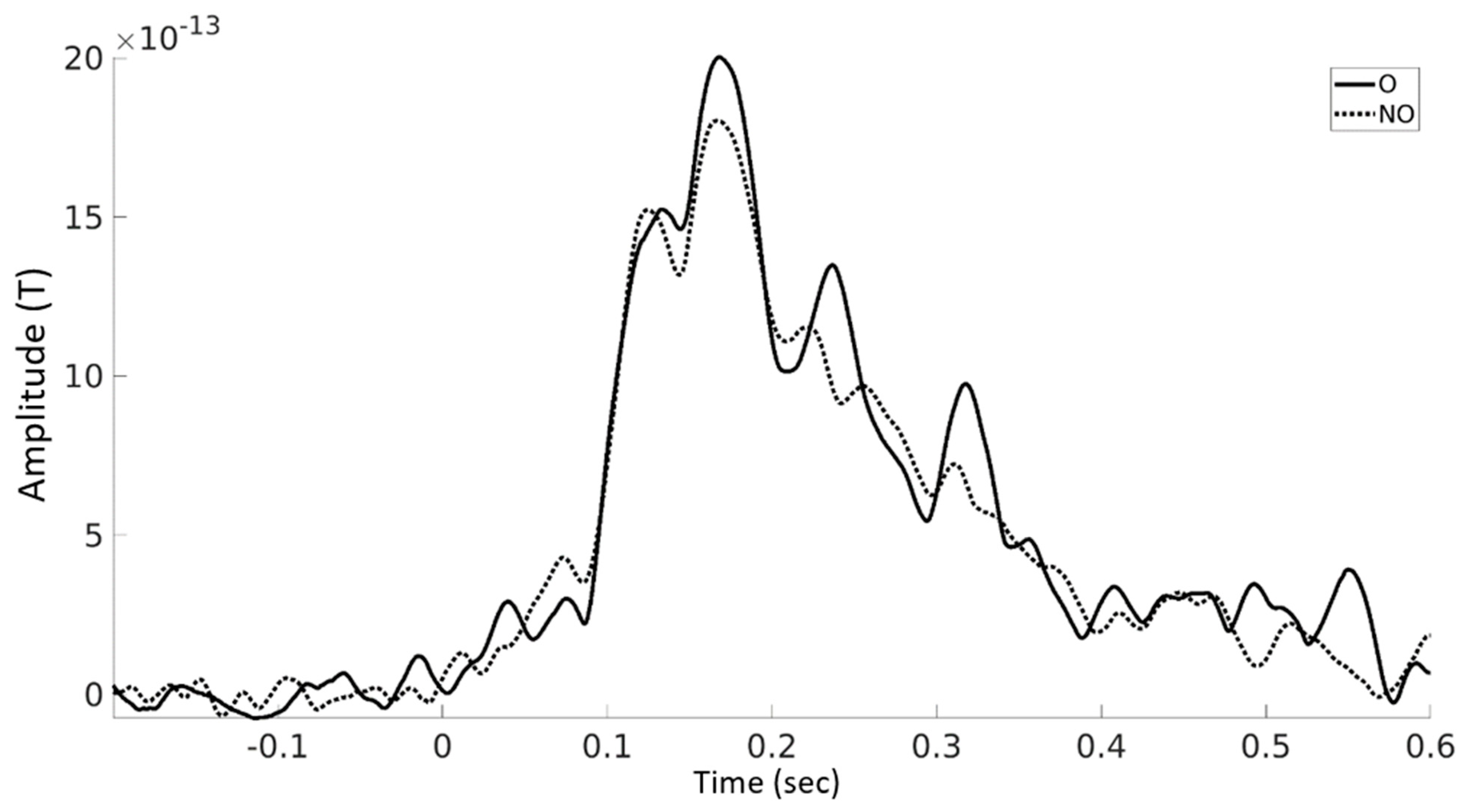

3.3. Event-Related Fields

4. Discussion

Clinical Applications

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

References

- Barsalou, L.W. Grounded Cognition. Annu. Rev. Psychol. 2008, 59, 617–645.

- Aziz-Zadeh, L.; Wilson, S.M.; Rizzolatti, G.; Iacoboni, M. Congruent Embodied Representations for Visually Presented Actions and Linguistic Phrases Describing Actions. Curr. Biol. 2006, 16, 1818–1823.

- Boulenger, V.; Hauk, O.; Pulvermüller, F. Grasping Ideas with the Motor System: Semantic Somatotopy in Idiom Comprehension. Cereb. Cortex 2009, 19, 1905–1914.

- Kemmerer, D.; Castillo, J.G.; Talavage, T.; Patterson, S.; Wiley, C. Neuroanatomical distribution of five semantic components of verbs: Evidence from fMRI. Brain Lang. 2008, 107, 16–43.

- Klepp, A.; Weissler, H.; Niccolai, V.; Terhalle, A.; Geisler, H.; Schnitzler, A.; Biermann-Ruben, K. Neuromagnetic hand and foot motor sources recruited during action verb processing. Brain Lang. 2014, 128, 41–52.

- Niccolai, V.; Klepp, A.; Weissler, H.; Hoogenboom, N.; Schnitzler, A.; Biermann-Ruben, K. Grasping Hand Verbs: Oscillatory Beta and Alpha Correlates of Action-Word Processing. PLoS ONE 2014, 9, e108059.

- Rüschemeyer, S.-A.; Brass, M.; Friederici, A.D. Comprehending Prehending: Neural Correlates of Processing Verbs with Motor Stems. J. Cogn. Neurosci. 2007, 19, 855–865.

- Tettamanti, M.; Buccino, G.; Saccuman, M.C.; Gallese, V.; Danna, M.; Scifo, P.; Fazio, F.; Rizzolatti, G.; Cappa, S.F.; Perani, D. Listening to Action-related Sentences Activates Fronto-parietal Motor Circuits. J. Cogn. Neurosci. 2005, 17, 273–281.

- Kiefer, M.; Sim, E.-J.; Herrnberger, B.; Grothe, J.; Hoenig, K. The Sound of Concepts: Four Markers for a Link between Auditory and Conceptual Brain Systems. J. Neurosci. 2008, 28, 12224–12230.

- Cao, L.; Klepp, A.; Schnitzler, A.; Gross, J.; Biermann-Ruben, K. Auditory perception modulated by word reading. Exp. Brain Res. 2016, 234, 3049–3057.

- Engel, A.K.; Fries, P. Beta-band oscillations—signalling the status quo? Curr. Opin. Neurobiol. 2010, 20, 156–165.

- Pfurtscheller, G.; Stancák, A.; Neuper, C. Event-related synchronization (ERS) in the alpha band—An electrophysiological correlate of cortical idling: A review. Int. J. Psychophysiol. 1996, 24, 39–46.

- Weisz, N.; Hartmann, T.; Müller, N.; Lorenz, I.; Obleser, J. Alpha Rhythms in Audition: Cognitive and Clinical Perspectives. Front. Psychol. 2011, 2, 73.

- Niccolai, V.; Klepp, A.; van Dijk, H.; Schnitzler, A.; Biermann-Ruben, K. Auditory cortex sensitivity to the loudness attribute of verbs. Brain Lang. 2020, 202, 104726.

- Han, J.-H.; Choi, W.; Chang, Y.; Jeong, O.-R.; Nam, K. Neuroanatomical Analysis for Onomatopoeia and Phainomime Words: fMRI Study. In Advances in Natural Computation. ICNC 2005. Lecture Notes in Computer Science; Wang, L., Chen, K., Ong, Y.S., Eds.; Springer: Berlin/Heidelberg, Germany, 2005; Volume 3610.

- Hinton, L. (Ed.) Sound Symbolism; Cambridge University Press: Cambridge, UK, 1997 (transferred to digital printing).

- Osaka, N. Walk-related mimic word activates the extrastriate visual cortex in the human brain: An fMRI study. Behav. Brain Res. 2009, 198, 186–189.

- Osaka, N. Ideomotor response and the neural representation of implied crying in the human brain: An fMRI study using onomatopoeia. Jpn. Psychol. Res. 2011, 53, 372–378.

- Osaka, N.; Osaka, M. Gaze-related mimic word activates the frontal eye field and related network in the human brain: An fMRI study. Neurosci. Lett. 2009, 461, 65–68.

- Osaka, N.; Osaka, M.; Kondo, H.; Morishita, M.; Fukuyama, H.; Shibasaki, H. An emotion-based facial expression word activates laughter module in the human brain: A functional magnetic resonance imaging study. Neurosci. Lett. 2003, 340, 127–130.

- Osaka, N.; Osaka, M.; Morishita, M.; Kondo, H.; Fukuyama, H. A word expressing affective pain activates the anterior cingulate cortex in the human brain: An fMRI study. Behav. Brain Res. 2004, 153, 123–127.

- Hashimoto, T.; Usui, N.; Taira, M.; Nose, I.; Haji, T.; Kojima, S. The neural mechanism associated with the processing of onomatopoeic sounds. NeuroImage 2006, 31, 1762–1770.

- Lockwood, G.; Tuomainen, J. Ideophones in Japanese modulate the P2 and late positive complex responses. Front. Psychol. 2015, 6, 933.

- Kanero, J.; Imai, M.; Okuda, J.; Okada, H.; Matsuda, T. How Sound Symbolism Is Processed in the Brain: A Study on Japanese Mimetic Words. PLoS ONE 2014, 9, e97905.

- Manfredi, M.; Cohn, N.; Kutas, M. When a hit sounds like a kiss: An electrophysiological exploration of semantic processing in visual narrative. Brain Lang. 2017, 169, 28–38.

- Egashira, Y.; Choi, D.; Motoi, M.; Nishimura, T.; Watanuki, S. Differences in Event-Related Potential Responses to Japanese Onomatopoeias and Common Words. Psychology 2015, 6, 1653–1660.

- Cummings, A.; Čeponienė, R.; Koyama, A.; Saygin, A.; Townsend, J.; Dick, F. Auditory semantic networks for words and natural sounds. Brain Res. 2006, 1115, 92–107.

- Peeters, D. Processing consequences of onomatopoeic iconicity in spoken language comprehension. In Proceedings of the 38th Annual Meeting of the Cognitive Science Society (CogSci 2016), Philadelphia, PA, USA, 10–13 August 2016; Cognitive Science Society; pp. 1632–1647.

- Oldfield, R.C. The assessment and analysis of handedness: The Edinburgh inventory. Neuropsychologia 1971, 9, 97–113.

- Knecht, S.; Dräger, B.; Deppe, M.; Bobe, L.; Lohmann, H.; Flöel, A.; Ringelstein, E.-B.; Henningsen, H. Handedness and hemispheric language dominance in healthy humans. Brain 2000, 123, 2512–2518.

- Perani, D.; Dehaene, S.; Grassi, F.; Cohen, L.; Cappa, S.F.; Dupoux, E.; Fazio, F.; Mehler, J. Brain processing of native and foreign languages. NeuroReport 1996, 7, 2439–2444.

- Sakamoto, M.; Ueda, Y.; Doizaki, R.; Shimizu, Y. Communication Support System Between Japanese Patients and Foreign Doctors Using Onomatopoeia to Express Pain Symptoms. J. Adv. Comput. Intell. Intell. Inform. 2014, 18, 1020–1025.

- Van Casteren, M.; Davis, M.H. Match: A program to assist in matching the conditions of factorial experiments. Behav. Res. Methods 2007, 39, 973–978.

- Oostenveld, R.; Fries, P.; Maris, E.; Schoffelen, J.-M. FieldTrip: Open Source Software for Advanced Analysis of MEG, EEG, and Invasive Electrophysiological Data. Comput. Intell. Neurosci. 2010, 2011, 156869.

- R Development Core Team. R: A Language and Environment for Statistical Computing; R Foundation for Statistical Computing: Vienna, Austria, 2013; Available online: https://www.R-project.org/ (accessed on 28 January 2019).

- Jung, T.-P.; Makeig, S.; Westerfield, M.; Townsend, J.; Courchesne, E.; Sejnowski, T.J. Removal of eye activity artifacts from visual event-related potentials in normal and clinical subjects. Clin. Neurophysiol. 2000, 111, 1745–1758.

- Maris, E.; Oostenveld, R. Nonparametric statistical testing of EEG- and MEG-data. J. Neurosci. Methods 2007, 164, 177–190.

- Shtyrov, Y.; Hauk, O.; Pulvermüller, F. Distributed neuronal networks for encoding category-specific semantic information: The mismatch negativity to action words. Eur. J. Neurosci. 2004, 19, 1083–1092.

- Assadollahi, R.; Rockstroh, B. Neuromagnetic brain responses to words from semantic sub- and supercategories. BMC Neurosci. 2005, 6, 57.

- Ortigue, S.; Michel, C.M.; Murray, M.M.; Mohr, C.; Carbonnel, S.; Landis, T. Electrical neuroimaging reveals early generator modulation to emotional words. NeuroImage 2004, 21, 1242–1251.

- Kelly, A.C.; Uddin, L.Q.; Biswal, B.B.; Castellanos, F.X.; Milham, M.P. Competition between functional brain networks mediates behavioral variability. NeuroImage 2008, 39, 527–537.

- Niccolai, V.; Klepp, A.; Indefrey, P.; Schnitzler, A.; Biermann-Ruben, K. Semantic discrimination impacts tDCS modulation of verb processing. Sci. Rep. 2017, 7, 17162.

- Weiss, S.; Mueller, H.M. “Too Many betas do not Spoil the Broth”: The Role of Beta Brain Oscillations in Language Processing. Front. Psychol. 2012, 3, 201.

- Pulvermüller, F.; Berthier, M.L. Aphasia therapy on a neuroscience basis. Aphasiology 2008, 22, 563–599.

- Ghio, M.; Locatelli, M.; Tettamanti, A.; Perani, D.; Gatti, R.; Tettamanti, M. Cognitive training with action-related verbs induces neural plasticity in the action representation system as assessed by gray matter brain morphometry. Neuropsychologia 2018, 114, 186–194.

- Durand, E.; Berroir, P.; Ansaldo, A.I. The Neural and Behavioral Correlates of Anomia Recovery following Personalized Observation, Execution, and Mental Imagery Therapy: A Proof of Concept. Neural Plast. 2018, 2018, 5943759.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Röders, D.; Klepp, A.; Schnitzler, A.; Biermann-Ruben, K.; Niccolai, V. Induced and Evoked Brain Activation Related to the Processing of Onomatopoetic Verbs. Brain Sci. 2022, 12, 481. https://doi.org/10.3390/brainsci12040481
