Viewers Change Eye-Blink Rate by Predicting Narrative Content

Abstract

1. Introduction

1.1. Content: Storytelling and Attention

1.2. Style: Media and Attention

1.3. Synchronization in Media Perception

2. Materials and Methods

2.1. Participants

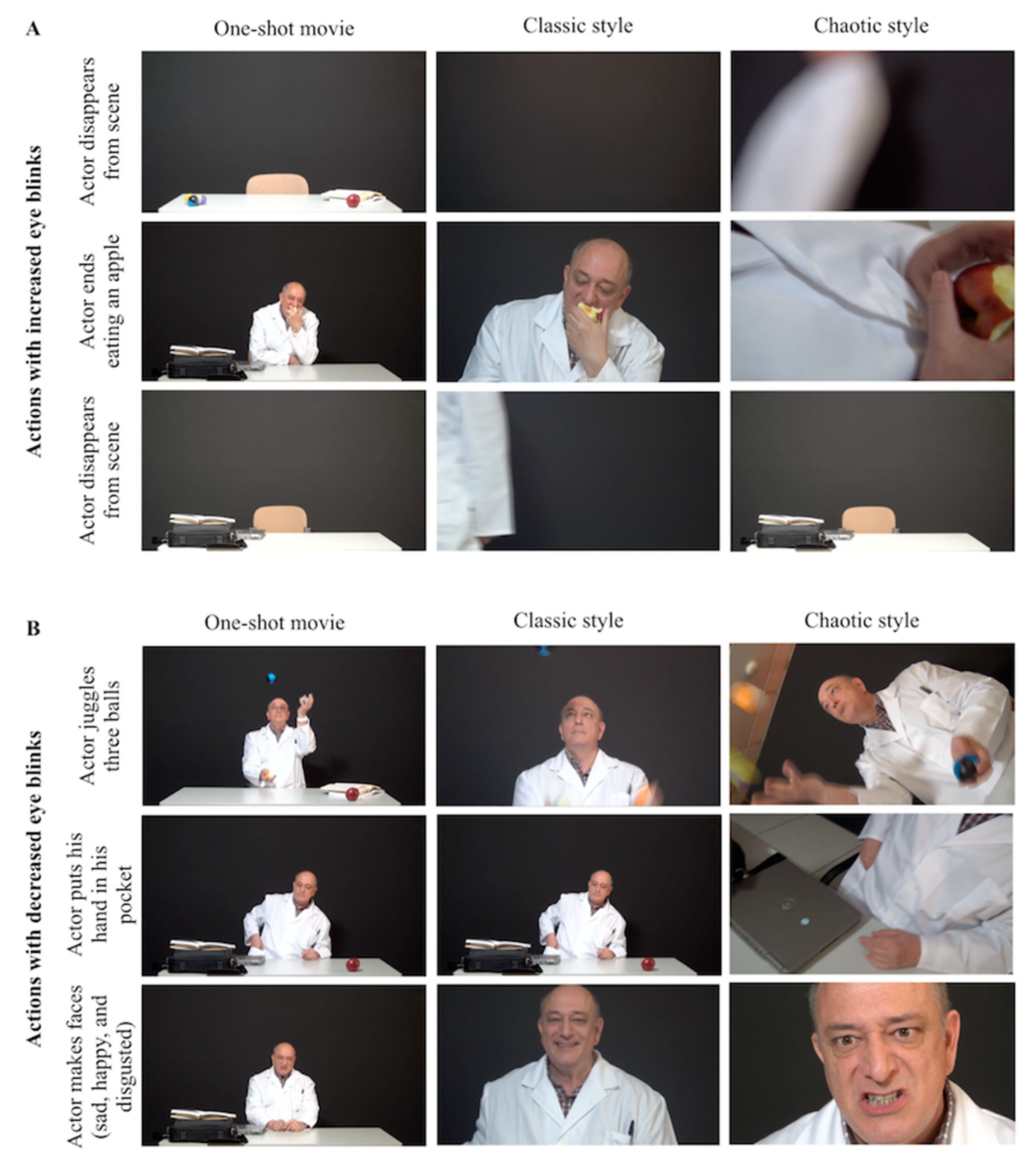

2.2. Stimuli

2.3. Data Acquisition

2.4. Data Analysis

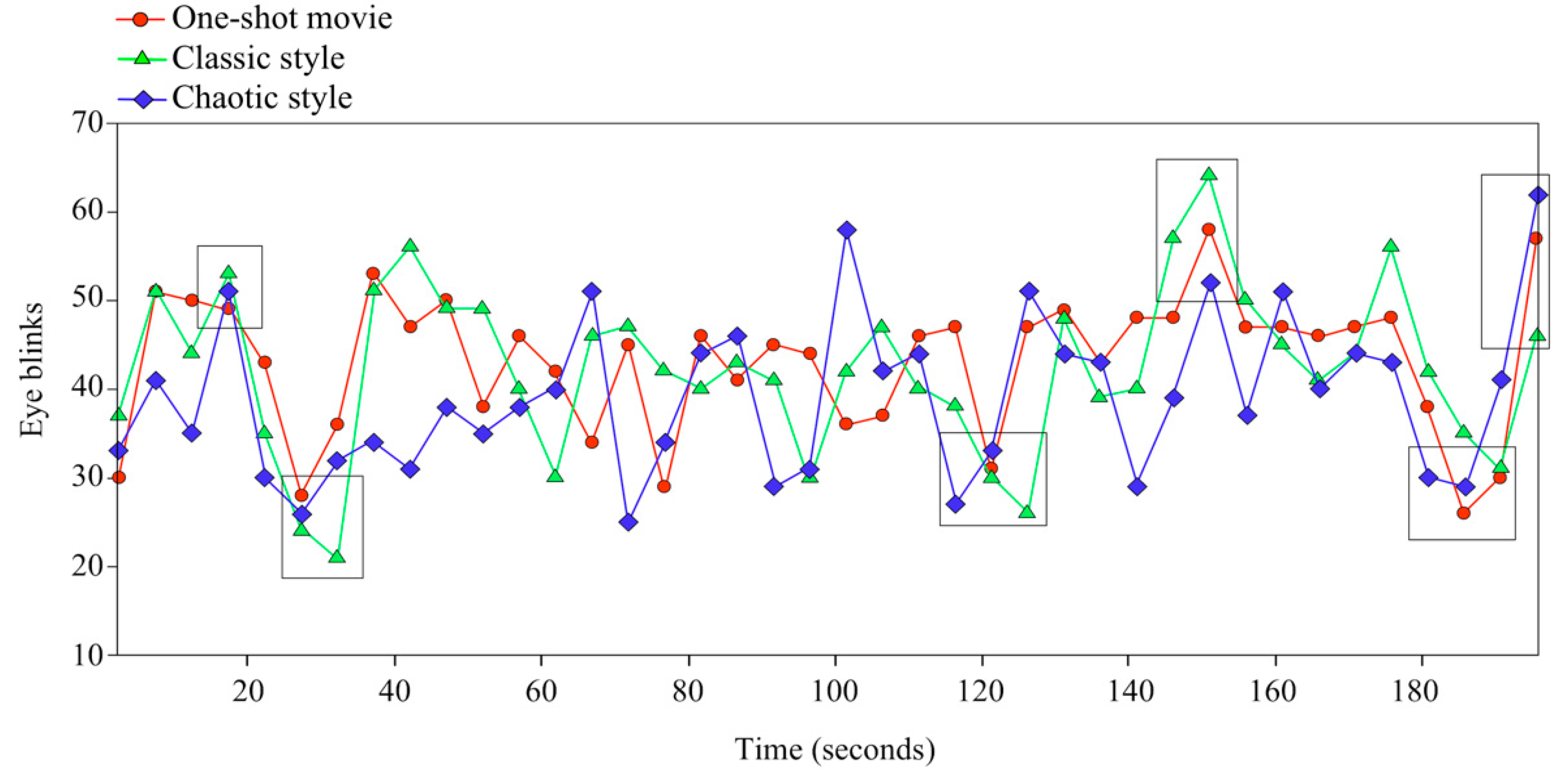

3. Results

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Andreu-Sánchez, C.; Martín-Pascual, M.Á.; Gruart, A.; Delgado-García, J.M. Viewers Change Eye-Blink Rate by Predicting Narrative Content. Brain Sci. 2021, 11, 422. https://doi.org/10.3390/brainsci11040422
