Consciousness Is a Thing, Not a Process

Abstract

Featured Application

1. Introduction: What Is Meant By ‘Consciousness’?

2. Phenomenal Consciousness Aka Sensory Experience

2.1. The Central Dogma of Cognitive Science

2.2. Brain Processes Wrongly Equated with Sensory Consciousness

2.2.1. Focal Aka Top-Down Attention

2.2.2. Processes Occurring in ‘the Global Workspace’

2.2.3. Prefrontal Activity

2.3. Sequence of Post-Stimulus Neural Activity

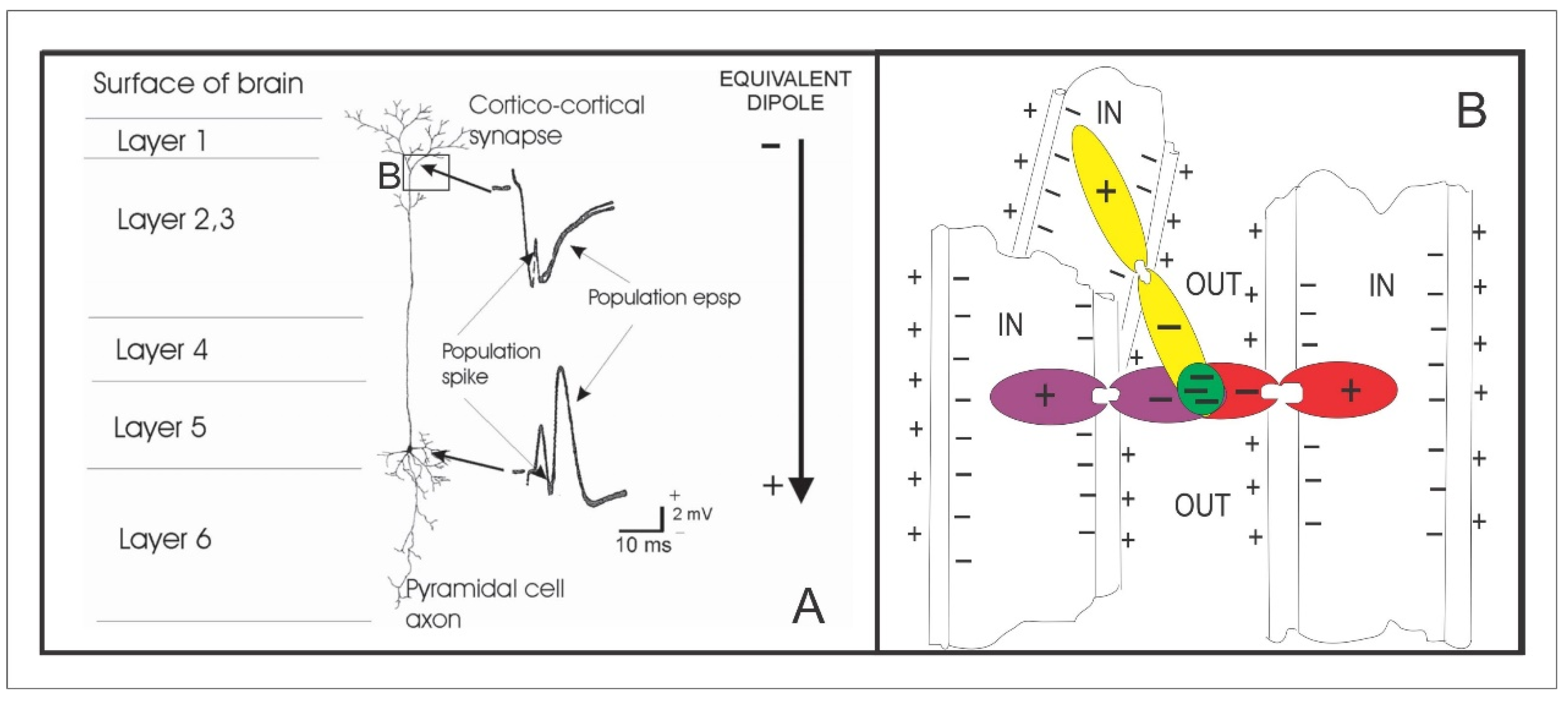

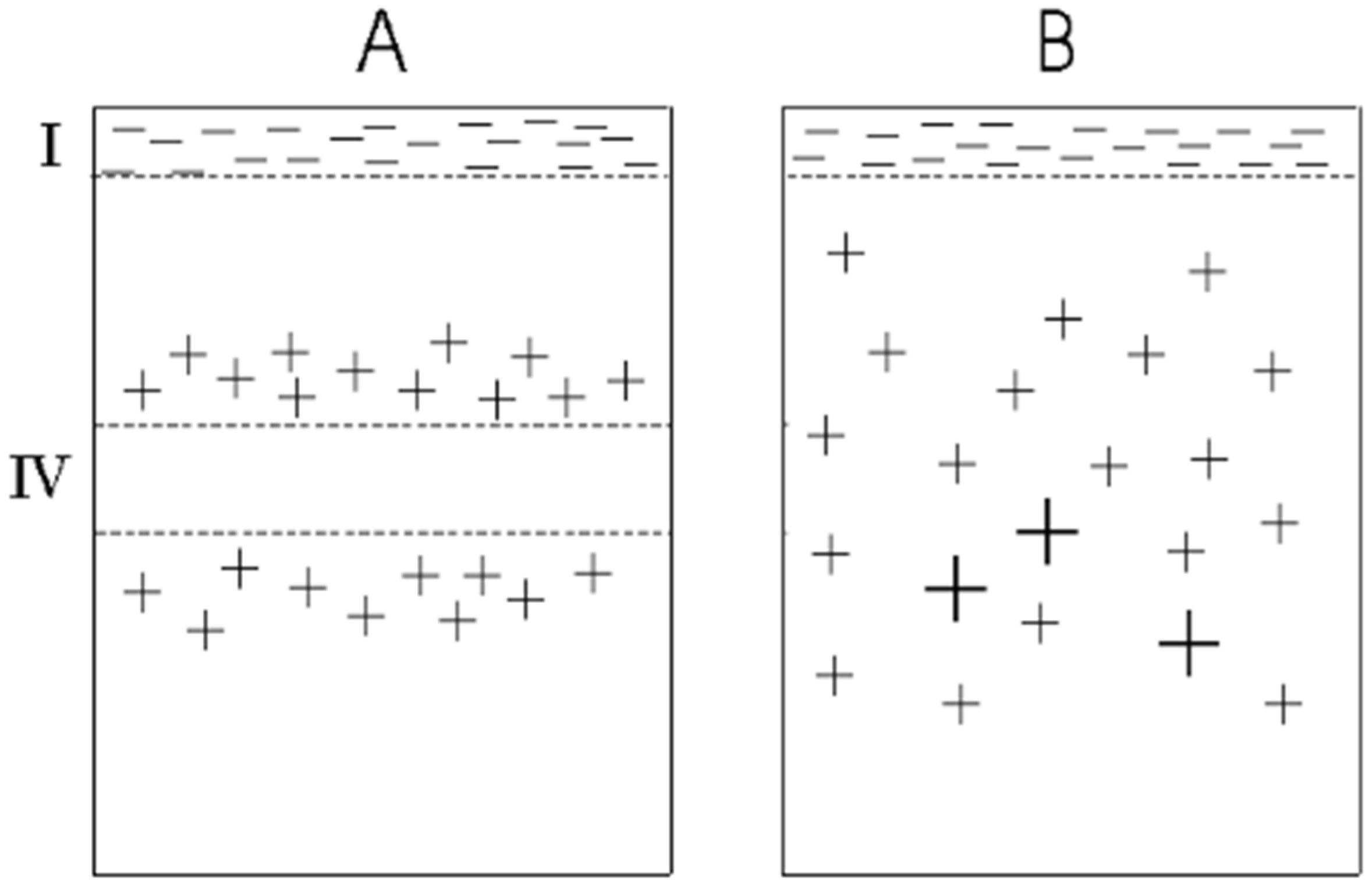

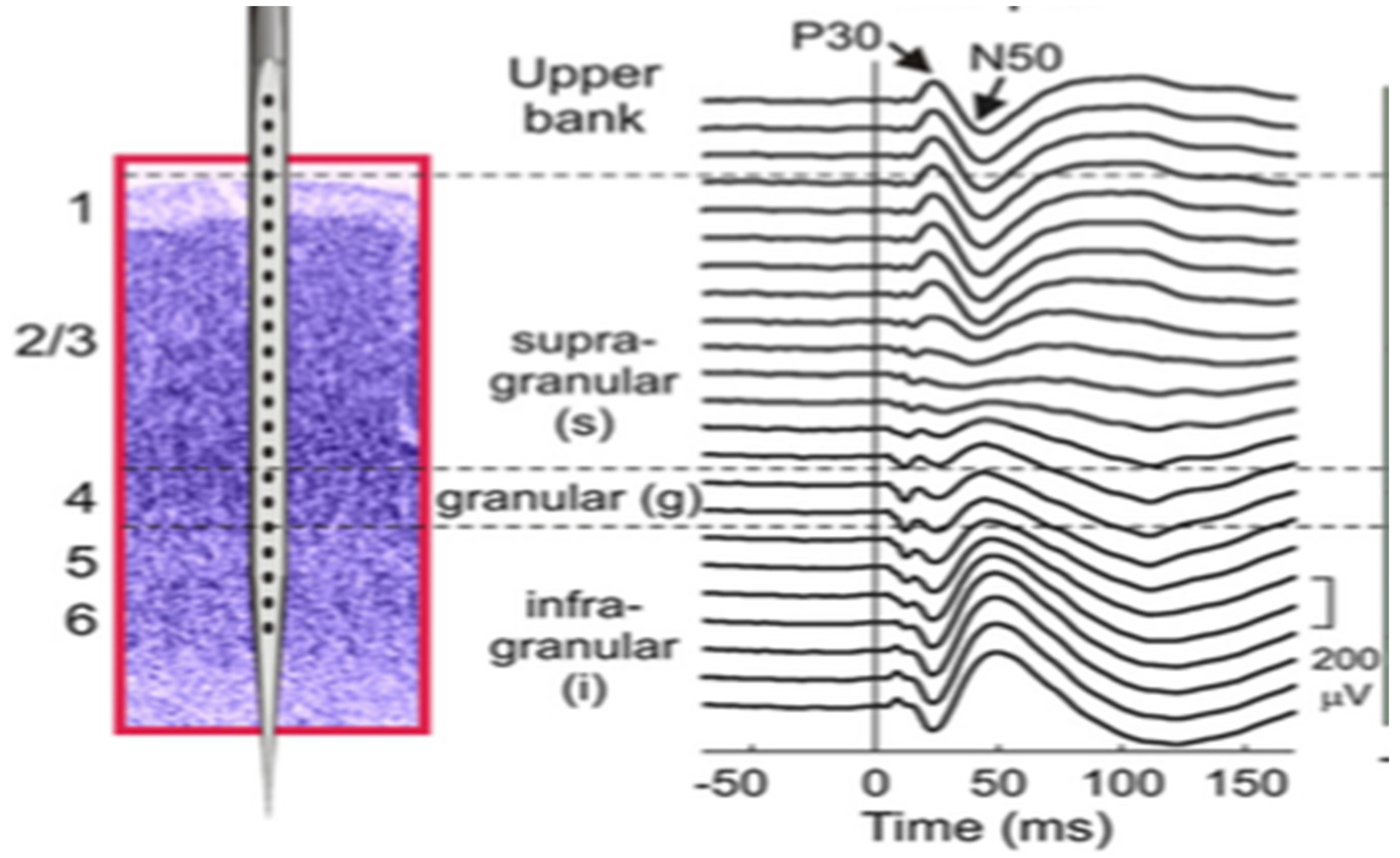

2.4. Neurophysiological Difference between Feed-Forward and Feed-Back Activity in Primary Sensory Cortex

2.5. What Manner of Thing Is Consciousness?

3. Are the Neural Processes That Result in Voluntary Actions Conscious?

3.1. The Anatomy of Action

3.2. Experimental Evidence on the Relationship of Consciousness to Action

3.3. Distinguishing Anatomical Feature of Motor Cortex and Predicted Characteristics of Conscious vs. Unconscious Fields

3.4. Long-Term Planning of Voluntary Actions

4. The Electromagnetic Field Theory of Consciousness

4.1. Proposed Characteristics of Conscious EM Fields

4.2. A Manifesto for Future Experimental Testing of the EM Field Theory of Consciousness

4.3. Implications for the Construction of Artificial Consciousness

4.4. Implications for Neuroscience

Acknowledgments

Conflicts of Interest

© 2017 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Pockett, S. Consciousness Is a Thing, Not a Process. Appl. Sci. 2017, 7, 1248. https://doi.org/10.3390/app7121248