1. Introduction

E-commerce platforms are characterized by openness and transparency, fierce competition within product categories, product diversification, and review functions. As a result, consumers’ perceptions and evaluations of goods have moved from in-store staff to public view online, where users can share their views on goods and merchants and express their feelings at any time and from anywhere. Kiran et al. (2020) consider that online shopping brings convenience, but that the virtual nature of e-commerce platforms can result in online product descriptions that do not match the real product, as well as poor product quality or after-sales service that fails to meet consumers’ needs [1]. Once a transaction is concluded, users’ subjective product reviews convey a certain sentiment inclination. Kaur and Sharmav (2023), Shuang et al. (2020), and Vijayaragavan et al. (2020) consider that these reviews serve a dual purpose as both “information providers” and “emotional influencers”, significantly impacting merchants [2,3,4]. Not only do they influence a store’s reputation, but they also affect the long-term sales of its products. To help merchants understand the sentiment tendencies within reviews, it is necessary to categorize and analyze product review information and determine whether the feedback is positive or negative. This enables merchants to refine their stores and products based on the emotional orientation of the review data, ultimately improving user satisfaction, product sales, and store ratings.

The evaluation of review information is not solely influenced by subjective factors such as consumers’ personal preferences, emotions, and personalities; it also correlates strongly with the objective factor of product quality itself. To conduct sentiment analysis on review data, the first step is to extract text features. Feature selection aims to extract pertinent information from raw attribute sets while reducing data dimensionality. Yuting Yang et al. encoded text into high-dimensional vectors carrying contextual semantic, sequential, and sentiment information through the Doc2Vec model and verified the effectiveness of the distributed document representation approach [5]. These attribute sets can be derived from dictionaries or from commonly employed statistical methods [6].

Following feature extraction, the next step in sentiment analysis is sentiment categorization. This can be accomplished through various approaches, including machine learning, lexicon-based methods, and deep learning techniques [7]. Sentiment analysis models that rely on polarity lexicons are frequently used for sentiment classification; however, the availability of sentiment lexicons remains limited. Machine learning methods have distinct advantages over sentiment dictionaries for nonlinear, high-dimensional pattern recognition problems.
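To make the lexicon-based route concrete, the following minimal sketch scores a tokenized review against a polarity lexicon. The lexicon entries, their weights, the negator list, and the function name `lexicon_polarity` are illustrative assumptions rather than resources used in this paper, and English tokens are used only for readability.

```python
# Toy polarity lexicon; the words and weights are illustrative assumptions.
LEXICON = {"good": 1, "delicious": 2, "fast": 1, "bad": -1, "cold": -1, "terrible": -2}
NEGATORS = {"not", "never", "no"}


def lexicon_polarity(tokens):
    """Sum lexicon weights, flipping the sign of a word that follows a negator."""
    score = 0
    for i, tok in enumerate(tokens):
        weight = LEXICON.get(tok, 0)  # out-of-lexicon words contribute nothing
        if i > 0 and tokens[i - 1] in NEGATORS:
            weight = -weight
        score += weight
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"
```

A review whose sentiment words are all missing from the lexicon is scored as neutral, which illustrates why limited lexicon coverage is a practical constraint for this family of methods.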

Given that comments encompass a wealth of content, traditional neural network models may struggle to fully capture the complete context of a sentence or comment. Therefore, novel neural networks must employ different types of word embeddings. For instance, utilizing RNN variants like Bidirectional LSTM (BiLSTM) and Bidirectional GRU (BiGRU) proves beneficial for sentiment analysis tasks.

Traditional text data mining methodologies tend to overlook the relevant information pertaining to the online products themselves, particularly the descriptive textual details regarding product quality and the potential disparities between depicted images and the actual unadorned goods. In our contemporary reality, individuals exist within a multimodal and interconnected environment, wherein various forms of information modalities, including text, speech, images, and videos, converge. Enriching linguistic expression methods through the utilization of multiple information modalities allows computers to better comprehend and interpret input data, leading to more precise and comprehensive output results [8].

Hence, when analyzing product reviews, it becomes essential to consider multimodal information in order to fully grasp consumers’ emotions. This approach not only enhances the users’ genuine experiences and satisfaction but also improves the merchants’ sales performance. By delving into review information, merchants can tailor their products to align with consumers’ current needs, thereby increasing relevance and appeal.

In order to enable AI to attain a deeper comprehension of the world, it is imperative to endow it with the capacity to learn, comprehend, and reason about multimodal information. Multimodal learning entails constructing models that enable machines to acquire knowledge from multiple modalities, allowing for effective communication and information transformation across each modality. Throughout the course of this research, feature representations were created using multimodal deep learning techniques, encompassing both image and text data. Sivakumar and Rajalakshmi (2022) showed that deep learning methods have achieved wide application in many areas such as image recognition, target detection, and network optimization, and are now also being integrated into sentiment analysis and traditional machine learning techniques. This integration has shown good results, especially in building sentiment vocabularies [9].

In comparison to purely statistical models, machine learning models offer greater diversity and exhibit enhanced capability to capture the diverse features present in multimodal data. These models excel at approximating various nonlinear relationships, while showcasing superior adaptability.

2. Related Works

This section presents a review of the field of sentiment categorization of online review information, highlighting the main areas of research, analytical methods, and conclusions, with a literature review to understand the contributions and applications of each method in their respective areas of research.

Multimodal sentiment analysis has been studied extensively in an attempt to combine data from different modalities (e.g., text, image, and audio) to improve the accuracy and comprehensiveness of sentiment analysis. Previous findings show that methods combining different modalities perform better than single-modal methods: the combined data provide a richer and more comprehensive representation of sentiment and help to improve the accuracy and robustness of sentiment classification, especially when categorizing and understanding sentiments at a more granular level. Various approaches have been used to integrate multimodal data, including feature-level fusion, model-level fusion, and attention mechanisms. Deep learning frameworks such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), and attention mechanisms have been widely used for these tasks and have shown good results, as they help to exploit the association and complementarity between different data modalities.

Pang (2002) first applied N-gram-based machine learning to the field of sentiment analysis, and the experimental results show that the N-gram approach achieved the highest classification accuracy of 81.9% [10]. Since feature selection affects the performance of machine learning methods, Abinash Tripathy (2016) analyzed online review comments through the N-gram model combined with machine learning methods, and the experimental results show that the SVM combined with unigram, bigram, and trigram features obtained the best classification results [11]. Traditional multi-scalar sentiment categorization methods mainly follow two routes: sentiment dictionary-based and machine learning-based. Sentiment lexicon-based methods usually calculate sentiment polarity on a whole-sentence or sub-sentence basis and apply it to all facets involved in a sentence, but they cannot handle the complex mapping relationship between sentences and facets well [12,13]. The core of this approach is the application of affective lexicons; however, the affective tendencies of affective words differ across domains, making it difficult to generalize domain-specific affective lexicons to other domains [14,15].
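As an illustration of the combined unigram, bigram, and trigram features mentioned above, the sketch below counts all contiguous n-grams of a tokenized review. The helper names `ngrams` and `ngram_features` are hypothetical, and the resulting counts would still have to be passed to a classifier such as an SVM, which is not shown here.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return the list of contiguous n-token tuples in `tokens`."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def ngram_features(tokens, max_n=3):
    """Count all n-grams for n = 1..max_n (unigram + bigram + trigram by default)."""
    counts = Counter()
    for n in range(1, max_n + 1):
        counts.update(ngrams(tokens, n))
    return counts


tokens = "the soup was not hot".split()
features = ngram_features(tokens)
```

The bigram `("not", "hot")` appears as a feature of its own, which is one reason higher-order n-grams can help with negation that unigram features miss.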

In particular, Duan Dandan et al. used a short text classification model based on BERT, which utilizes BERT’s own sentence vector training to achieve automatic text classification [

16,

17]. The main objective of multimodal learning is to correlate and process multimodal data by building models. According to the research summary of related scholars, the main content of current multimodal research can be summarized as five levels of multimodal data representation, namely data mapping, data alignment, data fusion, and co-learning [

18,

19]. Most of it revolves around cross-modal mapping between data. As the fields of computer vision and natural language processing continue to evolve, and as more large-scale datasets become available for research, multimodal data mapping methods continue to mature [

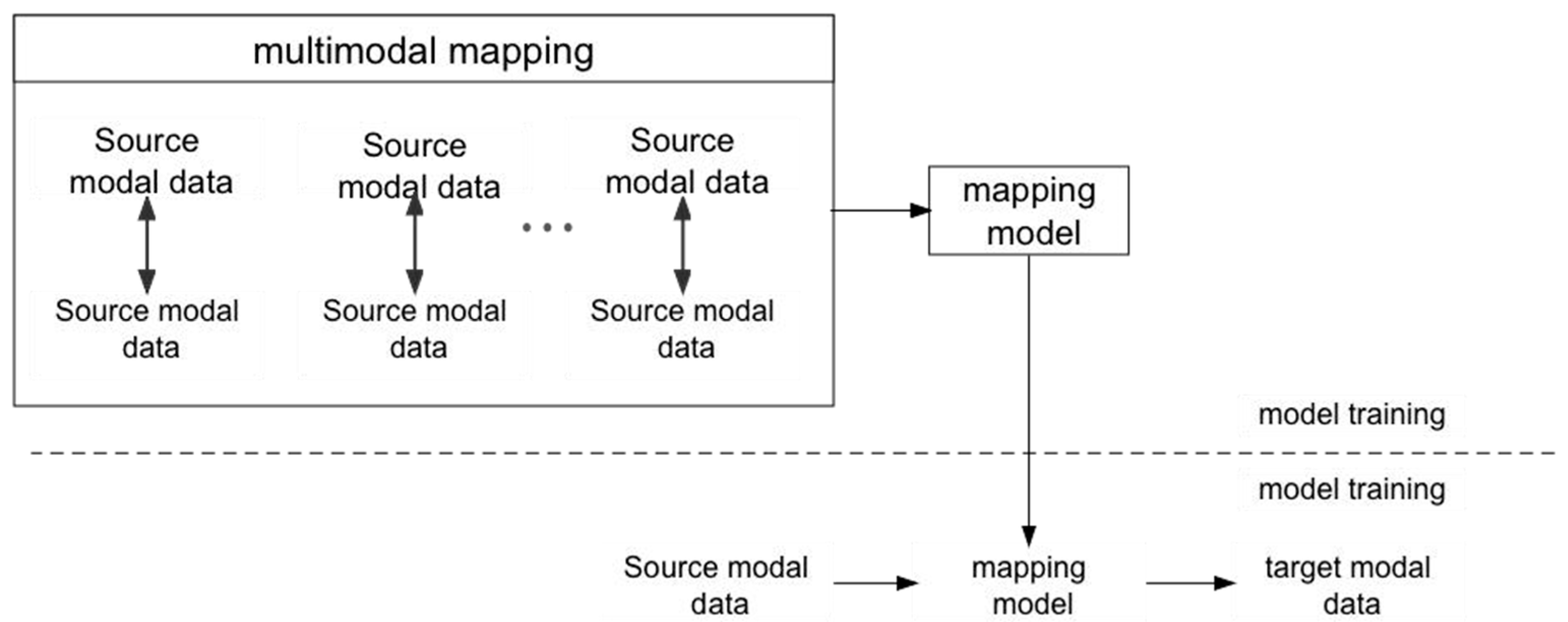

20]. The mainstream multimodal data mapping method is as follows: based on the existing mapping relationship, the existing multimodal data are first symbolized or vectorized, and are then used as the input to the neural network, and then combined with the existing correspondences, mapped to another modal. After continuous training based on massive datasets, a cross-modal data mapping model with universal applicability is obtained. The framework of this multimodal data mapping approach is shown in

Figure 1. One of the most common and widely used scenarios is image semantic recognition [

21,

22], which maps image modal data to textual modal data.

Sentiment analysis of online product review information faces two major challenges: dimension mapping and sentiment word disambiguation. The dimension mapping problem lies in correctly mapping online review texts to dimensions, while sentiment word disambiguation refers to the situation wherein a sentiment word belongs to two or more dimensions. Therefore, sentiment analysis of online reviews is considered a multidimensional classification process [23]. Kim and Hovy (2004) applied the synonyms, antonyms, and hierarchical structures of the WordNet dictionary to analyze the sentiment tendencies of word vectors [24]. Zhu Xiaoliang and other researchers solved the composition classification problem by using the TextRank model to filter key sentence words and combining it with a word embedding model to model documents, in consideration of the problem that the Word2Vec model cannot identify the importance of special words and scene words in Chinese text [25].

The construction of a sentiment lexicon requires a significant amount of human intervention, and its completeness and accuracy have an important impact on the sentiment classification results. On the other hand, machine learning-based methods usually regard multi-scalar sentiment polarity recognition as a sequence-labeling problem, which requires manually designing and labeling features and then training classifiers on them. Common sequence-labeling methods include conditional random fields, maximum entropy, naive Bayes, and support vector machines [26]. Despite the achievements of machine learning methods in multi-scalar sentiment classification, feature engineering is time-consuming and labor-intensive, and the classification results are highly dependent on feature quality.

Research on the content of online review information falls roughly into two categories: one concerns text mining of online review information; the other considers online review information at multiple levels, where the picture information in online reviews has attracted a significant amount of attention in the academic community [27,28]. Zheng Fei et al. showed that a combination of the LDA model and Word2Vec word vectors can be used to model comment text word vectors for sentiment classification when comment texts vary in length and unit schemas are non-uniform [29]. Kim and Hovy (2004) applied the synonyms, antonyms, and hierarchical structures of the WordNet dictionary to analyze the sentiment tendencies of word vectors [30]. Zhu Xiaoliang and other researchers solved the composition classification problem by using the TextRank model to filter key sentence words and combining it with a word embedding model to model documents, in consideration of the problem that the Word2Vec model cannot identify the importance of special words and scene words in Chinese text [31]. Zhang Qian et al. proposed introducing the TF-IDF model to weight the output word vector matrix, obtaining a weighted text vectorization model that is then used for classification [32]. Yuting Yang et al. encoded text into high-dimensional vectors carrying contextual semantic, sequential, and sentiment information through the Doc2Vec model and verified the effectiveness of the distributed document representation approach [33].
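The idea of weighting word vectors by TF-IDF can be sketched as follows. This is a generic illustration under stated assumptions, not the cited authors’ implementation: the function names, the unsmoothed IDF formula log(N/df), and the tiny two-dimensional embeddings are all hypothetical.

```python
import math
from collections import Counter


def tfidf_weights(docs):
    """Return one {word: tf-idf} dict per tokenized document."""
    n_docs = len(docs)
    doc_freq = Counter(word for doc in docs for word in set(doc))
    weights = []
    for doc in docs:
        tf = Counter(doc)
        weights.append({
            w: (tf[w] / len(doc)) * math.log(n_docs / doc_freq[w])
            for w in tf
        })
    return weights


def weighted_doc_vector(doc_weights, embeddings, dim):
    """TF-IDF-weighted sum of word vectors, normalized by the total weight."""
    vec = [0.0] * dim
    total = 0.0
    for word, w in doc_weights.items():
        if word in embeddings:
            total += w
            for k in range(dim):
                vec[k] += w * embeddings[word][k]
    return [v / total for v in vec] if total > 0 else vec


docs = [["soup", "cold"], ["soup", "tasty"]]
embeddings = {"soup": [1.0, 0.0], "cold": [0.0, -1.0], "tasty": [0.0, 1.0]}  # toy vectors
weights = tfidf_weights(docs)
doc0 = weighted_doc_vector(weights[0], embeddings, dim=2)
```

Because "soup" occurs in every document, its weight is zero and the document vector is driven entirely by the distinguishing word, which is the effect the weighting is meant to achieve.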

Du Lin et al. obtained word vectors by inputting the text into the BERT model and then fed them, in sequence order, into a BiLSTM with self-attention to extract and automatically classify the text of Chinese medical records [34]. Yunfei Shao et al. used LDA and TF-IDF to expand the input text features and then further aggregated the features with a CNN to form classification basis vectors, which improved the classification effect on news headline data [35,36]. Qu and Wang (2018) proposed a sentiment analysis model based on hierarchical attention networks with a 5% improvement in accuracy over recurrent neural networks [37]. Tao, Zhiyong et al. fused the bidirectional features of the BiLSTM model and used them for attention weight computation, achieving improved classification results on various benchmark datasets [38].

In particular, Duan Dandan et al. used a short text classification model based on BERT, which utilizes BERT’s own sentence vector training to achieve automatic text classification [39,40]. Zhao Liang considers multimodal data to be data obtained through different domains or perspectives for the same descriptive object, and calls each domain or perspective describing these data a modality [41]. Trofimovich (2016) used LSTM (long short-term memory) for sentiment analysis, classifying sentiment at the phrase level with linguistic rules such as negativity, intensity, and polarity, and trained a BiLSTM on labeled text for syntactic and semantic processing [42]. In the field of traditional machine learning classifiers, some of the literature uses the Doc2Vec model and LDA model to obtain multi-channel text feature matrices and inputs the modeled text into SVM and LR for classification. By obtaining the final classification results through a voting mechanism among multiple classifiers, the model achieves excellent results on the short text classification problem [43].
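A voting mechanism among classifiers can be sketched as a simple majority vote over per-sample predictions. The sketch assumes hard (label) voting with ties broken by classifier order; the cited work may use a different scheme. The prediction lists are made up, and `nb_out` stands for a hypothetical third classifier added so that a majority always exists.

```python
from collections import Counter


def majority_vote(predictions):
    """Return the label predicted by the most classifiers.

    Ties are broken in favor of the label that appears first in `predictions`.
    """
    counts = Counter(predictions)
    best = max(counts.values())
    for label in predictions:
        if counts[label] == best:
            return label


def vote_per_sample(per_classifier_predictions):
    """Combine predictions sample by sample; input is one list per classifier."""
    return [majority_vote(list(votes)) for votes in zip(*per_classifier_predictions)]


svm_out = ["pos", "neg", "neg"]  # illustrative SVM predictions
lr_out = ["pos", "pos", "neg"]   # illustrative LR predictions
nb_out = ["neg", "pos", "neg"]   # hypothetical third classifier
combined = vote_per_sample([svm_out, lr_out, nb_out])
```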

In the field of deep learning, H. Wang et al. obtained word embedding representations of documents and fed them into a two-channel classification model. The first channel is a three-layer CNN to extract local features. At the same time, the model fuses the input vectors obtained from the word embedding model with the output vectors of each layer of the CNN to realize the reuse of the original features; the second channel uses LSTM to obtain the context-associated semantics of the text. Finally, the vectors of the two channels are fused through a fully connected network to realize feature fusion, and the model achieves better results than the previous traditional model in the Sina news classification problem [44,45,46].

It is worth noting that the model uses a unidirectional LSTM, while BiLSTM is considered to be a better option than unidirectional LSTM. LSTM models are used for phrase-level sentiment classification centered on regularization, which covers linguistic phenomena such as negativity, intensity, and polarity [47], so BiLSTM will be better for sentiment classification. Multi-channel Text CNN is able to obtain more adequate keyword aggregation than multi-layer Text CNN. Cho (2014) proposed gated recurrent units (GRUs) to analyze dependency contexts, which showed significant improvements in various tasks. This takes into consideration the multimodal, sparsely informative, highly unstructured, and polysemous nature of review text data [48,49]. Basiri et al. (2021) presented a bidirectional convolutional neural network (CNN)–recurrent neural network (RNN) deep model for sentiment analysis of Twitter product reviews [50].

In this paper, we propose the Bert BiGRU Softmax deep learning model with hybrid masking, comment extraction, and attention mechanisms combined with multimodality. To improve the correctness of the results, several models were tried for image content recognition; SqueezeNet performed better in terms of execution efficiency and accuracy and was therefore adopted. The Bert BiGRU Softmax model extracts multidimensional product features from online reviews using the Bert model as the input layer. The bidirectional GRU model is used as the hidden layer to obtain the semantic encoding and compute the sentiment weights of the comments; finally, Softmax and the attention mechanism are used as the output layer to classify the comments as positive or negative.
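The output stage described above, attention over hidden states followed by Softmax, can be sketched in plain Python. This is a minimal illustration under stated assumptions and not the model’s actual implementation: the hidden states stand in for BiGRU outputs, and `query` and `class_weights` are hypothetical stand-ins for parameters that would be learned during training.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_pool(hidden_states, query):
    """Weight each hidden state by softmax(h . query) and sum them."""
    weights = softmax([dot(h, query) for h in hidden_states])
    dim = len(hidden_states[0])
    pooled = [sum(w * h[k] for w, h in zip(weights, hidden_states)) for k in range(dim)]
    return pooled, weights


def classify(pooled, class_weights):
    """Linear layer followed by softmax; returns class probabilities."""
    return softmax([dot(pooled, w) for w in class_weights])


hidden_states = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # stand-ins for BiGRU outputs
query = [2.0, 0.0]                                    # hypothetical attention vector
class_weights = [[1.0, -1.0], [-1.0, 1.0]]            # rows: positive, negative
pooled, weights = attention_pool(hidden_states, query)
probs = classify(pooled, class_weights)
```

The attention weights indicate which time steps contribute most to the pooled representation, which is what allows the output layer to emphasize sentiment-bearing tokens.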

Several important gaps remain in the current research area, summarized as follows. First, there is a research gap on how to effectively integrate text and image data to improve the performance of sentiment analysis. Although there have been studies on text sentiment analysis and on image sentiment analysis, there is not enough research on how to effectively integrate these two modalities for sentiment analysis. Such integration can help the model understand the content of the comments more comprehensively, thus improving the accuracy of sentiment classification.

Second, efficient models specifically designed for multimodal sentiment analysis tasks are still lacking. Most of the current research focuses on the sentiment analysis of single-modal data (text or images), while there are relatively few sentiment analysis models for multimodal data. Therefore, how to design a multimodal sentiment analysis model that can fully utilize the information of text and image data to improve the accuracy of sentiment classification is a research direction that needs to be explored in depth.

Finally, there is still a relative lack of research on the reliability and scalability of multimodal sentiment analysis models applied to different domains and datasets. Understanding the applicability of multimodal sentiment analysis models on different domains and datasets, as well as the stability and reliability of their performance in real-world applications, are key research issues. Therefore, more in-depth research is needed to explore the application scope and generalization capability of multimodal sentiment analysis models.

Research question: How can multimodal data be processed using the multimodal Bert BiGRU Softmax model to improve the accuracy and consistency of online review information classification?

Objective: To enhance online comment information classification based on multimodal deep learning, filling the identified gaps and improving the accuracy, consistency, and application scope of the classification.

3. Collection and Analysis of Data Sets

In this paper, food review information from the Meituan online platform is selected as the data source. We chose Meituan because it is a leading e-commerce platform for life services in China, providing a wide range of services such as takeout, restaurant reservation, hotel booking, travel, movie tickets, and bike sharing. Users can order, pay for, and book services online through Meituan. Meituan has a huge user base in China, covering both cities and villages and all ages and social groups. Its user experience is designed to be simple and easy to use, and its payment process is convenient, which is widely welcomed by users. As one of the largest e-commerce platforms for lifestyle services in China, with a large market share and influence in the sector, Meituan has promoted not only the popularization of online consumption patterns but also the digital transformation and enhancement of the service industry. Its convenient service model and rich product offerings are popular among Chinese consumers and have had a positive impact on China’s lifestyle and consumption habits. With the rapid changes in the food business model, people’s consumption habits are also quietly shifting: customers select their favorite food items in the mobile app, and the items are delivered on time and accurately to the designated area. It is worth noting that people habitually look at the online reviews of a restaurant or store before they decide to spend their money there.

However, with the rapid development of various platforms, the safety hazards of certain food products, as reflected in the review information, cannot be ignored. Food safety incidents have serious harmful effects on consumers, takeaway platforms, food merchants, and society. Therefore, this paper analyzes store reviews with the aim of helping the relevant authorities strengthen the food safety regulation of stores.

In reviews, users explicitly or implicitly rate multiple attributes, including environment, price, food, and service. In this paper, four preprocessing steps were performed on the collected data to ensure the ethics, quality, and reliability of the reviews: (1) deletion of user information (e.g., user ID, user name, avatar, and posting time) for privacy reasons; (2) filtering out short comments with fewer than 50 Chinese characters and long comments with more than 1000 Chinese characters; (3) discarding comments in which more than 70% of the characters are non-Chinese; and (4) further preprocessing, including data cleansing, Chinese word segmentation, and de-duplication of words.
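Steps (1) to (3) can be sketched as simple filters. The field names in `PRIVATE_FIELDS`, the function names, the restriction to the basic CJK Unified Ideographs block, and the choice to compute the non-Chinese ratio over all characters (including punctuation and whitespace) are assumptions made for illustration; step (4) would additionally require a Chinese word segmenter, which is not shown.

```python
import re

CHINESE_CHAR = re.compile(r"[\u4e00-\u9fff]")  # CJK Unified Ideographs (basic block)
PRIVATE_FIELDS = ("user_id", "user_name", "avatar", "post_time")  # hypothetical keys


def strip_user_info(record):
    """Step 1: drop fields that identify the reviewer."""
    return {k: v for k, v in record.items() if k not in PRIVATE_FIELDS}


def keep_review(text, min_chars=50, max_chars=1000, max_non_chinese=0.70):
    """Steps 2-3: apply the length filter and the non-Chinese-ratio filter.

    The ratio is taken over all characters in `text` (an assumption).
    """
    n_chinese = len(CHINESE_CHAR.findall(text))
    if n_chinese < min_chars or n_chinese > max_chars:
        return False
    non_chinese_ratio = 1 - n_chinese / len(text)
    return non_chinese_ratio <= max_non_chinese
```

A typical use would be `[strip_user_info(r) for r in records if keep_review(r["text"])]`, where `records` and the `"text"` key are likewise hypothetical.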

In addition, in order to avoid ethical issues and minimize potential bias during data processing and model training, it is necessary to eliminate bias in the data, including characteristics such as gender and ethnicity, and to ensure the diversity and transparency of the dataset. For the model training process, it is necessary to review the model’s processing of the data and monitor the output of the model training at regular intervals, and to modify the model training process as soon as any bias occurs, as detailed below.

Integration of multimodal data from diverse datasets: ensure that the dataset is representative, covers diversity, and avoids the existence of specific groups or biases in the dataset in order to reduce the bias in the model training process. It is also important to integrate data of different modalities, such as text, images, etc., to synthesize multifaceted information and reduce the bias that may be brought by a single modality.

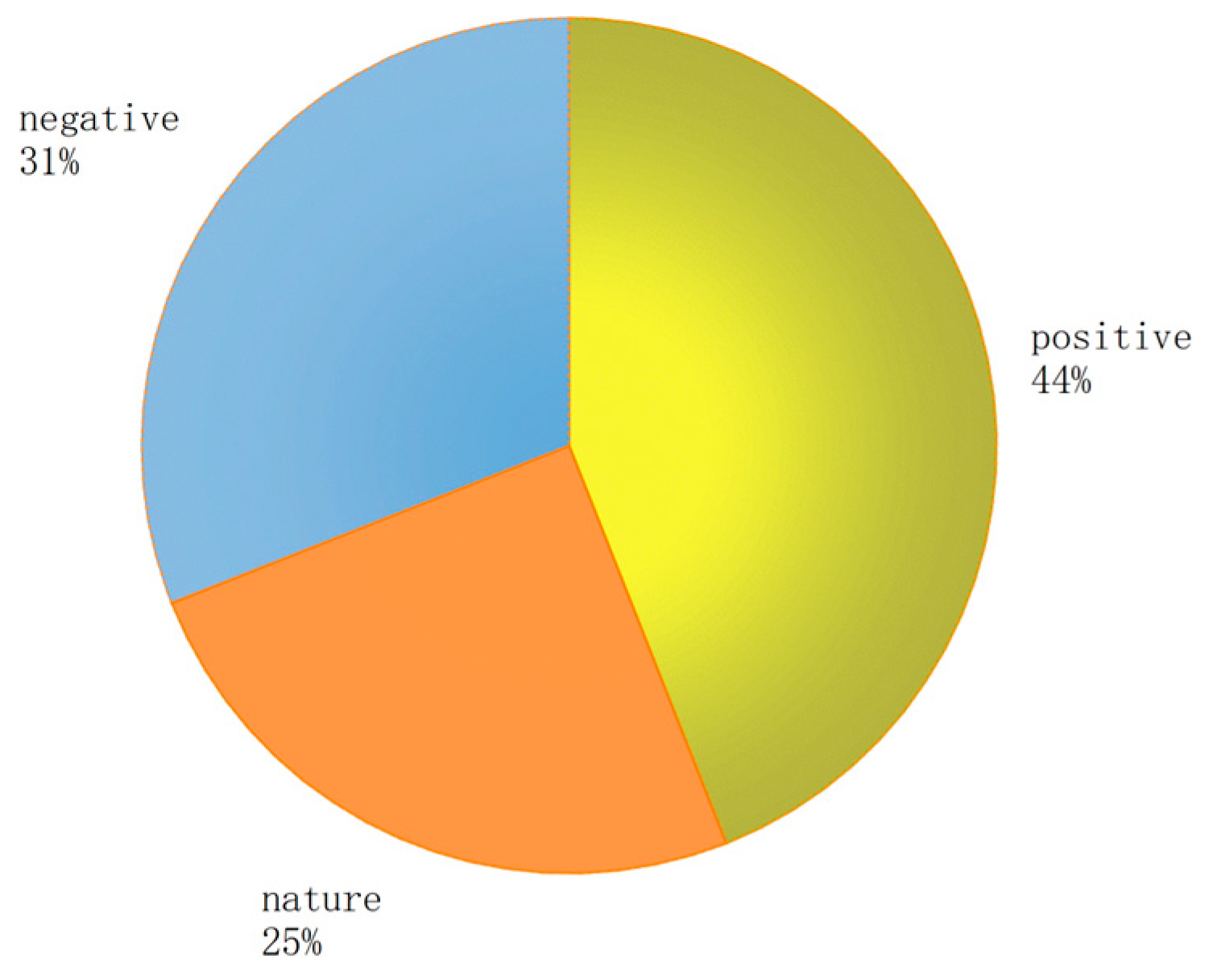

Balancing the dataset: address the problem of category imbalance so that samples of different categories are balanced and model bias is avoided. When performing sentiment analysis of reviews, a balance among the numbers of positive, neutral, and negative reviews is crucial for model training and performance, as well as for the soundness of the analysis results. A balanced dataset not only helps the model to better learn the differences between sentiment categories, but also improves classification accuracy. When dealing with multimodal data, it is therefore important to keep the sentiment categories balanced, since sample imbalance can bias model training toward one category.

Transparency and interpretation: improve the transparency and interpretation of the model to ensure that the decision-making process of the model can be understood and interpreted, reducing potential bias and discrimination.

Review and monitoring: regularly review the model and the data processing process, monitor the model outputs, and identify potential biases and inequities in a timely manner and take corrective action.

Elimination of implicit bias and ethical review and moral considerations: review the dataset and features to eliminate possible implicit bias in them, such as sensitive features like gender, race, etc., and avoid unfairness in the model in these areas. At the same time, an ethical review should also be conducted to consider the possible social impacts of the model application and to ensure that the model design and application comply with ethical standards and laws and regulations.

The methods and measures in the above content can not only reduce potential bias and unfairness, but also ensure that ethical issues are paid attention to in the process of model training and data processing, improve the fairness and credibility of the model, and prevent the model from generating or expanding potential bias in the analysis.

For the Bert BiGRU Softmax model, the preprocessing steps and feature extraction techniques applicable to both textual and non-textual data have shown good adaptability in experimental simulations, but since most of the data randomly collected during data acquisition are textual and due to the space limitation of this article, more specific details on image processing will be demonstrated in future work. For the Bert BiGRU Softmax model, the input layer configuration is crucial to efficiently preprocess and extract features from both textual and non-textual data to provide key support for model training and performance.

Step 1: In the case of text data, preprocessing includes the application of tokenization, stemming/lemmatization, and word embeddings to convert text into numerical representations and capture semantic and emotional information.

Step 2: For image data, the preprocessing phase includes resizing, normalization, and augmentation techniques to ensure that the images are uniformly sized, the pixel values are normalized, and a diversity of augmented data is generated to improve generalization.

Step 3: In terms of feature selection and integration, multimodal feature integration is key. When combining text and image features, a parallel structure or a fusion structure can be used. The parallel structure processes text and image features separately and then concatenates or sums the features; the fusion structure can utilize the attention mechanism or joint training to fuse text and image information to obtain a more comprehensive multimodal feature representation.
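The pixel normalization of Step 2 and the parallel structure of Step 3 can be sketched with plain lists. The function names are hypothetical, the image is assumed to hold 8-bit grayscale values, and the feature vectors are assumed to have already been produced by the text and image encoders.

```python
def normalize_pixels(image):
    """Step 2 (part): scale 8-bit pixel values from [0, 255] to [0, 1]."""
    return [[p / 255.0 for p in row] for row in image]


def fuse_concat(text_vec, image_vec):
    """Parallel structure, variant 1: concatenate the two feature vectors."""
    return list(text_vec) + list(image_vec)


def fuse_sum(text_vec, image_vec):
    """Parallel structure, variant 2: element-wise sum (requires equal length)."""
    if len(text_vec) != len(image_vec):
        raise ValueError("element-wise sum needs vectors of the same dimension")
    return [t + i for t, i in zip(text_vec, image_vec)]
```

Concatenation preserves both representations at the cost of a larger input to the classifier, whereas summation keeps the dimension fixed but requires the two encoders to project into a space of the same size.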

In this paper, we use the Bert BiGRU Softmax deep learning model to perform sentiment analysis of online product quality reviews in terms of multiple dimensions such as service, taste, price, hygiene, packaging, and delivery time. The polarity is categorized into positive, neutral, and negative dimensions.

4. Introduction to Multimodal Learning and Modeling

Multimodality refers to the different forms in which things are presented or experienced. Multimodality can be based on the human senses, including visual, auditory, and tactile modalities, each of which can represent human perception. The combination of multiple modal perceptions gives the complete human modal perception. At the same time, multimodal information can refer to data in different forms, or to the same form in different formats; it is generally expressed as text, images, audio, video, or mixed data.

Text data can provide detailed semantic information, including sentiment vocabulary, emotional expressions, and contextual information, which helps to capture the emotional color and sentiment tendency in comments. Text features are transformed into numerical forms by natural language processing techniques (e.g., word embedding), which provide rich information about the content of the comments and play an important role in sentiment analysis. Image data contain visual information that conveys visual emotional content and emotional expression in comments, such as image mood, color, and emotional expression. Image features provide supplementation and enrichment of the comment content, which can help the model understand the emotional meaning of the comments more comprehensively. There is complementarity between text features and image features, and combining information from both modalities can provide a more comprehensive and multidimensional emotional expression.

The synthesis mechanism for integrating text features and image features is the key, and the features of the two modalities are fused by designing an effective feature fusion strategy. Methods such as parallel structure, fusion structure, and attention mechanism are used to combine text and image features dynamically so that the model can synthesize different modal information. By integrating text and image features, the model is able to more accurately capture the sentiment information in the comments and enhance the accuracy and robustness of sentiment analysis.

Improving the integration of multimodal data (e.g., text and images) by combining sophisticated approaches from different modalities can help improve the accuracy of product review classification. Combining information from text and image data can enhance the model’s ability to understand and classify product reviews. The use of sophisticated multimodal integration methods can better capture the associations and interactions between different data modalities, thereby enhancing the performance of the model.

Specific methods include designing effective multimodal feature fusion strategies, optimizing model parameters using joint training, introducing an attention mechanism in the model to dynamically focus on key information parts, and designing complex multimodal neural network architectures to achieve the effective fusion of text and image data. Improved multimodal data integration through these complex methods can improve the accuracy and performance of the product review classification task, allowing the model to understand the review content more comprehensively and to classify and analyze it more accurately. This integrated approach of utilizing different data modalities helps to expand the range of model applications and enhance the effectiveness of practical applications.

Image content recognition has long been an important research problem. With the continuous development of learning methods, the accuracy of image recognition is constantly improving. Because the original image consists of a pixel matrix, and traditional edge recognition and other image recognition methods can only operate on one pixel block at a time, their recognition effect is poor. With a convolutional layer, by contrast, the pixel matrix of the image is turned into a high-dimensional feature matrix after convolution, transforming simple pixel information into composite feature information. Through such convolution operations, the computer can not only perform basic edge detection but also recognize shapes such as circles and rectangles, and, through successive convolutions, ultimately recognize objects.
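The way a convolution turns raw pixels into edge features can be illustrated with a small pure-Python example. The function `conv2d` is a hypothetical helper implementing a valid, stride-1 cross-correlation (the operation commonly called convolution in CNNs), and the Sobel kernel is a fixed, hand-designed filter used here for illustration; in a trained CNN the kernel values are learned.

```python
def conv2d(image, kernel):
    """Valid (no padding, stride 1) 2D cross-correlation, as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Sobel kernel that responds to left-to-right intensity changes (vertical edges).
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]

# 4x4 image: dark left half (0), bright right half (9).
image = [[0, 0, 9, 9] for _ in range(4)]
edges = conv2d(image, SOBEL_X)

# A uniform patch contains no edge, so the response is zero.
flat = conv2d([[5, 5, 5] for _ in range(3)], SOBEL_X)
```

Stacking several such layers lets later filters combine edge responses into shape detectors, which is the progression from edges to shapes to objects described above.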

A lightweight, Inception-based approach is beneficial here. In this paper, we use ImageAI, an open-source Python tool whose models are trained on the ImageNet dataset. It integrates four mainstream convolutional neural network-based deep learning models for image recognition (ResNet50, DenseNet121, InceptionV3, and SqueezeNet) and also supports custom model training. To improve the correctness of the results, this paper applies all four models to image content recognition and finds that SqueezeNet performs better in terms of efficiency and accuracy, so SqueezeNet is finally adopted for recognition.

Mathematical modeling of text remains an essential aspect of natural language processing. How text is modeled has a significant impact on the effectiveness of downstream feature extraction and classification models. Most common text models are designed for English corpora, either question-and-answer corpora or comment corpora. However, in addition to the sparse information and highly unstructured nature shared with English comment texts, Chinese comment texts also suffer from word polysemy and from the non-uniformity of the smallest unit of expression. These problems usually limit the classification effectiveness of traditional classification models on Chinese text.

At the same time, the information contained in the comment text includes not only textual information, but also image information. To textually model multimodal data expressed by these two kinds of information, there are not only the problems of word polysemy and textual representation granularity, but also the difficulties of acquiring picture information and performing sentiment tendency analysis.

The BERT model, proposed in 2018 (pre-training of deep bidirectional transformers for language understanding), was a milestone in the field of pre-training, achieving the best results at the time on several NLP tasks and opening a new chapter. BERT is a pre-trained model built on deep bidirectional transformers for language understanding, where transformer refers to a network structure for processing sequential data. BERT learns the semantic information of the text and, through outputs in the form of vectors, applies it to tasks such as categorization and semantic similarity. It is a pre-trained language model, i.e., it has been trained unsupervised on a large-scale corpus [51], and in using it we only need to train and update its parameters on this basis.

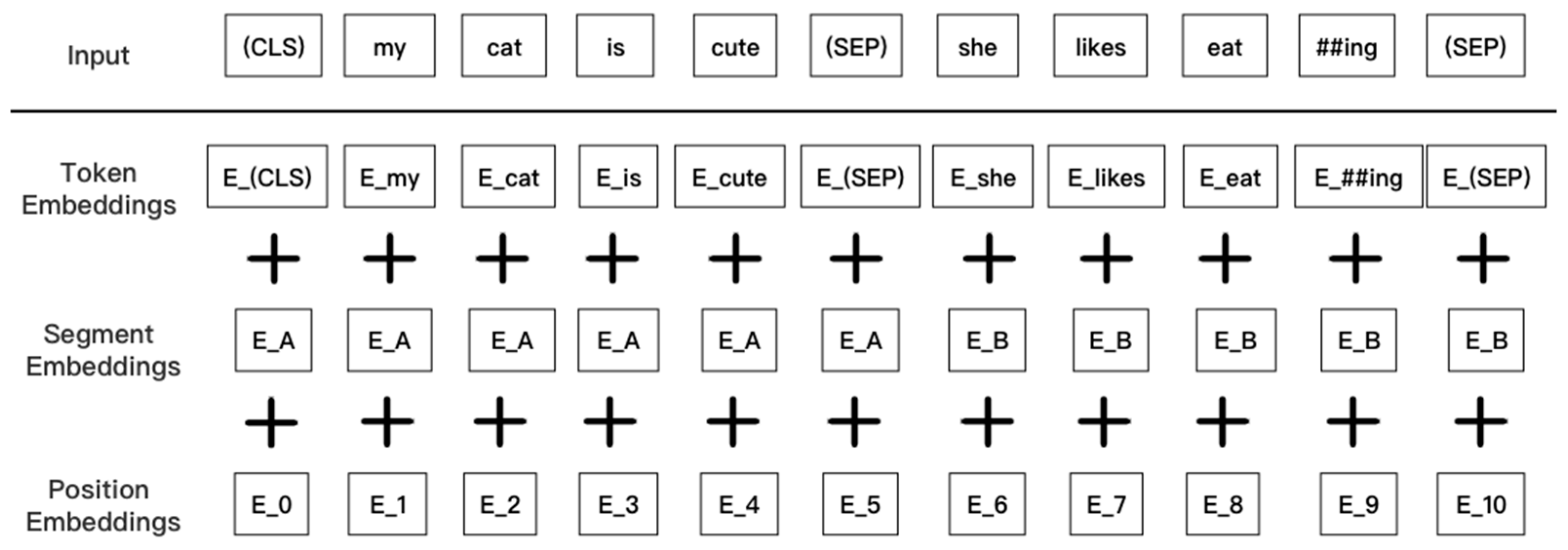

Unlike other language models, BERT is pre-trained in an unsupervised manner, and information from both the left and the right of the text is considered in each layer. Xia et al. argue that the supervised deep learning approach relies on a large number of clean seismic records without ground-roll noise as reference labels. The unsupervised learning approach considers the different temporal, lateral, and frequency features that distinguish ground-roll noise from the real reflected waves in seismic records before deep stacking. By designing a ground-roll suppression loss function, the deep learning network can learn the specific distribution characteristics of the real reflected waves in seismic records containing ground-roll noise. BERT’s input representation consists of three components: word embeddings, segmentation embeddings, and position embeddings. The final embedding vector is the element-wise sum of these three vectors, as shown in Figure 2.
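
The summation of the three embeddings can be sketched as follows. The toy vocabulary, the hidden size of 8, and the randomly initialized lookup tables are assumptions for illustration; in BERT these tables are learned during pre-training.

```python
import random

random.seed(0)
HIDDEN = 8    # toy hidden size (assumption; BERT-base uses 768)
MAX_LEN = 16  # toy maximum sequence length (assumption)
vocab = {"[CLS]": 0, "[SEP]": 1, "good": 2, "quality": 3}


def make_table(rows, dim):
    """Randomly initialized embedding table standing in for learned weights."""
    return [[random.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(rows)]


token_table = make_table(len(vocab), HIDDEN)
segment_table = make_table(2, HIDDEN)
position_table = make_table(MAX_LEN, HIDDEN)


def embed(tokens, segment_ids):
    """Return one vector per token: token + segment + position embedding."""
    vectors = []
    for pos, (tok, seg) in enumerate(zip(tokens, segment_ids)):
        t = token_table[vocab[tok]]
        s = segment_table[seg]
        p = position_table[pos]
        vectors.append([a + b + c for a, b, c in zip(t, s, p)])
    return vectors


tokens = ["[CLS]", "good", "quality", "[SEP]"]
embeddings = embed(tokens, segment_ids=[0, 0, 0, 0])
```

Because the three vectors share one dimensionality, the sum keeps the input width fixed regardless of how many kinds of information are encoded.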

In summary, for text classification tasks, the BERT model inserts a [CLS] symbol in front of the text and uses the output vector corresponding to this symbol as a semantic representation of the whole text for text classification. This symbol has no obvious semantic information of its own, so it can more “fairly” incorporate the semantic information of the individual words or phrases in the text, as shown in Figure 3. In addition, we can add further structures such as fully connected layers after the BERT model to perform fine-tuning for specific tasks, such as linguistic reasoning tasks like Q&A.
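
A minimal sketch of such a classification head is given below: the first output vector (the one for [CLS]) is passed through a single fully connected layer to produce one score per class. The function name, the toy hidden states, and the weights are illustrative assumptions.

```python
def classify_from_cls(hidden_states, weight, bias):
    """Apply one linear layer to the [CLS] vector (the first row of hidden_states)."""
    cls_vec = hidden_states[0]
    return [sum(w * x for w, x in zip(row, cls_vec)) + b
            for row, b in zip(weight, bias)]


# One row per token, with the [CLS] vector first (assumed toy values).
hidden_states = [[0.5, -0.2, 0.1],
                 [0.3, 0.3, 0.3],
                 [0.0, 0.9, -0.4]]
weight = [[1.0, 0.0, 0.0],   # class 0
          [0.0, 1.0, 0.0]]   # class 1
bias = [0.0, 0.1]
logits = classify_from_cls(hidden_states, weight, bias)
```

During fine-tuning, the weights of this layer and of BERT itself would be updated together; the scores are turned into class probabilities by a Softmax layer.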

By learning the distribution over the input text vectors, the Emotion BERT model can be used efficiently for feature extraction over variable-length sequences $S$. Given a review sentence $S$, we can directly obtain its category and the set of dimensions of the category $D_c$. For each word $w_i$ ($w_i \in S$) and dimension $d_j$ in a review sentence, we assign a probability score $P(d_j \mid w_i)$ that describes the probability that word $w_i$ belongs to class $d_j$ in an online product quality review (as in Equation (1)).

The transformer adds order information to the sequence via word position embeddings ($PE$), as given in Formulas (2) and (3).
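
The standard sinusoidal position encoding of the original transformer can be sketched as follows. It is assumed here that Formulas (2) and (3) take this usual form, $PE_{(pos,2i)} = \sin(pos / 10000^{2i/d_{model}})$ and $PE_{(pos,2i+1)} = \cos(pos / 10000^{2i/d_{model}})$; the function name is illustrative.

```python
import math


def positional_encoding(max_len, d_model):
    """Sinusoidal encoding: sine on even dimensions, cosine on odd dimensions."""
    pe = [[0.0] * d_model for _ in range(max_len)]
    for pos in range(max_len):
        # even_dim plays the role of 2i in the formula.
        for even_dim in range(0, d_model, 2):
            angle = pos / (10000 ** (even_dim / d_model))
            pe[pos][even_dim] = math.sin(angle)
            if even_dim + 1 < d_model:
                pe[pos][even_dim + 1] = math.cos(angle)
    return pe


# Dimension 64 and sequence length 512, matching the values quoted in the text.
pe = positional_encoding(max_len=512, d_model=64)
```

Each position receives a distinct vector, and the encoding is simply added to the token representation so that the otherwise order-agnostic attention layers can distinguish positions.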

When $d_{model}$ is 64, the text sequence is represented as 512 characters, $2i$ is the even position in the given sequence of the input vector, and $2i+1$ is the odd position. When the transformer extracts the features of the two special words, [CLS] and [SEP], in the sequence $S$, the BERT loss function considers only the prediction of the masked values and thus ignores the prediction of the non-masked words, as shown in Equations (4) and (5).
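
One common way to realize a loss that scores only the masked positions is an average negative log-likelihood restricted by a mask. The sketch below is an assumption about the general shape of such a loss, not a reproduction of Equations (4) and (5); the function name and toy probabilities are illustrative.

```python
import math


def masked_lm_loss(pred_probs, target_ids, mask):
    """Average negative log-likelihood over masked positions only."""
    losses = [
        -math.log(probs[target])
        for probs, target, is_masked in zip(pred_probs, target_ids, mask)
        if is_masked
    ]
    # Positions that were not masked contribute nothing to the loss.
    return sum(losses) / len(losses) if losses else 0.0


# Predicted distributions over a 3-word toy vocabulary for 3 positions.
pred_probs = [[0.7, 0.2, 0.1],
              [0.1, 0.8, 0.1],
              [0.3, 0.3, 0.4]]
target_ids = [0, 1, 2]
mask = [False, True, False]  # only the middle token was masked
loss = masked_lm_loss(pred_probs, target_ids, mask)
```

Here only the middle position enters the loss, so the value equals $-\log 0.8$ whatever the model predicts for the other two positions.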

A GRU is a specific type of recurrent neural network that performs machine learning tasks related to memory and clustering through connections across a series of nodes, which allows it to pass information over multiple time steps in order to influence subsequent ones. A GRU can be considered a variant of LSTM: the two are similar in design and produce comparably good results, and in both the gated recurrent units help tune the network’s input weights to mitigate the vanishing gradient problem. As a refinement of the recurrent neural network, a GRU has two gates, an update gate $z_t$ and a reset gate $r_t$. Using an input vector $x_t$ and an output vector $h_t$, the model refines the information flow from the previous output $h_{t-1}$ by controlling $h_t$. As with other recurrent network models, a GRU can retain information over a period of time, which is why these techniques are most easily described as “memory-centric” neural networks. In contrast, neural networks without gated recurrent units typically lack this ability to retain information. The structure of the GRU is shown in Figure 4.
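
A single GRU time step can be sketched in plain Python as follows. The parameter names (`Wz`, `Uz`, `bz`, and so on), the random initialization, and the convention that the update gate weights the new candidate state are assumptions based on a commonly used formulation; some references swap the roles of $z_t$ and $1 - z_t$.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(matrix, vec):
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def add(*vectors):
    return [sum(components) for components in zip(*vectors)]


def init_params(input_dim, hidden_dim, scale=0.1, seed=0):
    """Random weights for the update (z), reset (r) and candidate (h) parts."""
    rng = random.Random(seed)
    params = {}
    for gate in "zrh":
        params["W" + gate] = [[rng.uniform(-scale, scale) for _ in range(input_dim)]
                              for _ in range(hidden_dim)]
        params["U" + gate] = [[rng.uniform(-scale, scale) for _ in range(hidden_dim)]
                              for _ in range(hidden_dim)]
        params["b" + gate] = [0.0] * hidden_dim
    return params


def gru_step(x_t, h_prev, p):
    """Compute h_t from the input x_t and the previous hidden state h_prev."""
    z = [sigmoid(a) for a in add(matvec(p["Wz"], x_t), matvec(p["Uz"], h_prev), p["bz"])]
    r = [sigmoid(a) for a in add(matvec(p["Wr"], x_t), matvec(p["Ur"], h_prev), p["br"])]
    # The reset gate scales how much of the previous state feeds the candidate.
    gated_prev = [ri * hi for ri, hi in zip(r, h_prev)]
    h_cand = [math.tanh(a)
              for a in add(matvec(p["Wh"], x_t), matvec(p["Uh"], gated_prev), p["bh"])]
    # The update gate interpolates between the old state and the candidate.
    return [(1 - zi) * hp + zi * hc for zi, hp, hc in zip(z, h_prev, h_cand)]


params = init_params(input_dim=3, hidden_dim=2)
h_t = gru_step([0.3, 0.7, 0.1], [0.0, 0.0], params)
```

With all weights set to zero, both gates equal 0.5 and the candidate state is zero, so the step simply halves the previous hidden state; this makes the interpolation role of the update gate easy to check by hand.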

BiGRU refers to the bidirectional gated recurrent unit (BGRU), i.e., a GRU to which an additional reverse layer is added. BiGRU can process both the forward and the reverse information of the input sequence, thus capturing the features in the sequence more comprehensively and improving model performance. It can be used for a variety of tasks such as speech recognition, person name recognition, and part-of-speech tagging.

BiGRU has advantages over GRU such as bidirectionality, better performance, better handling of long sequences, and finer-grained feature representation. Therefore, BiGRU has become a very effective model in various sequence learning tasks. Because a unidirectional GRU retains less state information and is prone to vanishing or exploding gradients, it may not process long sequences as effectively as BiGRU.

BiGRU, on the other hand, introduces more state information through the reverse layer, which improves the handling of long sequences and reduces the risk of vanishing or exploding gradients. When BiGRU is combined with Softmax, the input text sequence is first encoded using BiGRU, the encoded result is then passed to a fully connected layer, and the classification is finally performed with the Softmax activation function. Specifically, the output of BiGRU serves as the input to the fully connected layer, and the output of the fully connected layer is then used for label prediction via Softmax. This combination can effectively improve the performance of text categorization, especially on complex text datasets.
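
The bidirectional encoding itself is independent of the cell used, as the following sketch shows: one pass runs left to right, one runs right to left, and the two states for each position are concatenated. The toy running-average cell stands in for a GRU cell and is purely an assumption to keep the example short.

```python
def run_direction(inputs, step, h0):
    """Run a recurrent cell over the inputs and collect every hidden state."""
    h, states = h0, []
    for x in inputs:
        h = step(x, h)
        states.append(h)
    return states


def bidirectional_encode(inputs, step_fwd, step_bwd, h0):
    """Concatenate forward and backward hidden states for each time step."""
    forward = run_direction(inputs, step_fwd, h0)
    # Process the reversed sequence, then flip back to align with input order.
    backward = run_direction(inputs[::-1], step_bwd, h0)[::-1]
    return [f + b for f, b in zip(forward, backward)]  # list concatenation


def toy_step(x, h):
    """Stand-in for a GRU cell (assumption): average of state and input."""
    return [0.5 * hi + 0.5 * xi for hi, xi in zip(h, x)]


inputs = [[1.0], [0.0], [0.0]]
states = bidirectional_encode(inputs, toy_step, toy_step, h0=[0.0])
```

In this toy run the forward state carries a decaying trace of the first input to later positions, while the backward state at each position reflects only what comes after it, which is why the concatenated representation sees context on both sides.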

The BiGRU model operates on a given sequence of input vectors $x = (x_1, \ldots, x_T)$ (where $x_t$ denotes a concatenation of input features) and computes the corresponding hidden activations $h = (h_1, \ldots, h_T)$. At the same time, a sequence of output vectors $y = (y_1, \ldots, y_T)$ is generated from the input data. At time $t$, the current hidden state is determined by three components: the input vector $x_t$, the forward hidden state, and the backward hidden state. The reset gate $r_t$ controls how much previous state information is ignored; the smaller the value of $r_t$, the more previous state information is ignored. The update gate $z_t$ controls the extent to which the unit state receives new input information. The symbol $\odot$ represents element-wise multiplication, $\sigma$ denotes the sigmoid function, and tanh denotes the hyperbolic tangent function. The hidden state $h_t$, update gate $z_t$, and reset gate $r_t$ of the BiGRU are computed by Equations (6)–(9).
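
For reference, a commonly used formulation of the gates that is consistent with the description above is sketched below. The weight matrices $W$, $U$, the biases $b$, the candidate state $\tilde{h}_t$, and the arrow notation for the two directions are assumed notation, and the paper's Equations (6)–(9) may differ in detail.

```latex
\begin{aligned}
z_t &= \sigma\left(W_z x_t + U_z h_{t-1} + b_z\right) \\
r_t &= \sigma\left(W_r x_t + U_r h_{t-1} + b_r\right) \\
\tilde{h}_t &= \tanh\left(W_h x_t + U_h \left(r_t \odot h_{t-1}\right) + b_h\right) \\
h_t &= \left(1 - z_t\right) \odot h_{t-1} + z_t \odot \tilde{h}_t
\end{aligned}
\qquad
h_t^{\mathrm{BiGRU}} = \left[\overrightarrow{h}_t \, ; \, \overleftarrow{h}_t\right]
```

A small $r_t$ suppresses $h_{t-1}$ inside the candidate state, and a large $z_t$ lets the candidate replace more of the previous state; the bidirectional state concatenates the forward and backward hidden states at each time step.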

Softmax functions are widely used in tasks such as text categorization, sentiment analysis, and machine translation. Often, we need to represent a piece of text as a vector to facilitate subsequent computation and analysis. One common way to do so is to use word embeddings to map each word to a low-dimensional real-valued vector, and then to transform the entire text into a fixed-length vector through aggregation or transformation operations. In the following algorithm, $W$ represents the weight matrix of the attention function, $\tanh$ refers to the hyperbolic tangent function, $y$ represents the sentiment analysis results, and $b$ represents the corresponding bias of the output layer.
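
One way such an aggregation can work is attention pooling, sketched below: each hidden state is scored through a tanh projection, the scores are normalized, and the states are averaged with those weights into one fixed-length vector. The context vector, the function name, and the toy values are assumptions; this is not a reproduction of the paper's algorithm.

```python
import math


def attention_pool(hidden_states, W, context):
    """Collapse a sequence of hidden states into one vector using attention weights."""
    scores = []
    for h in hidden_states:
        # Project the state with W and squash it with tanh.
        u = [math.tanh(sum(w * x for w, x in zip(row, h))) for row in W]
        # Score the projection against a context vector (assumed, normally learned).
        scores.append(sum(ui * ci for ui, ci in zip(u, context)))
    # Normalize the scores into weights that sum to one.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    alphas = [e / sum(exps) for e in exps]
    dim = len(hidden_states[0])
    pooled = [sum(a * h[i] for a, h in zip(alphas, hidden_states)) for i in range(dim)]
    return pooled, alphas


hidden_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W = [[1.0, 0.0], [0.0, 1.0]]  # identity projection for readability
context = [1.0, 1.0]
pooled, alphas = attention_pool(hidden_states, W, context)
```

The pooled vector has the same length however many words the review contains, which is what allows a fixed-size output layer to follow it.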

After completing the text vector representation, we also need to perform tasks such as classification and labeling; see Figure 5 below. At this point, the Softmax function can be used to map the text vectors to probability distributions over the different classes (the processes represented by the arrows pointing to them in the figure below). Specifically, in natural language processing tasks, a neural network model is usually used as a classifier, with text vectors as inputs; after several fully connected layers and nonlinear activation functions, the outputs are finally mapped to the individual categories using the Softmax function, and the probability value of each category is calculated. Ultimately, we take the category with the largest probability value as the category to which the text belongs.
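
The final mapping from scores to a predicted category can be sketched as follows. The three labels and the score values are assumptions chosen for illustration.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to one."""
    top = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


labels = ["negative", "neutral", "positive"]
logits = [0.2, -1.0, 2.3]  # assumed output of the last fully connected layer
probs = softmax(logits)
predicted = labels[probs.index(max(probs))]
```

Because the exponential is monotonic, the class with the highest raw score always receives the highest probability; Softmax adds a normalized confidence value to that choice.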

This paper investigates the BERT-BiGRU-Softmax model for sentiment analysis of online product quality reviews. The sentiment BERT model is used as an input layer for feature extraction in the preprocessing stage. The hidden layer of the bidirectional GRU performs dimension-oriented sentiment classification by using bidirectional long and short-term memory and selective recursive units to maintain the long-term dependencies inherent in the text regardless of length and number of occurrences. The Softmax output layer calculates sentiment polarity by merging to smaller weighted dimensions according to the attention mechanism.

BERT, as a pre-trained transformer model, has advantages in understanding text semantics and context: it captures rich semantic information, provides a powerful text representation, and improves semantic understanding. BiGRU, as a bidirectional recurrent neural network, efficiently captures long-distance dependencies in text sequences, which is conducive to modeling text with semantic and sentiment information, and it is well regarded for its sequence modeling ability. Softmax, as a classifier, is suitable for multi-category sentiment classification tasks: it maps the features extracted by BERT and BiGRU to different sentiment categories and makes the final classification decision, which greatly improves the classification ability of the model. As for multimodal feature fusion, integrating the text features extracted by BERT and the sequence features captured by BiGRU with image features makes comprehensive use of text and image data and provides a more complete multimodal feature representation. Finally, tuning the model and setting hyperparameters such as the learning rate, batch size, and dropout rate reasonably, through cross-validation or experimental adjustment, can improve the performance and generalization ability of the model.

In summary, the in-depth exploration of the model indicates that the combination of BERT, BiGRU, and Softmax leads to higher accuracy, avoids logical ambiguity in model validity, and enhances the understanding of model design and performance enhancement mechanisms.

When processing data and training models, attention must also be paid to the stability and performance of the designed model in the face of noisy or incomplete data, i.e., to ensuring that the BERT-BiGRU-Softmax model is robust and noise-resistant. This calls for a series of measures during data processing, model design, and training, such as data cleaning, outlier handling, feature selection, regularization, and ensemble learning. These methods reduce the noise and interference in the data and improve the generalization ability and applicability of the model, thereby ensuring its robustness and reliability in complex or noisy environments. The steps are as follows.

Step 1: Data cleaning and outlier handling: data cleaning is performed to identify and handle outliers and noise in order to reduce interference in the data. Robust statistical methods are used to deal with outliers, such as using the median instead of the mean, or scaling the data with RobustScaler.

Step 2: Feature selection and dimensionality reduction: feature selection methods are used to select robust features and to filter for features that have a high impact on model predictions. Dimensionality reduction techniques such as principal component analysis (PCA) can be used to reduce data dimensionality and noise.

Step 3: Regularization and model complexity control: regularization terms (e.g., L1 or L2 regularization) are added to control model complexity, prevent overfitting, and improve noise immunity. Simple models or ensemble learning can be considered to reduce model complexity and enhance robustness.

Step 4: Ensemble learning and model fusion: ensemble learning methods, such as random forests and gradient boosting trees, combine the prediction results of multiple models and reduce the impact of noise on the model. The prediction results of different models are fused through voting or weighted averaging to improve the robustness and noise resistance of the model.

Step 5: Cross-validation and model evaluation: cross-validation techniques are used to evaluate the stability and generalization ability of the model and to reduce the impact of noise on model performance. The average performance on different data subsets is considered in the model evaluation to improve the robustness and reliability of the model.
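
Three of the steps above can be sketched with the Python standard library: robust scaling (Step 1), weighted-average voting (Step 4), and k-fold splitting (Step 5). The function names and toy data are assumptions; `robust_scale` is similar in spirit to scikit-learn's RobustScaler, which by default centers on the median and scales by the interquartile range, although quartile interpolation details can differ between libraries.

```python
import statistics


def robust_scale(values):
    """Step 1: center on the median and scale by the interquartile range."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = (q3 - q1) or 1.0  # fall back to 1.0 for constant features
    median = statistics.median(values)
    return [(v - median) / iqr for v in values]


def soft_vote(prob_lists, weights=None):
    """Step 4: weighted average of class probabilities from several models."""
    weights = weights or [1.0] * len(prob_lists)
    total = sum(weights)
    n_classes = len(prob_lists[0])
    return [sum(w * p[k] for w, p in zip(weights, prob_lists)) / total
            for k in range(n_classes)]


def k_fold_indices(n_samples, k):
    """Step 5: yield (train, validation) index lists for k-fold cross-validation."""
    folds = [list(range(i, n_samples, k)) for i in range(k)]
    for i, validation in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, validation


# The outlier 100 does not distort the scaling of the other four values.
scaled = robust_scale([1, 2, 3, 4, 100])
# Three hypothetical models voting on a two-class problem.
ensemble = soft_vote([[0.6, 0.4], [0.3, 0.7], [0.8, 0.2]])
splits = list(k_fold_indices(5, 2))
```

Averaging the validation score over all splits gives the performance estimate described in Step 5, and each sample is used for validation exactly once.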

By combining the above methods and steps, it is possible to ensure that the model maintains stable performance and reliable prediction ability in the face of complex or noisy environments. The risk of data noise and model overfitting is greatly reduced, and the robustness and generalization ability of the model is improved.

The objective of sentiment analysis is to uncover the subjective emotional inclinations expressed by users toward products, as conveyed through online information. By leveraging deep learning techniques, sentiment analysis aims to establish connections between various features such as syntax, semantics, emoticons, and sentiments. It involves categorizing user-generated content into positive, negative, or neutral sentiments, thereby enabling a better understanding of users’ opinions and attitudes toward goods.