First Steps toward Voice User Interfaces for Web-Based Navigation of Geographic Information: A Spanish Terms Study

Abstract

1. Introduction

2. Materials and Methods

3. Results

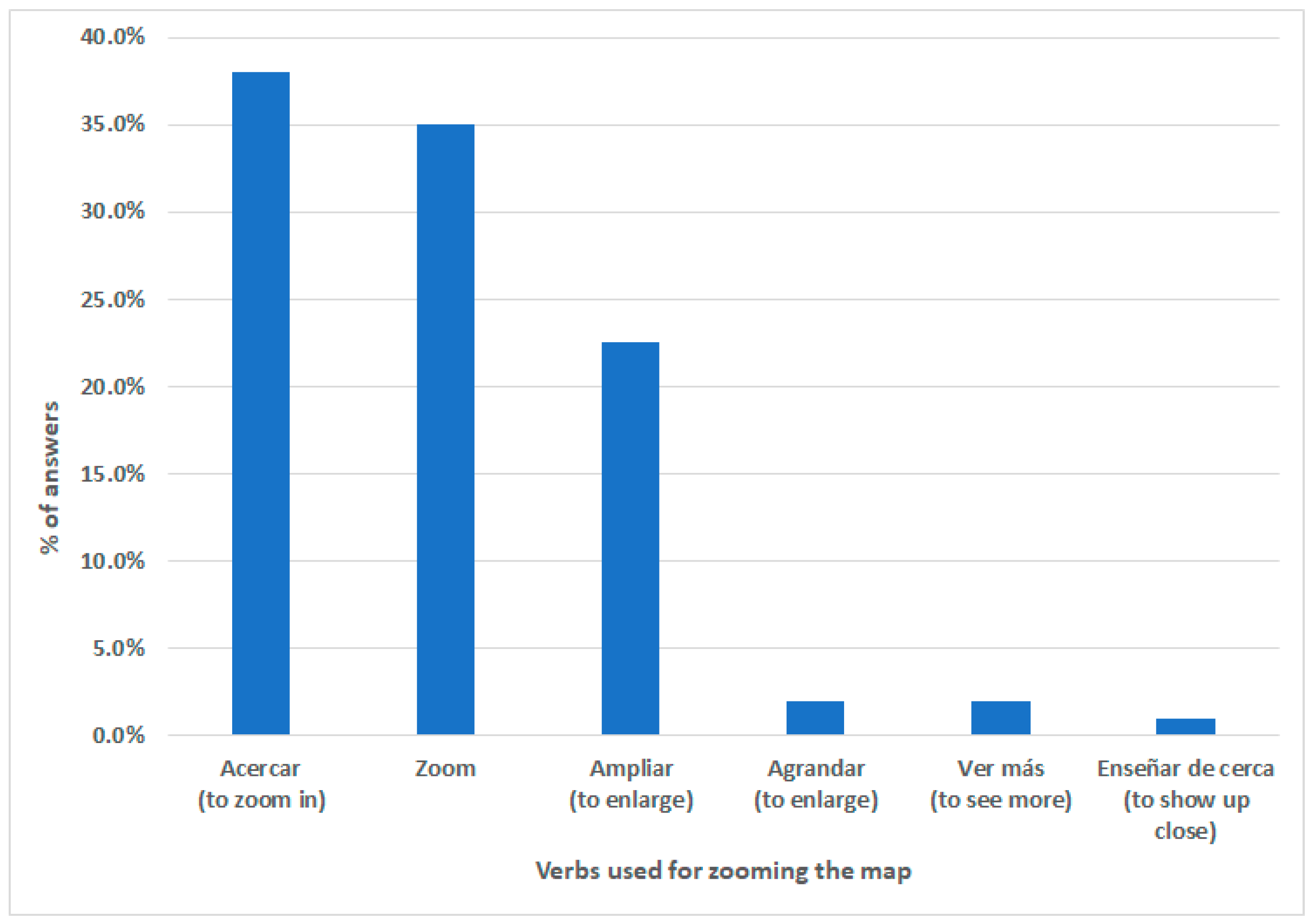

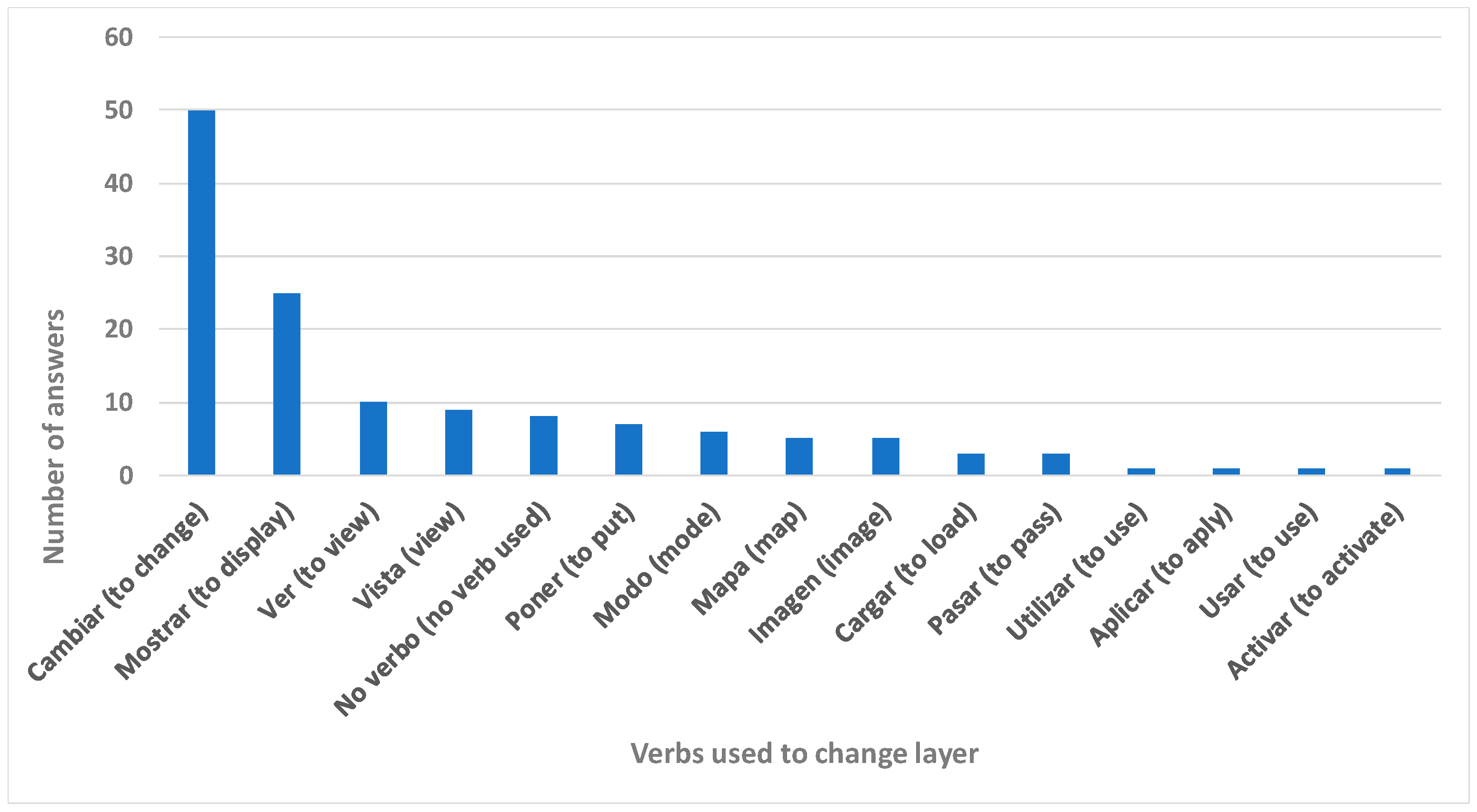

3.1. Identifying the Words to Command Maps

3.2. Identifying the Chain of Commands Used

3.2.1. Wizard of Oz Experiment

3.2.2. Prototype Creation and Validation

4. Discussion

- Me in the world. The user locates themselves in the world represented by the system, perhaps as if flying over it. They use expressions such as “go to the north, to the west”, “get me closer”, and “move me”.

- Me on the street. The user imagines themselves walking on the ground. They use expressions such as “turn right”, “move away”, “go straight ahead”, and “how to go to”.

- Me in front of a screen. The user treats the system as a system: rather than interacting naturally, they try to work out how to provoke the desired response. The user interprets the system as an interactive map (“move it”), and the most distinctive trait is the use of language learned from other systems. There are two likely reasons. First, the user may be a heavy user of the mobile web, apps, and similar technologies; such users employ expressions such as “back” (referring to a previous screen or a back button) or “reverse zoom”, and one user who steered the cursor orthogonally said “up-up-right-right-up-up” to traverse a diagonal. Second, a subgroup of users with a technical profile (e.g., geographers, computer scientists, and engineers) tends to address the system in a programming-like language or with technical words (e.g., “scroll” or “pan”). In these cases, the user adopts non-conjugated and impersonal expressions.

- Voice recognition improvement. Recognizing the moment at which the user starts an instruction is crucial. Currently, this is solved with keywords that the user must say before verbalizing the request, e.g., the assistant’s name. The transitions between instructions also require enhancement. Another issue is the recognition of phonetically similar words. For example, the user says mueve (“move”), and the system may interpret it as nueve (“nine”). Such confusion is understandable and can perhaps be reduced by limiting the vocabulary that the system recognizes: most speech recognizers use the entire vocabulary of the language in which they run, and many misunderstandings involve words that are not used in the GIS context. When both similar words are necessary in a given context, as can happen in a GIS (e.g., mueve and nueve), limiting the vocabulary does not help, and the feasible solution is to improve the voice recognition software itself. Finally, the recognition software can be enhanced so that it is comfortable to use without loud voice commands (e.g., when outdoors). We also recommend using high-quality microphones, such as the ones used in our experiments.

- Toponym problem. Most speech recognizers use the vocabulary of the language being spoken. The problem is that toponyms do not always belong to that language. Some have several accepted pronunciations, and users pronounce them in different ways, accepted or not. Designing a speech recognizer that can detect which toponym is being pronounced and select the appropriate one from the practically endless list of possible toponyms is therefore a challenge.

- Persistence of the context. In the developed prototype, if a user asks the map to move to the right, the system moves it to the right by a certain distance, and if the user makes the same request again, the map moves that same distance. To improve the user experience significantly, the system can keep track of the context so that, if the user asks to move to the right and then says “further” or “further to the right,” the map moves a larger distance than the first time. In other words, the system can respond according to prior commands. Combined with machine learning, context persistence can also let the system learn iteratively from the user’s pronunciation and vocabulary. As a result, the product can better adapt to the range of possible users (considering pronunciation and the different user profiles previously mentioned), improving the user experience.

- More user studies. Additional user studies should be conducted to observe the behavior and attitudes of the different profiles in different place-onas. The groups at risk of isolation in the spoken interaction paradigm should also be considered. Specifically, we need to address the difficulties faced by people with social communication disorders, such as dyslalia, dysphemia, dysarthria, and dysglossia, and determine to what extent they can make use of speech recognition standards. Similarly, specific solutions could allow people with aphasia or cognitive disabilities to access these systems. Finally, the vocabulary and interaction of children and the elderly with GISs should be studied.
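The vocabulary restriction and context persistence ideas in the list above can be illustrated with a minimal sketch. It is not the prototype described in this article. The command vocabulary, the `snap` helper, the `MapSession` class, the 100-unit base step, and the doubling factor are all hypothetical choices made for illustration, and the sketch assumes that nueve is not itself a valid command. Recognized words are snapped to the closest entry of a small command vocabulary with Python's standard `difflib`, and the Spanish word más stands in for “further”.

```python
from difflib import get_close_matches

# Hypothetical command vocabulary (not the corpus collected in the study).
DIRECTIONS = {
    "derecha": (1, 0),     # right
    "izquierda": (-1, 0),  # left
    "arriba": (0, 1),      # up
    "abajo": (0, -1),      # down
}
VOCABULARY = ["mueve", "más"] + list(DIRECTIONS)


def snap(word, cutoff=0.7):
    """Map a recognized word to the closest vocabulary entry, or None."""
    matches = get_close_matches(word.lower(), VOCABULARY, n=1, cutoff=cutoff)
    return matches[0] if matches else None


class MapSession:
    """Keeps the last pan so that "más" (further) can build on it."""

    def __init__(self, base_step=100.0, growth=2.0):
        self.base_step = base_step  # distance of a plain "mueve" command
        self.growth = growth        # assumed multiplier applied by "más"
        self.center = (0.0, 0.0)
        self.last_direction = None
        self.last_step = 0.0

    def handle(self, utterance):
        """Apply one utterance and return the new map center."""
        words = [snap(w) for w in utterance.split()]
        direction = next((w for w in words if w in DIRECTIONS), None)
        if "más" in words:
            # Reuse the previous direction when none is spoken.
            direction = direction or self.last_direction
            if direction is None:
                return self.center  # no context yet, ignore the request
            same = direction == self.last_direction
            step = self.last_step * self.growth if same else self.base_step
        elif "mueve" in words and direction is not None:
            step = self.base_step
        else:
            return self.center  # utterance not understood
        dx, dy = DIRECTIONS[direction]
        self.center = (self.center[0] + dx * step, self.center[1] + dy * step)
        self.last_direction, self.last_step = direction, step
        return self.center


session = MapSession()
session.handle("nueve a la derecha")  # "nueve" is snapped to "mueve"
session.handle("más a la derecha")    # moves twice the previous distance
```

With this design, a repeated plain command always moves the base distance, whereas “más” grows the previous step, so the two behaviors stay distinguishable. The sketch does not address the case in which both mueve and nueve are valid in the same context, which would still require a better recognizer.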

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Step | Objective | Method | No. of Samples n |

|---|---|---|---|

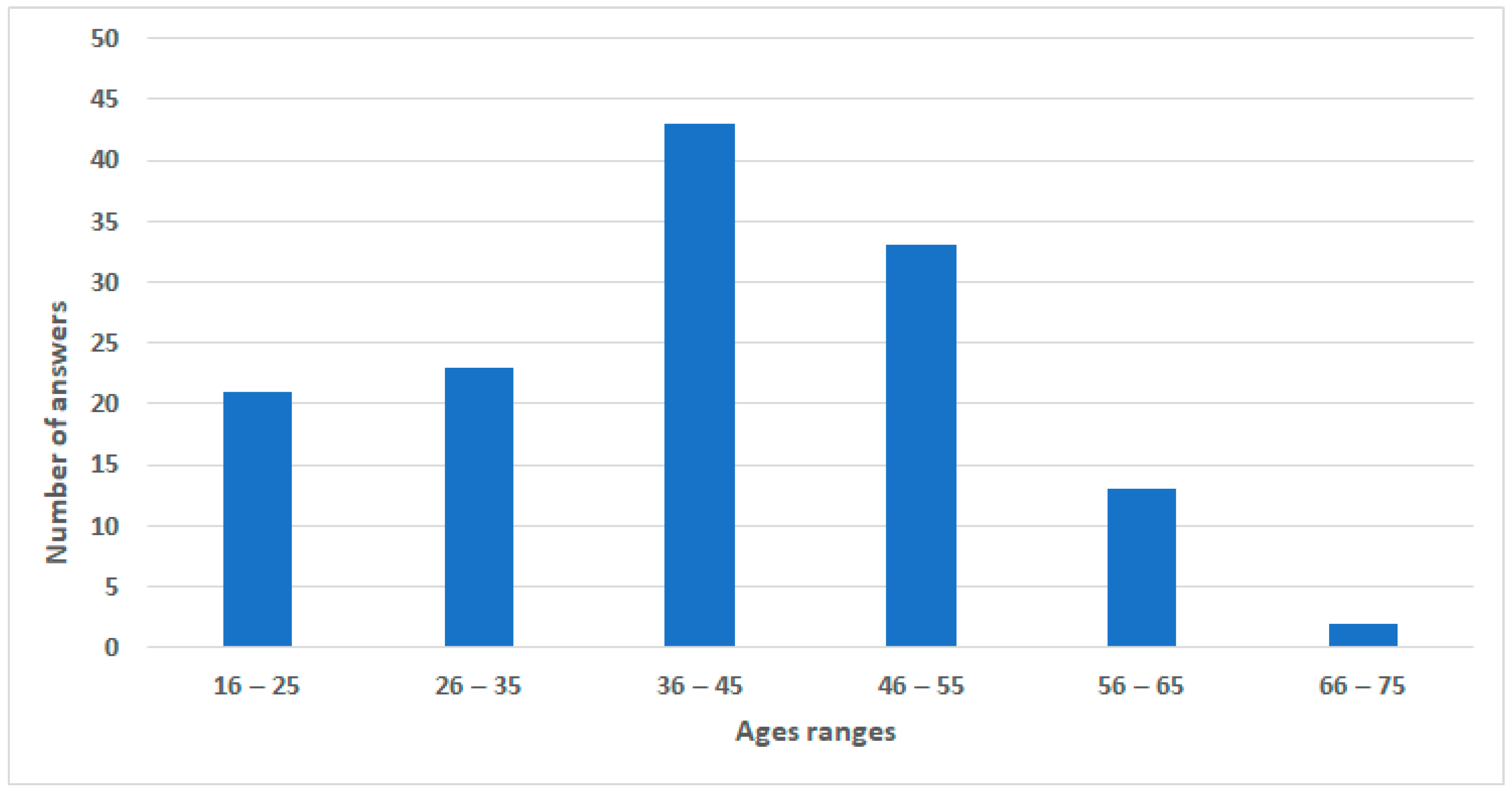

| 1 | Identify terminological corpus. | Survey. | 137 |

| 2 | Identify the chain of commands. User behavior and acceptance. | Wizard of Oz. Interviews. | 10 |

| 3 | Expert testing before user test. Validate prototype functionality. | Cognitive walkthrough + heuristics. | 3 |

| Create technology for VUI as a first approach.Validate results obtained in previous steps. | Prototype + user testing. Interviews. | 8 | |

| 4 | Emerging issues (transversal). | Triangulation of methods. | |

| 158 (total) |

| Scenario Description | Planned Errors | Planned Searches for the Wizard |
|---|---|---|
| Your Aunt Lisa is on a business trip to Sri Lanka and you would like to know about the island. Locate the island and research the capital city (Colombo) and national parks. | The difficulty of pronouncing the name of the island. | Colombo, Kandy, Jaffna, Batticaloa, Galle, Srilanka, Sri Lanka, India, Asia |
| You are looking for a park in Paris to have a picnic. It is summer, and you want a place with plenty of trees and shade. Compare the leafiness of the parks using satellite images. | If you look for parque París (Paris park) in Google Maps from an IP in Spain, the first answer is Paris Park in Madrid. | París, París Francia, Parque París, París parque, Parque, Bois de Boulogne, Bois de Vincennes |
| You have an appointment with a friend on the first roundabout of the city of Vitoria (Spain), arriving via highway A-132. Look for this point on the map to learn how to get there. | The command rotonda en Vitoria (roundabout in Vitoria) sends users to a street with Vitoria in its name (depending on the geographic context of the user). The command rotonda A-132 shows only the road. | Vitoria, A132, A132 Vitoria, Vitoria A132 |
| You have to carry a package from the Grancasa shopping center in Zaragoza to Pilar Square. Find the pedestrian route without using the routing functionality provided by Google Maps. | You need to zoom in to see the names of the streets. | Grancasa, Centro Comercial Grancasa, CC Grancasa, Calle Gertrudis Gómez de Avellaneda, Gertrudis Gómez de Avellaneda, Calle Gómez de Avellaneda, Gómez de Avellaneda, Calle María Zambrano, María Zambrano |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Blanco, T.; Martín-Segura, S.; de Larrinzar, J.L.; Béjar, R.; Zarazaga-Soria, F.J. First Steps toward Voice User Interfaces for Web-Based Navigation of Geographic Information: A Spanish Terms Study. Appl. Sci. 2023, 13, 2083. https://doi.org/10.3390/app13042083
