1. Introduction

Stock market prediction is the practice of trying to determine the future value of a company’s stock or another financial instrument traded on an exchange. A trader who can accurately forecast a stock’s future price may realize large profits. According to the efficient-market hypothesis, stock prices reflect all currently available information, so any price changes that are not based on newly revealed information are intrinsically unpredictable. Prediction algorithms that rely only on traditional stock data have limited efficacy because the stock market is extremely sensitive to information from outside sources. The proliferation of the Internet has led to new forms of collective intelligence, such as Google Trends and Wikipedia, and changes observed on these platforms can have a large impact on the stock market. Stock prices are also thought to be affected not only by the sentiment of financial news but also by the amount of trading activity. Predicting the stock market has attracted considerable interest from both academics and practitioners. The question still stands: how well can the past price history of a common stock be used to predict the stock’s future price?

Early research on stock market prediction was based on the efficient market hypothesis (EMH) and the random walk theory. These early models held that stock prices cannot be predicted because they are driven by new information (news) rather than by current or past prices. Stock prices would therefore move randomly, and prediction accuracy could not exceed 50%. A growing number of studies challenge the EMH and the random walk hypothesis. These studies show that the stock market can be predicted to some extent, which calls the EMH’s basic assumptions into question. Many people in the business world also see Warren Buffett’s ability to consistently beat the S&P index as a practical sign that the market can be predicted.

It is difficult to build an appropriate model because the price may vary with several different variables, including news, social media data, fundamentals, the firm’s output, historical data, and government bond prices in relation to the nation’s economy. A prediction model that takes into account just one factor may not be accurate. Taking into account several factors, such as news, social media data, and historical prices, therefore improves the model’s accuracy.

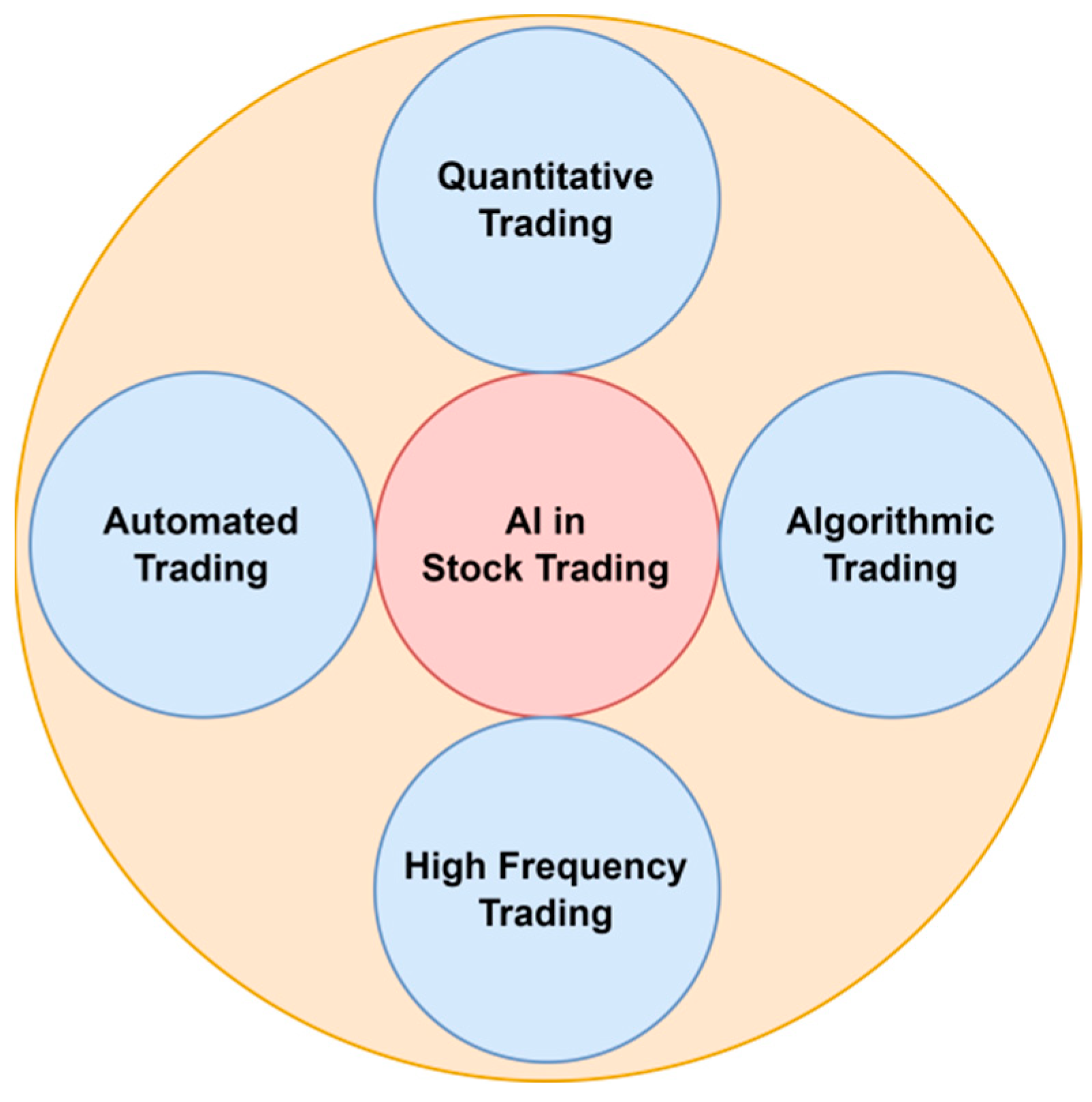

Figure 1 describes various applications of AI in stock trading. Quantitative trading uses computer algorithms and programs based on basic or complicated mathematical models to discover and profit from trading opportunities. Algorithmic trading executes orders based on time, price, and volume using pre-programmed trading instructions. High-frequency trading, commonly abbreviated as HFT, is a type of trading that uses highly advanced computer algorithms to execute a huge number of orders in a very short amount of time (fractions of a second). An automated trading system (ATS), a form of algorithmic trading, employs computer software to issue buy and sell orders and automatically send them to a market center or exchange (Figure 1).

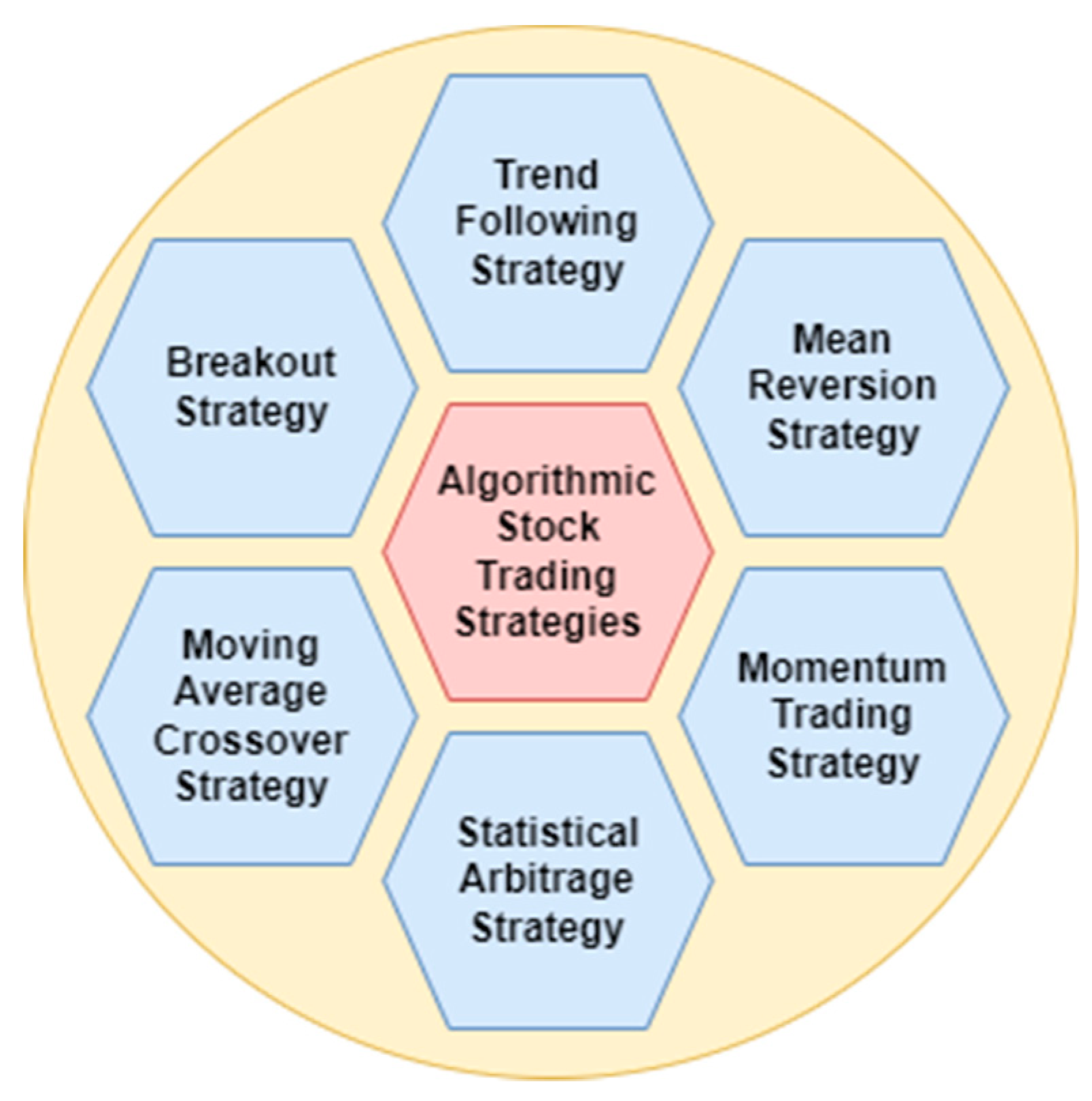

Figure 2 shows different algorithmic trading strategies. Trend following involves buying an asset when its price trend rises and selling when it falls, in the expectation that the price movement will continue. Mean reversion assumes that asset prices and historical returns gradually revert to their long-term mean or average. Momentum trading involves buying and selling assets based on recent price trends. Statistical arbitrage uses large, diversified portfolios to trade over short horizons. The moving average crossover approach identifies possible levels of support or resistance in the market. Moving averages are always calculated from historical data; although they may give the impression of being predictive, all they indicate is the average price over a certain period. The breakout approach looks for levels or regions that a security has not been able to move beyond and then waits for the security to move beyond them. The term “breakout” refers to a price moving beyond one of these thresholds (Figure 2).
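The moving average crossover idea can be illustrated with a minimal sketch. The window lengths, the function names, and the rule of signaling only on the bar where the fast average crosses the slow one are assumptions made for illustration; they are not taken from the surveyed papers.

```python
from typing import List, Optional


def simple_moving_average(prices: List[float], window: int) -> List[Optional[float]]:
    """Trailing average of the last `window` prices; None until enough data exists."""
    out: List[Optional[float]] = []
    for i in range(len(prices)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(prices[i + 1 - window:i + 1]) / window)
    return out


def crossover_signals(prices: List[float], fast: int, slow: int) -> List[str]:
    """Label a bar 'buy' when the fast average crosses above the slow one,
    'sell' when it crosses below, and 'hold' otherwise."""
    fast_ma = simple_moving_average(prices, fast)
    slow_ma = simple_moving_average(prices, slow)
    signals = ["hold"] * len(prices)
    for i in range(1, len(prices)):
        # Skip bars where either average is not yet defined.
        if None in (fast_ma[i], slow_ma[i], fast_ma[i - 1], slow_ma[i - 1]):
            continue
        prev_diff = fast_ma[i - 1] - slow_ma[i - 1]
        curr_diff = fast_ma[i] - slow_ma[i]
        if prev_diff <= 0 < curr_diff:
            signals[i] = "buy"
        elif prev_diff >= 0 > curr_diff:
            signals[i] = "sell"
    return signals
```

Because both averages are computed only from past prices, the signal always lags the price move, which is consistent with the remark that moving averages are descriptive rather than predictive.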

Our main contributions in this survey are summarized as follows:

We examine the most recent developments in applying artificial intelligence methods to stock market prediction, particularly those that have emerged in the previous three years.

We analyze the work performed with machine learning, deep learning, and reinforcement learning in stock market prediction over the last three years.

We analyze the general process of stock market prediction based on past research work.

We suggest further research directions in stock market prediction so that new researchers can find new avenues to explore.

Finally, we indicate where data sources for research can be collected.

The remaining sections of this work are structured as follows: In Section 2, we detail the related research works that were used in this paper. Section 3 gives a brief overview of this study. Data processing and features are discussed in Section 4. In Section 5, the various prediction methods are described in depth, along with some contextual information. The evaluation metrics are described in Section 6. The implementation framework and data availability are discussed in Section 7. Section 8 describes future work in this area. Section 9 summarizes the paper, and the study is wrapped up in Section 10.

2. Related Work

Weiwei Jiang assessed the impact of machine learning on the stock market by analyzing about 66 research papers in 2021. The review covers various stock market indexes, different types of data, different types of input features, and different types of artificial intelligence techniques, including machine learning, deep learning, and reinforcement learning [1]. A second review discusses the trading process and how it fits the RL framework. It then explores RL preliminaries, including the components of a Markov Decision Process and the basic RL techniques for deriving optimal policies. It also examines the quantitative trading (QT) literature under tabular and function-approximation RL, and finally discusses why implementing RL in QT applications remains a major area of study [2]. A further survey summarizes many deep learning approaches that work well in algorithmic trading applications and briefly presents specific examples. It attempts to give a current snapshot of algorithmic trading applications based on deep learning and to exhibit the multiple implementations of the resulting trading models, with the aim of presenting the research progress of deep learning in algorithmic trading and benefiting future research on computer-program trading systems [3]. Deep reinforcement learning (DRL) has shown impressive performance across a variety of machine learning benchmarks. One survey provides an overview of DRL as it relates to trading on financial markets, uncovers common structures that the trading community uses with DRL, and identifies common problems and limitations of such methods [4]. With the development of computationally sophisticated approaches, it is feasible to mitigate a significant portion of the risk. Another review focuses on computationally intelligent methods for stock market forecasting, such as artificial neural networks, fuzzy logic, genetic algorithms, and other evolutionary techniques, and gives an up-to-date look at the research performed in this field [5].

A review of DRL in stock trading found that many of the evaluated studies were proof-of-concept works with unrealistic experiments and no real-time trading implementations. The bulk of the works showed statistically significant performance gains relative to baseline techniques, but none achieved respectable profitability. There is also a paucity of experimental testing on real-time, online trading platforms and of meaningful comparisons between agents based on different DRL methods or human traders. The authors conclude that DRL in stock trading has great application potential, resembling expert traders under strong assumptions, although the research is still in its early phases [6]. Another survey describes the methodology behind machine learning-based approaches to stock market prediction, using a generalized structure as the basis for the discussion. Findings from the decade 2011–2021 were gathered from several online digital libraries and databases, such as the ACM Digital Library and Scopus, and subjected to an in-depth critical analysis. In addition, a comprehensive comparative analysis was conducted to determine the general direction of importance [7]. Yue Deng et al. develop a system that makes use of both DL and RL. The DL component automatically senses changing market conditions to perform useful feature learning. The RL module then interacts with the deep representations and makes trading decisions in order to accumulate the greatest possible reward in an unknown environment. The learning system is implemented as a complex neural network with both deep and recurrent structures [8]. Another work proposes a nested reinforcement learning (Nested RL) technique that applies reinforcement learning on top of three basic decision makers, each a deep reinforcement learning model: Advantage Actor-Critic, Deep Deterministic Policy Gradient, and Soft Actor-Critic. This technique can dynamically pick agents to make market-specific trading choices. It also proposes weight random selection with confidence (WRSC) to inherit the benefits of the three basic decision makers, so that investors may benefit from the advantages of all agents [9]. A hybrid stock index forecasting model based on time series decomposition has also been presented. CEEMDAN decomposes the stock index into Intrinsic Mode Functions (IMFs) with distinct feature scales and a trend term. The Augmented Dickey-Fuller (ADF) test evaluates the stationarity of each IMF and of the trend term. Stationary series are modeled with the Autoregressive Moving Average (ARMA) model, whereas non-stationary series are modeled with LSTM to extract abstract features. Combining the predicted results of each series yields the final value [10].

Researchers employ the continuous transfer entropy approach as a feature selection criterion and analyze the ability of a wide range of factors to predict the direction in which bitcoin’s price will move [11]. The RCSNet hybrid model combines linear and nonlinear models into a single framework, and its author reports new evidence on anticipating the movement of stock market values [12]. In another study, 244 publicly listed companies on the Dhaka stock market were examined between 2015 and 2019. The AUC for the model was 96%, while the accuracy and sensitivity of the artificial neural network classifier were found to be 88%. However, the ensemble classifier outperforms all other models when log loss and certain other measures are taken into account [13]. Wavelet and phase difference analyses show that, with the exception of Brazil, COVID-19 pandemic cases had no negative impact on stock market indices over the entire sample period, but did have a negative impact during some sub-sample periods in each Latin American country [14].

One study shows how adversarial reinforcement learning (ARL) can be used to build market-making agents that can handle competing and changing market conditions. The authors compare two traditional single-agent RL agents with ARL and show that their ARL approach leads to the emergence of risk-averse behavior without constraints or domain-specific penalties, as well as significant improvements in performance across a set of standard metrics, whether the agents are tested with or without an opponent [15]. Another work creates and trains an RL execution agent using the Double Deep Q-Learning (DDQL) method and the ABIDES (Agent-Based Interactive Discrete Event Simulation) environment. In certain situations, the RL agents tend toward the Time-Weighted Average Price (TWAP) strategy [16]. A further study shows how RL-based decision trees can be used to train autonomous agents that are aware of risk. The proposed architecture comprises a limit order book (LOB) simulator that can use historical data to predict how the market will react to aggressive trading, and a risk-sensitive Q-learning procedure that uses decision trees to build compact execution agents [17].

3. Overview

This section describes the literature that forms the primary focus of this study. A literature search was conducted using academic and scientific databases to find articles from well-known research journals and publications (e.g., Scopus, Google Scholar, Springer, IEEE Xplore, ScienceDirect, and Web of Science). The keywords chosen for the search were: “Artificial Intelligence in quantitative finance”, “Machine learning in quantitative finance”, “Deep Learning in quantitative finance”, “Reinforcement Learning in quantitative finance”, “Q-Learning in stock trading strategy”, “Deep Q-Learning in stock trading strategy”, “reinforcement learning in stock trading”, “Artificial Intelligence in stock market”, “Machine learning in stock market”, “Using AI to make a prediction of the stock market”, “AI-based stock forecasting”, “Survey paper on machine learning in stock trading”, “Deep reinforcement learning in trading”, and “Neural Network for financial time series forecasting”. Research papers that were less relevant were excluded from the review.

Table 1 lists the surveyed articles by year, together with their references.

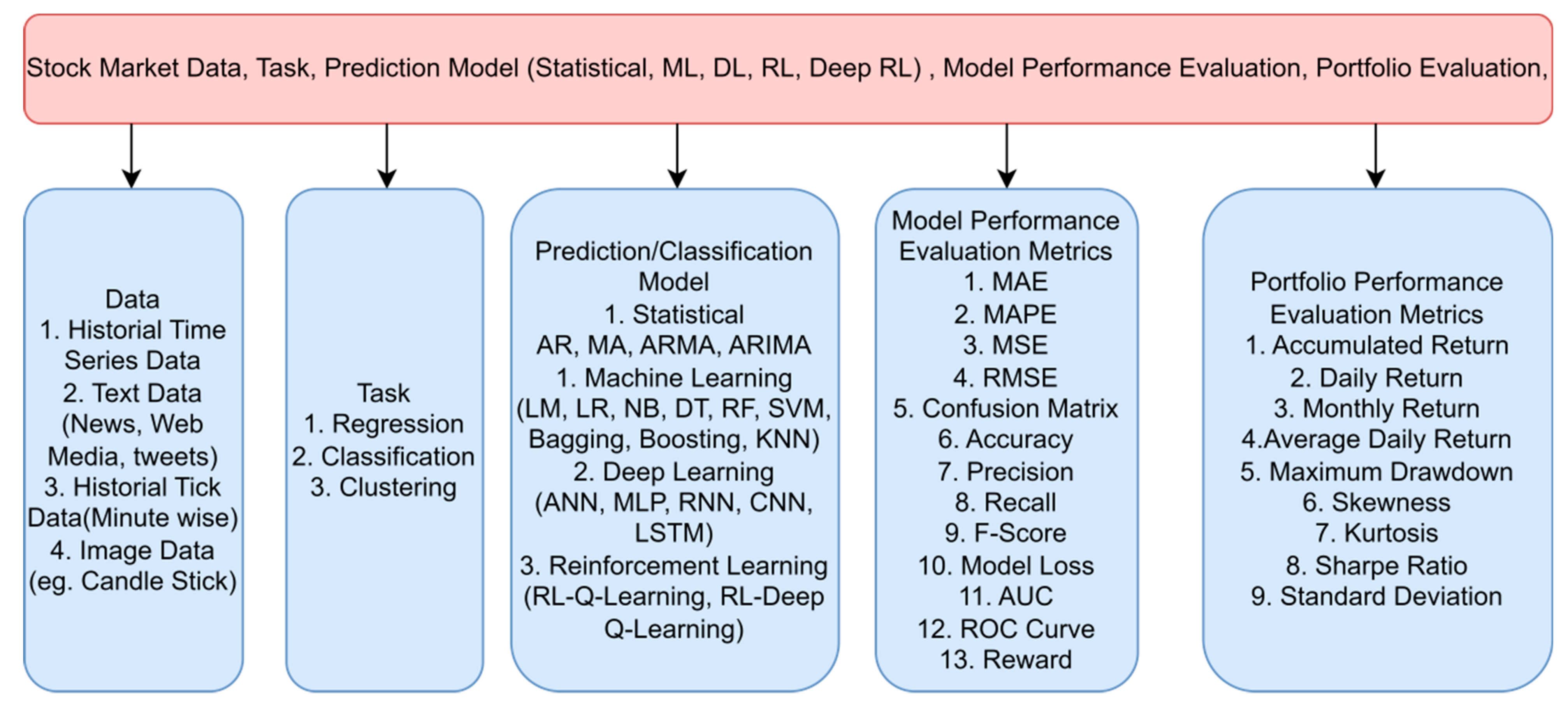

Figure 3 describes the overall process of stock market prediction using artificial intelligence, including the different types of stock market data, machine learning tasks, prediction models, model performance evaluation metrics, and portfolio performance evaluation metrics.

We focused on recent papers in order to follow the advances in this field and analyze recent developments. We analyzed more than 70 papers and found that about 7% of the researchers used only machine learning, while about 20% used only deep learning. Reinforcement learning was the most widely used approach, at around 39%, while around 25% of the researchers used a hybrid model, i.e., two or more models.

This study includes market indices of the US (S&P 500, Dow Jones Industrial Average, NASDAQ Composite, NYSE Composite), market indices of Asia (NIFTY50, BSE30, CSI Composite, HSI, SSE Composite Index (000001.SS)), market indices of Europe (Euronext 100 (N100) and the Deutsche Börse DAX index (GDAXI)), and market indices of England, Germany, Africa, and Nigeria.

Table 2 shows the important stock indices and their country.

Table 3 shows the journal titles and the total number of papers under consideration.

4. Data Processing and Features

Most researchers used daily data with OPEN, HIGH, LOW, CLOSE, and VOLUME (OHLCV) fields, and some used tick data at one-minute, five-minute, or fifteen-minute intervals. Researchers working on sentiment analysis used text data such as tweets and comments.

Different Types of Data Used in Stock Market Prediction

(a) Historical Price Market Data (Day Wise)

Generally, the historical data consist of the stock’s open price, high price, low price, close price, and traded volume. The following is an example of the TATAMOTORS day-wise data set [9,10,26,27,28,36,43,54,79,80].

Table 4 shows date-wise stock market data.

(b) Historical Price Market Tick Data (Minute Wise)

Generally, historical tick data contain the open price, high price, low price, close price, and trading volume of the stock, and they are used for prediction in intraday trading.

Table 5 shows minute-wise stock market data.
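The relationship between minute-wise and day-wise OHLCV data can be sketched as a simple aggregation. The `Bar` record, the timestamp format, and the `to_daily` helper are hypothetical names introduced for illustration, and the bars are assumed to arrive in time order.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Bar:
    timestamp: str  # assumed format: "YYYY-MM-DD HH:MM" (or just the date for daily bars)
    open: float
    high: float
    low: float
    close: float
    volume: int


def to_daily(bars: List[Bar]) -> Dict[str, Bar]:
    """Collapse time-ordered intraday bars into one OHLCV bar per calendar day."""
    daily: Dict[str, Bar] = {}
    for bar in bars:
        day = bar.timestamp[:10]
        if day not in daily:
            # The first bar of the day fixes the daily open.
            daily[day] = Bar(day, bar.open, bar.high, bar.low, bar.close, bar.volume)
        else:
            d = daily[day]
            d.high = max(d.high, bar.high)
            d.low = min(d.low, bar.low)
            d.close = bar.close  # the last bar seen sets the daily close
            d.volume += bar.volume
    return daily
```

The same pattern applies to five-minute or fifteen-minute bars; only the grouping key would change.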

(c) Tweets or Comments (Text Data)

Twitter is now one of the most frequently used social networks for sentiment research. This data set consists of tweets that discuss the stock market. The tweets were carefully labeled as positive, neutral, or negative [81,82].

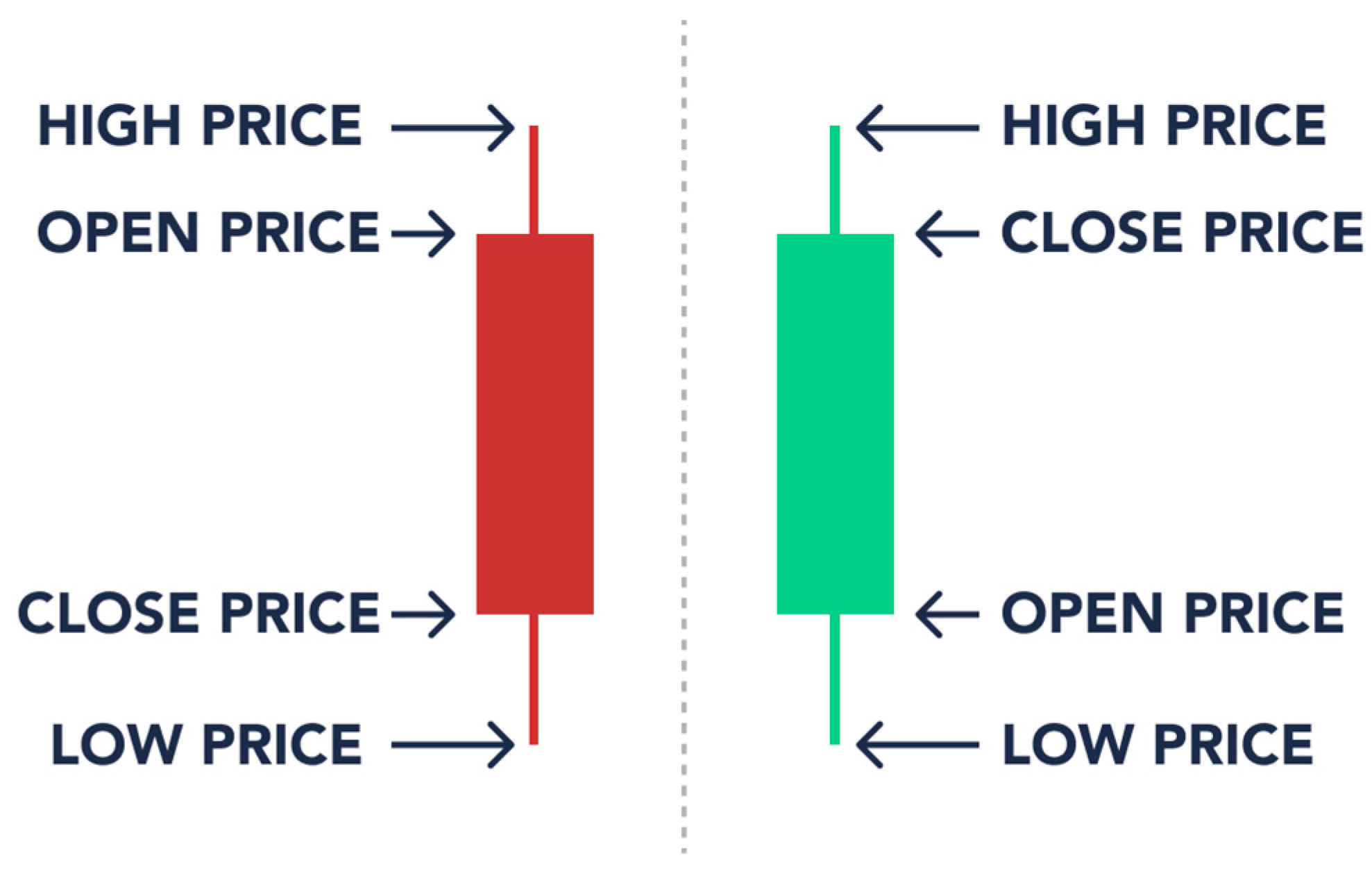

(d) Image data

Convolutional neural networks (CNNs) are strong at image processing and are among the most effective algorithms for automated image processing; many firms use them to detect objects in images. For stock prediction, the input image is often a candlestick chart.

Figure 4 describes the candlestick in stock trading. Candlesticks are helpful because they display four price levels (open, close, high, and low) over a certain time frame (Figure 4) [22,40].
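The four price levels of a candlestick can be captured in a small data structure. This is a minimal sketch; the `Candle` class and its property names are assumptions made for illustration and do not come from the cited works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candle:
    open: float
    high: float
    low: float
    close: float

    @property
    def is_bullish(self) -> bool:
        """True when the period closed above its open."""
        return self.close > self.open

    @property
    def body(self) -> float:
        """Size of the candle body: distance between open and close."""
        return abs(self.close - self.open)

    @property
    def upper_wick(self) -> float:
        """Distance from the top of the body to the period high."""
        return self.high - max(self.open, self.close)

    @property
    def lower_wick(self) -> float:
        """Distance from the bottom of the body to the period low."""
        return min(self.open, self.close) - self.low
```

A chart image fed to a CNN is a rendering of a sequence of such candles.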

(e) Fundamental data

Fundamental analysts focus on the underlying business of a company’s stock. They look into how well a firm has performed in the past and how reliable its financial statements are. Inputs to this analysis include the annual report and balance sheet, profit and loss statement, management information, competitor information, product information, economic environment, political factors, and industrial relationships. Taking these facts and documents into consideration for fundamental analysis, Table 6 shows the factors that are employed as inputs in the machine learning models being applied, as well as the literature around these topics.

5. Prediction Models-Artificial Intelligence in Quantitative Finance

Artificial intelligence (AI), and particularly machine learning (ML), has sparked a significant amount of interest and investment from financial institutions such as banks, asset managers, pension funds, and stock trading firms. These businesses make it a priority to apply a variety of machine learning (ML), deep learning (DL), and reinforcement learning (RL) methods, including supervised and unsupervised ML, natural language processing (NLP), and data science, in order to improve their investment strategies, gain new insights into the competitive landscape using the data they collect and purchase, and ultimately boost their profits and outperform their rivals.

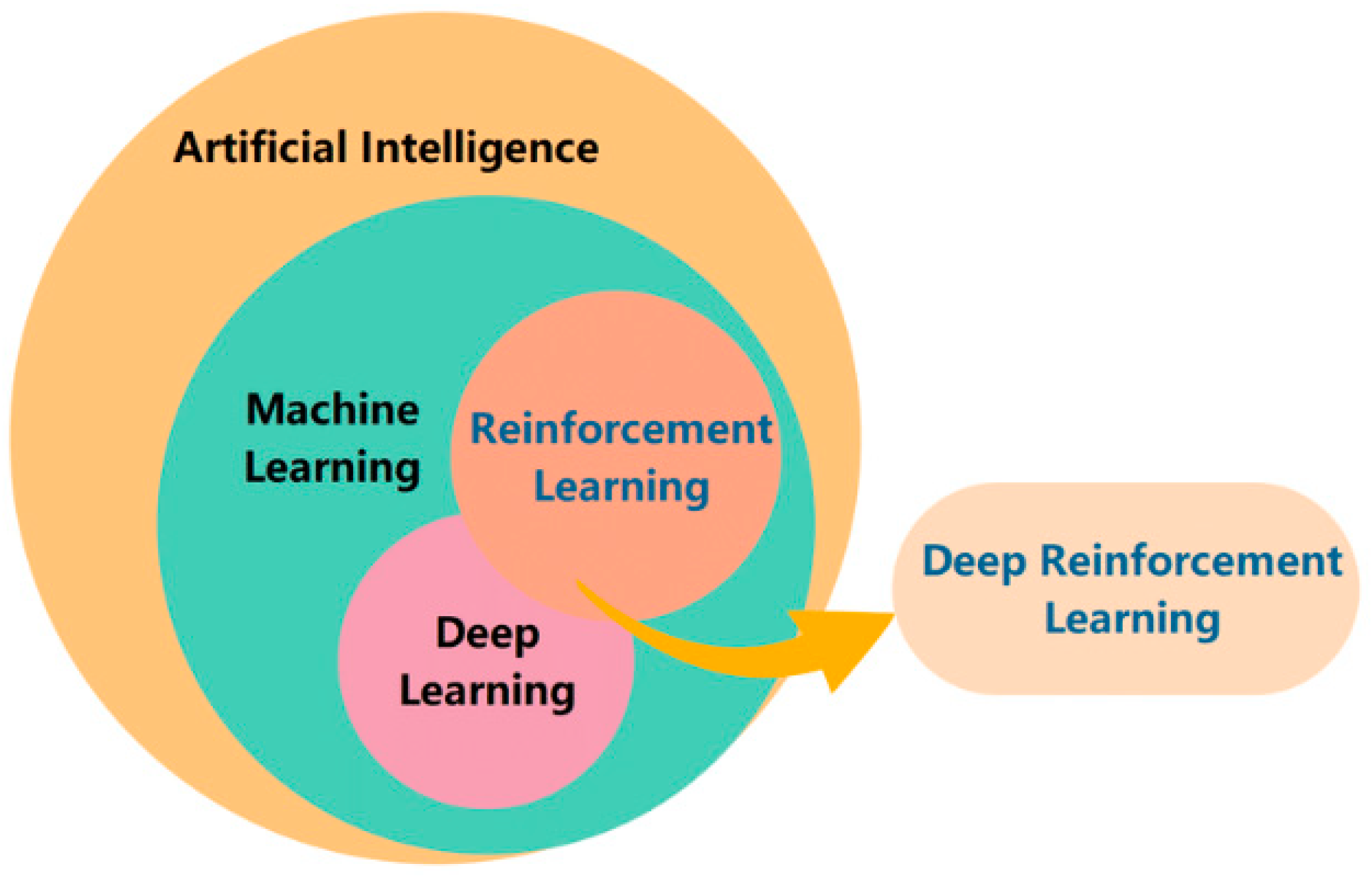

Figure 5 shows the relationship among AI, ML, DL, RL, and DRL. ML is a subset of AI, and DL is a subset of ML.

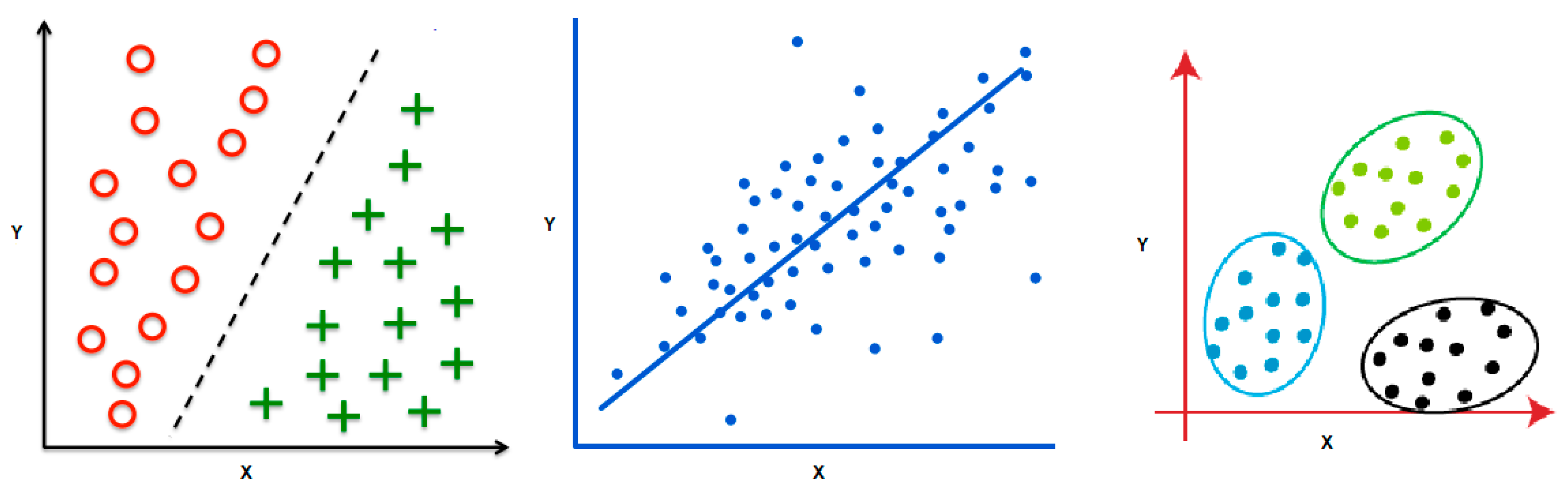

Figure 6 shows that machine learning algorithms are used for classification, regression, and clustering.

Many machine learning (ML) systems, particularly deep neural networks, are black boxes, since it is difficult to gain a thorough understanding of their inner workings once they have been trained. Three types of problems, namely classification, regression, and clustering, can be solved by learning techniques such as ML, DL, or RL (Figure 6). Linear Regression (regression) and Decision Trees (classification) are two examples of basic models with interpretable structures and few parameters that do not need further explanatory techniques. In contrast, complicated models such as deep neural networks with millions of parameters (weights) are often described as black boxes, since the model’s behavior is hard to comprehend even when its structure and weights are known. With the help of ML, computers can analyze massive amounts of information in search of trends and patterns. In trading, recognizing patterns and trends is essential. Machine learning algorithms are adept at digesting vast volumes of data to uncover patterns that are difficult for humans to recognize.

The area of machine learning (ML) is expanding quickly and is now being used in a wide variety of sectors, including quantitative finance. Quantitative finance is the practice of analyzing financial data through the use of mathematical and statistical methods. Machine learning methods are increasingly being utilized in quantitative finance to create more accurate forecasts and enhance the performance of financial models. The following is a selection of applications of machine learning that may be found in quantitative finance:

Algorithmic trading: Machine learning algorithms are used in algorithmic trading to create trading strategies that can automatically assess massive volumes of financial data and execute trades based on the algorithm’s predictions.

Risk management: Machine learning may uncover risky trends in massive financial data sets. This helps financial firms manage risk.

Portfolio optimization: Using factors such as volatility, risk, and return, machine learning can be used to find the best investments for a portfolio.

Forecasting: By using machine learning, one may analyze past financial data and forecast future market patterns, exchange rates, and other parameters.

Sentiment analysis: Machine learning may also be used to look at social media and news to find out what people think about a company, product, or industry and how they feel about it.

Traditional financial models may be coupled with machine learning models to enhance forecasts and outcomes. However, it is crucial to remember that not all machine learning models are suited for all issues. The model chosen relies on the problem and the available data, so machine learning and finance professionals should work together to obtain the best results.

Prediction task using AI in quantitative finance: Stock price prediction uses machine learning to estimate the future value of a company’s stock and other financial assets traded on an exchange. The purpose of stock price prediction is to generate substantial profits. Predicting the performance of the stock market is a difficult endeavor. Other factors, such as physical and psychological factors and rational and irrational behavior, also influence prices. All of these variables contribute to the dynamic and volatile nature of share prices, which makes it very difficult to estimate stock values accurately.

Classification task using AI in quantitative finance: The recommendation model categorizes a given stock as “STRONG BUY”, “BUY”, “HOLD”, “SELL”, or “STRONG SELL”. The imbalanced distribution of the class labels is the greatest difficulty in this classification problem. Most of the time the appropriate label is HOLD, and only a very small percentage of the time is a STRONG BUY or STRONG SELL signal present.
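One common way to handle such label imbalance is to weight each class inversely to its frequency when training a classifier. The sketch below illustrates that general heuristic; it is not a method attributed to the surveyed papers, and the function name is hypothetical.

```python
from collections import Counter
from typing import Dict, List


def inverse_frequency_weights(labels: List[str]) -> Dict[str, float]:
    """Weight each class by n_samples / (n_classes * class_count),
    so that rare classes contribute more to a weighted loss."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}
```

With labels dominated by HOLD, the rare STRONG BUY and STRONG SELL classes would receive the largest weights.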

Portfolio construction using AI in quantitative finance: An investor’s portfolio consists of diverse assets, including stocks, bonds, and cash, that are allocated based on many considerations, including investment risk, projected return, and liquidity needs. The goal is to obtain the expected return with as little risk as possible. The portfolio is chosen based on how well the assets are expected to perform in the future, as estimated by machine learning methods.
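The risk side of this goal can be sketched for the simplest case of two assets, using the classical minimum-variance allocation. The inputs (return variances and covariance) are assumed to be given, short positions are allowed, and the function names are hypothetical; in practice these inputs might themselves come from a forecasting model.

```python
from typing import Tuple


def min_variance_weights(var_a: float, var_b: float, cov_ab: float) -> Tuple[float, float]:
    """Weights (w_a, w_b), summing to 1, that minimize two-asset portfolio variance."""
    denom = var_a + var_b - 2.0 * cov_ab
    w_a = (var_b - cov_ab) / denom
    return w_a, 1.0 - w_a


def portfolio_variance(w_a: float, var_a: float, var_b: float, cov_ab: float) -> float:
    """Variance of a two-asset portfolio holding w_a in asset A and the rest in asset B."""
    w_b = 1.0 - w_a
    return w_a ** 2 * var_a + w_b ** 2 * var_b + 2.0 * w_a * w_b * cov_ab
```

The less volatile asset receives the larger weight, and the resulting variance is lower than holding either asset alone when the two are not perfectly correlated.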

Table 7 shows the list of the standard machine learning prediction models that were used.

Table 8 shows standard deep-learning prediction models that were used.

Table 9 shows standard reinforcement learning models that were used, and Table 10 shows hybrid prediction models that were used.

5.1. Machine Learning

Machine learning is the discipline of computer science that enables programs to learn from data. Machine learning algorithms may be broken down into two broad classes: supervised learning and unsupervised learning. To train a machine learning model, one must provide both the learning method and the training data that the model uses to determine its parameters. Various machine learning models are used in stock market prediction, including Autoregressive Integrated Moving Average (ARIMA) [10], Linear Regression [28], Logistic Regression (LR) [51], Decision Tree (DT) [37,49,57], Random Forest (RF) [30,37,49,57,66,68], Support Vector Machine (SVM) [33,50,51,61,65,68,74,76], and k-Nearest Neighbor (kNN) [37].

5.1.1. Supervised Learning

Supervised learning trains the machine learning algorithm using input samples and their labels, so that the model approximates the function y = f(x) as closely as feasible. In other words, supervised learning uses a correctly labeled training dataset to instruct the algorithm. Supervised learning algorithms are classified by the type of output variable:

- (a) Regression: If the output variable is continuous, the task is referred to as a regression task. Predicting the price of a home and the price of a stock are both examples of regression problems.

- (b) Classification: If the output variable is categorical, such as color or shape, the task is a classification task.

Most machine learning applications employ supervised learning. Supervised learning methods include logistic regression, linear regression, SVMs, and random forests.
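As a minimal sketch of approximating y = f(x) from labeled examples, the following fits a straight line by ordinary least squares. The function names are hypothetical, and a single input feature is assumed for brevity.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x: float, slope: float, intercept: float) -> float:
    """Apply the fitted line to a new input."""
    return slope * x + intercept
```

Here the labels `ys` play the role of the supervision signal; a classification model would instead be fitted to categorical labels.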

5.1.2. Unsupervised Learning

In Unsupervised learning, we train the machine learning algorithm with only the input examples but no output labels. The algorithm tries to learn the underlying structure of the input examples and discover patterns. Unsupervised learning algorithms can be further categorized based on two tasks, namely Clustering and Association. In clustering, an algorithm such as k-means tries to discover inherent clusters or groups in the data.
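The clustering idea can be sketched with k-means on one-dimensional data. The starting centers are passed in explicitly so that the example is deterministic, which is an assumption made for illustration; practical implementations usually choose them randomly.

```python
from typing import List, Tuple


def kmeans_1d(values: List[float], centers: List[float],
              iterations: int = 10) -> Tuple[List[float], List[int]]:
    """Alternate between assigning values to the nearest center and
    moving each center to the mean of its assigned values."""
    centers = list(centers)
    assignments = [0] * len(values)
    for _ in range(iterations):
        # Assignment step: index of the nearest center for every value.
        assignments = [
            min(range(len(centers)), key=lambda j: abs(v - centers[j]))
            for v in values
        ]
        # Update step: recompute each center from its members (if any).
        for j in range(len(centers)):
            members = [v for v, a in zip(values, assignments) if a == j]
            if members:
                centers[j] = sum(members) / len(members)
    return centers, assignments
```

No labels are supplied at any point; the grouping emerges from the distances between the inputs alone.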

Here are some advantages and disadvantages of using machine learning techniques to predict the stock market (Table 11).

5.2. Deep Learning

Deep learning, a subfield of machine learning, is based on multilayered neural networks. These networks are loosely modeled on how the human brain functions.

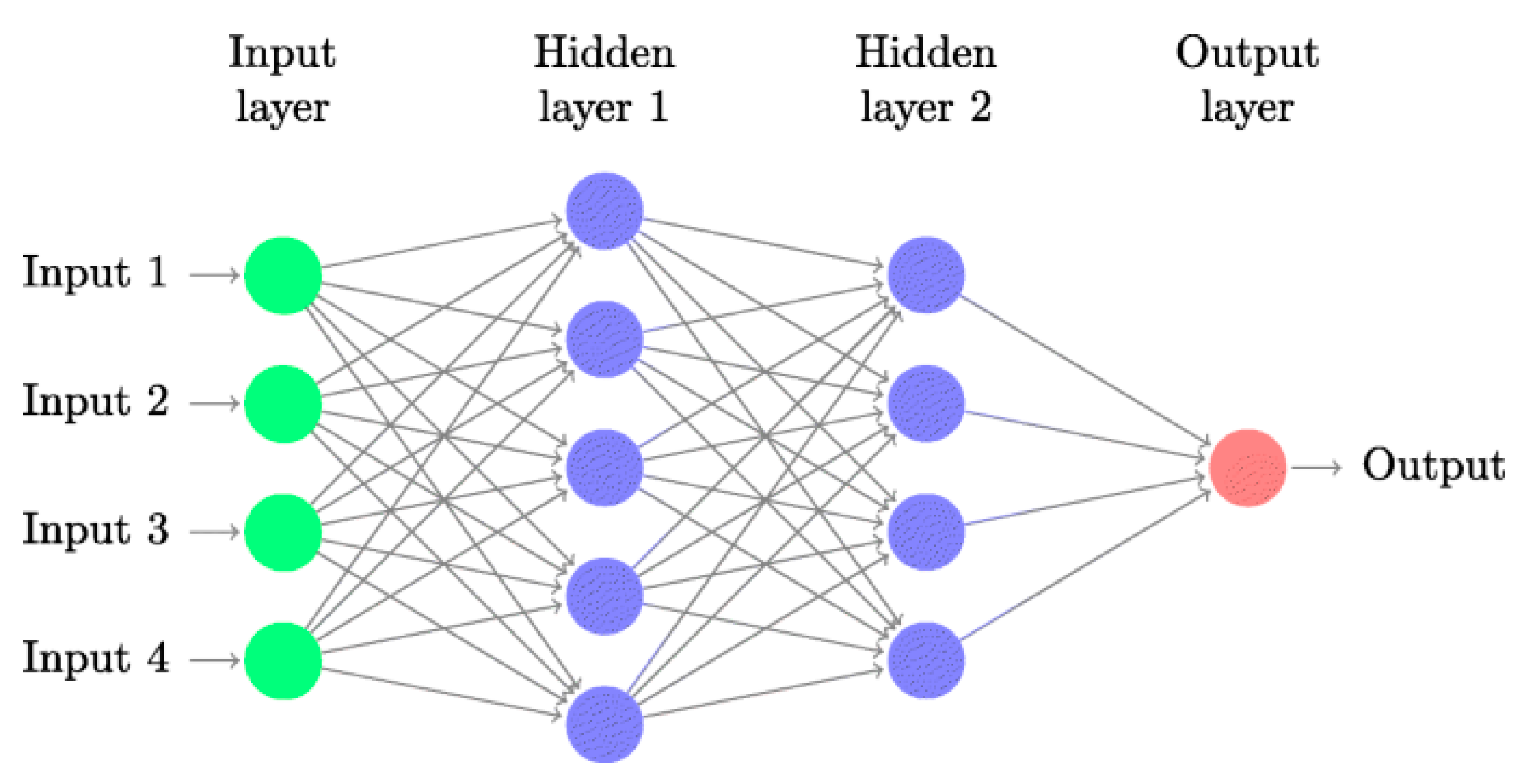

5.2.1. Artificial Neural Network

Deep learning techniques are built on artificial neural networks (ANNs), a type of machine learning model. ANNs consist of layers of nodes: an input layer, one or more hidden layers, and an output layer. Each artificial neuron connects to others and has an associated weight and threshold. A node passes data to the next layer if its output exceeds the threshold; otherwise, no data pass to the next layer.

Figure 7 shows the basic structure of an ANN. To imitate the human brain, ANNs use interconnected layers of input, hidden, and output neurons (nodes) [25,27,33,57,61,65,74,76].
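The weight-and-threshold behavior of a node can be sketched as follows. The hard threshold mirrors the description in the text; trained networks typically use smooth activation functions instead, and the function names here are hypothetical.

```python
from typing import List


def neuron_output(inputs: List[float], weights: List[float], threshold: float) -> float:
    """Weighted sum of the inputs, passed on only if it exceeds the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return total if total > threshold else 0.0


def layer_output(inputs: List[float], weight_rows: List[List[float]],
                 thresholds: List[float]) -> List[float]:
    """Outputs of one layer: one neuron per row of weights."""
    return [neuron_output(inputs, w, t) for w, t in zip(weight_rows, thresholds)]
```

Stacking several such layers, with the output of one layer used as the input of the next, gives the input, hidden, and output structure described above.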

In one study, 26 effective factors are chosen from a combination of financial statistics, technical indicators, and public opinion to create a factor database. A stock selection model is then constructed using a Gated Recurrent Unit (GRU) neural network trained with the Cuckoo Search (CS) optimization method. The resulting multi-factor deep learning stock selection model based on intelligent optimization is then tested in practice, culminating in a quantitative investment strategy. The back-test results show that the model-based quantitative trading strategy generated a Sharpe ratio of 127.08%, an annualized rate of return of 40.66%, an excess return of 13.13%, and a maximum drawdown rate of 17.38% [20].
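Two of the back-test metrics mentioned here, the Sharpe ratio and the maximum drawdown, can be computed as in the sketch below. The annualization factor of 252 trading days and the use of the sample standard deviation are assumptions; the cited study may define the metrics differently.

```python
import math
from typing import List


def sharpe_ratio(returns: List[float], risk_free: float = 0.0,
                 periods_per_year: int = 252) -> float:
    """Annualized mean excess return divided by its sample standard deviation."""
    excess = [r - risk_free for r in returns]
    mean = sum(excess) / len(excess)
    variance = sum((r - mean) ** 2 for r in excess) / (len(excess) - 1)
    return mean / math.sqrt(variance) * math.sqrt(periods_per_year)


def max_drawdown(equity: List[float]) -> float:
    """Largest peak-to-trough decline of an equity curve, as a fraction of the peak."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst
```

Both functions take per-period inputs: `returns` holds periodic returns, while `equity` holds the portfolio value over time.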

Advantages of using ANN [85]

Outstanding capability in dealing with complicated nonlinear patterns.

Extremely precise group-data modeling. The model is adaptable to both linear and non-linear dynamics.

Ability to accommodate missing and noisy data without breaking the model.

Disadvantages of using ANN [85]

Deep Learning (DL) has quickly become a formidable technique for modeling and predicting volatile financial markets globally. Long Short-Term Memory (LSTM), Convolutional Neural Networks (CNNs), and Recurrent Neural Networks (RNNs) are among the many DL approaches used in diverse applications.

5.2.2. Recurrent Neural Network (RNN)

Recurrent Neural Networks (RNNs) are feed-forward neural networks (FFNNs) with a temporal twist: they have connections between passes through time. They are a kind of artificial neural network whose nodes form a directed graph along a sequence, enabling information to flow back into previous layers and persist [37,68,69].
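The way information persists across time steps can be sketched with a single recurrent unit. The scalar weights and the tanh activation are assumptions chosen for brevity; real RNNs use weight matrices and vectors of hidden units.

```python
import math
from typing import List


def rnn_step(x: float, h_prev: float, w_x: float, w_h: float, b: float) -> float:
    """New hidden state from the current input and the previous hidden state."""
    return math.tanh(w_x * x + w_h * h_prev + b)


def run_rnn(xs: List[float], w_x: float = 0.5, w_h: float = 0.8, b: float = 0.0) -> List[float]:
    """Feed a sequence through the unit, carrying the hidden state forward."""
    h = 0.0
    states = []
    for x in xs:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states
```

With a non-zero recurrent weight `w_h`, an input at one step still influences the hidden state at later steps, even when later inputs are zero.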

Advantages of using RNN [85]

Disadvantages of using RNN [85]

5.2.3. Long Short-Term Memory (LSTM)

The Recurrent Neural Network (RNN) is used to learn sequential patterns, but it suffers from the vanishing gradient problem. The LSTM is a variant of the RNN designed to mitigate the vanishing gradient problem. It consists of a series of repeated memory modules, each of which contains three gates [3,10,18,26,29,34,37,54,57,68].
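One step of a memory module can be sketched with scalar values. The three gates are taken to be the standard forget, input, and output gates of an LSTM cell; the parameter dictionary `p` and its key names are hypothetical and introduced only for this illustration.

```python
import math
from typing import Dict, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x: float, h_prev: float, c_prev: float,
              p: Dict[str, float]) -> Tuple[float, float]:
    """One scalar LSTM step; returns the new hidden state and cell state."""
    forget_gate = sigmoid(p["w_f"] * x + p["u_f"] * h_prev + p["b_f"])  # how much memory to keep
    input_gate = sigmoid(p["w_i"] * x + p["u_i"] * h_prev + p["b_i"])   # how much new content to write
    output_gate = sigmoid(p["w_o"] * x + p["u_o"] * h_prev + p["b_o"])  # how much memory to expose
    candidate = math.tanh(p["w_c"] * x + p["u_c"] * h_prev + p["b_c"])  # proposed new content
    c = forget_gate * c_prev + input_gate * candidate
    h = output_gate * math.tanh(c)
    return h, c
```

Because the cell state `c` is updated additively, gradients can flow through many time steps more easily than in a plain RNN.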

Advantages of using LSTM [85]

Capable of self-learning data interactions and patterns.

Analyses data interactions and hidden patterns to make effective predictions.

Capable of retaining knowledge for an extended period of time.

Disadvantages of using LSTM [85]

Due to the correlation between the number of memory cells and the dimensions of recurrent weight matrices, it is difficult to index the memory while it is being written to or read from.

5.2.4. Convolutional Neural Networks (CNNs)

Like ANNs, CNNs are biologically inspired. Their design is inspired by the brain’s visual cortex, in which simple and complex cells alternate. CNNs are feed-forward networks that pass information only from inputs to outputs. CNN architectures are built from modules of convolutional and pooling layers. A CNN has an input layer, convolution layers, pooling layers, dense layers, and an output layer [22,36,64].
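The convolution and pooling modules can be sketched in one dimension. As in most deep learning libraries, the "convolution" here slides the kernel without flipping it; no padding and a stride of one are assumed, and the function names are hypothetical.

```python
from typing import List


def conv1d(signal: List[float], kernel: List[float]) -> List[float]:
    """Slide the kernel over the signal and take a weighted sum at each position."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def max_pool1d(values: List[float], size: int) -> List[float]:
    """Keep the maximum of each non-overlapping window of length `size`."""
    return [max(values[i:i + size]) for i in range(0, len(values) - size + 1, size)]
```

The same kernel weights are reused at every position of the input, and pooling shrinks the output while keeping the strongest responses.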

Advantages of using CNN [64]

Compared to conventional classification algorithms, they have lower pre-processing overhead and can learn new filters and features on their own.

Another important benefit offered by CNNs is weight sharing.

Disadvantages of using CNN [64]

For the CNN to function properly, a significant amount of training data is required.

Because of operations such as max pooling, CNNs often have substantially higher latency.

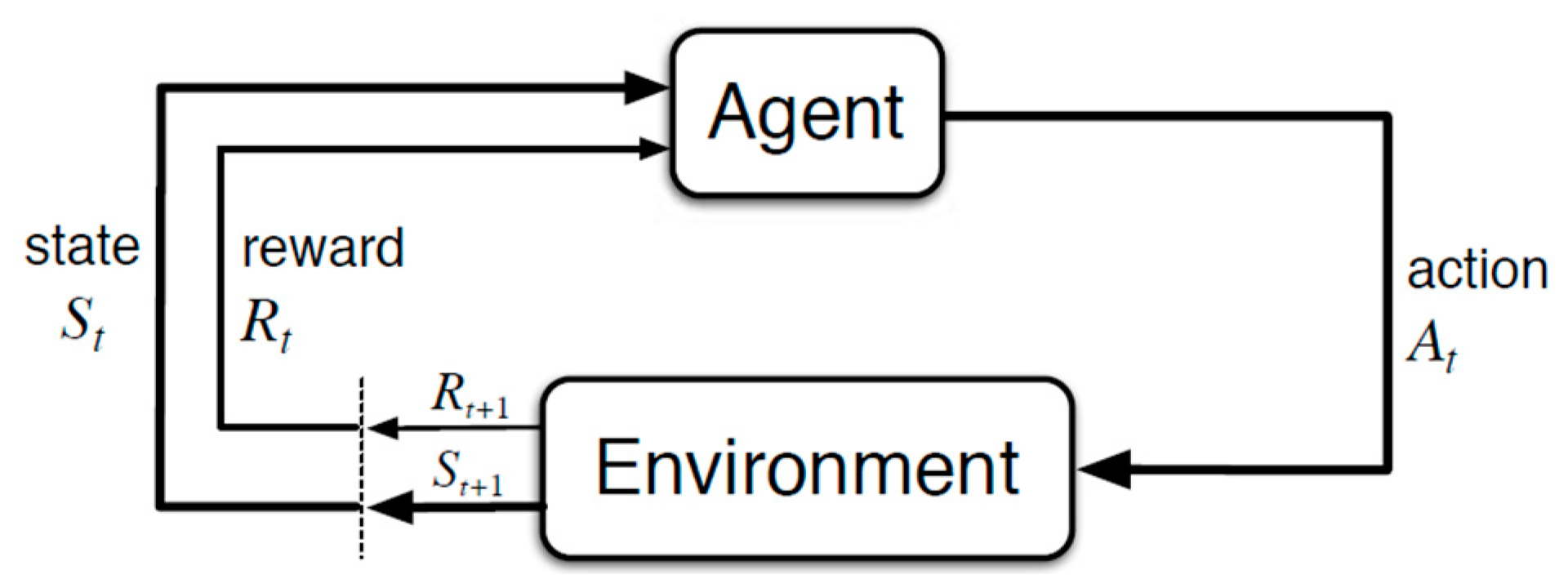

5.3. Reinforcement Learning

Reinforcement learning (RL) is a branch of machine learning that studies how intelligent agents should act in an environment to obtain the greatest cumulative reward over time. The setting in which the agent learns and makes decisions is referred to as the environment. In response to the actions taken by the agent, the environment delivers rewards and may transition to a new state. In reinforcement learning, therefore, we do not instruct an agent on how it should carry out a certain task; rather, we give the agent positive or negative rewards depending on its behavior [21,23,31,40,44,63,72].

Figure 8 shows the basic concept of reinforcement learning. The following are the fundamental components of a reinforcement learning problem:

Environment: The external world with which the agent interacts.

State: The current situation of the agent.

Reward: A numerical feedback signal from the environment.

Policy: A mapping from the agent’s state to its actions. The policy determines which action to take in any given state.

Value: The future reward that an agent can expect to earn by acting from a given state.
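The interaction between these components can be sketched as a simple loop. The example below is a toy illustration only: `ToyMarketEnv`, `always_hold`, and `run_episode` are hypothetical names, the price list is made up, and the reward (the price change while holding one share) is an assumed design rather than one taken from the surveyed articles. No value function is estimated here; the loop only shows how state, action, and reward flow between the agent and the environment.

```python
class ToyMarketEnv:
    """Hypothetical environment: the state is the index into a fixed price list."""

    def __init__(self, prices):
        self.prices = prices
        self.t = 0

    def reset(self):
        self.t = 0
        return self.t  # initial state

    def step(self, action):
        # action: 0 = stay out of the market, 1 = hold one share
        reward = action * (self.prices[self.t + 1] - self.prices[self.t])
        self.t += 1
        done = self.t == len(self.prices) - 1
        return self.t, reward, done  # next state, reward, episode finished?


def always_hold(state):
    """A trivial policy: map every state to the action 'hold'."""
    return 1


def run_episode(env, policy):
    """Run one episode and return the cumulative reward."""
    state = env.reset()
    total_reward, done = 0.0, False
    while not done:
        action = policy(state)                  # the policy picks an action
        state, reward, done = env.step(action)  # the environment responds
        total_reward += reward
    return total_reward


env = ToyMarketEnv([10.0, 11.0, 9.0, 12.0])
total = run_episode(env, always_hold)
```

A learning agent would replace `always_hold` with a policy that is updated from the observed rewards.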

In one surveyed study, two distinct representations of the environment state were proposed. The Q-learning algorithm was used to train the trading agent to find the most effective dynamic trading strategy. Tests were conducted on real data from the Indian and American stock markets using both proposed models. In terms of profitability, the proposed models outperformed both the Buy-and-Hold and Decision-Tree-based trading strategies [40,58].

5.3.1. Q-Learning

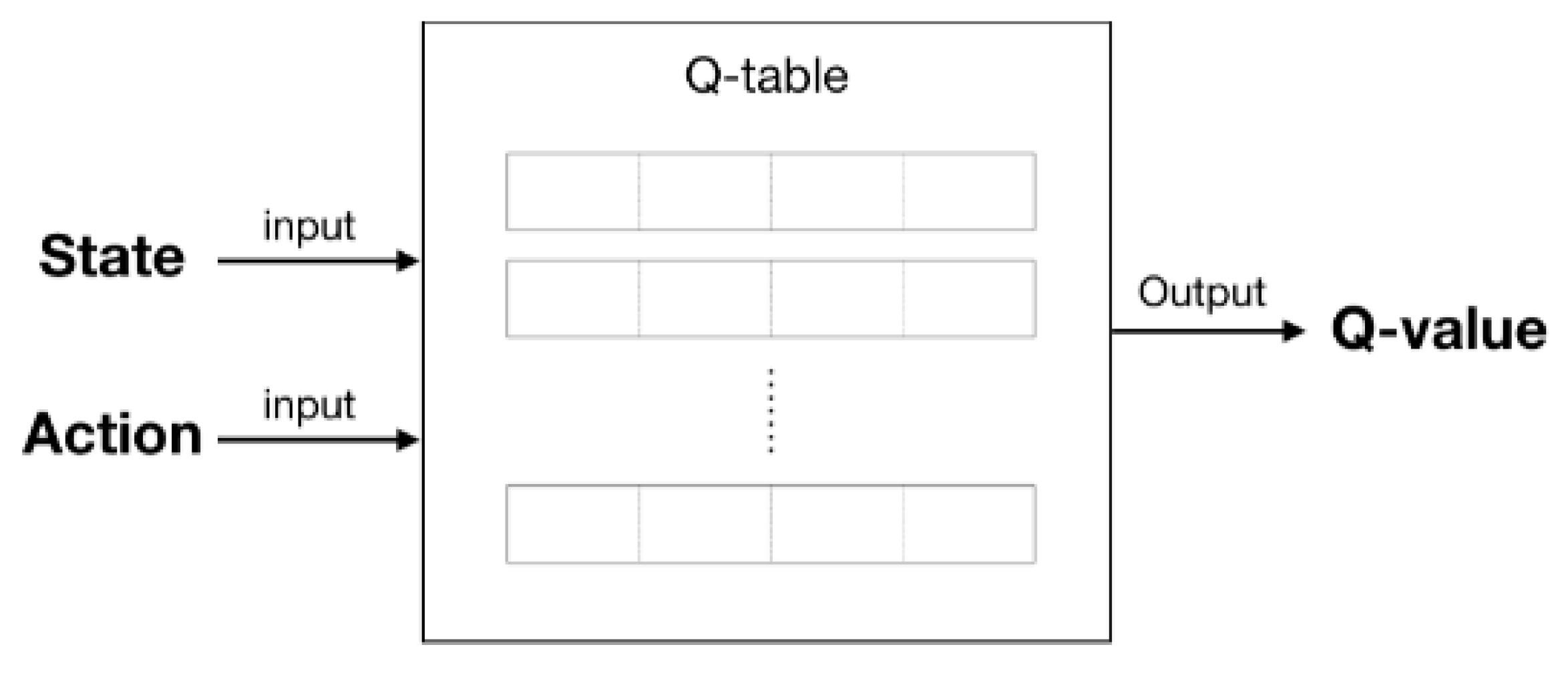

Q-learning is a reinforcement learning method that, given the current state, determines the next best action. The agent may select actions at random to explore the environment, with the aim of maximizing its reward. Q-learning is a model-free, off-policy form of reinforcement learning: it learns the value of the best course of action from the agent’s current state, regardless of the policy used to collect experience. The agent decides its next action based on its current state within the environment.

Figure 9 illustrates Q-learning, which uses Q-values (also known as action values) to iteratively improve the learning agent’s behavior.
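As a minimal sketch of how Q-values are updated iteratively, the code below implements the standard tabular Q-learning rule, `Q(s, a) <- Q(s, a) + alpha * (r + gamma * max_a' Q(s', a') - Q(s, a))`, together with epsilon-greedy action selection. The text does not spell out this rule, so the table layout (a list of per-state lists), the parameter names, and the example numbers are assumptions made for illustration.

```python
import random


def epsilon_greedy(Q, state, epsilon, rng):
    """With probability epsilon explore at random, otherwise pick the best-known action."""
    if rng.random() < epsilon:
        return rng.randrange(len(Q[state]))
    return max(range(len(Q[state])), key=lambda a: Q[state][a])


def q_update(Q, state, action, reward, next_state, alpha, gamma, done):
    """Apply one Q-learning update in place.

    The target uses the maximum Q-value of the next state, whatever action the
    agent actually takes next; this is what makes the method off-policy.
    """
    target = reward if done else reward + gamma * max(Q[next_state])
    Q[state][action] += alpha * (target - Q[state][action])


# Toy table: 2 states x 2 actions (values chosen by hand for the example).
Q = [[0.0, 0.0], [1.0, 2.0]]
q_update(Q, state=0, action=1, reward=1.0, next_state=1,
         alpha=0.5, gamma=0.9, done=False)
best_action = epsilon_greedy(Q, 1, epsilon=0.0, rng=random.Random(0))
```

Here `alpha` is the learning rate and `gamma` the discount factor; setting `epsilon` to zero makes the selection purely greedy.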

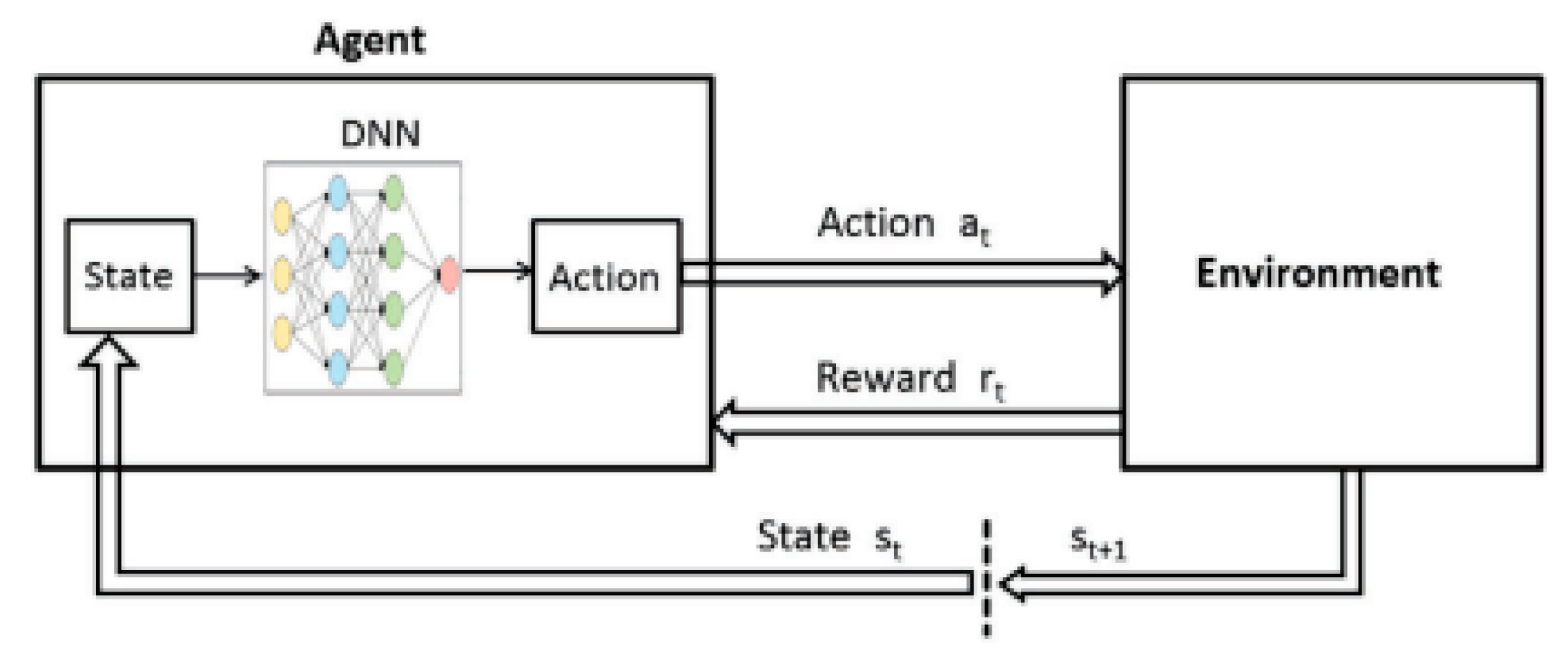

5.3.2. Deep Q-Learning (Q-Learning with Neural Networks)

Deep Q-learning stores past experiences in memory and chooses the next action from the output of the Q-network. The Q-network estimates the Q-value at state $s_t$, and a target network (a second neural network) computes the Q-value for the next state $s_{t+1}$. The target network is a copy of the Q-network that supplies the training targets for each update of the Q-network, which stabilizes training and prevents abrupt swings in the Q-value estimates [4,9,23,32,35,39,41,42,43,48,55,56,58,60,67,70,71].

Table 12 shows the advantages and disadvantages of using reinforcement learning in quantitative finance.

Figure 10 shows the basic structure of deep Q-learning. Deep Q-learning is a technique used to train artificial intelligence agents to operate in settings that have discrete action spaces.
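The two ingredients described above, a memory of past experiences and targets computed by a separate target network, can be sketched without any deep learning library. In the code below, `ReplayBuffer` and `td_targets` are hypothetical names, and `target_q` is a plain function standing in for the target network; a real implementation would use a neural network and a gradient step that moves the Q-network towards these targets.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size memory of (state, action, reward, next_state, done) tuples."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)  # oldest experiences drop out first

    def push(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size, rng):
        """Draw a random mini-batch, which breaks the correlation between steps."""
        return rng.sample(list(self.buffer), batch_size)


def td_targets(batch, target_q, gamma):
    """Compute training targets r + gamma * max_a Q_target(s', a).

    `target_q(state)` returns one Q-value per action and plays the role of the
    target network (assumption: a frozen copy of the Q-network).
    """
    targets = []
    for _, _, reward, next_state, done in batch:
        bootstrap = 0.0 if done else gamma * max(target_q(next_state))
        targets.append(reward + bootstrap)
    return targets


# Stand-in for the target network: two actions, values made up for the example.
def toy_target_q(state):
    return [state, 2.0 * state]


memory = ReplayBuffer(capacity=2)
memory.push(0.0, 1, 1.0, 2.0, False)
memory.push(2.0, 0, -1.0, 3.0, True)
memory.push(3.0, 1, 0.5, 1.0, False)  # capacity reached: the first tuple is dropped

batch = memory.sample(2, random.Random(0))
targets = td_targets(batch, toy_target_q, gamma=0.5)
```

Keeping the target network fixed between occasional synchronizations is what prevents the targets from shifting with every update of the Q-network.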

6. Evaluation Metrics

There are two different types of performance evaluation metrics often used. The first one evaluates the model performance (

Table 13) and the second one is used for portfolio performance (

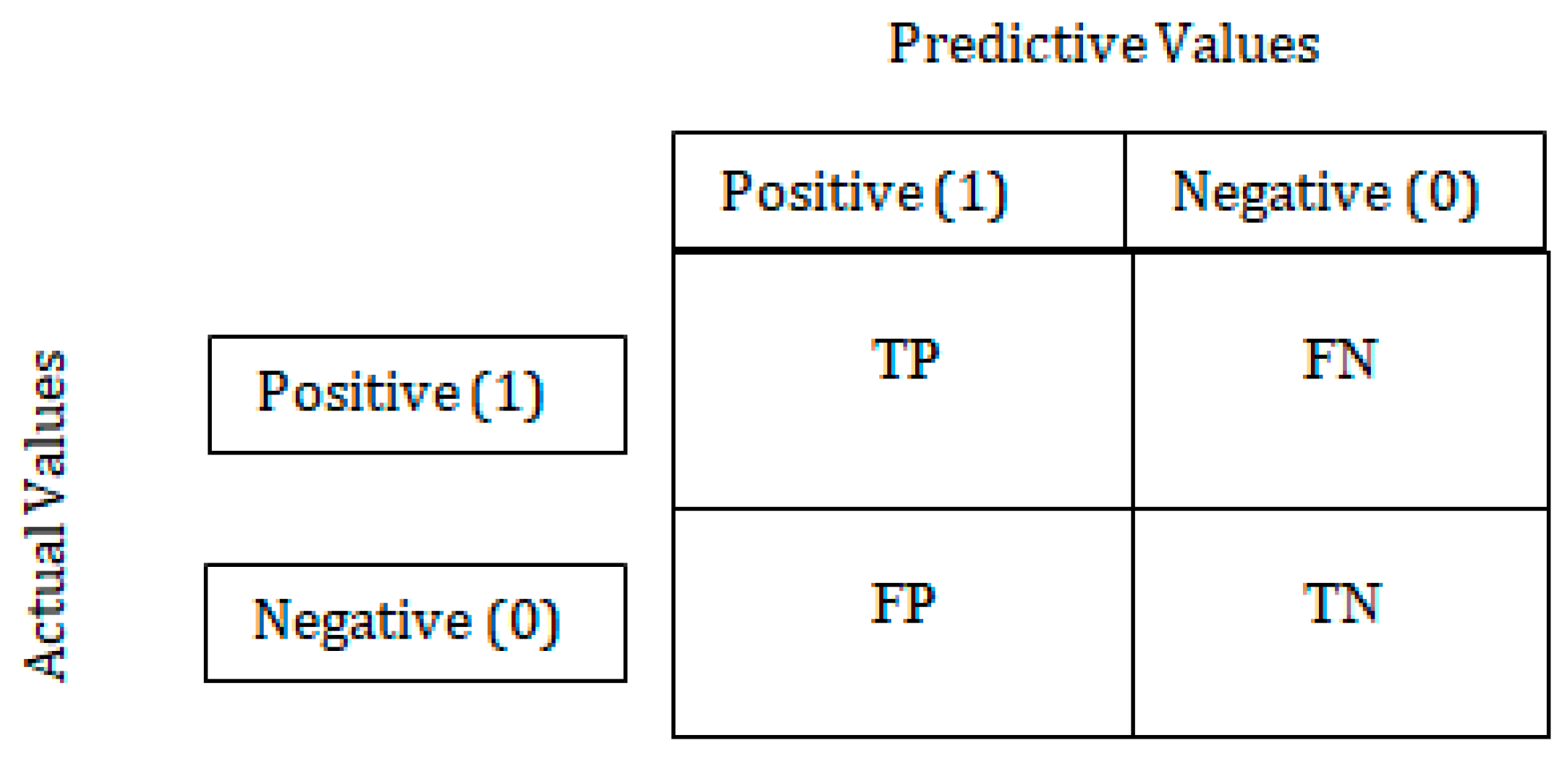

Table 14). The confusion matrix is used to evaluate the classification models’ performance for a given set of test data.

Figure 11 shows the confusion matrix for binary classification, which has only two conditions: positive and negative.

The confusion matrix assesses a classification model on a given set of test data by comparing its predictions with the actual values. It contains the following cases:

True Positive (TP): The model predicted YES, and the actual value was also YES.

True Negative (TN): The model predicted NO, and the actual value was also NO.

False Positive (FP): The model predicted YES, but the actual value was NO.

False Negative (FN): The model predicted NO, but the actual value was YES.
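The four cases can be counted directly from paired labels. The following is a small sketch; the function name `confusion_counts` and the example labels (1 for YES, 0 for NO) are assumptions made for illustration.

```python
def confusion_counts(actual, predicted):
    """Count TP, TN, FP, and FN for binary labels (1 = YES, 0 = NO)."""
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for a, p in zip(actual, predicted):
        if p == 1 and a == 1:
            counts["TP"] += 1  # predicted YES, actually YES
        elif p == 0 and a == 0:
            counts["TN"] += 1  # predicted NO, actually NO
        elif p == 1 and a == 0:
            counts["FP"] += 1  # predicted YES, actually NO
        else:
            counts["FN"] += 1  # predicted NO, actually YES
    return counts


# Made-up example: did the price go up (1) or not (0) on six days?
actual = [1, 0, 1, 1, 0, 0]
predicted = [1, 0, 0, 1, 1, 0]
counts = confusion_counts(actual, predicted)
```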

6.1. Learning Model Evaluation Metrics

We now examine metrics for evaluating the quality of the models produced by machine learning algorithms. Let $Y_i$ be the $i$th actual value, $\hat{Y}_i$ be the $i$th predicted value, and $N$ be the total number of predicted points. The learning model evaluation metrics are as follows:

Table 13. Model evaluation metrics and the articles that use them.

| Learning Model Evaluation Metrics | Description | Formula | Article |
|---|---|---|---|
| Mean Absolute Error (MAE) | The average absolute difference between the values fitted by the model and the observed historical data. | | [10,27,29,57] |
| Mean Squared Error (MSE) | The sum of squared differences between model-fitted values and observed values, divided by the number of historical points minus the number of model parameters. | | [10,27,57,79] |
| Root Mean Squared Error (RMSE) | The square root of the mean squared error. It is on the same scale as the observed data values. | | [27,28,29,80] |
| Mean Absolute Percent Error (MAPE) | The average absolute percentage difference between the values predicted by the model and the observed values. | | [10,27,57] |
| Classification Accuracy | The frequency with which the model correctly predicts the output. | | [30,37,49,57,66,68] |
| Misclassification rate | The frequency with which the model incorrectly predicts the output. | | |
| Precision | Also known as positive predictive value. Precision is the proportion of correct positive predictions among all positive predictions. | | [30,37,49,57,66,68] |
| Recall | The proportion of actual positive cases that the model correctly predicted as positive. | | [30,37,49,57,66,68] |
| F1-Score | It is difficult to compare two models when one has low precision and high recall and the other the reverse. The F-score, which combines precision and recall, can be used for this. For a given sum of precision and recall, the F-score is highest when the two are equal. | | [30,37,49,57,66,68] |
| AUC | The probability that the model ranks a random positive example above a random negative example. AUC ranges from 0 to 1. A model whose predictions are 100% wrong has an AUC of 0.0; one whose predictions are 100% correct has an AUC of 1.0. | | [37] |
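As a sketch of how these metrics are usually computed, the code below uses the standard textbook definitions. These definitions are assumed here and may differ in detail from the formulas used in the cited articles; in particular, MSE below divides by N, whereas the description in Table 13 divides by N minus the number of model parameters. The function names and example numbers are illustrative.

```python
import math


def regression_metrics(actual, predicted):
    """MAE, MSE, RMSE, and MAPE for paired actual/predicted values.

    Assumes equal-length, non-empty inputs and non-zero actual values (for MAPE).
    """
    n = len(actual)
    errors = [y - y_hat for y, y_hat in zip(actual, predicted)]
    mse = sum(e * e for e in errors) / n
    return {
        "MAE": sum(abs(e) for e in errors) / n,
        "MSE": mse,
        "RMSE": math.sqrt(mse),
        "MAPE": 100.0 * sum(abs(e / y) for e, y in zip(errors, actual)) / n,
    }


def classification_metrics(tp, tn, fp, fn):
    """Accuracy, misclassification rate, precision, recall, and F1 from counts.

    Assumes the denominators are non-zero.
    """
    total = tp + tn + fp + fn
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / total,
        "misclassification": (fp + fn) / total,
        "precision": precision,
        "recall": recall,
        "F1": 2 * precision * recall / (precision + recall),
    }


# Made-up prices and forecasts.
reg = regression_metrics([100.0, 200.0, 400.0], [110.0, 190.0, 400.0])
# Counts from a hypothetical confusion matrix.
cls = classification_metrics(tp=2, tn=2, fp=1, fn=1)
```

AUC is omitted because it depends on the ranking of predicted scores rather than on a single set of confusion-matrix counts.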

6.2. Portfolio Evaluation Metrics

Measures of overall portfolio performance are an essential component of the decision to invest. Return and risk are the two basic dimensions for evaluating portfolio performance. Within those broad categories, several other indicators may be used to track the development of an investment portfolio over time. Table 14 shows seven performance and risk criteria used by investors to assess portfolios.

Table 14. Portfolio evaluation metrics, descriptions, and articles.

| Portfolio Evaluation Metrics | Description | Article |
|---|---|---|
| Accumulated Return | The percentage increase or decrease in value over the life of an investment. The accumulated return should be positive and as high as possible. | [9,40,58,86] |
| Average daily return | The arithmetic mean of a series of daily returns over time. | [40,58,86] |
| Maximum Drawdown | A risk indicator for a portfolio managed under a given strategy. It measures the largest decline in the portfolio’s value from a peak to a subsequent trough. | [9,40,58,86] |
| Skewness | A measure of the asymmetry of a distribution. Skewness is 0 for a perfectly symmetric distribution. If skewness is less than −1 or greater than 1, the data are highly skewed. | [40,56,58,87] |
| Kurtosis | A measure of how heavy the tails of a distribution are. A kurtosis greater than 3 implies heavier tails than a normal distribution, that is, more extreme deviations from the mean. | [40,56,58,87] |
| Standard Deviation | Investors use standard deviation to measure the volatility of a stock’s performance. The greater the standard deviation, the more volatile the stock. | [40,58,87] |
| Sharpe ratio | The Sharpe ratio is used in portfolio risk-return analysis. It measures return per unit of risk, so the strategy with the highest Sharpe ratio offers the best risk-adjusted return. | [9,40,58,86] |
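The portfolio metrics in Table 14 can be computed from a series of portfolio values. The sketch below uses common textbook definitions, which are assumptions here: simple (not logarithmic) returns, population moments for skewness and kurtosis, a sample standard deviation, and a Sharpe ratio that is not annualized. The function names and the example values are illustrative.

```python
import math


def daily_returns(values):
    """Simple returns between consecutive portfolio values."""
    return [values[i] / values[i - 1] - 1.0 for i in range(1, len(values))]


def accumulated_return(values):
    """Total percentage change over the whole period, as a fraction."""
    return values[-1] / values[0] - 1.0


def max_drawdown(values):
    """Largest peak-to-trough decline, as a fraction of the peak."""
    peak, worst = values[0], 0.0
    for v in values:
        peak = max(peak, v)
        worst = max(worst, (peak - v) / peak)
    return worst


def mean(xs):
    return sum(xs) / len(xs)


def sample_std(xs):
    """Sample standard deviation (divides by n - 1)."""
    m = mean(xs)
    return math.sqrt(sum((x - m) ** 2 for x in xs) / (len(xs) - 1))


def central_moment(xs, k):
    m = mean(xs)
    return sum((x - m) ** k for x in xs) / len(xs)


def skewness(xs):
    """Third standardized moment; 0 for a symmetric sample."""
    return central_moment(xs, 3) / central_moment(xs, 2) ** 1.5


def kurtosis(xs):
    """Fourth standardized moment; 3 for a normal distribution."""
    return central_moment(xs, 4) / central_moment(xs, 2) ** 2


def sharpe_ratio(returns, risk_free=0.0):
    """Mean excess return per unit of volatility (per period, not annualized)."""
    return (mean(returns) - risk_free) / sample_std(returns)


values = [100.0, 110.0, 99.0, 120.0]  # made-up portfolio values
returns = daily_returns(values)
summary = {
    "accumulated_return": accumulated_return(values),
    "average_daily_return": mean(returns),
    "max_drawdown": max_drawdown(values),
    "std": sample_std(returns),
    "sharpe": sharpe_ratio(returns),
}
```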

7. Data Availability and Implementation

The Internet provides access to a wealth of free data sources for research on stock market prediction. Yahoo! Finance, which is widely used and offers free access to data such as stock quotes, current news, and international market statistics, has been cited in at least 25 of the 72 publications and should be the first choice for historical price and volume data. Websites that host data competitions, such as Kaggle, are increasingly becoming a suitable source of data sets for stock market prediction. In addition, quantitative firms may work with these websites to organize stock market prediction competitions. Some data source links are:

https://finance.yahoo.com,

https://in.investing.com/,

https://github.com/,

https://www.kaggle.com/,

https://www.nseindia.com/,

https://www.wikipedia.org/ (accessed on 15 October 2022).

8. Challenges and Future Research Direction

The current work on DRL-based QT consists of applying several traditional DRL algorithms to various QT scenarios. Exploring the efficacy of more sophisticated DRL approaches on financial data is a research direction that is expected to become more prominent in the near future. First, the lack of available data is a significant obstacle to developing profitable DRL-based QT algorithms. Model-based DRL has the potential to address this problem by learning a model of the financial market to accelerate training. A worst-case scenario, such as a financial catastrophe, can be employed as a regularizer while maximizing the cumulative gain. Second, the primary objective of the various QT tasks is to strike a healthy balance between increasing profits and reducing losses as much as possible [1].

Several directions for future research are likely. One is more comprehensive testing on live-trading platforms, as opposed to backtesting or very limited real-time trading. Another is further investigation of how DRL agents behave in volatile stock market conditions. A third is direct comparison between the trading strategies used by DRL agents and those used by human traders. For example, in a controlled experiment, a professional day trader could be pitted against an algorithmic DRL agent trading in the same market over the same period to see which of the two generates superior results [6].

8.1. Multi-Agent Advanced DRL Techniques on Quantitative Finance

Data scarcity is a key obstacle to creating profitable DRL-based QT methods. To address this issue, model-based DRL can learn a model of the financial market to speed up training. A worst-case scenario (a financial catastrophe, for instance) might serve as a regularizer while maximizing the cumulative gain. Second, achieving a reasonable compromise between profit maximization and risk reduction is a common theme across a wide variety of QT tasks. Multi-objective DRL methods may be used to cultivate diverse trading policies with flexible risk tolerance [53].

8.2. Fresh Configurations for Quantitative Trading

QT settings such as intraday, high-frequency, and pairs trading remain underexplored. Intraday trading captures fleeting trading opportunities within a day; high-frequency trading captures opportunities at very short time scales; pairs trading focuses on two highly correlated assets. Developing DRL-based approaches tailored to the peculiarities of these settings is a promising research direction [53].

8.3. Towards a More Accurate Simulation of the Market

The success of DRL approaches rests on a critical foundation: high-fidelity simulation. Although previous research has taken into account many practical constraints, such as transaction fees, execution costs, and slippage, a significant amount of work remains to provide a realistic simulation of the financial market. This is primarily because the ubiquitous market impact has been largely ignored. Even though some attempts have been made to model market impact, developing high-fidelity market simulators is still a very difficult undertaking [53].
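To illustrate the kind of practical constraints mentioned above, the following sketch applies a proportional transaction fee and a fixed slippage to a simulated order. It is a simplified assumption, not a model from the cited work: the function name `execute_order`, the fee and slippage rates, and the linear cost model are all hypothetical, and market impact (the effect of the order size on the price) is deliberately left out.

```python
def execute_order(cash, shares, price, quantity, fee_rate=0.001, slippage=0.0005):
    """Simulate filling an order and return the new (cash, shares).

    quantity > 0 buys and quantity < 0 sells. Slippage moves the fill price
    against the trader; the fee is a fraction of the traded notional.
    """
    side = 1 if quantity > 0 else -1
    fill_price = price * (1 + side * slippage)  # buyers pay more, sellers receive less
    notional = fill_price * abs(quantity)
    fee = fee_rate * notional
    cash = cash - side * notional - fee
    return cash, shares + quantity


# Buy two shares at a quoted price of 100, then sell them at the same quote.
cash, shares = execute_order(1000.0, 0, 100.0, 2, fee_rate=0.01, slippage=0.01)
cash_after, shares_after = execute_order(cash, shares, 100.0, -2,
                                         fee_rate=0.01, slippage=0.01)
```

Even with an unchanged quoted price, the round trip loses money to fees and slippage, which is why backtests that ignore these costs tend to overstate profitability.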

8.4. Improvements with Auto-ML Approaches

Auto-ML approaches, which include automated hyperparameter tuning, feature selection, and neural architecture search, can considerably increase the efficiency of constructing DRL-based QT methods and make them simple to use for those who do not have in-depth knowledge of DRL [53].

9. Discussion

In this study, we outlined some of the most promising approaches that have been taken to handle various financial problems. We began our analysis with the primary methodologies based on conventional methods, which are often used for time-series forecasting. In recent years, numerous machine learning approaches have been developed for modeling financial time series. This class of algorithms includes the random forest classifier and the support vector machine. The fundamental concept of support vector machines is to find an optimal hyperplane by mapping the data into a higher-dimensional space in which the classes can be linearly separated. Random forest algorithms have three primary hyperparameters that must be set before training: the node size, the number of trees, and the number of features sampled. Neural network models such as ANN, RNN, and LSTM have also seen a significant increase in use for time-series forecasting in recent years. The artificial neural network (ANN) model was considered one of the key techniques. ANNs identify patterns in the data they are given in order to provide solutions that generalize as well as possible. According to the findings presented, ANNs have found the most use in the financial sector. A substantial amount of recent research on time-series forecasting has used RNNs and their derivatives, such as LSTM. These models obtained better results on prediction problems than the traditional ANN model owing to their capacity to capture hidden correlations within data. Finally, we explored the topic of defining a profitable trading strategy by applying RL techniques.

Recent developments in this area have combined DL and RL techniques, making use of their ability to handle complex data. The purpose of this study was to explore how techniques based on machine learning, deep learning, and reinforcement learning perform better than methods based on non-linear algorithms, and to outline their advantages and disadvantages. The primary advantage of these techniques is that they reduce computation time while maintaining good-quality results. At the moment, there is a growing trend toward dynamic trading techniques based on deep reinforcement learning. Reinforcement learning makes it possible to develop dynamic trading strategies because it addresses the problem of sequential decision making. However, reinforcement learning on its own lacks the capacity to perceive the environment [88].

10. Conclusions

This conclusion outlines both the practical and theoretical contributions of our survey. On the practical side, a straightforward and generic process flow has been laid out that beginners in this field can easily follow. For readers who use the surveyed articles as baselines, the discussion of how they are implemented and whether they can be reproduced should be particularly helpful. From a theoretical point of view, deep learning is the primary focus of our research. Compared with other studies in the field of expert and intelligent systems, our research focuses on deep learning because it has been demonstrated to be effective for a wide variety of applications. This study explores and summarizes the most recent advances in applying deep learning to a particular problem, stock market prediction. It also provides a basic theoretical introduction to these deep learning techniques. In addition, potential future research directions are outlined for scholars who are interested in the topic. The results of applying machine learning, deep learning, and reinforcement learning to stock market prediction depend on the input data, which reflect the current state of the market. A model is therefore not guaranteed to give the same results with the same efficiency on future data, because stock prices reflect all currently available information, and any price changes that are not based on newly revealed information are inherently unpredictable. The stock market is highly unpredictable and subject to influence from a variety of sources (social, political, economic, demographic, etc.).

Author Contributions

Conceptualization, S.K.S., A.M. and N.D.B.; methodology, S.K.S.; software, S.K.S., A.M. and N.D.B.; validation, A.M. and N.D.B.; formal analysis, S.K.S. and N.D.B.; investigation, S.K.S., A.M. and N.D.B.; resources, A.M. and N.D.B.; data curation, S.K.S. and A.M.; writing—original draft preparation, S.K.S., A.M. and N.D.B.; writing—review and editing, S.K.S., A.M. and N.D.B.; visualization, S.K.S. and N.D.B.; supervision, A.M. and N.D.B.; project administration, S.K.S., A.M. and N.D.B.; funding acquisition, A.M. and N.D.B. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The raw data required to reproduce the above findings are available from different sources. The corresponding links and sources are provided in the references.

Conflicts of Interest

The authors declare no conflict of interest to any party.

Abbreviations

| A/D | The Accumulation/Distribution Indicator |

| ACC | Accuracy |

| ACF | Auto Correlation Function |

| ADA | Ada Boosted Decision Trees |

| AdaBoost | Adaptive Boosting |

| ADR | Average Daily Return |

| ADX | Average Directional Movement Index |

| AE | Auto Encoder |

| AI | Artificial Intelligence |

| ANN | Artificial Neural Network |

| ARIMA | Auto-Regressive Integrated Moving Average |

| ARMA | Auto Regressive Moving Average |

| ATR | Average True Range |

| AUC | Area Under the (ROC) Curve |

| BBANDS | Bollinger Bands |

| BDT | Boosted Decision Tree |

| BERT | Bidirectional Encoder Representation from Transformers |

| BILSTM | Bidirectional Long Short-Term Memory |

| BN | Bayesian Network |

| BNN | Bayesian Neural Network |

| BP | Back Propagation |

| BRNN | Bidirectional Recurrent Neural Network |

| CART | Classification And Regression Tree |

| CCI | Commodity Channel Index |

| CMMs | Conditional Markov Model |

| CNN | Convolutional Neural Network |

| ConvNet | Convolutional Neural Network |

| CRNN | Convolutional Recurrent Neural Network |

| DANN | Dynamic Artificial Neural Network |

| DBN | Deep Belief Network |

| DEMA | Double Exponential Moving Average |

| DL | Deep Learning |

| DNN | Deep Neural Network |

| DQN | Deep Q-Network |

| DT | Decision Tree |

| ELM | Extreme Learning Machine |

| EMA | Exponential Moving Average |

| FA | Fundamental Analysis |

| FC-CNN | Fully Convolutional Neural Network |

| FC-LSTM | Fully Connected Long Short-Term Memory |

| FCM | Fuzzy C-Means |

| FCN | Fully Convolutional Network |

| FN | False Negative |

| FNN | Feedforward Neural Network |

| FNR | False Negative Rate |

| FPR | False Positive Rate |

| FP | False Positive |

| GA | Genetic Algorithm |

| GAN | Generative Adversarial Network |

| GD | Gradient Descent |

| GRU | Gated Recurrent Unit |

| HMM | Hidden Markov Model |

| HNN | Hybrid Neural Network |

| k-NN | k-Nearest Neighbor |

| LDA | Linear Discriminant Analysis |

| LR | Logistic Regression |

| LSTM | Long Short-Term Memory |

| MACD | Moving Average Convergence/Divergence |

| MAE | Mean Absolute Error |

| MAPE | Mean Absolute Percent Error |

| MCMC | Markov Chain Monte Carlo |

| MD | Maximum Drawdown |

| MDP | Markov Decision Process |

| MDRNN | Multidimensional recurrent neural network |

| MIS | Management Information System |

| ML | Machine Learning |

| MLP | Multi-Layer Perceptron |

| MOM | Momentum |

| MSE | Mean Squared Error |

| NB | Naïve Bayes |

| NLP | Natural Language Processing |

| NLT | Neural Machine Translation |

| NN | Neural Network |

| NSE | National Stock Exchange |

| OBV | On Balance Volume |

| PCA | Principal Component Analysis |

| QA | Quantitative Analysis |

| QF | Quantitative Finance |

| QT | Quantitative Trading |

| RBF | Radial Basis Function |

| ReLU | Rectified Linear Unit |

| RF | Random Forest |

| RL | Reinforcement Learning |

| RMSE | Root Mean Squared Error |

| RNN | Recurrent Neural Network |

| ROC | Receiver Operating Characteristic |

| RSI | Relative Strength Index |

| RTRL | Real-Time Recurrent Learning |

| SGBoost | Stochastic Gradient Boosting |

| SGD | Stochastic Gradient Descent |

| SLP | Single-Layer Perceptron |

| SMA | Simple Moving Average |

| STDDEV | Standard Deviation |

| STOCH | Stochastic |

| SVM | Support Vector Machine |

| SVR | Support Vector Regression |

| TA | Technical Analysis |

| TEMA | Triple Exponential Moving Average |

| TI | Technical Indicators |

| TNR | True Negative Rate |

| TN | True Negative |

| TP | True Positive |

| TPR | True Positive Rate |

| TSF | Time Series Forecast |

| VAR | Variance |

| WILLR | Williams %R |

| WMA | Weighted Moving Average |

| XGBoost | eXtreme Gradient Boosting |

| $Y_i$ | ith actual value |

| $\hat{Y}_i$ | ith predicted value |

References

- Jiang, W. Applications of deep learning in stock market prediction: Recent progress. Expert Syst. Appl. 2021, 184, 115537.

- Alameer, A.; Saleh, H.; Alshehri, K. Reinforcement Learning in Quantitative Trading: A Survey. TechRxiv 2022.

- Wang, Y.; Yan, G. Survey on the application of deep learning in algorithmic trading. Data Sci. Financ. Econ. 2021, 1, 345–361.

- Millea, A. Deep reinforcement learning for trading—A critical survey. Data 2021, 6, 119.

- Kumar, G.; Jain, S.; Singh, U.P. Stock Market Forecasting Using Computational Intelligence: A Survey; Springer: Dordrecht, The Netherlands, 2021; Volume 28, pp. 1069–1101.

- Pricope, T.-V. Deep Reinforcement Learning in Quantitative Algorithmic Trading: A Review. arXiv 2021, arXiv:2106.00123.

- Rouf, N.; Malik, M.B.; Arif, T.; Sharma, S.; Singh, S.; Aich, S.; Kim, H.-C. Stock market prediction using machine learning techniques: A decade survey on methodologies, recent developments, and future directions. Electronics 2021, 10, 2717.

- Deng, Y.; Bao, F.; Kong, Y.; Ren, Z.; Dai, Q. Deep Direct Reinforcement Learning for Financial Signal Representation and Trading. IEEE Trans. Neural Networks Learn. Syst. 2017, 28, 653–664.

- Yu, X.; Wu, W.; Liao, X.; Han, Y. Dynamic stock-decision ensemble strategy based on deep reinforcement learning. Appl. Intell. 2023, 53, 2452–2470.

- Lv, P.; Wu, Q.; Xu, J.; Shu, Y. Stock Index Prediction Based on Time Series Decomposition and Hybrid Model. Entropy 2022, 24, 146.

- García-Medina, A.; Huynh, T.L.D. What drives bitcoin? An approach from continuous local transfer entropy and deep learning classification models. Entropy 2021, 23, 1582.

- Zhao, Y.; Chen, Z. Forecasting stock price movement: New evidence from a novel hybrid deep learning model. J. Asian Bus. Econ. Stud. 2022, 29, 91–104.

- Abdullah, M. The implication of machine learning for financial solvency prediction: An empirical analysis on public listed companies of Bangladesh. J. Asian Bus. Econ. Stud. 2021, 28, 303–320.

- Bilgili, F.; Koçak, E.; Kuşkaya, S. Dynamics and Co-movements between the COVID-19 Outbreak and the Stock Market in Latin American Countries: An Evaluation Based on the Wavelet-Partial Wavelet Coherence Model. Eval. Rev. 2022. Online ahead of print.

- Spooner, T.; Savani, R. Robust market making via adversarial reinforcement learning. In Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence, Yokohama, Japan, 7–15 January 2021; pp. 4590–4596.

- Karpe, M.; Fang, J.; Ma, Z.; Wang, C. Multi-agent reinforcement learning in a realistic limit order book market simulation. In Proceedings of the ICAIF 2020-1st ACM International Conference on AI in Finance, New York, NY, USA, 15–16 October 2020.

- Vyetrenko, S.; Xu, S. Risk-Sensitive Compact Decision Trees for Autonomous Execution in Presence of Simulated Market Response. arXiv 2019, arXiv:1906.02312.

- Yildiz, Z.C.; Yildiz, S.B. A portfolio construction framework using LSTM-based stock markets forecasting. Int. J. Financ. Econ. 2022, 27, 2356–2366.

- Khan, W.; Ghazanfar, M.A.; Azam, M.A.; Karami, A.; Alyoubi, K.H.; Alfakeeh, A.S. Stock market prediction using machine learning classifiers and social media, news. J. Ambient Intell. Humaniz. Comput. 2022, 13, 3433–3456.

- Wang, J.; Zhuang, Z.; Feng, L. Intelligent Optimization Based Multi-Factor Deep Learning Stock Selection Model and Quantitative Trading Strategy. Mathematics 2022, 10, 566.

- Xu, H.; Chai, L.; Luo, Z.; Li, S. Stock movement prediction via gated recurrent unit network based on reinforcement learning with incorporated attention mechanisms. Neurocomputing 2022, 467, 214–228.

- Brim, A.; Flann, N.S. Deep reinforcement learning stock market trading, utilizing a CNN with candlestick images. PLoS ONE 2022, 17, e0263181.

- Li, Y.; Liu, P.; Wang, Z. Stock Trading Strategies Based on Deep Reinforcement Learning. Sci. Program. 2022, 2022, 4698656.

- Yao, J.; Li, Z.; Cui, T.; Xi, H. Quantitative Investment Trading Model Based on Model Recognition Strategy with Deep Learning Method. Wirel. Commun. Mob. Comput. 2022, 2022, 8856215.

- Saini, A.; Sharma, A. Predicting the Unpredictable: An Application of Machine Learning Algorithms in Indian Stock Market. Ann. Data Sci. 2022, 9, 791–799.

- Zou, Z.; Qu, Z. Using LSTM in Stock prediction and Quantitative Trading. CS230 Deep. Learn. Winter 2022, 1–6. Available online: https://cs230.stanford.edu/projects_winter_2020/reports/32066186.pdf (accessed on 15 November 2022).

- Wysocki, M.; Ślepaczuk, R. Artificial neural networks performance in WIG20 index options pricing. Entropy 2021, 24, 35.

- Raubitzek, S.; Neubauer, T. An Exploratory Study on the Complexity and Machine Learning Predictability of Stock Market Data. Entropy 2022, 24, 332.

- Sako, K.; Mpinda, B.N.; Rodrigues, P.C. Neural Networks for Financial Time Series Forecasting. Entropy 2022, 24, 657.

- Ghosh, P.; Neufeld, A.; Sahoo, J.K. Forecasting directional movements of stock prices for intraday trading using LSTM and random forests. Financ. Res. Lett. 2022, 46, 102280.

- Zhang, W.; Yin, T.; Zhao, Y.; Han, B.; Liu, H. Reinforcement Learning for Stock Prediction and High-Frequency Trading with T+1 Rules. IEEE Access 2022, 1.

- Park, D.-Y.; Lee, K.-H. Practical Algorithmic Trading Using State Representation Learning and Imitative Reinforcement Learning. IEEE Access 2021, 9, 152310–152321.

- Ayala, J.; García-Torres, M.; Noguera, J.L.V.; Gómez-Vela, F.; Divina, F. Technical analysis strategy optimization using a machine learning approach in stock market indices. Knowledge-Based Syst. 2021, 225, 107119.

- Ma, C.; Zhang, J.; Liu, J.; Ji, L.; Gao, F. A parallel multi-module deep reinforcement learning algorithm for stock trading. Neurocomputing 2021, 449, 290–302.

- AbdelKawy, R.; Abdelmoez, W.M.; Shoukry, A. A synchronous deep reinforcement learning model for automated multi-stock trading. Prog. Artif. Intell. 2021, 10, 83–97.

- Carta, S.; Corriga, A.; Ferreira, A.; Podda, A.S.; Recupero, D.R. A multi-layer and multi-ensemble stock trader using deep learning and deep reinforcement learning. Appl. Intell. 2021, 51, 889–905.

- Wu, D.; Wang, X.; Wu, S. A hybrid method based on extreme learning machine and wavelet transform denoising for stock prediction. Entropy 2021, 23, 440.

- Chang, V.; Man, X.; Xu, Q.; Hsu, C. Pairs trading on different portfolios based on machine learning. Expert Syst. 2021, 38, e12649.

- Théate, T.; Ernst, D. An application of deep reinforcement learning to algorithmic trading. Expert Syst. Appl. 2021, 173, 114632.

- Chakole, J.B.; Kolhe, M.S.; Mahapurush, G.D.; Yadav, A.; Kurhekar, M.P. A Q-learning agent for automated trading in equity stock markets. Expert Syst. Appl. 2021, 163, 113761.

- Li, Z.; Liu, X.-Y.; Zheng, J.; Wang, Z.; Walid, A.; Guo, J. FinRL-Podracer: High Performance and Scalable Deep Reinforcement Learning for Quantitative Finance. In Proceedings of the ICAIF 2021-2nd ACM International Conference on AI in Finance, Virtual, 3–5 November 2021.

- Liu, X.Y.; Yang, H.; Gao, J.; Wang, C.D. FinRL: Deep Reinforcement Learning Framework to Automate Trading in Quantitative Finance; Association for Computing Machinery: New York, NY, USA, 2021.

- Liu, Y.; Liu, Q.; Zhao, H.; Pan, Z.; Liu, C. Adaptive quantitative trading: An imitative deep reinforcement learning approach. In Proceedings of the AAAI 2020-34th AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020; pp. 2128–2135.

- Dang, Q.V. Reinforcement Learning in Stock Trading; 1121 AISC; Springer International Publishing: Berlin/Heidelberg, Germany, 2020.

- Nabipour, M.; Nayyeri, P.; Jabani, H.; Shahab, S.; Mosavi, A. Predicting Stock Market Trends Using Machine Learning and Deep Learning Algorithms Via Continuous and Binary Data; A Comparative Analysis. IEEE Access 2020, 8, 150199–150212.

- Wu, X.; Chen, H.; Wang, J.; Troiano, L.; Loia, V.; Fujita, H. Adaptive stock trading strategies with deep reinforcement learning methods. Inf. Sci. 2020, 538, 142–158.

- Naik, N.; Mohan, B.R. Intraday Stock Prediction Based on Deep Neural Network. Natl. Acad. Sci. Lett. 2020, 43, 241–246.

- Conegundes, L.; Pereira, A.C.M. Beating the Stock Market with a Deep Reinforcement Learning Day Trading System. In Proceedings of the 2020 International Joint Conference on Neural Networks (IJCNN), Glasgow, UK, 19–24 July 2020.

- Khan, W.; Malik, U.; Ghazanfar, M.A.; Azam, M.A.; Alyoubi, K.H.; Alfakeeh, A.S. Predicting stock market trends using machine learning algorithms via public sentiment and political situation analysis. Soft Comput. 2020, 24, 11019–11043.

- Chen, Y.; Hao, Y. A novel framework for stock trading signals forecasting. Soft Comput. 2020, 24, 12111–12130.

- Parray, I.R.; Khurana, S.S.; Kumar, M.; Altalbe, A.A. Time series data analysis of stock price movement using machine learning techniques. Soft Comput. 2020, 24, 16509–16517.

- Ananthi, M.; Vijayakumar, K. Stock market analysis using candlestick regression and market trend prediction (CKRM). J. Ambient. Intell. Humaniz. Comput. 2020, 12, 4819–4826.

- Zhang, Z.; Zohren, S.; Roberts, S.J. Deep Reinforcement Learning for Trading. J. Financ. Data Sci. 2020, 2, 25–40.

- Ta, V.-D.; Liu, C.-M.; Tadesse, D.A. Portfolio optimization-based stock prediction using long-short term memory network in quantitative trading. Appl. Sci. 2020, 10, 437.

- Li, Y.; Ni, P.; Chang, V. Application of deep reinforcement learning in stock trading strategies and stock forecasting. Computing 2020, 102, 1305–1322.

- Yuan, Y.; Wen, W.; Yang, J. Using data augmentation based reinforcement learning for daily stock trading. Electronics 2020, 9, 1384.

- Nabipour, M.; Nayyeri, P.; Jabani, H.; Mosavi, A.; Salwana, E. Deep learning for stock market prediction. Entropy 2020, 22, 840.

- Chakole, J.; Kurhekar, M. Trend following deep Q-Learning strategy for stock trading. Expert Syst. 2020, 37, e12514.

- Park, H.; Sim, M.K.; Choi, D.G. An intelligent financial portfolio trading strategy using deep Q-learning. Expert Syst. Appl. 2020, 158, 113573.

- Yang, H.; Liu, X.-Y.; Zhong, S.; Walid, A. Deep reinforcement learning for automated stock trading: An ensemble strategy. In Proceedings of the ICAIF 2020-1st ACM International Conference on AI in Finance, New York, NY, USA, 2020.

- Yuan, X.; Yuan, J.; Jiang, T.; Ain, Q.U. Integrated Long-Term Stock Selection Models Based on Feature Selection and Machine Learning Algorithms for China Stock Market. IEEE Access 2020, 8, 22672–22685.

- Li, Y.; Ni, P.; Chang, V. An Empirical Research on the Investment Strategy of Stock Market based on Deep Reinforcement Learning model. In Proceedings of the COMPLEXIS 2019-4th International Conference on Complexity, Future Information Systems and Risk, Crete, Greece, 2–4 May 2019; pp. 52–58.

- Meng, T.L.; Khushi, M. Reinforcement learning in financial markets. Data 2019, 4, 110. [Google Scholar] [CrossRef]

- Hoseinzade, E.; Haratizadeh, S. CNNpred: CNN-based stock market prediction using a diverse set of variables. Expert Syst. Appl. 2019, 129, 273–285. [Google Scholar] [CrossRef]

- Selvamuthu, D.; Kumar, V.; Mishra, A. Indian stock market prediction using artificial neural networks on tick data. Financ. Innov. 2019, 5, 16. [Google Scholar] [CrossRef]

- Tan, Z.; Yan, Z.; Zhu, G. Stock selection with random forest: An exploitation of excess return in the Chinese stock market. Heliyon 2019, 5, e02310. [Google Scholar] [CrossRef]

- Li, Y.; Zheng, W.; Zheng, Z. Deep Robust Reinforcement Learning for Practical Algorithmic Trading. IEEE Access 2019, 7, 108014–108021. [Google Scholar] [CrossRef]

- Lv, D.; Yuan, S.; Li, M.; Xiang, Y. An Empirical Study of Machine Learning Algorithms for Stock Daily Trading Strategy. Math. Probl. Eng. 2019, 2019, 7816154. [Google Scholar] [CrossRef]

- Wang, Q.; Xu, W.; Huang, X.; Yang, K. Enhancing intraday stock price manipulation detection by leveraging recurrent neural networks with ensemble learning. Neurocomputing 2019, 347, 46–58. [Google Scholar] [CrossRef]

- Hu, Y.-J.; Lin, S.-J. Deep Reinforcement Learning for Optimizing Finance Portfolio Management. In Proceedings of the 2019 Amity International Conference on Artificial Intelligence (AICAI), Dubai, United Arab Emirates, 4–6 February 2019; pp. 14–20. [Google Scholar] [CrossRef]

- Wu, J.; Wang, C.; Xiong, L.; Sun, H. Quantitative Trading on Stock Market Based on Deep Reinforcement Learning. In Proceedings of the 2019 International Joint Conference on Neural Networks (IJCNN), Budapest, Hungary, 14–19 July 2019; pp. 1–8. [Google Scholar] [CrossRef]

- Pendharkar, P.C.; Cusatis, P. Trading financial indices with reinforcement learning agents. Expert Syst. Appl. 2018, 103, 1–13. [Google Scholar] [CrossRef]

- Chong, E.; Han, C.; Park, F.C. Deep learning networks for stock market analysis and prediction: Methodology, data representations, and case studies. Expert Syst. Appl. 2017, 83, 187–205. [Google Scholar] [CrossRef]

- Patel, J.; Shah, S.; Thakkar, P.; Kotecha, K. Predicting stock and stock price index movement using Trend Deterministic Data Preparation and machine learning techniques. Expert Syst. Appl. 2015, 42, 259–268. [Google Scholar] [CrossRef]

- Guresen, E.; Kayakutlu, G.; Daim, T.U. Using artificial neural network models in stock market index prediction. Expert Syst. Appl. 2011, 38, 10389–10397. [Google Scholar] [CrossRef]

- Kara, Y.; Boyacioglu, M.A.; Baykan, K. Predicting direction of stock price index movement using artificial neural networks and support vector machines: The sample of the Istanbul Stock Exchange. Expert Syst. Appl. 2011, 38, 5311–5319. [Google Scholar] [CrossRef]

- Zhong, X.; Enke, D. Predicting the daily return direction of the stock market using hybrid machine learning algorithms. Financ. Innov. 2019, 5, 4. [Google Scholar] [CrossRef]

- Shah, D.; Isah, H.; Zulkernine, F. Stock market analysis: A review and taxonomy of prediction techniques. Int. J. Financ. Stud. 2019, 7, 26. [Google Scholar] [CrossRef]

- Bajpai, S. Application of deep reinforcement learning for Indian stock trading automation. arXiv 2021, arXiv:2106.16088. [Google Scholar] [CrossRef]

- Xu, C.; Ke, J.; Peng, Z.; Fang, W.; Duan, Y. Asymmetric Fractal Characteristics and Market Efficiency Analysis of Style Stock Indices. Entropy 2022, 24, 969. [Google Scholar] [CrossRef]

- Mendoza-Urdiales, R.A.; Núñez-Mora, J.A.; Santillán-Salgado, R.J.; Valencia-Herrera, H. Twitter Sentiment Analysis and Influence on Stock Performance Using Transfer Entropy and EGARCH Methods. Entropy 2022, 24, 874. [Google Scholar] [CrossRef]

- Li, Q.; Chen, Y.; Wang, J.; Chen, Y.; Chen, H. Web Media and Stock Markets: A Survey and Future Directions from a Big Data Perspective. IEEE Trans. Knowl. Data Eng. 2017, 30, 381–399. [Google Scholar] [CrossRef]

- Vanstone, B.; Finnie, G. An empirical methodology for developing stockmarket trading systems using artificial neural networks. Expert Syst. Appl. 2009, 36, 6668–6680. [Google Scholar] [CrossRef]

- Soni, P.; Tewari, Y.; Krishnan, D. Machine Learning Approaches in Stock Price Prediction: A Systematic Review. J. Phys. Conf. Ser. 2022, 2161, 012065. [Google Scholar] [CrossRef]

- Obthong, M.; Tantisantiwong, N.; Jeamwatthanachai, W.; Wills, G. A survey on machine learning for stock price prediction: Algorithms and techniques. In Proceedings of the 2nd International Conference on Finance, Economics, Management and IT Business, Vienna House Diplomat Prague, Prague, Czech Republic, 5–6 May 2020; pp. 63–71. [Google Scholar] [CrossRef]

- Liu, X.-Y.; Rui, J.; Gao, J.; Yang, L.; Yang, H.; Wang, Z.; Wang, C.; Guo, J. FinRL-Meta: A Universe of Near-Real Market Environments for Data-Driven Deep Reinforcement Learning in Quantitative Finance. arXiv 2021, arXiv:2112.06753. [Google Scholar] [CrossRef]

- Nie, C.-X.; Xiao, J. Dynamics of Information Flow between the Chinese A-Share Market and the U.S. Stock Market: From the 2008 Crisis to the COVID-19 Pandemic Period. Entropy 2022, 24, 1102. [Google Scholar] [CrossRef]

- Rundo, F.; Trenta, F.; Di Stallo, A.L.; Battiato, S. Machine learning for quantitative finance applications: A survey. Appl. Sci. 2019, 9, 5574. [Google Scholar] [CrossRef]

Figure 1.

Application of Artificial Intelligence in quantitative finance and Stock trading.

Figure 2.

Different stock trading strategies using AI in algorithmic trading.

Figure 3.

Different types of Stock Market Data, Machine Learning Tasks, Prediction Models, Model Performance Evaluation Metrics, and portfolio performance evaluation metrics.

Figure 4.

A candlestick pattern is a price chart pattern used in technical analysis of the financial markets.

Figure 5.

Relationship of AI, ML, DL, RL, and DRL. Machine learning, deep learning, and reinforcement learning are subsets of artificial intelligence.

Figure 6.

Classification, regression, and clustering tasks performed by machine learning algorithms.

Figure 7.

The basic structure of an artificial neural network consists of an input layer, a hidden layer, and an output layer.

Figure 8.

Basic structure of reinforcement learning, showing the agent-environment interaction.

Figure 9.

Q-learning: a simple reinforcement learning method that uses Q-values (action values) to improve the learning agent’s behavior.

Figure 10.

Deep Q-learning to design AI agents for discrete action spaces.

Figure 11.

A confusion matrix measures the performance of a classification model on test data.
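
As a minimal sketch of what such a confusion matrix summarizes, the Python snippet below counts the four cells for a binary up/down classifier and derives accuracy, precision, and recall from them. The label encoding (1 = price up, 0 = price down), the function names, and the sample predictions are assumptions made for illustration and are not taken from any of the reviewed studies.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary classification task."""
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive and actual == positive:
            tp += 1  # predicted up, actually up
        elif predicted == positive and actual != positive:
            fp += 1  # predicted up, actually down
        elif predicted != positive and actual == positive:
            fn += 1  # predicted down, actually up
        else:
            tn += 1  # predicted down, actually down
    return tp, fp, fn, tn


def summarize(tp, fp, fn, tn):
    """Derive common metrics from the four confusion-matrix cells."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical test-set labels: 1 = price went up, 0 = price went down.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

cells = confusion_counts(y_true, y_pred)
metrics = summarize(*cells)
print(cells)
print(metrics)
```

With these made-up labels, six of the eight predictions are correct, so accuracy is 0.75; precision and recall are reported separately because a trading signal can be accurate overall and still miss most upward moves.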

Table 1.

Number of research papers included in this analysis, by year of publication.

| Year | Count | Article |
|---|---|---|
| 2022 | 17 | [2,9,10,18,19,20,21,22,23,24,25,26,27,28,29,30,31] |
| 2021 | 14 | [1,4,7,32,33,34,35,36,37,38,39,40,41,42] |
| 2020 | 16 | [43,44,45,46,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61] |
| 2019 | 9 | [62,63,64,65,66,67,68,69,70,71] |
| 2018 | 1 | [72] |
| 2017 | 2 | [8,73] |
| Others | 5+ | [74,75,76] |

Table 2.

A listing of the most important stock indices and markets that were assessed.

| Index | Country |
|---|---|
| S&P 500 | US |
| Dow Jones Industrial Average | US |
| CSI Composite | China |
| HSI | Hong Kong |
| NIFTY50 | India |
| BSE30 | India |
| FTSE 100 | UK |
| DAX | Germany |
| NASDAQ Composite | US |
| NYSE Composite | US |
| Euronext 100 (N100) | Europe |
| Deutsche Börse DAX index (GDAXI) | Europe |
| SSE Composite index (000001.SS) | Asia |
| Nikkei 225 (N225) | Asia, Japan |
| Global X MSCI Nigeria ETF (NGE) | Nigeria |
| FTSE/JSE Africa index (J580.JO) | Africa |

Table 3.

Journals and publishers of the articles included in this review, with the number of articles from each.

| Journal/Publisher | Count | Article |
|---|---|---|
| MDPI | 12 | [4,7,10,20,27,28,29,37,54,56,57,63] |
| Expert Systems with Applications | 10 | [1,39,40,59,64,72,73,74,75,76] |
| Expert Systems | 2 | [38,58] |
| IEEE Access | 5 | [31,32,45,61,67] |
| Neurocomputing | 3 | [21,34,69] |
| Applied Intelligence | 2 | [9,36] |
| IEEE Transactions | 1 | [8] |
| Information Sciences | 1 | [46] |
| Soft Computing | 3 | [49,50,51] |
| PLoS ONE | 1 | [22] |
| Conference Papers | 3 | [48,70,71] |
| Others | 19+ | [3,23,24,25,26,30,33,35,44,47,53,65,66,68,77,78] |

Table 4.

A sample of the TATAMOTORS company’s day-wise historical data.

| Date | Prev. Close | Open | High | Low | Close | Volume |
|---|---|---|---|---|---|---|
| 1 December 2014 | 533.5 | 539.85 | 539.85 | 530.1 | 536 | 5093458 |
| 2 December 2014 | 536 | 535.7 | 535.8 | 528.1 | 528.95 | 3555543 |
| 3 December 2014 | 528.95 | 535.2 | 538.8 | 526.3 | 529 | 3997174 |
| 4 December 2014 | 529 | 534 | 537.25 | 522 | 527.75 | 3600835 |
| … | … | … | … | … | … | … |
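
To illustrate how day-wise records like those in Table 4 are typically turned into model inputs, the sketch below computes the close-to-close daily return and the intraday high-low range for the four sample rows. The dictionary keys and the helper names `daily_return` and `intraday_range` are hypothetical choices for this example; the reviewed papers use a variety of feature definitions.

```python
# The four sample rows from Table 4 (TATAMOTORS, day-wise data).
rows = [
    {"date": "2014-12-01", "prev_close": 533.5, "open": 539.85,
     "high": 539.85, "low": 530.1, "close": 536.0, "volume": 5093458},
    {"date": "2014-12-02", "prev_close": 536.0, "open": 535.7,
     "high": 535.8, "low": 528.1, "close": 528.95, "volume": 3555543},
    {"date": "2014-12-03", "prev_close": 528.95, "open": 535.2,
     "high": 538.8, "low": 526.3, "close": 529.0, "volume": 3997174},
    {"date": "2014-12-04", "prev_close": 529.0, "open": 534.0,
     "high": 537.25, "low": 522.0, "close": 527.75, "volume": 3600835},
]


def daily_return(row):
    """Simple return from the previous close to today's close."""
    return (row["close"] - row["prev_close"]) / row["prev_close"]


def intraday_range(row):
    """High-low spread relative to the day's low, a rough volatility proxy."""
    return (row["high"] - row["low"]) / row["low"]


for row in rows:
    print(
        f'{row["date"]}  return={daily_return(row):+.4%}  '
        f'range={intraday_range(row):.4%}'
    )
```

Simple returns are used here only for readability; log returns are a common alternative when returns need to be summed across days.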

Table 5.

An example of minute-by-minute historical data for State Bank of India (SBIN) stock.

| Stock Symbol | Time | Open | High | Low | Close | Volume |
|---|---|---|---|---|---|---|
| SBIN | 09:08 | 492.65 | 492.65 | 492.65 | 492.65 | 53244 |
| SBIN | 09:16 | 492.8 | 494.6 | 491.85 | 494 | 159589 |