Abstract

Pre-operative imaging has long been used to guide traditional surgical navigation systems. Over the last decade, considerable effort has gone into integrating augmented reality into the operating room to assist surgeons intra-operatively. An augmented reality (AR) based navigation system overlays a clear three-dimensional picture of the regions of interest on the patient to aid surgical navigation and operations, which makes it a promising approach. The goal of this study is to review the application of AR technology in various fields of surgery and how the technology performs in each field. The paper also assesses the AR assisted navigation systems currently used for surgery and discusses the evaluation and validation metrics that these systems require. It comprehensively reviews the literature since 2008 to provide relevant information on applying AR technology to training, planning, and surgical navigation. It also describes the limitations that need to be addressed before one can completely rely on this technology for surgery. Additional research is therefore desirable in this emerging field, particularly to evaluate and validate the use of AR technology for surgical navigation.

1. Introduction

Surgical techniques used around the world have advanced technologically in recent decades. To give surgeons reference information, traditional surgical visualization systems used ultrasound, magnetic resonance imaging (MRI), pre-operative computed tomography (CT) of the patient, and other medical imaging [1]. However, the surgeon must mentally integrate the two-dimensional (2D) images with three-dimensional (3D) space throughout the surgery, which results in a mismatch between pre-operative and intra-operative information. With a standard surgical visualization system, the practitioner also needs to switch his or her line of sight frequently between the surgical scene and the auxiliary display, extending the operation duration. At the same time, such systems do not achieve true surgical navigation, which would allow surgeons to precisely track and project the instrument position during surgery. Surgical targets and instruments are frequently concealed by other anatomical structures. As a result of these factors, surgical navigation accuracy falls short of expectations.

There has been significant progress in the surgeon’s search for safer, less intrusive, and more affordable techniques and the surgical community is becoming more interested in alternative, yet intuitive intra-operative visual guidance systems [2]. The disadvantages of the above mentioned surgical systems can be efficiently addressed by using an augmented reality visualization and navigation system [3,4,5]. Augmented reality (AR) based navigation systems were developed in response to a growing demand for visual feedback in the operating room [6]. Augmented reality is a new technology that works in a similar way as virtual reality (VR) [7]. AR technology is utilized to build 3D images from CT and/or MRI data and to overlay virtual images over a view of the surgical field. AR technology, in general, consists of 3D reconstruction, display, registration, and tracking techniques, and it has been recently used in surgical interventions. Consequently, AR technology can combine the pre-operative model with the intra-operative scenario, giving clinicians real-time information and surgical guidance [8].

To date, AR navigation technology has been widely employed in various fields of surgery, including neurosurgery [9,10,11,12,13], orthopedic and spine surgery [14,15,16], laparoscopic surgery [17,18], hepatobiliary surgery [19,20,21,22,23,24], oral surgery [25,26], and others [27,28,29]. The goal of making brain surgery as minimally invasive as feasible can be seen throughout the entire history of neurosurgery. The reason is that neurosurgery operates on or within an organ that contains many sensitive regions with a direct impact on a patient’s mental and physical well-being. As a result, neurosurgeons frequently adopt new technology before others do, in the hope that it may reduce surgical risks and improve patient outcomes. Similarly, researchers have explored using AR technology for image guided surgery in various other fields to overcome surgical complexities by enhancing the operating room (OR) with virtual content.

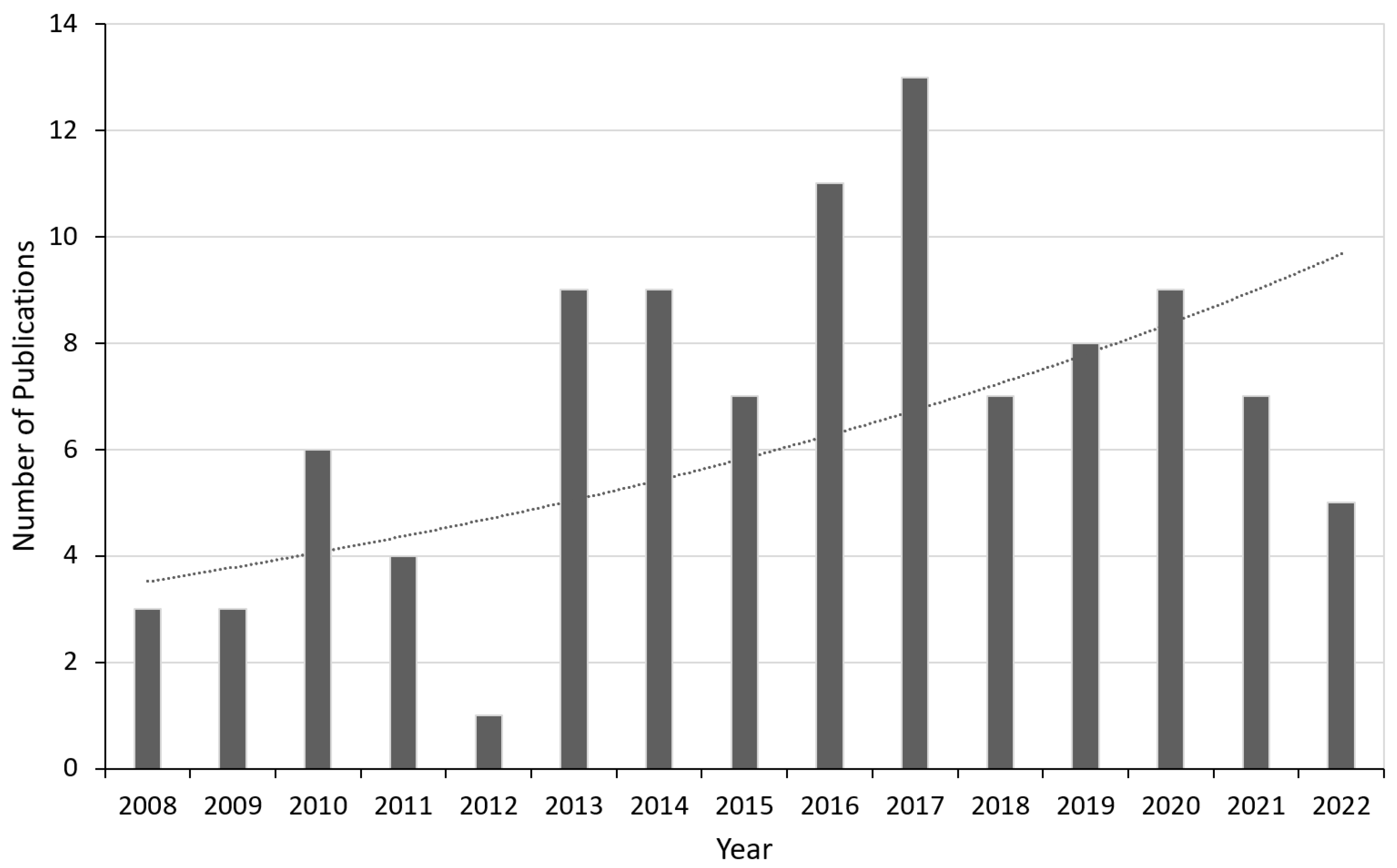

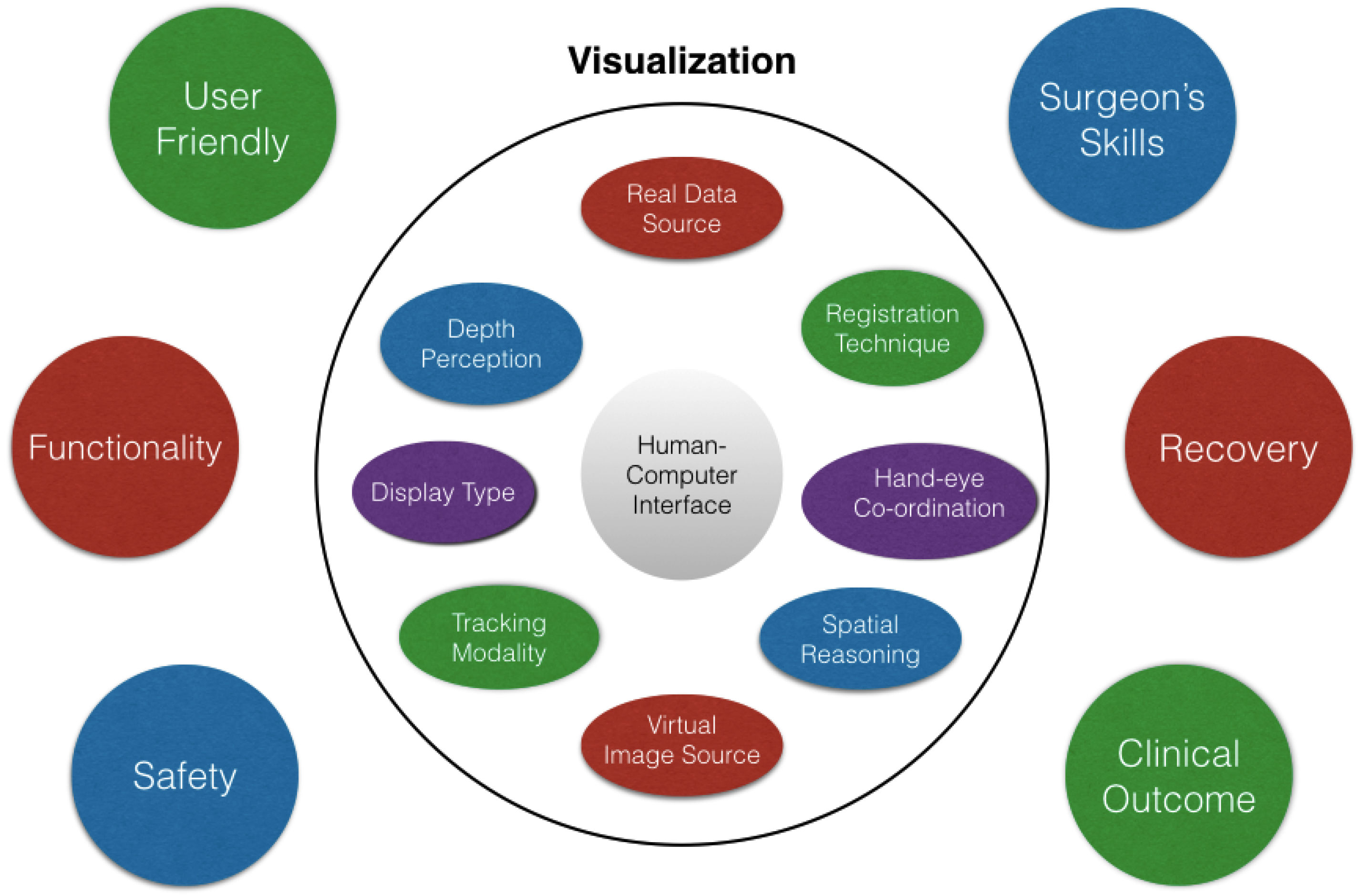

The application of AR systems in different surgical fields and their performance are described in the next section. Section 3 describes the evaluation of surgical techniques that use AR and suggests some validation techniques. The last section discusses the limitations of this technology in the surgical field and how AR navigation systems can be improved so that they can benefit society in the near future. For this study, many recent papers were reviewed, concentrating on articles published after 2008, since the adoption of AR technology began to rise in many surgical fields around that time. Our search mainly involved (but was not limited to) the following keywords: augmented reality, evaluation, assessment, validation, and surgery, on the e-resources platform available within our university’s research collection. Figure 1 shows the distribution by year of the papers chosen for this study. The last search was conducted in May 2022, which explains the drop relative to 2021, although the trend-line over the years (dotted curve) shows exponential growth.

Figure 1.

Year-wise distribution of the selected papers considered for this review along with a trend-line (dotted curve) showing an exponential growth.

2. State of the Art AR Based Surgical Systems

Many areas of medical imaging, from simulation to training and therapy, are significantly benefiting from the use of mixed, augmented, and virtual reality technologies [30,31,32]. While VR is primarily used for training, AR may be used for everything from training to planning and navigation in the surgery room [33]. Most research compared user comprehension, which was assessed using Likert scale surveys, to the usability of the AR and VR system [34,35,36,37,38]. Overall, the findings imply that participants found AR/VR systems simple to comprehend. Some studies provided a thorough analysis of usability by evaluating particular AR/VR system tools and contrasting the selected interface with more conventional interfaces such as the keyboard and mouse [39]. Most users preferred the interface of the AR/VR system. Numerous studies also mentioned generic characteristics that improve usefulness in the learning environment, such as system mobility and adaptability. Finally, the ability of AR/VR technologies to provide a seamless transition between reality and the augmented or virtual world was a critical component of their usability in anatomy learning [40,41,42].

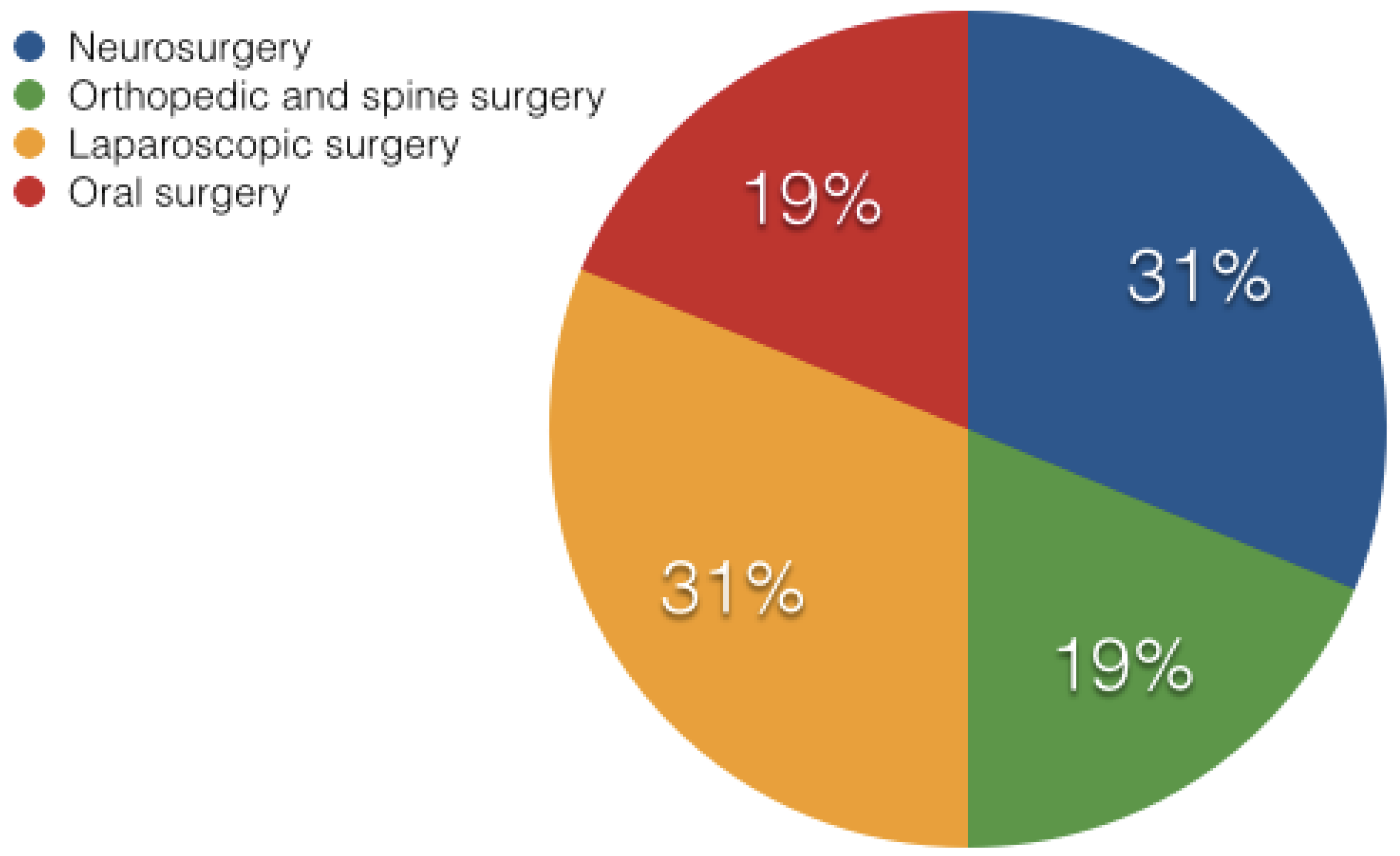

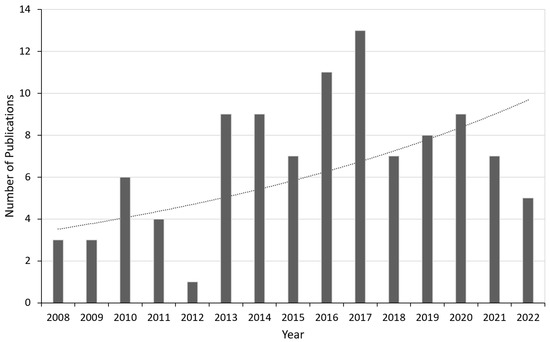

The beauty of augmented reality is its potential to show an easily recognized and simplified anatomy during surgery [43]. This section describes various fields in surgery where AR technology has been applied more often. Figure 2 shows the breakdown of the papers’ percentage contributions from the various surgical specialties under discussion. The level of flexibility, functionality, and work-flow that each surgical field, each institution, and each surgeon requires from their navigation systems varies. Every field utilizes different methods for evaluating their setup and the overall technology as described below.

Figure 2.

Pie chart showing the percentage division of the publications from various fields of surgery being discussed in this paper.

2.1. Neurosurgery

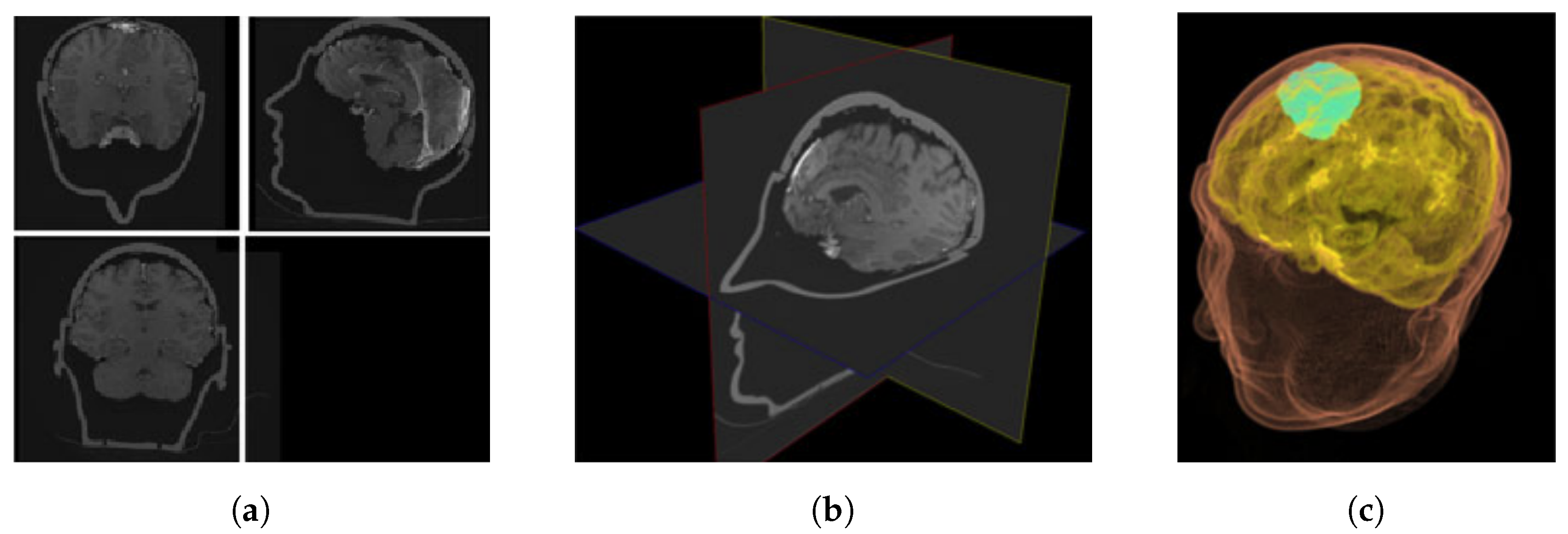

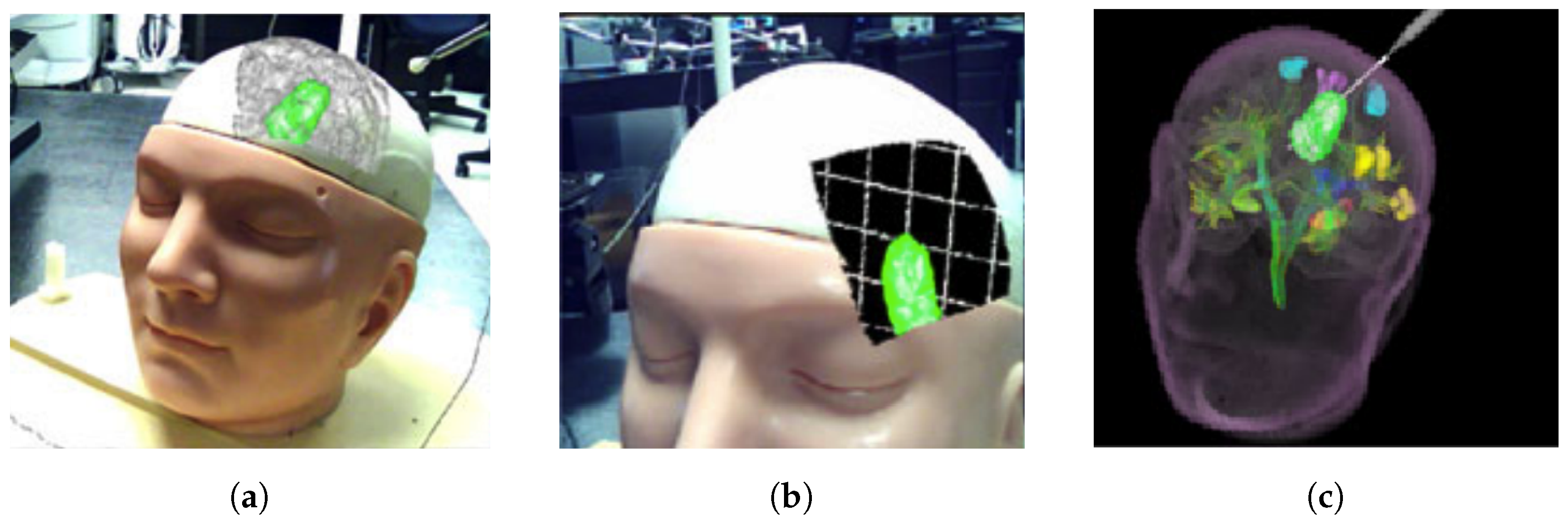

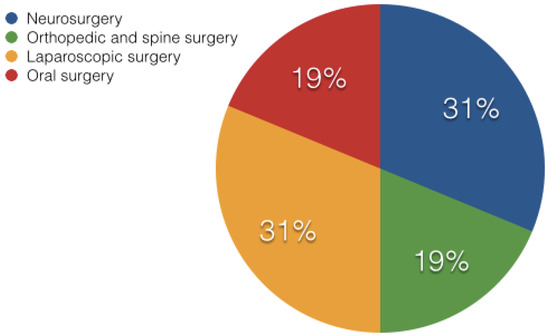

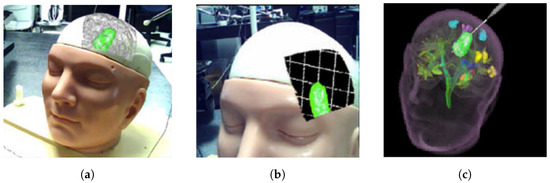

The first surgical field to employ navigation and successfully incorporate it into regular practice was neurosurgery. In the last decade, a growing number of scientific and commercial systems for neurosurgical applications have emerged [44,45]. The visual perception and human–computer interface features of computer graphics for supplementing the display in neurosurgery are the main focus here [10]. AR has already been evaluated for the training of tumor resection planning in terms of perceptual and spatial reasoning ability [46]. Their technology was specifically created and tested with human aspects in mind, with the purpose of reducing cognitive stress. In comparison to traditional planning scenarios, their proposed method significantly increased novice performance, regardless of the sensorimotor tasks performed (as shown in Figure 3 and Figure 4). Furthermore, when practitioners used the technology, they were able to complete clinically relevant activities in significantly less time. This showed that augmented reality systems can help residents gain the spatial reasoning abilities they require to plan neurosurgical treatments and enhance patient outcomes.

Figure 3.

Planning environments for neurosurgery (a) 2D view of axial/coronal/sagittal slices; (b) XP representation of 2D slices; and (c) 3D volume rendering [46].

Figure 4.

Visualization data in (a) AR; (b) AR with grid lines to promote the sensation of depth; and (c) VR [46].

Augmented reality systems represent a significant advancement over current neuro-navigation systems. In particular, the wide range of technical implementations gives the neurosurgeon viable options for various operations (generally neuro-oncological and neuro-vascular), treatment methods (endo-vascular, endo-nasal, open), and various stages of the same operation (microscopic and macroscopic parts). Although no prospective randomized research has been published, the available literature [9] confirms that this technology is a reliable, versatile, and promising tool in neurosurgery. In the work presented in [47], a target registration error of 2.5 mm was achieved using augmented reality neuro-navigation (data from 5 publications), with no significant difference when compared to conventional infrared neuro-navigation systems (2.6 mm, data from 23 publications).
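For readers less familiar with the metric, the following minimal sketch illustrates how a target registration error can be computed. It assumes that the registration is a rigid transform (a rotation matrix and a translation vector) and that the error is the Euclidean distance between each transformed image-space target and its measured position on the patient. The function names and all coordinates are hypothetical and are not taken from [47].

```python
import math

def apply_rigid(rotation, translation, point):
    """Map a 3D point with x' = R x + t (rotation is a 3x3 nested list)."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )

def target_registration_error(rotation, translation, image_targets, patient_targets):
    """Return the per-target distances (in mm) and their mean."""
    errors = [
        math.dist(apply_rigid(rotation, translation, p), q)
        for p, q in zip(image_targets, patient_targets)
    ]
    return errors, sum(errors) / len(errors)

# Hypothetical registration: identity rotation and a 10 mm shift along x.
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = [10.0, 0.0, 0.0]

# Hypothetical target positions in image space and as measured on the patient.
image_targets = [(0.0, 0.0, 0.0), (5.0, 5.0, 5.0)]
patient_targets = [(10.0, 3.0, 4.0), (15.0, 5.0, 6.0)]

errors, mean_tre = target_registration_error(R, t, image_targets, patient_targets)
print(errors, mean_tre)
```

The targets used for this calculation should differ from the fiducials used to compute the registration itself, since otherwise the reported value reflects the fit to the fiducials rather than the accuracy at the surgical target.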

According to the review of 12 publications on intra-operative medical applications in [48], the injection of 3D images with AR enables successful image integration in vascular, oncological, and other lesions without the need to look away from the surgical field, which improves safety, surgical experience, and clinical outcome. According to the results of clinical trials presented in [44], the augmented reality system proved to be accurate and reliable for intra-operative image projection to the head, skull, and brain surface, with a mean registration time of 3.8 min and a mean projection error of 0.8 ± 0.25 mm. Based on the evaluation completed in [45], AR is a favourable technology in neuro-vascular surgery; however, better visualization techniques that differentiate between arteries and veins and ascertain the absolute depth of a vessel of interest are required for more complicated anomalies such as arteriovenous malformations (AVM) and arteriovenous fistulae (AVF). To conclude, there is no universal method for reporting navigation accuracy in augmented reality neuro-navigation, and comparative studies are still needed to establish its effectiveness [49].

2.2. Orthopedic and Spine Surgery

The increased interest in augmented reality in orthopedics and trauma is unsurprising considering that surgical techniques in orthopedics frequently involve visual data such as medical images gathered both pre- and intra-operatively. Additionally, mechanical steps such as osteotomies, screw or implant insertions, and the correction of deformities are routinely used during orthopedic surgery and can be visualized in AR environments [50]. Spine surgery manages, assesses, and treats spinal disorders such as degenerative ailments, deformities, trauma/spinal injuries, and spinal tumors. In the past ten years, minimally invasive spine surgery has made significant advancements and is now being utilized more frequently to address challenging situations [51].

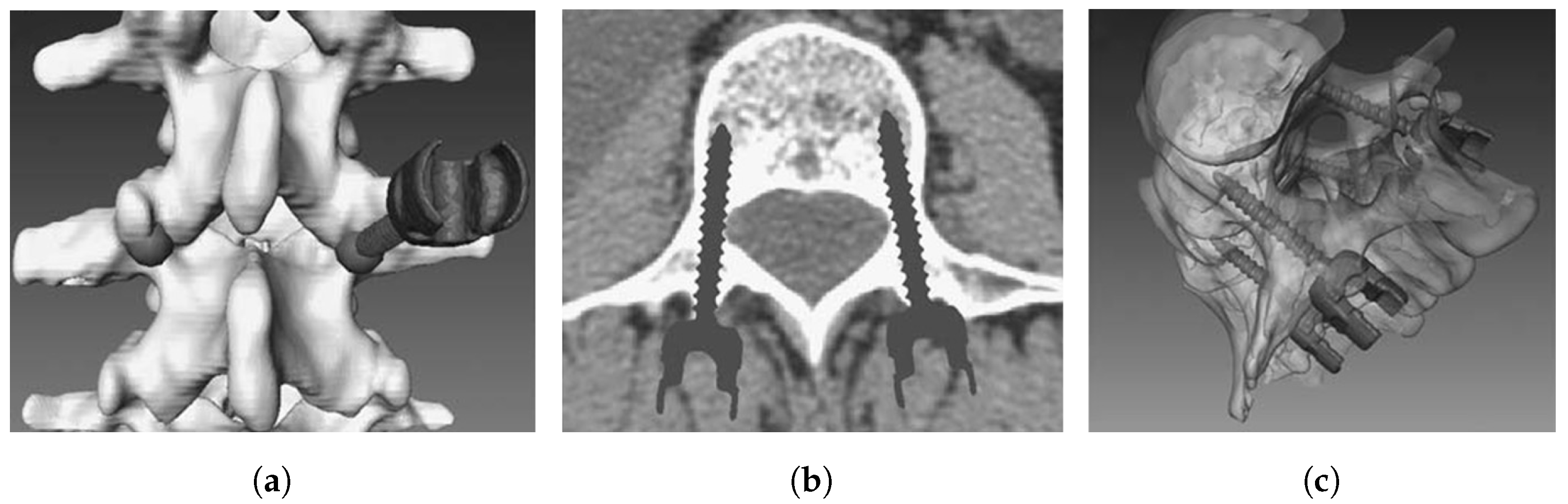

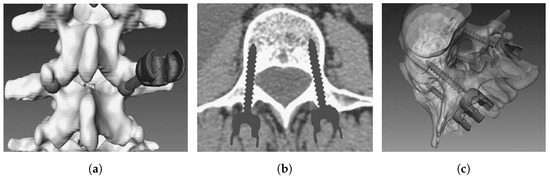

Pedicle screw implantation (a distinctly difficult procedure) in spine surgery can offer a firm fixation to the spine, preventing harm when the back extends, bends, or rotates excessively [52]. Usually, a screw that is incorrectly placed can cause neurologic and vascular problems; therefore, a navigation system is employed to assist with correct positioning [53]. During surgery, however, the surgical targets and surgical devices such as pedicle screws may be concealed in other tissues. To address these issues, research on a 3D integral videography (IV) AR system [15] for pedicle screw placement (with ultrasound-assisted registration) has been proposed [54]. Figure 5 shows a patient-specific, 3D spine model with a screw inserted at the entry point, a 2D axial image of the screw(s), and a 3D translucent image of the screw(s). To eliminate the effect of extracting anatomical markers on the surface and limit radiation exposure, ultrasound is employed to accomplish registration between the pre-operative medical imaging and the patient. Experiments show that the suggested system has adequate targeting accuracy, and that both the patient and the surgeon are exposed to less radiation.

Figure 5.

(a) Screw insertion on 3D, patient-specific spine model; (b) 2D axial image of placed pedicle screws; and (c) 3D translucent image of implanted screws [52].

According to a 12-question survey conducted globally in [16], spine physicians recognise the usefulness of computer assisted surgery, but current technologies fall short of their expectations in terms of usability and integration into the surgical workflow. Speed, accuracy, and urgency are key in spine and orthopedic treatments. For instance, the majority of orthopedic or spinal surgeries require anesthesia because of the severity of pain and other procedural requirements, so the procedure has to be finished before the effect of the anesthesia wears off. The post Deep Venous Thrombosis (DVT) stage is so acute that the patient needs urgent attention. Computer assisted models should therefore be user friendly, computationally inexpensive, and fast so that they can be used frequently. Even where supercomputing resources are available (which is not common), appropriate visualization remains a problem, and clinicians rank it highest among their concerns. Thus, in orthopedic and spine surgery, augmented reality has the potential and capability to save time, reduce radiation and risk, and improve precision.

2.3. Laparoscopic Surgery

Laparoscopy, in which physicians perform surgical procedures through small incisions, has become one of the most popular minimally invasive surgical treatments because of the development of video technology. However, although a laparoscopic method is frequently favored over an open surgical approach due to the much lower patient morbidity and shortened recovery time [55], it is highly demanding physically and cognitively, even for expert surgeons. This is partly because of constraints in the typical arrangement, such as restricted flexibility in manipulation and poor depth perception in 2D displays, and partly because the surgeons’ perception is dislocated from the action site. Moreover, for many laparoscopic procedures, whether it be reconstruction of a bile duct while avoiding critical blood vessels or resection of a tumor located deep within an organ parenchyma, surgeons need to see hidden surgical targets. Although minimally invasive surgery has several benefits, keyhole techniques restrict the surgeon’s 3D perception within the human body [56,57]. For instance, the surgeon’s visual and tactile cues may be reduced by the restricted visual field of endoscopes and laparoscopes as well as the absence of haptic input.

Augmented reality is a viable contender in the field of laparoscopic imaging [58,59,60,61]. Given the standard endoscope or laparoscope’s small field of vision, it makes perfect sense to supplement the video image with a model of the organ being inspected [62,63]. This can include a depiction for internal organ features that are hidden in the typical laparoscopic picture or an extended surface view of the organ [64,65,66]. Many technical elements of correct registration and visualization of pre-operative imagery with the laparoscopic view, including the image registration, segmentation, calibration, tracking, and intra-operative imaging with MRI, CT, and ultrasound, have been addressed in [17,67]. Recent advancements have revealed promising findings, suggesting that the proposed method requires substantially less cognitive and physical effort than the current 2D ultrasound imaging method utilized in the operating room [68].

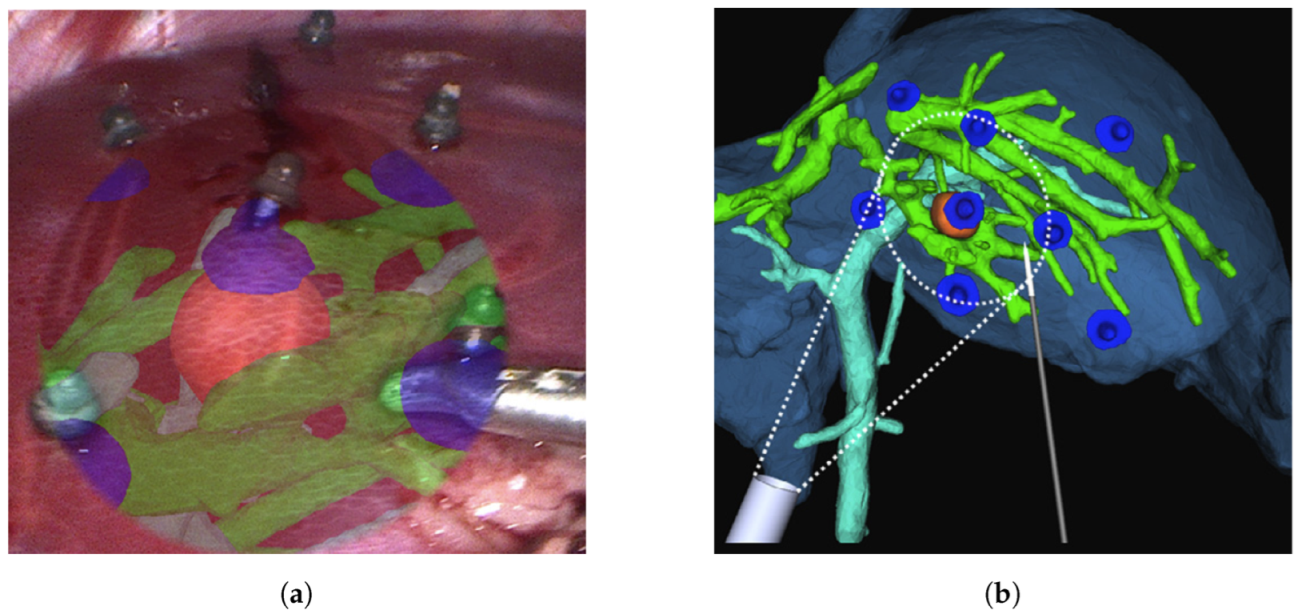

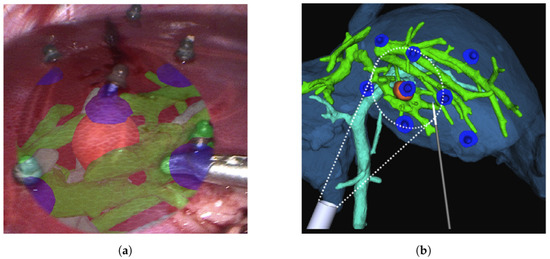

Figure 6 provides an example of visualization during laparoscopy through navigation system monitors. A thorough and very useful overview of the state of the art as well as the fundamentals of AR in laparoscopy is provided in [18]. Several types of data, rendering, and display are accessible depending on the augmentation desired. A dependable technical foundation for laparoscopic liver resection is provided by the cutting-edge image navigation technology presented in [61]. The fundamental challenge now facing laparoscopic AR is its accuracy, and this lack of accuracy necessitates the use of validation methods in surgical AR [1].

Figure 6.

An illustration of visualization during liver laparoscopy using navigation system [60] with (a) the surface fiducials being laparoscopically inserted on the surface of the liver, and (b) location of the surface fiducials being reprojected as AR objects.

2.4. Oral Surgery

Oral surgery normally involves surgeries on the jaws and the teeth to alter the dentition. Drilling, cutting, fixing, resection, and implantation are the most common oral surgical procedures [69,70]. The tight space and the fact that the surgical targets may be covered limit operations in most circumstances. To ensure surgical safety, surgeons must take care to avoid injury to the surrounding important structures. Despite the introduction of computer assisted oral surgery, dental surgery still suffers from imperfect hand-eye coordination and a loss of 3D information in surgical navigation.

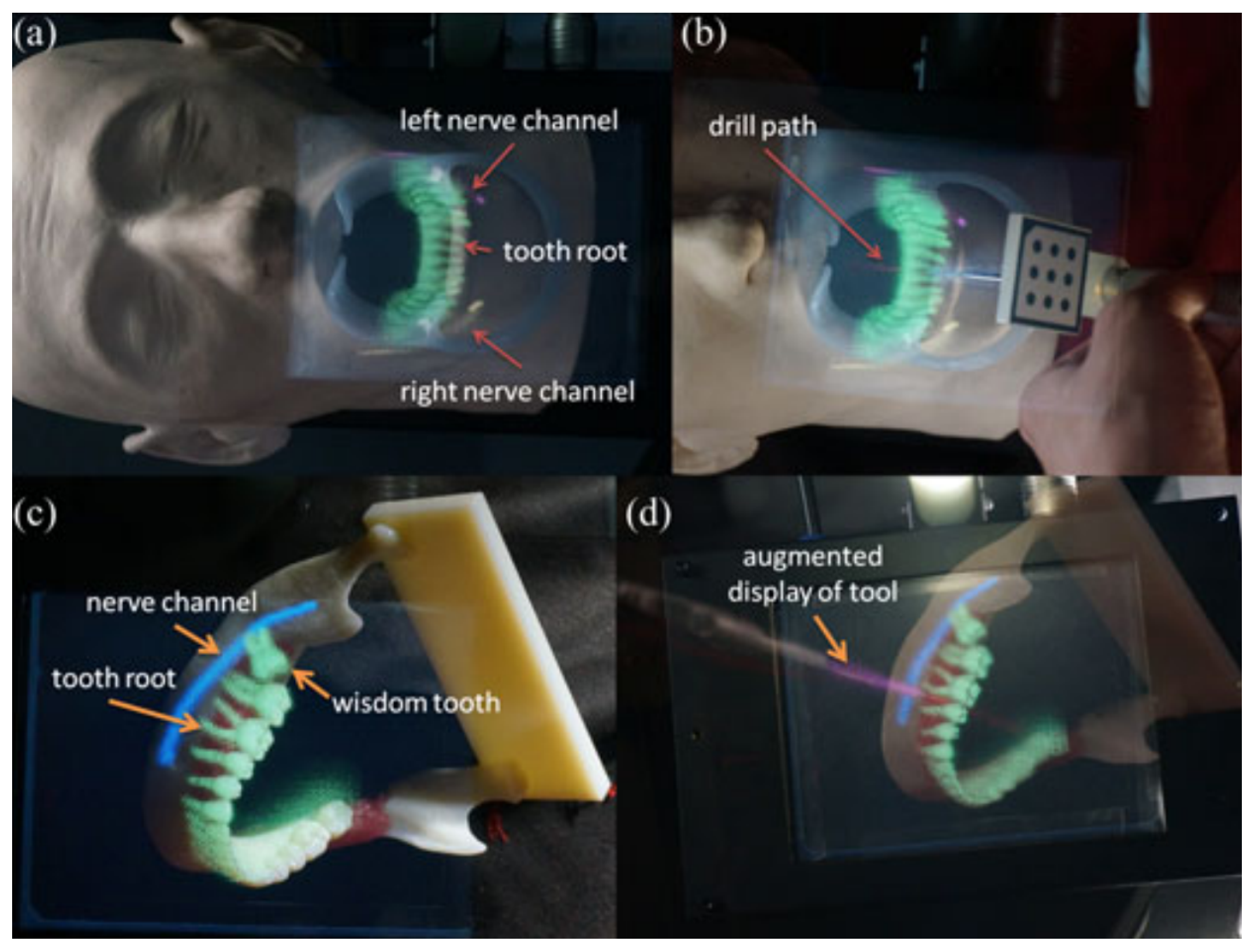

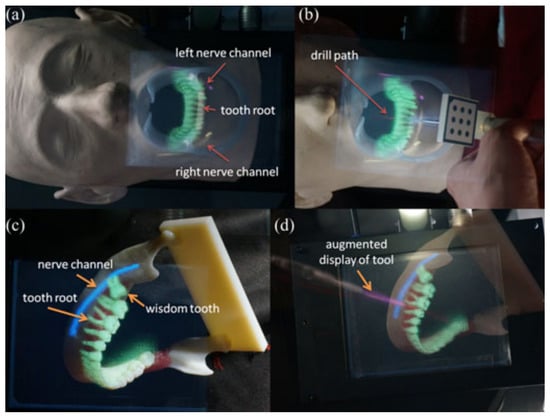

Using an IV based 3D image overlay system and stereo tracking, Tran and Wang created a 3D augmented reality based navigation system with automatic marker-free image registration for oral surgery [71,72]. The proposed system comprises a stereo camera for tracking patients and equipment, a real-time 3D patient image registration method, an IV camera registration method, and an optical see-through device [8]. The surgeon can use the AR information to intuitively examine hidden structures as well as the surgical tools. Figure 7 shows the AR model overlaid on the surgical scene and the surgical instrument in 3D, with an overlay showing the tool’s location in pink and the nerve channel in blue.

Figure 7.

(a) A teeth model overlay with important features to show the tooth roots that are not visible; (b) an enhanced visual representation of the surgical tool with the drill route superimposed; (c) a lower jaw model was superimposed with three-dimensional photographs of molars, including a developing wisdom tooth; and (d) an enhanced display on the surgical tool that shows the drill path [72].

Experiments were conducted to confirm the practicality, and the suggested system’s overlay error was less than 1 mm. While using the IV based 3D AR system, surgeons can obtain intuitive depth information and treat accordingly. Another knowledge-based strategy for a contextual intra-operative support system is presented in [73], where two phantom experiments were performed examining the medical usability, accuracy, and recognition rate. In conclusion, the system demonstrated its ability to accurately identify situations and give beneficial visualizations in real time; however, issues with low accuracy continue to exist. Future research will concentrate on increasing the accuracy and recognition rate as well as assessing the concepts on complex procedures.

To summarize, Table 1 shows the publication list where evaluation and validation studies have been performed using AR technology. The kind of evaluation being conducted varied from testing the AR system for medical imaging, training and guidance, pre-operative planning and surgical navigation. The use of AR technology for pre-clinical trials, various clinical testing and applications, and surgery on patients is also evaluated. This table makes it very evident that the majority of the applications lack a proper validation on using AR surgical technology.

Table 1.

Table summarizing the evaluation and validation performed in various fields.

3. Evaluation and Validation of AR Surgical Technology

The clinical practice of simulation surgery utilizing 3D images has recently been accomplished, and AR is an effective tool in conducting surgical procedures, due to advancement of diagnostic imaging and creative computer programs [78]. In recent decades, surgery procedures aiming at minimizing invasiveness have grown in popularity [79]. AR is a technique that adds computer generated information to the perception of reality. When surgeons make decisions about anatomical structures and associated environment, they use their perceptual and spatial thinking skills. It is critical to develop methods and approaches to operationalize objective metrics of performance in tasks that involve the perception of and spatial reasoning regarding anatomical structures in order to study the facilitation offered by AR interactive displays of 2D and 3D, volumetric, biomedical visualization [80]. The driving force behind AR’s development was the need for an optimal head tracking system that continuously and perfectly tracks all subtle changes of the surgical area and transparently modifies the baseline registration all throughout the surgical intervention [81,82,83]. The display device must fit seamlessly into the physician’s workflow because AR visualization is supposed to make the physician’s task easier. As a result, the optimal presenting technique is one that blends in with the surroundings while delivering the necessary details.

The crucial point is that the human visual system responds to the resultant surface’s high frequency components, forming a sparse 3D picture of the surface. The buried virtual object no longer seems to be layered on top of a solid-looking surface, and the buried object’s relative depth perception is restored. The visual clarity achieved is suitable for the high AR depth perception, according to the experimental results using both phantom and in vivo data [84,85]. The goal of AR visualization is to be able to provide a selective and simplified portrayal of anatomy that may be easily understood during surgery in a variety of surgical specialties [86]. It is now also possible to boost surgeons’ sensory and motor skills during diverse surgical activities, from macro to micro levels, using computerized, robotics-assisted surgical systems. In comprehensive clinical investigations over the last two decades, the performance, advantages, and efficacy of established surgical robotic systems and many scientific platforms have been evaluated and validated [87,88,89].

The main challenge that AR technology currently faces is accuracy. Aside from a qualitative visual evaluation, there is currently no way to measure the correctness of an augmentation or predict the error. This is owing to the utilization of several complicated systems, each of which contributes to the uncertainty. For instance, optical tracking is widely utilized in augmented reality as a source of information for camera movement and fiducial pointing [76]. However, as mentioned in [90], such systems accumulate camera calibration, tracking, and hand-eye calibration errors. This lack of precision creates a clear requirement for validation methods in surgical AR [1].
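As a rough illustration of how such error sources can add up, the sketch below combines hypothetical per-component errors in two ways. The root-sum-square estimate assumes that the components are independent and zero-mean, which is a simplifying assumption and not a result reported in [90]; the linear sum gives a pessimistic bound in which all errors point the same way. All names and values are illustrative only.

```python
import math

def combined_error(components_mm):
    """Combine per-component errors (in mm) into two summary estimates.

    rss:   root-sum-square, valid only if the components are independent.
    worst: linear sum, the bound when all errors align in one direction.
    """
    rss = math.sqrt(sum(e * e for e in components_mm.values()))
    worst = sum(components_mm.values())
    return rss, worst

# Hypothetical error budget for an optically tracked AR overlay.
budget = {
    "camera_calibration": 0.3,
    "tracking": 0.4,
    "hand_eye_calibration": 1.2,
}

rss, worst = combined_error(budget)
print(f"root-sum-square: {rss:.2f} mm, worst case: {worst:.2f} mm")
```

In this example the largest component dominates the root-sum-square estimate, which suggests that reporting each contribution separately is more informative than reporting a single overall figure.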

Validation requires demonstrating that the system performs as expected. If AR is designed to deliver an ideal understanding of the work domain, validation will mostly consist of establishing that the implemented AR improves knowledge and understanding of the operational domain. So far, validation has primarily been studied in terms of its impact on perception. As a result, validation is carried out as a study involving a representative group of users, covering a wide range of abilities, ages, technological skills, genders, and other applicable characteristics, as well as under particular conditions such as fatigue and stress.

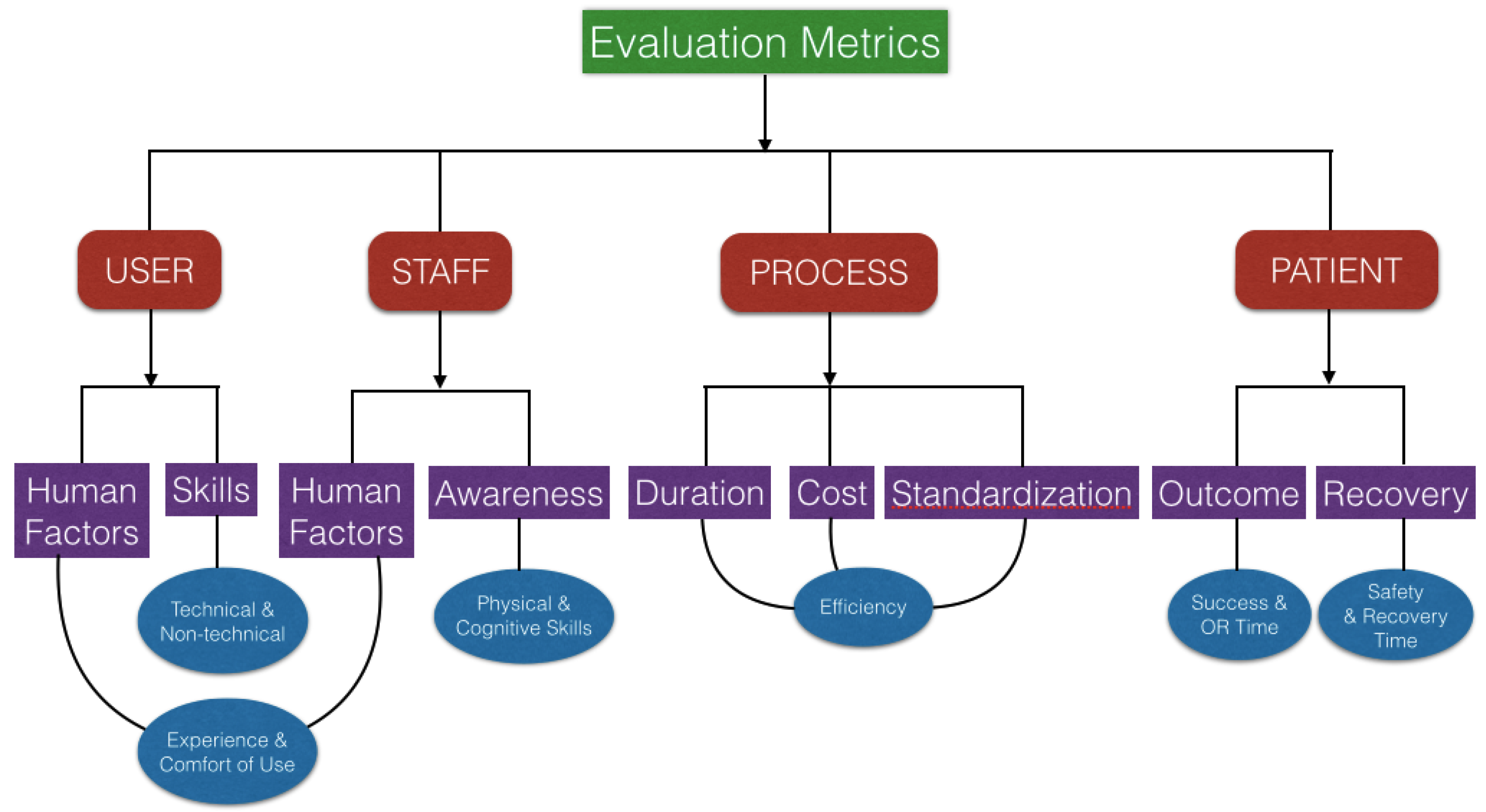

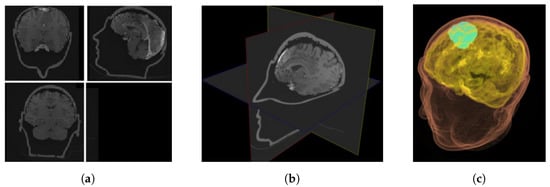

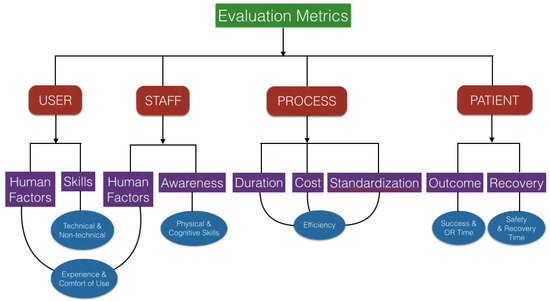

AR designers want to know how their system affects decision-making and action, and there are numerous metrics that may be used to evaluate and validate this system, which are mentioned in [91]. Figure 8 presents one of the evaluation metrics which can be classified into four groups. The first is about the user who is testing the AR system (e.g., head-mounted system). The system’s human elements, such as the system’s ergonomics, have a direct impact on the user (e.g., comfort of use). The system may also have an effect on the user’s technical (dexterity, tremor) and non-technical skills (such as situation awareness, stress, or risk anticipation). The technology may have an impact on the entire medical team by raising situation awareness and influencing human factors. Although it is well understood that the success of a process is primarily determined by its goal, the procedure used to achieve the goal, such as minimal duration, minimal energy, minimal workspace, cost, repeatability, or standardization, may be of interest and serve as a metric for evaluation of the AR system. Finally, the system may have an impact on the patient by affecting clinical scores, operating room time, or recovery time.

Figure 8.

Evaluation metrics for augmented reality surgical technology.

When conducting AR validation studies, it is critical to follow the standard procedures to ensure accurate reporting and interpretation of the findings. It is relevant to describe the clear design and reporting in the context of assessing image-guided navigation systems [92]. It is also critical to be able to clearly specify the conditions under which validation can be carried out in order to acquire a thorough grasp of the results and any potential bias. The suggested validation workflow is shown in Table 2 based on the operator level, which ranges from low to high clinical realism (i.e., from engineer to medical student to the surgeon). It describes the parameters of the place/setup where the AR system is being used by the various operators, which is laboratory for an engineer, simulated operation room for a medical student and operation room for the surgeon. An engineer’s work is mainly focused on technical scenarios and testing with simulations, whereas a medical student performs a simulated procedure using phantoms, and the real procedure is being performed by the surgeon using a clinical dataset.

Table 2.

Validation workflow based on the operator level (from low to high clinical realism).

Following the evaluation and validation method described above step by step can aid in the development of a better AR surgical technique. We advise assessing the degree of expertise in the work domain for each operator. This can be measured using surveys such as the one given in [74], which has questions that span the entire abstraction hierarchy of the relevant work domain. The framework used here can serve as a user-oriented approach for evaluating the amount of information perceived by surgeons and medical students when using image-guided clinical systems, and for supporting the design of such systems from the viewpoint of cognitive engineering.

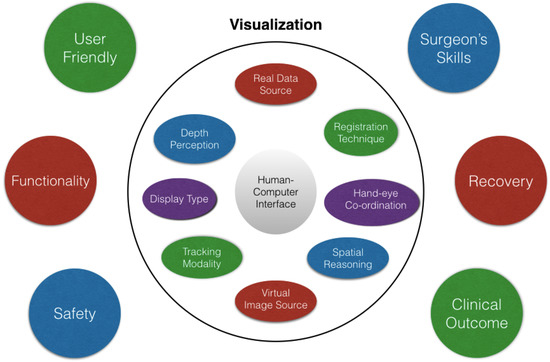

Figure 9 summarizes the suggested parameters that are relevant in evaluating and validating current AR systems. For any AR system under consideration to reach the required precision, visualization is the key factor, and it in turn depends on many factors, mainly the human–computer interface. The source of real data and the virtual image are important factors affecting the visualization. With efficient hand-eye coordination and a suitable display type, proper spatial reasoning and depth perception are obtained. Various other factors such as the registration technique, tracking modality, and rendering time also contribute to a reliable visualization. A user friendly system will ensure easy acceptance by the surgical community, whereas a lack of intuitive design may constrain the surgeon’s ability to use the equipment in the operating room. For a human-centered AR system, the user experience is important in addition to the user interface and should be in line with the requirements of the surgeon, the major stakeholder.

Figure 9.

Various parameters to be taken into consideration while performing the evaluation and validation study of AR systems.

The overall functionality of the system and the patient’s safety during the whole procedure are useful factors for evaluating and validating the usage of AR based navigation in surgery. As already discussed in this section, the surgeons’ skills (such as sensory, motor, and cognitive abilities) are also crucial in achieving a precise surgical result as well as in managing the stress and exhaustion that arise during the procedure. Another crucial element in the validation of AR systems should be the patient’s post-operative recovery period, success rate, and relapse rate. An effective approach to validating a surgical procedure is to look at the clinical outcome in terms of operating time and efficiency, which is the result of all these elements. Currently, it is challenging to compare accuracy measurements between various systems due to differences in evaluation techniques. However, feedback from surgeons can help determine whether the most advanced and current AR assisted navigation systems are effective or not [66,93,94].

4. Discussion

4.1. Limitations

In the last 20 years, augmented reality has been used in medical operations to aid physicians in understanding and performing procedures, as well as for training and planning. However, as other review papers have pointed out, AR has yet to attain its full potential in terms of utility and deployment in the medical workflow. Many reasons have been given for this; one is that the AR system design approach is primarily technology driven, necessitating the development of clinically useful AR applications. Another reason is the lack of meaningful assessments of planned AR systems to prove their benefits across the range of medical care [95]. The key challenges in evaluating AR technology include comparing results with and without the AR system, assuming that there will be a statistically significant difference in results attributable to the use of the navigation system, which is also not easy to achieve or demonstrate.
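To make the comparison with and without an AR system concrete, the following minimal sketch applies an exact two-sided permutation test to the difference in mean task time between two small groups. The choice of a permutation test, the function name, and the task times are all assumptions made for illustration; none of them come from the reviewed studies.

```python
from itertools import combinations

def permutation_p_value(with_ar, without_ar):
    """Exact two-sided permutation test on the difference of group means."""
    pooled = list(with_ar) + list(without_ar)
    n = len(with_ar)
    m = len(pooled) - n
    total = sum(pooled)
    observed = abs(sum(with_ar) / n - sum(without_ar) / m)

    extreme = 0
    count = 0
    # Enumerate every way of relabelling n of the pooled values as "with AR".
    for idx in combinations(range(len(pooled)), n):
        group_sum = sum(pooled[i] for i in idx)
        diff = abs(group_sum / n - (total - group_sum) / m)
        if diff >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
        count += 1
    return extreme / count

# Hypothetical task completion times in minutes.
with_ar = [31, 28, 35, 30, 29]
without_ar = [42, 39, 45, 38, 41]

p_value = permutation_p_value(with_ar, without_ar)
print(f"p = {p_value:.4f}")
```

Exhaustive enumeration is only practical for small groups such as those typical of phantom studies; with larger samples, random resampling of the labels would be the usual substitute. A small p-value also says nothing about whether the difference is clinically meaningful, which is part of the difficulty described above.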

It should also be emphasized that, in the AR environment, a common concern for any surgeon is that the target organ may not behave as intended. The organs of the human body are not rigid; they deform in response to heartbeat and respiratory rhythms, laparoscopic insufflation pressure, and physical probing [96]. These physical conditions are more noticeable during surgery on the liver and intestines (pliable organs) than during surgery on the bones and brain (semi-rigid organs) [97]. These deformations result in registration problems during surgery, which is one of the factors limiting the usage of AR technology. This is because actual surgical confirmation of AR would require exposing the concealed structures revealed by the augmentation without modifying the scene or shifting the structures, which is highly unlikely in these conditions.

In AR surgical applications, the tracking of calibrated tools is a basic necessity. Error analysis techniques and numerical methods originally established for registration have numerous applications in tracking and calibration [98,99]. Registration methods have been used in tracking to improve target design, predict tracking error, and improve tracking accuracy [100]. Given the widespread use of calibrated tools in augmented reality surgical applications, there is considerable opportunity for expanded use of registration techniques in calibration [101,102]. Based on the review in [103], there is also a need to build adequate segmentation algorithms that overcome the limits of existing methodologies and imaging modalities.
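For reference, the two error measures most commonly reported for point-based rigid registration can be written as below. The notation is assumed here for illustration and is not taken from the cited works: $x_i$ and $y_i$ are $N$ corresponding fiducial positions in image space and patient space, $(R, t)$ is the estimated rigid transform, $r$ is a target point that was not used to compute the registration, and $r'$ is its true position in patient space.

```latex
\mathrm{FRE} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\left\lVert R\,x_i + t - y_i \right\rVert^{2}},
\qquad
\mathrm{TRE}(r) = \left\lVert R\,r + t - r' \right\rVert
```

The fiducial registration error (FRE) measures how well the fiducials themselves align, whereas the target registration error (TRE) measures the error at the anatomy of interest. A small FRE does not by itself guarantee a small TRE, which is one reason error prediction models are of interest for tracking and calibration.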

It can be challenging to select the best metric for a given image processing task. The work described in [104] highlights some of the most common flaws in the most frequently used reference-based validation metrics in image processing. Validating biomedical image analysis algorithms is crucial for advancing understanding and translating methodological research into practice. Validation metrics, i.e., the measures by which a system’s performance is assessed, are a crucial part of the validation design phase. In addition, to improve the accuracy of current AR systems, more research is required on fusing different imaging modalities, advancing biomechanical modeling, and improving image processing and tracking technologies [21]. Efforts should be made to improve the setup of AR systems, making them more user friendly throughout all stages of surgery (microscopic and macroscopic) and across different surgical procedures. The virtual models must be refined so that they blend seamlessly with the real environment. Finally, imaging modalities such as intra-operative ultrasound or MRI have the potential to add new details to virtual models as well as improve registration.
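To make the point about metric selection concrete, the sketch below illustrates one well-known pitfall of overlap-based reference metrics: their sensitivity to structure size. It computes the Dice similarity coefficient for a small and a large square structure, each compared with a copy shifted by one pixel. The helper names `dice` and `square` and the toy shapes are assumptions made for this example, not part of any reviewed system.

```python
def dice(a, b):
    """Dice similarity coefficient between two sets of pixel coordinates."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # Two empty masks are treated as a perfect match.
    return 2 * len(a & b) / (len(a) + len(b))


def square(side, origin=(0, 0)):
    """Pixel coordinates of a filled square with the given side length."""
    ox, oy = origin
    return {(ox + x, oy + y) for x in range(side) for y in range(side)}


# Reference and prediction differ only by a one-pixel shift along x.
small_ref, small_pred = square(3), square(3, origin=(1, 0))
large_ref, large_pred = square(30), square(30, origin=(1, 0))

dice_small = dice(small_ref, small_pred)
dice_large = dice(large_ref, large_pred)
print(f"3x3 structure:   Dice = {dice_small:.3f}")
print(f"30x30 structure: Dice = {dice_large:.3f}")
```

The same one-pixel localization error is penalized far more heavily for the small structure than for the large one, so a single overlap score can be misleading when structures of different sizes are compared. Reporting a boundary-distance metric alongside an overlap metric is one common way to mitigate this.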

Both pre-operative planning and intra-operative guidance are aided by improved visualization, especially when combined with intra-operative imaging for real-time visualization. Incorporating supplementary technologies that ease the integration of AR systems into clinical use may address technical and clinical limitations [75]. AR technology provides a novel visualization paradigm with exciting potential for clinical navigation and guidance. However, user acceptance of novel interfaces is often slow, and it is unclear whether this is due to a history of slow adoption of new technology in the medical field, regulatory roadblocks, or inherent usability challenges rooted in the limitations of the human perceptual system [105]. To summarize, significant caution must be exercised when designing the user interface of AR systems in medicine. An otherwise reliable system may become useless if the representation loses its credibility or becomes unclear.

4.2. Future Outlook

Medical imaging is an essential prerequisite for AR assisted navigation. The progress of AR surgical navigation is still being driven by ingenious surgeons, who have pushed for the development of this new technology to address their surgical concerns. More research is therefore needed to better understand how to correlate intuitive and natural multi-sensory manipulation with the patient. The potential for shared control in robotics will be further expanded by integrating AR with active guidance mechanisms, leading to advancements in navigation, spatial orientation, and intra-operative confidence. AR in medicine must also overcome non-technological obstacles, in addition to technological ones, in order to justify its cost, portability, and utility.

In the field of AR based navigation surgery, the creation of new interaction strategies has received less attention than hardware development, precise and reliable calibration techniques, and AR visual analytics. However, properly designed interface strategies can simplify tasks, enable more natural ways of handling image guided surgery systems, optimize surgical workflow, and improve how the guided visuals and augmented reality representations are perceived. Tangible user interfaces are another strategy that might offer a cutting-edge approach to interaction in the surgical domain, even though they have not yet been fully investigated in the literature.

There is considerable room for future research, especially on letting the surgeon interact within the surgical field of vision to adjust for system errors (such as brain shift and registration error). As a result, AR based image guided surgery devices could be employed more frequently and with greater accuracy. Appropriate guidance requires a better understanding of the spatial relationships between virtual elements in the surgical field of view and virtual anatomy, as well as of how their depth is perceived. AR will become more prevalent in clinical practice if interactivity and visualization are combined in a way that improves perception and enables correct localization of anatomy. It is also crucial to keep in mind that representation and perception are user-dependent; depending on their area of expertise, level of skill, and psychological makeup, different users will respond differently to various representations.

In conclusion, this paper focused on the need for effective evaluation and validation of the AR assisted navigation systems being employed in surgery. Such evaluation will enable the creation of clinically applicable systems, with an emphasis on assessing adequate comprehension of key functional concepts (such as data, information, and knowledge) to support decision-making during surgery. To make sure that the reliance on the operational work domain is maintained, evaluation of the AR assisted navigation systems is necessary at both the clinical and technological levels. It will be challenging to guarantee the patient’s surgical safety without a thorough validation of the system by the surgeons. Thus, the AR approach in surgery cannot be translated into a genuine product until it meets the stringent certification standards with a credible validation methodology.

To successfully integrate AR technology into current practice, academic researchers, technical industries, and clinical users must pool their knowledge, resources, and expertise in order to migrate AR based navigation technology from the research laboratory to the medical environment. With advances in computer technology and a move towards sophisticated information processing, AR navigation is likely to become a more common part of surgical procedures, although further study is required to assess its long-term clinical effect on patients, surgeons, and hospital administrators. The effort to make augmented reality more user-friendly has revived interest in the technology and may help increase the number of skilled and safe surgical hands in the twenty-first century.

Author Contributions

Conceptualization, O.H. and S.P.D.; methodology, S.M., O.H. and S.P.D.; software, J.P., S.P. and W.P.; validation, S.M., O.H. and S.P.D.; formal analysis and investigation, S.M.; resources, S.M., O.H. and S.P.D.; writing—original draft preparation and editing, S.M.; writing—review, O.H., S.P.D., J.P., S.P. and W.P.; supervision, O.H. and S.P.D.; project administration, O.H. and S.P.D.; funding acquisition, O.H. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by Qatar National Research Fund (Grant No. NPRP-11S-1219-170106).

Institutional Review Board Statement

Not applicable as this article does not contain any studies with human participants or animals performed by any of the authors.

Informed Consent Statement

Not applicable as this article does not contain patient data.

Data Availability Statement

Not applicable as this is a literature review.

Acknowledgments

This publication was made possible by NPRP-11S-1219-170106 from the Qatar National Research Fund (a member of Qatar Foundation). The findings herein reflect the work, and are solely the responsibility of the authors.

Conflicts of Interest

The authors, i.e., Shivali Malhotra, Osama Halabi, Sarada P. Dakua, Jhasketan Padhan, Santu Paul and Waseem Palliyali, declare that they have no conflict of interest.

References

- Cleary, K.; Peters, T.M. Image-guided interventions: Technology review and clinical applications. Annu. Rev. Biomed. Eng. 2010, 12, 119–142. [Google Scholar] [CrossRef] [PubMed]

- Mezger, U.; Jendrewski, C.; Bartels, M. Navigation in surgery. Langenbeck’s Arch. Surg. 2013, 398, 501–514. [Google Scholar] [CrossRef] [PubMed]

- Azuma, R.T. A survey of augmented reality. Presence Teleoperators Virtual Environ. 1997, 6, 355–385. [Google Scholar] [CrossRef]

- Sielhorst, T.; Feuerstein, M.; Navab, N. Advanced medical displays: A literature review of augmented reality. J. Disp. Technol. 2008, 4, 451–467. [Google Scholar] [CrossRef]

- Kalkofen, D.; Mendez, E.; Schmalstieg, D. Comprehensible visualization for augmented reality. IEEE Trans. Vis. Comput. Graph. 2008, 15, 193–204. [Google Scholar] [CrossRef]

- Shuhaiber, J.H. Augmented reality in surgery. Arch. Surg. 2004, 139, 170–174. [Google Scholar] [CrossRef]

- Marescaux, J.; Clément, J.M.; Tassetti, V.; Koehl, C.; Cotin, S.; Russier, Y.; Mutter, D.; Delingette, H.; Ayache, N. Virtual reality applied to hepatic surgery simulation: The next revolution. Ann. Surg. 1998, 228, 627. [Google Scholar] [CrossRef]

- Wang, J.; Suenaga, H.; Liao, H.; Hoshi, K.; Yang, L.; Kobayashi, E.; Sakuma, I. Real-time computer-generated integral imaging and 3D image calibration for augmented reality surgical navigation. Comput. Med. Imaging Graph. 2015, 40, 147–159. [Google Scholar] [CrossRef]

- Meola, A.; Cutolo, F.; Carbone, M.; Cagnazzo, F.; Ferrari, M.; Ferrari, V. Augmented reality in neurosurgery: A systematic review. Neurosurg. Rev. 2017, 40, 537–548. [Google Scholar] [CrossRef]

- Tagaytayan, R.; Kelemen, A.; Sik-Lanyi, C. Augmented reality in neurosurgery. Arch. Med. Sci. AMS 2018, 14, 572. [Google Scholar] [CrossRef]

- Si, W.; Liao, X.; Wang, Q.; Heng, P.A. Augmented reality-based personalized virtual operative anatomy for neurosurgical guidance and training. In Proceedings of the 2018 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Tuebingen/Reutlingen, Germany, 18–22 March 2018; pp. 683–684. [Google Scholar]

- Lee, J.D.; Wu, H.K.; Wu, C.T. A projection-based AR system to display brain angiography via stereo vision. In Proceedings of the 2018 IEEE 7th Global Conference on Consumer Electronics (GCCE), Nara, Japan, 9–12 October 2018; pp. 130–131. [Google Scholar]

- Shirai, R.; Chen, X.; Sase, K.; Komizunai, S.; Tsujita, T.; Konno, A. AR brain-shift display for computer-assisted neurosurgery. In Proceedings of the 2019 58th Annual Conference of the Society of Instrument and Control Engineers of Japan (SICE), Hiroshima, Japan, 10–13 September 2019; pp. 1113–1118. [Google Scholar]

- Fallavollita, P.; Wang, L.; Weidert, S.; Navab, N. Augmented reality in orthopaedic interventions and education. In Computational Radiology for Orthopaedic Interventions; Springer: Cham, Switzerland, 2016; pp. 251–269. [Google Scholar]

- Zhang, X.; Fan, Z.; Wang, J.; Liao, H. 3D augmented reality based orthopaedic interventions. In Computational Radiology for Orthopaedic Interventions; Springer: Cham, Switzerland, 2016; pp. 71–90. [Google Scholar]

- Härtl, R.; Lam, K.S.; Wang, J.; Korge, A.; Kandziora, F.; Audigé, L. Worldwide survey on the use of navigation in spine surgery. World Neurosurg. 2013, 79, 162–172. [Google Scholar] [CrossRef] [PubMed]

- Nicolau, S.; Soler, L.; Mutter, D.; Marescaux, J. Augmented reality in laparoscopic surgical oncology. Surg. Oncol. 2011, 20, 189–201. [Google Scholar] [CrossRef] [PubMed]

- Bernhardt, S.; Nicolau, S.A.; Soler, L.; Doignon, C. The status of augmented reality in laparoscopic surgery as of 2016. Med. Image Anal. 2017, 37, 66–90. [Google Scholar] [CrossRef] [PubMed]

- Tsutsumi, N.; Tomikawa, M.; Uemura, M.; Akahoshi, T.; Nagao, Y.; Konishi, K.; Ieiri, S.; Hong, J.; Maehara, Y.; Hashizume, M. Image-guided laparoscopic surgery in an open MRI operating theater. Surg. Endosc. 2013, 27, 2178–2184. [Google Scholar] [CrossRef] [PubMed]

- Hallet, J.; Soler, L.; Diana, M.; Mutter, D.; Baumert, T.F.; Habersetzer, F.; Marescaux, J.; Pessaux, P. Trans-thoracic minimally invasive liver resection guided by augmented reality. J. Am. Coll. Surg. 2015, 220, e55–e60. [Google Scholar] [CrossRef]

- Tang, R.; Ma, L.F.; Rong, Z.X.; Li, M.D.; Zeng, J.P.; Wang, X.D.; Liao, H.E.; Dong, J.H. Augmented reality technology for preoperative planning and intraoperative navigation during hepatobiliary surgery: A review of current methods. Hepatobiliary Pancreat. Dis. Int. 2018, 17, 101–112. [Google Scholar] [CrossRef]

- Zhang, F.; Zhang, S.; Zhong, K.; Yu, L.; Sun, L.N. Design of navigation system for liver surgery guided by augmented reality. IEEE Access 2020, 8, 126687–126699. [Google Scholar] [CrossRef]

- Gavriilidis, P.; Edwin, B.; Pelanis, E.; Hidalgo, E.; de’Angelis, N.; Memeo, R.; Aldrighetti, L.; Sutcliffe, R.P. Navigated liver surgery: State of the art and future perspectives. Hepatobiliary Pancreat. Dis. Int. 2022, 21, 226–233. [Google Scholar] [CrossRef]

- Okamoto, T.; Onda, S.; Matsumoto, M.; Gocho, T.; Futagawa, Y.; Fujioka, S.; Yanaga, K.; Suzuki, N.; Hattori, A. Utility of augmented reality system in hepatobiliary surgery. J. Hepato-Biliary Sci. 2013, 20, 249–253. [Google Scholar] [CrossRef]

- Wang, J.; Suenaga, H.; Yang, L.; Kobayashi, E.; Sakuma, I. Video see-through augmented reality for oral and maxillofacial surgery. Int. J. Med. Robot. Comput. Assist. Surg. 2017, 13, e1754. [Google Scholar] [CrossRef]

- Jiang, J.; Huang, Z.; Qian, W.; Zhang, Y.; Liu, Y. Registration technology of augmented reality in oral medicine: A review. IEEE Access 2019, 7, 53566–53584. [Google Scholar] [CrossRef]

- Okamoto, T.; Onda, S.; Yanaga, K.; Suzuki, N.; Hattori, A. Clinical application of navigation surgery using augmented reality in the abdominal field. Surg. Today 2015, 45, 397–406. [Google Scholar] [CrossRef] [PubMed]

- Navab, N.; Heining, S.M.; Traub, J. Camera augmented mobile C-arm (CAMC): Calibration, accuracy study, and clinical applications. IEEE Trans. Med. Imaging 2009, 29, 1412–1423. [Google Scholar] [CrossRef] [PubMed]

- von der Heide, A.M.; Fallavollita, P.; Wang, L.; Sandner, P.; Navab, N.; Weidert, S.; Euler, E. Camera-augmented mobile C-arm (CamC): A feasibility study of augmented reality imaging in the operating room. Int. J. Med. Robot. Comput. Assist. Surg. 2018, 14, e1885. [Google Scholar] [CrossRef]

- Cartucho, J.; Shapira, D.; Ashrafian, H.; Giannarou, S. Multimodal mixed reality visualisation for intraoperative surgical guidance. Int. J. Comput. Assist. Radiol. Surg. 2020, 15, 819–826. [Google Scholar] [CrossRef]

- Kersten-Oertel, M.; Jannin, P.; Collins, D.L. The state of the art of visualization in mixed reality image guided surgery. Comput. Med. Imaging Graph. 2013, 37, 98–112. [Google Scholar] [CrossRef]

- Chou, B.; Handa, V.L. Simulators and virtual reality in surgical education. Obstet. Gynecol. Clin. 2006, 33, 283–296. [Google Scholar] [CrossRef]

- Shamir, R.R.; Horn, M.; Blum, T.; Mehrkens, J.; Shoshan, Y.; Joskowicz, L.; Navab, N. Trajectory planning with augmented reality for improved risk assessment in image-guided keyhole neurosurgery. In Proceedings of the 2011 IEEE International Symposium on Biomedical Imaging: From Nano to Macro, Chicago, IL, USA, 30 March–2 April 2011; pp. 1873–1876. [Google Scholar]

- Thomas, R.G.; William John, N.; Delieu, J.M. Augmented reality for anatomical education. J. Vis. Commun. Med. 2010, 33, 6–15. [Google Scholar] [CrossRef]

- Fang, T.Y.; Wang, P.C.; Liu, C.H.; Su, M.C.; Yeh, S.C. Evaluation of a haptics-based virtual reality temporal bone simulator for anatomy and surgery training. Comput. Methods Programs Biomed. 2014, 113, 674–681. [Google Scholar] [CrossRef]

- Stefan, P.; Wucherer, P.; Oyamada, Y.; Ma, M.; Schoch, A.; Kanegae, M.; Shimizu, N.; Kodera, T.; Cahier, S.; Weigl, M.; et al. An AR edutainment system supporting bone anatomy learning. In Proceedings of the 2014 IEEE Virtual Reality (VR), Minneapolis, MN, USA, 29 March–2 April 2014; pp. 113–114. [Google Scholar]

- Ma, M.; Fallavollita, P.; Seelbach, I.; von der Heide, A.M.; Euler, E.; Waschke, J.; Navab, N. Personalized augmented reality for anatomy education. Clin. Anat. 2016, 29, 446–453. [Google Scholar] [CrossRef]

- Krueger, E.; Messier, E.; Linte, C.A.; Diaz, G. An interactive, stereoscopic virtual environment for medical imaging visualization, simulation and training. In Medical Imaging 2017: Image Perception, Observer Performance, and Technology Assessment; SPIE: Bellingham, WA, USA, 2017; Volume 10136, pp. 399–405. [Google Scholar]

- Huang, H.M.; Liaw, S.S.; Lai, C.M. Exploring learner acceptance of the use of virtual reality in medical education: A case study of desktop and projection-based display systems. Interact. Learn. Environ. 2016, 24, 3–19. [Google Scholar] [CrossRef]

- Küçük, S.; Kapakin, S.; Göktaş, Y. Learning anatomy via mobile augmented reality: Effects on achievement and cognitive load. Anat. Sci. Educ. 2016, 9, 411–421. [Google Scholar] [CrossRef] [PubMed]

- Moro, C.; Štromberga, Z.; Raikos, A.; Stirling, A. The effectiveness of virtual and augmented reality in health sciences and medical anatomy. Anat. Sci. Educ. 2017, 10, 549–559. [Google Scholar] [CrossRef]

- Peterson, D.C.; Mlynarczyk, G.S. Analysis of traditional versus three-dimensional augmented curriculum on anatomical learning outcome measures. Anat. Sci. Educ. 2016, 9, 529–536. [Google Scholar] [CrossRef] [PubMed]

- Cabrilo, I.; Bijlenga, P.; Schaller, K. Augmented reality in the surgery of cerebral arteriovenous malformations: Technique assessment and considerations. Acta Neurochir. 2014, 156, 1769–1774. [Google Scholar] [CrossRef] [PubMed]

- Tabrizi, L.B.; Mahvash, M. Augmented reality–guided neurosurgery: Accuracy and intraoperative application of an image projection technique. J. Neurosurg. 2015, 123, 206–211. [Google Scholar] [CrossRef] [PubMed]

- Kersten-Oertel, M.; Gerard, I.; Drouin, S.; Mok, K.; Sirhan, D.; Sinclair, D.S.; Collins, D.L. Augmented reality in neurovascular surgery: Feasibility and first uses in the operating room. Int. J. Comput. Assist. Radiol. Surg. 2015, 10, 1823–1836. [Google Scholar] [CrossRef]

- Abhari, K.; Baxter, J.S.; Chen, E.C.; Khan, A.R.; Peters, T.M.; De Ribaupierre, S.; Eagleson, R. Training for planning tumour resection: Augmented reality and human factors. IEEE Trans. Biomed. Eng. 2014, 62, 1466–1477. [Google Scholar] [CrossRef]

- Fick, T.; van Doormaal, J.A.; Hoving, E.W.; Willems, P.W.; van Doormaal, T.P. Current accuracy of augmented reality neuronavigation systems: Systematic review and meta-analysis. World Neurosurg. 2021, 146, 179–188. [Google Scholar] [CrossRef]

- López, W.O.C.; Navarro, P.A.; Crispin, S. Intraoperative clinical application of augmented reality in neurosurgery: A systematic review. Clin. Neurol. Neurosurg. 2019, 177, 6–11. [Google Scholar] [CrossRef]

- Rennert, R.C.; Santiago-Dieppa, D.R.; Figueroa, J.; Sanai, N.; Carter, B.S. Future directions of operative neuro-oncology. J. Neuro-Oncol. 2016, 130, 377–382. [Google Scholar] [CrossRef] [PubMed]

- Jud, L.; Fotouhi, J.; Andronic, O.; Aichmair, A.; Osgood, G.; Navab, N.; Farshad, M. Applicability of augmented reality in orthopedic surgery–a systematic review. BMC Musculoskelet. Disord. 2020, 21, 1–13. [Google Scholar] [CrossRef] [PubMed]

- Phillips, F.M.; Cheng, I.; Rampersaud, Y.R.; Akbarnia, B.A.; Pimenta, L.; Rodgers, W.B.; Uribe, J.S.; Khanna, N.; Smith, W.D.; Youssef, J.A.; et al. Breaking through the “glass ceiling” of minimally invasive spine surgery. Spine 2016, 41, S39–S43. [Google Scholar] [CrossRef]

- Manbachi, A.; Cobbold, R.S.; Ginsberg, H.J. Guided pedicle screw insertion: Techniques and training. Spine J. 2014, 14, 165–179. [Google Scholar] [CrossRef]

- Mason, A.; Paulsen, R.; Babuska, J.M.; Rajpal, S.; Burneikiene, S.; Nelson, E.L.; Villavicencio, A.T. The accuracy of pedicle screw placement using intraoperative image guidance systems: A systematic review. J. Neurosurg. Spine 2014, 20, 196–203. [Google Scholar] [CrossRef]

- Ma, L.; Zhao, Z.; Chen, F.; Zhang, B.; Fu, L.; Liao, H. Augmented reality surgical navigation with ultrasound-assisted registration for pedicle screw placement: A pilot study. Int. J. Comput. Assist. Radiol. Surg. 2017, 12, 2205–2215. [Google Scholar] [CrossRef]

- Bonjer, H.J.; Deijen, C.L.; Abis, G.A.; Cuesta, M.A.; van der Pas, M.H.; De Lange-De Klerk, E.S.; Lacy, A.M.; Bemelman, W.A.; Andersson, J.; Angenete, E.; et al. A randomized trial of laparoscopic versus open surgery for rectal cancer. N. Engl. J. Med. 2015, 372, 1324–1332. [Google Scholar] [CrossRef]

- Lamata, P.; Ali, W.; Cano, A.; Cornella, J.; Declerck, J.; Elle, O.J.; Freudenthal, A.; Furtado, H.; Kalkofen, D.; Naerum, E.; et al. Augmented reality for minimally invasive surgery: Overview and some recent advances. In Augmented Reality; IntechOpen: London, UK, 2010; p. 73. [Google Scholar]

- Wild, C.; Lang, F.; Gerhäuser, A.; Schmidt, M.; Kowalewski, K.; Petersen, J.; Kenngott, H.; Müller-Stich, B.; Nickel, F. Telestration with augmented reality for visual presentation of intraoperative target structures in minimally invasive surgery: A randomized controlled study. Surg. Endosc. 2022, 36, 7453–7461. [Google Scholar] [CrossRef]

- Fuchs, H.; Livingston, M.A.; Raskar, R.; Colucci, D.; Keller, K.; State, A.; Crawford, J.R.; Rademacher, P.; Drake, S.H.; Meyer, A.A. Augmented reality visualization for laparoscopic surgery. In International Conference on Medical Image Computing and Computer-Assisted Intervention; Springer: Berlin/Heidelberg, Germany, 1998; pp. 934–943. [Google Scholar]

- Modrzejewski, R.; Collins, T.; Seeliger, B.; Bartoli, A.; Hostettler, A.; Marescaux, J. An in vivo porcine dataset and evaluation methodology to measure soft-body laparoscopic liver registration accuracy with an extended algorithm that handles collisions. Int. J. Comput. Assist. Radiol. Surg. 2019, 14, 1237–1245. [Google Scholar] [CrossRef] [PubMed]

- Pelanis, E.; Teatini, A.; Eigl, B.; Regensburger, A.; Alzaga, A.; Kumar, R.P.; Rudolph, T.; Aghayan, D.L.; Riediger, C.; Kvarnstr, N.; et al. Evaluation of a novel navigation platform for laparoscopic liver surgery with organ deformation compensation using injected fiducials. Med. Image Anal. 2021, 69, 101946. [Google Scholar] [CrossRef]

- Zhang, W.; Zhu, W.; Yang, J.; Xiang, N.; Zeng, N.; Hu, H.; Jia, F.; Fang, C. Augmented reality navigation for stereoscopic laparoscopic anatomical hepatectomy of primary liver cancer: Preliminary experience. Front. Oncol. 2021, 11, 996. [Google Scholar] [CrossRef] [PubMed]

- Teatini, A.; Pelanis, E.; Aghayan, D.L.; Kumar, R.P.; Palomar, R.; Fretland, Å.A.; Edwin, B.; Elle, O.J. The effect of intraoperative imaging on surgical navigation for laparoscopic liver resection surgery. Sci. Rep. 2019, 9, 18687. [Google Scholar] [CrossRef] [PubMed]

- Liu, X.; Plishker, W.; Kane, T.D.; Geller, D.A.; Lau, L.W.; Tashiro, J.; Sharma, K.; Shekhar, R. Preclinical evaluation of ultrasound-augmented needle navigation for laparoscopic liver ablation. Int. J. Comput. Assist. Radiol. Surg. 2020, 15, 803–810. [Google Scholar] [CrossRef] [PubMed]

- Shekhar, R.; Dandekar, O.; Bhat, V.; Philip, M.; Lei, P.; Godinez, C.; Sutton, E.; George, I.; Kavic, S.; Mezrich, R.; et al. Live augmented reality: A new visualization method for laparoscopic surgery using continuous volumetric computed tomography. Surg. Endosc. 2010, 24, 1976–1985. [Google Scholar] [CrossRef]

- Luo, H.; Yin, D.; Zhang, S.; Xiao, D.; He, B.; Meng, F.; Zhang, Y.; Cai, W.; He, S.; Zhang, W.; et al. Augmented reality navigation for liver resection with a stereoscopic laparoscope. Comput. Methods Programs Biomed. 2020, 187, 105099. [Google Scholar] [CrossRef]

- Schneider, C.; Allam, M.; Stoyanov, D.; Hawkes, D.; Gurusamy, K.; Davidson, B. Performance of image guided navigation in laparoscopic liver surgery–A systematic review. Surg. Oncol. 2021, 38, 101637. [Google Scholar] [CrossRef]

- Bernhardt, S.; Nicolau, S.A.; Agnus, V.; Soler, L.; Doignon, C.; Marescaux, J. Automatic localization of endoscope in intraoperative CT image: A simple approach to augmented reality guidance in laparoscopic surgery. Med. Image Anal. 2016, 30, 130–143. [Google Scholar] [CrossRef]

- Jayarathne, U.L.; Moore, J.; Chen, E.; Pautler, S.E.; Peters, T.M. Real-time 3D ultrasound reconstruction and visualization in the context of laparoscopy. In International Conference on Medical Image Computing and Computer-Assisted Intervention; Springer: Cham, Switzerland, 2017; pp. 602–609. [Google Scholar]

- Casap, N.; Nadel, S.; Tarazi, E.; Weiss, E.I. Evaluation of a navigation system for dental implantation as a tool to train novice dental practitioners. J. Oral Maxillofac. Surg. 2011, 69, 2548–2556. [Google Scholar] [CrossRef]

- Yamaguchi, S.; Ohtani, T.; Yatani, H.; Sohmura, T. Augmented reality system for dental implant surgery. In International Conference on Virtual and Mixed Reality; Springer: Berlin/Heidelberg, Germany, 2009; pp. 633–638. [Google Scholar]

- Tran, H.H.; Suenaga, H.; Kuwana, K.; Masamune, K.; Dohi, T.; Nakajima, S.; Liao, H. Augmented reality system for oral surgery using 3D auto stereoscopic visualization. In International Conference on Medical Image Computing and Computer-Assisted Intervention; Springer: Berlin/Heidelberg, Germany, 2011; pp. 81–88. [Google Scholar]

- Wang, J.; Suenaga, H.; Hoshi, K.; Yang, L.; Kobayashi, E.; Sakuma, I.; Liao, H. Augmented reality navigation with automatic marker-free image registration using 3-D image overlay for dental surgery. IEEE Trans. Biomed. Eng. 2014, 61, 1295–1304. [Google Scholar] [CrossRef]

- Katić, D.; Sudra, G.; Speidel, S.; Castrillon-Oberndorfer, G.; Eggers, G.; Dillmann, R. Knowledge-based situation interpretation for context-aware augmented reality in dental implant surgery. In International Workshop on Medical Imaging and Virtual Reality; Springer: Berlin/Heidelberg, Germany, 2010; pp. 531–540. [Google Scholar]

- Morineau, T.; Morandi, X.; Le Moëllic, N.; Jannin, P. A cognitive engineering framework for the specification of information requirements in medical imaging: Application in image-guided neurosurgery. Int. J. Comput. Assist. Radiol. Surg. 2013, 8, 291–300. [Google Scholar] [CrossRef]

- Mikhail, M.; Mithani, K.; Ibrahim, G.M. Presurgical and intraoperative augmented reality in neuro-oncologic surgery: Clinical experiences and limitations. World Neurosurg. 2019, 128, 268–276. [Google Scholar] [CrossRef] [PubMed]

- Andress, S.; Johnson, A.; Unberath, M.; Winkler, A.F.; Yu, K.; Fotouhi, J.; Weidert, S.; Osgood, G.M.; Navab, N. On-the-fly augmented reality for orthopedic surgery using a multimodal fiducial. J. Med. Imaging 2018, 5, 021209. [Google Scholar] [CrossRef] [PubMed]

- Wang, J.; Suenaga, H.; Yang, L.; Liao, H.; Kobayashi, E.; Takato, T.; Sakuma, I. Real-time marker-free patient registration and image-based navigation using stereovision for dental surgery. In Augmented Reality Environments for Medical Imaging and Computer-Assisted Interventions; Springer: Berlin/Heidelberg, Germany, 2013; pp. 9–18. [Google Scholar]

- Gribaudo, M.; Piazzolla, P.; Porpiglia, F.; Vezzetti, E.; Violante, M.G. 3D augmentation of the surgical video stream: Toward a modular approach. Comput. Methods Programs Biomed. 2020, 191, 105505. [Google Scholar] [CrossRef] [PubMed]

- Linte, C.A.; White, J.; Eagleson, R.; Guiraudon, G.M.; Peters, T.M. Virtual and augmented medical imaging environments: Enabling technology for minimally invasive cardiac interventional guidance. IEEE Rev. Biomed. Eng. 2010, 3, 25–47. [Google Scholar] [CrossRef]

- Baum, Z.; Ungi, T.; Lasso, A.; Fichtinger, G. Usability of a real-time tracked augmented reality display system in musculoskeletal injections. In Medical Imaging 2017: Image-Guided Procedures, Robotic Interventions, and Modeling; SPIE: Bellingham, WA, USA, 2017; Volume 10135, pp. 727–734. [Google Scholar]

- Cercenelli, L.; Carbone, M.; Condino, S.; Cutolo, F.; Marcelli, E.; Tarsitano, A.; Marchetti, C.; Ferrari, V.; Badiali, G. The wearable VOSTARS system for augmented reality-guided surgery: Preclinical phantom evaluation for high-precision maxillofacial tasks. J. Clin. Med. 2020, 9, 3562. [Google Scholar] [CrossRef]

- Maisto, M.; Pacchierotti, C.; Chinello, F.; Salvietti, G.; De Luca, A.; Prattichizzo, D. Evaluation of wearable haptic systems for the fingers in augmented reality applications. IEEE Trans. Haptics 2017, 10, 511–522. [Google Scholar] [CrossRef]

- Cutolo, F.; Fida, B.; Cattari, N.; Ferrari, V. Software framework for customized augmented reality headsets in medicine. IEEE Access 2019, 8, 706–720. [Google Scholar] [CrossRef]

- Gavaghan, K.; Oliveira-Santos, T.; Peterhans, M.; Reyes, M.; Kim, H.; Anderegg, S.; Weber, S. Evaluation of a portable image overlay projector for the visualisation of surgical navigation data: Phantom studies. Int. J. Comput. Assist. Radiol. Surg. 2012, 7, 547–556. [Google Scholar] [CrossRef]

- Rosenthal, M.; State, A.; Lee, J.; Hirota, G.; Ackerman, J.; Keller, K.; Pisano, E.D.; Jiroutek, M.; Muller, K.; Fuchs, H. Augmented reality guidance for needle biopsies: An initial randomized, controlled trial in phantoms. Med. Image Anal. 2002, 6, 313–320. [Google Scholar] [CrossRef]

- Jiang, Y.; Wang, H.R.; Wang, P.F.; Xu, S.G. The Surgical Approach Visualization and Navigation (SAVN) System reduces radiation dosage and surgical trauma due to accurate intraoperative guidance. Injury 2019, 50, 859–863. [Google Scholar] [CrossRef]

- Wen, R.; Tay, W.L.; Nguyen, B.P.; Chng, C.B.; Chui, C.K. Hand gesture guided robot-assisted surgery based on a direct augmented reality interface. Comput. Methods Programs Biomed. 2014, 116, 68–80. [Google Scholar] [CrossRef] [PubMed]

- Wen, R.; Chng, C.B.; Chui, C.K. Augmented reality guidance with multimodality imaging data and depth-perceived interaction for robot-assisted surgery. Robotics 2017, 6, 13. [Google Scholar] [CrossRef]

- Giannone, F.; Felli, E.; Cherkaoui, Z.; Mascagni, P.; Pessaux, P. Augmented Reality and Image-Guided Robotic Liver Surgery. Cancers 2021, 13, 6268. [Google Scholar] [CrossRef]

- Maier-Hein, L.; Mountney, P.; Bartoli, A.; Elhawary, H.; Elson, D.; Groch, A.; Kolb, A.; Rodrigues, M.; Sorger, J.; Speidel, S.; et al. Optical techniques for 3D surface reconstruction in computer-assisted laparoscopic surgery. Med. Image Anal. 2013, 17, 974–996. [Google Scholar] [CrossRef] [PubMed]

- Peters, T.M.; Linte, C.A.; Yaniv, Z.; Williams, J. Mixed and Augmented Reality in Medicine; CRC Press: Boca Raton, FL, USA, 2018. [Google Scholar]

- Jannin, P.; Korb, W. Assessment of image-guided interventions. In Image-Guided Interventions; Springer: Boston, MA, USA, 2008; pp. 531–549. [Google Scholar]

- Benckert, C.; Bruns, C. The surgeon’s contribution to image-guided oncology. Visc. Med. 2014, 30, 232–236. [Google Scholar] [CrossRef]

- Marcus, H.J.; Pratt, P.; Hughes-Hallett, A.; Cundy, T.P.; Marcus, A.P.; Yang, G.Z.; Darzi, A.; Nandi, D. Comparative effectiveness and safety of image guidance systems in surgery: A preclinical randomised study. Lancet 2015, 385, S64. [Google Scholar] [CrossRef]

- Khor, W.S.; Baker, B.; Amin, K.; Chan, A.; Patel, K.; Wong, J. Augmented and virtual reality in surgery-the digital surgical environment: Applications, limitations and legal pitfalls. Ann. Transl. Med. 2016, 4, 454. [Google Scholar] [CrossRef] [PubMed]

- Chen, F.; Cui, X.; Liu, J.; Han, B.; Zhang, X.; Zhang, D.; Liao, H. Tissue structure updating for In situ augmented reality navigation using calibrated ultrasound and two-level surface warping. IEEE Trans. Biomed. Eng. 2020, 67, 3211–3222. [Google Scholar] [CrossRef]

- Nicolau, S.; Pennec, X.; Soler, L.; Buy, X.; Gangi, A.; Ayache, N.; Marescaux, J. An augmented reality system for liver thermal ablation: Design and evaluation on clinical cases. Med. Image Anal. 2009, 13, 494–506.

- Chen, E.C.; Morgan, I.; Jayarathne, U.; Ma, B.; Peters, T.M. Hand-eye calibration using a target registration error model. Healthc. Technol. Lett. 2017, 4, 157–162.

- Zhang, Z.; Zhang, L.; Yang, G.Z. A computationally efficient method for hand-eye calibration. Int. J. Comput. Assist. Radiol. Surg. 2017, 12, 1775–1787.

- Chen, E.; Ma, B.; Peters, T.M. Contact-less stylus for surgical navigation: Registration without digitization. Int. J. Comput. Assist. Radiol. Surg. 2017, 12, 1231–1241.

- Schneider, C.; Thompson, S.; Totz, J.; Song, Y.; Allam, M.; Sodergren, M.; Desjardins, A.; Barratt, D.; Ourselin, S.; Gurusamy, K.; et al. Comparison of manual and semi-automatic registration in augmented reality image-guided liver surgery: A clinical feasibility study. Surg. Endosc. 2020, 34, 4702–4711.

- Golse, N.; Petit, A.; Lewin, M.; Vibert, E.; Cotin, S. Augmented reality during open liver surgery using a markerless non-rigid registration system. J. Gastrointest. Surg. 2021, 25, 662–671.

- Ansari, M.Y.; Abdalla, A.; Ansari, M.Y.; Ansari, M.I.; Malluhi, B.; Mohanty, S.; Mishra, S.; Singh, S.S.; Abinahed, J.; Al-Ansari, A.; et al. Practical utility of liver segmentation methods in clinical surgeries and interventions. BMC Med. Imaging 2022, 22, 1–17.

- Reinke, A.; Eisenmann, M.; Tizabi, M.D.; Sudre, C.H.; Rädsch, T.; Antonelli, M.; Arbel, T.; Bakas, S.; Cardoso, M.J.; Cheplygina, V.; et al. Common limitations of image processing metrics: A picture story. arXiv 2021, arXiv:2104.05642.

- Dixon, B.J.; Daly, M.J.; Chan, H.; Vescan, A.D.; Witterick, I.J.; Irish, J.C. Surgeons blinded by enhanced navigation: The effect of augmented reality on attention. Surg. Endosc. 2013, 27, 454–461.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).